Instruction Selection


Copyright 2003, Keith D. Cooper, Ken Kennedy & Linda Torczon, all rights reserved.

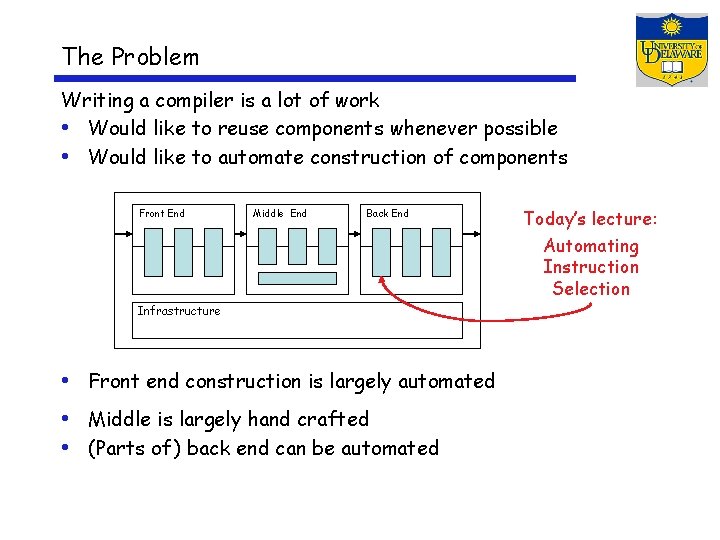

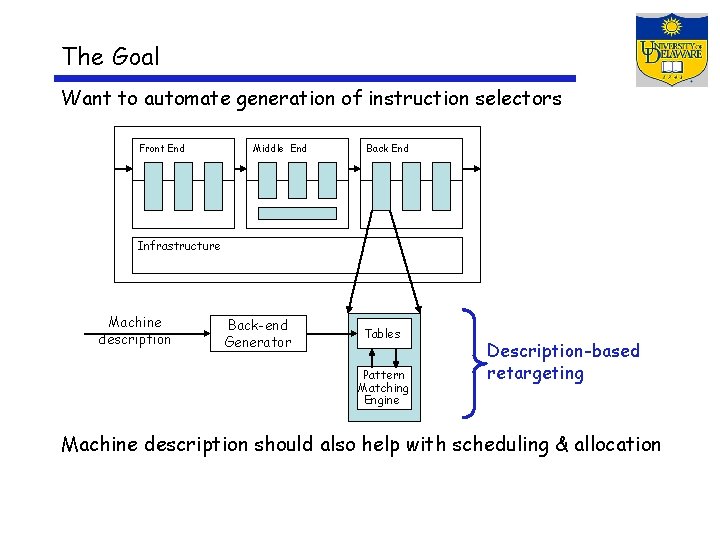

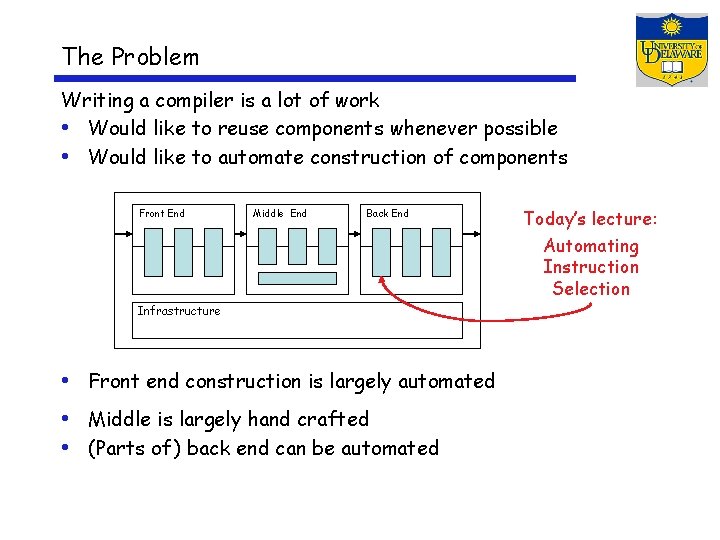

The Problem

Writing a compiler is a lot of work

- Would like to reuse components whenever possible
- Would like to automate construction of components

Diagram: Front End, Middle End, Back End, resting on shared Infrastructure

Today's lecture: Automating Instruction Selection

- Front end construction is largely automated
- Middle is largely hand crafted
- (Parts of) back end can be automated

Definitions

Instruction selection

- Mapping IR into assembly code
- Assumes a fixed storage mapping & code shape
- Combining operations, using address modes

Instruction scheduling

- Reordering operations to hide latencies
- Assumes a fixed program (set of operations)
- Changes demand for registers

Register allocation

- Deciding which values will reside in registers
- Changes the storage mapping, may add false sharing
- Concerns about placement of data & memory operations

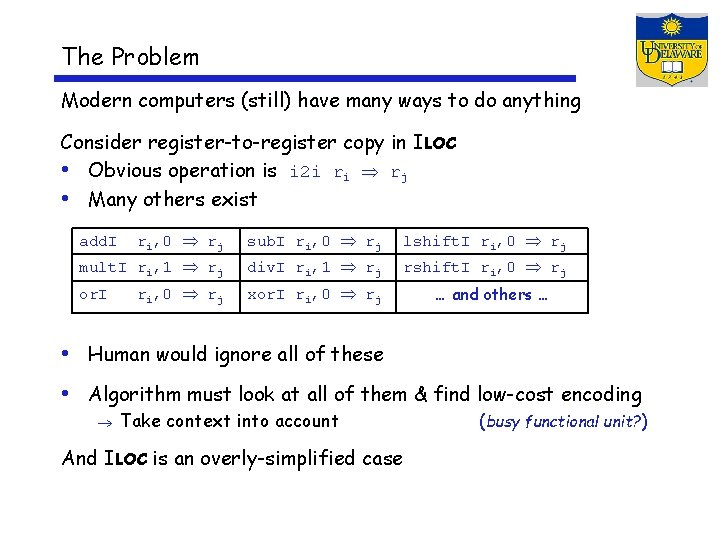

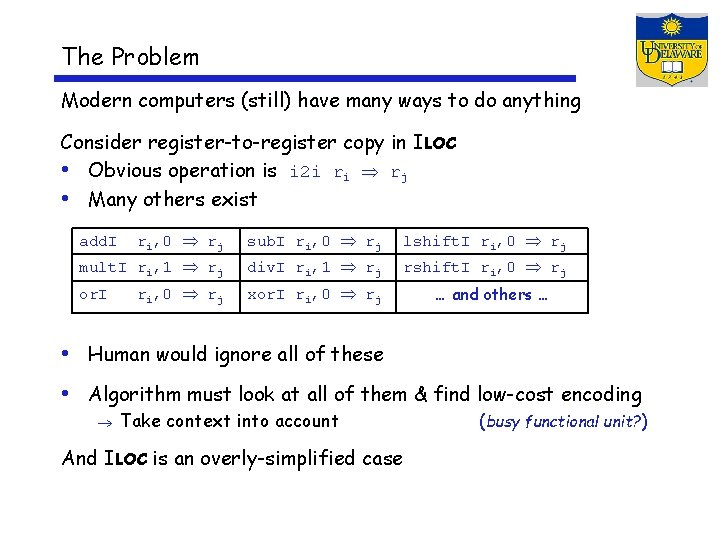

The Problem

Modern computers (still) have many ways to do anything

Consider register-to-register copy in ILOC

- Obvious operation is `i2i ri ⇒ rj`
- Many others exist

| | | |
| --- | --- | --- |
| `addI ri, 0 ⇒ rj` | `subI ri, 0 ⇒ rj` | `lshiftI ri, 0 ⇒ rj` |
| `multI ri, 1 ⇒ rj` | `divI ri, 1 ⇒ rj` | `rshiftI ri, 0 ⇒ rj` |
| `orI ri, 0 ⇒ rj` | `xorI ri, 0 ⇒ rj` | … and others … |

- Human would ignore all of these
- Algorithm must look at all of them & find low-cost encoding
  - Take context into account (busy functional unit?)

And ILOC is an overly-simplified case
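The selection problem on this slide can be sketched as a search over a cost table. This is a minimal illustration only: the `COPY_ENCODINGS` table, its cycle counts, and the `busy_units` parameter are all assumptions invented for the example, not figures for any real machine.

```python
# Hypothetical cost table: (opcode, immediate) -> cost in cycles.
# The numbers are illustrative assumptions, not measurements.
COPY_ENCODINGS = {
    ("i2i", None): 1,
    ("addI", 0): 1,
    ("subI", 0): 1,
    ("lshiftI", 0): 1,
    ("rshiftI", 0): 1,
    ("orI", 0): 1,
    ("xorI", 0): 1,
    ("multI", 1): 3,
    ("divI", 1): 20,
}


def cheapest_copy(src, dst, busy_units=frozenset()):
    """Return the lowest-cost ILOC text that copies src into dst.

    busy_units names opcodes whose functional unit is unavailable,
    a crude stand-in for "take context into account".
    """
    candidates = [
        (cost, op, imm)
        for (op, imm), cost in COPY_ENCODINGS.items()
        if op not in busy_units
    ]
    # min() keeps the first entry among equal costs, so table order breaks ties.
    _, op, imm = min(candidates, key=lambda c: c[0])
    if imm is None:
        return f"{op} {src} ⇒ {dst}"
    return f"{op} {src}, {imm} ⇒ {dst}"
```

With nothing busy the sketch picks `i2i`; marking `i2i` as busy makes it fall back to the next cheapest entry in the table.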

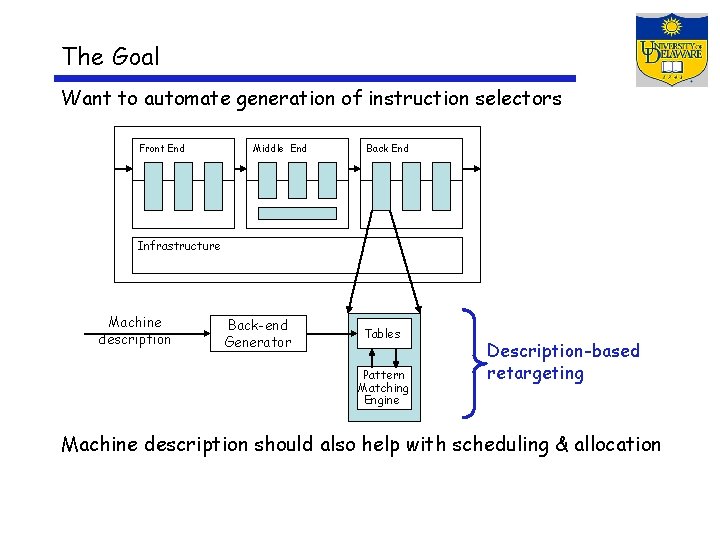

The Goal

Want to automate generation of instruction selectors

Diagram: Front End, Middle End, Back End over shared Infrastructure; a Machine description feeds a Back-end Generator, which produces Tables for a Pattern Matching Engine inside the back end

Description-based retargeting

Machine description should also help with scheduling & allocation

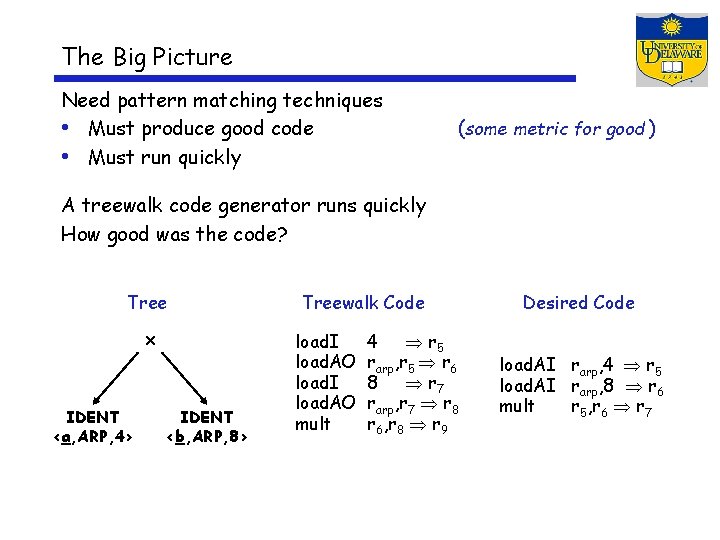

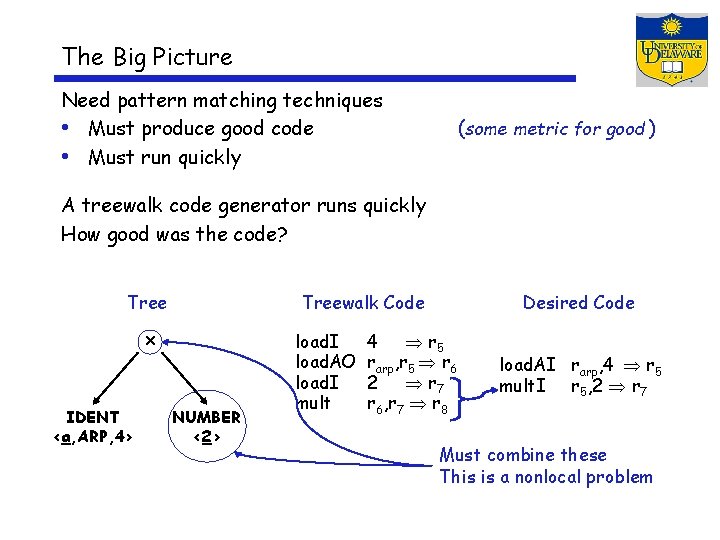

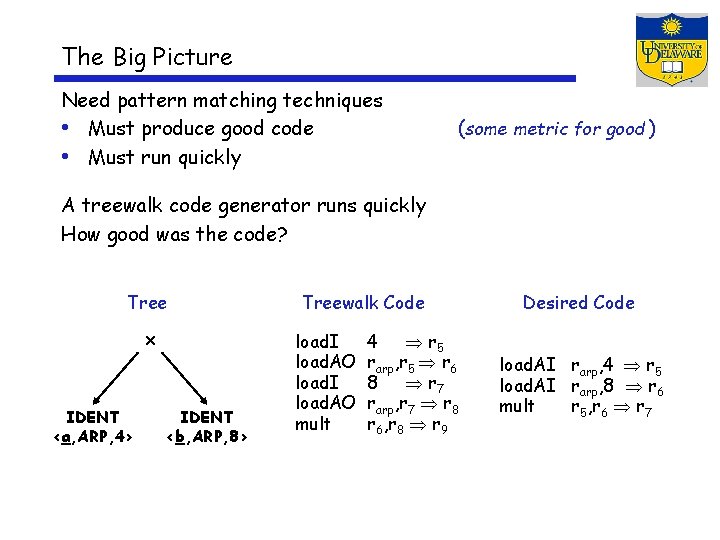

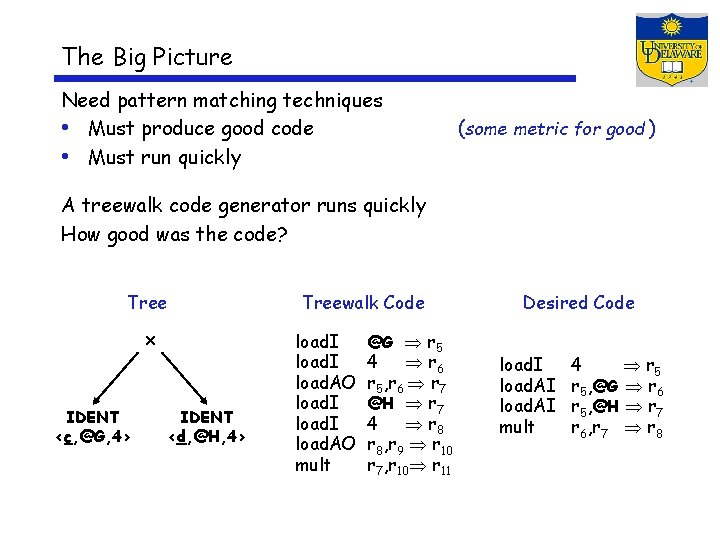


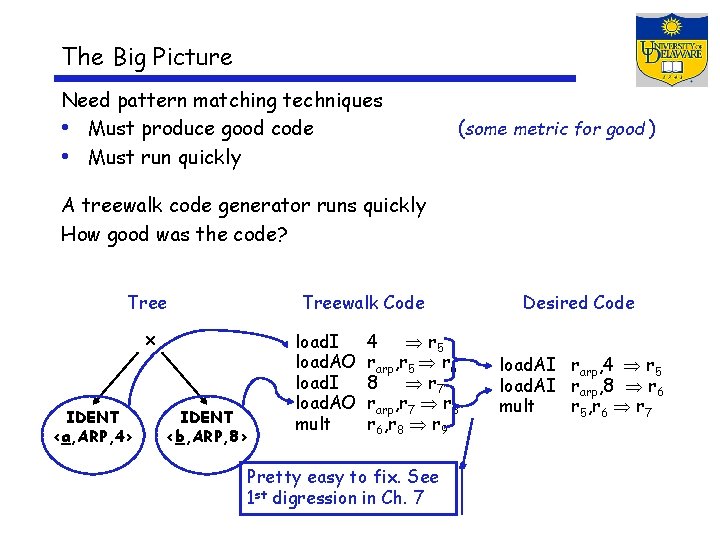

The Big Picture

Need pattern matching techniques

- Must produce good code (some metric for good)
- Must run quickly

A treewalk code generator runs quickly. How good was the code?

Tree: × with children `IDENT <a, ARP, 4>` and `IDENT <b, ARP, 8>`

| Treewalk code | Desired code |
| --- | --- |
| `loadI 4 ⇒ r5` | `loadAI rarp, 4 ⇒ r5` |
| `loadAO rarp, r5 ⇒ r6` | `loadAI rarp, 8 ⇒ r6` |
| `loadI 8 ⇒ r7` | `mult r5, r6 ⇒ r7` |
| `loadAO rarp, r7 ⇒ r8` | |
| `mult r6, r8 ⇒ r9` | |

Pretty easy to fix. See 1st digression in Ch. 7.
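A naive treewalk generator of the kind this slide criticizes might look like the sketch below. The tuple encoding of tree nodes and the name `treewalk` are assumptions made for illustration; every `IDENT` is assumed to live at an offset from `rarp`.

```python
from itertools import count


def treewalk(tree):
    """Naive treewalk: each node is translated without looking at its context."""
    regs = count(5)                      # start at r5 to mirror the slide
    code = []

    def emit(text):
        reg = f"r{next(regs)}"
        code.append(f"{text} ⇒ {reg}")
        return reg

    def walk(node):
        kind = node[0]
        if kind == "IDENT":              # ("IDENT", name, "ARP", offset)
            offset = emit(f"loadI {node[3]}")
            return emit(f"loadAO rarp, {offset}")
        if kind == "NUMBER":             # ("NUMBER", value)
            return emit(f"loadI {node[1]}")
        if kind == "×":                  # ("×", left, right)
            left = walk(node[1])
            right = walk(node[2])
            return emit(f"mult {left}, {right}")
        raise ValueError(f"unknown node kind: {kind}")

    walk(tree)
    return code
```

For the tree on this slide, the sketch emits the five-operation sequence in the treewalk column rather than the three-operation desired code.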

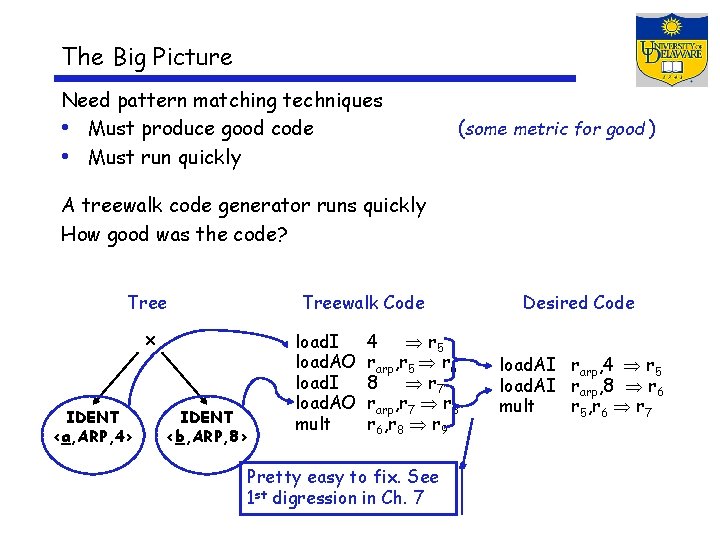

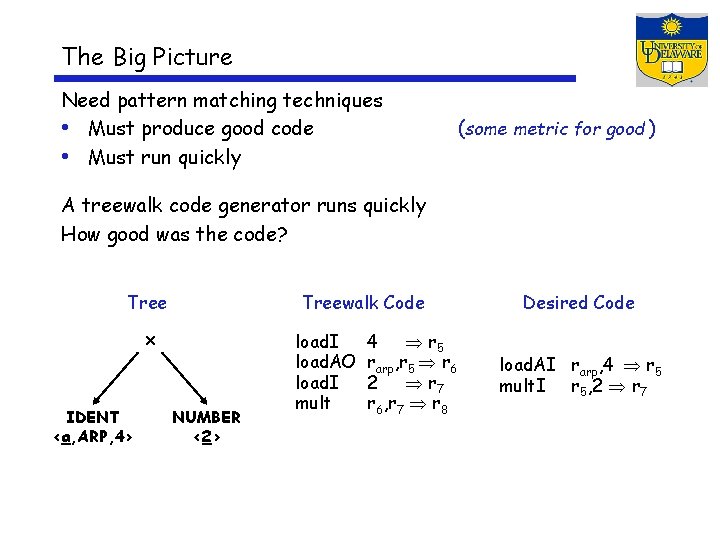

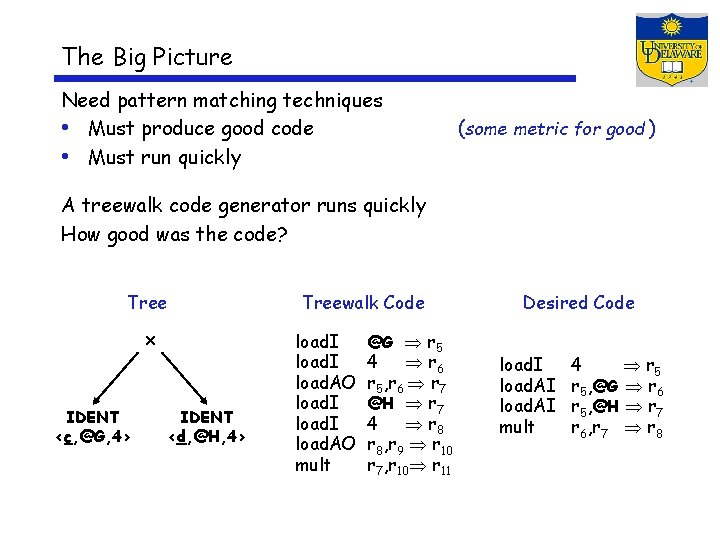

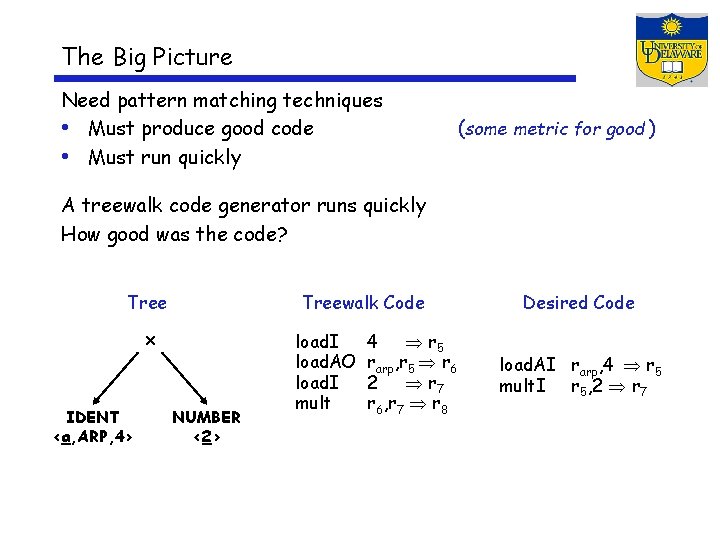


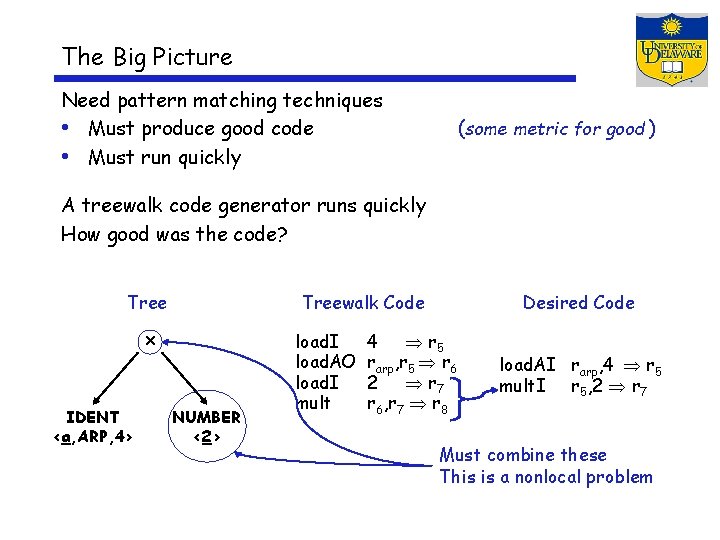

The Big Picture

A treewalk code generator runs quickly. How good was the code?

Tree: × with children `IDENT <a, ARP, 4>` and `NUMBER <2>`

| Treewalk code | Desired code |
| --- | --- |
| `loadI 4 ⇒ r5` | `loadAI rarp, 4 ⇒ r5` |
| `loadAO rarp, r5 ⇒ r6` | `multI r5, 2 ⇒ r7` |
| `loadI 2 ⇒ r7` | |
| `mult r6, r7 ⇒ r8` | |

Must combine these (the `loadI` of 2 and the `mult`). This is a nonlocal problem.
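One way to see why the problem is nonlocal: the multiply node has to inspect its child before the child is translated. The sketch below is a hand-written special case, not the pattern-matching approach the lecture builds toward; the node encoding and the name `treewalk_with_context` are assumptions, and its register numbers differ slightly from the slide because it allocates them consecutively.

```python
from itertools import count


def treewalk_with_context(tree):
    """Treewalk that lets a parent look at its children before emitting code."""
    regs = count(5)
    code = []

    def emit(text):
        reg = f"r{next(regs)}"
        code.append(f"{text} ⇒ {reg}")
        return reg

    def walk(node):
        kind = node[0]
        if kind == "IDENT":              # ("IDENT", name, "ARP", offset)
            return emit(f"loadAI rarp, {node[3]}")
        if kind == "NUMBER":             # ("NUMBER", value)
            return emit(f"loadI {node[1]}")
        if kind == "×":                  # ("×", left, right)
            left, right = node[1], node[2]
            if right[0] == "NUMBER":
                # The constant never gets its own register: fold it into multI.
                left_reg = walk(left)
                return emit(f"multI {left_reg}, {right[1]}")
            left_reg = walk(left)
            right_reg = walk(right)
            return emit(f"mult {left_reg}, {right_reg}")
        raise ValueError(f"unknown node kind: {kind}")

    walk(tree)
    return code
```

Each such special case has to be written by hand, which is the motivation for generating the matcher from a machine description instead.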

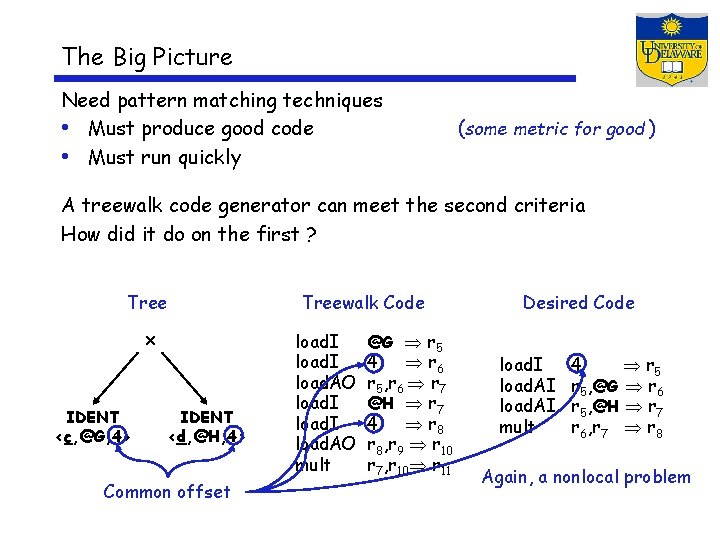


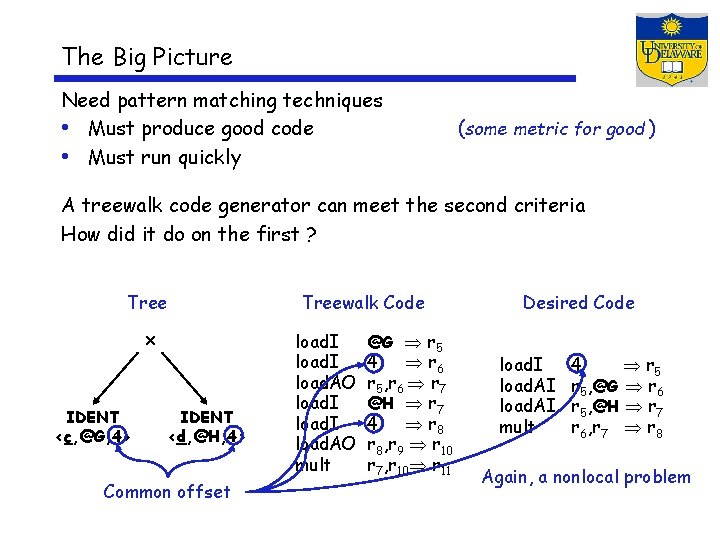

The Big Picture

Need pattern matching techniques

- Must produce good code (some metric for good)
- Must run quickly

A treewalk code generator can meet the second criterion. How did it do on the first?

Tree: × with children `IDENT <c, @G, 4>` and `IDENT <d, @H, 4>` (common offset)

| Treewalk code | Desired code |
| --- | --- |
| `loadI @G ⇒ r5` | `loadI 4 ⇒ r5` |
| `loadI 4 ⇒ r6` | `loadAI r5, @G ⇒ r6` |
| `loadAO r5, r6 ⇒ r7` | `loadAI r5, @H ⇒ r7` |
| `loadI @H ⇒ r8` | `mult r6, r7 ⇒ r8` |
| `loadI 4 ⇒ r9` | |
| `loadAO r8, r9 ⇒ r10` | |
| `mult r7, r10 ⇒ r11` | |

Again, a nonlocal problem
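The common-offset reuse can be illustrated with a small cache of offsets already held in registers. This is a sketch under assumptions: the function name `multiply_globals`, the tuple layout, and the choice to key the cache on the offset value are all invented for the example.

```python
from itertools import count


def multiply_globals(left, right):
    """Emit code for left × right, where each operand is ("IDENT", name, label, offset)."""
    regs = count(5)
    code = []
    offset_reg = {}                      # offset value -> register already holding it

    def emit(text):
        reg = f"r{next(regs)}"
        code.append(f"{text} ⇒ {reg}")
        return reg

    def load(ident):
        _, _name, label, offset = ident
        if offset not in offset_reg:     # load each distinct offset only once
            offset_reg[offset] = emit(f"loadI {offset}")
        return emit(f"loadAI {offset_reg[offset]}, {label}")

    left_reg = load(left)
    right_reg = load(right)
    emit(f"mult {left_reg}, {right_reg}")
    return code
```

The reuse only becomes visible when both subtrees are considered together, which is why a node-at-a-time treewalk misses it.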

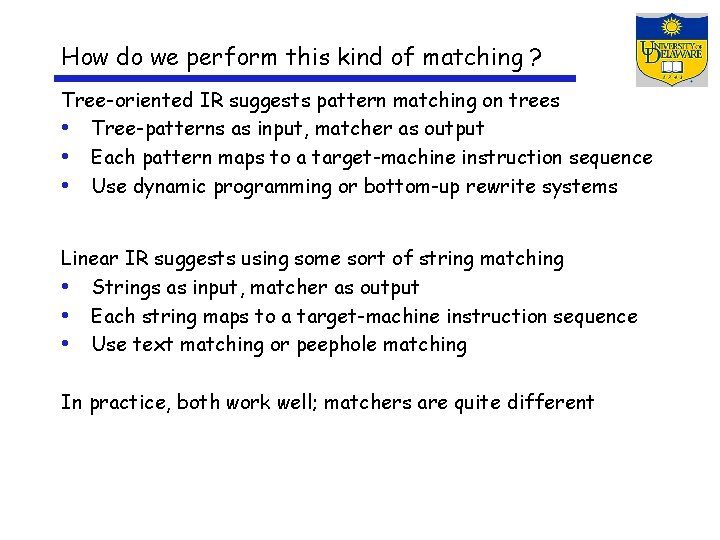

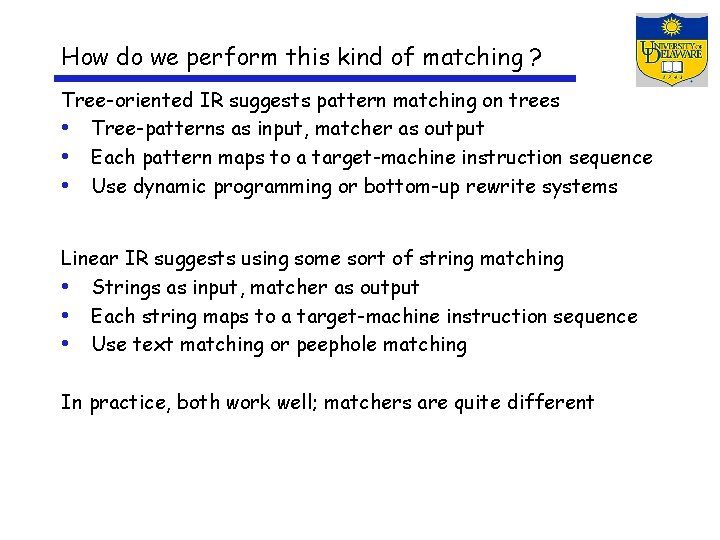

How do we perform this kind of matching?

Tree-oriented IR suggests pattern matching on trees

- Tree-patterns as input, matcher as output
- Each pattern maps to a target-machine instruction sequence
- Use dynamic programming or bottom-up rewrite systems

Linear IR suggests using some sort of string matching

- Strings as input, matcher as output
- Each string maps to a target-machine instruction sequence
- Use text matching or peephole matching

In practice, both work well; matchers are quite different

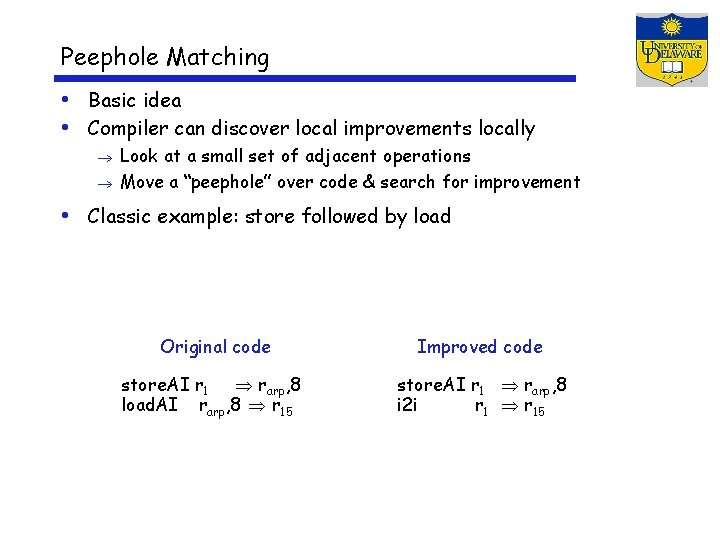

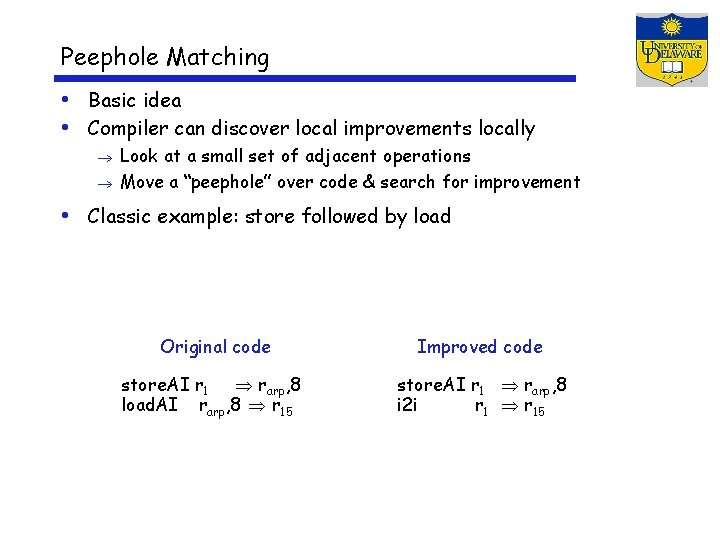

Peephole Matching

- Basic idea
- Compiler can discover local improvements locally
  - Look at a small set of adjacent operations
  - Move a "peephole" over code & search for improvement
- Classic example: store followed by load

| Original code | Improved code |
| --- | --- |
| `storeAI r1 ⇒ rarp, 8` | `storeAI r1 ⇒ rarp, 8` |
| `loadAI rarp, 8 ⇒ r15` | `i2i r1 ⇒ r15` |
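The store-followed-by-load case can be sketched with a two-operation window. The tuple layout of an operation and the name `store_load_peephole` are assumptions made for this illustration.

```python
def store_load_peephole(ops):
    """Replace a load that directly follows a store to the same address with a copy.

    Assumed shapes: ("storeAI", src, base, offset) and ("loadAI", base, offset, dst).
    """
    out = []
    for op in ops:
        prev = out[-1] if out else None
        same_address = (
            prev is not None
            and prev[0] == "storeAI"
            and op[0] == "loadAI"
            and prev[2:] == op[1:3]      # (base, offset) must match exactly
        )
        if same_address:
            out.append(("i2i", prev[1], op[3]))   # copy the stored register instead
        else:
            out.append(op)
    return out
```

The window here is just the previous operation and the current one, so an intervening operation between the store and the load hides the improvement.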

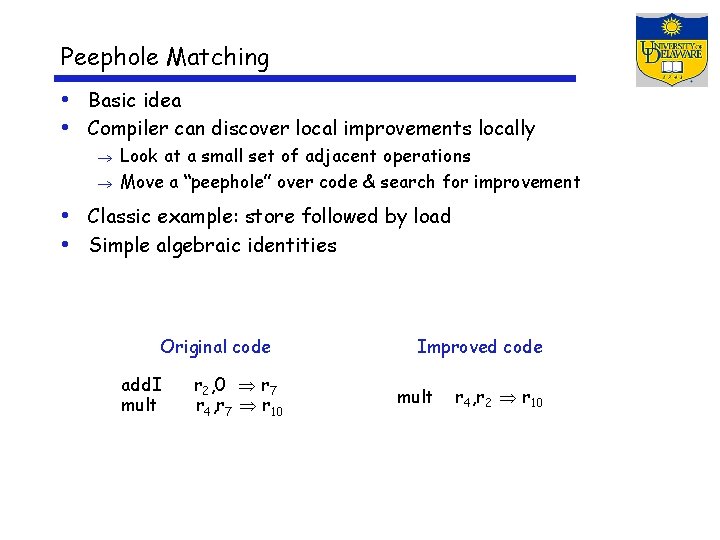

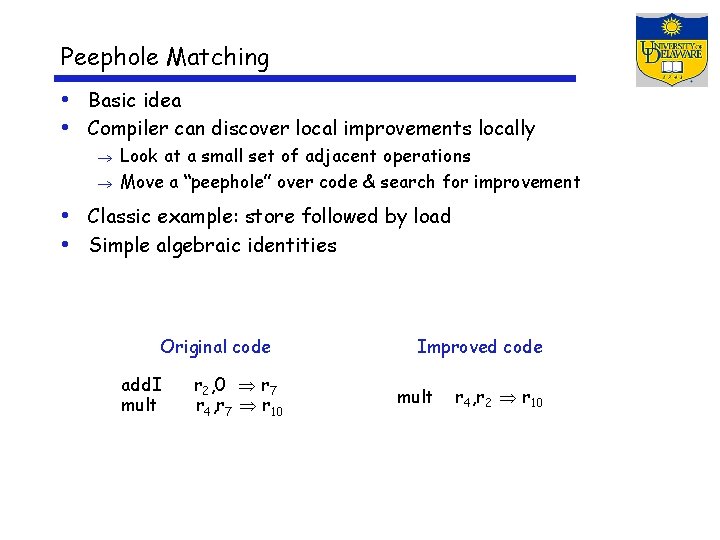

Peephole Matching

- Simple algebraic identities

| Original code | Improved code |
| --- | --- |
| `addI r2, 0 ⇒ r7` | `mult r4, r2 ⇒ r10` |
| `mult r4, r7 ⇒ r10` | |
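A sketch of the add-zero identity follows. It is only safe when the copied register is dead after the pair, so the hypothetical `live_after` parameter lets the caller name registers that are still needed; the tuple layout and the function name are likewise assumptions.

```python
def fold_add_zero(ops, live_after=frozenset()):
    """Fold `addI x, 0 ⇒ y` into the next operation when that operation uses y.

    Assumed shape: (opcode, src1, src2, dst). live_after lists registers
    still needed after the window; those definitions are left alone.
    """
    out = []
    i = 0
    while i < len(ops):
        op = ops[i]
        nxt = ops[i + 1] if i + 1 < len(ops) else None
        foldable = (
            op[0] == "addI"
            and op[2] == 0
            and nxt is not None
            and op[3] in nxt[1:3]        # the next operation actually reads y
            and op[3] not in live_after  # and nothing later needs y
        )
        if foldable:
            x, y = op[1], op[3]
            srcs = tuple(x if s == y else s for s in nxt[1:3])
            out.append((nxt[0],) + srcs + (nxt[3],))
            i += 2                       # both operations are consumed
        else:
            out.append(op)
            i += 1
    return out
```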

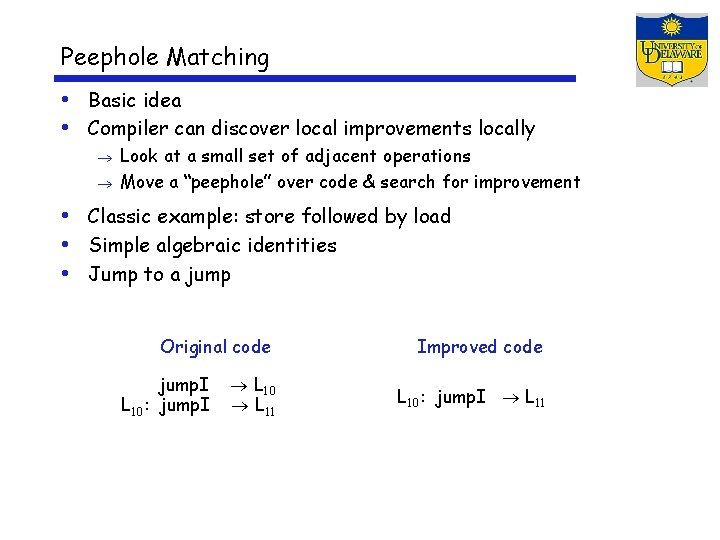

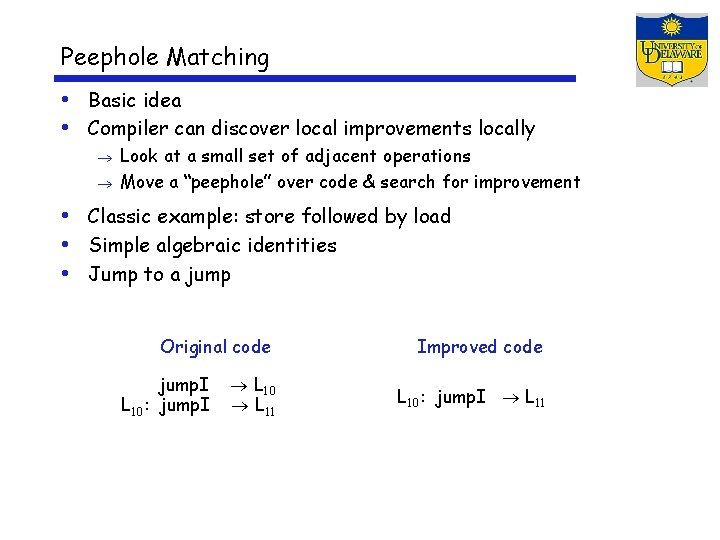

Peephole Matching

- Jump to a jump

| Original code | Improved code |
| --- | --- |
| `jumpI → L10` | `jumpI → L11` |
| `L10: jumpI → L11` | `L10: jumpI → L11` |
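Jump-to-a-jump elimination can be sketched by retargeting each jump whose destination is itself an unconditional jump. The triple layout `(label, opcode, target)` and the name `thread_jumps` are assumptions for this example; the sketch follows chains of jumps rather than a single step, and guards against cycles.

```python
def thread_jumps(ops):
    """Retarget jumps whose destination label sits on another unconditional jump.

    Assumed shape: (label_or_None, opcode, target).
    """
    # Labels attached directly to a jumpI, mapped to where that jump goes.
    forward = {
        label: target
        for label, opcode, target in ops
        if label is not None and opcode == "jumpI"
    }
    out = []
    for label, opcode, target in ops:
        if opcode == "jumpI":
            seen = set()
            while target in forward and target not in seen:
                seen.add(target)         # cycle guard for jumps that loop forever
                target = forward[target]
        out.append((label, opcode, target))
    return out
```

If no other operation still targets `L10` afterwards, the second jump becomes unreachable and a later pass can delete it.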

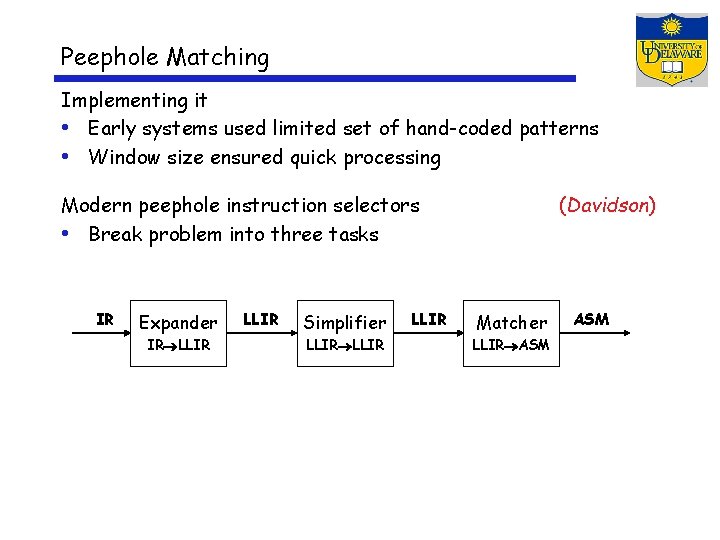

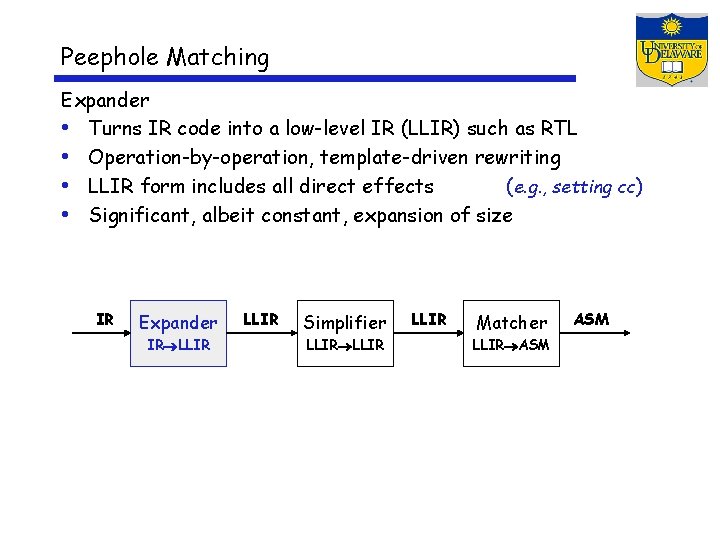

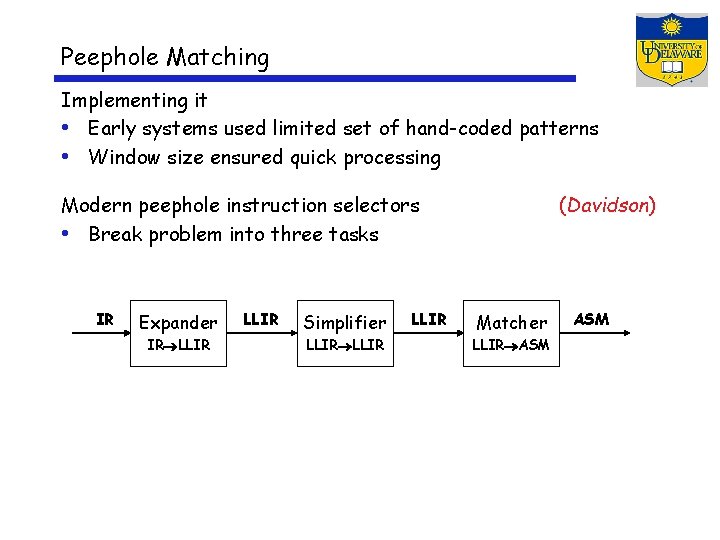

Peephole Matching

Implementing it

- Early systems used limited set of hand-coded patterns
- Window size ensured quick processing

Modern peephole instruction selectors (Davidson)

- Break problem into three tasks

IR → Expander (IR → LLIR) → LLIR → Simplifier (LLIR → LLIR) → LLIR → Matcher (LLIR → ASM) → ASM

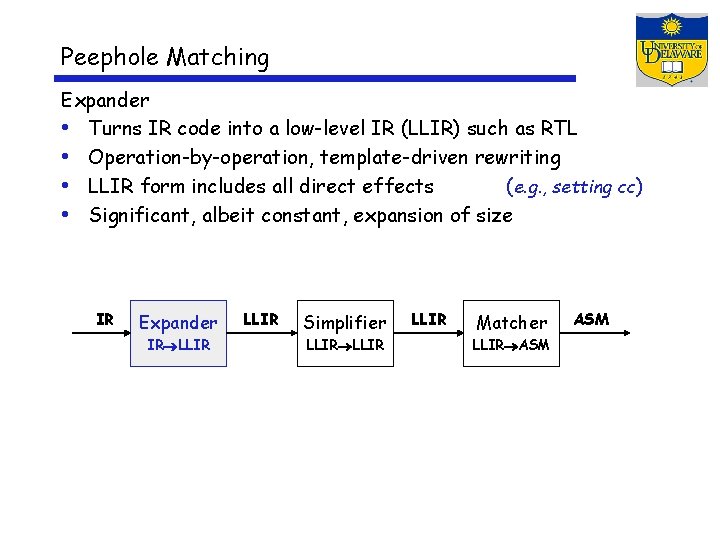

Peephole Matching

Expander

- Turns IR code into a low-level IR (LLIR) such as RTL
- Operation-by-operation, template-driven rewriting
- LLIR form includes all direct effects (e.g., setting cc)
- Significant, albeit constant, expansion of size
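The expander's operation-by-operation rewriting can be sketched as below. This is an illustrative approximation, not the actual expander of any compiler: the quadruple layout, the `variables` set (names assumed to live in memory at `rarp + @name`), and the register numbering from `r10` are assumptions chosen so that the output lines up with the worked example later in these slides.

```python
from itertools import count

SYMBOLS = {"mult": "×", "sub": "-"}      # only the two operators the example needs


def expand(quads, variables):
    """Expand (op, arg1, arg2, result) quadruples into LLIR text lines."""
    regs = count(10)
    temp_reg = {}                        # compiler temporary -> register holding it
    llir = []

    def new_reg():
        return f"r{next(regs)}"

    def emit(dst, rhs):
        llir.append(f"{dst} ← {rhs}")
        return dst

    def address(name):
        offset = emit(new_reg(), f"@{name}")
        return emit(new_reg(), f"rarp + {offset}")

    def value(arg):
        if isinstance(arg, int):
            return emit(new_reg(), str(arg))
        if arg in variables:
            addr = address(arg)          # compute the address before the load
            return emit(new_reg(), f"MEM({addr})")
        return temp_reg[arg]             # temporaries stay in registers

    for op, arg1, arg2, result in quads:
        left = value(arg1)
        right = value(arg2)
        dst = emit(new_reg(), f"{left} {SYMBOLS[op]} {right}")
        if result in variables:
            addr = address(result)
            emit(f"MEM({addr})", dst)    # store back to the variable's home
        else:
            temp_reg[result] = dst
    return llir
```

Two IR operations become twelve LLIR operations in this sketch, which illustrates the "significant, albeit constant, expansion of size".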

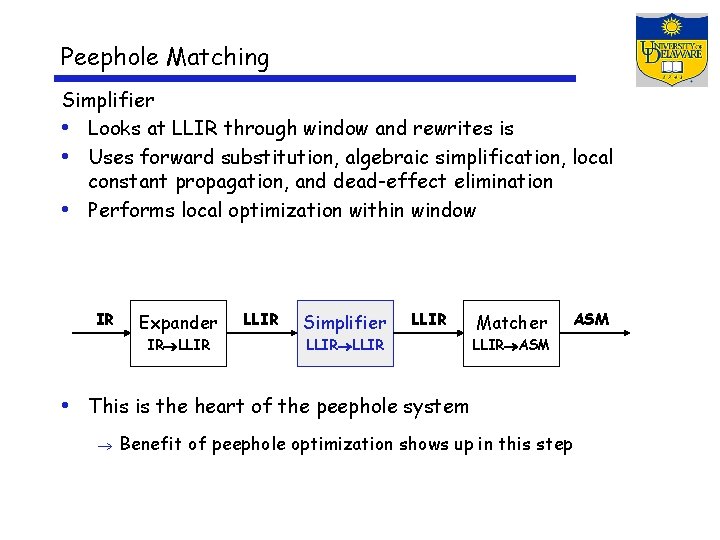

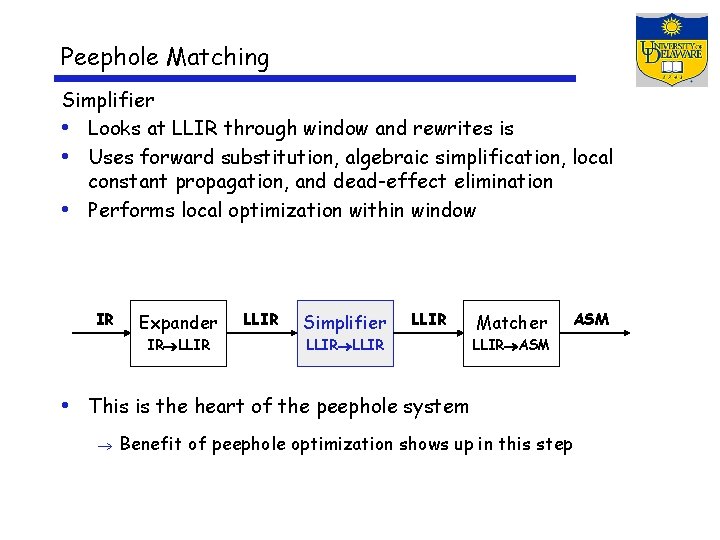

Peephole Matching

Simplifier

- Looks at LLIR through window and rewrites it
- Uses forward substitution, algebraic simplification, local constant propagation, and dead-effect elimination
- Performs local optimization within window
- This is the heart of the peephole system
  - Benefit of peephole optimization shows up in this step
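Forward substitution with dead-effect elimination can be sketched on textual LLIR as follows. Several simplifications are assumed: the whole list is treated as one window, only constants and `rarp`-relative address arithmetic are folded forward (a stand-in for checking that the combined operation fits the target machine), and folded registers are assumed dead at the end of the list. The names `substitute` and `simplify` are invented for the example.

```python
import re

REG = re.compile(r"\br\d+\b")            # virtual registers; "rarp" never matches


def substitute(text, pending):
    """Replace registers in text with the expressions recorded for them."""
    def repl(match):
        reg = match.group()
        expr = pending.get(reg, reg)
        whole = text == reg or text == f"MEM({reg})"
        # Parenthesize compound expressions unless they replace the whole operand.
        return expr if whole or " " not in expr else f"({expr})"
    return REG.sub(repl, text)


def simplify(llir):
    """Fold constants and address arithmetic forward; drop the dead definitions."""
    pending = {}                          # register -> foldable expression
    out = []
    for line in llir:
        dst, rhs = line.split(" ← ")
        rhs = substitute(rhs, pending)
        if dst.startswith("MEM("):
            out.append(f"{substitute(dst, pending)} ← {rhs}")
        elif "MEM(" not in rhs and not REG.search(rhs):
            pending[dst] = rhs            # definition is now dead, so it is not emitted
        else:
            pending.pop(dst, None)        # register redefined with a real operation
            out.append(f"{dst} ← {rhs}")
    return out
```

A production simplifier works through a small sliding window and consults the machine description; this sketch only shows how substitution collapses address computations into the loads and stores that use them.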

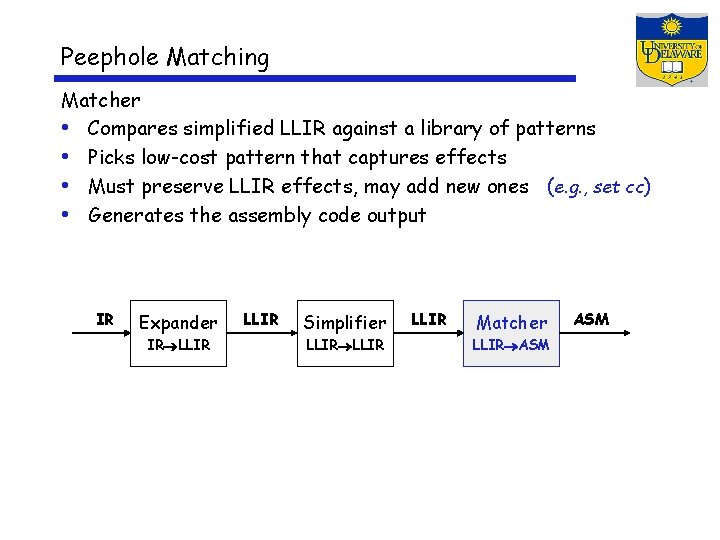

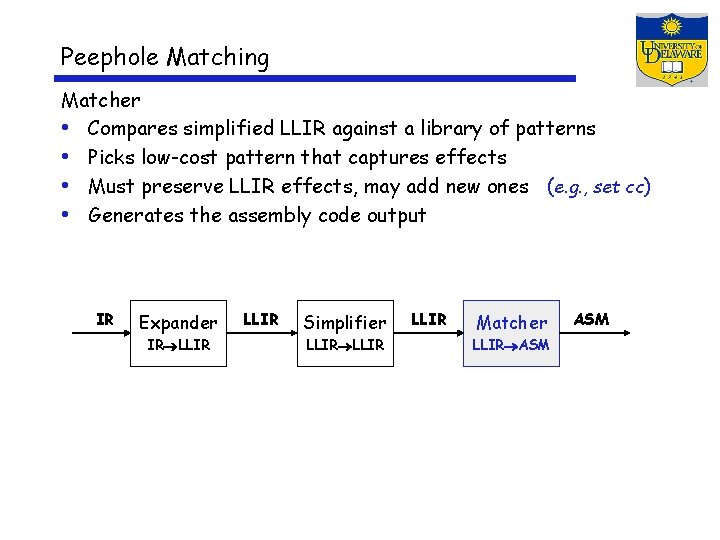

Peephole Matching

Matcher

- Compares simplified LLIR against a library of patterns
- Picks low-cost pattern that captures effects
- Must preserve LLIR effects, may add new ones (e.g., set cc)
- Generates the assembly code output
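A pattern library can be sketched as a list of regular expressions paired with ILOC templates. The `PATTERNS` table below is a hypothetical five-entry library written for this illustration; it takes the first pattern that matches, whereas the matcher described above would compare costs among all matching patterns.

```python
import re

# Hypothetical pattern library: (LLIR pattern, ILOC template).
# Template fields are the regex groups, numbered from 0.
PATTERNS = [
    (re.compile(r"(r\d+) ← MEM\(rarp \+ (@\w+)\)"), "loadAI rarp, {1} ⇒ {0}"),
    (re.compile(r"MEM\(rarp \+ (@\w+)\) ← (r\d+)"), "storeAI {1} ⇒ rarp, {0}"),
    (re.compile(r"(r\d+) ← (\d+) × (r\d+)"), "multI {2}, {1} ⇒ {0}"),
    (re.compile(r"(r\d+) ← (r\d+) × (r\d+)"), "mult {1}, {2} ⇒ {0}"),
    (re.compile(r"(r\d+) ← (r\d+) - (r\d+)"), "sub {1}, {2} ⇒ {0}"),
]


def match(llir):
    """Translate each simplified LLIR line into one ILOC operation."""
    asm = []
    for line in llir:
        for pattern, template in PATTERNS:
            found = pattern.fullmatch(line)
            if found:
                asm.append(template.format(*found.groups()))
                break
        else:
            # No pattern covers this effect, so the library is incomplete.
            raise ValueError(f"no pattern covers: {line}")
    return asm
```

Retargeting in this style means replacing the pattern table while the matching loop stays the same.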

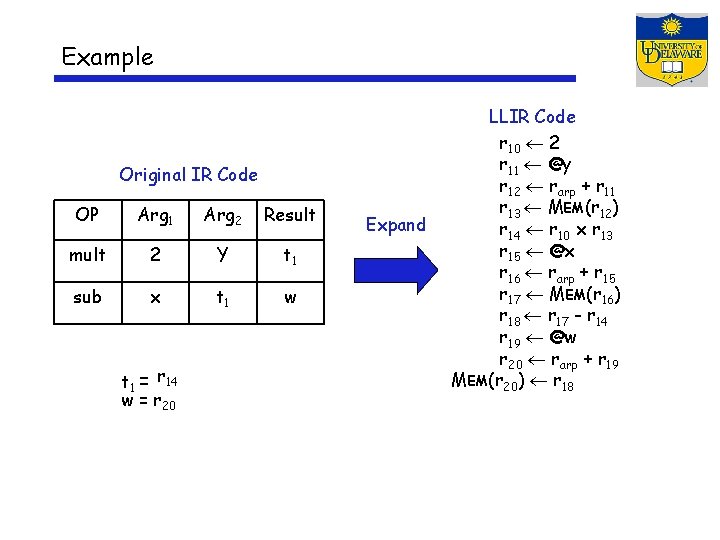

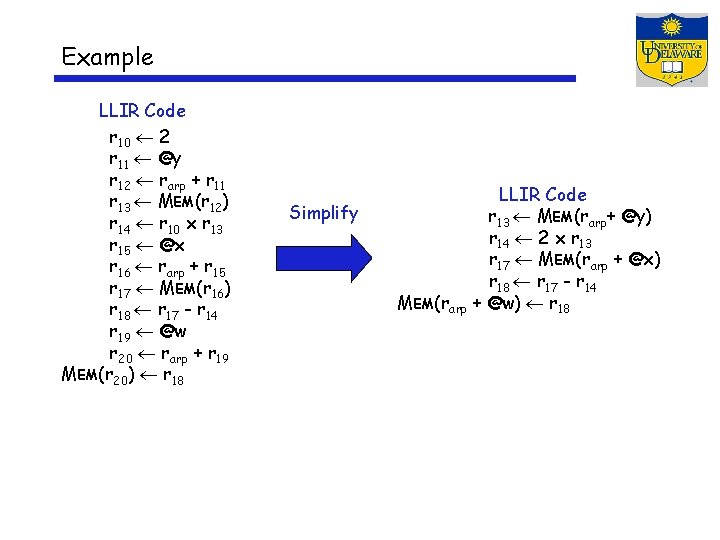

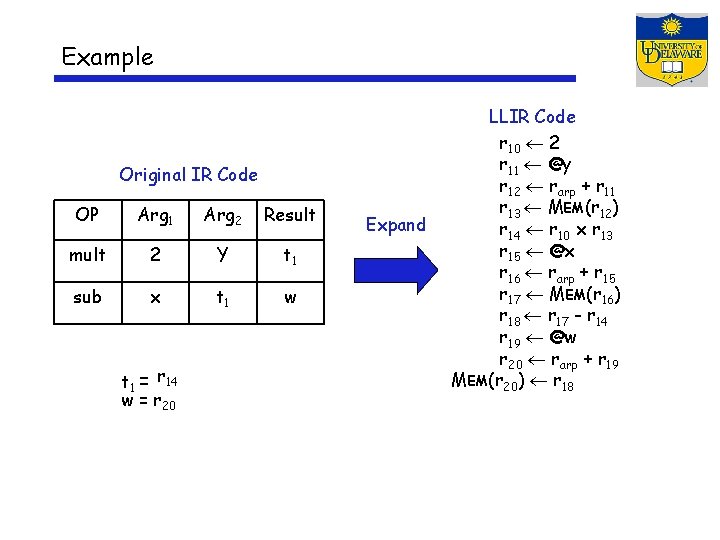

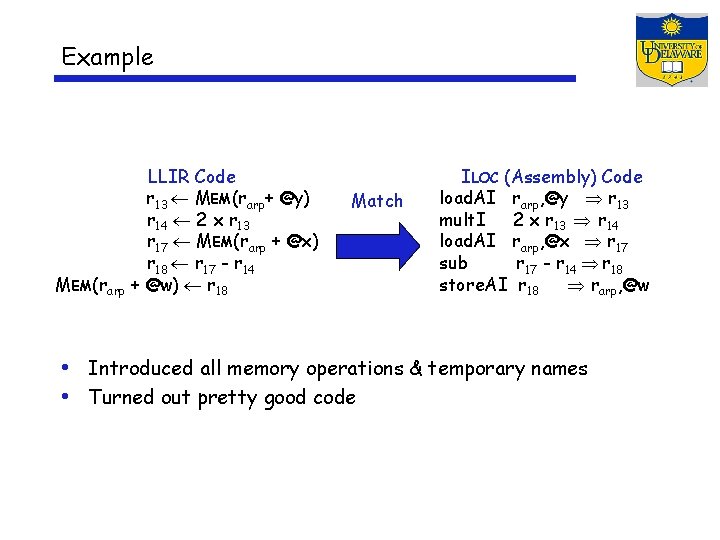

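Example

Original IR Code

| OP | Arg 1 | Arg 2 | Result |
| --- | --- | --- | --- |
| mult | 2 | y | t1 |
| sub | x | t1 | w |

Expand (t1 = r14, w = r20)

LLIR Code

| | |
| --- | --- |
| `r10 ← 2` | `r16 ← rarp + r15` |
| `r11 ← @y` | `r17 ← MEM(r16)` |
| `r12 ← rarp + r11` | `r18 ← r17 - r14` |
| `r13 ← MEM(r12)` | `r19 ← @w` |
| `r14 ← r10 × r13` | `r20 ← rarp + r19` |
| `r15 ← @x` | `MEM(r20) ← r18` |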

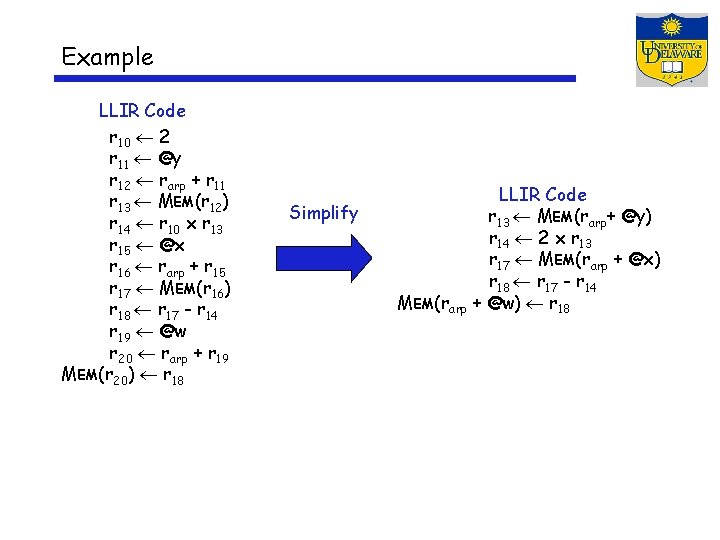

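Example

Simplify

| LLIR Code (before) | LLIR Code (after) |
| --- | --- |
| `r10 ← 2` | `r13 ← MEM(rarp + @y)` |
| `r11 ← @y` | `r14 ← 2 × r13` |
| `r12 ← rarp + r11` | `r17 ← MEM(rarp + @x)` |
| `r13 ← MEM(r12)` | `r18 ← r17 - r14` |
| `r14 ← r10 × r13` | `MEM(rarp + @w) ← r18` |
| `r15 ← @x` | |
| `r16 ← rarp + r15` | |
| `r17 ← MEM(r16)` | |
| `r18 ← r17 - r14` | |
| `r19 ← @w` | |
| `r20 ← rarp + r19` | |
| `MEM(r20) ← r18` | |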

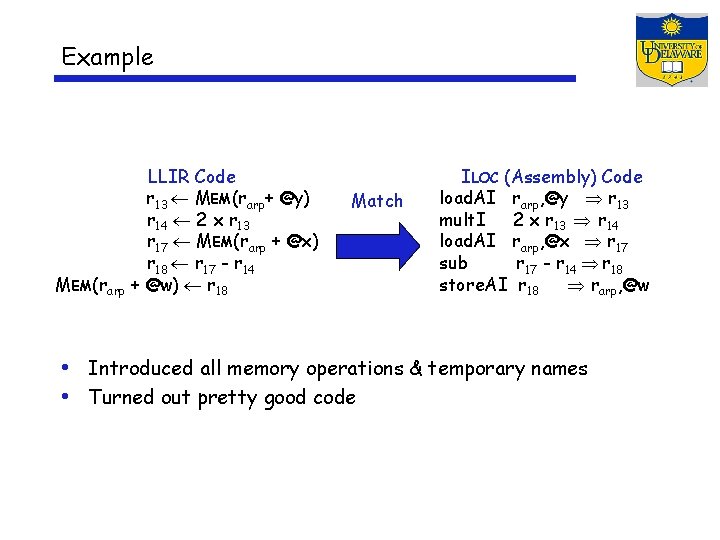

Example

Match

| LLIR Code | ILOC (Assembly) Code |
| --- | --- |
| `r13 ← MEM(rarp + @y)` | `loadAI rarp, @y ⇒ r13` |
| `r14 ← 2 × r13` | `multI r13, 2 ⇒ r14` |
| `r17 ← MEM(rarp + @x)` | `loadAI rarp, @x ⇒ r17` |
| `r18 ← r17 - r14` | `sub r17, r14 ⇒ r18` |
| `MEM(rarp + @w) ← r18` | `storeAI r18 ⇒ rarp, @w` |

- Introduced all memory operations & temporary names
- Turned out pretty good code

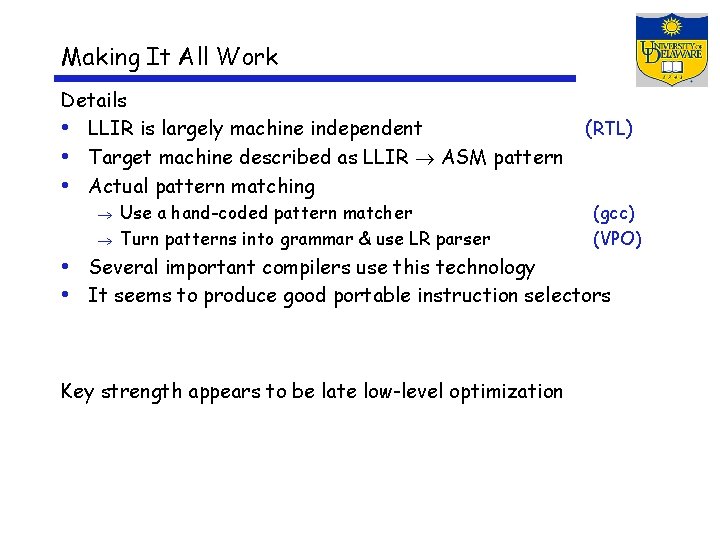

Making It All Work

Details

- LLIR is largely machine independent (RTL)
- Target machine described as LLIR → ASM pattern
- Actual pattern matching
  - Use a hand-coded pattern matcher (gcc)
  - Turn patterns into grammar & use LR parser (VPO)
- Several important compilers use this technology
- It seems to produce good portable instruction selectors

Key strength appears to be late low-level optimization