Instance Based Learning CS 478 Instance Based Learning


Instance Based Learning

- Classify based on local similarity
- Ranges from simple nearest neighbor to case-based analogical reasoning
- Use local information near the current query instance to decide the classification of that instance
- As such, can represent quite complex decision surfaces in a simple manner

k-Nearest Neighbor Approach

- Simply store all (or some representative subset) of the examples in the training set
- To generalize on a new instance, measure the distance from the new instance to one or more stored instances, which then vote to decide the class of the new instance
- No need to pre-process a specific hypothesis (lazy vs. eager learning)
  - Fast learning
  - Can be slow during execution and require significant storage
  - Some models index the data or reduce the instances stored

k-Nearest Neighbor (cont.)

- Naturally supports real-valued attributes
- Typically uses Euclidean distance
- Nominal/unknown attributes can just use a 1/0 distance (more on other distance metrics later)
- The output class for the query instance $x_q$ is set to the most common class among its k nearest neighbors $x_1, \dots, x_k$:

  $$\hat{f}(x_q) = \arg\max_{v \in V} \sum_{i=1}^{k} \delta(v, f(x_i))$$

  where $V$ is the set of classes, $f(x_i)$ is the class of neighbor $x_i$, and $\delta(x, y) = 1$ if $x = y$, else 0
- k greater than 1 is more noise resistant, but a very large k reduces accuracy because less relevant neighbors gain influence (common values: k=3, k=5)
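
The voting rule on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the course's reference implementation: the function names (`euclidean`, `knn_classify`) and the toy data are assumptions made up for the example, and ties are broken in favor of the class seen first among the nearest neighbors.

```python
import math
from collections import Counter

def euclidean(a, b):
    """Euclidean distance between two equal-length numeric vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def knn_classify(train, query, k=3):
    """Return the most common class among the k stored instances nearest to query.

    train is a list of (features, label) pairs.
    """
    # Lazy learning: all the work happens at query time.
    neighbors = sorted(train, key=lambda inst: euclidean(inst[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical toy data: two well-separated clusters in 2-D.
train = [((1.0, 1.0), "A"), ((1.2, 0.8), "A"), ((0.9, 1.1), "A"),
         ((5.0, 5.0), "B"), ((5.2, 4.9), "B"), ((4.8, 5.1), "B")]
print(knn_classify(train, (1.1, 1.0), k=3))
```

Sorting every stored instance per query is the simple but slow approach; indexing the data or reducing the stored set addresses that cost.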

k-Nearest Neighbor (cont.)

- Can also do distance-weighted voting, where the strength of a neighbor's influence decreases with its distance from the query
- Inverse of distance squared is a common weight
- Gaussian is another common distance weight
- In this case k can be larger (even all points if desired), because the more distant points have negligible influence
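
As a rough sketch of distance-weighted voting, the example below uses the inverse-squared-distance weight and lets every stored instance vote by default. The name `weighted_vote`, the small `eps` guard against division by zero, and the data are assumptions for illustration only.

```python
import math
from collections import defaultdict

def weighted_vote(train, query, k=None, eps=1e-12):
    """Classify query by inverse-squared-distance weighted voting.

    train is a list of (features, label) pairs; k=None lets all instances vote.
    """
    def dist(a, b):
        return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

    ranked = sorted(train, key=lambda inst: dist(inst[0], query))
    if k is not None:
        ranked = ranked[:k]
    scores = defaultdict(float)
    for features, label in ranked:
        d = dist(features, query)
        # eps keeps the weight finite when the query matches a stored instance exactly.
        scores[label] += 1.0 / (d * d + eps)
    return max(scores, key=scores.get)

# Hypothetical 1-D data: one close "A" instance, two more distant "B" instances.
train = [((0.0,), "A"), ((4.0,), "B"), ((5.0,), "B")]
print(weighted_vote(train, (1.0,)))
```

With an unweighted vote over all three instances the two "B" points would win; with the weights, the single nearby "A" point outweighs them.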

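Regression with k-nn

- Can also do regression by letting the output be the weighted mean of the k nearest neighbors
- For distance-weighted regression:

  $$\hat{f}(x_q) = \frac{\sum_{i=1}^{k} w_i f(x_i)}{\sum_{i=1}^{k} w_i}$$

  where $f(x)$ is the output value for instance $x$ and $w_i$ is the distance weight of neighbor $x_i$, for example $w_i = 1 / d(x_q, x_i)^2$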
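
A minimal sketch of distance-weighted k-nn regression follows, assuming inverse-squared-distance weights. The function names, the `eps` guard, and the toy data are hypothetical and chosen only to keep the example self-contained.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length numeric vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def knn_regress(train, query, k=3, eps=1e-12):
    """Weighted mean of the outputs of the k nearest stored instances.

    train is a list of (features, output) pairs; weights are 1 / distance^2.
    """
    ranked = sorted(train, key=lambda inst: sq_dist(inst[0], query))[:k]
    # eps keeps the weight finite when the query coincides with a stored instance.
    weights = [1.0 / (sq_dist(x, query) + eps) for x, _ in ranked]
    return sum(w * y for w, (_, y) in zip(weights, ranked)) / sum(weights)

# Hypothetical 1-D data sampled from y = x.
train = [((0.0,), 0.0), ((2.0,), 2.0), ((10.0,), 10.0)]
print(knn_regress(train, (1.0,), k=2))
```

When the query coincides with a stored instance, that instance's weight dominates and the prediction is essentially its stored output.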

Attribute Weighting

- One of the main weaknesses of nearest neighbor is irrelevant features, since they can dominate the distance
- Can create algorithms which weight the attributes (note that backprop, ID3, etc. do higher-order weighting of features)
- No longer lazy evaluation, since part of the hypothesis (the attribute weights) must be determined before generalizing
- Still an open area of research
  - Should weighting be local or global?
  - What is the best method, etc.?
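
To make the irrelevant-feature problem concrete, here is a small sketch using a weighted Euclidean distance. The weights are hand-picked for illustration (the slide leaves open how they should be learned), and the function names and data are assumptions.

```python
import math

def weighted_distance(a, b, weights):
    """Euclidean distance with a non-negative weight per attribute."""
    return math.sqrt(sum(w * (x - y) ** 2 for w, x, y in zip(weights, a, b)))

def nearest_label(train, query, weights):
    """Label of the single nearest stored instance under the weighted distance."""
    return min(train, key=lambda inst: weighted_distance(inst[0], query, weights))[1]

# Hypothetical data: attribute 0 is relevant, attribute 1 is large-scale noise.
train = [((0.1, 90.0), "A"), ((0.9, 10.0), "B")]
query = (0.2, 12.0)

print(nearest_label(train, query, (1.0, 1.0)))  # noisy attribute dominates the distance
print(nearest_label(train, query, (1.0, 0.0)))  # noisy attribute weighted out
```

With equal weights the noisy second attribute decides the outcome; setting its weight to zero lets the relevant attribute decide instead.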

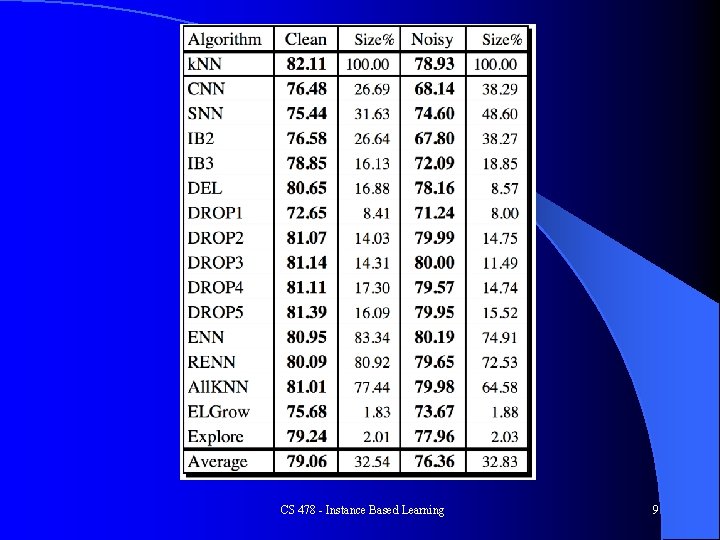

Reduction Techniques

- Wilson, D. R. and Martinez, T. R., Reduction Techniques for Exemplar-Based Learning Algorithms, Machine Learning Journal, vol. 38, no. 3, pp. 257-286, 2000.
- Create a subset or other representative set of prototype nodes
- Approaches
  - Leave-one-out reduction: drop an instance if it would still be classified correctly
  - Growth algorithm: only add an instance if it is not already classified correctly (both are order dependent)
  - More global optimizing approaches
  - Just keep central points
  - Just keep border points (pre-process noisy instances)
  - DROP5 (Wilson & Martinez) maintains almost full accuracy with approximately 15% of the original instances
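
The growth algorithm can be sketched as a single pass over the training set using 1-nearest-neighbor classification. This is a simplified illustration under that assumption, not the DROP algorithms from the cited paper; `grow_subset` and the data are hypothetical.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length numeric vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest_label(stored, query):
    """Label of the stored instance closest to query (1-nearest neighbor)."""
    return min(stored, key=lambda inst: sq_dist(inst[0], query))[1]

def grow_subset(train):
    """Keep an instance only if the instances kept so far misclassify it."""
    stored = []
    for features, label in train:
        if not stored or nearest_label(stored, features) != label:
            stored.append((features, label))
    return stored

# Hypothetical 1-D data with two separated classes.
train = [((0.0,), "A"), ((0.5,), "A"), ((1.0,), "A"),
         ((4.0,), "B"), ((4.5,), "B"), ((5.0,), "B")]
subset = grow_subset(train)
print(subset)
```

The result depends on presentation order: a different ordering of `train` can keep a different subset.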


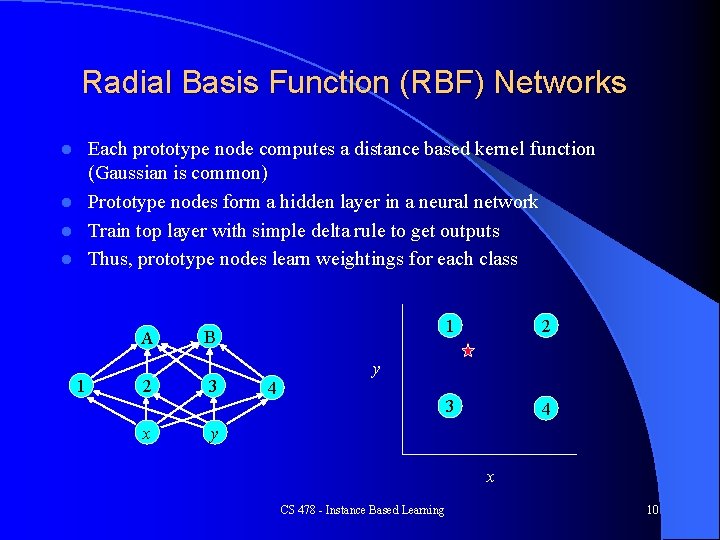

Radial Basis Function (RBF) Networks

- Each prototype node computes a distance-based kernel function (Gaussian is common)
- Prototype nodes form a hidden layer in a neural network
- Train the top layer with the simple delta rule to get outputs
- Thus, prototype nodes learn weightings for each class

[Figure: inputs x and y, prototype nodes 1-4, output classes A and B]
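
The following is a minimal sketch of such a network: Gaussian prototype nodes as the hidden layer and one linear output node per class trained with the delta rule. The function names, learning rate, epoch count, deviation, and the XOR-style data (four prototypes, classes A and B) are all assumptions chosen for illustration.

```python
import math

def gaussian(x, center, sigma):
    """Gaussian kernel of the distance between x and a prototype center."""
    sq = sum((a - b) ** 2 for a, b in zip(x, center))
    return math.exp(-sq / (2.0 * sigma ** 2))

def hidden(x, centers, sigma):
    """Activations of all prototype (hidden) nodes for input x."""
    return [gaussian(x, c, sigma) for c in centers]

def train_rbf(data, centers, classes, sigma=0.5, lr=0.5, epochs=200):
    """Train one linear output node per class with the delta rule."""
    weights = {c: [0.0] * len(centers) for c in classes}
    for _ in range(epochs):
        for x, label in data:
            h = hidden(x, centers, sigma)
            for c in classes:
                target = 1.0 if c == label else 0.0
                out = sum(w * a for w, a in zip(weights[c], h))
                for j, a in enumerate(h):
                    weights[c][j] += lr * (target - out) * a  # delta rule update
    return weights

def predict(x, centers, weights, sigma=0.5):
    """Class whose output node has the highest activation."""
    h = hidden(x, centers, sigma)
    return max(weights, key=lambda c: sum(w * a for w, a in zip(weights[c], h)))

# Hypothetical XOR-style data; one prototype node per training instance.
data = [((0.0, 0.0), "A"), ((1.0, 1.0), "A"), ((0.0, 1.0), "B"), ((1.0, 0.0), "B")]
centers = [x for x, _ in data]
weights = train_rbf(data, centers, ["A", "B"])
print([predict(x, centers, weights) for x, _ in data])
```

Only the output weights are trained here; the prototype centers and deviation stay fixed, which is what keeps the training rule this simple.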

Radial Basis Function Networks

- Number of nodes and placement (means)
- Sphere of influence (deviation)
  - Too small: no generalization; should have some overlap
  - Too large: saturation, lose local effects, long training
- Output layer weights: linear or non-linear nodes
  - Delta rule variations
  - Direct matrix weight calculation
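
The effect of the deviation can be seen by evaluating a Gaussian node at a few distances from its center. This is only an illustrative sketch; the `activation` helper and the particular sigma values are assumptions.

```python
import math

def activation(distance, sigma):
    """Gaussian node output at a given distance from the node's center."""
    return math.exp(-distance ** 2 / (2.0 * sigma ** 2))

# Compare a very small, a moderate, and a very large sphere of influence.
for sigma in (0.1, 1.0, 10.0):
    row = [round(activation(d, sigma), 3) for d in (0.0, 0.5, 1.0, 2.0)]
    print(f"sigma={sigma}: {row}")
```

A very small sigma makes the node respond only at its own center (no generalization), while a very large sigma makes it respond almost equally everywhere (saturation, loss of local effects).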

Node Placement

- One for each instance of the training set
- Random subset of instances
- Clustering: unsupervised or supervised, k-means style vs. constructive
- Genetic algorithms
- Random coverage: curse of dimensionality
- Node adjustment: competitive learning style
- Dynamic addition and deletion of nodes
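
As one example of the clustering option, the sketch below places nodes with a plain unsupervised k-means loop. Seeding the centers with the first k points is an assumption made to keep the example deterministic; the function name and data are likewise hypothetical.

```python
def kmeans(points, k, iters=20):
    """Return k cluster centers to use as prototype node positions."""
    centers = [list(p) for p in points[:k]]  # assumption: seed with the first k points
    for _ in range(iters):
        groups = [[] for _ in range(k)]
        for p in points:
            # Assign each point to its nearest current center.
            j = min(range(k),
                    key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centers[i])))
            groups[j].append(p)
        for i, g in enumerate(groups):
            if g:  # leave a center unchanged if no points were assigned to it
                centers[i] = [sum(col) / len(g) for col in zip(*g)]
    return centers

# Hypothetical 2-D data with two obvious clusters.
points = [(0.0, 0.0), (5.0, 5.0), (0.2, 0.1), (5.1, 4.9), (0.1, 0.3), (4.9, 5.2)]
centers = kmeans(points, k=2)
print(centers)
```

The resulting centers could serve as the means of Gaussian prototype nodes, with the deviation chosen separately.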

RBF vs. BP

- Line vs. sphere: mix-and-match approaches
- Potentially faster training: nearest neighbor localization
- Yet more data and hidden nodes typically needed
- Local vs. global, less extrapolation (à la BP), have reject capability (avoid false positives)
- RBF can have problems with irrelevant features, just like nearest neighbor

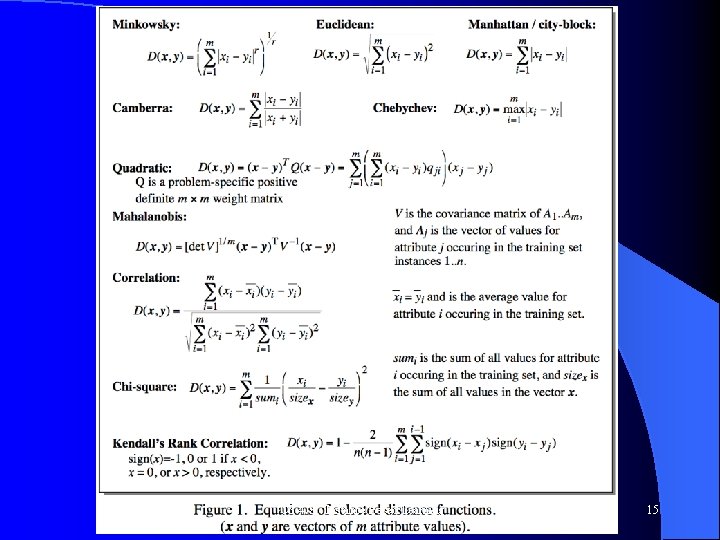

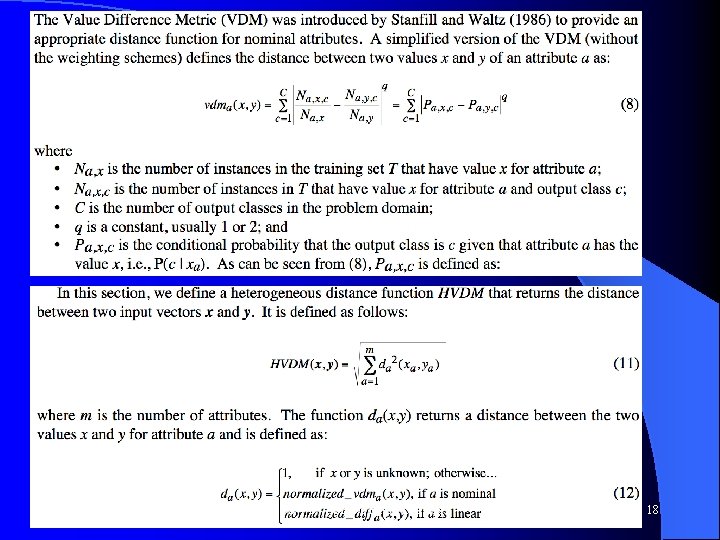

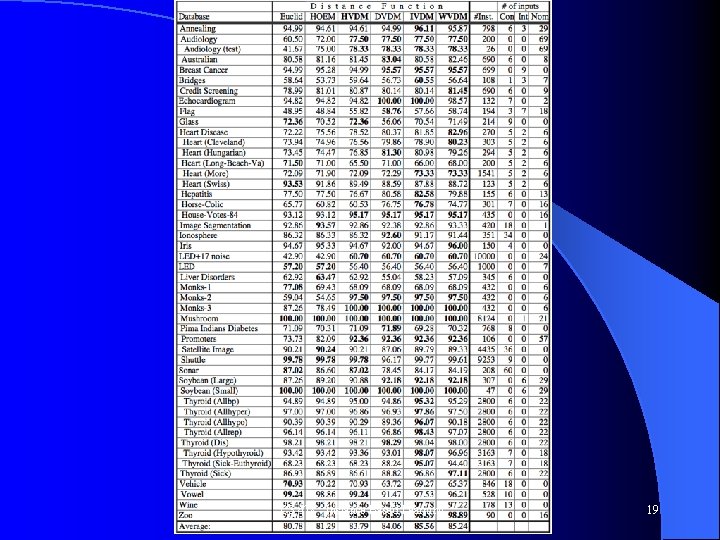

Distance Metrics

- Wilson, D. R. and Martinez, T. R., Improved Heterogeneous Distance Functions, Journal of Artificial Intelligence Research, vol. 6, no. 1, pp. 1-34, 1997.
- Normalization of features
- Main question: how best to handle nominal inputs
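
One simple way to combine normalization with nominal inputs is a heterogeneous Euclidean-overlap style distance: numeric attributes use a range-normalized difference, nominal attributes use a 0/1 overlap, and unknown values count as maximally distant. The sketch below follows that idea; the function name, the use of `None` for unknown values, and the `ranges` encoding are assumptions for illustration.

```python
import math

def heom(a, b, ranges):
    """Heterogeneous distance over mixed numeric and nominal attributes.

    ranges[i] is (max - min) for a numeric attribute, or None for a nominal one.
    A value of None in a or b marks an unknown attribute value.
    """
    total = 0.0
    for x, y, r in zip(a, b, ranges):
        if x is None or y is None:
            d = 1.0                              # unknown: maximal distance
        elif r is None:
            d = 0.0 if x == y else 1.0           # nominal: overlap (1/0) distance
        else:
            d = abs(x - y) / r if r > 0 else 0.0  # numeric: range-normalized difference
        total += d * d
    return math.sqrt(total)

# Hypothetical instances: (age, color), with age spanning a range of 50.
ranges = (50.0, None)
print(heom((30, "red"), (40, "red"), ranges))
print(heom((30, "red"), (30, "blue"), ranges))
print(heom((None, "red"), (30, "red"), ranges))
```

Dividing by the range keeps each numeric attribute's contribution between 0 and 1, so it is on the same scale as the nominal overlap term.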


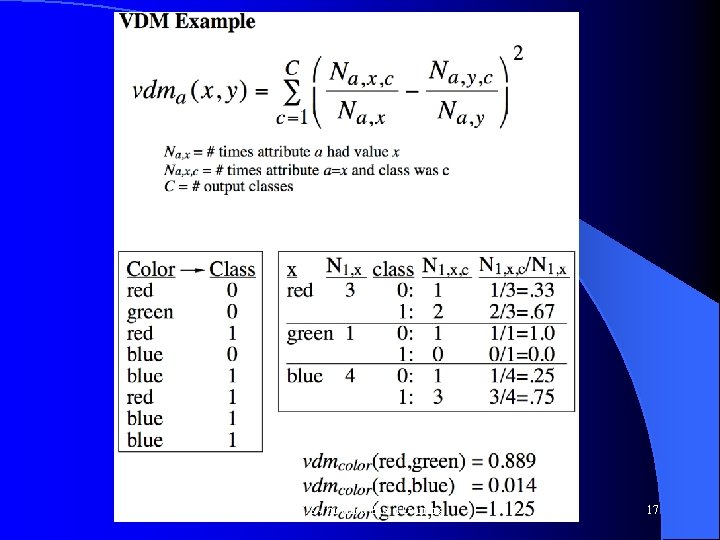


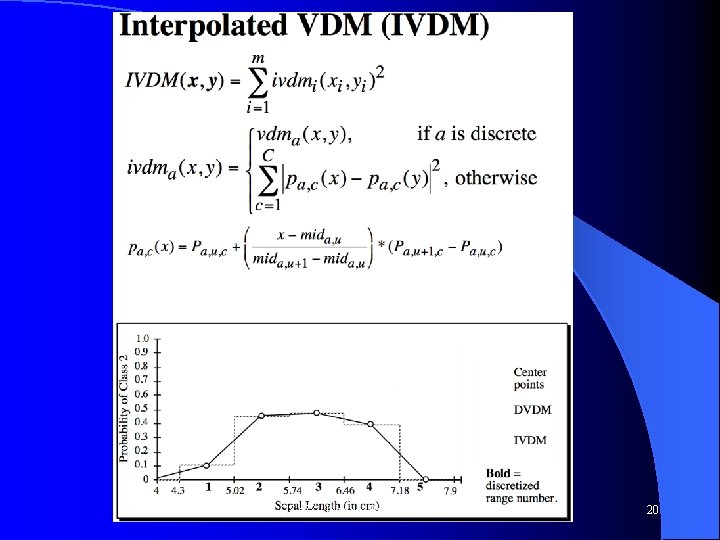


Instance Based Learning Assignment

- See http://axon.cs.byu.edu/~martinez/classes/478/Assignments.html
