inst.eecs.berkeley.edu/~cs61c: UCB CS61C

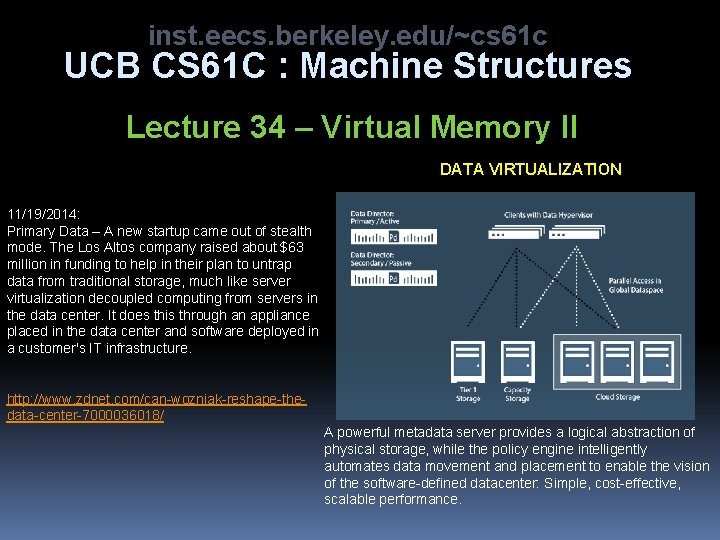

inst.eecs.berkeley.edu/~cs61c
UCB CS61C: Machine Structures
Lecture 34: Virtual Memory II

DATA VIRTUALIZATION (11/19/2014): Primary Data, a new startup, came out of stealth mode. The Los Altos company raised about $63 million in funding for its plan to "untrap" data from traditional storage, much as server virtualization decoupled computing from servers in the data center. It does this through an appliance placed in the data center and software deployed in a customer's IT infrastructure. A powerful metadata server provides a logical abstraction of physical storage, while the policy engine intelligently automates data movement and placement to enable the vision of the software-defined datacenter: simple, cost-effective, scalable performance.
http://www.zdnet.com/can-wozniak-reshape-thedata-center-7000036018/

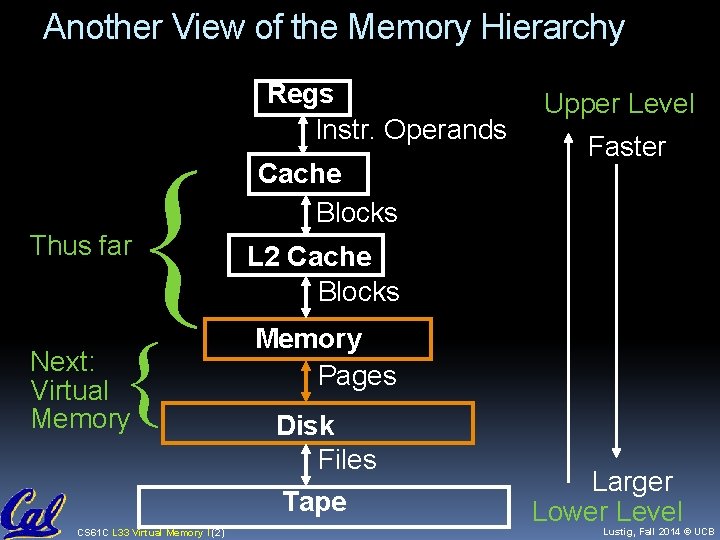

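Another View of the Memory Hierarchy
From the upper level (faster) to the lower level (larger), with the unit moved between each pair of levels:
- Registers ↔ Cache: instruction operands
- Cache ↔ L2 Cache: blocks
- L2 Cache ↔ Memory: blocks
- Memory ↔ Disk: pages
- Disk ↔ Tape: files

Thus far: registers through memory (caches). Next: virtual memory (memory and disk).
CS61C L33 Virtual Memory I (2), Lustig, Fall 2014 © UCB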

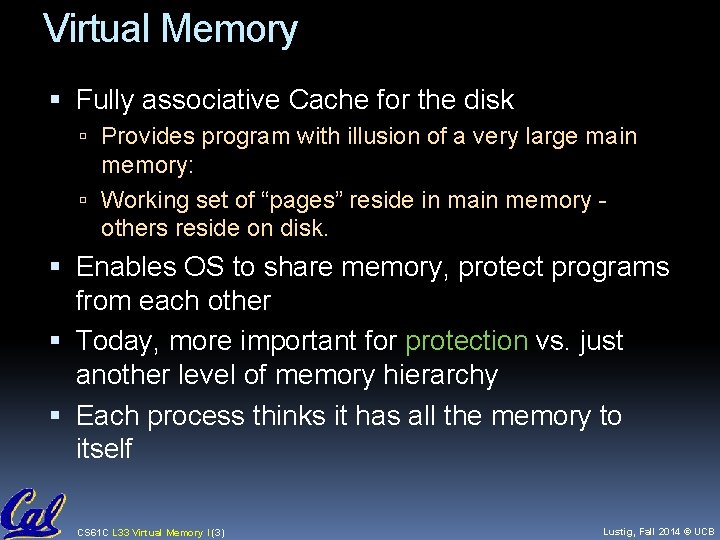

Virtual Memory
- Acts as a fully associative cache for the disk.
- Provides each program with the illusion of a very large main memory: the working set of "pages" resides in main memory, and the rest reside on disk.
- Enables the OS to share memory among programs and to protect them from each other.
- Today it matters more for protection than as just another level of the memory hierarchy.
- Each process thinks it has all the memory to itself.

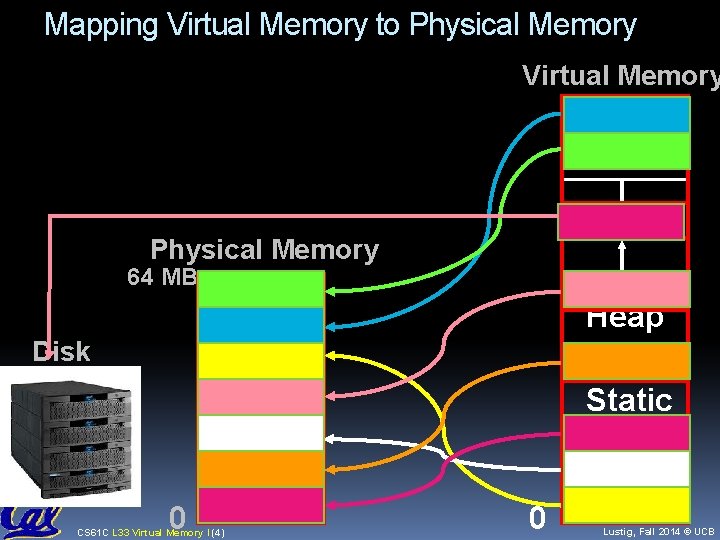

Mapping Virtual Memory to Physical Memory
(Figure: a process's virtual memory, with code starting at address 0 followed by static data, heap, and stack, is mapped page by page onto a 64 MB physical memory, with some pages kept on disk.)

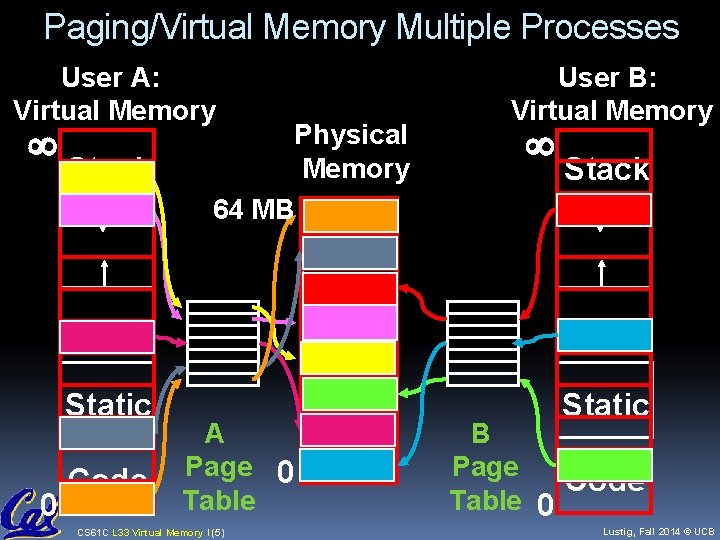

Paging/Virtual Memory: Multiple Processes
(Figure: User A and User B each have their own virtual memory, running from 0 to ∞ with code, static data, heap, and stack; each user's page table (A and B) maps its pages into a single shared 64 MB physical memory.)

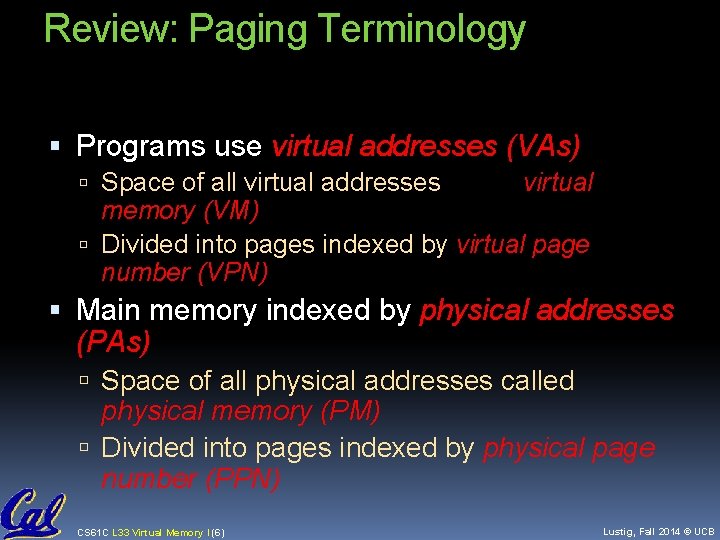

Review: Paging Terminology
- Programs use virtual addresses (VAs).
  - The space of all virtual addresses is called virtual memory (VM).
  - It is divided into pages indexed by virtual page number (VPN).
- Main memory is indexed by physical addresses (PAs).
  - The space of all physical addresses is called physical memory (PM).
  - It is divided into pages indexed by physical page number (PPN).

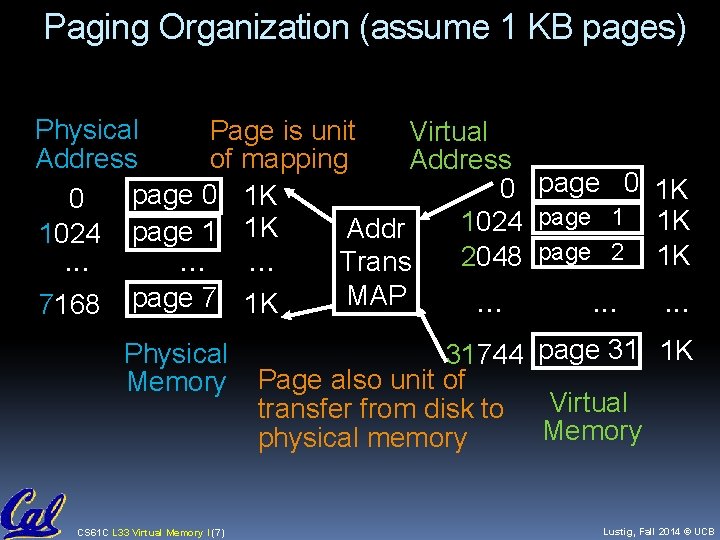

Paging Organization (assume 1 KB pages)
(Figure: virtual memory holds pages 0 through 31, starting at virtual addresses 0, 1024, 2048, and so on up to 31744; an address translation map places them into physical memory pages 0 through 7, starting at physical addresses 0, 1024, and so on up to 7168. Every page is 1 KB.)
- The page is the unit of mapping.
- The page is also the unit of transfer from disk to physical memory.
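The address arithmetic behind the figure can be checked with a short Python sketch, assuming 1 KB pages as the slide does; `split_address` and `page_base` are illustrative names, not part of any real API. Because the page size is a power of two, the page number is just the high bits of the address and the offset is the low 10 bits.

```python
# Paging arithmetic for the slide's example: 1 KB (1024-byte) pages.
PAGE_SIZE = 1024
OFFSET_BITS = PAGE_SIZE.bit_length() - 1   # log2(1024) = 10


def split_address(addr):
    """Return (page number, offset within page) for an address."""
    page_number = addr >> OFFSET_BITS      # same as addr // PAGE_SIZE
    offset = addr & (PAGE_SIZE - 1)        # same as addr % PAGE_SIZE
    return page_number, offset


def page_base(page_number):
    """Return the first address of a page."""
    return page_number << OFFSET_BITS


for addr in (1024, 7168, 31744, 31749):
    print(addr, "->", split_address(addr))
```

Address 7168 is the start of page 7 and 31744 the start of page 31, matching the figure; 31749 lands 5 bytes into page 31.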

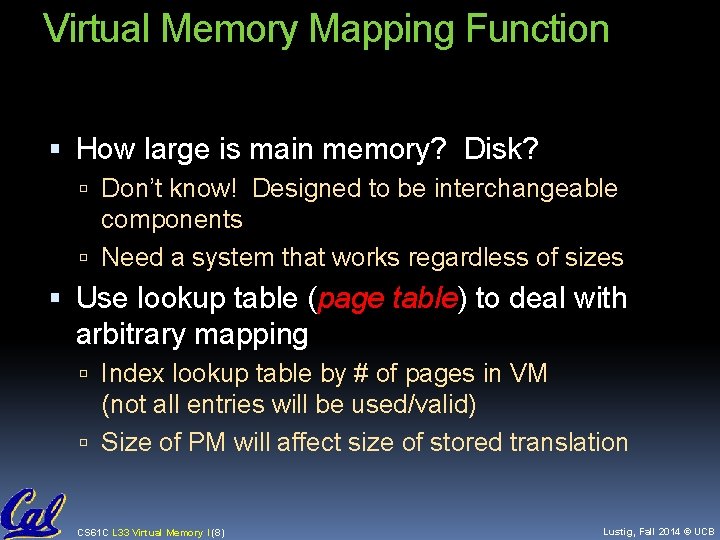

Virtual Memory Mapping Function
- How large is main memory? The disk? We don't know! They are designed to be interchangeable components.
- We need a system that works regardless of their sizes.
- Use a lookup table (the page table) to handle an arbitrary mapping.
  - Index the lookup table by VPN, with one entry per page of VM (not all entries will be used/valid).
  - The size of PM affects the size of each stored translation (the width of the PPN).
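To see why "not all entries will be used" matters, consider a hypothetical single-level page table with parameters that are not from the slide: 32-bit virtual addresses, 4 KiB pages, and 4-byte page table entries. Since the table has one entry per virtual page, its size is fixed by the VM size rather than by how much memory the process actually touches:

```latex
\text{entries} = \frac{2^{32}\ \text{B}}{2^{12}\ \text{B/page}} = 2^{20},
\qquad
\text{table size} = 2^{20} \times 4\ \text{B} = 2^{22}\ \text{B} = 4\ \text{MiB per process}
```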

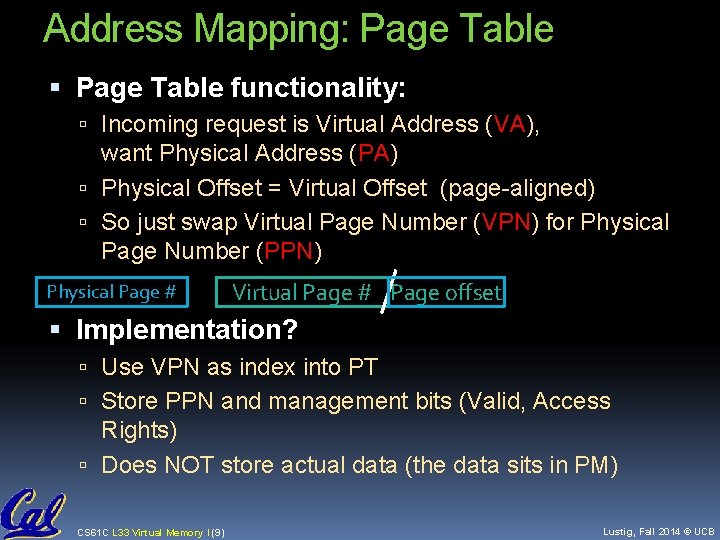

Address Mapping: Page Table
- Functionality: the incoming request is a virtual address (VA); we want the physical address (PA).
- Physical offset = virtual offset (pages are aligned), so we just swap the virtual page number (VPN) for the physical page number (PPN):
  VA = [Virtual Page # | Page offset] maps to PA = [Physical Page # | Page offset]
- Implementation? Use the VPN as an index into the page table (PT).
  - Each entry stores the PPN and management bits (Valid, Access Rights).
  - It does NOT store the actual data (the data sits in PM).

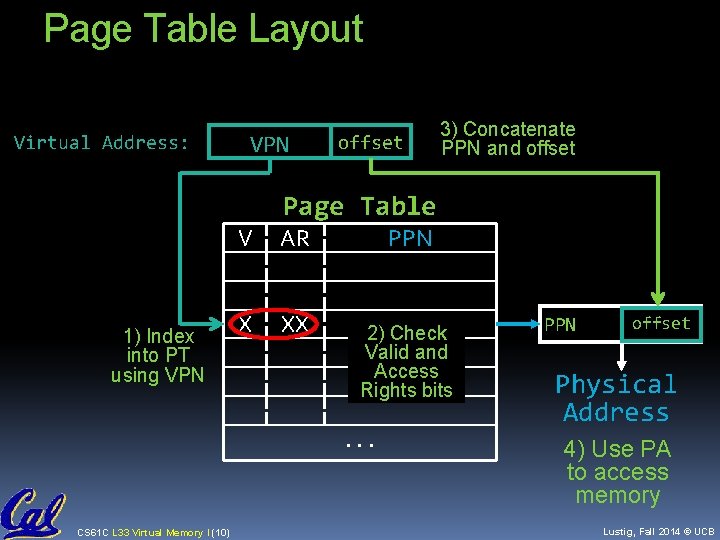

Page Table Layout
Virtual Address: [VPN | offset]
1) Index into the page table using the VPN. Each entry holds the V (valid), AR (access rights), and PPN fields.
2) Check the Valid and Access Rights bits.
3) Concatenate the PPN and the offset to form the Physical Address: [PPN | offset].
4) Use the PA to access memory.
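The four steps above can be sketched in Python for a single-level page table. This is a minimal sketch, not a real OS interface: `PageTableEntry`, `translate`, `PageFault`, and `ProtectionFault` are hypothetical names, pages are assumed to be 1 KB, and "Access Rights" is modeled as two bits (read, write).

```python
from dataclasses import dataclass

OFFSET_BITS = 10                      # assumed 1 KB pages, as in the earlier figure
OFFSET_MASK = (1 << OFFSET_BITS) - 1


@dataclass
class PageTableEntry:                 # hypothetical PTE: management bits + PPN
    valid: bool = False
    readable: bool = False            # "Access Rights" modeled as two bits
    writable: bool = False
    ppn: int = 0


class PageFault(Exception):
    """Raised when the PTE is not valid (page not in physical memory)."""


class ProtectionFault(Exception):
    """Raised when the access rights forbid the requested access."""


def translate(page_table, va, write=False):
    vpn = va >> OFFSET_BITS
    offset = va & OFFSET_MASK
    pte = page_table[vpn]                       # 1) index into PT using VPN
    if not pte.valid:                           # 2) check Valid bit...
        raise PageFault(f"VPN {vpn} is not resident")
    allowed = pte.writable if write else pte.readable
    if not allowed:                             # ...and Access Rights bits
        raise ProtectionFault(f"access to VPN {vpn} denied")
    return (pte.ppn << OFFSET_BITS) | offset    # 3) concatenate PPN and offset


# Usage: 32 virtual pages; only VPN 1 is mapped (to PPN 7), read-only.
page_table = [PageTableEntry() for _ in range(32)]
page_table[1] = PageTableEntry(valid=True, readable=True, ppn=7)
pa = translate(page_table, 1024 + 20)           # 4) the caller uses pa to access memory
```

Here virtual address 1044 (VPN 1, offset 20) becomes physical address 7 × 1024 + 20 = 7188; reading VPN 0 raises `PageFault`, and writing VPN 1 raises `ProtectionFault`.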

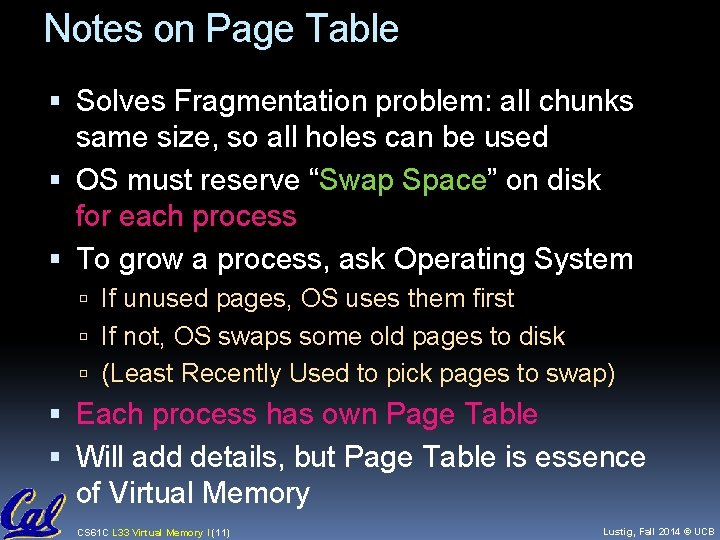

Notes on Page Table
- Solves the fragmentation problem: all chunks are the same size, so every hole can be used.
- The OS must reserve "swap space" on disk for each process.
- To grow a process, ask the operating system:
  - If there are unused pages, the OS uses them first.
  - If not, the OS swaps some old pages to disk (using Least Recently Used to pick which pages to swap).
- Each process has its own page table.
- We will add details, but the page table is the essence of virtual memory.

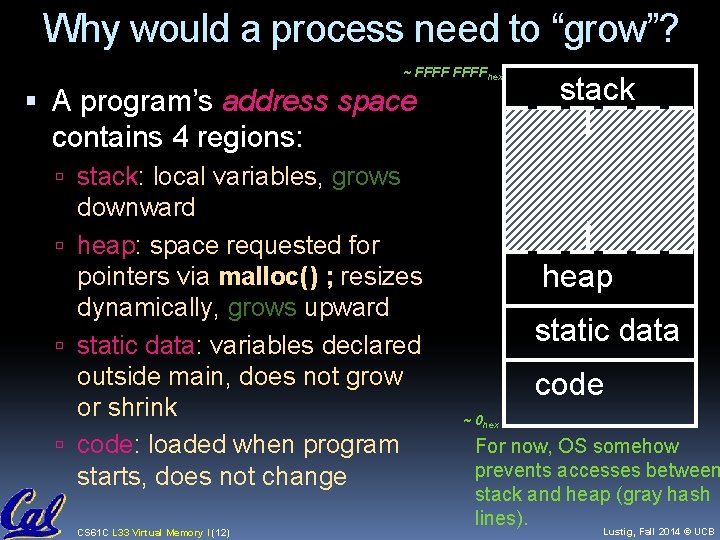

Why would a process need to "grow"?
A program's address space contains 4 regions, from ~FFFFhex at the top down to ~0hex:
- stack: local variables; grows downward
- heap: space requested for pointers via malloc(); resizes dynamically, grows upward
- static data: variables declared outside main; does not grow or shrink
- code: loaded when the program starts; does not change

For now, the OS somehow prevents accesses between the stack and the heap (the gray hash lines in the figure).

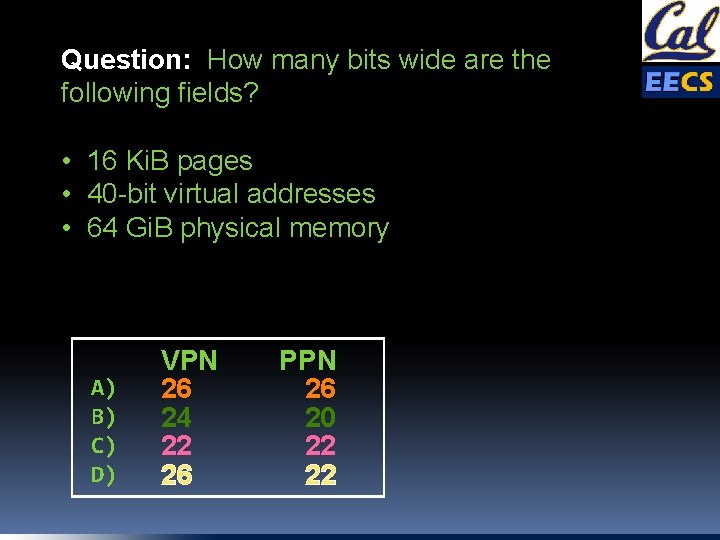

Question: How many bits wide are the following fields?
- 16 KiB pages
- 40-bit virtual addresses
- 64 GiB physical memory

A) VPN 26, PPN 26
B) VPN 24, PPN 20
C) VPN 22, PPN 22
D) VPN 26, PPN 22
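Working the question: the page size fixes the offset width, the VPN is whatever remains of the virtual address, and the PPN is whatever remains of the physical address, whose width follows from the physical memory size (assuming byte-addressed memory). The Python sketch below does that arithmetic; `log2_exact` is a hypothetical helper. It yields a 14-bit offset, a 26-bit VPN, and a 22-bit PPN, which is answer D.

```python
def log2_exact(n):
    """log2 of a positive power of two."""
    assert n > 0 and n & (n - 1) == 0, "expected a power of two"
    return n.bit_length() - 1


KiB, GiB = 2**10, 2**30

page_size = 16 * KiB          # 16 KiB pages
va_bits = 40                  # 40-bit virtual addresses
pm_size = 64 * GiB            # 64 GiB physical memory (byte-addressed)

offset_bits = log2_exact(page_size)     # 14
pa_bits = log2_exact(pm_size)           # 36
vpn_bits = va_bits - offset_bits        # 40 - 14 = 26
ppn_bits = pa_bits - offset_bits        # 36 - 14 = 22
print(f"offset={offset_bits} VPN={vpn_bits} PPN={ppn_bits}")
```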

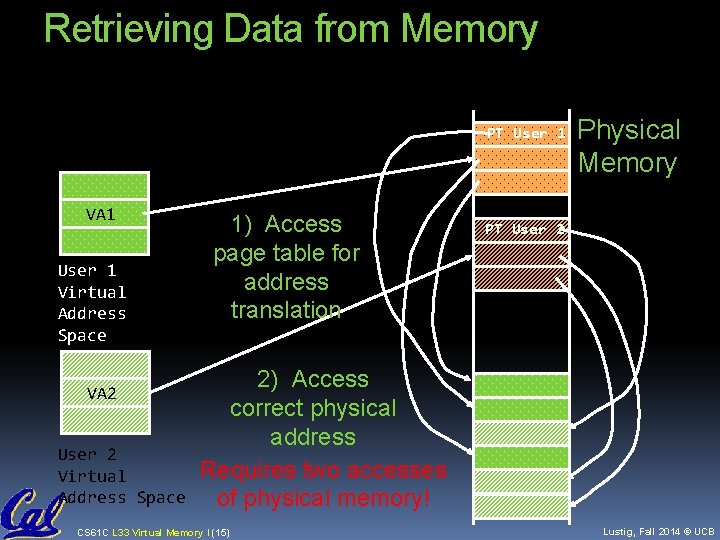

Retrieving Data from Memory
(Figure: User 1 and User 2 each have a virtual address space and a page table, PT User 1 and PT User 2; VA1 and VA2 are translated through their own page tables into physical memory.)
1) Access the page table for address translation.
2) Access the correct physical address.

This requires two accesses of physical memory!

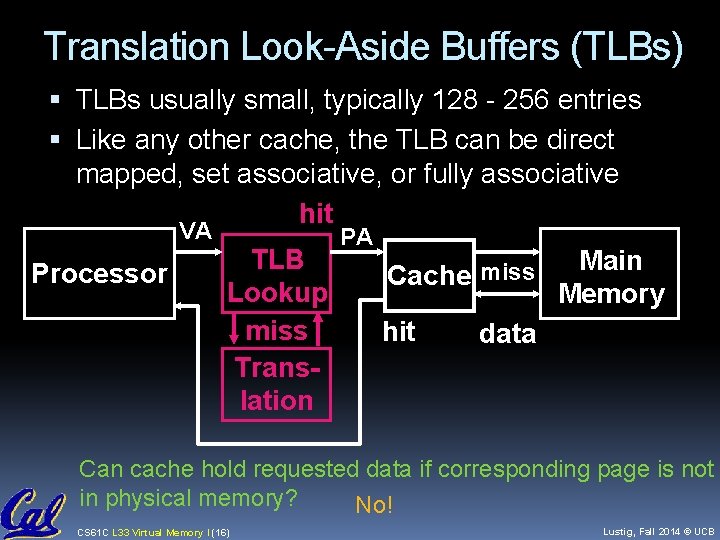

Translation Look-Aside Buffers (TLBs)
- TLBs are usually small, typically 128 to 256 entries.
- Like any other cache, the TLB can be direct mapped, set associative, or fully associative.

(Figure: the processor sends a VA to the TLB. On a TLB hit, the PA goes straight to the cache; on a TLB miss, the translation is looked up first. On a cache hit, the data returns to the processor; on a cache miss, it comes from main memory.)

Can the cache hold the requested data if the corresponding page is not in physical memory? No!

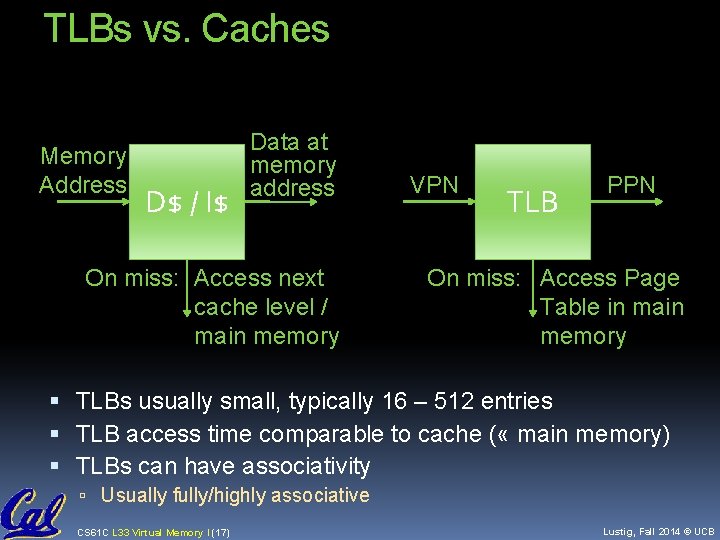

TLBs vs. Caches
- Data cache (D$) / instruction cache (I$): takes a memory address and returns the data at that address. On a miss: access the next cache level or main memory.
- TLB: takes a VPN and returns a PPN. On a miss: access the page table in main memory.
- TLBs are usually small, typically 16 to 512 entries.
- TLB access time is comparable to cache access time (much less than main memory access time).
- TLBs can have associativity; they are usually fully or highly associative.

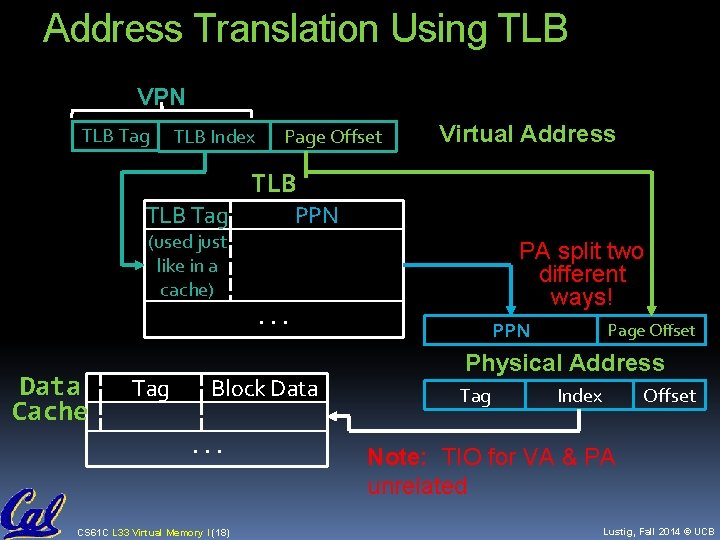

Address Translation Using TLB
- Virtual address: [VPN | Page Offset], where the VPN itself splits into [TLB Tag | TLB Index]. The TLB tag is used just like a tag in a cache; each TLB entry holds a tag and a PPN.
- Physical address: [PPN | Page Offset]. The data cache splits the same PA as [Tag | Index | Offset]; each cache line holds a tag and block data.
- So the PA is split two different ways! Note: the TIO (tag/index/offset) fields for the VA and the PA are unrelated.
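The two independent splits can be made concrete with a Python sketch. All parameters here are assumptions chosen for illustration, not values from the slide: 4 KiB pages, a 16-set TLB, 64-byte cache blocks, and a 256-set data cache. `split_fields` is a hypothetical helper, and the PPN is simply assumed to come back from a TLB hit.

```python
def split_fields(addr, low_bits, mid_bits):
    """Split addr into (high, mid, low) fields; widths of mid and low are given."""
    low = addr & ((1 << low_bits) - 1)
    mid = (addr >> low_bits) & ((1 << mid_bits) - 1)
    high = addr >> (low_bits + mid_bits)
    return high, mid, low


# Assumed parameters (illustrative, not from the slide):
PAGE_OFFSET_BITS = 12    # 4 KiB pages
TLB_INDEX_BITS = 4       # 16-set TLB
BLOCK_OFFSET_BITS = 6    # 64-byte cache blocks
CACHE_INDEX_BITS = 8     # 256-set data cache

va = 0x12345678
# VA view used by the TLB: [TLB tag | TLB index | page offset]
tlb_tag, tlb_index, page_offset = split_fields(va, PAGE_OFFSET_BITS, TLB_INDEX_BITS)

ppn = 0x9A               # pretend the TLB hit and returned this PPN
pa = (ppn << PAGE_OFFSET_BITS) | page_offset
# Same PA, viewed by the data cache: [tag | index | block offset]
cache_tag, cache_index, block_offset = split_fields(pa, BLOCK_OFFSET_BITS, CACHE_INDEX_BITS)

print(hex(tlb_tag), tlb_index, hex(page_offset))
print(hex(pa), hex(cache_tag), cache_index, block_offset)
```

Only the page offset carries over unchanged from VA to PA; the cache's tag, index, and offset boundaries (bits 14, 6) have nothing to do with the TLB's (bits 16, 12).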

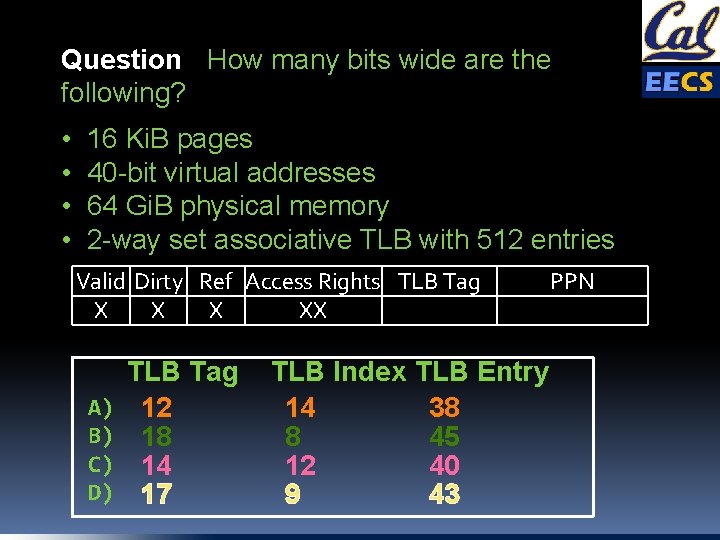

Question: How many bits wide are the following?
- 16 KiB pages
- 40-bit virtual addresses
- 64 GiB physical memory
- 2-way set associative TLB with 512 entries

TLB entry layout: Valid | Dirty | Ref | Access Rights | TLB Tag | PPN

A) TLB Tag 12, TLB Index 14, TLB Entry 38
B) TLB Tag 18, TLB Index 8, TLB Entry 45
C) TLB Tag 14, TLB Index 12, TLB Entry 40
D) TLB Tag 17, TLB Index 9, TLB Entry 43
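The same style of arithmetic answers the TLB question. A 2-way TLB with 512 entries has 256 sets, so the index takes 8 of the VPN's 26 bits and the tag takes the remaining 18. An entry stores the tag, the PPN, and the management bits but not the index, which is implied by the set. The sketch assumes Valid, Dirty, and Ref are 1 bit each and Access Rights is 2 bits (the "XX" in the layout); under that assumption the result is 18, 8, and 45, which is answer B.

```python
def log2_exact(n):
    """log2 of a positive power of two."""
    assert n > 0 and n & (n - 1) == 0, "expected a power of two"
    return n.bit_length() - 1


KiB, GiB = 2**10, 2**30
offset_bits = log2_exact(16 * KiB)               # 14
vpn_bits = 40 - offset_bits                      # 26
ppn_bits = log2_exact(64 * GiB) - offset_bits    # 36 - 14 = 22

entries, ways = 512, 2
tlb_index_bits = log2_exact(entries // ways)     # 256 sets -> 8
tlb_tag_bits = vpn_bits - tlb_index_bits         # 26 - 8 = 18

# Assumption: Valid, Dirty, Ref are 1 bit each; Access Rights is 2 bits.
mgmt_bits = 1 + 1 + 1 + 2
tlb_entry_bits = mgmt_bits + tlb_tag_bits + ppn_bits   # 5 + 18 + 22 = 45
print(tlb_tag_bits, tlb_index_bits, tlb_entry_bits)
```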

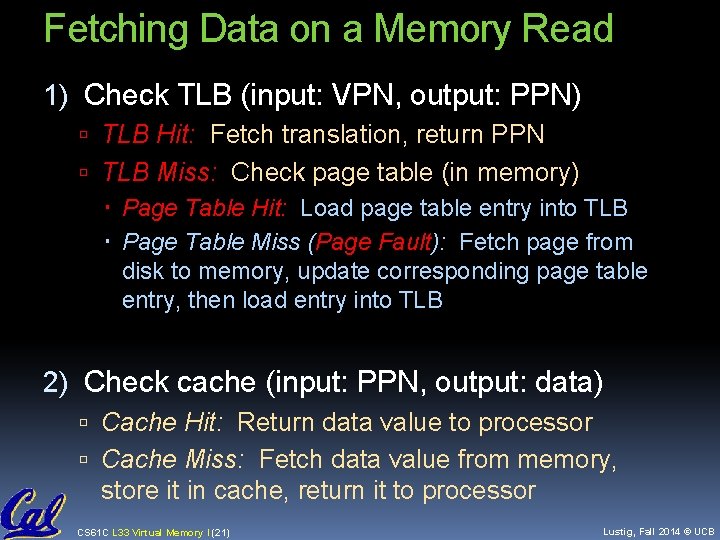

Fetching Data on a Memory Read
1) Check the TLB (input: VPN, output: PPN)
   - TLB hit: fetch the translation, return the PPN.
   - TLB miss: check the page table (in memory).
     - Page table hit: load the page table entry into the TLB.
     - Page table miss (page fault): fetch the page from disk to memory, update the corresponding page table entry, then load the entry into the TLB.
2) Check the cache (input: physical address, i.e. the PPN concatenated with the page offset; output: data)
   - Cache hit: return the data value to the processor.
   - Cache miss: fetch the data value from memory, store it in the cache, and return it to the processor.
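The read path above can be traced with a toy Python model. It is a sketch under loud assumptions: `ToyMemorySystem` is a hypothetical class, plain dicts stand in for the TLB, page table, cache, memory, and disk, pages are 1 KB, the cache is word-granular with no eviction, and free physical pages never run out. Its `log` records which branch each read takes.

```python
OFFSET_BITS = 10                      # assumed 1 KB pages
OFFSET_MASK = (1 << OFFSET_BITS) - 1


class ToyMemorySystem:
    """Dicts stand in for the TLB, page table, cache, memory and disk (toy model)."""

    def __init__(self, disk):
        self.disk = disk              # VPN -> list of 1024 values (page contents)
        self.tlb = {}                 # VPN -> PPN; unbounded, no replacement
        self.page_table = {}          # VPN -> PPN, resident pages only
        self.memory = {}              # PA -> value
        self.cache = {}               # PA -> value; word-granular, no eviction
        self.next_free_ppn = 0        # assume free physical pages never run out
        self.log = []

    def _handle_page_fault(self, vpn):
        ppn = self.next_free_ppn
        self.next_free_ppn += 1
        for offset, value in enumerate(self.disk[vpn]):   # fetch page from disk
            self.memory[(ppn << OFFSET_BITS) | offset] = value
        self.page_table[vpn] = ppn                          # update the PTE

    def read(self, va):
        vpn, offset = va >> OFFSET_BITS, va & OFFSET_MASK
        # 1) Check TLB (input: VPN, output: PPN)
        if vpn in self.tlb:
            self.log.append("TLB hit")
        else:
            self.log.append("TLB miss")
            if vpn in self.page_table:
                self.log.append("page table hit")
            else:
                self.log.append("page fault")
                self._handle_page_fault(vpn)
            self.tlb[vpn] = self.page_table[vpn]            # load entry into TLB
        pa = (self.tlb[vpn] << OFFSET_BITS) | offset
        # 2) Check cache (input: PA, output: data)
        if pa in self.cache:
            self.log.append("cache hit")
        else:
            self.log.append("cache miss")
            self.cache[pa] = self.memory[pa]                # fill cache from memory
        return self.cache[pa]


# Usage: VPN 5 starts out on disk only.
vm = ToyMemorySystem({5: [f"v5.{i}" for i in range(1 << OFFSET_BITS)]})
va = 5 * 1024 + 4
vm.read(va)          # TLB miss, page fault, cache miss
vm.read(va)          # TLB hit, cache hit
vm.tlb.clear()       # simulate a TLB flush
vm.read(va)          # TLB miss, page table hit, cache hit
```

The third read shows the middle case: the translation has left the TLB but the page is still resident, so only the page table walk is repeated.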

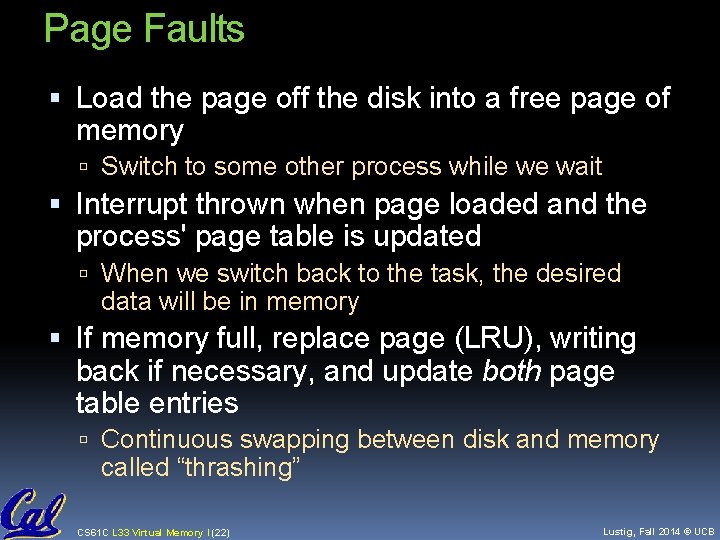

Page Faults
- Load the page off the disk into a free page of memory.
  - Switch to some other process while we wait.
- An interrupt is thrown when the page is loaded and the process's page table is updated.
  - When we switch back to the task, the desired data will be in memory.
- If memory is full, replace a page (LRU), writing it back if necessary, and update both page table entries.
- Continuous swapping between disk and memory is called "thrashing".
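The LRU replacement policy on this slide can be illustrated by counting page faults for a reference string. In this Python sketch, `count_page_faults_lru` is a hypothetical helper that tracks only which VPNs are resident (dirty-page write-back is omitted), and the reference strings are made up. The cyclic example also shows thrashing: when a loop touches one more page than fits in memory, LRU evicts exactly the page needed next, so every access faults.

```python
from collections import OrderedDict


def count_page_faults_lru(references, num_frames):
    """Count page faults for a VPN reference string under LRU replacement."""
    resident = OrderedDict()          # VPN -> None, least recently used first
    faults = 0
    for vpn in references:
        if vpn in resident:
            resident.move_to_end(vpn)            # hit: now most recently used
        else:
            faults += 1                          # page fault: load page from disk
            if len(resident) == num_frames:
                resident.popitem(last=False)     # memory full: evict LRU page
            resident[vpn] = None
    return faults


print(count_page_faults_lru([1, 2, 3, 1, 4, 2, 5, 1, 2, 3], 3))
print(count_page_faults_lru([1, 2, 3, 4] * 3, 3))   # thrashing: every access faults
print(count_page_faults_lru([1, 2, 3, 4] * 3, 4))   # working set fits: 4 cold faults
```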

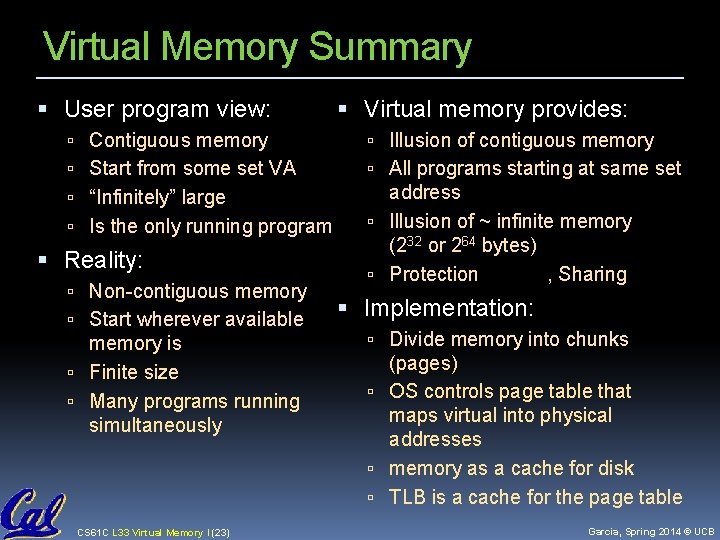

Virtual Memory Summary
- User program view: contiguous memory, starting from some set VA, in an "infinitely" large address space; the program is the only one running.
- Reality: non-contiguous memory, starting wherever available memory is, of finite size; many programs run simultaneously.
- Virtual memory provides: the illusion of contiguous memory, all programs starting at the same set address, the illusion of ~infinite memory (2³² or 2⁶⁴ bytes), protection, and sharing.
- Implementation: divide memory into chunks (pages); the OS controls a page table that maps virtual into physical addresses; memory acts as a cache for disk; the TLB is a cache for the page table.

Garcia, Spring 2014 © UCB
