Infrastructure-based Resilient Routing

Ben Y. Zhao, Ling Huang, Jeremy Stribling, Anthony Joseph and John Kubiatowicz
University of California, Berkeley
Sahara Winter Retreat, 2004
ravenben@eecs.berkeley.edu

Challenges Facing Network Applications

- Network connectivity is not reliable
  - Disconnections frequent in the wide-area Internet
  - IP-level repair is slow
    - Wide-area: BGP, 3 mins
    - Local-area: IS-IS, 5 seconds
- Next generation network applications
  - Mostly wide-area
  - Streaming media, VoIP, B2B transactions
  - Low tolerance of delay, jitter and faults
- Our work: transparent resilient routing infrastructure that adapts to faults in not seconds, but milliseconds

12/16/2021 Sahara Winter Retreat, Jan 2004 ravenben@eecs.berkeley.edu

Talk Overview

- Motivation
- A Structured Overlay Infrastructure
- Mechanisms and policy
- Evaluation
- Summary

The Challenge

- Routing failures are diverse
  - Many causes:
    - Router misconfigurations, cut fiber, planned downtime, protocol implementation bugs
  - Occur anywhere with local or global impact:
    - Single fiber cut can disconnect AS pairs
- Isolating failures is difficult
  - Wide-area measurement is ongoing research
  - Single event leads to complex inter-protocol interactions
  - End user symptoms often dynamic or intermittent
- Requires:
  - Fault detection from multiple distributed vantage points
  - In-network decision making necessary for timely responses

An Infrastructure Approach

- Our goals
  - Overlay focused on resiliency
  - Route around failures to maintain connectivity
  - Respond in milliseconds (react instantaneously to faults)
- Our approach
  - Large-scale infrastructure for fault and route discovery
  - Nodes are observation points (similar to Plato’s NEWS service)
  - Nodes are also points of traffic redirection (forwarding path determination and data forwarding)
  - Automated fault-detection and circumvention
    - No edge node involvement: fast response time, security focused on infrastructure
    - Fully transparent, no application awareness necessary

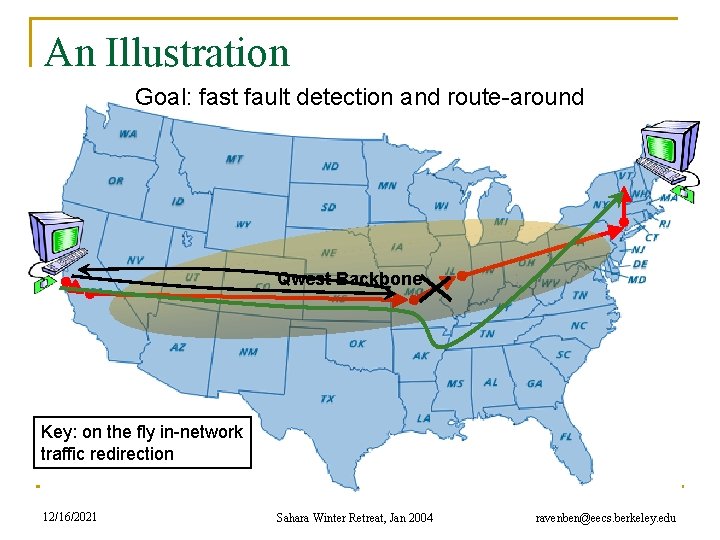

An Illustration

- Goal: fast fault detection and route-around
- Key: on the fly in-network traffic redirection

(Figure label: Qwest Backbone)

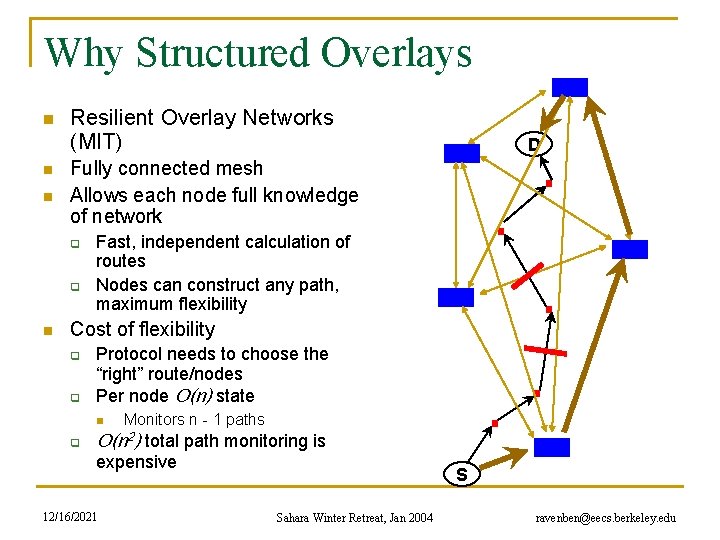

Why Structured Overlays

- Resilient Overlay Networks (MIT)
- Fully connected mesh
- Allows each node full knowledge of network
  - Fast, independent calculation of routes
  - Nodes can construct any path, maximum flexibility
- Cost of flexibility
  - Protocol needs to choose the “right” route/nodes
  - Per node O(n) state
    - Monitors n - 1 paths
  - O(n^2) total path monitoring is expensive

(Figure labels: S, D)

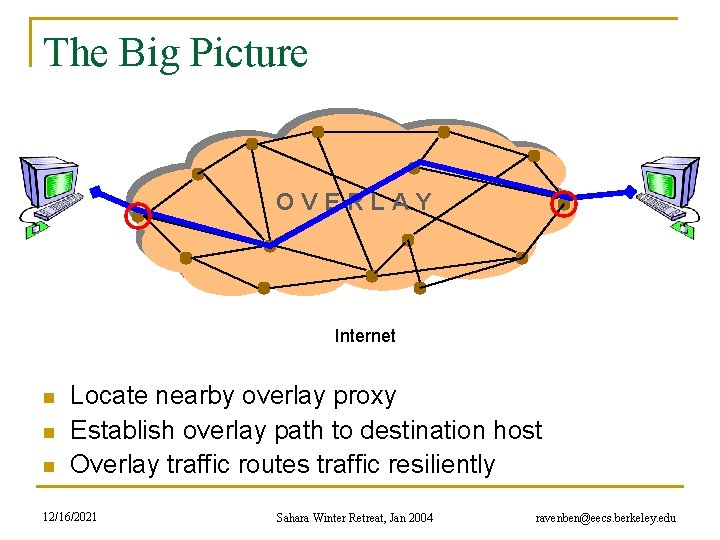

The Big Picture

- Locate nearby overlay proxy
- Establish overlay path to destination host
- Overlay routes traffic resiliently

(Figure labels: OVERLAY, Internet)

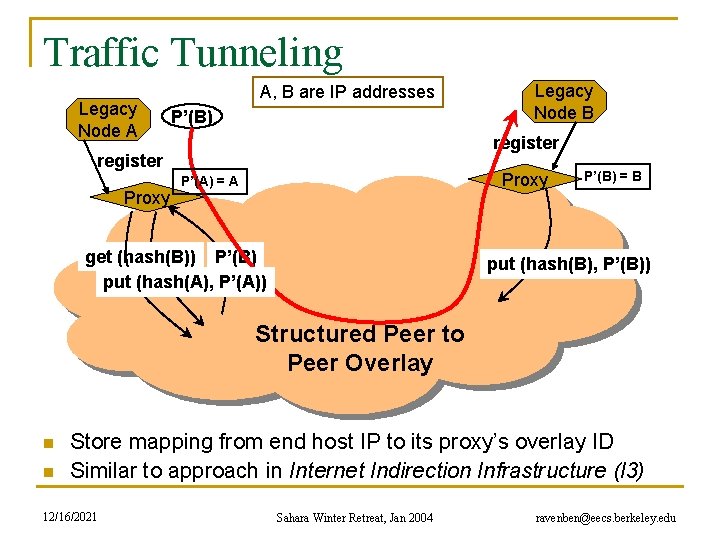

Traffic Tunneling

- Store mapping from end host IP to its proxy’s overlay ID
- Similar to approach in Internet Indirection Infrastructure (I3)

(Figure: Legacy Node A and Legacy Node B each register with a Proxy attached to the Structured Peer to Peer Overlay. A, B are IP addresses. Labels: P’(A) = A, P’(B) = B. Operations shown: put(hash(A), P’(A)); put(hash(B), P’(B)); get(hash(B)) returns P’(B).)
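
The put/get registration shown on this slide can be sketched in a few lines. This is only an illustration under stated assumptions: a plain in-memory dictionary stands in for the structured overlay, SHA-1 stands in for the unspecified `hash`, and the class name `ProxyDirectory`, the IP addresses, and the proxy IDs are all hypothetical.

```python
import hashlib
from typing import Dict, Optional


class ProxyDirectory:
    """Toy stand-in for the overlay's put/get interface (not a real DHT)."""

    def __init__(self) -> None:
        # Maps hash(legacy IP) -> overlay ID of the proxy serving that IP.
        self._store: Dict[str, str] = {}

    @staticmethod
    def key_for(ip: str) -> str:
        # Assumption: SHA-1 of the IP string; the slide only says hash(A).
        return hashlib.sha1(ip.encode("ascii")).hexdigest()

    def put(self, ip: str, proxy_id: str) -> None:
        """Register a legacy node's IP under its proxy's overlay ID."""
        self._store[self.key_for(ip)] = proxy_id

    def get(self, ip: str) -> Optional[str]:
        """Return the proxy ID registered for this IP, or None if absent."""
        return self._store.get(self.key_for(ip))


directory = ProxyDirectory()
directory.put("10.0.0.1", "proxy-A")  # legacy node A registers via its proxy
directory.put("10.0.0.2", "proxy-B")  # legacy node B registers via its proxy

# To tunnel traffic toward B, look up which proxy currently serves B.
proxy_for_b = directory.get("10.0.0.2")
```

In a real deployment the dictionary would be replaced by the overlay's distributed object location, so the lookup itself would be routed through the overlay rather than answered locally.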

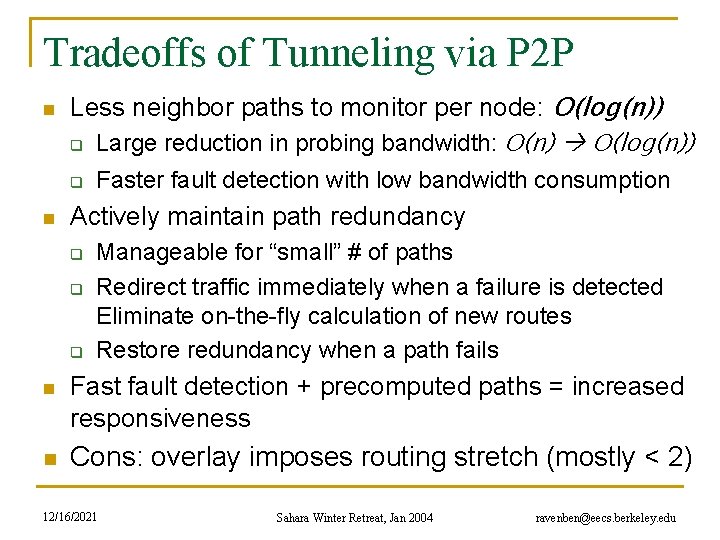

Tradeoffs of Tunneling via P2P

- Fewer neighbor paths to monitor per node: O(log(n))
  - Large reduction in probing bandwidth: O(n) → O(log(n))
  - Faster fault detection with low bandwidth consumption
- Actively maintain path redundancy
  - Manageable for “small” # of paths
  - Redirect traffic immediately when a failure is detected
    - Eliminate on-the-fly calculation of new routes
  - Restore redundancy when a path fails
- Fast fault detection + precomputed paths = increased responsiveness
- Cons: overlay imposes routing stretch (mostly < 2)
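
To make the monitoring tradeoff concrete, the sketch below compares how many paths each node probes in a fully connected mesh (n - 1) with a structured overlay that keeps on the order of log(n) neighbors. The constant factor of 1 and the base-2 logarithm are assumptions made purely for illustration; a real overlay's neighbor count depends on its routing-table design. The function names and the sample sizes are likewise hypothetical.

```python
import math


def full_mesh_paths_per_node(n: int) -> int:
    """Each node in a full mesh monitors a path to every other node."""
    return n - 1


def structured_paths_per_node(n: int) -> int:
    """Assumed neighbor count: ceil(log2(n)), with constant factor 1."""
    return math.ceil(math.log2(n))


def monitoring_table(sizes):
    """Return (n, mesh paths per node, structured paths per node) rows."""
    return [
        (n, full_mesh_paths_per_node(n), structured_paths_per_node(n))
        for n in sizes
    ]


for n, mesh, structured in monitoring_table([64, 300, 5000]):
    print(f"n={n}: full mesh probes {mesh} paths/node, "
          f"structured overlay probes about {structured}")
```

Under these assumptions a 300-node overlay probes 9 paths per node instead of 299, which is why a short probe period stays affordable.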

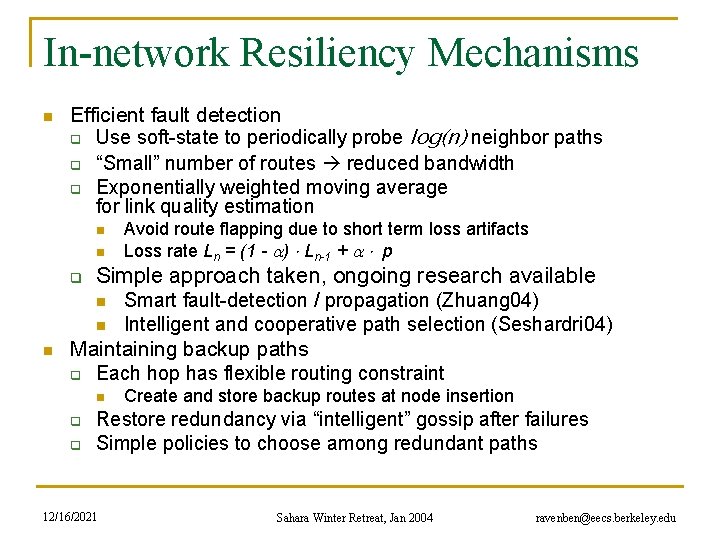

In-network Resiliency Mechanisms

- Efficient fault detection
  - Use soft-state to periodically probe log(n) neighbor paths
  - “Small” number of routes → reduced bandwidth
  - Exponentially weighted moving average for link quality estimation
    - Avoid route flapping due to short term loss artifacts
    - Loss rate $L_n = (1 - \alpha)\,L_{n-1} + \alpha\,p$
  - Simple approach taken, ongoing research available
    - Smart fault-detection / propagation (Zhuang 04)
    - Intelligent and cooperative path selection (Seshadri 04)
- Maintaining backup paths
  - Each hop has flexible routing constraint
    - Create and store backup routes at node insertion
  - Restore redundancy via “intelligent” gossip after failures
  - Simple policies to choose among redundant paths
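
The moving-average loss estimator on this slide can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the smoothing weight is written `alpha` here, and its value of 0.2, the function name `update_loss_rate`, and the probe outcomes are all assumptions.

```python
def update_loss_rate(prev: float, p: float, alpha: float = 0.2) -> float:
    """One EWMA step: new = (1 - alpha) * prev + alpha * p.

    prev  -- previous loss-rate estimate, in [0, 1]
    p     -- loss rate observed in the latest probe period, in [0, 1]
    alpha -- weight given to the newest observation (assumed value)
    """
    return (1.0 - alpha) * prev + alpha * p


# Hypothetical probe outcomes for one neighbor path: 1.0 = probe lost.
observations = [0.0, 0.0, 1.0, 0.0, 0.0, 1.0, 1.0, 1.0]

estimate = 0.0
history = []
for p in observations:
    estimate = update_loss_rate(estimate, p)
    history.append(estimate)

# A single lost probe moves the estimate only by alpha, so one transient
# loss does not look like a failed link; a run of losses keeps raising it.
print([round(value, 3) for value in history])
```

A smaller `alpha` damps route flapping more strongly but slows detection of a genuine failure, so the weight trades stability against responsiveness.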

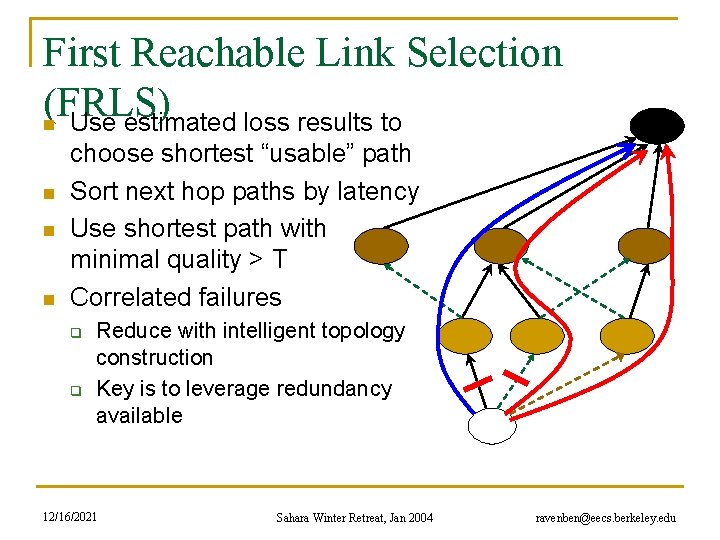

First Reachable Link Selection (FRLS)

- Use estimated loss results to choose shortest “usable” path
  - Sort next hop paths by latency
  - Use the shortest path whose quality exceeds the minimum threshold T
- Correlated failures
  - Reduce with intelligent topology construction
  - Key is to leverage redundancy available
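
The selection policy above can be sketched as a sort followed by a first-match scan. This is an illustrative reading of the slide, not the authors' code: the `NextHop` type, the definition of quality as one minus the estimated loss rate, and the sample latencies are assumptions, and the default threshold of 0.7 is taken from the T = 70% setting mentioned with the PlanetLab results.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class NextHop:
    name: str
    latency_ms: float
    loss_rate: float  # estimated loss rate in [0, 1]

    @property
    def quality(self) -> float:
        # Assumption: link quality is the complement of the loss estimate.
        return 1.0 - self.loss_rate


def first_reachable_link(hops: Sequence[NextHop],
                         threshold: float = 0.7) -> Optional[NextHop]:
    """Return the lowest-latency hop whose quality exceeds the threshold."""
    for hop in sorted(hops, key=lambda h: h.latency_ms):
        if hop.quality > threshold:
            return hop
    return None  # no usable path among the stored routes


# Hypothetical routing entry: one primary route and two backups.
entry = [
    NextHop("primary", latency_ms=10.0, loss_rate=0.50),
    NextHop("backup-1", latency_ms=25.0, loss_rate=0.05),
    NextHop("backup-2", latency_ms=40.0, loss_rate=0.00),
]

chosen = first_reachable_link(entry)
print(chosen.name if chosen else "no usable path")
```

Because the backups are already stored in the routing entry, failover is just a re-evaluation of this function when the loss estimate changes, with no route computation on the critical path.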

Evaluation

- Metrics for evaluation
  - How much routing resiliency can we exploit?
  - How fast can we adapt to faults (responsiveness)?
- Experimental platforms
  - Event-based simulations on transit stub topologies
    - Data collected over multiple 5000-node topologies
  - PlanetLab measurements
    - Microbenchmarks on responsiveness
- More details in paper (ICNP 03) and poster session

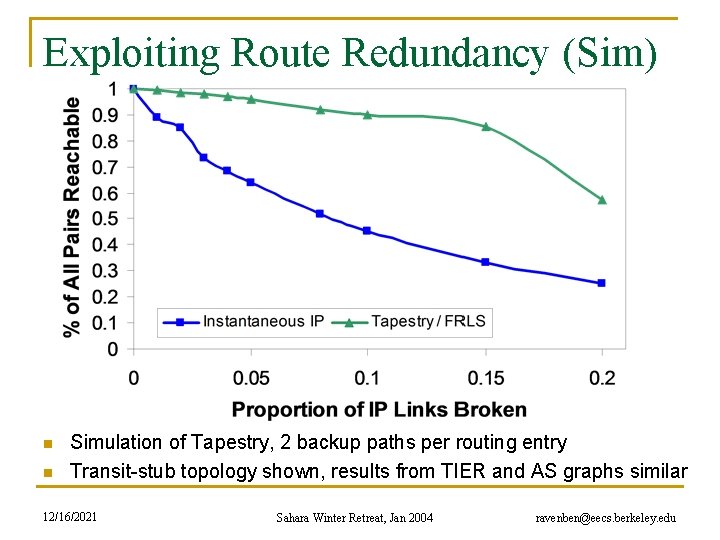

Exploiting Route Redundancy (Sim)

- Simulation of Tapestry, 2 backup paths per routing entry
- Transit-stub topology shown, results from TIER and AS graphs similar

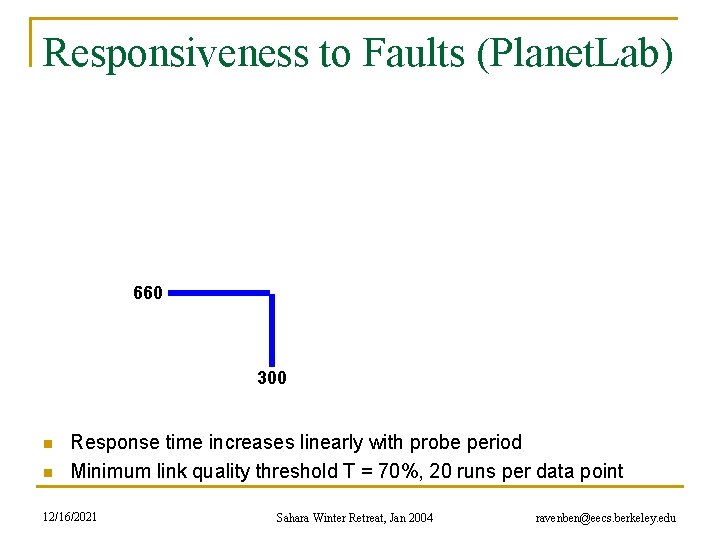

Responsiveness to Faults (PlanetLab)

- Response time increases linearly with probe period
- Minimum link quality threshold T = 70%, 20 runs per data point

(Plot annotations: 660, 300)

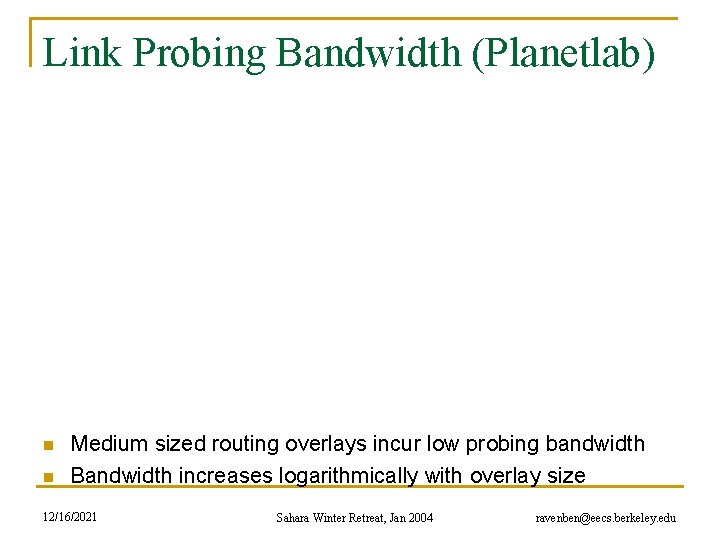

Link Probing Bandwidth (PlanetLab)

- Medium sized routing overlays incur low probing bandwidth
- Bandwidth increases logarithmically with overlay size

Conclusion

- Pros and cons of infrastructure approach
  - Structured routing has low path maintenance costs
    - Allows “caching” of backup paths for quick failover
  - Transparent to user applications
  - Can no longer construct arbitrary paths
    - Structured routing with low redundancy close to ideal connectivity
    - Incur low routing stretch
- Fast enough for highly interactive applications
  - 300 ms beacon period → response time < 700 ms
  - On overlay networks of 300 nodes, b/w cost is 7 KB/s
- Ongoing questions
  - Is there lower bound on desired responsiveness?
  - Should we use multipath redundant routing for resilience?
  - How to deploy as a single network across ISPs? VPN-like routing service?

Related Work

- Redirection overlays
  - Detour (IEEE Micro 99)
  - Resilient Overlay Networks (SOSP 01)
  - Internet Indirection Infrastructure (SIGCOMM 02)
  - Secure Overlay Services (SIGCOMM 02)
- Topology estimation techniques
  - Adaptive probing (IPTPS 03)
  - Internet tomography (IMC 03)
  - Routing underlay (SIGCOMM 03)
- Many, many other structured peer-to-peer overlays

Thanks to Dennis Geels / Sean Rhea for their work on BMark

- Slides: 18