INFORMATION FUSION FOR CYBER SITUATION AWARENESS George Tadda

George Tadda
Fusion Technology Branch, Information Directorate, Air Force Research Laboratory
E-mail: george.tadda@rl.af.mil
Phone: 315-330-3957

Outline
• Introduction
• Motivation
• Situation Awareness Reference Model
• Metrics
• Application of Lessons Learned
Approved for Public Release #

Work in Situation Awareness (SA)
• Used reference models to demonstrate/build prototype systems for:
  – Cyber (Defense & Security (D&S) ’05, SIMA ’05)
  – Tactical (ISIF ’02)
  – Global (ISIF ’04)
  – Maritime
  – and many others
• Developed metrics (D&S ’04) to evaluate Level 2 systems and applied them to Cyber (D&S ’05)
  – After much discussion, we questioned the difference between tracking objects and tracking situations, and whether the majority of the metrics are just another way to measure the integrity of tracks
• Additional activities:
  – Jean Roy, under The Technical Cooperative Program, presented a definition of situational analysis, included in “Concepts, Models, and Tools for Information Fusion”
  – Snidaro, M. Belluz, G. Foresti, “Domain knowledge for security applications”, ISIF ’07, defined types of events (simple, spatial, and transitive)
  – Dale Lambert, formalizing situation awareness through mathematics
Approved for Public Release # 07-210

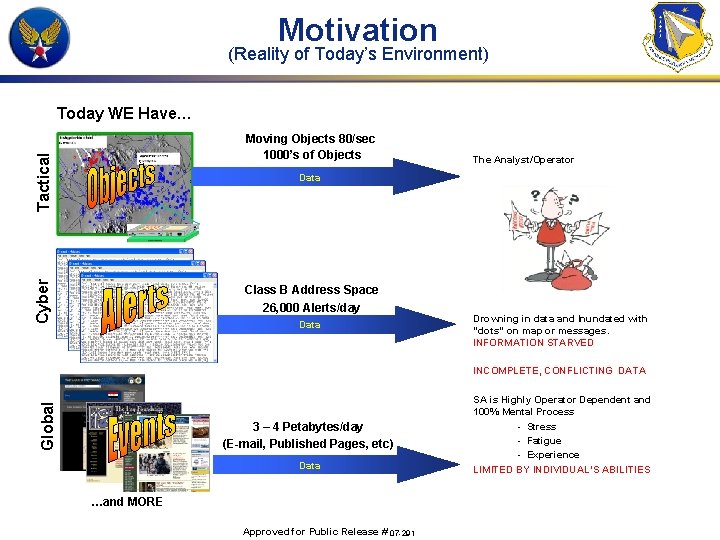

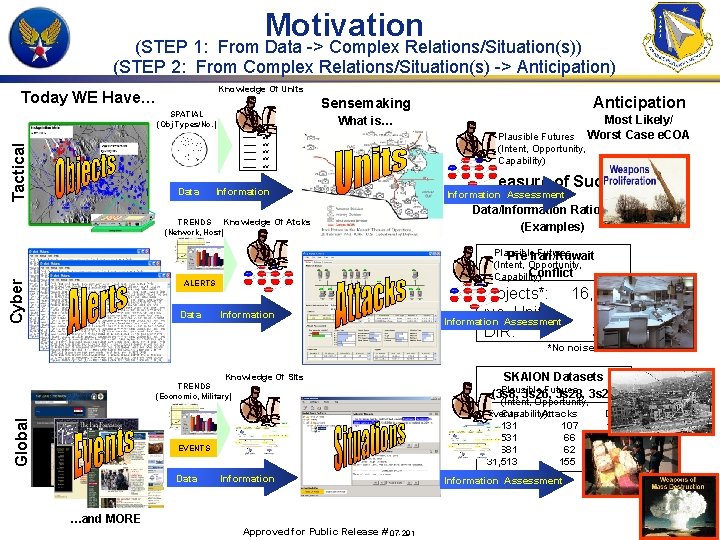

Motivation (Reality of Today’s Environment)
Today WE Have…
• Tactical: moving objects, 80/sec, 1000’s of objects
• Cyber: Class B address space, 26,000 alerts/day
• Global: 3–4 petabytes/day (e-mail, published pages, etc.)
• INCOMPLETE, CONFLICTING DATA
• …and MORE
The Analyst/Operator
• Drowning in data and inundated with “dots” on a map or messages: INFORMATION STARVED
• SA is highly operator dependent and a 100% mental process, LIMITED BY THE INDIVIDUAL’S ABILITIES
  – Stress
  – Fatigue
  – Experience
Approved for Public Release # 07-291

Motivation
(STEP 1: From Data -> Complex Relations/Situation(s))
(STEP 2: From Complex Relations/Situation(s) -> Anticipation)
Today WE Have… Data -> Information (Sensemaking, Information Assessment) -> Anticipation (What is… Most Likely/Worst Case eCOA)
• Tactical: SPATIAL data (Obj Types/No.) -> Knowledge of Units -> Plausible Futures (Intent, Opportunity, Capability)
• Cyber: ALERTS and TRENDS (Network, Host) -> Knowledge of Attacks -> Plausible Futures (Intent, Opportunity, Capability)
• Global: EVENTS and TRENDS (Economic, Military) -> Knowledge of Situations -> Plausible Futures (Intent, Opportunity, Capability)
• …and MORE
A Measure of Success: Data/Information Ratio (DIR) (Examples)
• Tactical (Pre Iran/Kuwait; conflict): Objects*: 16,203; No. Units: 42; DIR: 386 (*No noise/clutter)
• Cyber (SKAION datasets):

| Dataset | Events | Attacks | DIR |
|---------|--------|---------|-----|
| 3s8     | 20,131 | 107     | 188 |
| 3s26    | 19,531 | 66      | 296 |
| 3s28    | 8,681  | 62      | 140 |
| 3s29    | 31,513 | 155     | 203 |

Approved for Public Release # 07-291
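The DIR figures quoted on this slide are consistent with dividing the count of raw data items by the count of information items derived from them (objects per unit, events per attack). The slide does not state the formula, so the sketch below assumes that reading; the function name `data_information_ratio` is hypothetical, and only the counts come from the slide.

```python
def data_information_ratio(data_count: int, information_count: int) -> float:
    """Assumed definition: raw data items per derived information item."""
    if information_count <= 0:
        raise ValueError("information_count must be positive")
    return data_count / information_count


# Counts as quoted on the slide: (data items, information items, reported DIR).
slide_examples = {
    "Tactical": (16203, 42, 386),
    "SKAION 3s8": (20131, 107, 188),
    "SKAION 3s26": (19531, 66, 296),
    "SKAION 3s28": (8681, 62, 140),
    "SKAION 3s29": (31513, 155, 203),
}

for name, (data, info, reported) in slide_examples.items():
    dir_value = data_information_ratio(data, info)
    print(f"{name}: computed DIR {dir_value:.1f}, reported {reported}")
```

Under this assumption, each computed ratio rounds to the value reported on the slide.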

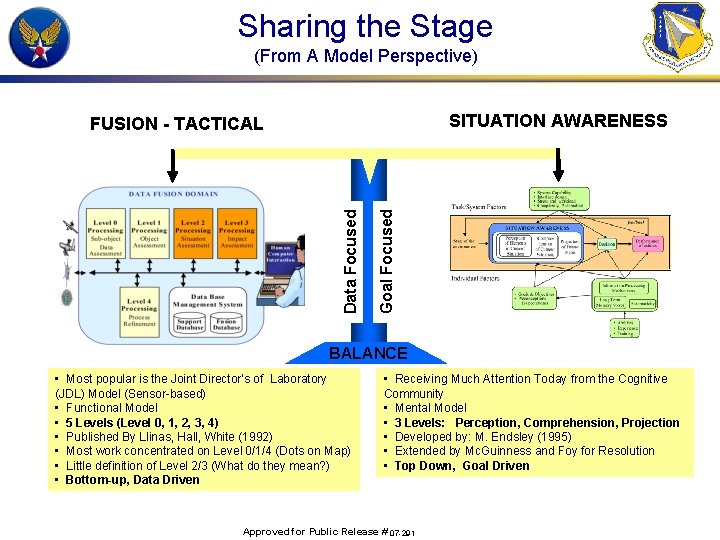

Sharing the Stage (From A Model Perspective)
SITUATION AWARENESS (Goal Focused) and FUSION (Data Focused) - TACTICAL BALANCE
FUSION
• Most popular is the Joint Directors of Laboratories (JDL) Model (Sensor-based)
• Functional Model
• 5 Levels (Level 0, 1, 2, 3, 4)
• Published by Llinas, Hall, White (1992)
• Most work concentrated on Level 0/1/4 (Dots on Map)
• Little definition of Level 2/3 (What do they mean?)
• Bottom-up, Data Driven
SITUATION AWARENESS
• Receiving much attention today from the Cognitive Community
• Mental Model
• 3 Levels: Perception, Comprehension, Projection
• Developed by M. Endsley (1995)
• Extended by McGuinness and Foy for Resolution
• Top Down, Goal Driven
Approved for Public Release # 07-291

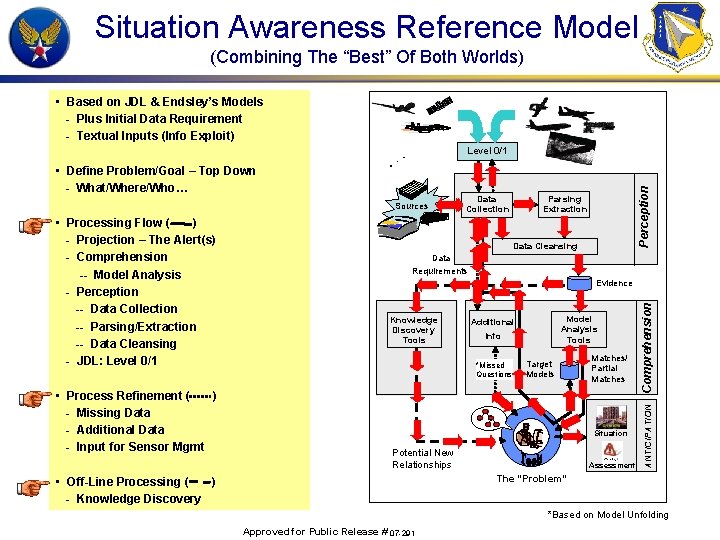

Situation Awareness Reference Model (Combining The “Best” Of Both Worlds)
• Based on JDL & Endsley’s Models
  - Plus Initial Data Requirement
  - Textual Inputs (Info Exploit)
• Define Problem/Goal – Top Down
  - What/Where/Who…
• Processing Flow
  - Projection – The Alert(s)
  - Comprehension
    -- Model Analysis
  - Perception
    -- Data Collection
    -- Parsing/Extraction
    -- Data Cleansing
  - JDL: Level 0/1
• Process Refinement
  - Missing Data
  - Additional Data
  - Input for Sensor Mgmt
• Off-Line Processing
  - Knowledge Discovery
Diagram labels: The “Problem”; Data Requirements; Sources; Data Collection; Parsing/Extraction; Data Cleansing; Evidence; Perception (Level 0/1); Target Models; Model Analysis Tools; Knowledge Discovery Tools; Matches/Partial Matches; Potential New Relationships; Additional Info; *Missed Questions; Comprehension; Situation Assessment; ANTICIPATION
*Based on Model Unfolding
Approved for Public Release # 07-291

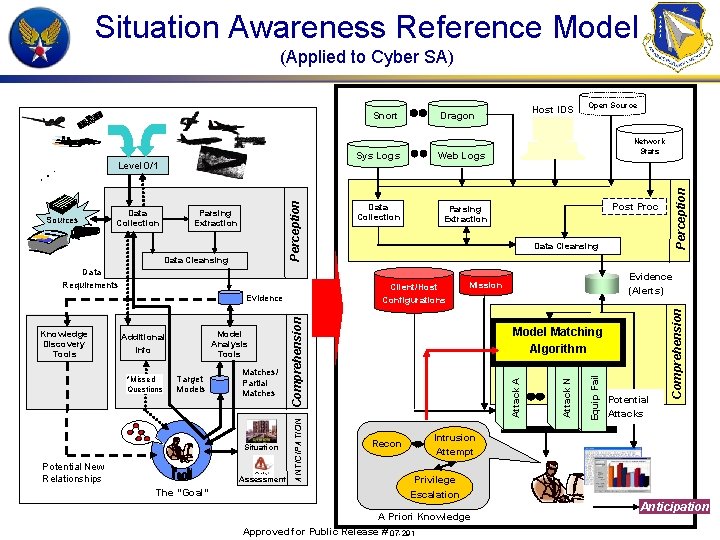

Situation Awareness Reference Model (Applied to Cyber SA)
Diagram labels:
• The “Goal”: Mission; Data Requirements
• Sources and A Priori Knowledge: Snort; Dragon; Host IDS; Sys Logs; Web Logs; Network Stats; Client/Host Configurations; Open Source
• Processing: Data Collection; Parsing/Extraction; Data Cleansing; Post Proc; Evidence (Alerts); Model Matching Algorithm; Model Analysis Tools; Knowledge Discovery Tools
• Models and outputs: Target Models; Recon; Intrusion Attempt; Privilege Escalation; Equip Fail; Potential Attacks (Attack A … Attack N); Matches/Partial Matches; Potential New Relationships; Additional Info; *Missed Questions; Situation Assessment
• Stages: Perception (Level 0/1); Comprehension; Anticipation
Approved for Public Release # 07-291

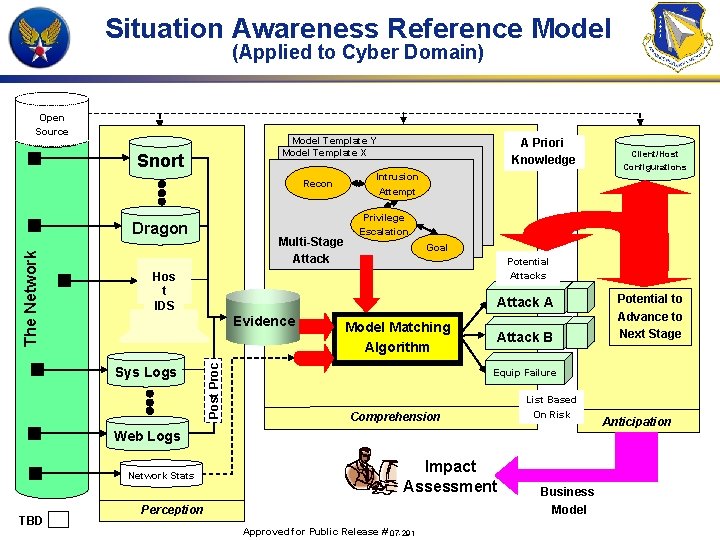

Situation Awareness Reference Model (Applied to Cyber Domain)
Diagram labels:
• Goal; Business Model; The Network
• Sources and A Priori Knowledge: Snort; Dragon; Host IDS; Sys Logs; Web Logs; Network Stats; Client/Host Configurations; Open Source
• Models: Model Template X; Model Template Y; Multi-Stage Attack (Recon, Intrusion Attempt, Privilege Escalation); Equip Failure
• Processing and outputs: Post Proc; Evidence; Model Matching Algorithm; Potential Attacks (Attack A, Attack B, TBD); Potential to Advance to Next Stage; List Based On Risk; Impact Assessment
• Stages: Perception; Comprehension; Anticipation
Approved for Public Release # 07-291

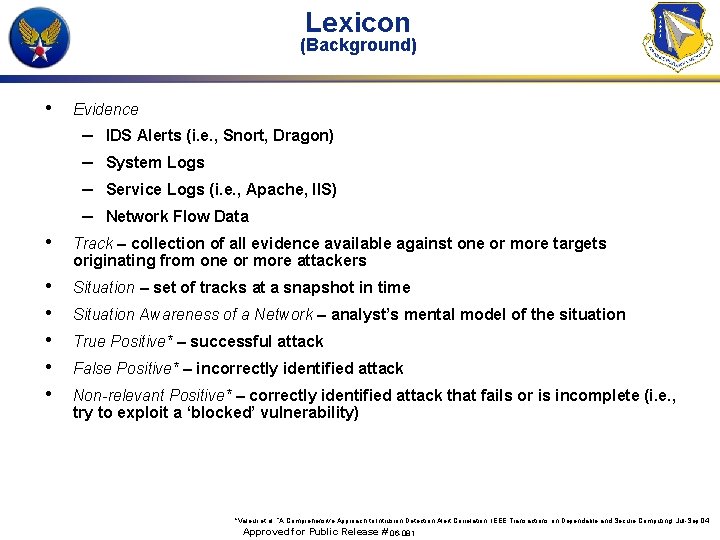

Lexicon (Background)
• Evidence
  – IDS Alerts (i.e., Snort, Dragon)
  – System Logs
  – Service Logs (i.e., Apache, IIS)
  – Network Flow Data
• Track – collection of all evidence available against one or more targets originating from one or more attackers
• Situation – set of tracks at a snapshot in time
• Situation Awareness of a Network – analyst’s mental model of the situation
• True Positive* – successful attack
• False Positive* – incorrectly identified attack
• Non-relevant Positive* – correctly identified attack that fails or is incomplete (i.e., try to exploit a ‘blocked’ vulnerability)
*Valeur et al., “A Comprehensive Approach to Intrusion Detection Alert Correlation,” IEEE Transactions on Dependable and Secure Computing, Jul-Sep 04
Approved for Public Release # 06-081
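The lexicon above can be read as a small data model. The following is a minimal sketch under that reading, not the schema used by the author's tools: every class, field, and function name (`Evidence`, `Track`, `Situation`, `Outcome`, `situation_at`) is hypothetical and chosen only to mirror the slide's definitions.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Set


class Outcome(Enum):
    """Track outcomes, following the Valeur et al. terms quoted on the slide."""
    TRUE_POSITIVE = "successful attack"
    FALSE_POSITIVE = "incorrectly identified attack"
    NON_RELEVANT_POSITIVE = "correctly identified attack that fails or is incomplete"


@dataclass
class Evidence:
    """One observation: an IDS alert, log entry, or flow record."""
    source: str       # e.g. "Snort", "Dragon", "Apache"
    timestamp: float  # seconds since some epoch (illustrative choice)
    attacker: str
    target: str


@dataclass
class Track:
    """All evidence against one or more targets from one or more attackers."""
    evidence: List[Evidence] = field(default_factory=list)
    outcome: Optional[Outcome] = None

    @property
    def attackers(self) -> Set[str]:
        return {e.attacker for e in self.evidence}

    @property
    def targets(self) -> Set[str]:
        return {e.target for e in self.evidence}


@dataclass
class Situation:
    """The set of tracks at a snapshot in time."""
    snapshot_time: float
    tracks: List[Track]


def situation_at(tracks: List[Track], t: float) -> Situation:
    """Keep only evidence seen up to time t; drop tracks with none."""
    snapshot = []
    for track in tracks:
        seen = [e for e in track.evidence if e.timestamp <= t]
        if seen:
            snapshot.append(Track(evidence=seen, outcome=track.outcome))
    return Situation(snapshot_time=t, tracks=snapshot)
```

Situation awareness itself is deliberately absent from the sketch: the slide defines it as the analyst's mental model, which is not a data structure.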

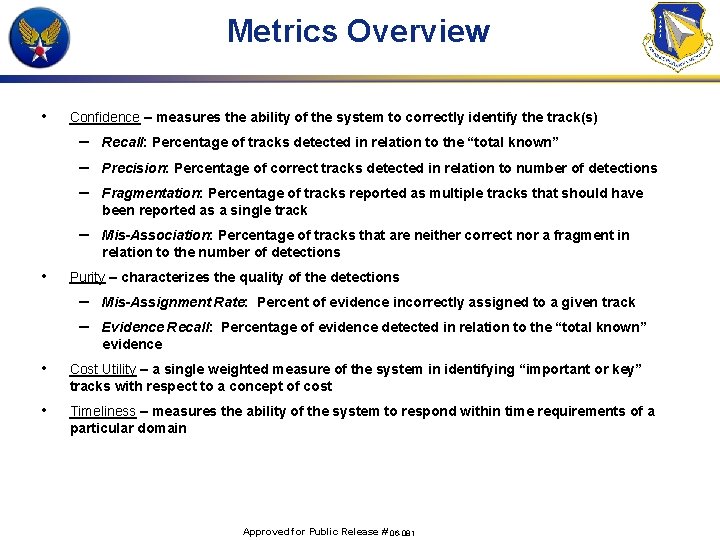

Metrics Overview
• Confidence – measures the ability of the system to correctly identify the track(s)
  – Recall: Percentage of tracks detected in relation to the “total known”
  – Precision: Percentage of correct tracks detected in relation to number of detections
  – Fragmentation: Percentage of tracks reported as multiple tracks that should have been reported as a single track
  – Mis-Association: Percentage of tracks that are neither correct nor a fragment in relation to the number of detections
• Purity – characterizes the quality of the detections
  – Mis-Assignment Rate: Percent of evidence incorrectly assigned to a given track
  – Evidence Recall: Percentage of evidence detected in relation to the “total known” evidence
• Cost Utility – a single weighted measure of the system in identifying “important or key” tracks with respect to a concept of cost
• Timeliness – measures the ability of the system to respond within time requirements of a particular domain
Approved for Public Release # 06-081
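The confidence metrics can be illustrated with simple counts. This is a sketch under stated assumptions rather than the scoring code behind the reported results: it assumes each detection has already been labeled as correct, a fragment, or neither, and it assumes fragmentation uses the number of detections as its denominator, which the slide does not specify. The function name `confidence_metrics` is hypothetical.

```python
from typing import Dict


def confidence_metrics(known_tracks: int, detections: int,
                       correct: int, fragments: int) -> Dict[str, float]:
    """Compute the four confidence metrics from labeled detection counts.

    known_tracks: number of tracks in the ground truth ("total known")
    detections:   number of tracks the system reported
    correct:      reported tracks that match a ground-truth track
    fragments:    reported tracks that are pieces of one ground-truth track
    """
    if known_tracks <= 0 or detections <= 0:
        raise ValueError("known_tracks and detections must be positive")
    if correct + fragments > detections:
        raise ValueError("correct + fragments cannot exceed detections")
    mis_associated = detections - correct - fragments  # neither correct nor fragment
    return {
        "recall": correct / known_tracks,
        "precision": correct / detections,
        "fragmentation": fragments / detections,  # denominator is an assumption
        "mis_association": mis_associated / detections,
    }


# Made-up example counts, for illustration only.
metrics = confidence_metrics(known_tracks=10, detections=8, correct=6, fragments=1)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With the shared denominator assumed here, precision, fragmentation, and mis-association sum to 1, which gives a quick consistency check on any labeled result set.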

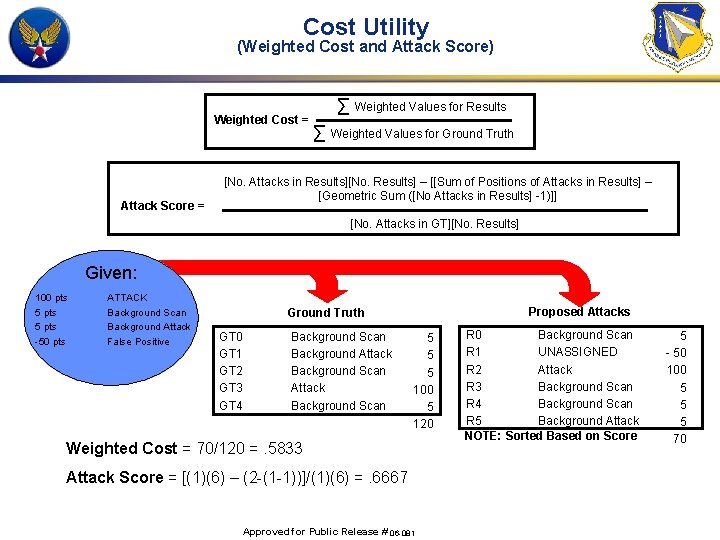

Cost Utility (Weighted Cost and Attack Score)

$$\text{Weighted Cost} = \frac{\sum \text{Weighted Values for Results}}{\sum \text{Weighted Values for Ground Truth}}$$

$$\text{Attack Score} = \frac{[\text{No. Attacks in Results}][\text{No. Results}] - \big[[\text{Sum of Positions of Attacks in Results}] - [\text{Geometric Sum}([\text{No. Attacks in Results}] - 1)]\big]}{[\text{No. Attacks in GT}][\text{No. Results}]}$$

Given:
• ATTACK: 100 pts
• Background Scan, Background Attack: 5 pts
• False Positive: -50 pts

Ground Truth (weighted values): GT0 = 5, GT1 = 5, GT2 = 5, GT3 = 100, GT4 = 5; total = 120

Proposed Attacks (results; NOTE: sorted based on score):
• R0: Background Scan, 5
• R1: UNASSIGNED, -50
• R2: Attack, 100
• R3: Background Scan, 5
• R4: Background Scan, 5
• R5: Background Attack, 5
• Total = 70

Weighted Cost = 70/120 = .5833
Attack Score = [(1)(6) – (2 – (1 – 1))]/(1)(6) = .6667
Approved for Public Release # 06-081
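The worked example on this slide can be reproduced in a few lines. The sketch below is illustrative and rests on two assumptions not stated on the slide: result positions are counted from 0 (so the attack at R2 has position 2), and "Geometric Sum(k)" means 0 + 1 + … + k, the smallest possible sum of positions. The slide's example has a single attack, so it cannot confirm the second assumption. The function names are hypothetical.

```python
from typing import List, Sequence


def weighted_cost(result_values: Sequence[float],
                  ground_truth_values: Sequence[float]) -> float:
    """Sum of weighted result values over sum of weighted ground-truth values."""
    return sum(result_values) / sum(ground_truth_values)


def position_sum(k: int) -> int:
    """Assumed meaning of the slide's 'Geometric Sum(k)': 0 + 1 + ... + k."""
    return k * (k + 1) // 2 if k > 0 else 0


def attack_score(attack_positions: List[int], n_results: int,
                 n_attacks_gt: int) -> float:
    """Reward attacks that appear near the top of the ranked result list.

    attack_positions: 0-based ranks of the true attacks within the results
    """
    if n_results <= 0 or n_attacks_gt <= 0:
        raise ValueError("n_results and n_attacks_gt must be positive")
    n_attacks = len(attack_positions)
    # Distance of the attacks from their best possible (top) positions.
    penalty = sum(attack_positions) - position_sum(n_attacks - 1)
    return (n_attacks * n_results - penalty) / (n_attacks_gt * n_results)


# Values from the slide: results R0..R5 and ground truth GT0..GT4.
results = [5, -50, 100, 5, 5, 5]
ground_truth = [5, 5, 5, 100, 5]

print(round(weighted_cost(results, ground_truth), 4))                     # 0.5833
print(round(attack_score([2], n_results=6, n_attacks_gt=1), 4))           # 0.6667
```

Under these assumptions, an attack ranked first in the list would score 1.0, and the score drops as attacks sink below background traffic and false positives.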

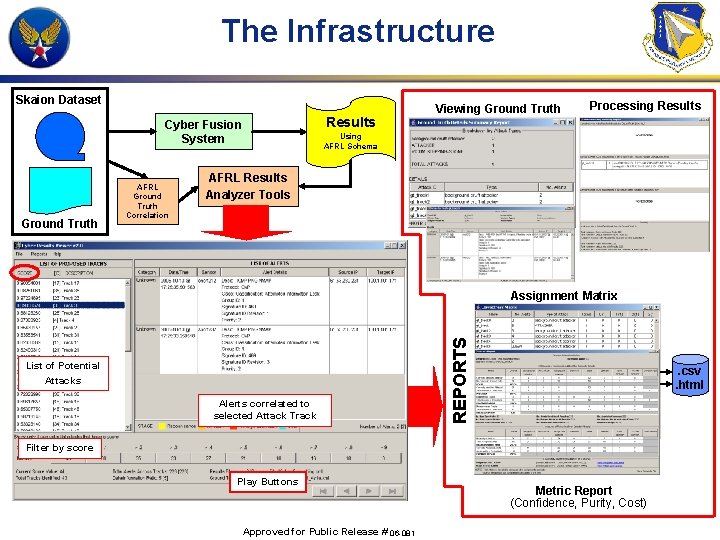

The Infrastructure
Diagram labels:
• Inputs: Skaion Dataset; Ground Truth; Cyber Fusion System; Results
• Processing: Ground Truth Processing (AFRL Ground Truth); Results Using AFRL Schema (AFRL Results); Correlation; Analyzer Tools
• Viewing: List of Potential Attacks; Alerts correlated to selected Attack Track; Filter by score; Play Buttons
• REPORTS: Assignment Matrix (.csv, .html); Metric Report (Confidence, Purity, Cost)
Approved for Public Release # 06-081

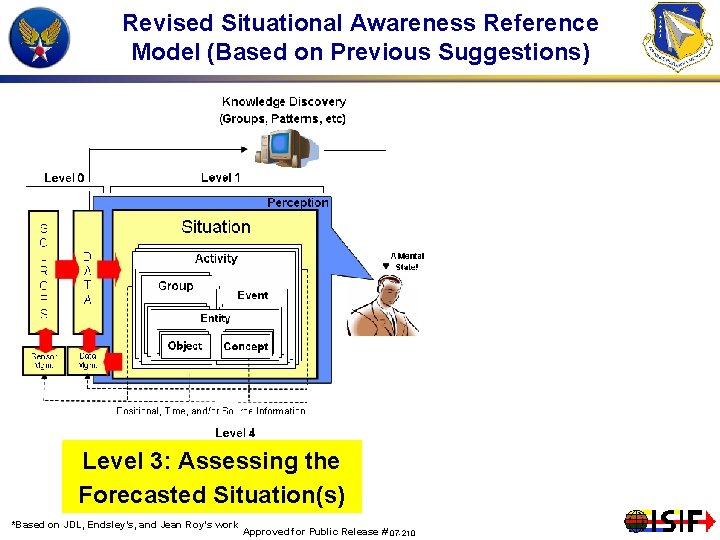

Work has Raised Many Questions … Resulting in Few Answers
• Where do groups, events, activities fit in?
  – Can we not track a group, an activity? (Why only Objects?)
  – Is a group or activity only a complex object?
• What is a Situation? Is there more than one? Is it Context-based?
• What about forecasting or projecting the “future” state?
• Where does Knowledge Discovery exist? Forensics?
• What is Situation Assessment?
• Is Threat Assessment only of the future? What about current threat?
No one model answers ALL of these questions, or even addresses them!
Approved for Public Release # 07-210

…so Then What
• Treating Situation as a composite of activities and tracking activities as complex objects allows for a “cleaner” distinction between fusion levels
  – Situation(s) -> Activity(s) -> Group(s)/Entity(s) -> Event(s): These are ALL OBJECTS THAT CAN BE TRACKED
  – Object Assessment has really been performing Tracking & Identification
  – LET’S TRACK ALL TYPES OF OBJECTS
• Knowledge Discovery and a priori knowledge are necessary and integral to building “complex” objects (e.g., Groups, Activities)
  – Updating knowledge/relationships (models) is continuous and part of the refinement process
• Define Situation Assessment based on Jean Roy’s Definition for Situational Analysis:
  – Behavior Analysis
  – Intent Analysis
  – Capacity/Capability Analysis
  – Threat Analysis
  – Activity Level Analysis
  – Salience Analysis
  – Impact Analysis
Approved for Public Release # 07-210

…and
• Use Time to distinguish between JDL Level 2 and 3, as Endsley’s model does between comprehension and projection
  – The same analysis is done for both levels; the only difference is time
  – Thus JDL Level 2 is the assessment of the “current situation” and JDL Level 3 is the assessment of the current situation projected forward in time
• Process Refinement involves not only sensor movement/collection (sensor management) BUT also fusion algorithm management (which algorithms and which parameters to use) and model management from ALL processes. Possible sources of refinement include:
  – L1: Prediction of where an object is moving/next event
  – L2: Missing data, increase certainty of current assessments
  – L3: Forecasted actions/placement to pre-position sensors
Approved for Public Release # 07-210

Revised Situational Awareness Reference Model (Based on Previous Suggestions)
• Level 1: Object Tracking and Identification
• Level 2: Assessing the Current Situation(s)
• Level 3: Assessing the Forecasted Situation(s)
*Based on JDL, Endsley’s, and Jean Roy’s work
Approved for Public Release # 07-210

Wrap Up
• We proposed a revised Reference Model that includes many of the lessons learned to date
• Plans are to continue to apply this revised model to Air, Cyber and Space Situation Awareness
  – UNIVERSAL SITUATION AWARENESS
  – …with emphasis on current and forecasted situation assessment
Approved for Public Release # 07-210
