Information Extraction with Tree Automata Induction Raymond Kosala


- Slides: 24

Information Extraction with Tree Automata Induction

Raymond Kosala¹, Jan Van den Bussche², Maurice Bruynooghe¹, Hendrik Blockeel¹

¹ Katholieke Universiteit Leuven, Belgium; ² University of Limburg, Belgium

Outline

- Introduction
  - Information extraction (IE)
  - Grammatical inference
- Approach: k-testable and g-testable algorithms
- Preliminary results
- Further work

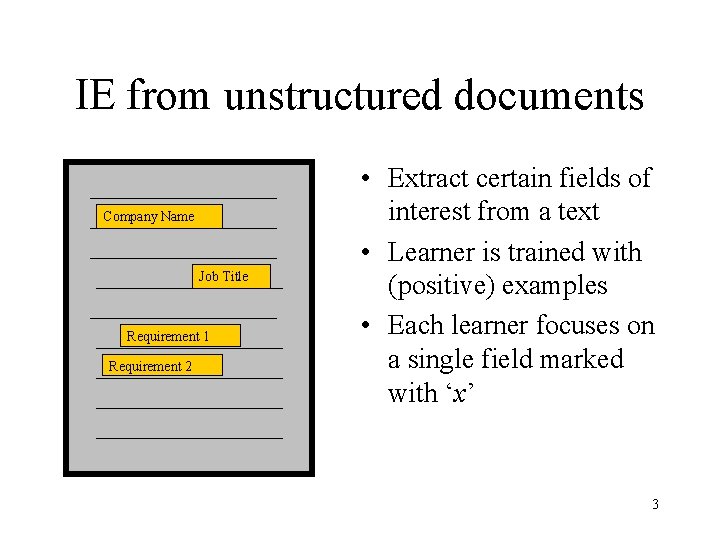

IE from unstructured documents

(Figure: a text in which the fields Company Name, Job Title, Requirement 1 and Requirement 2 are marked.)

- Extract certain fields of interest from a text.
- The learner is trained with (positive) examples.
- Each learner focuses on a single field, marked with ‘x’.

Grammatical inference

- $\Sigma$: a finite alphabet; $L \subseteq \Sigma^*$: a regular language
- Given: a set of examples (positive or negative)
- Task: infer a DFA compatible with the examples
- Quality criteria:
  - exact learning in the limit
  - PAC
  - etc.
- There is a large body of work on this problem.
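As an illustration of the inference task (a standard construction, not one these slides specify), the prefix-tree acceptor is the usual starting point for learning a DFA from positive examples: it is a DFA that accepts exactly the sample, which state-merging algorithms then generalize. A minimal Python sketch; the function names are hypothetical:

```python
from typing import Dict, Iterable, Set, Tuple

Delta = Dict[Tuple[int, str], int]

def prefix_tree_acceptor(positives: Iterable[str]) -> Tuple[Delta, Set[int]]:
    """Build a DFA (state 0 = empty prefix) that accepts exactly the given strings."""
    delta: Delta = {}
    accepting: Set[int] = set()
    next_state = 1
    for word in positives:
        state = 0
        for symbol in word:
            if (state, symbol) not in delta:   # new prefix: add a fresh state
                delta[(state, symbol)] = next_state
                next_state += 1
            state = delta[(state, symbol)]
        accepting.add(state)                   # the whole example ends here
    return delta, accepting

def accepts(delta: Delta, accepting: Set[int], word: str) -> bool:
    state = 0
    for symbol in word:
        if (state, symbol) not in delta:
            return False                       # missing transition: reject
        state = delta[(state, symbol)]
    return state in accepting

delta, acc = prefix_tree_acceptor(["ab", "abb", "b"])
print(accepts(delta, acc, "abb"), accepts(delta, acc, "ba"))  # True False
```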

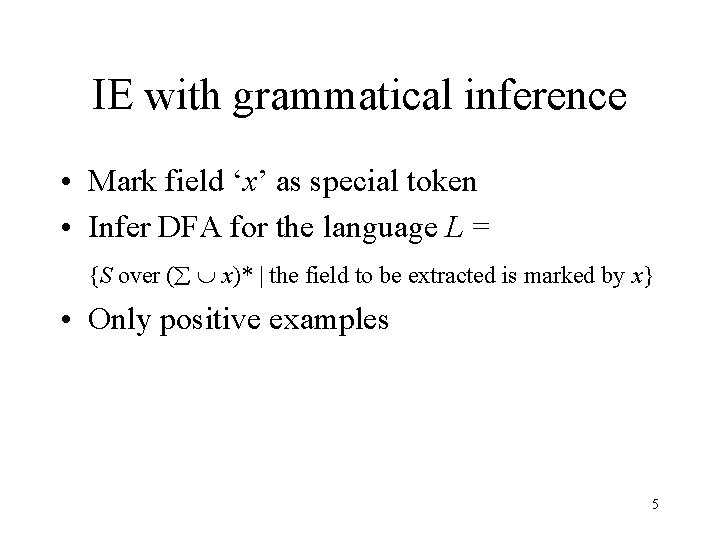

IE with grammatical inference

- Mark the field to be extracted with a special token ‘x’.
- Infer a DFA for the language $L = \{ s \in (\Sigma \cup \{x\})^* \mid \text{the field to be extracted is marked by } x \}$.
- Only positive examples are used.
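A minimal sketch of the marking step, paired with one simple positive-only learner chosen here purely for illustration (it is not the method of the earlier work these slides refer to): strictly k-testable string languages, which keep only the allowed (k-1)-prefixes, (k-1)-suffixes and k-grams of the marked examples. The names `mark_field`, `learn_k_testable` and `accepts`, and the token sequences, are hypothetical.

```python
from typing import List, Sequence, Set, Tuple

MARK = "x"  # special token standing for the field to extract

Gram = Tuple[str, ...]
Model = Tuple[Set[Gram], Set[Gram], Set[Gram], Set[Gram]]

def mark_field(tokens: Sequence[str], field_index: int) -> List[str]:
    """Replace the token at field_index (the field of interest) by the mark x."""
    return [MARK if i == field_index else tok for i, tok in enumerate(tokens)]

def learn_k_testable(examples: Sequence[Sequence[str]], k: int) -> Model:
    """Collect allowed (k-1)-prefixes, (k-1)-suffixes and k-grams (assumes k >= 2)."""
    prefixes, suffixes, kgrams, short = set(), set(), set(), set()
    for example in examples:
        w = tuple(example)
        if len(w) < k:                 # too short to contain a k-gram: store it whole
            short.add(w)
            continue
        prefixes.add(w[:k - 1])
        suffixes.add(w[len(w) - k + 1:])
        kgrams.update(w[i:i + k] for i in range(len(w) - k + 1))
    return prefixes, suffixes, kgrams, short

def accepts(model: Model, example: Sequence[str], k: int) -> bool:
    prefixes, suffixes, kgrams, short = model
    w = tuple(example)
    if len(w) < k:
        return w in short
    return (w[:k - 1] in prefixes
            and w[len(w) - k + 1:] in suffixes
            and all(w[i:i + k] in kgrams for i in range(len(w) - k + 1)))

docs = [["name", ":", "Alice", "phone", ":", "123"],
        ["name", ":", "Bob", "phone", ":", "456"]]
model = learn_k_testable([mark_field(d, 2) for d in docs], k=2)
print(accepts(model, ["name", ":", "x", "phone", ":", "456"], k=2))    # True
print(accepts(model, ["name", ":", "Carol", "phone", ":", "x"], k=2))  # False
```

Because only local windows are stored, the learned language also contains strings that never occurred in the sample, which is the kind of generalization grammatical inference aims for.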

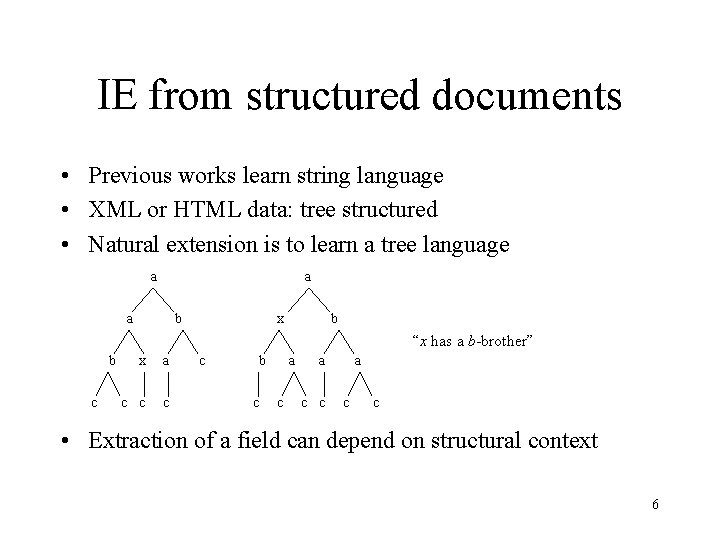

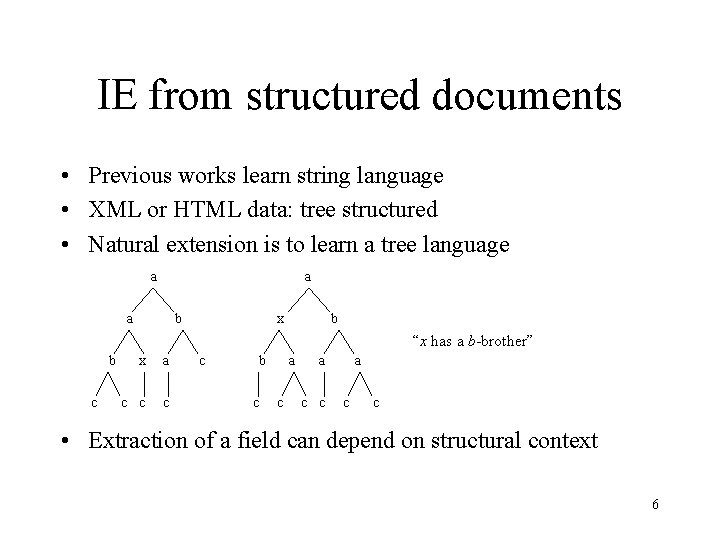

IE from structured documents

- Previous work learns string languages.
- XML and HTML data are tree-structured.
- A natural extension is to learn a tree language, e.g. “x has a b-brother”. (Figure: example trees over the labels a, b, c and x.)
- Extraction of a field can depend on its structural context.
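The tree language “x has a b-brother” can be checked with a few lines of Python. The tuple encoding of trees and the function name are illustrative assumptions. Both example trees below contain a b and an x, but only in the first are they siblings.

```python
from typing import Tuple

Tree = Tuple  # (label, child_1, ..., child_n); a leaf is (label,)

def x_has_b_brother(t: Tree) -> bool:
    """True iff some node labelled x has a sibling labelled b."""
    child_labels = [child[0] for child in t[1:]]
    if "x" in child_labels and "b" in child_labels:
        return True
    return any(x_has_b_brother(child) for child in t[1:])

yes = ("a", ("b",), ("c", ("x",), ("b",)))  # x and b share the parent c
no = ("a", ("b",), ("c", ("x",), ("a",)))   # the only b is a sibling of x's parent
print(x_has_b_brother(yes), x_has_b_brother(no))  # True False
```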

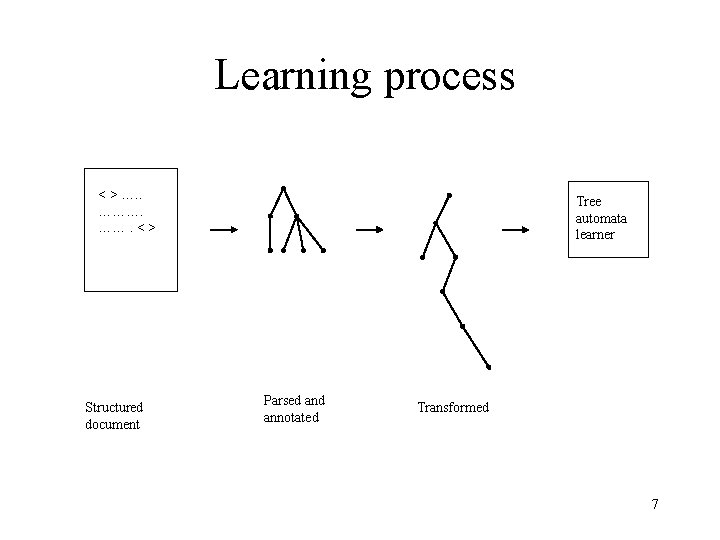

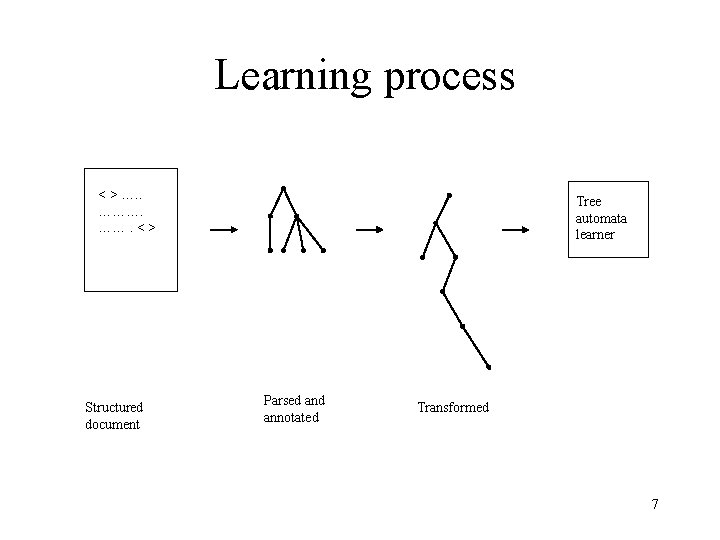

Learning process

(Figure: structured document → parsed → annotated → transformed → tree automata learner.)
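The parse and annotate steps might look like the sketch below, which uses Python's `xml.etree.ElementTree`. The conversion convention is an assumption, not taken from the slides: the text equal to the target value becomes the leaf `x`, text listed in `keep` (a hypothetical stand-in for the distinguishing context) stays as its own label, and every other text node becomes a `CDATA` leaf. The "transformed" step (binary encoding) is not shown.

```python
import xml.etree.ElementTree as ET
from typing import FrozenSet, Tuple

Tree = Tuple  # (label, child_1, ..., child_n); a leaf is (label,)

def to_tree(elem: ET.Element, target: str, keep: FrozenSet[str] = frozenset()) -> Tree:
    """Turn an element into a labelled tree, annotating the target text as 'x'."""
    def text_leaf(text: str) -> Tree:
        text = text.strip()
        if text == target:
            return ("x",)        # the field of interest
        if text in keep:
            return (text,)       # distinguishing context kept verbatim
        return ("CDATA",)        # any other text node

    children = []
    if elem.text and elem.text.strip():
        children.append(text_leaf(elem.text))
    for child in elem:
        children.append(to_tree(child, target, keep))
        if child.tail and child.tail.strip():  # text that follows a child element
            children.append(text_leaf(child.tail))
    return (elem.tag,) + tuple(children)

doc = "<tr><td>organization</td><td><b>ABC</b></td></tr>"
print(to_tree(ET.fromstring(doc), target="ABC", keep=frozenset({"organization"})))
# ('tr', ('td', ('organization',)), ('td', ('b', ('x',))))
```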

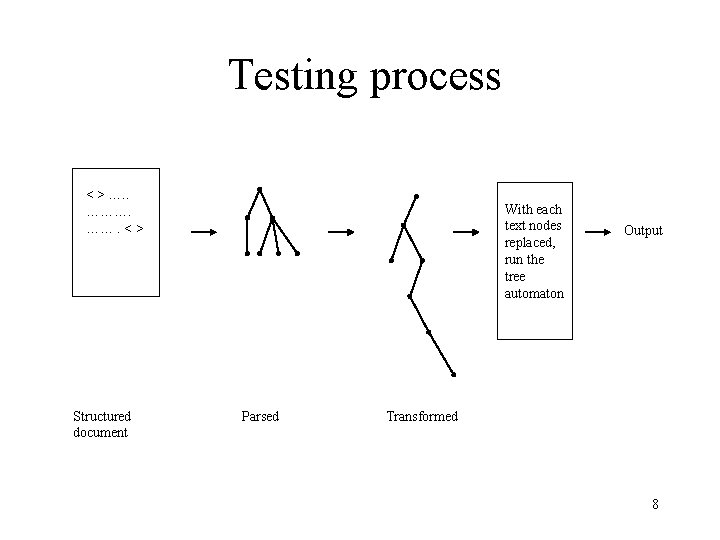

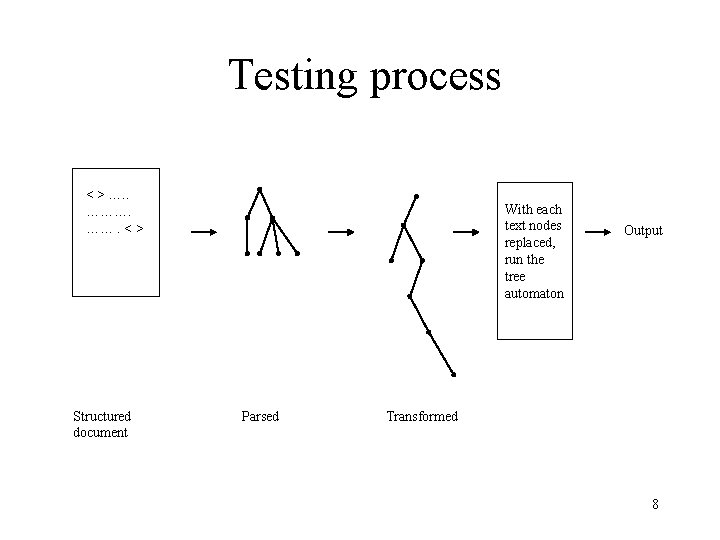

Testing process

(Figure: structured document → parsed → transformed → run the tree automaton with each text node replaced in turn by x → output.)
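The testing loop can be sketched as follows, assuming the learned automaton is available as a boolean function on trees. Trees are nested tuples with text leaves as plain strings; `toy_accepts` is a hand-written, hypothetical stand-in for a learned automaton (it accepts when x is in bold in the cell right after an `organization` cell). Any further transformation, such as binary encoding, is assumed to happen inside the acceptor.

```python
from typing import Callable, List, Tuple, Union

Node = Union[str, Tuple]  # element: (label, child_1, ...); text leaf: plain str
Path = Tuple[int, ...]

def text_paths(t: Node, path: Path = ()) -> List[Path]:
    """Positions (child indices from the root) of all text leaves, in document order."""
    if isinstance(t, str):
        return [path]
    return [p for i, c in enumerate(t[1:], start=1) for p in text_paths(c, path + (i,))]

def get_at(t: Node, path: Path) -> Node:
    for i in path:
        t = t[i]
    return t

def replace_at(t: Node, path: Path, new: Node) -> Node:
    if not path:
        return new
    i = path[0]
    return t[:i] + (replace_at(t[i], path[1:], new),) + t[i + 1:]

def extract(doc: Node, accepts: Callable[[Node], bool]) -> List[str]:
    """Replace each text node by 'x' in turn; keep the texts for which the automaton accepts."""
    return [get_at(doc, p) for p in text_paths(doc) if accepts(replace_at(doc, p, "x"))]

def toy_accepts(t: Node) -> bool:
    """Hypothetical stand-in: x in bold right after an 'organization' cell."""
    if isinstance(t, str):
        return False
    kids = t[1:]
    if any(a == ("td", "organization") and b == ("td", ("b", "x"))
           for a, b in zip(kids, kids[1:])):
        return True
    return any(toy_accepts(c) for c in kids)

doc = ("table",
       ("tr", ("td", "lastupdate"), ("td", ("b", "12/4/98"))),
       ("tr", ("td", "organization"), ("td", ("b", "ABC"))))
print(extract(doc, toy_accepts))  # ['ABC']
```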

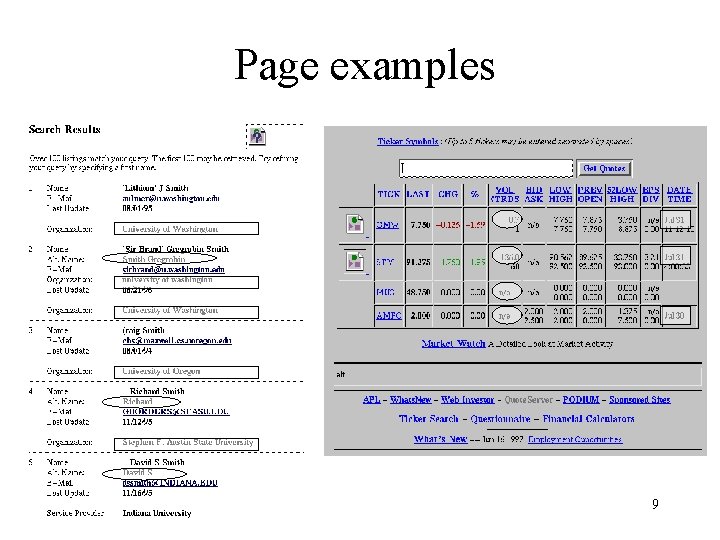

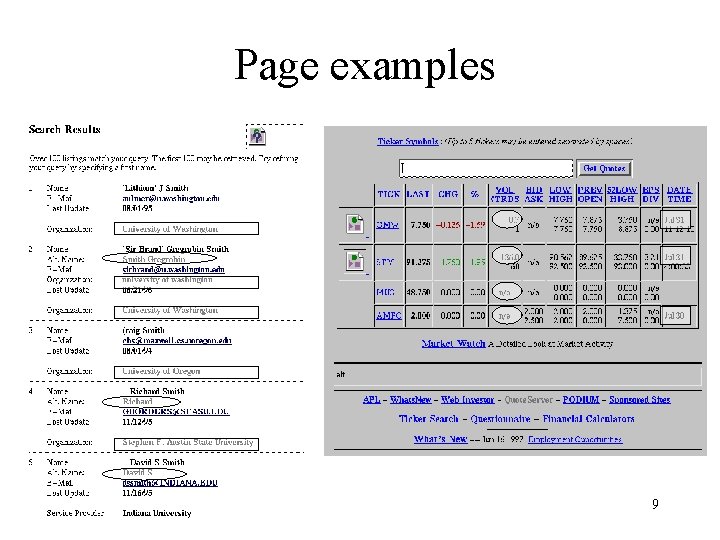

Page examples

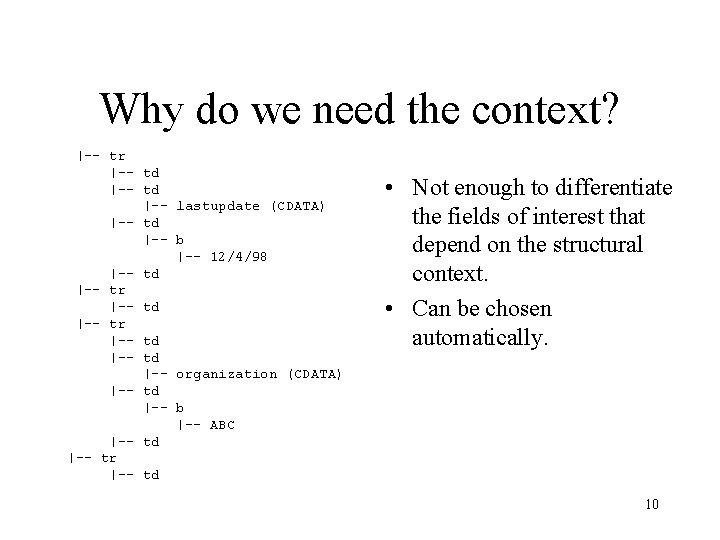

Why do we need the context?

- tr
  - td
    - lastupdate (CDATA)
  - td
    - b
      - 12/4/98
  - td
- tr
  - td
    - organization (CDATA)
  - td
    - b
      - ABC
  - td
- tr
  - td

- The tree structure alone is not enough to differentiate fields of interest that depend on their context: 12/4/98 and ABC occupy the same position (tr/td/b), and only the text of the neighbouring cell (lastupdate, organization) tells them apart.
- This distinguishing context can be chosen automatically.
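A small illustration of the point, using hypothetical rows modelled on the tree above (text leaves as plain strings): once every text node is abstracted to `CDATA`, the two rows become identical, and only keeping the context words makes them differ. Here the context set `ctx` is given by hand rather than chosen automatically.

```python
from typing import FrozenSet, Tuple, Union

Node = Union[str, Tuple]  # element: (label, child_1, ...); text leaf: plain str

def abstract(t: Node, keep: FrozenSet[str] = frozenset()) -> Node:
    """Replace every text leaf by 'CDATA' unless it is in the context set keep."""
    if isinstance(t, str):
        return t if t in keep else "CDATA"
    return (t[0],) + tuple(abstract(c, keep) for c in t[1:])

row_date = ("tr", ("td", "lastupdate"), ("td", ("b", "12/4/98")), ("td",))
row_org = ("tr", ("td", "organization"), ("td", ("b", "ABC")), ("td",))

print(abstract(row_date) == abstract(row_org))            # True: same structure
ctx = frozenset({"lastupdate", "organization"})
print(abstract(row_date, ctx) == abstract(row_org, ctx))  # False: context differs
```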

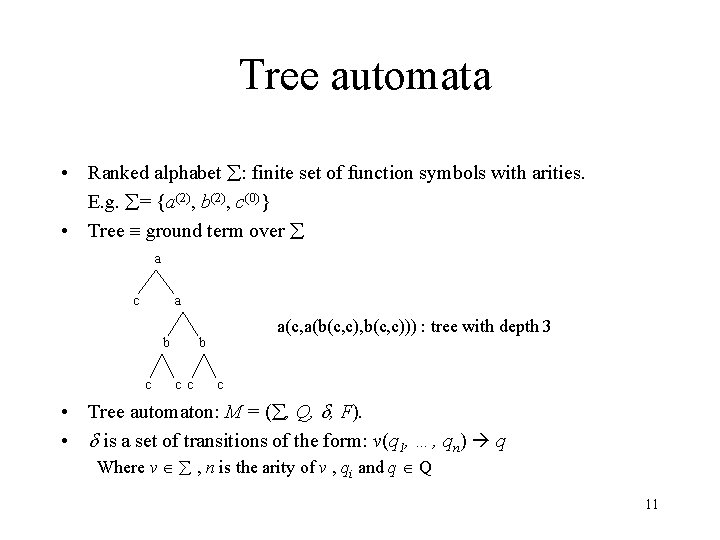

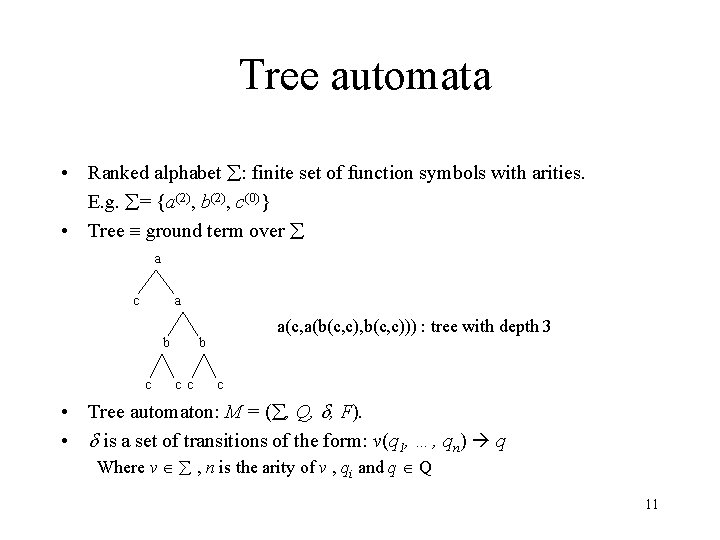

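Tree automata

- Ranked alphabet $\Sigma$: a finite set of function symbols with arities, e.g. $\Sigma = \{a(2), b(2), c(0)\}$.
- A tree is a ground term over $\Sigma$, e.g. $a(c, a(b(c, c), b(c, c)))$, a tree of depth 3.
- Tree automaton: $M = (\Sigma, Q, \Delta, F)$, with a finite set of states $Q$ and a set of final states $F \subseteq Q$.
- $\Delta$ is a set of transitions of the form $v(q_1, \ldots, q_n) \to q$, where $v \in \Sigma$, $n$ is the arity of $v$, and $q_1, \ldots, q_n, q \in Q$.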
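A minimal sketch that parses the ground term above into nested tuples, checks it against the ranked alphabet $\Sigma = \{a(2), b(2), c(0)\}$, and computes its depth (a leaf has depth 0). The helper names are hypothetical.

```python
import re
from typing import Dict, List, Tuple

Tree = Tuple  # (symbol, child_1, ..., child_n)

def parse_term(s: str) -> Tree:
    """Parse a ground term such as 'a(c, a(b(c, c), b(c, c)))' into nested tuples."""
    tokens: List[str] = re.findall(r"[A-Za-z_][A-Za-z0-9_]*|[(),]", s)
    pos = 0

    def parse() -> Tree:
        nonlocal pos
        symbol = tokens[pos]
        pos += 1
        children = []
        if pos < len(tokens) and tokens[pos] == "(":
            pos += 1                      # skip '('
            children.append(parse())
            while tokens[pos] == ",":
                pos += 1
                children.append(parse())
            pos += 1                      # skip ')'
        return (symbol,) + tuple(children)

    return parse()

def depth(t: Tree) -> int:
    return 0 if len(t) == 1 else 1 + max(depth(c) for c in t[1:])

def well_ranked(t: Tree, arity: Dict[str, int]) -> bool:
    """Check that every symbol is used with exactly its declared arity."""
    return arity.get(t[0]) == len(t) - 1 and all(well_ranked(c, arity) for c in t[1:])

SIGMA = {"a": 2, "b": 2, "c": 0}
t = parse_term("a(c, a(b(c, c), b(c, c)))")
print(depth(t), well_ranked(t, SIGMA))  # 3 True
```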

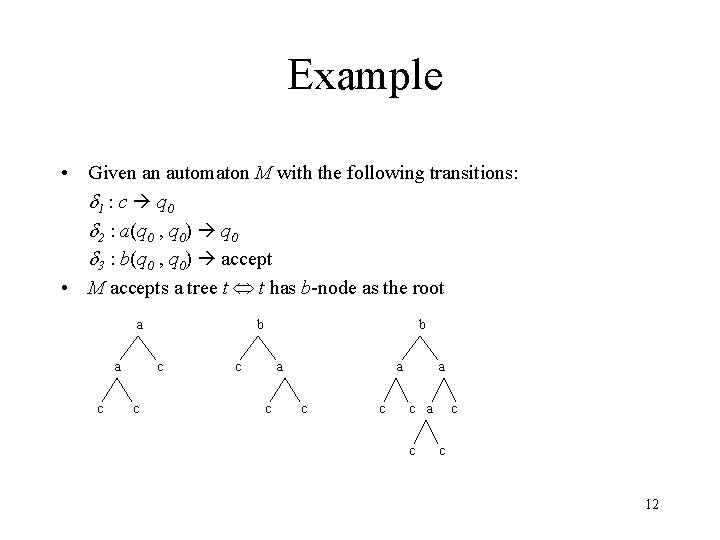

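Example

- Given an automaton M with the following transitions:
  1. $c \to q_0$
  2. $a(q_0, q_0) \to q_0$
  3. $b(q_0, q_0) \to \mathit{accept}$
- With accept as the final state, M accepts a tree t iff the root of t is labelled b and no other node is: since no transition takes accept as a child state, a b-node anywhere below the root blocks the run.

(Figure: example trees over a, b and c.)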
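The automaton M can be run bottom-up as in the sketch below: each node receives a state from the transition table given its children's states, and the tree is accepted if the root's state is final. Treating `accept` as the only final state is how this sketch reads the slide; the names are hypothetical.

```python
from typing import Dict, Optional, Tuple

Tree = Tuple  # (symbol, child_1, ..., child_n)

# Transitions of M, keyed by (symbol, child states).
DELTA: Dict[Tuple[str, Tuple[str, ...]], str] = {
    ("c", ()): "q0",
    ("a", ("q0", "q0")): "q0",
    ("b", ("q0", "q0")): "accept",
}
FINAL = {"accept"}

def run(t: Tree) -> Optional[str]:
    """State reached at the root of t, or None if some node has no transition."""
    child_states = tuple(run(c) for c in t[1:])
    if None in child_states:
        return None
    return DELTA.get((t[0], child_states))

def accepts(t: Tree) -> bool:
    return run(t) in FINAL

print(accepts(("b", ("a", ("c",), ("c",)), ("c",))))  # True
```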

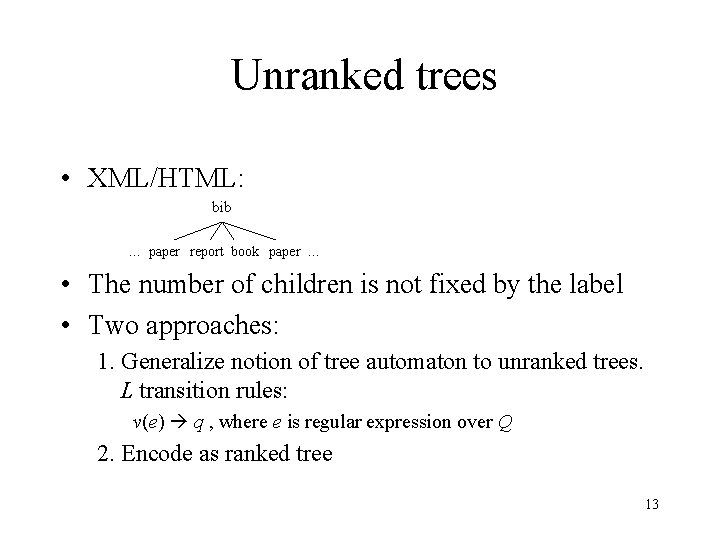

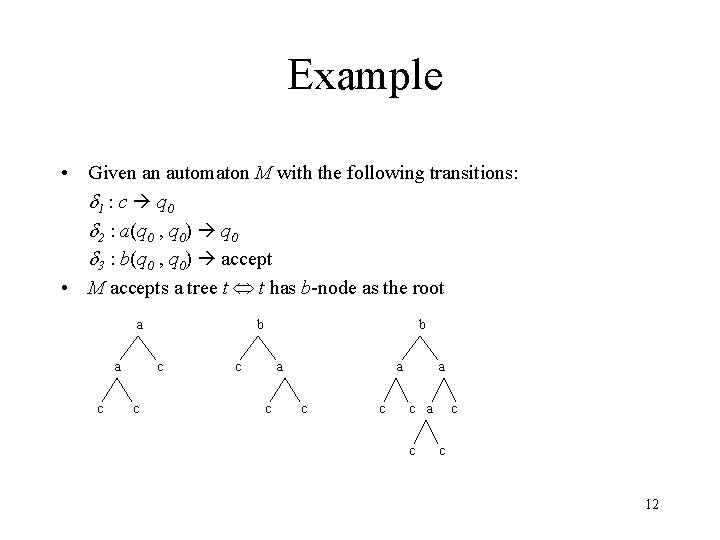

Unranked trees

- XML/HTML, e.g. a bib element whose children are paper, report, book, paper, …
- The number of children is not fixed by the label.
- Two approaches:
  1. Generalize the notion of tree automaton to unranked trees, with transition rules $v(e) \to q$, where $e$ is a regular expression over $Q$.
  2. Encode unranked trees as ranked trees.
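Approach 1 can be sketched with Python's `re` module: compute the states of the children, concatenate them, and match the result against the regular expression e of a rule v(e) → q. The rules below are hypothetical, and each state is a single character so that a sequence of child states is simply a string.

```python
import re
from typing import List, Optional, Tuple

Tree = Tuple  # (label, child_1, ..., child_n); any number of children

# Hypothetical rules (v, e, q) meaning v(e) -> q, with single-character states.
RULES: List[Tuple[str, str, str]] = [
    ("paper", "", "P"),       # paper() -> P
    ("report", "", "R"),
    ("book", "", "K"),
    ("bib", "[PRK]*", "B"),   # bib((P|R|K)*) -> B
]
FINAL = {"B"}

def run(t: Tree) -> Optional[str]:
    child_states = [run(c) for c in t[1:]]
    if None in child_states:
        return None
    word = "".join(child_states)       # the children's states as a string over Q
    for label, regex, state in RULES:
        if label == t[0] and re.fullmatch(regex, word):
            return state               # first matching rule wins
    return None

def accepts(t: Tree) -> bool:
    return run(t) in FINAL

doc = ("bib", ("paper",), ("report",), ("book",), ("paper",))
print(accepts(doc), accepts(("bib", ("bib",))))  # True False
```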

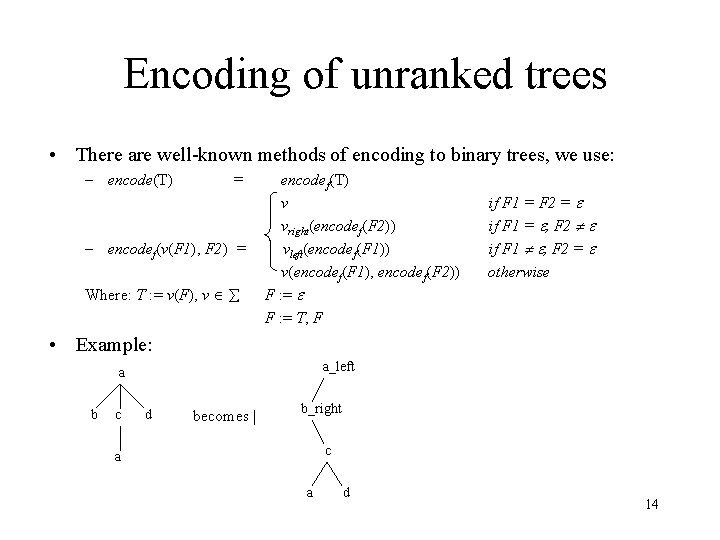

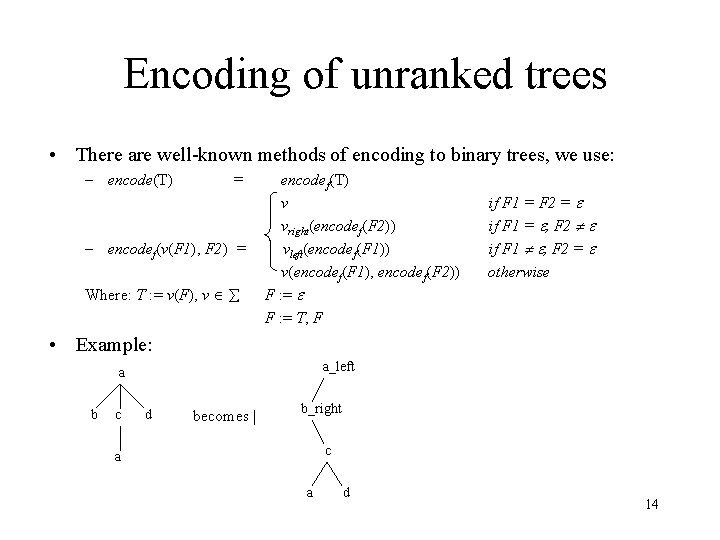

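Encoding of unranked trees

- There are well-known methods for encoding unranked trees as binary trees. We use the following, where $T ::= v(F)$ with $v \in \Sigma$, and $F ::= \varepsilon \mid T, F$ is a (possibly empty) forest:

$$\mathrm{encode}(T) = \mathrm{encode}_f(T)$$

$$\mathrm{encode}_f(v(F_1), F_2) = \begin{cases} v & \text{if } F_1 = F_2 = \varepsilon \\ v_{\mathrm{right}}(\mathrm{encode}_f(F_2)) & \text{if } F_1 = \varepsilon,\ F_2 \neq \varepsilon \\ v_{\mathrm{left}}(\mathrm{encode}_f(F_1)) & \text{if } F_1 \neq \varepsilon,\ F_2 = \varepsilon \\ v(\mathrm{encode}_f(F_1), \mathrm{encode}_f(F_2)) & \text{otherwise} \end{cases}$$

- Example: (Figure: an unranked tree over the labels a, b, c, d and its binary encoding, whose nodes carry labels such as a_left and b_right.)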
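The encoding can be transcribed directly into Python. This sketch represents an unranked tree as `(label, child_1, ..., child_n)` and a forest as a tuple of trees; the labels `v_left` and `v_right` follow the `a_left`/`b_right` naming of the example. The function names are hypothetical.

```python
from typing import Tuple

Tree = Tuple    # unranked: (label, child_1, ..., child_n)
Forest = Tuple  # a non-empty tuple of trees

def encode_forest(forest: Forest) -> Tree:
    """encode_f(v(F1), F2): the first tree is v(F1), the remaining trees form F2."""
    first, rest = forest[0], forest[1:]
    v, kids = first[0], first[1:]
    if not kids and not rest:
        return (v,)
    if not kids:
        return (v + "_right", encode_forest(rest))
    if not rest:
        return (v + "_left", encode_forest(kids))
    return (v, encode_forest(kids), encode_forest(rest))

def encode(t: Tree) -> Tree:
    """encode(T) = encode_f(T), viewing T as a one-tree forest."""
    return encode_forest((t,))

print(encode(("a", ("b",), ("c",), ("d",))))
# ('a_left', ('b_right', ('c_right', ('d',))))
```

Every node of the result has zero, one or two children, so the encoded tree can be handled by an ordinary ranked tree automaton.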

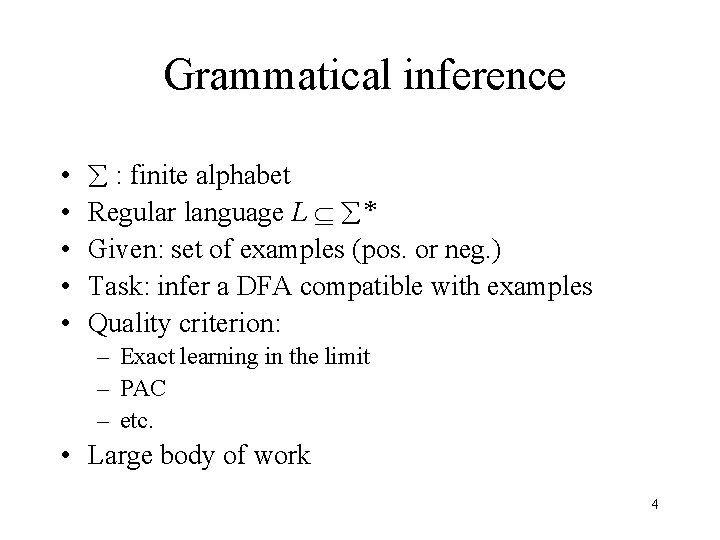

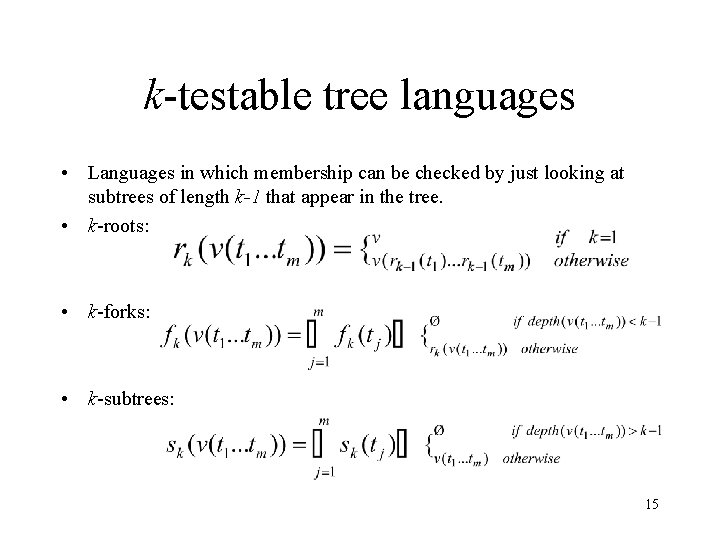

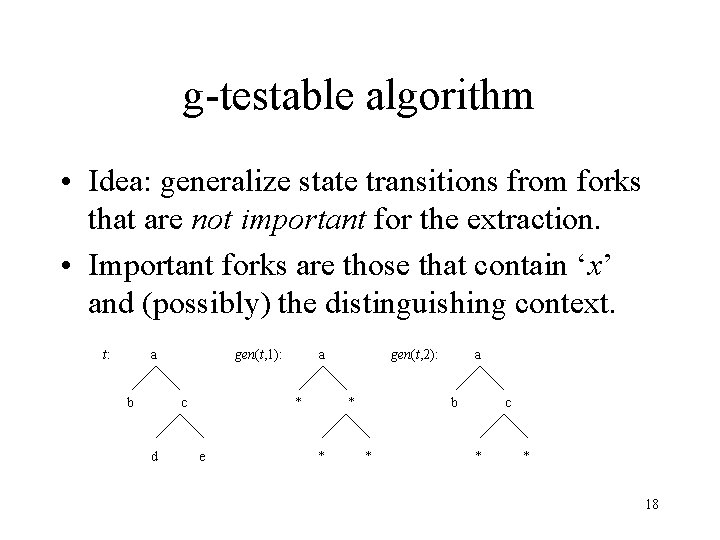

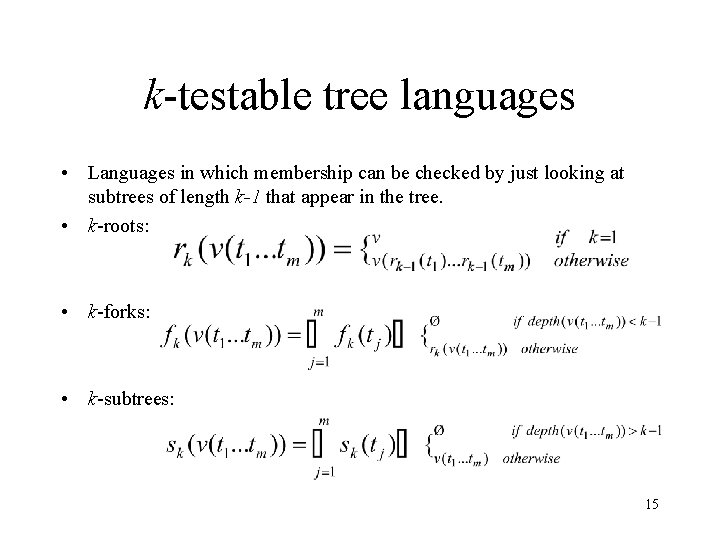

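k-testable tree languages

- Languages in which membership can be checked by looking only at the fragments of depth at most k-1 that appear in the tree.
- k-roots $r_k(t)$: the top k levels of t.
- k-forks $f_k(t)$: the k-roots of all subtrees of t of depth at least k-1.
- k-subtrees $s_k(t)$: all subtrees of t of depth less than k.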

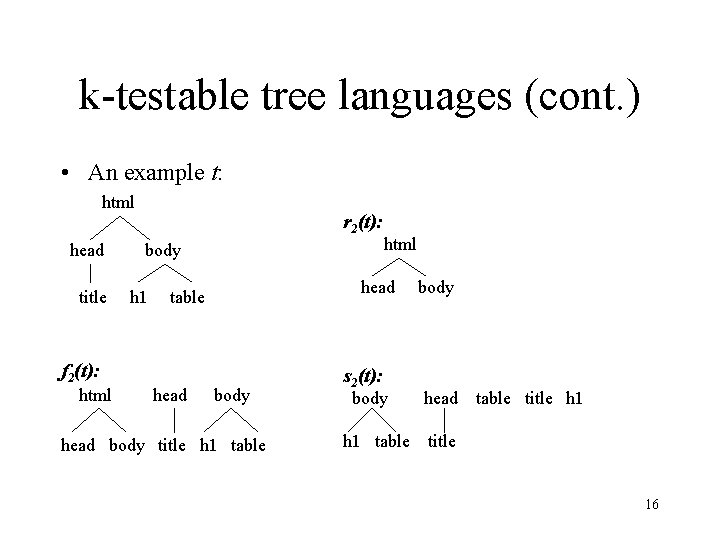

k-testable tree languages (cont.)

- An example tree t = html(head(title), body(h1, table)):
  - $r_2(t)$ = html(head, body)
  - $f_2(t)$ = {html(head, body), head(title), body(h1, table)}
  - $s_2(t)$ = {body(h1, table), head(title), table, title, h1}
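The three operators can be sketched in Python and checked against this example. Trees are nested tuples `(label, child_1, ..., child_n)` and depth counts edges (a leaf has depth 0). The definitions used, read from this example, are: $r_k(t)$ keeps the top k levels of t, $f_k(t)$ collects the k-roots of all subtrees of depth at least k-1, and $s_k(t)$ collects all subtrees of depth less than k. Function names are hypothetical.

```python
from typing import Iterator, Set, Tuple

Tree = Tuple  # (label, child_1, ..., child_n); a leaf is (label,)

def depth(t: Tree) -> int:
    return 0 if len(t) == 1 else 1 + max(depth(c) for c in t[1:])

def subtrees(t: Tree) -> Iterator[Tree]:
    yield t
    for c in t[1:]:
        yield from subtrees(c)

def root(k: int, t: Tree) -> Tree:
    """r_k(t): the top k levels of t."""
    return (t[0],) if k == 1 else (t[0],) + tuple(root(k - 1, c) for c in t[1:])

def forks(k: int, t: Tree) -> Set[Tree]:
    """f_k(t): the k-roots of all subtrees of depth at least k-1."""
    return {root(k, s) for s in subtrees(t) if depth(s) >= k - 1}

def small_subtrees(k: int, t: Tree) -> Set[Tree]:
    """s_k(t): all subtrees of depth less than k."""
    return {s for s in subtrees(t) if depth(s) < k}

t = ("html", ("head", ("title",)), ("body", ("h1",), ("table",)))
print(root(2, t))  # ('html', ('head',), ('body',))
```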

![k-testable algorithm [Rico-Juan, et al.]](https://slidetodoc.com/presentation_image_h2/7f8852deec52e66d50aef06cf819237e/image-17.jpg)

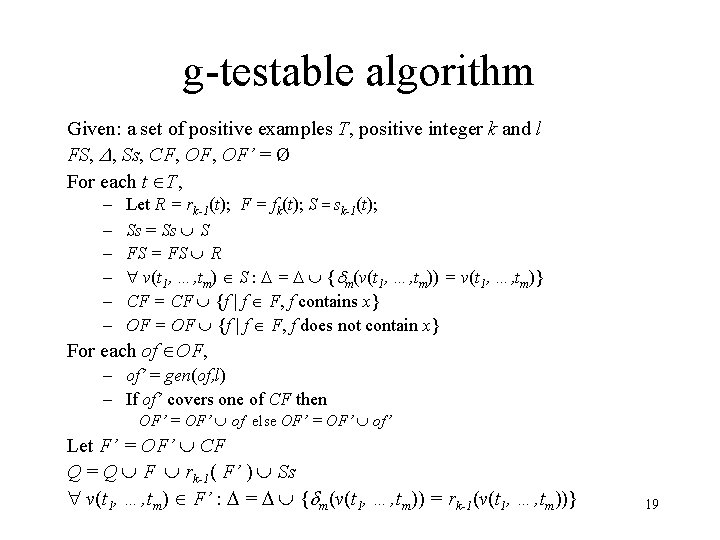

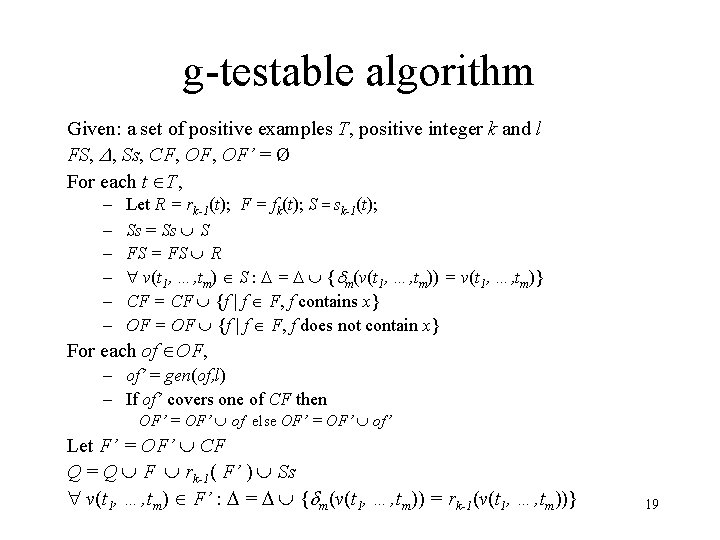

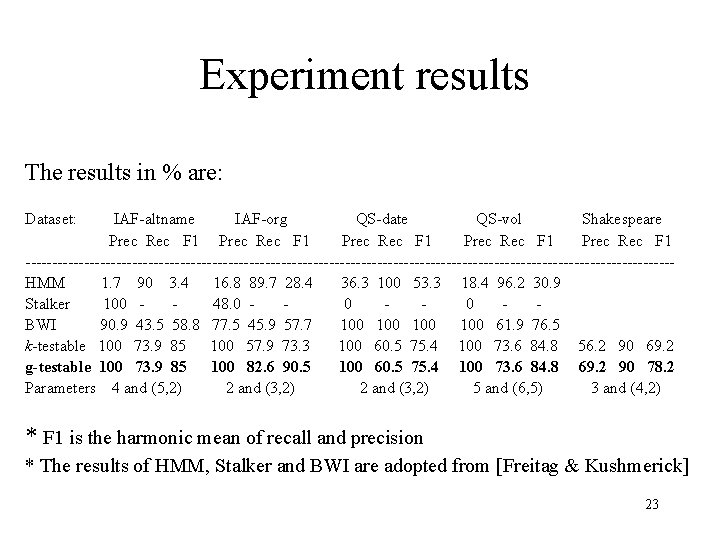

k-testable algorithm [Rico-Juan, et al.]

- Given: a set of positive examples $T$ and a positive integer $k$
- $Q, F_S, \Delta = \emptyset$
- For each $t \in T$:
  - Let $R = r_{k-1}(t)$; $F = f_k(t)$; $S = s_{k-1}(t)$;
  - $Q = Q \cup \{R\} \cup r_{k-1}(F) \cup S$; $F_S = F_S \cup \{R\}$;
  - for each $v(t_1, \ldots, t_m) \in S$: $\delta_m(v(t_1, \ldots, t_m)) = v(t_1, \ldots, t_m)$
  - for each $v(t_1, \ldots, t_m) \in F$: $\delta_m(v(t_1, \ldots, t_m)) = r_{k-1}(v(t_1, \ldots, t_m))$
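A sketch of this algorithm in Python, assuming k ≥ 2 and representing both trees and states as nested tuples; a transition $\delta_m(v(q_1, \ldots, q_m)) = q$ is stored as a dictionary entry keyed by the tree $v(q_1, \ldots, q_m)$. With this construction every node is assigned its (k-1)-root as its state, so a tree is accepted when the state at its root is in $F_S$. Helper names are hypothetical.

```python
from typing import Dict, Iterable, Iterator, Optional, Set, Tuple

Tree = Tuple  # (label, child_1, ..., child_n); states are trees as well
Model = Tuple[Set[Tree], Set[Tree], Dict[Tree, Tree]]

def depth(t: Tree) -> int:
    return 0 if len(t) == 1 else 1 + max(depth(c) for c in t[1:])

def subtrees(t: Tree) -> Iterator[Tree]:
    yield t
    for c in t[1:]:
        yield from subtrees(c)

def root(k: int, t: Tree) -> Tree:
    """r_k(t): the top k levels of t."""
    return (t[0],) if k == 1 else (t[0],) + tuple(root(k - 1, c) for c in t[1:])

def infer_k_testable(examples: Iterable[Tree], k: int) -> Model:
    """Return (Q, F_S, delta) as in the k-testable algorithm; assumes k >= 2."""
    states: Set[Tree] = set()
    final: Set[Tree] = set()
    delta: Dict[Tree, Tree] = {}  # delta[v(q_1, ..., q_m)] = q
    for t in examples:
        R = root(k - 1, t)                                          # r_{k-1}(t)
        F = {root(k, s) for s in subtrees(t) if depth(s) >= k - 1}  # f_k(t)
        S = {s for s in subtrees(t) if depth(s) < k - 1}            # s_{k-1}(t)
        states |= {R} | {root(k - 1, f) for f in F} | S
        final.add(R)
        for s in S:
            delta[s] = s               # a small subtree is its own state
        for f in F:
            delta[f] = root(k - 1, f)  # a fork leads to its (k-1)-root
    return states, final, delta

def run(delta: Dict[Tree, Tree], t: Tree) -> Optional[Tree]:
    """Bottom-up run; None if some node has no transition."""
    child_states = tuple(run(delta, c) for c in t[1:])
    if None in child_states:
        return None
    return delta.get((t[0],) + child_states)

def accepts(model: Model, t: Tree) -> bool:
    _, final, delta = model
    return run(delta, t) in final

model = infer_k_testable([("a", ("a", ("a", ("b",))))], k=2)
deep = ("b",)
for _ in range(5):
    deep = ("a", deep)  # a(a(a(a(a(b))))), deeper than the training example
print(accepts(model, deep), accepts(model, ("a", ("b",), ("b",))))  # True False
```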

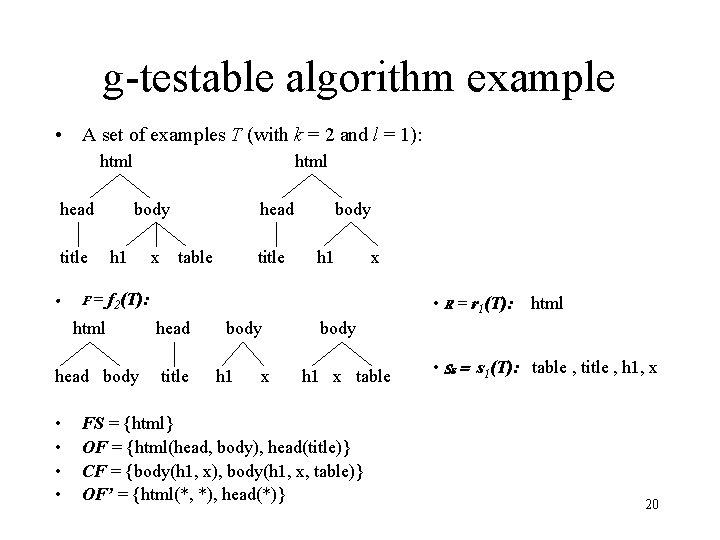

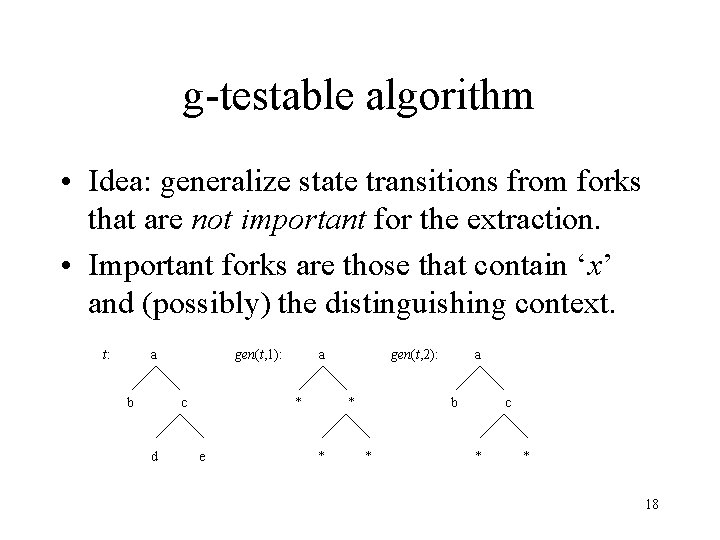

g-testable algorithm

- Idea: generalize the state transitions obtained from forks that are not important for the extraction.
- Important forks are those that contain ‘x’ and (possibly) the distinguishing context.
- (Figure: a tree t and its generalizations gen(t, 1) and gen(t, 2), in which subtrees are replaced by *.)
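A sketch of `gen` and of the “covers” test. The definition of gen used here is read off the worked example (gen(html(head, body), 1) = html(*, *)) rather than stated on the slide: keep the top l levels and replace each subtree rooted at depth l by the wildcard *. A pattern covers a tree when the two agree everywhere except below a *. The names and the `("*",)` wildcard encoding are assumptions.

```python
from typing import Tuple

Tree = Tuple   # (label, child_1, ..., child_n)
STAR = ("*",)  # wildcard leaf

def gen(t: Tree, l: int) -> Tree:
    """Keep the top l levels of t; replace every subtree rooted at depth l by *."""
    if l == 0:
        return STAR
    return (t[0],) + tuple(gen(c, l - 1) for c in t[1:])

def covers(pattern: Tree, t: Tree) -> bool:
    """True iff t agrees with pattern everywhere except below a *."""
    if pattern == STAR:
        return True
    return (pattern[0] == t[0] and len(pattern) == len(t)
            and all(covers(p, c) for p, c in zip(pattern[1:], t[1:])))

unimportant = ("body", ("h1",), ("table",))    # contains no x
important = ("body", ("h1",), ("x",))
print(gen(unimportant, 1))                     # ('body', ('*',), ('*',))
print(covers(gen(unimportant, 1), important))  # True
```

In the algorithm, a result of True means the fork would swallow an important fork if generalized, so it is kept unchanged.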

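g-testable algorithm

- Given: a set of positive examples $T$ and positive integers $k$ and $l$
- $F_S, \Delta, S_s, CF, OF, OF' = \emptyset$
- For each $t \in T$:
  - Let $R = r_{k-1}(t)$; $F = f_k(t)$; $S = s_{k-1}(t)$;
  - $S_s = S_s \cup S$; $F_S = F_S \cup \{R\}$;
  - for each $v(t_1, \ldots, t_m) \in S$: $\delta_m(v(t_1, \ldots, t_m)) = v(t_1, \ldots, t_m)$
  - $CF = CF \cup \{f \in F \mid f \text{ contains } x\}$
  - $OF = OF \cup \{f \in F \mid f \text{ does not contain } x\}$
- For each $of \in OF$:
  - $of' = gen(of, l)$
  - if $of'$ covers some fork in $CF$, then $OF' = OF' \cup \{of\}$; else $OF' = OF' \cup \{of'\}$
- Let $F' = OF' \cup CF$
- $Q = F_S \cup r_{k-1}(F') \cup S_s$
- For each $v(t_1, \ldots, t_m) \in F'$: $\delta_m(v(t_1, \ldots, t_m)) = r_{k-1}(v(t_1, \ldots, t_m))$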
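Putting the pieces together, a sketch of the g-testable algorithm in Python, with trees as nested tuples and `("*",)` as a hypothetical wildcard encoding. When no exact transition exists, `run` falls back to the first generalized fork that covers the node. For k = 2, as in the worked example, every covering fork with the same root label leads to the same state, so that choice does not matter; larger k has not been checked with this sketch.

```python
from typing import Dict, Iterable, Iterator, Optional, Set, Tuple

Tree = Tuple   # (label, child_1, ..., child_n)
STAR = ("*",)  # wildcard leaf introduced by gen

def depth(t: Tree) -> int:
    return 0 if len(t) == 1 else 1 + max(depth(c) for c in t[1:])

def subtrees(t: Tree) -> Iterator[Tree]:
    yield t
    for c in t[1:]:
        yield from subtrees(c)

def root(k: int, t: Tree) -> Tree:
    return (t[0],) if k == 1 else (t[0],) + tuple(root(k - 1, c) for c in t[1:])

def gen(t: Tree, l: int) -> Tree:
    return STAR if l == 0 else (t[0],) + tuple(gen(c, l - 1) for c in t[1:])

def covers(p: Tree, t: Tree) -> bool:
    if p == STAR:
        return True
    return (p[0] == t[0] and len(p) == len(t)
            and all(covers(a, b) for a, b in zip(p[1:], t[1:])))

def contains_x(t: Tree) -> bool:
    return any(s[0] == "x" for s in subtrees(t))

def infer_g_testable(examples: Iterable[Tree], k: int, l: int):
    """Return (Q, F_S, delta, F') following the g-testable algorithm; assumes k >= 2."""
    final: Set[Tree] = set()  # F_S
    small: Set[Tree] = set()  # S_s
    cf: Set[Tree] = set()     # forks that contain x
    of: Set[Tree] = set()     # all other forks
    for t in examples:
        final.add(root(k - 1, t))
        small |= {s for s in subtrees(t) if depth(s) < k - 1}
        for f in {root(k, s) for s in subtrees(t) if depth(s) >= k - 1}:
            (cf if contains_x(f) else of).add(f)
    of_prime: Set[Tree] = set()
    for f in of:
        g = gen(f, l)
        # Do not generalize if the generalized fork would also cover an important fork.
        of_prime.add(f if any(covers(g, c) for c in cf) else g)
    f_prime = of_prime | cf
    delta: Dict[Tree, Tree] = {s: s for s in small}
    delta.update({f: root(k - 1, f) for f in f_prime})
    states = final | {root(k - 1, f) for f in f_prime} | small
    return states, final, delta, f_prime

def run(delta: Dict[Tree, Tree], t: Tree) -> Optional[Tree]:
    child_states = tuple(run(delta, c) for c in t[1:])
    if None in child_states:
        return None
    node = (t[0],) + child_states
    if node in delta:                     # exact transition
        return delta[node]
    for pattern, state in delta.items():  # otherwise, a generalized fork
        if covers(pattern, node):
            return state
    return None

def accepts(model, t: Tree) -> bool:
    return run(model[2], t) in model[1]

t1 = ("html", ("head", ("title",)), ("body", ("h1",), ("x",)))
t2 = ("html", ("head", ("title",)), ("body", ("h1",), ("x",), ("table",)))
model = infer_g_testable([t1, t2], k=2, l=1)
new = ("html", ("head", ("h1",)), ("body", ("h1",), ("x",)))
print(accepts(model, new))  # True: head(*) covers head(h1)
```

Run on the two example trees (k = 2, l = 1), this sketch yields the seven states and the generalized forks html(*, *) and head(*) of the example, while a body without x next to h1 is rejected.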

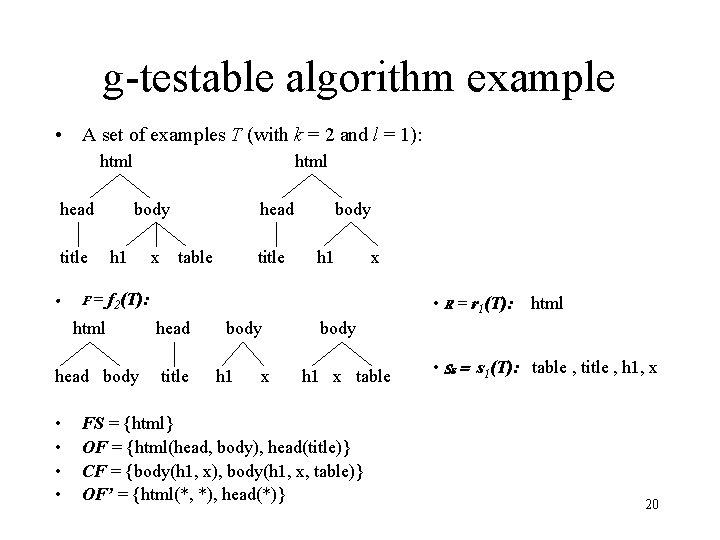

g-testable algorithm example

- A set of examples T (with k = 2 and l = 1): html(head(title), body(h1, x)) and html(head(title), body(h1, x, table))
- F = $f_2(T)$ = {html(head, body), head(title), body(h1, x), body(h1, x, table)}
- $F_S$ = {html}
- OF = {html(head, body), head(title)}
- CF = {body(h1, x), body(h1, x, table)}
- OF' = {html(*, *), head(*)}
- R = $r_1(T)$: html
- $S_s$ = $s_1(T)$ = {table, title, h1, x}

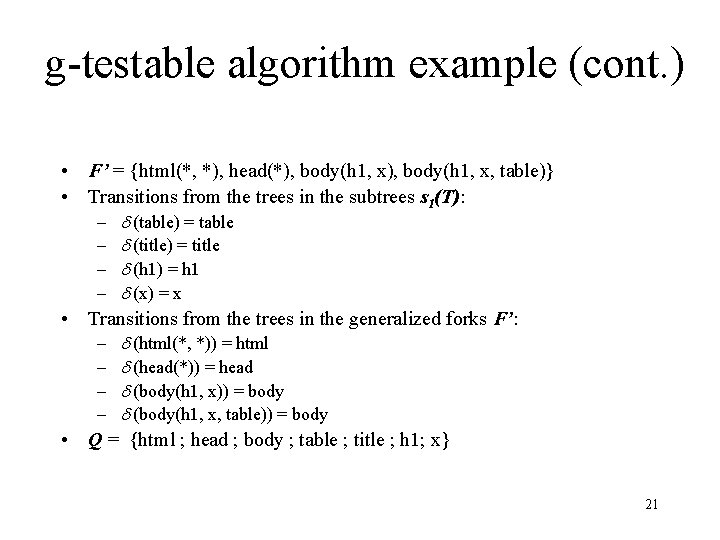

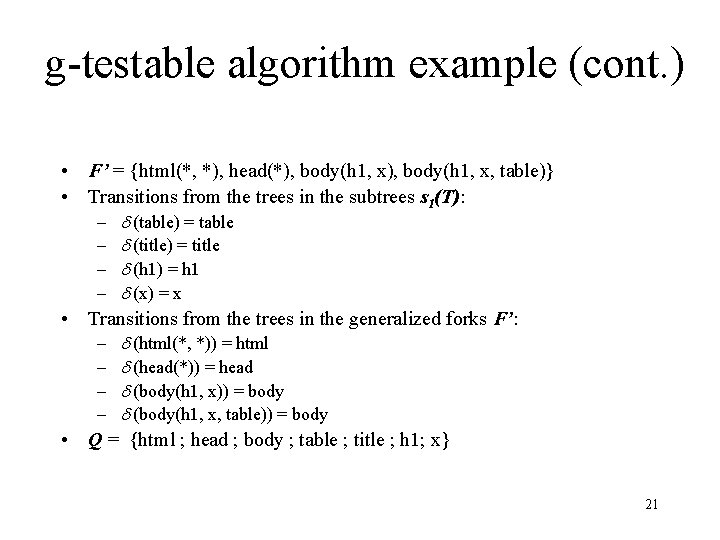

g-testable algorithm example (cont.)

- F' = {html(*, *), head(*), body(h1, x), body(h1, x, table)}
- Transitions from the trees in the subtrees $s_1(T)$:
  - $\delta$(table) = table
  - $\delta$(title) = title
  - $\delta$(h1) = h1
  - $\delta$(x) = x
- Transitions from the trees in the generalized forks F':
  - $\delta$(html(*, *)) = html
  - $\delta$(head(*)) = head
  - $\delta$(body(h1, x)) = body
  - $\delta$(body(h1, x, table)) = body
- Q = {html, head, body, table, title, h1, x}

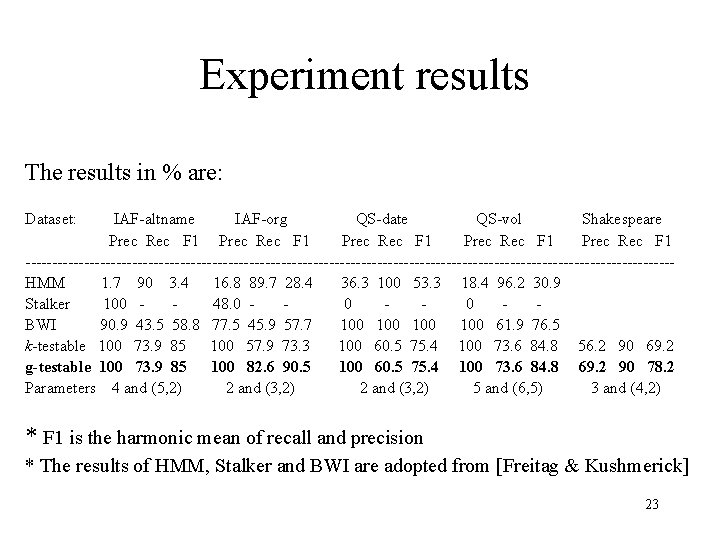

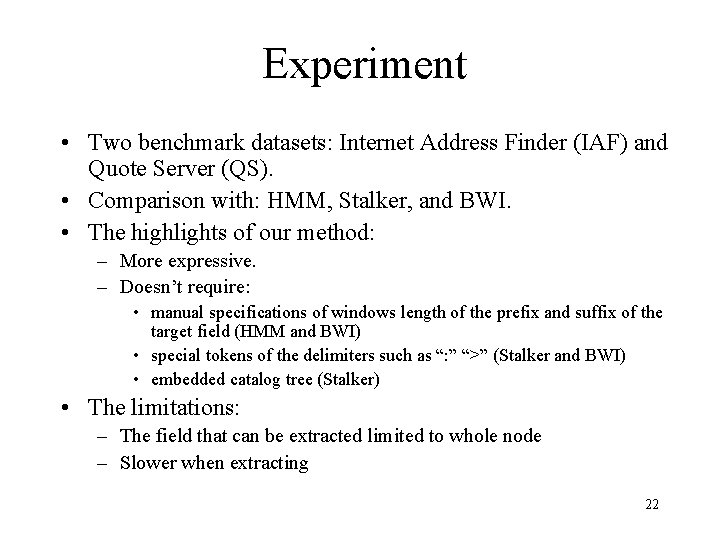

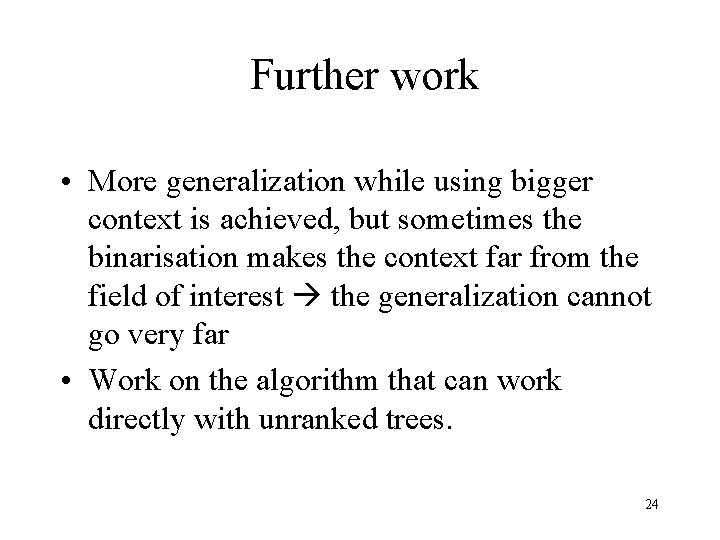

Experiment

- Two benchmark datasets: Internet Address Finder (IAF) and Quote Server (QS).
- Comparison with HMM, Stalker and BWI.
- Highlights of our method:
  - More expressive.
  - Does not require:
    - manual specification of the window length of the prefix and suffix of the target field (HMM and BWI);
    - special delimiter tokens such as “:” or “>” (Stalker and BWI);
    - an embedded catalog tree (Stalker).
- Limitations:
  - The extracted field is limited to a whole node.
  - Extraction is slower.

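Experiment results (all values in %)

| Dataset | Method | Prec | Rec | F1 |
|---|---|---|---|---|
| IAF-altname | HMM | 1.7 | 90 | 3.4 |
| IAF-altname | BWI | 90.9 | 43.5 | 58.8 |
| IAF-altname | k-testable | 100 | 73.9 | 85 |
| IAF-altname | g-testable | 100 | 73.9 | 85 |
| IAF-org | HMM | 16.8 | 89.7 | 28.4 |
| IAF-org | BWI | 77.5 | 45.9 | 57.7 |
| IAF-org | k-testable | 100 | 57.9 | 73.3 |
| IAF-org | g-testable | 100 | 82.6 | 90.5 |
| QS-date | HMM | 36.3 | 100 | 53.3 |
| QS-date | k-testable | 100 | 60.5 | 75.4 |
| QS-date | g-testable | 100 | 60.5 | 75.4 |
| QS-vol | HMM | 18.4 | 96.2 | 30.9 |
| QS-vol | BWI | 100 | 61.9 | 76.5 |
| QS-vol | k-testable | 100 | 73.6 | 84.8 |
| QS-vol | g-testable | 100 | 73.6 | 84.8 |
| Shakespeare | k-testable | 56.2 | 90 | 69.2 |
| Shakespeare | g-testable | 69.2 | 90 | 78.2 |

* F1 is the harmonic mean of recall and precision.
* The results of HMM, Stalker and BWI are adopted from [Freitag & Kushmerick].
* Stalker (per-dataset assignment not given): 100, 48.0, 0, 0. BWI on QS-date: 100 (a single value is given).
* Parameters, k for the k-testable and (k, l) for the g-testable algorithm (per-dataset assignment not given): 4 and (5, 2); 2 and (3, 2); 5 and (6, 5); 3 and (4, 2).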
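As a quick check of the F1 column (the harmonic mean of precision and recall), a one-function Python sketch; the function name is hypothetical:

```python
def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (both in %)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# g-testable on IAF-org: Prec 100, Rec 82.6
print(round(f1(100, 82.6), 1))  # 90.5
```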

Further work

- Using a bigger context achieves more generalization, but sometimes the binarisation places the context far from the field of interest, so the generalization cannot go very far.
- Work on an algorithm that works directly with unranked trees.