Information and interactive computation
Mark Braverman, Computer Science, Princeton University
January 16, 2012

Prelude: one-way communication
• Basic goal: send a message from Alice to Bob over a channel.
[Figure: Alice → communication channel → Bob]

One-way communication
1) Encode; 2) Send; 3) Decode.
[Figure: Alice → communication channel → Bob]

Coding for one-way communication
• There are two main problems a good encoding needs to address:
  – Efficiency: use the least amount of the channel/storage necessary.
  – Error-correction: recover from (reasonable) errors.

Interactive computation
Today's theme: extending information and coding theory to interactive computation.
I will talk about interactive information theory, and Anup Rao will talk about interactive error correction.

Efficient encoding
• Can measure the cost of storing a random variable X very precisely.
• Entropy: H(X) = ∑_x Pr[X=x] log(1/Pr[X=x]).
• H(X) measures the average amount of information a sample from X reveals.
• A uniformly random string of 1,000 bits has 1,000 bits of entropy.
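The entropy formula can be checked numerically. The following is a minimal sketch, not part of the talk; the function name `entropy` and the two example distributions are illustrative assumptions, and logarithms are taken base 2 so the result is in bits.

```python
import math

def entropy(dist):
    """Shannon entropy in bits of a distribution given as {outcome: probability}."""
    return sum(p * math.log2(1.0 / p) for p in dist.values() if p > 0)

# A uniformly random string of k bits has k bits of entropy (k = 10 here
# instead of 1,000, so that the distribution can be enumerated explicitly).
k = 10
uniform = {x: 1.0 / 2**k for x in range(2**k)}

# A biased coin reveals less than one bit per sample on average.
biased_coin = {"heads": 0.9, "tails": 0.1}

print(entropy(uniform))
print(entropy(biased_coin))
```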


Efficient encoding
• H(X) = ∑_x Pr[X=x] log(1/Pr[X=x]).
• The ZIP algorithm works because H(X = typical 1 MB file) < 8 Mbits.
• P[“Hello, my name is Bob”] >> P[“h)2cj.Cv9]dsn.C1=Ns{da3”].
• For one-way encoding, Shannon's source coding theorem states that Communication ≈ Information.
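A quick way to see the ZIP point in practice is to compress structured text and random bytes of the same length. This is an illustrative sketch only: it uses Python's `zlib` module (an implementation of DEFLATE, the method used by ZIP), and the repeated sentence is an assumed stand-in for a "typical" file, far more repetitive than real text.

```python
import random
import zlib

rng = random.Random(0)  # fixed seed so the run is reproducible

# Low-entropy input: a highly repetitive message (illustrative assumption).
text = ("Hello, my name is Bob. " * 400).encode("ascii")

# High-entropy input: uniformly random bytes of the same length.
noise = bytes(rng.getrandbits(8) for _ in range(len(text)))

text_zipped = zlib.compress(text, 9)
noise_zipped = zlib.compress(noise, 9)

# The text shrinks dramatically; the random bytes do not shrink at all.
print(len(text), len(text_zipped), len(noise_zipped))
```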

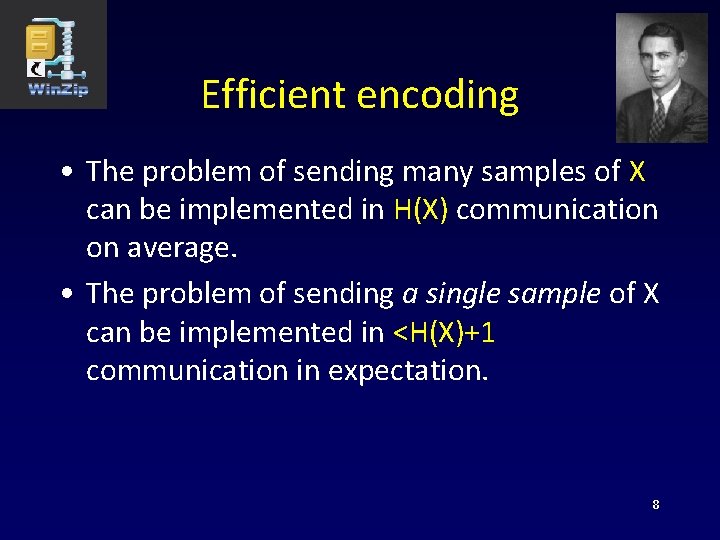

Efficient encoding
• Sending many samples of X can be implemented using H(X) bits of communication per sample on average.
• Sending a single sample of X can be implemented using fewer than H(X)+1 bits of communication in expectation.
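The single-sample bound can be illustrated with a Huffman code, whose expected codeword length L satisfies H(X) ≤ L < H(X)+1. The sketch below is one standard construction, not something taken from the talk; the helper names and the two example distributions are assumptions.

```python
import heapq
import math

def entropy(dist):
    """Shannon entropy in bits of {outcome: probability}."""
    return sum(p * math.log2(1.0 / p) for p in dist.values() if p > 0)

def huffman_lengths(dist):
    """Codeword length of each outcome in a binary Huffman code for dist."""
    # Heap entries: (probability, unique tie-breaker, outcomes in this subtree).
    heap = [(p, i, [x]) for i, (x, p) in enumerate(dist.items())]
    heapq.heapify(heap)
    lengths = {x: 0 for x in dist}
    counter = len(heap)
    while len(heap) > 1:
        p1, _, xs1 = heapq.heappop(heap)
        p2, _, xs2 = heapq.heappop(heap)
        for x in xs1 + xs2:
            lengths[x] += 1  # each merge adds one bit to every merged outcome
        heapq.heappush(heap, (p1 + p2, counter, xs1 + xs2))
        counter += 1
    return lengths

def expected_length(dist):
    lengths = huffman_lengths(dist)
    return sum(dist[x] * lengths[x] for x in dist)

dyadic = {"a": 0.5, "b": 0.25, "c": 0.125, "d": 0.125}
skewed = {"a": 0.9, "b": 0.1}
for dist in (dyadic, skewed):
    print(entropy(dist), expected_length(dist))
```

For the dyadic distribution the expected length matches the entropy exactly; for the skewed one the code still needs a full bit per sample, which is why the "+1" cannot be dropped in the one-shot setting.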


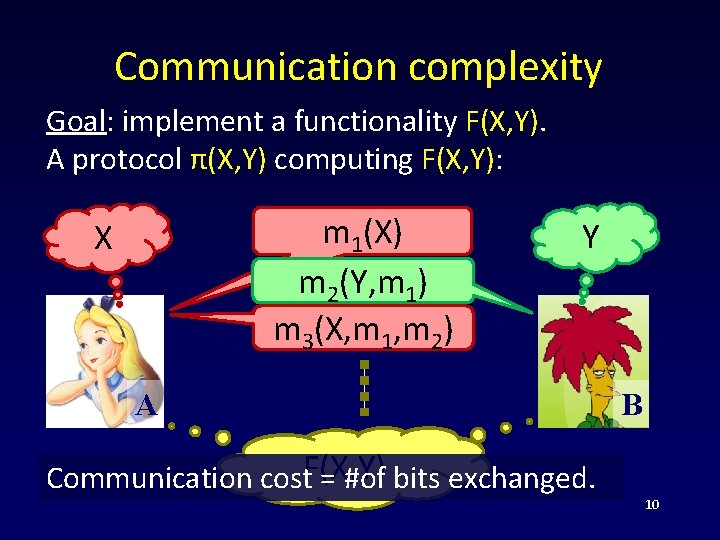

Communication complexity [Yao]
• Focus on the two-party setting.
• A and B implement a functionality F(X, Y), e.g. F(X, Y) = “X=Y?”.
[Figure: A holds X, B holds Y; the output is F(X, Y)]

Communication complexity
Goal: implement a functionality F(X, Y).
A protocol π(X, Y) computing F(X, Y):
• A sends m_1(X);
• B sends m_2(Y, m_1);
• A sends m_3(X, m_1, m_2);
• the parties output F(X, Y).
Communication cost = # of bits exchanged.

Distributional communication complexity
• The input pair (X, Y) is drawn according to some distribution μ.
• Goal: make a mistake on at most an ε fraction of inputs.
• The communication cost: C(F, μ, ε) := min_{π computes F with error ≤ ε} C(π, μ).

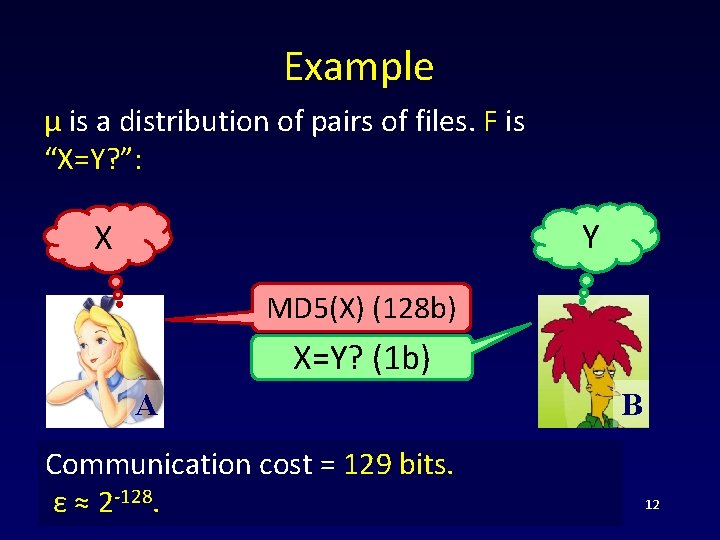

Example
μ is a distribution of pairs of files. F is “X=Y?”:
• A sends MD5(X) (128 bits);
• B replies with “X=Y?” (1 bit).
Communication cost = 129 bits. ε ≈ 2^(-128).
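The two-message protocol in this example can be sketched directly. The function name and return convention below are illustrative assumptions; the ε ≈ 2^(-128) estimate additionally assumes that MD5 behaves like a random hash on files drawn from μ, which does not hold against adversarially chosen inputs.

```python
import hashlib

def md5_equality_protocol(x: bytes, y: bytes):
    """Simulate the protocol; return (B's answer, number of bits exchanged)."""
    digest = hashlib.md5(x).digest()             # A -> B: 128 bits
    answer = digest == hashlib.md5(y).digest()   # B -> A: 1 bit
    bits_exchanged = 8 * len(digest) + 1
    return answer, bits_exchanged

print(md5_equality_protocol(b"same file", b"same file"))
print(md5_equality_protocol(b"one file", b"another file"))
```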

Randomized communication complexity
• Goal: make a mistake with probability at most ε on every input.
• The communication cost: R(F, ε).
• Clearly: C(F, μ, ε) ≤ R(F, ε) for all μ.
• What about the converse?
• A minimax(!) argument [Yao]: R(F, ε) = max_μ C(F, μ, ε).

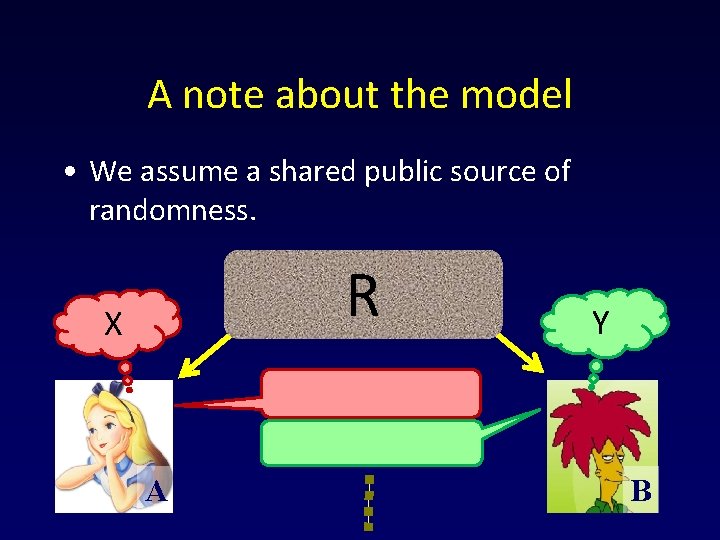

A note about the model
• We assume a shared public source of randomness.
[Figure: A holds X, B holds Y; both see the public random string R]

The communication complexity of EQ(X, Y)
• The communication complexity of equality: R(EQ, ε) ≈ log 1/ε.
• Send the values of log 1/ε random hash functions applied to the inputs. Accept if all of them agree.
• What if ε=0? R(EQ, 0) ≈ n, where X, Y in {0, 1}^n.
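One common way to realize the random hash functions is to use inner products with shared random strings over GF(2); this choice, and all names below, are assumptions made for illustration rather than details from the talk. Each hash costs one bit, and unequal inputs agree on a given hash with probability 1/2, so ⌈log 1/ε⌉ hashes give error at most ε.

```python
import math
import random

def parity(v):
    """Parity of the number of 1 bits in the integer v."""
    return bin(v).count("1") % 2

def randomized_equality(x, y, n, eps, shared_rng):
    """Decide whether the n-bit integers x and y are equal.

    shared_rng models the public randomness seen by both parties.
    Equal inputs are always accepted; unequal inputs are wrongly
    accepted with probability 2^(-k), where k = ceil(log2(1/eps)).
    """
    k = math.ceil(math.log2(1.0 / eps))
    for _ in range(k):
        r = shared_rng.getrandbits(n)            # public random string
        if parity(r & x) != parity(r & y):       # one hash bit from each side
            return False
    return True

rng = random.Random(0)
trials = 4000
false_accepts = sum(randomized_equality(1, 2, 32, 1 / 8, rng) for _ in range(trials))
print(false_accepts / trials)  # should be close to 1/8
```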

Information in a two-way channel
• H(X) is the “inherent information cost” of sending a message distributed according to X over the channel.
[Figure: Alice sends X to Bob over a communication channel]
What is the two-way analogue of H(X)?

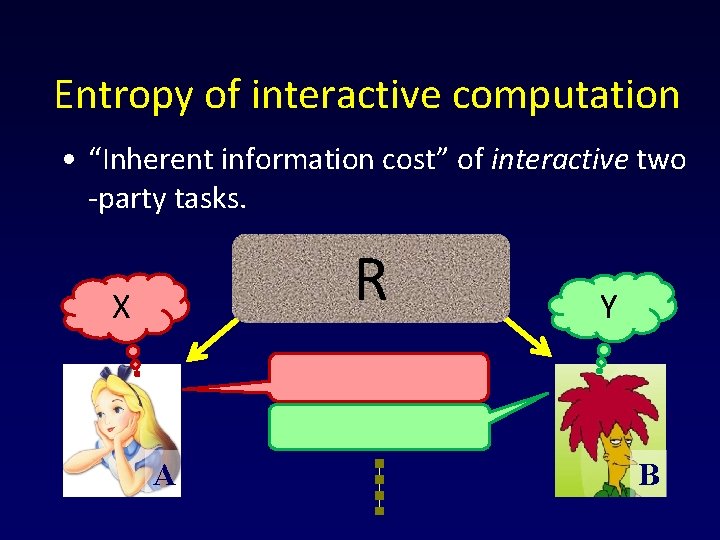

Entropy of interactive computation
• “Inherent information cost” of interactive two-party tasks.
[Figure: A holds X, B holds Y; both see the public random string R]

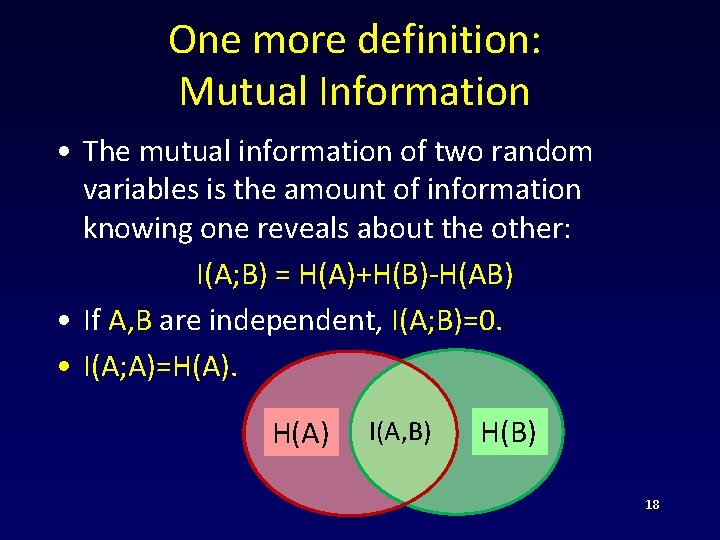

One more definition: Mutual Information
• The mutual information of two random variables is the amount of information knowing one reveals about the other: I(A; B) = H(A) + H(B) - H(AB).
• If A, B are independent, I(A; B) = 0.
• I(A; A) = H(A).
[Figure: Venn diagram of H(A) and H(B), overlapping in I(A; B)]
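The identity I(A; B) = H(A) + H(B) - H(AB) translates directly into a computation on a joint distribution. The sketch below is illustrative; the representation of the joint distribution as a dictionary keyed by pairs (a, b) and the helper names are assumptions.

```python
import math
from collections import defaultdict

def entropy(dist):
    """Shannon entropy in bits of {outcome: probability}."""
    return sum(p * math.log2(1.0 / p) for p in dist.values() if p > 0)

def marginal(joint, index):
    """Marginal distribution of coordinate `index` of a joint distribution."""
    out = defaultdict(float)
    for pair, p in joint.items():
        out[pair[index]] += p
    return dict(out)

def mutual_information(joint):
    """I(A; B) = H(A) + H(B) - H(AB) for joint = {(a, b): probability}."""
    return entropy(marginal(joint, 0)) + entropy(marginal(joint, 1)) - entropy(joint)

independent = {(a, b): 0.25 for a in (0, 1) for b in (0, 1)}  # two fair coins
identical = {(0, 0): 0.5, (1, 1): 0.5}                        # B is a copy of A

print(mutual_information(independent))  # independent variables: 0 bits
print(mutual_information(identical))    # I(A; A) = H(A) = 1 bit
```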


Information cost of a protocol
• [Chakrabarti-Shi-Wirth-Yao-01, Bar-Yossef-Jayram-Kumar-Sivakumar-04, Barak-B-Chen-Rao-10].
• Caution: different papers use “information cost” to denote different things!
• Today, we have a better understanding of the relationship between those different things.

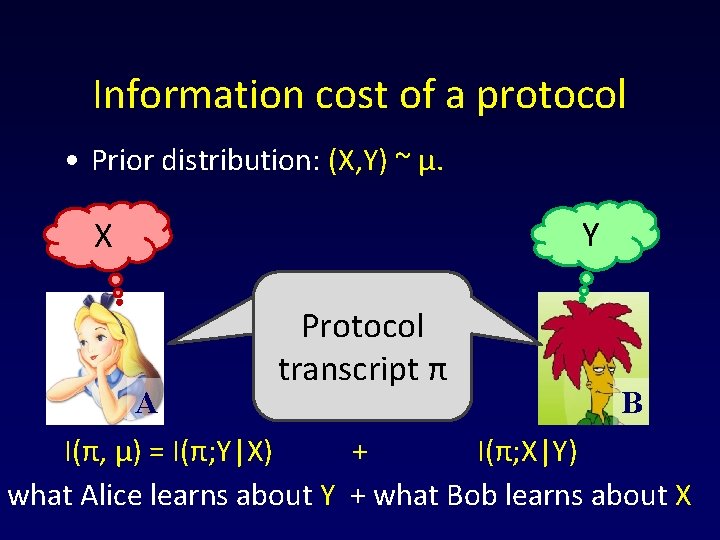

Information cost of a protocol
• Prior distribution: (X, Y) ~ μ.
• A (holding X) and B (holding Y) run protocol π, producing the transcript π.
I(π, μ) = I(π; Y|X) + I(π; X|Y)
= what Alice learns about Y + what Bob learns about X.

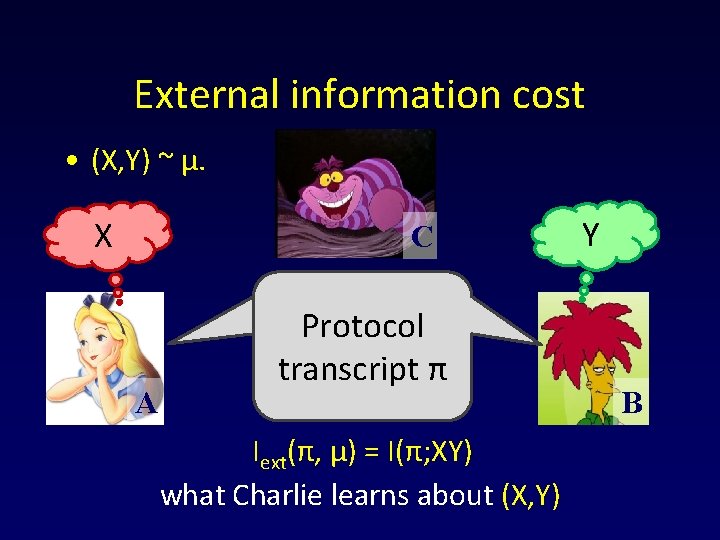

External information cost
• (X, Y) ~ μ.
• An external observer C (Charlie) sees the transcript π of the protocol run by A (holding X) and B (holding Y).
I_ext(π, μ) = I(π; XY)
= what Charlie learns about (X, Y).

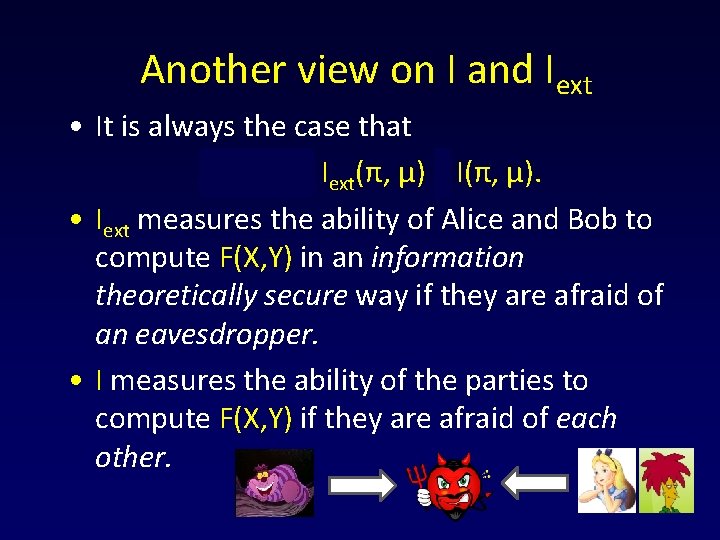

Another view on I and I_ext
• It is always the case that C(π, μ) ≥ I_ext(π, μ) ≥ I(π, μ).
• I_ext measures the ability of Alice and Bob to compute F(X, Y) in an information-theoretically secure way if they are afraid of an eavesdropper.
• I measures the ability of the parties to compute F(X, Y) if they are afraid of each other.
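The chain C ≥ I_ext ≥ I can be checked on a toy protocol. In the sketch below, which is an illustration and not an example from the talk, X and Y are single bits that agree with probability 3/4, and the protocol consists of Alice sending X (communication cost 1 bit). All names are assumptions; outcomes are triples (x, y, transcript).

```python
import math
from collections import defaultdict

def entropy_of(joint, coords):
    """Entropy in bits of the selected coordinates of a joint distribution."""
    marg = defaultdict(float)
    for outcome, p in joint.items():
        marg[tuple(outcome[c] for c in coords)] += p
    return sum(p * math.log2(1.0 / p) for p in marg.values() if p > 0)

def cond_mi(joint, a, b, c):
    """I(A; B | C) = H(A,C) + H(B,C) - H(A,B,C) - H(C); a, b, c are coordinate tuples."""
    return (entropy_of(joint, a + c) + entropy_of(joint, b + c)
            - entropy_of(joint, a + b + c) - entropy_of(joint, c))

X, Y, T = (0,), (1,), (2,)  # coordinate positions of X, Y and the transcript

# Prior mu: correlated bits, X = Y with probability 3/4 (illustrative choice).
mu = {(0, 0): 3 / 8, (1, 1): 3 / 8, (0, 1): 1 / 8, (1, 0): 1 / 8}

# Protocol: Alice sends X, so the transcript equals x.
joint = {(x, y, x): p for (x, y), p in mu.items()}

communication = 1.0
internal = cond_mi(joint, T, Y, X) + cond_mi(joint, T, X, Y)  # I(pi; Y|X) + I(pi; X|Y)
external = cond_mi(joint, T, X + Y, ())                       # I(pi; XY)

print(communication, external, internal)
```

Here Bob already knows something about X through Y, so he learns only H(X|Y) bits, while an outside observer with no side information learns the full bit.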

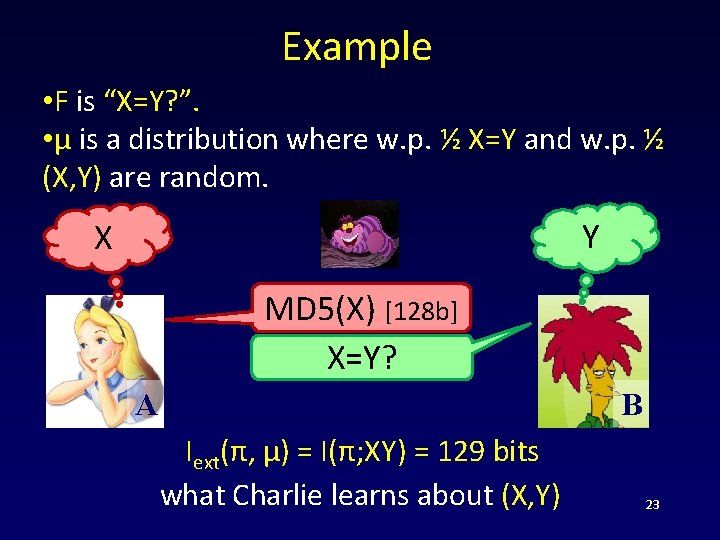

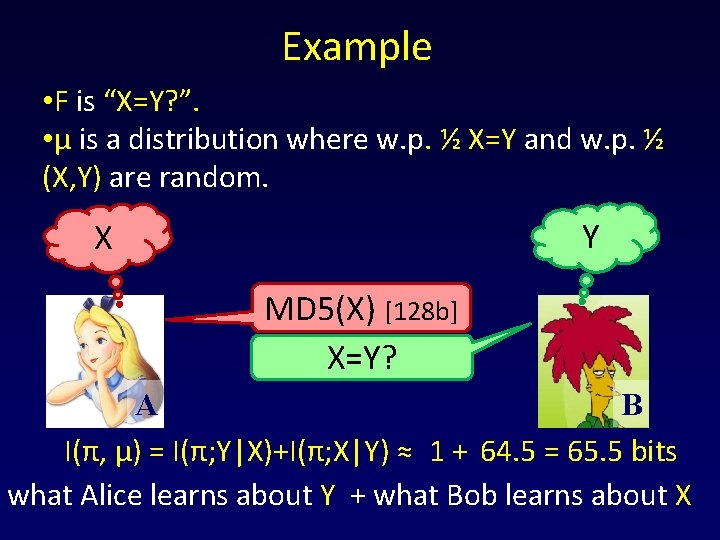

Example
• F is “X=Y?”.
• μ is a distribution where w.p. ½ X=Y and w.p. ½ (X, Y) are random.
• A sends MD5(X) [128 bits]; B replies with “X=Y?”.
I_ext(π, μ) = I(π; XY) = 129 bits
= what Charlie learns about (X, Y).

Example
• F is “X=Y?”.
• μ is a distribution where w.p. ½ X=Y and w.p. ½ (X, Y) are random.
• A sends MD5(X) [128 bits]; B replies with “X=Y?”.
I(π, μ) = I(π; Y|X) + I(π; X|Y) ≈ 1 + 64.5 = 65.5 bits
= what Alice learns about Y + what Bob learns about X.

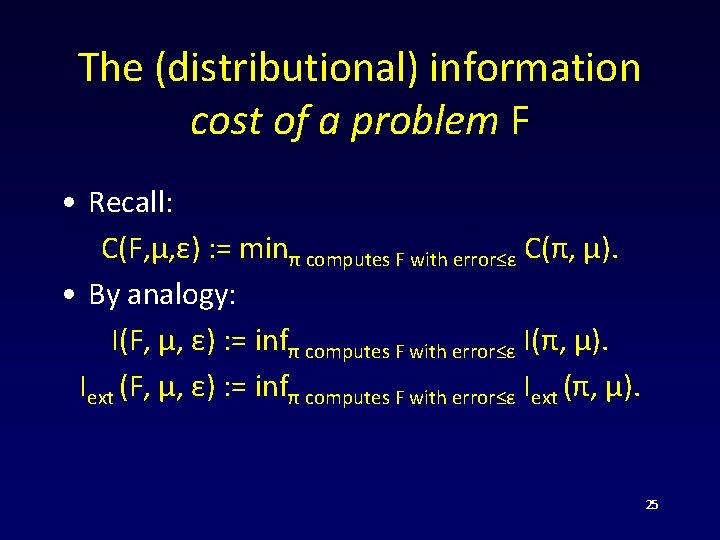

The (distributional) information cost of a problem F
• Recall: C(F, μ, ε) := min_{π computes F with error ≤ ε} C(π, μ).
• By analogy:
  – I(F, μ, ε) := inf_{π computes F with error ≤ ε} I(π, μ).
  – I_ext(F, μ, ε) := inf_{π computes F with error ≤ ε} I_ext(π, μ).

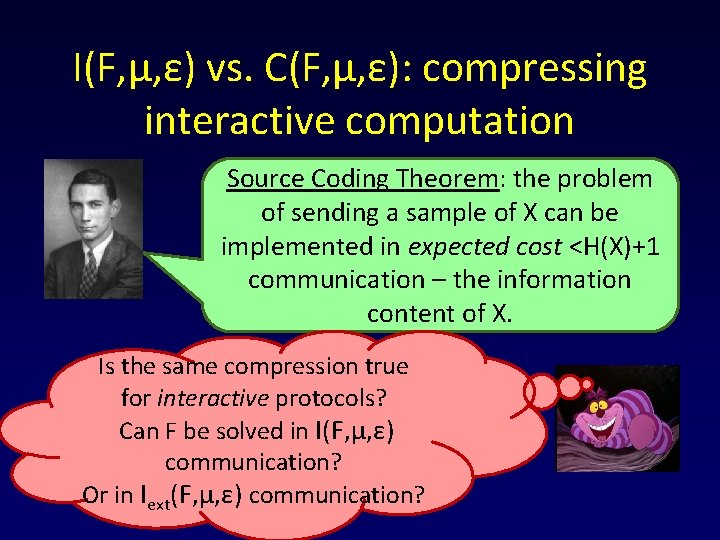

I(F, μ, ε) vs. C(F, μ, ε): compressing interactive computation
Source Coding Theorem: the problem of sending a sample of X can be implemented with expected communication < H(X)+1, i.e. roughly the information content of X.
Is the same compression true for interactive protocols?
Can F be solved in I(F, μ, ε) communication? Or in I_ext(F, μ, ε) communication?

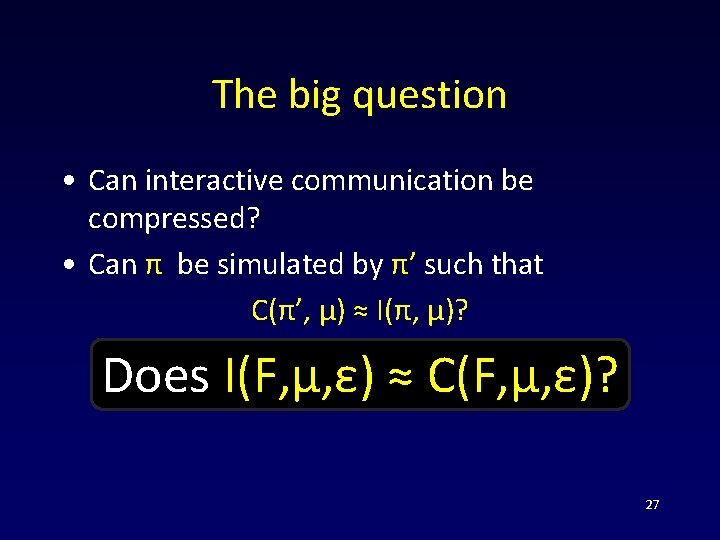

The big question
• Can interactive communication be compressed?
• Can π be simulated by π’ such that C(π’, μ) ≈ I(π, μ)?
Does I(F, μ, ε) ≈ C(F, μ, ε)?

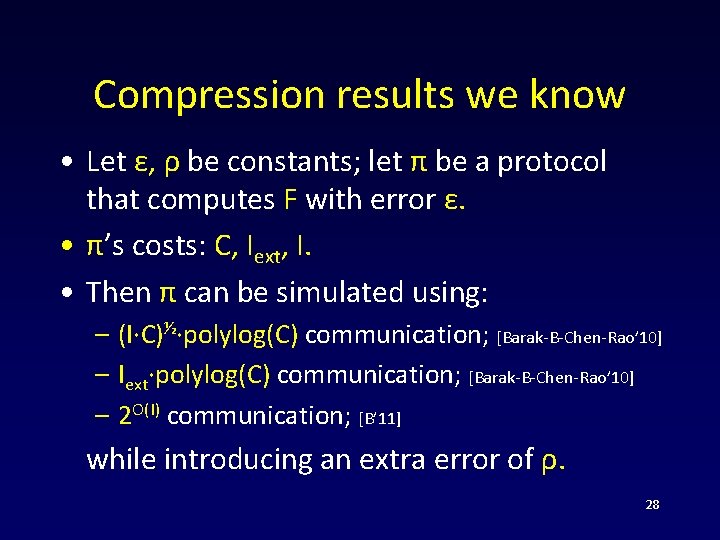

Compression results we know
• Let ε, ρ be constants; let π be a protocol that computes F with error ε.
• π's costs: C, I_ext, I.
• Then π can be simulated, while introducing an extra error of ρ, using:
  – (I∙C)^{1/2}∙polylog(C) communication [Barak-B-Chen-Rao’10];
  – I_ext∙polylog(C) communication [Barak-B-Chen-Rao’10];
  – 2^{O(I)} communication [B’11].

The amortized cost of interactive computation
Source Coding Theorem: the amortized cost of sending many independent samples of X is H(X) per sample.
What is the amortized cost of computing many independent copies of F(X, Y)?


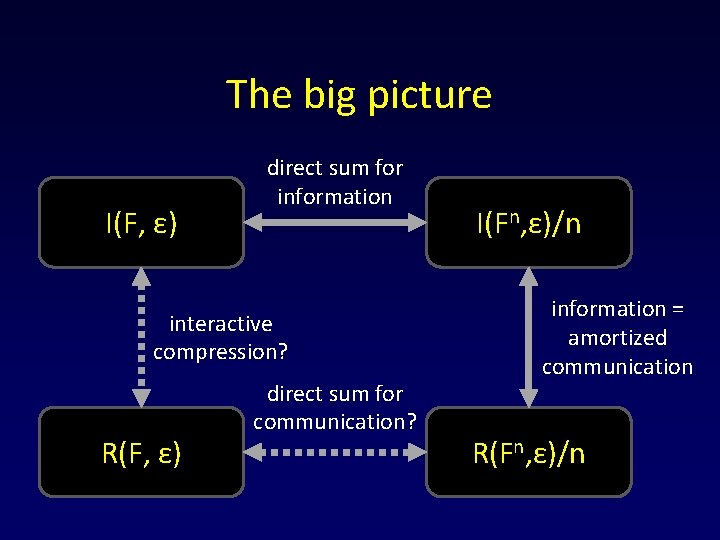

Information = amortized communication
• Theorem [B-Rao’11]: for ε>0, I(F, μ, ε) = lim_{n→∞} C(F^n, μ^n, ε)/n.
• I(F, μ, ε) is the interactive analogue of H(X).


Information = amortized communication
• Can we get rid of μ? I.e. make I(F, ε) a property of the task F?
[Figure: C(F, μ, ε) is to R(F, ε) as I(F, μ, ε) is to “?”]

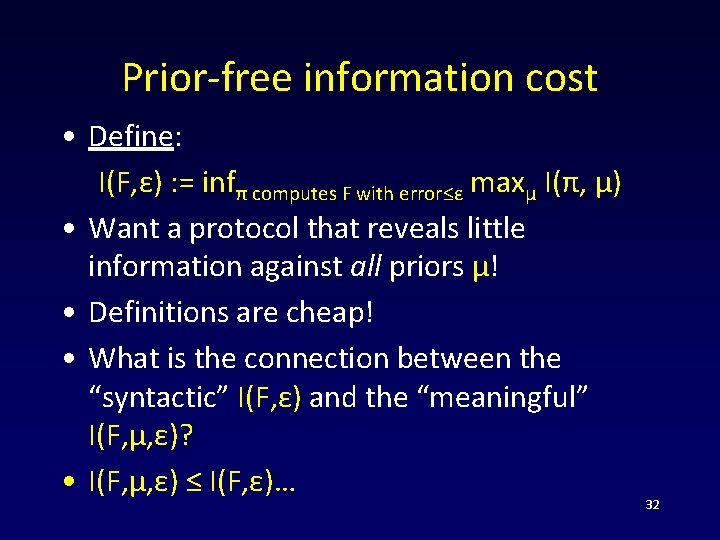

Prior-free information cost
• Define: I(F, ε) := inf_{π computes F with error ≤ ε} max_μ I(π, μ).
• Want a protocol that reveals little information against all priors μ!
• Definitions are cheap!
• What is the connection between the “syntactic” I(F, ε) and the “meaningful” I(F, μ, ε)?
• I(F, μ, ε) ≤ I(F, ε)…

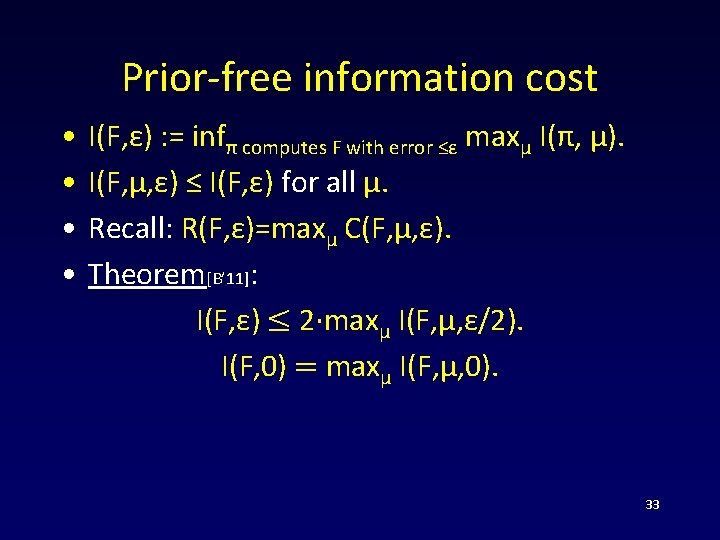

Prior-free information cost
• I(F, ε) := inf_{π computes F with error ≤ ε} max_μ I(π, μ).
• I(F, μ, ε) ≤ I(F, ε) for all μ.
• Recall: R(F, ε) = max_μ C(F, μ, ε).
• Theorem [B’11]: I(F, ε) ≤ 2·max_μ I(F, μ, ε/2), and I(F, 0) = max_μ I(F, μ, 0).

Prior-free information cost
• Recall: I(F, μ, ε) = lim_{n→∞} C(F^n, μ^n, ε)/n.
• Theorem: for ε>0, I(F, ε) = lim_{n→∞} R(F^n, ε)/n.

Example
• R(EQ, 0) ≈ n.
• What is I(EQ, 0)?

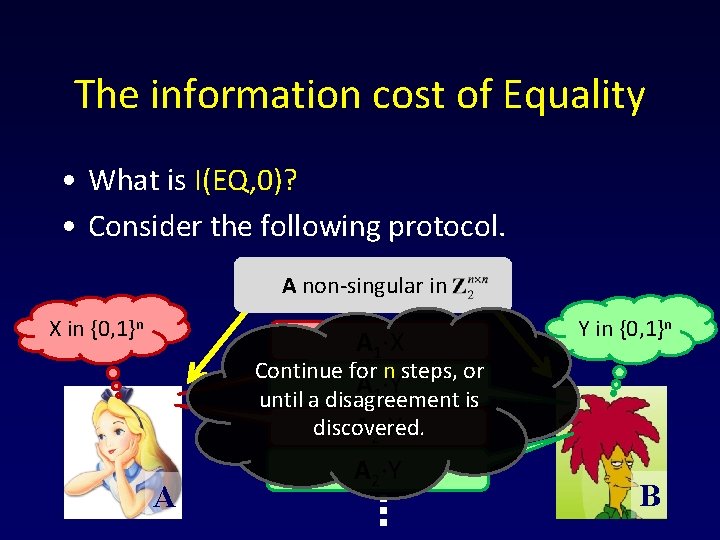

The information cost of Equality
• What is I(EQ, 0)?
• Consider the following protocol, where X, Y in {0, 1}^n and A is a random non-singular n×n matrix over GF(2) with rows A_1, A_2, …, A_n:
  – A sends A_1∙X; B sends A_1∙Y.
  – A sends A_2∙X; B sends A_2∙Y.
  – Continue for n steps, or until a disagreement is discovered.

Analysis (sketch)
• If X≠Y, the protocol will terminate in O(1) rounds on average, and thus reveal O(1) information.
• If X=Y… the players only learn the fact that X=Y (≤ 1 bit of information).
• Thus the protocol has O(1) information complexity.
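The round count in this analysis can be checked empirically. The sketch below assumes the matrix is a uniformly random non-singular n×n matrix over GF(2) drawn from shared randomness, represents rows and inputs as integer bitmasks, and samples the matrix by rejection; these representation choices and all names are illustrative assumptions.

```python
import random

def rank_gf2(rows):
    """Rank over GF(2) of a matrix whose rows are integer bitmasks."""
    rows = list(rows)
    rank = 0
    while rows:
        pivot = rows.pop()
        if pivot:
            rank += 1
            low = pivot & -pivot  # lowest set bit of the pivot row
            rows = [r ^ pivot if r & low else r for r in rows]
    return rank

def random_nonsingular(n, rng):
    """Rejection-sample a non-singular n x n matrix over GF(2)."""
    while True:
        rows = [rng.getrandbits(n) for _ in range(n)]
        if rank_gf2(rows) == n:
            return rows

def dot_gf2(row, v):
    """Inner product over GF(2) of two bitmask vectors."""
    return bin(row & v).count("1") % 2

def equality_protocol(x, y, n, shared_rng):
    """Return (answer, rounds used). Zero error: a non-singular A maps x != y to Ax != Ay."""
    matrix = random_nonsingular(n, shared_rng)
    for i, row in enumerate(matrix, start=1):
        if dot_gf2(row, x) != dot_gf2(row, y):  # the exchanged bits disagree
            return False, i
    return True, n

rng = random.Random(1)
n = 16
rounds = []
for _ in range(500):
    x = rng.getrandbits(n)
    y = x ^ rng.randrange(1, 2 ** n)  # guaranteed to differ from x
    answer, used = equality_protocol(x, y, n, rng)
    rounds.append(used)
print(sum(rounds) / len(rounds))  # average number of rounds when X != Y
```

Each round catches a difference with probability about 1/2, so the average number of rounds for unequal inputs stays near 2 regardless of n, while equal inputs always run all n rounds.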

Direct sum theorems
• I(F, ε) = lim_{n→∞} R(F^n, ε)/n.
• Questions:
  – Does R(F^n, ε) = Ω(n∙R(F, ε))?
  – Does R(F^n, ε) = ω(R(F, ε))?

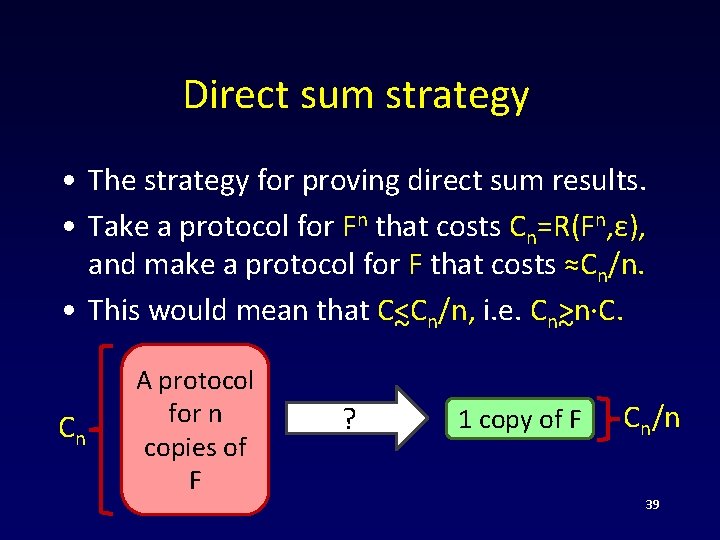

Direct sum strategy
• The strategy for proving direct sum results.
• Take a protocol for F^n that costs C_n = R(F^n, ε), and make a protocol for F that costs ≈ C_n/n.
• This would mean that C ≲ C_n/n, i.e. C_n ≳ n∙C, where C = R(F, ε).
[Figure: a protocol for n copies of F, of cost C_n → ? → a protocol for 1 copy of F, of cost C_n/n]

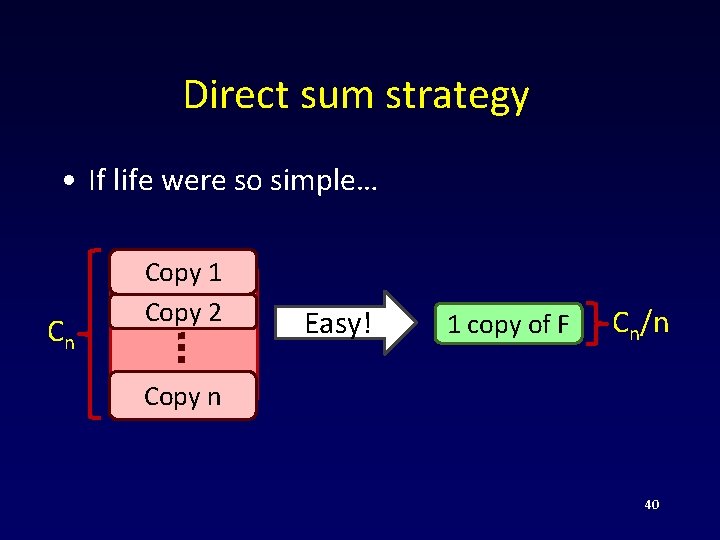

Direct sum strategy
• If life were so simple…
[Figure: a protocol of cost C_n splits cleanly into Copy 1, Copy 2, …, Copy n, so 1 copy of F costs C_n/n. Easy!]

Direct sum strategy
• Theorem: I(F, ε) = I(F^n, ε)/n ≤ C_n/n = R(F^n, ε)/n.
• Compression → direct sum!

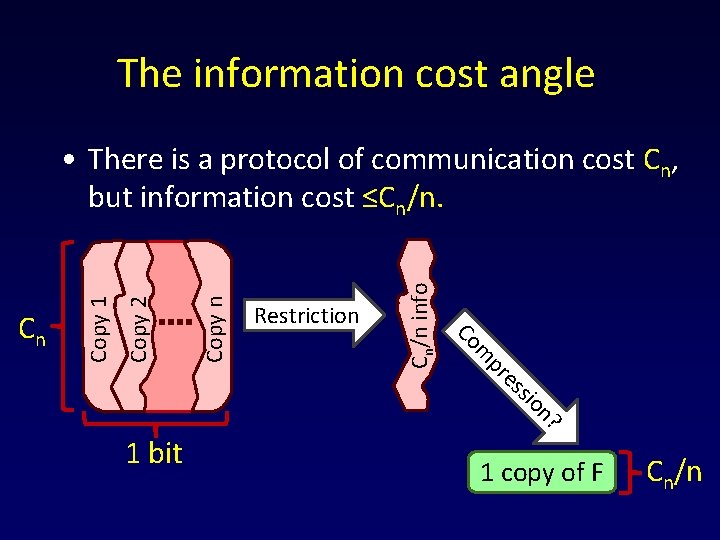

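The information cost angle
• There is a protocol of communication cost C_n, but information cost ≤ C_n/n.
[Figure: restricting the cost-C_n protocol for Copy 1, Copy 2, …, Copy n to a single copy carries C_n/n information; “Compression?” would turn it into a protocol for 1 copy of F with communication C_n/n]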


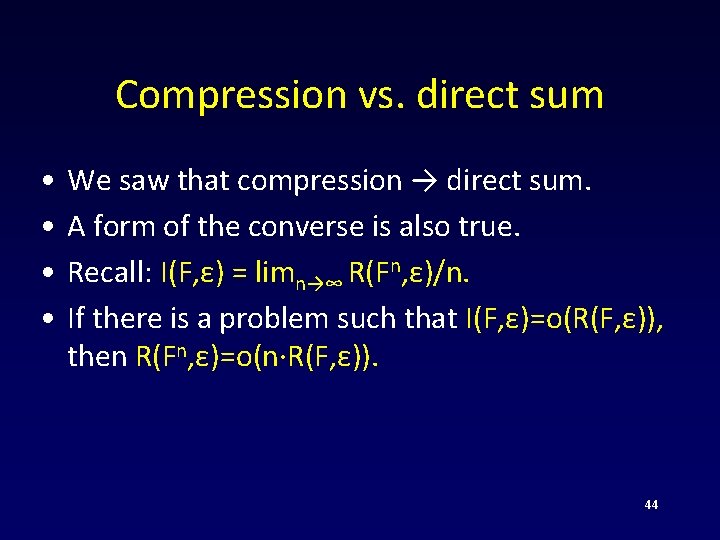

Direct sum theorems
Best known general simulation [BBCR’10]:
• A protocol with C communication and I information cost can be simulated using (I∙C)^{1/2}∙polylog(C) communication.
• Implies: R(F^n, ε) = Ω̃(n^{1/2}∙R(F, ε)), where Ω̃ hides polylogarithmic factors.

Compression vs. direct sum
• We saw that compression → direct sum.
• A form of the converse is also true.
• Recall: I(F, ε) = lim_{n→∞} R(F^n, ε)/n.
• If there is a problem such that I(F, ε) = o(R(F, ε)), then R(F^n, ε) = o(n∙R(F, ε)).

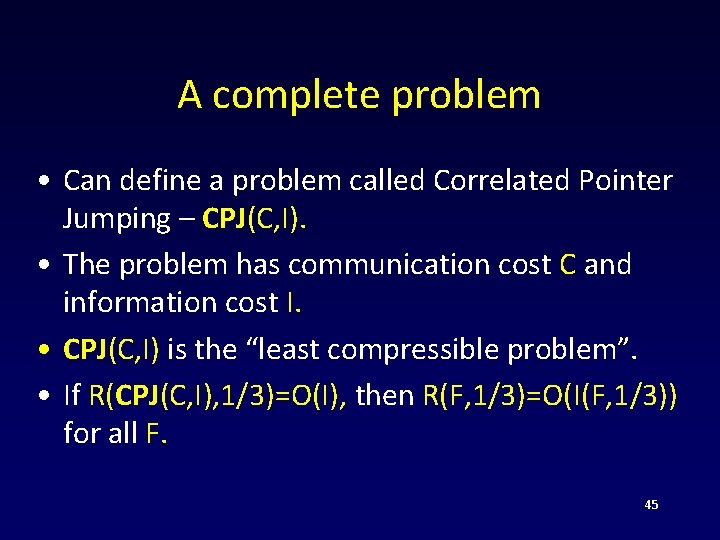

A complete problem
• One can define a problem called Correlated Pointer Jumping, CPJ(C, I).
• The problem has communication cost C and information cost I.
• CPJ(C, I) is the “least compressible problem”.
• If R(CPJ(C, I), 1/3) = O(I), then R(F, 1/3) = O(I(F, 1/3)) for all F.

The big picture
• I(F, ε) ↔ I(F^n, ε)/n: direct sum for information.
• I(F^n, ε)/n ↔ R(F^n, ε)/n: information = amortized communication.
• I(F, ε) ↔ R(F, ε): interactive compression?
• R(F, ε) ↔ R(F^n, ε)/n: direct sum for communication?

Partial progress
• Can compress bounded-round interactive protocols.
• The main primitive is a one-shot version of the Slepian-Wolf theorem.
• Alice gets a distribution P_X.
• Bob gets a prior distribution P_Y.
• Goal: both must sample from P_X.

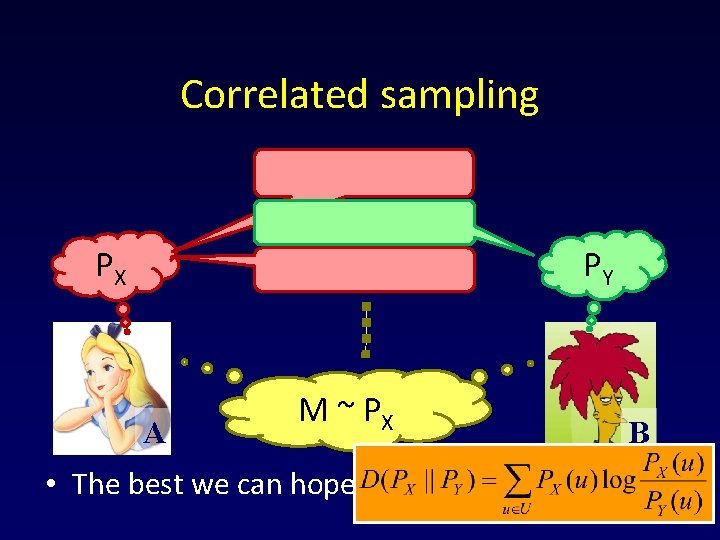

Correlated sampling
[Figure: A holds P_X, B holds P_Y; both output the same sample M ~ P_X]
• The best we can hope for is D(P_X||P_Y).

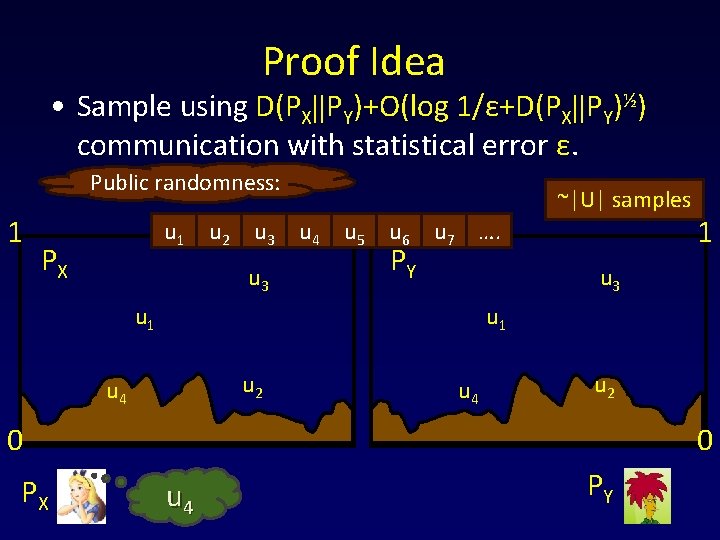

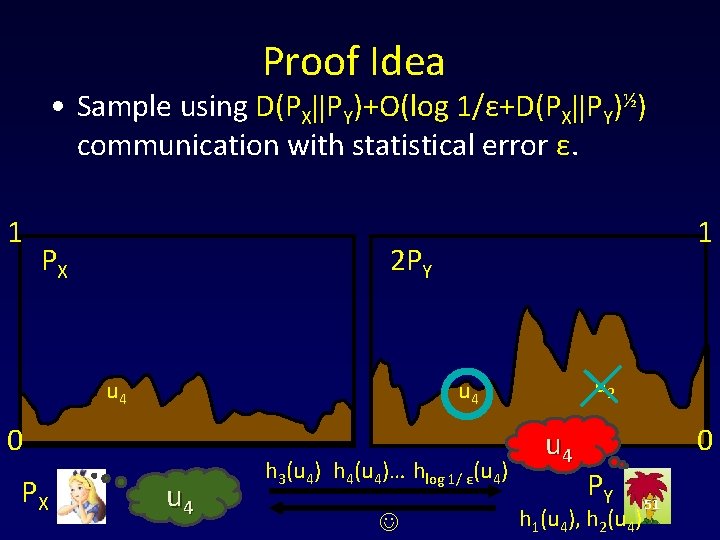

Proof Idea
• Sample using D(P_X||P_Y) + O(log 1/ε + D(P_X||P_Y)^{1/2}) communication with statistical error ε.
[Figure: the public randomness supplies ~|U| sample points u_1, u_2, u_3, …, plotted against the graph of P_X on A's side and the graph of P_Y on B's side; the vertical axis runs from 0 to 1]

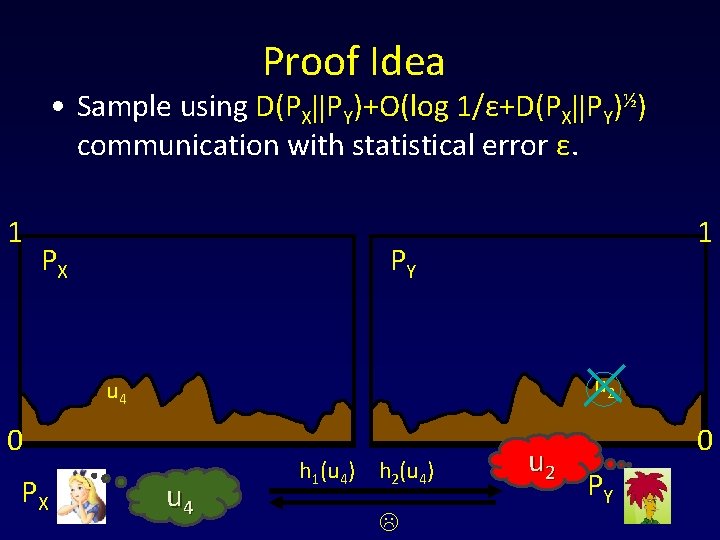

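Proof Idea
• Sample using D(P_X||P_Y) + O(log 1/ε + D(P_X||P_Y)^{1/2}) communication with statistical error ε.
[Figure: A selects the point u_4 under the graph of P_X and sends the hashes h_1(u_4), h_2(u_4); B compares them against the points under the graph of P_Y, such as u_2]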

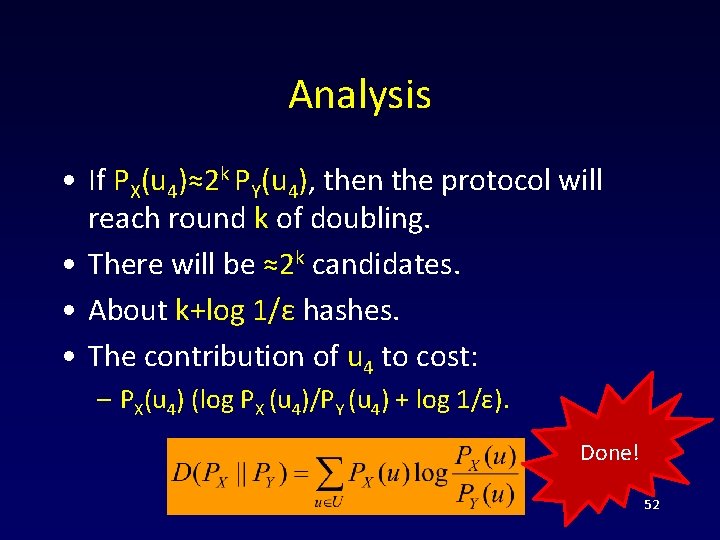

Proof Idea
• Sample using D(P_X||P_Y) + O(log 1/ε + D(P_X||P_Y)^{1/2}) communication with statistical error ε.
[Figure: B doubles its threshold to 2∙P_Y, so that u_4 becomes a candidate; in addition to h_1(u_4), h_2(u_4), A sends h_3(u_4), h_4(u_4), …, h_{log 1/ε}(u_4)]

Analysis
• If P_X(u_4) ≈ 2^k P_Y(u_4), then the protocol will reach round k of doubling.
• There will be ≈ 2^k candidates.
• About k + log 1/ε hashes.
• The contribution of u_4 to cost:
  – P_X(u_4) (log P_X(u_4)/P_Y(u_4) + log 1/ε).
• Summing over all points u gives ≈ D(P_X||P_Y) + log 1/ε. Done!
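The sampling and doubling steps of this argument can be sketched in a few lines. The sketch below is a simplified illustration under stated assumptions: the public randomness is modeled as a stream of points (u, q) with u uniform in the universe and q uniform in [0, 1), P_Y is assumed positive wherever P_X is, and the hashing step that lets Bob identify the point among his candidates is omitted. The distributions and all names are hypothetical.

```python
import math
import random

def kl_divergence(p, q):
    """D(p||q) in bits; assumes q[u] > 0 wherever p[u] > 0."""
    return sum(p[u] * math.log2(p[u] / q[u]) for u in p if p[u] > 0)

def alice_pick(p_x, universe, shared_rng):
    """Scan the public points (u, q) and return the first one under the graph of P_X."""
    while True:
        u = shared_rng.choice(universe)
        q = shared_rng.random()
        if q < p_x[u]:
            return u, q

def doubling_round(u, q, p_y):
    """Smallest k >= 0 with q < 2^k * P_Y(u): the round in which u becomes a candidate for Bob."""
    k = 0
    while q >= (2 ** k) * p_y[u]:
        k += 1
    return k

p_x = {"a": 0.7, "b": 0.2, "c": 0.1}
p_y = {"a": 0.1, "b": 0.3, "c": 0.6}
universe = sorted(p_x)

rng = random.Random(0)
samples = [alice_pick(p_x, universe, rng) for _ in range(20000)]
rounds = [doubling_round(u, q, p_y) for u, q in samples]

freq_a = sum(1 for u, _ in samples if u == "a") / len(samples)
print(freq_a)                     # Alice's pick is distributed according to P_X
print(sum(rounds) / len(rounds))  # average doubling round
print(kl_divergence(p_x, p_y))    # compare with D(P_X||P_Y)
```

Because Alice's point satisfies q < P_X(u), the doubling round for u is at most ⌈log P_X(u)/P_Y(u)⌉, which is where the divergence term in the cost comes from.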

Directions
• Can interactive communication be fully compressed? R(F, ε) = I(F, ε)?
• What is the relationship between I(F, ε), I_ext(F, ε) and R(F, ε)?
• Many other questions on interactive coding theory!

Thank You
