InfiniBand

• Was originally designed as a “system area network” connecting CPUs and I/O devices.
  – A larger role: replacing all I/O standards for data centers, namely PCI (backplane), Fibre Channel, and Ethernet, so that everything connects through InfiniBand. Not long haul yet.
  – A lesser role: a low-latency, high-bandwidth, low-overhead interconnect between servers and storage in commercial datacenters.
• Can form local area or even large area networks.
• Has become the de facto interconnect for high-performance clusters (100+ systems in the TOP500 supercomputer list).

• InfiniBand architecture
  – Specification (InfiniBand Architecture Specification, Release 1.3, March 3, 2015) available from the InfiniBand Trade Association (http://www.infinibandta.org)

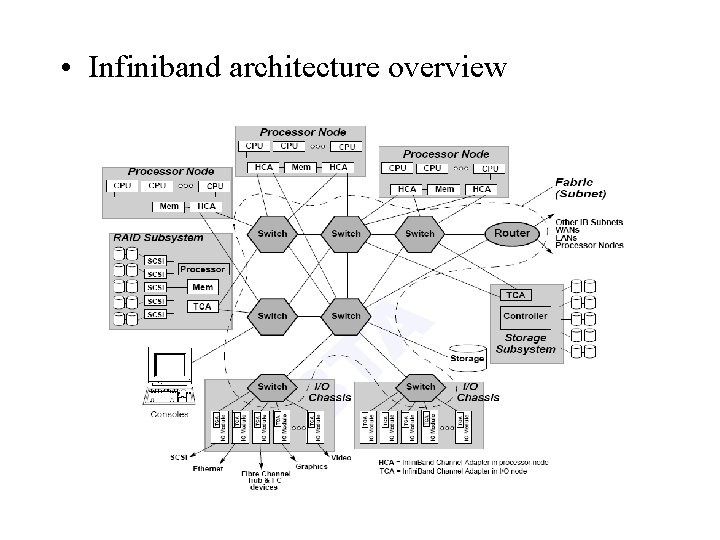


• InfiniBand architecture overview
  – Components:
    • Links, channel adapters, switches, routers
  – The specification allows InfiniBand wide area networks, but InfiniBand is mostly adopted as a system/storage area network.
    • Cabling specification?
  – Topology:
    • Irregular
    • Regular: fat tree, hypercube, etc.

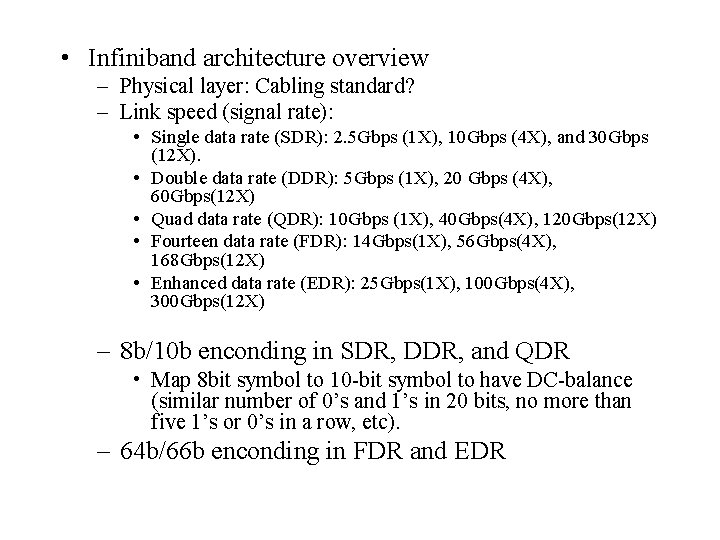

• InfiniBand architecture overview
  – Physical layer: cabling standard?
  – Link speed (signal rate):
    • Single data rate (SDR): 2.5 Gbps (1X), 10 Gbps (4X), and 30 Gbps (12X)
    • Double data rate (DDR): 5 Gbps (1X), 20 Gbps (4X), 60 Gbps (12X)
    • Quad data rate (QDR): 10 Gbps (1X), 40 Gbps (4X), 120 Gbps (12X)
    • Fourteen data rate (FDR): 14 Gbps (1X), 56 Gbps (4X), 168 Gbps (12X)
    • Enhanced data rate (EDR): 25 Gbps (1X), 100 Gbps (4X), 300 Gbps (12X)
  – 8b/10b encoding in SDR, DDR, and QDR
    • Maps each 8-bit symbol to a 10-bit symbol to achieve DC balance (similar number of 0’s and 1’s in 20 bits, no more than five 1’s or 0’s in a row, etc.).
  – 64b/66b encoding in FDR and EDR
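Because the rates above are signal rates, the usable data rate is lower by the encoding overhead. A minimal sketch of that arithmetic, using only the per-lane rates and encodings listed on this slide (the table and function names are illustrative, not from any library):

```python
# Per-lane signal rate in Gbps and (payload bits, coded bits) of the line code,
# taken from the slide above.
GENERATIONS = {
    "SDR": (2.5, (8, 10)),
    "DDR": (5.0, (8, 10)),
    "QDR": (10.0, (8, 10)),
    "FDR": (14.0, (64, 66)),
    "EDR": (25.0, (64, 66)),
}


def data_rate_gbps(generation, lanes):
    """Usable data rate = per-lane signal rate x lane count x encoding efficiency."""
    signal_rate, (payload_bits, coded_bits) = GENERATIONS[generation]
    return signal_rate * lanes * payload_bits / coded_bits


for gen in GENERATIONS:
    rates = [round(data_rate_gbps(gen, width), 2) for width in (1, 4, 12)]
    print(gen, rates)
```

For example, a 4X QDR link signals at 40 Gbps but carries 32 Gbps of data, while the 64b/66b encoding of FDR and EDR loses only about 3%.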

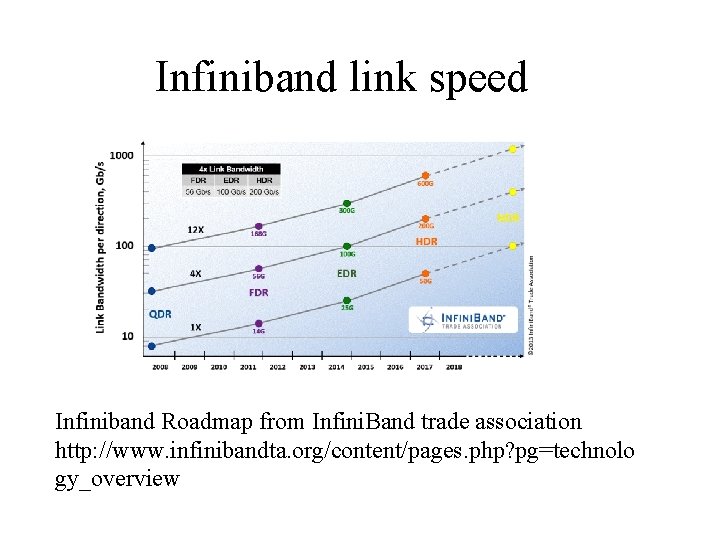

InfiniBand link speed: InfiniBand Roadmap from the InfiniBand Trade Association, http://www.infinibandta.org/content/pages.php?pg=technology_overview

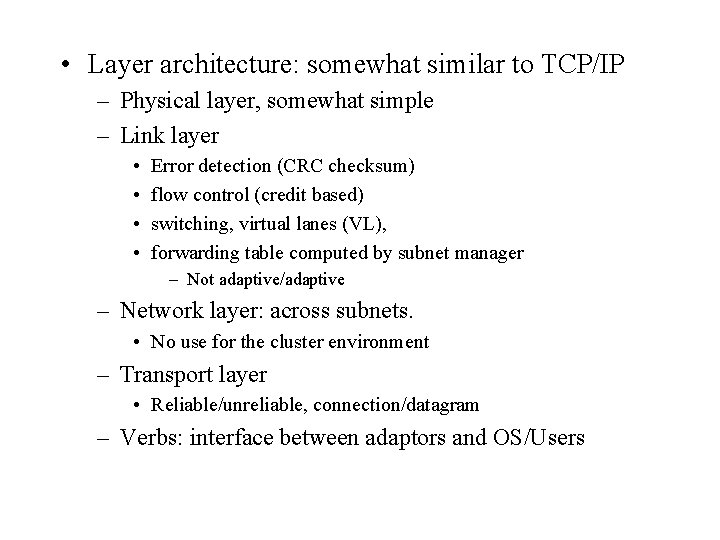

• Layer architecture: somewhat similar to TCP/IP
  – Physical layer: somewhat simple
  – Link layer
    • Error detection (CRC checksum)
    • Flow control (credit based)
    • Switching, virtual lanes (VL), forwarding table computed by the subnet manager
      – Not adaptive/adaptive
  – Network layer: across subnets.
    • No use for the cluster environment
  – Transport layer
    • Reliable/unreliable, connection/datagram
  – Verbs: interface between adapters and OS/users
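To make the credit-based flow control idea concrete, here is a toy model of a single virtual lane in which the sender may transmit only while it holds credits. It is a sketch under simplifying assumptions: one credit per packet, and the class and method names are hypothetical. The real protocol's credit granularity and signaling are not modeled.

```python
from collections import deque


class CreditLink:
    """Toy model of one virtual lane with credit-based flow control."""

    def __init__(self, receiver_buffers):
        # Assumption: one credit corresponds to one free receive buffer.
        self.credits = receiver_buffers
        self.rx_buffer = deque()

    def send(self, packet):
        """Return True if the packet was sent, False if the sender must wait."""
        if self.credits == 0:
            return False
        self.credits -= 1
        self.rx_buffer.append(packet)
        return True

    def receiver_drain(self):
        """The receiver consumes one packet and returns a credit to the sender."""
        packet = self.rx_buffer.popleft()
        self.credits += 1
        return packet


link = CreditLink(receiver_buffers=2)
results = [link.send(p) for p in ("p0", "p1", "p2")]  # third send finds no credit
link.receiver_drain()                                 # frees one buffer
results.append(link.send("p2"))                       # now succeeds
print(results)
```

Because the sender never transmits without a credit, packets are not dropped for lack of buffer space. The sender simply stalls.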

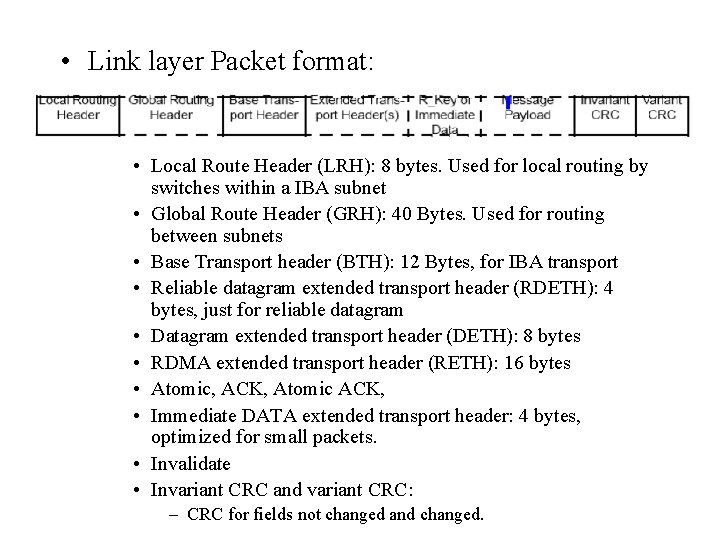

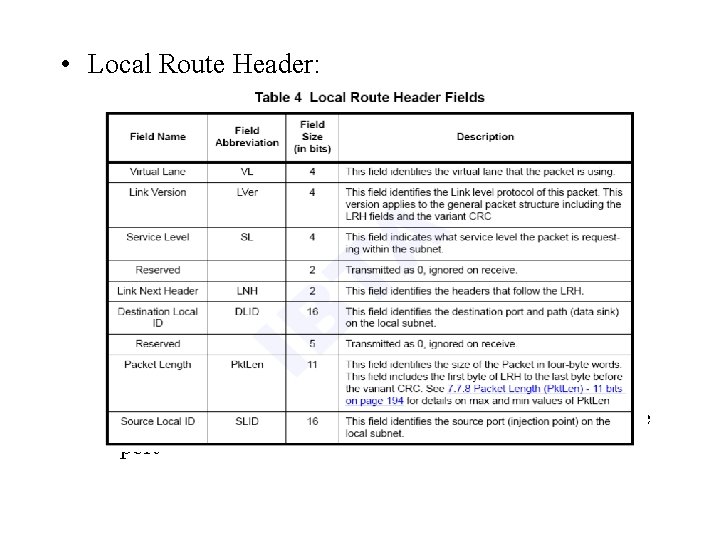

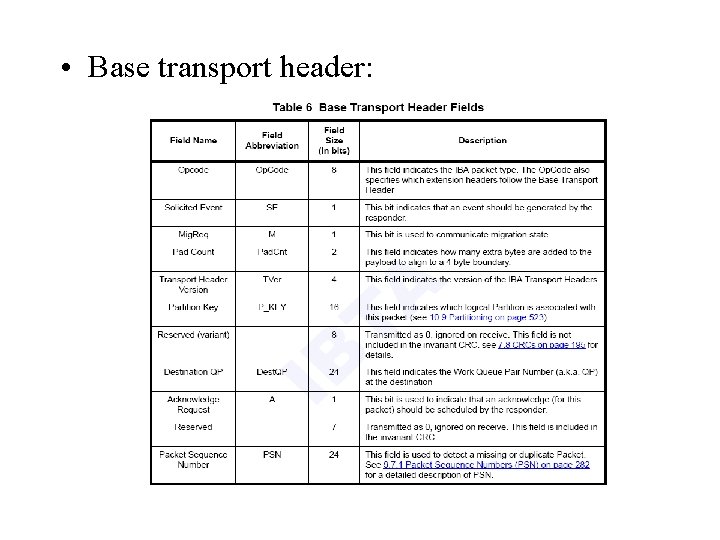

• Link layer packet format:
  • Local Route Header (LRH): 8 bytes. Used for local routing by switches within an IBA subnet
  • Global Route Header (GRH): 40 bytes. Used for routing between subnets
  • Base Transport Header (BTH): 12 bytes, for IBA transport
  • Reliable Datagram Extended Transport Header (RDETH): 4 bytes, just for reliable datagram
  • Datagram Extended Transport Header (DETH): 8 bytes
  • RDMA Extended Transport Header (RETH): 16 bytes
  • Atomic, ACK, Atomic ACK
  • Immediate Data Extended Transport Header: 4 bytes, optimized for small packets
  • Invalidate
  • Invariant CRC and variant CRC:
    – CRCs covering the fields that do not change in transit and the fields that may change.
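The header sizes above determine how much of each packet is payload. A rough sketch of that calculation, using only the sizes listed on this slide. The two header combinations are illustrative assumptions about an RDMA packet that carries a RETH, the `IMM` key is a hypothetical label for the Immediate Data header, and the CRC fields are left out because the slide gives no sizes for them:

```python
# Header sizes in bytes, as listed on the slide.
HEADER_BYTES = {
    "LRH": 8, "GRH": 40, "BTH": 12,
    "RDETH": 4, "DETH": 8, "RETH": 16, "IMM": 4,
}


def header_overhead(headers):
    """Total bytes spent on the given headers (CRCs not included)."""
    return sum(HEADER_BYTES[name] for name in headers)


def payload_fraction(headers, payload_bytes):
    """Fraction of header-plus-payload bytes that is payload."""
    return payload_bytes / (payload_bytes + header_overhead(headers))


local_rdma = ["LRH", "BTH", "RETH"]          # stays inside one subnet
routed_rdma = ["LRH", "GRH", "BTH", "RETH"]  # crosses subnets, so it needs a GRH

for name, headers in (("local", local_rdma), ("routed", routed_rdma)):
    print(name, header_overhead(headers), round(payload_fraction(headers, 256), 3))
```

The 40-byte GRH more than doubles the header cost, which matters little in clusters that stay within one subnet.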

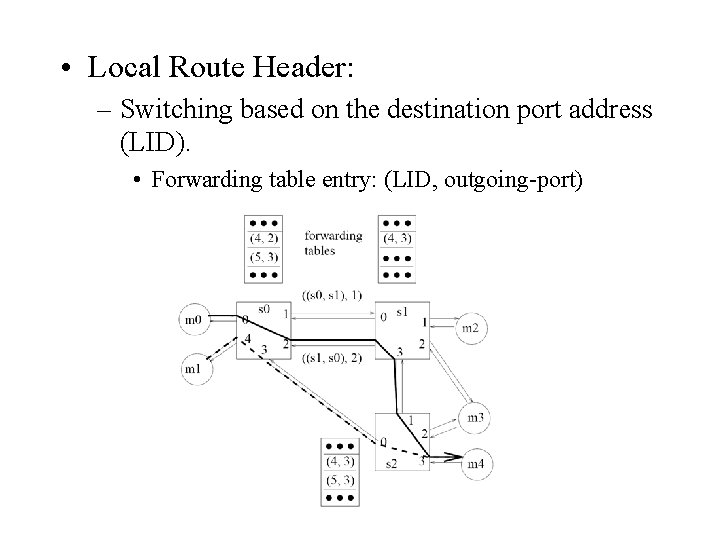

• Local Route Header:
  – Switching based on the destination port address (LID)
  – Multipath switching by allocating multiple LIDs to one port

• Local Route Header:
  – Switching based on the destination port address (LID).
    • Forwarding table entry: (LID, outgoing port)

• Local Route Header:
  – Multipath switching by allocating multiple LIDs to one port; see the previous example.
• GRH: addresses use the same format as IPv6 addresses (16 bytes each)
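The two ideas above, destination-only lookup and multipath via multiple LIDs, can be sketched in a few lines. The LID values, port numbers, and the per-flow selection rule are made up for illustration:

```python
# Forwarding table of one switch: destination LID -> outgoing port.
forwarding_table = {
    4: 1,  # host A, single LID
    8: 2,  # host B, first LID: path via port 2
    9: 3,  # host B, second LID: alternative path via port 3
}


def forward(dlid):
    """Destination-only lookup: the incoming port plays no role."""
    return forwarding_table[dlid]


host_b_lids = [8, 9]  # multiple LIDs allocated to the same port


def pick_lid(lids, flow_id):
    """Hypothetical sender-side policy: spread flows over the destination's LIDs."""
    return lids[flow_id % len(lids)]


for flow in range(4):
    dlid = pick_lid(host_b_lids, flow)
    print(f"flow {flow}: DLID {dlid} -> output port {forward(dlid)}")
```

Since the switch sees only the destination LID, the choice of path is made entirely by which LID the sender addresses.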

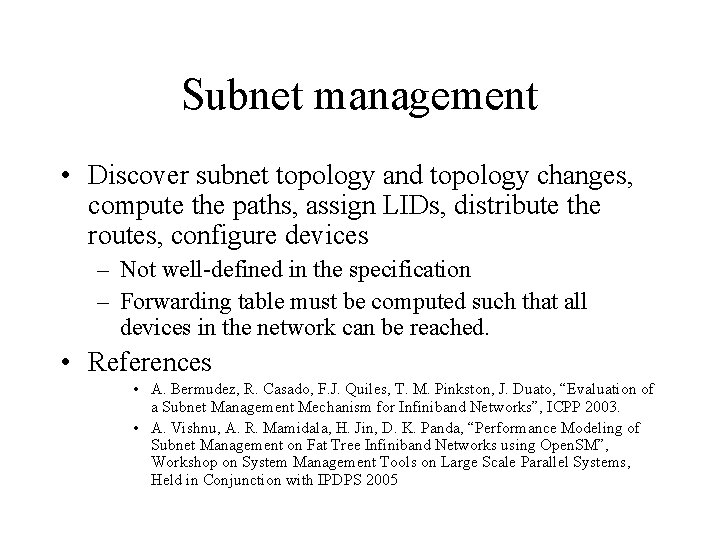

Subnet management
• Discover subnet topology and topology changes, compute the paths, assign LIDs, distribute the routes, configure devices
  – Not well-defined in the specification
  – The forwarding tables must be computed such that all devices in the network can be reached.
• References
  • A. Bermudez, R. Casado, F. J. Quiles, T. M. Pinkston, J. Duato, “Evaluation of a Subnet Management Mechanism for InfiniBand Networks”, ICPP 2003.
  • A. Vishnu, A. R. Mamidala, H. Jin, D. K. Panda, “Performance Modeling of Subnet Management on Fat Tree InfiniBand Networks using OpenSM”, Workshop on System Management Tools on Large Scale Parallel Systems, held in conjunction with IPDPS 2005.

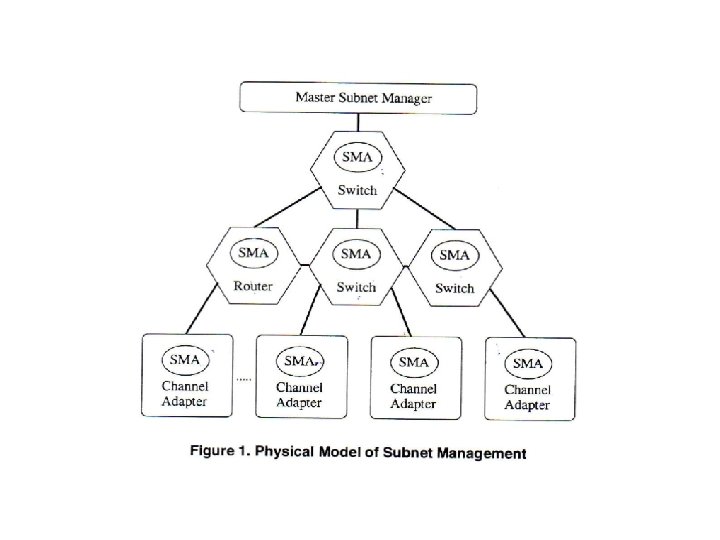

• InfiniBand devices and entities related to subnet management
  • Devices: channel adapters (CA), host channel adapters (HCA), switches, routers
  • Subnet manager (SM): discovers, configures, activates, and manages the subnet
  • A subnet management agent (SMA) in every device generates and responds to control packets (subnet management packets, SMPs) and configures local components for subnet management
  • The SM exchanges control packets with the SMAs through the subnet management interface (SMI).

• Subnet management packets (SMP)
  – 256 bytes of data
  – Use the unreliable datagram service on the management virtual lane (VL15)
  – Two routing schemes
    • LID routed: uses lookup-table forwarding
      – Used after the subnet is set up, e.g. to check the status of an active port
    • Direct routed: carries the output port for each intermediate hop
      – Used for subnet discovery, before the subnet is set up
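The difference between the two routing schemes can be illustrated on a small made-up topology. In this sketch the node names, port numbers, and the LID value are all hypothetical. A direct-routed packet carries its own list of output ports, while a LID-routed packet relies on forwarding tables that must already be in place:

```python
# TOPOLOGY[node][port] = neighbor reached through that port (hypothetical chain).
TOPOLOGY = {
    "SM": {1: "sw1"},
    "sw1": {1: "SM", 2: "sw2"},
    "sw2": {1: "sw1", 2: "hostB"},
    "hostB": {1: "sw2"},
}


def direct_route(start, out_ports):
    """Direct routed: the packet itself lists the output port for every hop."""
    node = start
    for port in out_ports:
        node = TOPOLOGY[node][port]
    return node


# State that exists only after the subnet has been set up.
LIDS = {"hostB": 7}
FORWARDING = {"SM": {7: 1}, "sw1": {7: 2}, "sw2": {7: 2}}


def lid_route(start, dlid):
    """LID routed: every hop looks the destination LID up in its own table."""
    node = start
    while LIDS.get(node) != dlid:
        node = TOPOLOGY[node][FORWARDING[node][dlid]]
    return node


print(direct_route("SM", [1, 2, 2]))  # works with no tables or LIDs at all
print(lid_route("SM", 7))             # needs LIDS and FORWARDING to be configured
```

This is why discovery has to use direct routing: before the SM has assigned LIDs and filled the tables, `lid_route` has nothing to look up.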

• Subnet management packets (SMP)
  – Define the operation to be performed by the SM
  – Get: get information about a CA, switch, or port
  – Set: set an attribute of a port (e.g. its LID)
  – GetResp: the get response
  – Trap: inform the SM about the state of a local node
    • An SMA stops resending a Trap message once it receives a TrapRepress packet.
    • Topology information can be obtained by a sweep and by periodic Traps.

• Subnet management phases:
  – Topology discovery: sending direct routed SMPs to every port and processing the responses.
  – Path computation: computing valid paths between each pair of end nodes.
  – Path distribution: configuring the forwarding tables.

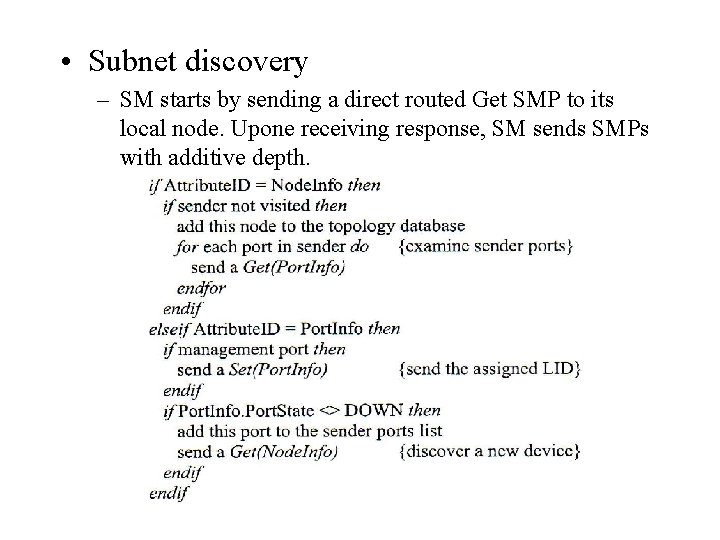

• Subnet discovery
  – The SM starts by sending a direct routed Get SMP to its local node. Upon receiving the response, the SM sends SMPs to progressively greater depth.
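The sweep described above behaves like a breadth-first search in which every probe is a direct route. A minimal sketch on a made-up topology: `get_node_info` is a stand-in for sending a direct routed Get SMP and reading the response, and node names stand in for whatever unique identifier a real response carries.

```python
from collections import deque

# TOPOLOGY[node][port] = neighbor reached through that port (hypothetical).
TOPOLOGY = {
    "SM": {1: "sw1"},
    "sw1": {1: "SM", 2: "sw2", 3: "sw3"},
    "sw2": {1: "sw1", 2: "sw3", 3: "hostA"},
    "sw3": {1: "sw1", 2: "sw2", 3: "hostB"},
    "hostA": {1: "sw2"},
    "hostB": {1: "sw3"},
}


def get_node_info(start, path):
    """Stand-in for a direct routed Get: follow the port list, report node and ports."""
    node = start
    for port in path:
        node = TOPOLOGY[node][port]
    return node, sorted(TOPOLOGY[node])


def discover(start):
    """Breadth-first sweep: probes reach one hop deeper as the queue advances."""
    routes = {}
    queue = deque([[]])  # the empty path addresses the SM's local node
    while queue:
        path = queue.popleft()
        node, ports = get_node_info(start, path)
        if node in routes:
            continue  # already reached over a path that is no longer
        routes[node] = path
        for port in ports:
            queue.append(path + [port])
    return routes


for node, path in discover("SM").items():
    print(node, path)
```

The result maps every discovered node to one direct route from the SM, which is the information the later phases need in order to configure that node.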

• Path computation:
  – Compute paths between all pairs of nodes
  – For irregular topologies:
    • Up/Down routing does not work directly
      – It needs both the incoming interface and the destination, but InfiniBand forwards on the destination only
      – Potential solution:
        » find all possible paths
        » in each node, remove every path that takes an up link after a down link
        » find one output port for each destination
      – Other solutions: destination renaming
  – Fat tree topology:
    • What the best achievable (optimal) routing is remains unclear.
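The Up/Down restriction mentioned above can be stated as a simple check on a path: once a path has taken a down link, it may not take an up link again. The sketch below assumes the common construction in which link directions come from a breadth-first spanning tree, with ties between same-level nodes broken by name. The topology and all names are illustrative.

```python
from collections import deque

LINKS = [("s0", "s1"), ("s0", "s2"), ("s1", "s2"), ("s1", "s3"), ("s2", "s3")]


def bfs_levels(root, links):
    """Distance of every node from the chosen root of the spanning tree."""
    adjacent = {}
    for a, b in links:
        adjacent.setdefault(a, []).append(b)
        adjacent.setdefault(b, []).append(a)
    level = {root: 0}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for neighbor in adjacent[node]:
            if neighbor not in level:
                level[neighbor] = level[node] + 1
                queue.append(neighbor)
    return level


def is_up(src, dst, level):
    """A hop is 'up' if it moves toward the root; ties are broken by node name."""
    return (level[dst], dst) < (level[src], src)


def legal_up_down(path, level):
    """Legal paths take zero or more up hops followed by zero or more down hops."""
    gone_down = False
    for src, dst in zip(path, path[1:]):
        if is_up(src, dst, level):
            if gone_down:
                return False  # an up hop after a down hop is forbidden
        else:
            gone_down = True
    return True


LEVEL = bfs_levels("s0", LINKS)
print(legal_up_down(["s3", "s1", "s0", "s2"], LEVEL))  # up, up, down
print(legal_up_down(["s1", "s3", "s2"], LEVEL))        # down, then up
```

Note that `legal_up_down` has to remember whether the path has already gone down. A switch that forwards on the destination LID alone has no such memory, which is the difficulty the slide points out.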

• Path distribution:
  – Ordering issue: the network may be in an inconsistent state while partially updated, which may result in deadlock during this period.
    • Traditional solution: no data packets for a period of time
    • Deadlock-free reconfiguration schemes
  – How to do this correctly, effectively, and incrementally is still open.

• Base transport header:

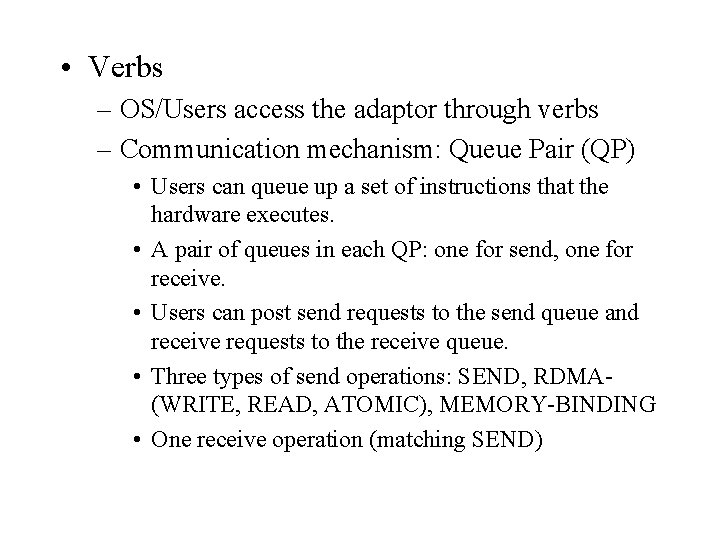

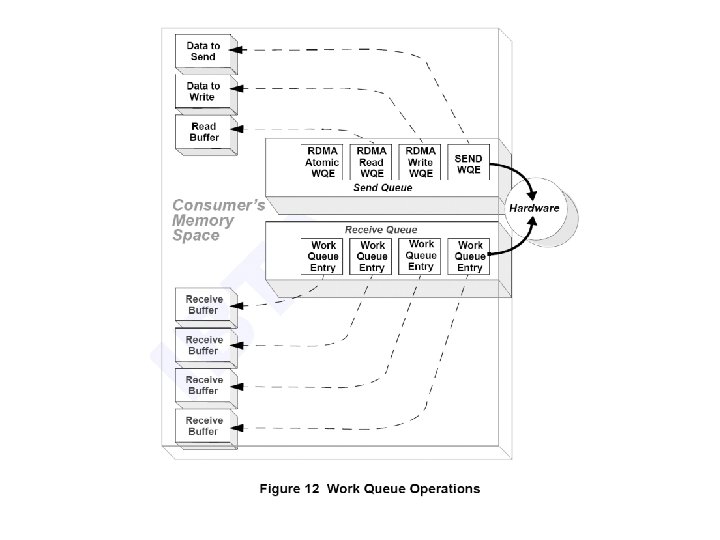

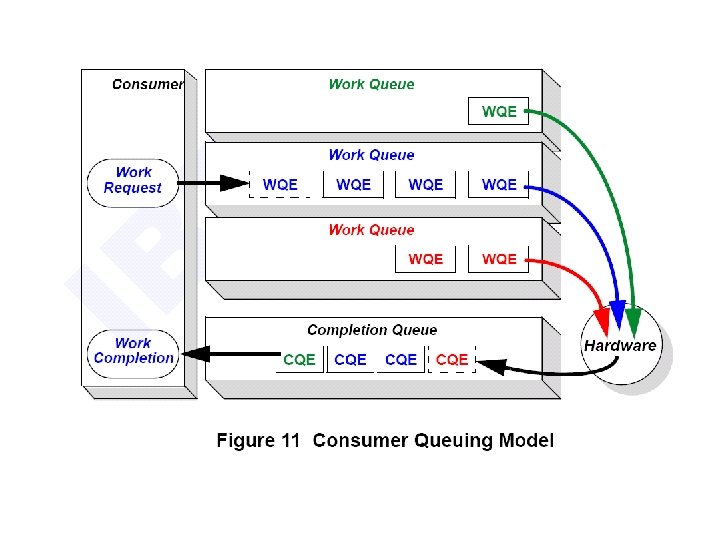

• Verbs
  – OS/users access the adapter through verbs
  – Communication mechanism: Queue Pair (QP)
    • Users can queue up a set of instructions that the hardware executes.
    • A pair of queues in each QP: one for send, one for receive.
    • Users can post send requests to the send queue and receive requests to the receive queue.
    • Three types of send operations: SEND, RDMA (WRITE, READ, ATOMIC), MEMORY-BINDING
    • One receive operation (matching SEND)

• Queue Pair:
  – The status of the result of an operation (send/receive) is stored in the completion queue.
  – Send/receive queues can bind to different completion queues.
• Related system-level verbs:
  – Open QP, create completion queue, open HCA, open protection domain, register memory, allocate memory window, etc.
• User-level verbs:
  – Post send/receive request, poll for completion.

• To communicate:
  – Make system calls to set up everything (open QP, bind QP to port, bind completion queues, connect local QP to remote QP, register memory, etc.).
  – Post send/receive requests.
  – Check completion.
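The post/poll pattern above can be mimicked with a toy model. This is not the verbs API: the class, its methods, and the `process` step that plays the role of the adapter hardware are all hypothetical, and setup details such as memory registration and protection domains are omitted.

```python
from collections import deque


class QueuePair:
    """Toy queue pair: a send queue, a receive queue, and a completion queue."""

    def __init__(self, completion_queue):
        self.send_queue = deque()
        self.recv_queue = deque()
        self.cq = completion_queue
        self.remote = None

    def connect(self, other):
        """Setup step: connect the local QP to the remote QP."""
        self.remote, other.remote = other, self

    def post_recv(self, buffer):
        self.recv_queue.append(buffer)

    def post_send(self, data):
        self.send_queue.append(data)

    def process(self):
        """Stand-in for the hardware: match each SEND with a posted receive."""
        while self.send_queue and self.remote.recv_queue:
            data = self.send_queue.popleft()
            buffer = self.remote.recv_queue.popleft()
            buffer[:len(data)] = data
            self.cq.append(("send", len(data)))
            self.remote.cq.append(("recv", len(data)))


cq_a, cq_b = deque(), deque()
qp_a, qp_b = QueuePair(cq_a), QueuePair(cq_b)
qp_a.connect(qp_b)

rx = bytearray(16)
qp_b.post_recv(rx)        # the receiver posts a buffer first
qp_a.post_send(b"hello")  # the sender posts a SEND request
qp_a.process()

send_done = cq_a.popleft()  # "poll for completion" on each side
recv_done = cq_b.popleft()
print(send_done, recv_done, bytes(rx[:5]))
```

The point of the model is the division of labor: the application only posts requests and polls completion queues, and everything in between happens without a call into the OS.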

• InfiniBand has an almost perfect software/network interface (Chien'94 paper):
  – The network subsystem realizes all user-level functionality.
  – User-level access to the network interface: a few machine instructions accomplish the transmission task without involving the OS.
  – The network supports in-order delivery and fault tolerance.
  – Buffer management is pushed out to the user.

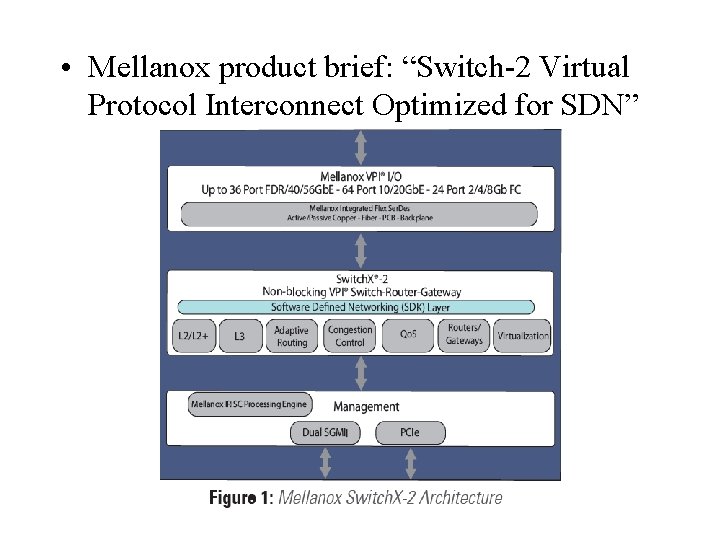


• Mellanox product brief: “Switch-2 Virtual Protocol Interconnect Optimized for SDN”
  – Virtual protocol interconnect
    • Automatically senses InfiniBand, Ethernet, Fibre Channel, and data center bridging
    • Flexible port configuration
      – 36 IB FDR ports or 40/56 GbE ports
      – 64 10 GbE ports
      – 24 2/4/5 Gb FC ports
  – SDN support
    • Complete support for OpenFlow and subnet management
    • Remotely configurable routing table, overlay, control plane.

Some claims (InfiniBand advantages)
• InfiniBand and Ethernet can carry each other’s traffic (EoIB and IBoE), and both can carry TCP/IP
• InfiniBand is in general faster
  – 10G Ethernet vs. IB DDR (20G) and QDR (40G)
  – 40G Ethernet vs. IB EDR (100G)
• InfiniBand is no longer hard to use
• InfiniBand is optimized for fat-tree
• InfiniBand still has more features than Ethernet
  – Fault tolerance, multicast, etc.

- Slides: 30