Inference Tasks and Computational Semantics Key Concepts Inference

- Slides: 38

Inference Tasks and Computational Semantics

Key Concepts • Inference tasks • Syntactic versus semantic approach to logic • Soundness & completeness • Decidability and undecidability • Technologies: • Theorem proving versus model building

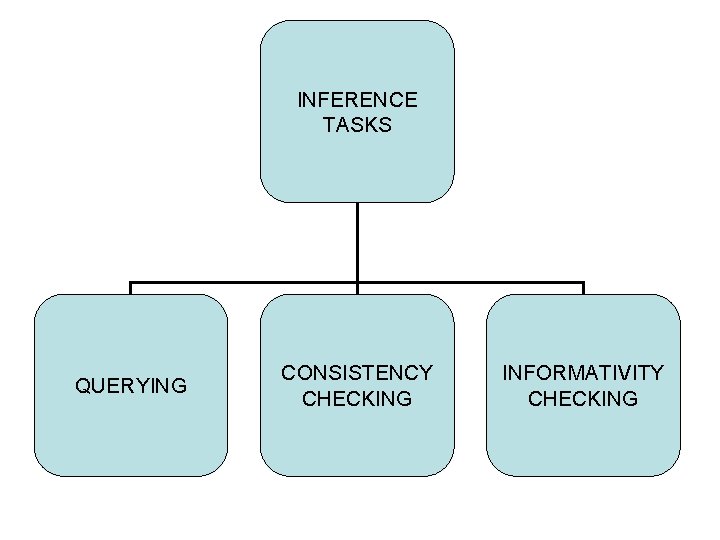

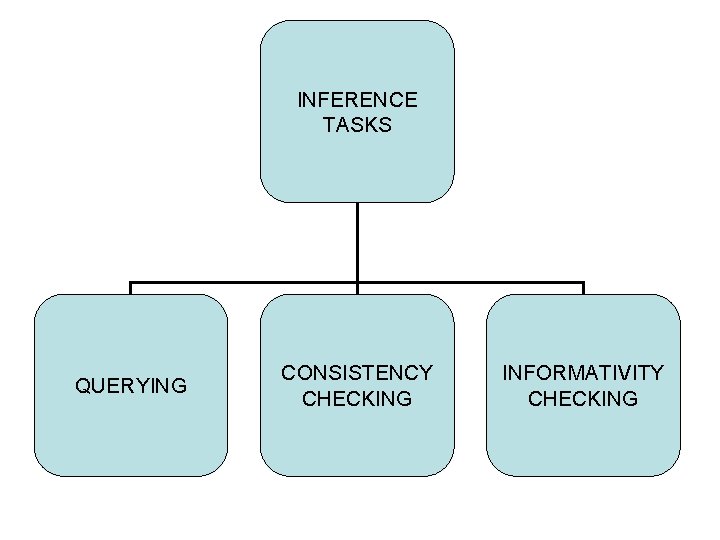

INFERENCE TASKS QUERYING CONSISTENCY CHECKING INFORMATIVITY CHECKING

QUERYING • Definition: – Given: Model M and formula P – Does M satisfy P? • P is not necessarily a sentence, so have to handle assignments to free variables. • Computability: yes if models are finite

Consistency Checking • Definition: Given a formula P, is P consistent? • Idea: consistent iff satisfiable in a model M, so task becomes discovering whether a model exists. • This is a search problem. • Computationally undecidable for arbitrary P.

Informativity Checking • Definition: given P, is P informative or uninformative? • Idea (which runs counter to logician's view) – informative = invalid – uninformative = valid (true in all possible models) • Informativity: is genuinely new information being conveyed? Useful concept from Po. V of communication • Computability: validity worse than consistency checking since all models need to be checked for satisfiability.

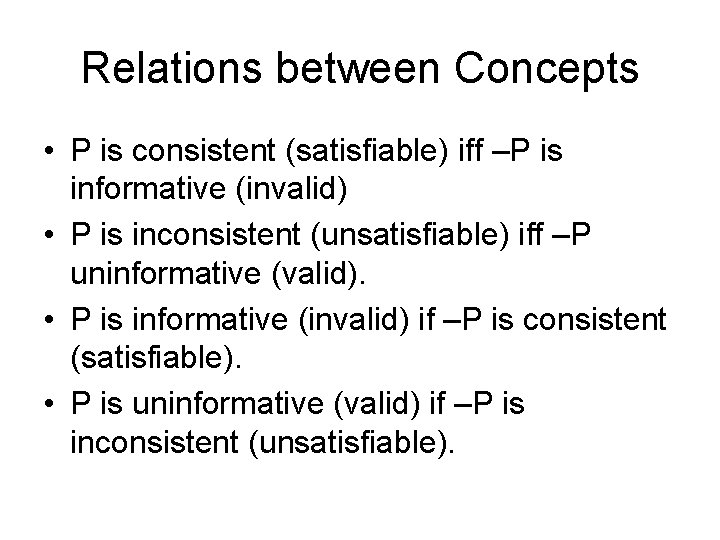

Relations between Concepts • P is consistent (satisfiable) iff –P is informative (invalid) • P is inconsistent (unsatisfiable) iff –P uninformative (valid). • P is informative (invalid) if –P is consistent (satisfiable). • P is uninformative (valid) if –P is inconsistent (unsatisfiable).

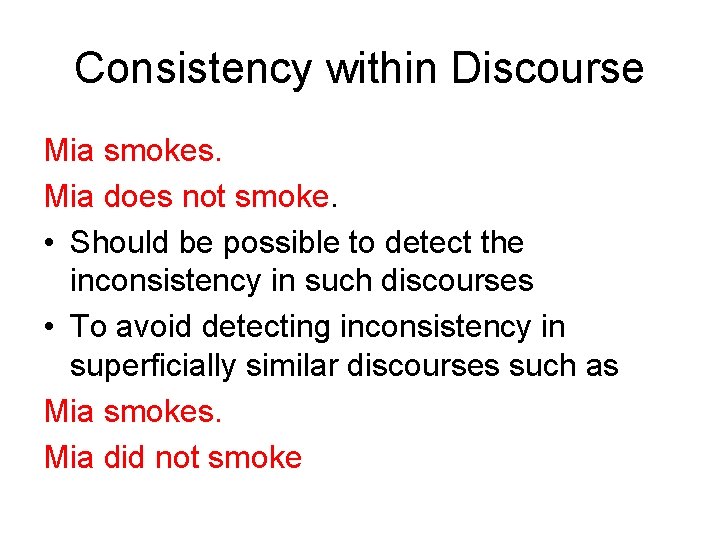

Consistency within Discourse Mia smokes. Mia does not smoke. • Should be possible to detect the inconsistency in such discourses • To avoid detecting inconsistency in superficially similar discourses such as Mia smokes. Mia did not smoke

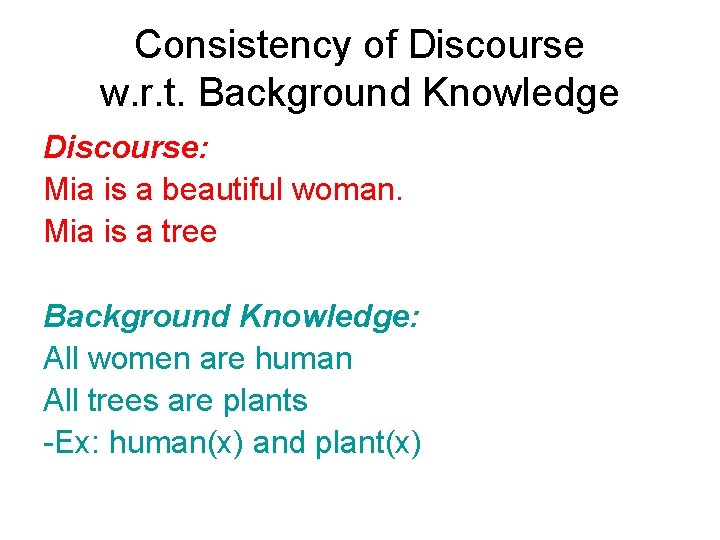

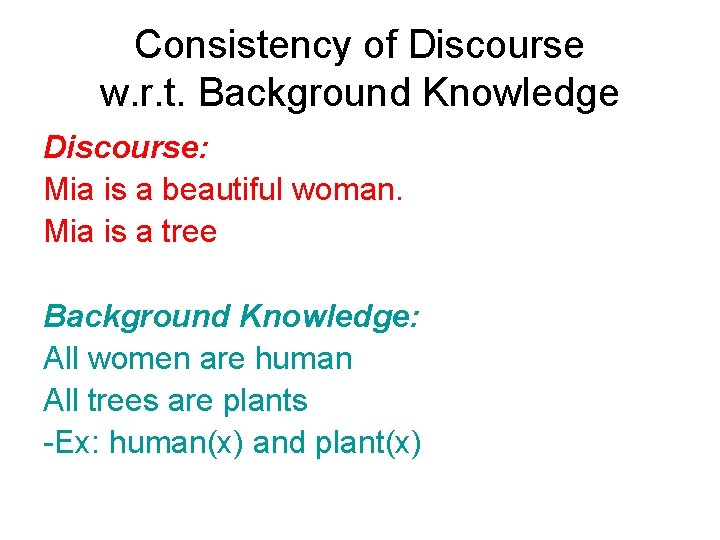

Consistency of Discourse w. r. t. Background Knowledge Discourse: Mia is a beautiful woman. Mia is a tree Background Knowledge: All women are human All trees are plants -Ex: human(x) and plant(x)

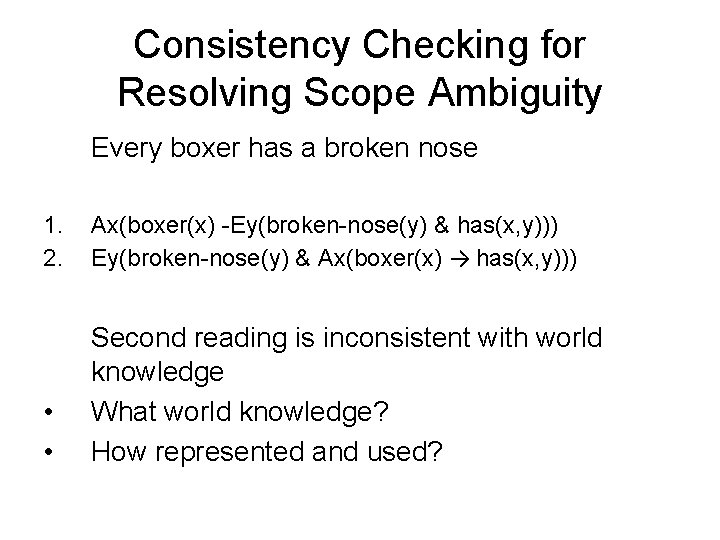

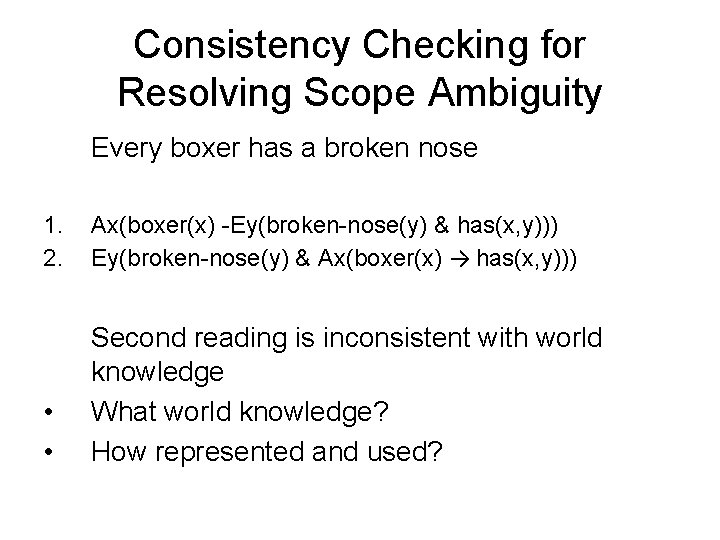

Consistency Checking for Resolving Scope Ambiguity Every boxer has a broken nose 1. 2. Ax(boxer(x) -Ey(broken-nose(y) & has(x, y))) Ey(broken-nose(y) & Ax(boxer(x) → has(x, y))) • • Second reading is inconsistent with world knowledge What world knowledge? How represented and used?

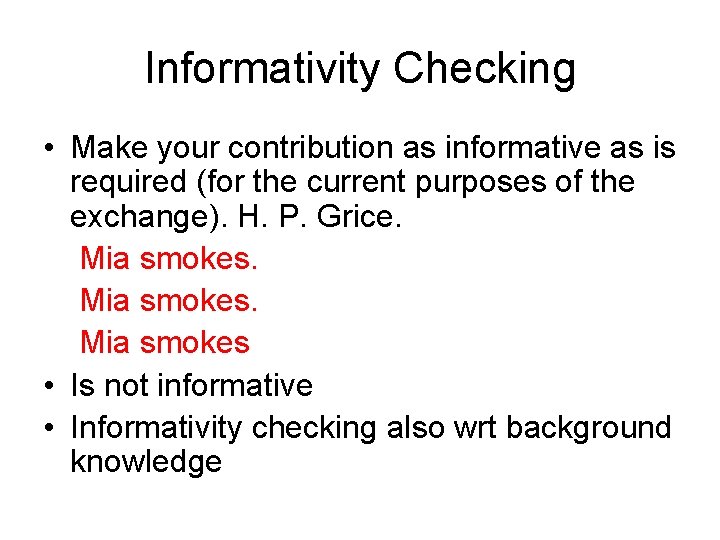

Informativity Checking • Make your contribution as informative as is required (for the current purposes of the exchange). H. P. Grice. Mia smokes • Is not informative • Informativity checking also wrt background knowledge

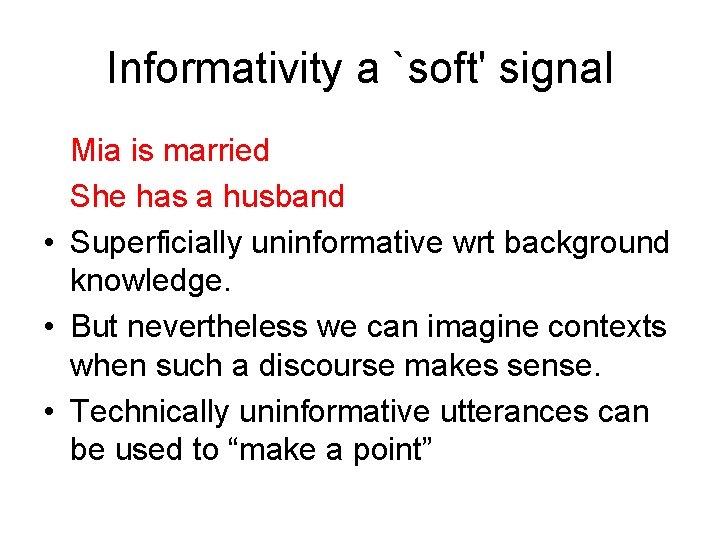

Informativity a `soft' signal Mia is married She has a husband • Superficially uninformative wrt background knowledge. • But nevertheless we can imagine contexts when such a discourse makes sense. • Technically uninformative utterances can be used to “make a point”

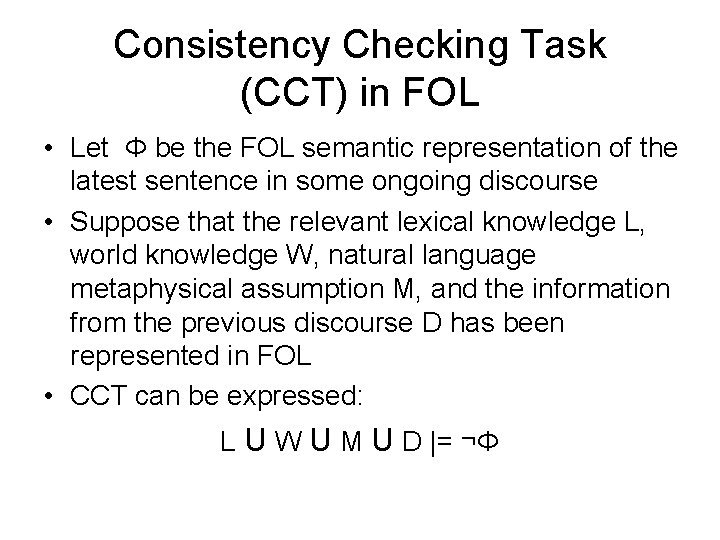

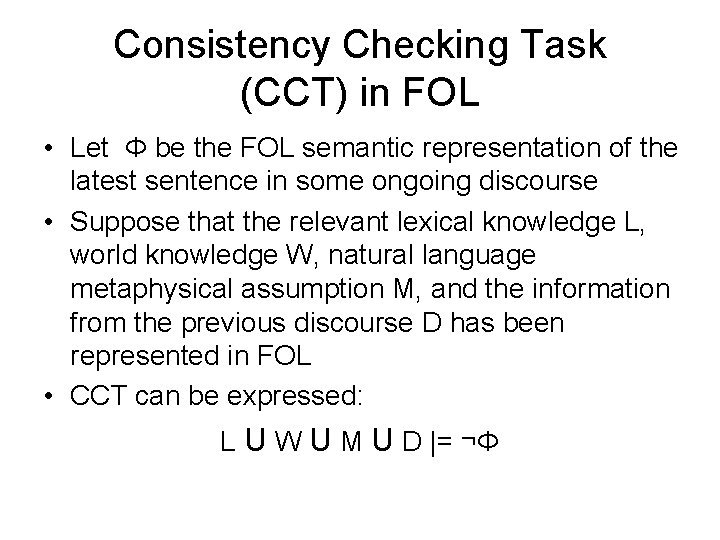

Consistency Checking Task (CCT) in FOL • Let Φ be the FOL semantic representation of the latest sentence in some ongoing discourse • Suppose that the relevant lexical knowledge L, world knowledge W, natural language metaphysical assumption M, and the information from the previous discourse D has been represented in FOL • CCT can be expressed: L U W U M U D |= ¬Φ

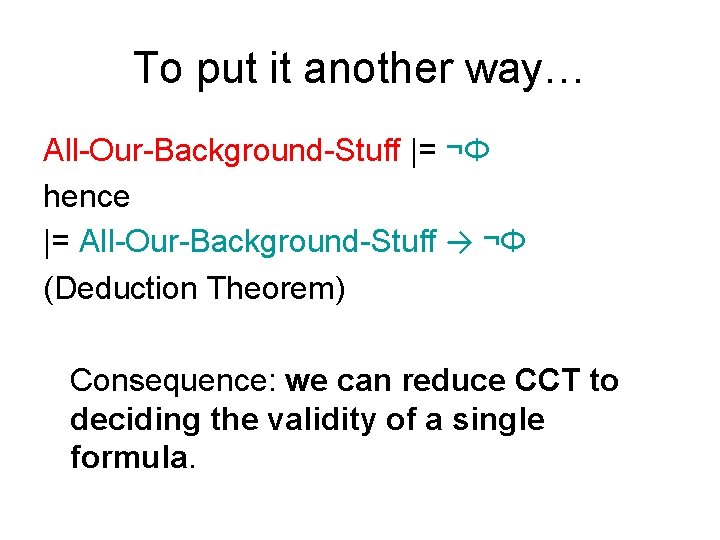

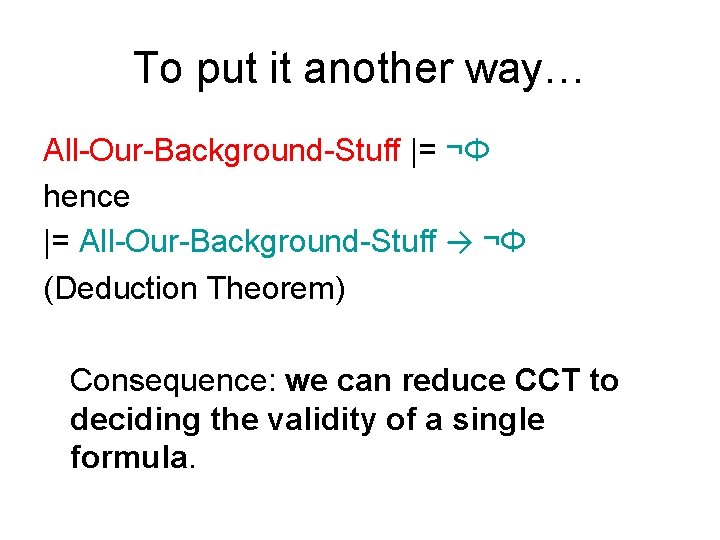

To put it another way… All-Our-Background-Stuff |= ¬Φ hence |= All-Our-Background-Stuff → ¬Φ (Deduction Theorem) Consequence: we can reduce CCT to deciding the validity of a single formula.

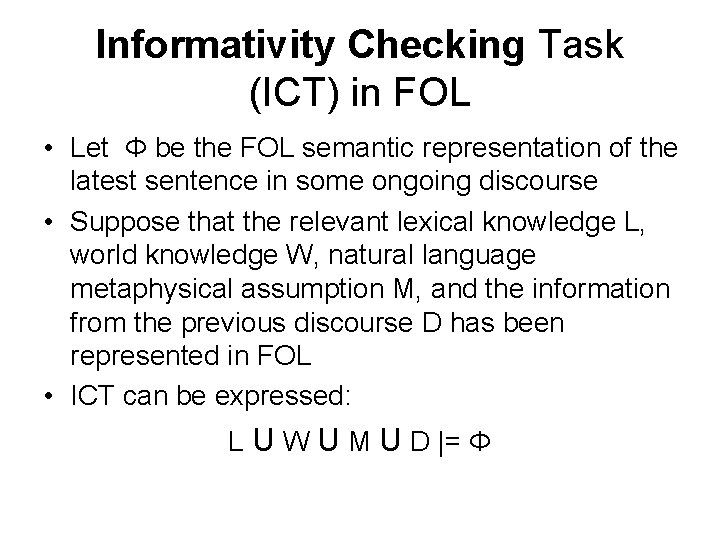

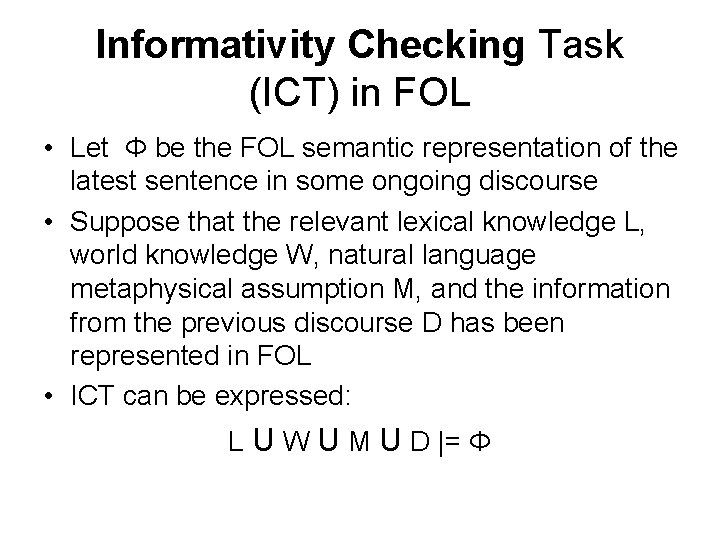

Informativity Checking Task (ICT) in FOL • Let Φ be the FOL semantic representation of the latest sentence in some ongoing discourse • Suppose that the relevant lexical knowledge L, world knowledge W, natural language metaphysical assumption M, and the information from the previous discourse D has been represented in FOL • ICT can be expressed: L U W U M U D |= Φ

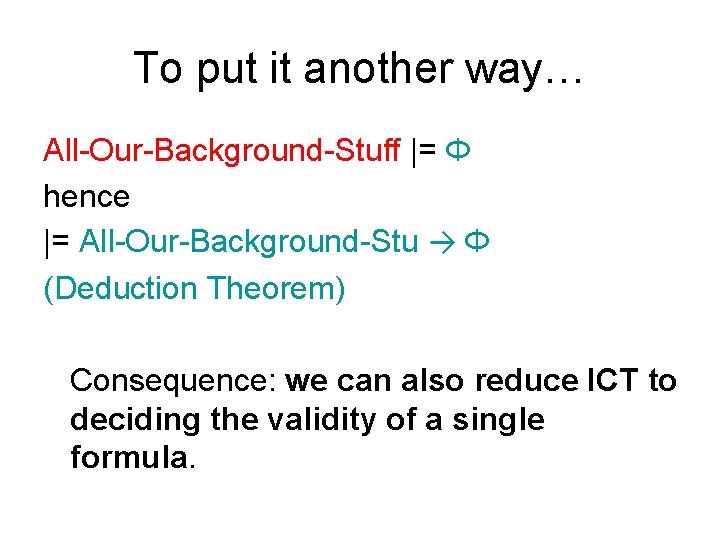

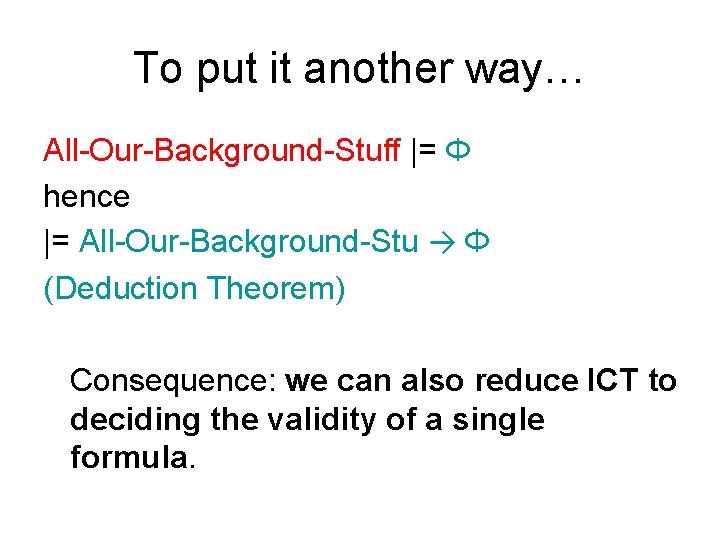

To put it another way… All-Our-Background-Stuff |= Φ hence |= All-Our-Background-Stu → Φ (Deduction Theorem) Consequence: we can also reduce ICT to deciding the validity of a single formula.

Yes but … • This definition is semantic, i. e. given in terms of models. • This is very abstract, and • defined in terms of all models. • There a lot of models, and most of them are very large. • So is it of any computational interest whosoever?

Proof Theory • Proof theory is the syntactic approach to logic. • It attempts to define collections of rules and/or axioms that enable us to generate new formulas from old • That is, it attempts to pin down the notion of inference syntactically. • P |- Q versus P |= Q

Examples of Proof Systems • Natural deduction • Hilbert-style system (often called axiomatic systems) • Sequent calculus • Tableaux systems • Resolution • Some systems (notably tableau and resolution) are particularly suitable for computational purposes.

Connecting Proof Theory to Model Theory • Nothing we have said so far makes any connection between the proof theoretic and the model theoretic ideas previously introduced. • We must insist on working with proof systems with two special properties • Soundness • Completeness.

Soundness • Proof Theoretic Q is provable in proof theoretic system |- Q. • Model Theoretic Q is valid in model theoretic system |= Q • A PT system is sound iff |- Q implies |= Q • Every theorem is valid

Remark on Soundness • Soundness is typically an easy property to prove. • Proofs typically have some kind of inductive structure. • One shows that if the first part of proof is true in a model then the rules only let us generate formulas that are also true in a model. • Proof follows by induction

Completeness • Proof Theoretic Q is provable in proof theoretic system |- Q. • Model Theoretic Q is valid in model theoretic system |= Q • A PT system is sound iff |= Q implies |- Q • Every valid formula is also a theorem

Remark on Completeness • Completeness is a much deeper property that soundness, and is a lot more difficult to prove. • It is typically proved by contraposition. We show that if some formula P is not provable then is not valid. • This is done by building a model for ¬P • The 1 st completeness proof for a 1 st-order proof system was given by Kurt Godel in his 1930 Ph. D thesis.

Sound and Complete Systems • So if a proof system is both sound and complete (which is what we want) we have that: |=Φ if and only if |-Φ • That is, syntactic provability and semantic validity coincide. • Sound and complete proof system, really capture the our semantic reality. • Working with such systems is not just playing with symbols.

Blackburn’s Proposal • Deciding validity (in 1 st-order logic) is undecidable, i. e. no algorithm exists for solving 1 st-order validity. • Implementing our proof methods for 1 st-order logic (that is, writing a theorem prover only gives us a semi-decision procedure. • If a formulas is valid, the prover will be able to prove it, but if is not valid, the prover may never halt! • Proposal – Implement theorem provers, – but also implement a partial converse tool: model builders.

Computational Tools • Theorem prover: A tool that, when given a 1 storder formula Φ attempts to prove it. • If Φ is in fact provable a (sound and complete) 1 st-order prover can (in principle) prove it. • Model builder: a tool that, when given a 1 storder formula Φ, attempts to build a model for it. • It cannot (even in principle) always succeed in this task, but it can be very useful.

Theorem Provers and Model Checkers • Theorem provers: a mature technology which provides a negative check on consistency and informativity • Theorem provers can tell us when something is not consistent, or not informative. • Model builders: a newer technology which provides a (partial) positive check on consistency and informativity • That is, model builders can tell us when something is consistent or informative.

A Possible System • • • Let B be all our background knowledge, and Φ the representation of the latest sentence: Partial positive test for consistency: give MB B & Φ Partial positive test for informativity: give MB B & ¬Φ Negative test for consistency: give TP B → Φ Negative test for informativity: give TP B → ¬Φ And do this in parallel using the best available software!

Clever Use of Reasoning Tools (CURT) • Baby Curt No inference capabilities • Rugrat Curt: negative consistency checks (naive prover) • Clever Curt: negative consistency checks (sophisticated prover) • Sensitive Curt: negative and positive informativity checks • Scrupulous Curt: eliminating superfluuous readings • Knowledgeable Curt: adding background knowledge • Helpful Curt: question answering

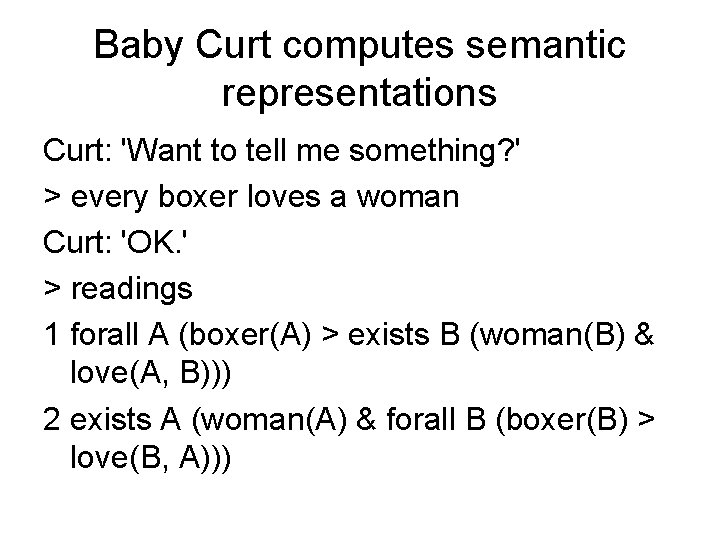

Baby Curt computes semantic representations Curt: 'Want to tell me something? ' > every boxer loves a woman Curt: 'OK. ' > readings 1 forall A (boxer(A) > exists B (woman(B) & love(A, B))) 2 exists A (woman(A) & forall B (boxer(B) > love(B, A)))

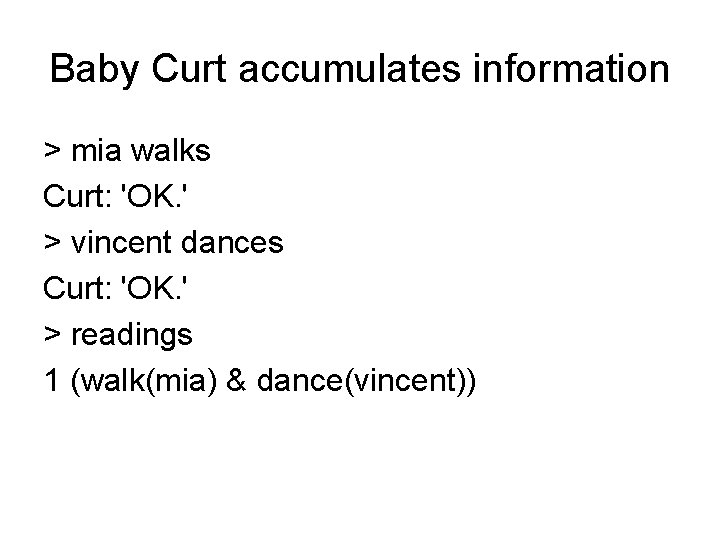

Baby Curt accumulates information > mia walks Curt: 'OK. ' > vincent dances Curt: 'OK. ' > readings 1 (walk(mia) & dance(vincent))

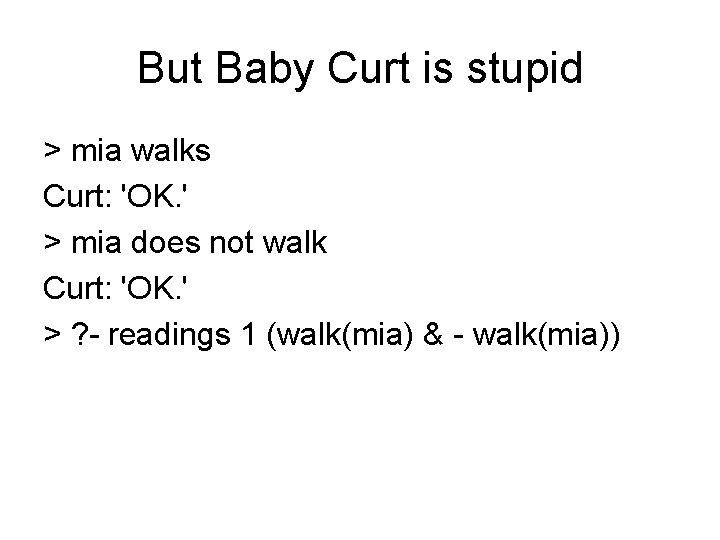

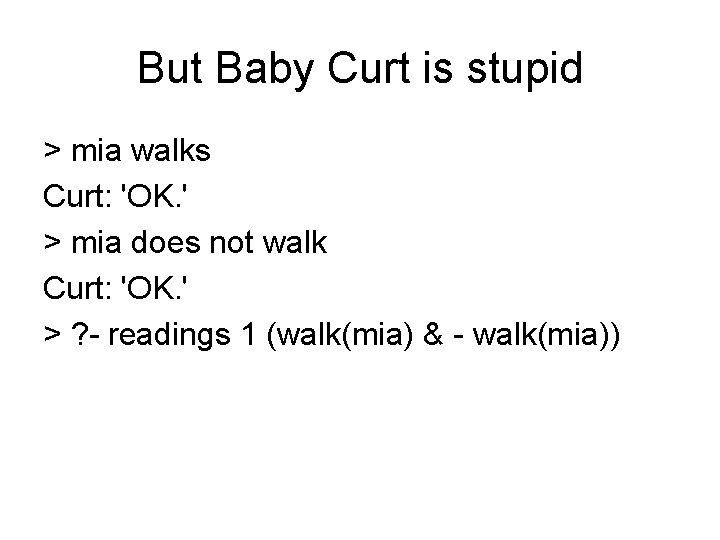

But Baby Curt is stupid > mia walks Curt: 'OK. ' > mia does not walk Curt: 'OK. ' > ? - readings 1 (walk(mia) & - walk(mia))

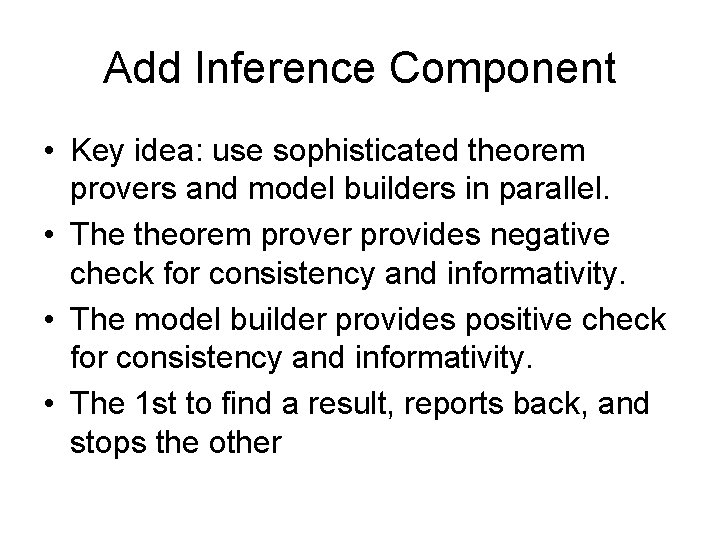

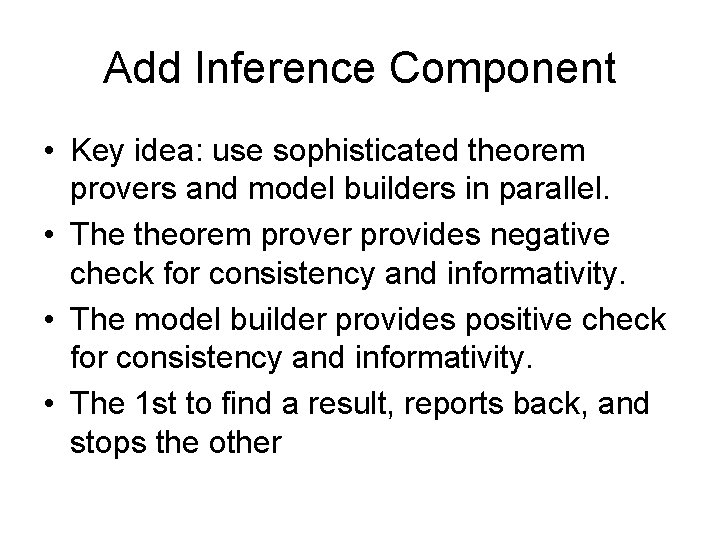

Add Inference Component • Key idea: use sophisticated theorem provers and model builders in parallel. • The theorem prover provides negative check for consistency and informativity. • The model builder provides positive check for consistency and informativity. • The 1 st to find a result, reports back, and stops the other

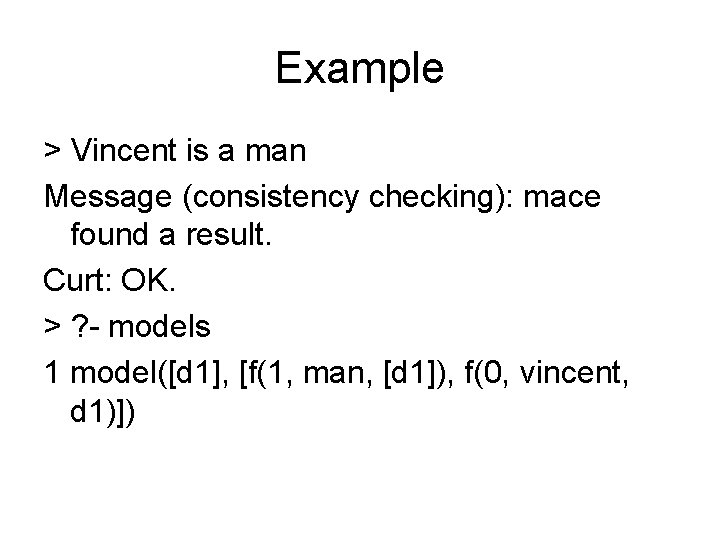

Example > Vincent is a man Message (consistency checking): mace found a result. Curt: OK. > ? - models 1 model([d 1], [f(1, man, [d 1]), f(0, vincent, d 1)])

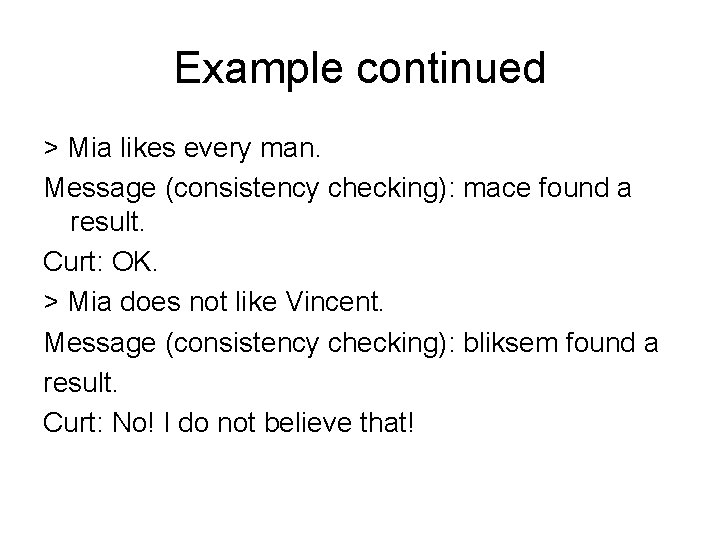

Example continued > Mia likes every man. Message (consistency checking): mace found a result. Curt: OK. > Mia does not like Vincent. Message (consistency checking): bliksem found a result. Curt: No! I do not believe that!

Example 2 > ? - every car has a radio Message (consistency checking): mace found a result. Message (consistency checking): bliksem found a result. Curt: 'OK. ' > ? - readings 1 forall A (car(A) > exists B (radio(B) & have(A, B)))

Issues • Is a logic-based approach to feasible? How far can it be pushed? • Is 1 st-order logic essential? • Are there other interesting inference tasks? • Is any of this relevant to current trends in computational linguistics, where shallow processing and statistical approaches rule? • Are there applications?