Inexact Matching of Ontology Graphs Using Expectation Maximization



Inexact Matching of Ontology Graphs Using Expectation Maximization

Prashant Doshi, Christopher Thomas
LSDIS Lab, Dept. of Computer Science, University of Georgia

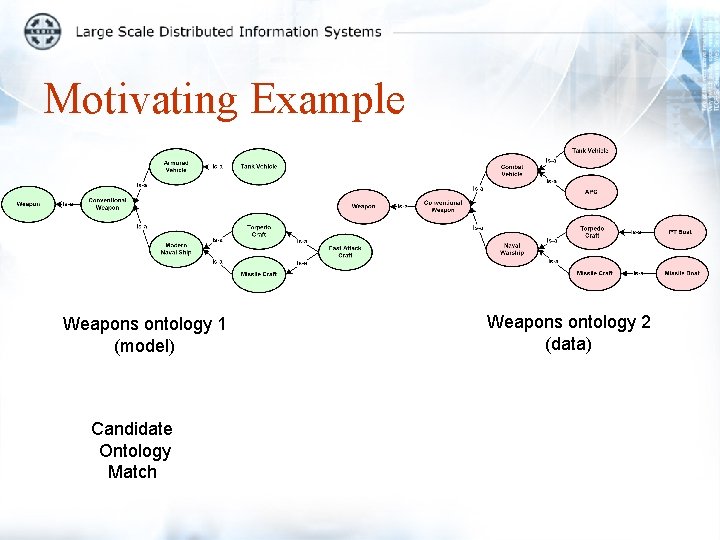

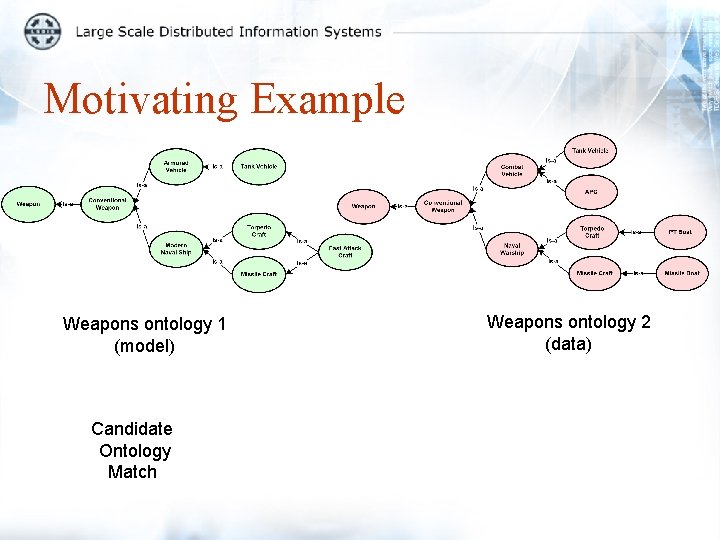

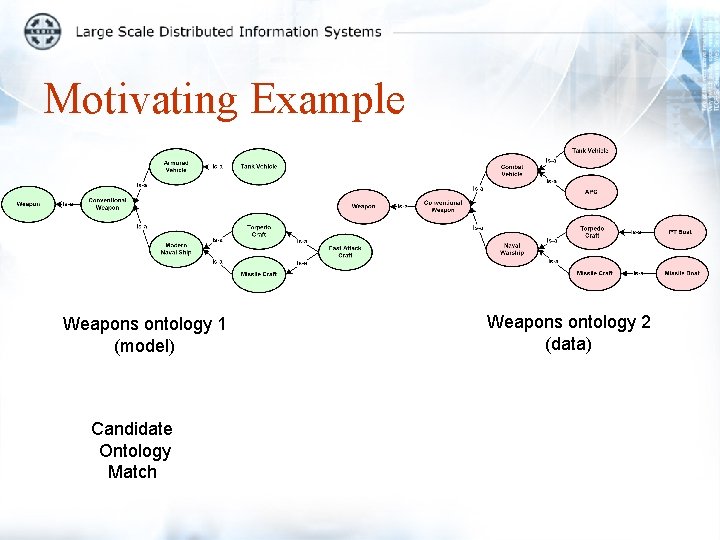

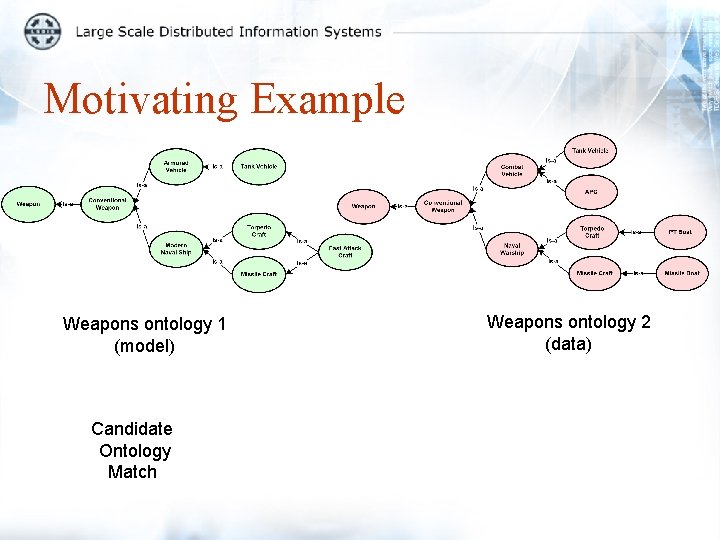

Motivating Example

Figure: Weapons ontology 1 (model) and Weapons ontology 2 (data), with a candidate ontology match between them.


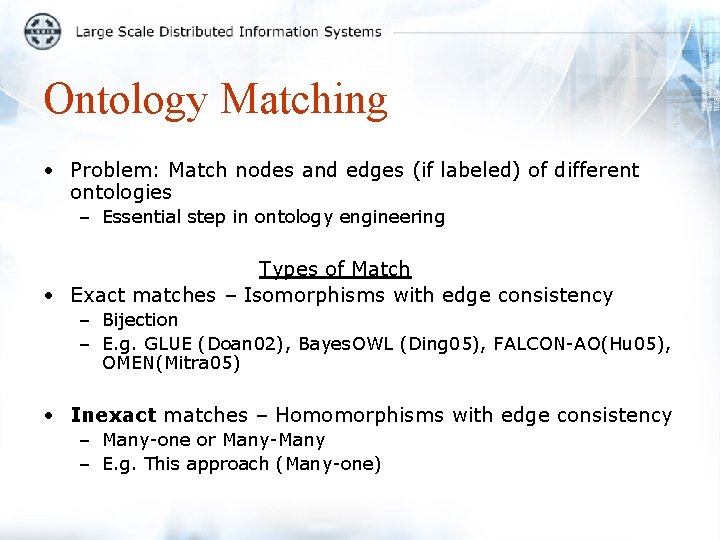

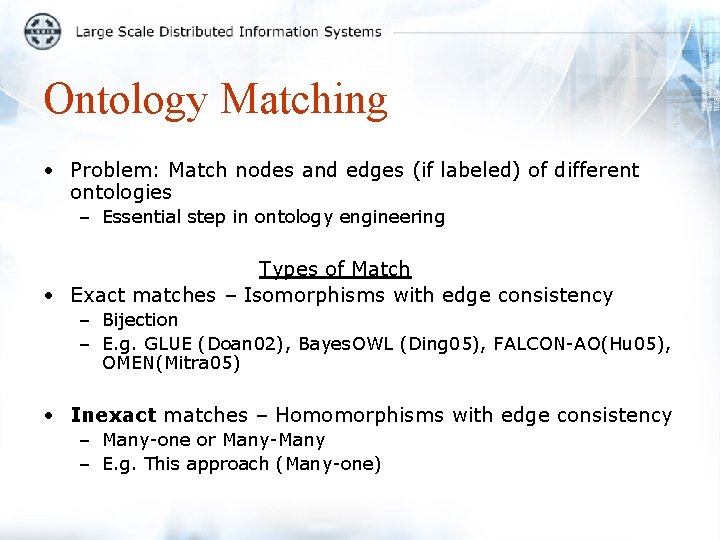

Ontology Matching

- Problem: match the nodes and edges (if labeled) of different ontologies
  - An essential step in ontology engineering

Types of Match

- Exact matches
  - Isomorphisms with edge consistency
  - Bijection
  - E.g. GLUE (Doan 02), BayesOWL (Ding 05), FALCON-AO (Hu 05), OMEN (Mitra 05)
- Inexact matches
  - Homomorphisms with edge consistency
  - Many-one or many-many
  - E.g. this approach (many-one)

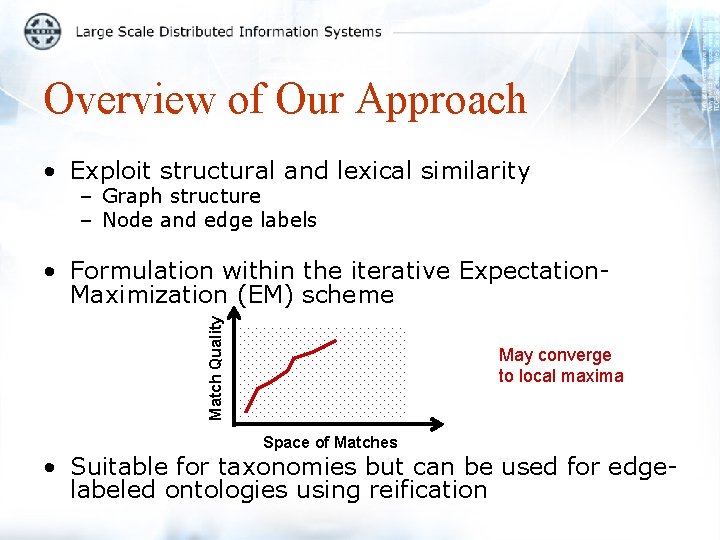

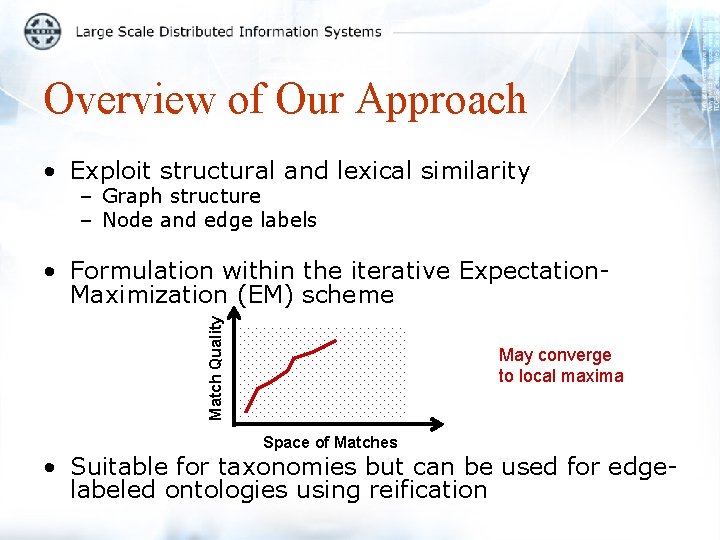

Overview of Our Approach

- Exploit structural and lexical similarity
  - Graph structure
  - Node and edge labels
- Formulation within the iterative Expectation Maximization (EM) scheme
- Suitable for taxonomies, but can be used for edge-labeled ontologies using reification

Figure: match quality over the space of matches; EM may converge to local maxima.

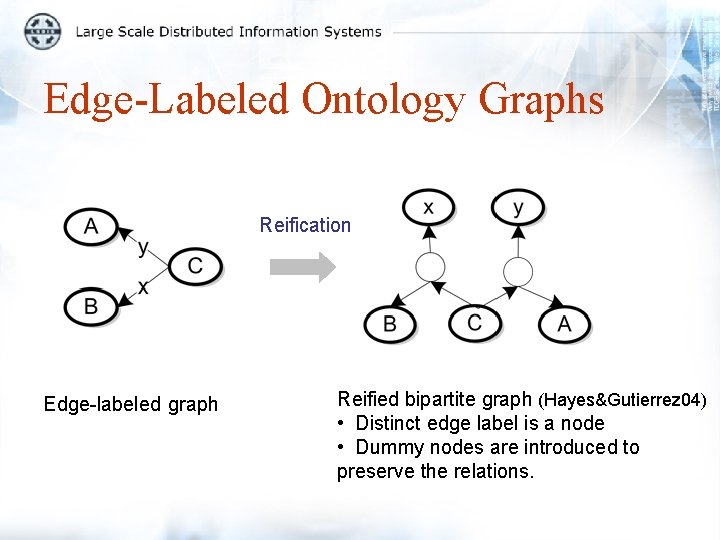

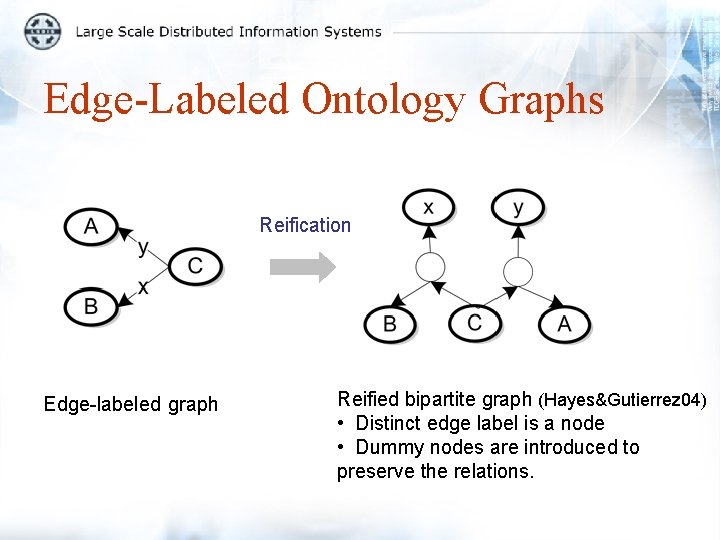

Edge-Labeled Ontology Graphs

Reification: an edge-labeled graph is converted to a reified bipartite graph (Hayes & Gutierrez 04)

- Each distinct edge label becomes a node
- Dummy nodes are introduced to preserve the relations
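The reification step can be sketched in a few lines of Python. This is only an illustration of the idea on the slide, not the authors' implementation: the function name `reify`, the `label:` prefix and the `_stmt` dummy-node naming are all assumptions made for the example.

```python
def reify(labeled_edges):
    """Turn (source, label, target) triples into an unlabeled bipartite graph.

    Assumed scheme: each distinct edge label becomes one shared node, and each
    labeled edge gets its own dummy node that ties source, label and target
    together.
    """
    nodes = set()
    plain_edges = []
    for i, (src, label, dst) in enumerate(labeled_edges):
        dummy = f"_stmt{i}"            # hypothetical naming for dummy nodes
        label_node = f"label:{label}"  # one node per distinct label
        nodes.update([src, dst, label_node, dummy])
        # Every edge touches a dummy node, so the result is bipartite:
        # dummy nodes on one side, concept and label nodes on the other.
        plain_edges += [(src, dummy), (dummy, label_node), (dummy, dst)]
    return nodes, plain_edges


nodes, edges = reify([("Tank", "hasPart", "Gun"), ("Ship", "hasPart", "Gun")])
print(sorted(nodes))
print(edges)
```

Both `hasPart` edges share the single `label:hasPart` node, while the two dummy nodes keep the two relations distinct.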

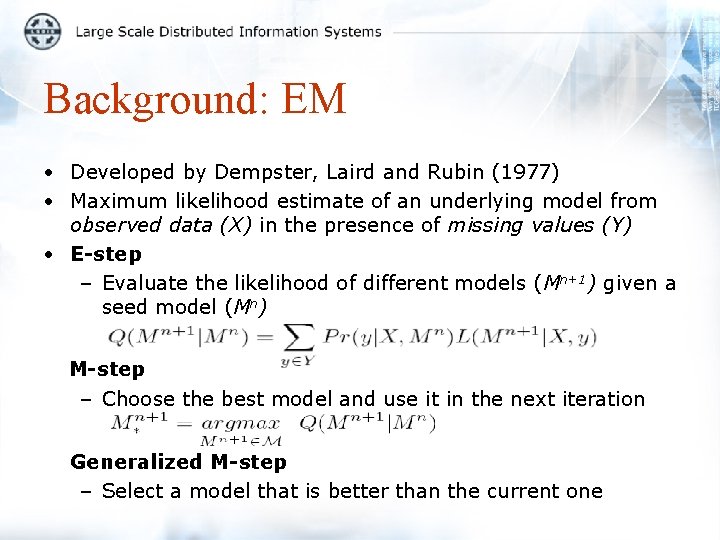

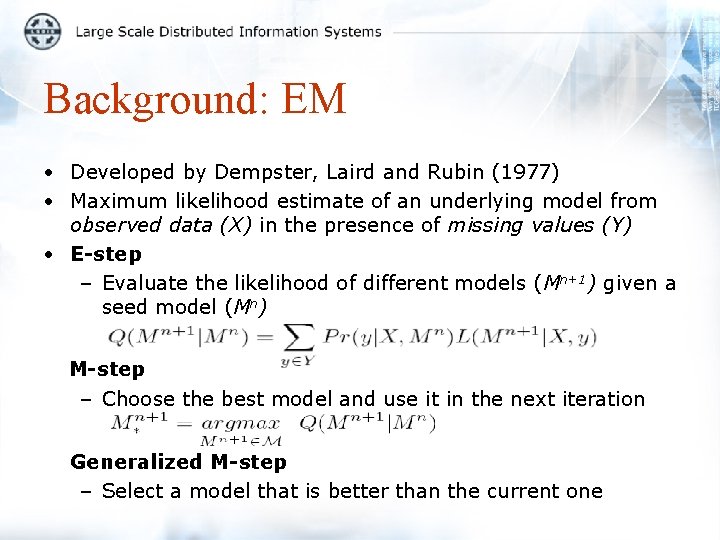

Background: EM

- Developed by Dempster, Laird and Rubin (1977)
- Maximum likelihood estimate of an underlying model from observed data (X) in the presence of missing values (Y)
- E-step
  - Evaluate the likelihood of different models ($M_{n+1}$) given a seed model ($M_n$)
- M-step
  - Choose the best model and use it in the next iteration
- Generalized M-step
  - Select a model that is better than the current one
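The control flow of generalized EM can be sketched as follows. This is a minimal skeleton under stated assumptions, not the method from the talk: `q_value(candidate, seed)` stands in for the E-step score of a candidate model given the seed model, and `propose(model, rng)` is a hypothetical sampler of candidate models.

```python
import random


def generalized_em(initial_model, q_value, propose,
                   num_samples=5, max_iters=100, rng=None):
    """Iterate until no sampled candidate scores better than the current model."""
    rng = rng or random.Random(0)
    current = initial_model
    for _ in range(max_iters):
        baseline = q_value(current, current)
        candidates = [propose(current, rng) for _ in range(num_samples)]
        # Generalized M-step: any candidate that improves on the seed is
        # acceptable; here we take the best of the improving samples.
        better = [m for m in candidates if q_value(m, current) > baseline]
        if not better:
            break  # no sampled improvement: stop (possibly at a local maximum)
        current = max(better, key=lambda m: q_value(m, current))
    return current


# Toy usage: models are integers and Q peaks at 3 (purely illustrative).
best = generalized_em(
    initial_model=0,
    q_value=lambda candidate, seed: -(candidate - 3) ** 2,
    propose=lambda model, rng: model + 1,
)
print(best)  # 3
```

Because only an improvement is required at each iteration, the loop never needs to search the whole model space, at the cost of possibly stopping at a local maximum.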

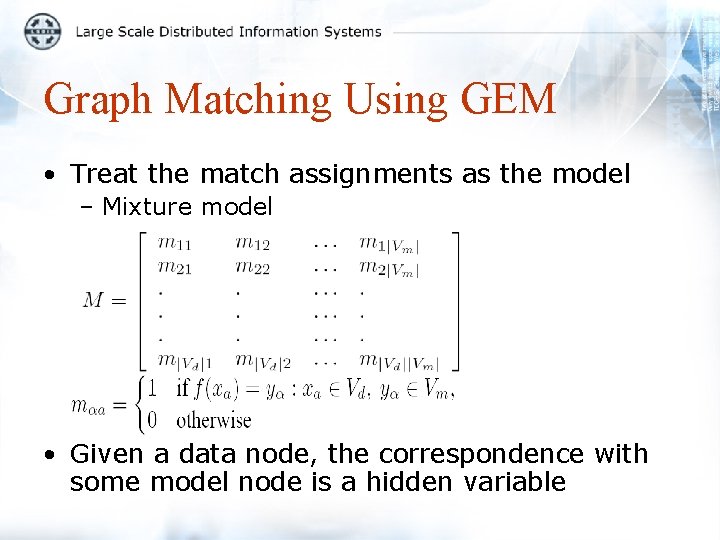

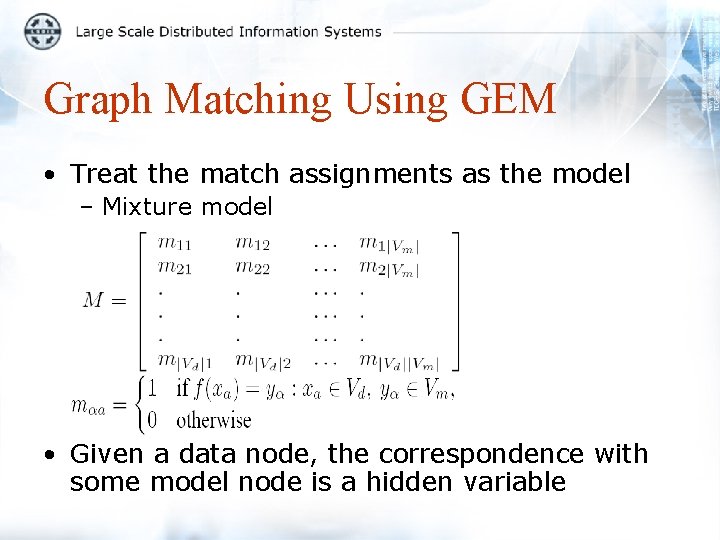

Graph Matching Using GEM

- Treat the match assignments as the model
  - Mixture model
- Given a data node, its correspondence with some model node is a hidden variable

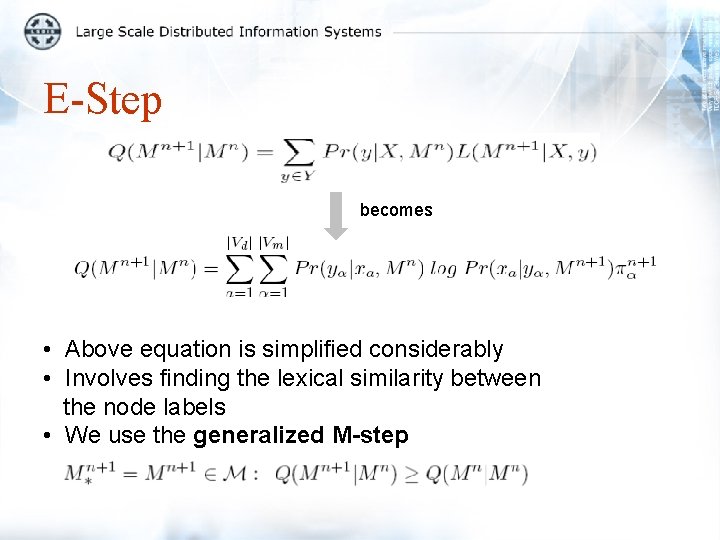

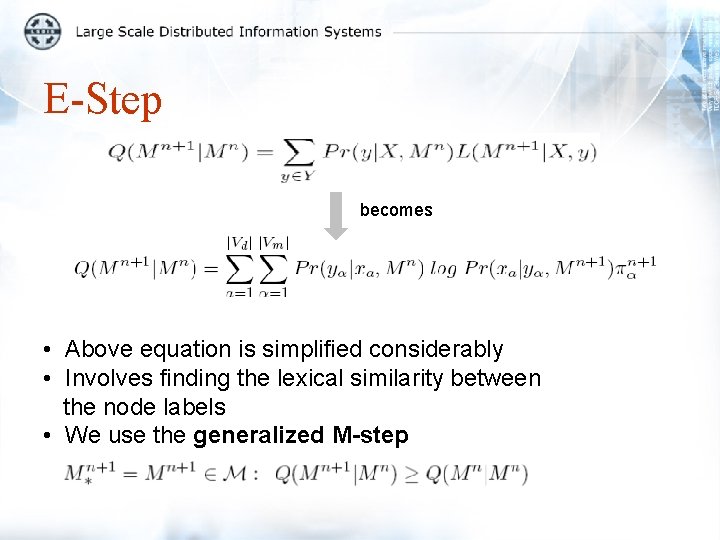

E-Step

- The E-step equation (shown on the slide) simplifies considerably
- Evaluating it involves finding the lexical similarity between the node labels
- We use the generalized M-step

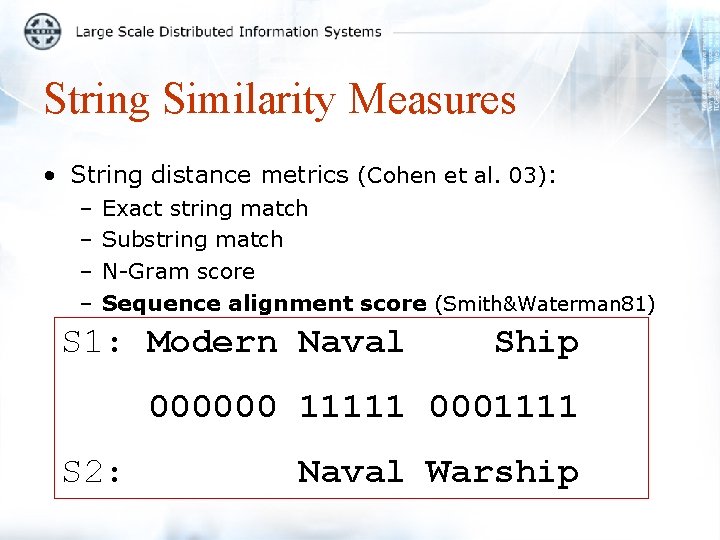

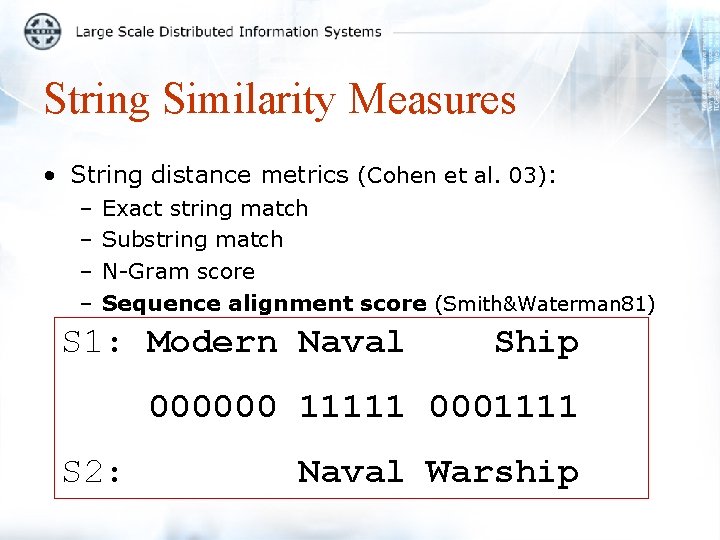

String Similarity Measures

- String distance metrics (Cohen et al. 03):
  - Exact string match
  - Substring match
  - N-gram score
  - Sequence alignment score (Smith & Waterman 81)

Example: S1: Modern Naval Ship; S2: Naval Warship (alignment mask on the slide: 000000 11111 0001111)
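Two of the listed measures can be sketched in plain Python. The scoring parameters (match +1, mismatch -1, gap -1), the trigram size and the use of Jaccard overlap for the n-gram score are assumptions chosen for illustration; the slides do not say which variants were used.

```python
def ngrams(s, n=3):
    """Set of character n-grams of a lower-cased string."""
    s = s.lower()
    return {s[i:i + n] for i in range(len(s) - n + 1)}


def ngram_score(a, b, n=3):
    """Jaccard overlap of character n-gram sets, in [0, 1]."""
    ga, gb = ngrams(a, n), ngrams(b, n)
    if not ga or not gb:  # a string shorter than n has no n-grams
        return 1.0 if a.lower() == b.lower() else 0.0
    return len(ga & gb) / len(ga | gb)


def smith_waterman(a, b, match=1, mismatch=-1, gap=-1):
    """Best local alignment score between a and b (Smith-Waterman)."""
    a, b = a.lower(), b.lower()
    prev = [0] * (len(b) + 1)
    best = 0
    for ca in a:
        curr = [0]
        for j, cb in enumerate(b, start=1):
            diag = prev[j - 1] + (match if ca == cb else mismatch)
            # A local alignment may restart anywhere, hence the floor at 0.
            score = max(0, diag, prev[j] + gap, curr[j - 1] + gap)
            curr.append(score)
            best = max(best, score)
        prev = curr
    return best


s1, s2 = "Modern Naval Ship", "Naval Warship"
print(ngram_score(s1, s2))
print(smith_waterman(s1, s2))
```

The local alignment rewards the shared "Naval" and "ship" fragments even though neither label is a substring of the other, which is why it complements the exact and substring tests.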

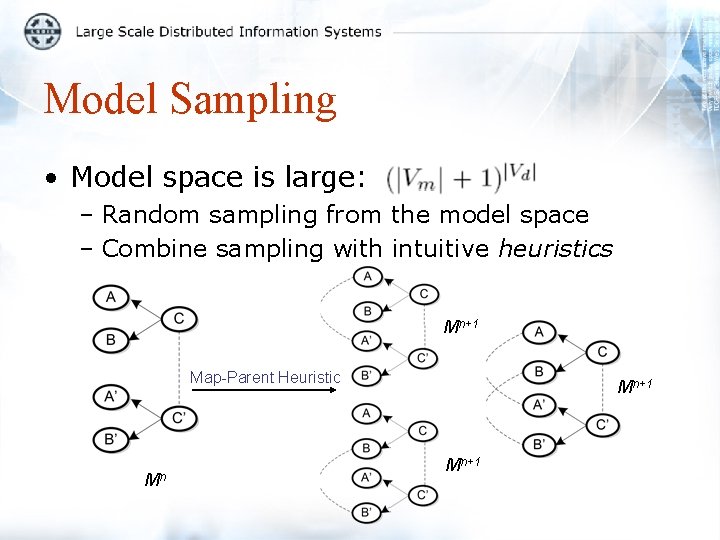

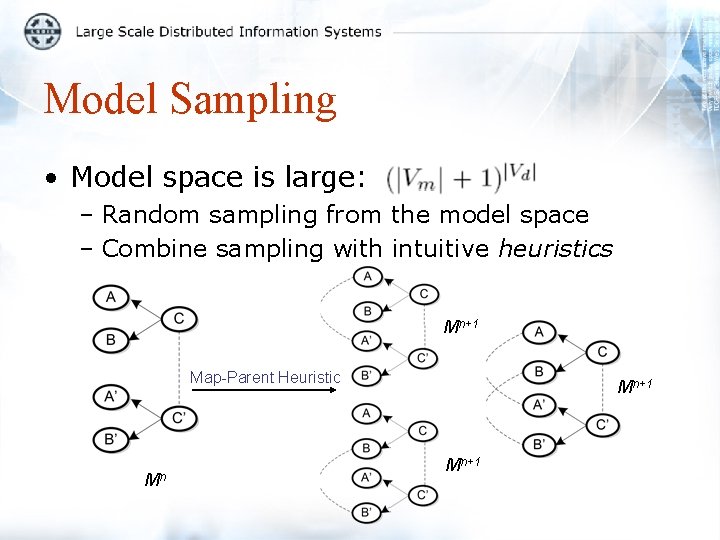

Model Sampling

- Model space is large:
  - Random sampling from the model space
  - Combine sampling with intuitive heuristics

Figure: Map-Parent heuristic, $M_n \to M_{n+1}$
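A rough sketch of both sampling ideas, with a match represented as a dictionary from data nodes to model nodes (many-one). The slides only name the Map-Parent heuristic, so the reading used here is an assumption: when a data node maps to a model node, its parent is mapped to that model node's parent. The function names and the parent dictionaries are hypothetical.

```python
import random


def random_neighbor(match, model_nodes, rng):
    """Sample a nearby match by reassigning one data node at random."""
    new_match = dict(match)
    data_node = rng.choice(sorted(new_match))
    new_match[data_node] = rng.choice(sorted(model_nodes))
    return new_match


def map_parent(match, data_parent, model_parent):
    """Assumed Map-Parent heuristic: parents follow their children's match.

    If several children disagree about a parent's image, the last one
    processed wins; a real implementation would need a tie-breaking rule.
    """
    new_match = dict(match)
    for data_node, model_node in match.items():
        dp = data_parent.get(data_node)
        mp = model_parent.get(model_node)
        if dp is not None and mp is not None:
            new_match[dp] = mp
    return new_match


match = {"tank": "armored_tank", "vehicle": "weapon"}
data_parent = {"tank": "vehicle"}
model_parent = {"armored_tank": "armored_vehicle"}
print(map_parent(match, data_parent, model_parent))
print(random_neighbor(match, {"armored_tank", "armored_vehicle", "weapon"},
                      random.Random(0)))
```

Either function can serve as the candidate generator in a generalized M-step; the heuristic biases sampling toward structurally consistent matches instead of exploring the model space blindly.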

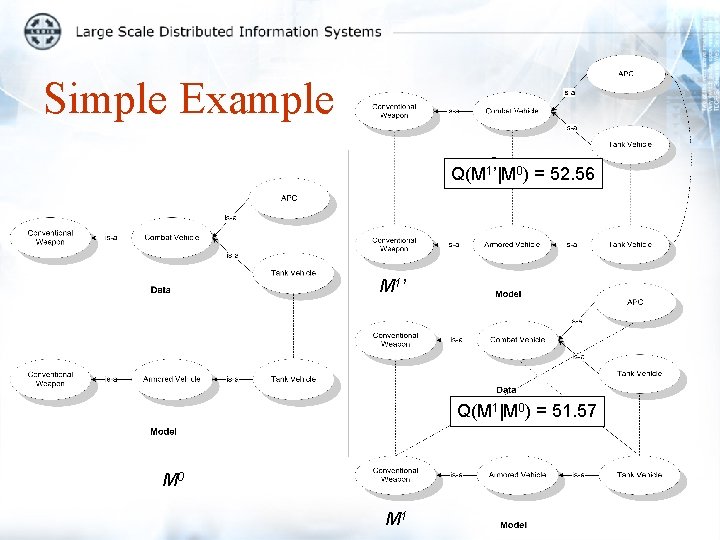

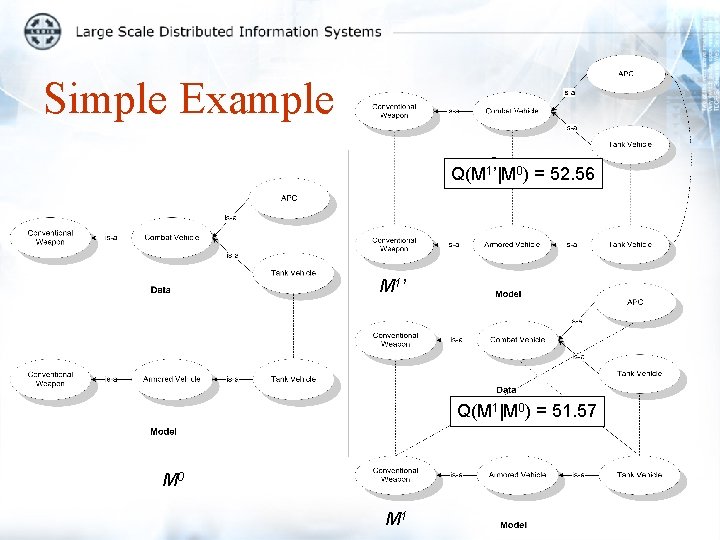

Simple Example

- $Q(M_1' \mid M_0) = 52.56$
- $Q(M_1 \mid M_0) = 51.57$

Figure: seed match $M_0$ and candidate matches $M_1$ and $M_1'$

![Computational Complexity](https://slidetodoc.com/presentation_image_h2/56d8a569a4babea8c55680ea68972173/image-13.jpg)

Computational Complexity

- Complexity of the E step is $O((|V_d||V_m|)^2)$
- In the M step, if we generate K samples within a sample set, the worst-case complexity is $O(K(|V_d||V_m|)^2)$
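To get a feel for these bounds, take hypothetical sizes $|V_d| = 20$, $|V_m| = 30$ and $K = 10$ (illustrative values, not figures from the talk), and ignore constant factors:

$$
(|V_d|\,|V_m|)^2 = (20 \cdot 30)^2 = 600^2 = 360{,}000,
\qquad
K\,(|V_d|\,|V_m|)^2 = 10 \cdot 360{,}000 = 3{,}600{,}000.
$$

Doubling both ontologies multiplies each count by 16, which is why the cost grows quickly with ontology size.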

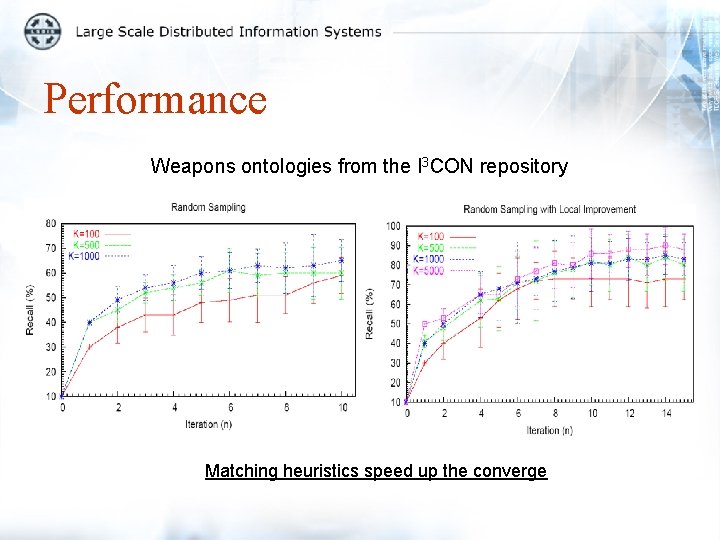

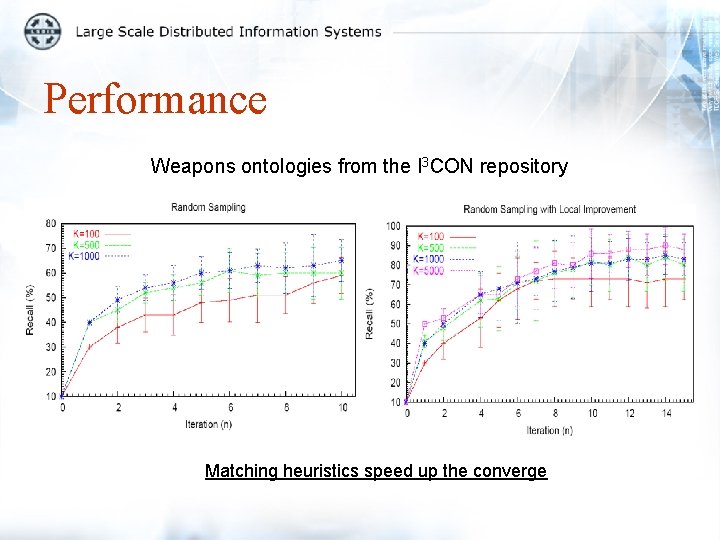

Performance

- Weapons ontologies from the I3CON repository
- Matching heuristics speed up convergence

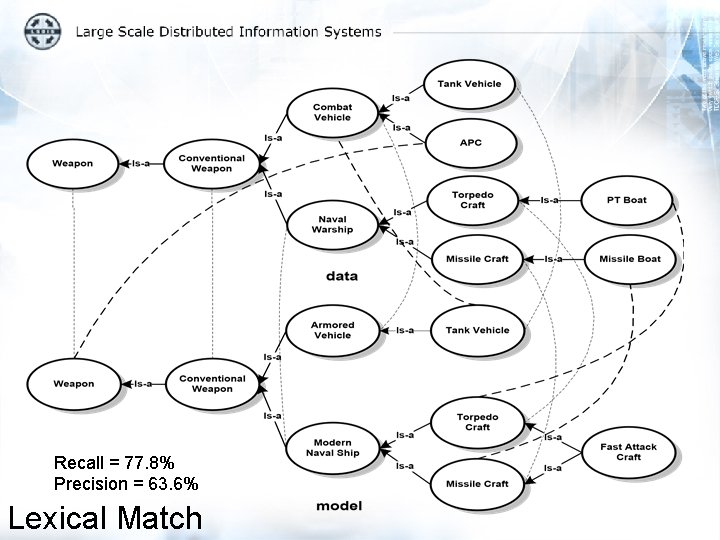

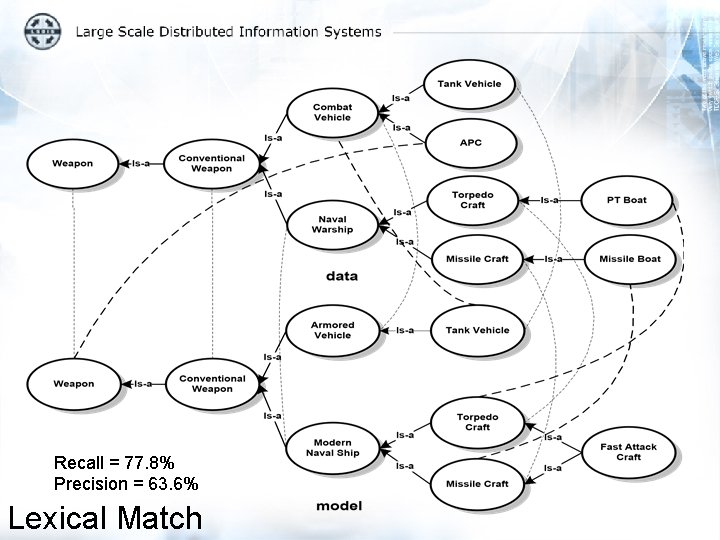

Lexical Match: Recall = 77.8%, Precision = 63.6%

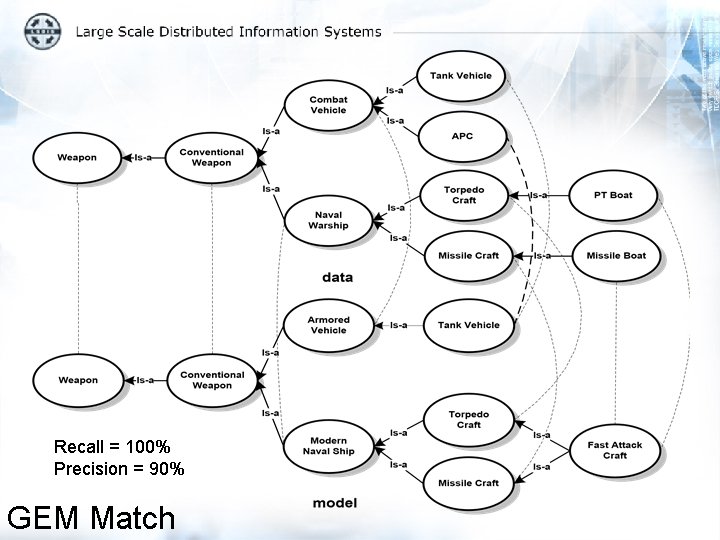

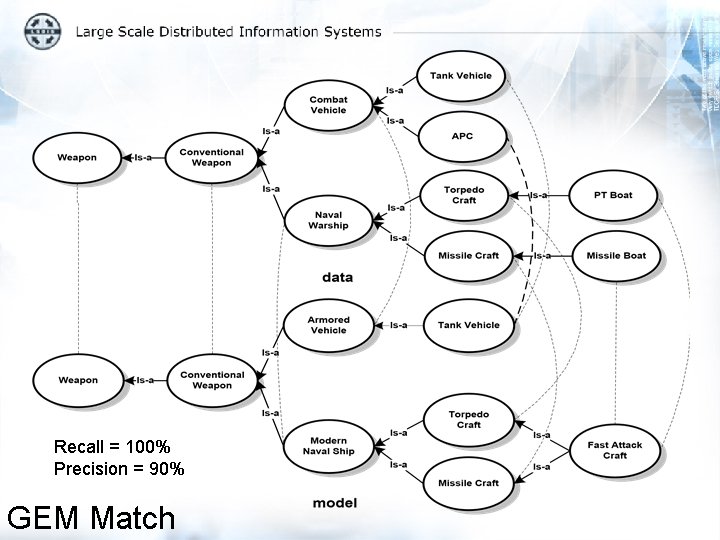

GEM Match: Recall = 100%, Precision = 90%
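For reference, precision and recall of a match are usually computed against a reference set of correct correspondences. The sketch below assumes matches are sets of (data node, model node) pairs; the toy pairs are made up for illustration and are not the I3CON results.

```python
def precision_recall(found, reference):
    """Precision and recall of found correspondences against a reference set."""
    found, reference = set(found), set(reference)
    correct = len(found & reference)
    precision = correct / len(found) if found else 0.0
    recall = correct / len(reference) if reference else 0.0
    return precision, recall


# Hypothetical toy data: two correct pairs plus one spurious pair.
reference = {("tank", "armored_tank"), ("ship", "warship")}
found = reference | {("gun", "vehicle")}
print(precision_recall(found, reference))
```

Here every reference pair is recovered, so recall is 1.0, while the spurious pair lowers precision to 2/3.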

Discussion

- A principled technique for inexact matching of ontology schemas using Generalized EM
  - Considers structural and label similarity
  - Produces the most likely match
- Many-one correspondence allows mapping between clusters of different semantic granularity
- Computational complexity is an issue
  - More efficient ways to cover the model space

Thank you. Questions?