Index Construction

Adapted from lectures by Prabhakar Raghavan (Yahoo and Stanford) and Christopher Manning (Stanford)

Prasad, L06: Index Construction

Index construction
- How do we construct an index?
- What strategies can we use with limited main memory?
- Our sample corpus:
  - Number of docs = n = 1M
  - Each doc has 1K terms
  - Number of distinct terms = m = 500K
  - 667 million postings entries

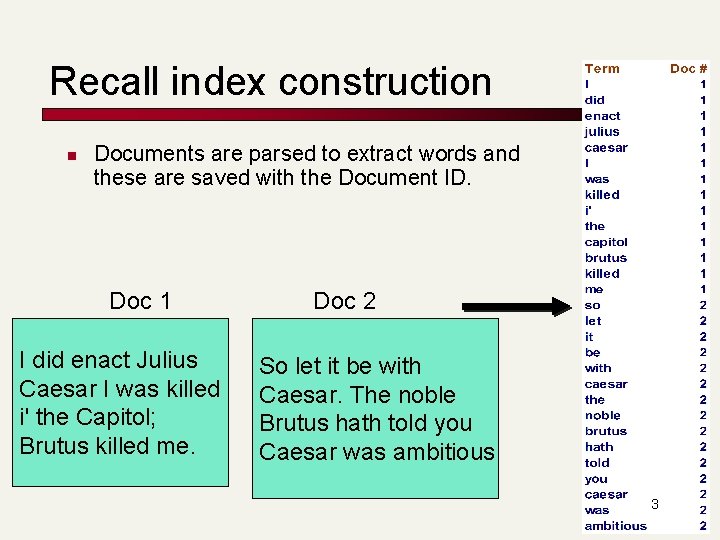

Recall index construction
- Documents are parsed to extract words, and these are saved with the document ID.

Doc 1: I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.

Doc 2: So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious

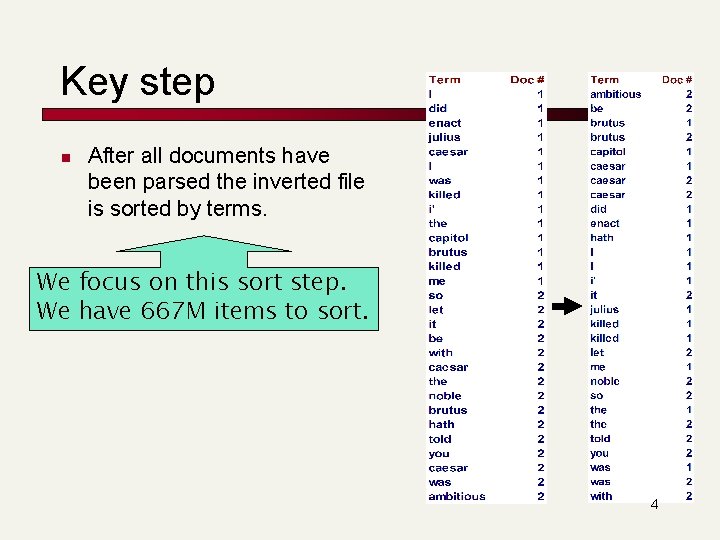

Key step
- After all documents have been parsed, the inverted file is sorted by terms.
- We focus on this sort step: we have 667M items to sort.
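The parse-then-sort flow can be sketched on the two sample documents. This is a minimal in-memory illustration, not the lecture's implementation: the lowercasing, the regular-expression tokenizer, and the names `parse`, `pairs`, and `index` are all assumptions made for the example.

```python
import re

docs = {
    1: "I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.",
    2: "So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious",
}

def parse(doc_id, text):
    """Yield one (term, doc_id) pair per token (assumed tokenizer: lowercase words)."""
    for token in re.findall(r"[a-z']+", text.lower()):
        yield (token, doc_id)

# Step 1: parse every document into (term, docID) pairs.
pairs = [pair for doc_id, text in docs.items() for pair in parse(doc_id, text)]

# Step 2 (the key step): sort by term, then by docID within each term.
pairs.sort()

# Step 3: collapse the sorted pairs into postings with term frequencies.
index = {}
for term, doc_id in pairs:
    postings = index.setdefault(term, {})
    postings[doc_id] = postings.get(doc_id, 0) + 1

print(index["caesar"])  # {1: 1, 2: 2}
```

With 667M pairs the list in step 2 no longer fits comfortably in memory, which is why the sort step gets the attention.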

Index construction
- As we build up the index, we cannot exploit compression tricks.
  - Parse docs one at a time.
  - Final postings for any term are incomplete until the end.
  - (Actually you can exploit compression, but this becomes a lot more complex.)
- At 10-12 bytes per postings entry, this demands several temporary gigabytes.
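As a quick check of the "several temporary gigabytes" figure, the arithmetic can be written out. The 667M entry count comes from the sample corpus; the helper name `temp_space_gb` and the use of 1 GB = 10^9 bytes are assumptions for this sketch.

```python
POSTINGS = 667_000_000  # postings entries in the sample corpus

def temp_space_gb(bytes_per_entry):
    """Uncompressed temporary space in GB (assuming 1 GB = 10**9 bytes)."""
    return POSTINGS * bytes_per_entry / 1e9

low, high = temp_space_gb(10), temp_space_gb(12)
print(f"{low:.2f} GB to {high:.2f} GB")
```

Under these assumptions the temporary postings occupy roughly 6.7 to 8 GB.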

System parameters for design
- Disk seek ~ 10 milliseconds
- Block transfer from disk ~ 1 microsecond per byte (following a seek)
- All other ops ~ 10 microseconds
  - E.g., compare two postings entries and decide their merge order

Bottleneck
- Parse and build postings entries one doc at a time.
- Now sort postings entries by term (then by doc within each term).
- Doing this with random disk seeks would be too slow: we must sort N = 667M records.

If every comparison took 2 disk seeks, and N items could be sorted with N log2 N comparisons, how long would this take?
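One way to answer the question is to plug in the system parameters directly. The sketch below assumes the 10 ms seek time stated earlier and ignores every cost other than seeks; the variable names are illustrative.

```python
import math

N = 667_000_000           # records to sort
SEEK_SECONDS = 10e-3      # ~10 ms per disk seek
SEEKS_PER_COMPARISON = 2  # the slide's hypothetical

comparisons = N * math.log2(N)
total_seconds = comparisons * SEEKS_PER_COMPARISON * SEEK_SECONDS
total_years = total_seconds / (365 * 24 * 3600)

print(f"{comparisons:.2e} comparisons, about {total_years:.1f} years")
```

Under these assumptions the sort would take on the order of a decade, which is the motivation for sorting in memory-sized blocks instead.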

Sorting with fewer disk seeks
- 12-byte (4+4+4) records (term, doc, freq).
- These are generated as we parse docs.
- Must now sort 667M such 12-byte records by term.
- Define a block ~ 10M such records
  - can “easily” fit a couple into memory.
  - Will have 64 such blocks to start with.
- Will sort within blocks first, then merge the blocks into one long sorted order.

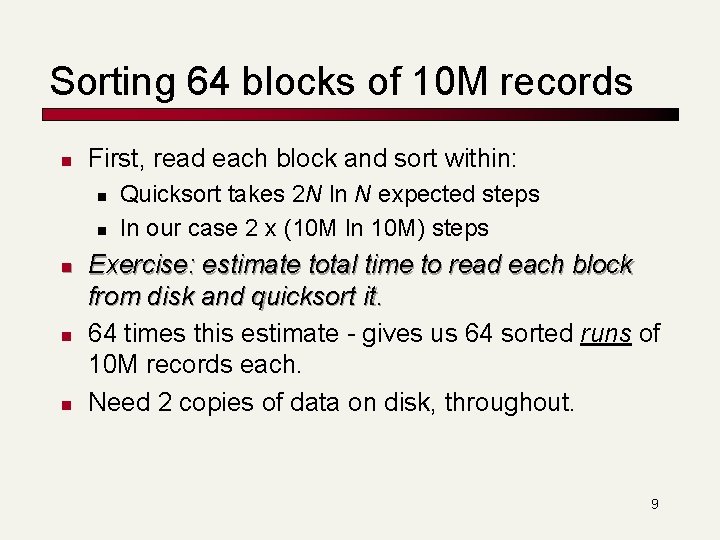

Sorting 64 blocks of 10M records
- First, read each block and sort within:
  - Quicksort takes 2N ln N expected steps
  - In our case 2 x (10M ln 10M) steps
- Exercise: estimate total time to read each block from disk and quicksort it.
- 64 times this estimate gives us 64 sorted runs of 10M records each.
- Need 2 copies of data on disk, throughout.
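One possible way to work the exercise is sketched below. It assumes that each quicksort step costs one "other op" (~10 microseconds), that a block is read at 1 microsecond per byte, and that seek time and write-back are negligible; these simplifications are assumptions of this sketch, not part of the slide.

```python
import math

RECORDS_PER_BLOCK = 10_000_000
BYTES_PER_RECORD = 12
TRANSFER_SEC_PER_BYTE = 1e-6  # block transfer from disk
OP_SECONDS = 10e-6            # assumed cost of one quicksort step
NUM_BLOCKS = 64

# Reading one 120 MB block.
read_seconds = RECORDS_PER_BLOCK * BYTES_PER_RECORD * TRANSFER_SEC_PER_BYTE

# Quicksort: 2 N ln N expected steps.
sort_steps = 2 * RECORDS_PER_BLOCK * math.log(RECORDS_PER_BLOCK)
sort_seconds = sort_steps * OP_SECONDS

per_block_seconds = read_seconds + sort_seconds
total_hours = NUM_BLOCKS * per_block_seconds / 3600

print(f"read {read_seconds:.0f} s, sort {sort_seconds:.0f} s per block")
print(f"about {total_hours:.0f} hours for all {NUM_BLOCKS} blocks")
```

Under these assumptions the in-memory sort dominates the disk read by more than an order of magnitude.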

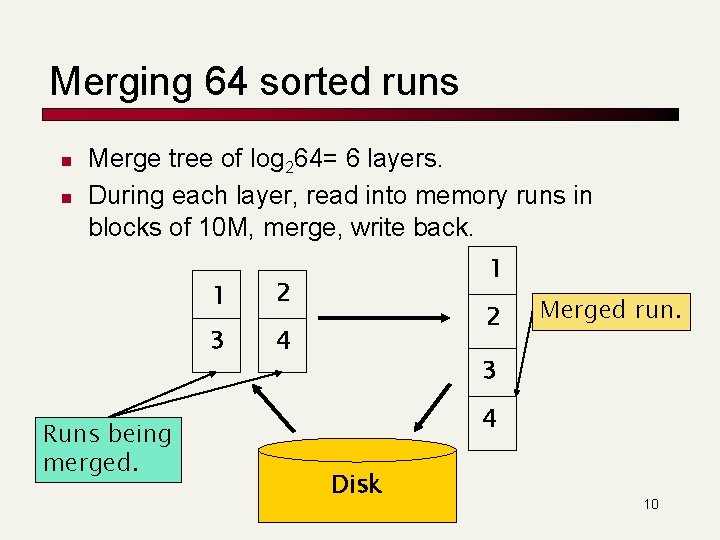

Merging 64 sorted runs
- Merge tree of log2 64 = 6 layers.
- During each layer, read into memory runs in blocks of 10M, merge, write back.

[Figure: runs being merged, the merged run, and the disk]
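The layered pairwise merge can be sketched in memory. This is only an illustration of the merge-tree shape: a real implementation would stream blocks from and to disk, and the function names `merge_layer` and `merge_tree`, as well as the tiny random runs, are assumptions for the example.

```python
import heapq
import random

def merge_layer(runs):
    """Merge adjacent pairs of sorted runs, halving the number of runs."""
    merged = []
    for i in range(0, len(runs), 2):
        pair = runs[i:i + 2]  # the last "pair" may hold a single run
        merged.append(list(heapq.merge(*pair)))
    return merged

def merge_tree(runs):
    """Repeat pairwise merging until one run remains; return (run, layer count).

    Assumes at least one run is supplied.
    """
    layers = 0
    while len(runs) > 1:
        runs = merge_layer(runs)
        layers += 1
    return runs[0], layers

# 64 small sorted runs of (term_id, doc_id) records stand in for the 10M-record runs.
random.seed(0)
runs = [
    sorted((random.randrange(1000), random.randrange(100)) for _ in range(5))
    for _ in range(64)
]
result, layers = merge_tree(runs)
print(layers)  # 6
```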

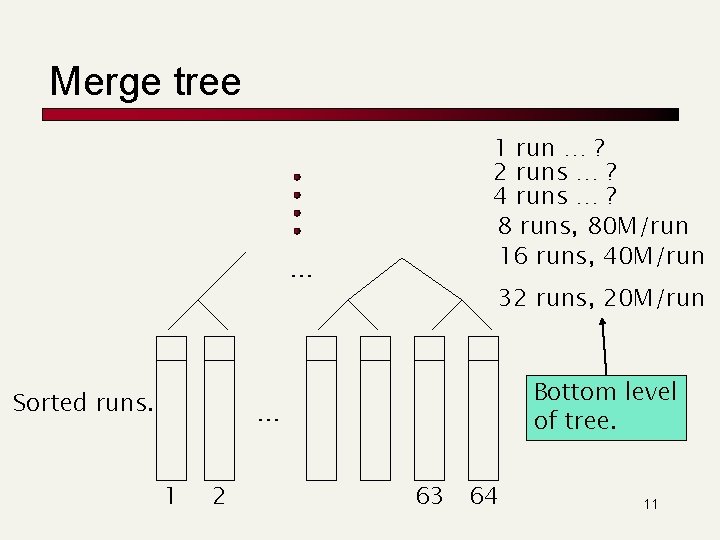

Merge tree
- 1 run … ?
- 2 runs … ?
- 4 runs … ?
- 8 runs, 80M/run
- 16 runs, 40M/run
- 32 runs, 20M/run
- Bottom level of tree: sorted runs 1, 2, …, 63, 64

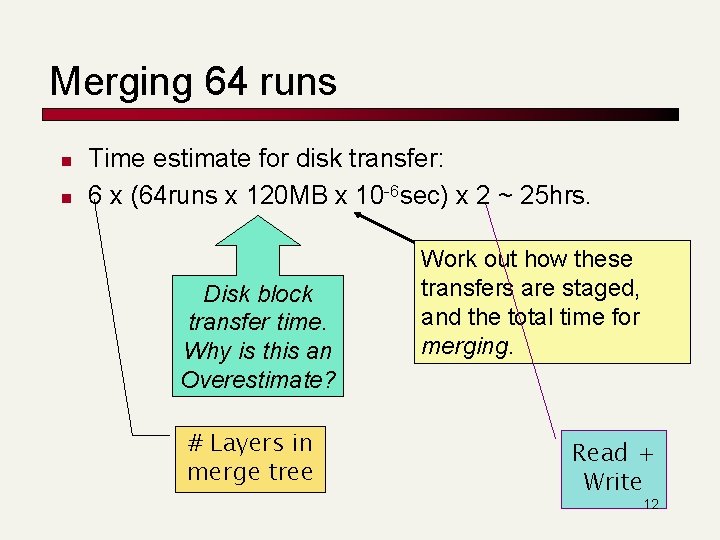

Merging 64 runs
- Time estimate for disk transfer: 6 x (64 runs x 120 MB x 10^-6 sec/byte) x 2 ~ 25 hrs, where
  - 6 is the number of layers in the merge tree,
  - 10^-6 sec per byte is the disk block transfer time,
  - 2 accounts for read + write.
- Why is this an overestimate?
- Work out how these transfers are staged, and the total time for merging.
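The estimate above can be reproduced term by term. The sketch simply multiplies out the slide's factors; the constant names are illustrative.

```python
LAYERS = 6                     # layers in the merge tree (log2 64)
RUNS = 64
BYTES_PER_RUN = 120_000_000    # 10M records x 12 bytes
TRANSFER_SEC_PER_BYTE = 1e-6   # disk block transfer time
READ_AND_WRITE = 2             # every byte is read once and written once per layer

seconds = LAYERS * (RUNS * BYTES_PER_RUN * TRANSFER_SEC_PER_BYTE) * READ_AND_WRITE
hours = seconds / 3600

print(f"{seconds:.0f} s, about {hours:.1f} hours")
```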

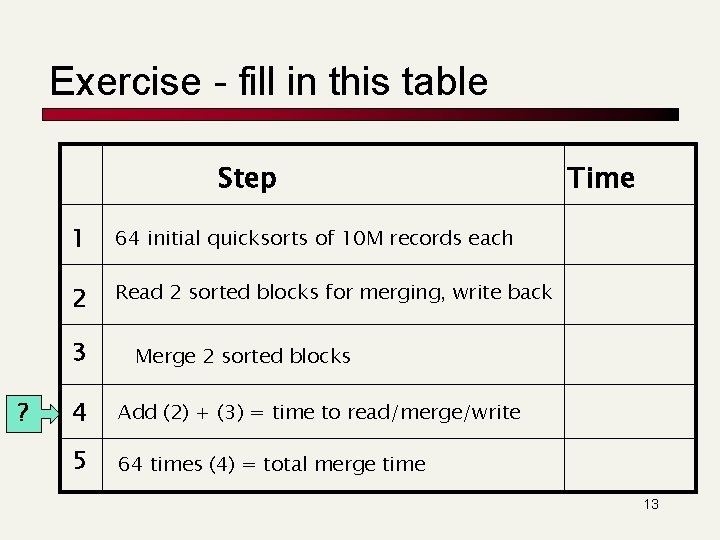

Exercise - fill in this table

| Step | Description | Time |
|---|---|---|
| 1 | 64 initial quicksorts of 10M records each | ? |
| 2 | Read 2 sorted blocks for merging, write back | ? |
| 3 | Merge 2 sorted blocks | ? |
| 4 | Add (2) + (3) = time to read/merge/write | ? |
| 5 | 64 times (4) = total merge time | ? |

Large memory indexing
- Suppose instead that we had 16 GB of memory for the above indexing task.
- Exercise: What initial block sizes would we choose? What index time does this yield?

Distributed indexing
- For web-scale indexing (don’t try this at home!): must use a distributed computing cluster
- Individual machines are fault-prone
  - Can unpredictably slow down or fail
- How do we exploit such a pool of machines?

Distributed indexing
- Maintain a master machine directing the indexing job (considered “safe”).
- Break up indexing into sets of (parallel) tasks.
- Master machine assigns each task to an idle machine from a pool.

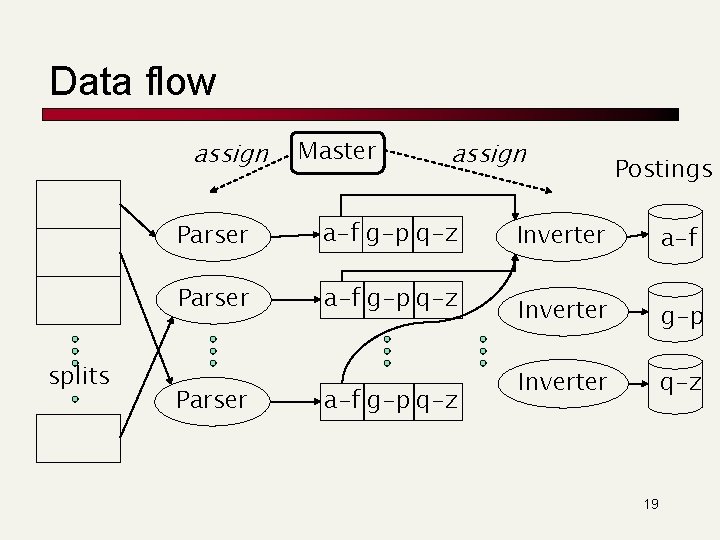

Parallel tasks
- We will use two sets of parallel tasks
  - Parsers
  - Inverters
- Break the input document corpus into splits
  - Each split is a subset of documents
- Master assigns a split to an idle parser machine
- Parser reads a document at a time and emits (term, doc) pairs

Parallel tasks
- Parser writes pairs into j partitions
- Each for a range of terms’ first letters
  - (e.g., a-f, g-p, q-z); here j = 3.
- Now to complete the index inversion

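Data flow

[Figure: the master assigns splits to parsers; each parser writes its pairs into the partitions a-f, g-p, and q-z; the master assigns the partitions to inverters (a-f, g-p, q-z), which write the postings.]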

Inverters
- Collect all (term, doc) pairs for a partition
- Sort and write to postings list
- Each partition contains a set of postings

The above process flow is a special case of MapReduce.
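The parser and inverter roles can be sketched as a single-process map and reduce. Everything here runs on one machine for illustration; the whitespace tokenizer, the assumption that terms start with a letter a-z, and the names `partition_for`, `parser`, and `inverter` are hypothetical choices for this example rather than part of any MapReduce library.

```python
from collections import defaultdict

# j = 3 partitions keyed by the first letter of the term.
PARTITIONS = {"a-f": "abcdef", "g-p": "ghijklmnop", "q-z": "qrstuvwxyz"}

def partition_for(term):
    """Return the partition name for a term (assumes it starts with a-z)."""
    for name, letters in PARTITIONS.items():
        if term[0] in letters:
            return name
    raise ValueError(f"no partition for term {term!r}")

def parser(split):
    """Map phase: emit (term, doc) pairs from a split, grouped by partition."""
    out = defaultdict(list)
    for doc_id, text in split:
        for term in text.lower().split():
            out[partition_for(term)].append((term, doc_id))
    return out

def inverter(pairs):
    """Reduce phase: sort one partition's pairs and build postings lists."""
    postings = defaultdict(list)
    for term, doc_id in sorted(set(pairs)):
        postings[term].append(doc_id)
    return dict(postings)

# Two splits, as the master might hand to two parser machines.
splits = [
    [(1, "caesar was killed")],
    [(2, "brutus killed caesar"), (3, "noble brutus")],
]
parser_outputs = [parser(split) for split in splits]

index = {}
for name in PARTITIONS:  # one inverter per partition
    collected = [p for out in parser_outputs for p in out.get(name, [])]
    index.update(inverter(collected))

print(index["caesar"])  # [1, 2]
```

Because each term falls in exactly one partition, the inverters' outputs never overlap and can simply be combined.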

Dynamic indexing
- Docs come in over time
  - postings updates for terms already in dictionary
  - new terms added to dictionary
- Docs get deleted

Simplest approach
- Maintain “big” main index
- New docs go into “small” auxiliary index
- Search across both, merge results
- Deletions
  - Invalidation bit-vector for deleted docs
  - Filter docs output on a search result by this invalidation bit-vector
- Periodically, re-index into one main index
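A minimal in-memory sketch of this scheme follows. The class name `DynamicIndex` and its methods are hypothetical, a Python set stands in for the invalidation bit-vector, and the sketch assumes document IDs are not reused between re-indexing passes.

```python
class DynamicIndex:
    """Main index plus auxiliary index, with deletions tracked separately."""

    def __init__(self):
        self.main = {}        # term -> sorted list of doc IDs ("big" index)
        self.aux = {}         # term -> list of doc IDs ("small" index)
        self.deleted = set()  # stands in for the invalidation bit-vector

    def add(self, doc_id, terms):
        """New docs go into the auxiliary index only."""
        for term in set(terms):
            self.aux.setdefault(term, []).append(doc_id)

    def delete(self, doc_id):
        """Mark a doc as invalid; postings are left untouched for now."""
        self.deleted.add(doc_id)

    def search(self, term):
        """Search both indexes, merge results, filter out deleted docs."""
        hits = set(self.main.get(term, [])) | set(self.aux.get(term, []))
        return sorted(hits - self.deleted)

    def reindex(self):
        """Periodically fold everything into one main index."""
        merged = {}
        for source in (self.main, self.aux):
            for term, doc_ids in source.items():
                live = [d for d in doc_ids if d not in self.deleted]
                if live:
                    merged.setdefault(term, set()).update(live)
        self.main = {term: sorted(ids) for term, ids in merged.items()}
        self.aux = {}
        self.deleted = set()


idx = DynamicIndex()
idx.add(1, ["caesar", "brutus"])
idx.reindex()
idx.add(2, ["caesar"])
before_delete = idx.search("caesar")  # [1, 2]
idx.delete(1)
after_delete = idx.search("caesar")   # [2]
idx.reindex()
```

The design keeps updates cheap (only the small index changes) at the cost of searching two indexes and filtering every result list until the next re-index.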

Index on disk vs. memory
- Most retrieval systems keep the dictionary in memory and the postings on disk
- Web search engines frequently keep both in memory
  - massive memory requirement
  - feasible for large web service installations
  - less so for commercial usage where query loads are lighter

Indexing in the real world
- Typically, don’t have all documents sitting on a local filesystem
  - Documents need to be spidered
  - Could be dispersed over a WAN with varying connectivity
  - Must schedule distributed spiders
  - Have already discussed distributed indexers
- Could be (secure content) in
  - Databases
  - Content management applications
  - Email applications

Content residing in applications
- Mail systems/groupware and content management systems contain the most “valuable” documents
- HTTP is often not the most efficient way of fetching these documents; use native API fetching instead
  - Specialized, repository-specific connectors
  - These connectors also facilitate document viewing when a search result is selected for viewing

Secure documents
- Each document is accessible to a subset of users
  - Usually implemented through some form of Access Control Lists (ACLs)
- Search users are authenticated
- Query should retrieve a document only if user can access it
  - So if there are docs matching your search but you’re not privy to them, “Sorry no results found”
  - E.g., as a lowly employee in the company, I get “No results” for the query “salary roster”

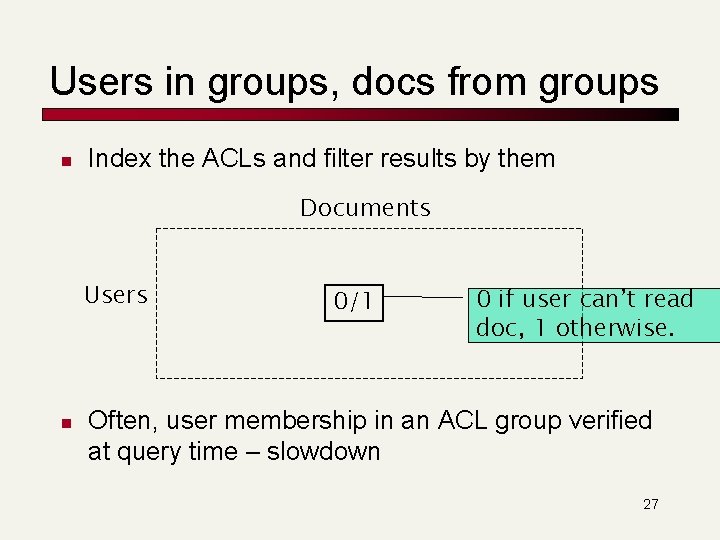

Users in groups, docs from groups
- Index the ACLs and filter results by them
- [Figure: a 0/1 matrix of documents against users; an entry is 0 if the user can’t read the doc, 1 otherwise.]
- Often, user membership in an ACL group is verified at query time, which causes a slowdown
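Filtering results through a 0/1 access matrix can be sketched as follows. The users, document IDs, matrix contents, and the function names `can_read` and `secure_search` are all made up for illustration; a production system would typically resolve group membership rather than store one row per user.

```python
# Hypothetical users and documents; ACL_MATRIX[u][d] is 1 if user u can read doc d.
USERS = ["alice", "bob"]
DOCS = [101, 102, 103]
ACL_MATRIX = [
    [1, 1, 1],  # alice can read every doc
    [0, 1, 0],  # bob can read only doc 102
]

def can_read(user, doc_id):
    """Look up one entry of the 0/1 matrix; unknown users or docs get no access."""
    if user not in USERS or doc_id not in DOCS:
        return False
    return ACL_MATRIX[USERS.index(user)][DOCS.index(doc_id)] == 1

def secure_search(user, matching_doc_ids):
    """Keep only the matching docs that the authenticated user may read."""
    return [d for d in matching_doc_ids if can_read(user, d)]

print(secure_search("bob", [101, 102]))  # [102]
```

A user with no readable matches gets an empty list, which corresponds to the "No results" behaviour described for secure documents.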

“Rich” documents
- (How) Do we index images?
- Researchers have devised Query Based on Image Content (QBIC) systems
  - “show me a picture similar to this orange circle”
  - (see vector space retrieval)
- In practice, image search is usually based on metadata such as file name, e.g., monalisa.jpg
- New approaches exploit social tagging
  - E.g., flickr.com

Passage/sentence retrieval
- Suppose we want to retrieve not an entire document matching a query, but only a passage/sentence (say, in a very long document)
- Can index passages/sentences as mini-documents; what should the index units be?
- This is the subject of XML search
