
Index Compression

Adapted from lectures by Prabhakar Raghavan (Yahoo and Stanford) and Christopher Manning (Stanford)

Prasad, L07 IndexCompression

Last lecture: index construction

- Key step in indexing: sort
  - This sort was implemented by exploiting disk-based sorting
    - Fewer disk seeks
    - Hierarchical merge of blocks
- Distributed indexing using MapReduce
- Running example of document collection: RCV1

Today

- Collection statistics in more detail (RCV1)
- Dictionary compression
- Postings compression

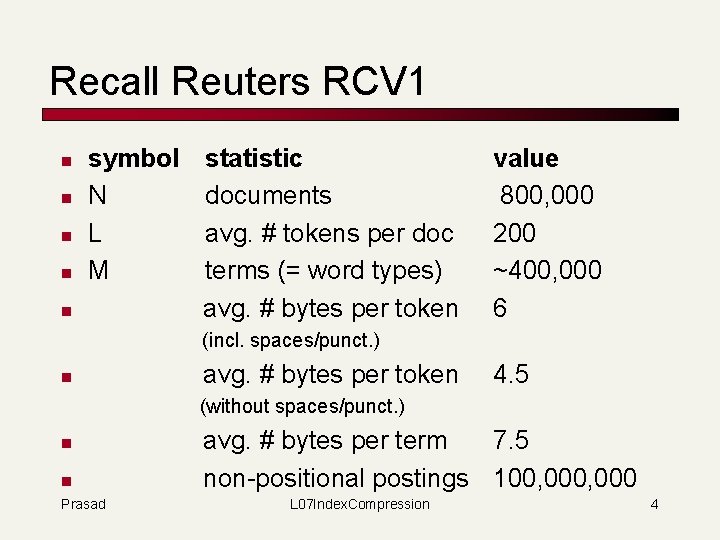

Recall Reuters RCV1

| Symbol | Statistic | Value |
|---|---|---|
| N | documents | 800,000 |
| L | avg. # tokens per doc | 200 |
| M | terms (= word types) | ~400,000 |
| | avg. # bytes per token (incl. spaces/punct.) | 6 |
| | avg. # bytes per token (without spaces/punct.) | 4.5 |
| | avg. # bytes per term | 7.5 |
| | non-positional postings | 100,000,000 |

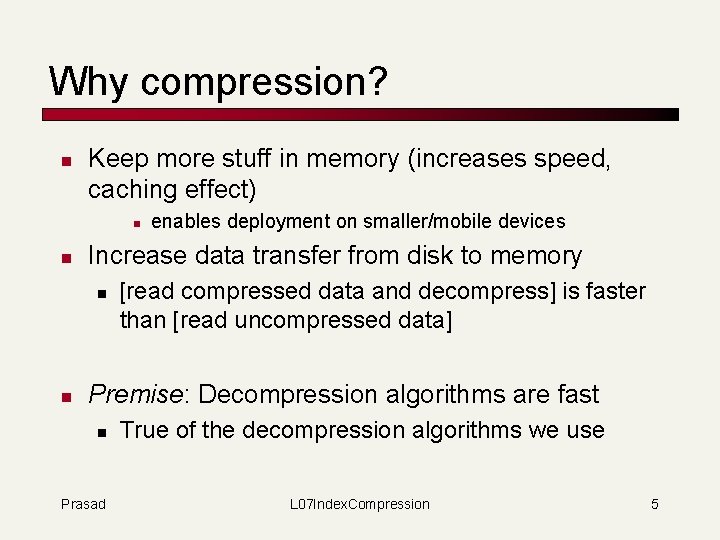

Why compression?

- Keep more stuff in memory (increases speed, caching effect)
  - Enables deployment on smaller/mobile devices
- Increase data transfer from disk to memory
  - [read compressed data and decompress] is faster than [read uncompressed data]
  - Premise: decompression algorithms are fast
    - True of the decompression algorithms we use

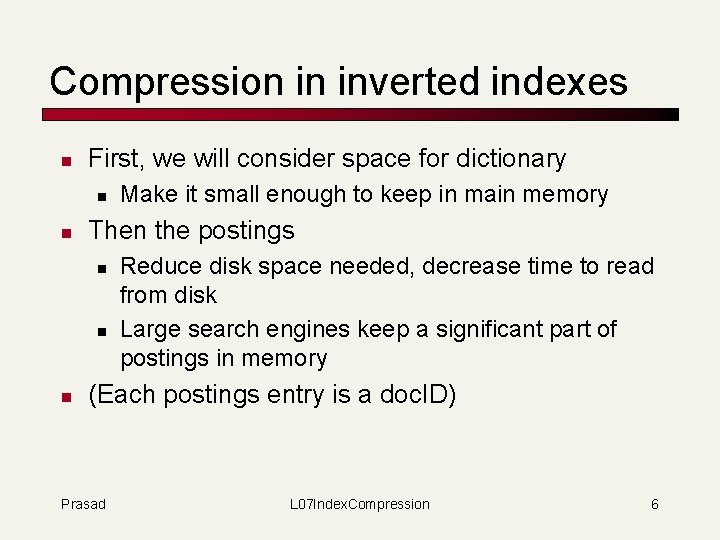

Compression in inverted indexes

- First, we will consider space for the dictionary
  - Make it small enough to keep in main memory
- Then the postings
  - Reduce disk space needed, decrease time to read from disk
  - Large search engines keep a significant part of postings in memory
  - (Each postings entry is a docID)

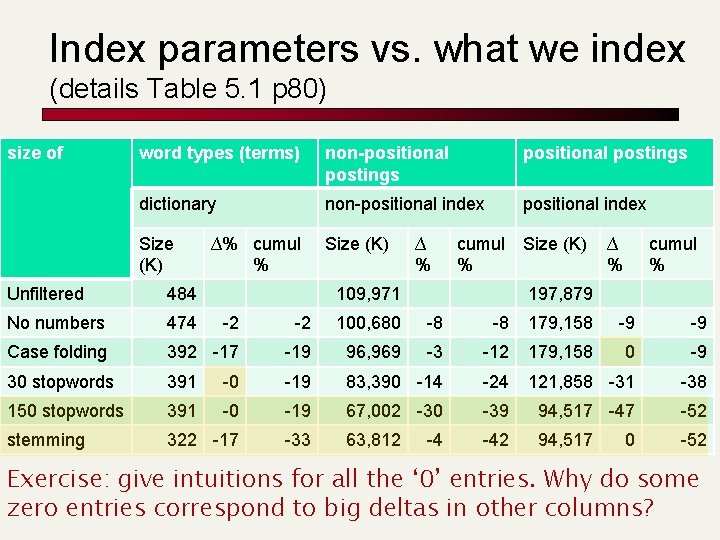

Index parameters vs. what we index (details: Table 5.1, p. 80)

| | Dictionary: word types (terms) | | | Non-positional index: postings | | | Positional index: positional postings | | |
|---|---|---|---|---|---|---|---|---|---|
| | Size (K) | Δ% | cumul. % | Size (K) | Δ% | cumul. % | Size (K) | Δ% | cumul. % |
| Unfiltered | 484 | | | 109,971 | | | 197,879 | | |
| No numbers | 474 | -2 | -2 | 100,680 | -8 | -8 | 179,158 | -9 | -9 |
| Case folding | 392 | -17 | -19 | 96,969 | -3 | -12 | 179,158 | 0 | -9 |
| 30 stopwords | 391 | -0 | -19 | 83,390 | -14 | -24 | 121,858 | -31 | -38 |
| 150 stopwords | 391 | -0 | -19 | 67,002 | -30 | -39 | 94,517 | -47 | -52 |
| Stemming | 322 | -17 | -33 | 63,812 | -4 | -42 | 94,517 | 0 | -52 |

Exercise: give intuitions for all the ‘0’ entries. Why do some zero entries correspond to big deltas in other columns?

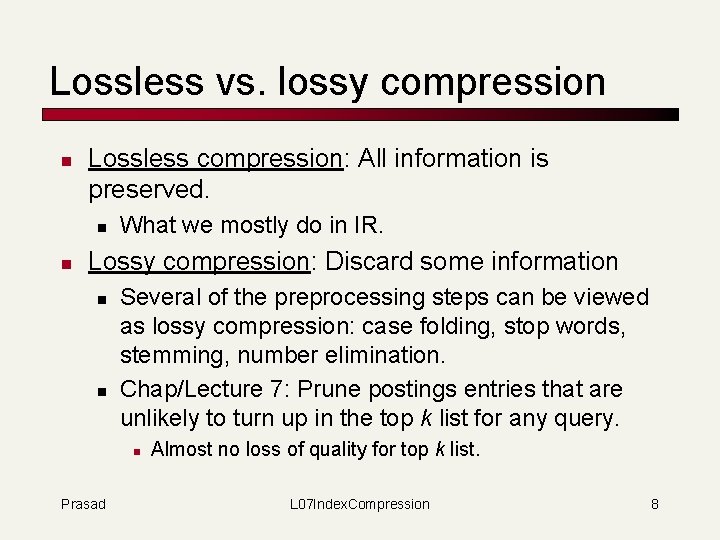

Lossless vs. lossy compression

- Lossless compression: all information is preserved.
  - What we mostly do in IR.
- Lossy compression: discard some information.
  - Several of the preprocessing steps can be viewed as lossy compression: case folding, stop words, stemming, number elimination.
  - Chap./Lecture 7: prune postings entries that are unlikely to turn up in the top-k list for any query.
    - Almost no loss of quality for the top-k list.

Vocabulary vs. collection size

- Heaps’ law: $M = kT^b$
- $M$ is the size of the vocabulary, $T$ is the number of tokens in the collection.
- Typical values: $30 \le k \le 100$ and $b \approx 0.5$.
- In a log-log plot of vocabulary size $M$ vs. $T$, Heaps’ law is a line.

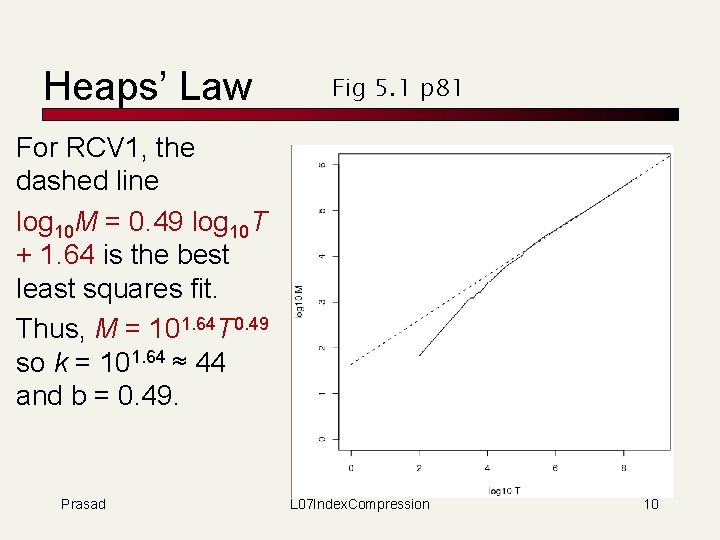

Heaps’ law (Fig. 5.1, p. 81)

For RCV1, the dashed line $\log_{10} M = 0.49 \log_{10} T + 1.64$ is the best least-squares fit. Thus $M = 10^{1.64} T^{0.49}$, so $k = 10^{1.64} \approx 44$ and $b = 0.49$.
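The fit can be checked numerically. The sketch below evaluates $M = kT^b$ with the RCV1 parameters $k = 44$ and $b = 0.49$. The function name `heaps_vocab_size` is hypothetical, and the predictions are only as good as the least-squares fit itself.

```python
def heaps_vocab_size(num_tokens: float, k: float = 44.0, b: float = 0.49) -> float:
    """Heaps' law: predicted vocabulary size M = k * T**b."""
    return k * num_tokens ** b

# k = 10**1.64 is about 44 and b = 0.49 (the RCV1 least-squares fit)
for tokens in (1_000_020, 800_000 * 200):   # a ~1M-token prefix; all of RCV1 (N * L)
    print(f"T = {tokens:>11,} tokens -> M ~ {heaps_vocab_size(tokens):,.0f} terms")
```

For the whole collection ($T = N \cdot L = 1.6 \times 10^8$ tokens), the prediction lands a little above the ~400,000 terms listed in the RCV1 statistics. That is about as close as a single power-law fit can be expected to get.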

Zipf’s law

- We also study the relative frequencies of terms.
  - In natural language, there are a few very frequent terms and very many rare terms.
- Zipf’s law: the $i$th most frequent term has frequency proportional to $1/i$.
- $\mathrm{cf}_i \propto 1/i$, i.e. $\mathrm{cf}_i = c/i$, where $c$ is a normalizing constant.
- $\mathrm{cf}_i$ is the collection frequency: the number of occurrences of the term $t_i$ in the collection.

Zipf consequences

- If the most frequent term (the) occurs $\mathrm{cf}_1$ times,
  - then the second most frequent term (of) occurs $\mathrm{cf}_1/2$ times,
  - the third most frequent term (and) occurs $\mathrm{cf}_1/3$ times …
- Equivalently: $\mathrm{cf}_i = c/i$, where $c$ is a normalizing factor, so
  - $\log \mathrm{cf}_i = \log c - \log i$
  - Linear relationship between $\log \mathrm{cf}_i$ and $\log i$
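A short numerical illustration of the log-linear relationship. The sketch generates Zipf frequencies from a hypothetical $\mathrm{cf}_1 = 1{,}000{,}000$ and fits a least-squares line in log-log space. For exact Zipf data the slope is $-1$; real collections only approximate this. The helper names are hypothetical.

```python
import math

def zipf_cf(cf1: float, i: int) -> float:
    """Collection frequency of the i-th most frequent term if cf_i = cf_1 / i."""
    return cf1 / i

def loglog_slope(xs, ys):
    """Least-squares slope of log10(ys) against log10(xs)."""
    lx = [math.log10(x) for x in xs]
    ly = [math.log10(y) for y in ys]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    num = sum((a - mx) * (b - my) for a, b in zip(lx, ly))
    den = sum((a - mx) ** 2 for a in lx)
    return num / den

ranks = range(1, 1001)
cfs = [zipf_cf(1_000_000, i) for i in ranks]   # hypothetical cf_1
print(cfs[:3])                    # cf_1, cf_1/2, cf_1/3 (the, of, and)
print(loglog_slope(ranks, cfs))   # -1 up to floating-point error
```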

Compression

- First, we will consider space for the dictionary and postings
  - Basic Boolean index only
  - No study of positional indexes, etc.
- We will devise compression schemes

DICTIONARY COMPRESSION

Why compress the dictionary?

- Must keep it in memory
  - Search begins with the dictionary
  - Memory footprint competition
  - Embedded/mobile devices

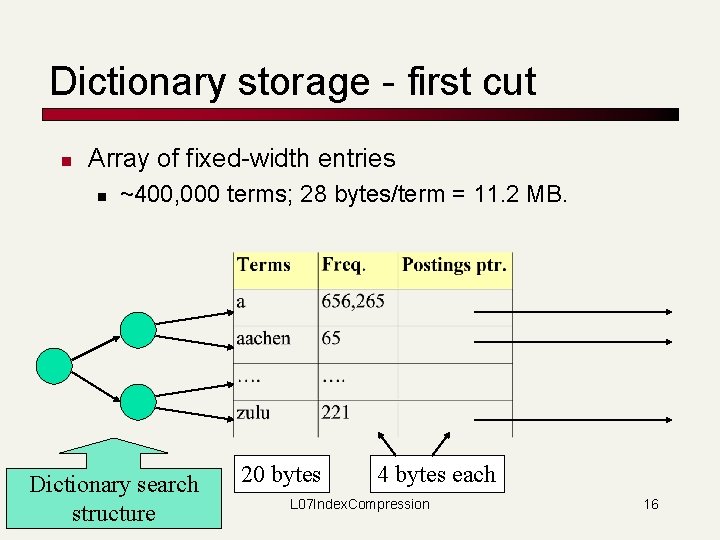

Dictionary storage: first cut

- Array of fixed-width entries
  - ~400,000 terms; 28 bytes/term = 11.2 MB.
- Figure: a dictionary search structure over the array; each entry holds the term (20 bytes), its frequency and a pointer to its postings list (4 bytes each).
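To make the 28-byte layout concrete, the sketch below packs one entry with Python's standard `struct` module. The field order, little-endian byte order and 4-byte unsigned integers are assumptions for illustration, and `pack_entry` is a hypothetical helper.

```python
import struct

# Assumed layout: 20-byte term, 4-byte frequency, 4-byte postings pointer,
# little-endian with no alignment padding between fields.
ENTRY = struct.Struct("<20sII")

def pack_entry(term: str, doc_freq: int, postings_ptr: int) -> bytes:
    raw = term.encode("ascii")
    if len(raw) > 20:
        raise ValueError(f"term longer than 20 bytes: {term!r}")
    return ENTRY.pack(raw, doc_freq, postings_ptr)   # short terms are NUL-padded

entry = pack_entry("a", 15, 4096)       # a 1-letter term still occupies 20 bytes
print(ENTRY.size, len(entry))           # every entry is 28 bytes
print(400_000 * ENTRY.size / 1e6, "MB")
```

Terms longer than 20 bytes, such as supercalifragilisticexpialidocious, are rejected. This is the limitation the following slide points out.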

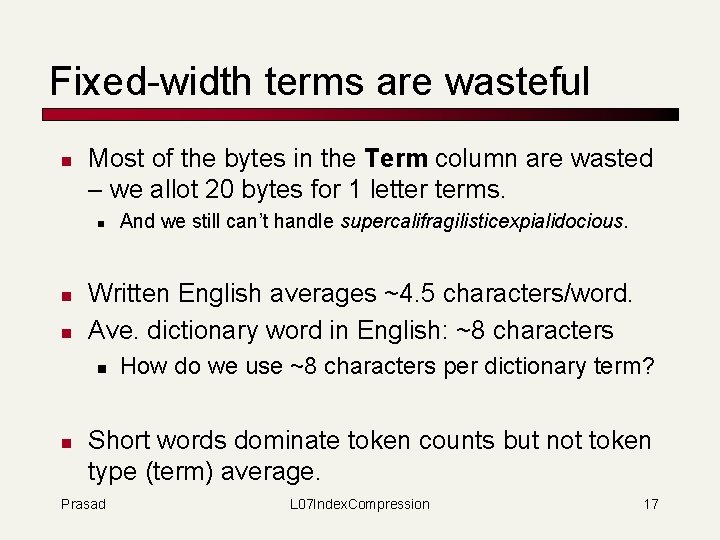

Fixed-width terms are wasteful

- Most of the bytes in the Term column are wasted: we allot 20 bytes even for 1-letter terms.
  - And we still can’t handle supercalifragilisticexpialidocious.
- Written English averages ~4.5 characters/word.
  - Short words dominate token counts but not the token type (term) average.
- Average dictionary word in English: ~8 characters
  - How do we use ~8 characters per dictionary term?

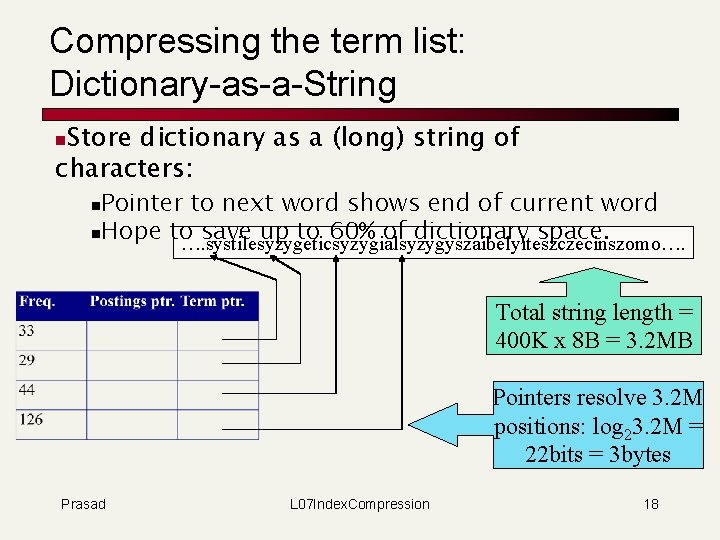

Compressing the term list: dictionary-as-a-string

- Store the dictionary as a (long) string of characters:
  - The pointer to the next word shows the end of the current word
  - Hope to save up to 60% of dictionary space.

….systilesyzygeticsyzygialsyzygyszaibelyiteszczecinszomo….

- Total string length = 400K × 8 B = 3.2 MB
- Pointers resolve 3.2M positions: $\lceil \log_2 3.2\text{M} \rceil = 22$ bits, rounded up to 3 bytes

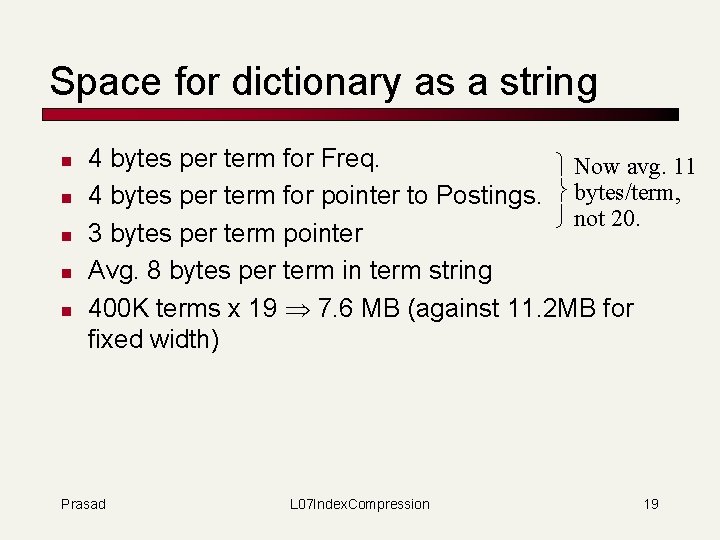

Space for dictionary as a string

- 4 bytes per term for Freq.
- 4 bytes per term for pointer to Postings.
- 3 bytes per term pointer
- Avg. 8 bytes per term in term string
  - Together with the term pointer: now avg. 11 bytes/term, not 20.
- 400K terms × 19 bytes ⇒ 7.6 MB (against 11.2 MB for fixed width)
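Here is a minimal sketch of dictionary-as-a-string. It assumes the concatenated terms fit in memory as one Python string and that list entries stand in for the 3-byte term pointers. Frequencies and postings pointers are omitted, and all helper names are hypothetical.

```python
import math

def build_string_dictionary(terms):
    """Concatenate the sorted terms into one string and record where each term starts."""
    terms = sorted(terms)
    pointers, pos = [], 0
    for t in terms:
        pointers.append(pos)          # stands in for a 3-byte term pointer
        pos += len(t)
    return "".join(terms), pointers

def term_at(string, pointers, j):
    """Term j ends where term j + 1 begins (or at the end of the string)."""
    end = pointers[j + 1] if j + 1 < len(pointers) else len(string)
    return string[pointers[j]:end]

def lookup(string, pointers, query):
    """Binary search over the term pointers; return the term's index or -1."""
    lo, hi = 0, len(pointers)
    while lo < hi:
        mid = (lo + hi) // 2
        if term_at(string, pointers, mid) < query:
            lo = mid + 1
        else:
            hi = mid
    if lo < len(pointers) and term_at(string, pointers, lo) == query:
        return lo
    return -1

s, ptrs = build_string_dictionary(
    ["systile", "syzygetic", "syzygial", "syzygy", "szaibelyite", "szczecin"])
print(s)
print(lookup(s, ptrs, "syzygy"), lookup(s, ptrs, "szomo"))
# Pointer width needed to address 3.2M characters:
print(math.ceil(math.log2(3.2e6)), "bits")
```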

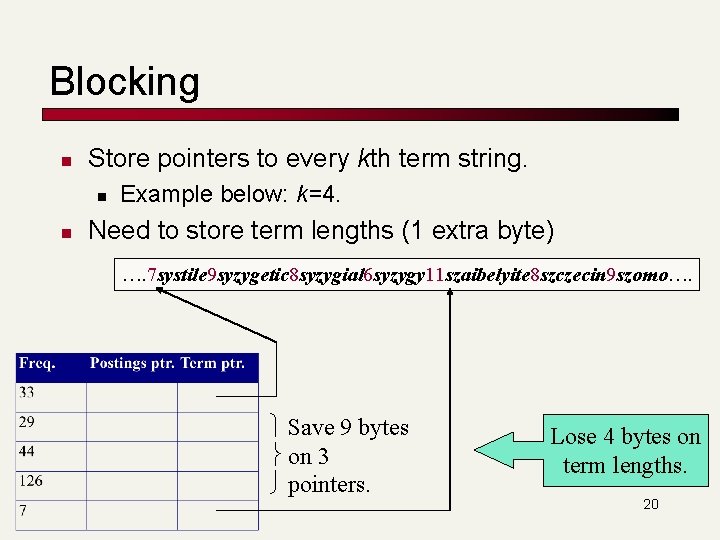

Blocking

- Store pointers to every $k$th term string.
  - Example below: $k = 4$.
- Need to store term lengths (1 extra byte)

….7systile9syzygetic8syzygial6syzygy11szaibelyite8szczecin9szomo….

- Save 9 bytes on 3 pointers; lose 4 bytes on term lengths.

Net

- Where we used 3 bytes/pointer without blocking:
  - 3 × 4 = 12 bytes for $k = 4$ pointers,
  - now we use 3 + 4 = 7 bytes per block of 4 terms (one 3-byte pointer plus four 1-byte lengths).
- Shaved another ~0.5 MB; can save more with larger $k$.
- Why not go with larger $k$?
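A minimal sketch of a blocked dictionary string follows. Assumptions: ASCII terms, a 1-byte length before every term, and a pointer kept only for the first term of each block. Python list entries stand in for the 3-byte pointers, and all helper names are hypothetical. A lookup binary-searches the block leaders and then scans inside one block.

```python
def build_blocked(terms, k=4):
    """Store each term as <1-byte length><term bytes>; keep a pointer only to every k-th term."""
    terms = sorted(terms)
    buf, block_ptrs = bytearray(), []
    for j, term in enumerate(terms):
        raw = term.encode("ascii")
        if len(raw) > 255:
            raise ValueError("term length must fit in one byte")
        if j % k == 0:
            block_ptrs.append(len(buf))   # pointer to the first term of the block
        buf.append(len(raw))
        buf += raw
    return bytes(buf), block_ptrs, len(terms)

def read_term(buf, pos):
    """Decode one length-prefixed term; return (term, position of the next term)."""
    n = buf[pos]
    return buf[pos + 1:pos + 1 + n].decode("ascii"), pos + 1 + n

def lookup_blocked(buf, block_ptrs, k, num_terms, query):
    """Binary search over block leaders, then a linear scan inside one block."""
    lo, hi = 0, len(block_ptrs)
    while lo < hi:                         # first block whose leader is > query
        mid = (lo + hi) // 2
        if read_term(buf, block_ptrs[mid])[0] <= query:
            lo = mid + 1
        else:
            hi = mid
    block = lo - 1                         # last block whose leader is <= query
    if block < 0:
        return -1
    pos = block_ptrs[block]
    for j in range(block * k, min((block + 1) * k, num_terms)):
        term, pos = read_term(buf, pos)
        if term == query:
            return j
    return -1

terms = ["systile", "syzygetic", "syzygial", "syzygy", "szaibelyite", "szczecin"]
buf, ptrs, n = build_blocked(terms, k=4)
print(len(ptrs), "block pointers for", n, "terms")
print(lookup_blocked(buf, ptrs, 4, n, "szczecin"))
```

The trade-off from the slide shows up directly. There are fewer pointers to store, but every lookup ends with a sequential scan of up to $k$ length-prefixed terms.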

Exercise

- Estimate the space usage (and savings compared to 7.6 MB) with blocking, for block sizes of $k$ = 4, 8 and 16.
  - For $k = 8$: for every block of 8, we need to store an extra 8 bytes for lengths,
  - and we save 7 × 3 bytes of term pointers.
  - Saving: (21 − 8)/8 × 400K = 0.65 MB
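The exercise arithmetic generalizes to any $k$. This sketch follows the slides’ accounting: 400K terms, 3-byte term pointers, one length byte per term, and a 7.6 MB baseline. `blocking_savings_mb` is a hypothetical name.

```python
def blocking_savings_mb(k, num_terms=400_000, ptr_bytes=3, len_bytes=1):
    """Net saving from blocking: drop k-1 term pointers per block, add k length bytes."""
    saved_per_block = (k - 1) * ptr_bytes - k * len_bytes
    return saved_per_block / k * num_terms / 1e6

for k in (4, 8, 16):
    saving = blocking_savings_mb(k)
    print(f"k={k:2d}: save ~{saving:.3f} MB -> ~{7.6 - saving:.2f} MB")
```

The $k = 4$ case reproduces the ~0.5 MB on the Net slide and $k = 8$ the 0.65 MB above. The returns diminish because the per-term length byte is never saved, so the saving can never exceed 2 bytes per term (0.8 MB).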

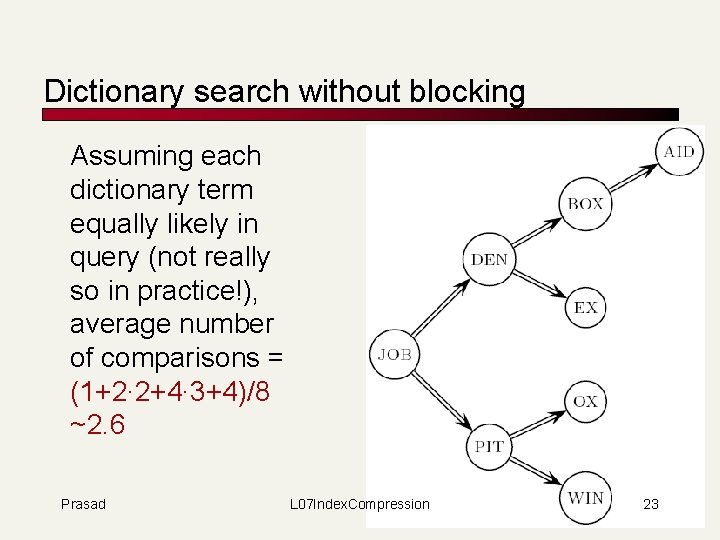

Dictionary search without blocking

Assuming each dictionary term is equally likely in a query (not really so in practice!), the average number of comparisons for the 8-term binary search tree is $(1 + 2\cdot 2 + 4\cdot 3 + 4)/8 \approx 2.6$.

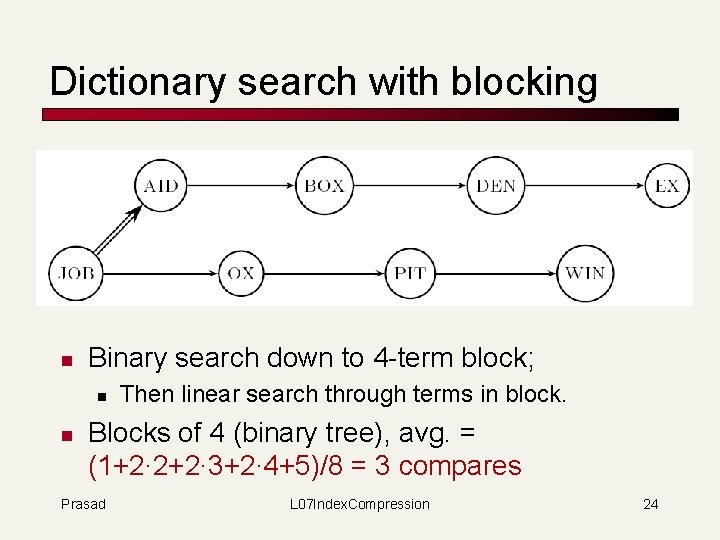

Dictionary search with blocking

- Binary search down to a 4-term block;
  - then linear search through the terms in the block.
- Blocks of 4 (binary tree): avg. $= (1 + 2\cdot 2 + 2\cdot 3 + 2\cdot 4 + 5)/8 = 3$ compares

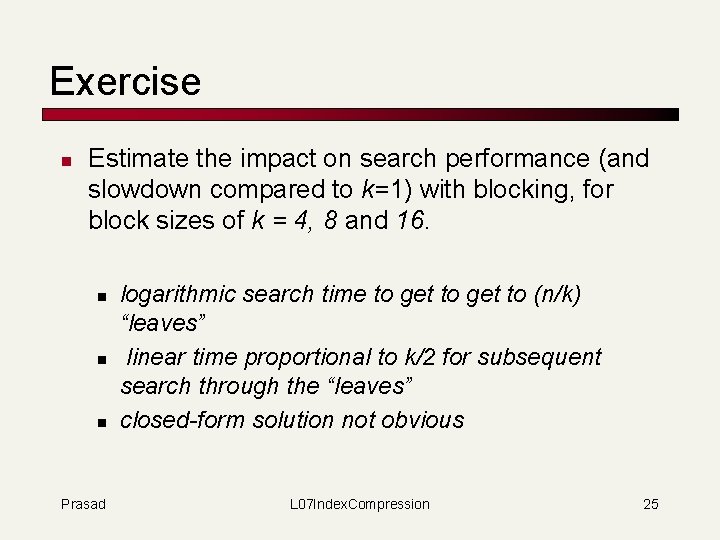

Exercise

- Estimate the impact on search performance (and slowdown compared to $k = 1$) with blocking, for block sizes of $k$ = 4, 8 and 16.
  - Logarithmic search time to get to the $n/k$ “leaves”
  - Linear time proportional to $k/2$ for the subsequent search through the “leaves”
  - Closed-form solution not obvious
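One way to attack the exercise numerically uses an explicit counting model that reproduces the two 8-term examples above (2.625 and 3 compares). In this model, each probe of a block leader costs one compare, and once the right block is reached the search steps through it term by term. The sketch assumes every term is equally likely and that $k$ divides the number of terms. The function names are hypothetical.

```python
from functools import lru_cache

@lru_cache(maxsize=None)
def total_probes(n: int) -> int:
    """Sum, over all n keys, of the probes binary search (mid = (lo + hi) // 2)
    needs to reach each key; each node's depth counts once per level above it."""
    if n == 0:
        return 0
    left = (n - 1) // 2                  # keys to the left of mid
    return n + total_probes(left) + total_probes(n - 1 - left)

def avg_compares(n: int, k: int) -> float:
    """Average compares per lookup with blocks of k terms."""
    if n % k:
        raise ValueError("this sketch assumes k divides n")
    blocks = n // k
    to_block = total_probes(blocks) / blocks   # probes over block leaders
    in_block = (k - 1) / 2                     # 0..k-1 extra steps after the leader
    return to_block + in_block

print(avg_compares(8, 1), avg_compares(8, 4))  # the two 8-term slides
for k in (1, 4, 8, 16):
    print(f"k={k:2d}: {avg_compares(400_000, k):.2f} compares on average")
```

Under this model, $k = 4$ comes out roughly even with $k = 1$ for a 400K-term dictionary. The cost then rises quickly for $k = 8$ and $16$, because the in-block scan (about $k/2$ steps) outgrows the $\log_2 k$ levels of binary search it saves.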

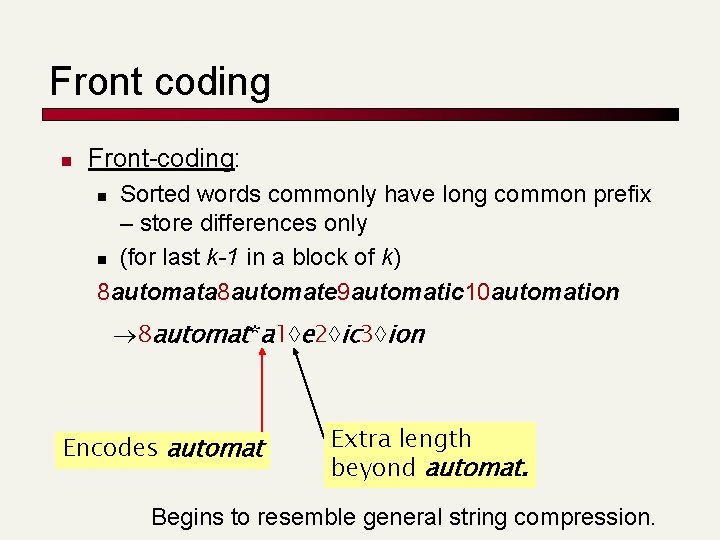

Front coding

- Front coding:
  - Sorted words commonly have a long common prefix: store differences only
  - (for the last $k-1$ terms in a block of $k$)

8automata8automate9automatic10automation

→ 8automat*a1e2ic3ion

- 8automat* encodes the shared prefix automat (the asterisk marks where it ends); each following number is the extra length beyond automat.
- Begins to resemble general string compression.
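The sketch below front-codes one block using the textual layout of the example (8automat*a1e2ic3ion). It assumes the terms contain no digits, so each length can be parsed greedily, and it takes the prefix shared by the whole block. Both function names are hypothetical.

```python
import os

def front_code_block(terms):
    """Front-code one block: first term in full (prefix marked by '*'),
    then each later term as <extra length><suffix beyond the prefix>."""
    prefix = os.path.commonprefix(terms)
    first = terms[0]
    encoded = f"{len(first)}{prefix}*{first[len(prefix):]}"
    for term in terms[1:]:
        suffix = term[len(prefix):]
        encoded += f"{len(suffix)}{suffix}"
    return encoded

def decode_block(encoded):
    """Invert front_code_block (assumes terms contain no digits)."""
    i = 0
    while encoded[i].isdigit():
        i += 1
    first_len = int(encoded[:i])
    star = encoded.index("*", i)
    prefix = encoded[i:star]
    j = star + 1 + (first_len - len(prefix))
    terms = [prefix + encoded[star + 1:j]]
    while j < len(encoded):
        k = j
        while encoded[k].isdigit():          # read the extra length
            k += 1
        n = int(encoded[j:k])
        terms.append(prefix + encoded[k:k + n])
        j = k + n
    return terms

block = ["automata", "automate", "automatic", "automation"]
code = front_code_block(block)
print(code)
print(decode_block(code) == block)
```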

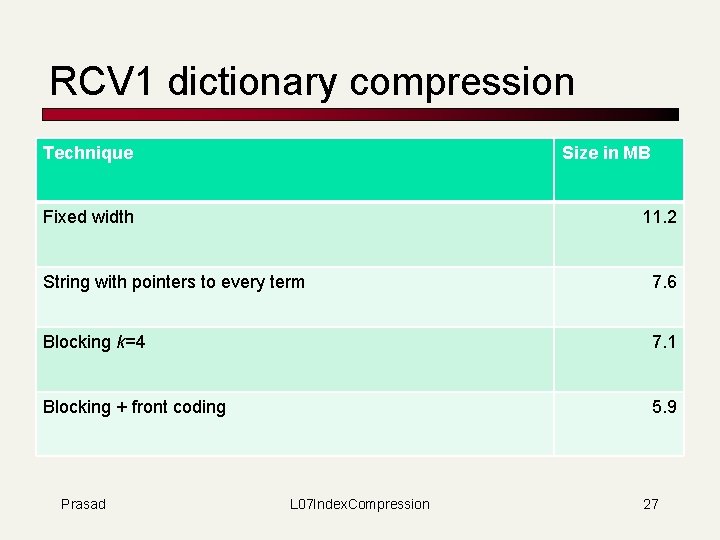

RCV1 dictionary compression

| Technique | Size in MB |
|---|---|
| Fixed width | 11.2 |
| String with pointers to every term | 7.6 |
| Blocking, $k = 4$ | 7.1 |
| Blocking + front coding | 5.9 |

POSTINGS COMPRESSION

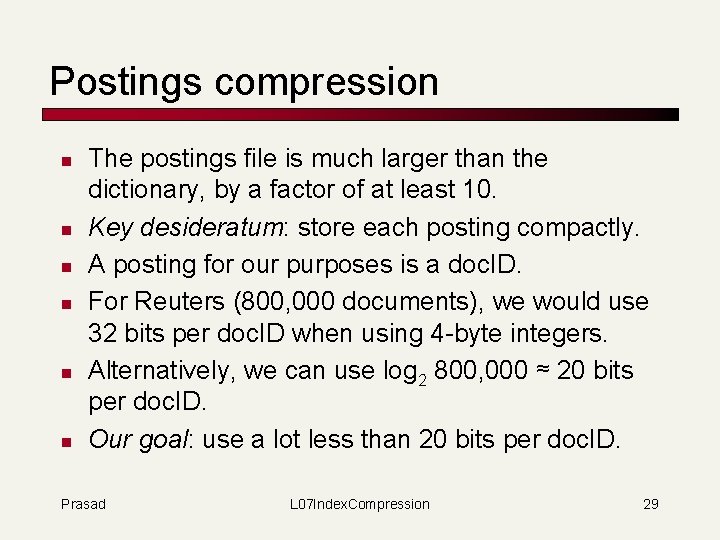

Postings compression

- The postings file is much larger than the dictionary, by a factor of at least 10.
- Key desideratum: store each posting compactly.
- A posting for our purposes is a docID.
- For Reuters (800,000 documents), we would use 32 bits per docID when using 4-byte integers.
- Alternatively, we can use $\log_2 800{,}000 \approx 20$ bits per docID.
- Our goal: use a lot less than 20 bits per docID.

Postings: two conflicting forces

- A term like arachnocentric occurs in maybe one doc out of a million: we would like to store this posting using $\log_2 1\text{M} \approx 20$ bits.
- A term like the occurs in virtually every doc, so 20 bits/posting is too expensive.
  - Prefer a 0/1 bitmap vector in this case
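To see where the crossover lies, this sketch compares a postings list of fixed-width docIDs ($\lceil \log_2 N \rceil$ bits each) with an $N$-bit bitmap. It deliberately ignores gap compression. The function name is hypothetical.

```python
import math

def cheaper_representation(doc_freq: int, num_docs: int) -> str:
    """Compare fixed-width docID postings with a one-bit-per-document bitmap."""
    bits_per_docid = math.ceil(math.log2(num_docs))   # 20 bits for 800,000 docs
    postings_bits = doc_freq * bits_per_docid
    bitmap_bits = num_docs
    return "postings" if postings_bits < bitmap_bits else "bitmap"

N = 800_000
print(cheaper_representation(1, N))         # a rare term such as arachnocentric
print(cheaper_representation(700_000, N))   # a term such as the
```

With these fixed-width assumptions, the break-even point is $\mathrm{df} = N/20$, about 40,000 documents for RCV1.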

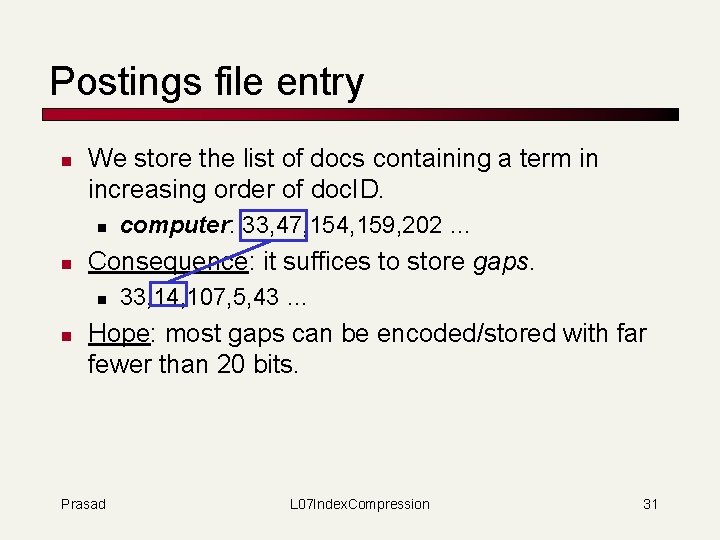

Postings file entry

- We store the list of docs containing a term in increasing order of docID.
  - computer: 33, 47, 154, 159, 202 …
- Consequence: it suffices to store gaps.
  - 33, 14, 107, 5, 43 …
- Hope: most gaps can be encoded/stored with far fewer than 20 bits.
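Converting between docIDs and gaps is a pair of inverse operations: successive differences one way, running sums the other. A minimal sketch with hypothetical function names:

```python
from itertools import accumulate

def to_gaps(doc_ids):
    """Keep the first docID; replace every later docID by its distance to the previous one."""
    if any(b <= a for a, b in zip(doc_ids, doc_ids[1:])):
        raise ValueError("docIDs must be strictly increasing")
    return doc_ids[:1] + [b - a for a, b in zip(doc_ids, doc_ids[1:])]

def from_gaps(gaps):
    """Running sums of the gaps restore the original docIDs."""
    return list(accumulate(gaps))

computer = [33, 47, 154, 159, 202]
gaps = to_gaps(computer)
print(gaps)
print(from_gaps(gaps) == computer)
```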

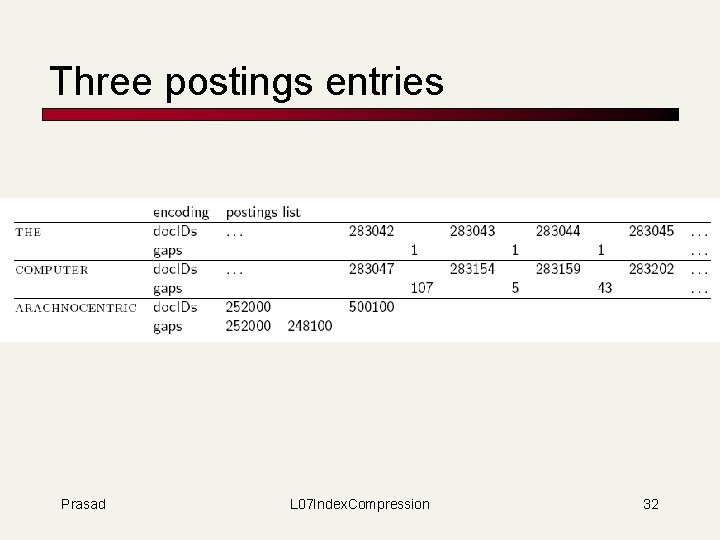

Three postings entries

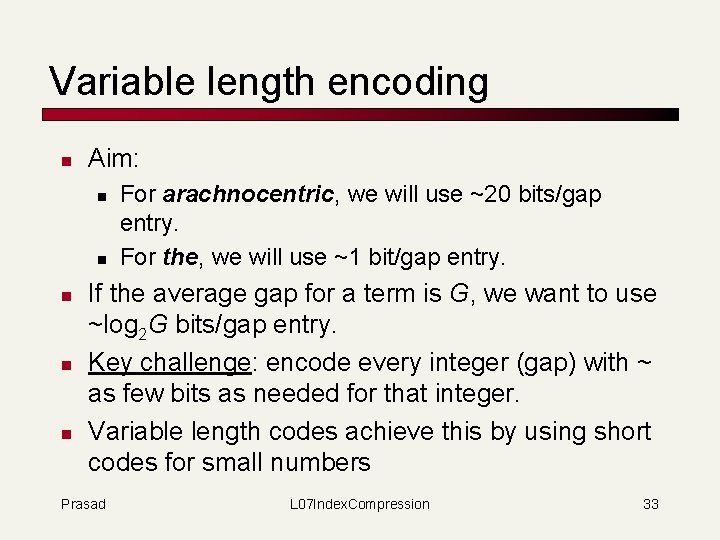

Variable length encoding

- Aim:
  - For arachnocentric, we will use ~20 bits/gap entry.
  - For the, we will use ~1 bit/gap entry.
- If the average gap for a term is $G$, we want to use ~$\log_2 G$ bits/gap entry.
- Key challenge: encode every integer (gap) with about as few bits as needed for that integer.
- Variable length codes achieve this by using short codes for small numbers

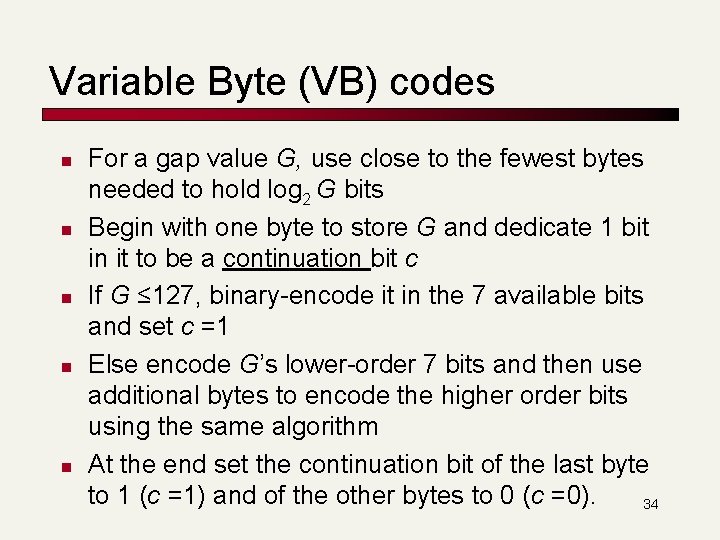

Variable Byte (VB) codes

- For a gap value $G$, use close to the fewest bytes needed to hold $\log_2 G$ bits
- Begin with one byte to store $G$ and dedicate 1 bit in it to be a continuation bit $c$
- If $G \le 127$, binary-encode it in the 7 available bits and set $c = 1$
- Else encode $G$’s lower-order 7 bits and then use additional bytes to encode the higher-order bits using the same algorithm
- At the end, set the continuation bit of the last byte to 1 ($c = 1$) and of the other bytes to 0 ($c = 0$).

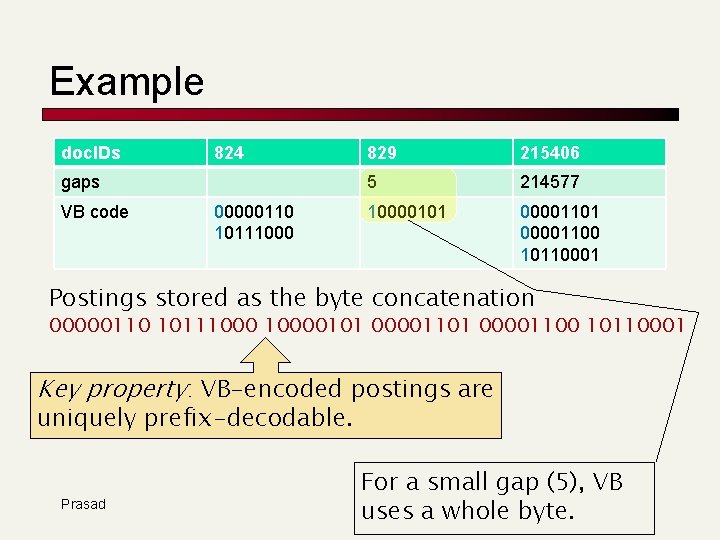

Example

| docIDs | 824 | 829 | 215406 |
|---|---|---|---|
| gaps | | 5 | 214577 |
| VB code | 00000110 10111000 | 10000101 | 00001101 00001100 10110001 |

Postings stored as the byte concatenation:

000001101011100010000101000011010000110010110001

- Key property: VB-encoded postings are uniquely prefix-decodable.
- For a small gap (5), VB uses a whole byte.
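The procedure above can be sketched in a few lines. This is a minimal version that emits the most significant 7-bit group first, as in the example table, and sets the high bit only on the last byte of each gap. The function names are hypothetical.

```python
def vb_encode_number(n: int) -> bytes:
    """VB-encode one gap: 7 payload bits per byte, high bit set only on the last byte."""
    out = []
    while True:
        out.insert(0, n % 128)       # prepend the next-lower 7-bit group
        if n < 128:
            break
        n //= 128
    out[-1] += 128                   # continuation bit c = 1 marks the final byte
    return bytes(out)

def vb_encode(gaps) -> bytes:
    return b"".join(vb_encode_number(g) for g in gaps)

def vb_decode(stream: bytes) -> list:
    gaps, n = [], 0
    for byte in stream:
        if byte < 128:               # c = 0: more bytes of this gap follow
            n = 128 * n + byte
        else:                        # c = 1: last byte of this gap
            gaps.append(128 * n + (byte - 128))
            n = 0
    return gaps

encoded = vb_encode([824, 5, 214577])
print(" ".join(f"{b:08b}" for b in encoded))
print(vb_decode(encoded))
```

Decoding needs no separators: a byte with the high bit set always ends a gap, which is what makes the code uniquely prefix-decodable.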

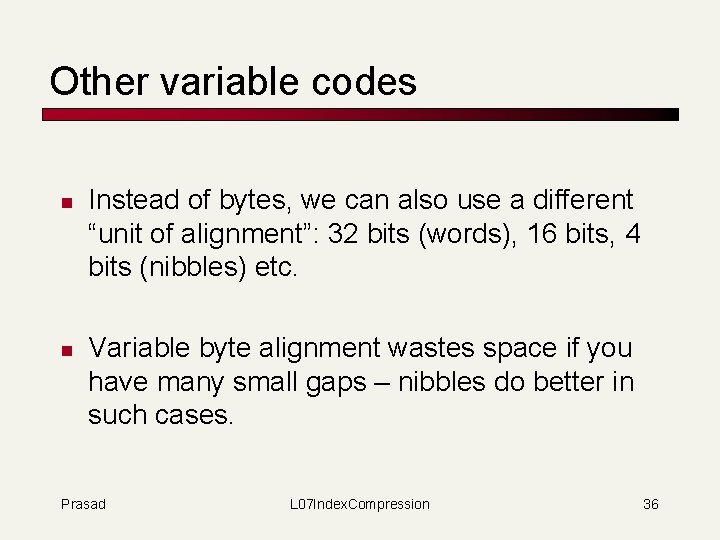

Other variable codes

- Instead of bytes, we can also use a different “unit of alignment”: 32 bits (words), 16 bits, 4 bits (nibbles), etc.
- Variable byte alignment wastes space if you have many small gaps; nibbles do better in such cases.
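To compare alignment units, the VB idea can be parameterized by unit width: each unit carries `unit_bits - 1` payload bits plus one continuation bit. This is a sketch under that assumption, and the gap list is a hypothetical example of a very frequent term.

```python
def vu_encode_number(n: int, unit_bits: int) -> list:
    """Split n into (unit_bits - 1)-bit groups; flag the last unit with its top bit."""
    base = 1 << (unit_bits - 1)
    units = []
    while True:
        units.insert(0, n % base)
        if n < base:
            break
        n //= base
    units[-1] += base
    return units

def encoded_bits(gaps, unit_bits: int) -> int:
    return sum(unit_bits * len(vu_encode_number(g, unit_bits)) for g in gaps)

small_gaps = [1, 2, 3, 5, 1, 4, 2, 7]   # hypothetical: a term in most documents
for unit in (4, 8, 16):
    print(f"{unit:2d}-bit units: {encoded_bits(small_gaps, unit)} bits")
```

With `unit_bits=8` this is exactly the VB code. Nibbles halve the cost here because every gap fits in 3 payload bits, but they need more units, and more continuation bits, for large gaps.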

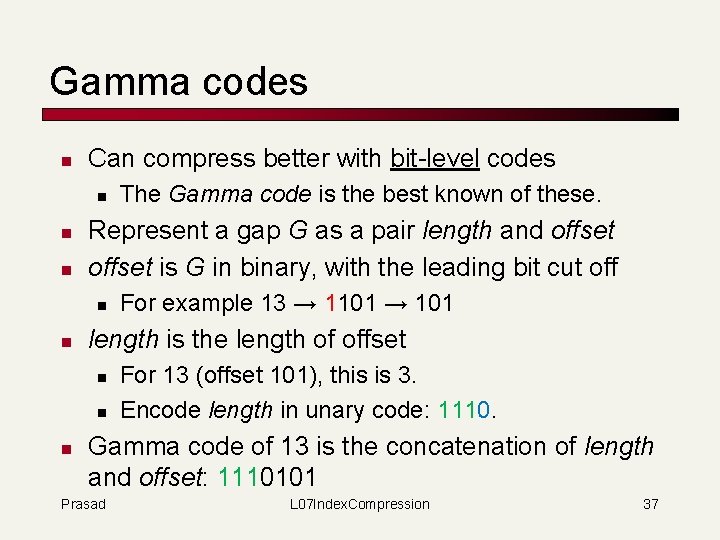

Gamma codes

- Can compress better with bit-level codes
  - The Gamma code is the best known of these.
- Represent a gap $G$ as a pair length and offset
- offset is $G$ in binary, with the leading bit cut off
  - For example 13 → 1101 → 101
- length is the length of offset
  - For 13 (offset 101), this is 3.
- We encode length in unary code: 1110.
- The Gamma code of 13 is the concatenation of length and offset: 1110101

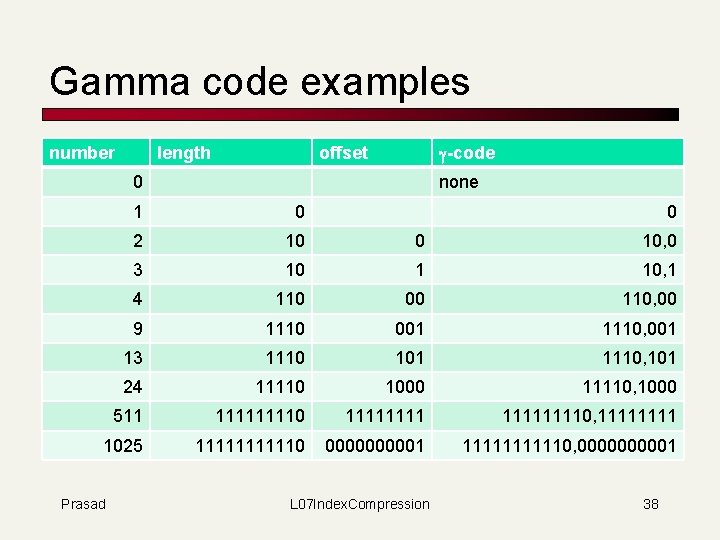

Gamma code examples

| number | length | offset | γ-code |
|---|---|---|---|
| 0 | | | none |
| 1 | 0 | | 0 |
| 2 | 10 | 0 | 10,0 |
| 3 | 10 | 1 | 10,1 |
| 4 | 110 | 00 | 110,00 |
| 9 | 1110 | 001 | 1110,001 |
| 13 | 1110 | 101 | 1110,101 |
| 24 | 11110 | 1000 | 11110,1000 |
| 511 | 111111110 | 11111111 | 111111110,11111111 |
| 1025 | 11111111110 | 0000000001 | 11111111110,0000000001 |
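A minimal sketch of γ encoding and decoding, using Python strings of '0'/'1' characters in place of a real bit stream. This is an assumption chosen for readability, not an efficient layout, and the function names are hypothetical.

```python
def gamma_encode(g: int) -> str:
    """Gamma code of g >= 1: unary(len(offset)) followed by offset."""
    if g < 1:
        raise ValueError("gamma codes are defined for integers >= 1")
    offset = bin(g)[3:]                  # binary without '0b' and without the leading 1
    length = "1" * len(offset) + "0"     # unary code for len(offset)
    return length + offset

def gamma_decode(bits: str) -> list:
    """Decode a concatenation of gamma codes (assumes a well-formed bit string)."""
    gaps, i = [], 0
    while i < len(bits):
        n = 0
        while bits[i] == "1":            # read the unary length
            n += 1
            i += 1
        i += 1                           # skip the terminating 0
        gaps.append(int("1" + bits[i:i + n], 2))   # restore the leading 1
        i += n
    return gaps

for g in (1, 2, 13, 24, 1025):
    print(g, gamma_encode(g))
```

To check the exercise that follows, pass its bit string to `gamma_decode` and take running sums of the resulting gaps.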

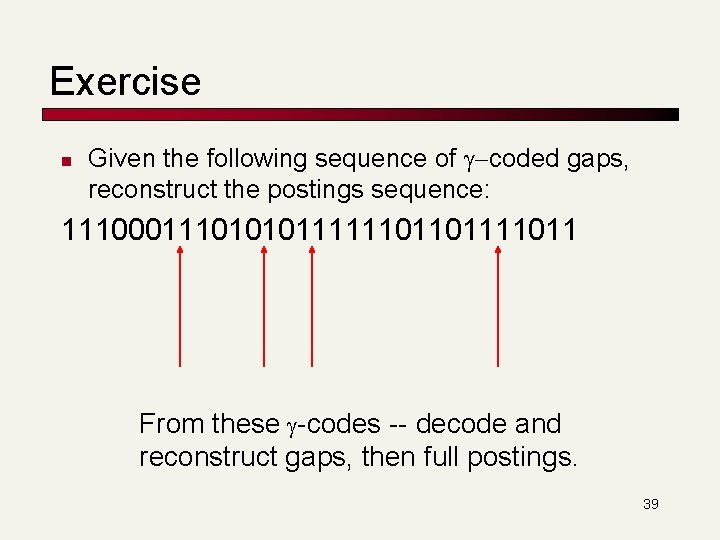

Exercise

- Given the following sequence of γ-coded gaps, reconstruct the postings sequence:
  - 11100011101010111111011011
- From these γ-codes, decode and reconstruct the gaps, then the full postings.

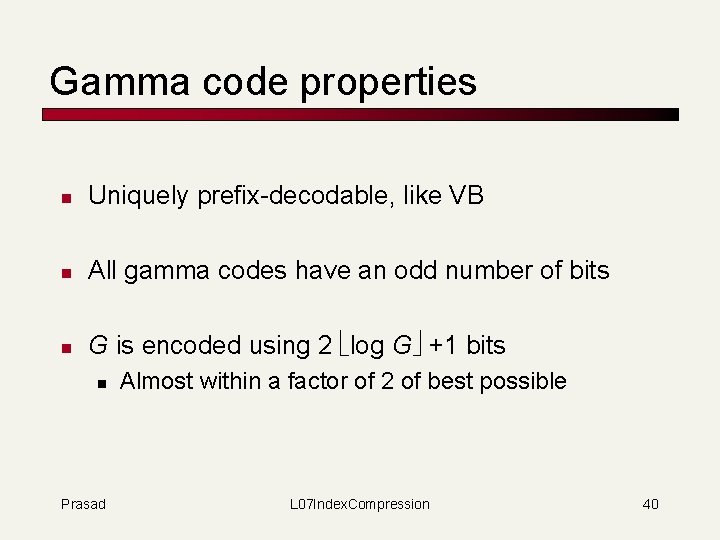

Gamma code properties

- Uniquely prefix-decodable, like VB
- All gamma codes have an odd number of bits
- $G$ is encoded using $2\lfloor \log_2 G \rfloor + 1$ bits
  - Almost within a factor of 2 of best possible

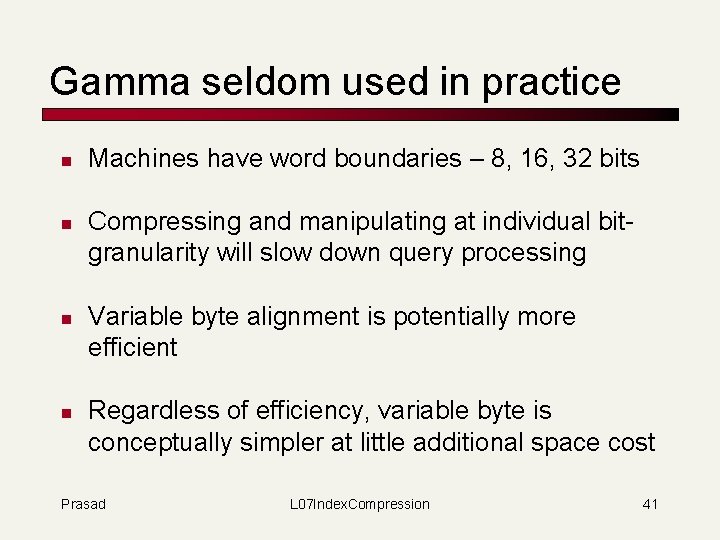

Gamma seldom used in practice

- Machines have word boundaries: 8, 16, 32 bits
- Compressing and manipulating at individual bit granularity will slow down query processing
- Variable byte alignment is potentially more efficient
- Regardless of efficiency, variable byte is conceptually simpler at little additional space cost

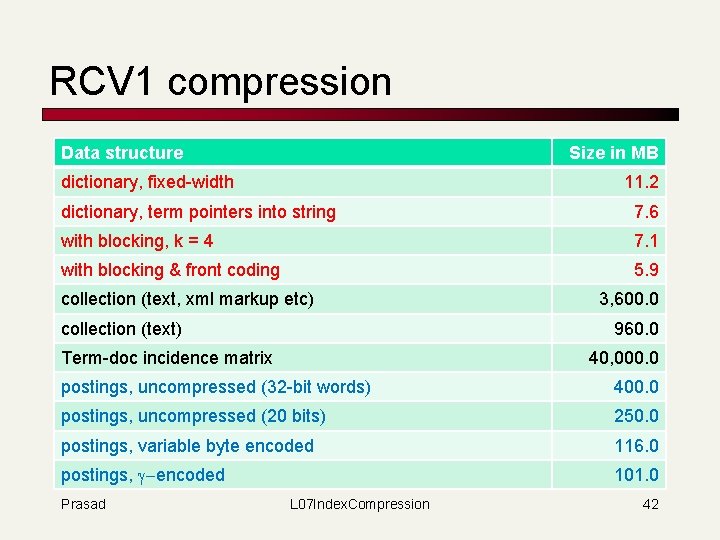

RCV1 compression

| Data structure | Size in MB |
|---|---|
| dictionary, fixed-width | 11.2 |
| dictionary, term pointers into string | 7.6 |
| with blocking, $k = 4$ | 7.1 |
| with blocking & front coding | 5.9 |
| collection (text, xml markup etc.) | 3,600.0 |
| collection (text) | 960.0 |
| term-doc incidence matrix | 40,000.0 |
| postings, uncompressed (32-bit words) | 400.0 |
| postings, uncompressed (20 bits) | 250.0 |
| postings, variable byte encoded | 116.0 |
| postings, γ-encoded | 101.0 |

Index compression summary

- We can now create an index for highly efficient Boolean retrieval that is very space efficient
- Only 4% of the total size of the collection
- Only 10-15% of the total size of the text in the collection
- However, we’ve ignored positional information
- Hence, space savings are less for indexes used in practice
  - But techniques substantially the same.

Models of Natural Language

Necessary for analysis: size estimation

Text properties/model

- How are different words distributed inside each document?
  - Zipf’s law: the frequency of the $i$th most frequent word is $1/i$ times that of the most frequent word.
  - 50% of the words are stopwords.
- How are words distributed across the documents in the collection?
  - The fraction of documents containing a word $k$ times follows a binomial distribution.

- The probability of occurrence of a symbol depends on the previous symbol. (Finite-context or Markovian model)
- The number of distinct words in a document (vocabulary) grows as the square root of the size of the document. (Heaps’ law)
- The average length of non-stop words is 6 to 7 letters.

Similarity

- The Hamming distance between a pair of strings of the same length is the number of positions that have different characters.
- The Levenshtein (edit) distance is the minimum number of character insertions, deletions, and substitutions needed to make two strings the same.
  - (Extensions include transposition, weighted operations, etc.)
- The UNIX diff utility uses the longest common subsequence, obtained by deletion, to align strings/words/lines.
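Both distances take only a few lines. The sketch below uses the standard dynamic program for unit-cost Levenshtein distance, keeping only two rows of the table. The function names are hypothetical, and the transposition and weighting extensions are not covered.

```python
def hamming(a: str, b: str) -> int:
    """Number of positions at which two equal-length strings differ."""
    if len(a) != len(b):
        raise ValueError("Hamming distance needs strings of equal length")
    return sum(x != y for x, y in zip(a, b))

def levenshtein(a: str, b: str) -> int:
    """Unit-cost insertions, deletions and substitutions; row i holds distances from a[:i]."""
    prev = list(range(len(b) + 1))           # distance from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                  # delete ca
                           cur[j - 1] + 1,               # insert cb
                           prev[j - 1] + (ca != cb)))    # substitute (free if equal)
        prev = cur
    return prev[-1]

print(hamming("karolin", "kathrin"), levenshtein("kitten", "sitting"))
```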

Index Size Estimation Using Zipf’s Law

SKIP: from old slides

Corpus size for estimates

- Consider $N$ = 1M documents, each with about $L$ = 1K terms.
- Avg. 6 bytes/term incl. spaces/punctuation
  - ⇒ 6 GB of data.
- Say there are $m$ = 500K distinct terms among these.

Recall: don’t build the matrix

- A 500K × 1M matrix has half a trillion 0’s and 1’s (500 billion).
- But it has no more than one billion 1’s.
  - ⇒ The matrix is extremely sparse.
- So we devised the inverted index
  - Devised query processing for it
- Now let us estimate the size of the index

Rough analysis based on Zipf

- The $i$th most frequent term has frequency proportional to $1/i$
- Let this frequency be $c/i$. Then $\sum_{i=1}^{m} c/i = 1$.
- The $k$th harmonic number is $H_k = \sum_{i=1}^{k} 1/i$.
- Thus $c = 1/H_m$, which is $\approx 1/\ln m = 1/\ln(500\text{K}) \approx 1/13$.
- So the $i$th most frequent term has frequency roughly $1/(13i)$.

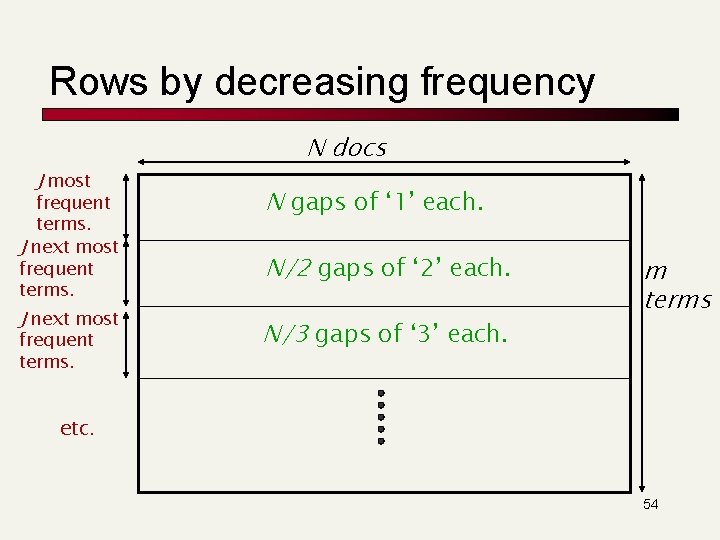

Postings analysis (contd.)

- The expected number of occurrences of the $i$th most frequent term in a doc of length $L$ is $Lc/i \approx L/(13i) \approx 76/i$ for $L = 1000$.
- Let $J = Lc \approx 76$.
- Then the $J$ most frequent terms are likely to occur in every document.
- Now imagine the term-document incidence matrix with rows sorted in decreasing order of term frequency:
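A quick check of the constants, as a sketch. Computing $H_m$ exactly shows that it exceeds $\ln m$ by roughly Euler’s constant (about 0.577), so the exact $J = Lc$ comes out near 73 rather than the slides’ 76. The rounding does not change the argument. The function name `harmonic` is hypothetical.

```python
import math

def harmonic(m: int) -> float:
    """H_m = 1 + 1/2 + ... + 1/m."""
    return sum(1.0 / i for i in range(1, m + 1))

m, L = 500_000, 1_000
H = harmonic(m)
c = 1.0 / H                      # Zipf constant: the frequencies c/i sum to 1
print(f"H_m = {H:.2f}  (ln m = {math.log(m):.2f})")
print(f"c = {c:.4f}, J = L*c = {L * c:.1f}")
```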

Informal observations

- The most frequent term appears approx. 76 times in each document.
- The 2nd most frequent term appears approx. 38 times in each document.
- …
- The 76th most frequent term appears approx. once in each document.
- The first 76 terms appear at least once in each document.
- The next 76 terms appear at least once in every two documents.
- …

Figure: incidence matrix with $m$ term rows sorted by decreasing frequency and $N$ document columns. The $J$ most frequent terms have $N$ gaps of ‘1’ each; the next $J$ most frequent terms have $N/2$ gaps of ‘2’ each; the next $J$ have $N/3$ gaps of ‘3’ each; etc.

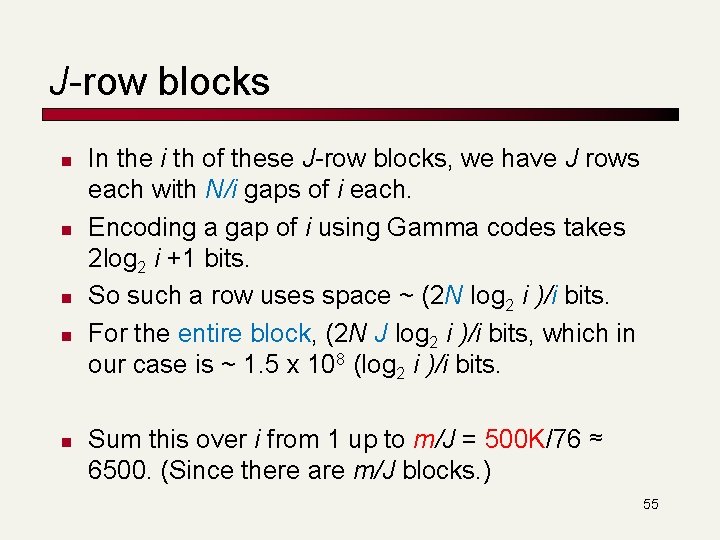

J-row blocks

- In the $i$th of these $J$-row blocks, we have $J$ rows, each with $N/i$ gaps of $i$ each.
- Encoding a gap of $i$ using Gamma codes takes $2\lfloor \log_2 i \rfloor + 1$ bits.
  - So such a row uses space $\approx (2N \log_2 i)/i$ bits.
- For the entire block, $(2NJ \log_2 i)/i$ bits, which in our case is $\approx 1.5 \times 10^8 (\log_2 i)/i$ bits.
- Sum this over $i$ from 1 up to $m/J$ = 500K/76 ≈ 6500. (Since there are $m/J$ blocks.)

Exercise

- Work out the above sum and show it adds up to about 55 × 150 Mbits, which is about 1 GByte.
- So we’ve taken 6 GB of text and produced from it a 1 GB index that can handle Boolean queries!
  - Neat! (16.7%)
- Make sure you understand all the approximations in our probabilistic calculation.
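The sum can also be evaluated directly. This sketch keeps the slides’ approximations: $J = 76$, $N/i$ gaps of size $i$ per row, and the “+1” of each γ code dropped. It sums up to $m/J$ exactly (about 6,580 rather than the rounded 6,500).

```python
import math

N, L, m = 1_000_000, 1_000, 500_000
J = 76                      # slides' value, from c ~ 1/13
num_blocks = m // J         # number of J-row blocks

# Block i: J rows, each with N/i gaps of size i, ~2*log2(i) bits per gap
# (the "+1" of the gamma code is dropped, as on the slide).
s = sum(math.log2(i) / i for i in range(1, num_blocks + 1))
total_bits = 2 * N * J * s
print(f"sum of log2(i)/i for i = 1..{num_blocks}: {s:.1f}")
print(f"index size ~ {total_bits / 8 / 1e9:.2f} GB vs. {N * L * 6 / 1e9:.0f} GB of text")
```

The sum comes out close to the 55 quoted above. It grows roughly like $(\ln n)^2 / (2 \ln 2)$, so the estimate is not very sensitive to the exact upper limit.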
