Improving Your Science: Sample-Size Planning, Pre-Registration, and Reproducible Data Analysis

Christophe Bernard, Aix-Marseille University, Editor of eNeuro • Bob Calin-Jageman, Dominican University • Brian Wandell, Stanford University, Flywheel.io • Marina Picciotto, Yale University, Editor of the Journal of Neuroscience. Open Science Page: https://osf.io/5awp4/ aka https://tinyurl.com/improve-neuro-2018

The “New Statistics” for Neuroscience: Using Effect Sizes and Confidence Intervals for Better Inference. Bob Calin-Jageman, Neuroscience Program, Dominican University, @TheNewStats. “The first principle is that you must not fool yourself – and you are the easiest person to fool.” - Richard Feynman, 1974

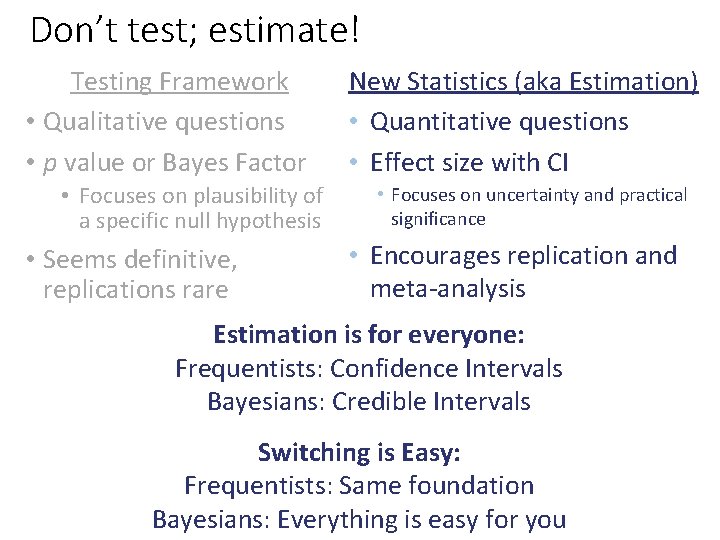

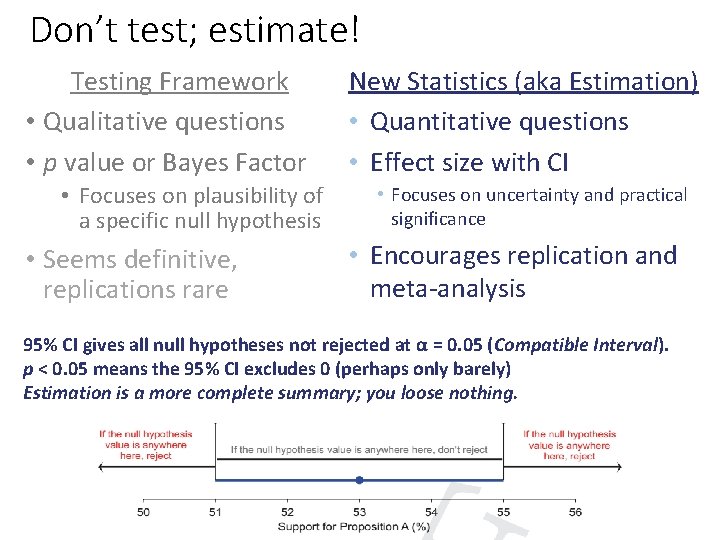

Two roads diverged in the woods… Statistical inference has two traditions. Testing Approach: • Qualitative questions • p value or Bayes Factor • Focuses on plausibility of a specific null hypothesis • Seems definitive, replications rare. Estimation Approach (“New Stats”): • Quantitative questions • Effect size with CI • Focuses on uncertainty and practical significance • Encourages replication and meta-analysis. Same mathematical foundations; just different ways of summarizing the data and very different ways of thinking. Estimation fosters better inference and better science.

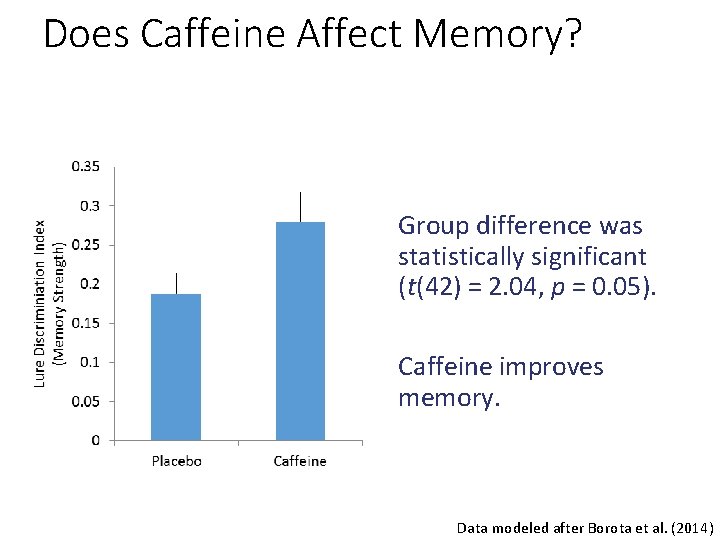

Does Caffeine Affect Memory? Group difference was statistically significant (t(42) = 2.04, p = 0.05). Caffeine improves memory. Data modeled after Borota et al. (2014).

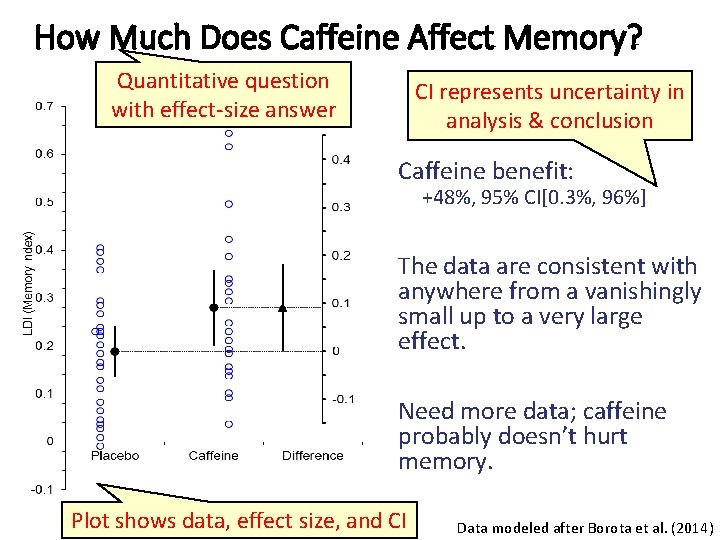

How Much Does Caffeine Affect Memory? A quantitative question with an effect-size answer; the CI represents uncertainty in analysis and conclusion. Caffeine benefit: +48%, 95% CI [0.3%, 96%]. The data are consistent with anything from a vanishingly small up to a very large effect. Need more data; caffeine probably doesn’t hurt memory. Plot shows data, effect size, and CI. Data modeled after Borota et al. (2014).

How Much Does Caffeine Affect Memory? • We repeated the experiment… • We combined data across the two experiments for the placebo and 200 mg caffeine conditions to increase power. • We found that performance for the 200 mg caffeine condition was higher than that for placebo (t(71) = 2.0, p = 0.049). Data modeled after Borota et al. (2014).

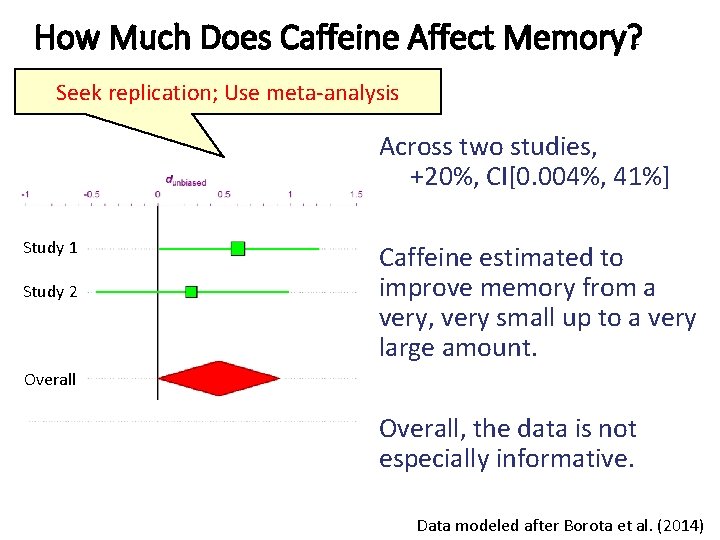

How Much Does Caffeine Affect Memory? Seek replication; use meta-analysis. Across two studies (Study 1, Study 2): +20%, CI [0.004%, 41%]. Caffeine is estimated to improve memory by anywhere from a very, very small up to a very large amount. Overall, the data are not especially informative. Data modeled after Borota et al. (2014).
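
As a rough illustration of what "use meta-analysis" can mean in practice, the sketch below pools two study estimates with inverse-variance (fixed-effect) weights. The function name `fixed_effect_meta` and the input numbers are hypothetical, chosen only for illustration; they are not the values behind the slide, and the slide's own analysis may have pooled the raw data instead.

```python
import math
from statistics import NormalDist

def fixed_effect_meta(estimates, std_errors, level=0.95):
    """Inverse-variance (fixed-effect) pooled estimate with a normal-theory CI."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * e for w, e in zip(weights, estimates)) / sum(weights)
    pooled_se = math.sqrt(1.0 / sum(weights))
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return pooled, pooled - z * pooled_se, pooled + z * pooled_se

# Hypothetical inputs (NOT the studies on the slide):
# percent improvement and its standard error for two studies.
estimates = [48.0, 10.0]
std_errors = [24.0, 12.0]

pooled, lo, hi = fixed_effect_meta(estimates, std_errors)
print(f"pooled = {pooled:.1f}%, 95% CI [{lo:.1f}%, {hi:.1f}%]")
```

The more precise study gets more weight, and the pooled interval is narrower than either study's own interval, which is the sense in which replication plus meta-analysis buys precision.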

Don’t test; estimate! Testing Framework: • Qualitative questions • p value or Bayes Factor • Focuses on plausibility of a specific null hypothesis • Seems definitive, replications rare. New Statistics (aka Estimation): • Quantitative questions • Effect size with CI • Focuses on uncertainty and practical significance • Encourages replication and meta-analysis. Estimation is for everyone. Frequentists: confidence intervals. Bayesians: credible intervals. Switching is easy. Frequentists: same foundation. Bayesians: everything is easy for you.

Don’t test; estimate! A 95% CI gives all the null hypotheses that would not be rejected at α = 0.05 (a “compatible interval”). p < 0.05 means the 95% CI excludes 0 (perhaps only barely). Estimation is a more complete summary; you lose nothing.
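
The link between a 95% CI and the test at α = 0.05 can be checked numerically. The sketch below uses a normal approximation (the slides report t-based results, so p values and limits will differ slightly), and the helper names `ci_and_p` and `rejected` are hypothetical. The input is an estimate of +48 with a standard error chosen so that estimate/SE = 2.04.

```python
from statistics import NormalDist

def ci_and_p(estimate, se, level=0.95):
    """Normal-approximation CI and two-sided p value against a null of 0."""
    nd = NormalDist()
    z_crit = nd.inv_cdf(0.5 + level / 2)
    lo, hi = estimate - z_crit * se, estimate + z_crit * se
    p = 2 * (1 - nd.cdf(abs(estimate / se)))
    return lo, hi, p

def rejected(null_value, estimate, se, alpha=0.05):
    """True if a two-sided z test rejects the given null value at alpha."""
    z = abs(estimate - null_value) / se
    return 2 * (1 - NormalDist().cdf(z)) < alpha

# Illustrative numbers: estimate of +48 with SE chosen so estimate / SE = 2.04.
estimate, se = 48.0, 48.0 / 2.04
lo, hi, p = ci_and_p(estimate, se)
print(f"95% CI [{lo:.1f}, {hi:.1f}], p = {p:.3f}")

# Null values inside the interval are not rejected; values outside are.
print(rejected(0.0, estimate, se), rejected(50.0, estimate, se))
```

Any null value inside the interval survives the test and any value outside it is rejected, so the interval carries the test result plus the range of effect sizes the data remain compatible with.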

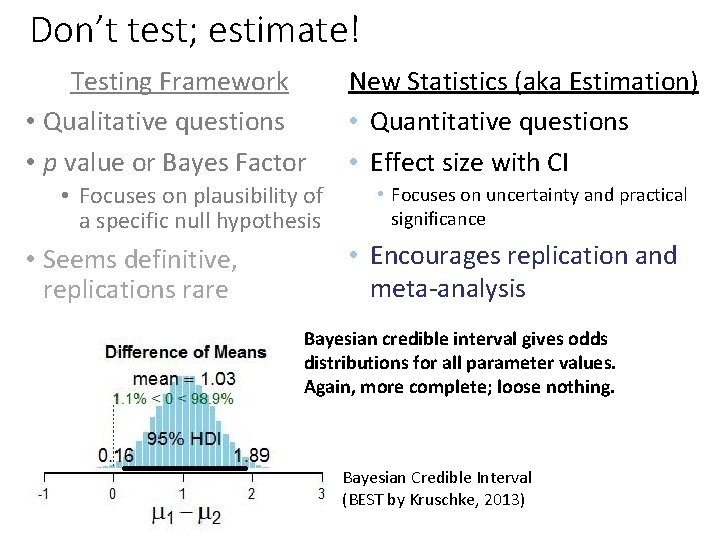

Don’t test; estimate! A Bayesian credible interval gives an odds distribution over all parameter values. Again, more complete; you lose nothing. Figure: Bayesian credible interval (BEST; Kruschke, 2013).

Estimation -> Better inference • Mitigate overconfidence in small samples • Reduce confirmation and publication bias • Help avoid analytic errors • Calibrate expectations for replications • Make planning sample size much easier • Help you do more robust and reliable science • And more… “The primary product of a research inquiry is one or more measures of effect size, not p values.” - Jacob Cohen, 1990

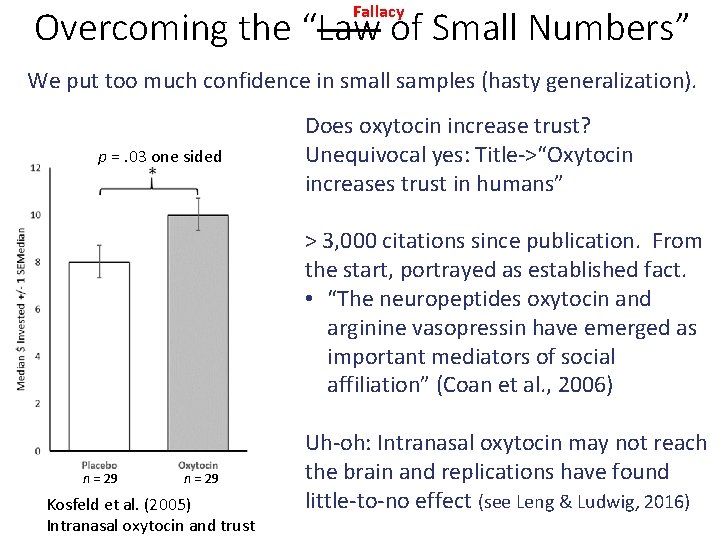

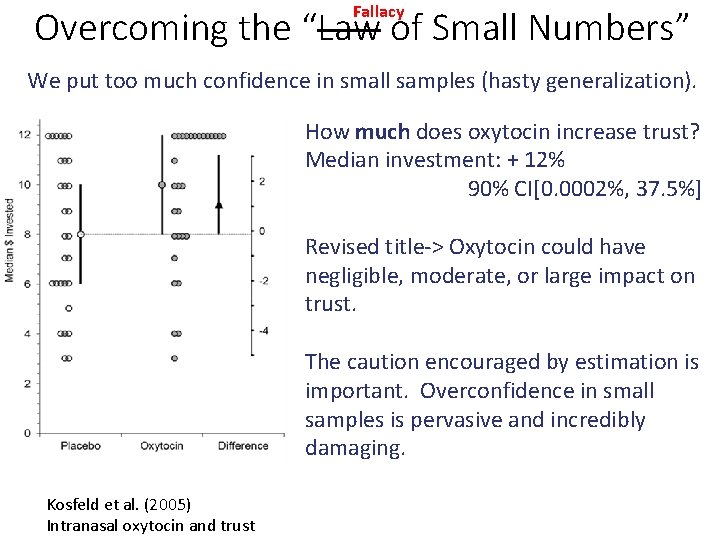

Overcoming the “Law of Small Numbers” fallacy. We put too much confidence in small samples (hasty generalization). Does oxytocin increase trust? Kosfeld et al. (2005), intranasal oxytocin and trust: n = 29, p = .03 one-sided. Unequivocal yes: title -> “Oxytocin increases trust in humans”; > 3,000 citations since publication. From the start, portrayed as established fact: • “The neuropeptides oxytocin and arginine vasopressin have emerged as important mediators of social affiliation” (Coan et al., 2006). Uh-oh: intranasal oxytocin may not reach the brain, and replications have found little-to-no effect (see Leng & Ludwig, 2016).

Overcoming the “Law of Small Numbers” fallacy. We put too much confidence in small samples (hasty generalization). How much does oxytocin increase trust? Kosfeld et al. (2005), intranasal oxytocin and trust: median investment +12%, 90% CI [0.0002%, 37.5%]. Revised title -> “Oxytocin could have negligible, moderate, or large impact on trust.” The caution encouraged by estimation is important. Overconfidence in small samples is pervasive and incredibly damaging.

Overcoming the “Law of Small Numbers” fallacy. We put too much confidence in small samples (hasty generalization). • In oxytocin research, the typical human behavior study has power of 0.16 (Walum et al., 2016). • In psychology and cognitive neuroscience studies, typical power to detect small, medium, and large effects is 0.12, 0.44, and 0.73 (Szucs & Ioannidis, 2016). • Typical power is 0.08 in neuroimaging and 0.18-0.31 in animal model neuroscience research (Button et al., 2013). • Low power -> imprecise CIs (uninformative) and inflated effect size estimates (misleading). • “Worse than doing nothing” (Mitchell, 2018, p. 112). We can fix this: • Report CIs. • Ensure conclusions reflect the entire range of uncertainty. • Plan for precision (for a margin of error, MoE, reasonable for your research question). “I suspect that the main reason they [CIs] are not reported is that they are so embarrassingly large!” - Cohen, 1994
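
To make "plan for precision" concrete, here is a minimal sketch that picks a per-group sample size from a target margin of error (MoE) rather than from power. It assumes a two-group comparison of means, equal group sizes, and a normal approximation with the MoE expressed in standard-deviation units; the function name `n_per_group_for_moe` is hypothetical. Exact t-based planning, or planning with assurance, would call for somewhat larger samples.

```python
import math
from statistics import NormalDist

def n_per_group_for_moe(target_moe_sd, level=0.95):
    """Per-group n so that the CI half-width on a two-group mean difference,
    expressed in SD units, is about target_moe_sd (normal approximation)."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    # Half-width of the CI is z * sqrt(2 / n); solve for n and round up.
    return math.ceil(2 * (z / target_moe_sd) ** 2)

for moe in (1.0, 0.5, 0.25):
    print(f"MoE = {moe} SD -> about {n_per_group_for_moe(moe)} per group")
```

Halving the target MoE roughly quadruples the required sample size, which is why precision has to be planned in advance.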

Reducing confirmation bias • Science depends on even-handed weighing of evidence. • In the decision-making approach, we are more skeptical of negative results and fail to publish them (e.g., Button et al., 2013). Figure labels: “Can’t prove negative”, “Low power”, “You goofed”, “+”.

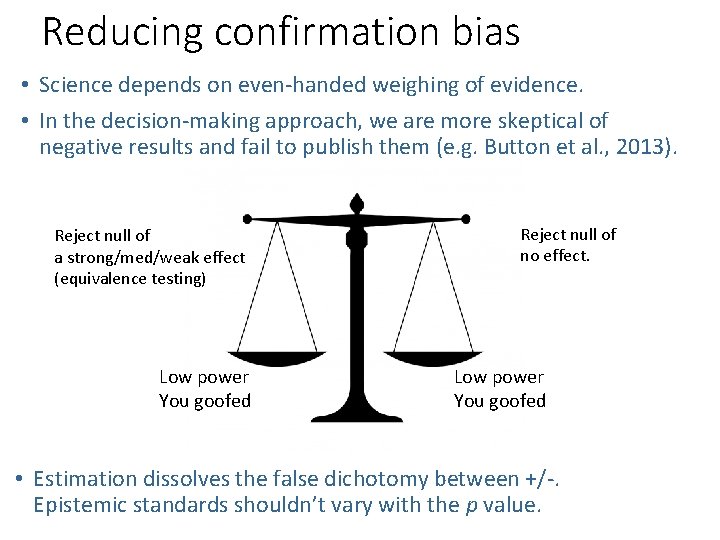

Reducing confirmation bias • Science depends on even-handed weighing of evidence. • In the decision-making approach, we are more skeptical of negative results and fail to publish them (e.g., Button et al., 2013). Figure labels: “Reject null of a strong/med/weak effect (equivalence testing)”, with “Low power” and “You goofed”; “Reject null of no effect”, with “Low power” and “You goofed”. • Estimation dissolves the false dichotomy between +/-. Epistemic standards shouldn’t vary with the p value.

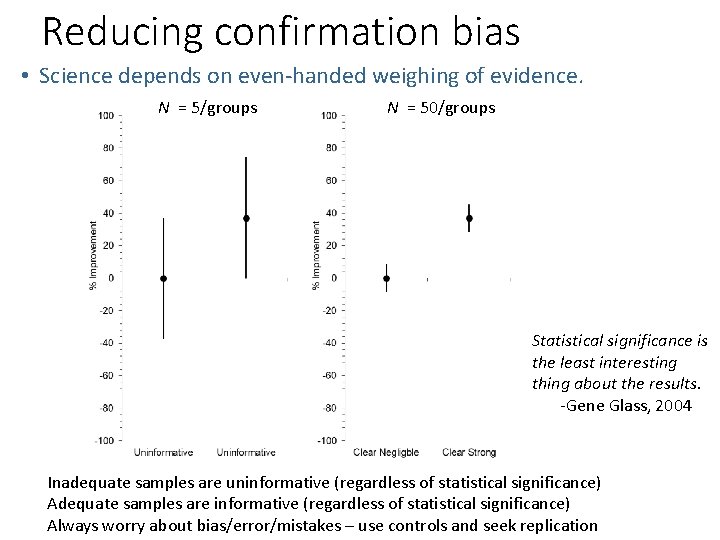

Reducing confirmation bias • Science depends on even-handed weighing of evidence. Figure panels: N = 5/group and N = 50/group. “Statistical significance is the least interesting thing about the results.” - Gene Glass, 2004. Inadequate samples are uninformative (regardless of statistical significance). Adequate samples are informative (regardless of statistical significance). Always worry about bias, error, and mistakes: use controls and seek replication.

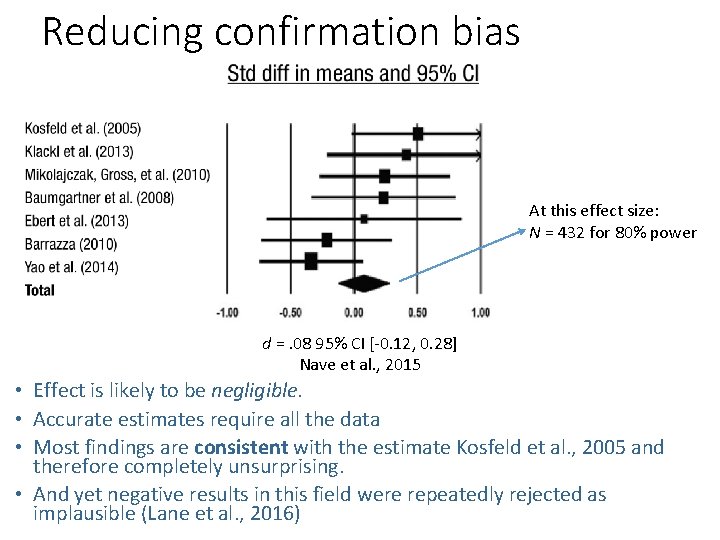

Reducing confirmation bias. At this effect size: N = 432 for 80% power. d = .08, 95% CI [-0.12, 0.28] (Nave et al., 2015). Figure label: Kosfeld et al., 2005. • Effect is likely to be negligible. • Accurate estimates require all the data. • Most findings are consistent with the estimate and therefore completely unsurprising. • And yet negative results in this field were repeatedly rejected as implausible (Lane et al., 2016).

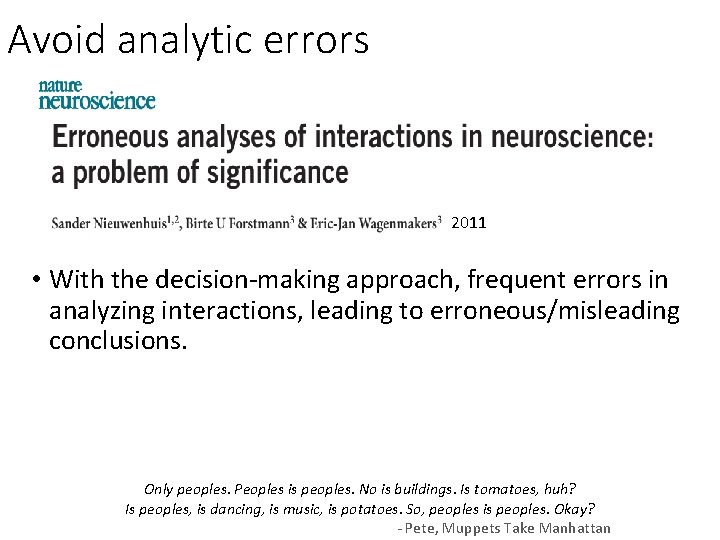

Avoid analytic errors (figure label: 2011) • With the decision-making approach, errors in analyzing interactions are frequent, leading to erroneous or misleading conclusions. “Only peoples. Peoples is peoples. No is buildings. Is tomatoes, huh? Is peoples, is dancing, is music, is potatoes. So, peoples is peoples. Okay?” - Pete, Muppets Take Manhattan

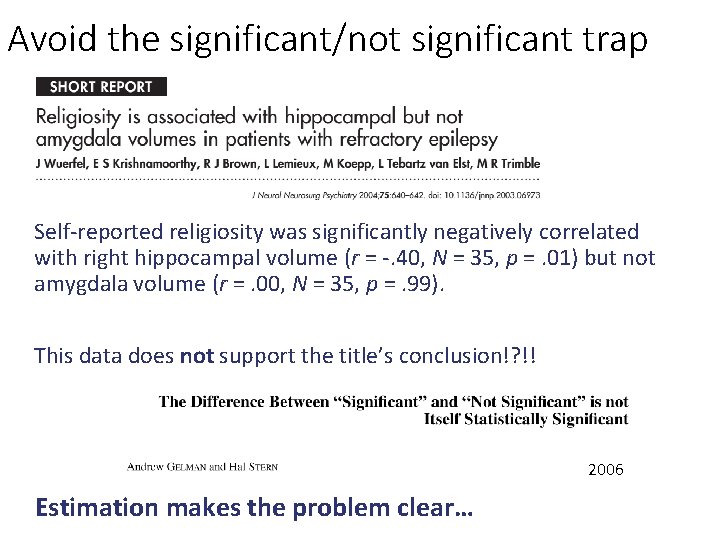

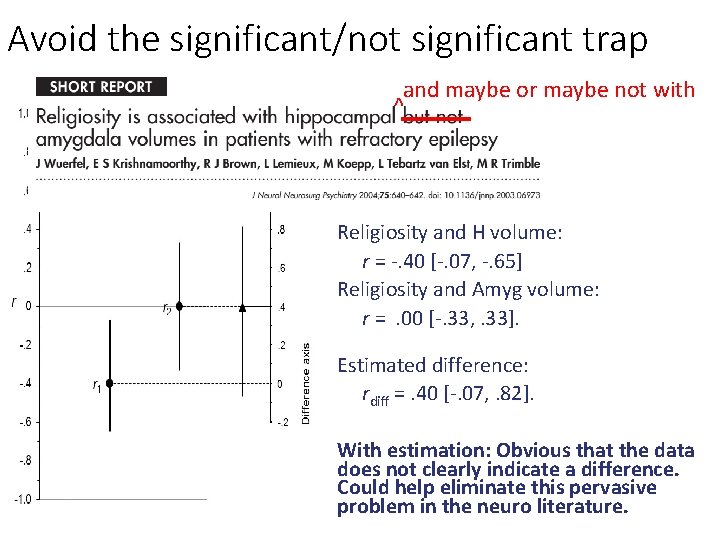

Avoid the significant/not significant trap. Self-reported religiosity was significantly negatively correlated with right hippocampal volume (r = -.40, N = 35, p = .01) but not amygdala volume (r = .00, N = 35, p = .99). These data do not support the title’s conclusion!?!! (Figure label: 2006.) Estimation makes the problem clear…

Avoid the significant/not significant trap. (Title annotation on the slide: “and maybe or maybe not with”.) Religiosity and hippocampal (H) volume: r = -.40 [-.65, -.07]. Religiosity and amygdala (Amyg) volume: r = .00 [-.33, .33]. Estimated difference: rdiff = .40 [-.07, .82]. With estimation, it is obvious that the data do not clearly indicate a difference. Estimation could help eliminate this pervasive problem in the neuro literature.
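
Intervals like these can be approximated from r and N alone. The sketch below uses the Fisher z transformation for each correlation and then combines the two intervals into an interval for their difference (the approach described by Zou, 2007). One loud assumption: it treats the two correlations as independent, although on the slide they come from the same 35 participants, so the result only approximates the slide's interval. The function names `r_ci` and `r_diff_ci` are hypothetical.

```python
import math
from statistics import NormalDist

def r_ci(r, n, level=0.95):
    """CI for a Pearson correlation via the Fisher z transformation."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    half = z_crit / math.sqrt(n - 3)
    z = math.atanh(r)
    return math.tanh(z - half), math.tanh(z + half)

def r_diff_ci(r1, r2, n1, n2, level=0.95):
    """CI for r1 - r2 built from the two separate intervals.
    ASSUMPTION: the two correlations are treated as independent."""
    l1, u1 = r_ci(r1, n1, level)
    l2, u2 = r_ci(r2, n2, level)
    diff = r1 - r2
    lower = diff - math.sqrt((r1 - l1) ** 2 + (u2 - r2) ** 2)
    upper = diff + math.sqrt((u1 - r1) ** 2 + (r2 - l2) ** 2)
    return lower, upper

hip = r_ci(-0.40, 35)                    # religiosity vs. hippocampal volume
amy = r_ci(0.00, 35)                     # religiosity vs. amygdala volume
diff = r_diff_ci(0.00, -0.40, 35, 35)    # amygdala r minus hippocampal r

print(f"hippocampus: [{hip[0]:.2f}, {hip[1]:.2f}]")
print(f"amygdala:    [{amy[0]:.2f}, {amy[1]:.2f}]")
print(f"difference:  [{diff[0]:.2f}, {diff[1]:.2f}]")
```

Under these assumptions the interval for the difference spans zero, which is the point of the slide: one significant and one non-significant correlation do not by themselves establish that the two correlations differ.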

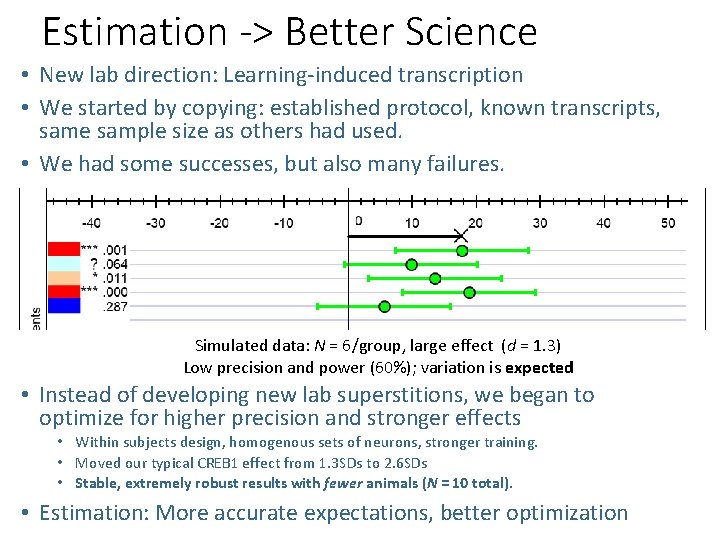

Estimation -> Better Science • New lab direction: learning-induced transcription. • We started by copying: established protocol, known transcripts, same sample size as others had used. • We had some successes, but also many failures. For CREB1? WTF? Crap students? Seasonal variation? Bad batch of animals? We started developing lab superstitions. Simulated data: N = 6/group, large effect (d = 1.3). Low precision and power (60%); variation is expected. • Instead of developing new lab superstitions, we began to optimize for higher precision and stronger effects. • Within-subjects design, homogeneous sets of neurons, stronger training. • Moved our typical CREB1 effect from 1.3 SDs to 2.6 SDs. • Stable, extremely robust results with fewer animals (N = 10 total). • Estimation: more accurate expectations, better optimization.
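
The "variation is expected" point can be reproduced with a small simulation. The sketch below repeatedly draws two groups of 6 from normal populations whose means differ by d = 1.3, runs an equal-variance t test, and records the observed effect size. The function names and the seed are hypothetical, and the share of significant runs need not match the slide's 60% exactly, since that figure depends on the test and assumptions used.

```python
import math
import random

def mean_sd(xs):
    """Sample mean and SD (n - 1 denominator)."""
    m = sum(xs) / len(xs)
    return m, math.sqrt(sum((x - m) ** 2 for x in xs) / (len(xs) - 1))

def simulate(true_d=1.3, n_sims=5000, seed=1):
    """Simulate two-group experiments with 6 animals per group.
    Returns (share of runs with |t| > critical value, sorted observed d values)."""
    n = 6            # per group, as on the slide
    t_crit = 2.228   # two-sided .05 critical t for df = 10; valid only for n = 6
    rng = random.Random(seed)
    hits, observed = 0, []
    for _ in range(n_sims):
        m0, s0 = mean_sd([rng.gauss(0.0, 1.0) for _ in range(n)])
        m1, s1 = mean_sd([rng.gauss(true_d, 1.0) for _ in range(n)])
        d = (m1 - m0) / math.sqrt((s0 ** 2 + s1 ** 2) / 2)  # pooled-SD effect size
        hits += abs(d) * math.sqrt(n / 2) > t_crit           # equal-n Student t
        observed.append(d)
    observed.sort()
    return hits / n_sims, observed

power, observed = simulate()
low = observed[len(observed) // 20]          # about the 5th percentile
high = observed[-(len(observed) // 20) - 1]  # about the 95th percentile
print(f"share significant: {power:.2f}")
print(f"middle 90% of observed d: {low:.2f} to {high:.2f}")
```

With a true effect that never changes, a large share of runs still miss significance and the observed effect sizes swing widely, so "failures" at this sample size need no special explanation.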

Estimation -> Better inference • Mitigate overconfidence in small samples • Reduce confirmation and publication bias • Help avoid analytic errors • Calibrate expectations for replications • Make planning sample size much easier • Help you do more robust and reliable science • And more… “The primary product of a research inquiry is one or more measures of effect size, not p values.” - Jacob Cohen, 1990

For better science, use estimation • Ask quantitative questions and give quantitative answers (effect sizes). • Countenance uncertainty by visualizing and interpreting confidence intervals or credible intervals. (Remember: CIs represent a best-case scenario, reflecting sampling error only.) • Seek replication and use meta-analysis as a matter of course. • This will help, but more is needed (e.g., Open Science practices, different incentives, etc.). “There is no uncertainty that we can’t quantify.” - Emery Brown, MIT

Yeah, but… • “We need to make decisions.” CIs support decision making, but with fuller context. • “p values aren’t bad; it’s the way we use them.” OK, but to use a p value well you end up needing to consult the CI. • “It’ll never get through review.” Hodgkin and Huxley didn’t p all over their manuscripts. Journals are getting hip to estimation (though there is room to grow). Example wording: “Consistent with the recommendation of the American Statistical Association (Wasserstein & Lazar, 2016), we do not report p values, reporting instead effect sizes and 95% CIs. Where the CI does not include 0, that result is statistically significant at α = .05.” • “Won’t change a thing.” Worrisome possibility. What is needed is not just a mechanical change in reporting, but an awakening in terms of thinking about uncertainty.

Getting Started. Open Science Page: https://osf.io/5awp4/ aka https://tinyurl.com/improve-neuro-2018 • Forthcoming in American Statistician: https://psyarxiv.com/3mztg • Estimation approach: o https://tinyurl.com/new-stats-resources (links to videos, tools, and other resources) o @TheNewStats: Twitter feed on resources and applications of estimation. Estimate in a paper? Tweet me! • Software: o ESCI: free Excel tools for the New Stats o ESPSS: plugins for SPSS to get New Stats output o jamovi and JASP: free open-source software that supports Bayesian and frequentist estimation o Lots of good R packages for the estimation approach • Open Science Framework: o Main page: https://osf.io o Pre-registration challenge: https://cos.io/prereg/ • Sample-size planning: o Not just about power; learn about Planning for Precision: https://osf.io/5awp4/

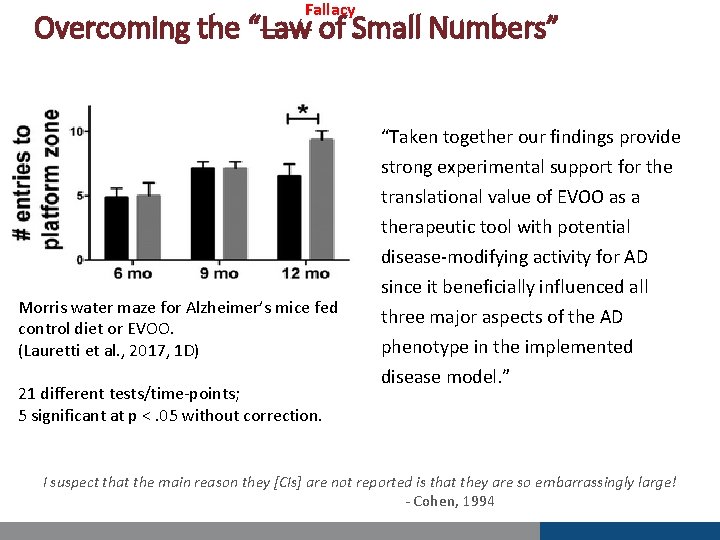

Overcoming the “Law of Small Numbers” fallacy. “Taken together our findings provide strong experimental support for the translational value of EVOO as a therapeutic tool with potential disease-modifying activity for AD since it beneficially influenced all three major aspects of the AD phenotype in the implemented disease model.” Figure: Morris water maze for Alzheimer’s mice fed control diet or EVOO (Lauretti et al., 2017, 1D). 21 different tests/time-points; 5 significant at p < .05 without correction.

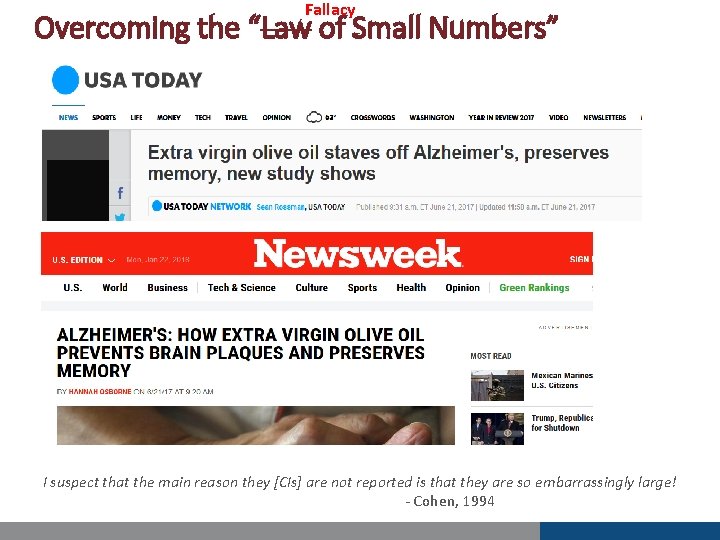

Overcoming the “Law of Small Numbers” fallacy.

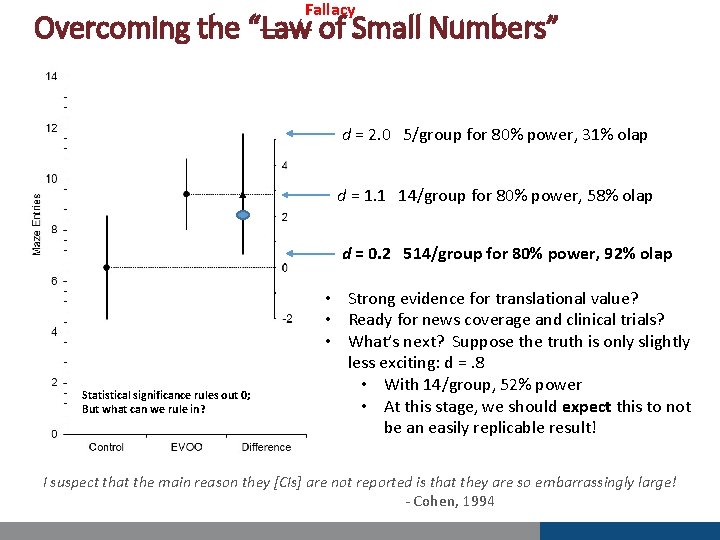

Overcoming the “Law of Small Numbers” fallacy. d = 2.0: 5/group for 80% power, 31% overlap. d = 1.1: 14/group for 80% power, 58% overlap. d = 0.2: 514/group for 80% power, 92% overlap. Statistical significance rules out 0; but what can we rule in? • Strong evidence for translational value? • Ready for news coverage and clinical trials? • What’s next? Suppose the truth is only slightly less exciting: d = .8 • With 14/group, 52% power. • At this stage, we should expect this to not be an easily replicable result!
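
The relationships among effect size, distribution overlap, sample size, and power can be sketched with normal approximations. The function names below are hypothetical, and the approximations are a little optimistic: the slide's figures presumably rest on exact t-based calculations or different assumptions, so the sample sizes computed here come out smaller and the power somewhat higher. The overlap is the overlapping coefficient of two equal-variance normal distributions.

```python
import math
from statistics import NormalDist

ND = NormalDist()

def overlap(d):
    """Overlapping coefficient of two unit-variance normal distributions d SDs apart."""
    return 2 * ND.cdf(-abs(d) / 2)

def n_per_group(d, power=0.80, alpha=0.05):
    """Approximate per-group n for a two-sided, two-group comparison."""
    z_a = ND.inv_cdf(1 - alpha / 2)
    z_b = ND.inv_cdf(power)
    return math.ceil(2 * ((z_a + z_b) / d) ** 2)

def approx_power(d, n, alpha=0.05):
    """Approximate power with n per group (ignores the negligible opposite tail)."""
    z_a = ND.inv_cdf(1 - alpha / 2)
    return ND.cdf(d * math.sqrt(n / 2) - z_a)

for d in (2.0, 1.1, 0.2):
    print(f"d = {d}: overlap {overlap(d):.0%}, about {n_per_group(d)} per group")
print(f"d = 0.8 with 14 per group: power about {approx_power(0.8, 14):.0%}")
```

Even a modest drop in the true effect size, from 1.1 to 0.8, takes a study designed for 80% power down to little better than a coin flip.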
