Improving Openness and Reproducibility of Scientific Research
Tim Errington
Center for Open Science
http://cos.io/

Mission: Improve openness, integrity, and reproducibility of scientific research

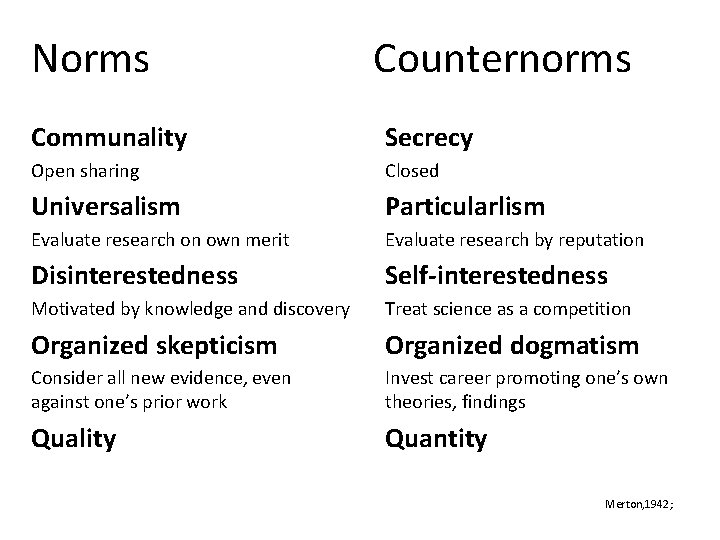

Norms vs. Counternorms
• Communality (open sharing) vs. Secrecy (closed)
• Universalism (evaluate research on its own merit) vs. Particularism (evaluate research by reputation)
• Disinterestedness (motivated by knowledge and discovery) vs. Self-interestedness (treat science as a competition)
• Organized skepticism (consider all new evidence, even against one’s prior work) vs. Organized dogmatism (invest career promoting one’s own theories and findings)
• Quality vs. Quantity
Merton, 1942;

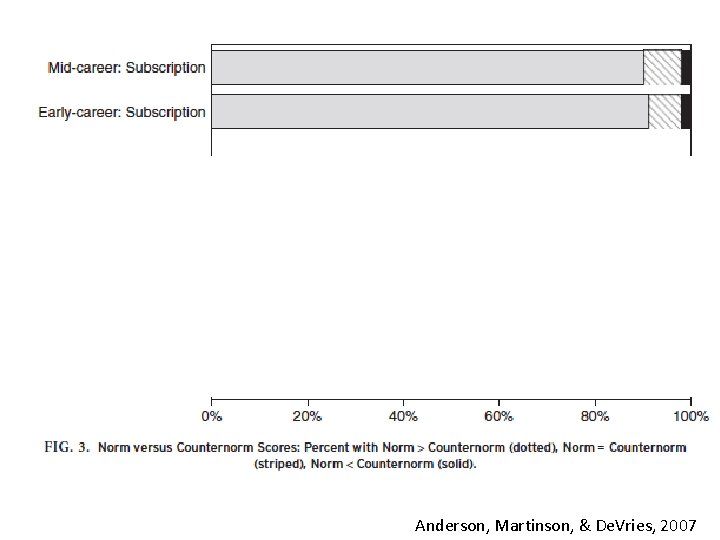

Anderson, Martinson, & De Vries, 2007

Barriers
1. Perceived norms (Anderson, Martinson, & De Vries, 2007)
2. Motivated reasoning (Kunda, 1990)
3. Minimal accountability (Lerner & Tetlock, 1999)
4. Concrete rewards beat abstract principles (Trope & Liberman, 2010)
5. I am busy (Me & You, 2016)

Incentives for individual success are focused on getting it published, not getting it right (Nosek, Spies, & Motyl, 2012)

Scientific Ideals
● Innovative ideas
● Reproducible results
● Accumulation of knowledge

Central Features of Science
● Transparency
● Reproducibility

What is reproducibility?
• Computational reproducibility:
– If we took your data and code/analysis scripts and reran them, we could reproduce the numbers and graphs in your paper
• Empirical reproducibility:
– We have enough information to rerun the experiment or survey the way it was originally conducted
• Replicability:
– We use your exact methods and analyses, but collect new data, and we get the same statistical results
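The first definition can be made concrete with a small check. The sketch below is only an illustration, not part of the original talk: `run_analysis`, the data values, and the seed are all hypothetical. It reruns a seeded analysis and compares a hash of the reported numbers, which is one simple way to test whether a rerun reproduces them.

```python
import hashlib
import json
import random
import statistics


def run_analysis(seed: int) -> dict:
    """Hypothetical analysis: bootstrap the mean of a small fixed data set."""
    rng = random.Random(seed)  # local, seeded generator; no hidden global state
    data = [2.1, 3.4, 1.9, 5.6, 4.2, 3.8, 2.7, 4.9]  # made-up illustrative values
    boot_means = [
        statistics.fmean(rng.choices(data, k=len(data))) for _ in range(1000)
    ]
    return {
        "mean": round(statistics.fmean(data), 6),
        "boot_se": round(statistics.stdev(boot_means), 6),
    }


def fingerprint(result: dict) -> str:
    """Stable hash of the reported numbers, for comparing one run to another."""
    payload = json.dumps(result, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


# Rerun the same code on the same data with the same seed and compare.
first = fingerprint(run_analysis(seed=2016))
second = fingerprint(run_analysis(seed=2016))
print("reproduced" if first == second else "mismatch")
```

Storing such a fingerprint next to the shared data and scripts lets someone else confirm that their rerun matches, under the assumption that they use the same software versions.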

Why should we care?
• To increase the efficiency of your own work
– Hard to build off our own work, or work of others in our lab
• We may not have the knowledge we think we have
– Hard to even check this if reproducibility is low

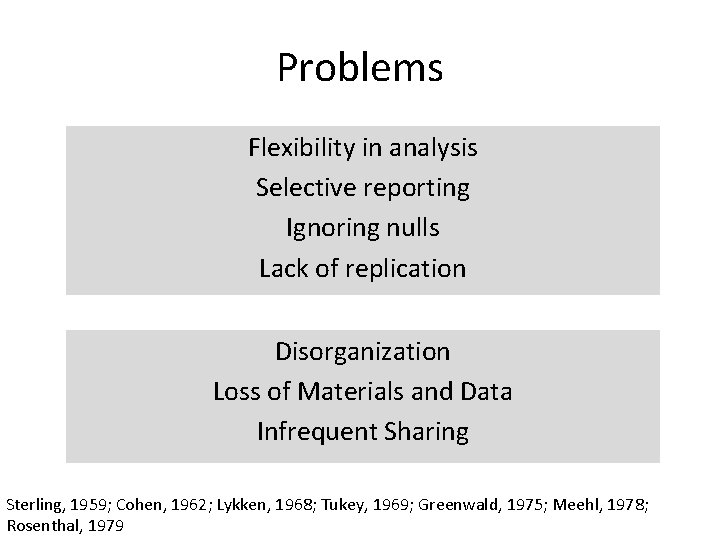

Problems
• Flexibility in analysis
• Selective reporting
• Ignoring nulls
• Lack of replication
Sterling, 1959; Cohen, 1962; Lykken, 1968; Tukey, 1969; Greenwald, 1975; Meehl, 1978; Rosenthal, 1979

Researcher Degrees of Freedom
All data processing and analytical choices made after seeing and interacting with your data
• Should I collect more data?
• Which observations should I exclude?
• Which conditions should I compare?
• What should be my main DV?
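To see why the first question matters, here is a minimal simulation sketch. It is an illustration under stated assumptions rather than anything from the talk: the look schedule `LOOKS`, the number of simulated studies, and the z-test with a known standard deviation of 1 are all choices made for this example. Every simulated study has no true effect, so any significant result is a false positive.

```python
import random
from statistics import NormalDist, fmean

ALPHA = 0.05
LOOKS = (20, 30, 40, 50)  # hypothetical sample sizes at which the data are checked


def p_value(sample: list[float]) -> float:
    """Two-sided z-test of mean = 0, assuming a known standard deviation of 1."""
    z = fmean(sample) * len(sample) ** 0.5
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate(n_studies: int = 4000, seed: int = 1) -> tuple[float, float]:
    """Return false-positive rates for (fixed sample size, optional stopping)."""
    rng = random.Random(seed)
    fixed_hits = flexible_hits = 0
    for _ in range(n_studies):
        # The null hypothesis is true: every observation is pure noise.
        data = [rng.gauss(0, 1) for _ in range(LOOKS[-1])]
        # Fixed rule: analyze once, at the sample size chosen in advance.
        fixed_hits += p_value(data[: LOOKS[0]]) < ALPHA
        # Flexible rule: test at every look and count any p < ALPHA as a finding.
        flexible_hits += any(p_value(data[:n]) < ALPHA for n in LOOKS)
    return fixed_hits / n_studies, flexible_hits / n_studies


fixed_rate, flexible_rate = simulate()
print(f"fixed n: {fixed_rate:.3f}   optional stopping: {flexible_rate:.3f}")
```

Because the flexible rule also counts the first look, it can never report fewer false positives than the fixed rule on the same data, and in practice it reports noticeably more than the nominal 5%.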

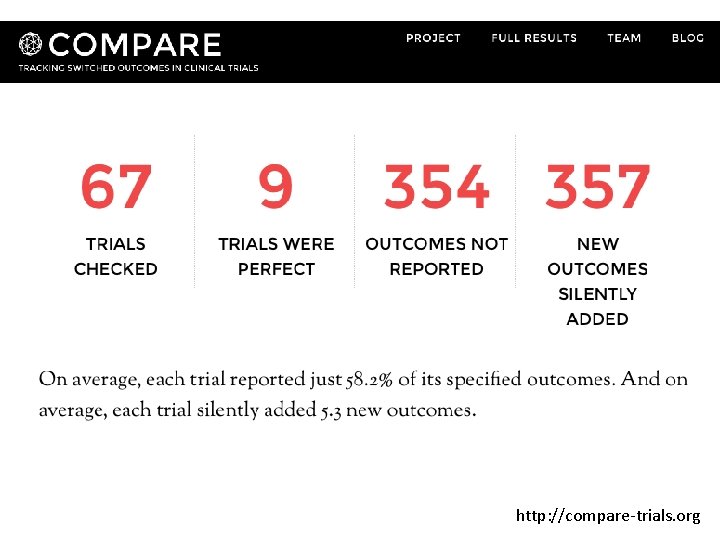

http://compare-trials.org

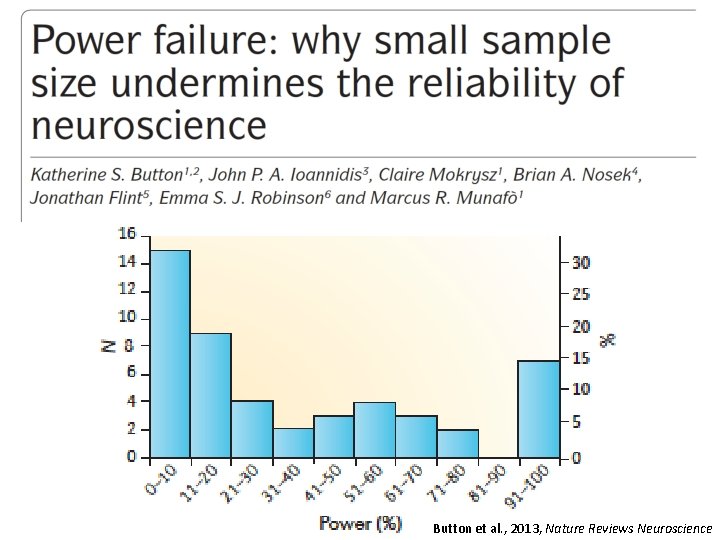

Button et al., 2013, Nature Reviews Neuroscience

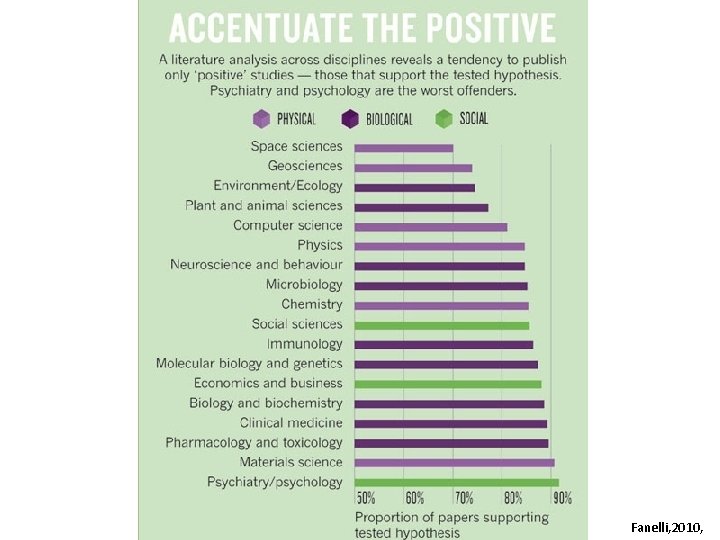

Fanelli, 2010

Problems
• Flexibility in analysis
• Selective reporting
• Ignoring nulls
• Lack of replication
• Disorganization
• Loss of materials and data
• Infrequent sharing
Sterling, 1959; Cohen, 1962; Lykken, 1968; Tukey, 1969; Greenwald, 1975; Meehl, 1978; Rosenthal, 1979

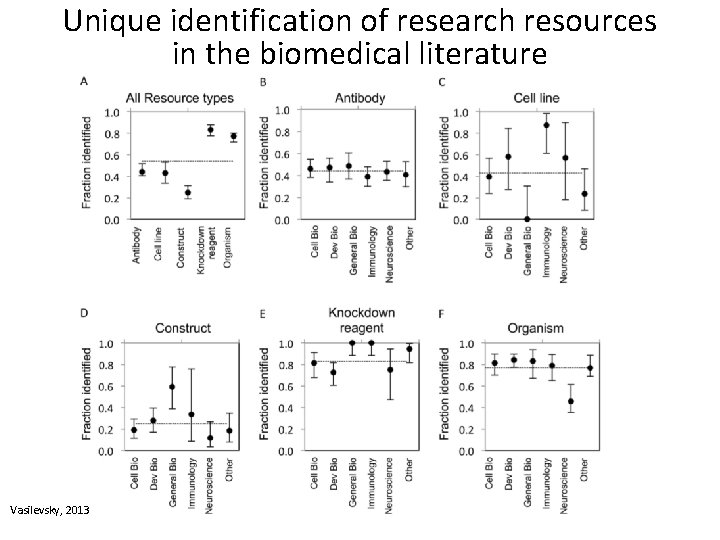

Unique identification of research resources in the biomedical literature (Vasilevsky, 2013)

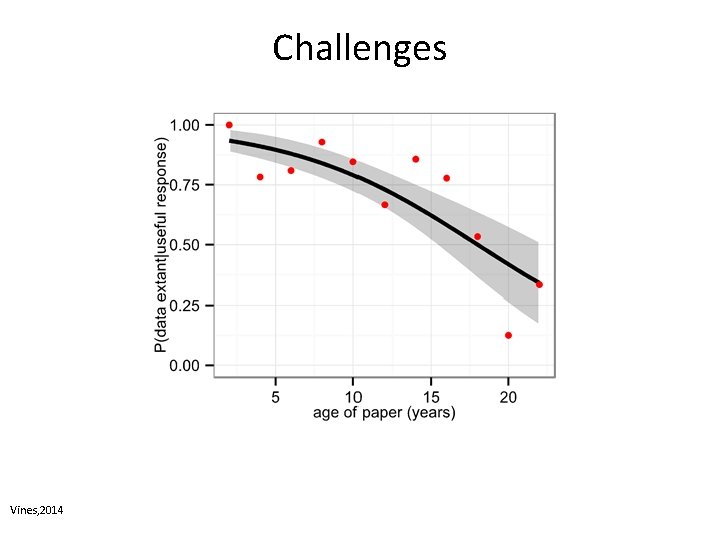

Challenges (Vines, 2014)

• Evidence to encourage change
• Incentives to embrace change
• Training to enact change
• Technology to enable change

• Metascience: evidence to encourage
• Community: incentives to embrace, training to enact
• Infrastructure: technology to enable

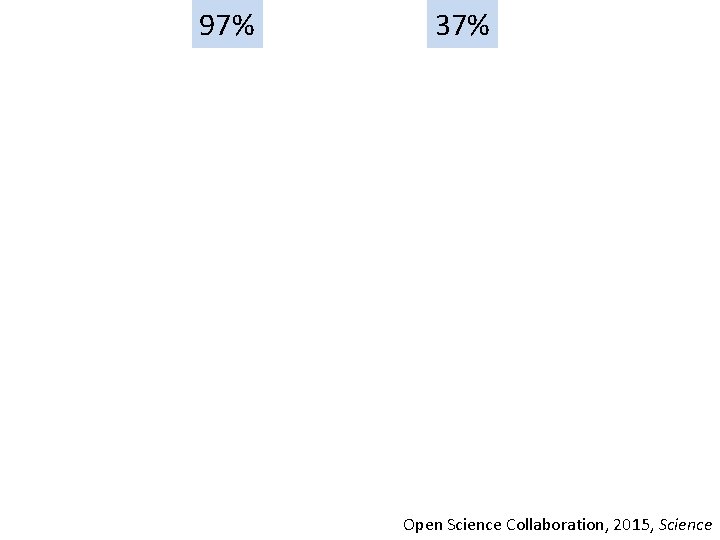

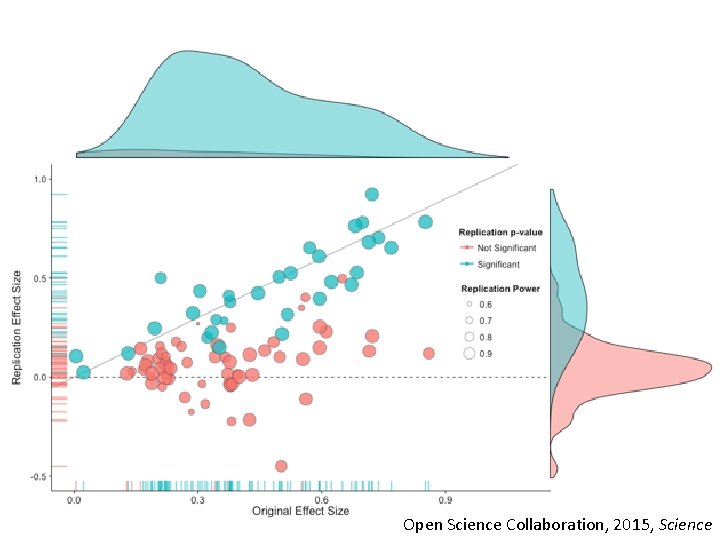

97% vs. 37% (Open Science Collaboration, 2015, Science)

Open Science Collaboration, 2015, Science

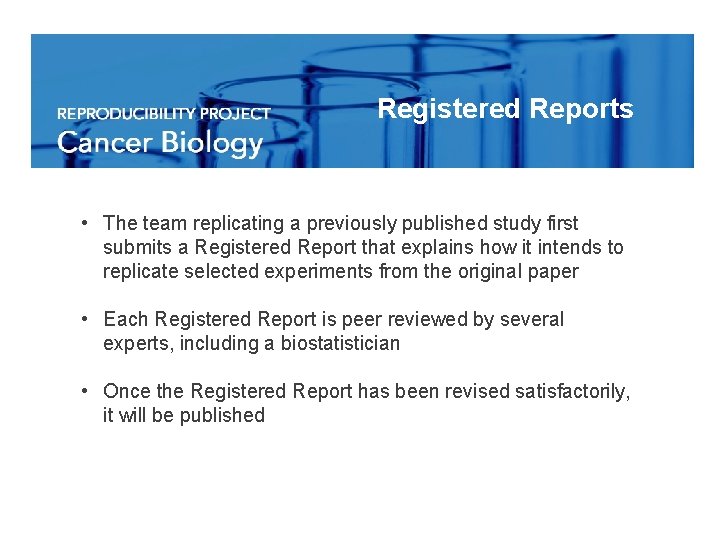

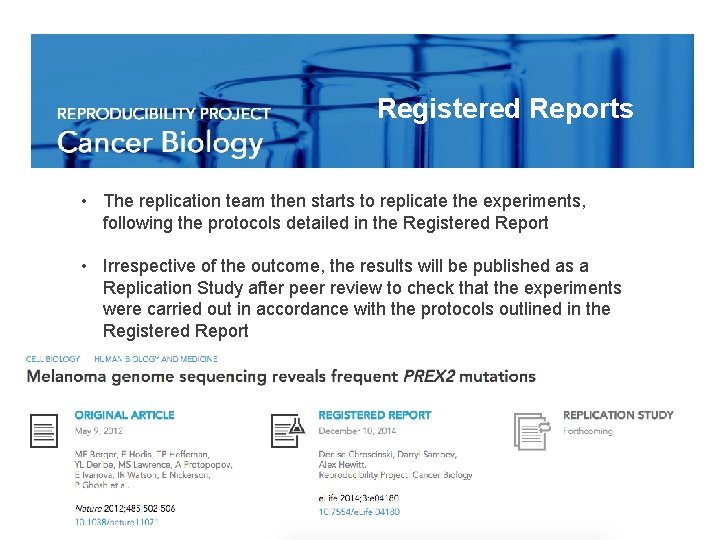

Registered Reports
• The team replicating a previously published study first submits a Registered Report that explains how it intends to replicate selected experiments from the original paper
• Each Registered Report is peer reviewed by several experts, including a biostatistician
• Once the Registered Report has been revised satisfactorily, it will be published

Registered Reports
• The replication team then starts to replicate the experiments, following the protocols detailed in the Registered Report
• Irrespective of the outcome, the results will be published as a Replication Study after peer review to check that the experiments were carried out in accordance with the protocols outlined in the Registered Report

https://cos.io/rpcb

Incentives to embrace change

538 journals, 58 organizations
http://cos.io/top

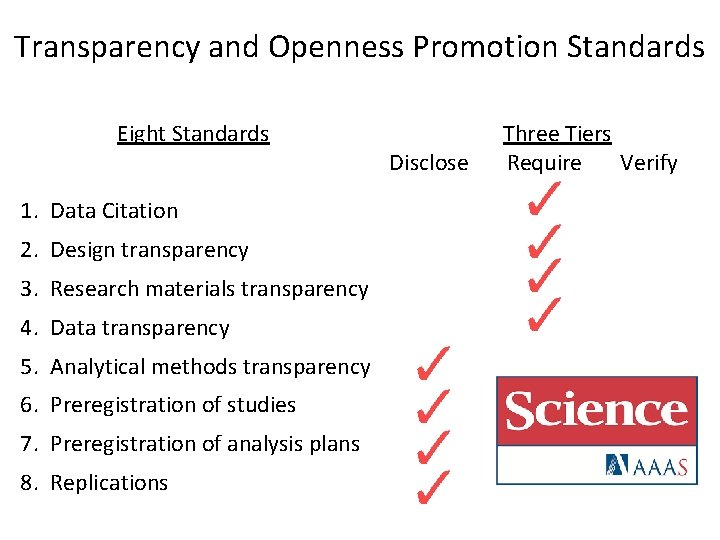

TOP Design Principles
• Low barrier to entry: 3 levels (disclose, require, verify)
• Modular (8 standards)
• Agnostic to discipline

TOP Guidelines
1. Data citation
2. Design transparency
3. Research materials transparency
4. Data transparency
5. Analytic methods (code) transparency
6. Preregistration of studies
7. Preregistration of analysis plans
8. Replication

Transparency and Openness Promotion Standards
Eight standards: 1. Data citation 2. Design transparency 3. Research materials transparency 4. Data transparency 5. Analytical methods transparency 6. Preregistration of studies 7. Preregistration of analysis plans 8. Replications
Three tiers: Disclose, Require, Verify
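Because the guidelines are modular, a journal's policy can be thought of as a mapping from each standard it adopts to one of the three tiers. The sketch below is one hypothetical way to represent that in code. The `Level` enum, the `summarize` helper, the "not adopted" label, and the example policy are all invented for illustration and are not an official TOP data format.

```python
from enum import IntEnum


class Level(IntEnum):
    """The three TOP tiers named on the slide, ordered by stringency."""
    DISCLOSE = 1
    REQUIRE = 2
    VERIFY = 3


# The eight modular standards, in the order listed on the slide.
STANDARDS = (
    "Data citation",
    "Design transparency",
    "Research materials transparency",
    "Data transparency",
    "Analytic methods (code) transparency",
    "Preregistration of studies",
    "Preregistration of analysis plans",
    "Replication",
)


def summarize(policy: dict[str, Level]) -> dict[str, str]:
    """Map every standard to the adopted tier, or 'not adopted' if absent."""
    unknown = set(policy) - set(STANDARDS)
    if unknown:
        raise ValueError(f"not a TOP standard: {sorted(unknown)}")
    return {
        name: policy[name].name.lower() if name in policy else "not adopted"
        for name in STANDARDS
    }


# Hypothetical journal policy, invented for illustration only.
example_policy = {
    "Data citation": Level.REQUIRE,
    "Data transparency": Level.DISCLOSE,
    "Preregistration of studies": Level.VERIFY,
}

for standard, tier in summarize(example_policy).items():
    print(f"{standard}: {tier}")
```

Keeping the tiers ordered means two policies can be compared standard by standard, for example to see whether a journal has moved from disclosure to a requirement.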

Why you might want to share
• Journal/Funder mandates
• Increase impact of work
• Recognition of good research practices

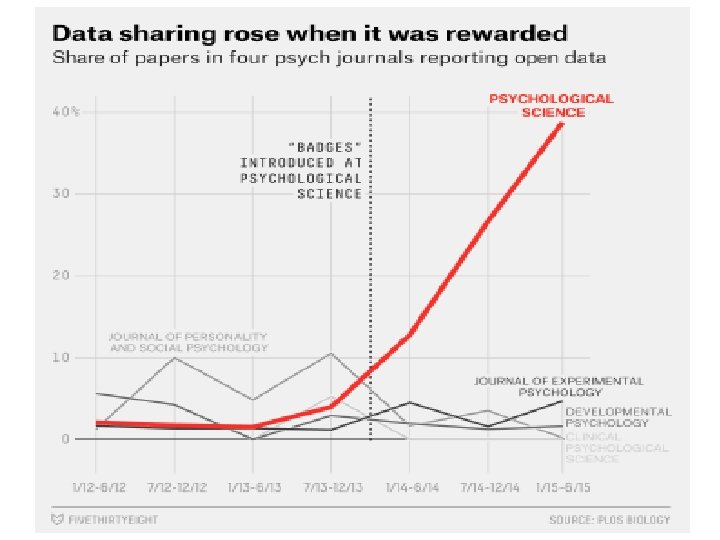

Incentives: Making Behaviors Visible Promotes Adoption

Two Modes of Research
• Context of Discovery: exploration, data contingent, hypothesis generating
• Context of Justification: confirmation, data independent, hypothesis testing

Preregistration Purposes
1. Discoverability: Study exists
2. Interpretability: Distinguish exploratory and confirmatory approaches
Why needed? Mistaking exploratory analyses for confirmatory ones increases publishability and decreases credibility of results

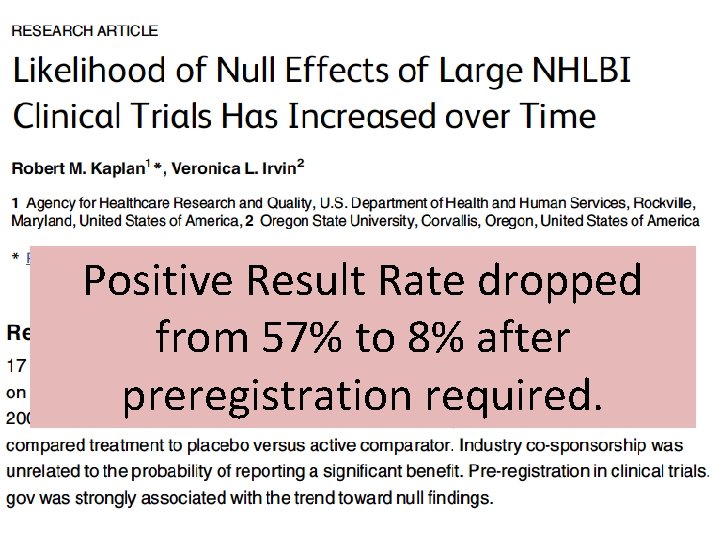

The positive result rate dropped from 57% to 8% after preregistration was required.

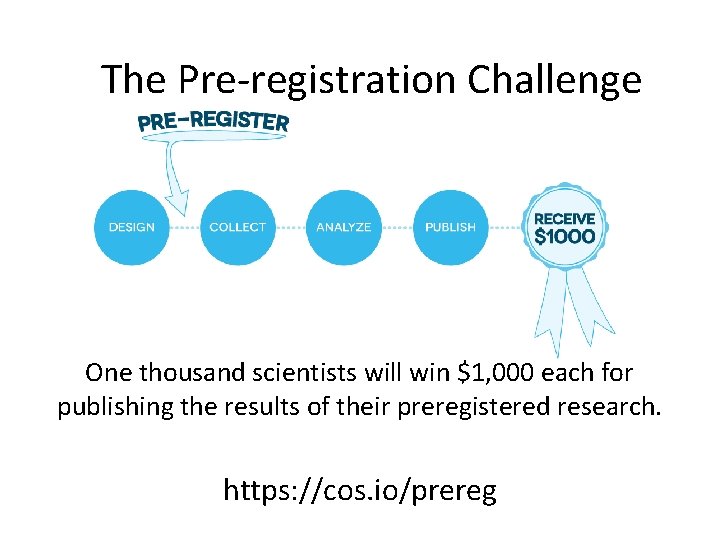

The Pre-registration Challenge
One thousand scientists will win $1,000 each for publishing the results of their preregistered research.
https://cos.io/prereg

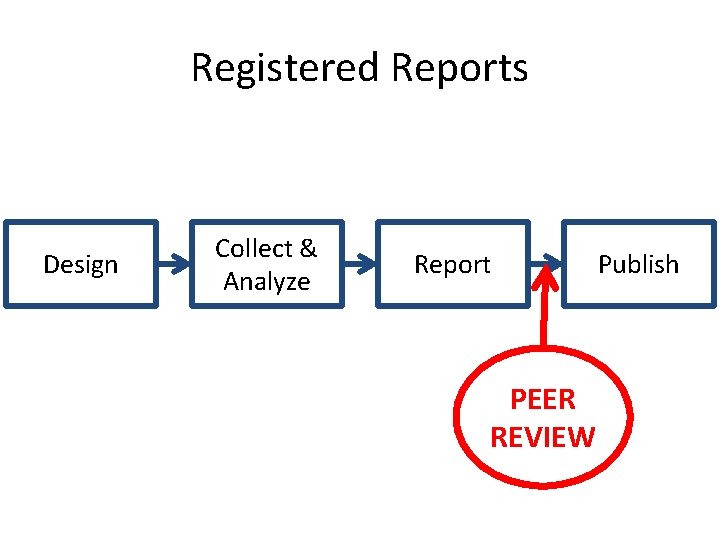

Registered Reports: Design → PEER REVIEW → Collect & Analyze → Report → Publish

Who Publishes Registered Reports? (just to name a few)
See the full list and compare features: osf.io/8mpji

Training to enact change

Free training on how to make research more reproducible
http://cos.io/stats_consulting

Technology to enable change

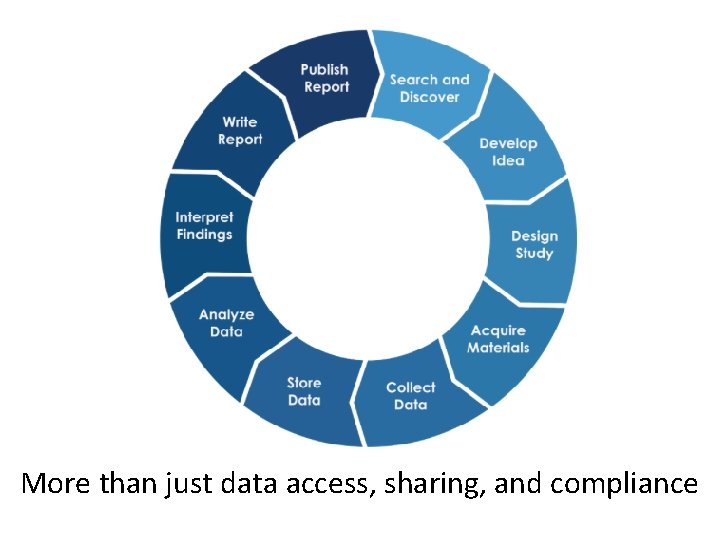

More than just data access, sharing, and compliance

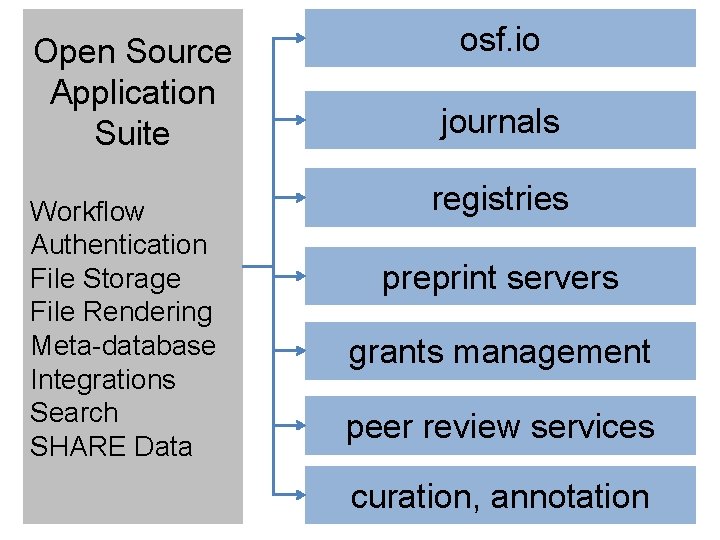

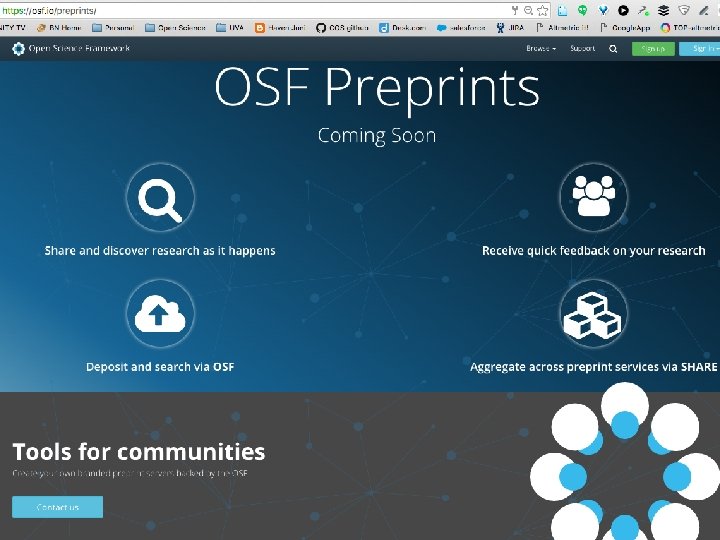

Open Source Application Suite
• Components: Workflow, Authentication, File Storage, File Rendering, Meta-database, Integrations, Search, SHARE Data
• Services: osf.io, journals, registries, preprint servers, grants management, peer review services, curation, annotation

http://osf.io/ (free, open source)

OpenSesame

Find this presentation at: https://osf.io/83tng/
Questions: tim@cos.io

Resources:
● Reproducible Research Practices? stats-consulting@cos.io
● The OSF? support@osf.io
● Have feedback for how we could support you more? contact@cos.io, feedback@cos.io

- Slides: 56