
Improving Database Performance on Simultaneous Multithreading Processors

Jingren Zhou, Microsoft Research, jrzhou@microsoft.com
John Cieslewicz, Columbia University, johnc@cs.columbia.edu
Kenneth A. Ross, Columbia University, kar@cs.columbia.edu
Mihir Shah, Columbia University, ms2604@columbia.edu

Simultaneous Multithreading (SMT)
- Available on modern CPUs:
  - "Hyperthreading" on Pentium 4 and Xeon
  - IBM POWER5
  - Sun UltraSPARC IV
- Challenge: design software to utilize SMT efficiently.
  - This talk: database software
(Image: Intel Pentium 4 with Hyperthreading)

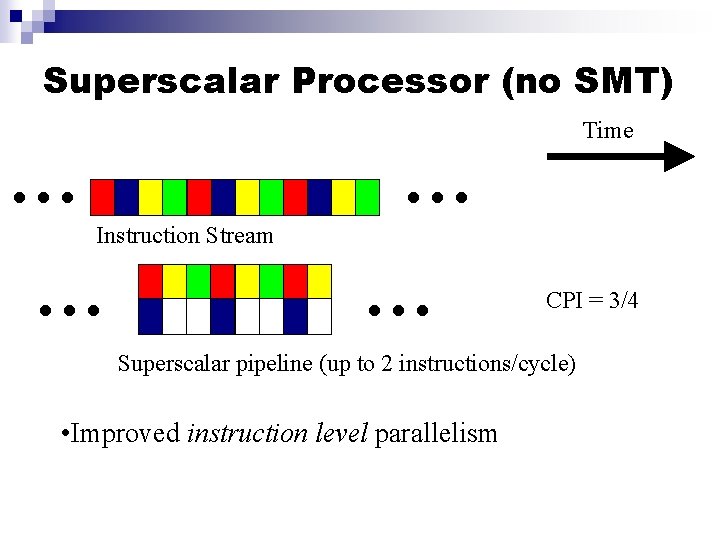

Superscalar Processor (no SMT)
(Figure: a single instruction stream issued over time on a superscalar pipeline that can issue up to 2 instructions/cycle; CPI = 3/4.)
- Improved instruction-level parallelism

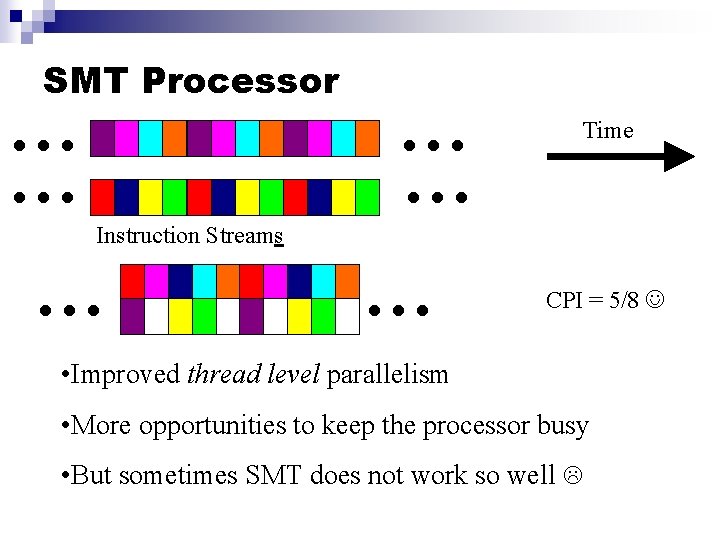

SMT Processor
(Figure: multiple instruction streams sharing the pipeline over time; CPI = 5/8.)
- Improved thread-level parallelism
- More opportunities to keep the processor busy
- But sometimes SMT does not work so well

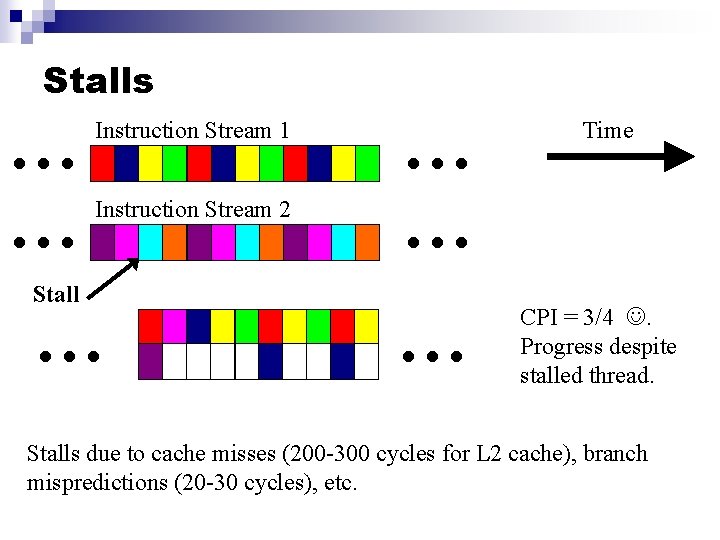

Stalls
(Figure: instruction streams 1 and 2 over time, with one stream stalled; CPI = 3/4.)
- The processor still makes progress on one thread while the other is stalled.
- Stalls are caused by cache misses (200-300 cycles for an L2 cache miss), branch mispredictions (20-30 cycles), etc.

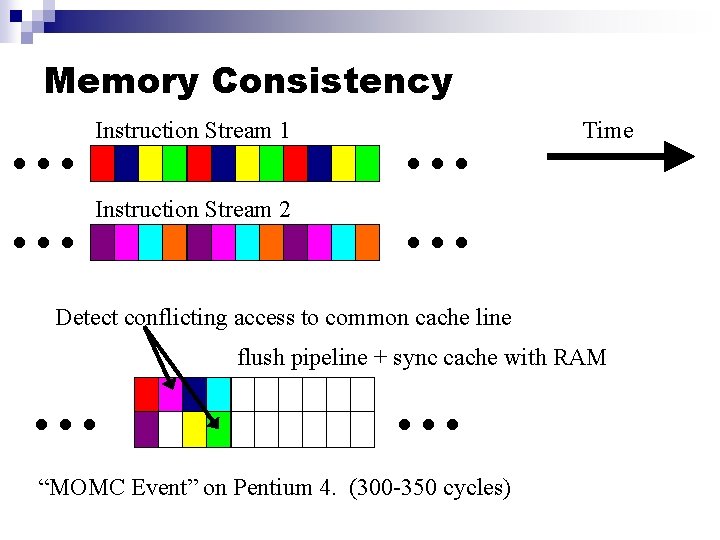
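The CPI figures on the last three slides can be turned into throughput numbers: CPI is cycles divided by instructions, so instructions per cycle (IPC) is 1/CPI. A small worked sketch, assuming the 2-instructions/cycle peak from the superscalar slide applies to all three cases:

```python
from fractions import Fraction

def ipc(cpi: Fraction) -> Fraction:
    """Instructions per cycle (IPC) is the reciprocal of cycles per instruction (CPI)."""
    return 1 / cpi

# CPI values quoted on the three slides above.
CPI = {
    "superscalar, no SMT": Fraction(3, 4),
    "SMT": Fraction(5, 8),
    "SMT, one thread stalled": Fraction(3, 4),
}
PEAK_IPC = Fraction(2)  # assumed: the 2-instructions/cycle pipeline width applies to all cases

for name, cpi in CPI.items():
    rate = ipc(cpi)
    print(f"{name}: CPI = {cpi}, IPC = {float(rate):.2f} ({float(rate / PEAK_IPC):.0%} of peak)")

# Relative throughput of SMT versus the single-stream superscalar case.
speedup = CPI["superscalar, no SMT"] / CPI["SMT"]
print(f"SMT throughput gain: {float(speedup):.2f}x")
```

SMT raises throughput from about 1.33 to 1.60 instructions per cycle, a 1.2x gain; with one thread stalled it drops back to 1.33, which is still progress rather than an idle pipeline.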

Memory Consistency
(Figure: instruction streams 1 and 2 over time.)
- The processor detects conflicting accesses to a common cache line, then flushes the pipeline and synchronizes the cache with RAM.
- On the Pentium 4 this is a "MOMC event" (memory-ordering machine clear), costing 300-350 cycles.

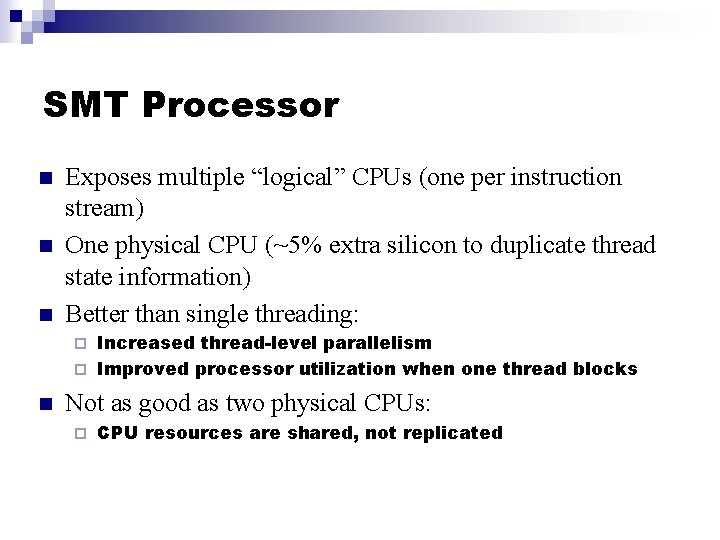

SMT Processor
- Exposes multiple "logical" CPUs (one per instruction stream)
- One physical CPU (~5% extra silicon to duplicate thread state information)
- Better than single threading:
  - Increased thread-level parallelism
  - Improved processor utilization when one thread blocks
- Not as good as two physical CPUs:
  - CPU resources are shared, not replicated

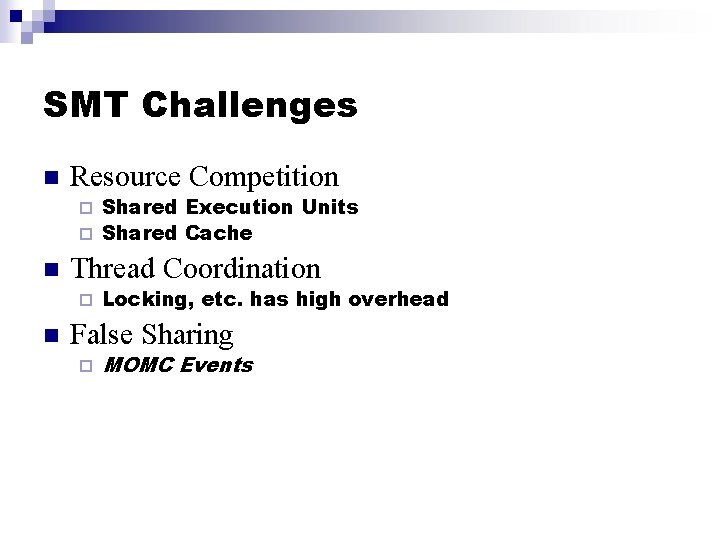

SMT Challenges
- Resource competition
  - Shared execution units
  - Shared cache
- Thread coordination
  - Locking, etc. has high overhead
- False sharing
  - MOMC events
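False sharing arises when threads write different variables that happen to sit in the same cache line, so the hardware sees a conflict on that line. A minimal sketch of the address arithmetic, assuming a line-aligned base address and a 64-byte line; which line size governs MOMC detection on a particular CPU is an assumption here (the test machine in the experimental setup has 64 B L1 and 128 B L2 lines). Python cannot place data at chosen addresses, so these hypothetical helpers work on plain integer offsets:

```python
LINE_SIZE = 64  # bytes; assumed line size for conflict detection

def cache_line(addr: int, line_size: int = LINE_SIZE) -> int:
    """Index of the cache line that contains byte address `addr`."""
    return addr // line_size

def falsely_shared(addr_a: int, addr_b: int, line_size: int = LINE_SIZE) -> bool:
    """True if two distinct addresses land in the same cache line."""
    return addr_a != addr_b and cache_line(addr_a, line_size) == cache_line(addr_b, line_size)

def padded_offsets(n_threads: int, item_size: int, line_size: int = LINE_SIZE) -> list[int]:
    """Offsets (from a line-aligned base) that give each thread's item its own line(s)."""
    stride = -(-item_size // line_size) * line_size  # item_size rounded up to a whole line
    return [t * stride for t in range(n_threads)]

# Two 8-byte per-thread counters packed side by side share a line ...
print(falsely_shared(0, 8))             # True
# ... padding each counter to a full line separates them.
padded = padded_offsets(2, 8)
print(padded, falsely_shared(*padded))  # [0, 64] False
```

Padding trades cache space for fewer conflicts, which is the same tension that shows up later when sizing the work-ahead set.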

Approaches to using SMT
- Ignore it, and write single-threaded code.
- Naïve parallelism
  - Pretend the logical CPUs are physical CPUs.
- SMT-aware parallelism
  - Parallel threads designed to avoid SMT-related interference.
- Use one thread for the algorithm, and another to manage resources
  - E.g., to avoid stalls for cache misses.

Naïve Parallelism
- Treat the SMT processor as if it were multi-core.
- Databases are already designed to utilize multiple processors, so no code modification is needed.
- Uses shared processor resources inefficiently:
  - Cache pollution / interference
  - Competition for execution units

SMT-Aware Parallelism
- Exploit intra-operator parallelism.
- Divide the input and use a separate thread to process each part.
  - E.g., one thread for even tuples, one for odd tuples.
  - No explicit partitioning step is required.
- Sharing the input involves multiple readers.
  - No MOMC events, because two reads don't conflict.
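The even/odd split can be sketched with a stride: thread t reads positions t, t + n, t + 2n, ..., so no partitioning pass is needed and the shared input is only ever read. A minimal sketch using a sum as a stand-in operator (an assumption for illustration); Python threads under the GIL show the access pattern only, not SMT speedups:

```python
import threading

def sum_stride(tuples, start, step, partial, slot):
    """Read every step-th tuple starting at `start`; the shared input is never written."""
    total = 0
    for i in range(start, len(tuples), step):
        total += tuples[i]
    partial[slot] = total  # each thread writes only its own result slot, once, at the end

def parallel_partial_sums(tuples, n_threads=2):
    """Thread t handles positions t, t + n_threads, ... (even/odd tuples when n_threads=2)."""
    partial = [0] * n_threads
    threads = [
        threading.Thread(target=sum_stride, args=(tuples, t, n_threads, partial, t))
        for t in range(n_threads)
    ]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return partial

print(parallel_partial_sums(list(range(10))))  # [20, 25]: even positions, then odd positions
```

In a C implementation, adjacent `partial` slots would share a cache line; writing each one only once at the end keeps that harmless, whereas per-tuple writes to them could cause the MOMC events described earlier.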

SMT-Aware Parallelism (cont.)
- Sharing output is challenging:
  - Thread coordination for output
  - Read/write and write/write conflicts on common cache lines (MOMC events)
- "Solution": partition the output.
  - Each thread writes to a separate memory buffer to avoid memory conflicts.
  - Need an extra merge step in the consumer of the output stream.
  - Difficult to maintain input order in the output.

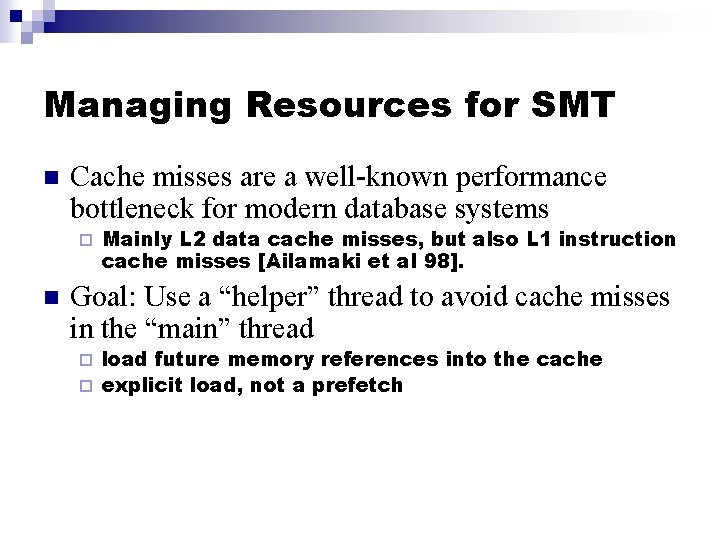
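A sketch of partitioned output for a filter operator: each thread appends to its own buffer, and the consumer merges. Concatenating buffers is cheap but changes the order; this sketch assumes each output tuple carries its input position so the consumer can restore order with a k-way merge (`heapq.merge`). That bookkeeping is one possible approach chosen for illustration, not necessarily the authors':

```python
import heapq
import itertools
import threading

def filter_stride(tuples, start, step, predicate, buf):
    """Append qualifying (input position, tuple) pairs to this thread's private buffer."""
    for i in range(start, len(tuples), step):
        if predicate(tuples[i]):
            buf.append((i, tuples[i]))

def parallel_filter(tuples, predicate, n_threads=2, keep_order=False):
    buffers = [[] for _ in range(n_threads)]  # one output buffer per thread: no shared writes
    threads = [
        threading.Thread(target=filter_stride,
                         args=(tuples, t, n_threads, predicate, buffers[t]))
        for t in range(n_threads)
    ]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    if keep_order:
        # Each buffer is already sorted by input position, so a k-way merge restores input order.
        merged = heapq.merge(*buffers, key=lambda pair: pair[0])
    else:
        # Cheapest merge: concatenate the buffers, which changes the output order.
        merged = itertools.chain.from_iterable(buffers)
    return [t for _, t in merged]

data = [5, 12, 7, 20, 3, 15]
print(parallel_filter(data, lambda x: x > 6))                   # [7, 12, 20, 15]
print(parallel_filter(data, lambda x: x > 6, keep_order=True))  # [12, 7, 20, 15]
```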

Managing Resources for SMT
- Cache misses are a well-known performance bottleneck for modern database systems.
  - Mainly L2 data cache misses, but also L1 instruction cache misses [Ailamaki et al 98].
- Goal: use a "helper" thread to avoid cache misses in the "main" thread.
  - Load future memory references into the cache.
  - An explicit load, not a prefetch.

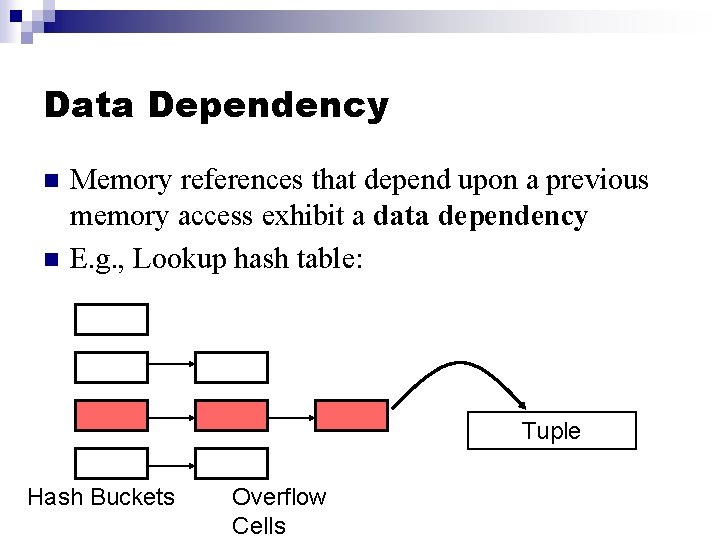

Data Dependency
- Memory references that depend upon a previous memory access exhibit a data dependency.
- E.g., a hash table lookup: the tuple's key selects a hash bucket, which leads to overflow cells (figure: Tuple, Hash Buckets, Overflow Cells).

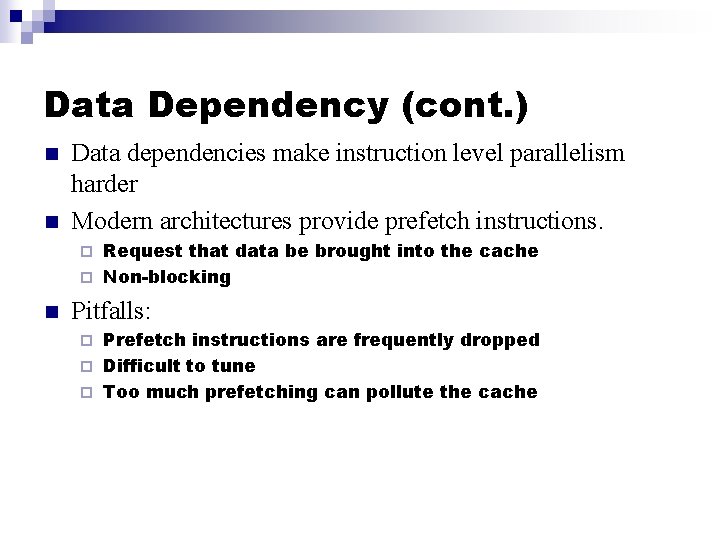
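The dependency chain in a hash lookup can be made concrete with a hypothetical flat layout: each bucket stores the index of its first overflow cell, and each cell stores (key, payload, next index). Only the bucket address is computable from the key; every later address comes out of the previous load. A minimal sketch, with the layout and function names assumed for illustration:

```python
NONE = -1  # marks an empty bucket or the end of an overflow chain

def build(pairs, n_buckets):
    """Hypothetical flat hash table: buckets[b] holds the index of the first overflow cell;
    each cell is (key, payload, index of next cell)."""
    buckets, cells = [NONE] * n_buckets, []
    for key, payload in pairs:
        b = hash(key) % n_buckets
        cells.append((key, payload, buckets[b]))  # prepend the new cell to bucket b's chain
        buckets[b] = len(cells) - 1
    return buckets, cells

def probe(key, buckets, cells):
    """Return (matching payloads, number of dependent memory accesses)."""
    matches = []
    cell = buckets[hash(key) % len(buckets)]  # access 1: address computed from the key alone
    accesses = 1
    while cell != NONE:
        k, payload, cell_next = cells[cell]   # address came from the previous access's result
        accesses += 1
        if k == key:
            matches.append(payload)
        cell = cell_next
    return matches, accesses

buckets, cells = build([(1, "a"), (5, "b"), (9, "c")], 4)  # all three keys hash to bucket 1
print(probe(1, buckets, cells))  # (['a'], 4): bucket, then three chained cells
```

Because each access must complete before the next address is known, a cache miss at any step stalls the whole chain.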

Data Dependency (cont.)
- Data dependencies make instruction-level parallelism harder.
- Modern architectures provide prefetch instructions:
  - Request that data be brought into the cache
  - Non-blocking
- Pitfalls:
  - Prefetch instructions are frequently dropped
  - Difficult to tune
  - Too much prefetching can pollute the cache

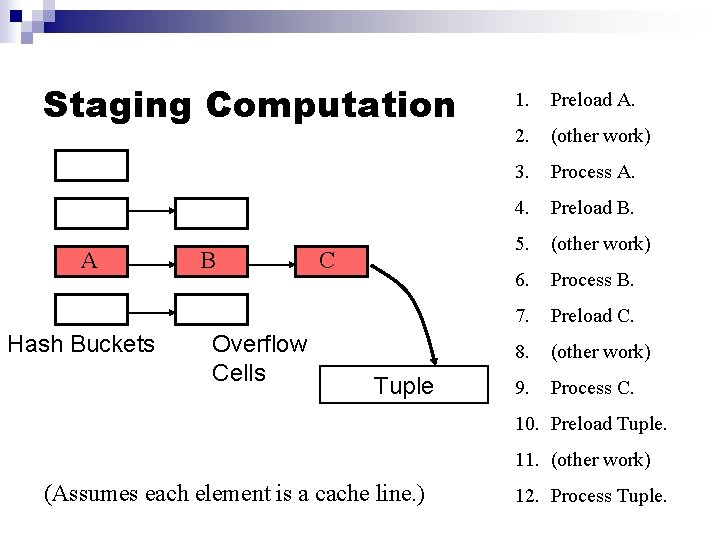

Staging Computation
(Figure: elements A, B, C and a Tuple along a probe through Hash Buckets and Overflow Cells. Assumes each element is a cache line.)
1. Preload A.
2. (other work)
3. Process A.
4. Preload B.
5. (other work)
6. Process B.
7. Preload C.
8. (other work)
9. Process C.
10. Preload Tuple.
11. (other work)
12. Process Tuple.

Staging Computation (cont.)
- By overlapping memory latency with other work, some cache-miss latency can be hidden.
- Many probes are "in flight" at the same time.
- Algorithms need to be rewritten.
- E.g., Chen et al. [2004], Harizopoulos et al. [2004].

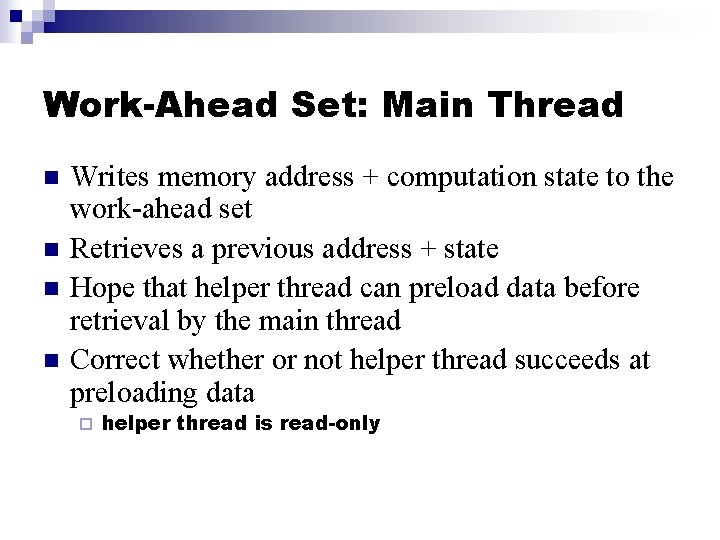

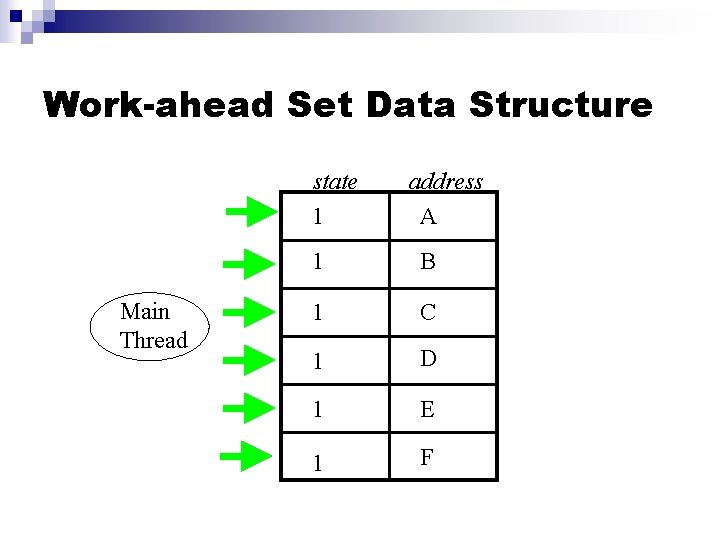
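The staged pattern can be modeled with Python generators: each probe yields the address it needs next, and a round-robin scheduler runs stages of other probes before resuming it, so several probes are in flight at once. This is a sketch in the spirit of the staged work cited above, not the cited authors' code; the flat bucket/cell layout and helper names are hypothetical, payloads are stored inline (so the slide's final Tuple stage is folded into the cell stage), and since Python cannot issue real cache loads, `preloads` only records what would be loaded:

```python
from collections import deque

NONE = -1  # empty bucket / end of chain

def build(pairs, n_buckets):
    """Hypothetical flat hash table: bucket -> first cell index; cell = (key, payload, next)."""
    buckets, cells = [NONE] * n_buckets, []
    for key, payload in pairs:
        b = hash(key) % n_buckets
        cells.append((key, payload, buckets[b]))
        buckets[b] = len(cells) - 1
    return buckets, cells

def staged_probe(key, buckets, cells):
    """One probe split into stages; each `yield` names the next line to preload."""
    b = hash(key) % len(buckets)
    yield ("bucket", b)                 # preload the bucket, then let other probes run
    cell = buckets[b]                   # process the bucket
    matches = []
    while cell != NONE:
        yield ("cell", cell)            # preload the next overflow cell
        k, payload, cell = cells[cell]  # process it
        if k == key:
            matches.append(payload)
    return matches

def run_staged(keys, buckets, cells, in_flight=4):
    """Keep up to `in_flight` probes active, running one stage of each in turn."""
    results = [None] * len(keys)
    preloads = []                       # records the loads a real engine would issue
    waiting = deque(enumerate(keys))
    active = deque()
    while waiting or active:
        while waiting and len(active) < in_flight:
            i, key = waiting.popleft()
            active.append((i, staged_probe(key, buckets, cells)))
        i, gen = active.popleft()
        try:
            preloads.append(next(gen))  # run one stage; it names its next address
            active.append((i, gen))     # other probes' stages run before this one resumes
        except StopIteration as done:
            results[i] = done.value     # the generator's return value: the matches
    return results, preloads

buckets, cells = build([(1, "a"), (5, "b"), (9, "c"), (2, "d")], 4)
results, preloads = run_staged([1, 2, 3], buckets, cells)
print(results)       # [['a'], ['d'], []]
print(preloads[:3])  # [('bucket', 1), ('bucket', 2), ('bucket', 3)]: three probes in flight
```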

Work-Ahead Set: Main Thread
- Writes a memory address + computation state to the work-ahead set.
- Retrieves a previous address + state.
- Hope that the helper thread can preload the data before the main thread retrieves it.
- Correct whether or not the helper thread succeeds at preloading data.
  - The helper thread is read-only.

Work-ahead Set Data Structure
(Figure: the main thread has filled the slots with addresses A to F, each in state 1.)

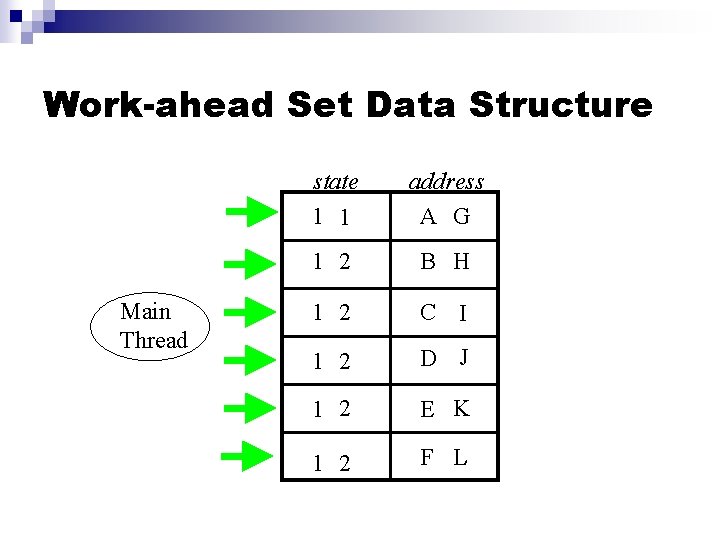

Work-ahead Set Data Structure
(Figure: the main thread cycles around, replacing A to F with new entries: G in state 1 and H to L in state 2.)

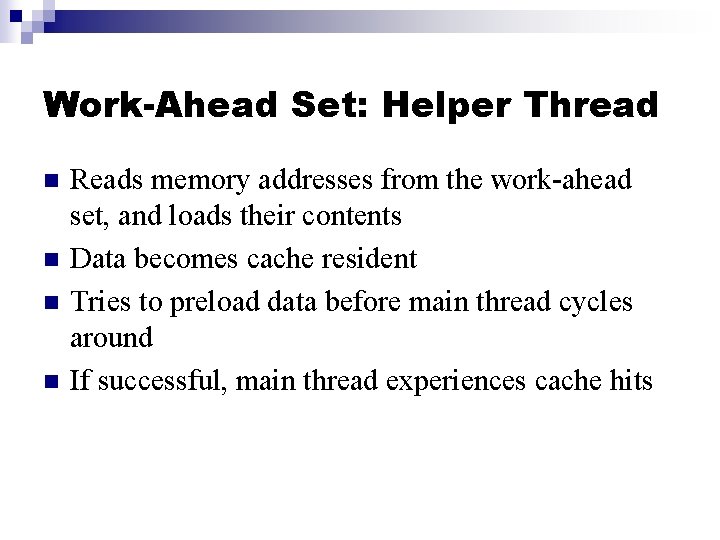
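A sketch of the main-thread side, assuming the staged hash probe from the earlier slides as the computation. Each slot holds an (address, state) pair; at each step the main thread retrieves the entry it wrote one full cycle ago, finishes that access, and writes back either that probe's next stage or a new probe (matching the figure, where a finished probe's slot gets a new state-1 entry and others advance to state 2). The flat table layout, slot format, and function names are hypothetical, and Python only models the control flow. Results do not depend on any helper thread, since the helper never writes the set:

```python
NONE = -1  # empty bucket / end of chain

def build(pairs, n_buckets):
    """Hypothetical flat hash table: bucket -> first cell index; cell = (key, payload, next)."""
    buckets, cells = [NONE] * n_buckets, []
    for key, payload in pairs:
        b = hash(key) % n_buckets
        cells.append((key, payload, buckets[b]))
        buckets[b] = len(cells) - 1
    return buckets, cells

def work_ahead_probe(keys, buckets, cells, size=4):
    """Main thread: cycle over `size` slots, each holding (address, state) or None."""
    slots = [None] * size      # the work-ahead set; a helper thread would only read it
    results = [[] for _ in keys]
    pending = iter(enumerate(keys))
    active = pos = 0

    def process(entry):
        """Finish the access named by entry; return the probe's next entry, or None if done."""
        (kind, index), (probe_id, key) = entry
        if kind == "bucket":
            nxt = buckets[index]
        else:
            k, payload, nxt = cells[index]
            if k == key:
                results[probe_id].append(payload)
        return None if nxt == NONE else (("cell", nxt), (probe_id, key))

    while True:
        entry = slots[pos]     # retrieve the entry written `size` steps ago
        new = process(entry) if entry is not None else None
        if new is None:        # slot free (or its probe just finished): start another probe
            if entry is not None:
                active -= 1
            probe = next(pending, None)
            if probe is not None:
                probe_id, key = probe
                new = (("bucket", hash(key) % len(buckets)), (probe_id, key))
                active += 1
        slots[pos] = new       # write address + state for the helper to preload
        if active == 0:        # no probe in flight and no input left
            return results
        pos = (pos + 1) % size

buckets, cells = build([(1, "a"), (5, "b"), (9, "c"), (2, "d")], 4)
print(work_ahead_probe([1, 2, 3, 9], buckets, cells, size=2))  # [['a'], ['d'], [], ['c']]
```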

Work-Ahead Set: Helper Thread
- Reads memory addresses from the work-ahead set and loads their contents.
  - The data becomes cache resident.
- Tries to preload data before the main thread cycles around.
  - If successful, the main thread experiences cache hits.

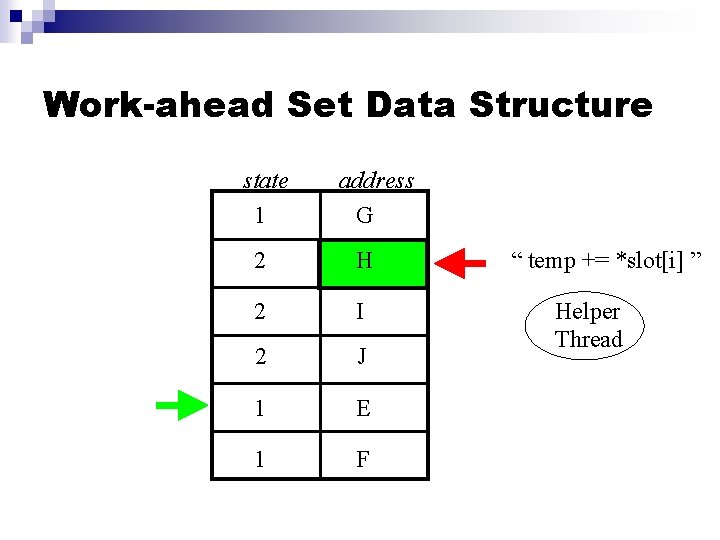

Work-ahead Set Data Structure
(Figure: the helper thread reads each slot and loads its contents with "temp += *slot[i]"; the slots hold G in state 1, H, I, J in state 2, and E, F in state 1.)

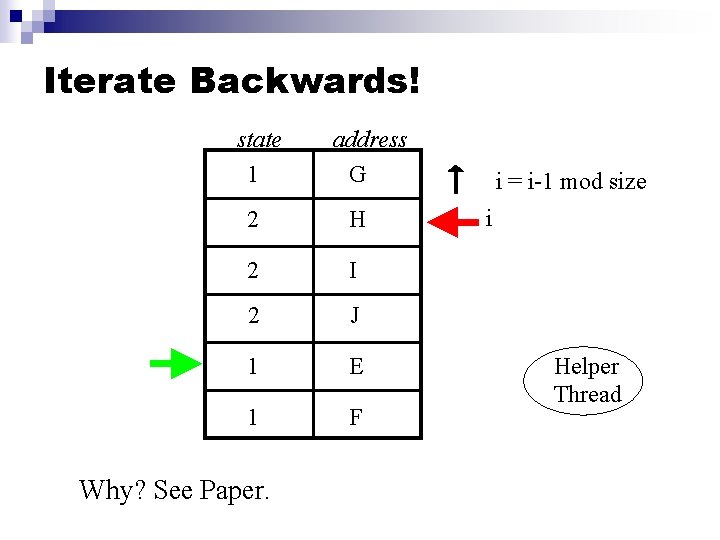

Iterate Backwards!
(Figure: the same slots; the helper thread's index moves backwards, i = i-1 mod size.)
Why? See the paper.

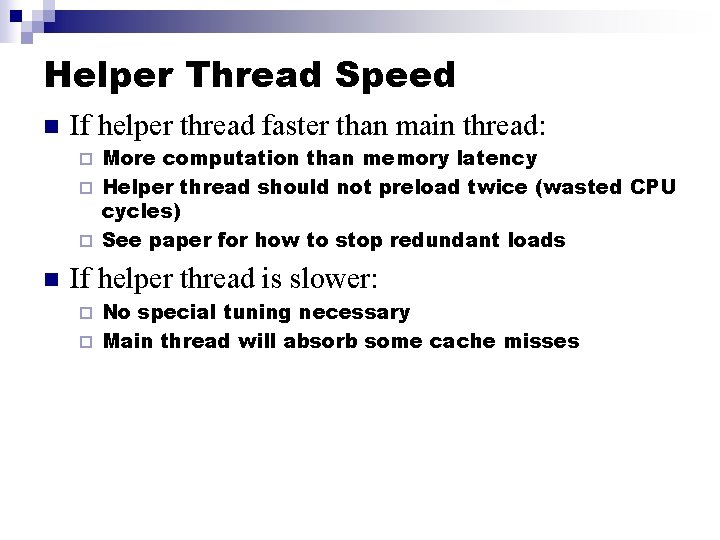
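A sketch of the helper loop, mirroring the slide's "temp += *slot[i]" and the backward step i = i-1 mod size. Here `memory` is a hypothetical stand-in for RAM: reading `memory[address]` models the load that would make a line cache-resident on real hardware, which Python cannot do. The starting slot and the `helper_thread` wrapper are assumptions for illustration; the sketch does not attempt the paper's technique for avoiding redundant loads:

```python
import threading

def helper_pass(slots, memory, start):
    """One backward sweep over the work-ahead set, loading each address it finds.

    Returns (addresses visited in order, temp)."""
    size = len(slots)
    temp = 0
    touched = []
    i = start
    for _ in range(size):
        entry = slots[i]               # read-only: the helper never writes the work-ahead set
        if entry is not None:
            address, _state = entry
            temp += memory[address]    # mirrors "temp += *slot[i]" on the slide
            touched.append(address)
        i = (i - 1) % size             # iterate backwards: i = i-1 mod size
    return touched, temp

def helper_thread(slots, memory, stop: threading.Event):
    """Sweep repeatedly until the main thread sets `stop` (starting slot chosen arbitrarily)."""
    while not stop.is_set():
        helper_pass(slots, memory, len(slots) - 1)

# Slot contents from the figure above, as (address, state) pairs.
slots = [("G", 1), ("H", 2), ("I", 2), ("J", 2), ("E", 1), ("F", 1)]
memory = {a: ord(a) for a in "EFGHIJ"}
print(helper_pass(slots, memory, start=3)[0])  # ['J', 'I', 'H', 'G', 'F', 'E']
```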

Helper Thread Speed
- If the helper thread is faster than the main thread:
  - There is more computation than memory latency.
  - The helper thread should not preload twice (wasted CPU cycles).
  - See the paper for how to stop redundant loads.
- If the helper thread is slower:
  - No special tuning is necessary.
  - The main thread will absorb some cache misses.

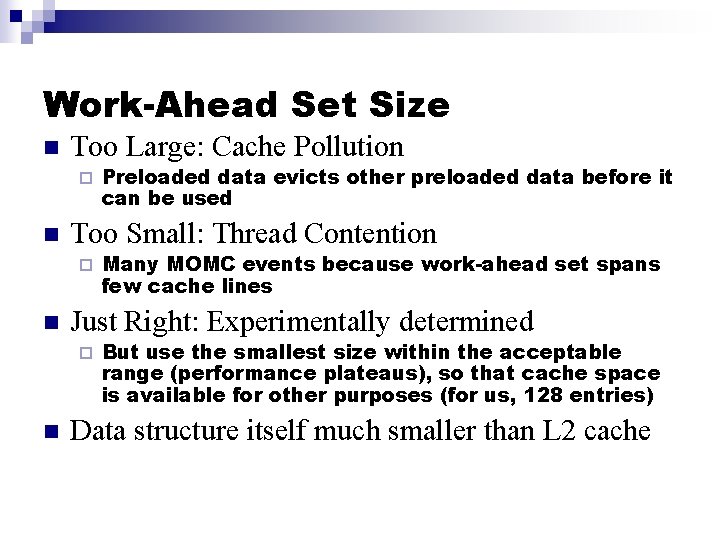

Work-Ahead Set Size
- Too large: cache pollution
  - Preloaded data evicts other preloaded data before it can be used.
- Too small: thread contention
  - Many MOMC events, because the work-ahead set spans few cache lines.
- Just right: experimentally determined
  - But use the smallest size within the acceptable range (where performance plateaus), so that cache space is available for other purposes (for us, 128 entries).
- The data structure itself is much smaller than the L2 cache.

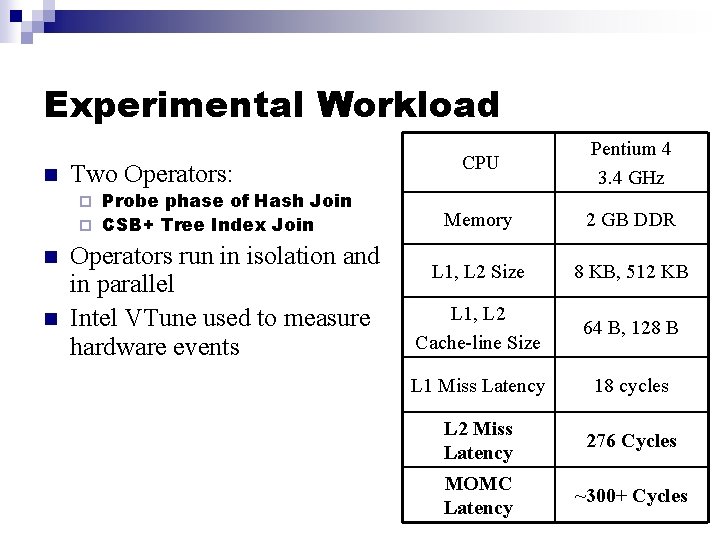
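Rough sizing arithmetic helps show why both extremes hurt. This sketch uses the L2 parameters from the experimental setup plus two labeled assumptions: each slot takes 16 bytes (an 8-byte address plus 8 bytes of state), and each slot names one distinct L2 line to preload:

```python
L2_SIZE = 512 * 1024  # bytes (experimental setup)
L2_LINE = 128         # bytes per L2 cache line
ENTRY_BYTES = 16      # assumption: an 8-byte address plus 8 bytes of state per slot

def footprint(entries, entry_bytes=ENTRY_BYTES):
    """Return (work-ahead set bytes, L2 lines it spans, bytes it can preload)."""
    set_bytes = entries * entry_bytes
    lines = -(-set_bytes // L2_LINE)   # ceiling division
    preload = entries * L2_LINE        # assumption: each entry names one distinct L2 line
    return set_bytes, lines, preload

for n in (8, 128, 4096):
    set_bytes, lines, preload = footprint(n)
    print(f"{n:5d} entries: set = {set_bytes} B in {lines} L2 line(s); "
          f"preloaded data up to {preload // 1024} KB ({preload / L2_SIZE:.1%} of L2)")
```

Under these assumptions an 8-entry set fits in a single L2 line, so both threads contend for the same line, while a 4096-entry set could preload as much data as the whole 512 KB L2 holds. The 128 entries used in the talk come out at 2 KB in 16 lines with up to 16 KB preloaded; that size was found experimentally, not from this arithmetic.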

Experimental Workload
- Two operators:
  - Probe phase of hash join
  - CSB+ tree index join
- Operators run in isolation and in parallel.
- Intel VTune used to measure hardware events.
- CPU: Pentium 4, 3.4 GHz
- Memory: 2 GB DDR
- L1, L2 size: 8 KB, 512 KB
- L1, L2 cache-line size: 64 B, 128 B
- L1 miss latency: 18 cycles
- L2 miss latency: 276 cycles
- MOMC latency: ~300+ cycles
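The cycle counts above translate into wall-clock latencies at the 3.4 GHz clock: one cycle lasts 1/3.4 ns, so latency in ns is cycles divided by the clock rate in GHz. A small worked sketch:

```python
CLOCK_GHZ = 3.4  # Pentium 4 clock rate from the setup above

def cycles_to_ns(cycles, ghz=CLOCK_GHZ):
    """At f GHz a cycle lasts 1/f ns, so latency in ns = cycles / f."""
    return cycles / ghz

for event, cycles in [("L1 miss", 18), ("L2 miss", 276), ("MOMC event (lower bound)", 300)]:
    print(f"{event}: {cycles} cycles = {cycles_to_ns(cycles):.1f} ns")
```

An L2 miss thus costs roughly 81 ns and an MOMC event at least about 88 ns.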

Experimental Outline
1. Hash join
2. Index lookup
3. Mixed: hash join and index lookup

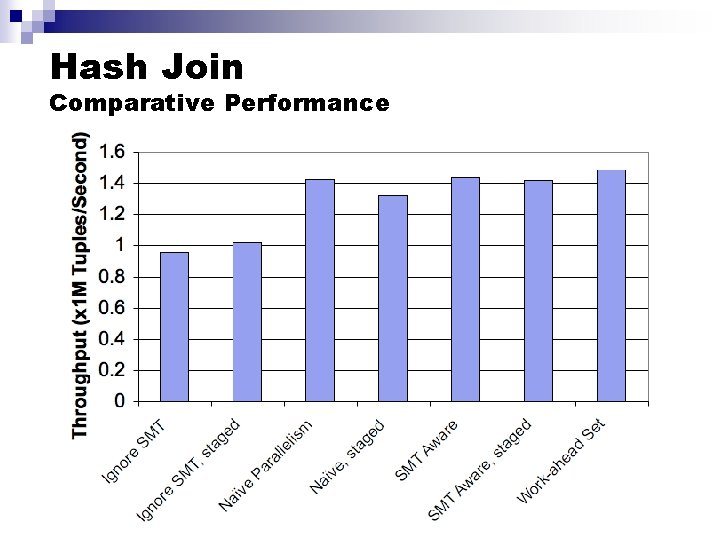

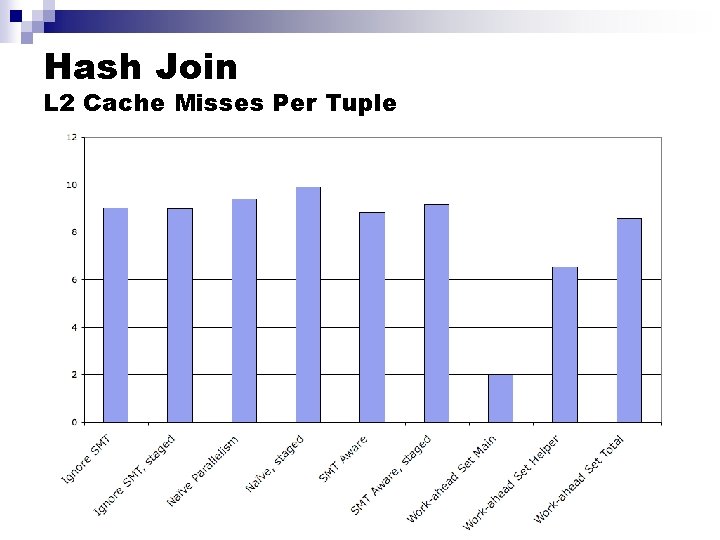

Hash Join Comparative Performance

Hash Join L2 Cache Misses Per Tuple

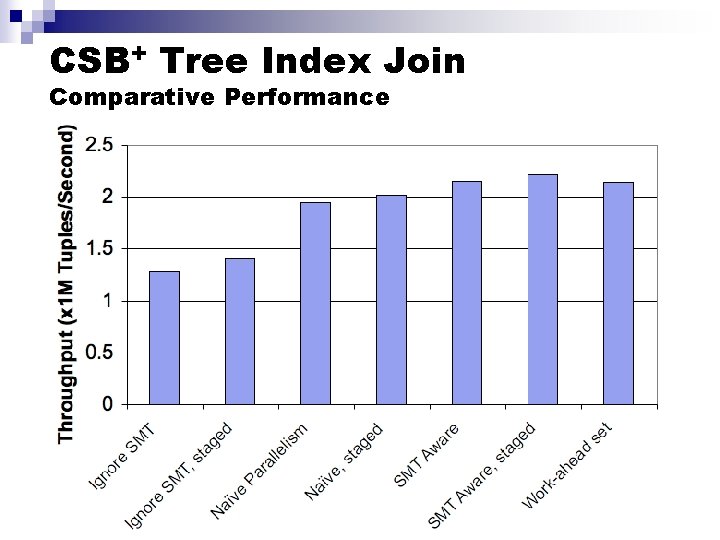

CSB+ Tree Index Join Comparative Performance

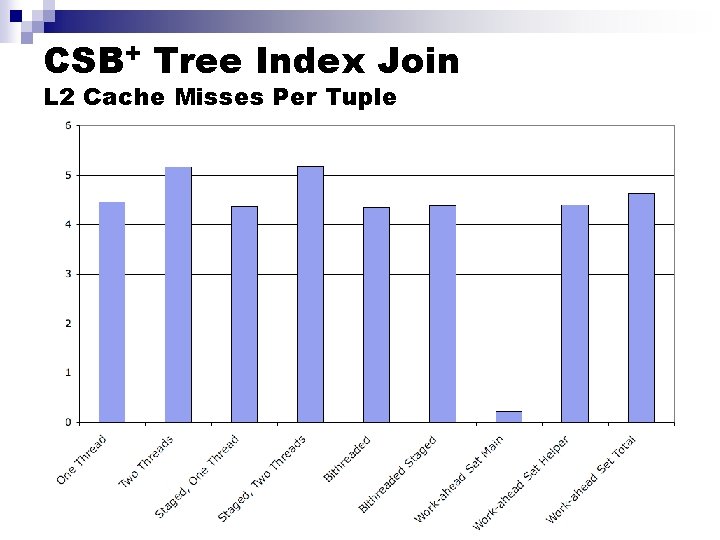

CSB+ Tree Index Join L2 Cache Misses Per Tuple

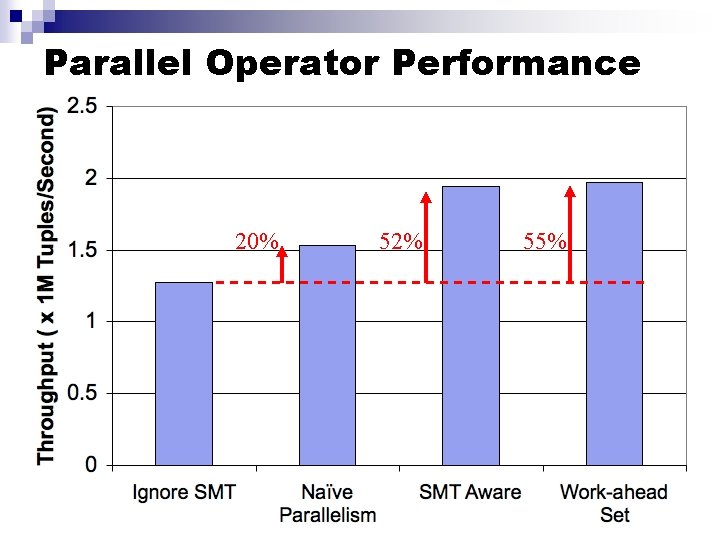

Parallel Operator Performance 20% 52% 55%

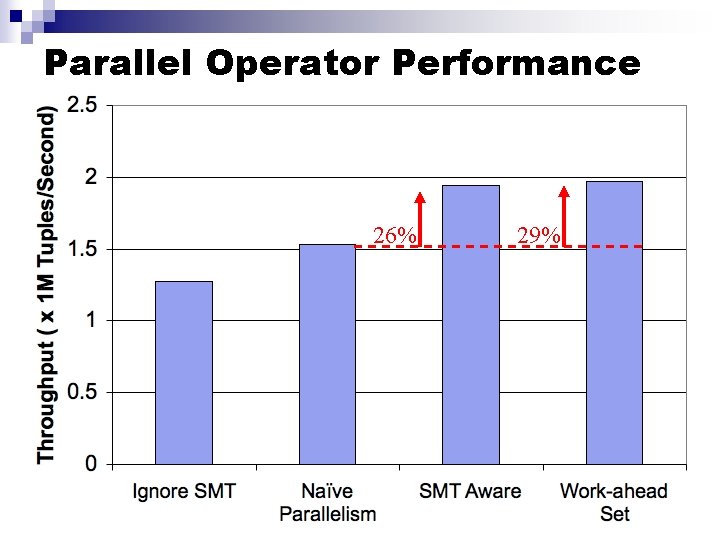

Parallel Operator Performance 26% 29%

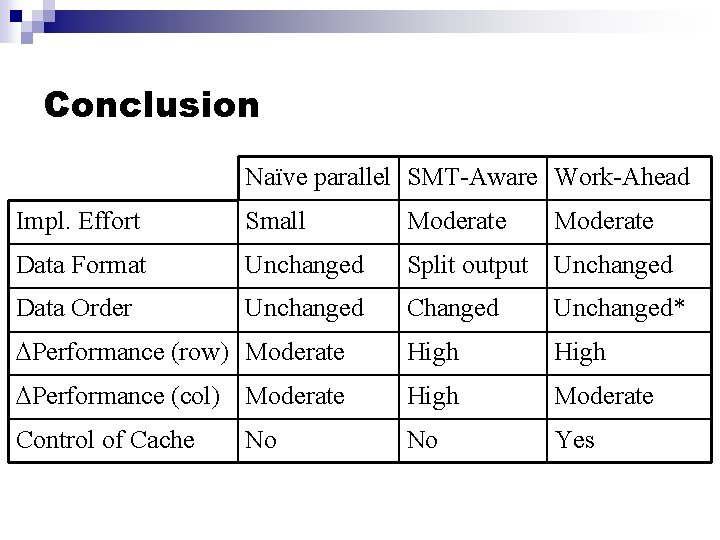

Conclusion Naïve parallel SMT-Aware Work-Ahead Impl. Effort Small Moderate Data Format Unchanged Split output Unchanged Data Order Unchanged Changed Unchanged* Performance (row) Moderate High Performance (col) Moderate High Moderate Control of Cache No Yes No Moderate
