Importance of Semantic Representation: Dataless Classification (Ming-Wei Chang)


- Slides: 39

Importance of Semantic Representation: Dataless Classification. Ming-Wei Chang, Lev Ratinov, Dan Roth, Vivek Srikumar. University of Illinois, Urbana-Champaign

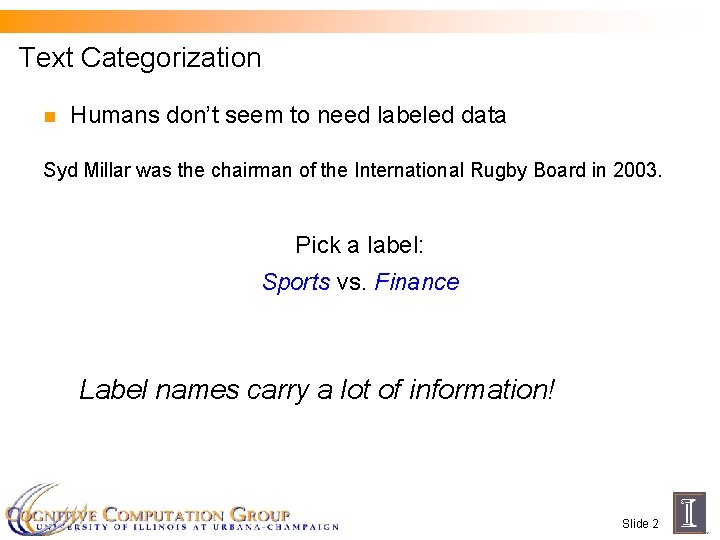

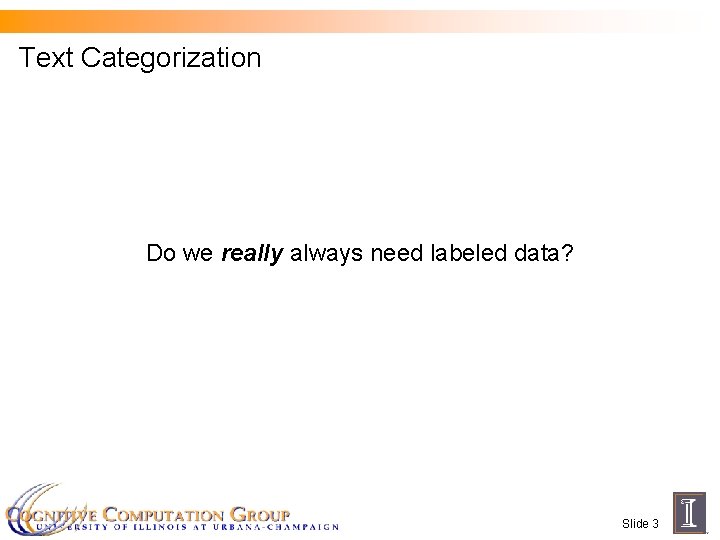

Text Categorization
- Classify the following sentence: Syd Millar was the chairman of the International Rugby Board in 2003.
- Pick a label: Class 1 vs. Class 2
- Traditionally, we need annotated data to train a classifier

Slide 1

Text Categorization
- Humans don’t seem to need labeled data
- Syd Millar was the chairman of the International Rugby Board in 2003.
- Pick a label: Sports vs. Finance
- Label names carry a lot of information!

Slide 2

Text Categorization Do we really always need labeled data? Slide 3

Contributions
- We can often go quite far without annotated data
  - … if we “know” the meaning of text
- This works for text categorization
  - … and is consistent across different domains

Slide 4

Outline
- Semantic Representation
- On-the-fly Classification
- Datasets
- Exploiting unlabeled data
- Robustness to different domains

Slide 5

Semantic Representation
- One common representation is the Bag of Words representation
- All text is a vector in the space of words

Slide 7
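To make the "vector in the space of words" idea concrete, here is a minimal sketch, assuming whitespace tokenization and raw word counts (the slides do not specify either). The helper names `bag_of_words` and `cosine` are illustrative, not taken from the talk.

```python
from collections import Counter
import math

def bag_of_words(text):
    """Map text to a sparse word-count vector keyed by lowercase token."""
    return Counter(text.lower().split())

def cosine(u, v):
    """Cosine similarity between two sparse vectors stored as dict-like objects."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

doc = bag_of_words("Syd Millar was the chairman of the International Rugby Board in 2003")
sim_sports = cosine(doc, bag_of_words("Sports"))
sim_finance = cosine(doc, bag_of_words("Finance"))
```

In this sketch both similarities are 0.0, because the sentence shares no word with either label name. In a pure word space, a short label name often has nothing in common with the document it should describe.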

![Semantic Representation: Explicit Semantic Analysis (Gabrilovich & Markovitch, 2006, 2007)](https://slidetodoc.com/presentation_image_h/319eae8c5d46bab6c478f396d9487136/image-9.jpg)

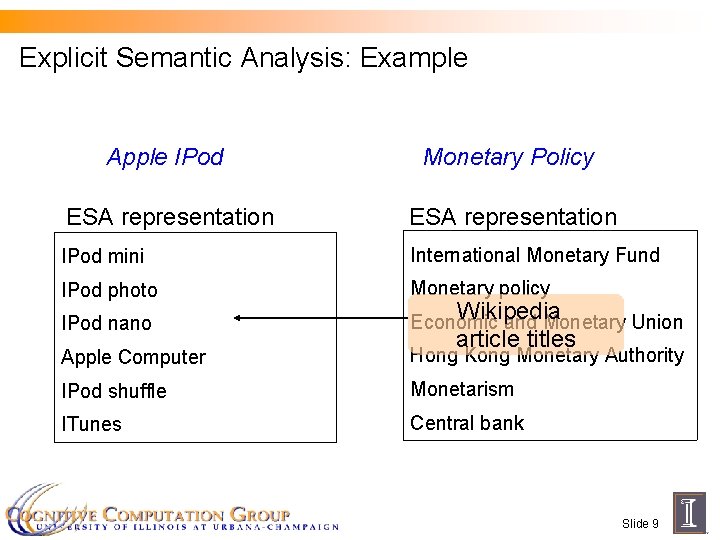

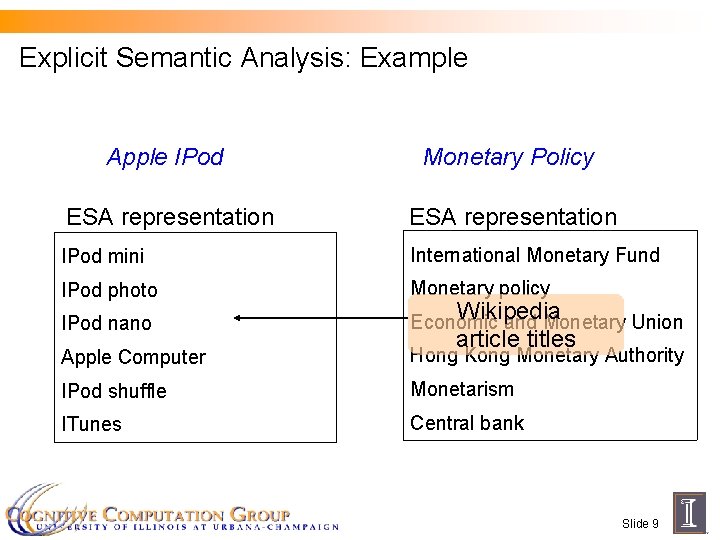

Semantic Representation
- Explicit Semantic Analysis (ESA) [Gabrilovich & Markovitch, 2006, 2007]
- Text is a vector in the space of concepts
- Concepts are defined by Wikipedia articles

Slide 8

Explicit Semantic Analysis: Example

ESA representation (Wikipedia article titles) of two input texts:

| Apple IPod | Monetary Policy |
|---|---|
| IPod mini | International Monetary Fund |
| IPod photo | Monetary policy |
| IPod nano | Economic and Monetary Union |
| Apple Computer | Hong Kong Monetary Authority |
| IPod shuffle | Monetarism |
| ITunes | Central bank |

Slide 9
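The example above can be imitated at toy scale. The sketch below assumes a simplified reading of ESA: each word is weighted per concept by TF-IDF over the concept's article, and a text's vector is the sum of its words' concept vectors. The four "articles" are hypothetical stand-ins written for this illustration, not real Wikipedia text, and the function names are invented here.

```python
from collections import Counter, defaultdict
import math

# Hypothetical stand-ins for Wikipedia articles: concept title -> article text.
ARTICLES = {
    "IPod nano": "apple ipod nano music player",
    "ITunes": "apple itunes music store ipod",
    "Central bank": "central bank monetary policy interest rates",
    "Monetarism": "monetarism monetary policy money supply",
}

def build_word_to_concepts(articles):
    """Inverted index: word -> {concept title: TF-IDF weight in that article}."""
    n = len(articles)
    counts = {title: Counter(text.split()) for title, text in articles.items()}
    doc_freq = Counter(word for c in counts.values() for word in c)
    index = defaultdict(dict)
    for title, c in counts.items():
        for word, tf in c.items():
            index[word][title] = tf * math.log(n / doc_freq[word])
    return index

def esa_vector(text, index):
    """Represent text as a weighted vector over concept titles."""
    vec = defaultdict(float)
    for word in text.lower().split():
        for title, weight in index.get(word, {}).items():
            vec[title] += weight
    return dict(vec)

INDEX = build_word_to_concepts(ARTICLES)
apple_ipod = esa_vector("Apple IPod", INDEX)
monetary_policy = esa_vector("Monetary Policy", INDEX)
```

With these toy articles, "Apple IPod" activates only the two music-related concepts and "Monetary Policy" only the two economics-related ones, mirroring the two columns of the slide.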

Semantic Representation
- Two semantic representations
  - Bag of words
  - ESA

Slide 10

Outline
- Semantic Representation
- On-the-fly Classification
- Datasets
- Exploiting unlabeled data
- Robustness to different domains

Slide 11

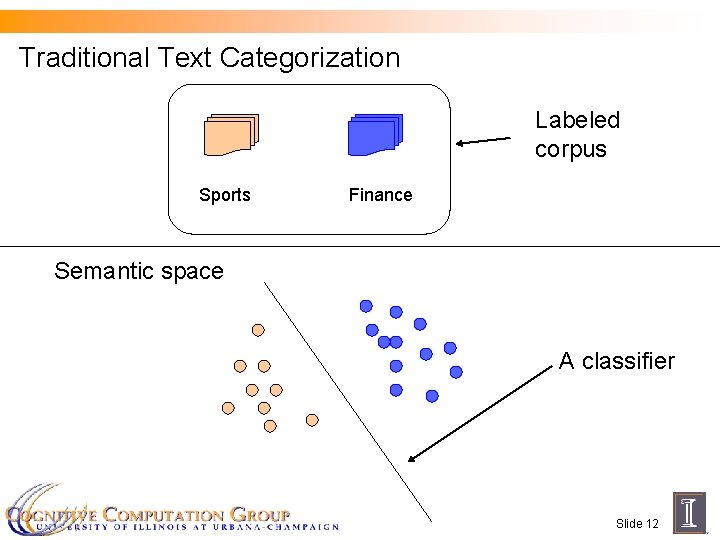

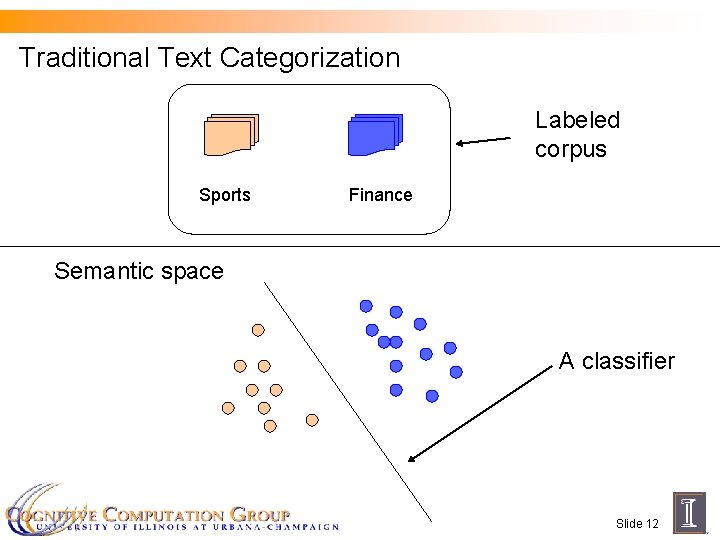

Traditional Text Categorization [Diagram labels: Labeled corpus (Sports, Finance); Semantic space; A classifier] Slide 12

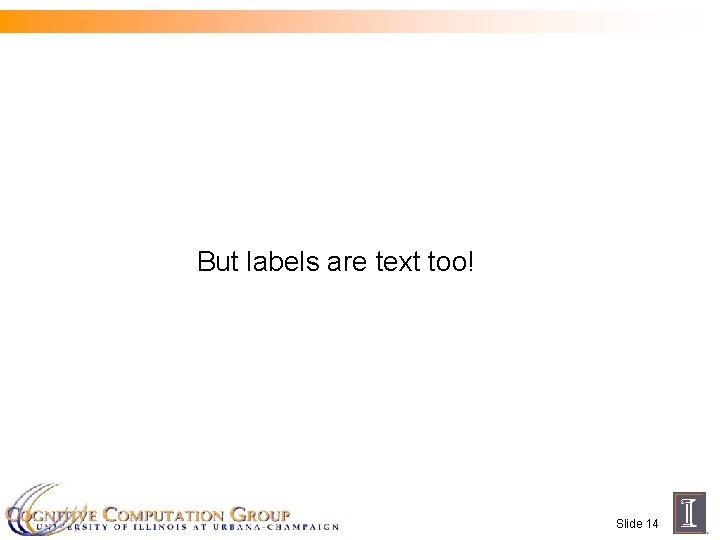

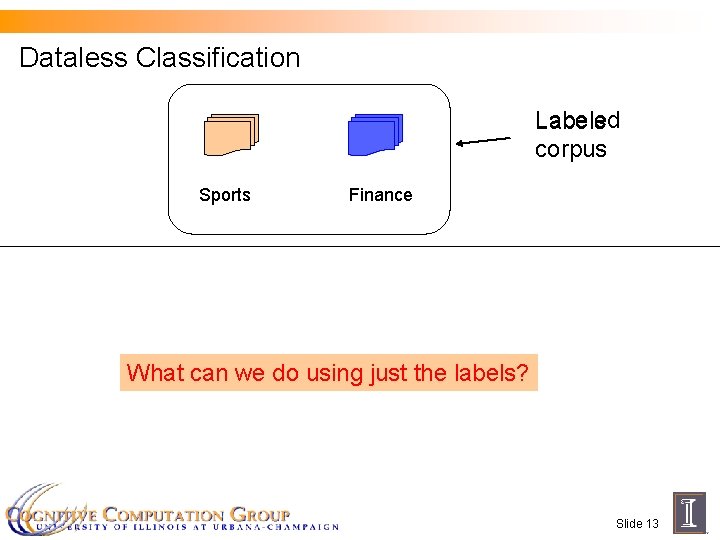

Dataless Classification [Diagram labels: Labeled corpus; Labels (Sports, Finance)] What can we do using just the labels? Slide 13

But labels are text too! Slide 14

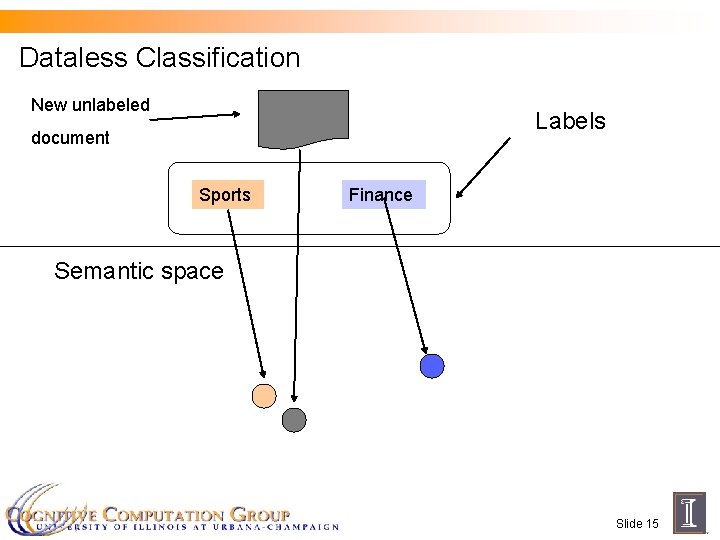

Dataless Classification [Diagram labels: New unlabeled document; Labels (Sports, Finance); Semantic space] Slide 15

What is Dataless Classification?
- Humans don’t need training for classification
- Annotated training data not always needed
- Look for the meaning of words

Slide 16

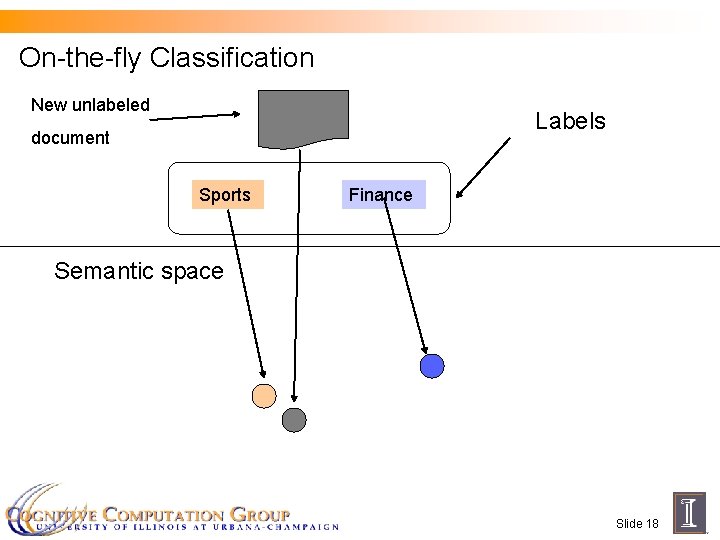

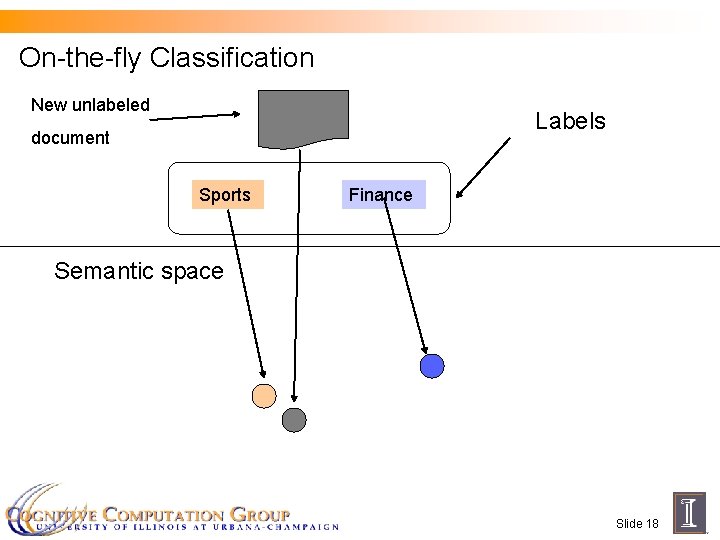

On-the-fly Classification [Diagram labels: New unlabeled document; Labels (Sports, Finance); Semantic space] Slide 18

On-the-fly Classification
- No training data needed
- We know the meaning of label names
- Pick the label that is closest in meaning to the document
  - Nearest neighbors

Slide 19
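A minimal sketch of this nearest-neighbor rule, assuming cosine similarity as the closeness measure (the slide only says "closest in meaning"). The `WORD_CONCEPTS` table is a hypothetical, hand-written stand-in for a real concept index such as ESA, and all names are illustrative.

```python
import math

# Hypothetical toy concept space: word -> weights over a few concepts.
WORD_CONCEPTS = {
    "sports": {"Sport": 1.0, "Rugby union": 0.6},
    "rugby": {"Rugby union": 1.0, "Sport": 0.5},
    "board": {"Board of directors": 1.0},
    "chairman": {"Board of directors": 0.8},
    "finance": {"Finance": 1.0, "Bank": 0.7},
    "bank": {"Bank": 1.0, "Finance": 0.6},
}

def embed(text):
    """Sum the concept vectors of all known words in the text."""
    vec = {}
    for word in text.lower().split():
        for concept, weight in WORD_CONCEPTS.get(word, {}).items():
            vec[concept] = vec.get(concept, 0.0) + weight
    return vec

def cosine(u, v):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def classify_on_the_fly(document, label_names):
    """Return the label name whose embedding is nearest to the document's."""
    doc_vec = embed(document)
    return max(label_names, key=lambda name: cosine(doc_vec, embed(name)))

sentence = "Syd Millar was the chairman of the International Rugby Board in 2003"
prediction = classify_on_the_fly(sentence, ["Sports", "Finance"])
```

Here `prediction` is "Sports": the sentence and the label share the concepts "Sport" and "Rugby union", while nothing in the sentence maps to a finance concept. No labeled documents are involved; the label names are embedded the same way as the document.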

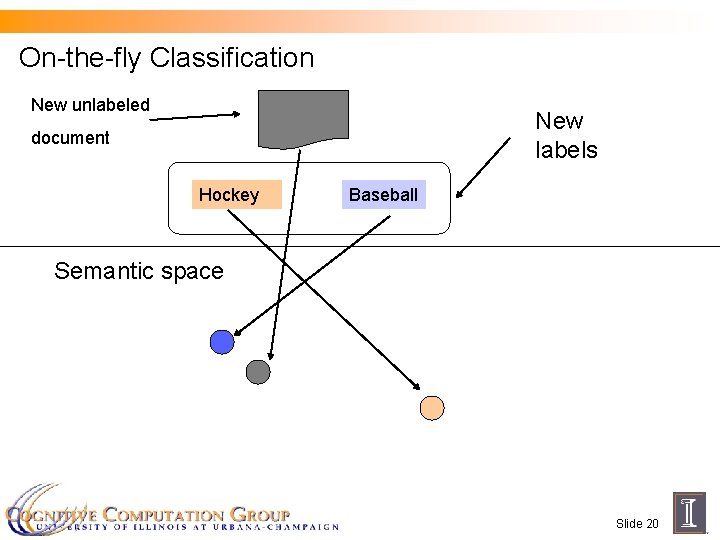

On-the-fly Classification [Diagram labels: New unlabeled document; New labels (Hockey, Baseball); Semantic space] Slide 20

On-the-fly Classification
- No need to even know the labels beforehand
- Compare with traditional classification
  - Annotated training data for each label

Slide 21

Outline
- Semantic Representation
- On-the-fly Classification
- Datasets
- Exploiting unlabeled data
- Robustness to different domains

Slide 22

Dataset 1: Twenty Newsgroups
- Posts to newsgroups
  - Newsgroups have descriptive names
  - sci.electronics = Science Electronics
  - rec.motorbikes = Motorbikes

Slide 23

Dataset 2: Yahoo Answers
- Posts to Yahoo! Answers
- Posts categorized into a two-level hierarchy
  - 20 top-level categories
  - 280 categories in total at the second level
  - Examples: "Arts and Humanities, Theater Acting"; "Sports, Rugby League"

Slide 24

![Experiments: 20 Newsgroups, 10 binary problems (from Raina et al., '06)](https://slidetodoc.com/presentation_image_h/319eae8c5d46bab6c478f396d9487136/image-26.jpg)

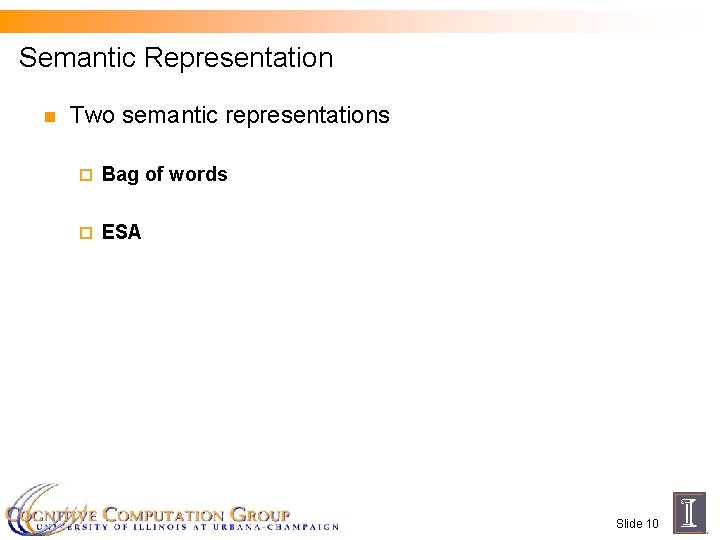

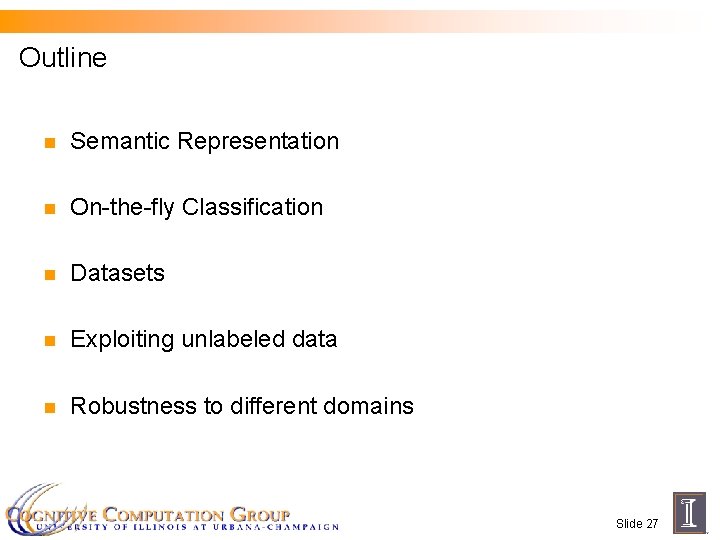

Experiments
- 20 Newsgroups
  - 10 binary problems (from [Raina et al., ’06])
  - Religion vs. Politics.guns
  - Motorcycles vs. MS Windows
- Yahoo! Answers
  - 20 binary problems
  - "Health, Diet fitness" vs. "Health, Allergies"
  - "Consumer Electronics, DVRs" vs. "Pets, Rodents"

Slide 25

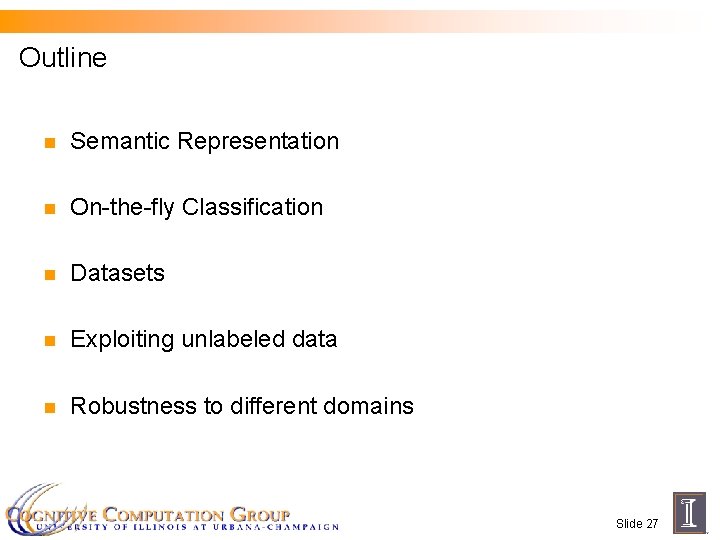

Results: On-the-fly classification

| Dataset | Supervised Baseline | Bag of Words | ESA |
|---|---|---|---|
| Newsgroup | 71.7 | 65.7 | 85.3 |
| Yahoo! | 84.3 | 66.8 | 88.6 |

- Supervised Baseline: Naïve Bayes classifier; uses annotated data, ignores labels
- Bag of Words and ESA: nearest neighbors; uses labels, no annotated data

Slide 26

Outline
- Semantic Representation
- On-the-fly Classification
- Datasets
- Exploiting unlabeled data
- Robustness to different domains

Slide 27

Using Unlabeled Data
- Knowing the data collection helps
  - We can learn specific biases of the dataset
- Potential for semi-supervised learning

Slide 28

Bootstrapping
- Each label name is a “labeled” document
  - One “example” in word or concept space
- Train initial classifier
  - Same as the on-the-fly classifier
- Loop:
  - Classify all documents with current classifier
  - Retrain classifier with highly confident predictions

Slide 29
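The loop above can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: the classifier is a nearest-centroid model in word space, "highly confident" is taken to mean that the best cosine score beats the runner-up by at least `min_margin`, and the function and parameter names are invented here. It expects at least two label names.

```python
from collections import Counter
import math

def bow(text):
    """Bag-of-words vector: lowercase token -> count."""
    return Counter(text.lower().split())

def cosine(u, v):
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def bootstrap(label_names, documents, rounds=3, min_margin=0.1):
    """Self-train a nearest-centroid classifier starting from label names only."""
    # Each label name is the single "labeled" document for its class.
    seeds = {name: bow(name) for name in label_names}
    centroids = dict(seeds)  # initial classifier = on-the-fly classifier
    doc_vecs = [bow(d) for d in documents]
    for _ in range(rounds):
        retrained = {name: Counter(seed) for name, seed in seeds.items()}
        for vec in doc_vecs:
            scores = sorted(((cosine(vec, c), name) for name, c in centroids.items()),
                            reverse=True)
            (best, label), (second, _) = scores[0], scores[1]
            # Keep only highly confident predictions for retraining.
            if best - second >= min_margin:
                retrained[label].update(vec)
        centroids = retrained
    return [max(centroids, key=lambda n: cosine(v, centroids[n])) for v in doc_vecs]

DOCS = [
    "the hockey team won the game",
    "the team scored in the final game on ice",
    "the baseball pitcher threw a strike",
    "the pitcher hit a home run",
]
predictions = bootstrap(["hockey", "baseball"], DOCS)
```

In this toy run the second and fourth documents never mention a label name, so the initial classifier cannot separate them. After one round, the centroids have absorbed words such as "team", "game", and "pitcher" from the confidently labeled documents, which is enough to place the remaining two.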

Co-training
- Words and concepts are two independent “views”
- Each view is a teacher for the other [Blum & Mitchell ’98]

Slide 30

Co-training
- Train initial classifiers in word space and concept space
- Loop:
  - Classify documents with current classifiers
  - Retrain with highly confident predictions of both classifiers

Slide 31
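A compact sketch of the two-view loop, under several assumptions the slides leave open: both classifiers are nearest-centroid models, confidence is the margin between the best and second-best cosine score, and confident predictions from either view go into one shared pool used to retrain both. `WORD_CONCEPTS` is a hypothetical stand-in for a concept index such as ESA, and all names are illustrative.

```python
from collections import Counter
import math

# Hypothetical word -> concept weights, standing in for a real concept index.
WORD_CONCEPTS = {
    "hockey": {"Ice hockey": 1.0}, "puck": {"Ice hockey": 0.9},
    "goalie": {"Ice hockey": 0.8}, "baseball": {"Baseball": 1.0},
    "pitcher": {"Baseball": 0.9}, "inning": {"Baseball": 0.8},
}

def word_view(text):
    return Counter(text.lower().split())

def concept_view(text):
    vec = Counter()
    for word in text.lower().split():
        for concept, weight in WORD_CONCEPTS.get(word, {}).items():
            vec[concept] += weight
    return vec

def cosine(u, v):
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def predict(vec, centroids):
    """Return (label, margin between the best and second-best score)."""
    scores = sorted(((cosine(vec, c), name) for name, c in centroids.items()),
                    reverse=True)
    return scores[0][1], scores[0][0] - scores[1][0]

def co_train(label_names, documents, rounds=3, min_margin=0.1):
    views = [word_view, concept_view]
    seeds = [{name: view(name) for name in label_names} for view in views]
    docs = [[view(d) for d in documents] for view in views]
    centroids = [dict(s) for s in seeds]

    def most_confident(i):
        # Ask both classifiers and keep the answer with the larger margin.
        return max((predict(docs[v][i], centroids[v]) for v in range(len(views))),
                   key=lambda pair: pair[1])

    for _ in range(rounds):
        pseudo = {}
        for i in range(len(documents)):
            label, margin = most_confident(i)
            if margin >= min_margin:
                pseudo[i] = label
        # Retrain both classifiers on the seeds plus the shared pseudo-labels.
        centroids = [{n: Counter(s) for n, s in view_seeds.items()} for view_seeds in seeds]
        for v in range(len(views)):
            for i, label in pseudo.items():
                centroids[v][label].update(docs[v][i])
    return [most_confident(i)[0] for i in range(len(documents))]

DOCS = [
    "the goalie stopped the puck",
    "the pitcher threw well in the last inning",
    "the crowd cheered as he stopped the shot",
]
predictions = co_train(["hockey", "baseball"], DOCS)
```

In this toy run the concept view labels the first two documents in round one, since neither contains a label word. The word view then learns "stopped" from the first document and uses it to label the third, which contains no known concept word. That is the "each view is a teacher for the other" effect at miniature scale.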

Using unlabeled data
- Three approaches
  - Bootstrapping with labels using Bag of Words
  - Bootstrapping with labels using ESA
  - Co-training

Slide 32

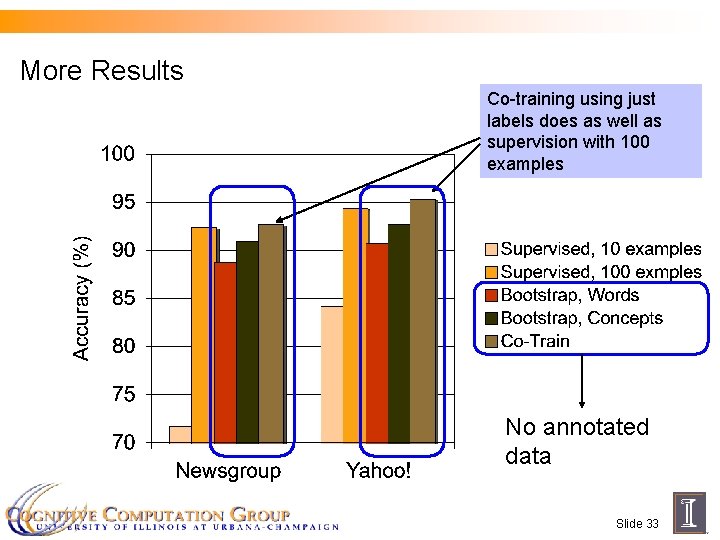

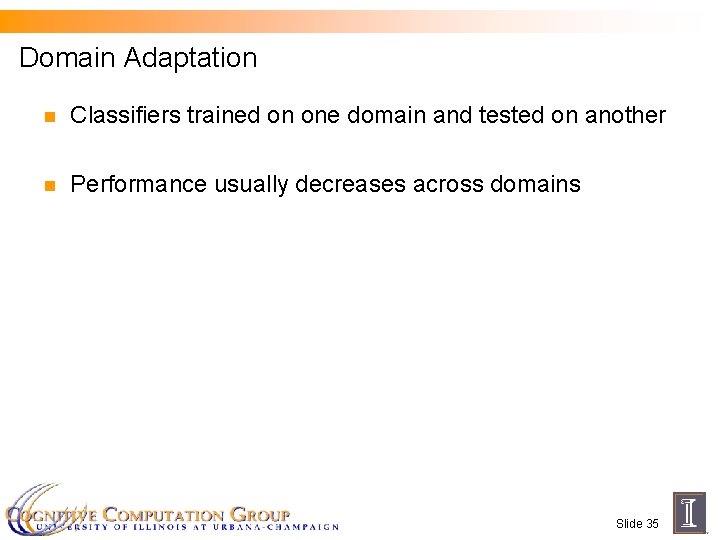

More Results: Co-training using just labels (no annotated data) does as well as supervision with 100 examples. Slide 33

Outline
- Semantic Representation
- On-the-fly Classification
- Datasets
- Exploiting unlabeled data
- Robustness to different domains

Slide 34

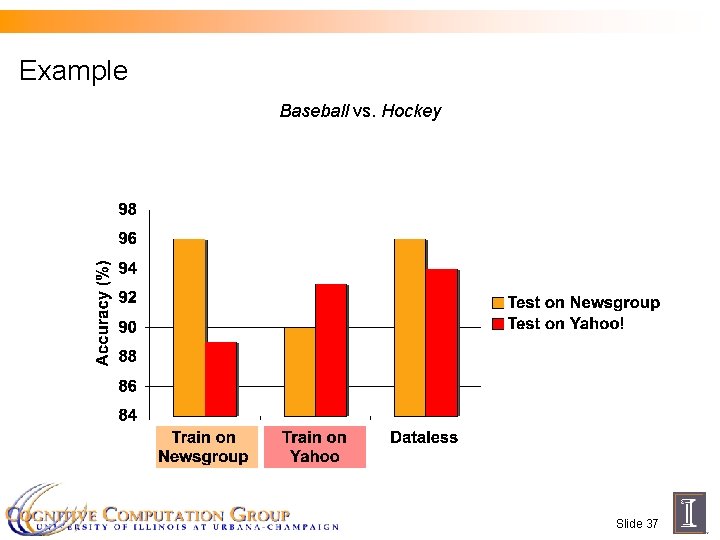

Domain Adaptation
- Classifiers trained on one domain and tested on another
- Performance usually decreases across domains

Slide 35

But the label names are the same
- Label names don’t depend on the domain
- Label names are robust across domains
  - On-the-fly classifiers are domain independent

Slide 36

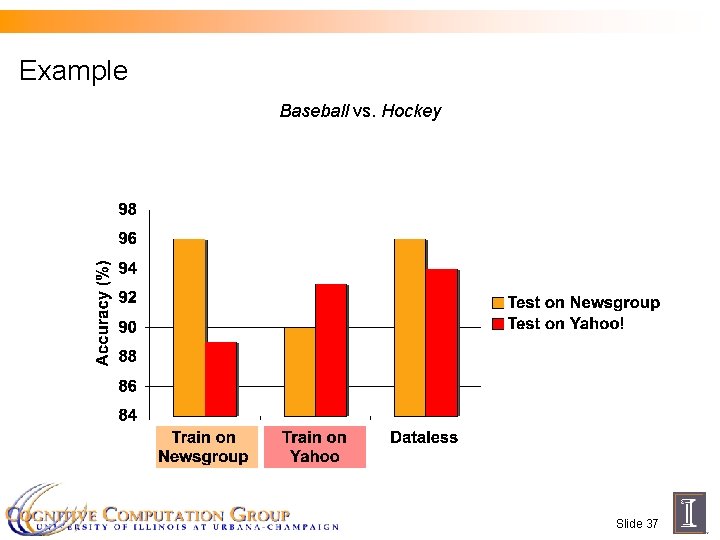

Example Baseball vs. Hockey Slide 37

Conclusion
- Sometimes, label names tell us more about a class than annotated examples
  - Standard learning practice of treating labels as unique identifiers loses information
- The right semantic representation helps
  - What is the right one?

Slide 38