Implementation of Voxel Volume Projection Operators Using CUDA

![The Joseph forward projector [1] • Illustration in the 2 D case: pi= L The Joseph forward projector [1] • Illustration in the 2 D case: pi= L](https://slidetodoc.com/presentation_image_h2/f92c4a56a556ba606f4c02b9b1fd0a12/image-6.jpg)

- Slides: 17

Implementation of Voxel Volume Projection Operators Using CUDA Applications to Iterative Cone-Beam CT reconstruction

Three questions to keep in mind during the presentation 1. Name three differences between the GPU and CPU contributing to the observed performance improvements. 2. Scattered accumulation (a[ix] = a[ix] + b) can result in reduced performance and unexpected results in CUDA. Why? Please state two possible problems! 3. Suppose that each thread in a CUDA program is responsible for writing to one unique output element. Give one possible solution for handling ”odd sized” output data.

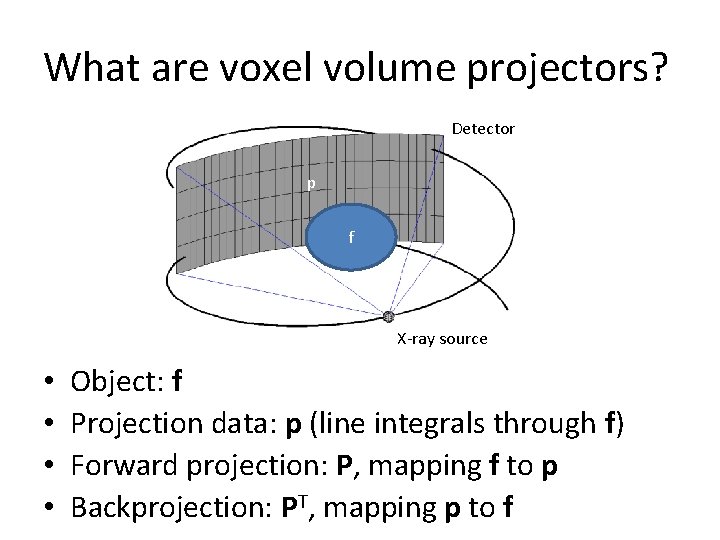

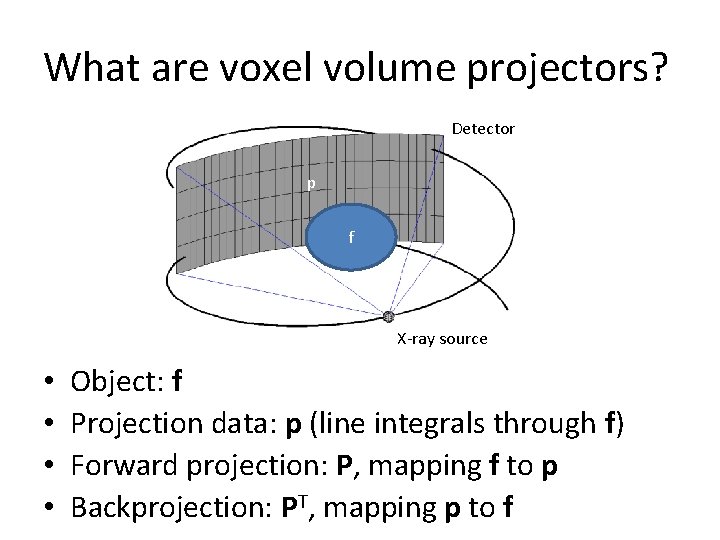

What are voxel volume projectors? Detector p f X-ray source • • Object: f Projection data: p (line integrals through f) Forward projection: P, mapping f to p Backprojection: PT, mapping p to f

The tomography problem • Given p, find an image/volume f, such that the difference between Pf and p is small in some sense, e. g. z(f)=||Pf-p||2 attains a minimum. • Steepest descent approach: – Gradient: z(f)=2 PT(Pf-p) – Update step: fk+1=fk-2 a. PT(Pfk-p) • We use this method only for illustration of why P and PT are needed. In practice, faster and better regularized methods are needed.

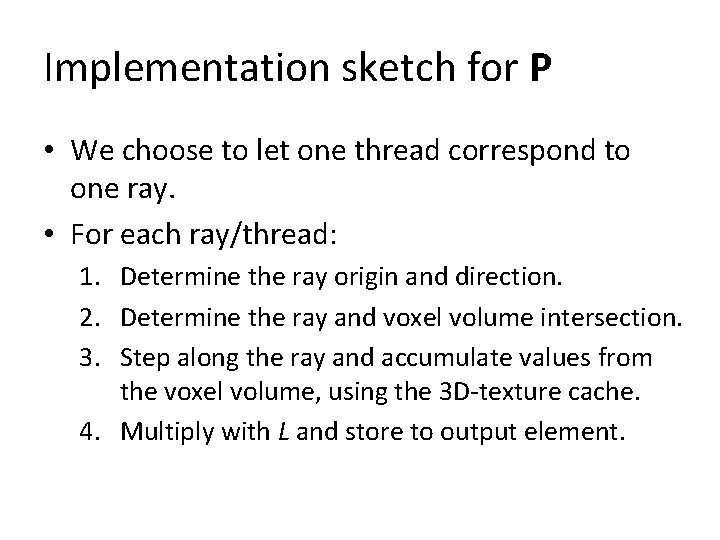

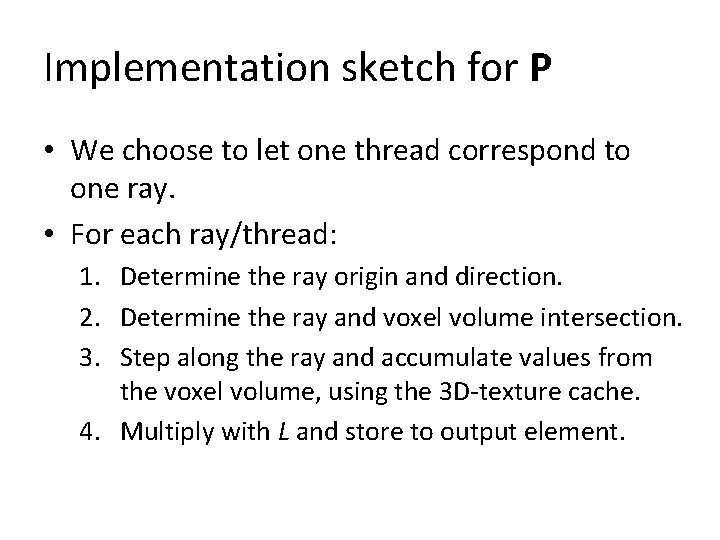

Why implement P and PT on the GPU? • Reasonable sizes of f and p are around 2 GB, implying a size of 2 GBx 2 GB for P and PT. • Although these matrices are sparse, the number of nonzero elements is approximately 2000 GB, i. e. , matrix -vector products involving these matrices are computationally demanding. • The GPU offers many solutions that help speeding up the computations: – – Massive parallelism Hardware linear interpolation Very high memory bandwidth Texture caches ”optimized for 2 D locality”

![The Joseph forward projector 1 Illustration in the 2 D case pi L The Joseph forward projector [1] • Illustration in the 2 D case: pi= L](https://slidetodoc.com/presentation_image_h2/f92c4a56a556ba606f4c02b9b1fd0a12/image-6.jpg)

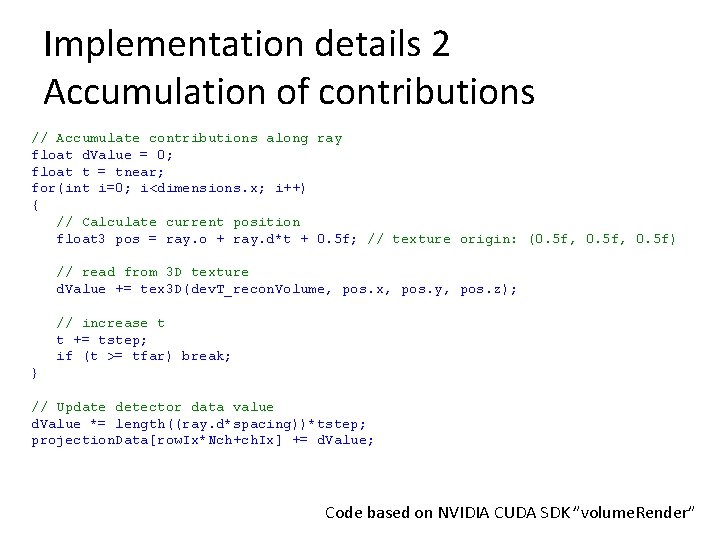

The Joseph forward projector [1] • Illustration in the 2 D case: pi= L (x 0+x 1+x 2+x 3) x 0 x 1 x 2 x 3 L • Generalization to 3 D is straightforward. Instead of linear intepolation, bilinear interpolation is used. [1] Joseph, P. M. , An improved algorithm for reprojecting rays through pixel images, IEEE Transactions on Medical Imaging, 1982, MI-1, 192 -196

Implementation sketch for P • We choose to let one thread correspond to one ray. • For each ray/thread: 1. Determine the ray origin and direction. 2. Determine the ray and voxel volume intersection. 3. Step along the ray and accumulate values from the voxel volume, using the 3 D-texture cache. 4. Multiply with L and store to output element.

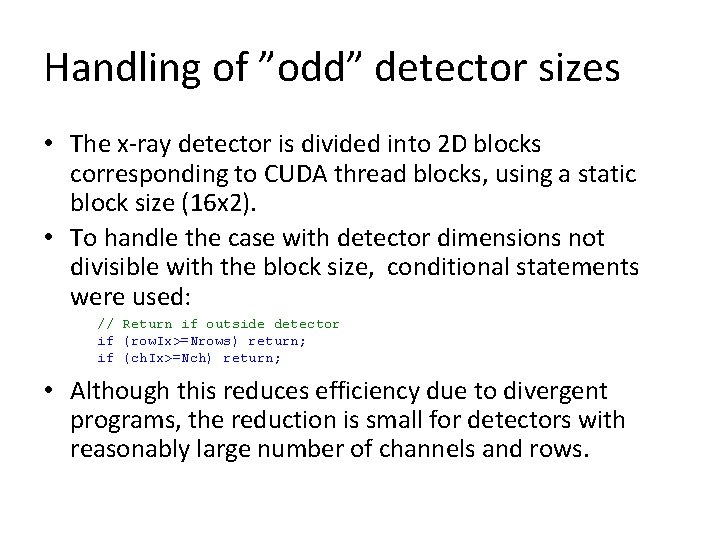

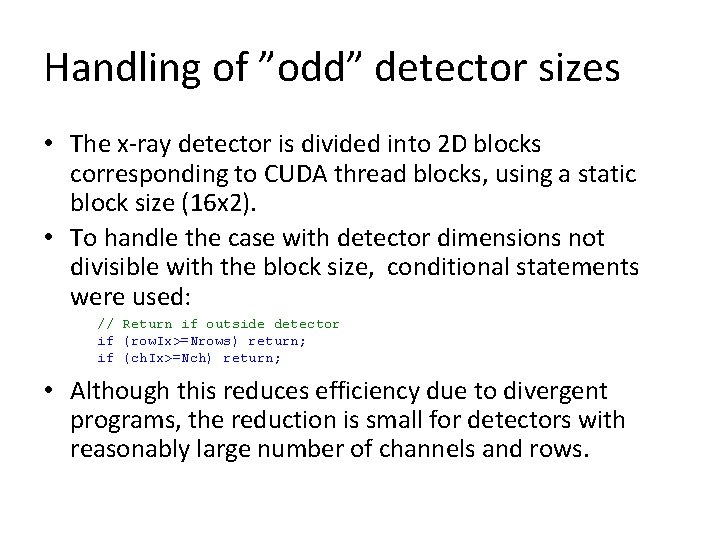

Handling of ”odd” detector sizes • The x-ray detector is divided into 2 D blocks corresponding to CUDA thread blocks, using a static block size (16 x 2). • To handle the case with detector dimensions not divisible with the block size, conditional statements were used: // Return if outside detector if (row. Ix>=Nrows) return; if (ch. Ix>=Nch) return; • Although this reduces efficiency due to divergent programs, the reduction is small for detectors with reasonably large number of channels and rows.

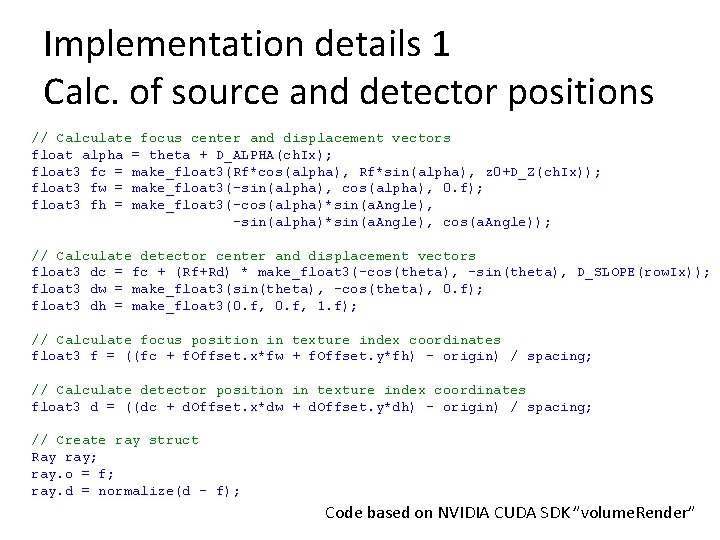

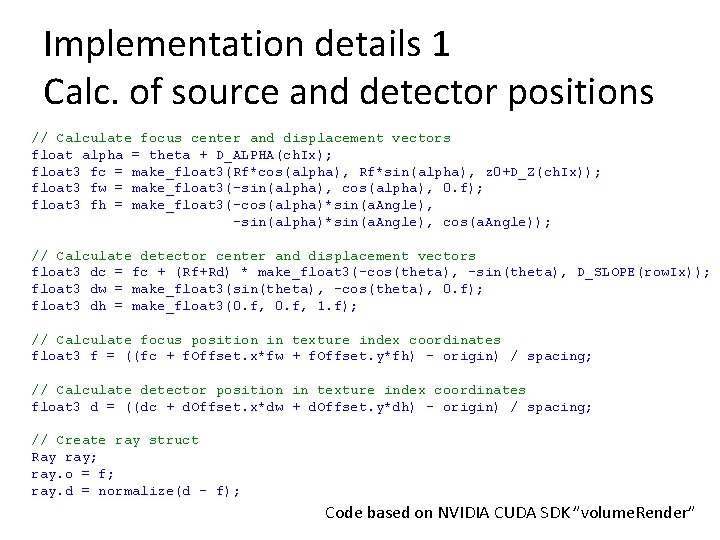

Implementation details 1 Calc. of source and detector positions // Calculate focus center and displacement vectors float alpha = theta + D_ALPHA(ch. Ix); float 3 fc = make_float 3(Rf*cos(alpha), Rf*sin(alpha), z 0+D_Z(ch. Ix)); float 3 fw = make_float 3(-sin(alpha), cos(alpha), 0. f); float 3 fh = make_float 3(-cos(alpha)*sin(a. Angle), -sin(alpha)*sin(a. Angle), cos(a. Angle)); // Calculate detector center and displacement vectors float 3 dc = fc + (Rf+Rd) * make_float 3(-cos(theta), -sin(theta), D_SLOPE(row. Ix)); float 3 dw = make_float 3(sin(theta), -cos(theta), 0. f); float 3 dh = make_float 3(0. f, 1. f); // Calculate focus position in texture index coordinates float 3 f = ((fc + f. Offset. x*fw + f. Offset. y*fh) - origin) / spacing; // Calculate detector position in texture index coordinates float 3 d = ((dc + d. Offset. x*dw + d. Offset. y*dh) - origin) / spacing; // Create ray struct Ray ray; ray. o = f; ray. d = normalize(d - f); Code based on NVIDIA CUDA SDK ”volume. Render”

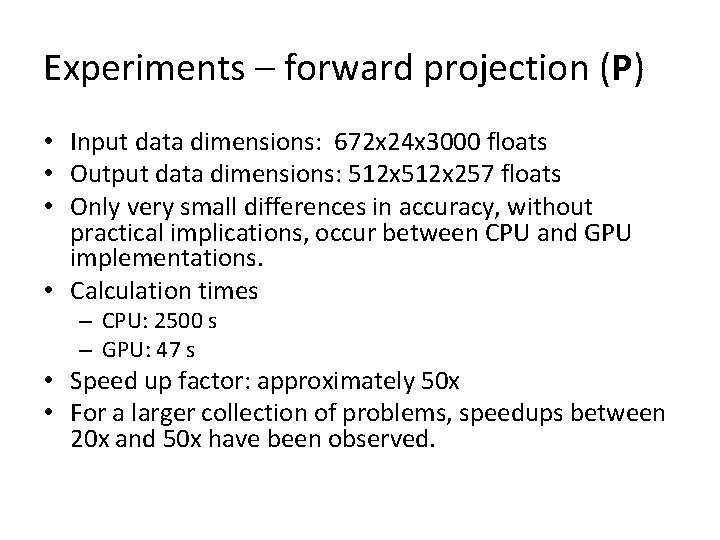

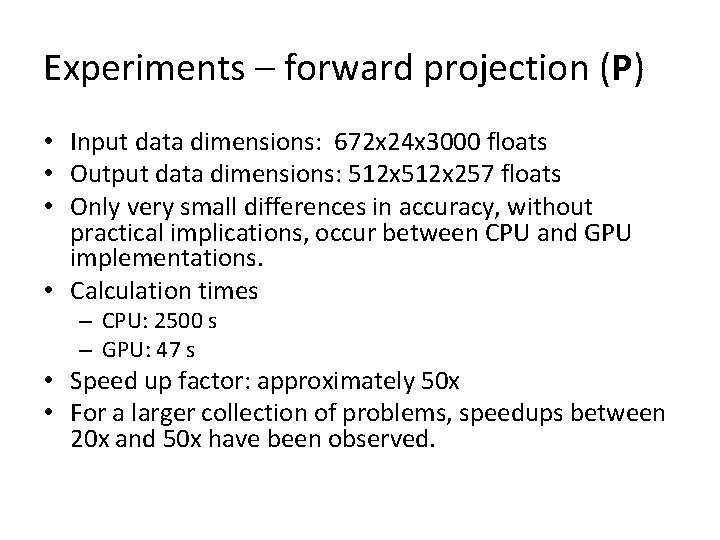

Implementation details 2 Accumulation of contributions // Accumulate contributions along ray float d. Value = 0; float t = tnear; for(int i=0; i<dimensions. x; i++) { // Calculate current position float 3 pos = ray. o + ray. d*t + 0. 5 f; // texture origin: (0. 5 f, 0. 5 f) // read from 3 D texture d. Value += tex 3 D(dev. T_recon. Volume, pos. x, pos. y, pos. z); // increase t t += tstep; if (t >= tfar) break; } // Update detector data value d. Value *= length((ray. d*spacing))*tstep; projection. Data[row. Ix*Nch+ch. Ix] += d. Value; Code based on NVIDIA CUDA SDK ”volume. Render”

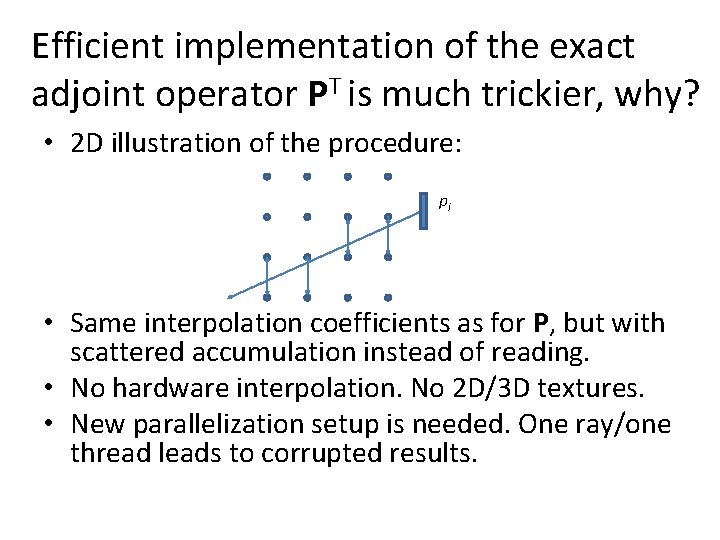

Experiments – forward projection (P) • Input data dimensions: 672 x 24 x 3000 floats • Output data dimensions: 512 x 257 floats • Only very small differences in accuracy, without practical implications, occur between CPU and GPU implementations. • Calculation times – CPU: 2500 s – GPU: 47 s • Speed up factor: approximately 50 x • For a larger collection of problems, speedups between 20 x and 50 x have been observed.

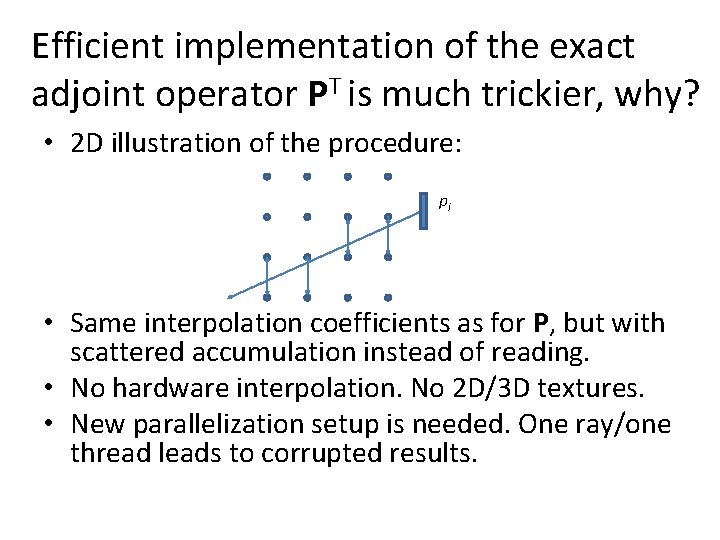

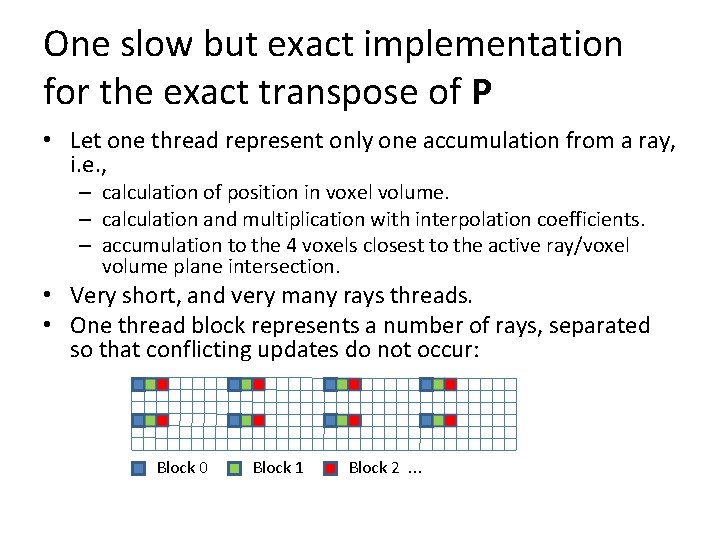

Efficient implementation of the exact adjoint operator PT is much trickier, why? • 2 D illustration of the procedure: pi • Same interpolation coefficients as for P, but with scattered accumulation instead of reading. • No hardware interpolation. No 2 D/3 D textures. • New parallelization setup is needed. One ray/one thread leads to corrupted results.

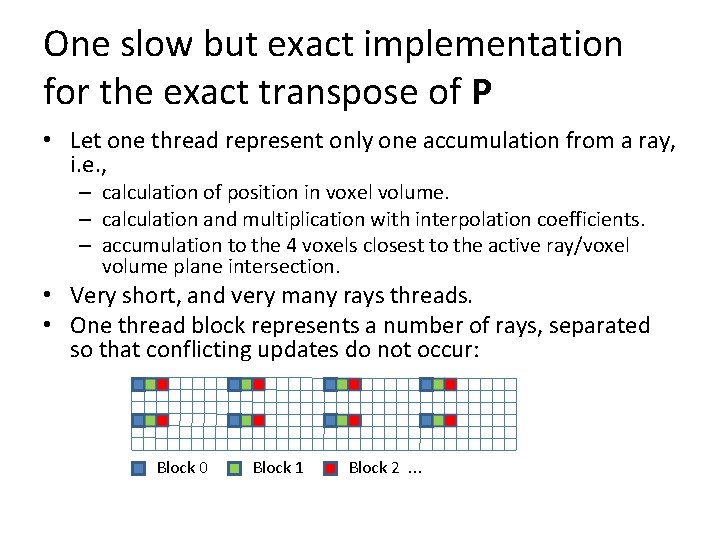

One slow but exact implementation for the exact transpose of P • Let one thread represent only one accumulation from a ray, i. e. , – calculation of position in voxel volume. – calculation and multiplication with interpolation coefficients. – accumulation to the 4 voxels closest to the active ray/voxel volume plane intersection. • Very short, and very many rays threads. • One thread block represents a number of rays, separated so that conflicting updates do not occur: Block 0 Block 1 Block 2. . .

Experiments – backprojection (PT) • Input data dimensions: 512 x 257 floats • Output data dimensions: 672 x 24 x 3000 floats • Calculation times – CPU: 2700 s – GPU: 700 s • Speed up factor: approximately 4 x • For a larger collection of problems, speedups between 4 x and 8 x have been observed.

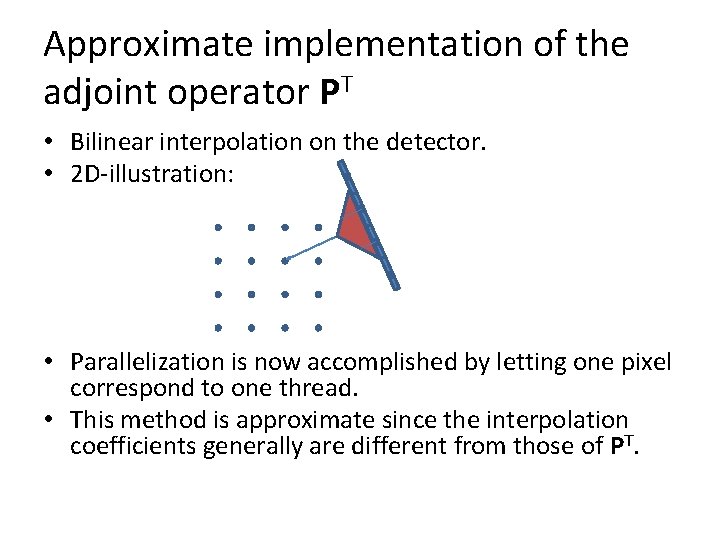

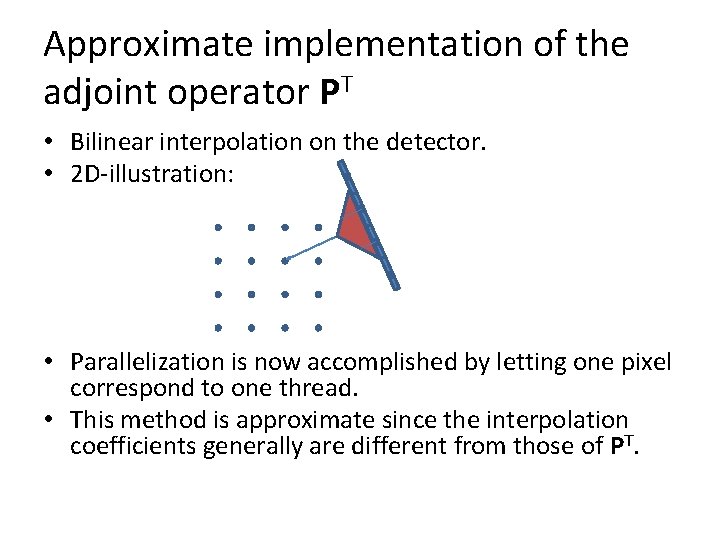

Approximate implementation of the adjoint operator PT • Bilinear interpolation on the detector. • 2 D-illustration: • Parallelization is now accomplished by letting one pixel correspond to one thread. • This method is approximate since the interpolation coefficients generally are different from those of PT.

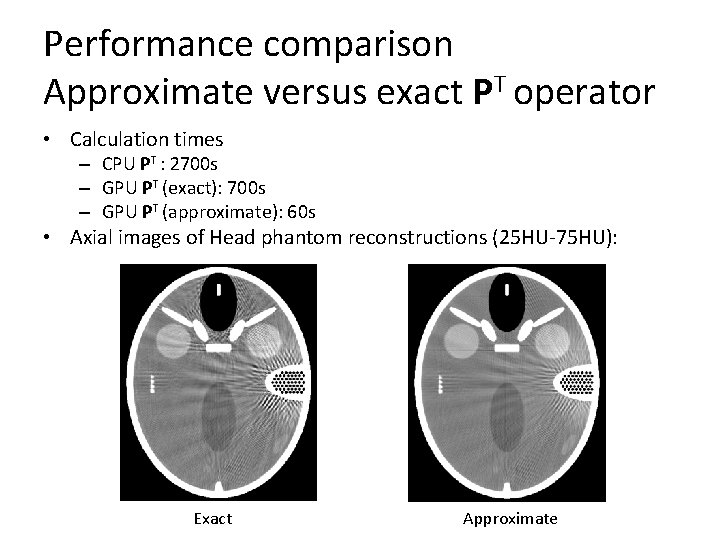

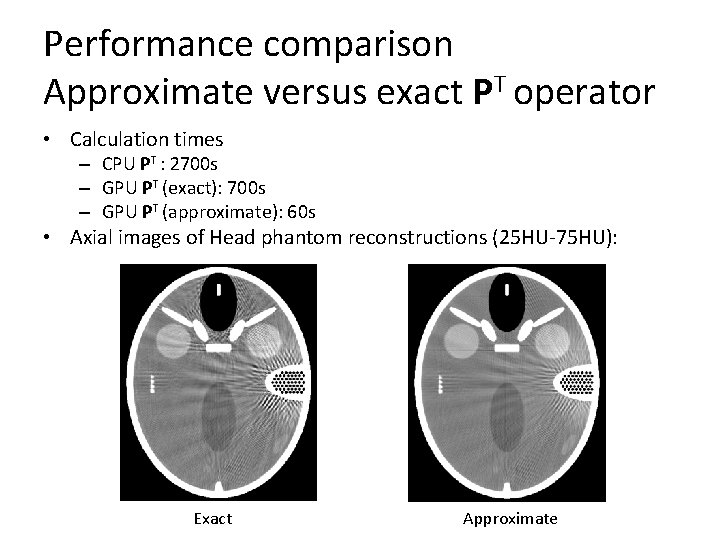

Performance comparison Approximate versus exact PT operator • Calculation times – CPU PT : 2700 s – GPU PT (exact): 700 s – GPU PT (approximate): 60 s • Axial images of Head phantom reconstructions (25 HU-75 HU): Exact Approximate

Conclusions and future research • For operations such as P and PT, speedups of 4 x to 50 x can be obtained. • The amount of speedup is highly dependent on – The possibility to efficiently read memory (coalesced or by the use of texture caches / constant memory). – The possibility to use hardware interpolation – Complexity of the kernel. Using too many registers slows down the program. Lookup tables in constant memory can help reducing the amount of registers. • Although scattered write is supported by CUDA, for these problemes, it did not seem good to use it from a performance point of view. Note that this prohibits using cuda. Array textures. • Remark: Even if the approximate PT operator give a bad result in the example given here, there are other situations where the exact operator is superior. It is therefore of interest to find better approximations.