Impact Evaluation: Measuring Impact

- Slides: 42

Impact Evaluation

Measuring Impact: Impact Evaluation Methods for Policy Makers

Paul Gertler, UC Berkeley

Note: slides by Sebastian Martinez, Christel Vermeersch and Paul Gertler. The content of this presentation reflects the views of the authors and not necessarily those of the World Bank. This version: November 2009.

Human Development Network, Middle East and North Africa Region, World Bank Institute

Impact Evaluation

- Logical Framework
  - How the program works “in theory”
- Measuring Impact
  - Identification Strategy
- Data
- Operational Plan
- Resources

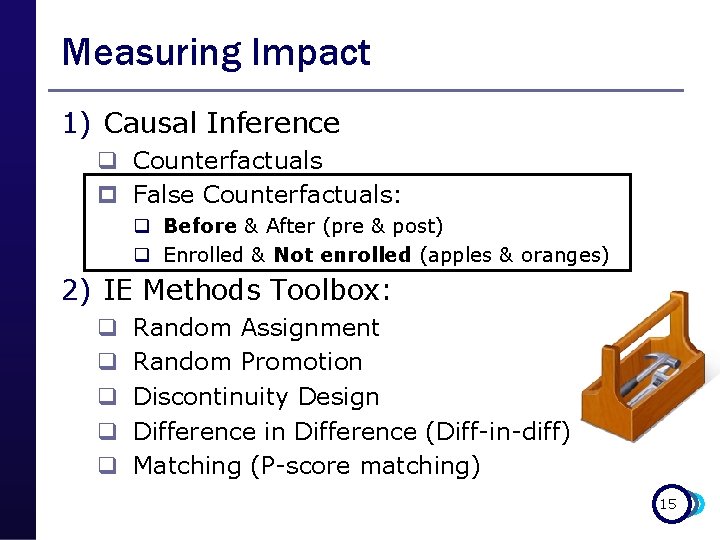

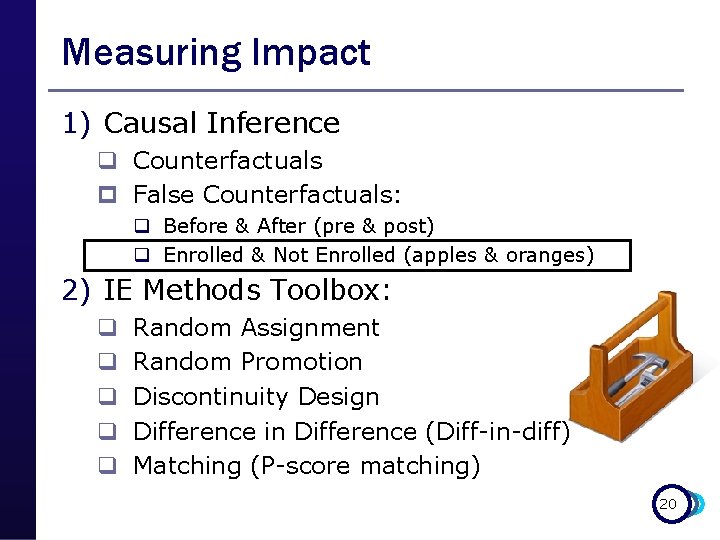

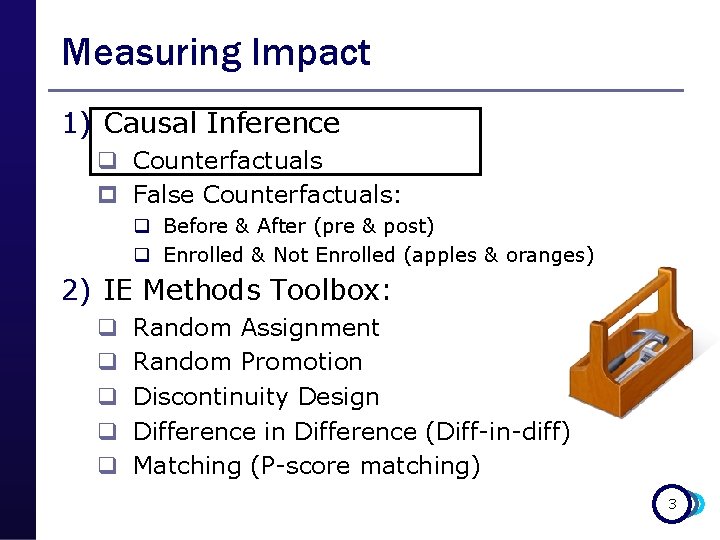

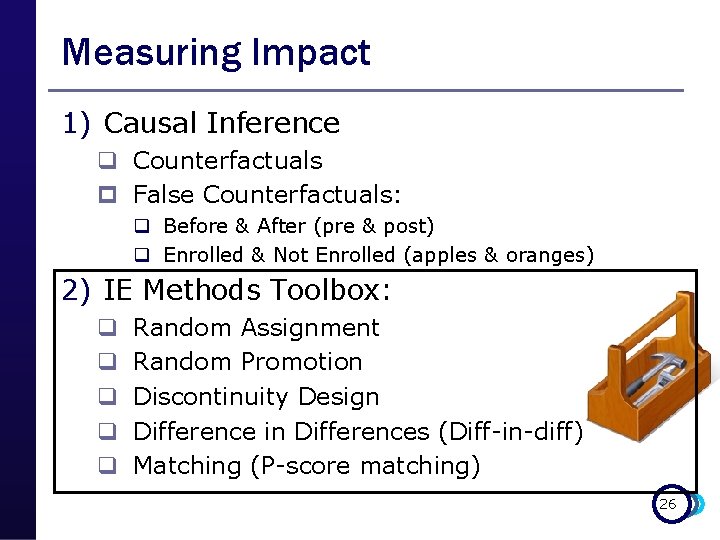

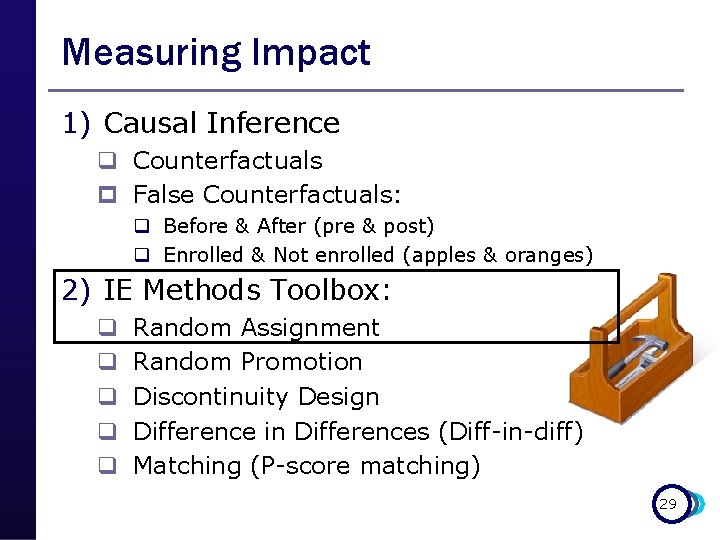

Measuring Impact

1) Causal Inference
   - Counterfactuals
   - False Counterfactuals:
     - Before & After (pre & post)
     - Enrolled & Not Enrolled (apples & oranges)
2) IE Methods Toolbox:
   - Random Assignment
   - Random Promotion
   - Discontinuity Design
   - Difference in Differences (Diff-in-diff)
   - Matching (P-score matching)

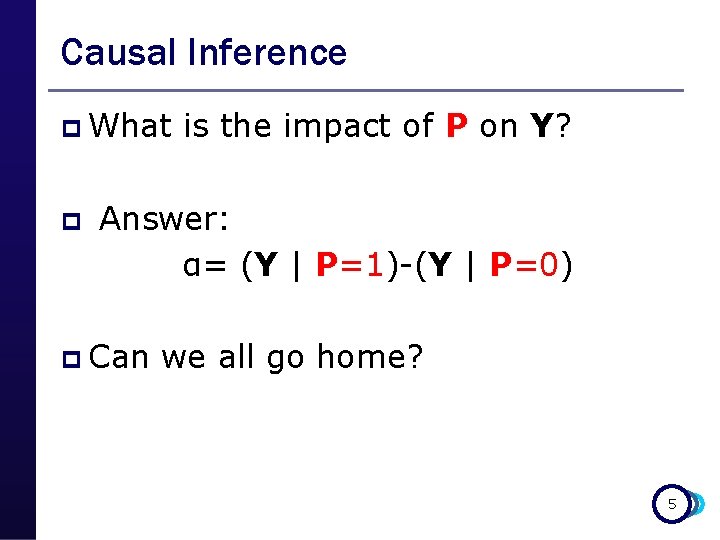

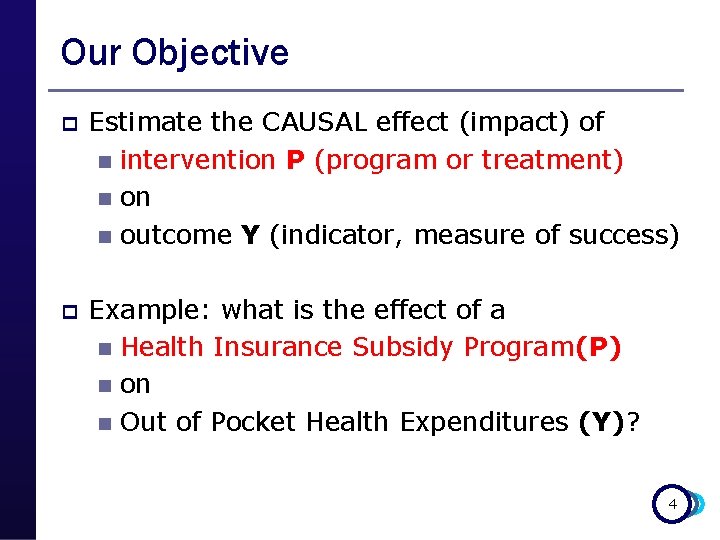

Our Objective

- Estimate the CAUSAL effect (impact) of an intervention P (program or treatment) on an outcome Y (indicator, measure of success)
- Example: what is the effect of a Health Insurance Subsidy Program (P) on Out of Pocket Health Expenditures (Y)?

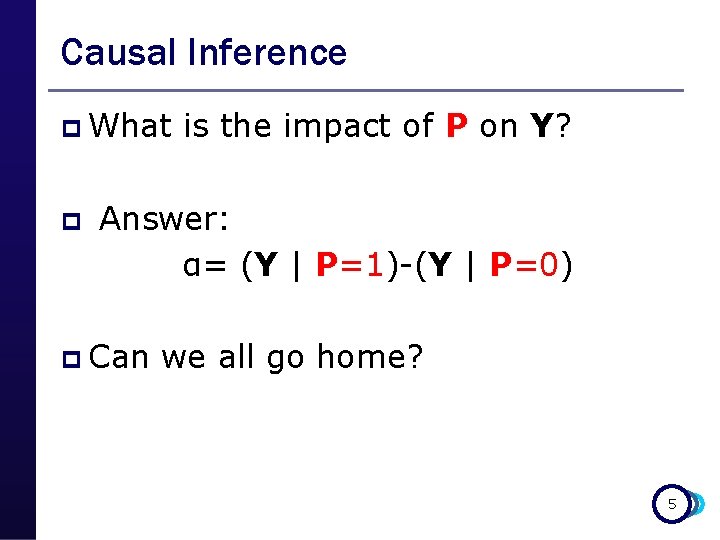

Causal Inference

- What is the impact of P on Y?
- Answer: α = (Y | P=1) - (Y | P=0)
- Can we all go home?

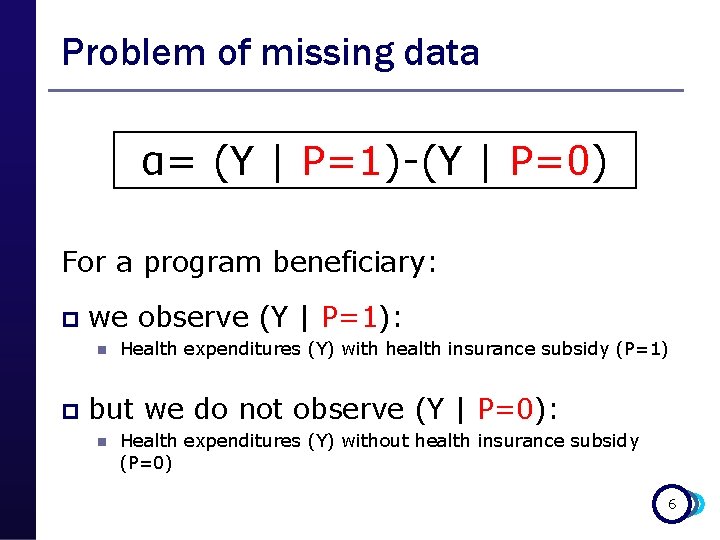

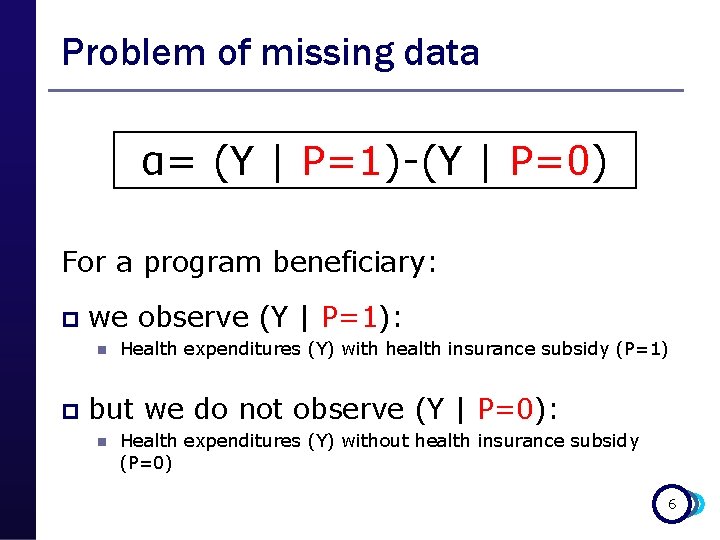

Problem of missing data

α = (Y | P=1) - (Y | P=0)

For a program beneficiary:

- we observe (Y | P=1): health expenditures (Y) with health insurance subsidy (P=1)
- but we do not observe (Y | P=0): health expenditures (Y) without health insurance subsidy (P=0)

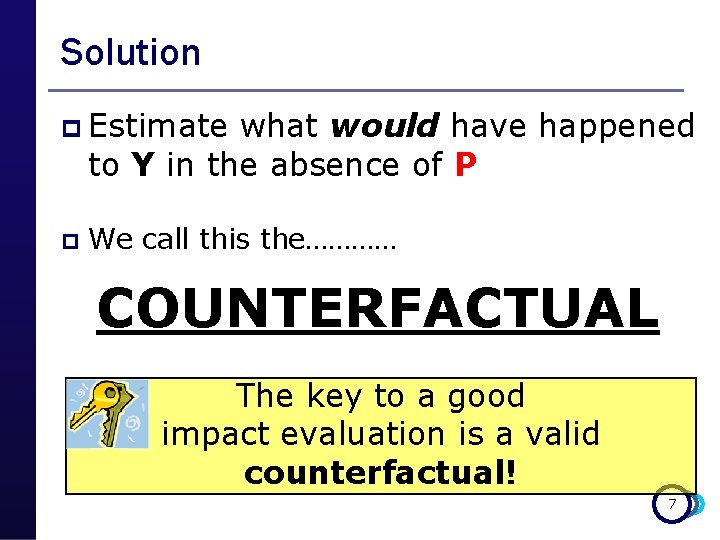

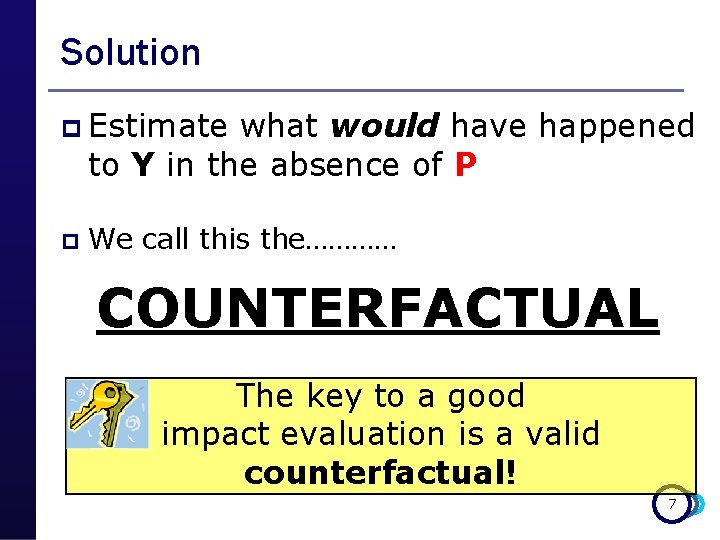

Solution

- Estimate what would have happened to Y in the absence of P
- We call this the COUNTERFACTUAL

The key to a good impact evaluation is a valid counterfactual!

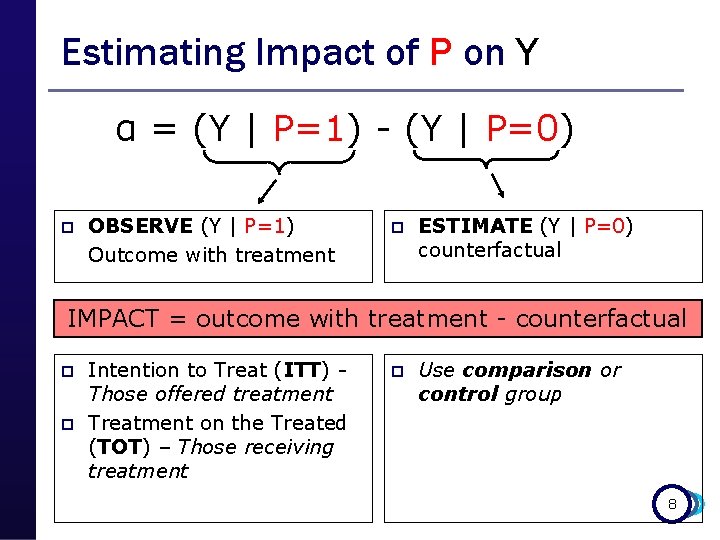

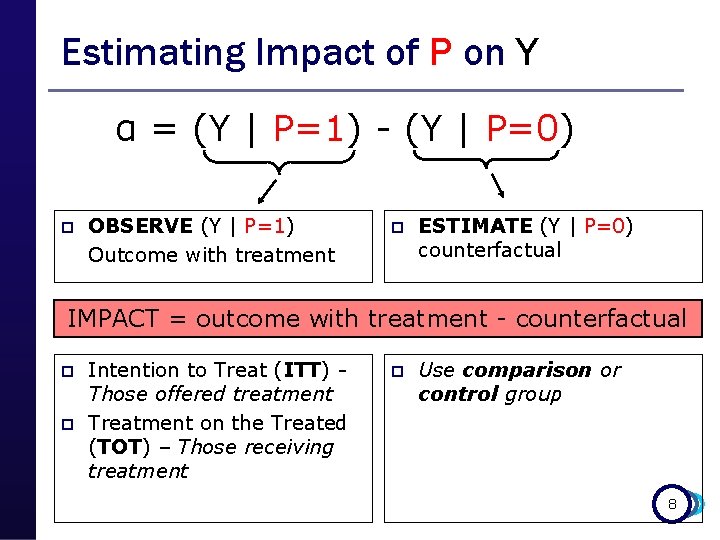

Estimating Impact of P on Y

α = (Y | P=1) - (Y | P=0)

- OBSERVE (Y | P=1): outcome with treatment
- ESTIMATE (Y | P=0): counterfactual
- IMPACT = outcome with treatment - counterfactual
- Intention to Treat (ITT): those offered treatment
- Treatment on the Treated (TOT): those receiving treatment
- Use comparison or control group

Example: What is the impact of giving Fulanito additional pocket money (P) on Fulanito’s consumption of candies (Y)?

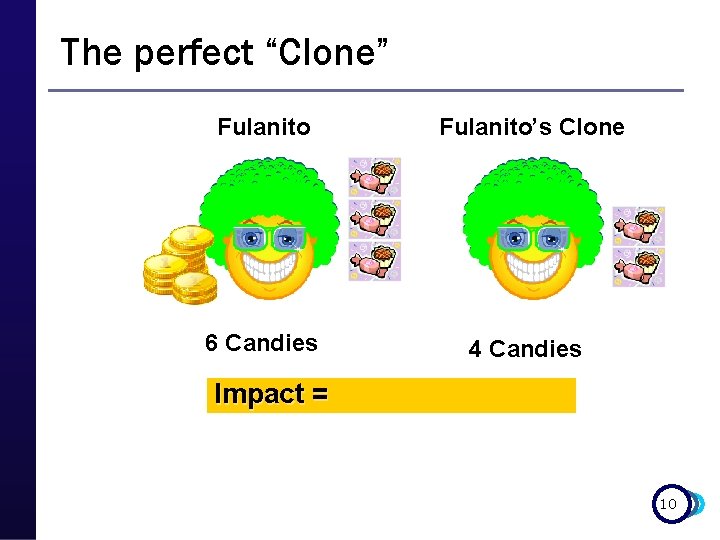

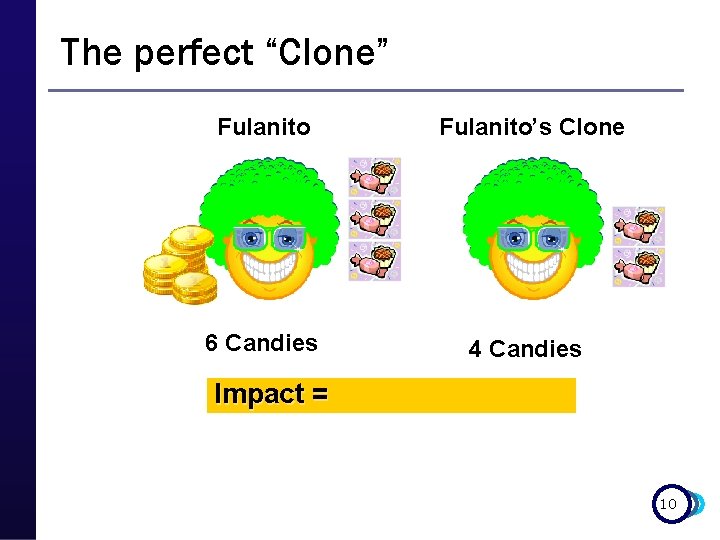

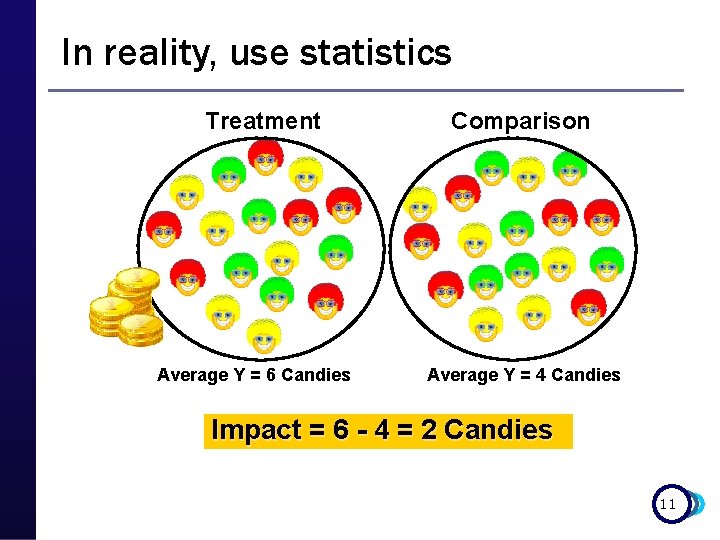

The perfect “Clone”

- Fulanito: 6 Candies
- Fulanito’s Clone: 4 Candies

Impact = 6 - 4 = 2 Candies

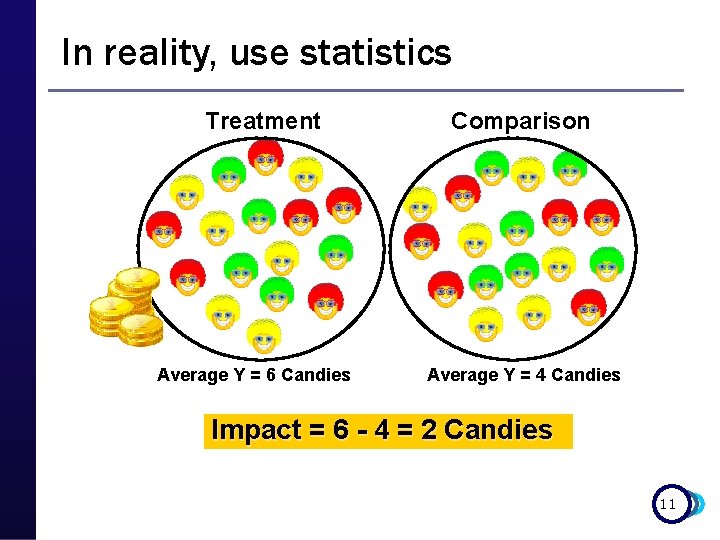

In reality, use statistics

- Treatment: Average Y = 6 Candies
- Comparison: Average Y = 4 Candies

Impact = 6 - 4 = 2 Candies
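The comparison of group averages can be sketched in a few lines of Python. The individual candy counts below are hypothetical values chosen only so that the group averages match the slide (6 and 4), and the function name `difference_in_means` is an illustrative assumption.

```python
from statistics import mean

def difference_in_means(treatment, comparison):
    """Estimated impact = average outcome with treatment - average comparison outcome."""
    return mean(treatment) - mean(comparison)

# Hypothetical candy counts (not from the slides), chosen to average 6 and 4.
treatment_candies = [5, 6, 7, 6, 6]
comparison_candies = [3, 4, 5, 4, 4]

impact = difference_in_means(treatment_candies, comparison_candies)
print(f"Impact = {impact} candies")
```

The estimate is only as good as the comparison group: the subtraction is the same whether or not the comparison group is a valid counterfactual.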

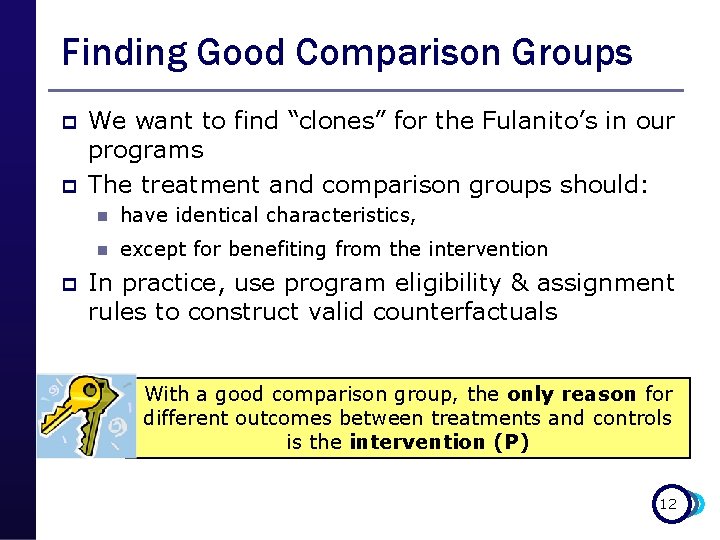

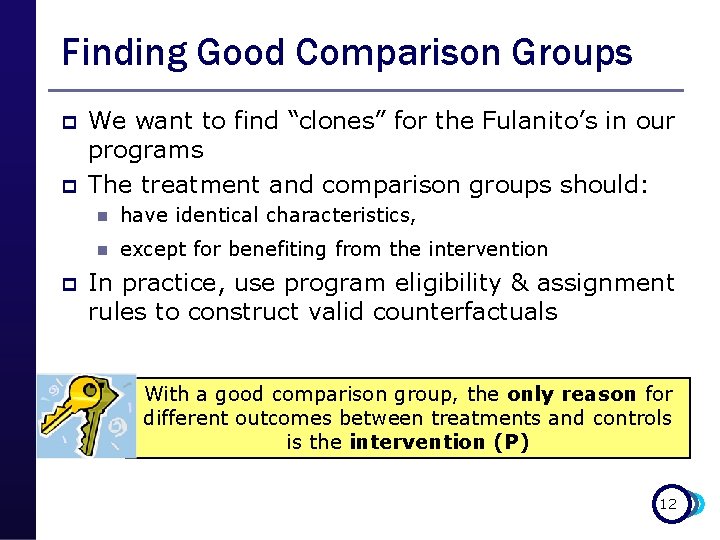

Finding Good Comparison Groups

- We want to find “clones” for the Fulanitos in our programs
- The treatment and comparison groups should:
  - have identical characteristics,
  - except for benefiting from the intervention
- In practice, use program eligibility & assignment rules to construct valid counterfactuals

With a good comparison group, the only reason for different outcomes between treatments and controls is the intervention (P)

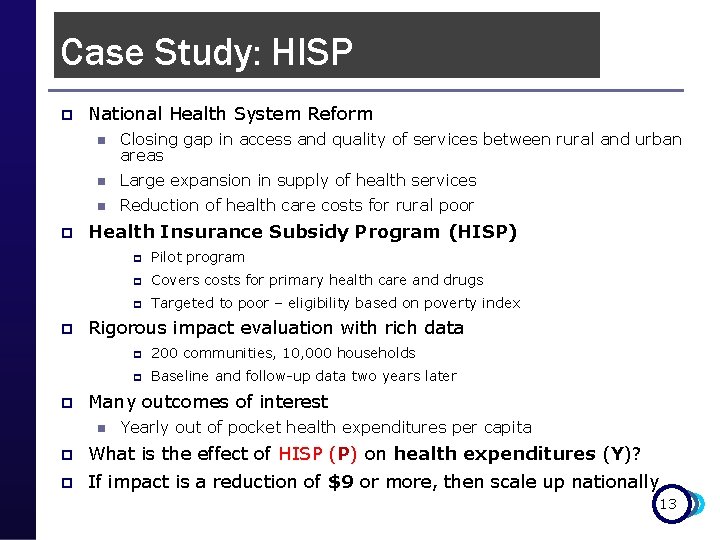

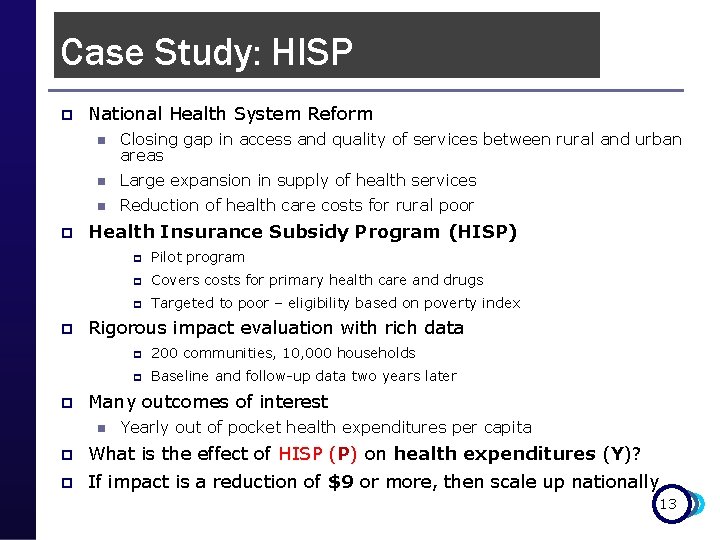

Case Study: HISP

- National Health System Reform
  - Closing gap in access and quality of services between rural and urban areas
  - Large expansion in supply of health services
  - Reduction of health care costs for rural poor
- Health Insurance Subsidy Program (HISP)
  - Pilot program
  - Covers costs for primary health care and drugs
  - Targeted to poor: eligibility based on poverty index
- Rigorous impact evaluation with rich data
  - 200 communities, 10,000 households
  - Baseline and follow-up data two years later
- Many outcomes of interest
  - Yearly out of pocket health expenditures per capita
- What is the effect of HISP (P) on health expenditures (Y)?
- If impact is a reduction of $9 or more, then scale up nationally

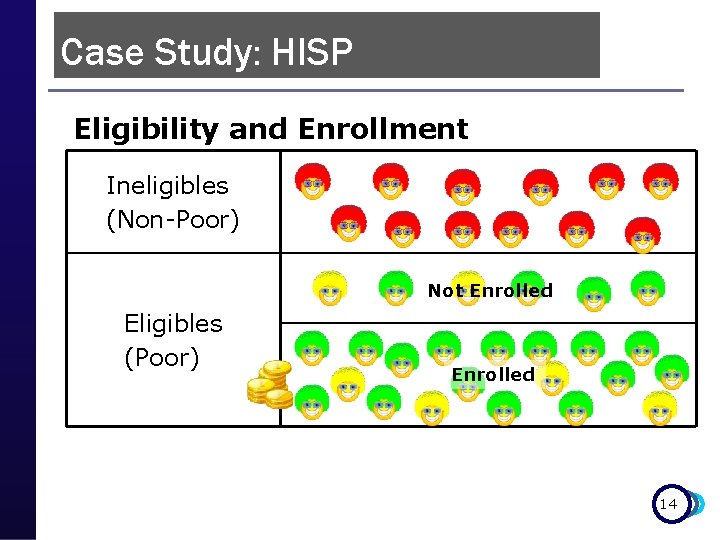

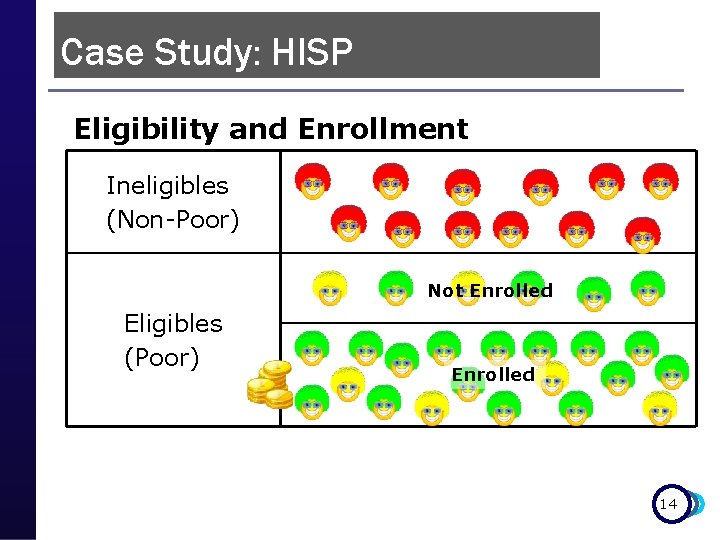

Case Study: HISP Eligibility and Enrollment

[Figure: population of Ineligibles (Non-Poor) and Eligibles (Poor), with households marked as Enrolled or Not Enrolled]

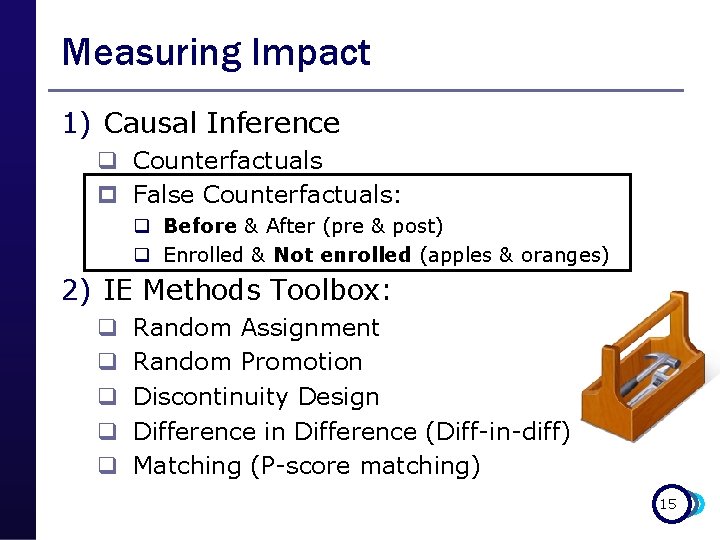


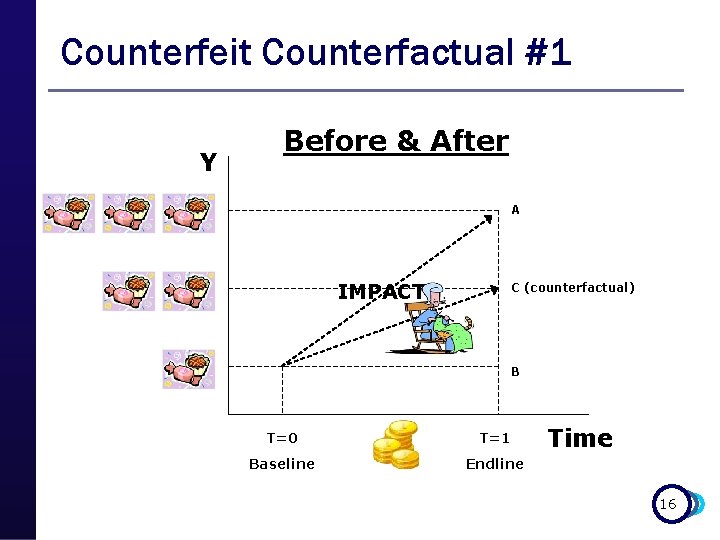

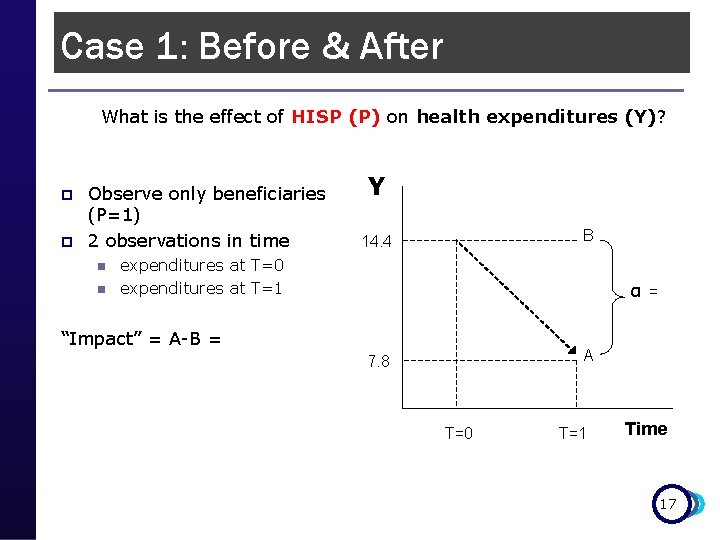

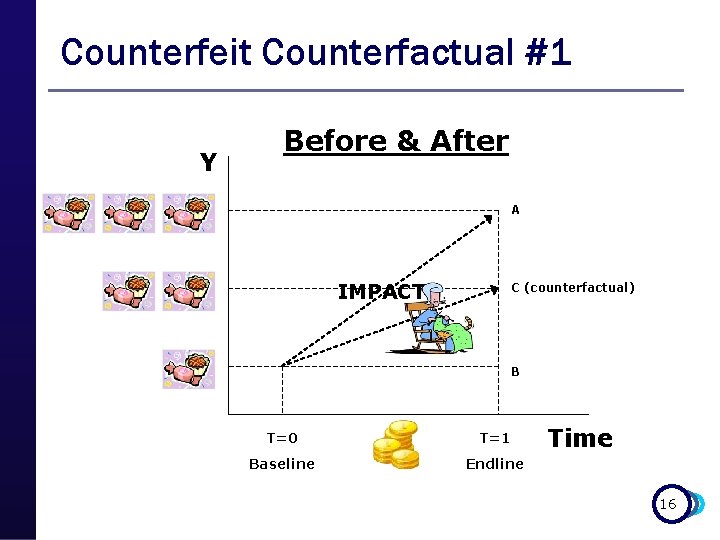

Counterfeit Counterfactual #1: Before & After

[Figure: outcome Y against time, from baseline (T=0) to endline (T=1), with points A, B and C (counterfactual) and the gap labelled “IMPACT?”]

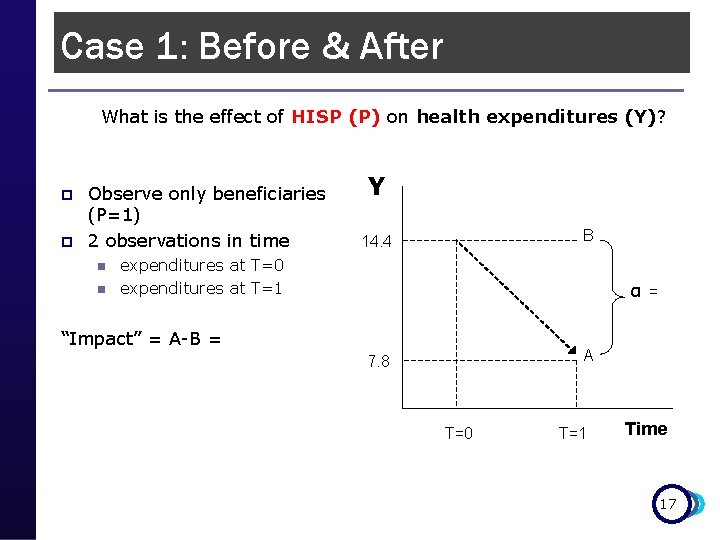

Case 1: Before & After

What is the effect of HISP (P) on health expenditures (Y)?

- Observe only beneficiaries (P=1)
- 2 observations in time
  - expenditures at T=0
  - expenditures at T=1
- α = “Impact” = A - B = 7.8 - 14.4 = -6.6

[Figure: Y against time, with B = 14.4 at T=0 and A = 7.8 at T=1]

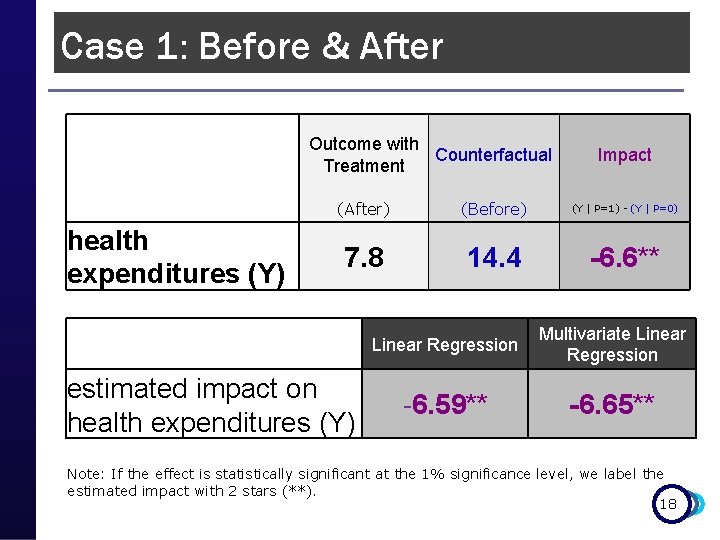

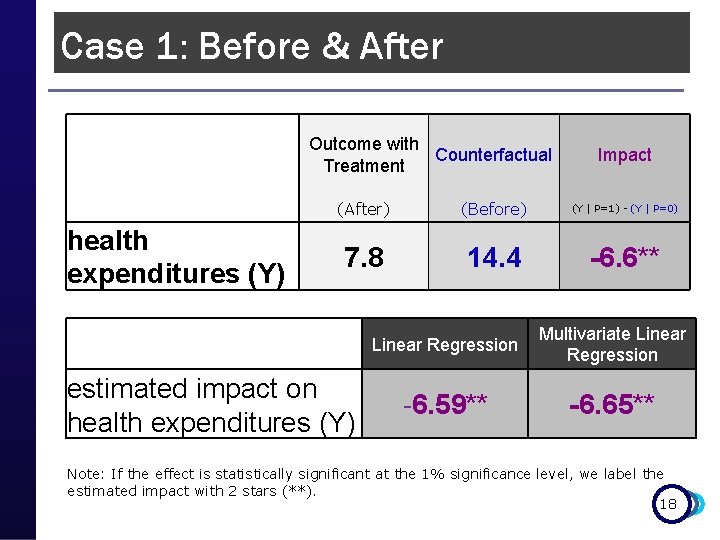

Case 1: Before & After

| | Outcome with Treatment (After) | Counterfactual (Before) | Impact |
|---|---|---|---|
| health expenditures (Y) | 7.8 | 14.4 | -6.6** |

Impact = (Y | P=1) - (Y | P=0)

| | Linear Regression | Multivariate Linear Regression |
|---|---|---|
| estimated impact on health expenditures (Y) | -6.59** | -6.65** |

Note: If the effect is statistically significant at the 1% significance level, we label the estimated impact with 2 stars (**).

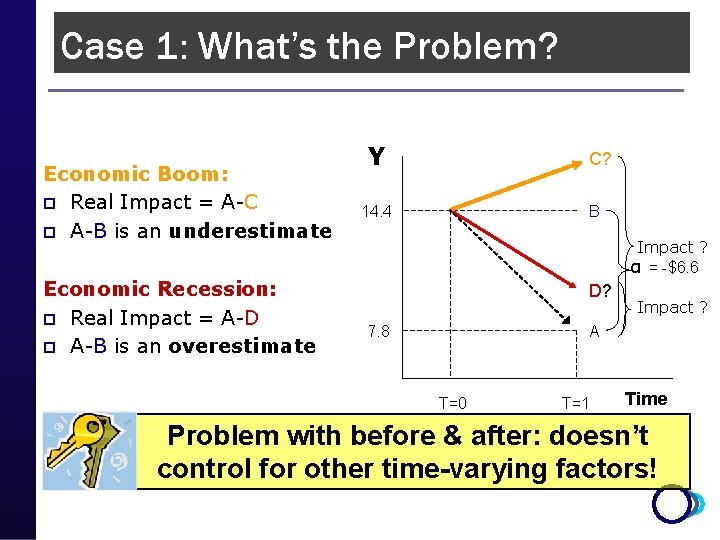

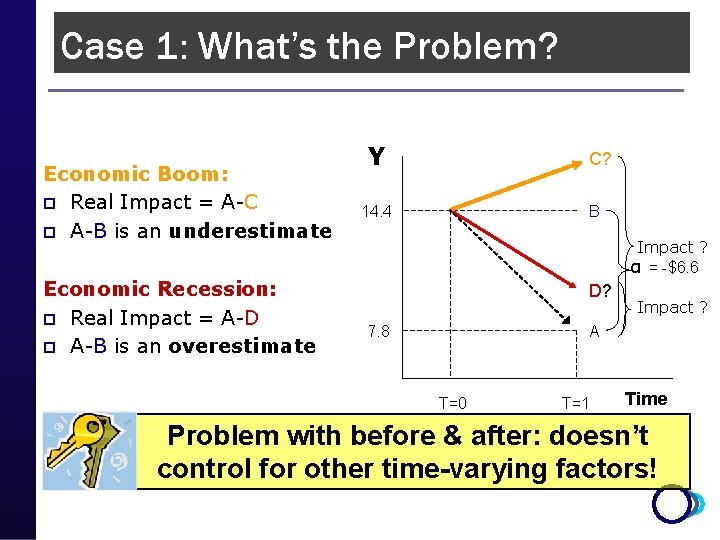

Case 1: What’s the Problem?

- Economic Boom:
  - Real Impact = A-C
  - A-B is an underestimate
- Economic Recession:
  - Real Impact = A-D
  - A-B is an overestimate

[Figure: Y against time, with B = 14.4 at T=0 and A = 7.8 at T=1; C? and D? are possible counterfactuals at T=1; α = -$6.6]

Problem with before & after: doesn’t control for other time-varying factors!
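The boom and recession cases can be made concrete with a small Python sketch. A and B are the values from the slides; the counterfactual levels `C_boom` and `D_recession` are hypothetical numbers picked only to illustrate the direction of the bias, and the function names are illustrative.

```python
def before_after_estimate(y_after, y_before):
    """Naive estimate: treats the baseline value as the counterfactual."""
    return y_after - y_before

def real_impact(y_after, y_counterfactual):
    """Impact measured against what would have happened without the program."""
    return y_after - y_counterfactual

A, B = 7.8, 14.4       # observed expenditures at T=1 and T=0 (from the slides)
C_boom = 16.0          # hypothetical: spending without the program after a boom
D_recession = 11.0     # hypothetical: spending without the program after a recession

naive = before_after_estimate(A, B)
print(f"before-after estimate: {naive:.1f}")
print(f"boom, real impact:      {real_impact(A, C_boom):.1f}")
print(f"recession, real impact: {real_impact(A, D_recession):.1f}")
```

Under these assumed counterfactuals the before-after figure understates the reduction in the boom case and overstates it in the recession case, which is the pattern the slide describes.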


False Counterfactual #2: Enrolled & Not Enrolled

- If we have post-treatment data on
  - Enrolled: treatment group
  - Not-enrolled: “control” group (counterfactual)
    - Those ineligible to participate
    - Those that choose NOT to participate
- Selection Bias
  - Reason for not enrolling may be correlated with outcome (Y)
    - Control for observables
    - But not unobservables!!
  - Estimated impact is confounded with other things

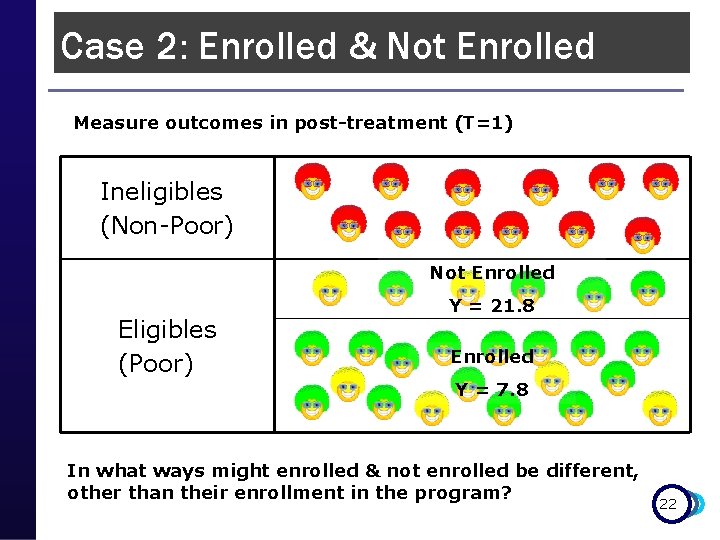

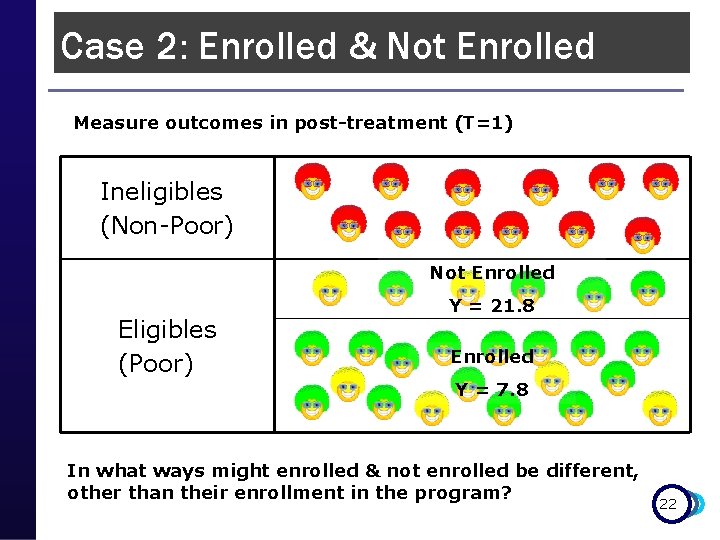

Case 2: Enrolled & Not Enrolled

Measure outcomes in post-treatment (T=1)

[Figure: Ineligibles (Non-Poor) and Eligibles (Poor); Not Enrolled: Y = 21.8; Enrolled: Y = 7.8]

In what ways might enrolled & not enrolled be different, other than their enrollment in the program?

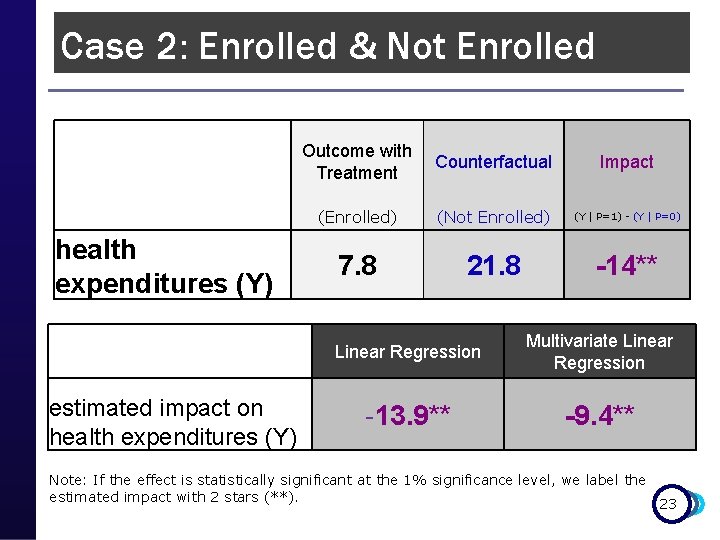

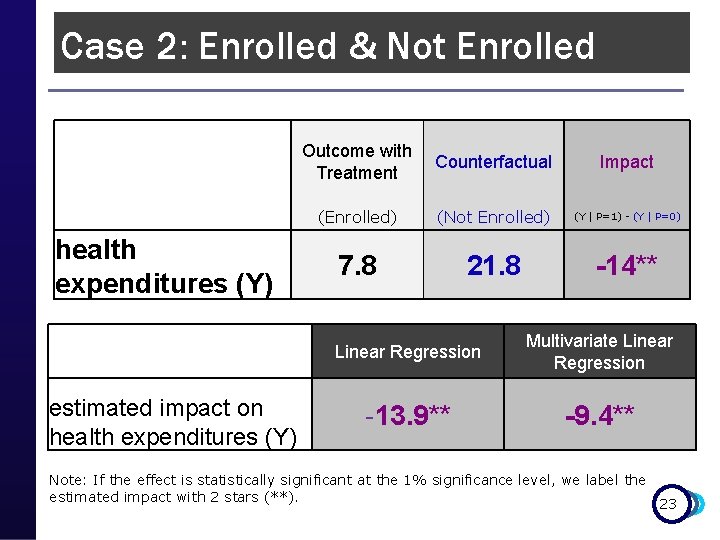

Case 2: Enrolled & Not Enrolled

| | Outcome with Treatment (Enrolled) | Counterfactual (Not Enrolled) | Impact |
|---|---|---|---|
| health expenditures (Y) | 7.8 | 21.8 | -14** |

Impact = (Y | P=1) - (Y | P=0)

| | Linear Regression | Multivariate Linear Regression |
|---|---|---|
| estimated impact on health expenditures (Y) | -13.9** | -9.4** |

Note: If the effect is statistically significant at the 1% significance level, we label the estimated impact with 2 stars (**).
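A small simulation can show how an enrolled versus not-enrolled comparison goes wrong when enrollment depends on something that also drives the outcome. Everything in this sketch is an assumption made for illustration (the data-generating process, the wealth variable, the true effect of -10); it is not the HISP data.

```python
import random
from statistics import mean

rng = random.Random(0)   # fixed seed so the sketch is reproducible
TRUE_EFFECT = -10.0      # assumed true program effect (hypothetical)

enrolled, not_enrolled = [], []
for _ in range(10_000):
    wealth = rng.random()                                   # 0 = poorest, 1 = richest
    spending_without = 20 + 12 * wealth + rng.gauss(0, 1)   # assumed: richer households spend more
    if wealth < 0.5:                                        # only poorer households enroll
        enrolled.append(spending_without + TRUE_EFFECT)
    else:
        not_enrolled.append(spending_without)

naive_estimate = mean(enrolled) - mean(not_enrolled)
print(f"true effect:    {TRUE_EFFECT:.1f}")
print(f"naive estimate: {naive_estimate:.1f}")
```

Because the not-enrolled group would have spent more even without the program, the naive difference mixes the program effect with the pre-existing gap between the groups.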

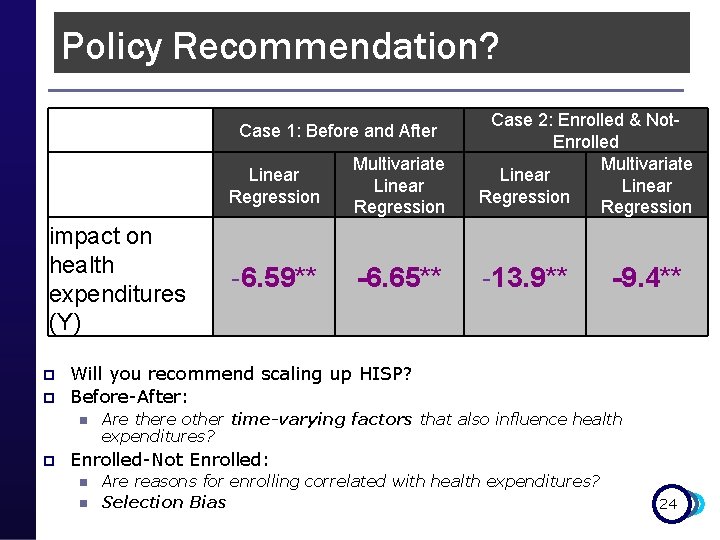

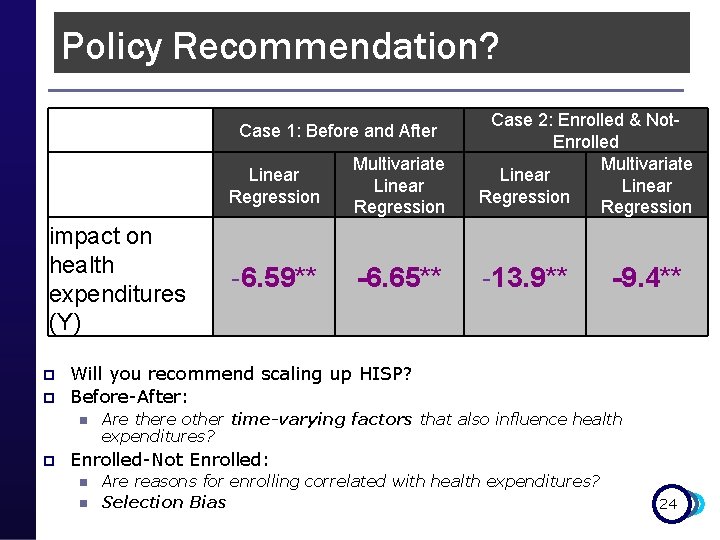

Policy Recommendation?

Impact on health expenditures (Y):

| | Linear Regression | Multivariate Linear Regression |
|---|---|---|
| Case 1: Before and After | -6.59** | -6.65** |
| Case 2: Enrolled & Not Enrolled | -13.9** | -9.4** |

- Will you recommend scaling up HISP?
- Before-After:
  - Are there other time-varying factors that also influence health expenditures?
- Enrolled-Not Enrolled:
  - Are reasons for enrolling correlated with health expenditures?
  - Selection Bias

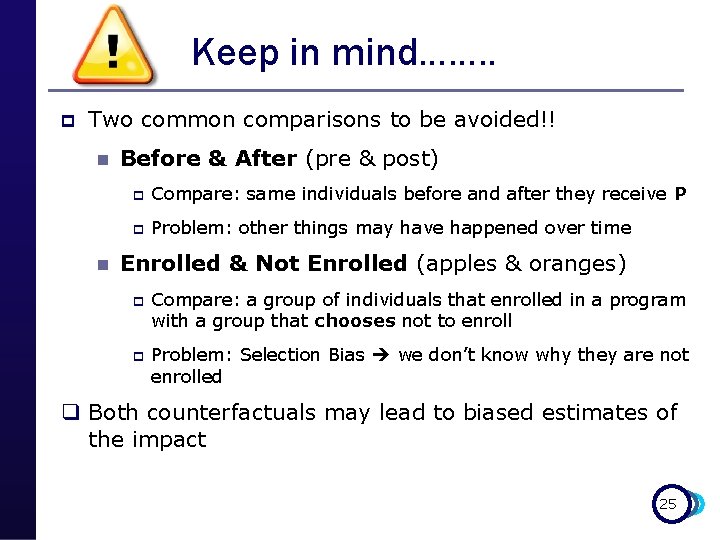

Keep in mind

- Two common comparisons to be avoided!!
  - Before & After (pre & post)
    - Compare: same individuals before and after they receive P
    - Problem: other things may have happened over time
  - Enrolled & Not Enrolled (apples & oranges)
    - Compare: a group of individuals that enrolled in a program with a group that chooses not to enroll
    - Problem: Selection Bias; we don’t know why they are not enrolled
- Both counterfactuals may lead to biased estimates of the impact


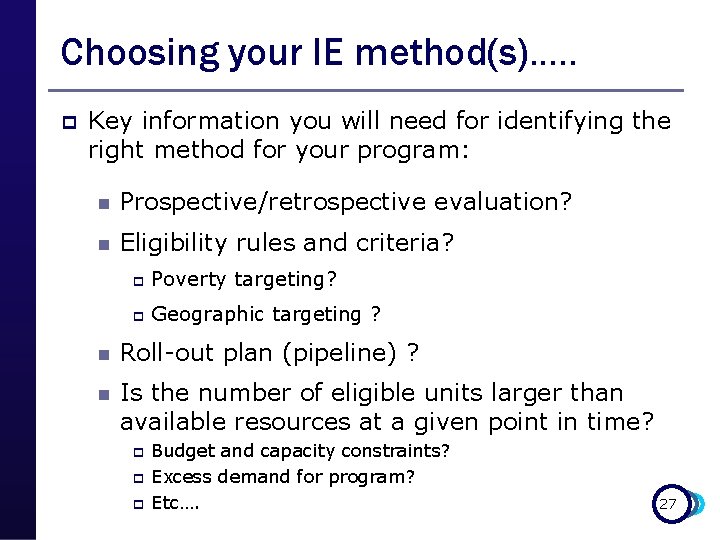

Choosing your IE method(s)

- Key information you will need for identifying the right method for your program:
  - Prospective/retrospective evaluation?
  - Eligibility rules and criteria?
    - Poverty targeting?
    - Geographic targeting?
  - Roll-out plan (pipeline)?
  - Is the number of eligible units larger than available resources at a given point in time?
    - Budget and capacity constraints?
    - Excess demand for program?
    - Etc.

Choosing your IE method(s)

Choose the “best” possible design given the operational context

- Best design = best comparison group you can find + least operational risk
- Have we controlled for “everything”?
  - Internal validity
  - Good comparison group
- Is the result valid for “everyone”?
  - External validity
  - Local versus global treatment effect
  - Evaluation results apply to population we’re interested in


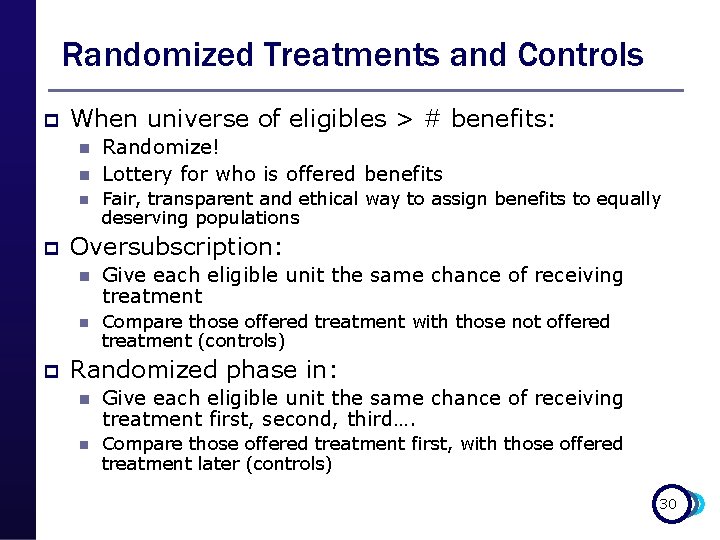

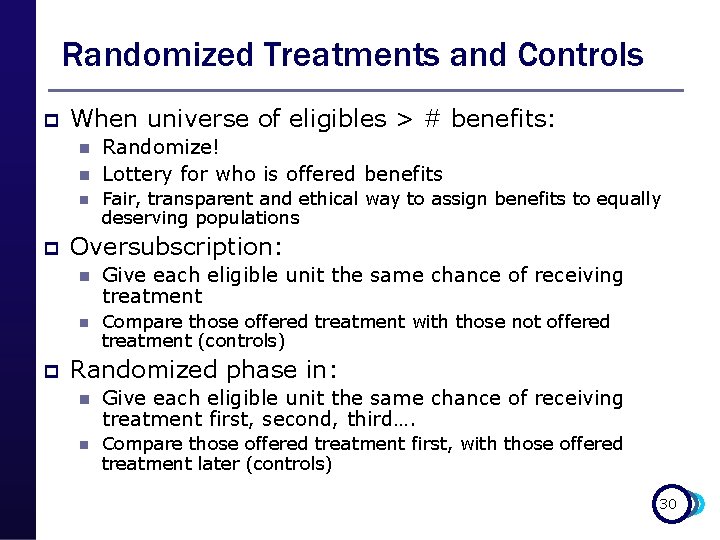

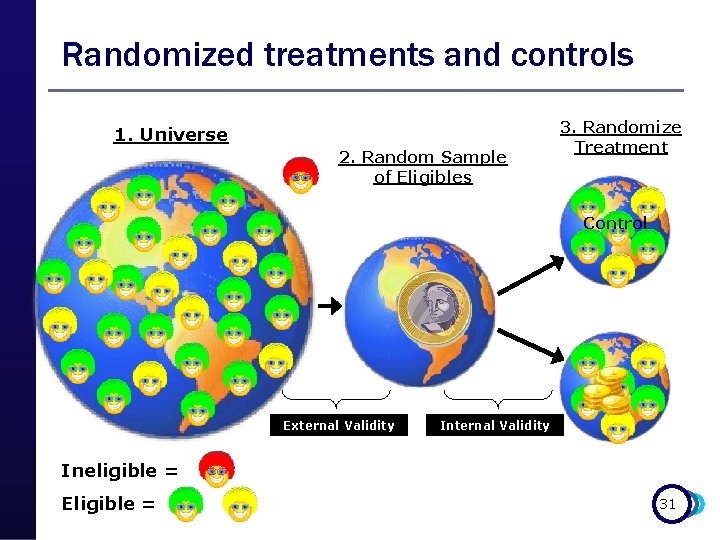

Randomized Treatments and Controls

- When universe of eligibles > # benefits:
  - Randomize!
  - Lottery for who is offered benefits
  - Fair, transparent and ethical way to assign benefits to equally deserving populations
- Oversubscription:
  - Give each eligible unit the same chance of receiving treatment
  - Compare those offered treatment with those not offered treatment (controls)
- Randomized phase in:
  - Give each eligible unit the same chance of receiving treatment first, second, third, and so on
  - Compare those offered treatment first with those offered treatment later (controls)
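Both assignment schemes can be sketched with Python's standard `random` module. The unit identifiers, the number of benefits, the number of phases and the function names `lottery` and `phase_in_order` are all hypothetical, introduced only for illustration.

```python
import random

def lottery(eligible_units, n_benefits, seed=None):
    """Oversubscription: every eligible unit has the same chance of being offered treatment."""
    rng = random.Random(seed)
    offered = set(rng.sample(eligible_units, n_benefits))
    treatment = [u for u in eligible_units if u in offered]
    control = [u for u in eligible_units if u not in offered]
    return treatment, control

def phase_in_order(eligible_units, n_phases, seed=None):
    """Randomized phase-in: shuffle units, then deal them into phases of near-equal size."""
    rng = random.Random(seed)
    shuffled = list(eligible_units)
    rng.shuffle(shuffled)
    return [shuffled[i::n_phases] for i in range(n_phases)]

units = [f"unit_{i:03d}" for i in range(20)]   # hypothetical eligible units
treatment, control = lottery(units, n_benefits=8, seed=42)
phases = phase_in_order(units, n_phases=3, seed=42)
print(len(treatment), len(control), [len(p) for p in phases])
```

Fixing and recording the seed is one way to keep the draw reproducible and auditable, which supports the transparency argument made on the slide.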

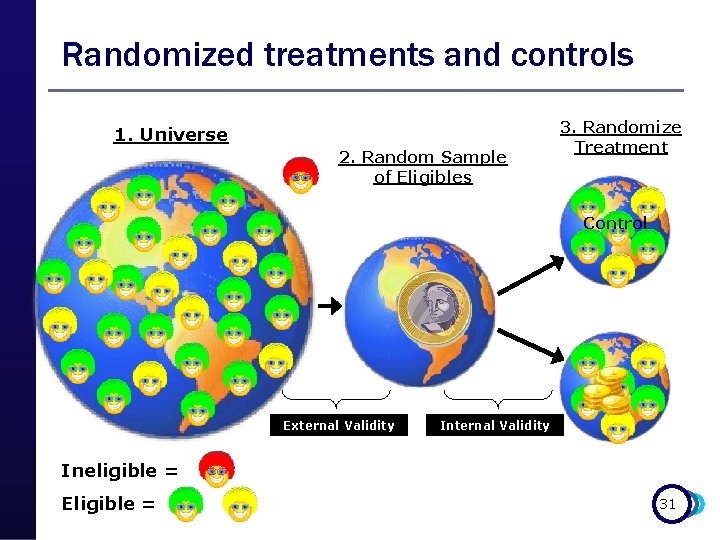

Randomized treatments and controls

1. Universe
2. Random Sample of Eligibles
3. Randomize Treatment (Treatment, Control)

[Figure labels: External Validity; legend distinguishes Ineligible and Eligible units]

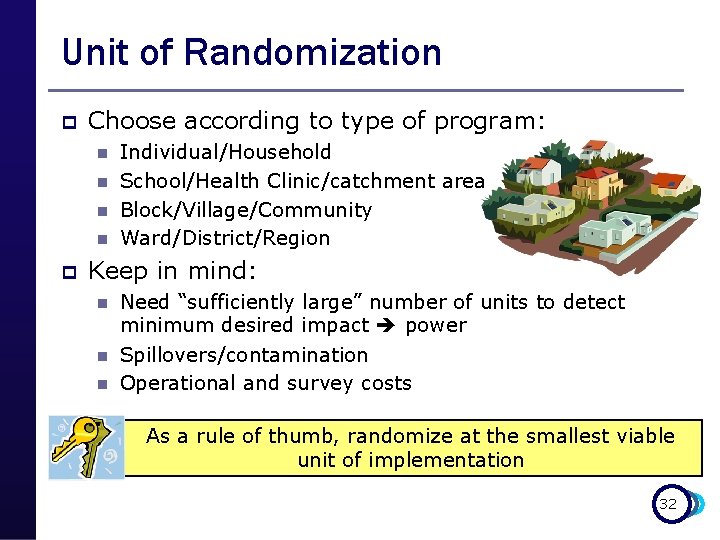

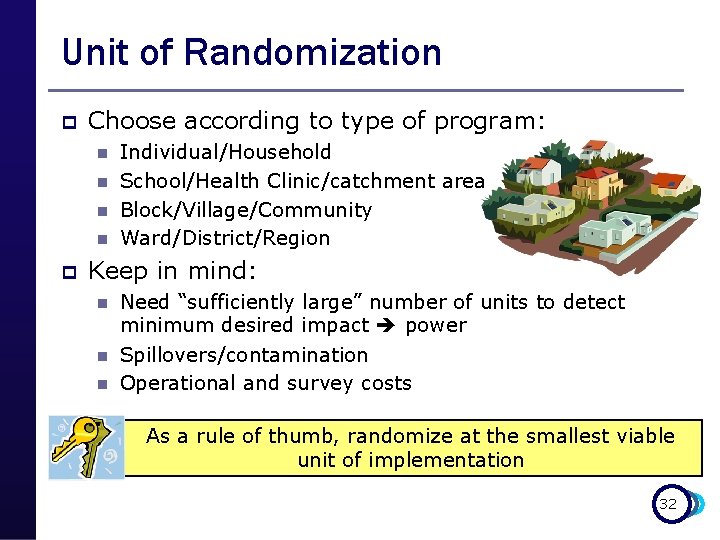

Unit of Randomization

- Choose according to type of program:
  - Individual/Household
  - School/Health Clinic/catchment area
  - Block/Village/Community
  - Ward/District/Region
- Keep in mind:
  - Need “sufficiently large” number of units to detect minimum desired impact: power
  - Spillovers/contamination
  - Operational and survey costs

As a rule of thumb, randomize at the smallest viable unit of implementation
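To give a rough sense of what “sufficiently large” means, the sketch below applies the textbook normal-approximation formula for comparing two means. It assumes individually randomized units and equal variance in both groups, and it ignores clustering, which would typically raise the required number; the standard deviation of $20 and the function name `units_per_arm` are hypothetical.

```python
import math
from statistics import NormalDist

def units_per_arm(min_detectable_effect, outcome_sd, alpha=0.05, power=0.80):
    """Approximate units needed per arm for a two-sided test comparing two means.

    Normal-approximation formula for individually randomized units;
    clustered designs usually need more.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    n = 2 * ((z_alpha + z_power) * outcome_sd / min_detectable_effect) ** 2
    return math.ceil(n)

# Hypothetical inputs: detect a $9 change when the outcome's standard deviation is $20.
print(units_per_arm(min_detectable_effect=9, outcome_sd=20))
# Halving the minimum detectable effect roughly quadruples the requirement.
print(units_per_arm(min_detectable_effect=4.5, outcome_sd=20))
```

The second call illustrates why the minimum desired impact matters so much: smaller effects need far more units to detect.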

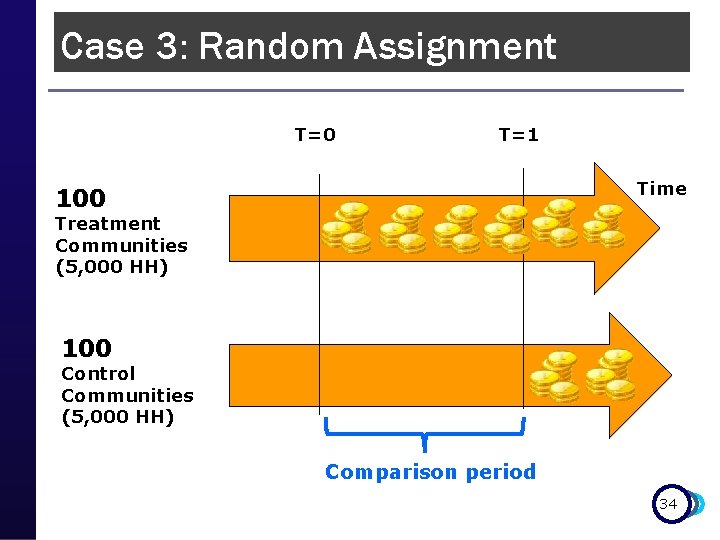

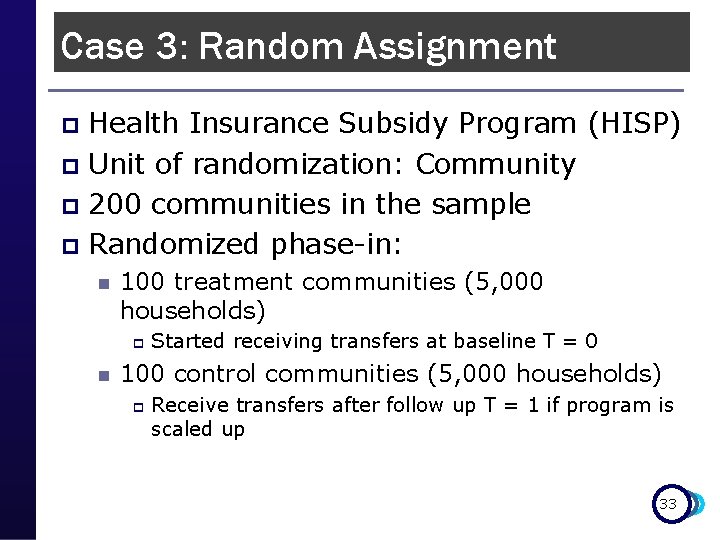

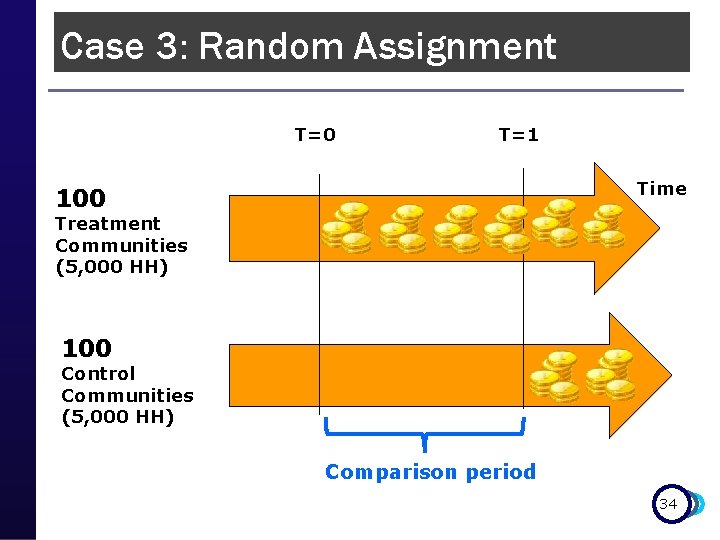

Case 3: Random Assignment

Health Insurance Subsidy Program (HISP)

- Unit of randomization: Community
- 200 communities in the sample
- Randomized phase-in:
  - 100 treatment communities (5,000 households)
    - Started receiving transfers at baseline T = 0
  - 100 control communities (5,000 households)
    - Receive transfers after follow up T = 1 if program is scaled up

Case 3: Random Assignment

[Figure: timeline from T=0 to T=1 showing 100 Treatment Communities (5,000 HH) and 100 Control Communities (5,000 HH), with the comparison period marked]

Case 3: Random Assignment

How do we know we have good clones?

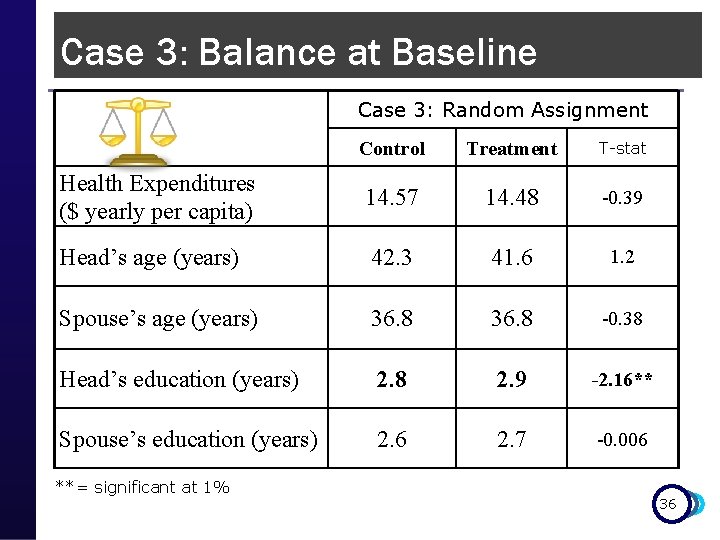

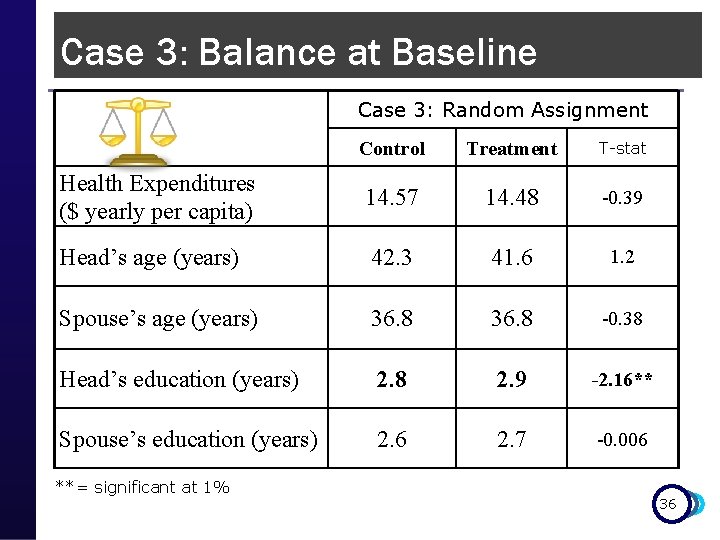

Case 3: Random Assignment

Balance at Baseline

| | Control | Treatment | T-stat |
|---|---|---|---|
| Health Expenditures ($ yearly per capita) | 14.57 | 14.48 | -0.39 |
| Head’s age (years) | 42.3 | 41.6 | 1.2 |
| Spouse’s age (years) | 36.8 | | -0.38 |
| Head’s education (years) | 2.8 | 2.9 | -2.16** |
| Spouse’s education (years) | 2.6 | 2.7 | -0.006 |

** = significant at 1%

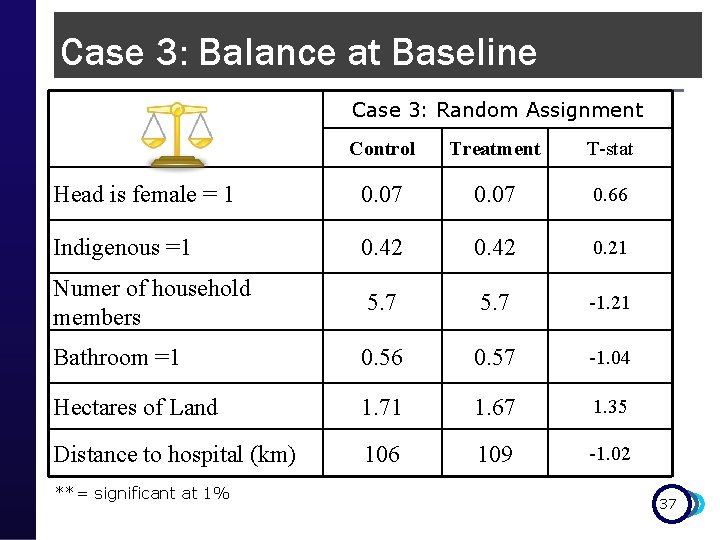

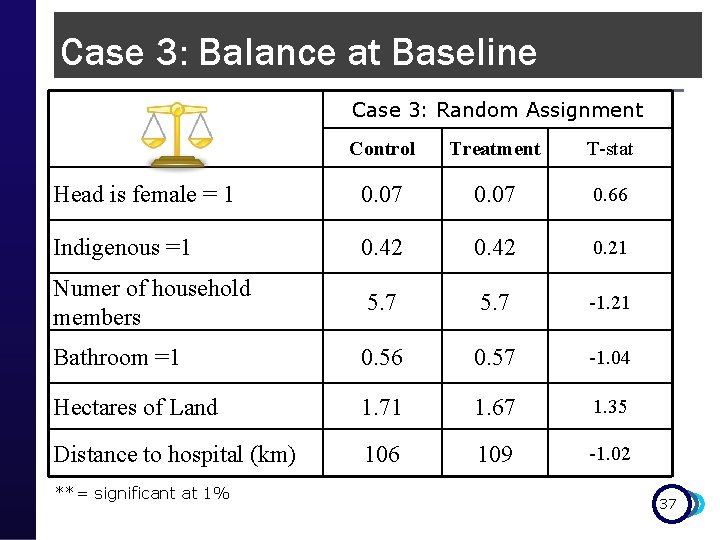

Case 3: Random Assignment

Balance at Baseline

| | Control | Treatment | T-stat |
|---|---|---|---|
| Head is female = 1 | 0.07 | | 0.66 |
| Indigenous = 1 | 0.42 | | 0.21 |
| Number of household members | 5.7 | | -1.21 |
| Bathroom = 1 | 0.56 | 0.57 | -1.04 |
| Hectares of Land | 1.71 | 1.67 | 1.35 |
| Distance to hospital (km) | 106 | 109 | -1.02 |

** = significant at 1%
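A balance check of this kind compares the baseline mean of each characteristic across the two groups. The sketch below computes a Welch (unequal-variance) t-statistic with the standard library; the slides do not say which test was used, so that choice, the function name `welch_t_stat` and the sample values are all assumptions.

```python
import math
from statistics import mean, variance

def welch_t_stat(treatment, control):
    """t-statistic for the difference in means (treatment - control), unequal variances."""
    se = math.sqrt(variance(treatment) / len(treatment) + variance(control) / len(control))
    return (mean(treatment) - mean(control)) / se

# Hypothetical baseline values for one characteristic (not the HISP data).
treatment_age = [41, 44, 39, 42, 45, 40, 43, 42]
control_age = [42, 43, 40, 44, 41, 45, 39, 43]

t = welch_t_stat(treatment_age, control_age)
print(f"t-stat = {t:.2f}")
```

A t-statistic close to zero, as in this made-up sample, is what balance looks like. With community-level randomization a real analysis would also need to account for clustering, which this sketch does not do.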

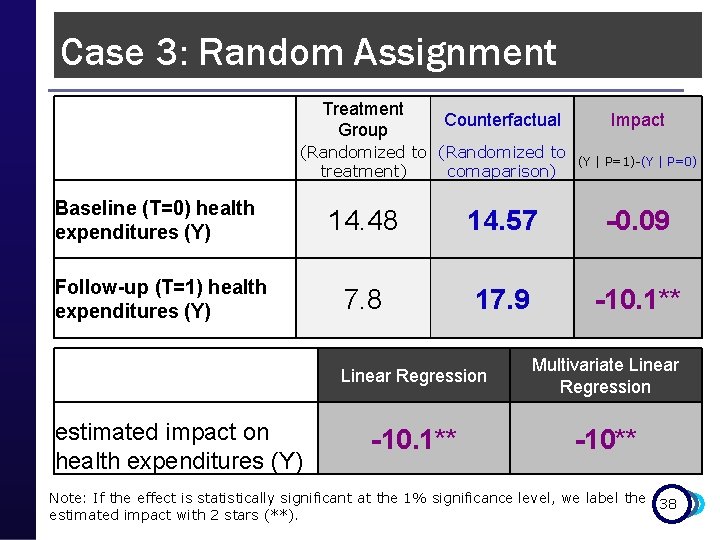

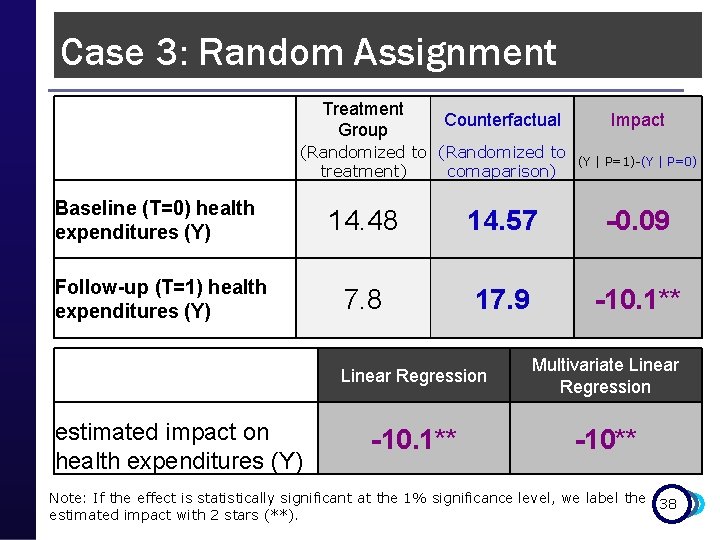

Case 3: Random Assignment

| | Treatment Group (Randomized to treatment) | Counterfactual (Randomized to comparison) | Impact |
|---|---|---|---|
| Baseline (T=0) health expenditures (Y) | 14.48 | 14.57 | -0.09 |
| Follow-up (T=1) health expenditures (Y) | 7.8 | 17.9 | -10.1** |

Impact = (Y | P=1) - (Y | P=0)

| | Linear Regression | Multivariate Linear Regression |
|---|---|---|
| estimated impact on health expenditures (Y) | -10.1** | -10** |

Note: If the effect is statistically significant at the 1% significance level, we label the estimated impact with 2 stars (**).

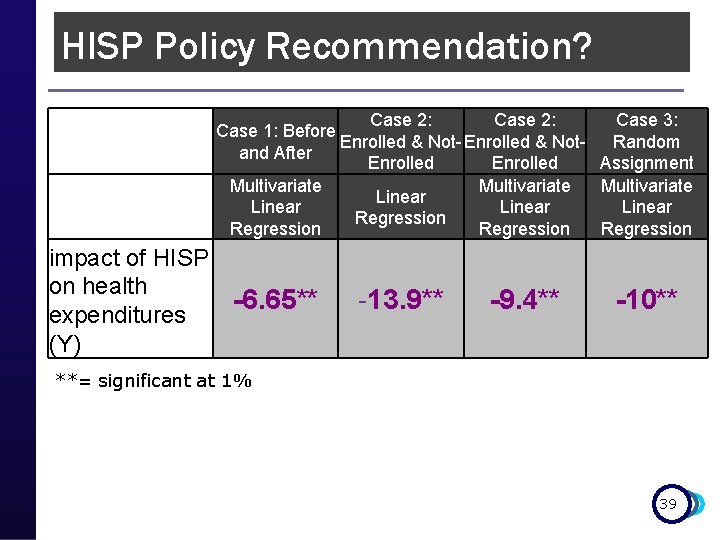

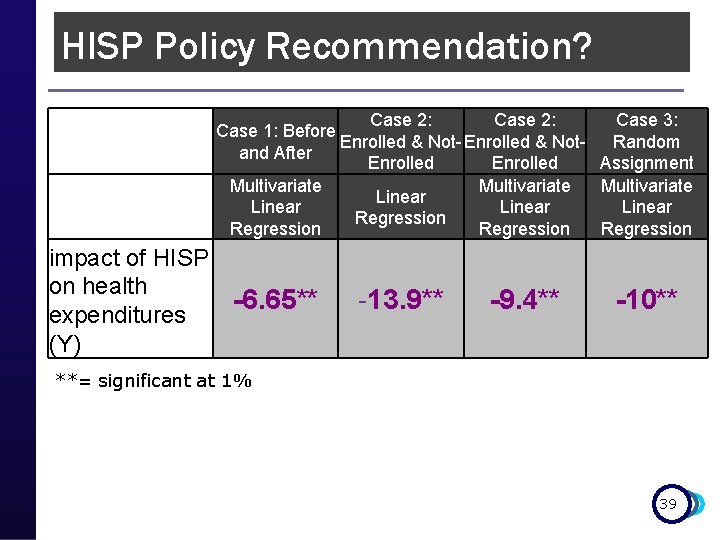

HISP Policy Recommendation?

Multivariate Linear Regression: impact of HISP on health expenditures (Y)

| | Case 1: Before and After | Case 2: Enrolled & Not Enrolled | Case 3: Random Assignment |
|---|---|---|---|
| Estimated impact | -6.65** | -9.4** (linear regression: -13.9**) | -10** |

** = significant at 1%
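The case study's decision rule (scale up if the reduction is $9 or more) can be applied mechanically to the multivariate estimates from the three cases. The sketch below does only that; the function name `recommend_scale_up` is illustrative, and the code says nothing about which estimate rests on a valid counterfactual.

```python
SCALE_UP_THRESHOLD = 9.0   # from the case study: scale up if the reduction is $9 or more

# Multivariate regression estimates reported in the slides.
estimates = {
    "Case 1: Before and After": -6.65,
    "Case 2: Enrolled & Not Enrolled": -9.4,
    "Case 3: Random Assignment": -10.0,
}

def recommend_scale_up(estimated_impact, threshold=SCALE_UP_THRESHOLD):
    """True when the estimated reduction in expenditures meets the threshold."""
    return -estimated_impact >= threshold

decisions = {case: recommend_scale_up(impact) for case, impact in estimates.items()}
for case, decision in decisions.items():
    print(f"{case}: {'scale up' if decision else 'do not scale up'}")
```

The same rule gives different answers depending on the method, which is why the choice of comparison group matters for the policy decision.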

Keep in mind

- Random Assignment:
  - With large enough samples, produces two groups that are statistically equivalent
  - We have identified the perfect “clone”: randomized beneficiary and randomized comparison
  - Feasible for prospective evaluations with oversubscription/excess demand
  - Most pilots and new programs fall into this category!

Remember

- Objective of impact evaluation is to estimate the CAUSAL effect or IMPACT of a program on outcomes of interest
- To estimate impact, we need to estimate the counterfactual
  - What would have happened in the absence of the program
  - Use comparison or control groups
- We have a toolbox with 5 methods to identify good comparison groups
- Choose the best evaluation method that is feasible in the program’s operational context

THANK YOU!