Image Retrieval Part II

Topics
• Applications of CBIR in digital libraries
• Human-controlled interactive CBIR
• Machine-controlled interactive CBIR

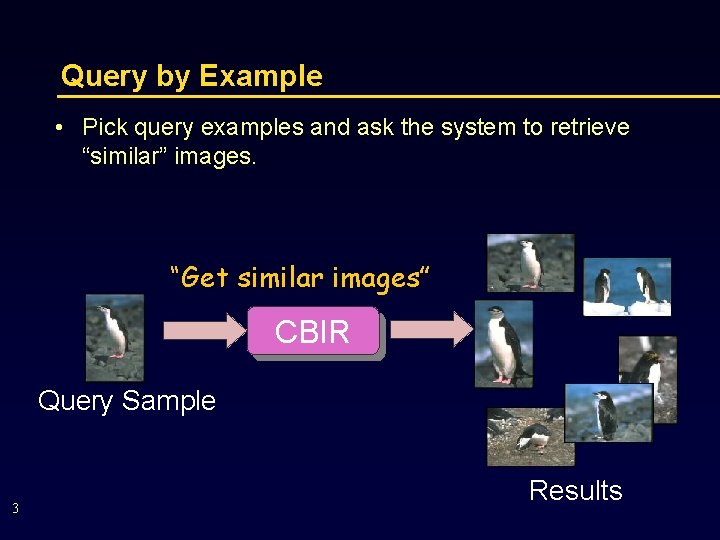

Query by Example
• Pick query examples and ask the system to retrieve “similar” images.
Figure labels: Query Sample, CBIR (“Get similar images”), Results

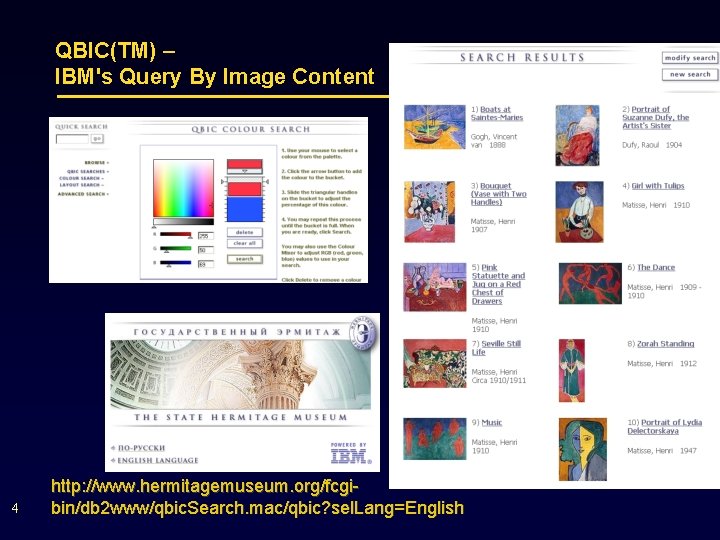

QBIC(TM) – IBM's Query By Image Content
http://www.hermitagemuseum.org/fcgi-bin/db2www/qbicSearch.mac/qbic?selLang=English

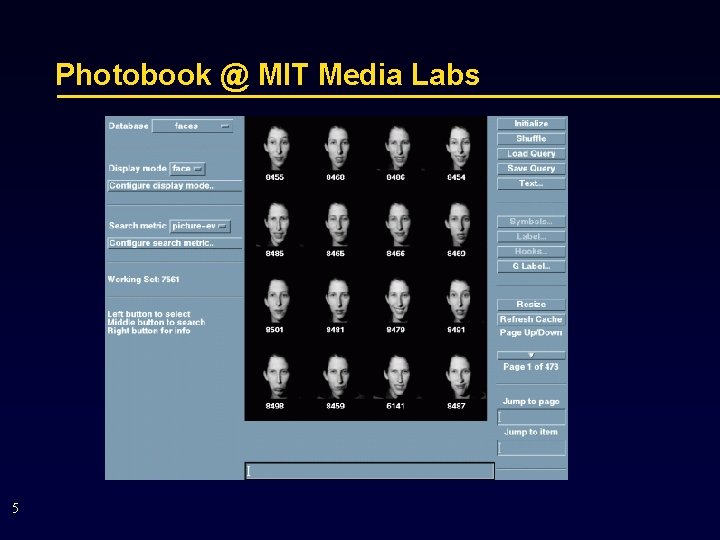

Photobook @ MIT Media Lab

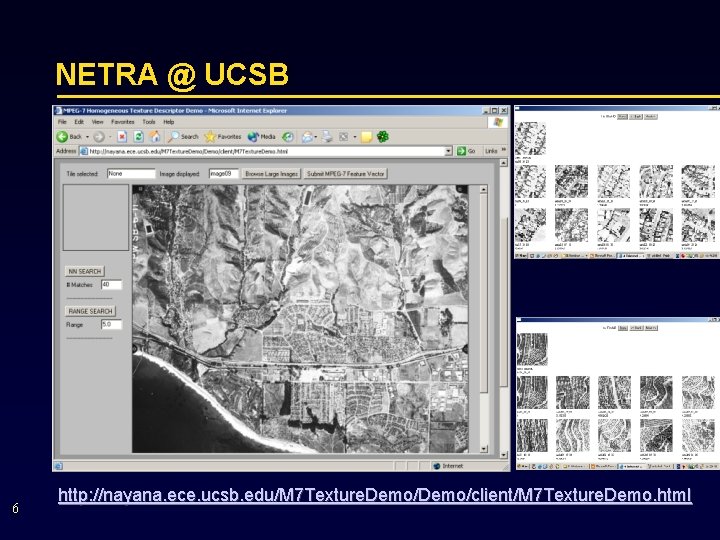

NETRA @ UCSB
http://nayana.ece.ucsb.edu/M7TextureDemo/client/M7TextureDemo.html

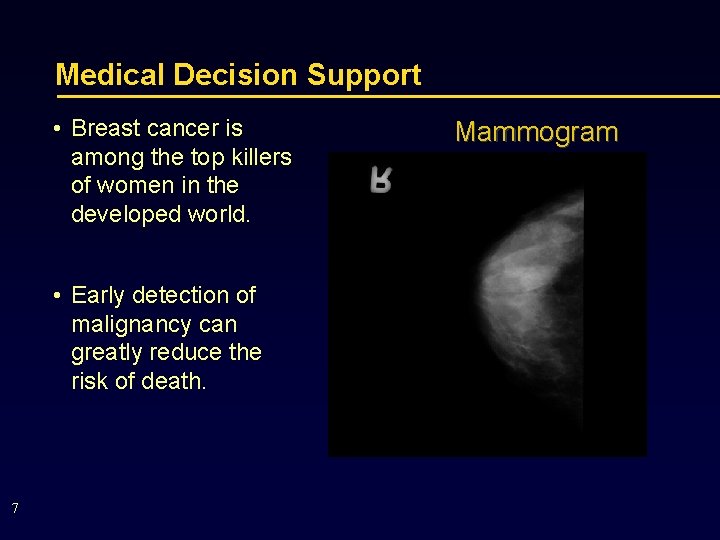

Medical Decision Support
• Breast cancer is among the top killers of women in the developed world.
• Early detection of malignancy can greatly reduce the risk of death.
Figure label: Mammogram

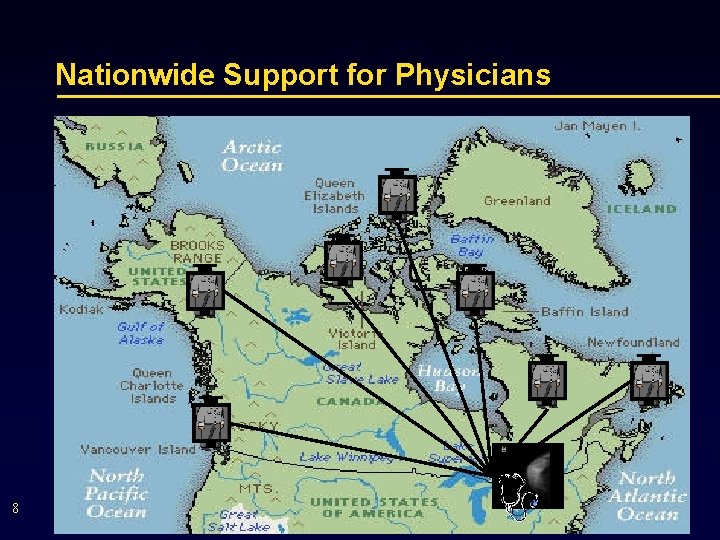

Nationwide Support for Physicians

Summary of Fundamental CBIR
• CBIR using query by example
• CBIR algorithm:
  • First step: image indexing
  • Second step: content matching
  • Third step: ranking and displaying
• Relevance feedback (RF)

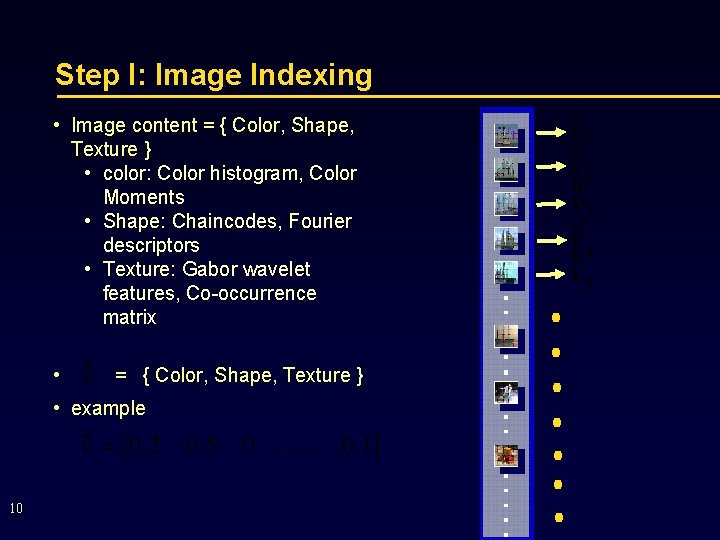

Step I: Image Indexing
• Image content = { Color, Shape, Texture }
  • Color: color histogram, color moments
  • Shape: chain codes, Fourier descriptors
  • Texture: Gabor wavelet features, co-occurrence matrix
• Feature vector = { Color, Shape, Texture }
• Example:
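As an illustration of the color part of indexing, here is a minimal sketch that builds a feature vector from a quantized RGB histogram plus per-channel color moments. The bin count, the use of RGB, and the function names are assumptions made for this example; the slides do not specify them.

```python
from statistics import mean, pstdev

def color_histogram(pixels, bins_per_channel=4):
    """Normalized histogram over quantized (r, g, b) bins (bin count is an assumption)."""
    step = 256 / bins_per_channel
    hist = [0] * bins_per_channel ** 3
    for r, g, b in pixels:
        # Quantize each 8-bit channel value into one of bins_per_channel bins.
        ri, gi, bi = (min(int(v / step), bins_per_channel - 1) for v in (r, g, b))
        hist[(ri * bins_per_channel + gi) * bins_per_channel + bi] += 1
    return [count / len(pixels) for count in hist]

def color_moments(pixels):
    """Mean and standard deviation of each color channel."""
    channels = list(zip(*pixels))
    return [m for ch in channels for m in (mean(ch), pstdev(ch))]

# Hypothetical four-pixel "image": two reddish and two bluish pixels.
pixels = [(255, 0, 0), (250, 10, 5), (0, 0, 255), (0, 5, 250)]
feature_vector = color_histogram(pixels) + color_moments(pixels)
```

Shape and texture descriptors would be appended to the same vector in the same way.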

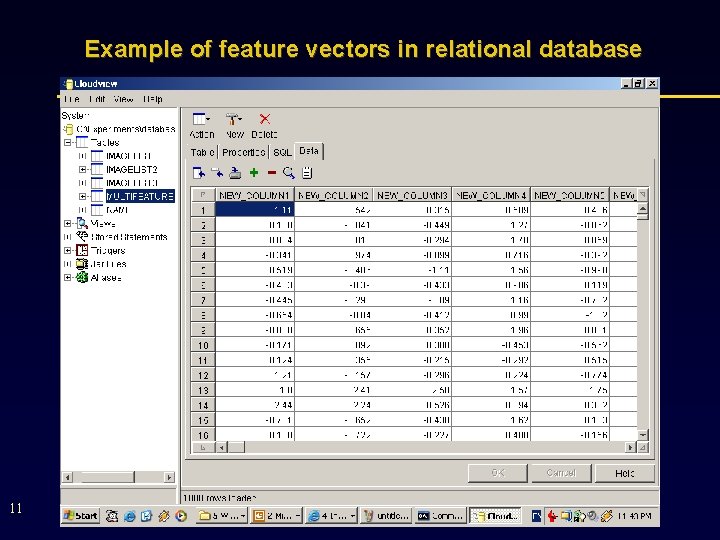

Example of feature vectors in a relational database

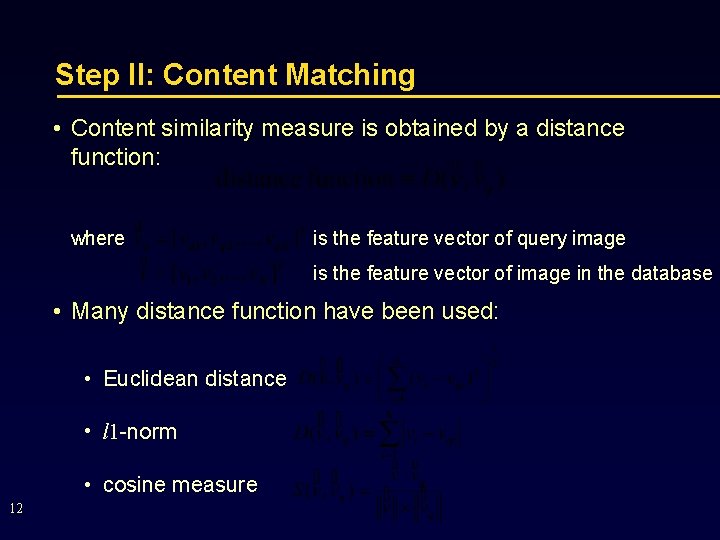

Step II: Content Matching
• The content similarity measure is obtained from a distance function $d(\mathbf{z}, \mathbf{x}_j)$, where $\mathbf{z}$ is the feature vector of the query image and $\mathbf{x}_j$ is the feature vector of image $j$ in the database.
• Many distance functions have been used:
  • Euclidean distance
  • $\ell_1$-norm
  • cosine measure
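The three measures named above can be sketched as follows. The function names are illustrative, and turning the cosine measure into a distance as one minus the cosine is an assumption made here so that smaller always means more similar.

```python
import math

def euclidean(z, x):
    """Euclidean (l2) distance between query z and database vector x."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(z, x)))

def l1_norm(z, x):
    """l1-norm (city-block) distance."""
    return sum(abs(a - b) for a, b in zip(z, x))

def cosine_distance(z, x):
    """One minus the cosine of the angle between z and x (assumed convention)."""
    dot = sum(a * b for a, b in zip(z, x))
    norms = math.sqrt(sum(a * a for a in z)) * math.sqrt(sum(b * b for b in x))
    return 1.0 - dot / norms
```

Note that the cosine measure ignores vector length, so two histograms that differ only by a scale factor are treated as identical.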

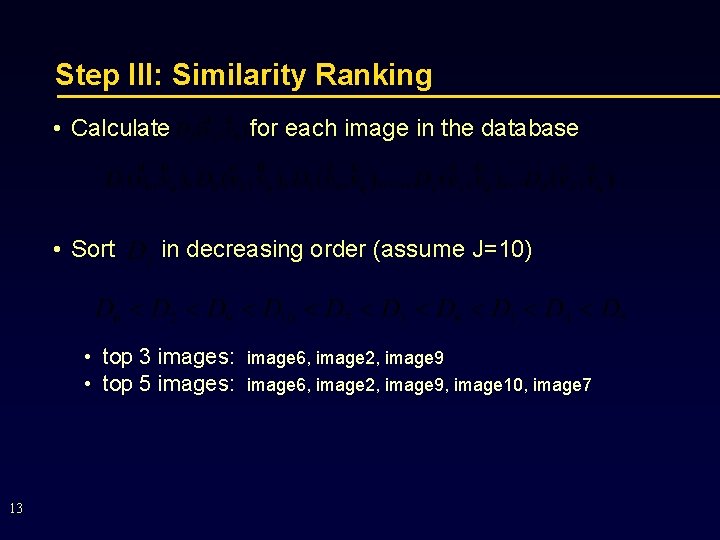

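Step III: Similarity Ranking
• Calculate the distance $d(\mathbf{z}, \mathbf{x}_j)$ between the query $\mathbf{z}$ and each image $\mathbf{x}_j$, $j = 1, \dots, J$, in the database (assume $J = 10$).
• Sort the images in decreasing order of similarity (that is, increasing distance).
• Top 3 images: image 6, image 2, image 9
• Top 5 images: image 6, image 2, image 9, image 10, image 7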
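A minimal sketch of the ranking step, assuming Euclidean distance and a small in-memory dictionary of feature vectors. The image names and feature values are made up for illustration.

```python
import math

def rank_images(query, database, top_k):
    """Return the names of the top_k images, closest to the query first."""
    scored = [(math.dist(query, vec), name) for name, vec in database.items()]
    scored.sort()  # ascending distance = descending similarity
    return [name for _, name in scored[:top_k]]

# Hypothetical 2-D feature vectors.
database = {
    "image 1": [0.9, 0.9],
    "image 2": [0.2, 0.1],
    "image 6": [0.1, 0.1],
}
top_two = rank_images([0.0, 0.0], database, top_k=2)
```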

Problems with CBIR
In essence, retrieval is a pattern recognition problem with special characteristics.
• Huge volume of (visual) data.
• High dimensionality in feature space.
• Query design: gap between high-level concepts and low-level features.
• Linear matching criteria: a mismatch with the popular human perception model.

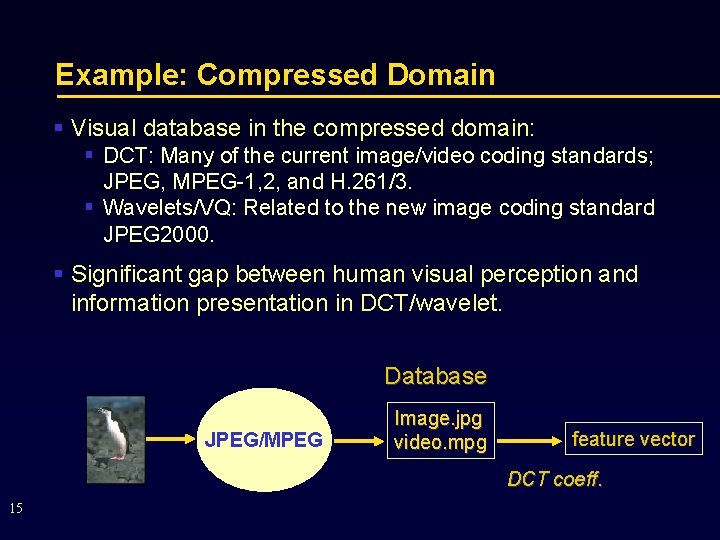

Example: Compressed Domain
• Visual database in the compressed domain:
  • DCT: many of the current image/video coding standards: JPEG, MPEG-1, 2, and H.261/3.
  • Wavelets/VQ: related to the new image coding standard JPEG 2000.
• Significant gap between human visual perception and information presentation in DCT/wavelet.
Figure labels: Database; JPEG/MPEG; Image.jpg, video.mpg; DCT coeff.; feature vector

State-of-the-art
• Human-controlled interactive CBIR (HCI-CBIR)
  • Integrating human perception into content-based retrieval.
• Machine-controlled interactive CBIR (MCI-CBIR)
  • To reduce the bandwidth requirement for browsing and searching over the Internet.
  • To minimize errors caused by excessive human involvement.

Integrating human perception into content-based retrieval

Scenario
• The machine provides initial retrieval results through query by keyword, sketch, example, etc.
• Iteratively:
  • The user provides judgment on the current results as to whether, and to what degree, they are relevant to her/his request;
  • The machine learns and tries again.

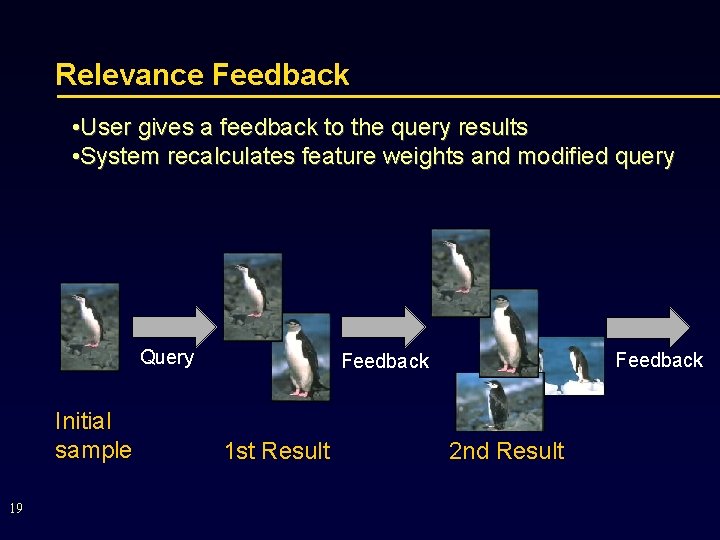

Relevance Feedback
• The user gives feedback on the query results.
• The system recalculates the feature weights and modifies the query.
Figure labels: Query, Initial sample, Feedback, 1st Result, 2nd Result

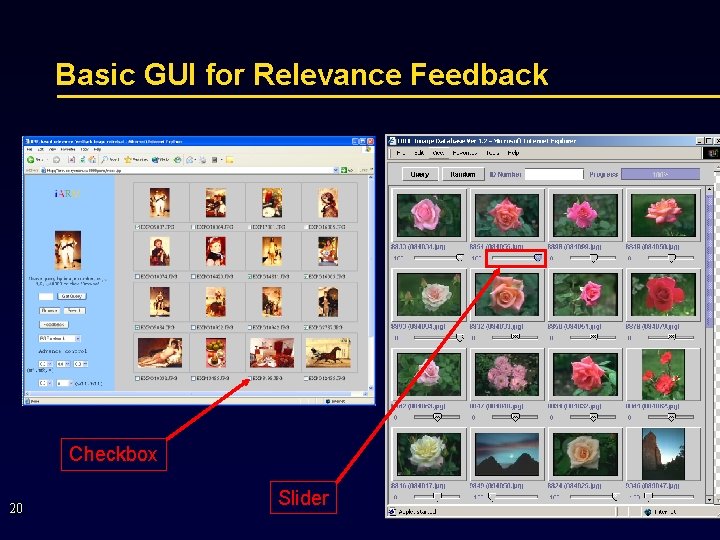

Basic GUI for Relevance Feedback
Figure labels: Checkbox, Slider

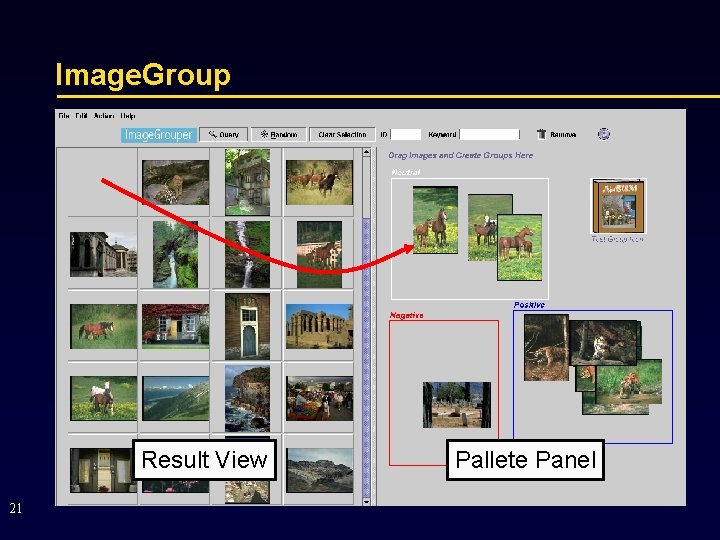

ImageGroup
Figure labels: Result View, Palette Panel

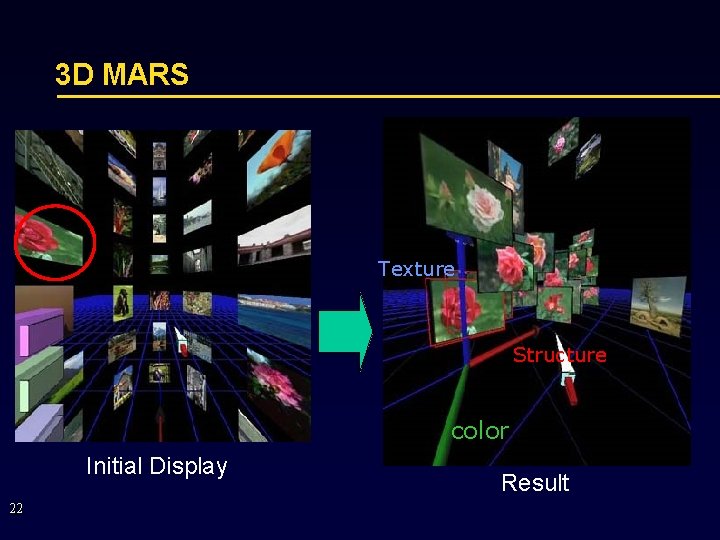

3D MARS
Figure labels: Texture, Structure, Color; Initial Display, Result

Human-Controlled Interactive CBIR (HCI-CBIR)
• An attractive solution to numerous applications
• Main feature: an active role played by users to improve retrieval accuracy
• State-of-the-art
  • query design considerations
  • linear criteria in similarity ranking

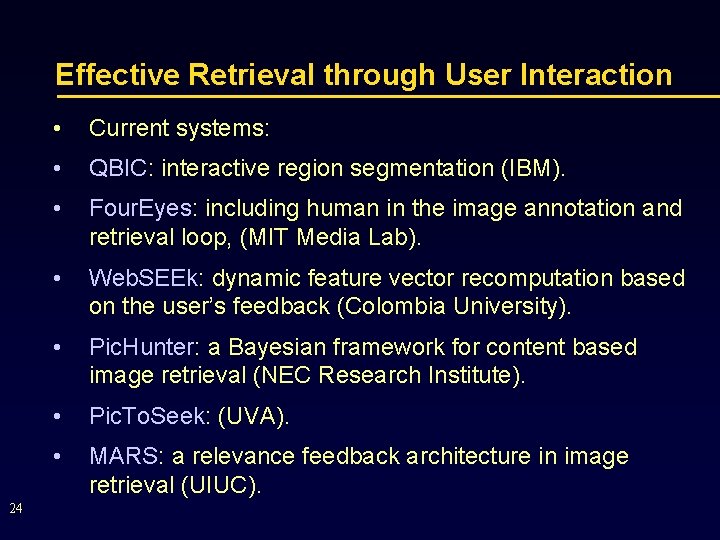

Effective Retrieval through User Interaction
• Current systems:
  • QBIC: interactive region segmentation (IBM).
  • FourEyes: including the human in the image annotation and retrieval loop (MIT Media Lab).
  • WebSEEk: dynamic feature vector recomputation based on the user’s feedback (Columbia University).
  • PicHunter: a Bayesian framework for content-based image retrieval (NEC Research Institute).
  • PicToSeek: (UVA).
  • MARS: a relevance feedback architecture in image retrieval (UIUC).

A “New” Proposal for HCI-CBIR
The framework: relevance feedback
The key features:
• Modeling: mapping a high-level concept to low-level features
• Matching:
  • capturing the user’s perceptual subjectivity to modify the query using non-linear measurement
  • overcoming the difficulties faced by the traditional linear matching criteria

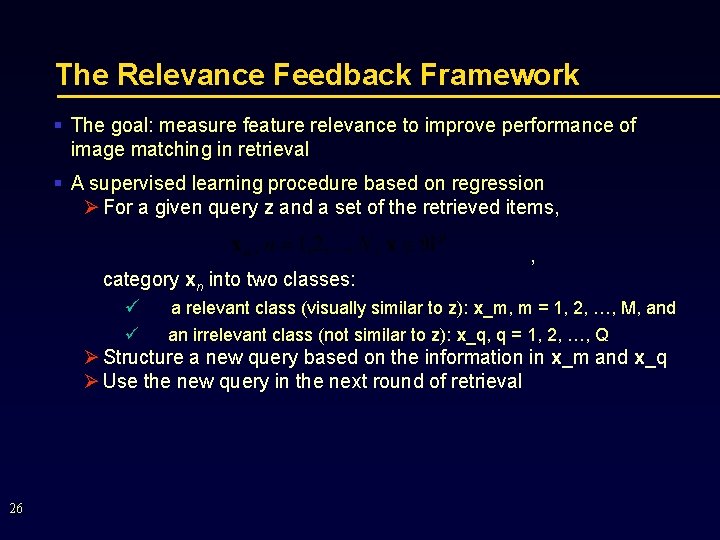

The Relevance Feedback Framework
• The goal: measure feature relevance to improve the performance of image matching in retrieval
• A supervised learning procedure based on regression
  • For a given query z and a set of retrieved items x_n, categorize x_n into two classes:
    • a relevant class (visually similar to z): x_m, m = 1, 2, …, M, and
    • an irrelevant class (not similar to z): x_q, q = 1, 2, …, Q
  • Structure a new query based on the information in x_m and x_q
  • Use the new query in the next round of retrieval

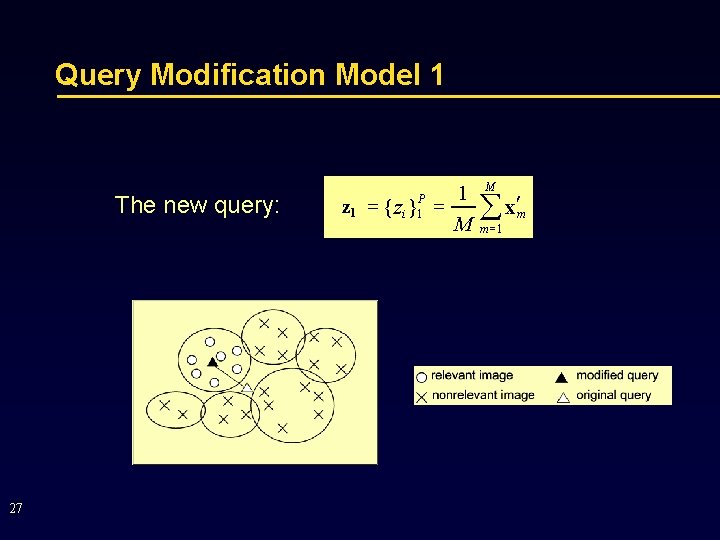

Query Modification Model 1
The new query:
$$\mathbf{z}_1 = \{ z_i \}_{i=1}^{P} = \frac{1}{M} \sum_{m=1}^{M} \mathbf{x}'_m$$
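Read as "the new query is the mean of the M relevant items", Model 1 can be sketched in a few lines. The function name is illustrative only.

```python
def query_model_1(relevant):
    """New query = component-wise mean of the M relevant feature vectors."""
    m = len(relevant)
    return [sum(column) / m for column in zip(*relevant)]

# Two relevant items in a 2-D feature space (made-up values).
new_query = query_model_1([[1.0, 2.0], [3.0, 4.0]])
```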

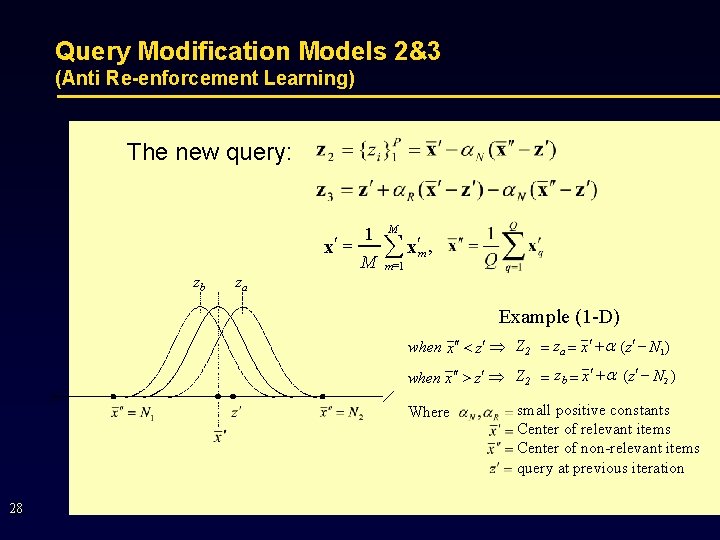

Query Modification Models 2 & 3 (Anti-Reinforcement Learning)
The new query, with $\bar{\mathbf{x}}' = \frac{1}{M} \sum_{m=1}^{M} \mathbf{x}'_m$:
Example (1-D):
• when $\bar{x}'' < z'$: $z_2 = z_a = \bar{x}' + \alpha_{N_1} (z' - \bar{x}'')$
• when $\bar{x}'' > z'$: $z_2 = z_b = \bar{x}' + \alpha_{N_2} (z' - \bar{x}'')$
where
• $\alpha_{N_1}, \alpha_{N_2}$: small positive constants
• $\bar{x}'$: center of relevant items
• $\bar{x}''$: center of non-relevant items
• $z'$: query at previous iteration
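A minimal sketch of the anti-reinforcement idea, assuming the update has the form "center of relevant items plus a small step away from the center of non-relevant items". A single constant `alpha` stands in for the slide's small positive constants, and the function names and the value 0.1 are assumptions for this example.

```python
def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def query_model_2(prev_query, relevant, non_relevant, alpha=0.1):
    """Assumed form: new = center(relevant) + alpha * (prev_query - center(non_relevant))."""
    rel_center = centroid(relevant)
    non_center = centroid(non_relevant)
    return [r + alpha * (z - n) for r, z, n in zip(rel_center, prev_query, non_center)]

# 1-D example: the non-relevant center (0.3) lies to the left of the previous
# query (0.5), so the new query is nudged to the right of the relevant center (0.7).
new_query = query_model_2([0.5], relevant=[[0.6], [0.8]], non_relevant=[[0.2], [0.4]])
```

With these numbers the new query lands slightly above 0.7, that is, further from the non-relevant items than the plain mean of the relevant ones.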

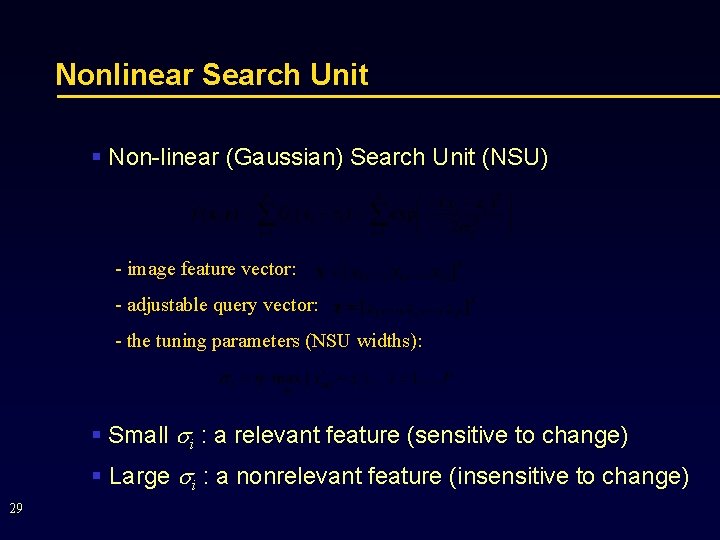

Nonlinear Search Unit
• Non-linear (Gaussian) Search Unit (NSU)
  • image feature vector: $\mathbf{x} = [x_1, \dots, x_P]$
  • adjustable query vector: $\mathbf{z} = [z_1, \dots, z_P]$
  • the tuning parameters (NSU widths): $\sigma_i$, $i = 1, \dots, P$
• Small $\sigma_i$: a relevant feature (sensitive to change)
• Large $\sigma_i$: a non-relevant feature (insensitive to change)
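The effect of the widths can be illustrated with a sketch that assumes one common form for a Gaussian search unit: a sum of one-dimensional Gaussians, one per feature. The exact formula on the original slide is not recoverable from the text, so this form and the function name are assumptions.

```python
import math

def nsu_similarity(x, z, sigma):
    """Assumed NSU form: sum over features of exp(-(x_i - z_i)^2 / (2 * sigma_i^2))."""
    return sum(
        math.exp(-((xi - zi) ** 2) / (2.0 * si ** 2))
        for xi, zi, si in zip(x, z, sigma)
    )

# The same feature difference scored with a small and a large width.
narrow = nsu_similarity([1.0], [0.0], sigma=[0.5])  # small width: sensitive to change
wide = nsu_similarity([1.0], [0.0], sigma=[5.0])    # large width: insensitive to change
```

A difference of 1.0 is heavily penalized under the narrow width and barely penalized under the wide one, which is the behavior the two bullets above describe.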

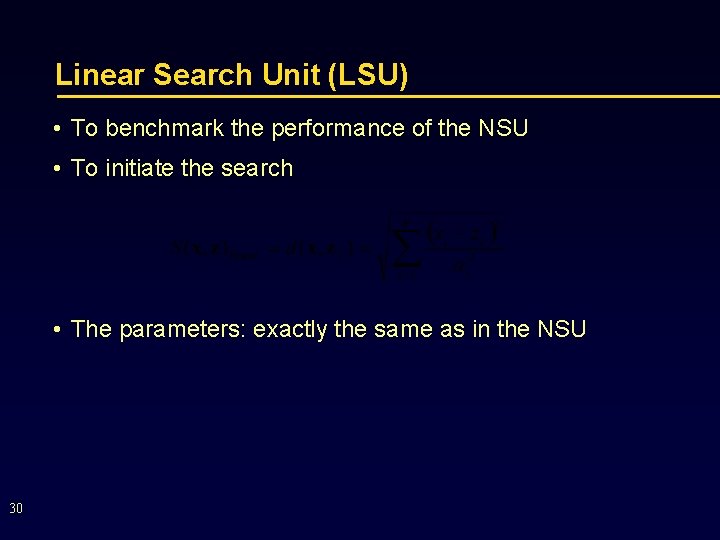

Linear Search Unit (LSU)
• To benchmark the performance of the NSU
• To initiate the search
• The parameters: exactly the same as in the NSU

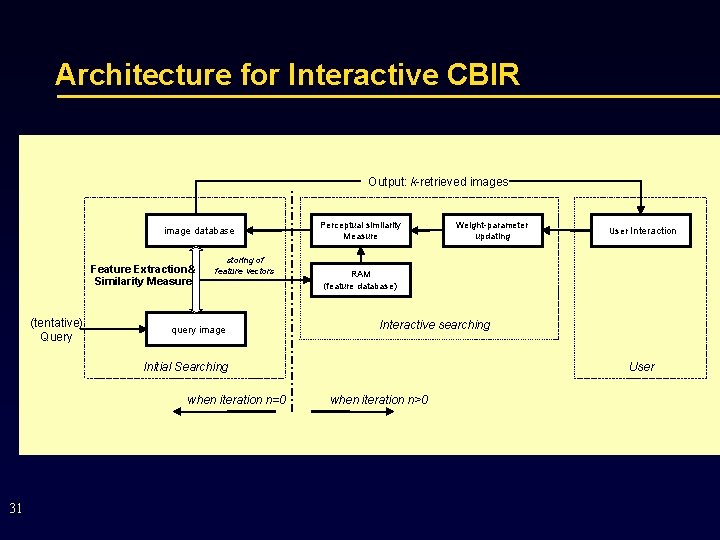

Architecture for Interactive CBIR
Figure labels: image database; feature extraction and storing of feature vectors; RAM (feature database); query image; (tentative) query; similarity measure; perceptual similarity measure; interactive searching; user interaction; weight-parameter updating; output: k retrieved images. Initial searching takes place at iteration n = 0; the user interacts at iterations n > 0.

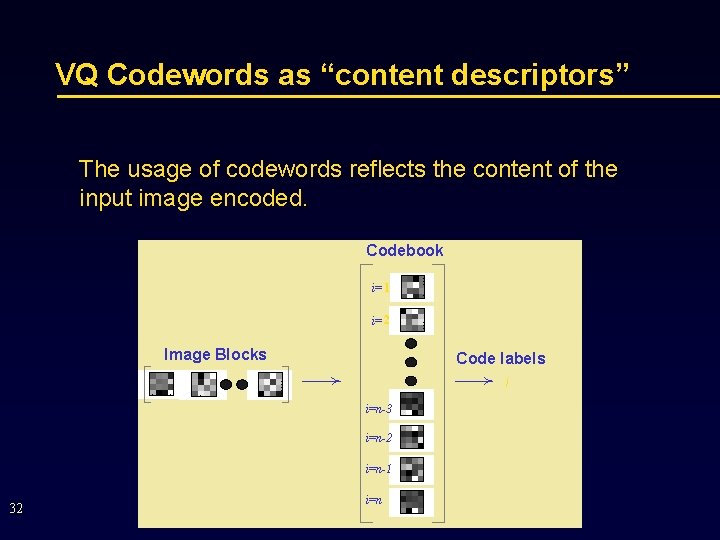

VQ Codewords as “content descriptors”
The usage of codewords reflects the content of the encoded input image.
Figure labels: Image Blocks; Code labels i; Codebook entries i = 1, 2, …, n
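One way to read "usage of codewords as content descriptors" is a histogram of code labels. The sketch below assumes a nearest-codeword encoder and a normalized label histogram; the codebook and block values are made up, and the function names are illustrative.

```python
import math
from collections import Counter

def encode_blocks(blocks, codebook):
    """Map each image block to the label (index) of its nearest codeword."""
    return [
        min(range(len(codebook)), key=lambda i: math.dist(block, codebook[i]))
        for block in blocks
    ]

def label_histogram(labels, codebook_size):
    """Fraction of blocks assigned to each codeword."""
    counts = Counter(labels)
    return [counts[i] / len(labels) for i in range(codebook_size)]

# Hypothetical two-codeword codebook and four flattened image blocks.
codebook = [[0.0, 0.0], [10.0, 10.0]]
blocks = [[1.0, 1.0], [9.0, 9.0], [0.0, 2.0], [11.0, 10.0]]
labels = encode_blocks(blocks, codebook)
histogram = label_histogram(labels, len(codebook))
```

Because the labels already exist in a VQ-coded file, such a histogram can be computed without decompressing the image.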

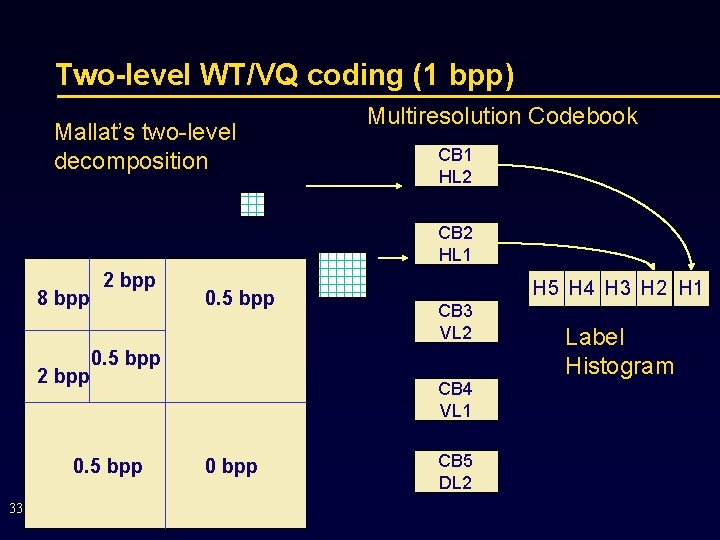

Two-level WT/VQ coding (1 bpp)
Figure labels: Mallat’s two-level decomposition; multiresolution codebooks CB1 (HL2), CB2 (HL1), CB3 (VL2), CB4 (VL1), CB5 (DL2); bit rates 8 bpp, 2 bpp, 0.5 bpp, 0.5 bpp, 0 bpp; label histograms H1 to H5

Test Database 1: Brodatz database
• A texture image database provided by Manjunath, at http://vivaldi.ece.ucsb.edu/users/wei/codes.html
• 1,856 patterns in 116 different classes
• 16 similar patterns in each class
• Maintained as a single unclassified image database

![Queries (the Brodatz Database), 116 different image classes](http://slidetodoc.com/presentation_image_h2/bb310e0eda37dafdb924ecd54cb91dae/image-35.jpg)

Queries (the Brodatz Database) [116 different image classes]

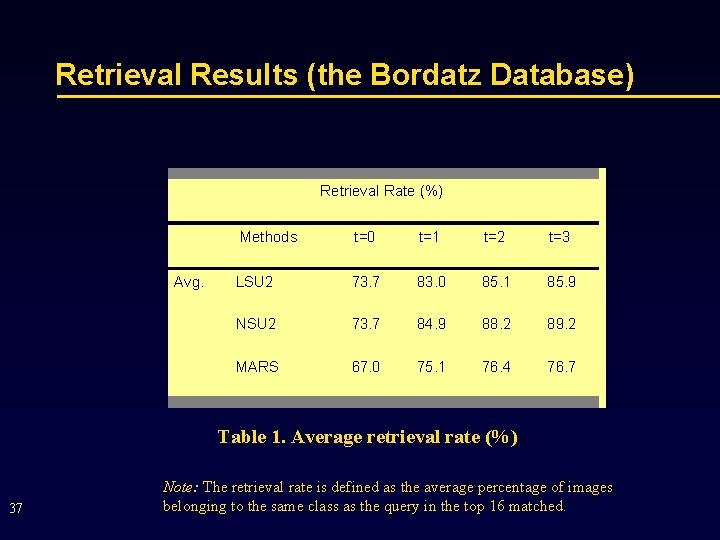

Performance Comparison
• Methods compared:
  • LSU 2: linear search unit & query model 2
  • NSU 2: non-linear search unit & query model 2
  • Interactive CBIR in MARS: Multimedia Analysis and Retrieval System (developed at UIUC)

Retrieval Results (the Brodatz Database)

| Method | t=0 | t=1 | t=2 | t=3 |
|---|---|---|---|---|
| LSU 2 | 73.7 | 83.0 | 85.1 | 85.9 |
| NSU 2 | 73.7 | 84.9 | 88.2 | 89.2 |
| MARS | 67.0 | 75.1 | 76.4 | 76.7 |

Table 1. Average retrieval rate (%).
Note: The retrieval rate is defined as the average percentage of images belonging to the same class as the query among the top 16 matches.
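The retrieval rate defined in the note can be computed as follows. This is a sketch with made-up class labels; the function names are illustrative.

```python
def retrieval_rate(query_class, retrieved_classes, top_n=16):
    """Percentage of the top_n matches that share the query's class."""
    top = retrieved_classes[:top_n]
    return 100.0 * sum(1 for c in top if c == query_class) / top_n

def average_retrieval_rate(results, top_n=16):
    """Mean retrieval rate over (query_class, ranked_classes) pairs."""
    rates = [retrieval_rate(q, ranked, top_n) for q, ranked in results]
    return sum(rates) / len(rates)

# Hypothetical query whose top 16 matches contain 12 images of its own class.
rate = retrieval_rate("bark", ["bark"] * 12 + ["sand"] * 4)
```

Since every Brodatz class holds 16 patterns, a rate of 100% means the top 16 matches are exactly the query's class.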

iARM: Interactive-based Analysis and Retrieval of Multimedia on the Internet @ iarm.ee.ryerson.ca:8000/corel

Strategy
• iARM implements interactive retrieval for a large image database, running on the J2EE web server.
• Interaction architecture.
• Based on non-linear relevance feedback: a multi-model SRBF network.
• Positive and negative feedback.
• Properties: local and non-linear learning; fast and robust on small input data.

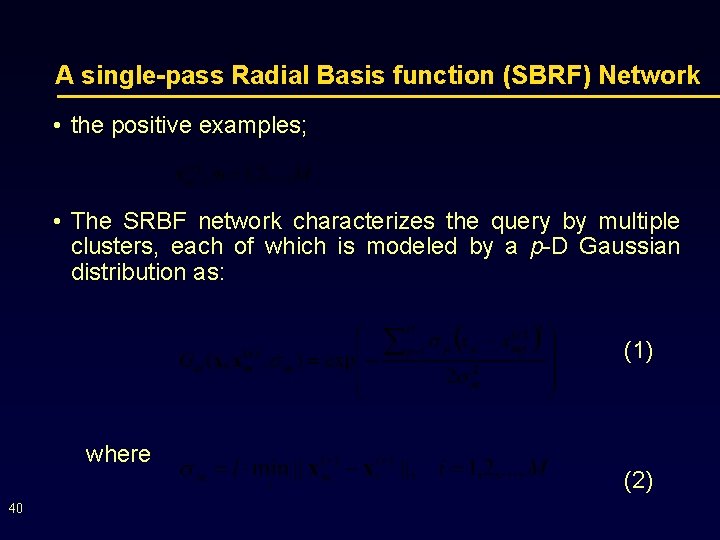

A Single-pass Radial Basis Function (SRBF) Network
• Input: the positive examples.
• The SRBF network characterizes the query by multiple clusters, each of which is modeled by a p-dimensional Gaussian distribution (Eqs. 1 and 2).

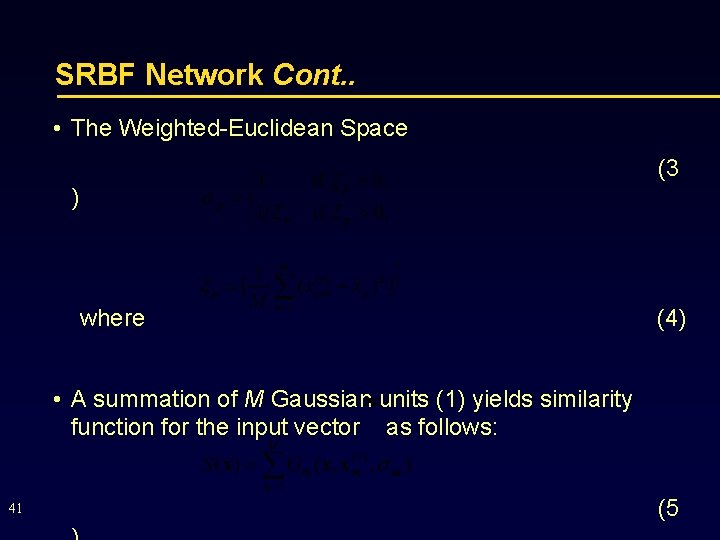

SRBF Network (cont.)
• The weighted Euclidean space (Eqs. 3 and 4)
• A summation of M Gaussian units (Eq. 1) yields the similarity function for the input vector (Eq. 5)
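The numbered equations did not survive in the text, so the sketch below assumes a standard form: each of the M units is a Gaussian of a weighted Euclidean distance to its cluster center, and the similarity is the sum over units. The formulas, parameter values, and names here are assumptions for illustration, not the slide's Eqs. (1) to (5).

```python
import math

def weighted_distance(x, center, weights):
    """Weighted Euclidean distance (assumed form: sqrt(sum_j w_j * (x_j - c_j)^2))."""
    return math.sqrt(sum(w * (a - c) ** 2 for a, c, w in zip(x, center, weights)))

def srbf_similarity(x, centers, widths, weights):
    """Sum of M Gaussian units, one per cluster center."""
    total = 0.0
    for center, sigma in zip(centers, widths):
        d = weighted_distance(x, center, weights)
        total += math.exp(-(d ** 2) / (2.0 * sigma ** 2))
    return total

# Two hypothetical clusters describing one query concept.
centers = [[0.0, 0.0], [10.0, 10.0]]
widths = [1.0, 1.0]
weights = [1.0, 1.0]
near = srbf_similarity([0.0, 0.0], centers, widths, weights)
between = srbf_similarity([5.0, 5.0], centers, widths, weights)
```

An image close to either cluster scores high, while one lying between the clusters scores low. A single averaged query vector cannot express this.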

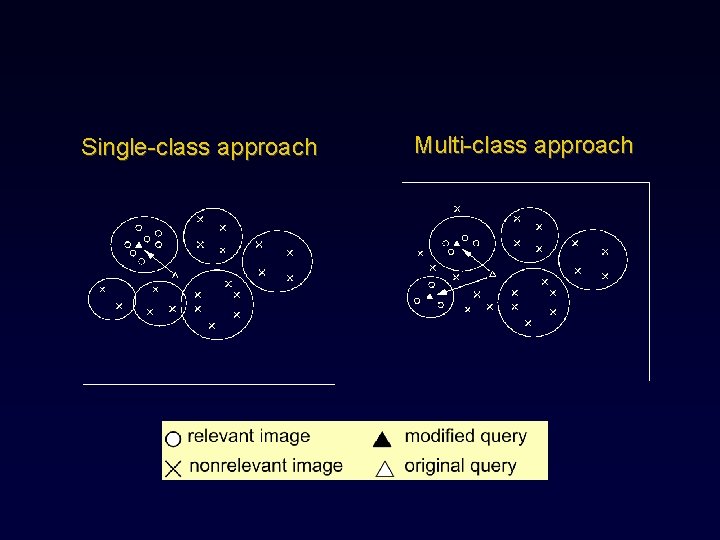

Single-class approach Multi-class approach

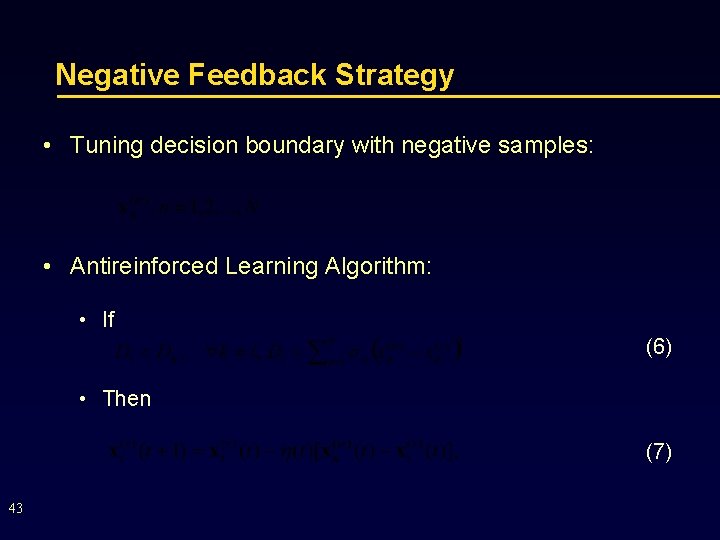

Negative Feedback Strategy
• Tuning the decision boundary with negative samples
• Anti-reinforced learning algorithm: if condition (6) holds, then apply update (7)

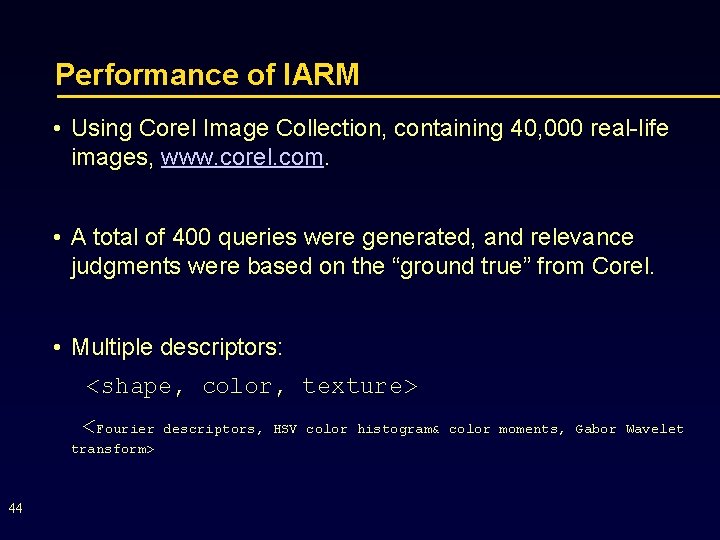

Performance of iARM
• Uses the Corel Image Collection (www.corel.com), containing 40,000 real-life images.
• A total of 400 queries were generated, and relevance judgments were based on the “ground truth” from Corel.
• Multiple descriptors <shape, color, texture>: <Fourier descriptors, HSV color histogram & color moments, Gabor wavelet transform>

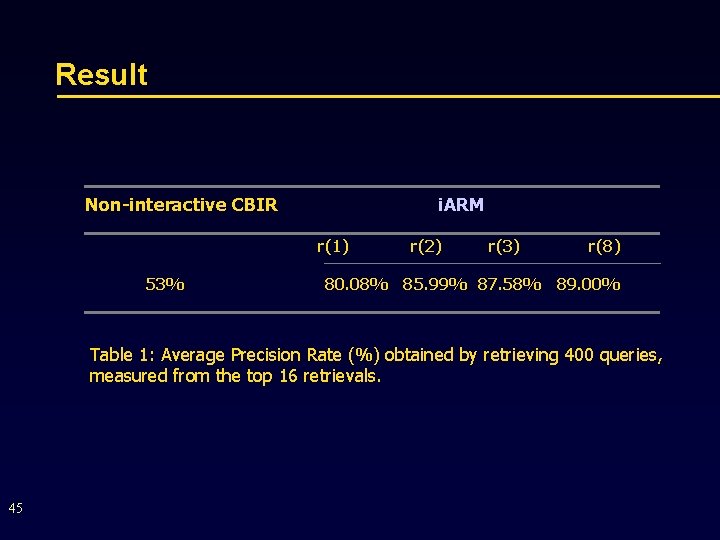

Result

| Non-interactive CBIR | iARM r(1) | iARM r(2) | iARM r(3) | iARM r(8) |
|---|---|---|---|---|
| 53% | 80.08% | 85.99% | 87.58% | 89.00% |

Table 1: Average precision rate (%) obtained by retrieving 400 queries, measured from the top 16 retrievals.

Test 1: Fast and robust with a small number of relevance feedbacks

Example: Looking for “model”. 0. Start by choosing the image at the bottom right corner as the query.

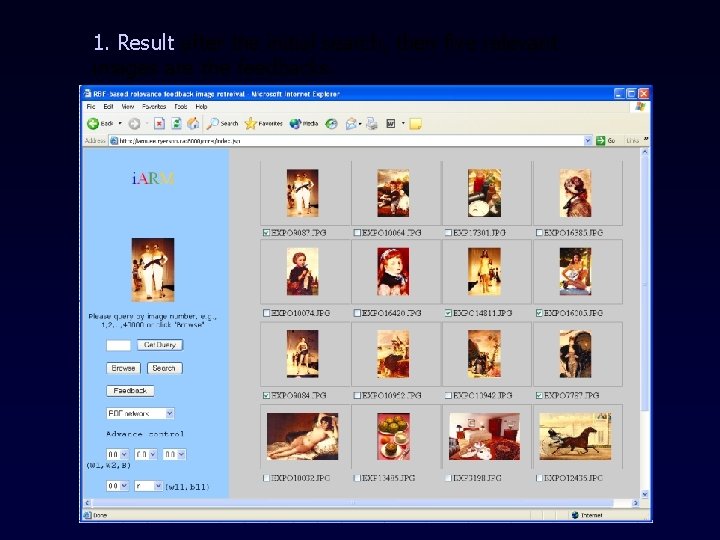

1. Result after the initial search; five relevant images are then given as feedback.

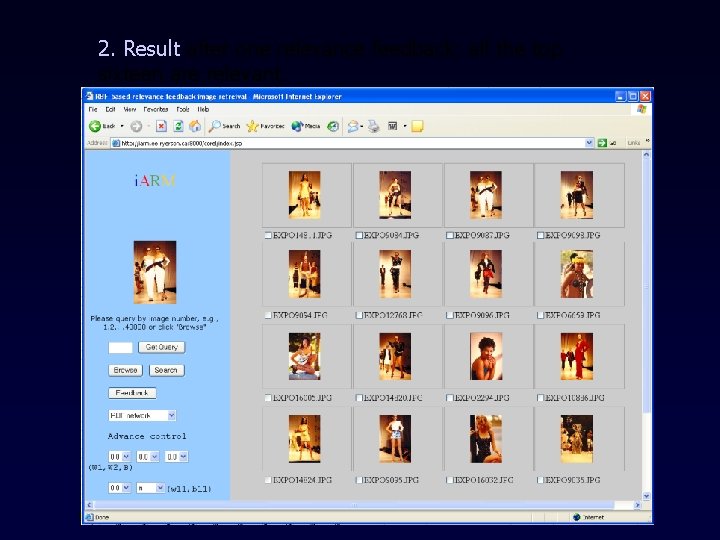

2. Result after one relevance feedback: all the top sixteen are relevant.

Test 2: Non-linearity

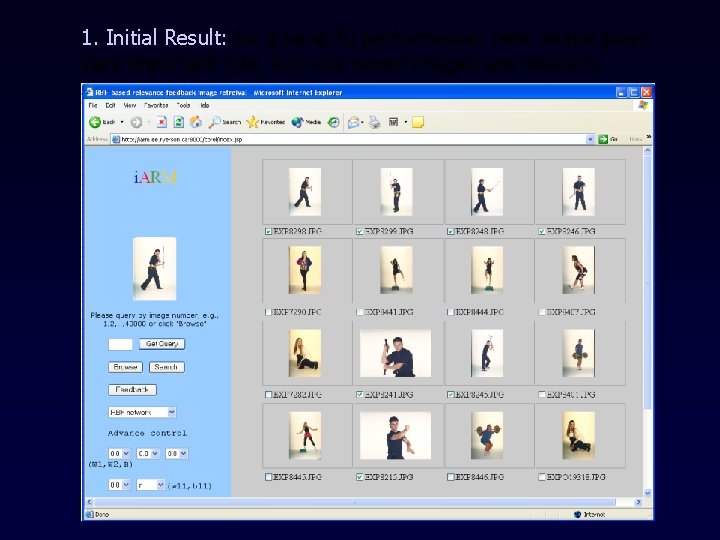

1. Initial result for a kung-fu performance; here shape plays a very important role, but only seven images are relevant.

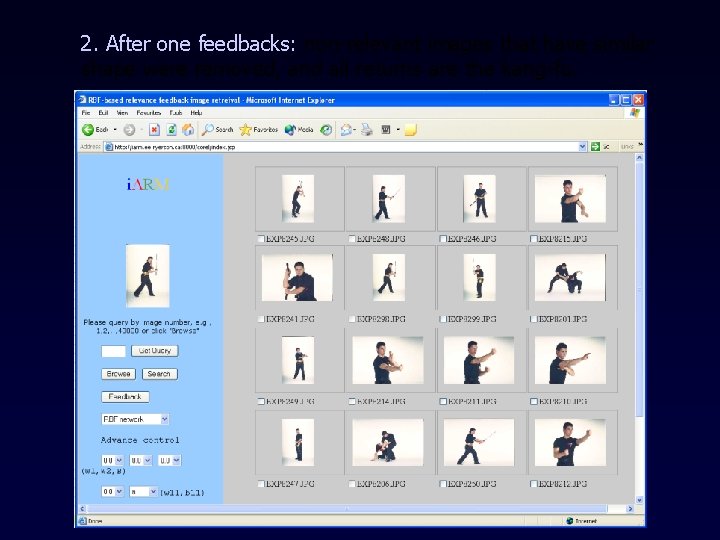

2. After one feedback: non-relevant images that have a similar shape were removed, and all returned images show kung-fu.

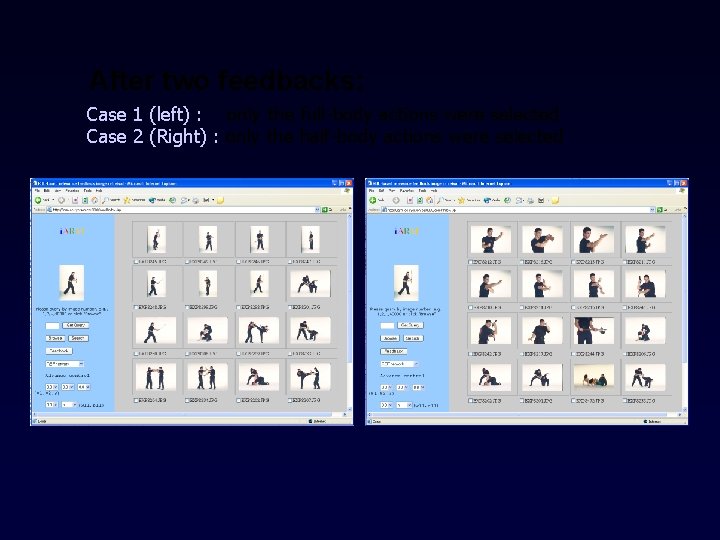

After two feedbacks:
• Case 1 (left): only the full-body actions were selected
• Case 2 (right): only the half-body actions were selected

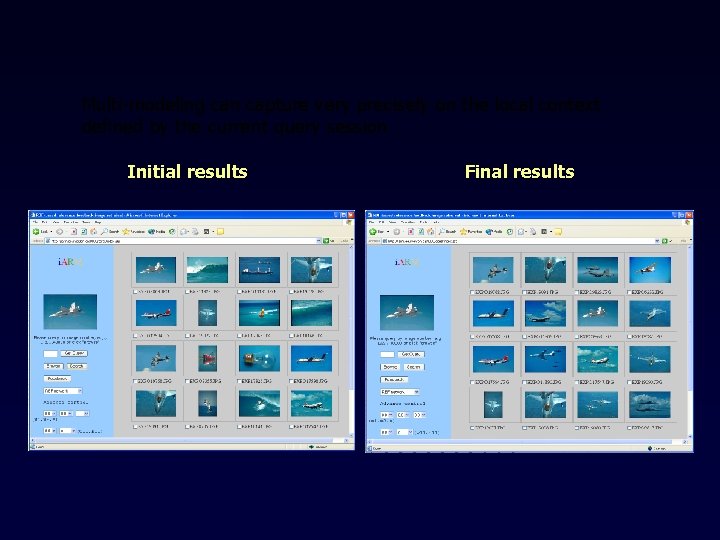

Test 3: Multi-model capturing

Multi-modeling can very precisely capture the local context defined by the current query session.
Figure labels: Initial results, Final results

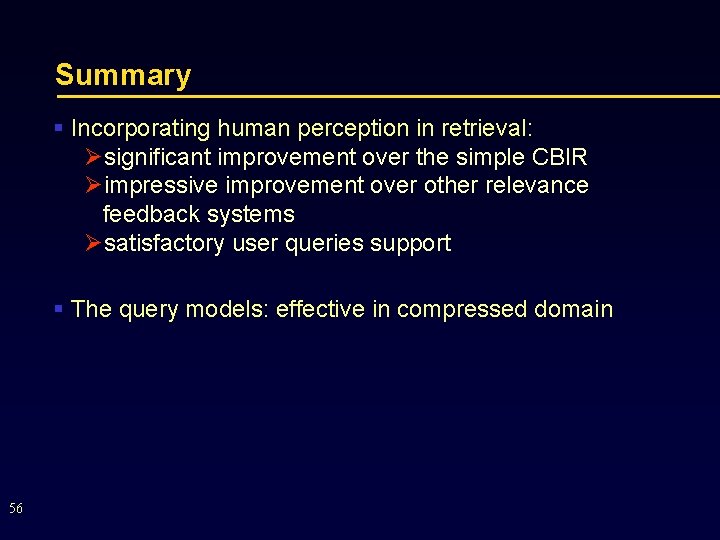

Summary
• Incorporating human perception in retrieval:
  • significant improvement over simple CBIR
  • impressive improvement over other relevance feedback systems
  • satisfactory support for user queries
• The query models: effective in the compressed domain

Machine-Controlled Interactive Content-based Retrieval (MCI-CBR)

Problem with HCI-CBR
• User interaction requires:
  • The user to specify “relevance” or “non-relevance”
  • Inconsistent human performance
  • Repeating many feedbacks for convergence
  • Transmission of sample files, i.e., high bandwidth
• User-friendly environment (ideal preference):
  • Fewer training samples, i.e., < 20 images/iteration
  • Fewer feedbacks, i.e., 1-2 iterations

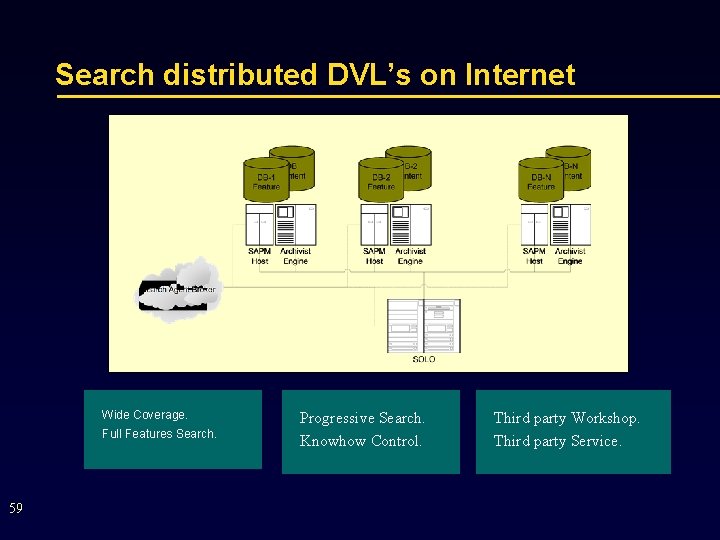

Search distributed DVL’s on Internet
Figure labels: Wide Coverage; Full Features Search; Progressive Search; Knowhow Control; Third-party Workshop; Third-party Service

Machine-Controlled Interactive CBIR (MCI-CBIR)
• A key research area in multimedia processing
• Aim: to incorporate self-learning capability into CBIR, which allows:
  • automatic & semi-automatic retrieval
  • minimization of user participation, to reduce errors caused by inconsistent human performance
  • reduction of the bandwidth requirement in Internet browsing and searching

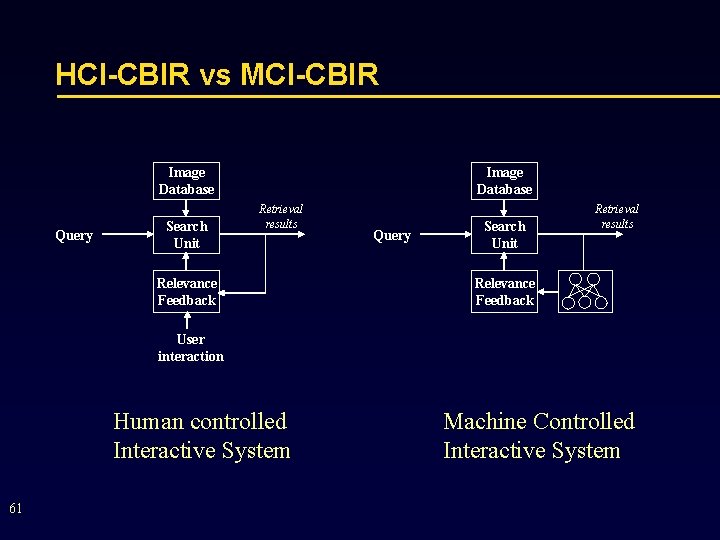

HCI-CBIR vs MCI-CBIR
Figure labels (both panels): Image Database, Query, Search Unit, Retrieval results, Relevance Feedback. The human-controlled interactive system adds User interaction to this loop; the machine-controlled interactive system does not.

The Essence of MCI-CBR
• Based on two feature spaces: R1 and R2
• Space R1 is of reasonable quality & easy to calculate in retrieval, such as:
  • DCT
  • DWT
• Space R2 is of very high quality, but potentially computationally intensive in relevance identification:
  • Descriptors extracted from uncompressed images
  • Object- and region-based descriptors

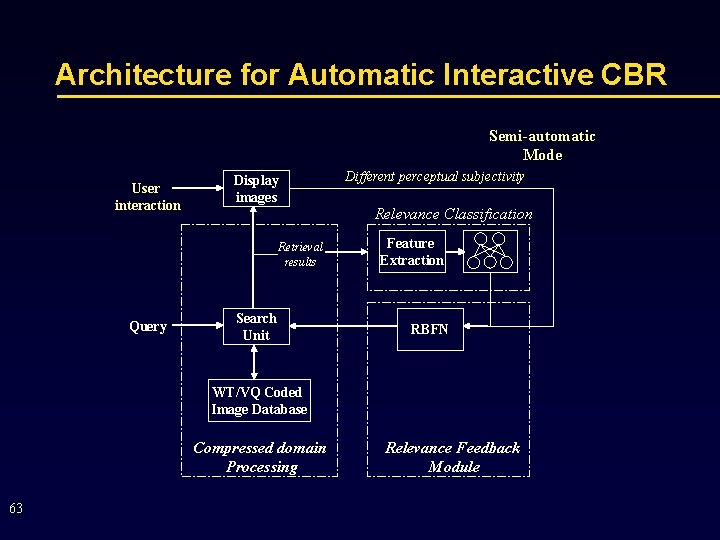

Architecture for Automatic Interactive CBR
Figure labels: Query; Search Unit; Retrieval results; Display images; User interaction (semi-automatic mode); Different perceptual subjectivity; Relevance Feedback Module (Feature Extraction, Relevance Classification, RBFN); WT/VQ Coded Image Database; Compressed-domain Processing

SOTM
• Relevance classification is performed by a Self-Organizing Tree Map (SOTM), which offers:
  • Independent learning based on a competitive learning technique
  • A unique feature map that preserves topological ordering
• SOTM is more suitable than the conventional SOM when the input feature space is of high dimensionality

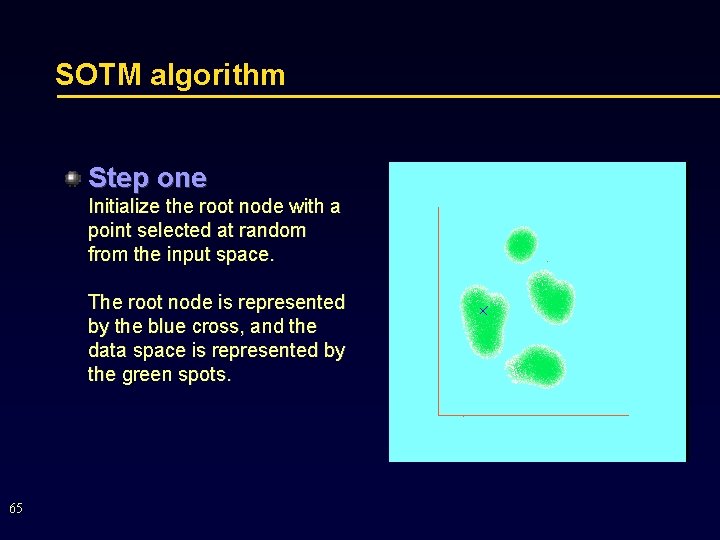

SOTM algorithm
Step one: Initialize the root node with a point selected at random from the input space. The root node is represented by the blue cross, and the data space is represented by the green spots.

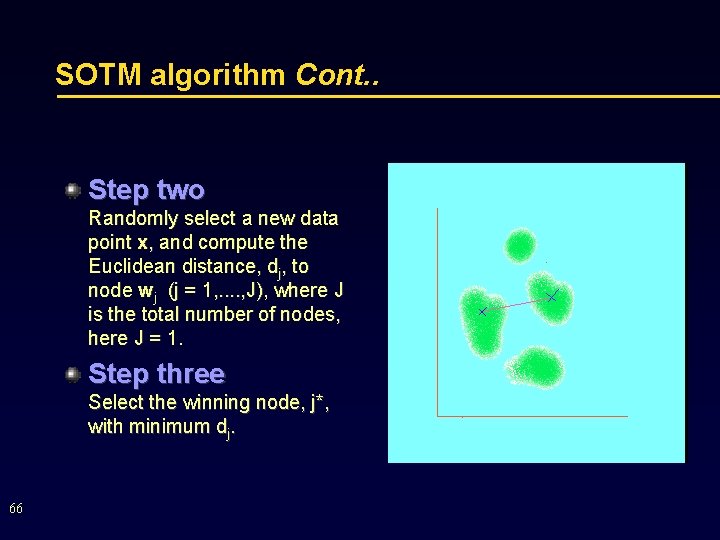

SOTM algorithm (cont.)
Step two: Randomly select a new data point $x$, and compute the Euclidean distance, $d_j$, to each node $w_j$ ($j = 1, \dots, J$), where $J$ is the total number of nodes; here $J = 1$.
Step three: Select the winning node, $j^*$, with minimum $d_j$.

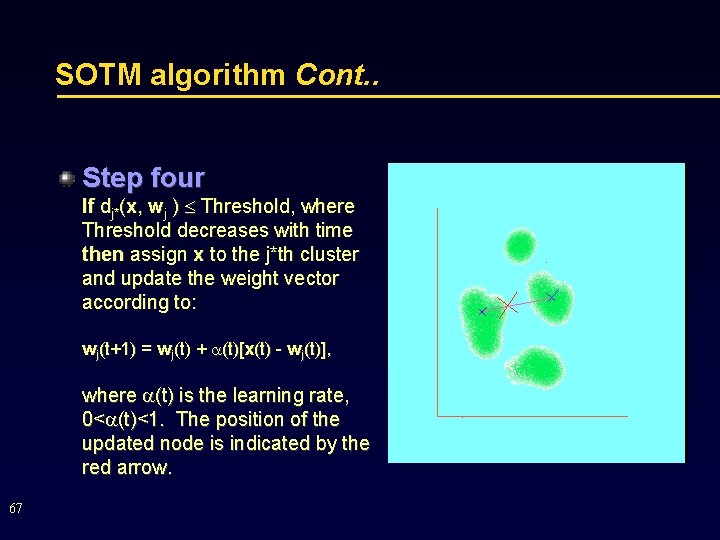

SOTM algorithm (cont.)
Step four: If $d_{j^*}(x, w_{j^*}) \le$ Threshold, where Threshold decreases with time, then assign $x$ to the $j^*$th cluster and update the weight vector according to
$$w_{j^*}(t+1) = w_{j^*}(t) + \alpha(t)\,[x(t) - w_{j^*}(t)],$$
where $\alpha(t)$ is the learning rate, $0 < \alpha(t) < 1$. The position of the updated node is indicated by the red arrow.

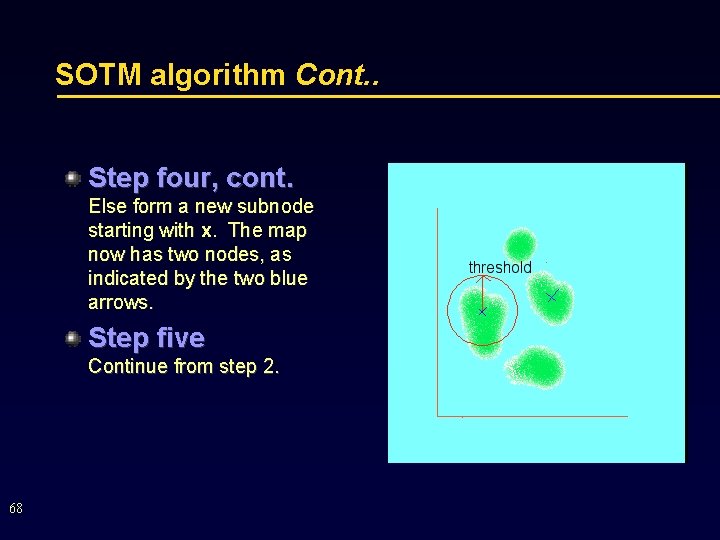

SOTM algorithm (cont.)
Step four (cont.): Else, form a new subnode starting with $x$. The map now has two nodes, as indicated by the two blue arrows.
Step five: Continue from step two.
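Steps one to five can be sketched as below. The threshold schedule (`h0`, `h_decay`), the learning-rate schedule, and the fixed iteration count are assumptions chosen for this example, and the sketch keeps a flat list of nodes rather than recording the parent-child tree links.

```python
import math
import random

def sotm(data, n_iter=200, h0=4.0, h_decay=0.995, alpha0=0.5, seed=0):
    """Simplified SOTM-style clustering; returns the list of node weight vectors."""
    rng = random.Random(seed)
    nodes = [list(rng.choice(data))]                 # step one: root node
    for t in range(n_iter):
        x = rng.choice(data)                         # step two: random data point
        dists = [math.dist(x, w) for w in nodes]
        j_star = min(range(len(nodes)), key=dists.__getitem__)  # step three: winner
        threshold = h0 * h_decay ** t                # threshold decreases with time
        alpha = alpha0 * (1.0 - t / n_iter)          # learning rate, 0 < alpha < 1
        if dists[j_star] <= threshold:               # step four: update the winner
            w = nodes[j_star]
            nodes[j_star] = [wi + alpha * (xi - wi) for wi, xi in zip(w, x)]
        else:                                        # otherwise spawn a new node at x
            nodes.append(list(x))
    return nodes                                     # step five is the loop itself

# Two well-separated hypothetical clusters of 2-D points.
data = [(0, 0), (0, 1), (1, 0), (1, 1), (10, 10), (10, 11), (11, 10), (11, 11)]
nodes = sotm(data)
```

With these settings the threshold never drops below the diameter of either cluster, so the map ends with one node per cluster. A faster decay would split each cluster further.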

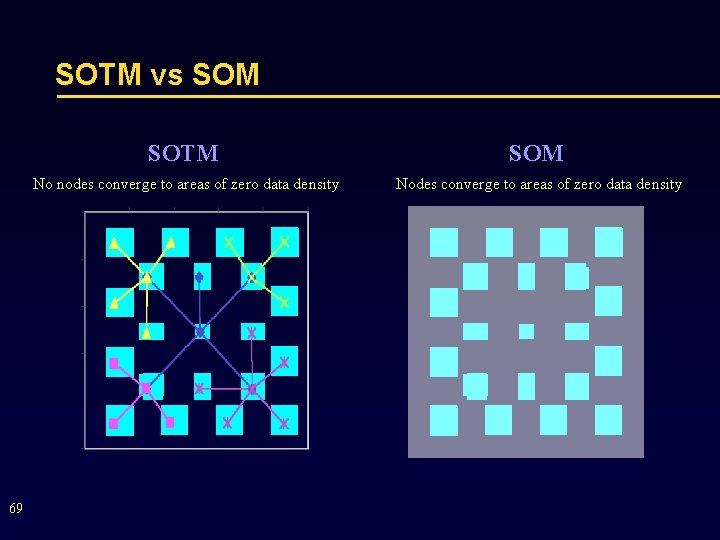

SOTM vs SOM
• SOTM: no nodes converge to areas of zero data density
• SOM: nodes converge to areas of zero data density

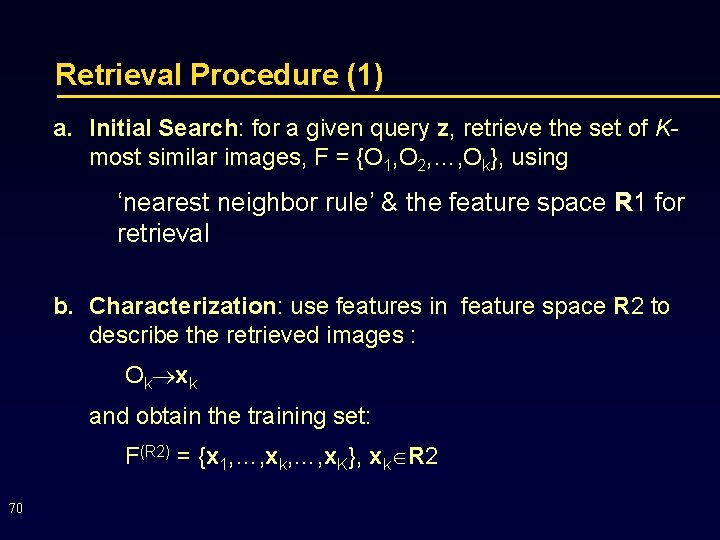

Retrieval Procedure (1)
a. Initial search: for a given query z, retrieve the set of the K most similar images, F = {O_1, O_2, …, O_K}, using the nearest-neighbor rule and the feature space R1.
b. Characterization: use features in feature space R2 to describe the retrieved images, O_k → x_k, and obtain the training set F(R2) = {x_1, …, x_k, …, x_K}, x_k ∈ R2.

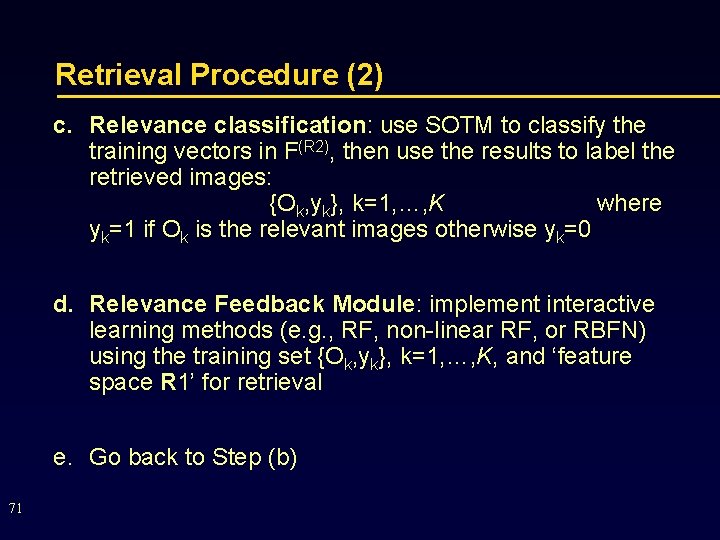

Retrieval Procedure (2)
c. Relevance classification: use the SOTM to classify the training vectors in F(R2), then use the results to label the retrieved images: {O_k, y_k}, k = 1, …, K, where y_k = 1 if O_k is a relevant image and y_k = 0 otherwise.
d. Relevance feedback module: implement interactive learning methods (e.g., RF, non-linear RF, or RBFN) using the training set {O_k, y_k}, k = 1, …, K, and feature space R1 for retrieval.
e. Go back to step (b).
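The loop (a) to (e) can be sketched end to end with two simplifications that are not in the slides: a median-distance cutoff in R2 stands in for the SOTM classifier, and the relevance feedback module is reduced to replacing the query with the mean of the relevant items in R1. All names and feature values are hypothetical.

```python
import math

def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def nearest(query, features, k):
    """Indices of the k items whose feature vectors are closest to the query."""
    return sorted(range(len(features)), key=lambda i: math.dist(query, features[i]))[:k]

def auto_feedback(query_idx, r1, r2, k=4, iterations=2):
    query = r1[query_idx]
    retrieved = nearest(query, r1, k)                  # (a) initial search in R1
    for _ in range(iterations):
        # (b) characterize the retrieved items in R2;
        # (c) stand-in classifier: relevant if within the median R2 distance.
        d2 = {i: math.dist(r2[query_idx], r2[i]) for i in retrieved}
        cutoff = sorted(d2.values())[len(d2) // 2]
        relevant = [i for i in retrieved if d2[i] <= cutoff]
        query = centroid([r1[i] for i in relevant])    # (d) feedback in R1
        retrieved = nearest(query, r1, k)              # (e) retrieve again
    return retrieved

# Items 0-3 belong to one class and 4-5 to another. R1 is cheap but noisy;
# R2 separates the classes cleanly.
r1 = [[0.40], [0.22], [0.25], [0.32], [0.45], [0.60]]
r2 = [[0.0], [0.10], [0.05], [0.02], [5.0], [5.1]]
result = auto_feedback(0, r1, r2)
```

In this toy data the initial R1 search returns item 4 from the wrong class, and one automatic feedback round replaces it with item 1, without any user input.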

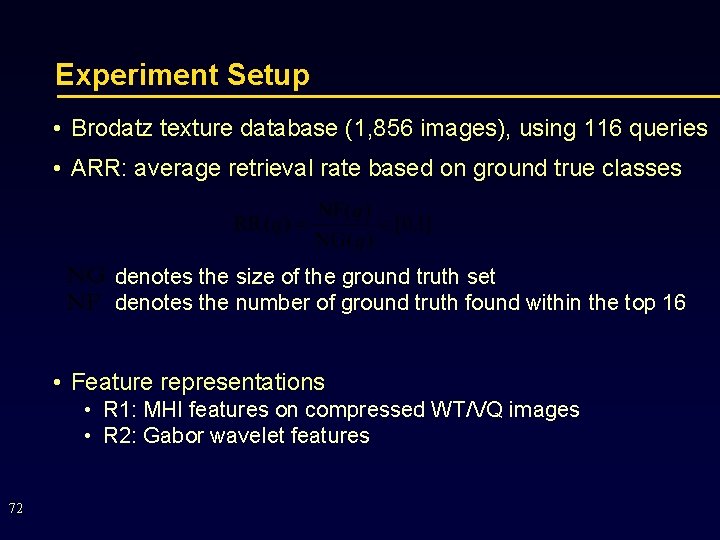

Experiment Setup
• Brodatz texture database (1,856 images), using 116 queries
• ARR: average retrieval rate based on ground-truth classes, defined in terms of the size of the ground-truth set and the number of ground-truth images found within the top 16
• Feature representations
  • R1: MHI features on compressed WT/VQ images
  • R2: Gabor wavelet features

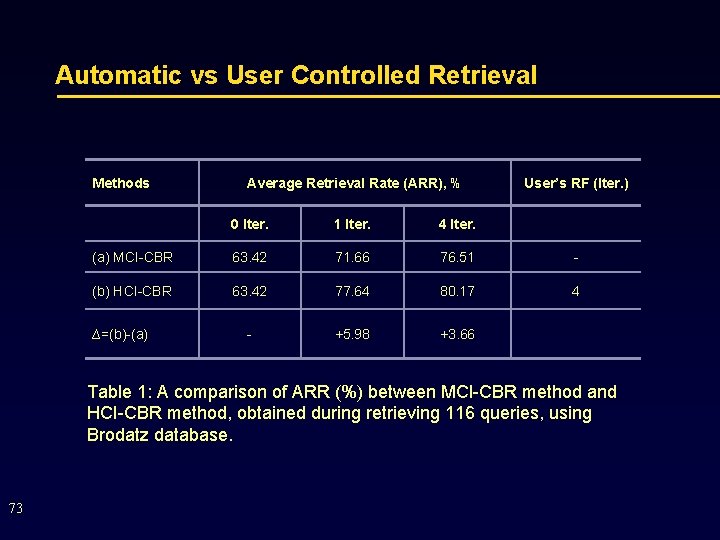

Automatic vs User-Controlled Retrieval

| Method | ARR (%), 0 Iter. | ARR (%), 1 Iter. | ARR (%), 4 Iter. | User’s RF (Iter.) |
|---|---|---|---|---|
| (a) MCI-CBR | 63.42 | 71.66 | 76.51 | - |
| (b) HCI-CBR | 63.42 | 77.64 | 80.17 | 4 |
| ∆ = (b) - (a) | - | +5.98 | +3.66 | |

Table 1: A comparison of the average retrieval rate, ARR (%), between the MCI-CBR method and the HCI-CBR method, obtained by retrieving 116 queries from the Brodatz database.

Retrieval Example
Figure labels: Non-Interactive Retrieval, Automatic Interactive Retrieval

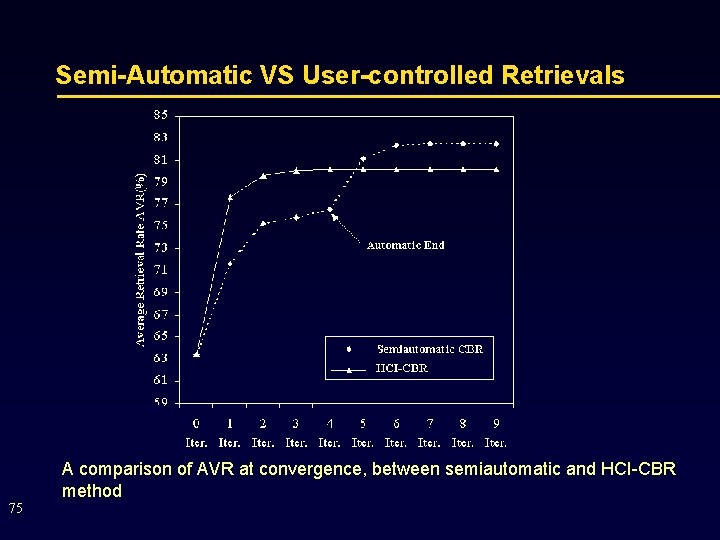

Semi-Automatic vs User-Controlled Retrievals
A comparison of AVR at convergence between the semi-automatic and HCI-CBR methods

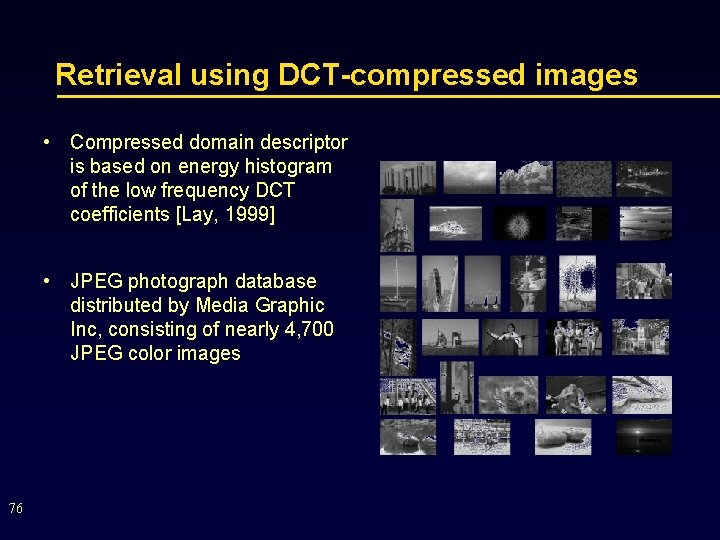

Retrieval using DCT-compressed images
• The compressed-domain descriptor is based on the energy histogram of the low-frequency DCT coefficients [Lay, 1999]
• JPEG photograph database distributed by Media Graphic Inc, consisting of nearly 4,700 JPEG color images

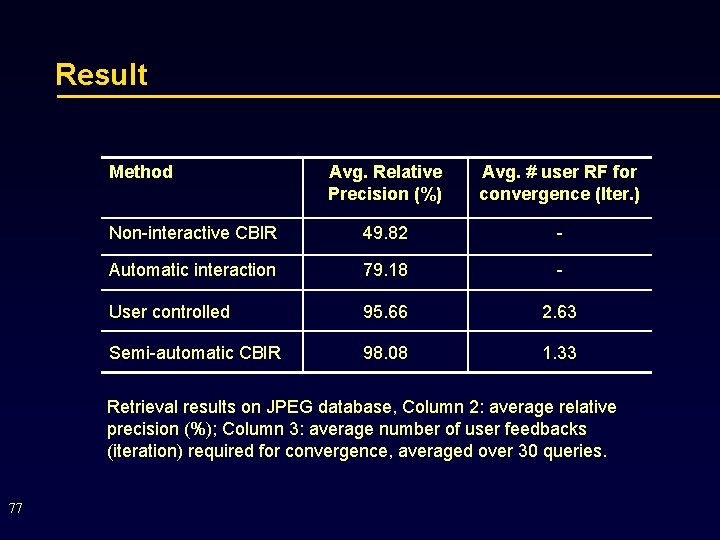

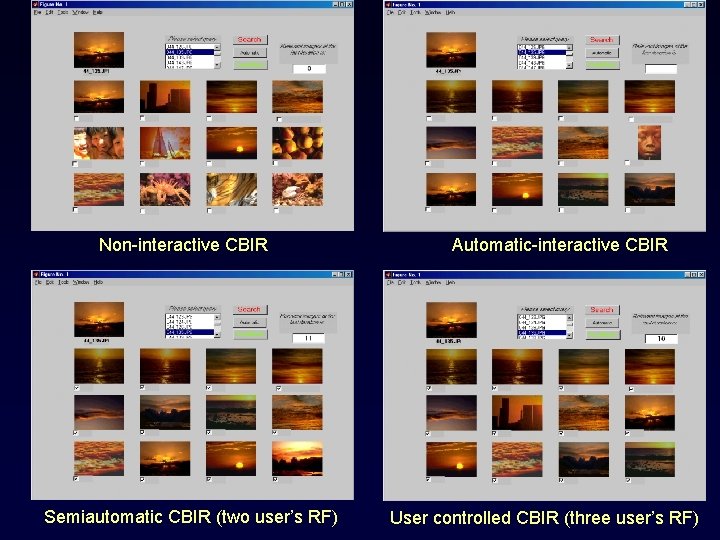

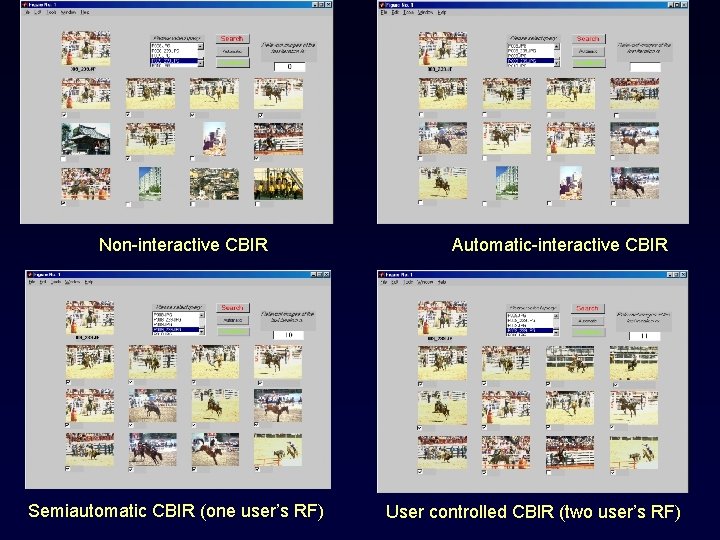

Result

| Method | Avg. Relative Precision (%) | Avg. # user RF for convergence (Iter.) |
|---|---|---|
| Non-interactive CBIR | 49.82 | - |
| Automatic interaction | 79.18 | - |
| User controlled | 95.66 | 2.63 |
| Semi-automatic CBIR | 98.08 | 1.33 |

Retrieval results on the JPEG database. Column 2: average relative precision (%); Column 3: average number of user feedbacks (iterations) required for convergence, averaged over 30 queries.

Non-interactive CBIR Semiautomatic CBIR (two user’s RF) Automatic-interactive CBIR User controlled CBIR (three user’s RF)

Non-interactive CBIR Semiautomatic CBIR (one user’s RF) Automatic-interactive CBIR User controlled CBIR (two user’s RF)

Summary
• MCI-CBIR
  • minimizes the role of users in CBIR
  • Semi-automatic retrieval reaches optimal performance quickly
