Identifying reliable research Ignazio Ziano

trying to “build on the literature” science

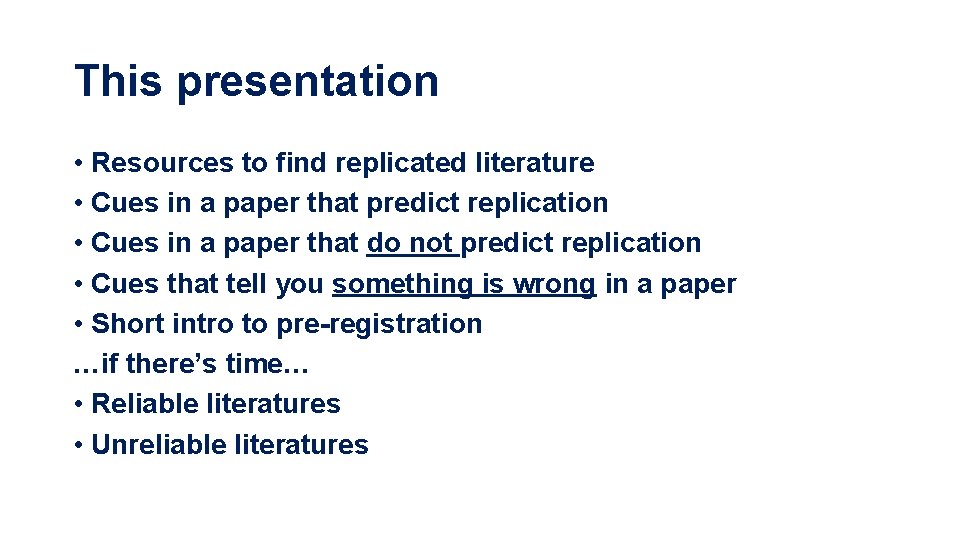

This presentation • Resources to find replicated literature • Cues in a paper that predict replication • Cues in a paper that do not predict replication • Cues that tell you something is wrong in a paper • Short intro to pre-registration …if there’s time… • Reliable literatures • Unreliable literatures

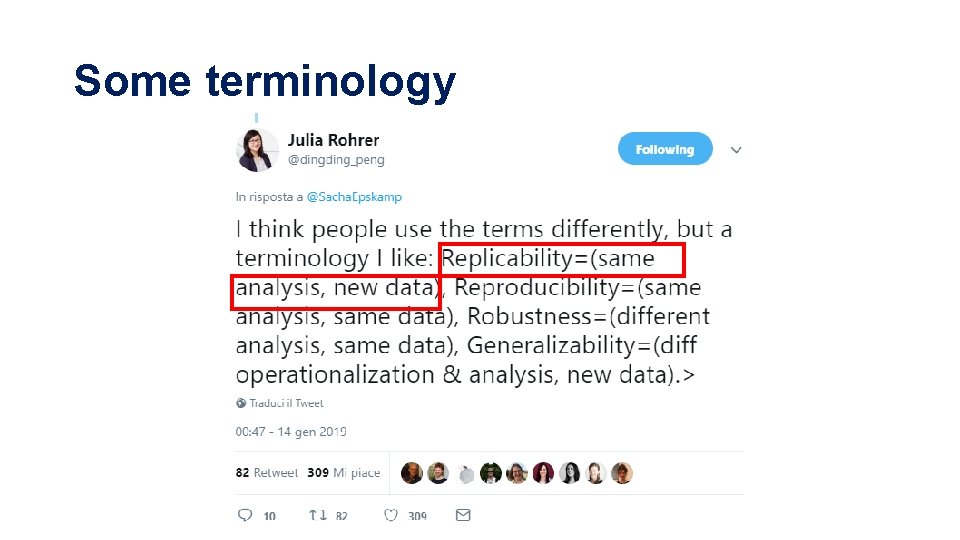

Some terminology

Replication – what is it? • Can someone else repeat the same experiments and get similar results? • Effect in same direction, p < .05 • LeBel et al 2018 for a better taxonomy

Replication crisis - Psychology • R:PP (Nosek et al 2015) • 36/100 replicated effects (36%) were both significant (p < .05) and in the same direction as the original • Lower in social psych (~25%) than cognitive psych (~55%)

Replication crisis - Psychology • Many Labs 1: 10/13 successes (77%) • Many Labs 2: 14/28 (50%) • Many Labs 3: 7/10 (70%) • RSSP: 13/21 (62%) – includes econ papers too • A few large “one-shot” replications also failed to show an effect • Ego depletion: Hagger et al 2016, N = 2,141 • Professor priming: O’Donnell et al 2018, N = 6,454 • Stereotype threat: Flore et al 2019, N = 2,064

Replication crisis – Economics • EE-RP (Experimental Econ Replication Project): 11/18 (61%) • IMHO, they have not looked hard enough…

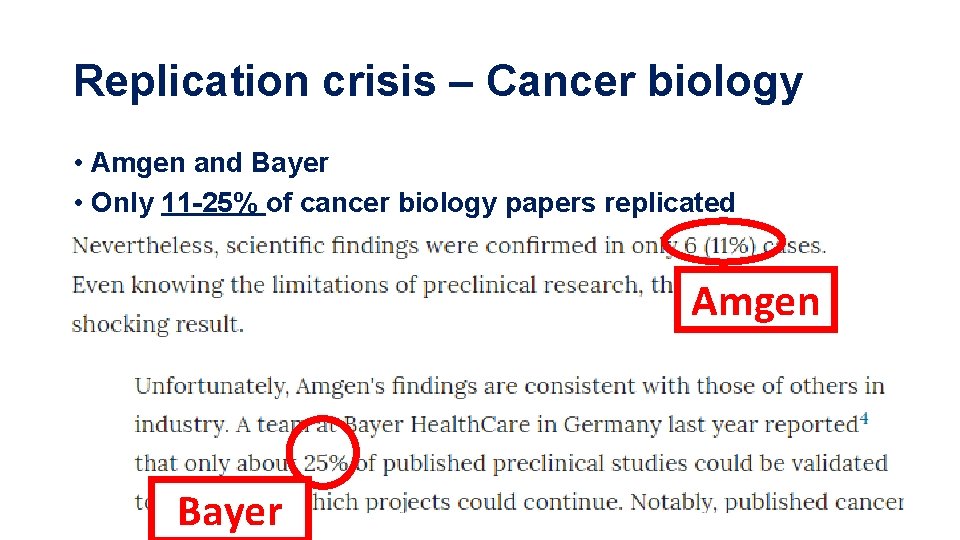

Replication crisis – Cancer biology • Amgen and Bayer • Only 11-25% of cancer biology papers replicated

Sources for previous slide • https://www.nature.com/articles/nrd3439-c1 • https://www.nature.com/articles/483531a

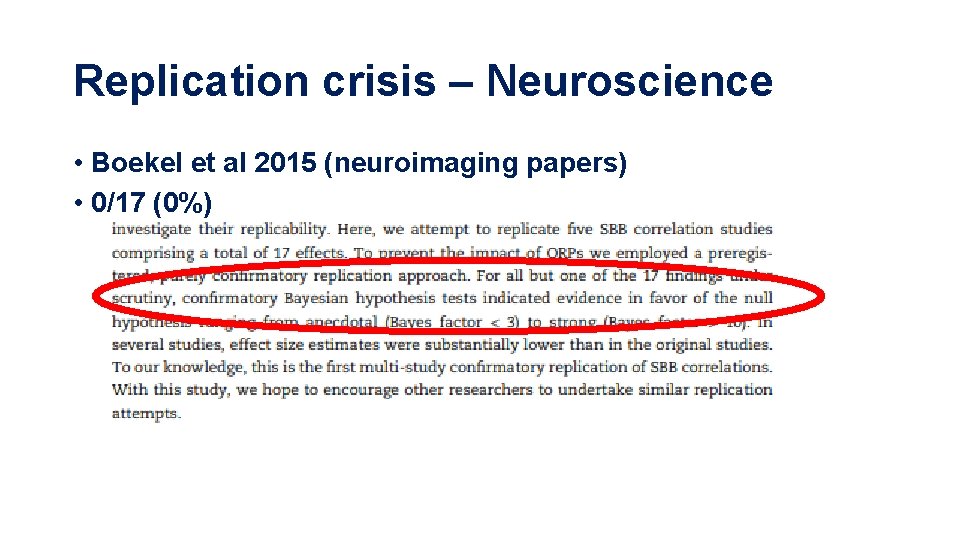

Replication crisis – Neuroscience • Boekel et al 2015 (neuroimaging papers) • 0/17 (0%)

Replication crisis – Artificial Intelligence?

Marketing

Marketing journals editors when they hear the words ‘replication crisis’

Replication crisis – Marketing (CB)? • Methods & training are the same as in Psych • Replications in MKT journals are VERY RARE • Marketing has the same problems as the others

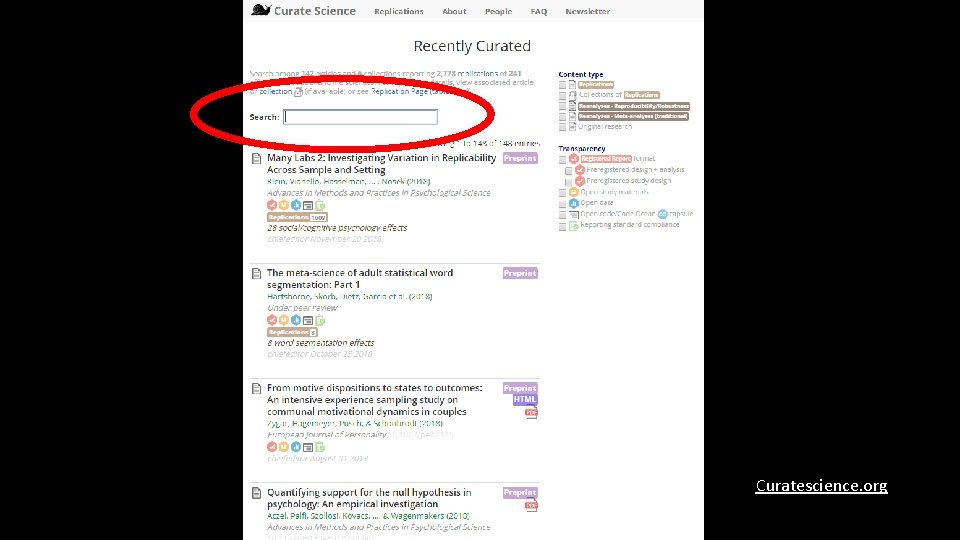

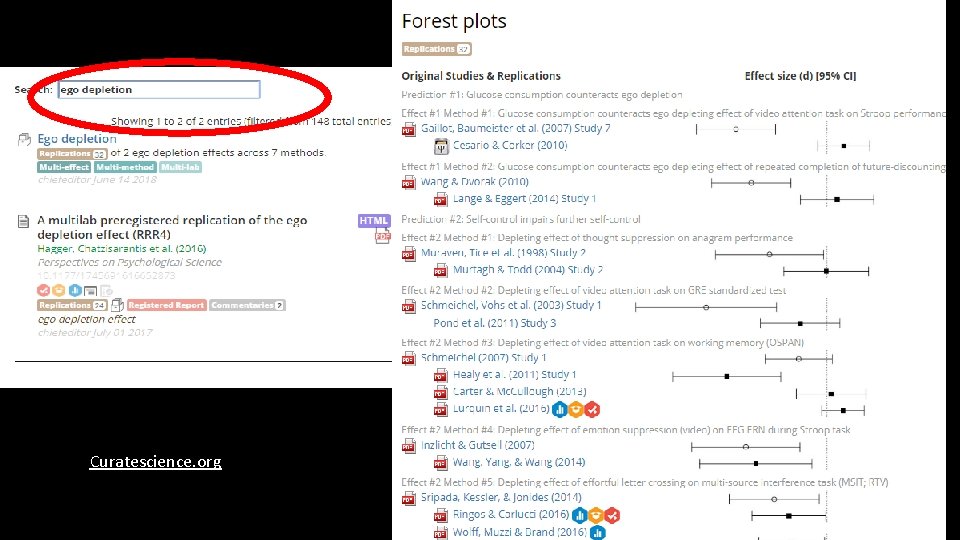

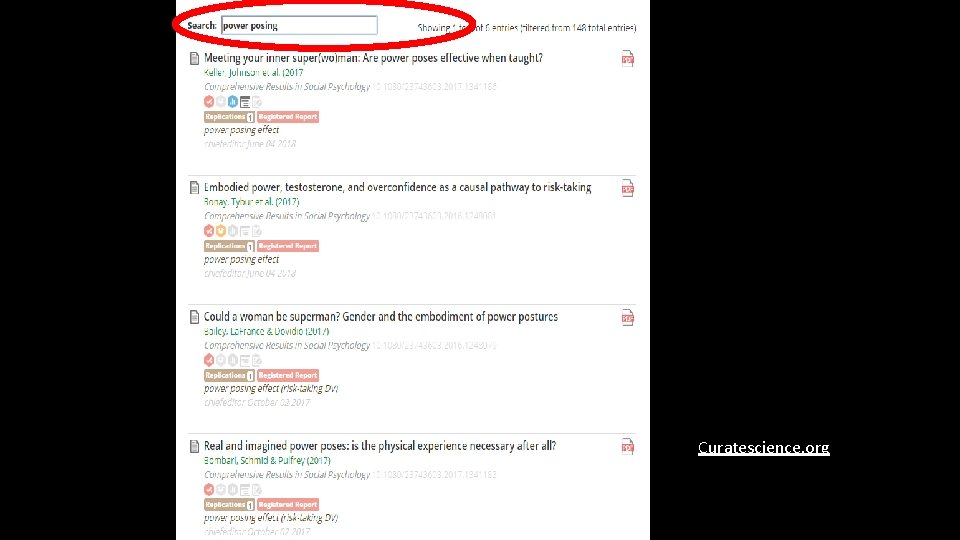

How to identify replicable research • I want to build on something. Will it work? • Build = replicate (conceptual/close), moderate, extend • Has this been replicated successfully? • Curatescience.org contains a curated list of replications in Psychology • done by Etienne LeBel, now at KU Leuven • Overlap with CB authors and theories

Curatescience.org

X-Ray method • I want to build on something. Will it work? • Can I look at the paper features and infer whether it works? • Maybe!

P-values • Low p-values are more likely to replicate (e.g., Many Labs 2; R:PP) • Correlation between original p-value and replication (Yes/No) • Many Labs: r = -.33*** • The lower the p-value, the more likely the replication succeeds • It’s not really clear why • Maybe because it is harder to p-hack to .01 than to .05 • Simonsohn et al 2015

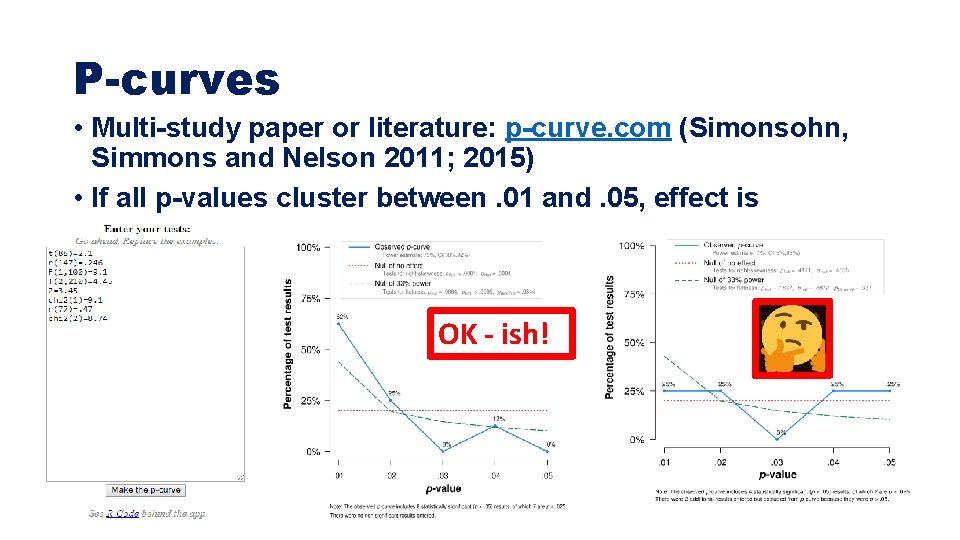

P-curves • Multi-study paper or literature: p-curve.com (Simonsohn, Simmons and Nelson 2011; 2015) • If all p-values cluster between .01 and .05, the effect is implausible • OK-ish!
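The clustering idea can be illustrated with a rough sketch of the simplest p-curve check: among significant results, count how many fall below .025 and compare that with the 50% expected if there were no true effect. This is only the binomial variant, not the full set of tests run by the p-curve.com app, and the function name and the example p-values are made up for illustration.

```python
import math

def right_skew_binomial(p_values, alpha=0.05):
    """Binomial right-skew check on the significant p-values.

    Returns (k, n, p_binom): k of the n significant p-values fall below
    alpha/2, and p_binom is the one-sided probability of seeing at least
    k such values if low and high p-values were equally likely.
    """
    significant = [p for p in p_values if p < alpha]
    n = len(significant)
    k = sum(p < alpha / 2 for p in significant)
    # P(X >= k) for X ~ Binomial(n, 0.5)
    p_binom = sum(math.comb(n, i) for i in range(k, n + 1)) / 2 ** n
    return k, n, p_binom

# Hypothetical p-values, invented for this example only
evidential = [0.001, 0.003, 0.012, 0.0004, 0.021, 0.002, 0.008, 0.038]
clustered = [0.041, 0.032, 0.048, 0.027, 0.044, 0.019, 0.036, 0.029]

for label, values in [("evidential", evidential), ("clustered", clustered)]:
    k, n, p_binom = right_skew_binomial(values)
    print(f"{label}: {k}/{n} below .025, binomial p = {p_binom:.3f}")
```

A small binomial p suggests right skew (evidential value); a set of p-values bunched just under .05 gives no such signal.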

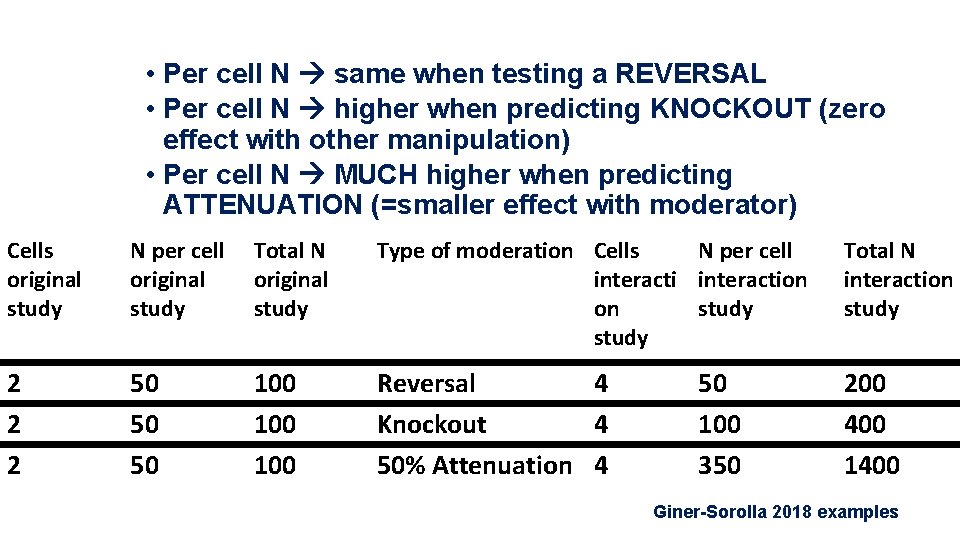

Interactions are less reliable than main effects • Moderation needs many more subjects than you think • Simonsohn 2014, “No-way interactions” • http://datacolada.org/17 • Giner-Sorolla 2018 • https://approachingblog.wordpress.com/2018/01/24/powering-your-interaction-2/ • Gelman 2018 • https://statmodeling.stat.columbia.edu/2018/03/15/need-16-times-sample-size-estimate-interaction-estimate-main-effect/ • Interaction: Difference-in-Difference (kinda) • The smaller the D-I-D, the higher the N you need

• Per cell N same when testing a REVERSAL • Per cell N higher when predicting KNOCKOUT (zero effect with other manipulation) • Per cell N MUCH higher when predicting ATTENUATION (= smaller effect with moderator)

Giner-Sorolla 2018 examples:

| Type of moderation | Cells, original study | N per cell, original study | Total N, original study | Cells, interaction study | N per cell, interaction study | Total N, interaction study |
| --- | --- | --- | --- | --- | --- | --- |
| Reversal | 2 | 50 | 100 | 4 | 50 | 200 |
| Knockout | 2 | 50 | 100 | 4 | 100 | 400 |
| 50% Attenuation | 2 | 50 | 100 | 4 | 350 | 1400 |

• Do researchers power their interactions this way? • No. In fact, they collect FEWER pp/cell when testing an interaction • My own impression; but see anecdotal evidence: Simonsohn 2014 on Strack et al. 1988

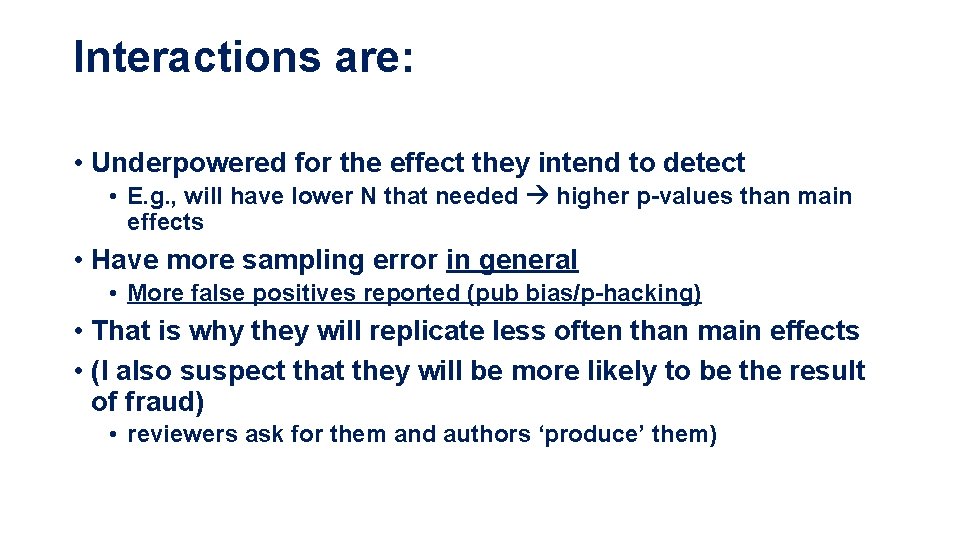

Interactions: • Are underpowered for the effect they intend to detect • E.g., they will have lower N than needed → higher p-values than main effects • Have more sampling error in general • More false positives reported (pub bias/p-hacking) • That is why they will replicate less often than main effects • (I also suspect that they will be more likely to be the result of fraud: reviewers ask for them and authors ‘produce’ them)

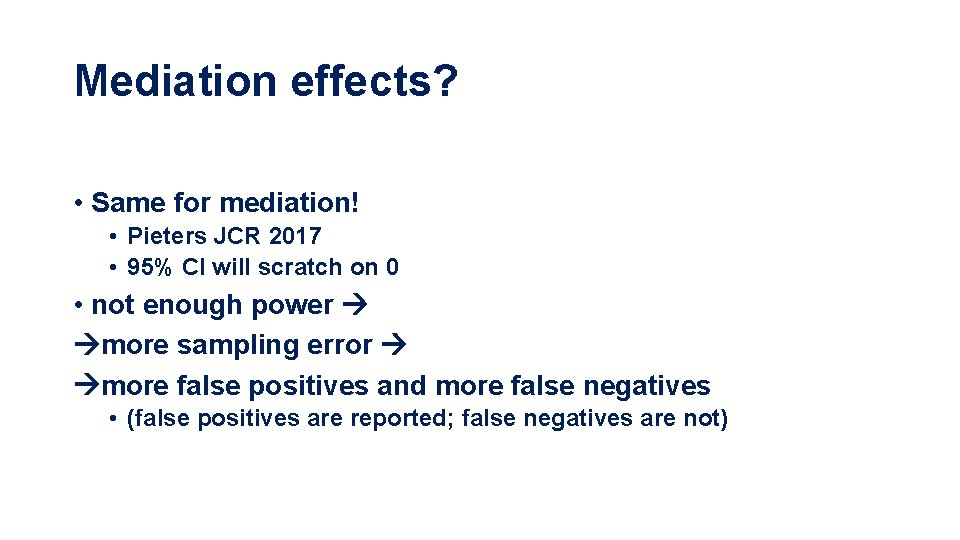

Mediation effects? • Same for mediation! • Pieters JCR 2017 • 95% CI will scratch on 0 • Not enough power → more sampling error → more false positives and more false negatives • (false positives are reported; false negatives are not)

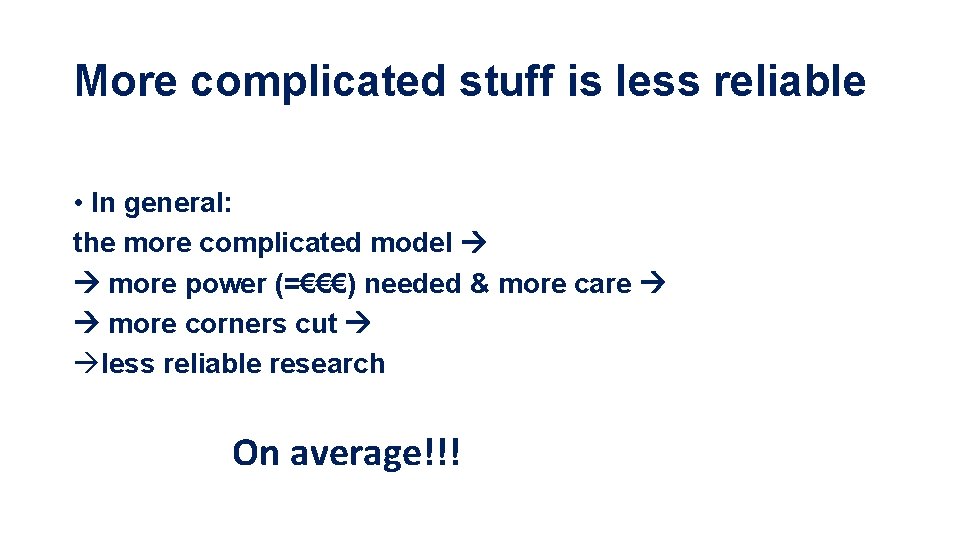

More complicated stuff is less reliable • In general: the more complicated the model → more power (= €€€) and more care needed → more corners cut → less reliable research • On average!!!

Stuff that DOES NOT predict replicability • Journal prestige • Brembs 2018 • JCR is not more reliable than JBR – just more famous • (Same for institutional prestige IMHO)

Stuff that DOES NOT predict replicability • A meta-analysis that shows an overall effect • Hagger et al 2010 meta-analysis • Ego depletion is real and d = .62 …then failed to replicate… (Hagger et al 2016) • DeCoster and Claypool 2004 meta-analysis • Elderly priming is real and d = .40 …then failed to replicate… (Pashler et al 2008)

The trouble with meta-analysis • Garbage in, garbage out • Unreliable literature = unreliable meta-analysis • Corrections (PET-PEESE, fail-safe N, p-uniform) will STILL TELL YOU THERE IS AN EFFECT (when there is none) • McShane et al 2016, PPS • They assume that we know the combined effect of selective reporting, p-hacking, and fraud, but we DO NOT • Meta-analyses are great to show the many problems a lit has • E.g., Egger’s plot, p-curve can hint that something is wrong

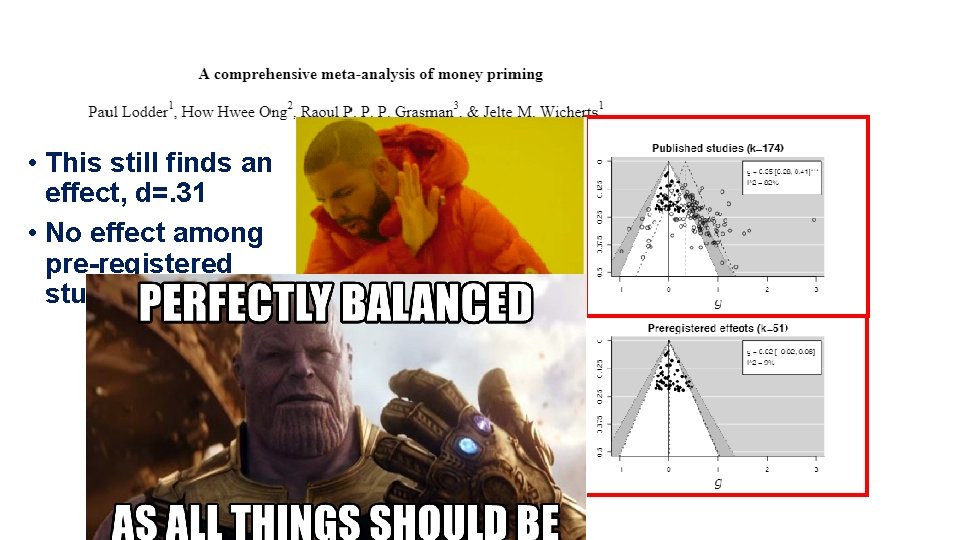

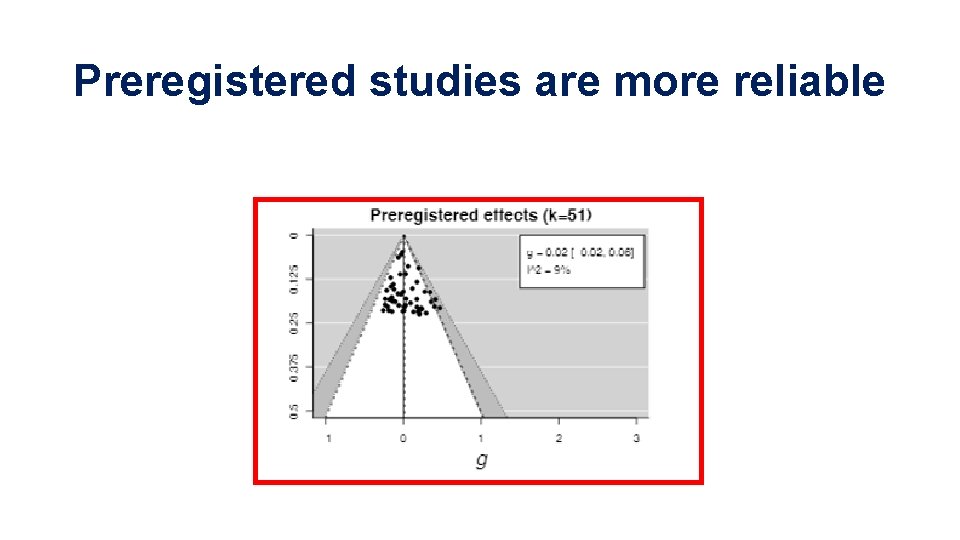

• This still finds an effect, d=. 31 • No effect among pre-registered studies

Preregistered studies are more reliable

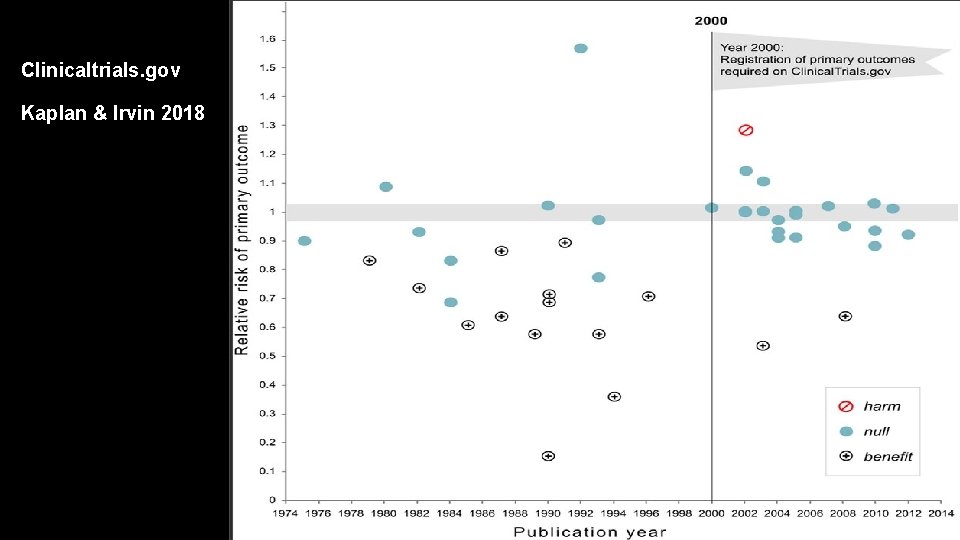

Clinicaltrials.gov • Kaplan & Irvin 2018

• A lot of subjective decisions • Which are NOT the problem! • Albeit they are classified as QRPs and they are widespread (John et al 2012) • The problem: transparency • Did you really test a HP or were you fishing? • How? Pre-registration

What is preregistration? • ‘In pre-registration, researchers describe their hypotheses, methods, and analyses before a piece of research is conducted, in a way that can be externally verified’ (Van ‘t Veer & Giner-Sorolla 2016) • This means… • Write it down before you do it • Upload it to a 3rd-party website • Where it is time-stamped • Then run the study

Where & how can I preregister? • Open Science Framework (osf.io) • Aspredicted.org • MANY other places • E.g., USA medical trials HAVE to be preregistered and are all at clinicaltrials.gov • New publishing platform researchers.one

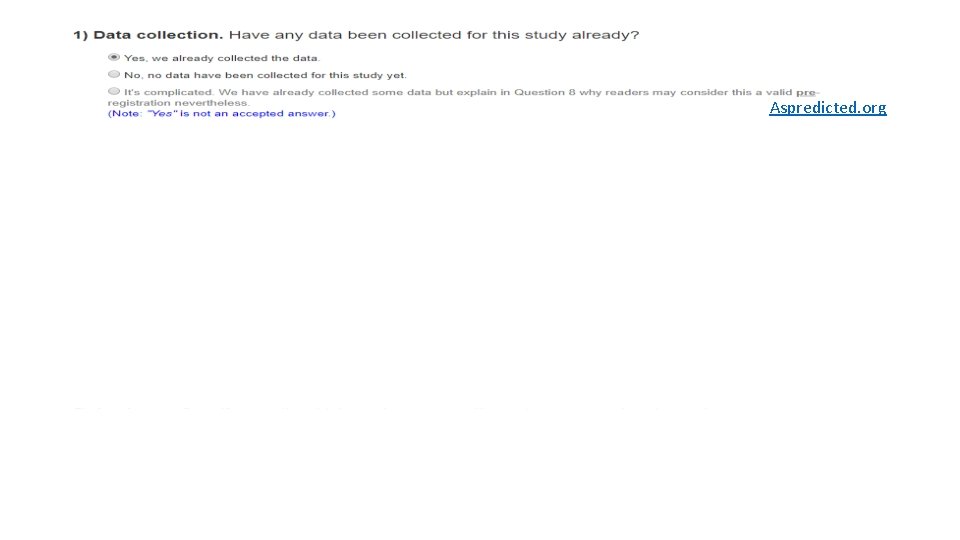

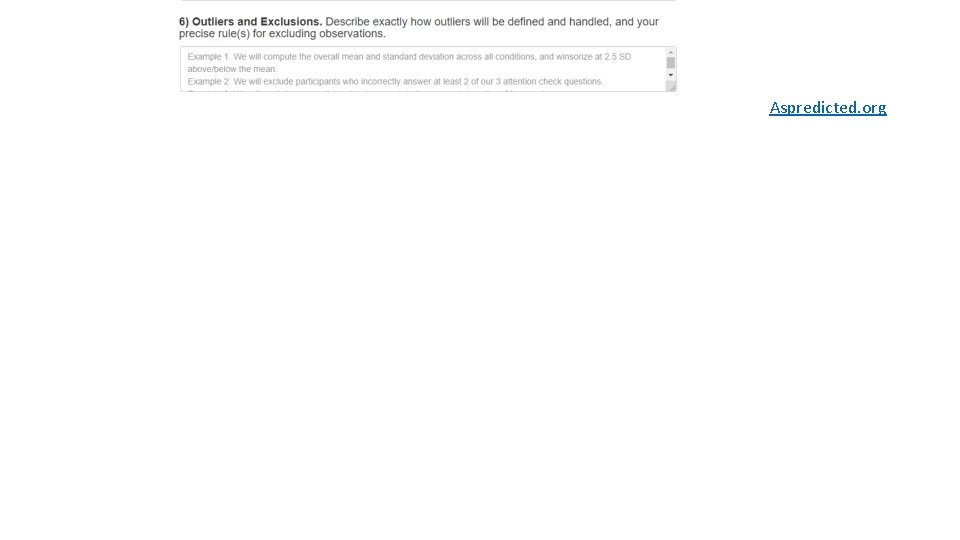

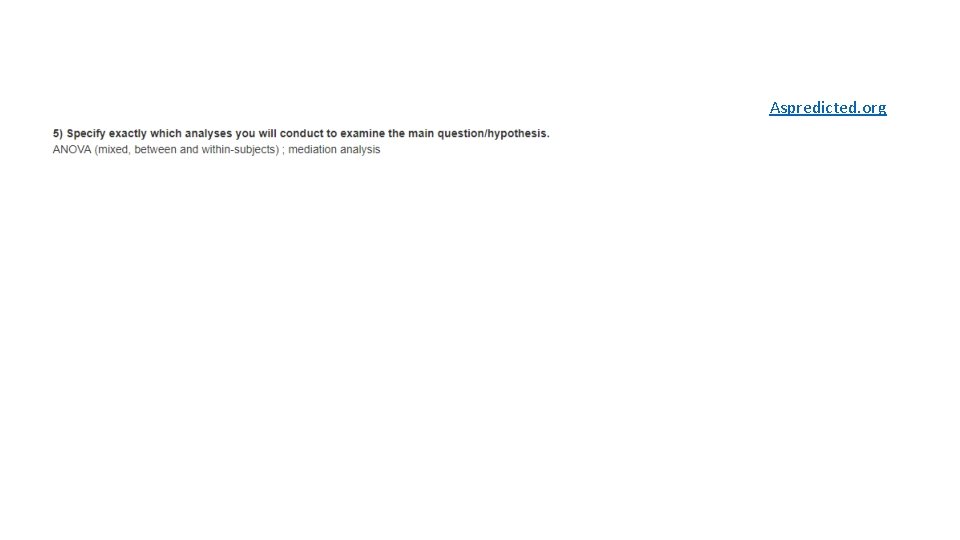

Aspredicted.org

Why preregister? • Transparency of your decision • A priori vs. ad hoc • Fine to ‘learn from the data’ if you specify what you planned and what you learned! • If you want to replicate other people’s work • Make it clear that you are not out to get them!

Are people who do not preregister bad people? • Nah • BUT preregistration makes your research more transparent • Easier to publish null results • ‘Look. We followed the recipe. We didn’t fuck anything up. It just doesn’t work!’

Is preregistered research better than non prereg research? • Nah! • Only more transparent! • Which means easier to criticize and spot mistakes in • Self-correcting science

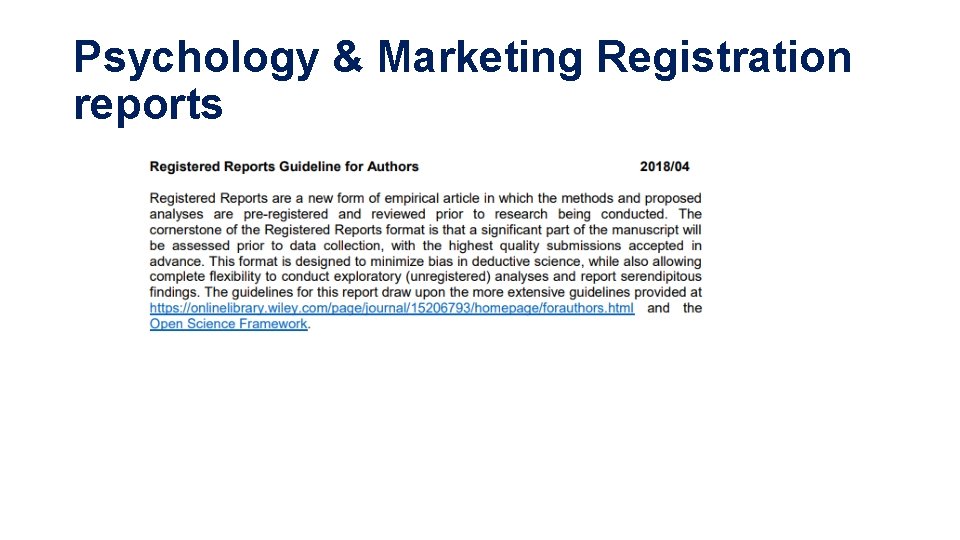

Psychology & Marketing Registration reports

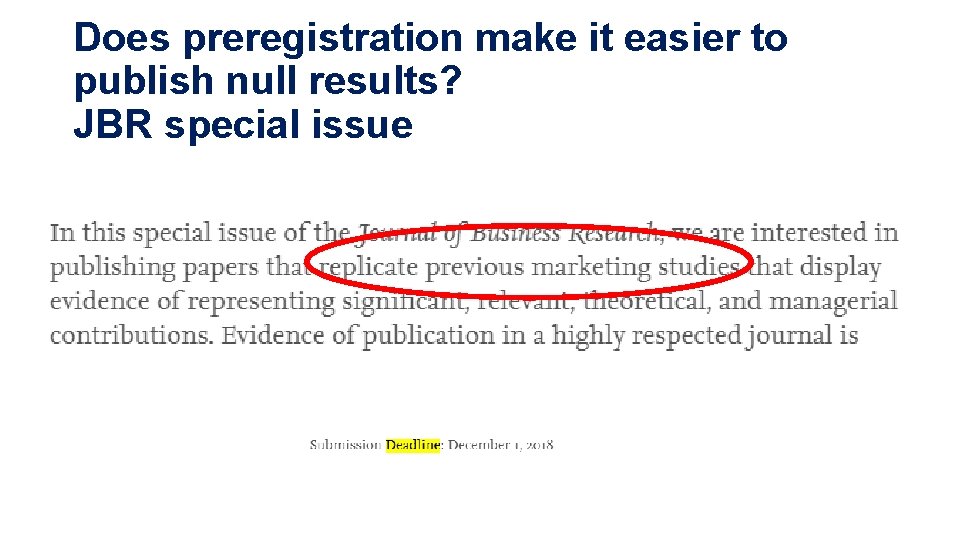

Does preregistration make it easier to publish null results? JBR special issue

Journal of Business Ethics special issue • Deadline: January 1st 2019

Journal of Economic Psychology special issue • Deadline: June 1st 2018

Does preregistration make it easier to publish replications? Very common in psych

Useful readings • Nosek & Ebersole 2017 PNAS, ‘The preregistration revolution’ • FAQ about preregistration (e.g., ‘does it stifle creativity?’; ‘what do I do with secondary data?’) • Van ‘t Veer & Giner-Sorolla 2016 JESP, ‘Pre-registration in social psychology—A discussion and suggested template’ • Psychology-centred, very relevant to experimental research • Simonsohn, Simmons, Nelson 2017 ‘How to properly preregister a study’, datacolada.org/64 • Common pitfall of preregistrations: they are too vague. Guide on how to improve them.

Where to preregister/submit • Aspredicted.org • Open Science Framework (osf.io) • Psych & Marketing RR guidelines • JBR special issue (expired)

Further indication an effect may not be real • Effect is too big • Danziger et al 2012 – Israeli judges • Cohen’s d = 1.96 • More like a manipulation check • Something is probably wrong • Glöckner 2016, JDM

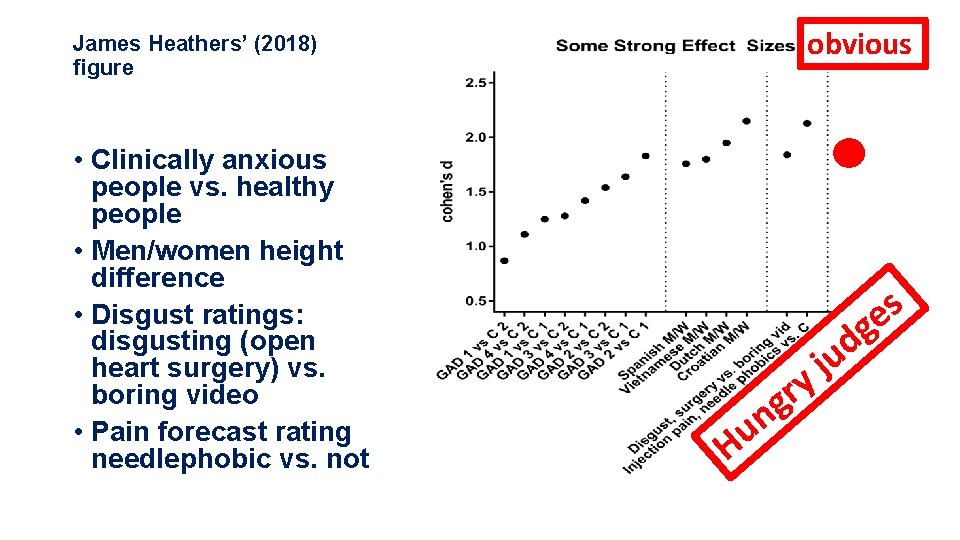

James Heathers’ (2018) figure (labels: “obvious”, “Hungry judges”) • Clinically anxious people vs. healthy people • Men/women height difference • Disgust ratings: disgusting (open heart surgery) vs. boring video • Pain forecast rating: needle-phobic vs. not

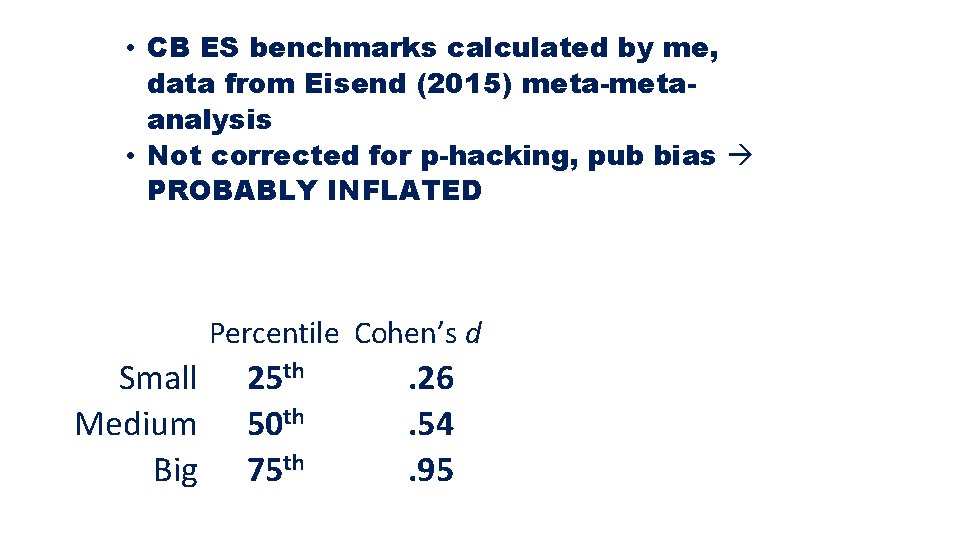

• CB ES benchmarks calculated by me, data from Eisend (2015) meta-meta-analysis • Not corrected for p-hacking, pub bias: PROBABLY INFLATED

| | Small | Medium | Big |
| --- | --- | --- | --- |
| Percentile | 25th | 50th | 75th |
| Cohen’s d | .26 | .54 | .95 |
| Total N (2 BS cond, 80% power, alpha = .05, two-tailed) | 468 | 110 | 38 |
| Total N (2 BS cond, 80% power, alpha = .05, two-tailed, 33% correction for pub bias) | 972 | 232 | 84 |
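As a rough cross-check of the uncorrected Total N figures, the sketch below uses a normal approximation with a small-sample correction term rather than the exact noncentral-t calculation that tools like G*Power perform. The function name is an assumption made for this example, and the bias-corrected row is not reproduced because the exact correction applied there is not stated.

```python
from math import ceil
from statistics import NormalDist

def total_n_two_groups(d, power=0.80, alpha=0.05):
    """Approximate total N for a two-tailed, two-group between-subjects test.

    Normal approximation plus the z_alpha**2 / 4 small-sample correction;
    an approximation, not an exact power analysis.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    n_per_group = 2 * (z_alpha + z_beta) ** 2 / d ** 2 + z_alpha ** 2 / 4
    return 2 * ceil(n_per_group)

# Benchmarks from the slide: 25th, 50th, and 75th percentile effect sizes
for label, d in [("small", 0.26), ("medium", 0.54), ("big", 0.95)]:
    print(f"{label}: d = {d}, total N = {total_n_two_groups(d)}")
```

Under these assumptions the approximation lands on the same totals as the uncorrected row (468, 110, 38). It also makes the cost of a shrunken effect easy to see: halving d roughly quadruples the required N.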

More X-rays – is this even a paper? • Statcheck, SPRITE, GRIM, and GRIMMER

Statcheck • Nuijten et al 2016, 2018 • “…we found a significant effect, t(132) = 1.82, p < .05 (two-tailed)” • Is this possible? • NO! The p-value here is p = .071 • Checks the correspondence between test statistics and p-values • Nuijten et al 2016: 1/8 papers in psych (1985-2013) contain at least one gross inconsistency • Magically clustered around p = .05 • There’s an app for that! • And an R package • Statcheck.io
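The check on the quoted result can be reproduced by hand. The sketch below is not the statcheck package: it recomputes the two-tailed p-value for a t statistic by numerically integrating the Student t density with the standard library, and the function names are assumptions for this example.

```python
import math

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_const = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                 - 0.5 * math.log(df * math.pi))
    return math.exp(log_const - (df + 1) / 2 * math.log1p(x * x / df))

def two_tailed_p(t, df, steps=2000):
    """Two-tailed p-value via Simpson's rule on [0, |t|] (steps must be even)."""
    t = abs(t)
    if t == 0:
        return 1.0
    h = t / steps
    total = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    central_mass = total * h / 3  # P(0 < T < |t|)
    return 1 - 2 * central_mass

def claim_is_consistent(t, df, claimed_below=0.05):
    """True if the recomputed p-value really is below the reported bound."""
    return two_tailed_p(t, df) < claimed_below

p = two_tailed_p(1.82, 132)
print(f"recomputed p = {p:.3f}, consistent with 'p < .05': "
      f"{claim_is_consistent(1.82, 132)}")
```

For t(132) = 1.82 the recomputed value is about .07, so the reported “p < .05” does not match its own test statistic.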

Statcheck.io

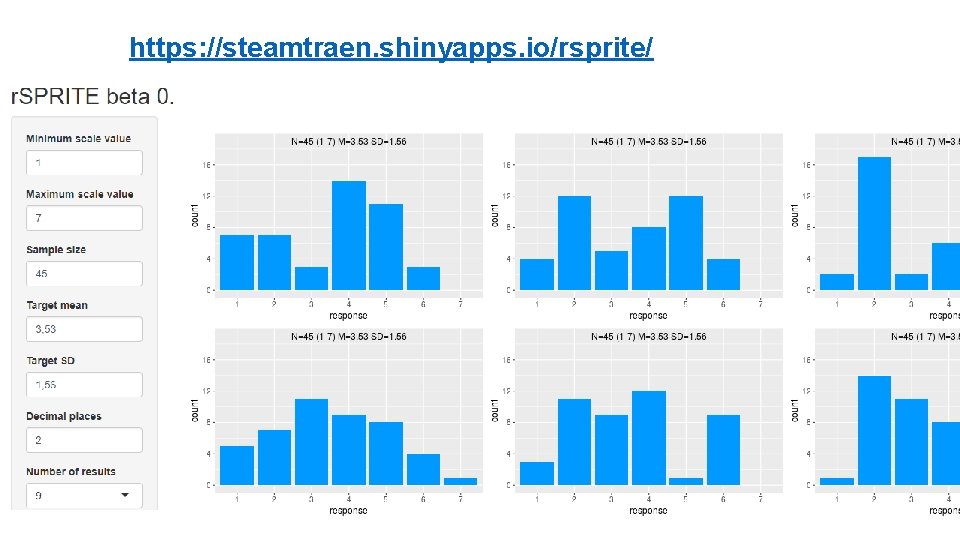

SPRITE • Heathers et al 2017 • Sample Parameter Reconstruction via Iterative TEchniques • Given an M, an SD, and an N, there is only a finite number of distributions that correspond to it • Are they plausible? • Or is there a kid who ate 500 g of carrots? • There is an app for that! • https://steamtraen.shinyapps.io/rsprite/
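The “finite number of distributions” point can be shown with a toy version. The sketch below is not the SPRITE algorithm (which searches iteratively and scales to realistic N); it simply enumerates every possible integer sample for a very small N and keeps those whose mean and SD round to the reported values. The function name, the example values, and the 1-7 scale are assumptions for illustration.

```python
from itertools import combinations_with_replacement
from statistics import mean, stdev

def matching_samples(m, sd, n, low, high, decimals=2):
    """All integer samples of size n in [low, high] matching a reported M and SD.

    Brute force: only feasible for small n and narrow scales.
    A sample matches if its mean and sample SD are within rounding
    distance of the reported values.
    """
    tol = 0.5 * 10 ** -decimals + 1e-9
    hits = []
    for sample in combinations_with_replacement(range(low, high + 1), n):
        if abs(mean(sample) - m) <= tol and abs(stdev(sample) - sd) <= tol:
            hits.append(sample)
    return hits

# Hypothetical report: N = 5, M = 3.00, SD = 1.58 on a 1-7 scale
for sample in matching_samples(3.00, 1.58, n=5, low=1, high=7):
    print(sample)

# An SD that no sample on this scale can produce returns an empty list
print(matching_samples(3.00, 5.00, n=5, low=1, high=7))
```

The useful step is reading the reconstructed samples: if every sample that fits the summary statistics contains an absurd value, the reported numbers deserve a closer look.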

https://steamtraen.shinyapps.io/rsprite/

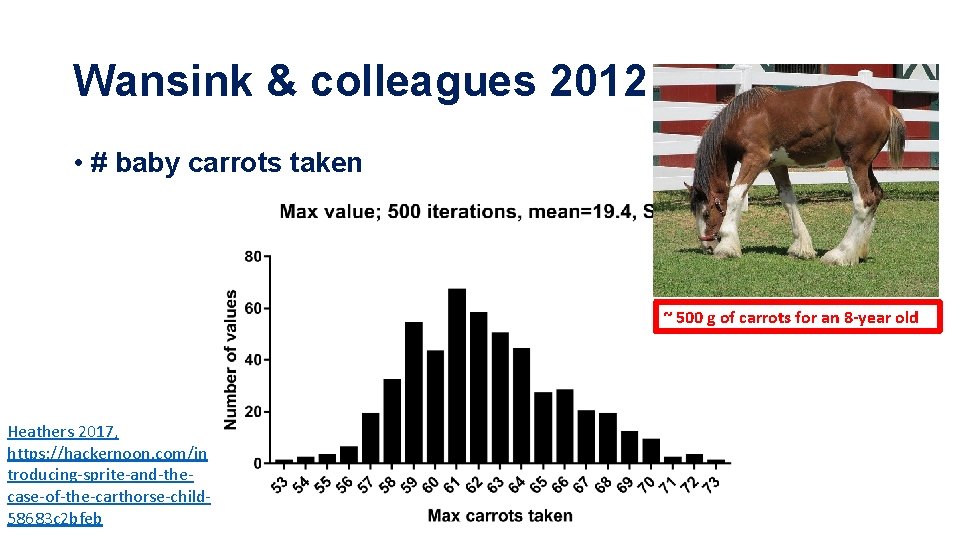

Wansink & colleagues 2012 • # baby carrots taken ~ 500 g of carrots for an 8-year-old • Heathers 2017, https://hackernoon.com/introducing-sprite-and-the-case-of-the-carthorse-child-58683c2bfeb

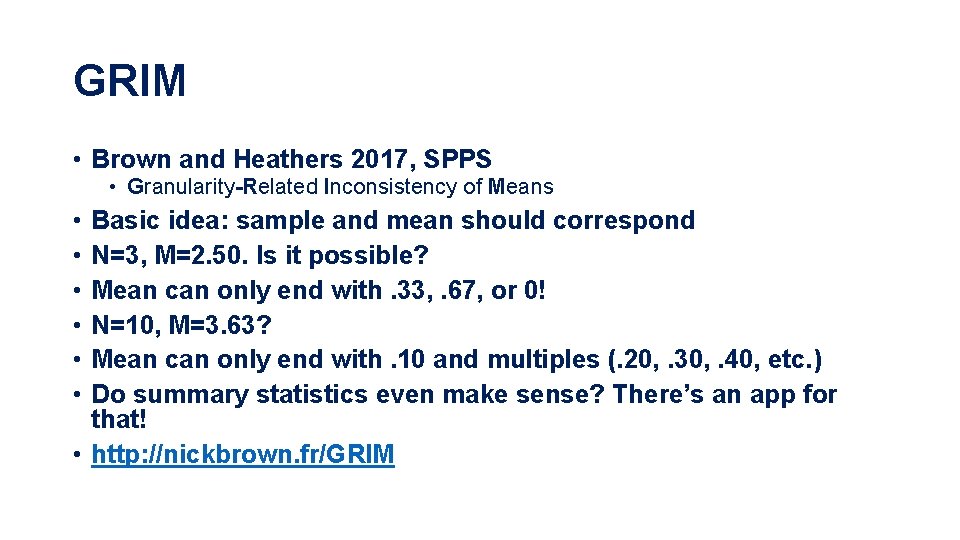

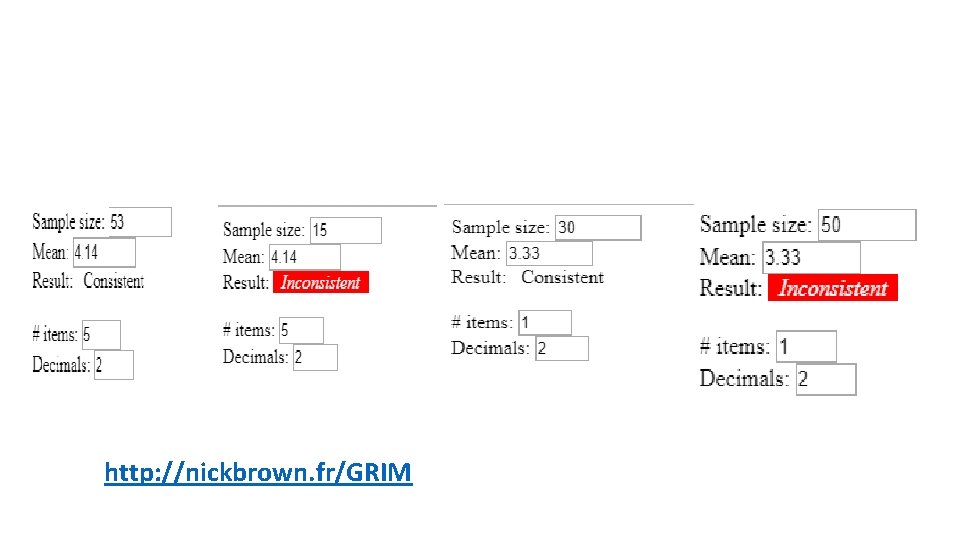

GRIM • Brown and Heathers 2017, SPPS • Granularity-Related Inconsistency of Means • Basic idea: sample size and mean should correspond • N = 3, M = 2.50. Is it possible? • Mean can only end with .33, .67, or .00! • N = 10, M = 3.63? • Mean can only end with .10 and multiples (.20, .30, .40, etc.) • Do summary statistics even make sense? • There’s an app for that! • http://nickbrown.fr/GRIM
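The basic idea fits in a few lines. This is a minimal sketch, assuming integer-valued responses on a single item; it is not the code behind the app, and the function name is made up for this example. The mean is passed as a string so that trailing zeros (2.50 vs. 2.5) tell the function how many decimals were reported.

```python
def grim_consistent(reported_mean: str, n: int) -> bool:
    """True if some integer sum divided by n rounds to the reported mean."""
    decimals = len(reported_mean.split(".")[1]) if "." in reported_mean else 0
    target = float(reported_mean)
    # Accept anything within half a unit of the last reported decimal,
    # so either rounding convention for ties is tolerated.
    half_unit = 0.5 * 10 ** -decimals + 1e-9
    nearest_sum = round(target * n)
    candidates = (nearest_sum - 1, nearest_sum, nearest_sum + 1)
    return any(abs(total / n - target) <= half_unit for total in candidates)

# The two examples from the slide, plus two means that are possible
for mean_str, n in [("2.50", 3), ("3.63", 10), ("2.33", 3), ("3.60", 10)]:
    verdict = "possible" if grim_consistent(mean_str, n) else "IMPOSSIBLE"
    print(f"N = {n}, M = {mean_str}: {verdict}")
```

The test loses its bite as N grows: with two reported decimals and N above 100, almost any mean is attainable.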

http://nickbrown.fr/GRIM

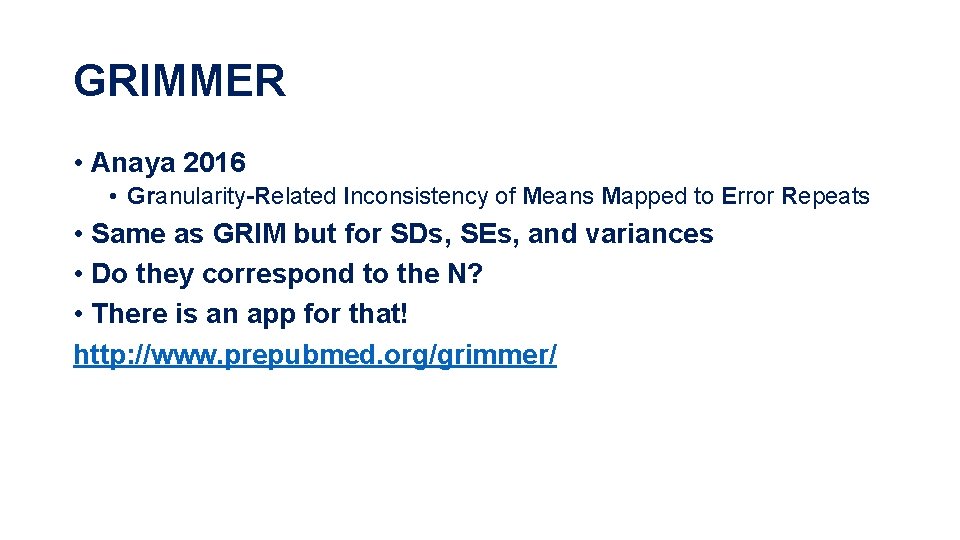

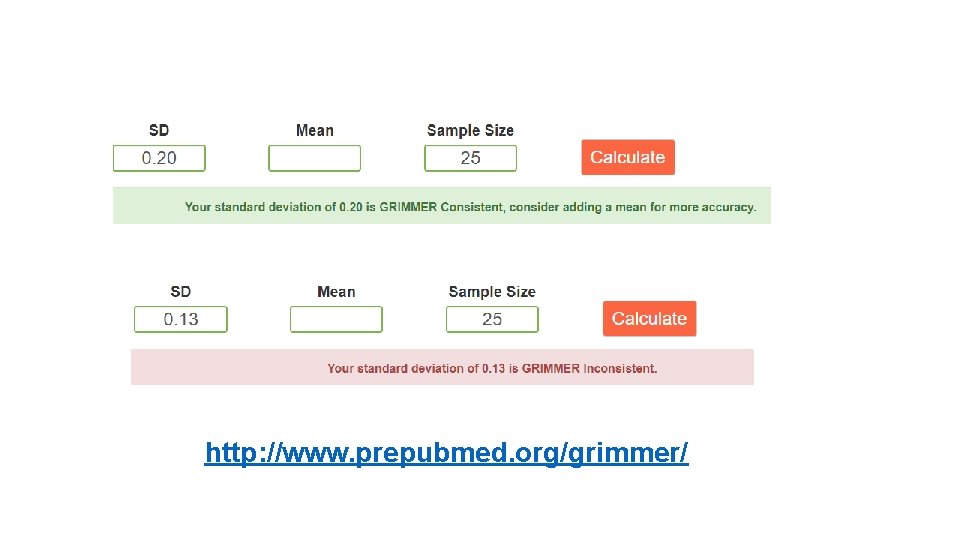

GRIMMER • Anaya 2016 • Granularity-Related Inconsistency of Means Mapped to Error Repeats • Same as GRIM but for SDs, SEs, and variances • Do they correspond to the N? • There is an app for that! • http://www.prepubmed.org/grimmer/

http://www.prepubmed.org/grimmer/

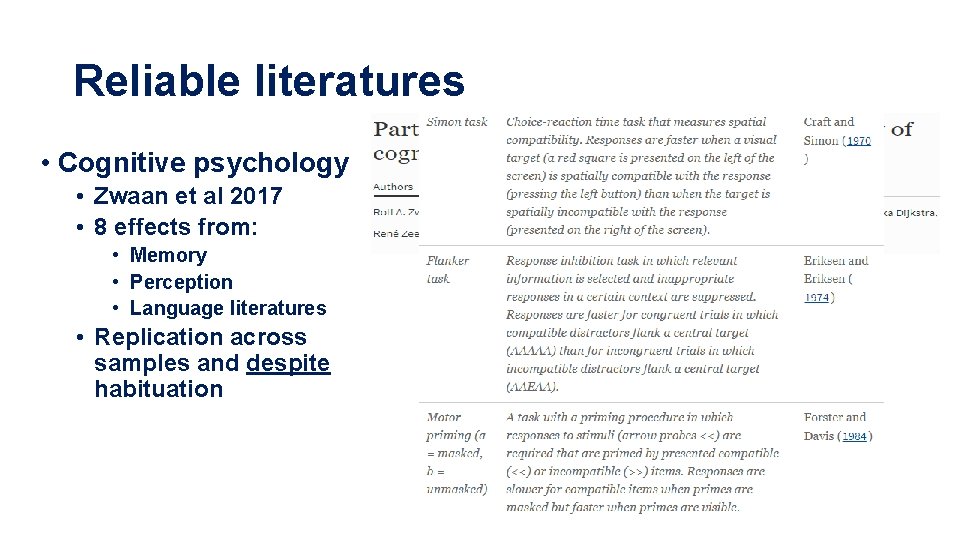

Reliable literatures • Cognitive psychology • Zwaan et al 2017 • 8 effects from: • Memory • Perception • Language literatures • Replication across samples and despite habituation

Reliable literatures • Judgment and decision-making • Gilad Feldman HKU: 70-80% replication success • His website: mgto.org • Omission bias • Outcome bias • Anchoring • Preference for indirect harm • Effort heuristic • ALL REPLICATE

UNRELIABLE LITERATURES THAT YOU SHOULD NOT ATTEMPT TO EXTEND • Social/behavioral priming (cognitive priming is OK) • E.g., money priming (Vohs et al 2006) • Failed replications • Rohrer et al 2013 JEP:G • Caruso 2016 PS (one of the main MP authors!) • Meta-analysis shows massive publication bias/p-hacking • E.g., elderly priming (Bargh 2001) • Failed replications (Pashler 2008) • Professor priming (Dijksterhuis & van Knippenberg 1998) does not replicate (O’Donnell et al 2018)

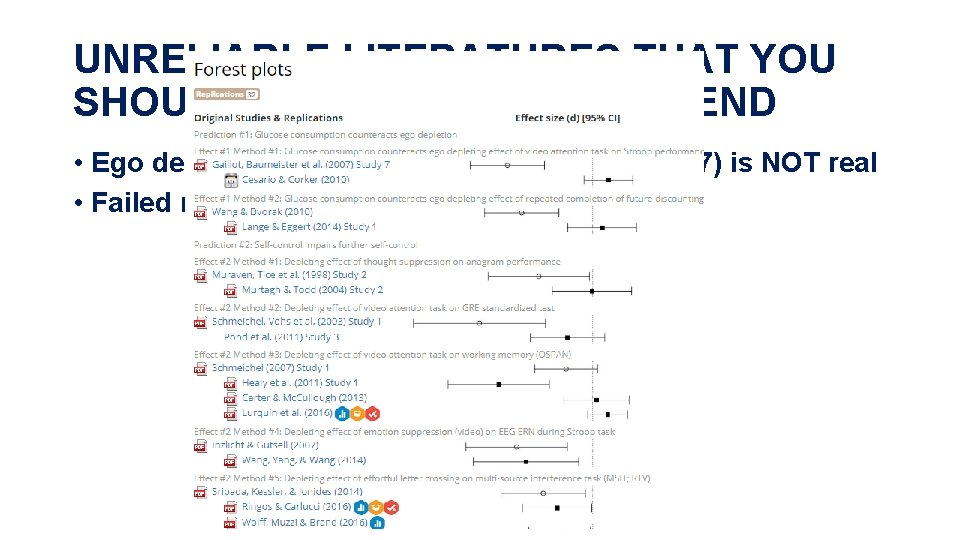

UNRELIABLE LITERATURES THAT YOU SHOULD NOT ATTEMPT TO EXTEND • Ego depletion (e.g., Baumeister and Vohs 2007) is NOT real • Failed replications list

UNRELIABLE LITERATURES THAT YOU SHOULD NOT ATTEMPT TO EXTEND • Stereotype threat (Aronson & Steele 1995) is NOT real • Flore et al (2019) failed replication • N>2000, NL, pre-registered • Flore & Wicherts (2015) meta-analysis shows massive evidence of pub bias

UNRELIABLE LITERATURES THAT YOU SHOULD NOT ATTEMPT TO EXTEND • THIS LIST IS NOT EXHAUSTIVE • What about fluency, emotions, heart vs. reason, evolutionary psychology? • MARKETING?

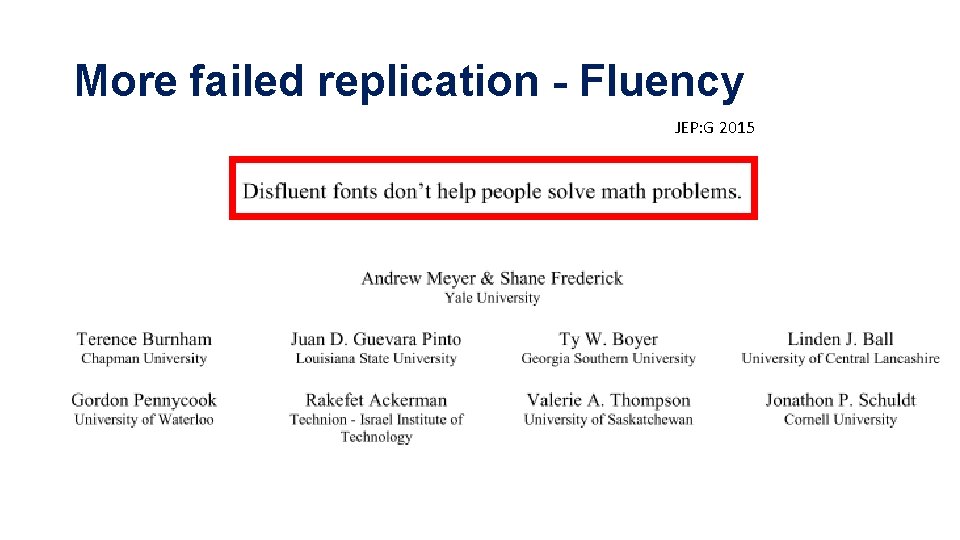

More failed replication – Fluency • JEP:G 2015

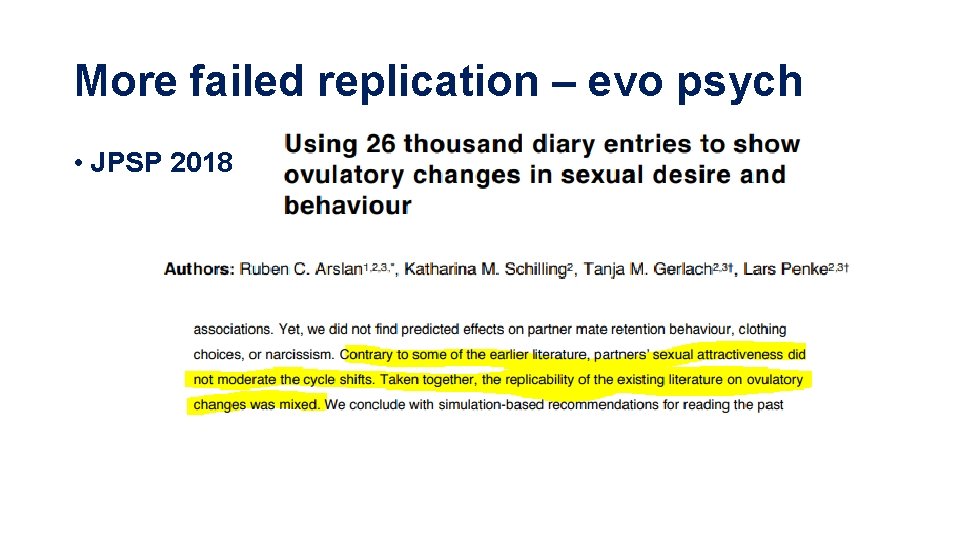

More failed replication – evo psych • JPSP 2018

Final recommendations • Learn more statistics • Lakens’ FREE course on Coursera • https://www.coursera.org/learn/statistical-inferences • Read papers VERY critically • VERY CRITICALLY I SAID! • Look at p-values, interactions, and mediations critically • Expect the worst • Run bigger samples • DO NOT fight the power

Download presentation at osf.io/nbcpj/ • Thanks! • Ignazio.ziano@Grenoble-em.com • @ignaziano

• Five studies completed • Two successful • One unsuccessful • Two uninterpretable (? )

Caveat for power analysis • Even when it replicates, ES is likely to be smaller! • E.g. ML2 (Klein et al. 2018) • Collect more subjects than you’d need from a power analysis • And definitely more than you think! • Or power for 95% rather than 80%

Power analysis • Is there an effect size? • Effect size extraction • Web-based tools • http://www.psychometrica.de/effect_size.html • http://psych.purdue.edu/~gfrancis/EquivalentStatistics/ • Lakens (2013) spreadsheet • https://www.frontiersin.org/articles/10.3389/fpsyg.2013.00863/full • R package poweranalysis (not pwr) • Easy tricks to extract ESs from test stats

G*Power or R (pwr package – not poweranalysis) • Some stuff in G*Power is weird but OK • A priori analysis • Decide N based on the ES you think you’ll have • Suggestion: pick a higher N than you think you need • Sensitivity analysis • I have a sample this big: how small an ES can I reliably detect?

Exercise with G*Power • A priori analysis for two dependent means, dz = .45, power = .80, alpha = .01 • Sensitivity analysis for two independent means, N = 160, power = .95, alpha = .05
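For a sanity check on whatever G*Power returns, both exercises can be approximated with the normal distribution. The sketch below assumes two-tailed tests and uses textbook normal-approximation formulas, so its answers may differ slightly from G*Power’s exact noncentral-t results; the function names are made up for this example.

```python
from math import ceil, sqrt
from statistics import NormalDist

def z(prob):
    """Standard normal quantile."""
    return NormalDist().inv_cdf(prob)

def n_pairs_dependent_means(dz, power, alpha):
    """A priori: approximate number of pairs for a two-tailed paired test."""
    z_alpha, z_beta = z(1 - alpha / 2), z(power)
    # Normal approximation with the z_alpha**2 / 2 small-sample correction
    return ceil((z_alpha + z_beta) ** 2 / dz ** 2 + z_alpha ** 2 / 2)

def min_detectable_d(total_n, power, alpha):
    """Sensitivity: approximate smallest d detectable with two equal groups."""
    n_per_group = total_n / 2
    return (z(1 - alpha / 2) + z(power)) * sqrt(2 / n_per_group)

pairs = n_pairs_dependent_means(dz=0.45, power=0.80, alpha=0.01)
d_min = min_detectable_d(total_n=160, power=0.95, alpha=0.05)
print(f"a priori: about {pairs} pairs")
print(f"sensitivity: d of about {d_min:.2f}")
```

If G*Power gives a number far from these, the most likely culprit is a mismatched setting (one-tailed vs. two-tailed, or the wrong test family) rather than the approximation.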

What to do with stuff that does not replicate? • Pre-register a replication attempt • To make it clear you are not after anyone and did everything by the book • Perfect for Masters Theses (I’m doing it in Grenoble) • Send it to journals that publish replications • Many in Psych - also As • JESP, SPPS, C&E • Some in Mkt/Mgmt lists – no As • JBR had a special issue signalling interest • Journal of Business Ethics • Psych & Marketing publishes / invites them • Journal of Economic Psych too

Examples of replication papers published in Psych • Kutscher & Feldman 2018 C&E

• Crawford et al 2018 SPPS

Thanks!
