Identifying Rare Class in Absence of True Labels

Identifying Rare Class in Absence of True Labels: Application to Monitoring Forest Fires from Satellite Data
Vipin Kumar, University of Minnesota
kumar@cs.umn.edu, www.cs.umn.edu/~kumar
ACM SIGKDD Workshop on Outlier Definition, Detection and Description (August 10, 2015)
Work supported by NASA and NSF Expeditions in Computing project on Understanding Climate Change using Data-driven Approaches

Global Mapping of Forest Fires
Mapping fires is important for:
• Climate change studies, e.g., linking the impact of a changing climate on the frequency of fires
• Carbon cycle studies, e.g., quantifying how much CO2 is emitted by fires (critical for UN-REDD)
• Land cover management, e.g., identifying active deforestation fronts

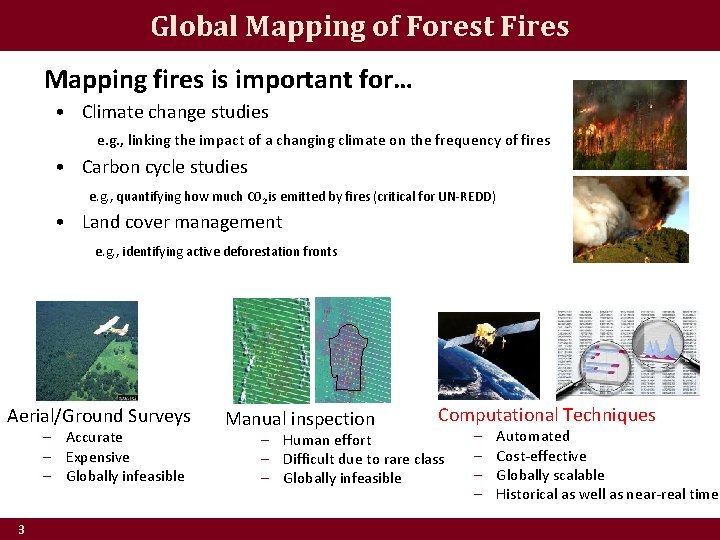

Global Mapping of Forest Fires
Approaches to mapping fires:
• Aerial/ground surveys
– Accurate
– Expensive
– Globally infeasible
• Manual inspection
– Human effort
– Difficult due to rare class
– Globally infeasible
• Computational techniques
– Automated
– Cost-effective
– Globally scalable
– Historical as well as near-real time

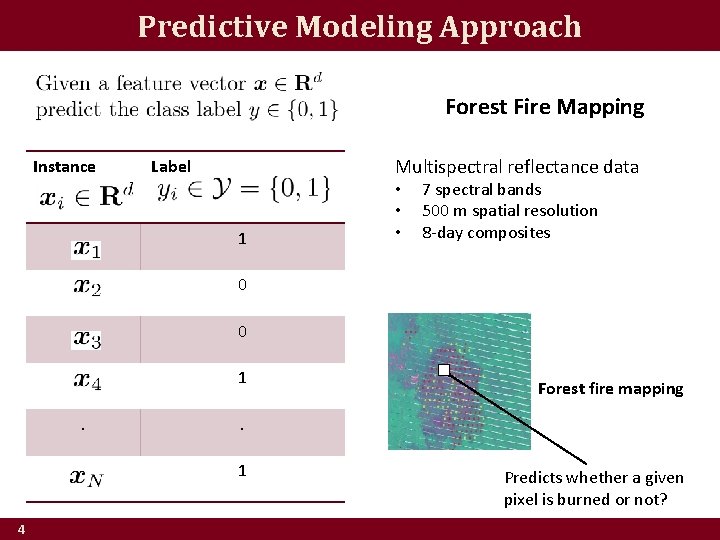

Predictive Modeling Approach
Forest fire mapping: predict whether a given pixel is burned or not.
Instance: multispectral reflectance data
• 7 spectral bands
• 500 m spatial resolution
• 8-day composites
Label: one binary value per instance (e.g., 1, 0, 0, 1, …, 1)

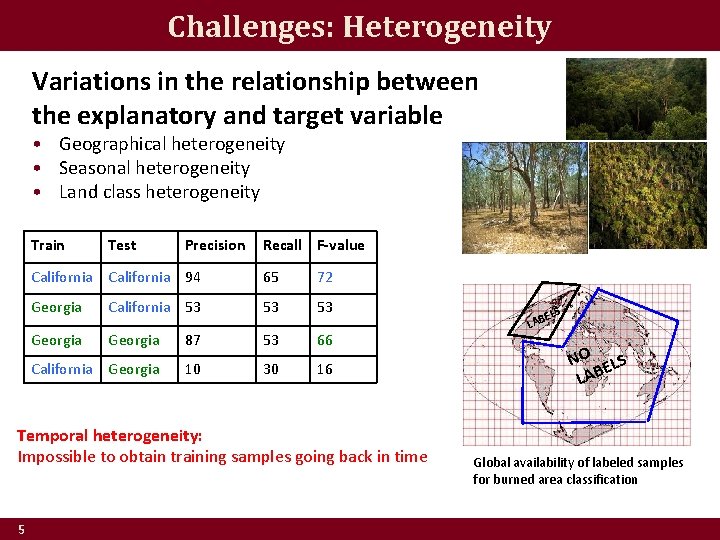

Challenges: Heterogeneity
Variations in the relationship between the explanatory and target variable:
• Geographical heterogeneity
• Seasonal heterogeneity
• Land class heterogeneity

| Train | Test | Precision | Recall | F-value |
|---|---|---|---|---|
| California | California | 94 | 65 | 72 |
| Georgia | California | 53 | 53 | 53 |
| Georgia | Georgia | 87 | 53 | 66 |
| California | Georgia | 10 | 30 | 16 |

Temporal heterogeneity: impossible to obtain training samples going back in time.
Map: global availability of labeled samples for burned area classification (legend: Labels, No labels).

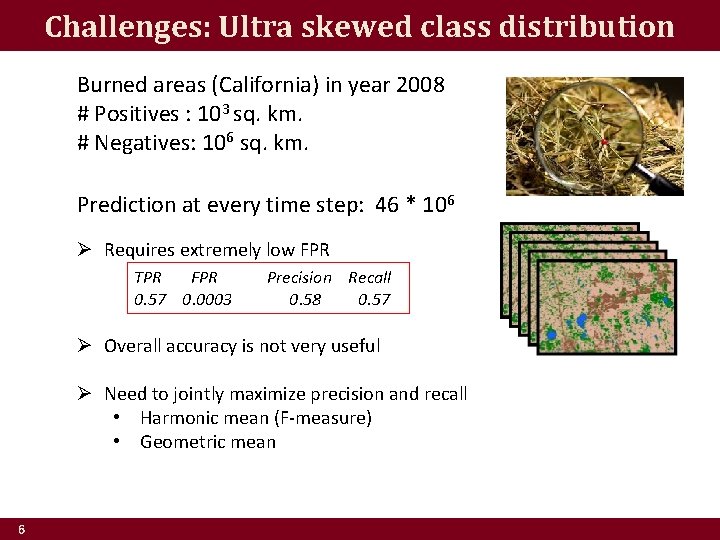

Challenges: Ultra-skewed class distribution
Burned areas (California) in year 2008:
• # Positives: 10^3 sq. km.
• # Negatives: 10^6 sq. km.
• Prediction at every time step: 46 × 10^6
• Requires extremely low FPR
Example operating point: TPR = 0.57, FPR = 0.0003; Precision = 0.58, Recall = 0.57
• Overall accuracy is not very useful
• Need to jointly maximize precision and recall
– Harmonic mean (F-measure)
– Geometric mean
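The effect of skew on precision can be checked with a few lines of arithmetic. The sketch below is illustrative only: it plugs in the order-of-magnitude class sizes from the slide (10^3 positives, 10^6 negatives), so its output only roughly tracks the reported precision of 0.58. The function names are hypothetical.

```python
def precision_from_rates(tpr, fpr, n_pos, n_neg):
    """Precision implied by a TPR/FPR pair at given class sizes."""
    tp = tpr * n_pos
    fp = fpr * n_neg
    return tp / (tp + fp)


def accuracy(tpr, fpr, n_pos, n_neg):
    """Overall accuracy implied by a TPR/FPR pair at given class sizes."""
    return (tpr * n_pos + (1.0 - fpr) * n_neg) / (n_pos + n_neg)


def f_measure(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2.0 * precision * recall / (precision + recall)


def geometric_mean(precision, recall):
    """Geometric mean of precision and recall."""
    return (precision * recall) ** 0.5


# Assumed round numbers taken from the slide's orders of magnitude.
n_pos, n_neg = 1e3, 1e6
tpr = 0.57

# Predicting "unburned" everywhere already scores above 99.9% accuracy.
print("all-negative accuracy:", accuracy(0.0, 0.0, n_pos, n_neg))

# Precision collapses unless the FPR is tiny.
for fpr in (0.01, 0.001, 0.0003):
    p = precision_from_rates(tpr, fpr, n_pos, n_neg)
    print(fpr, round(p, 3), round(f_measure(p, tpr), 3), round(geometric_mean(p, tpr), 3))
```

With these assumed counts, a 1% FPR leaves precision near 0.05 even though accuracy stays above 98%, which is why accuracy is a poor objective here.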

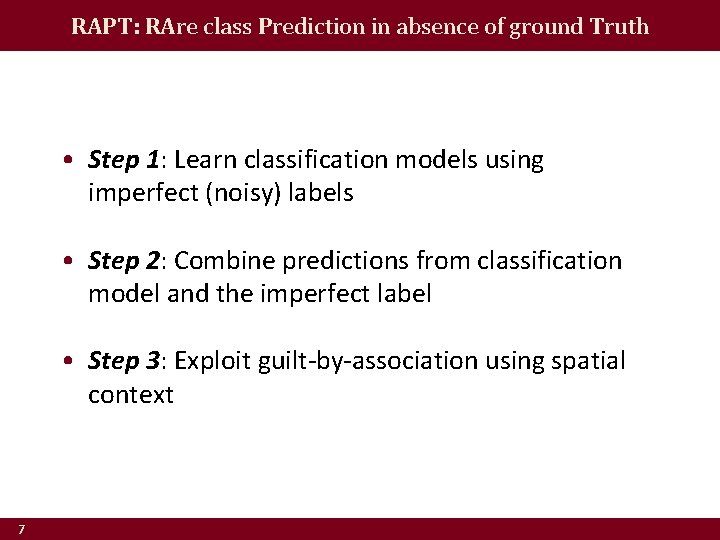

RAPT: RAre class Prediction in absence of ground Truth
• Step 1: Learn classification models using imperfect (noisy) labels
• Step 2: Combine predictions from the classification model and the imperfect label
• Step 3: Exploit guilt-by-association using spatial context

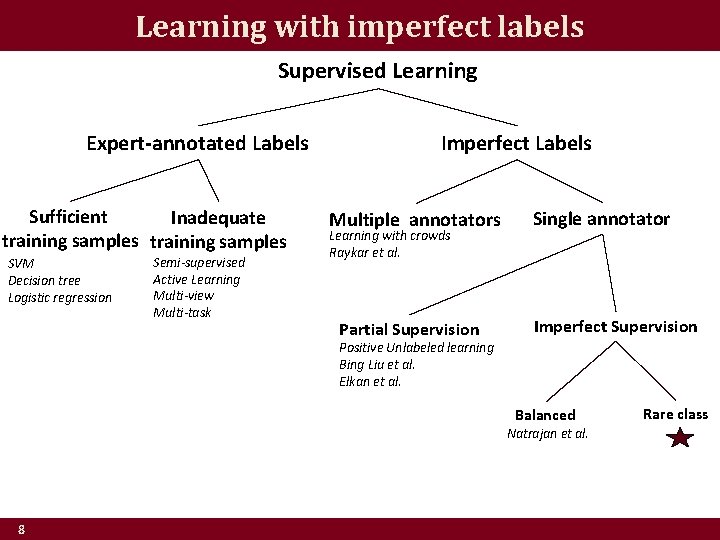

Learning with imperfect labels
Supervised learning
• Expert-annotated labels
– Sufficient training samples: SVM, decision tree, logistic regression
– Inadequate training samples: semi-supervised, active learning, multi-view, multi-task
• Imperfect labels
– Multiple annotators: learning with crowds (Raykar et al.)
– Single annotator, partial supervision: positive-unlabeled learning (Bing Liu et al., Elkan et al.)
– Single annotator, imperfect supervision: balanced (Natarajan et al.), rare class

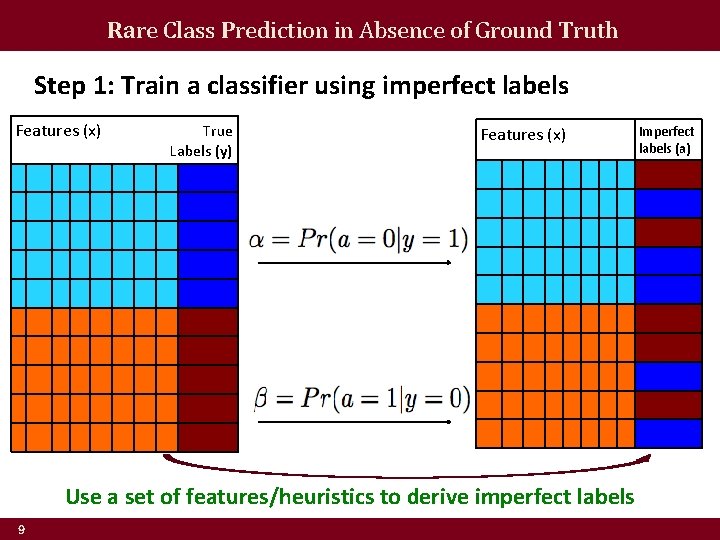

Rare Class Prediction in Absence of Ground Truth
Step 1: Train a classifier using imperfect labels
• Instead of features (x) with true labels (y), training uses features (x) with imperfect labels (a)
• Use a set of features/heuristics to derive the imperfect labels

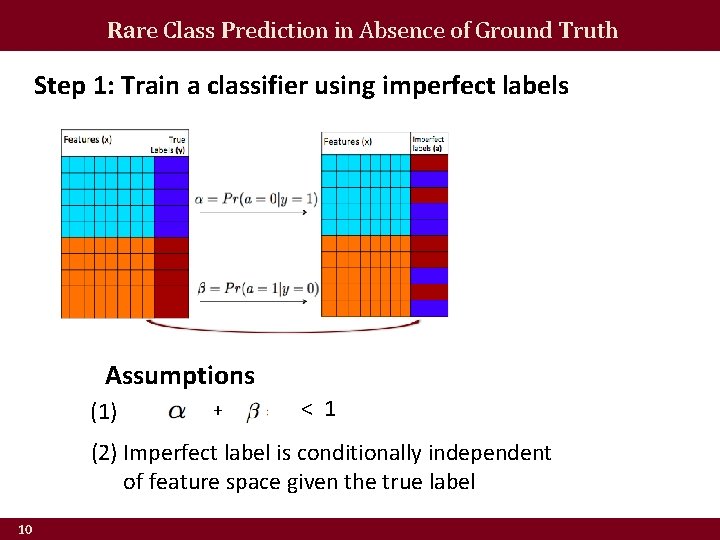

Rare Class Prediction in Absence of Ground Truth
Step 1: Train a classifier using imperfect labels
Assumptions:
(1) … + … < 1
(2) The imperfect label is conditionally independent of the feature space given the true label

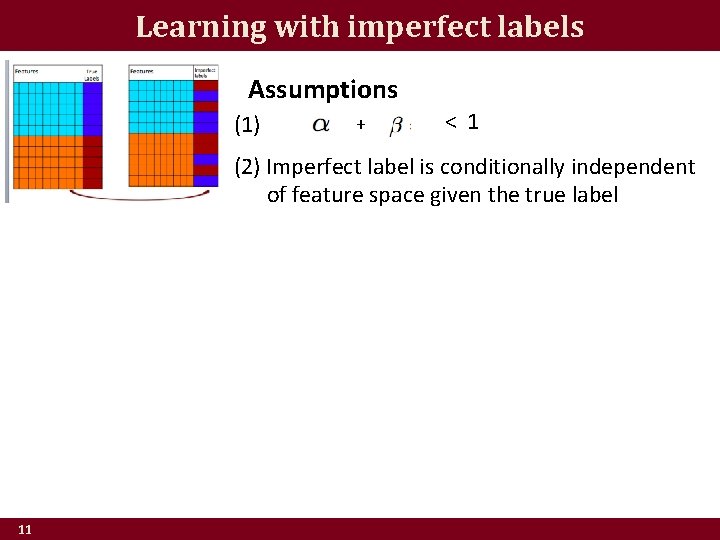

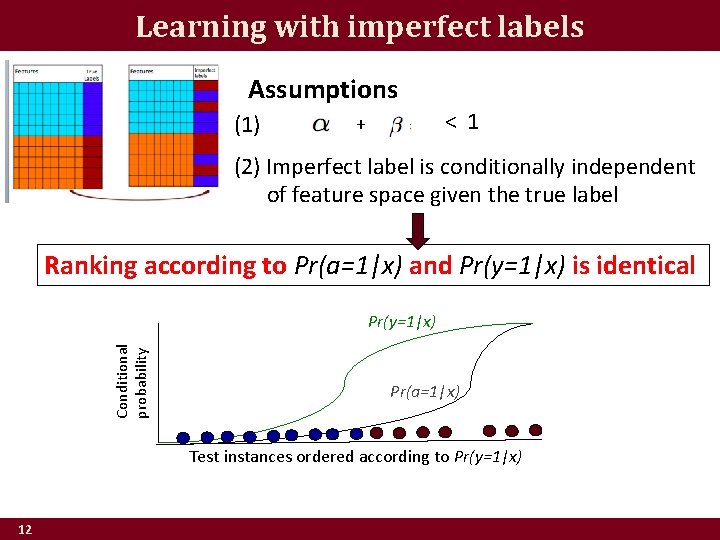

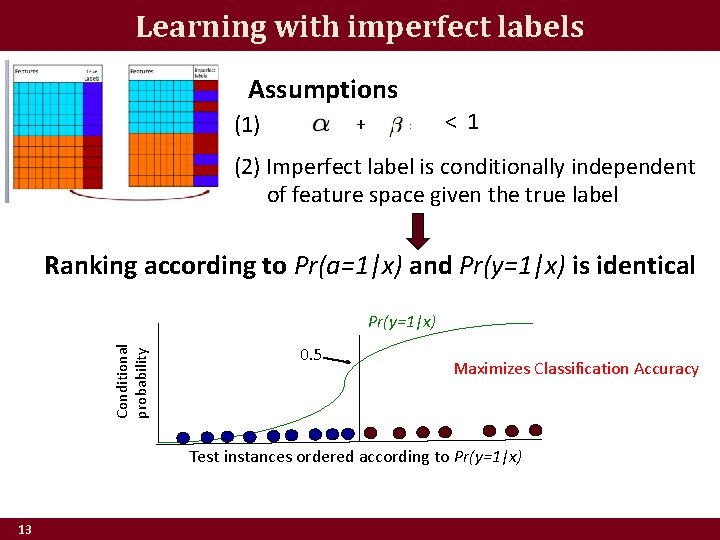

Learning with imperfect labels
Under assumptions (1) and (2), ranking according to Pr(a=1|x) and Pr(y=1|x) is identical.
Plot: conditional probabilities Pr(y=1|x) and Pr(a=1|x), with test instances ordered according to Pr(y=1|x).
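The ranking claim follows from a short calculation. Under conditional independence, Pr(a=1|x) = p1 · Pr(y=1|x) + p0 · (1 − Pr(y=1|x)), where p1 = Pr(a=1|y=1) and p0 = Pr(a=1|y=0). That is an increasing affine function of Pr(y=1|x) whenever p1 > p0. The names p1 and p0 and all numbers below are assumptions made for illustration. Reading assumption (1) as the usual noisy-label condition Pr(a=0|y=1) + Pr(a=1|y=0) < 1, which is equivalent to p1 > p0, is also an assumption, since the symbols are missing from this transcript.

```python
def noisy_posterior(p_y, p1, p0):
    """Pr(a=1|x) implied by Pr(y=1|x) when a is independent of x given y.

    p1 = Pr(a=1|y=1), p0 = Pr(a=1|y=0) (hypothetical names).
    """
    return p1 * p_y + p0 * (1.0 - p_y)


def ranking(scores):
    """Indices of the instances sorted by decreasing score."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


# Made-up true posteriors for five test instances.
p_y = [0.02, 0.90, 0.35, 0.60, 0.10]
p1, p0 = 0.70, 0.05  # assumed label-quality parameters with p1 > p0

p_a = [noisy_posterior(p, p1, p0) for p in p_y]
same_order = ranking(p_y) == ranking(p_a)
print("identical ranking:", same_order)

# A threshold of 0.5 on Pr(y=1|x) corresponds to a different value on Pr(a=1|x).
print("equivalent threshold on Pr(a=1|x):", noisy_posterior(0.5, p1, p0))
```

The last line shows why a fixed 0.5 cut on Pr(a=1|x) is generally not the right operating point: the scores are compressed into the interval [p0, p1], so the equivalent threshold shifts with the label noise.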

Learning with imperfect labels
Thresholding Pr(y=1|x) at 0.5 maximizes classification accuracy.
Plot: conditional probability Pr(y=1|x), with test instances ordered according to Pr(y=1|x).

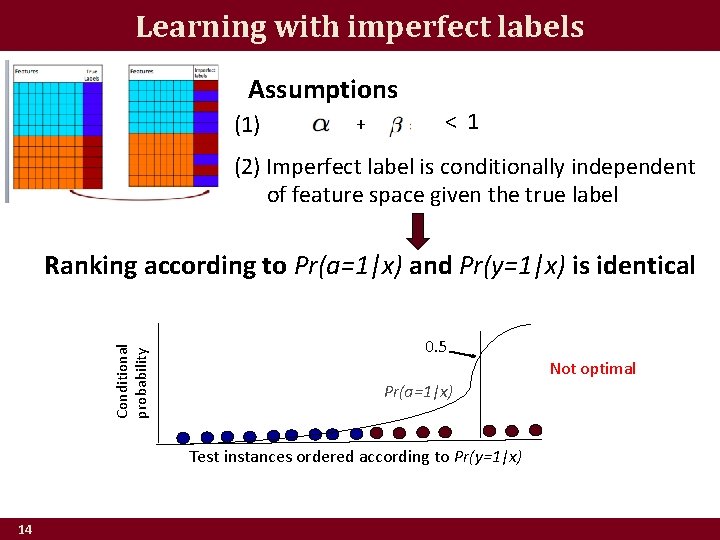

Learning with imperfect labels
Thresholding Pr(a=1|x) at 0.5 is not optimal.
Plot: conditional probability Pr(a=1|x), with test instances ordered according to Pr(y=1|x).

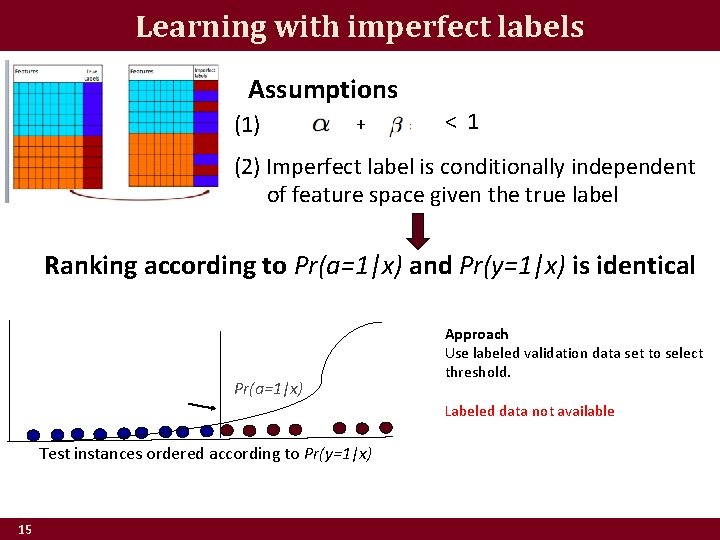

Learning with imperfect labels
Approach: use a labeled validation data set to select the threshold on Pr(a=1|x).
Problem: labeled data is not available.

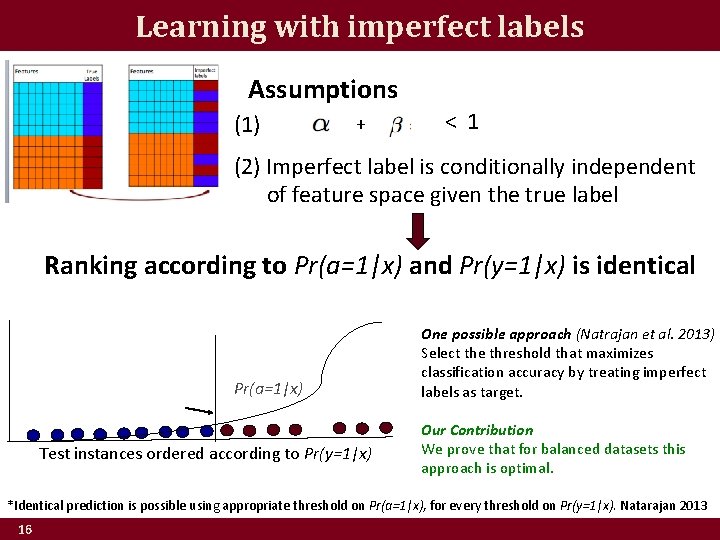

Learning with imperfect labels
One possible approach (Natarajan et al. 2013): select the threshold that maximizes classification accuracy by treating imperfect labels as the target.
Our contribution: we prove that for balanced datasets this approach is optimal.*
* Identical prediction is possible using an appropriate threshold on Pr(a=1|x), for every threshold on Pr(y=1|x).

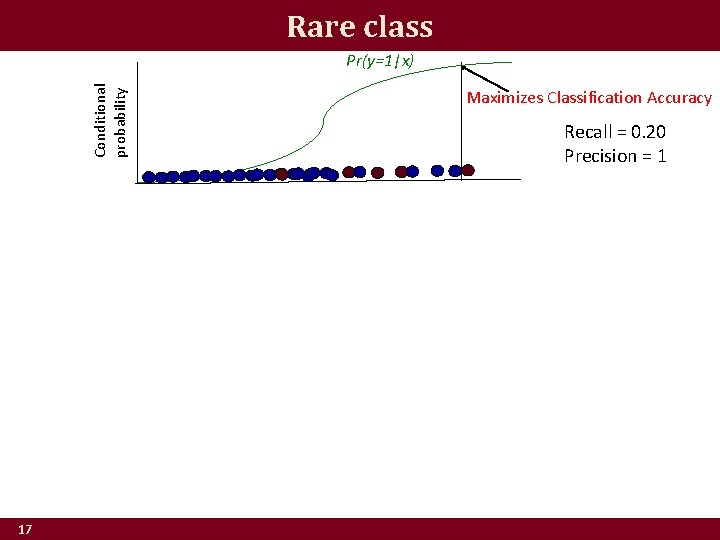

Rare class
Threshold on Pr(y=1|x) that maximizes classification accuracy: Recall = 0.20, Precision = 1

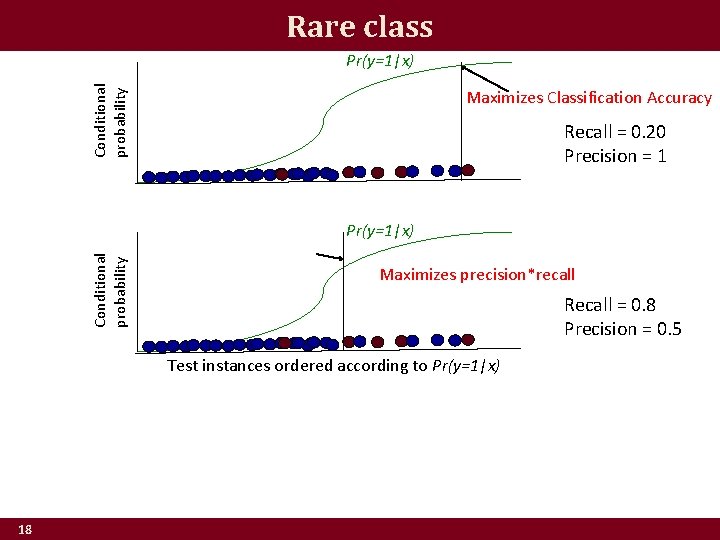

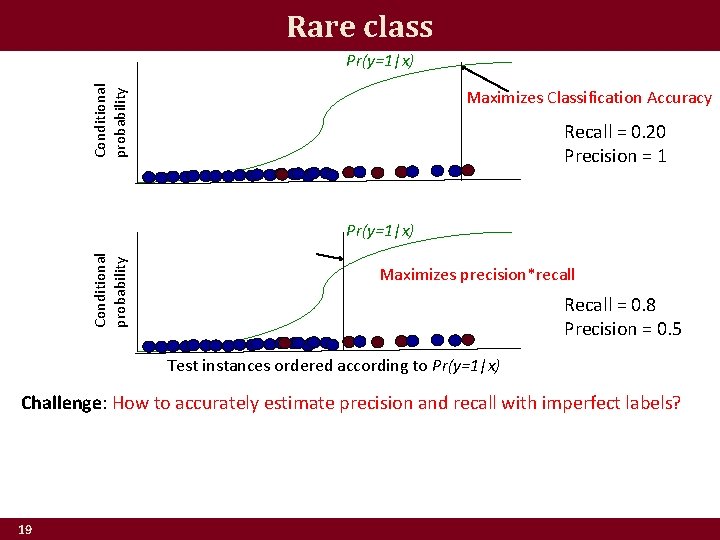

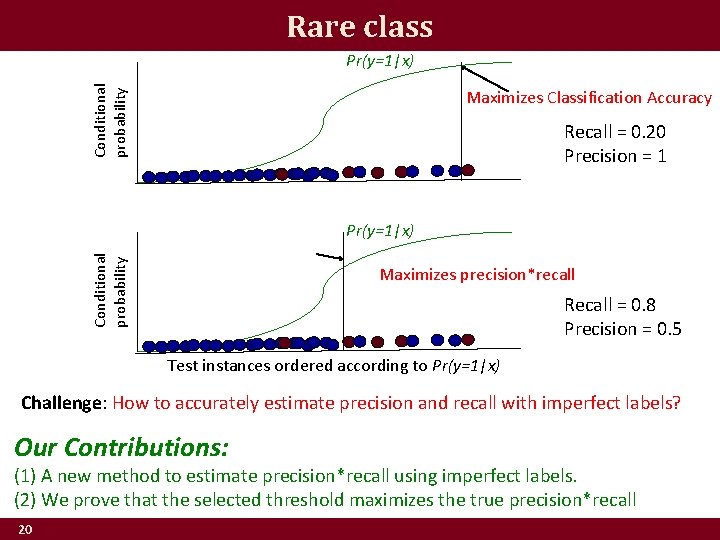

Rare class
• Threshold that maximizes classification accuracy: Recall = 0.20, Precision = 1
• Threshold that maximizes precision*recall: Recall = 0.8, Precision = 0.5
Plots: conditional probability Pr(y=1|x), with test instances ordered according to Pr(y=1|x).

Rare class
Challenge: How to accurately estimate precision and recall with imperfect labels?

Rare class
Our contributions:
(1) A new method to estimate precision*recall using imperfect labels
(2) A proof that the selected threshold maximizes the true precision*recall
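The contrast between the two objectives can be reproduced on a toy ranking. The data below are invented so that the numbers match the slide (Recall = 0.20, Precision = 1 versus Recall = 0.8, Precision = 0.5): 100 instances sorted by decreasing score, with 5 true positives at hand-picked positions. The sketch uses true labels, so it only illustrates why the objectives disagree. It is not the RAPT estimator, which works from imperfect labels.

```python
def sweep(labels_sorted):
    """For every cutoff k (top-k predicted positive), return
    (k, accuracy, precision, recall) against the given true labels."""
    n = len(labels_sorted)
    n_pos = sum(labels_sorted)
    rows = []
    tp = 0
    for k in range(1, n + 1):
        tp += labels_sorted[k - 1]
        fp = k - tp
        tn = (n - n_pos) - fp
        rows.append((k, (tp + tn) / n, tp / k, tp / n_pos))
    return rows


# Toy data: true labels listed in order of decreasing classifier score.
labels_sorted = [0] * 100
for position in (0, 3, 5, 7, 29):  # hand-picked ranks of the 5 positives
    labels_sorted[position] = 1

rows = sweep(labels_sorted)
best_acc = max(rows, key=lambda r: r[1])        # accuracy-maximizing cutoff
best_pr = max(rows, key=lambda r: r[2] * r[3])  # precision*recall-maximizing cutoff

print("max accuracy:         k=%d precision=%.2f recall=%.2f" % (best_acc[0], best_acc[2], best_acc[3]))
print("max precision*recall: k=%d precision=%.2f recall=%.2f" % (best_pr[0], best_pr[2], best_pr[3]))
```

Accuracy rewards the cutoff that admits almost nothing, because each extra false positive costs as much as a recovered positive gains. The precision*recall objective accepts some false positives in exchange for recovering most of the rare class.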

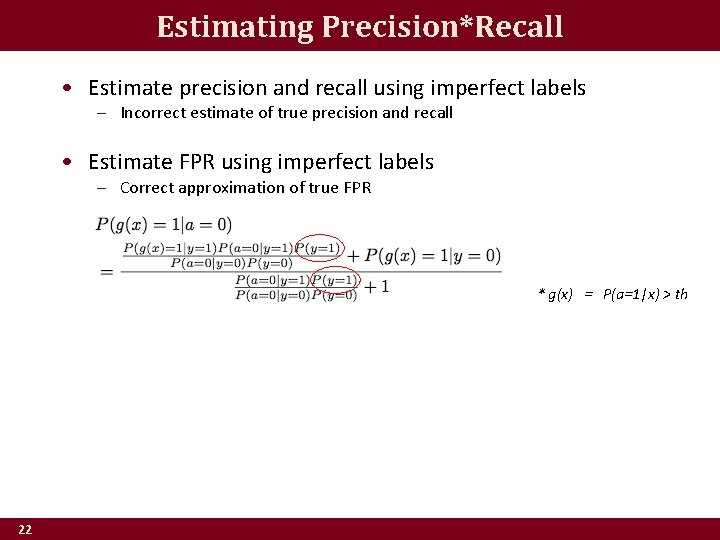

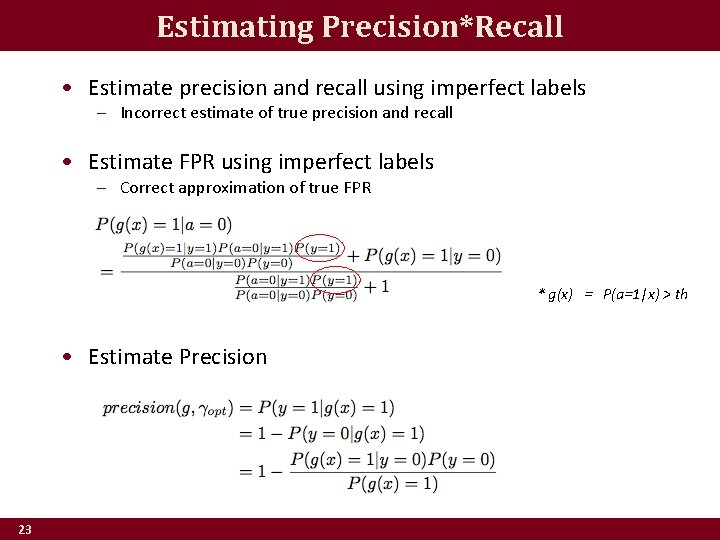

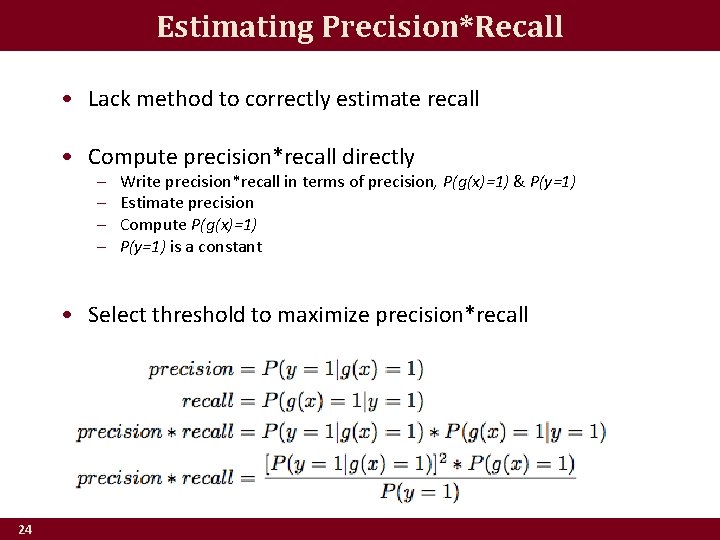

Estimating Precision*Recall
• Estimate precision and recall using imperfect labels
– Incorrect estimate of true precision and recall

Estimating Precision*Recall
• Estimate FPR using imperfect labels
– Correct approximation of true FPR
* g(x) = [P(a=1|x) > th], the prediction obtained by thresholding P(a=1|x) at th

Estimating Precision*Recall
• Estimate precision

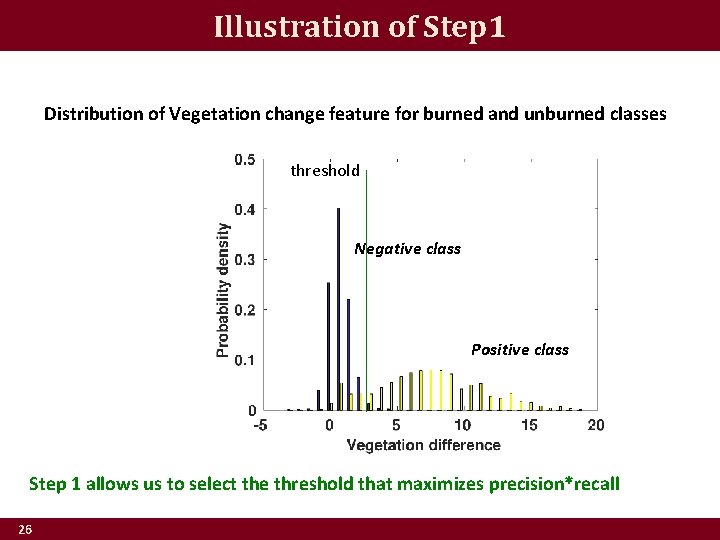

Estimating Precision*Recall
• A method to correctly estimate recall is lacking
• Compute precision*recall directly
– Write precision*recall in terms of precision, P(g(x)=1) and P(y=1)
– Estimate precision
– Compute P(g(x)=1)
– P(y=1) is a constant
• Select the threshold to maximize precision*recall
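One way to read the "compute precision*recall directly" step is through Bayes' rule, which removes recall from the product. The derivation below is a standard identity and is offered as a sketch. The exact expression used on the slide is not in this transcript.

```latex
\begin{align*}
\text{recall}
  &= P(g(x)=1 \mid y=1)
   = \frac{P(y=1 \mid g(x)=1)\,P(g(x)=1)}{P(y=1)}
   = \text{precision}\cdot\frac{P(g(x)=1)}{P(y=1)} \\[4pt]
\text{precision}\cdot\text{recall}
  &= \text{precision}^{2}\cdot\frac{P(g(x)=1)}{P(y=1)}
\end{align*}
```

Because P(y=1) does not depend on the threshold th, the threshold that maximizes precision² · P(g(x)=1) also maximizes precision*recall, and P(g(x)=1) is simply the fraction of instances predicted positive.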

Illustration of Step 1
Plot: distribution of the vegetation change feature for the burned (positive) and unburned (negative) classes.

Illustration of Step 1
Step 1 allows us to select the threshold that maximizes precision*recall.
Plot: distribution of the vegetation change feature for the positive and negative classes, with the selected threshold marked.

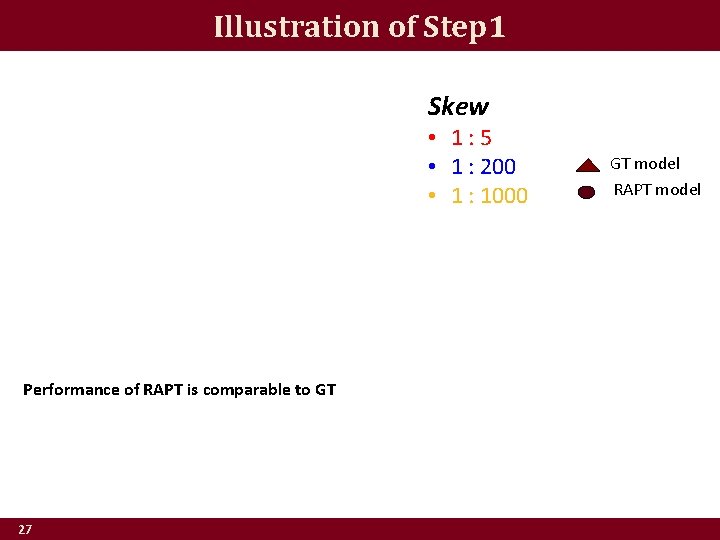

Illustration of Step 1
Skew:
• 1 : 5
• 1 : 200
• 1 : 1000
Performance of RAPT is comparable to GT.
Plot legend: GT model, RAPT model

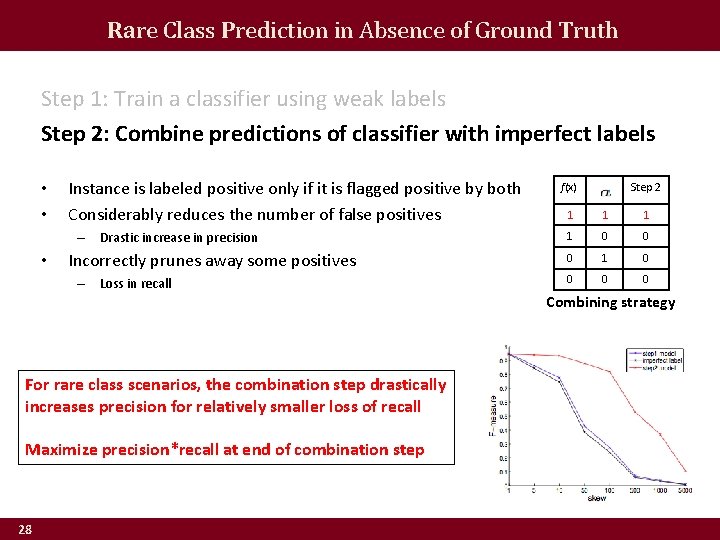

Rare Class Prediction in Absence of Ground Truth
Step 1: Train a classifier using weak labels
Step 2: Combine predictions of the classifier with imperfect labels
• An instance is labeled positive only if it is flagged positive by both
• Considerably reduces the number of false positives
– Drastic increase in precision
• Incorrectly prunes away some positives
– Loss in recall
For rare class scenarios, the combination step drastically increases precision for a relatively smaller loss of recall.
Maximize precision*recall at the end of the combination step.
Combining strategy: the Step 2 label is 1 only when both the classifier output f(x) and the imperfect label are 1; otherwise it is 0.
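The combination rule is a logical AND of the two signals. The sketch below applies it to a small invented example (16 instances, 4 true positives) in which the classifier over-predicts and the imperfect label misses one positive. The arrays and function names are hypothetical and only illustrate the precision/recall trade-off described above.

```python
def combine(f_pred, a_label):
    """Step 2 rule: positive only if both the classifier and the imperfect label say so."""
    return [int(f == 1 and a == 1) for f, a in zip(f_pred, a_label)]


def precision_recall(pred, truth):
    """Precision and recall of binary predictions against true labels."""
    tp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    n_pred = sum(pred)
    n_true = sum(truth)
    precision = tp / n_pred if n_pred else 0.0
    recall = tp / n_true if n_true else 0.0
    return precision, recall


# Invented toy data.
truth   = [1, 1, 1, 1] + [0] * 12
f_pred  = [1, 1, 1, 1] + [1] * 6 + [0] * 6        # classifier: all positives plus 6 false alarms
a_label = [1, 1, 1, 0] + [0] * 6 + [1] + [0] * 5  # imperfect label: misses one, one false alarm

combined = combine(f_pred, a_label)
print("classifier only:", precision_recall(f_pred, truth))
print("combined:       ", precision_recall(combined, truth))
```

In this toy case precision rises from 0.4 to 1.0 while recall drops from 1.0 to 0.75, so precision*recall improves from 0.4 to 0.75.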

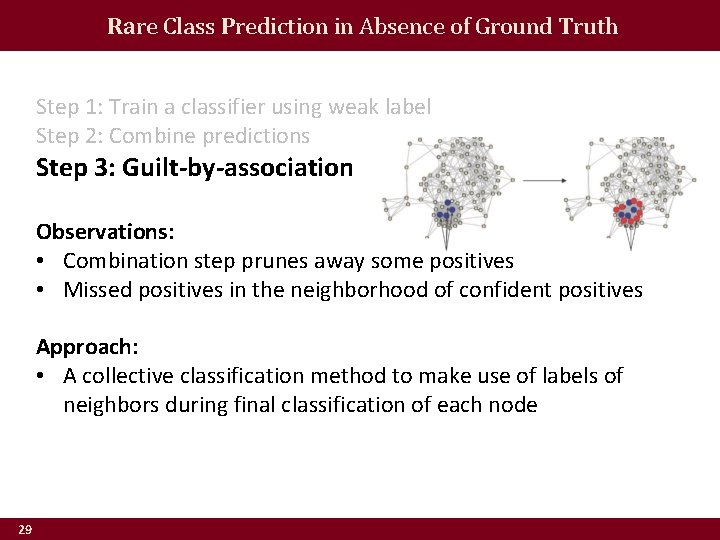

Rare Class Prediction in Absence of Ground Truth
Step 1: Train a classifier using weak labels
Step 2: Combine predictions
Step 3: Guilt-by-association
Observations:
• Combination step prunes away some positives
• Missed positives lie in the neighborhood of confident positives
Approach:
• A collective classification method that uses the labels of neighbors during the final classification of each node
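The slides do not give the details of the collective classification method, so the sketch below substitutes a much simpler stand-in: region growing on a pixel grid. Starting from the confident positives left after Step 2, it restores any candidate pixel that touches an already-positive pixel in its 4-neighborhood. The names `confident`, `candidate`, and `spread_from_confident` are hypothetical, and so is the choice of what counts as a candidate (for example, pixels flagged by only one of the two signals).

```python
def spread_from_confident(confident, candidate):
    """Grow the positive set from confident pixels into adjacent candidate pixels.

    confident, candidate: 2-D lists of 0/1 with identical shape.
    Returns a new 2-D list; isolated candidates stay 0.
    """
    rows, cols = len(confident), len(confident[0])
    result = [row[:] for row in confident]
    frontier = [(r, c) for r in range(rows) for c in range(cols) if confident[r][c]]
    while frontier:
        r, c = frontier.pop()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and candidate[nr][nc] and not result[nr][nc]:
                result[nr][nc] = 1  # guilt by association with a positive neighbor
                frontier.append((nr, nc))
    return result


# Invented 3x4 grid: one confident burn pixel, three pruned candidates.
confident = [[0, 0, 0, 0],
             [0, 1, 0, 0],
             [0, 0, 0, 0]]
candidate = [[0, 0, 0, 1],
             [0, 1, 1, 0],
             [0, 1, 0, 0]]

final = spread_from_confident(confident, candidate)
for row in final:
    print(row)
```

The two candidates adjacent to the confident pixel are recovered, while the isolated candidate in the top-right corner stays negative, which keeps the precision gained in Step 2.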

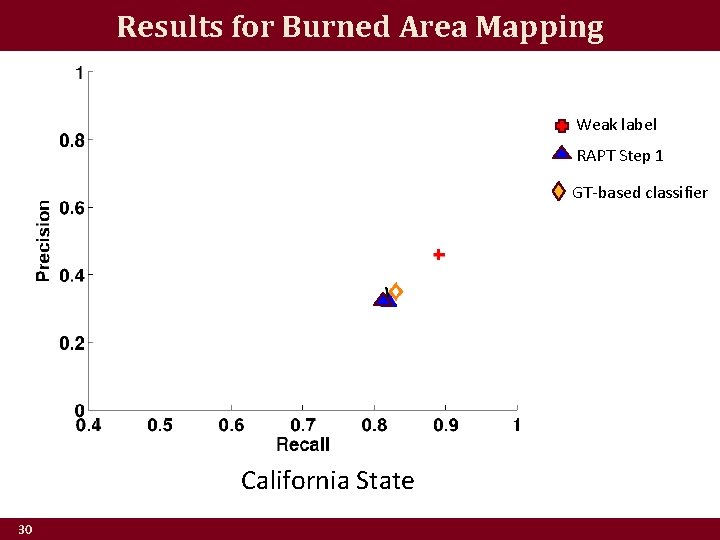

Results for Burned Area Mapping: California
Panels: Weak label, RAPT Step 1, GT-based classifier

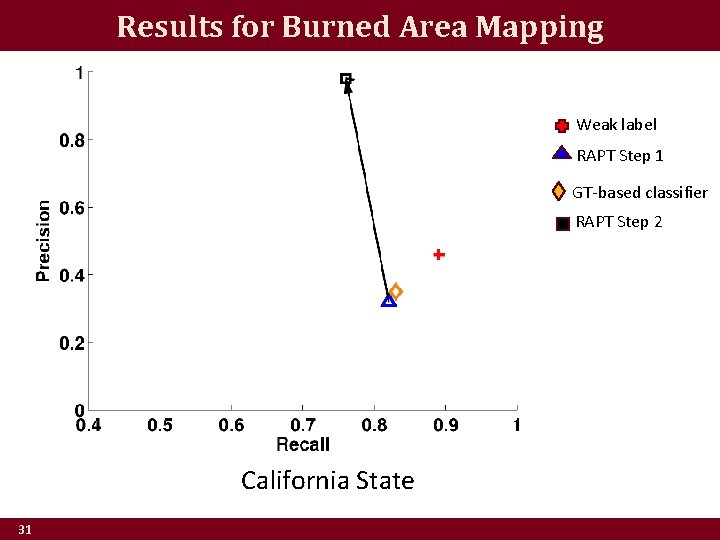

Results for Burned Area Mapping: California
Panels: Weak label, RAPT Step 1, GT-based classifier, RAPT Step 2

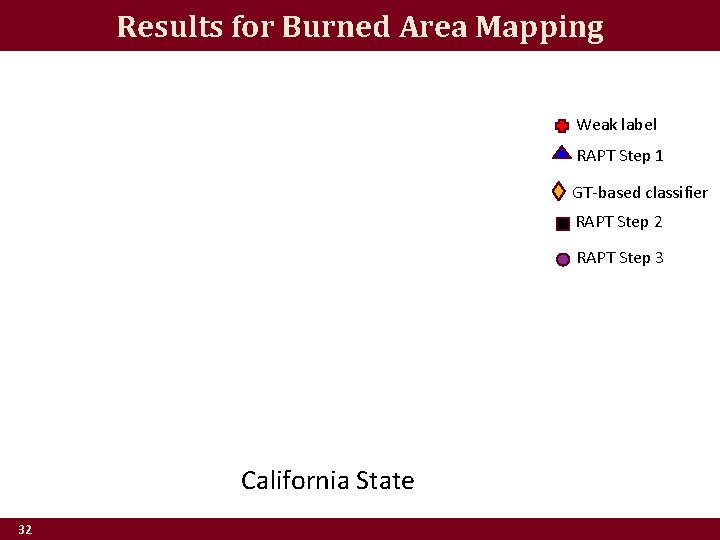

Results for Burned Area Mapping: California
Panels: Weak label, RAPT Step 1, GT-based classifier, RAPT Step 2, RAPT Step 3

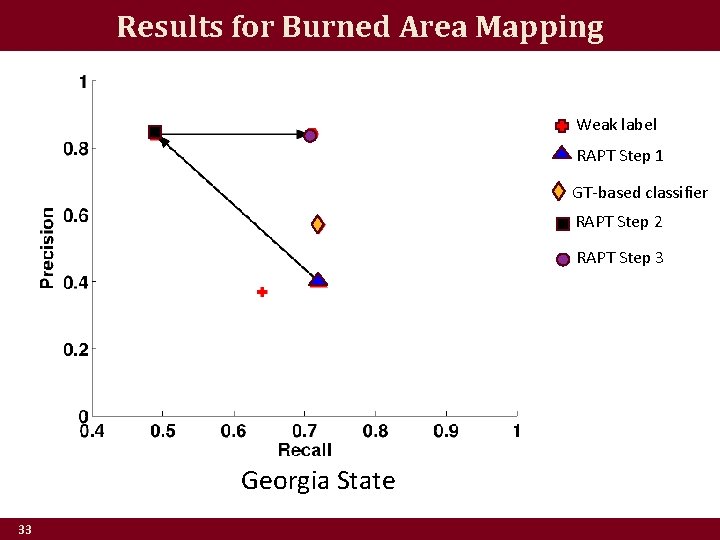

Results for Burned Area Mapping: Georgia
Panels: Weak label, RAPT Step 1, GT-based classifier, RAPT Step 2, RAPT Step 3

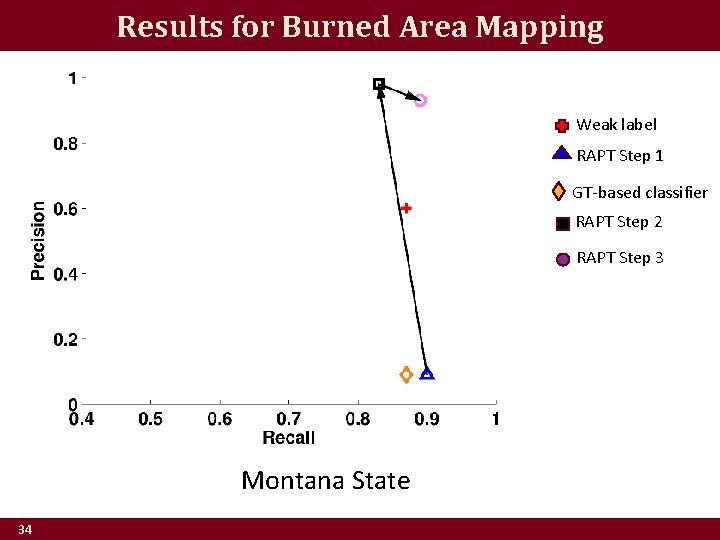

Results for Burned Area Mapping: Montana
Panels: Weak label, RAPT Step 1, GT-based classifier, RAPT Step 2, RAPT Step 3

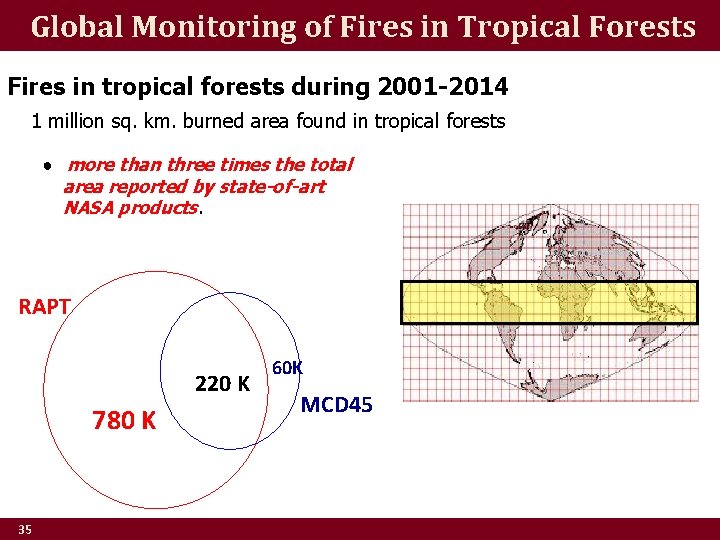

Global Monitoring of Fires in Tropical Forests
Fires in tropical forests during 2001-2014:
• 1 million sq. km. burned area found in tropical forests
• More than three times the total area reported by state-of-the-art NASA products
Venn diagram of detected burned area: RAPT only 780K; common to RAPT and MCD45 220K; MCD45 only 60K

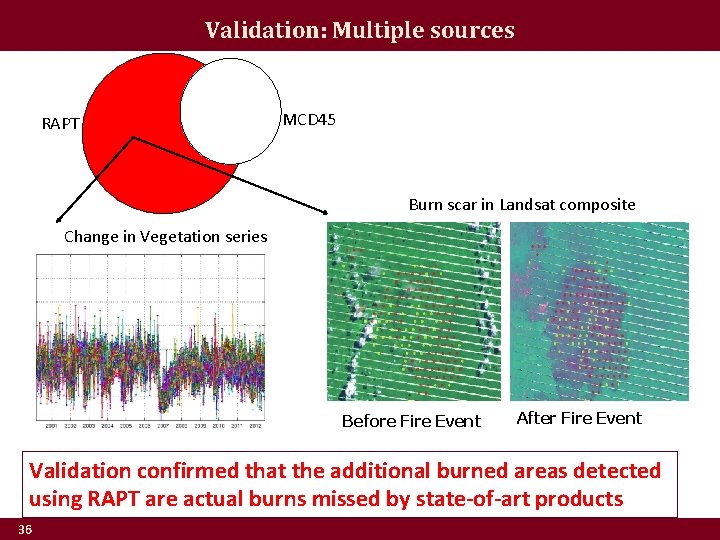

Validation: Multiple sources
Panels: RAPT; MCD45; burn scar in Landsat composite; change in vegetation series; before fire event; after fire event
Validation confirmed that the additional burned areas detected using RAPT are actual burns missed by state-of-the-art products.

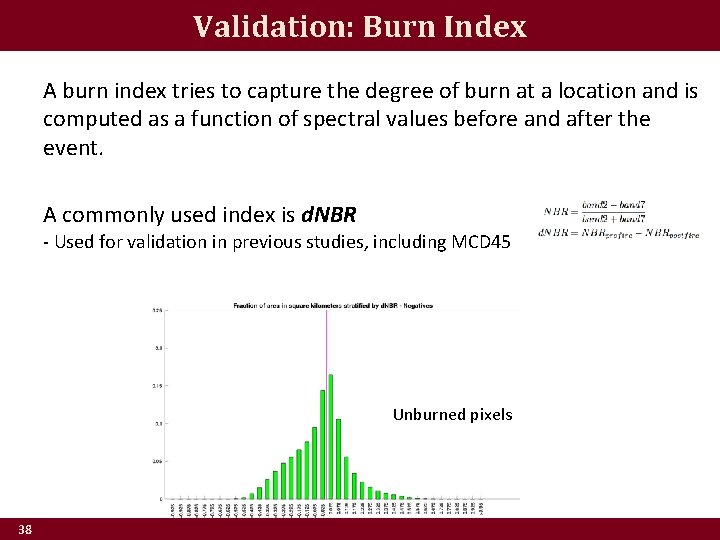

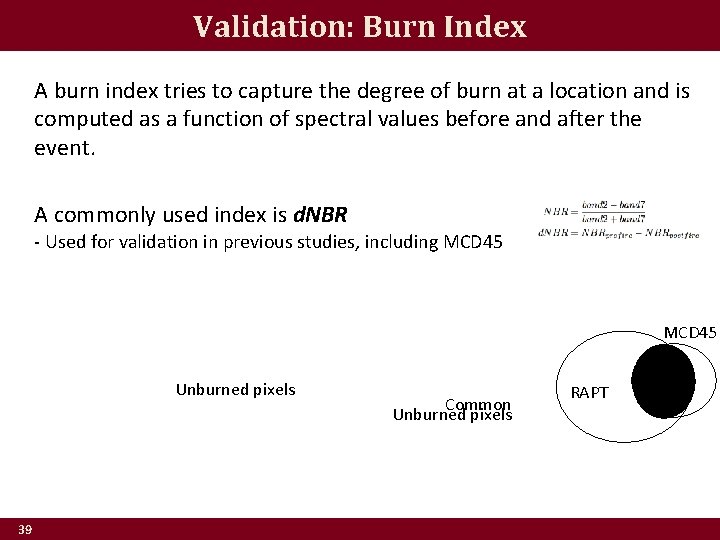

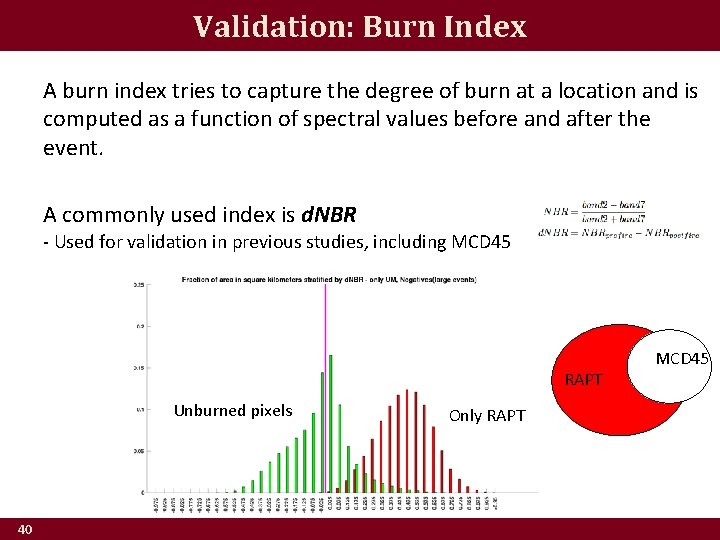

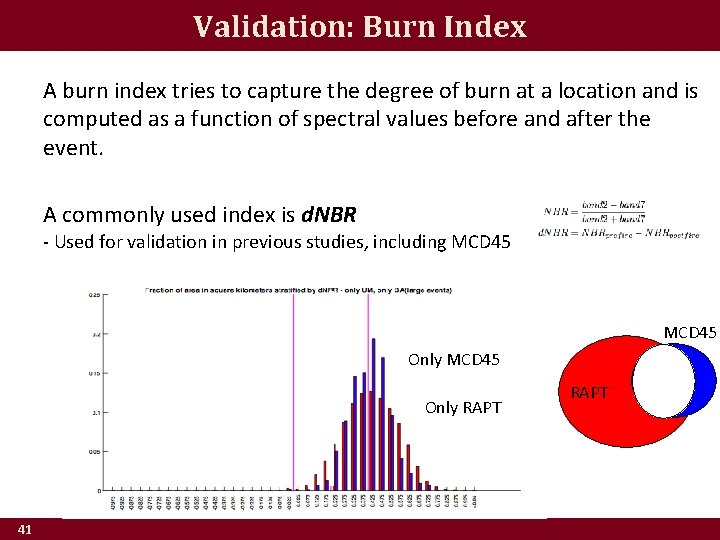

Validation: Burn Index
A burn index tries to capture the degree of burn at a location and is computed as a function of spectral values before and after the event.
A commonly used index is dNBR
– Used for validation in previous studies, including MCD45
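For reference, dNBR is conventionally the difference between the pre-fire and post-fire Normalized Burn Ratio, where NBR = (NIR − SWIR) / (NIR + SWIR). The sketch below uses that standard definition. The reflectance values are made up, and the slides do not say which sensor bands or compositing windows were used for validation.

```python
def nbr(nir, swir):
    """Normalized Burn Ratio from near-infrared and shortwave-infrared reflectance."""
    return (nir - swir) / (nir + swir)


def dnbr(nir_pre, swir_pre, nir_post, swir_post):
    """Differenced NBR: pre-fire NBR minus post-fire NBR (larger means more severe burn)."""
    return nbr(nir_pre, swir_pre) - nbr(nir_post, swir_post)


# Made-up reflectances: healthy vegetation before, charred surface after.
burned = dnbr(nir_pre=0.40, swir_pre=0.10, nir_post=0.20, swir_post=0.30)
# Same reflectances before and after: no change.
unburned = dnbr(nir_pre=0.40, swir_pre=0.10, nir_post=0.40, swir_post=0.10)

print("burned pixel dNBR:  ", round(burned, 3))
print("unburned pixel dNBR:", round(unburned, 3))
```

Burning lowers NIR reflectance and raises SWIR reflectance, so burned pixels show a clearly positive dNBR while unchanged pixels stay near zero. That separation is what makes the index usable as an independent check on detected burns.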

Validation: Burn Index
Plot legend: Unburned pixels

Validation: Burn Index
Plot legend: Unburned pixels, Common, RAPT

Validation: Burn Index
Plot legend: Unburned pixels, RAPT, Only RAPT, MCD45

Validation: Burn Index
Plot legend: Only RAPT, RAPT

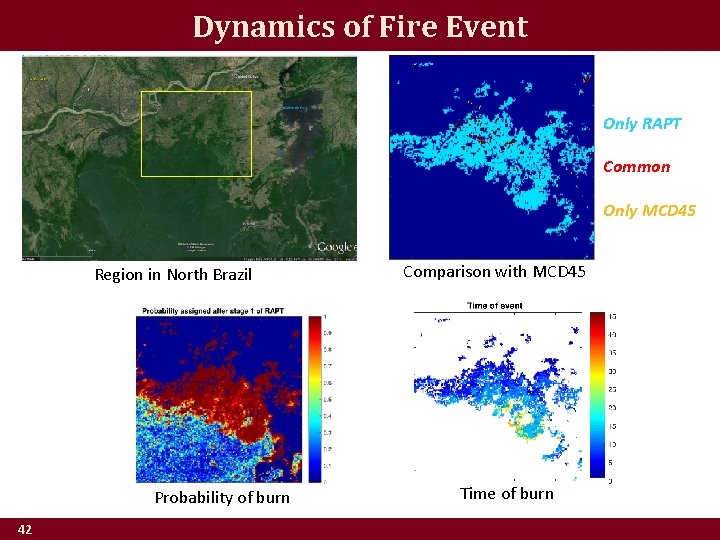

Dynamics of Fire Event
Region in North Brazil
Panels: probability of burn; time of burn; comparison with MCD45 (legend: Only RAPT, Common, Only MCD45)

![Impact on REDD+](http://slidetodoc.com/presentation_image_h2/5bee253c8768854d27dcf85a66390bc6/image-43.jpg)

Impact on REDD+
“The [Peru] government needs to spend more than $100m a year on high-resolution satellite pictures of its billions of trees. But … a computing facility developed by the Planetary Skin Institute (PSI) … might help cut that budget.”
“ALERTS, which was launched at Cancún, uses … data-mining algorithms developed at the University of Minnesota and a lot of computing power … to spot places where land use changed.”
(The Economist 12/16/2010)

Concluding Remarks
• Future research
– Study the impact of the conditional independence between features and imperfect labels
– Extend to incorporate labels from multiple annotators
• Other applications
– Urban extent mapping
– Cyber security
– Epidemiology

Thank You! Questions?
UMN team members: Varun Mithal (Ph.D. thesis), Guruprasad Nayak, Ankush Khandelwal
NASA Ames collaborators: Rama Nemani, Nikunj C. Oza
Work supported by NASA and NSF Expeditions in Computing project on Understanding Climate Change using Data-driven Approaches
