IDEA Assessment Data
Anne Rainey, IDEA Part B Data Manager, Montana
Nick Easter, Ed.D., IDEA Part B Data Manager, Nevada
Meredith Miceli, Research to Practice Division Data Team Lead, OSEP
2017 LEADERSHIP CONFERENCE

Welcome and Introductions
• Welcome
• Introduction of speakers

Objectives
• What is IDEA Assessment Data?
• Where is the data reported?
• Assessment Metadata Survey
• OSEP Review and Feedback
• How do they review?
• Data Quality Reports
• Data Notes
• Ensuring Data Quality
• Challenges Discussion

What is IDEA Assessment Data?
• Statewide Assessment Data
• Alternate Assessment
• Subset of all Assessment Data

Where is this data reported?
• EDFacts Files
• SPP/APR
• CSPR

Where is the Assessment Metadata reported?
• Assessment Metadata Survey – submitted via EMAPS
  • Open/reopen periods align with assessment data open/reopen periods
  • Respondents: State Assessment Director
  • Read-only access for Part B Data Managers & EDFacts Coordinators
• How is it used?
  • Used to cross-validate State’s assessment data submission
  • Used in OSEP’s evaluation of completeness
  • Used to determine which counts are considered proficient / to calculate percent proficient
• Resource: Assessment Metadata Survey User Guide
  • https://www2.ed.gov/about/inits/ed/edfacts/index.html

When are the data submitted/resubmitted?
• Assessment data have 3 open/reopen periods:
  • December due date
    • Data used for OSEP’s evaluation of timeliness, completeness, and accuracy of the data submission
  • Feb/March reopen period:
    • Opportunity for States to resubmit assessment data or data notes to explain data quality inquiries
  • April reopen period:
    • Final resubmission period
    • Assessment data in the system as of the end of this resubmission period are used for public reporting and associated data products

How does OSEP Review the Assessment Data?
• Timeliness
  • Are the data in the appropriate EDFacts system by the due date?
• Completeness
  • Are data for all relevant file specifications submitted?
  • Are data for all category sets, subtotals, and totals submitted?
  • Do data match responses in the metadata sources?
• Accuracy
  • Do data meet our edit checks?
• LEA Roll-up Comparison
  • Are there large differences between the sum of the counts reported at the LEA level and the counts reported at the SEA level?
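
The LEA roll-up comparison can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the slide does not say what counts as a "large" difference, so the 5% `tolerance`, the function name `lea_rollup_differences`, and the `(subject, grade)` keys are all hypothetical and are not OSEP's actual rule or file layout.

```python
def lea_rollup_differences(sea_counts, lea_counts, tolerance=0.05):
    """Flag categories where summed LEA counts differ from the SEA count.

    sea_counts: dict mapping a category key to the SEA-level count.
    lea_counts: iterable of (category key, count) pairs, one per LEA record.
    tolerance:  allowed difference as a fraction of the SEA count
                (hypothetical value; the source only says "large differences").
    """
    # Sum the LEA-level counts for each category.
    lea_totals = {}
    for key, count in lea_counts:
        lea_totals[key] = lea_totals.get(key, 0) + count

    flagged = {}
    for key, sea_total in sea_counts.items():
        lea_total = lea_totals.get(key, 0)
        # With a zero SEA count the limit is zero, so any nonzero LEA sum is flagged.
        if abs(lea_total - sea_total) > tolerance * sea_total:
            flagged[key] = (sea_total, lea_total)
    return flagged


# Illustrative counts only (not real data).
sea = {("M", "03"): 100, ("M", "04"): 80}
lea = [(("M", "03"), 60), (("M", "03"), 39), (("M", "04"), 50), (("M", "04"), 20)]
flags = lea_rollup_differences(sea, lea)
```

Here grade 03 rolls up to 99 against an SEA count of 100 and passes, while grade 04 rolls up to 70 against 80 and is flagged.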

Data Quality Review - Timeliness
• SEA-level assessment data on children with disabilities (IDEA) are submitted to the EDFacts Submission System (ESS) by the December due date and time:
  • C175: Academic Achievement in Mathematics
  • C178: Academic Achievement in Reading (Language Arts)
  • C185: Assessment Participation in Mathematics
  • C188: Assessment Participation in Reading/Language Arts

Data Quality Review - Completeness
• Achievement data reported in ESS align with data reported in EMAPS by subject (M, RLA), assessment type, grade, and performance level.
  • Data are flagged if not aligned.
• Participation data reported in ESS align with data reported in EMAPS by subject (M, RLA), assessment type, and grade.
  • Data are flagged if not aligned.
• Note: Zero counts are required at the SEA level.

Data Quality Review - Accuracy
• For mathematics/reading by grade and assessment type, the number of students with disabilities (IDEA) who took an assessment and received a valid score (reported in C175/C178) should equal the number of students with disabilities (IDEA) who participated in an assessment (reported in C185/C188).
  • Data are flagged if counts do not match or data are blank.
• By assessment type, the number of students with disabilities (IDEA) who took an assessment and received a valid score reported in C175/C178 should be reported at the same grade level as the number of students with disabilities (IDEA) who participated in an assessment reported in C185/C188.
  • Data are flagged if grade levels are misaligned.
  • Limited to HS grade misalignment
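
A State could run a comparable cross-file check before submitting. The sketch below is an assumption-laden illustration, not OSEP's edit check: the function name, the `(grade, assessment_type)` keys, and the `"REG"`/`"ALT"` labels are hypothetical, and the counts are assumed to be already extracted from the achievement file (C175 or C178) and the matching participation file (C185 or C188).

```python
def compare_achievement_to_participation(achievement, participation):
    """Compare valid-score counts with participation counts.

    achievement:   dict mapping (grade, assessment_type) to the number of
                   students with a valid score (from C175 or C178).
    participation: dict with the same keys, holding the number of students
                   who participated (from C185 or C188).
    Returns a list of (key, reason) pairs, where reason is "blank" when a
    count is missing from either file and "mismatch" when the counts differ.
    """
    flags = []
    for key in sorted(set(achievement) | set(participation)):
        valid_scores = achievement.get(key)
        participants = participation.get(key)
        if valid_scores is None or participants is None:
            flags.append((key, "blank"))
        elif valid_scores != participants:
            flags.append((key, "mismatch"))
    return flags


# Illustrative counts only (not real data).
math_achievement = {("03", "REG"): 120, ("04", "REG"): 95, ("HS", "ALT"): 10}
math_participation = {("03", "REG"): 120, ("04", "REG"): 97}
flags = compare_achievement_to_participation(math_achievement, math_participation)
```

A grade reported in one file but not the other shows up as "blank" in this sketch, which is one simple way to surface the grade-level misalignment described above.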

Communication of Data Quality Inquiries
• OSEP’s comments/data quality inquiries are communicated via the Data Quality Report (DQR)
• During February:
  • Partner Support Center (PSC) sends out an email to Assessment Directors, EDFacts Coordinators, and Part B Data Managers with the comments on the assessment data submissions from OESE/OSS and OSEP
  • OSEP also posts the DQR for the IDEA Assessment data on OMB Max.
• During March:
  • If data quality inquiries remain, PSC sends out another email to the same group of recipients
  • OSEP also posts the DQR for the IDEA Assessment data on OMB Max.

Data Quality Reports
• OSEP’s communication tool regarding the State’s data submission
• Provides State with:
  • OSEP’s evaluation of the timeliness, completeness, and accuracy of the State’s data submission
  • OSEP’s data quality inquiries
  • OSEP’s expectation to either resubmit data/metadata or provide an explanation in the form of a data note
• Locations:
  • Posted on OMB Max
  • Emailed with the CSPR comments to Part B Data Managers (as well as the Assessment Director & EDFacts Coordinator)

Data Notes
• Opportunity for the State to clarify situation(s) associated with the submission of the assessment data and metadata
• Possible situations:
  • Data collection or reporting anomalies
  • Relevant changes in assessments or data collection/reporting processes from previous year
  • Implementation of new assessments, initiatives, or processes that may impact data or metadata
• Opportunity for the State to respond to OSEP’s data quality inquiries
• Data Notes submitted by States may be provided to the public to accompany the public release data file

What does OSEP do with the Data?
• APR:
  • Pre-populate the APR for Part B indicator 3
• Evaluation of data submission
• Part B Results Matrix
• Data Display
• Public Release Data File
• Static Tables
• Ad hoc requests (i.e., targeted analyses of previously collected data)

Public reporting of IDEA assessment data
• If the difference between participation and performance counts results in more than a 1 percentage point increase or decrease in the percent proficient, the number of students with disabilities who scored at or above proficient on the assessment and the number of students with disabilities who took that type of assessment were suppressed from the public file.
• High school data are reported as HS, with all grades rolled up together into a total count for grades 9-12.
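
One way to read the suppression rule is to compute percent proficient twice, once with the performance count as the denominator and once with the participation count, and suppress when the two results differ by more than 1 percentage point. The sketch below follows that reading. The slide does not spell out the exact formula, so this interpretation, the function name `should_suppress`, and its parameter names are assumptions rather than OSEP's published method.

```python
def should_suppress(proficient, performance_count, participation_count, threshold=1.0):
    """Return True if the two percent-proficient values differ by more than
    `threshold` percentage points (assumed reading of the suppression rule).

    proficient:          students scoring at or above proficient.
    performance_count:   students with a valid score (achievement file).
    participation_count: students who participated (participation file).
    """
    if performance_count <= 0 or participation_count <= 0:
        # Zero or negative denominators are outside the scope of this sketch.
        raise ValueError("counts must be positive")

    pct_by_performance = 100.0 * proficient / performance_count
    pct_by_participation = 100.0 * proficient / participation_count
    return abs(pct_by_performance - pct_by_participation) > threshold


# Illustrative counts only (not real data).
same_counts = should_suppress(40, 100, 100)    # 40.0% vs 40.0%
small_gap = should_suppress(40, 200, 201)      # 20.0% vs about 19.9%
large_gap = should_suppress(40, 100, 110)      # 40.0% vs about 36.4%
```

Under this reading, only the last case exceeds the 1 percentage point threshold and would be suppressed.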

How does that translate into what happens at the SEA Level?
Lessons Learned from Nevada

Validating Assessment Data
What to validate for:
• Accuracy: the total number of participants equals the total number of performance scores.
• Completeness: the EDFacts data match the Assessment Metadata Survey.

Across-file comparison: Language Arts
• The number of students with disabilities (IDEA) who took a Reading assessment and received a valid score (FS178) for regular assessments based on grade-level achievement standards must equal the number of students with disabilities (IDEA) who participated in the assessment (FS188).

Across-file comparison: Math
• The number of students with disabilities (IDEA) who took a Math assessment and received a valid score (FS175) for regular assessments based on grade-level achievement standards must equal the number of students with disabilities (IDEA) who participated in the assessment (FS185).

Achievement metadata matching
• Achievement data (FS175) submitted for regular assessments based on grade-level achievement standards with and without accommodations must have data for all performance levels (e.g., PERF LEVEL 1-4)
• SEA data file must include all performance levels (e.g., PERF LEVEL 1-4) for all data categories, including zero counts.
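
The zero-count requirement lends itself to a simple completeness check: every combination of data category and performance level should appear in the SEA file, even when its count is zero. The following sketch assumes the file has already been parsed into `(category, performance_level, count)` tuples. The function name, the tuple layout, and the use of grade as the category are hypothetical and do not reflect the actual FS175 record format.

```python
def missing_performance_levels(records, expected_levels, categories):
    """List (category, level) pairs that have no row in the SEA file.

    records:         iterable of (category, performance_level, count) tuples.
    expected_levels: performance levels reported in the metadata survey.
    categories:      data categories (for example grades) that must be covered.
    A row with a count of zero still counts as present.
    """
    present = {(category, level) for category, level, _count in records}
    return sorted(
        (category, level)
        for category in categories
        for level in expected_levels
        if (category, level) not in present
    )


# Illustrative rows only (not real data): grade 04 has no row for level 3.
rows = [
    ("03", 1, 5), ("03", 2, 12), ("03", 3, 0), ("03", 4, 2),
    ("04", 1, 7), ("04", 2, 9), ("04", 4, 1),
]
gaps = missing_performance_levels(rows, expected_levels=range(1, 5), categories=["03", "04"])
```

Grade 03, level 3 is reported with a zero count and passes, while grade 04, level 3 has no row at all and is returned as a gap.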

Participation metadata
• Participation data (FS185) submitted for assessments based on grade-level achievement standards with and without accommodations must match the grades offered within the EMAPS Assessment Metadata Survey.

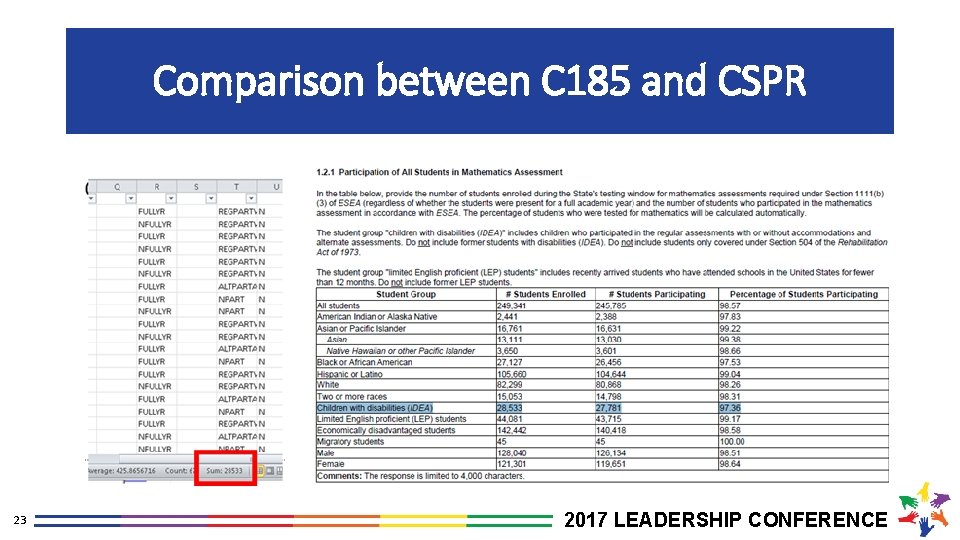

Comparison between C185 and CSPR

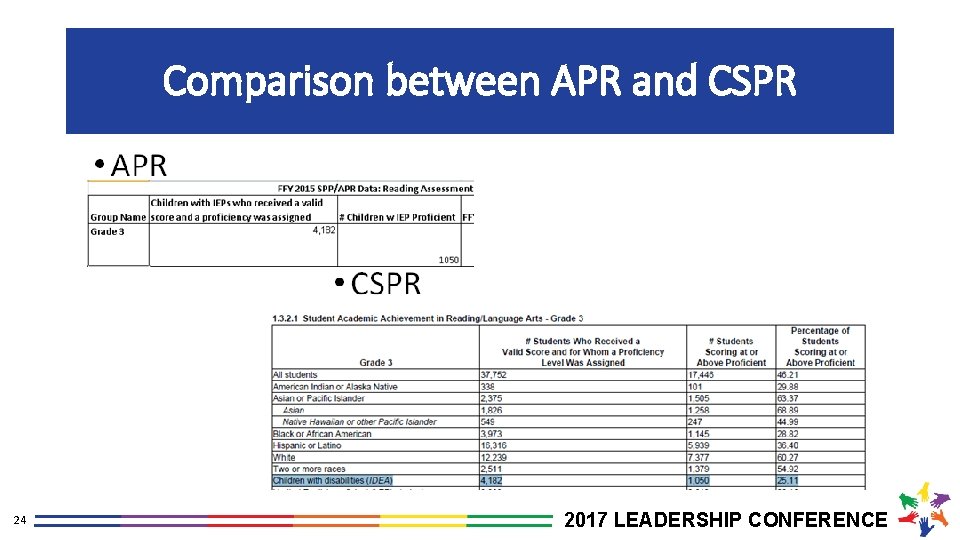

Comparison between APR and CSPR

Open Discussion
• What are the challenges you face with submitting and updating Assessment Data?
• What would make the process easier?

Questions?
• Any remaining questions?
• Contact Us
  • Anne Rainey, Part B Data Manager, MT (arainey@mt.gov)
  • Nick Easter, Ed.D., Part B Data Manager, NV (neaster@doe.nv.gov)
  • Meredith Miceli, Research to Practice Division, OSEP (Meredith.Miceli@ed.gov)

- Slides: 26