IceCube simulation with PPC on GPUs

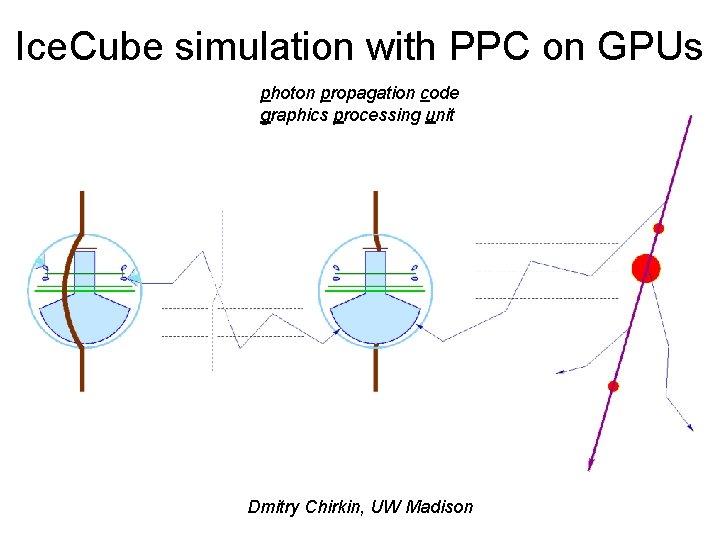

IceCube simulation with PPC on GPUs

PPC: photon propagation code; GPU: graphics processing unit

Dmitry Chirkin, UW Madison

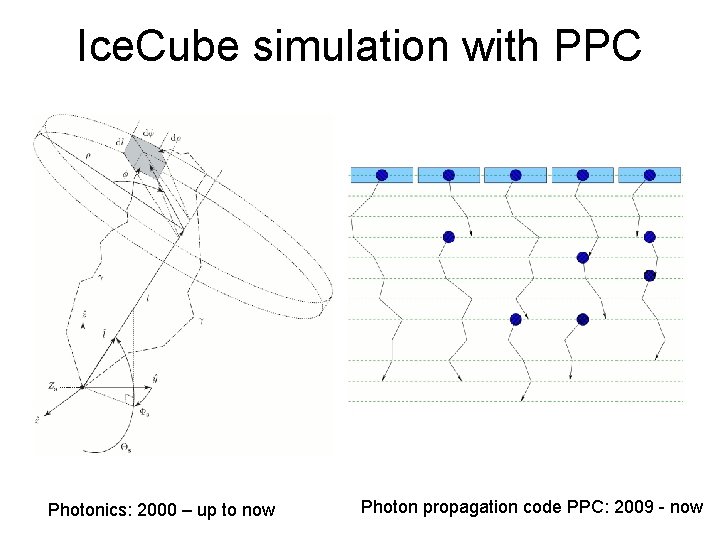

IceCube simulation with PPC

• Photonics: 2000 - up to now
• Photon propagation code (PPC): 2009 - now

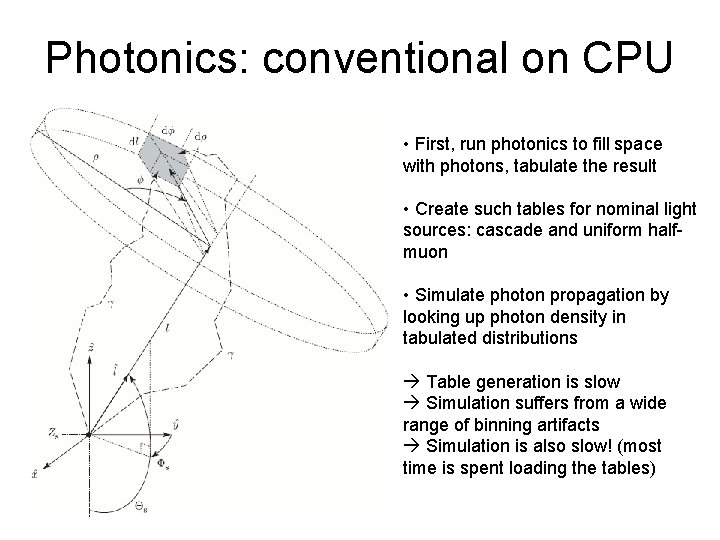

Photonics: conventional on CPU

• First, run photonics to fill space with photons, tabulate the result
• Create such tables for nominal light sources: cascade and uniform half-muon
• Simulate photon propagation by looking up photon density in tabulated distributions

Table generation is slow. Simulation suffers from a wide range of binning artifacts. Simulation is also slow! (most time is spent loading the tables)

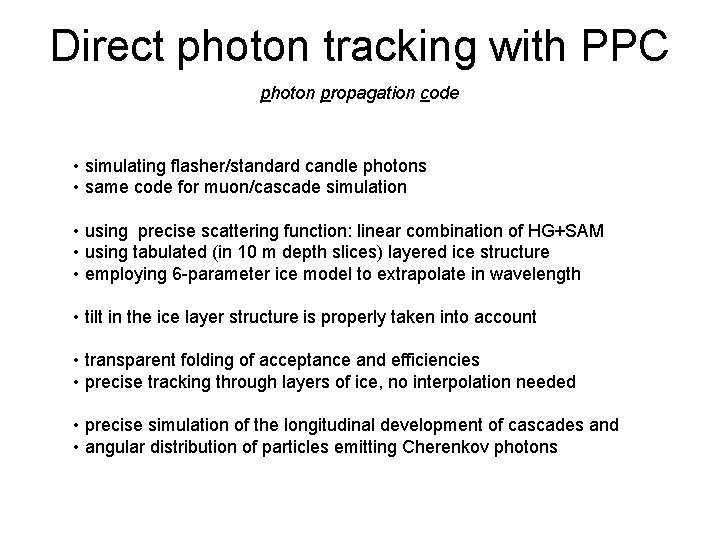

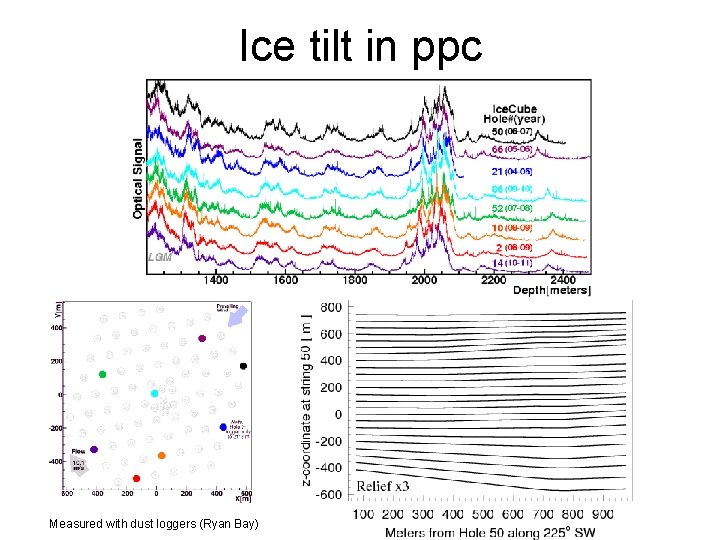

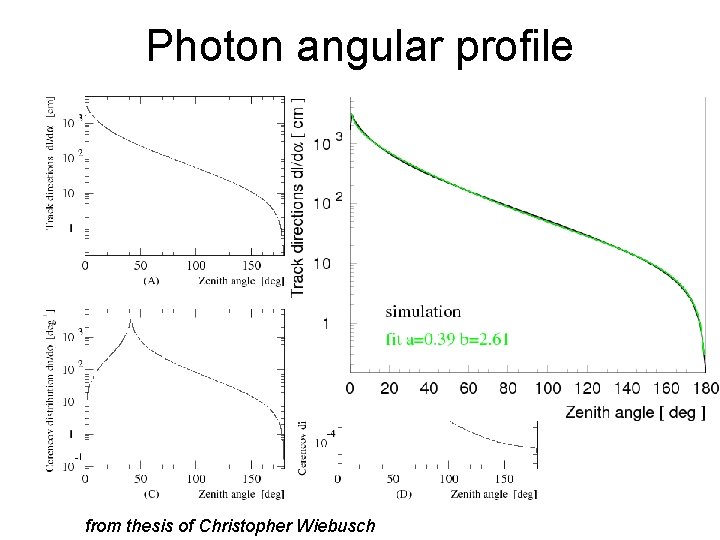

Direct photon tracking with PPC (photon propagation code)

• simulating flasher/standard candle photons
• same code for muon/cascade simulation
• using precise scattering function: linear combination of HG+SAM
• using tabulated (in 10 m depth slices) layered ice structure
• employing 6-parameter ice model to extrapolate in wavelength
• tilt in the ice layer structure is properly taken into account
• transparent folding of acceptance and efficiencies
• precise tracking through layers of ice, no interpolation needed
• precise simulation of the longitudinal development of cascades and angular distribution of particles emitting Cherenkov photons

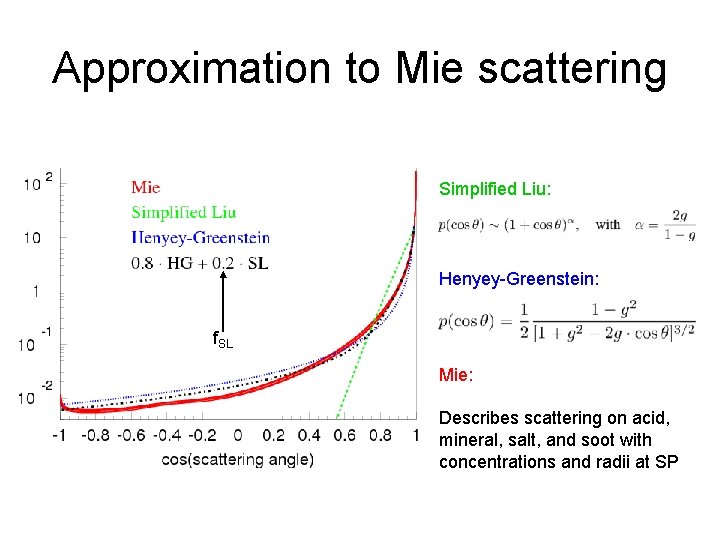

Approximation to Mie scattering

• Simplified Liu (SL)
• Henyey-Greenstein (HG)
• f_SL: fraction of SL in the mix
• Mie: describes scattering on acid, mineral, salt, and soot with concentrations and radii at SP (South Pole)
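A minimal sketch of how a scattering angle could be drawn from a mix of the two functions named on this slide. It assumes the standard Henyey-Greenstein form and a simplified Liu form p(cos θ) ∝ (1 + cos θ)^α with α = 2g/(1 − g). The function names and the inversion formulas are illustrative and are not taken from the ppc sources.

```python
import random

def sample_hg(g, xi):
    """Invert the Henyey-Greenstein CDF for a uniform xi in [0, 1)."""
    if abs(g) < 1e-6:
        return 2.0 * xi - 1.0  # isotropic limit
    s = (1.0 - g * g) / (1.0 - g + 2.0 * g * xi)
    return (1.0 + g * g - s * s) / (2.0 * g)

def sample_sl(g, xi):
    """Invert the CDF of p(c) ~ (1 + c)^alpha with alpha = 2g / (1 - g)."""
    alpha = 2.0 * g / (1.0 - g)
    return 2.0 * xi ** (1.0 / (alpha + 1.0)) - 1.0

def sample_cos_theta(g, f_sl, rng):
    """Pick SL with probability f_sl, otherwise HG (assumed mixing rule)."""
    xi = rng.random()
    if rng.random() < f_sl:
        return sample_sl(g, xi)
    return sample_hg(g, xi)

def mean_cos_theta(g, f_sl, n=200000, seed=1):
    """Monte Carlo estimate of <cos theta> for the mix."""
    rng = random.Random(seed)
    return sum(sample_cos_theta(g, f_sl, rng) for _ in range(n)) / n
```

Under these assumptions both components have mean cosine g, so changing f_SL changes the shape of the angular distribution while leaving <cos θ> fixed.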

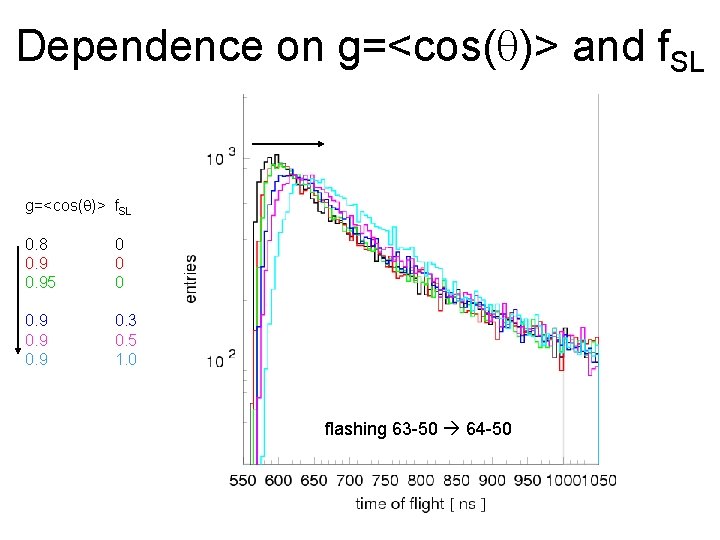

Dependence on g=<cos(θ)> and f_SL

[Plots: curves for g=<cos(θ)> = 0.8, 0.9, 0.95 and for f_SL = 0, 0.3, 0.5, 1.0; flashing 63-50, 64-50]

Ice tilt in ppc Measured with dust loggers (Ryan Bay)

Photon angular profile from thesis of Christopher Wiebusch

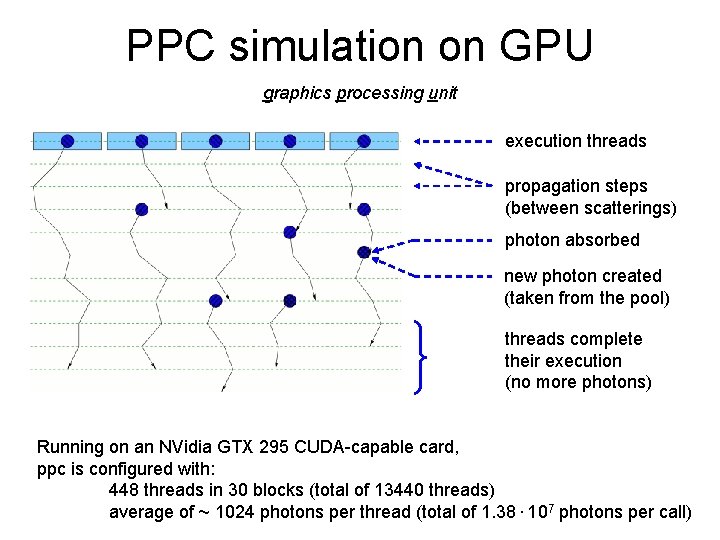

PPC simulation on GPU (graphics processing unit)

[Diagram: execution threads vs. propagation steps (between scatterings); photon absorbed - new photon created (taken from the pool); threads complete their execution (no more photons)]

Running on an NVidia GTX 295 CUDA-capable card, ppc is configured with:
• 448 threads in 30 blocks (total of 13440 threads)
• average of ~1024 photons per thread (total of 1.38·10^7 photons per call)
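The launch configuration quoted on this slide can be checked with a few lines of arithmetic. This is only an illustration of the numbers on the slide; the helper name is made up.

```python
def launch_size(threads_per_block, blocks, photons_per_thread):
    """Return (total threads, photons per kernel call)."""
    total_threads = threads_per_block * blocks
    return total_threads, total_threads * photons_per_thread

# Values from the slide: 448 threads in 30 blocks, ~1024 photons per thread.
total_threads, photons_per_call = launch_size(448, 30, 1024)
print(total_threads)     # 13440
print(photons_per_call)  # 13762560, i.e. about 1.38e7
```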

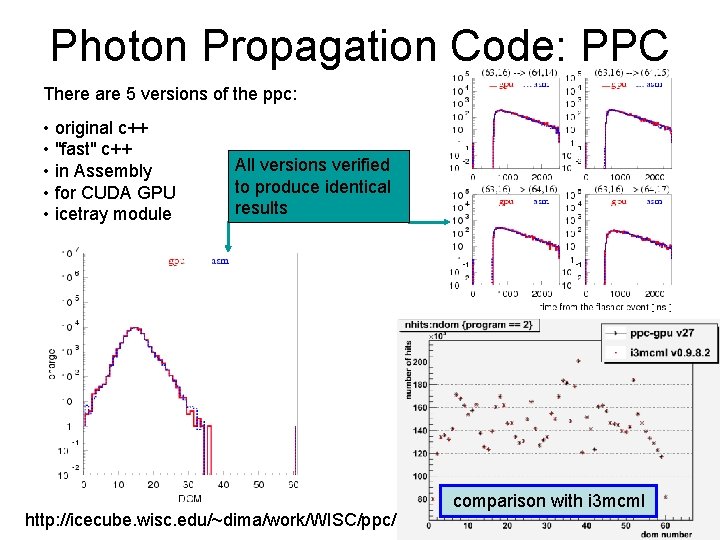

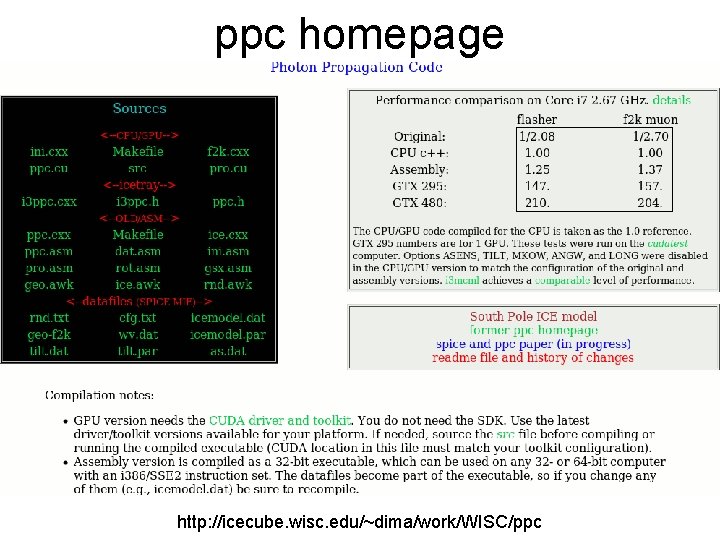

Photon Propagation Code: PPC

There are 5 versions of the ppc:
• original c++
• "fast" c++
• in Assembly
• for CUDA GPU
• icetray module

All versions verified to produce identical results.

http://icecube.wisc.edu/~dima/work/WISC/ppc/

[Plot: comparison with i3mcml]

ppc icetray module

• at http://code.icecube.wisc.edu/svn/projects/ppc/trunk/
• uses a wrapper: private/ppc/i3ppc.cxx, compiled by the cmake system into libppc.so
• additional library libxppc.so is compiled by cmake: set GPU_XPPC:BOOL=ON or OFF
• or can also be compiled by running make in private/ppc/gpu:
  – "make glib" compiles the gpu-accelerated version (needs cuda tools)
  – "make clib" compiles the cpu version (from the same sources!)


ppc example script run.py

    if(len(sys.argv)!=6):
        print "Use: run.py [corsika/nugen/flasher] [gpu] [seed] [infile/num of flasher events] [outfile]"
        sys.exit()
    …
    det = "ic86"
    detector = False
    …
    os.putenv("PPCTABLESDIR", expandvars("$I3_BUILD/ppc/resources/ice/mie"))
    …
    if(mode == "flasher"):
        …
        str=63
        dom=20
        nph=8.e9
        tray.AddModule("I3PhotoFlash", "photoflash")(…)
        os.putenv("WFLA", "405")  # flasher wavelength; set to 337 for standard candles
        os.putenv("FLDR", "-1")   # direction of the first flasher LED
        …
        # Set FLDR=x+(n-1)*360, where 0<=x<360 and n>0 to simulate n LEDs in a
        # symmetrical n-fold pattern, with first LED centered in the direction x.
        # Negative or unset FLDR simulates a symmetric in azimuth pattern of light.
        tray.AddModule("i3ppc", "ppc")(
            ("gpu", gpu),
            ("bad", bad),
            ("nph", nph*0.1315/25),    # corrected for efficiency and DOM oversize factor; eff(337)=0.0354
            ("fla", OMKey(str, dom)),  # set str=-str for tilted flashers, str=0 and dom=1, 2 for SC 1 and 2
            )
    else:

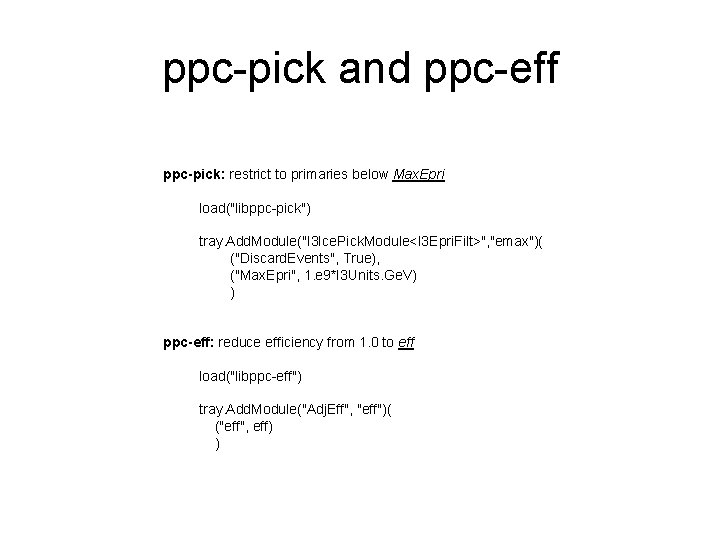
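The FLDR convention described in the run.py comments (FLDR = x + (n-1)*360) packs the direction of the first LED and the number of LEDs into one number. A minimal sketch of that packing follows. The helper names `encode_fldr` and `decode_fldr` are hypothetical and are not part of ppc.

```python
def encode_fldr(x, n):
    """Pack first-LED direction x (degrees, 0 <= x < 360) and LED count n > 0."""
    if not 0 <= x < 360:
        raise ValueError("x must satisfy 0 <= x < 360")
    if n < 1:
        raise ValueError("n must be positive")
    return x + (n - 1) * 360

def decode_fldr(fldr):
    """Return (x, n), or None for a negative value (azimuthally symmetric light)."""
    if fldr < 0:
        return None
    n = int(fldr // 360) + 1
    return fldr - (n - 1) * 360, n

# Six LEDs in a symmetric pattern, the first one pointing at 30 degrees.
print(encode_fldr(30, 6))  # 1830
print(decode_fldr(1830))   # (30, 6)
```

The resulting value would be passed as a string, as in the script's `os.putenv("FLDR", ...)` call.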

ppc-pick and ppc-eff

ppc-pick: restrict to primaries below MaxEpri

    load("libppc-pick")
    tray.AddModule("I3IcePickModule<I3EpriFilt>", "emax")(
        ("DiscardEvents", True),
        ("MaxEpri", 1.e9*I3Units.GeV)
        )

ppc-eff: reduce efficiency from 1.0 to eff

    load("libppc-eff")
    tray.AddModule("AdjEff", "eff")(
        ("eff", eff)
        )

ppc homepage: http://icecube.wisc.edu/~dima/work/WISC/ppc

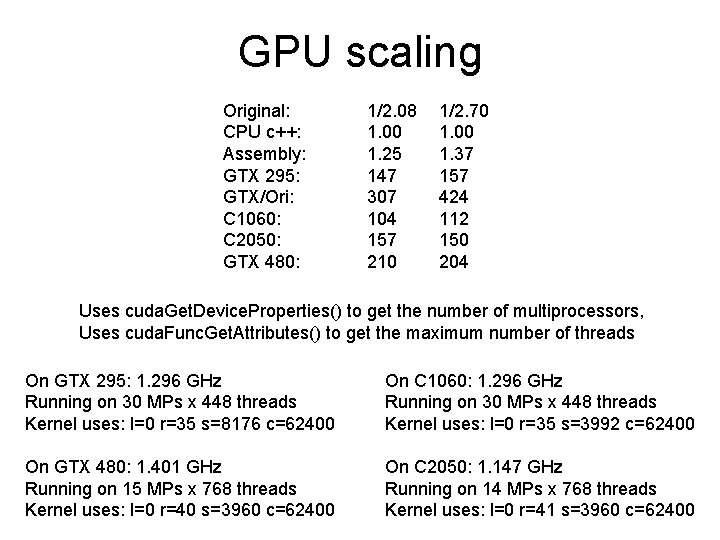

GPU scaling

| Version | Relative speed (1) | Relative speed (2) |
|---|---|---|
| Original | 1/2.08 | 1/2.70 |
| CPU c++ | 1.00 | 1.00 |
| Assembly | 1.25 | 1.37 |
| GTX 295 | 147 | 157 |
| GTX/Ori | 307 | 424 |
| C1060 | 104 | 112 |
| C2050 | 157 | 150 |
| GTX 480 | 210 | 204 |

Uses cudaGetDeviceProperties() to get the number of multiprocessors.
Uses cudaFuncGetAttributes() to get the maximum number of threads.

• On GTX 295: 1.296 GHz. Running on 30 MPs x 448 threads. Kernel uses: l=0 r=35 s=8176 c=62400
• On C1060: 1.296 GHz. Running on 30 MPs x 448 threads. Kernel uses: l=0 r=35 s=3992 c=62400
• On GTX 480: 1.401 GHz. Running on 15 MPs x 768 threads. Kernel uses: l=0 r=40 s=3960 c=62400
• On C2050: 1.147 GHz. Running on 14 MPs x 768 threads. Kernel uses: l=0 r=41 s=3960 c=62400
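The "GTX/Ori" entries appear to be the GTX 295 speed re-expressed relative to the original code rather than to the CPU c++ version. That reading is an assumption, but the arithmetic below reproduces the slide's values to within rounding.

```python
def gtx_over_original(gtx_vs_cpp, cpp_vs_original):
    """Speed of the GTX 295 relative to the original code (assumed meaning of GTX/Ori)."""
    return gtx_vs_cpp * cpp_vs_original

# Slide values: GTX 295 at 147 and 157 (relative to CPU c++ = 1.00),
# original code at 1/2.08 and 1/2.70 of the CPU c++ speed.
ratio_1 = gtx_over_original(147, 2.08)  # slide quotes 307
ratio_2 = gtx_over_original(157, 2.70)  # slide quotes 424
print(round(ratio_1), round(ratio_2))
```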

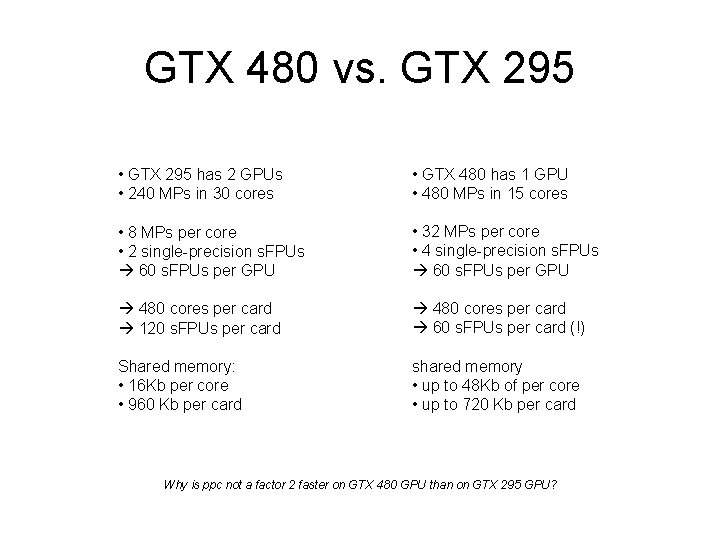

GTX 480 vs. GTX 295

GTX 295 has 2 GPUs:
• 240 cores in 30 MPs per GPU
• 8 cores per MP
• 2 single-precision sFPUs per MP, 60 sFPUs per GPU
• 480 cores per card, 120 sFPUs per card
• Shared memory: 16 KB per MP, 960 KB per card

GTX 480 has 1 GPU:
• 480 cores in 15 MPs
• 32 cores per MP
• 4 single-precision sFPUs per MP, 60 sFPUs per GPU
• 480 cores per card, 60 sFPUs per card (!)
• Shared memory: up to 48 KB per MP, up to 720 KB per card

Why is ppc not a factor 2 faster on GTX 480 GPU than on GTX 295 GPU?

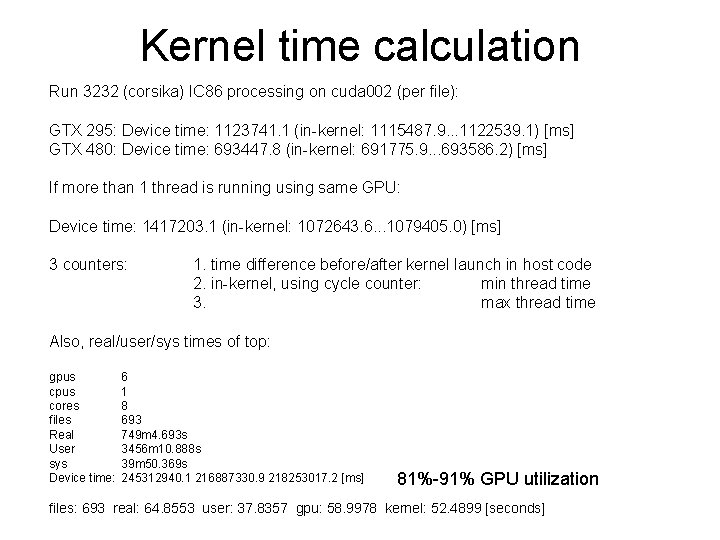

Kernel time calculation

Run 3232 (corsika) IC86 processing on cuda002 (per file):

    GTX 295: Device time: 1123741.1 (in-kernel: 1115487.9...1122539.1) [ms]
    GTX 480: Device time: 693447.8 (in-kernel: 691775.9...693586.2) [ms]

If more than 1 thread is running using the same GPU:

    Device time: 1417203.1 (in-kernel: 1072643.6...1079405.0) [ms]

3 counters:
1. time difference before/after kernel launch in host code
2. in-kernel, using cycle counter: min thread time
3. in-kernel, using cycle counter: max thread time

Also, real/user/sys times of top:

    gpus/cores/files: 6 1 8 693
    Real: 749m4.693s
    User: 3456m10.888s
    sys:  39m50.369s
    Device time: 245312940.1 (in-kernel: 216887330.9...218253017.2) [ms]

81%-91% GPU utilization

    files: 693 real: 64.8553 user: 37.8357 gpu: 58.9978 kernel: 52.4899 [seconds]
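The per-file summary at the end of this slide can be reproduced from the totals. The sketch below assumes 6 GPUs and 8 CPU cores working on 693 files, and that the "user" figure is (user + sys) divided by files and cores. These are inferences from the numbers, not statements from the slide.

```python
def to_seconds(minutes, seconds):
    """Convert an 'XmY.Zs' style time to seconds."""
    return 60.0 * minutes + seconds

files, gpus, cores = 693, 6, 8           # assumed job layout
real = to_seconds(749, 4.693)
user = to_seconds(3456, 10.888)
sys_time = to_seconds(39, 50.369)
device_ms = 245312940.1                  # host-side timer, summed over all kernel calls
kernel_max_ms = 218253017.2              # in-kernel counter, max thread time

real_per_file = real / files
cpu_per_file = (user + sys_time) / files / cores
gpu_per_file = device_ms / 1000.0 / files / gpus
kernel_per_file = kernel_max_ms / 1000.0 / files / gpus

# GPU utilization bracket: in-kernel time vs. host-measured device time.
util_low = kernel_per_file / real_per_file
util_high = gpu_per_file / real_per_file
print(round(real_per_file, 4), round(cpu_per_file, 4),
      round(gpu_per_file, 4), round(kernel_per_file, 4))
print(round(100 * util_low), round(100 * util_high))
```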

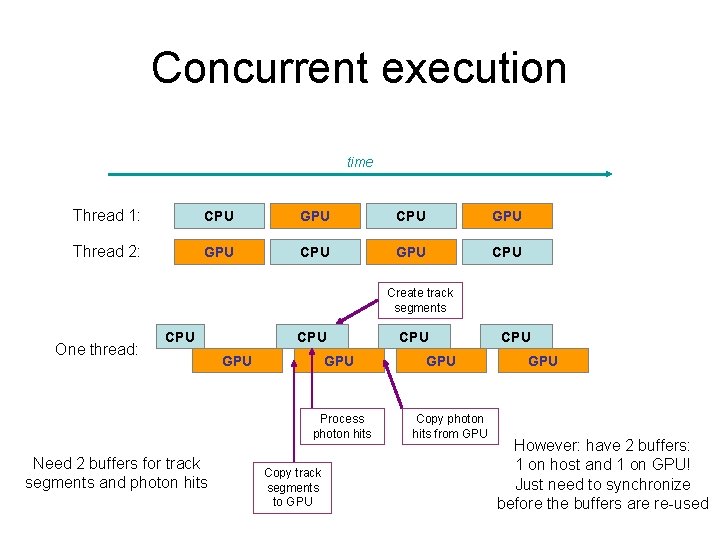

Concurrent execution

[Timeline diagram: Thread 1 alternates CPU, GPU; Thread 2 alternates GPU, CPU; a single thread runs CPU then GPU in sequence]

Steps for one thread:
• Create track segments (CPU)
• Copy track segments to GPU (CPU to GPU)
• Copy photon hits from GPU (GPU to CPU)
• Process photon hits (CPU)

Need 2 buffers for track segments and photon hits. However: have 2 buffers: 1 on host and 1 on GPU! Just need to synchronize before the buffers are re-used.

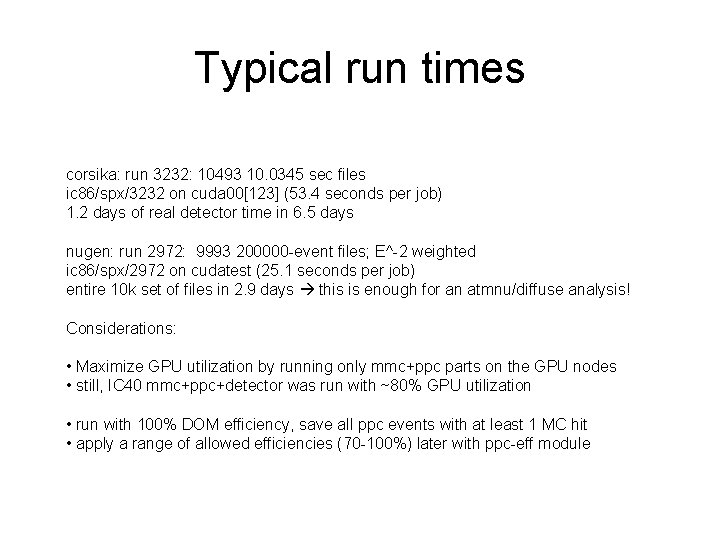

Typical run times

corsika: run 3232: 10493 files of 10.0345 sec each
• ic86/spx/3232 on cuda00[123] (53.4 seconds per job)
• 1.2 days of real detector time in 6.5 days

nugen: run 2972: 9993 200000-event files; E^-2 weighted
• ic86/spx/2972 on cudatest (25.1 seconds per job)
• entire 10k set of files in 2.9 days
• this is enough for an atmnu/diffuse analysis!

Considerations:
• Maximize GPU utilization by running only mmc+ppc parts on the GPU nodes
• still, IC40 mmc+ppc+detector was run with ~80% GPU utilization
• run with 100% DOM efficiency, save all ppc events with at least 1 MC hit
• apply a range of allowed efficiencies (70-100%) later with the ppc-eff module
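The day counts on this slide follow from the file counts and the per-job times. A short check, assuming the quoted seconds per job is the effective time per file:

```python
SECONDS_PER_DAY = 86400.0

def total_days(n_files, seconds_each):
    """Total duration in days of n_files items of seconds_each seconds."""
    return n_files * seconds_each / SECONDS_PER_DAY

corsika_livetime_days = total_days(10493, 10.0345)  # simulated detector time
corsika_wall_days = total_days(10493, 53.4)         # processing time
nugen_wall_days = total_days(9993, 25.1)            # processing time

print(round(corsika_livetime_days, 1))  # 1.2
print(round(corsika_wall_days, 1))      # 6.5
print(round(nugen_wall_days, 1))        # 2.9
```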

Use in analysis

PPC run on GPUs has already been used in several analyses, published or in progress. The ease of changing the ice parameters facilitated propagation of ice uncertainties through the analysis, as all "systematics" simulation sets are simulated in roughly the same amount of time, with no extra overhead. An uncertainty analysis of similar quality based on photonics simulation would have taken much longer because of the large CPU cost of the initial table generation.

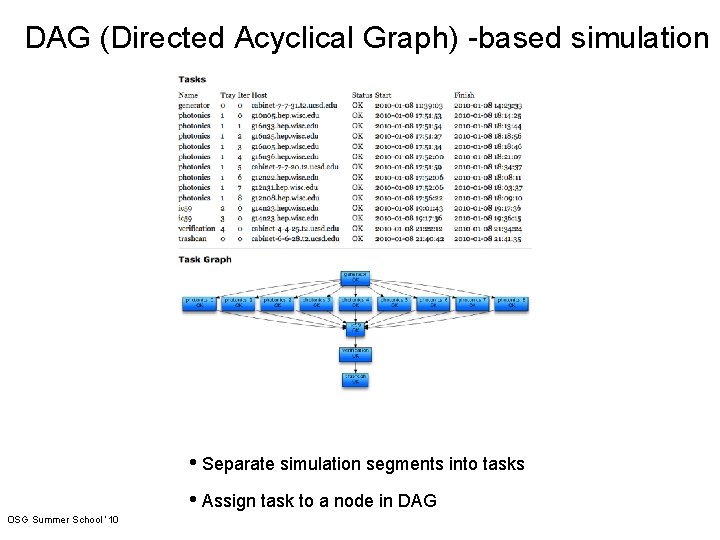

DAG (Directed Acyclic Graph)-based simulation

• Separate simulation segments into tasks
• Assign each task to a node in the DAG

OSG Summer School '10

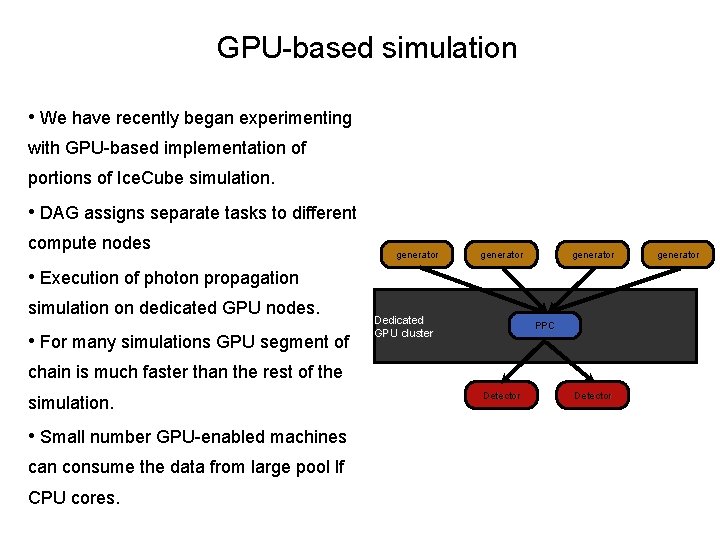

GPU-based simulation

• We have recently begun experimenting with GPU-based implementation of portions of IceCube simulation.
• DAG assigns separate tasks to different compute nodes.
• Execution of photon propagation simulation on dedicated GPU nodes.
• For many simulations the GPU segment of the chain is much faster than the rest of the simulation.
• A small number of GPU-enabled machines can consume the data from a large pool of CPU cores.

[Diagram labels: generator, PPC on dedicated GPU cluster, detector]

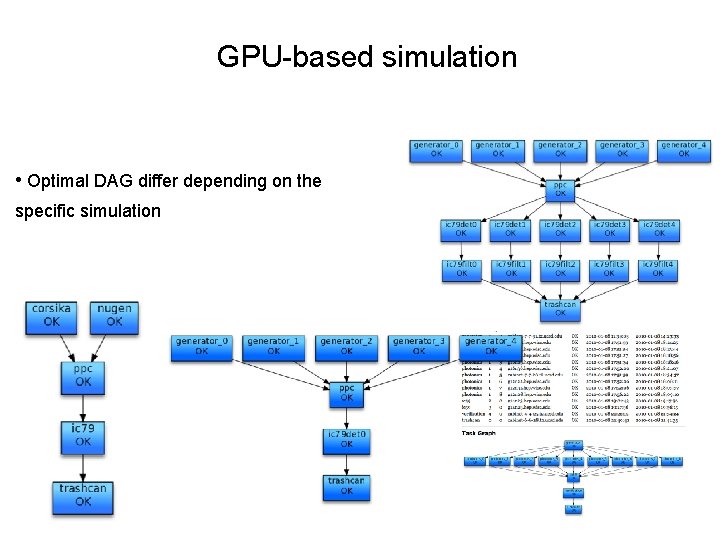

GPU-based simulation

• The optimal DAG differs depending on the specific simulation

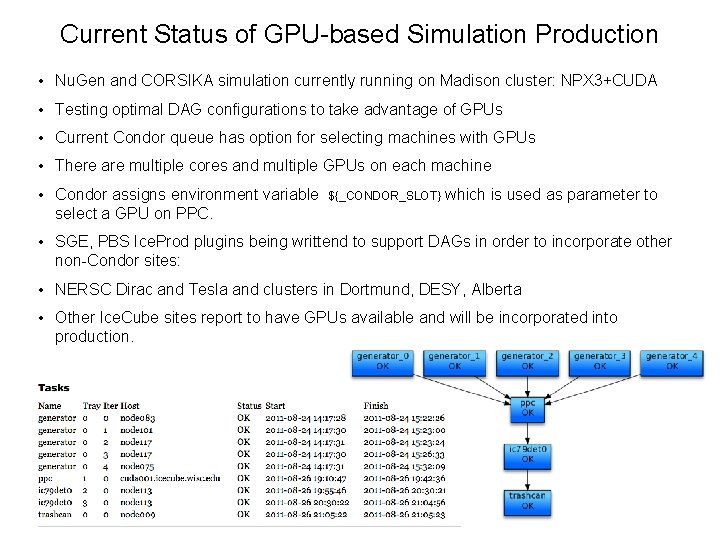

Current Status of GPU-based Simulation Production

• NuGen and CORSIKA simulation currently running on Madison cluster: NPX3+CUDA
• Testing optimal DAG configurations to take advantage of GPUs
• Current Condor queue has an option for selecting machines with GPUs
• There are multiple cores and multiple GPUs on each machine
• Condor assigns the environment variable ${_CONDOR_SLOT}, which is used as a parameter to select a GPU on PPC
• SGE, PBS IceProd plugins are being written to support DAGs in order to incorporate other non-Condor sites: NERSC Dirac and Tesla, and clusters in Dortmund, DESY, Alberta
• Other IceCube sites report having GPUs available and will be incorporated into production
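One way a job script might turn the Condor slot variable into a GPU index is sketched below. The slot name format ("slot3" or a bare number), the 1-based numbering, and the modulo mapping are all assumptions for illustration; the production scripts may do this differently.

```python
import os
import re

def gpu_from_slot(slot, n_gpus):
    """Map a slot name such as 'slot3' or '3' to a GPU index in [0, n_gpus)."""
    match = re.search(r"(\d+)", slot)
    if match is None:
        raise ValueError("no slot number found in %r" % slot)
    # Assumed: slots are numbered from 1, GPUs from 0.
    return (int(match.group(1)) - 1) % n_gpus

# Fall back to 'slot1' when running outside Condor.
slot = os.environ.get("_CONDOR_SLOT", "slot1")
gpu = gpu_from_slot(slot, n_gpus=6)
print(slot, gpu)
```

The resulting index would then be handed to ppc as its "gpu" parameter, as in the run.py example.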

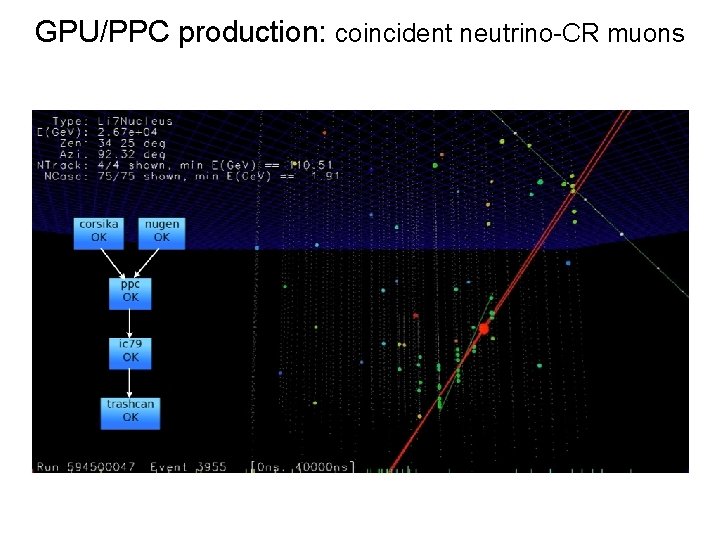

GPU/PPC production: coincident neutrino-CR muons

Our initial GPU cluster

4 computers:
• 1 cudatest
• 3 cuda nodes (cuda001-3)

Each has:
• 4-core CPU
• 3 GPU cards, each with 2 GPUs (i.e. 6 GPUs per computer)

Each computer is ~$3500

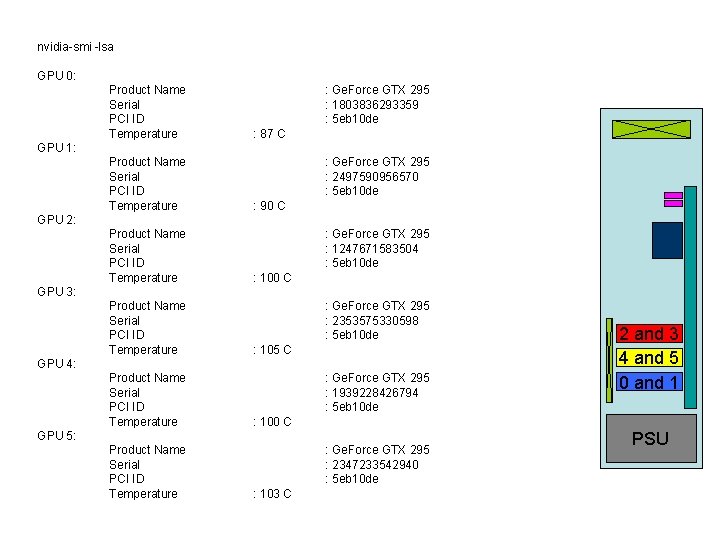

Our initial cluster

nvidia-smi -lsa

    GPU 0: Product Name: GeForce GTX 295  Serial: 1803836293359  PCI ID: 5eb10de  Temperature: 87 C
    GPU 1: Product Name: GeForce GTX 295  Serial: 2497590956570  PCI ID: 5eb10de  Temperature: 90 C
    GPU 2: Product Name: GeForce GTX 295  Serial: 1247671583504  PCI ID: 5eb10de  Temperature: 100 C
    GPU 3: Product Name: GeForce GTX 295  Serial: 2353575330598  PCI ID: 5eb10de  Temperature: 105 C
    GPU 4: Product Name: GeForce GTX 295  Serial: 1939228426794  PCI ID: 5eb10de  Temperature: 100 C
    GPU 5: Product Name: GeForce GTX 295  Serial: 2347233542940  PCI ID: 5eb10de  Temperature: 103 C

cudatest:

    Found 6 devices, driver 2030, runtime 2030
    0(1.3): GeForce GTX 295 1.296 GHz G(939261952) S(16384) C(65536) R(16384) W(32)
            l1 o1 c0 h1 i0 m30 a256 M(262144) T(512: 512, 64) G(65535, 1)
    1(1.3): GeForce GTX 295 1.296 GHz G(939261952) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(262144) T(512: 512, 64) G(65535, 1)
    2(1.3): GeForce GTX 295 1.296 GHz G(939261952) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(262144) T(512: 512, 64) G(65535, 1)
    3(1.3): GeForce GTX 295 1.296 GHz G(938803200) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(262144) T(512: 512, 64) G(65535, 1)
    4(1.3): GeForce GTX 295 1.296 GHz G(939261952) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(262144) T(512: 512, 64) G(65535, 1)
    5(1.3): GeForce GTX 295 1.296 GHz G(939261952) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(262144) T(512: 512, 64) G(65535, 1)

[Photo: 3 GTX 295 cards, each with 2 GPUs; labels: 2 and 3, 4 and 5, 0 and 1, PSU]

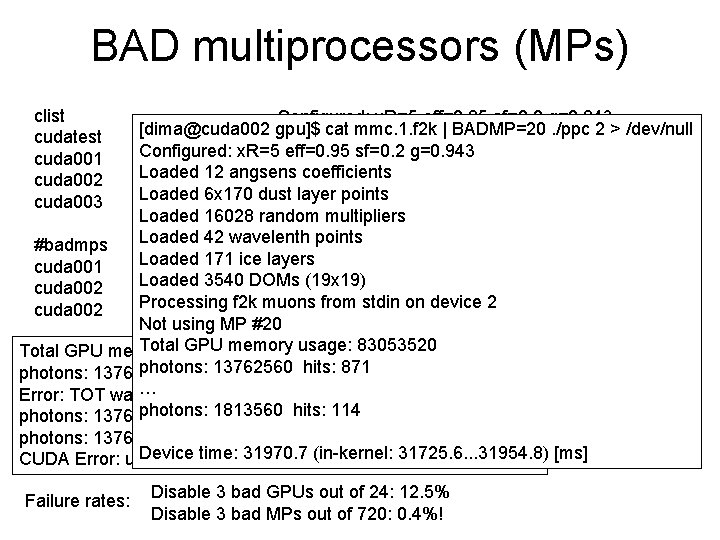

BAD multiprocessors (MPs)

[Diagram: clist for cudatest, cuda001, cuda002, cuda003, each with devices 0 1 2 3 4 5]

#badmps (host, device, MP):

    cuda001 3 22
    cuda002 2 20
    cuda002 4 10

Run on a device with a bad MP:

    Configured: xR=5 eff=0.95 sf=0.2 g=0.943
    Loaded 12 angsens coefficients
    Loaded 6x170 dust layer points
    Loaded 16028 random multipliers
    Loaded 42 wavelenth points
    Loaded 171 ice layers
    Loaded 3540 DOMs (19x19)
    Processing f2k muons from stdin on device 2
    Total GPU memory usage: 83053520
    photons: 13762560 hits: …
    Error: TOT was a nan or an inf 1 times! Bad MP #20
    photons: 13762560 hits: …
    …
    Error: TOT was a nan or an inf 9 times! Bad MP #20 #20 #20
    photons: 13762560 hits: …
    CUDA Error: unspecified launch failure

Run with the bad MP disabled:

    [dima@cuda002 gpu]$ cat mmc.1.f2k | BADMP=20 ./ppc 2 > /dev/null
    Configured: xR=5 eff=0.95 sf=0.2 g=0.943
    Loaded 12 angsens coefficients
    Loaded 6x170 dust layer points
    Loaded 16028 random multipliers
    Loaded 42 wavelenth points
    Loaded 171 ice layers
    Loaded 3540 DOMs (19x19)
    Processing f2k muons from stdin on device 2
    Not using MP #20
    Total GPU memory usage: 83053520
    photons: 13762560 hits: …
    …
    photons: 1813560 hits: 114
    Device time: 31970.7 (in-kernel: 31725.6...31954.8) [ms]

Hit counts per full call printed across the two listings: 991, 393, 871, 938, 570, 501, 442, 832, 627, 717.

Failure rates:
• Disable 3 bad GPUs out of 24: 12.5%
• Disable 3 bad MPs out of 720: 0.4%!
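The two failure rates compare the capacity lost when a whole GPU is disabled with the loss when only the faulty multiprocessor is skipped. The totals below come from the cluster description (4 computers, 6 GPUs each, 30 MPs per GTX 295 GPU); the variable names are illustrative.

```python
computers = 4
gpus_per_computer = 6
mps_per_gpu = 30
bad_units = 3  # three bad MPs, each on a different GPU

total_gpus = computers * gpus_per_computer  # 24
total_mps = total_gpus * mps_per_gpu        # 720

loss_disabling_gpus = 100.0 * bad_units / total_gpus
loss_disabling_mps = 100.0 * bad_units / total_mps
print(loss_disabling_gpus)           # 12.5
print(round(loss_disabling_mps, 1))  # 0.4
```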

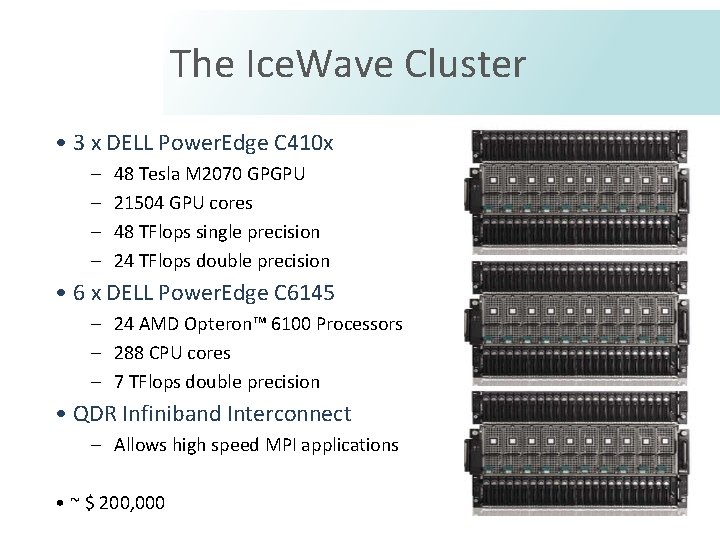

The IceWave Cluster

• 3 x DELL PowerEdge C410x
  – 48 Tesla M2070 GPGPU
  – 21504 GPU cores
  – 48 TFlops single precision
  – 24 TFlops double precision
• 6 x DELL PowerEdge C6145
  – 24 AMD Opteron™ 6100 Processors
  – 288 CPU cores
  – 7 TFlops double precision
• QDR Infiniband Interconnect
  – Allows high speed MPI applications
• ~$200,000

Basic Elements

• DELL PowerEdge C410x
  – 16 PCIe Expansion chassis
  – Use of C2050 TESLA GPUs
  – Flexible assignment of GPUs to servers
  – Allows 1-4 GPUs per server

Basic Elements

• DELL PowerEdge C6145
  – 2 x 4 CPU Servers
  – AMD Opteron™ 6100 (Magny-Cours)
  – 12 cores per processor
  – 48-96 cores per 2U server
  – 192 GB per 2U server

Concluding remarks

• PPC (photon propagation code) is used by IceCube for photon tracking
• Precise treatment of the photon propagation, includes all known effects (longitudinal development of particle cascades, ice tilt, etc.)
• PPC can be run on CPUs or GPUs; running on a GPU is hundreds of times faster than on a CPU core
• We use DAG to run PPC routinely on GPUs for mass production of simulated data
• GPU computers can be assembled with NVidia or AMD video cards; however, some problems exist in consumer video cards:
  – bad MPs can be worked around in PPC
  – computing-grade hardware can be used instead

Extra slides

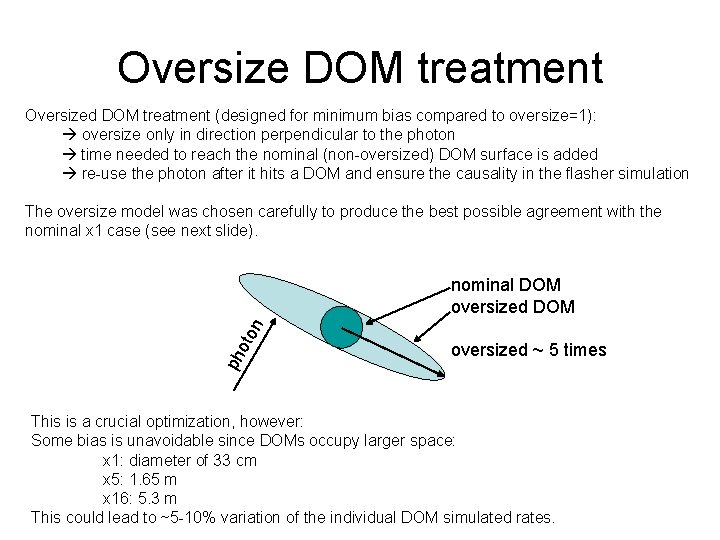

Oversize DOM treatment

Oversized DOM treatment (designed for minimum bias compared to oversize=1):
• oversize only in direction perpendicular to the photon
• time needed to reach the nominal (non-oversized) DOM surface is added
• re-use the photon after it hits a DOM and ensure the causality in the flasher simulation

The oversize model was chosen carefully to produce the best possible agreement with the nominal x1 case (see next slide).

[Diagram: photon, nominal DOM, DOM oversized ~5 times]

This is a crucial optimization; however, some bias is unavoidable since DOMs occupy larger space:
• x1: diameter of 33 cm
• x5: 1.65 m
• x16: 5.3 m

This could lead to ~5-10% variation of the individual DOM simulated rates.
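The diameters quoted on this slide scale linearly with the oversize factor. Because the DOM is enlarged only perpendicular to the photon direction, the sketch below also assumes the effective cross-section grows with the square of the factor, which would match the division by 25 in the run.py flasher example. Function names are illustrative.

```python
NOMINAL_DIAMETER_M = 0.33  # x1 DOM diameter from the slide

def oversized_diameter(factor):
    """Diameter in meters of a DOM scaled by the oversize factor."""
    return NOMINAL_DIAMETER_M * factor

def cross_section_gain(factor):
    """Assumed gain in effective area: both transverse dimensions scale."""
    return factor ** 2

for factor in (1, 5, 16):
    print(factor, round(oversized_diameter(factor), 2), cross_section_gain(factor))
```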

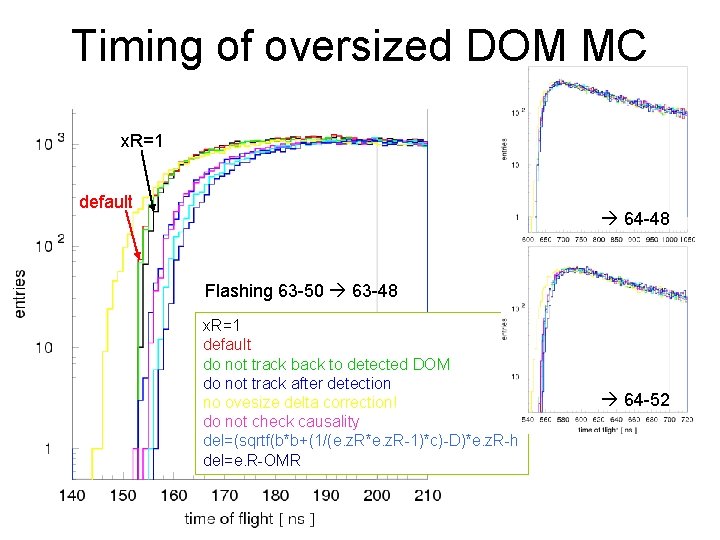

Timing of oversized DOM MC

[Plots: flashing 63-50; panels for DOMs 64-48, 63-48, 64-52; curves: xR=1, default, do not track back to detected DOM, do not track after detection, no oversize delta correction!, do not check causality]

    del=(sqrtf(b*b+(1/(e.zR*e.zR-1)*c)-D)*e.zR-h
    del=e.R-OMR

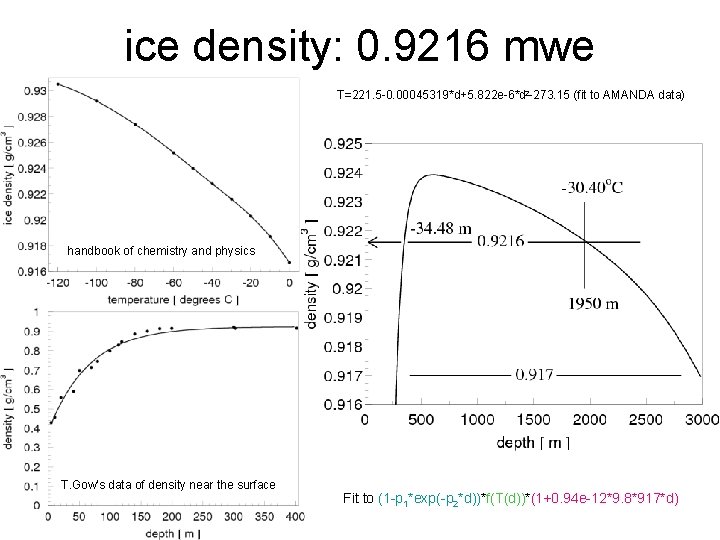

ice density: 0.9216 mwe

• T = 221.5 - 0.00045319*d + 5.822e-6*d^2 - 273.15 (fit to AMANDA data)
• handbook of chemistry and physics
• T. Gow's data of density near the surface
• Fit to (1-p1*exp(-p2*d))*f(T(d))*(1+0.94e-12*9.8*917*d)
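The temperature fit on this slide can be evaluated directly. The sketch assumes d is the depth in meters and that subtracting 273.15 converts the result from kelvin to degrees Celsius; the density fit is left out because p1, p2 and f are not given.

```python
def ice_temperature_celsius(depth_m):
    """Ice temperature from the quoted fit to AMANDA data (depth assumed in meters)."""
    return 221.5 - 0.00045319 * depth_m + 5.822e-6 * depth_m ** 2 - 273.15

for depth in (0.0, 1000.0, 2000.0):
    print(depth, round(ice_temperature_celsius(depth), 2))
```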

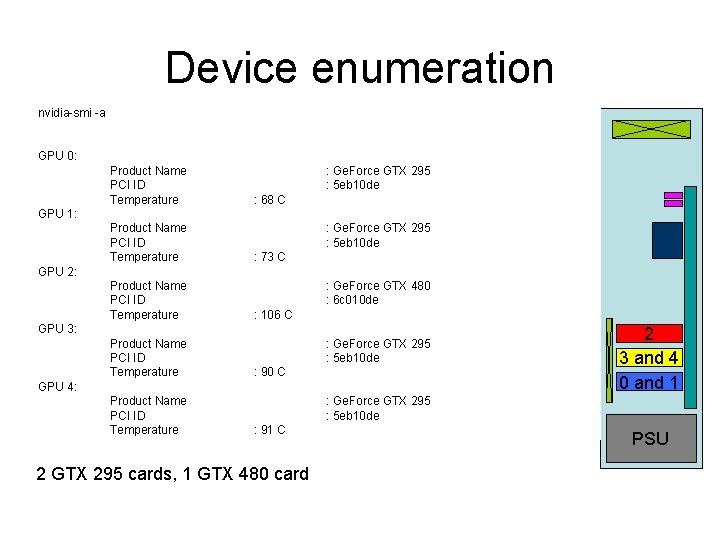

Device enumeration

nvidia-smi -a

    GPU 0: Product Name: GeForce GTX 295  PCI ID: 5eb10de  Temperature: 68 C
    GPU 1: Product Name: GeForce GTX 295  PCI ID: 5eb10de  Temperature: 73 C
    GPU 2: Product Name: GeForce GTX 480  PCI ID: 6c010de  Temperature: 106 C
    GPU 3: Product Name: GeForce GTX 295  PCI ID: 5eb10de  Temperature: 90 C
    GPU 4: Product Name: GeForce GTX 295  PCI ID: 5eb10de  Temperature: 91 C

cuda002:

    Found 5 devices, driver 3010, runtime 3010
    0(2.0): GeForce GTX 480 1.401 GHz G(1610285056) S(49152) C(65536) R(32768) W(32)
            l0 o1 c0 h1 i0 m15 a512 M(2147483647) T(1024: 1024, 64) G(65535, 1)
    1(1.3): GeForce GTX 295 1.242 GHz G(938803200) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(2147483647) T(512: 512, 64) G(65535, 1)
    2(1.3): GeForce GTX 295 1.242 GHz G(939327488) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(2147483647) T(512: 512, 64) G(65535, 1)
    3(1.3): GeForce GTX 295 1.242 GHz G(939327488) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(2147483647) T(512: 512, 64) G(65535, 1)
    4(1.3): GeForce GTX 295 1.242 GHz G(939327488) S(16384) C(65536) R(16384) W(32)
            l0 o1 c0 h1 i0 m30 a256 M(2147483647) T(512: 512, 64) G(65535, 1)

[Photo: 2 GTX 295 cards, 1 GTX 480 card, PSU; each card is labeled with its device numbers in both enumerations]

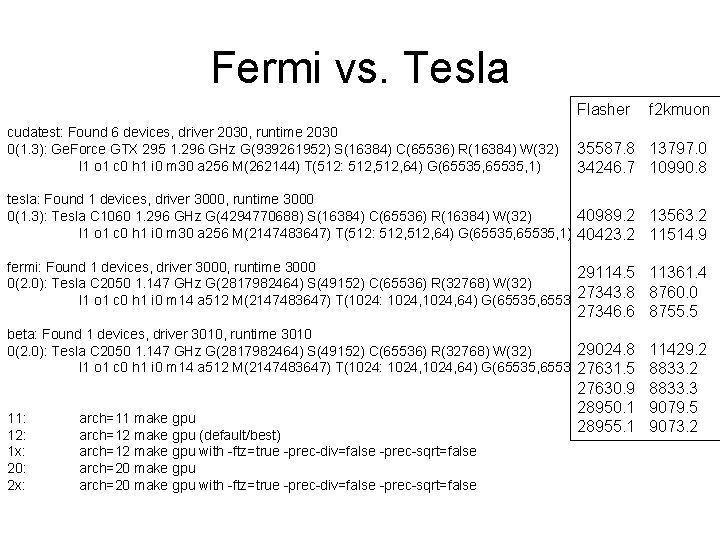

Fermi vs. Tesla

cudatest:

    Found 6 devices, driver 2030, runtime 2030
    0(1.3): GeForce GTX 295 1.296 GHz G(939261952) S(16384) C(65536) R(16384) W(32)
            l1 o1 c0 h1 i0 m30 a256 M(262144) T(512: 512, 64) G(65535, 1)

tesla:

    Found 1 devices, driver 3000, runtime 3000
    0(1.3): Tesla C1060 1.296 GHz G(4294770688) S(16384) C(65536) R(16384) W(32)
            l1 o1 c0 h1 i0 m30 a256 M(2147483647) T(512: 512, 64) G(65535, 1)

fermi:

    Found 1 devices, driver 3000, runtime 3000
    0(2.0): Tesla C2050 1.147 GHz G(2817982464) S(49152) C(65536) R(32768) W(32)
            l1 o1 c0 h1 i0 m14 a512 M(2147483647) T(1024: 1024, 64) G(65535, 1)

beta:

    Found 1 devices, driver 3010, runtime 3010
    0(2.0): Tesla C2050 1.147 GHz G(2817982464) S(49152) C(65536) R(32768) W(32)
            l1 o1 c0 h1 i0 m14 a512 M(2147483647) T(1024: 1024, 64) G(65535, 1)

| Machine | Build | Flasher | f2kmuon |
|---|---|---|---|
| cudatest | 11 | 35587.8 | 13797.0 |
| cudatest | 12 | 34246.7 | 10990.8 |
| tesla | 11 | 40989.2 | 13563.2 |
| tesla | 12 | 40423.2 | 11514.9 |
| fermi | 11 | 29114.5 | 11361.4 |
| fermi | 12 | 27343.8 | 8760.0 |
| fermi | 1x | 27346.6 | 8755.5 |
| beta | 11 | 29024.8 | 11429.2 |
| beta | 12 | 27631.5 | 8833.2 |
| beta | 1x | 27630.9 | 8833.3 |
| beta | 20 | 28950.1 | 9079.5 |
| beta | 2x | 28955.1 | 9073.2 |

Builds (labels 11, 12, 1x, 20, 2x):
• arch=11 make gpu
• arch=12 make gpu (default/best)
• arch=12 make gpu with -ftz=true -prec-div=false -prec-sqrt=false
• arch=20 make gpu with -ftz=true -prec-div=false -prec-sqrt=false

Other

Consider:
• building production computers with only 2 cards, leaving a space in between
• using 6-core CPUs if paired with 3 GPU cards
• 4-way Tesla GPU-only servers: a possible solution
• consumer GTX cards are much faster than Tesla/Fermi cards
• GTX 295 was so far found to be a better choice than GTX 480, but: no longer available!

Reliability:
• 0.4% loss of advertised capacity in GTX 295 cards
• however: 2 of 3 affected cards were "refurbished"
• do cards deteriorate over time? The failed MPs did not change in ~3 months
