Hypothesis Tests in Depth: The Subtleties of Testing

Steps to solve a hypothesis test
1) State your hypotheses & significance level
   ◦ Null (equality) and Alternative (<, >, ≠)
   ◦ α: typically given
2) Calculate the appropriate test statistic
   ◦ Choose the correct type based on assumptions
   ◦ Calculate with your sample data
3) Make a decision
   ◦ CV or P-val methods
4) Draw conclusions and interpret results.

Questions?
• Will your P-val and CV methods always lead you to the same conclusion?
• Which is preferred?
  ◦ Statistical Significance vs. Practical Significance
  ◦ ASA Statement on P-values
• What can go wrong?
  ◦ Types of errors
  ◦ Quantifying error

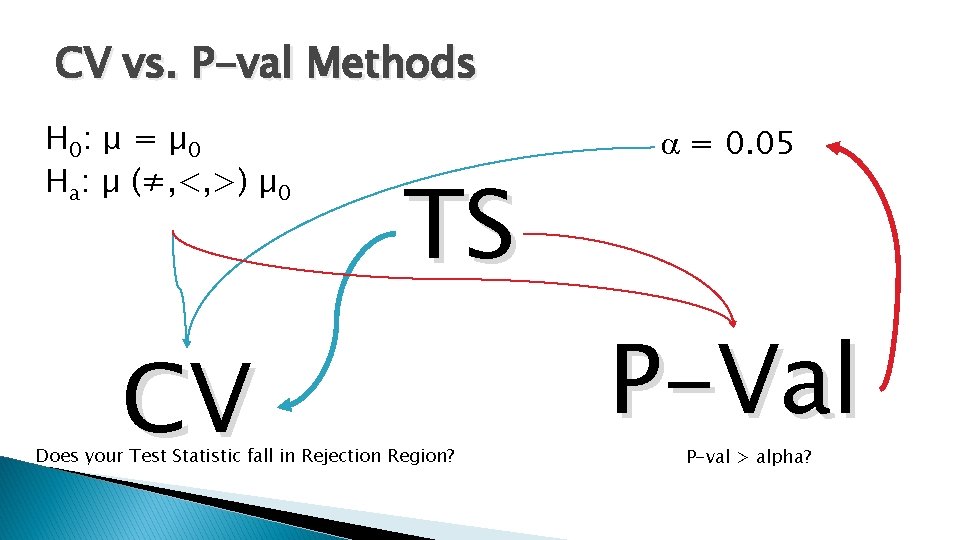

CV vs. P-val Methods
H0: μ = μ0
Ha: μ (≠, <, >) μ0
α = 0.05
• CV: Does your test statistic (TS) fall in the rejection region?
• P-val: Compare the P-val to α (reject H0 when P-val < α; FTR when P-val > α).

CV vs. P-val Methods
• They are essentially comparing the same thing.
• The p-value method is preferred in practice because it comes with a measure of how strongly the data support rejection.
• P-values are useful when interpreted correctly and the test is set up properly; when used improperly, however, they can be misleading.
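A minimal sketch of why the two methods agree, assuming an upper-tailed one-sample z-test with known σ. The function name and all numbers (μ0 = 50, σ = 3, n = 10, the sample means) are hypothetical and chosen only for illustration.

```python
from statistics import NormalDist


def z_test_upper(xbar, mu0, sigma, n, alpha=0.05):
    """Upper-tailed one-sample z-test, decided by both methods."""
    std_normal = NormalDist()
    z = (xbar - mu0) / (sigma / n ** 0.5)           # test statistic
    critical_value = std_normal.inv_cdf(1 - alpha)  # edge of rejection region
    p_value = 1 - std_normal.cdf(z)                 # area to the right of z
    reject_by_cv = z > critical_value               # CV method
    reject_by_p = p_value < alpha                   # P-val method
    return z, critical_value, p_value, reject_by_cv, reject_by_p


# Hypothetical data: H0: mu = 50 vs. Ha: mu > 50
for xbar in (50.5, 51.0, 52.0):
    z, cv, p, by_cv, by_p = z_test_upper(xbar, mu0=50, sigma=3, n=10)
    print(f"xbar={xbar}: z={z:.3f}, CV={cv:.3f}, P-val={p:.4f}, "
          f"reject by CV={by_cv}, reject by P-val={by_p}")
```

The test statistic lands in the rejection region exactly when the tail area beyond it is smaller than α, so the two decisions match for every sample mean.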

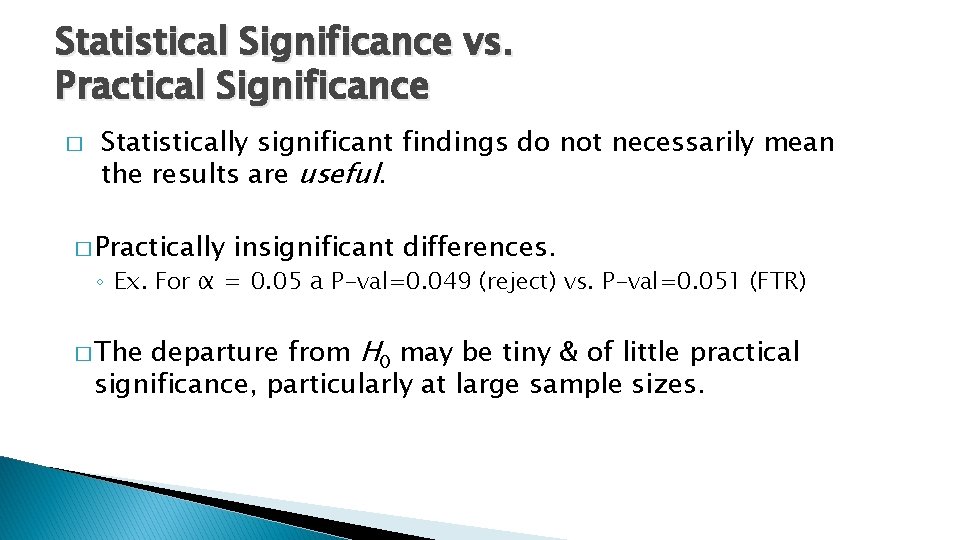

Statistical Significance vs. Practical Significance
• Statistically significant findings do not necessarily mean the results are useful.
• Practically insignificant differences.
  ◦ Ex. For α = 0.05, a P-val = 0.049 (reject) vs. P-val = 0.051 (FTR)
• The departure from H0 may be tiny & of little practical significance, particularly at large sample sizes.
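A short sketch of the large-sample effect, assuming a two-sided one-sample z-test with known σ. The observed mean of 50.1 against μ0 = 50 with σ = 3 is a made-up example of a departure that is practically negligible.

```python
from statistics import NormalDist


def two_sided_p_value(xbar, mu0, sigma, n):
    """Two-sided P-val for a one-sample z-test with known sigma."""
    z = (xbar - mu0) / (sigma / n ** 0.5)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical: the same tiny departure (0.1) observed at growing sample sizes
for n in (10, 100, 1_000, 10_000):
    p = two_sided_p_value(xbar=50.1, mu0=50, sigma=3, n=n)
    print(f"n={n:>6}: P-val={p:.4f}")
```

The departure from H0 is the same in every row; only n changes. At a large enough sample size the P-val drops below 0.05 even though the difference itself has not become any more useful.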

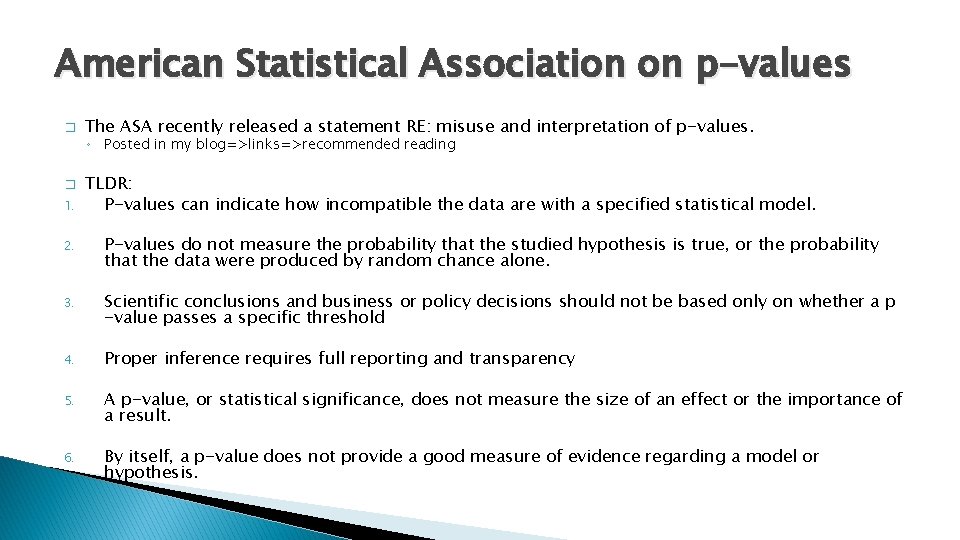

American Statistical Association on p-values
• The ASA recently released a statement RE: misuse and interpretation of p-values.
  ◦ Posted in my blog=>links=>recommended reading
• TLDR:
  1. P-values can indicate how incompatible the data are with a specified statistical model.
  2. P-values do not measure the probability that the studied hypothesis is true, or the probability that the data were produced by random chance alone.
  3. Scientific conclusions and business or policy decisions should not be based only on whether a p-value passes a specific threshold.
  4. Proper inference requires full reporting and transparency.
  5. A p-value, or statistical significance, does not measure the size of an effect or the importance of a result.
  6. By itself, a p-value does not provide a good measure of evidence regarding a model or hypothesis.

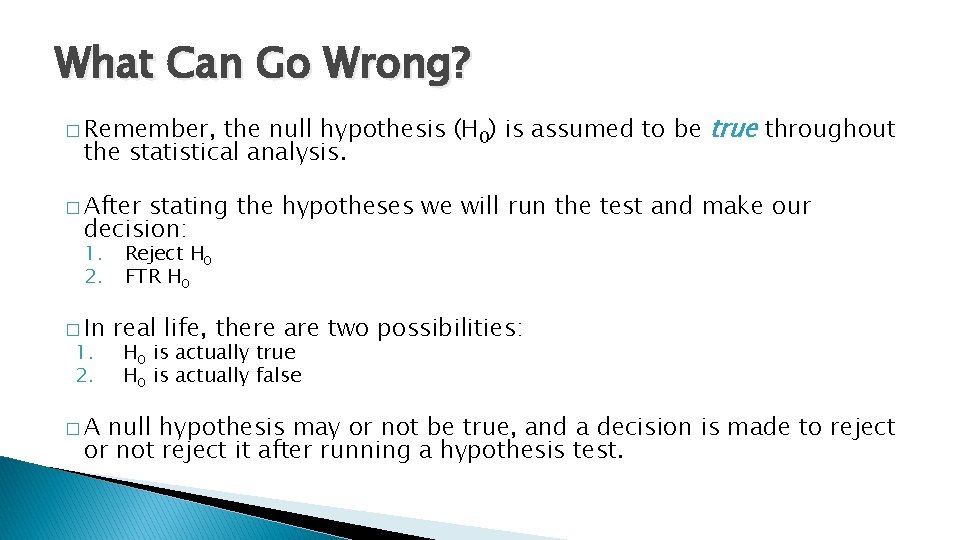

What Can Go Wrong?
• Remember, the null hypothesis (H0) is assumed to be true throughout the statistical analysis.
• After stating the hypotheses we will run the test and make our decision:
  1. Reject H0
  2. FTR H0
• In real life, there are two possibilities:
  1. H0 is actually true
  2. H0 is actually false
• A null hypothesis may or may not be true, and a decision is made to reject or not reject it after running a hypothesis test.

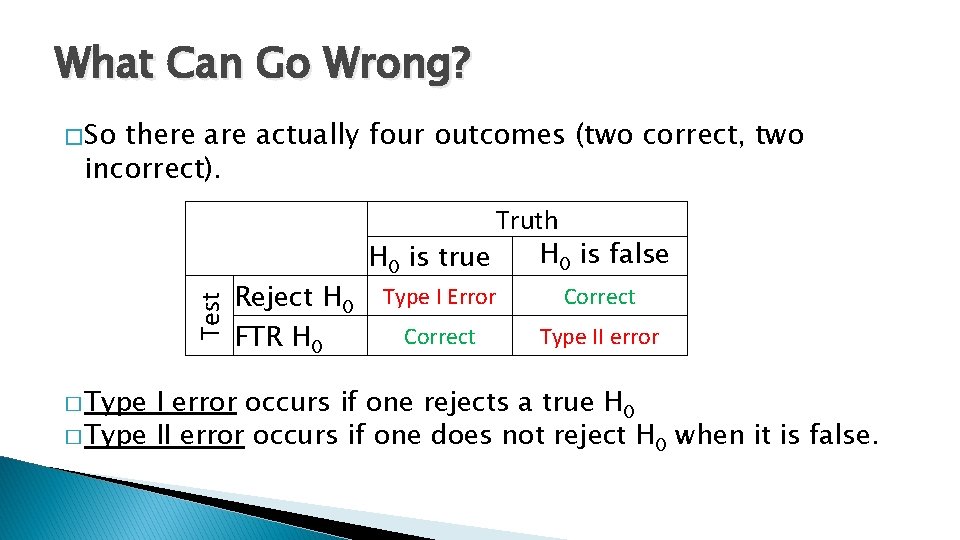

What Can Go Wrong?
• So there are actually four outcomes (two correct, two incorrect).

| Test decision | Truth: H0 is false | Truth: H0 is true |
|---|---|---|
| Reject H0 | Correct | Type I error |
| FTR H0 | Type II error | Correct |

• Type I error occurs if one rejects a true H0.
• Type II error occurs if one does not reject H0 when it is false.

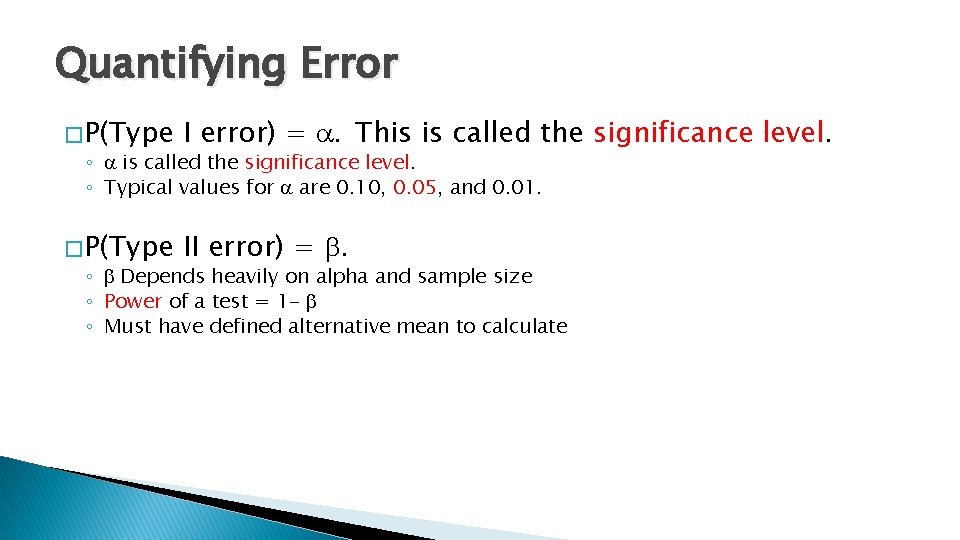

Quantifying Error
• P(Type I error) = α.
  ◦ α is called the significance level.
  ◦ Typical values for α are 0.10, 0.05, and 0.01.
• P(Type II error) = β.
  ◦ β depends heavily on α and sample size.
  ◦ Power of a test = 1 − β
  ◦ Must have a defined alternative mean to calculate β (and therefore power).
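As one concrete case, here is β written out for an upper-tailed one-sample z-test. This assumes σ is known and the alternative mean satisfies μa > μ0; Φ is the standard normal CDF and z_α is the upper-α critical value. Other test types would need a different expression.

```latex
\beta = P(\text{FTR } H_0 \mid \mu = \mu_a)
      = \Phi\!\left( z_{\alpha} - \frac{\mu_a - \mu_0}{\sigma / \sqrt{n}} \right),
\qquad
\text{Power} = 1 - \beta
```

The formula shows all three dependencies at once: a smaller α raises z_α and so raises β, a larger n or a larger gap μa − μ0 lowers β, and without a specific μa the expression cannot be evaluated.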

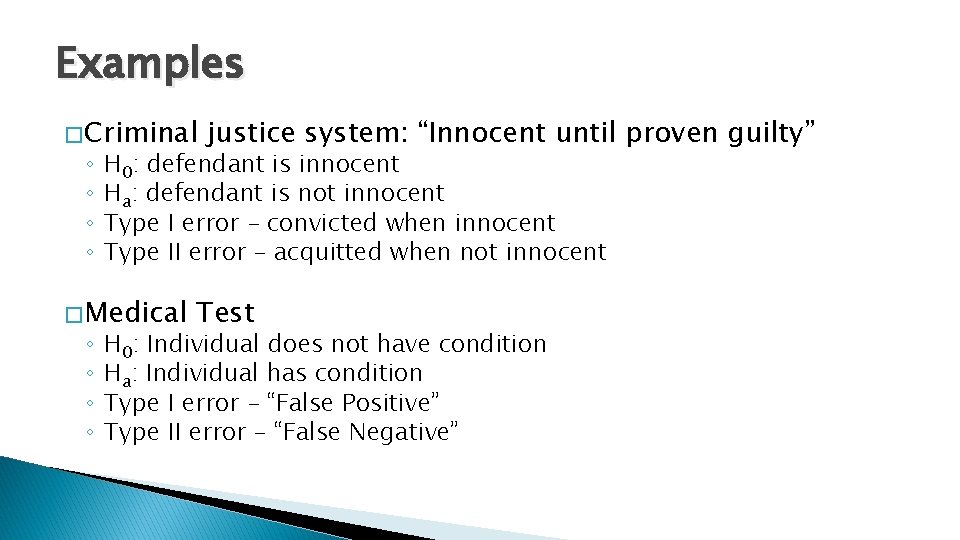

Examples
• Criminal justice system: “Innocent until proven guilty”
  ◦ H0: defendant is innocent
  ◦ Ha: defendant is not innocent
  ◦ Type I error – convicted when innocent
  ◦ Type II error – acquitted when not innocent
• Medical Test
  ◦ H0: Individual does not have condition
  ◦ Ha: Individual has condition
  ◦ Type I error – “False Positive”
  ◦ Type II error – “False Negative”

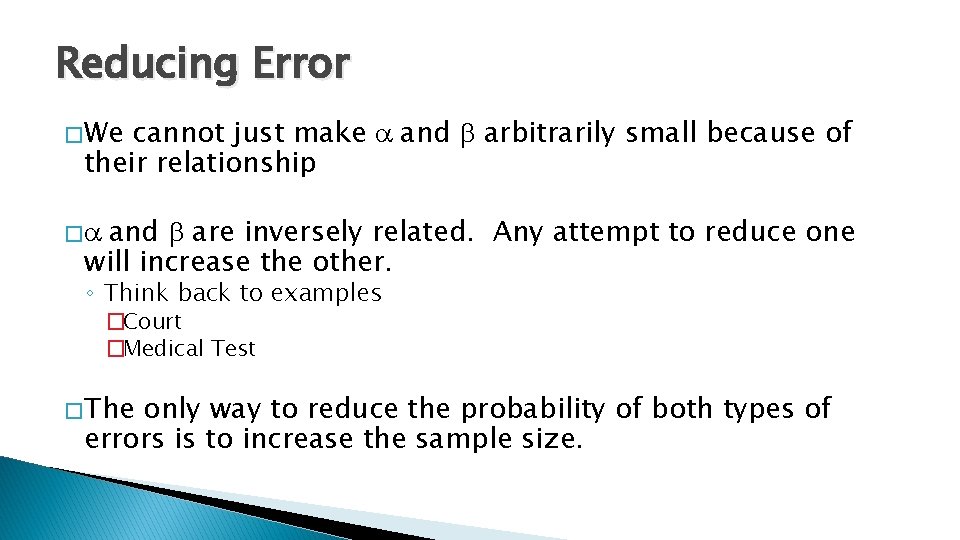

Reducing Error
• We cannot just make α and β arbitrarily small because of their relationship.
• α and β are inversely related. For a fixed sample size, any attempt to reduce one will increase the other.
  ◦ Think back to examples: Court, Medical Test
• The only way to reduce the probability of both types of errors is to increase the sample size.
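A rough sketch of the trade-off, assuming an upper-tailed one-sample z-test with known σ. The helper name and the setup (μ0 = 50, μa = 52, σ = 3) are hypothetical.

```python
from statistics import NormalDist


def beta_upper_tailed(mu0, mu_a, sigma, n, alpha):
    """P(Type II error) for an upper-tailed z-test when the true mean is mu_a."""
    z_alpha = NormalDist().inv_cdf(1 - alpha)  # critical value
    shift = (mu_a - mu0) / (sigma / n ** 0.5)  # standardized distance to mu_a
    return NormalDist().cdf(z_alpha - shift)


# Hypothetical setup: mu0 = 50, mu_a = 52, sigma = 3
for n in (10, 40):
    for alpha in (0.10, 0.05, 0.01):
        b = beta_upper_tailed(50, 52, 3, n, alpha)
        print(f"n={n:>2}, alpha={alpha:.2f}: beta={b:.4f}")
```

Within each sample size, shrinking α pushes β up. Moving from n = 10 to n = 40 lowers β at every α, which is the only lever that improves both error rates.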

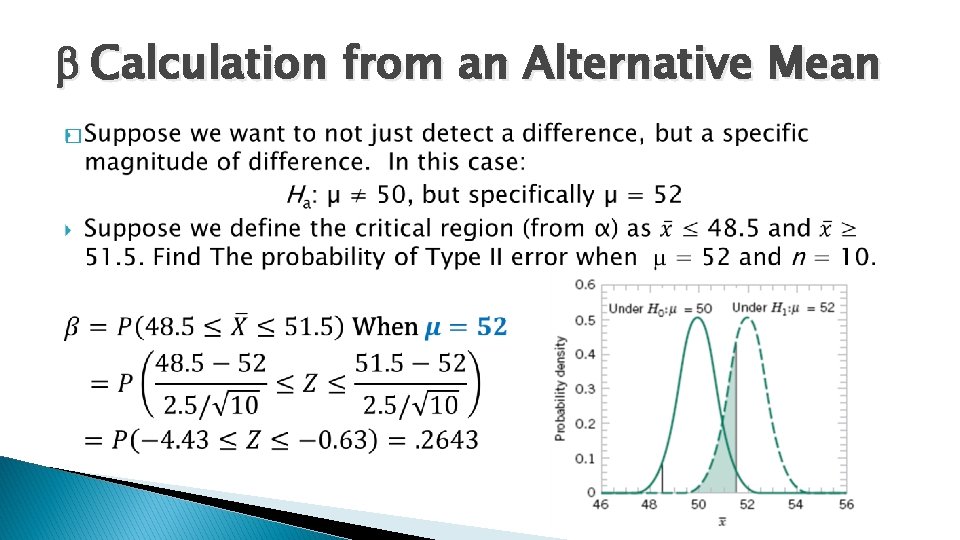

β Calculation from an Alternative Mean

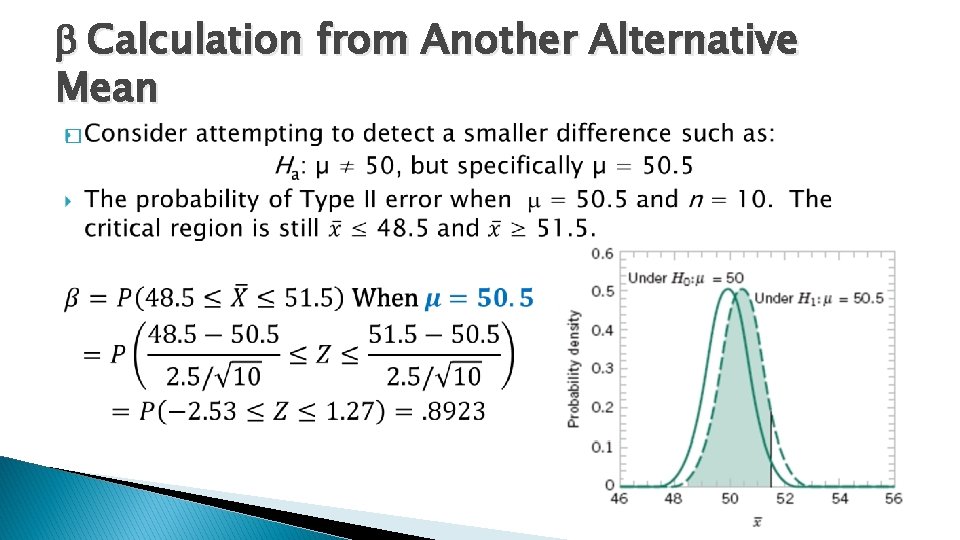

β Calculation from Another Alternative Mean

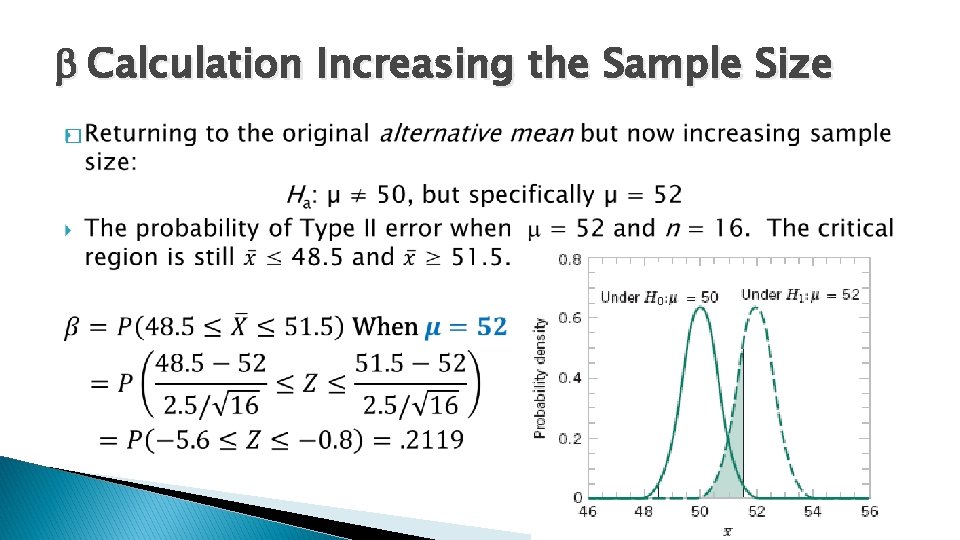

β Calculation Increasing the Sample Size

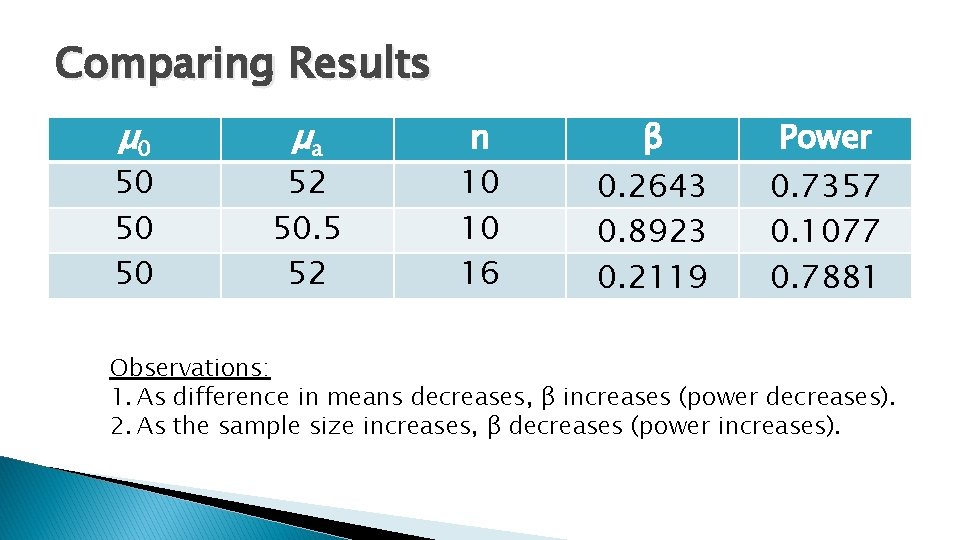

Comparing Results

| μ0 | μa | n | β | Power |
|---|---|---|---|---|
| 50 | 52 | 10 | 0.2643 | 0.7357 |
| 50 | 50.5 | 10 | 0.8923 | 0.1077 |
| 50 | 52 | 16 | 0.2119 | 0.7881 |

Observations:
1. As the difference in means decreases, β increases (power decreases).
2. As the sample size increases, β decreases (power increases).
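The two observations can be checked with a small sketch, assuming an upper-tailed one-sample z-test at α = 0.05. The value σ = 3 is a hypothetical choice, since the σ and α behind the slide's numbers are not stated in the text, so the printed values are not expected to match the β and power figures above. Only the direction of the changes is the point.

```python
from statistics import NormalDist


def power_upper_tailed(mu0, mu_a, sigma, n, alpha=0.05):
    """Power (1 - beta) of an upper-tailed z-test when the true mean is mu_a."""
    z_alpha = NormalDist().inv_cdf(1 - alpha)
    beta = NormalDist().cdf(z_alpha - (mu_a - mu0) / (sigma / n ** 0.5))
    return 1 - beta


# Same (mu0, mu_a, n) scenarios as the slide; sigma = 3 is an assumption
scenarios = [(50, 52, 10), (50, 50.5, 10), (50, 52, 16)]
for mu0, mu_a, n in scenarios:
    pw = power_upper_tailed(mu0, mu_a, sigma=3, n=n)
    print(f"mu0={mu0}, mu_a={mu_a}, n={n}: beta={1 - pw:.4f}, power={pw:.4f}")
```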

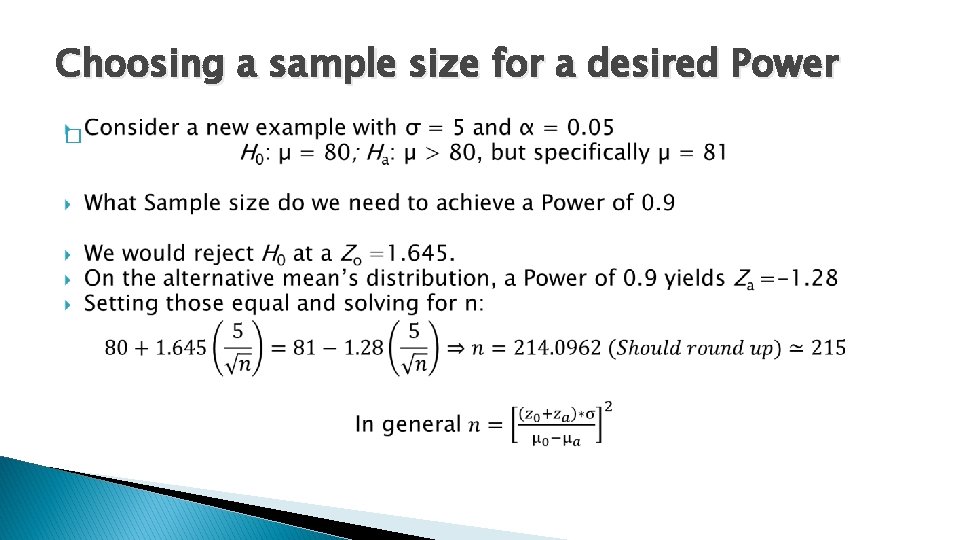

Choosing a sample size for a desired Power
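One common way to do this, sketched under the assumption of a one-sided one-sample z-test with known σ, is to solve the power equation for n: n = ((z_α + z_β)·σ / |μa − μ0|)², rounded up. The function name and the example values (μ0 = 50, μa = 52, σ = 3, power 0.80) are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def sample_size_for_power(mu0, mu_a, sigma, alpha=0.05, power=0.80):
    """Smallest n giving at least the desired power for a one-sided z-test."""
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha)  # critical value for the test
    z_beta = std_normal.inv_cdf(power)       # quantile matching beta = 1 - power
    n_exact = ((z_alpha + z_beta) * sigma / abs(mu_a - mu0)) ** 2
    return ceil(n_exact)                     # round up to a whole observation


# Hypothetical: detect a shift from 50 to 52 with sigma = 3
n_needed = sample_size_for_power(mu0=50, mu_a=52, sigma=3, alpha=0.05, power=0.80)
print(f"n needed: {n_needed}")
```

Rounding up rather than to the nearest integer keeps the achieved power at or above the target.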
