
Hyperparameter estimation for Bayesian positive system identification via the EM algorithm

Zheng Man (D2)
2019.7.1

Outline
1. Introduction
2. Previous results about positive system identification
3. EM algorithm
4. Hyperparameter estimation via the EM algorithm
5. Simulations
6. Conclusion and future work

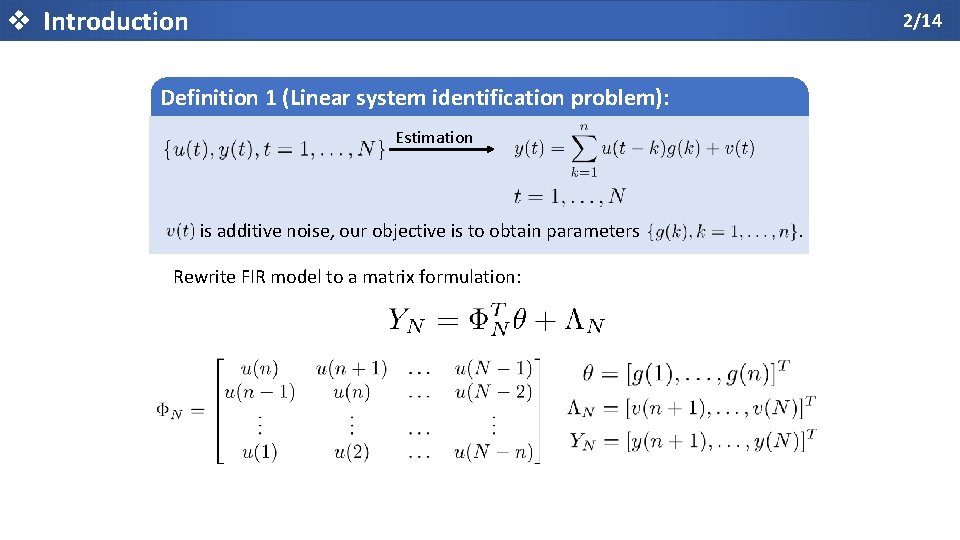

Introduction (2/14)

Definition 1 (Linear system identification problem): Given input-output data generated by an FIR model whose output is corrupted by additive noise, the objective is to obtain the model parameters.

For estimation, the FIR model is rewritten in matrix form.
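As a rough illustration of the matrix formulation, the sketch below builds a regressor matrix for an FIR model and checks it against direct convolution. The names `fir_output`, `fir_regressor`, `u`, `g`, the example values, and the zero initial condition are all assumptions made for illustration; the slide's own notation is not reproduced here.

```python
def fir_output(u, g):
    """Noise-free FIR output y[t] = sum_k g[k] * u[t - k], with u[t] = 0 for t < 0."""
    return [sum(g[k] * u[t - k] for k in range(len(g)) if t - k >= 0)
            for t in range(len(u))]

def fir_regressor(u, n):
    """Regressor matrix Phi (list of rows) with Phi[t][k] = u[t - k], zero when t < k."""
    return [[u[t - k] if t - k >= 0 else 0.0 for k in range(n)]
            for t in range(len(u))]

def matvec(Phi, theta):
    """Plain matrix-vector product, so that y = Phi * theta."""
    return [sum(p * th for p, th in zip(row, theta)) for row in Phi]

u = [1.0, 0.5, -0.3, 0.8, 0.0, 1.2]   # hypothetical input sequence
g = [0.6, 0.3, 0.1]                   # hypothetical nonnegative impulse response
y = matvec(fir_regressor(u, len(g)), g)
```

Writing the model as a matrix-vector product makes the output linear in the parameters, which is what the Bayesian estimation steps on the following slides rely on.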

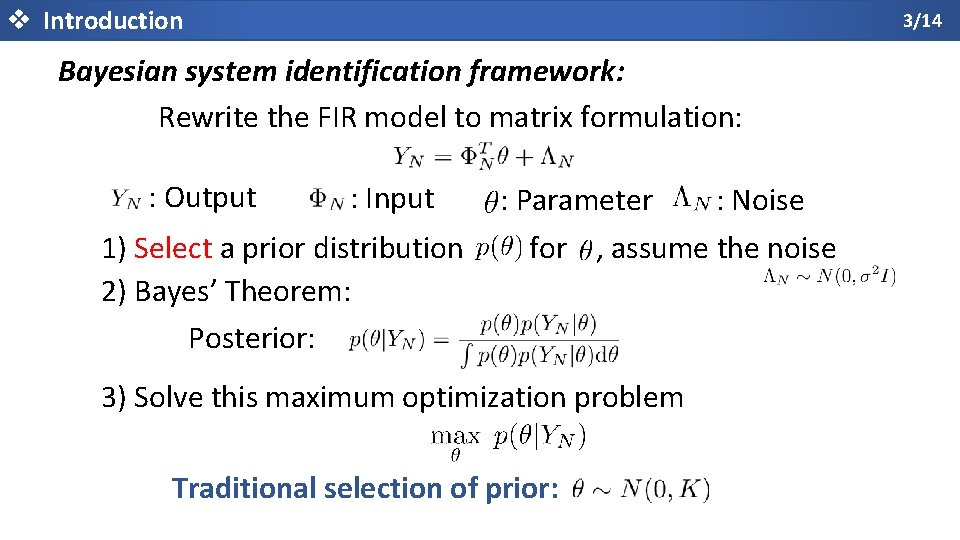

Introduction (3/14)

Bayesian system identification framework: rewrite the FIR model in matrix form, relating the output to the input through the parameter and the noise, with a distributional assumption on the noise.

1) Select a prior distribution for the parameter.
2) Apply Bayes' theorem to obtain the posterior.
3) Solve the resulting maximization problem.

Traditional selection of prior:
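The three steps can be written out for one common special case. The notation below ($y$ for the output, $\Phi$ for the regressor matrix built from the input, $\theta$ for the parameter, $e$ for the noise, $p(\theta)$ for the prior) and the Gaussian noise model are assumptions made for illustration, not taken from the slide.

```latex
\begin{aligned}
y &= \Phi\theta + e, \qquad e \sim \mathcal{N}(0, \sigma^2 I),\\
p(\theta \mid y) &= \frac{p(y \mid \theta)\,p(\theta)}{p(y)} \;\propto\; p(y \mid \theta)\,p(\theta),\\
\hat{\theta} &= \arg\max_{\theta}\; \Big[-\tfrac{1}{2\sigma^2}\lVert y - \Phi\theta\rVert_2^2 + \log p(\theta)\Big].
\end{aligned}
```

Under these assumptions the choice of prior enters only through the term $\log p(\theta)$, which acts as a regularizer on the least-squares fit.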

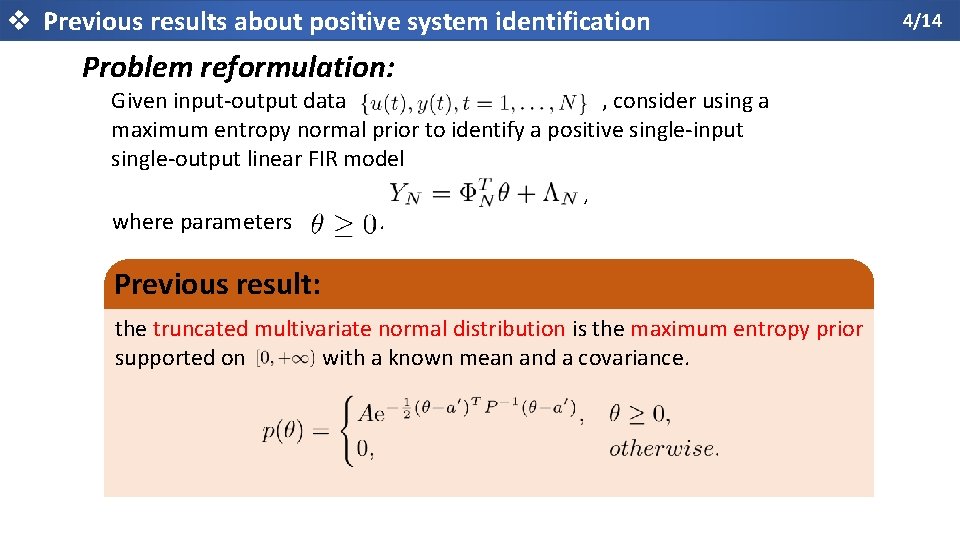

Previous results about positive system identification (4/14)

Problem reformulation: Given input-output data, consider using a maximum entropy normal prior to identify a positive single-input single-output linear FIR model, that is, a model whose parameters are constrained to be nonnegative.

Previous result: the truncated multivariate normal distribution is the maximum entropy prior supported on the nonnegative orthant with a known mean and covariance.

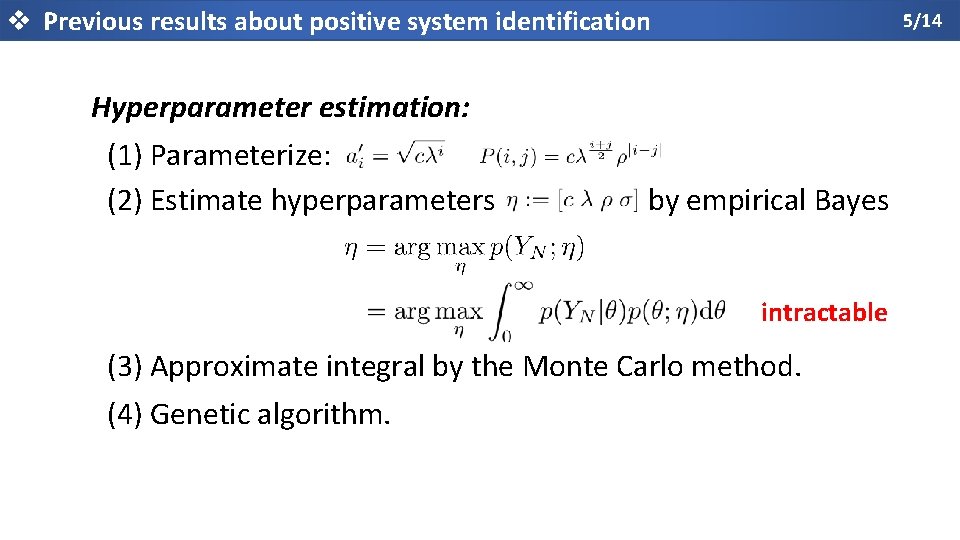

Previous results about positive system identification (5/14)

Hyperparameter estimation:
(1) Parameterize the prior with hyperparameters.
(2) Estimate the hyperparameters by empirical Bayes; the required integral is intractable.
(3) Approximate the integral by the Monte Carlo method.
(4) Search for the hyperparameters with a genetic algorithm.
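To make step (3) concrete, here is a minimal sketch of a Monte Carlo estimate of a marginal likelihood in a scalar toy model: one observation y = theta + e with e ~ N(0, sigma2), and a zero-mean normal prior with variance eta truncated to theta >= 0. The model, the function names, and the sample size are assumptions for illustration. In this scalar case a closed form exists and is used only as a check, unlike the multivariate problem described in the slides.

```python
import math
import random

def normal_pdf(x, mean, var):
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2 * math.pi * var)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def mc_marginal(y, sigma2, eta, n_samples=20000, seed=0):
    """Average the likelihood over draws from the truncated prior."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        # |N(0, eta)| is exactly N(0, eta) truncated to theta >= 0 (zero mean only).
        theta = abs(rng.gauss(0.0, math.sqrt(eta)))
        total += normal_pdf(y, theta, sigma2)
    return total / n_samples

def exact_marginal(y, sigma2, eta):
    """Closed form, available only because this toy problem is scalar."""
    v = 1.0 / (1.0 / eta + 1.0 / sigma2)   # untruncated posterior variance
    m = v * y / sigma2                     # untruncated posterior mean
    return 2.0 * normal_pdf(y, 0.0, sigma2 + eta) * normal_cdf(m / math.sqrt(v))
```

An optimizer that evaluates candidate hyperparameters this way pays the sampling cost, and inherits the sampling noise, at every evaluation.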

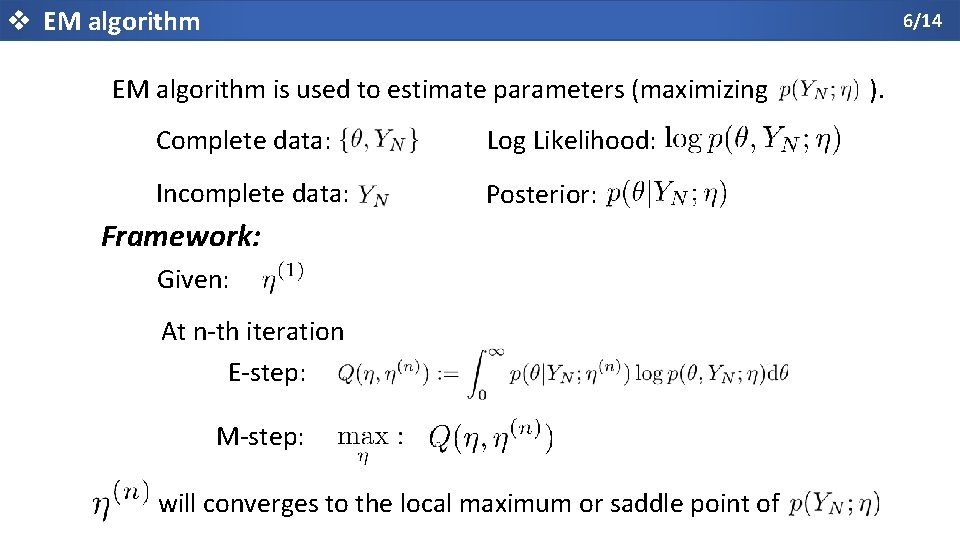

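EM algorithm (6/14)

The EM algorithm is used to estimate parameters by maximizing the log likelihood of the incomplete data. It is formulated in terms of the complete data, the incomplete data, the log likelihood, and the posterior.

Framework: starting from the given quantities, the n-th iteration consists of an E-step followed by an M-step. The iterates converge to a local maximum or a saddle point of the log likelihood.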
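The E-step and M-step alternation can be sketched on a toy problem where both steps are available in closed form. The model here is an assumption chosen for illustration, not the one in the slides: a scalar theta ~ N(0, eta) with no truncation, observations y_i = theta + e_i with e_i ~ N(0, sigma2), and eta as the hyperparameter to estimate. The function names are hypothetical.

```python
import math

def log_marginal(y, sigma2, eta):
    """log p(y | eta) for y ~ N(0, sigma2 * I + eta * 1 1^T), via Sherman-Morrison."""
    N, s, ss = len(y), sum(y), sum(t * t for t in y)
    logdet = (N - 1) * math.log(sigma2) + math.log(sigma2 + N * eta)
    quad = (ss - eta * s * s / (sigma2 + N * eta)) / sigma2
    return -0.5 * (N * math.log(2 * math.pi) + logdet + quad)

def em_prior_variance(y, sigma2, eta0=1.0, iters=200):
    """EM iterations for eta; also records log p(y | eta) before each update."""
    N, s = len(y), sum(y)
    eta, history = eta0, []
    for _ in range(iters):
        history.append(log_marginal(y, sigma2, eta))
        # E-step: Gaussian posterior of theta under the current eta.
        v = 1.0 / (1.0 / eta + N / sigma2)
        m = v * s / sigma2
        # M-step: E[log p(y, theta | eta)] is maximized at eta = E[theta^2].
        eta = m * m + v
    return eta, history

eta_hat, history = em_prior_variance([1.2, 0.8, 1.1, 0.9, 1.0], sigma2=0.5)
```

In this toy model the maximizer of the marginal likelihood is mean(y)^2 - sigma2 / N when that quantity is positive, so the result can be checked directly, and the recorded log likelihood values never decrease from one iteration to the next.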

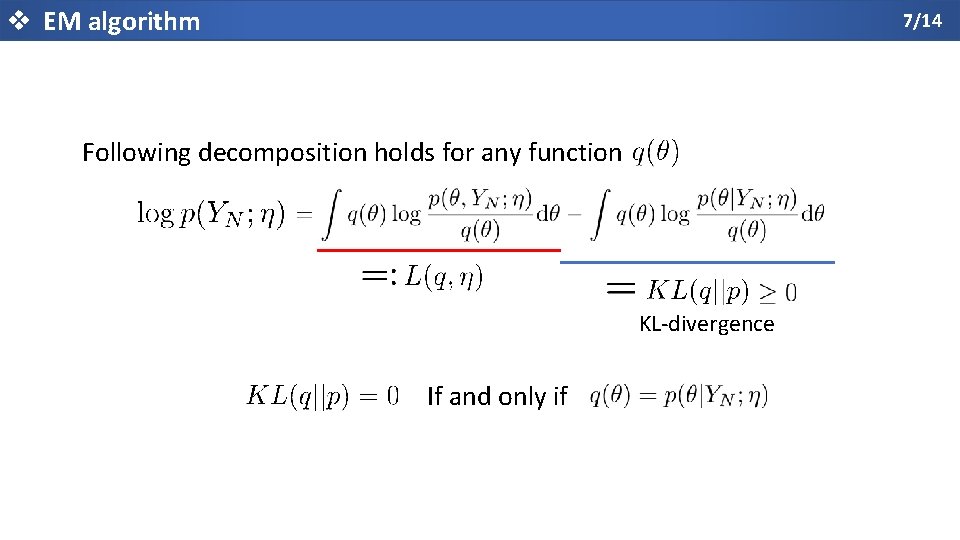

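EM algorithm (7/14)

The following decomposition holds for any function; one of its terms is a KL-divergence. The KL-divergence is nonnegative, and it is zero if and only if the two distributions it compares are equal.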
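For reference, the standard form of this decomposition in the EM literature is sketched below. The symbols ($y$ for the observed data, $\theta$ for the unobserved variable, $\eta$ for the hyperparameters, $q$ for an arbitrary density over $\theta$) are assumed notation, and the slide's version may differ in detail.

```latex
\begin{aligned}
\log p(y \mid \eta) &= \mathcal{L}(q, \eta) + \mathrm{KL}\big(q \,\|\, p(\theta \mid y, \eta)\big),\\
\mathcal{L}(q, \eta) &= \int q(\theta)\,\log\frac{p(y, \theta \mid \eta)}{q(\theta)}\,d\theta,\\
\mathrm{KL}\big(q \,\|\, p(\theta \mid y, \eta)\big) &= -\int q(\theta)\,\log\frac{p(\theta \mid y, \eta)}{q(\theta)}\,d\theta \;\ge\; 0.
\end{aligned}
```

Equality holds in the last line if and only if $q(\theta) = p(\theta \mid y, \eta)$, which is why setting $q$ to the current posterior makes $\mathcal{L}$ touch the log likelihood.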

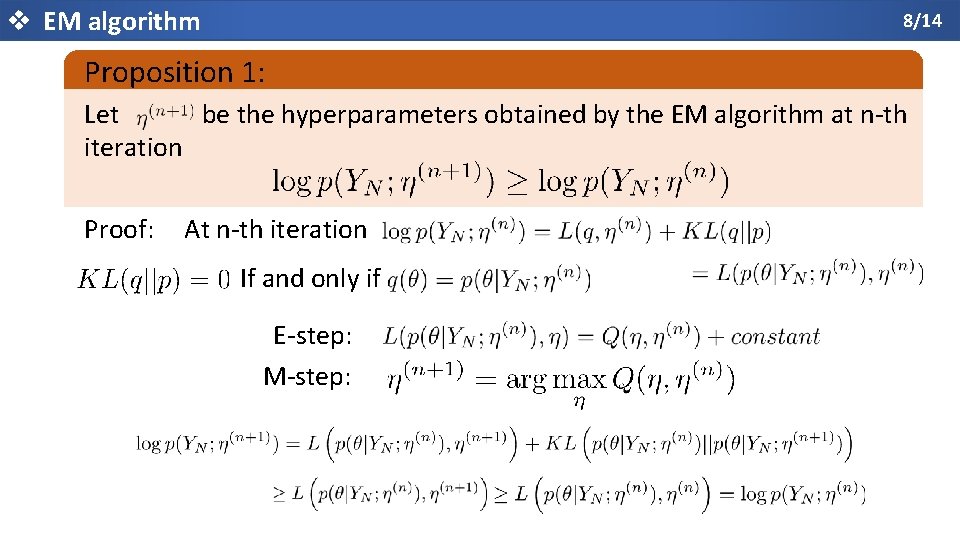

EM algorithm (8/14)

Proposition 1 concerns the hyperparameters obtained by the EM algorithm at the n-th iteration.

Proof: at the n-th iteration, the if-and-only-if condition above is applied in the E-step, followed by the M-step.

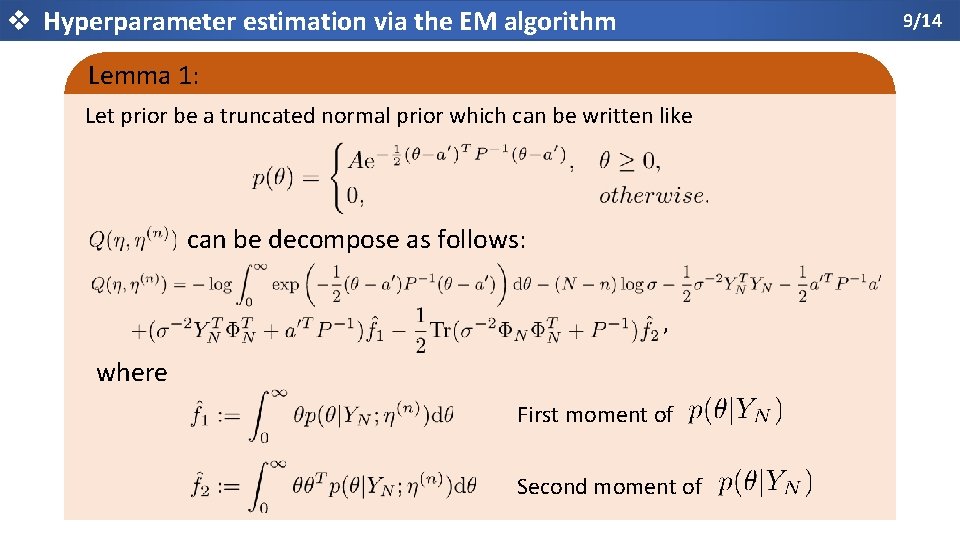

Hyperparameter estimation via the EM algorithm (9/14)

Lemma 1: Let the prior be a truncated normal prior. Then the resulting expression can be decomposed into terms that involve a first moment and a second moment.

Hyperparameter estimation via the EM algorithm (10/14)

Remark: the first and second moments can be calculated from the probability density function (pdf) and the cumulative distribution function (cdf) of a Gaussian distribution.

E-step
M-step (gradient descent), where the step size is a fixed small value.
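As an illustration of how such moments follow from the Gaussian pdf and cdf, the sketch below covers the scalar case only: a normal distribution N(mu, sigma^2) truncated to x >= 0, using the standard one-sided truncation formulas. The scalar restriction and the function names are assumptions for illustration; the slides deal with the multivariate case.

```python
import math

def std_normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def std_normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def truncated_normal_moments(mu, sigma):
    """First and second moments of N(mu, sigma^2) truncated to x >= 0."""
    alpha = -mu / sigma                      # standardized truncation point
    lam = std_normal_pdf(alpha) / (1.0 - std_normal_cdf(alpha))
    mean = mu + sigma * lam                  # first moment
    var = sigma ** 2 * (1.0 + alpha * lam - lam ** 2)
    return mean, var + mean ** 2             # second moment = variance + mean^2

m1, m2 = truncated_normal_moments(0.0, 1.0)
```

For mu far below zero the denominator `1 - cdf` underflows, so a production version would need a more careful tail evaluation.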

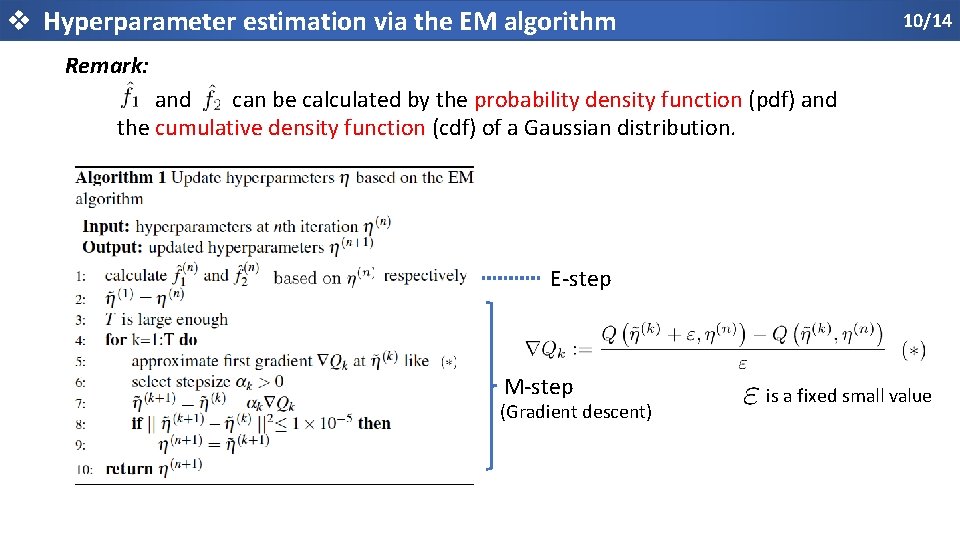

Simulations (11/14)

Test function: positive system.

Figure 1. Impulse responses of the test function.

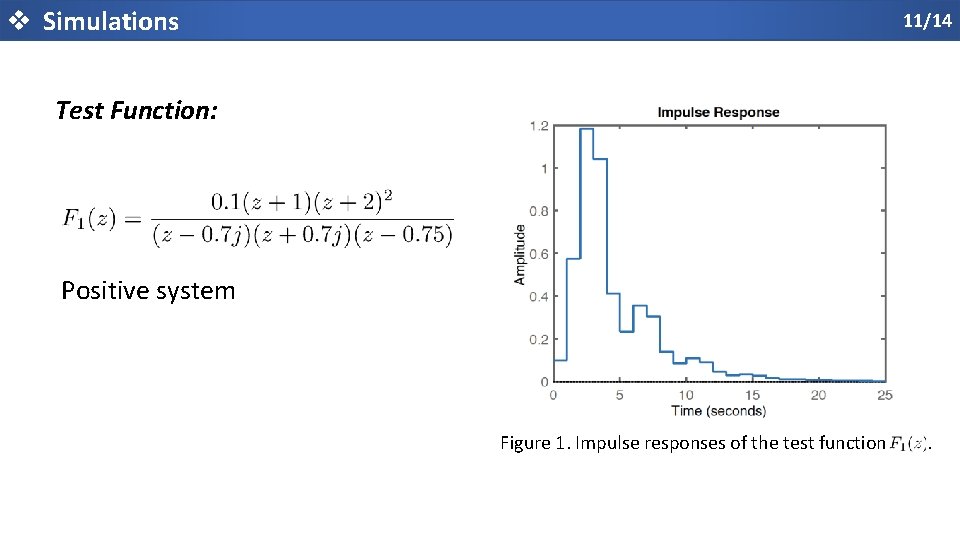

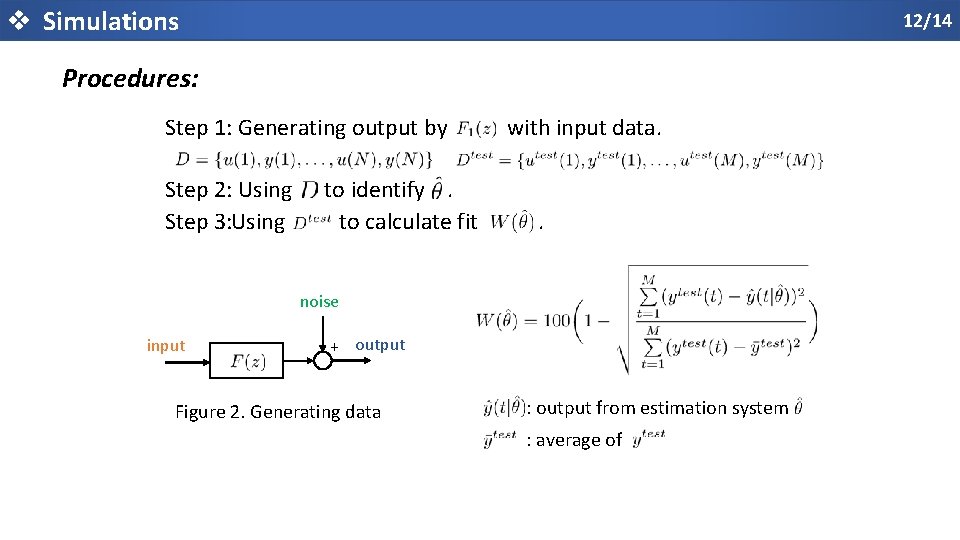

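Simulations (12/14)

Procedures:
Step 1: Generate the output by applying the input to the test system and adding noise.
Step 2: Use the generated data to identify the system.
Step 3: Use the identified model to calculate the fit with the input data. The fit is defined through the output from the estimated system and the average of the output.

Figure 2. Generating data (input, additive noise, output).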
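The fit formula itself did not survive on this slide. A commonly used definition in system identification, consistent with the two quantities named above, is sketched here; treating it as the definition used in these simulations is an assumption, and `fit_percent` is a hypothetical name.

```python
import math

def fit_percent(y, y_hat):
    """Fit = 100 * (1 - ||y - y_hat|| / ||y - mean(y)||), in percent."""
    y_bar = sum(y) / len(y)
    err = math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_hat)))
    spread = math.sqrt(sum((a - y_bar) ** 2 for a in y))
    return 100.0 * (1.0 - err / spread)
```

With this definition a perfect prediction scores 100, and predicting the average of the output at every time step scores 0.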

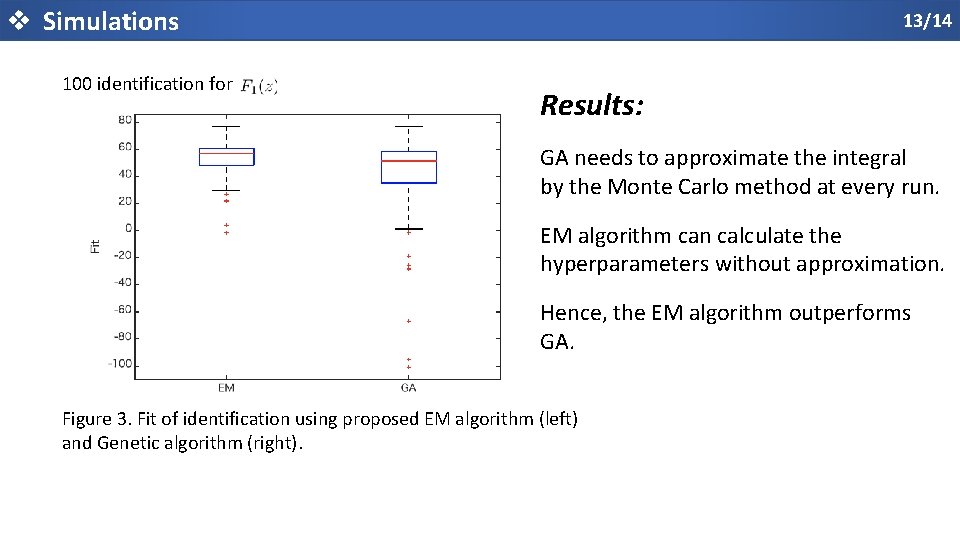

Simulations (13/14)

100 identification runs were carried out.

Results: The GA needs to approximate the integral by the Monte Carlo method at every run. The EM algorithm can calculate the hyperparameters without this approximation. Hence, the EM algorithm outperforms the GA in these simulations.

Figure 3. Fit of identification using the proposed EM algorithm (left) and the genetic algorithm (right).

Conclusion and future work (14/14)

Conclusion: This paper proposes the EM algorithm to solve the hyperparameter estimation problem.

Future work: Continue the research on another noninformative prior, i.e., the reference prior.

- Slides: 15