Hwajung Lee ITEC 452 Distributed Computing Lecture 3

Models in Distributed Systems

Why do we need models? Models are simple abstractions that help us understand the variability: they preserve the essential features but hide the implementation details from observers who view the system at a higher level. Results derived from an abstract model are applicable to a wide range of platforms.

Two Primary Models Two primary models capture the essence of interprocess communication: ▪ Message-passing Model ▪ Shared-memory Model

Message Passing Model (1) Process actions in a distributed system can be represented in the following notation: System topology is a graph G = (V, E), where V = set of nodes (sequential processes) and E = set of edges (links or channels, bidirectional or unidirectional)

Message Passing Model (2) Four types of actions by a process: Internal action ▪ a process performs computations in its own address space resulting in the modification of one or more of its local variables. Communication action ▪ a process sends a message to another process or receives a message from another process Input action ▪ A process reads data from sources external to the system Output action ▪ A process sends data outside of the system (called “environment”)

Message Passing Model (3) Possible Dimensions of Variability in Distributed Systems Type of Channels ▪ Point-to-point or not ▪ Reliable vs. Unreliable channels Type of Systems ▪ Synchronous vs. Asynchronous systems

Message Passing Model (4) Type of Channels in a Message Passing Model Messages propagate along directed edges called channels. Communications are assumed to be point-to-point (a broadcast is considered as a set of point-to-point communications). Reliable vs. unreliable channels ▪ Reliable channel: loss or corruption of messages is not considered ▪ Unreliable channel: it will be considered in a later chapter

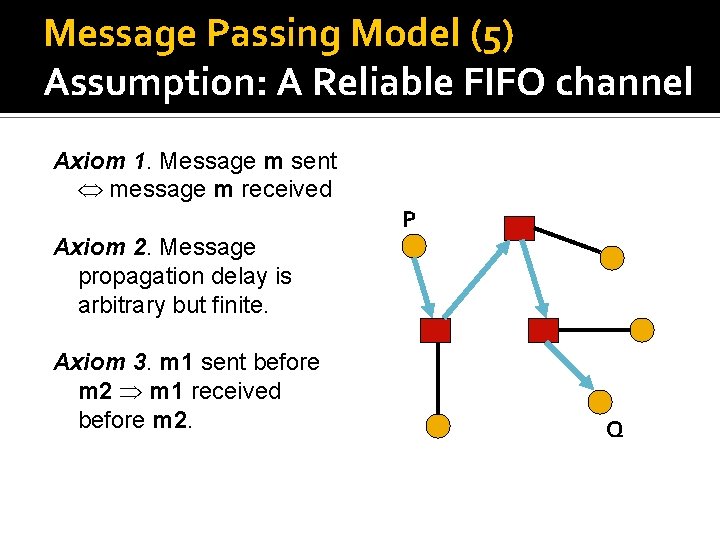

Message Passing Model (5) Assumption: A Reliable FIFO channel from P to Q. Axiom 1. Message m sent ⇒ message m received. Axiom 2. Message propagation delay is arbitrary but finite. Axiom 3. m₁ sent before m₂ ⇒ m₁ received before m₂.
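The axioms can be illustrated with a minimal Python sketch. The class name `ReliableFifoChannel` and its methods are hypothetical and chosen only for illustration; the arbitrary but finite delay of Axiom 2 is modeled loosely by letting the receiver call `receive` at any later time.

```python
from collections import deque


class ReliableFifoChannel:
    """Directed channel P -> Q (illustrative): nothing is lost, order is kept."""

    def __init__(self):
        self._in_transit = deque()  # messages sent but not yet received

    def send(self, m):
        # Axiom 1: every message sent stays in transit until it is received.
        self._in_transit.append(m)

    def receive(self):
        # Axiom 3: the oldest message in transit is always received first.
        # Returns None when nothing is in transit.
        return self._in_transit.popleft() if self._in_transit else None
```

A deque is used so that both ends of the channel run in constant time; any queue with first-in first-out behavior would serve equally well.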

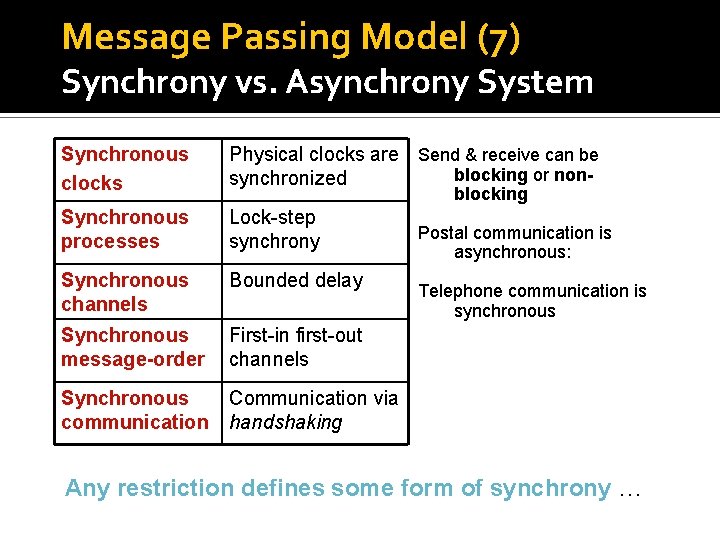

Message Passing Model (6) Type of Systems: Synchrony vs. Asynchrony Broad notion of synchrony: senders and receivers maintain synchronized clocks and operate with a rigid temporal relationship. However, in practice, there are many aspects of synchrony, and the transition from a fully asynchronous to a fully synchronous model is a gradual one. Thus, we will see a few examples of the behaviors characterizing a synchronous system.

Message Passing Model (7) Synchrony vs. Asynchrony ▪ Synchronous clocks: physical clocks are synchronized ▪ Synchronous processes: lock-step synchrony ▪ Synchronous channels: bounded delay ▪ Synchronous message order: first-in first-out channels ▪ Synchronous communication: communication via handshaking. Send & receive can be blocking or non-blocking. Postal communication is asynchronous; telephone communication is synchronous. Any restriction defines some form of synchrony …

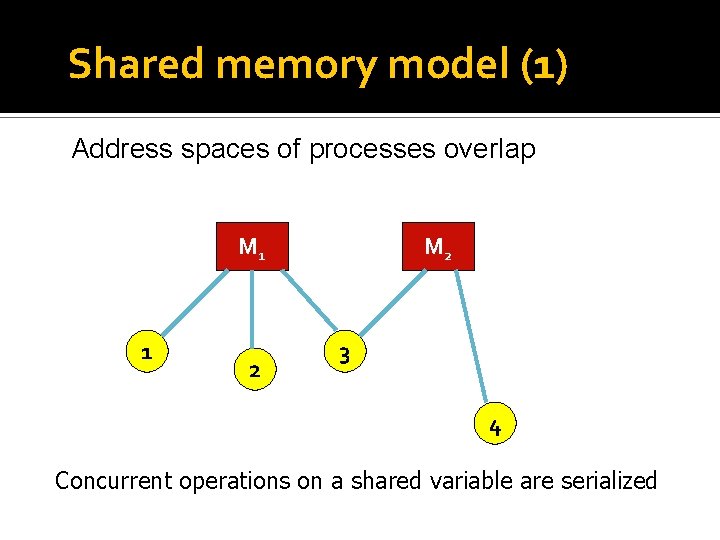

Shared memory model (1) Address spaces of processes overlap. [Figure: processes 1, 2, 3, 4 and shared memories M1, M2] Concurrent operations on a shared variable are serialized.

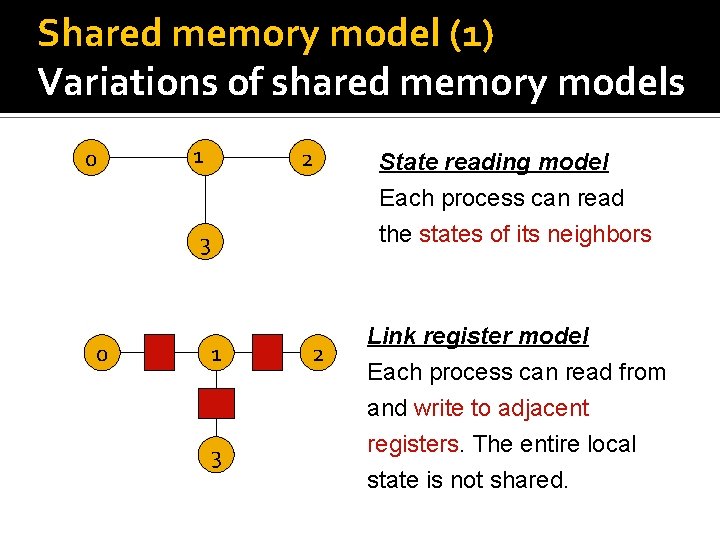

Shared memory model (1) Variations of shared memory models ▪ State reading model: each process can read the states of its neighbors. ▪ Link register model: each process can read from and write to adjacent registers. The entire local state is not shared.

Modeling wireless networks ▪ Communication via broadcast ▪ Limited range ▪ Dynamic topology

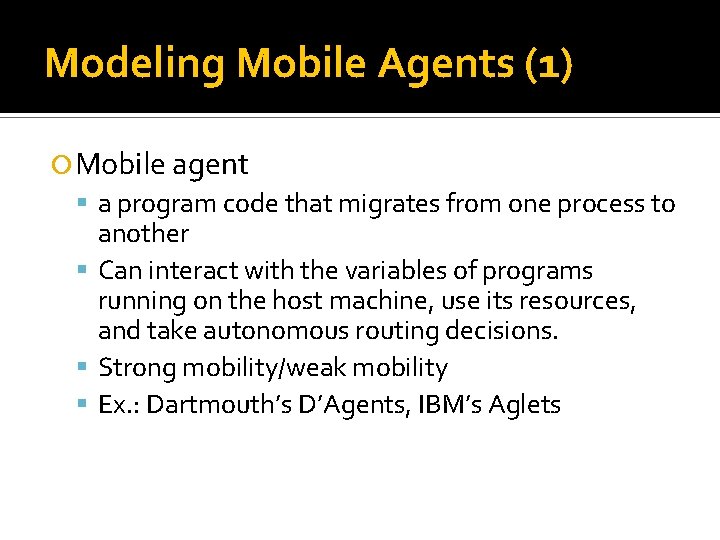

Modeling Mobile Agents (1) A mobile agent is program code that migrates from one process to another. It can interact with the variables of programs running on the host machine, use its resources, and take autonomous routing decisions. Strong mobility vs. weak mobility. Examples: Dartmouth’s D’Agents, IBM’s Aglets

Modeling Mobile Agents (2) Model: (I, P, B). I is the agent identifier and is unique for every agent. P designates the agent program. B is the briefcase and represents the data variables to be used by the agent.

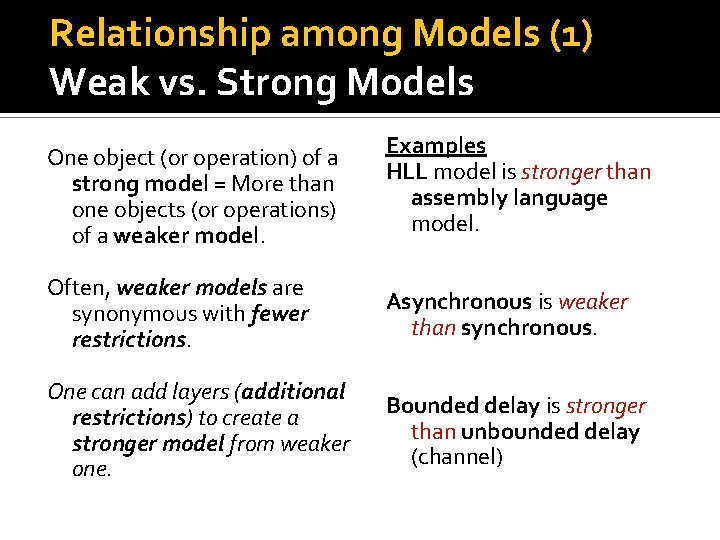

Relationship among Models (1) Weak vs. Strong Models One object (or operation) of a strong model = more than one object (or operation) of a weaker model. Examples: A high-level language (HLL) model is stronger than an assembly language model. Asynchronous is weaker than synchronous. Bounded delay is stronger than unbounded delay (channel). Often, weaker models are synonymous with fewer restrictions. One can add layers (additional restrictions) to create a stronger model from a weaker one.

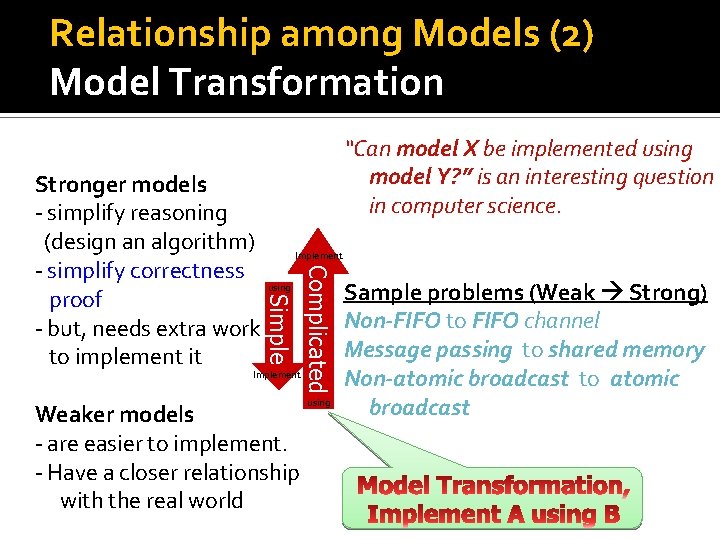

Relationship among Models (2) Model Transformation “Can model X be implemented using model Y?” is an interesting question in computer science. Stronger models ▪ simplify reasoning (designing an algorithm) ▪ simplify correctness proofs ▪ but need extra work to implement. Weaker models ▪ are easier to implement ▪ have a closer relationship with the real world. Sample problems (weak → strong) ▪ Non-FIFO to FIFO channel ▪ Message passing to shared memory ▪ Non-atomic broadcast to atomic broadcast

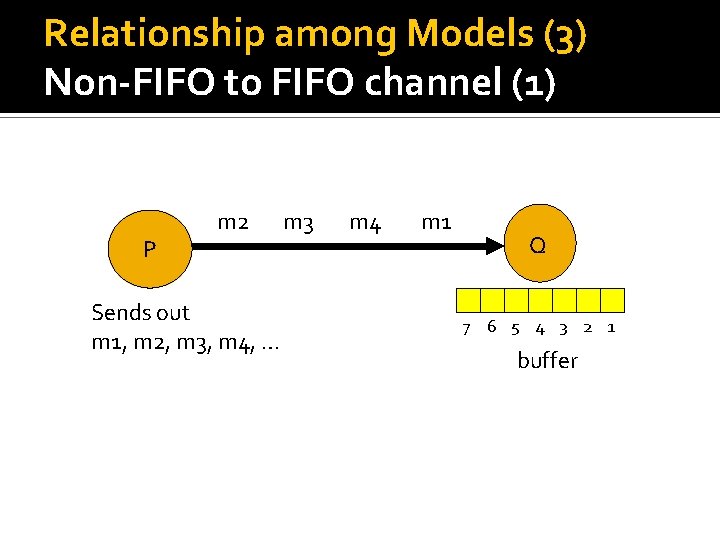

Relationship among Models (3) Non-FIFO to FIFO channel (1) [Figure: P sends out m1, m2, m3, m4, …; the messages are in transit out of order; Q stores them in a buffer with numbered slots 1, 2, 3, …, 7]

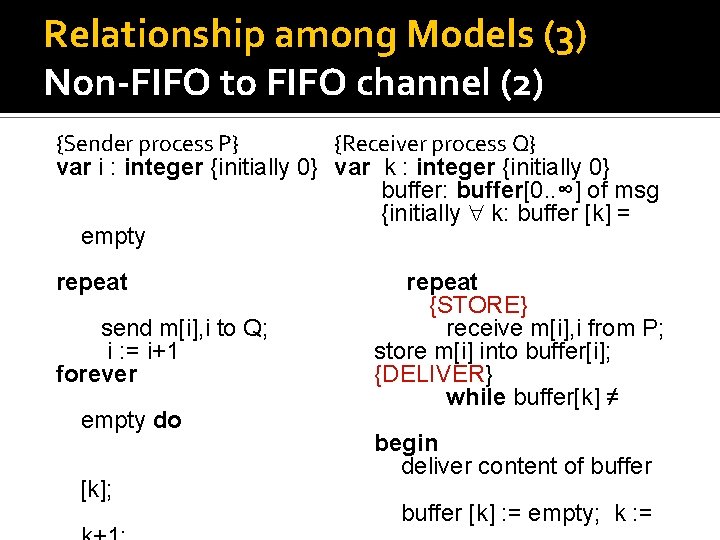

Relationship among Models (3) Non-FIFO to FIFO channel (2)

{Sender process P}
var i : integer {initially 0}
repeat
  send m[i], i to Q;
  i := i+1
forever

{Receiver process Q}
var k : integer {initially 0}
buffer : buffer[0..∞] of msg {initially ∀k: buffer[k] = empty}
repeat
  {STORE} receive m[i], i from P;
    store m[i] into buffer[i];
  {DELIVER} while buffer[k] ≠ empty do
    begin
      deliver content of buffer[k];
      buffer[k] := empty; k := k+1
    end
forever
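The receiver's STORE and DELIVER steps can be sketched in Python as follows. This is an illustrative sketch, not part of the slides: the class name `ResequencingReceiver` is hypothetical, and a dictionary keyed by sequence number stands in for the unbounded array buffer[0..∞].

```python
class ResequencingReceiver:
    """Receiver Q: turns a non-FIFO channel into a FIFO one (illustrative)."""

    def __init__(self):
        self.buffer = {}     # sequence number -> message; absent key = empty slot
        self.k = 0           # sequence number of the next message to deliver
        self.delivered = []  # messages handed to the application, in order

    def receive(self, seq, msg):
        # STORE: park the message in the slot named by its sequence number.
        self.buffer[seq] = msg
        # DELIVER: hand over every consecutive message starting at slot k.
        while self.k in self.buffer:
            self.delivered.append(self.buffer.pop(self.k))
            self.k += 1
```

For example, if the messages numbered 1, 2, 3 arrive before message 0, nothing is delivered until message 0 arrives, at which point all four are delivered in sequence order.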

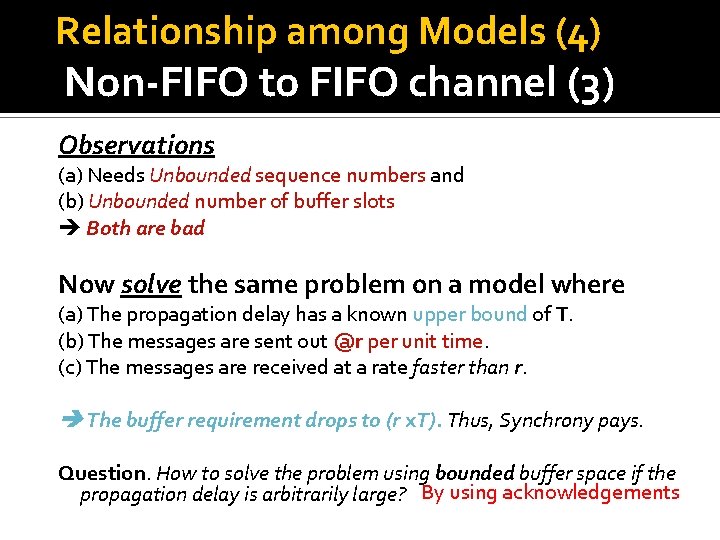

Relationship among Models (4) Non-FIFO to FIFO channel (3) Observations: the solution needs (a) unbounded sequence numbers and (b) an unbounded number of buffer slots. Both are bad. Now solve the same problem on a model where (a) the propagation delay has a known upper bound of T, (b) the messages are sent out at a rate of r per unit time, and (c) the messages are received at a rate faster than r. The buffer requirement drops to r × T. Thus, synchrony pays. Question: How can the problem be solved using bounded buffer space if the propagation delay is arbitrarily large? By using acknowledgements.
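The r × T bound can be checked with a small simulation. The sketch below makes several assumptions that are not in the slides: time advances in integer ticks, exactly r messages are sent per tick, each delay is a random integer in [0, T], and the receiver drains its buffer instantly (a stand-in for condition (c)). The function name `max_buffer_occupancy` is hypothetical.

```python
import random


def max_buffer_occupancy(r, T, num_messages, seed=0):
    """Largest number of out-of-order messages ever waiting at the receiver."""
    rng = random.Random(seed)
    # Message i is sent at tick i // r and arrives after a delay in [0, T].
    arrivals = {}
    for i in range(num_messages):
        arrivals.setdefault(i // r + rng.randint(0, T), []).append(i)

    buffer, k, worst = set(), 0, 0
    for t in range(max(arrivals) + 1):
        buffer.update(arrivals.get(t, []))  # STORE everything arriving at tick t
        while k in buffer:                  # DELIVER in sequence order
            buffer.remove(k)
            k += 1
        worst = max(worst, len(buffer))     # messages still waiting after tick t
    return worst
```

Under these assumptions a buffered message can be at most r × T sequence numbers ahead of the next expected one, so the returned value never exceeds r × T regardless of the seed.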

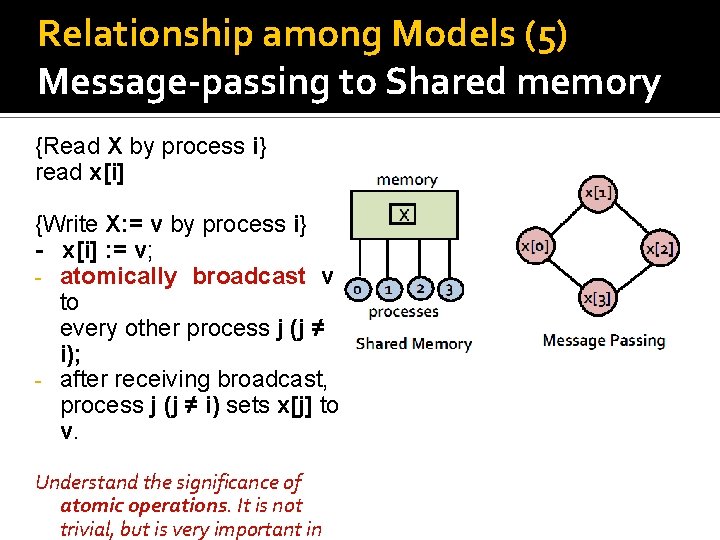

Relationship among Models (5) Message-passing to Shared memory
{Read X by process i}
read x[i]
{Write X := v by process i}
- x[i] := v;
- atomically broadcast v to every other process j (j ≠ i);
- after receiving the broadcast, process j (j ≠ i) sets x[j] to v.
Understand the significance of atomic operations. It is not trivial, but is very important in
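The read and write rules can be sketched as a single-threaded Python simulation. The class name `EmulatedSharedVariable` is hypothetical, and the atomic broadcast is only imitated here: because the simulation runs one operation at a time, no other operation can observe a partially completed write. A real implementation would need an actual atomic broadcast protocol.

```python
class EmulatedSharedVariable:
    """Shared variable X emulated with one local copy x[i] per process."""

    def __init__(self, n, initial=None):
        self.x = [initial] * n  # x[i] is process i's local copy of X

    def read(self, i):
        # Read X by process i: consult only the local copy.
        return self.x[i]

    def write(self, i, v):
        # Write X := v by process i: update the local copy first ...
        self.x[i] = v
        # ... then imitate an atomic broadcast of v to every other process j.
        # Assumption: nothing else runs between these updates.
        for j in range(len(self.x)):
            if j != i:
                self.x[j] = v
```

After `write(2, 42)` on a four-process variable, `read(i)` returns 42 for every process i.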

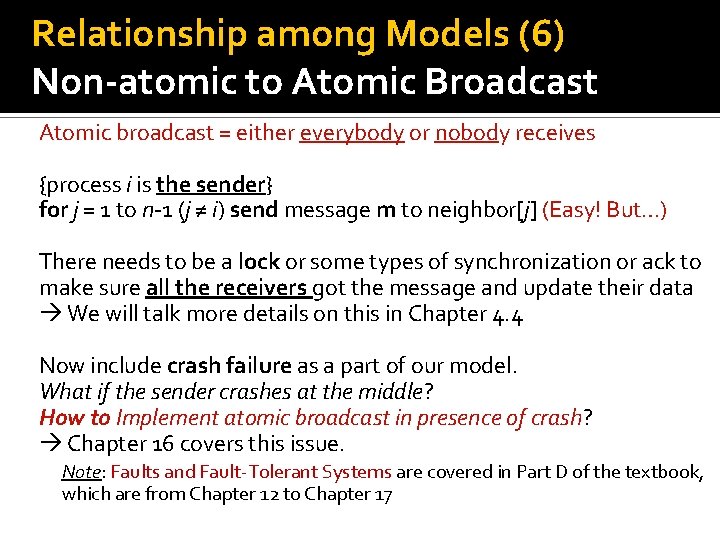

Relationship among Models (6) Non-atomic to Atomic Broadcast Atomic broadcast = either everybody or nobody receives. {process i is the sender} for j = 1 to n-1 (j ≠ i) send message m to neighbor[j] (Easy! But…) There needs to be a lock, or some type of synchronization or acknowledgement, to make sure that all the receivers got the message and updated their data. We will discuss this in more detail in Chapter 4.4. Now include crash failure as a part of our model. What if the sender crashes in the middle? How can atomic broadcast be implemented in the presence of crashes? Chapter 16 covers this issue. Note: Faults and fault-tolerant systems are covered in Part D of the textbook, which spans Chapter 12 through Chapter 17.

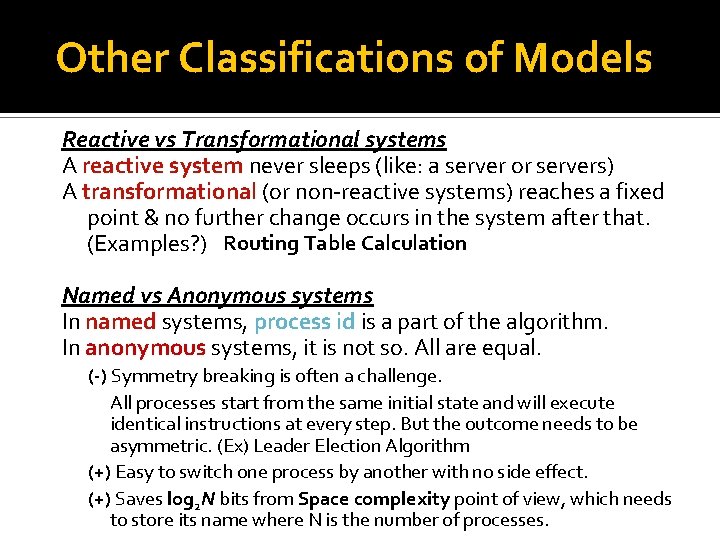

Other Classifications of Models Reactive vs. Transformational systems ▪ A reactive system never sleeps (e.g., a server). ▪ A transformational (or non-reactive) system reaches a fixed point, and no further change occurs in the system after that (example: routing table calculation). Named vs. Anonymous systems ▪ In named systems, the process id is a part of the algorithm. ▪ In anonymous systems, it is not; all processes are equal. (-) Symmetry breaking is often a challenge: all processes start from the same initial state and execute identical instructions at every step, but the outcome needs to be asymmetric (example: leader election algorithm). (+) It is easy to replace one process with another with no side effect. (+) It saves log₂ N bits of space per process, which a named process needs to store its name, where N is the number of processes.

Complexity Measures (1) Common Measures ▪ Space complexity: How much space is needed per process to run an algorithm? (measured in terms of N, the size of the network) ▪ Time complexity: What is the maximum time (number of steps) needed to complete the execution of the algorithm? ▪ Message complexity: How many messages are exchanged to complete the execution of the algorithm?

Complexity Measures (2) Other Measures Bit complexity Measures how many bits are transmitted when the algorithm runs. It may be a better measure, since messages may be of arbitrary size.

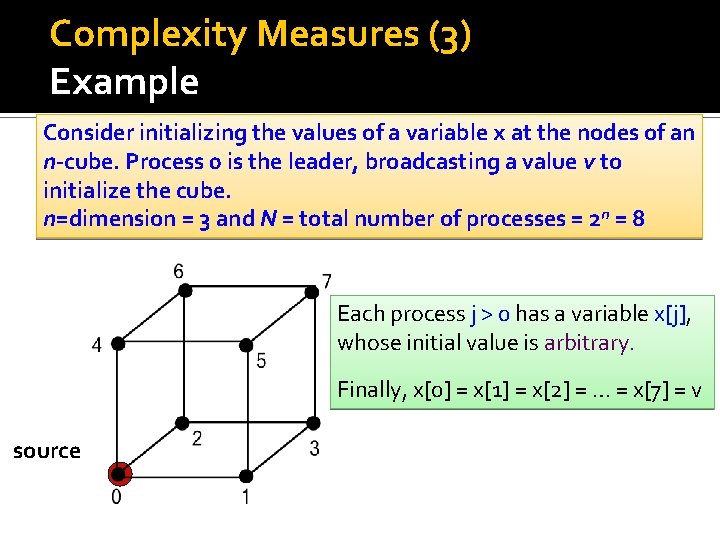

Complexity Measures (3) Example Consider initializing the values of a variable x at the nodes of an n-cube. Process 0 is the leader (the source), broadcasting a value v to initialize the cube. n = dimension = 3 and N = total number of processes = 2ⁿ = 8. Each process j > 0 has a variable x[j], whose initial value is arbitrary. Finally, x[0] = x[1] = x[2] = … = x[7] = v.

Complexity Measures (4) Broadcasting using Messages

{Process 0}
m.value := x[0];
send m to all neighbors

{Process i > 0}
repeat
  receive m {m contains the value};
  if m is received for the first time
  then x[i] := m.value;
    send x[i] to each neighbor j > i
  else discard m
  end if
forever

Number of edges = |E|. What is the (1) message complexity? It is |E| = ½ N log₂ N. (2) space complexity per process?
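The message count can be checked with a short Python simulation of the broadcast on an n-cube. This is a sketch under assumptions: the function name `hypercube_broadcast` is hypothetical, messages are processed one at a time from a single queue, and `None` stands in for the arbitrary initial values.

```python
from collections import deque


def hypercube_broadcast(n, v):
    """Simulate the broadcast on an n-cube; return (final x, messages sent)."""
    N = 1 << n

    def neighbors(i):
        # In an n-cube, neighbors differ from i in exactly one bit.
        return [i ^ (1 << b) for b in range(n)]

    x = [None] * N            # placeholder for arbitrary initial values
    x[0] = v
    received = [False] * N
    received[0] = True        # process 0 is the source

    # Process 0 sends m to all of its neighbors: (sender, receiver) pairs.
    queue = deque((0, j) for j in neighbors(0))
    messages = len(queue)
    while queue:
        _, i = queue.popleft()
        if not received[i]:   # m is received for the first time
            received[i] = True
            x[i] = v
            for j in neighbors(i):
                if j > i:     # forward only to higher-numbered neighbors
                    queue.append((i, j))
                    messages += 1
        # otherwise m is discarded
    return x, messages
```

For n = 3 every edge carries exactly one message, so the count is |E| = ½ × 8 × 3 = 12.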

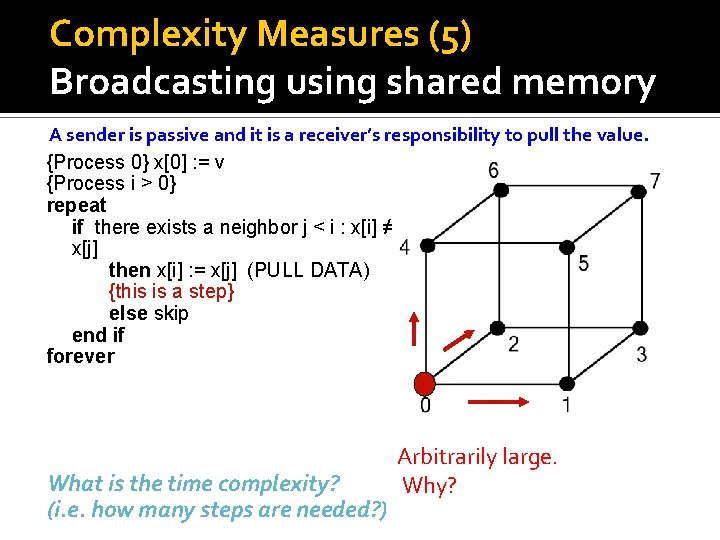

Complexity Measures (5) Broadcasting using shared memory The sender is passive, and it is the receiver’s responsibility to pull the value.

{Process 0}
x[0] := v

{Process i > 0}
repeat
  if there exists a neighbor j < i : x[i] ≠ x[j]
  then x[i] := x[j] (PULL DATA) {this is a step}
  else skip
  end if
forever

What is the time complexity (i.e., how many steps are needed)? Arbitrarily large. Why?

Complexity Measures (6) Broadcasting using shared memory [Figure: 3-cube whose eight nodes hold arbitrary initial values such as 99, 15, 12, 27, 10, 53, 14, 32] Node 7 can keep copying from 3, 5, 6 indefinitely long before the value in node 0 is eventually copied into it.
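The unbounded step count can be illustrated with a small Python simulation of the pull algorithm on a 3-cube. Everything specific here is an assumption made for illustration: the function name `pull_broadcast_steps`, the assignment of initial values to nodes, the adversarial scheduler that runs only node 7 for a chosen number of steps, and the rule that a process copies from its lowest-numbered differing neighbor during the fair phase.

```python
def pull_broadcast_steps(adversarial_steps, v=7,
                         initial=(0, 15, 12, 27, 10, 53, 14, 32)):
    """Count the steps taken until every node of a 3-cube holds v."""
    n = 3
    x = list(initial)
    x[0] = v

    def lower(i):
        # Neighbors of i (one bit different) that have a smaller index.
        return sorted(j for j in (i ^ (1 << b) for b in range(n)) if j < i)

    steps = 0
    # Adversarial phase: only node 7 runs, copying from 3, 5, 6 in turn.
    for t in range(adversarial_steps):
        j = lower(7)[t % 3]
        assert x[7] != x[j]   # the guard holds, so this is a legal step
        x[7] = x[j]
        steps += 1

    # Fair phase: round-robin over all nodes until the broadcast completes.
    while any(val != v for val in x):
        for i in range(1, 1 << n):
            differing = [j for j in lower(i) if x[j] != x[i]]
            if differing:
                x[i] = x[differing[0]]  # PULL DATA: one step
                steps += 1
    return steps
```

With these hypothetical values the fair phase always takes 7 steps, so the total is the number of adversarial steps plus 7, which can be made as large as the scheduler likes.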

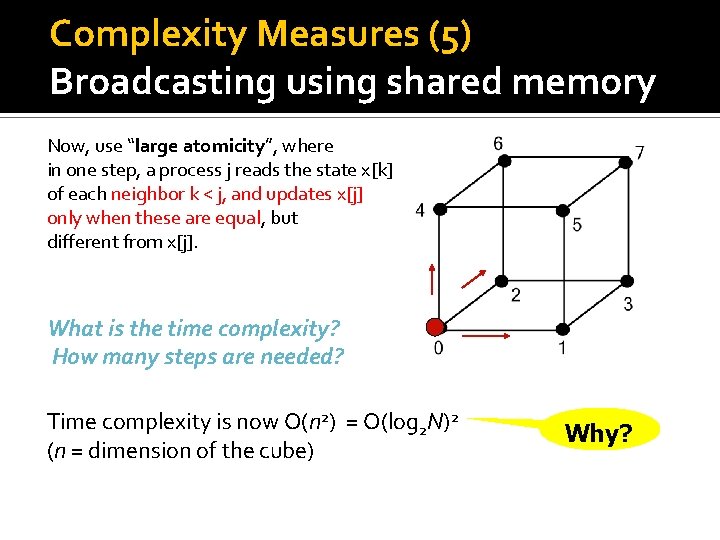

Complexity Measures (5) Broadcasting using shared memory Now, use “large atomicity”, where in one step, a process j reads the state x[k] of each neighbor k < j, and updates x[j] only when these are equal, but different from x[j]. What is the time complexity? How many steps are needed? Time complexity is now O(n²) = O((log₂ N)²), where n = dimension of the cube. Why?

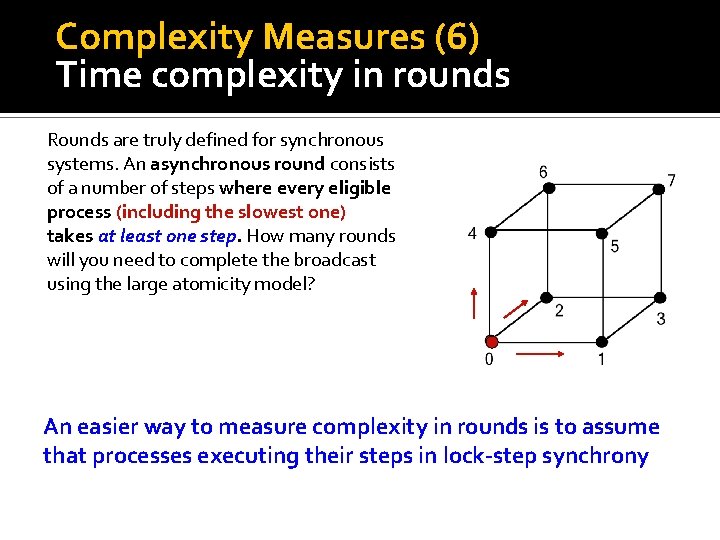

Complexity Measures (6) Time complexity in rounds Rounds are truly defined for synchronous systems. An asynchronous round consists of a number of steps where every eligible process (including the slowest one) takes at least one step. How many rounds will you need to complete the broadcast using the large atomicity model? An easier way to measure complexity in rounds is to assume that processes execute their steps in lock-step synchrony.
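One way to explore the question is to simulate the large atomicity rule under lock-step synchrony, where in every round each process reads the state left by the previous round. The sketch below is illustrative only: the function name `lockstep_broadcast_rounds` and the initial values are hypothetical, and the initial values are assumed to differ from v.

```python
def lockstep_broadcast_rounds(n, v, initial):
    """Rounds needed, in lock-step, until every node of an n-cube holds v."""
    N = 1 << n
    x = list(initial)
    x[0] = v
    rounds = 0
    while any(val != v for val in x):
        old = x[:]  # every process reads the state from the previous round
        for j in range(1, N):
            # States of the neighbors k < j (k differs from j in one bit).
            lower = [old[j ^ (1 << b)] for b in range(n) if (j ^ (1 << b)) < j]
            # Large atomicity: update only when all of them agree on a value
            # that differs from x[j].
            if all(val == lower[0] for val in lower) and lower[0] != old[j]:
                x[j] = lower[0]
        rounds += 1
    return rounds
```

In this simulation a node whose index has p one-bits holds v after round p, so the 3-cube example finishes in 3 rounds.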
