MiBLSi State Implementers’ Conference: Overview of DIBELS Next Survey & Deep
http://miblsi.cenmi.org

Acknowledgements
The material for this training day was developed by:
– Dynamic Measurement Group
  • Kelly Powell-Smith, Ph.D.
  • Stephanie Stollar, Ph.D.
– Cathy Claes
– Terri Metcalf
– Pam Jones
Content was based on the work of:
– The Dynamic Measurement Group
With permission, some slides are adapted directly from the DMG DIBELS Next Survey and Deep training materials.

Intended Outcomes
Participants will:
• Understand the purpose of DIBELS Next Survey and Deep
• See how both tools fit into an Outcomes Driven Model and School-wide Assessment System
• Become familiar with the design and basic administration of each tool
• Understand how the data can be used to make decisions

Agenda
I. Overview of DIBELS Next Survey
– I. Purpose
– II. The Outcomes Driven Model
– III. Overview of Design & Administration
– IV. Making Decisions
II. Overview of DIBELS Deep
– I. Purpose
– II. The Outcomes Driven Model
– III. Overview of Design & Administration
– IV. Making Decisions

DIBELS Next Survey
• Consider conducting DIBELS Next Survey when you don't have enough information to select progress monitoring materials, or when you believe out-of-grade-level materials may be most appropriate for monitoring and you need to select the most appropriate level.
• Purpose(s):
– To identify a student's instructional level and the appropriate level for progress monitoring
– To set goals and make instructional decisions

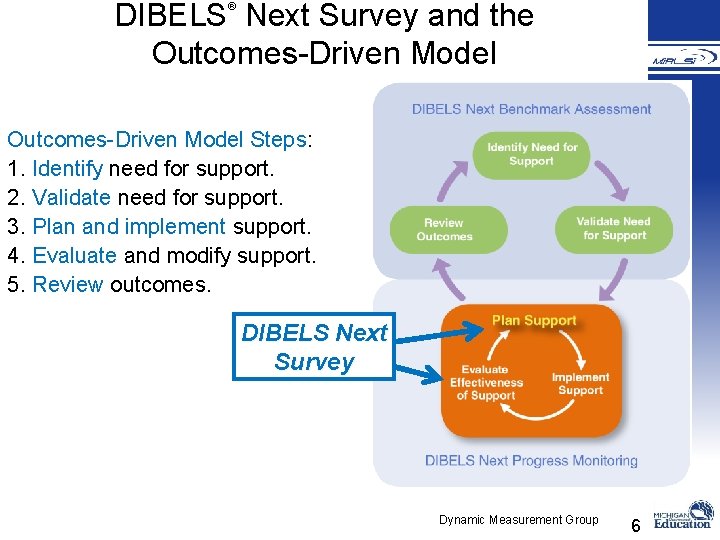

DIBELS Next Survey and the Outcomes-Driven Model
Outcomes-Driven Model Steps:
1. Identify need for support.
2. Validate need for support.
3. Plan and implement support.
4. Evaluate and modify support.
5. Review outcomes.
(Diagram label: DIBELS Next Survey)

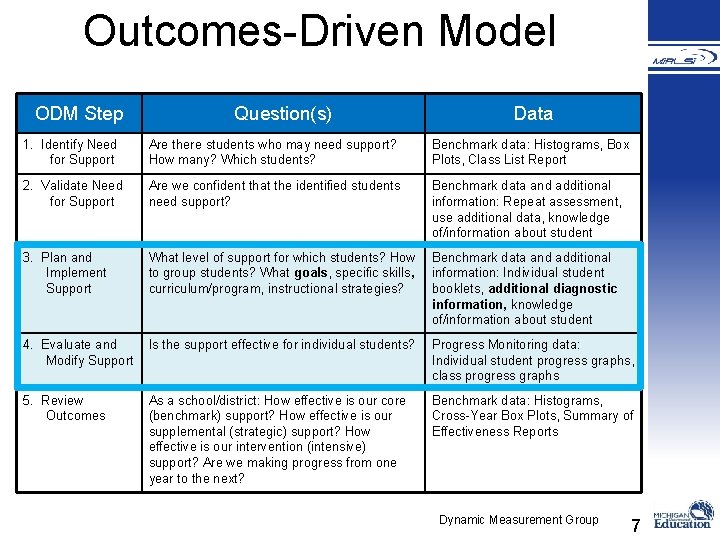

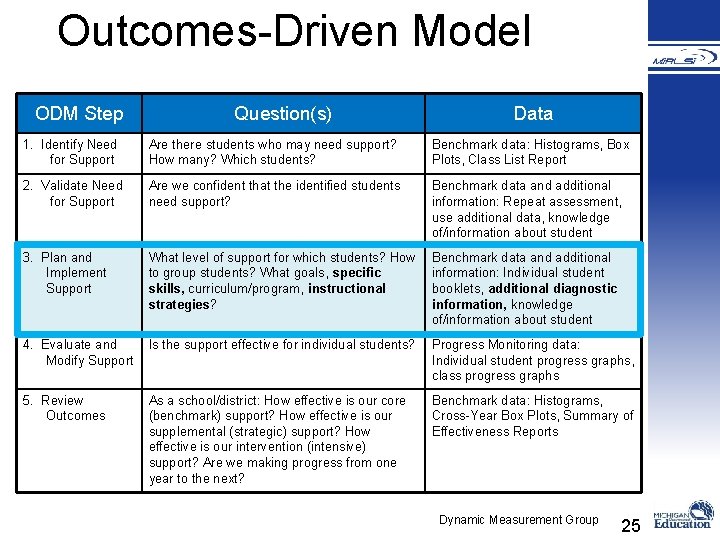

Outcomes-Driven Model

| ODM Step | Question(s) | Data |
|---|---|---|
| 1. Identify Need for Support | Are there students who may need support? How many? Which students? | Benchmark data: Histograms, Box Plots, Class List Report |
| 2. Validate Need for Support | Are we confident that the identified students need support? | Benchmark data and additional information: Repeat assessment, use additional data, knowledge of/information about student |
| 3. Plan and Implement Support | What level of support for which students? How to group students? What goals, specific skills, curriculum/program, instructional strategies? | Benchmark data and additional information: Individual student booklets, additional diagnostic information, knowledge of/information about student |
| 4. Evaluate and Modify Support | Is the support effective for individual students? | Progress Monitoring data: Individual student progress graphs, class progress graphs |
| 5. Review Outcomes | As a school/district: How effective is our core (benchmark) support? How effective is our supplemental (strategic) support? How effective is our intervention (intensive) support? Are we making progress from one year to the next? | Benchmark data: Histograms, Cross-Year Box Plots, Summary of Effectiveness Reports |

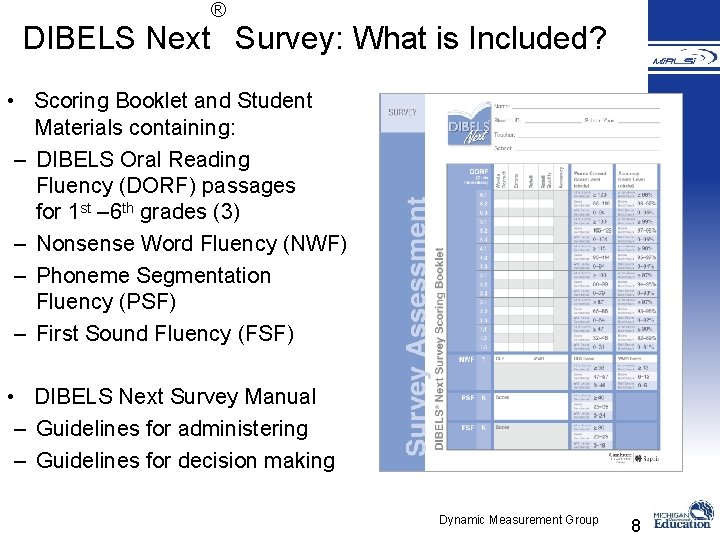

DIBELS Next Survey: What is Included?
• Scoring Booklet and Student Materials containing:
– DIBELS Oral Reading Fluency (DORF) passages for 1st–6th grades (3)
– Nonsense Word Fluency (NWF)
– Phoneme Segmentation Fluency (PSF)
– First Sound Fluency (FSF)
• DIBELS Next Survey Manual
– Guidelines for administering
– Guidelines for decision making

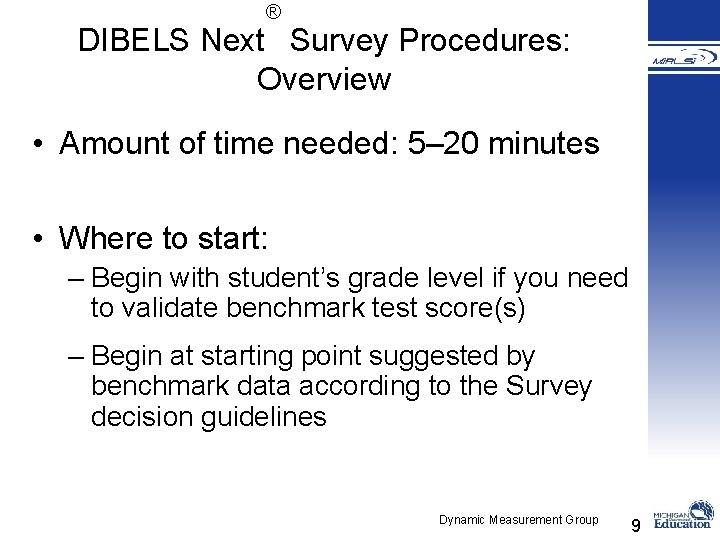

DIBELS Next Survey Procedures: Overview
• Amount of time needed: 5–20 minutes
• Where to start:
– Begin with student’s grade level if you need to validate benchmark test score(s)
– Begin at starting point suggested by benchmark data according to the Survey decision guidelines

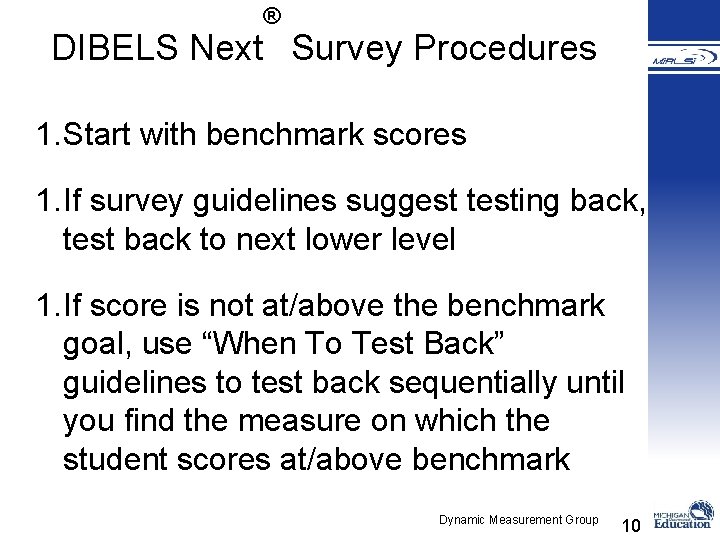

DIBELS Next Survey Procedures
1. Start with benchmark scores.
2. If the Survey guidelines suggest testing back, test back to the next lower level.
3. If the score is not at/above the benchmark goal, use the “When To Test Back” guidelines to test back sequentially until you find the measure on which the student scores at/above benchmark.

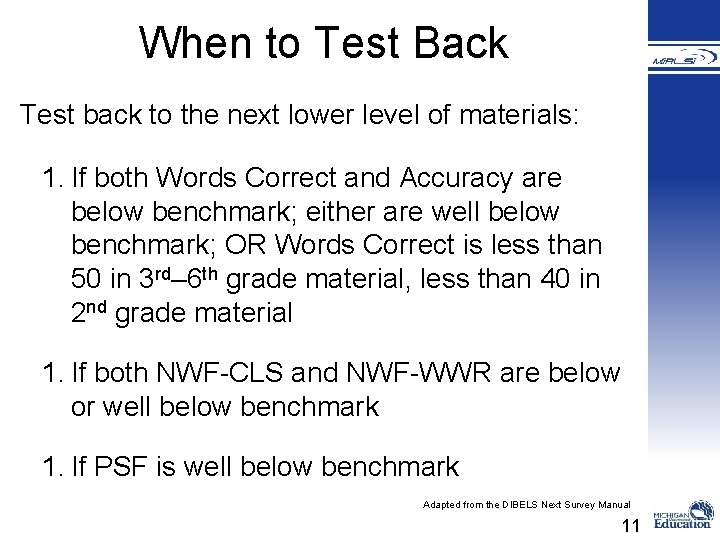

When to Test Back
Test back to the next lower level of materials:
1. If both Words Correct and Accuracy are below benchmark; either is well below benchmark; OR Words Correct is less than 50 in 3rd–6th grade material, less than 40 in 2nd grade material
2. If both NWF-CLS and NWF-WWR are below or well below benchmark
3. If PSF is well below benchmark
Adapted from the DIBELS Next Survey Manual
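The test-back rules above can be sketched as three small checks. This is only an illustration under stated assumptions: the status labels and function names are hypothetical, the benchmark status for each score is taken as an input (the slides do not list the benchmark goals), and "both below benchmark" is read as "neither score is at or above benchmark".

```python
# Hypothetical labels for the benchmark status of a score.
AT_OR_ABOVE, BELOW, WELL_BELOW = "at_or_above", "below", "well_below"


def dorf_test_back(words_correct, wc_status, accuracy_status, material_grade):
    """Return True if the DORF rule says to test back to the next lower level."""
    both_below = wc_status != AT_OR_ABOVE and accuracy_status != AT_OR_ABOVE
    either_well_below = WELL_BELOW in (wc_status, accuracy_status)
    # Raw-score floors: under 50 in 3rd-6th grade material, under 40 in 2nd grade material.
    if 3 <= material_grade <= 6:
        under_floor = words_correct < 50
    elif material_grade == 2:
        under_floor = words_correct < 40
    else:
        under_floor = False  # the slide gives no raw-score floor for 1st grade material
    return both_below or either_well_below or under_floor


def nwf_test_back(cls_status, wwr_status):
    """Test back if both NWF-CLS and NWF-WWR are below or well below benchmark."""
    return cls_status != AT_OR_ABOVE and wwr_status != AT_OR_ABOVE


def psf_test_back(psf_status):
    """Test back only if PSF is well below benchmark."""
    return psf_status == WELL_BELOW
```

For instance, a student reading 45 words correct in 3rd grade material would be tested back on the raw-score floor alone, even if both benchmark statuses were at or above.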

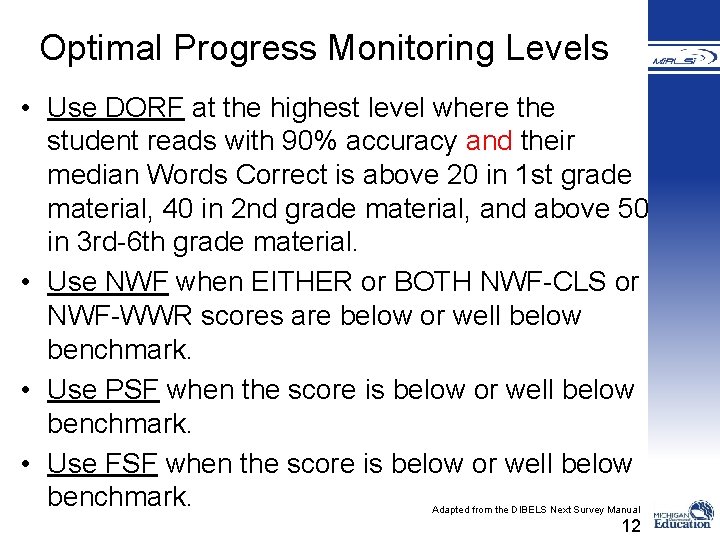

Optimal Progress Monitoring Levels
• Use DORF at the highest level where the student reads with 90% accuracy and their median Words Correct is above 20 in 1st grade material, above 40 in 2nd grade material, and above 50 in 3rd–6th grade material.
• Use NWF when EITHER or BOTH NWF-CLS or NWF-WWR scores are below or well below benchmark.
• Use PSF when the score is below or well below benchmark.
• Use FSF when the score is below or well below benchmark.
Adapted from the DIBELS Next Survey Manual
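The DORF rule above amounts to picking the highest material level that meets both criteria. A minimal sketch follows, assuming "90% accuracy" means at least 90% and that the 2nd grade threshold is read as "above 40"; the function names and the sample scores are hypothetical and not taken from the Survey Manual.

```python
from statistics import median


def wc_threshold(material_grade):
    """Median Words Correct the student must exceed at a given material level."""
    if material_grade == 1:
        return 20
    if material_grade == 2:
        return 40
    return 50  # 3rd-6th grade material


def dorf_monitoring_level(results):
    """Pick the highest DORF level meeting both criteria.

    results maps material grade -> (list of passage Words Correct, accuracy as a fraction).
    Returns None if no level qualifies (NWF, PSF, or FSF may then apply).
    """
    qualifying = [
        grade
        for grade, (passage_scores, accuracy) in results.items()
        if accuracy >= 0.90 and median(passage_scores) > wc_threshold(grade)
    ]
    return max(qualifying) if qualifying else None


# Hypothetical Survey results for one student, tested back from 3rd grade material.
sample = {
    3: ([38, 45, 41], 0.84),
    2: ([47, 52, 44], 0.93),
    1: ([60, 66, 58], 0.97),
}
level = dorf_monitoring_level(sample)
```

With these made-up scores, 3rd grade material fails the accuracy criterion, so 2nd grade material is the highest qualifying level.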

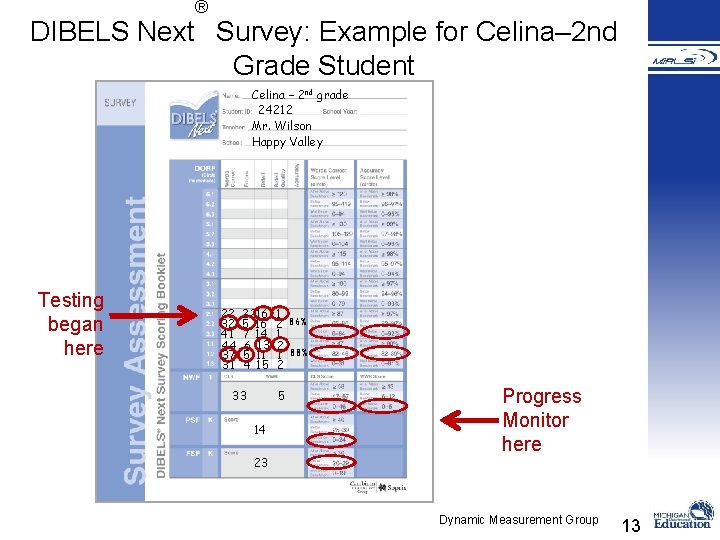

DIBELS Next Survey: Example for Celina, 2nd Grade Student
[Figure: completed DIBELS Next Survey scoring summary for Celina, 2nd grade (Mr. Wilson, Happy Valley), annotated with “Testing began here” and “Progress Monitor here”.]

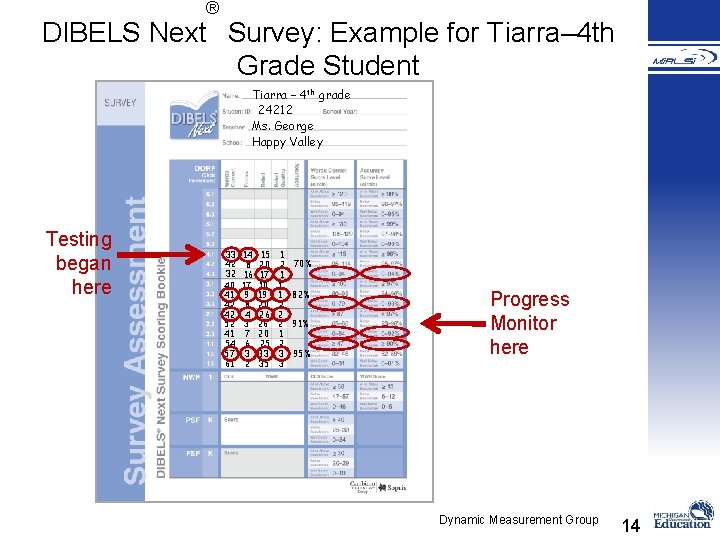

DIBELS Next Survey: Example for Tiarra, 4th Grade Student
[Figure: completed DIBELS Next Survey scoring summary for Tiarra, 4th grade (Ms. George, Happy Valley), annotated with “Testing began here” and “Progress Monitor here”.]

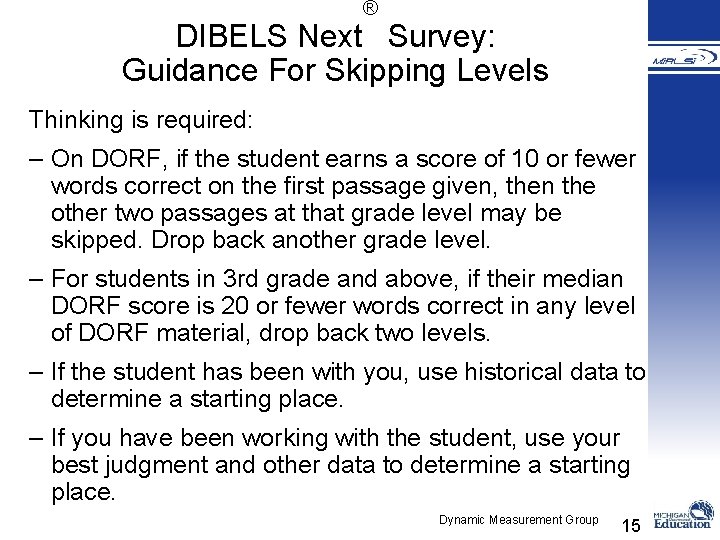

DIBELS Next Survey: Guidance For Skipping Levels
Thinking is required:
– On DORF, if the student earns a score of 10 or fewer words correct on the first passage given, then the other two passages at that grade level may be skipped. Drop back another grade level.
– For students in 3rd grade and above, if their median DORF score is 20 or fewer words correct in any level of DORF material, drop back two levels.
– If the student has been with you, use historical data to determine a starting place.
– If you have been working with the student, use your best judgment and other data to determine a starting place.
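The two numeric skipping rules above can be expressed as a short sketch. The function names are hypothetical, and stopping at level 1 is an assumption based on DORF passages covering 1st–6th grade; the judgment-based guidance (historical data, knowledge of the student) is not modeled.

```python
def skip_remaining_passages(first_passage_wc):
    """True if the other two passages at this level may be skipped (10 or fewer words correct)."""
    return first_passage_wc <= 10


def next_dorf_level(current_level, student_grade, median_wc):
    """Return the next, lower DORF material level to test.

    Drops two levels when a student in 3rd grade or above has a median of
    20 or fewer words correct; otherwise drops one level.
    Clamping at level 1 is an assumption, not a rule from the slide.
    """
    step = 2 if student_grade >= 3 and median_wc <= 20 else 1
    return max(1, current_level - step)
```

For example, a 5th grader with a median of 18 words correct in 5th grade material would next be tested in 3rd grade material.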

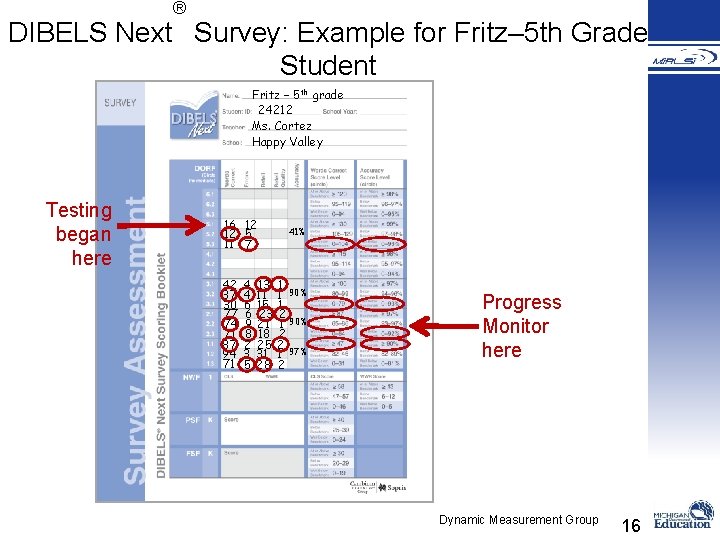

DIBELS Next Survey: Example for Fritz, 5th Grade Student
[Figure: completed DIBELS Next Survey scoring summary for Fritz, 5th grade (Ms. Cortez, Happy Valley), annotated with “Testing began here” and “Progress Monitor here”.]

Goal Setting
1. Determine the goal based on the progress monitoring level and the end-of-year benchmark goal for that level (e.g., 87 wcpm with 97% accuracy in second grade DORF).
2. Set the goal date so that the goal is achieved in half the time in which it would usually be achieved (e.g., move the end-of-year benchmark goal to be achieved by the middle-of-year benchmark time).
3. Draw an aimline connecting the current performance to the goal.
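The aimline in step 3 is a straight line from current performance to the goal, so the expected score at each weekly check can be computed directly. The sketch below is illustrative only: the function name, the starting score of 47, and the 16-week window are hypothetical; only the 87 wcpm goal comes from the example above.

```python
def aimline(current_score, goal_score, weeks_to_goal):
    """Expected score at week 0, 1, ..., weeks_to_goal on a straight line to the goal."""
    if weeks_to_goal <= 0:
        raise ValueError("weeks_to_goal must be positive")
    weekly_gain = (goal_score - current_score) / weeks_to_goal
    return [current_score + weekly_gain * week for week in range(weeks_to_goal + 1)]


# Hypothetical student: 47 wcpm now, goal of 87 wcpm in 16 weeks.
line = aimline(47, 87, 16)
```

Progress monitoring scores that fall consistently below the corresponding aimline values suggest the support may need to be modified (Step 4 of the Outcomes-Driven Model).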

Activity
With your partner: Look back at the three examples where we determined the level for progress monitoring. Determine a realistic goal for each student.

Decision Making with DIBELS Next Survey
Data from DIBELS Next Survey can be used to make the following decisions for students:
1. Determine instructional level for intervention
2. Determine level for progress monitoring
3. Set realistic progress goals

Partner Processing
With your partner discuss the following questions:
1. Have you used a test back procedure for students, either with DIBELS 6th Edition, Next, or another assessment?
2. How can DIBELS Next Survey fit into the assessment system at your school? Who will need to be trained?

DIBELS Deep: In-Depth Diagnostic Assessment of Literacy Skills

Purpose
• Provide educators with brief diagnostic assessments for students below benchmark that are:
– Cost effective
– Time efficient
– Comprehensive enough to provide specific information
– User friendly
– Adaptable across instructional settings
– Aligned with the five essential components of effective reading
– Aligned with the Common Core

DIBELS Deep Answers…
• What specific skills need to be taught?
• What instructional strategies might be used?

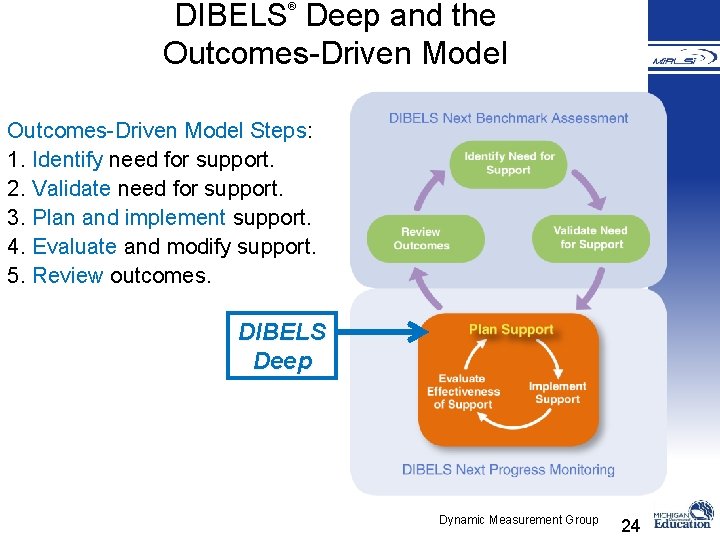

DIBELS Deep and the Outcomes-Driven Model
Outcomes-Driven Model Steps:
1. Identify need for support.
2. Validate need for support.
3. Plan and implement support.
4. Evaluate and modify support.
5. Review outcomes.
(Diagram label: DIBELS Deep)


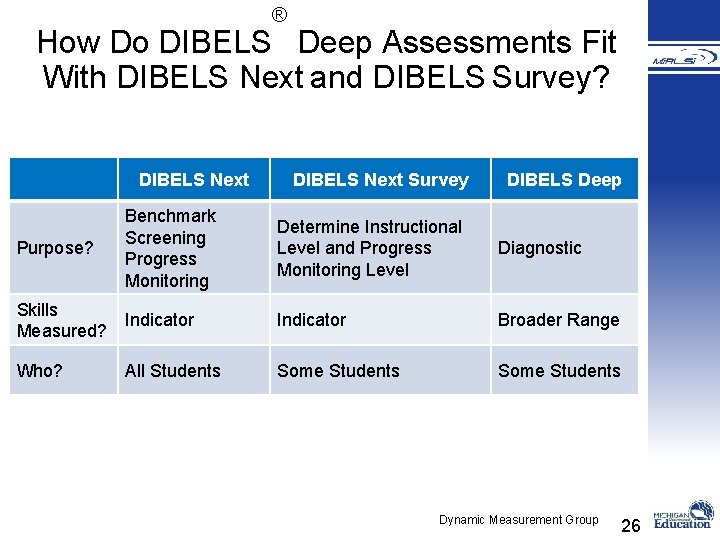

How Do DIBELS Deep Assessments Fit With DIBELS Next and DIBELS Survey?
Compared: DIBELS Next, Survey, DIBELS Deep
• Purpose? Benchmark Screening, Progress Monitoring; Determine Instructional Level and Progress Monitoring Level; Diagnostic
• Skills Measured? Indicator; Broader Range
• Who? All Students; Some Students

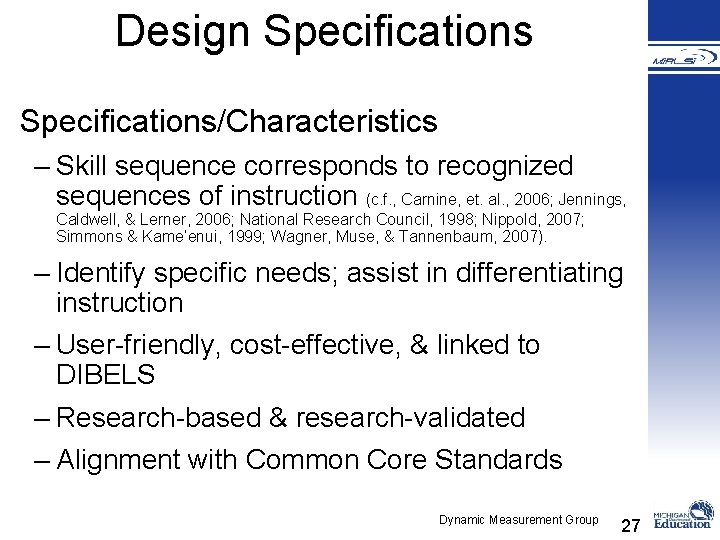

Design Specifications/Characteristics
– Skill sequence corresponds to recognized sequences of instruction (cf. Carnine et al., 2006; Jennings, Caldwell, & Lerner, 2006; National Research Council, 1998; Nippold, 2007; Simmons & Kame’enui, 1999; Wagner, Muse, & Tannenbaum, 2007).
– Identify specific needs; assist in differentiating instruction
– User-friendly, cost-effective, & linked to DIBELS
– Research-based & research-validated
– Alignment with Common Core Standards

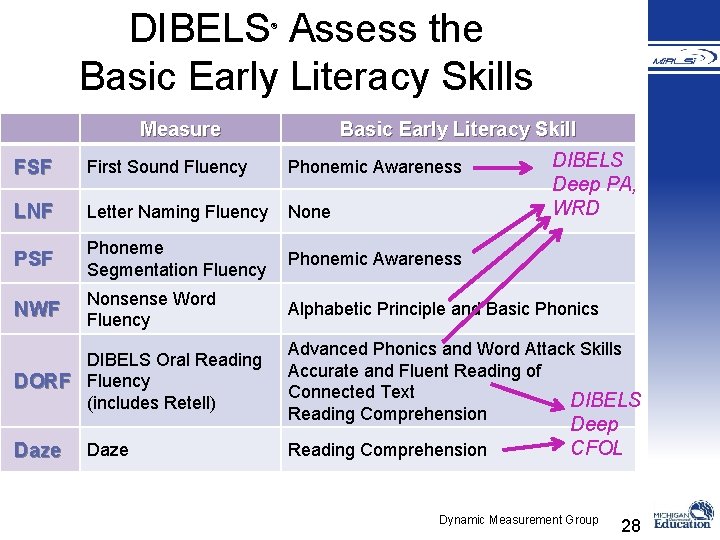

DIBELS Assess the Basic Early Literacy Skills

| Measure | Basic Early Literacy Skill |
|---|---|
| FSF: First Sound Fluency | Phonemic Awareness |
| LNF: Letter Naming Fluency | None |
| PSF: Phoneme Segmentation Fluency | Phonemic Awareness |
| NWF: Nonsense Word Fluency | Alphabetic Principle and Basic Phonics |
| DORF: DIBELS Oral Reading Fluency (includes Retell); Daze | Advanced Phonics and Word Attack Skills; Accurate and Fluent Reading of Connected Text; Reading Comprehension |

DIBELS Deep assessments shown alongside these skills: DIBELS Deep PA, WRD; DIBELS Deep CFOL.

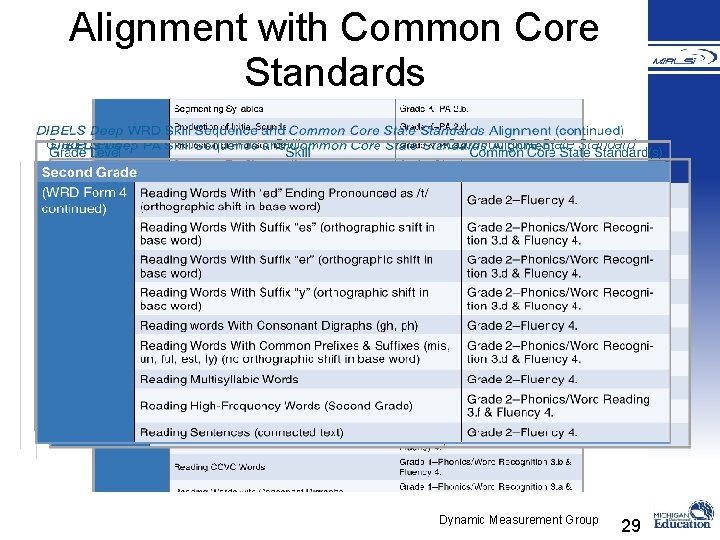

Alignment with Common Core Standards
[Table with columns: Grade Level, Skill, Common Core State Standard]

What is Included in this Edition of DIBELS Deep?
• DIBELS Deep Assessment Manual
– Directions for administering & scoring
– Case study examples
• DIBELS Deep Phonemic Awareness (PA)
– Assessment Book
– Scoring Sheet
• DIBELS Deep Word Reading and Decoding (WRD)
– Assessment Book
– Scoring Sheets for WRD Quick Screen & WRD Forms 1–5

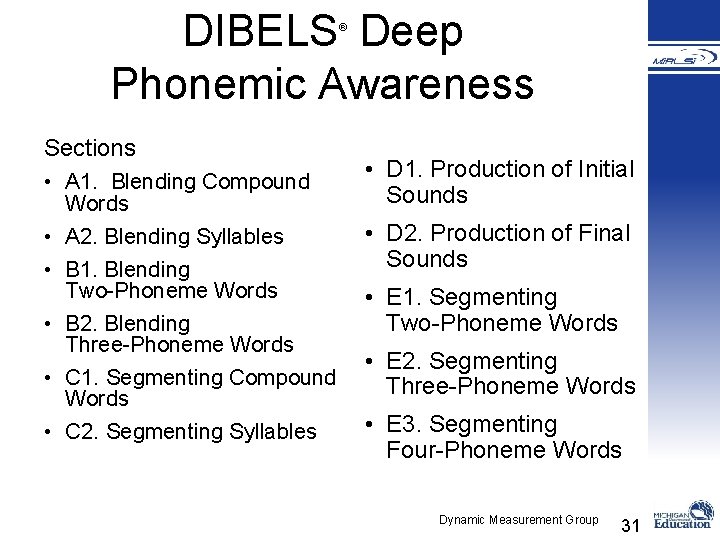

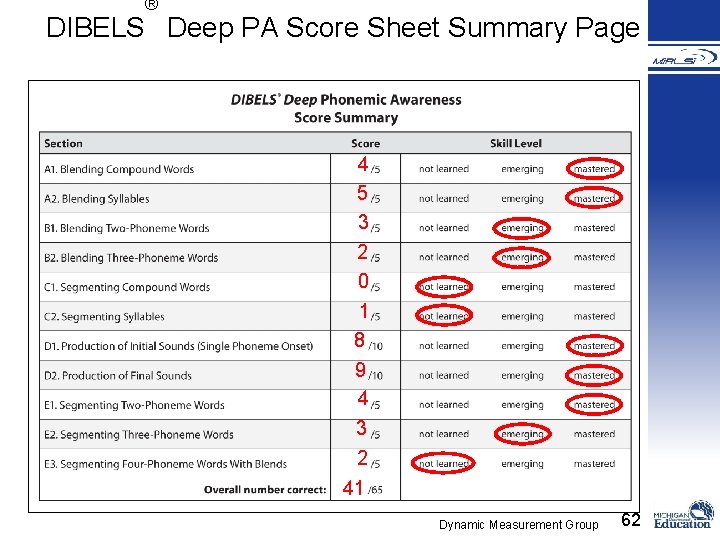

DIBELS Deep Phonemic Awareness Sections
• A1. Blending Compound Words
• A2. Blending Syllables
• B1. Blending Two-Phoneme Words
• B2. Blending Three-Phoneme Words
• C1. Segmenting Compound Words
• C2. Segmenting Syllables
• D1. Production of Initial Sounds
• D2. Production of Final Sounds
• E1. Segmenting Two-Phoneme Words
• E2. Segmenting Three-Phoneme Words
• E3. Segmenting Four-Phoneme Words

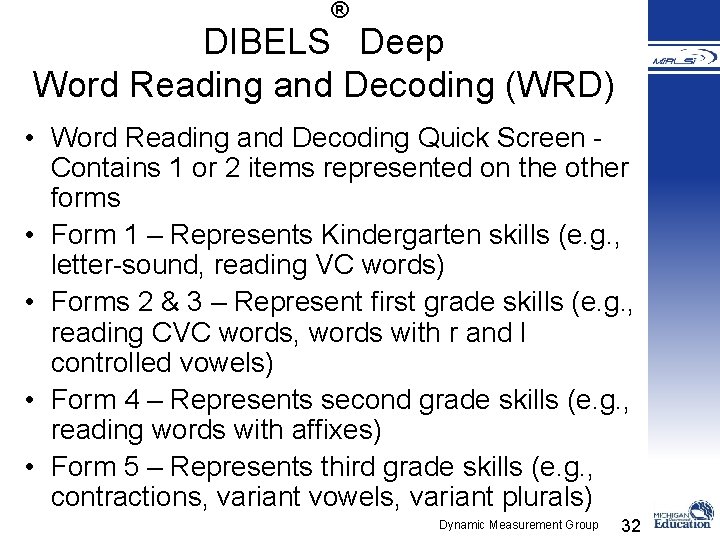

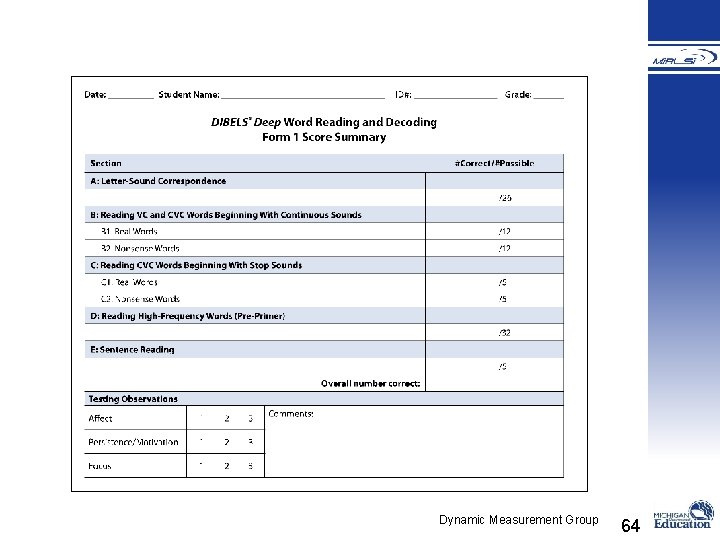

DIBELS Deep Word Reading and Decoding (WRD)
• Word Reading and Decoding Quick Screen: Contains 1 or 2 items represented on the other forms
• Form 1: Represents Kindergarten skills (e.g., letter-sound, reading VC words)
• Forms 2 & 3: Represent first grade skills (e.g., reading CVC words, words with r- and l-controlled vowels)
• Form 4: Represents second grade skills (e.g., reading words with affixes)
• Form 5: Represents third grade skills (e.g., contractions, variant vowels, variant plurals)

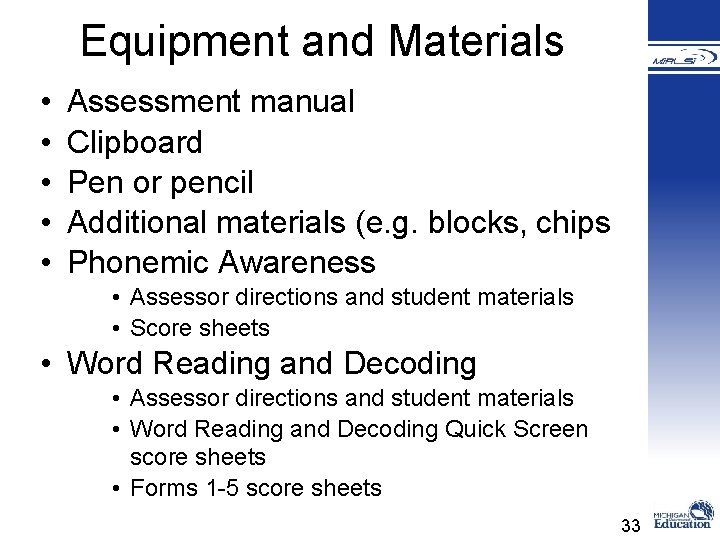

Equipment and Materials
• Assessment manual
• Clipboard
• Pen or pencil
• Additional materials (e.g., blocks, chips)
• Phonemic Awareness
– Assessor directions and student materials
– Score sheets
• Word Reading and Decoding
– Assessor directions and student materials
– Word Reading and Decoding Quick Screen score sheets
– Forms 1–5 score sheets

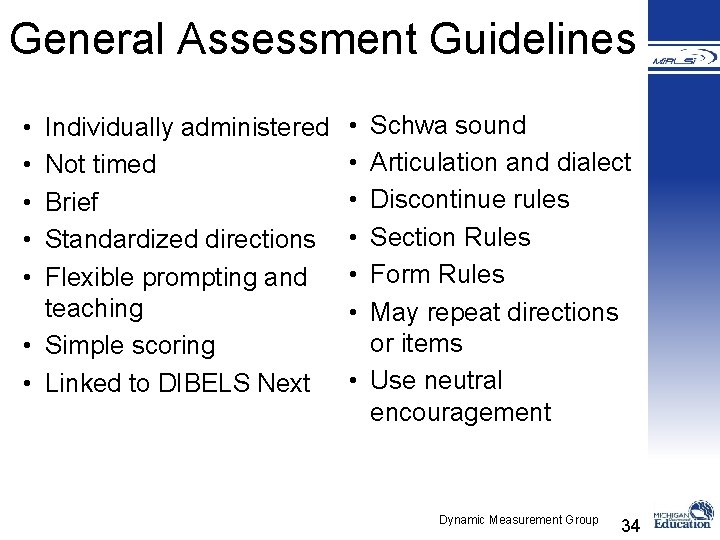

General Assessment Guidelines
• Individually administered
• Not timed
• Brief
• Standardized directions
• Flexible prompting and teaching
• Simple scoring
• Linked to DIBELS Next
• Schwa sound
• Articulation and dialect
• Discontinue rules
– Section Rules
– Form Rules
• May repeat directions or items
• Use neutral encouragement

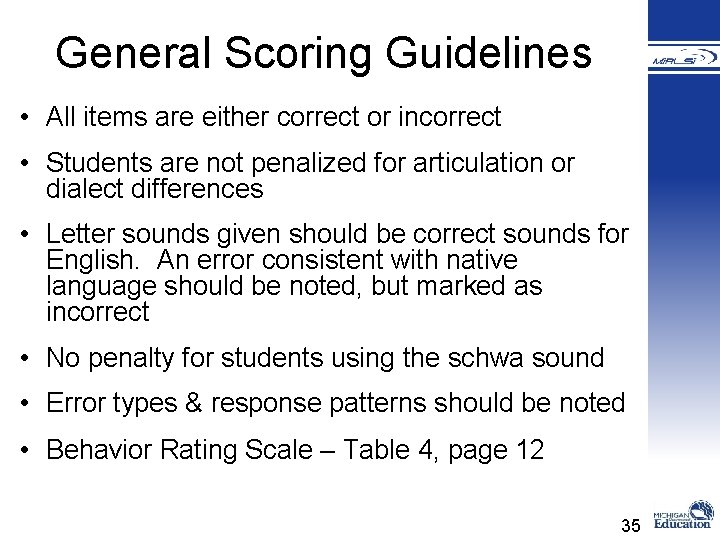

General Scoring Guidelines
• All items are either correct or incorrect
• Students are not penalized for articulation or dialect differences
• Letter sounds given should be correct sounds for English. An error consistent with native language should be noted, but marked as incorrect
• No penalty for students using the schwa sound
• Error types & response patterns should be noted
• Behavior Rating Scale – Table 4, page 12

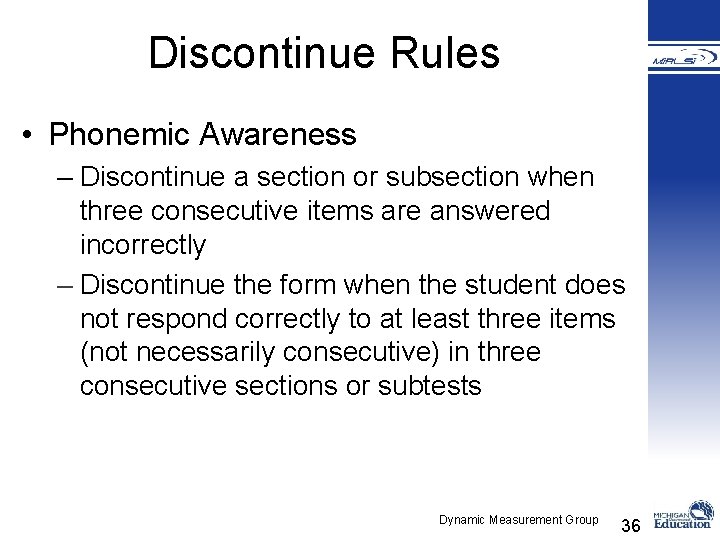

Discontinue Rules
• Phonemic Awareness
– Discontinue a section or subsection when three consecutive items are answered incorrectly
– Discontinue the form when the student does not respond correctly to at least three items (not necessarily consecutive) in three consecutive sections or subtests

DIBELS Deep WRD General Discontinue Rules
• Quick Screen: 5 consecutive items incorrect
• Section Discontinue Rule: 3 consecutive items incorrect
• Form Discontinue Rule: Student does not get at least 3 items correct (not necessarily consecutively) within 3 consecutive sections
• Some sections have slightly different discontinue rules:
– High Frequency Words
– Sentence Reading
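The consecutive-error rules (3 in a row for a section, 5 in a row for the Quick Screen) share the same logic, which can be sketched as a single helper. The function and constant names are hypothetical; the form-level rule and the special rules for High Frequency Words and Sentence Reading are not modeled here.

```python
SECTION_LIMIT = 3       # section or subsection: 3 consecutive incorrect items
QUICK_SCREEN_LIMIT = 5  # WRD Quick Screen: 5 consecutive incorrect items


def discontinue_index(responses, limit):
    """Return the index of the item at which testing stops, or None if the rule is never met.

    responses is a sequence of booleans (True = correct) in administration order.
    """
    run = 0  # current streak of incorrect responses
    for index, correct in enumerate(responses):
        run = 0 if correct else run + 1
        if run == limit:
            return index
    return None
```

For example, `discontinue_index([True, False, False, False, True], SECTION_LIMIT)` stops at the fourth item (index 3), so the fifth item would not be administered.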

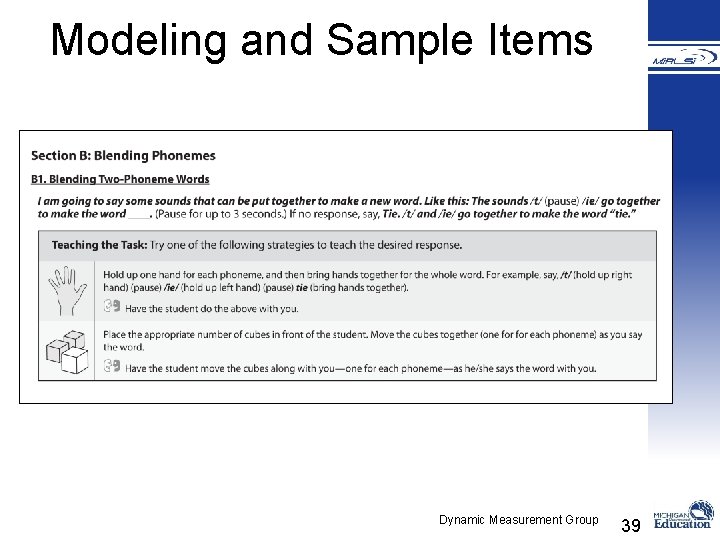

Administration and Scoring
• Modeling and Sample Items
– Intended to ensure students understand the task and what is expected
– Must be presented exactly as stated in the administration directions
• Repeating Directions
– Directions may be repeated if you believe that the student did not hear or understand the directions, a practice item, or a test item

Modeling and Sample Items

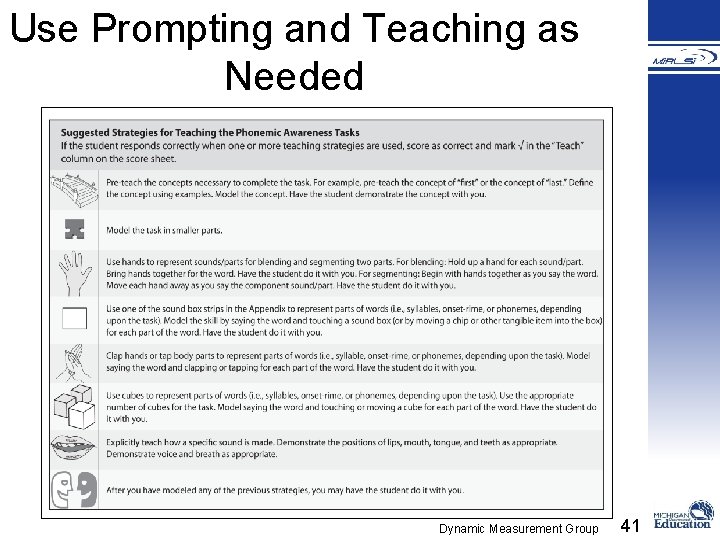

Prompting and Teaching
• Suggested prompts and teaching strategies: page 16 in assessment manual
• Additional strategies may be used, such as those consistent with the core instructional program
• Always make note of any prompts or teaching strategies used, as this information may be useful in planning instruction
– Check the “Prompt/Teach” column on score sheet
– Make note of the type of teaching strategy in the “Notes” section

Use Prompting and Teaching as Needed

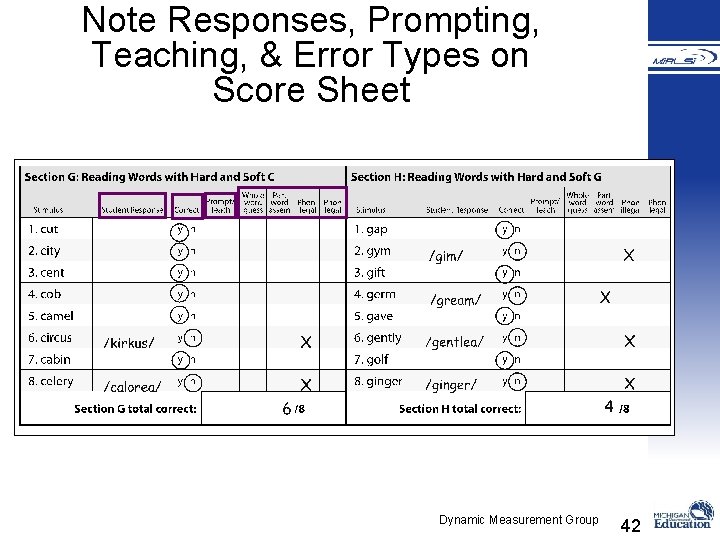

Note Responses, Prompting, Teaching, & Error Types on Score Sheet

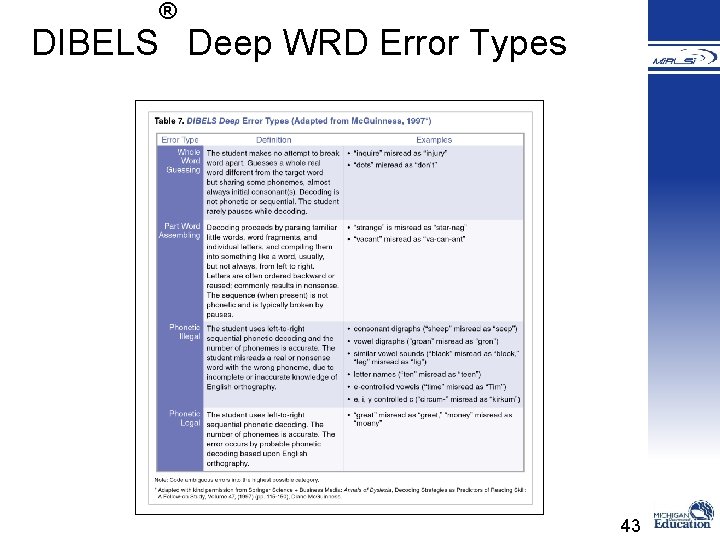

DIBELS Deep WRD Error Types

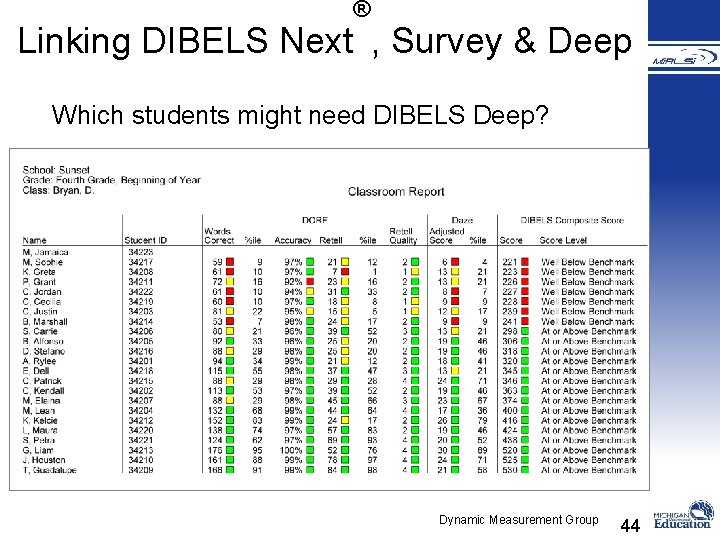

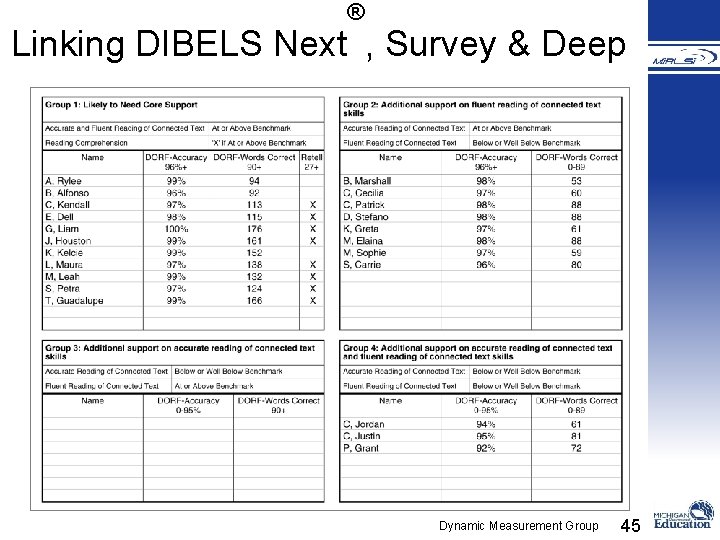

Linking DIBELS Next, Survey & Deep
Which students might need DIBELS Deep?

Linking DIBELS Next, Survey & Deep

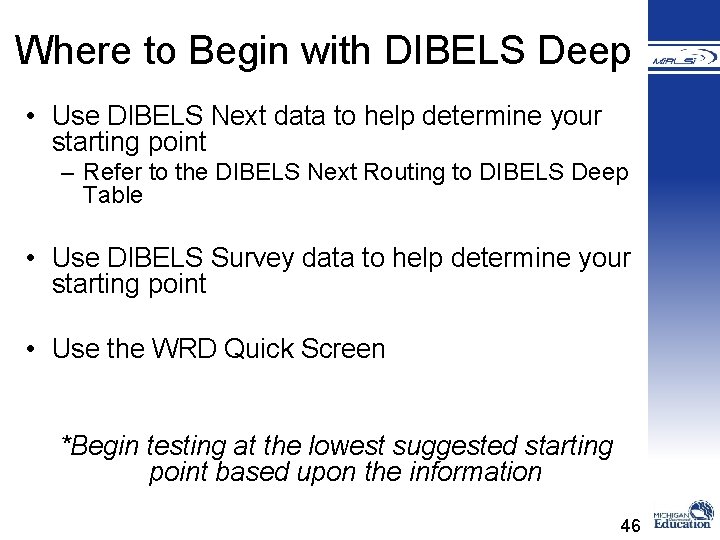

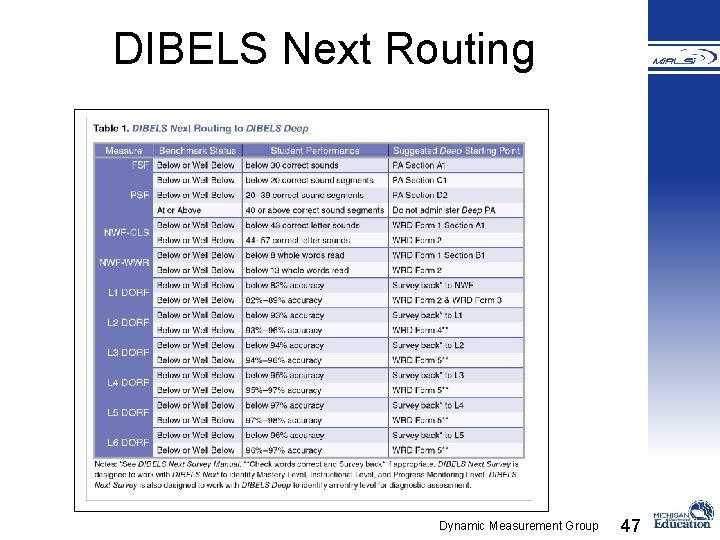

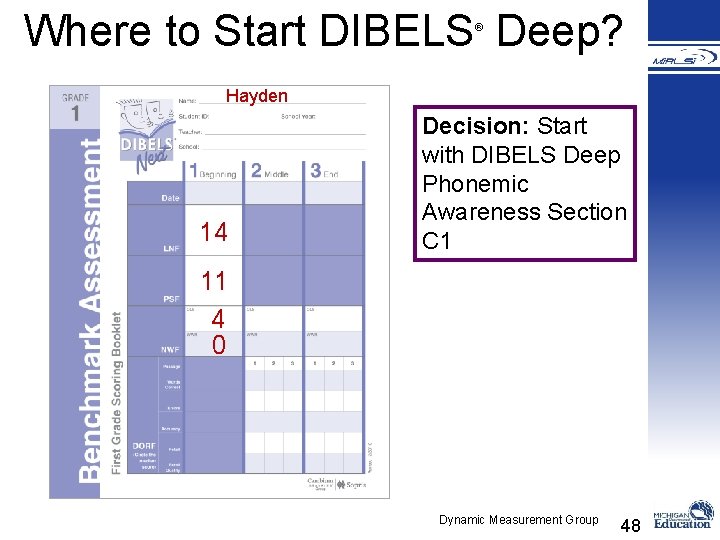

Where to Begin with DIBELS Deep
• Use DIBELS Next data to help determine your starting point
– Refer to the DIBELS Next Routing to DIBELS Deep Table
• Use DIBELS Survey data to help determine your starting point
• Use the WRD Quick Screen
*Begin testing at the lowest suggested starting point based upon the information

DIBELS Next Routing

Where to Start DIBELS Deep?
[Figure: score summary for Hayden; values shown: 14, 11, 4, 0]
Decision: Start with DIBELS Deep Phonemic Awareness, Section C1

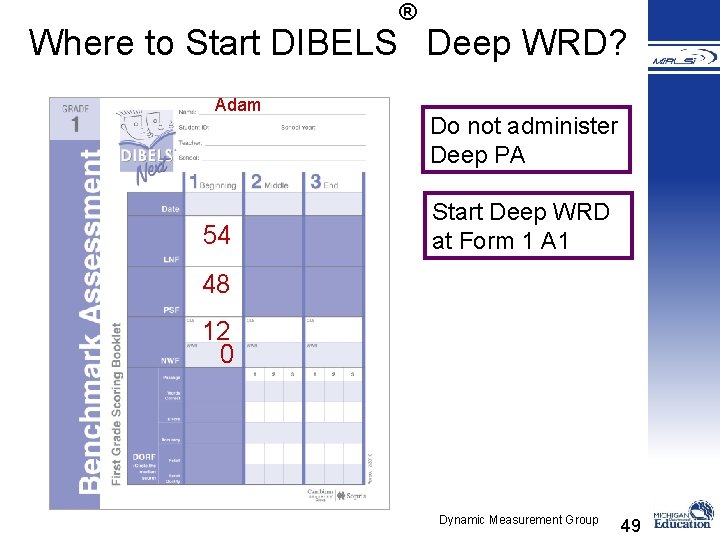

Where to Start DIBELS Deep WRD?
[Figure: score summary for Adam; values shown: 54, 48, 12, 0]
Decision: Do not administer Deep PA. Start Deep WRD at Form 1, A1

Using DIBELS Next Survey to Determine Starting Point

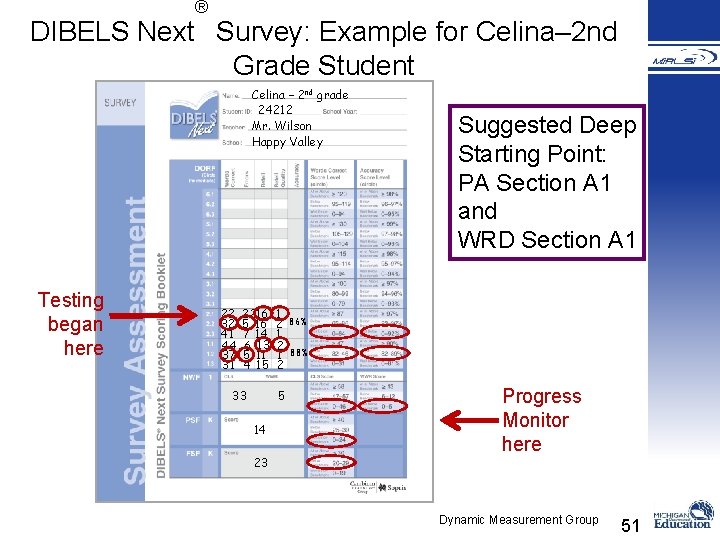

DIBELS Next Survey: Example for Celina, 2nd Grade Student
[Figure: completed DIBELS Next Survey scoring summary for Celina, 2nd grade (Mr. Wilson, Happy Valley), annotated with “Testing began here” and “Progress Monitor here”.]
Suggested Deep Starting Point: PA Section A1 and WRD Section A1

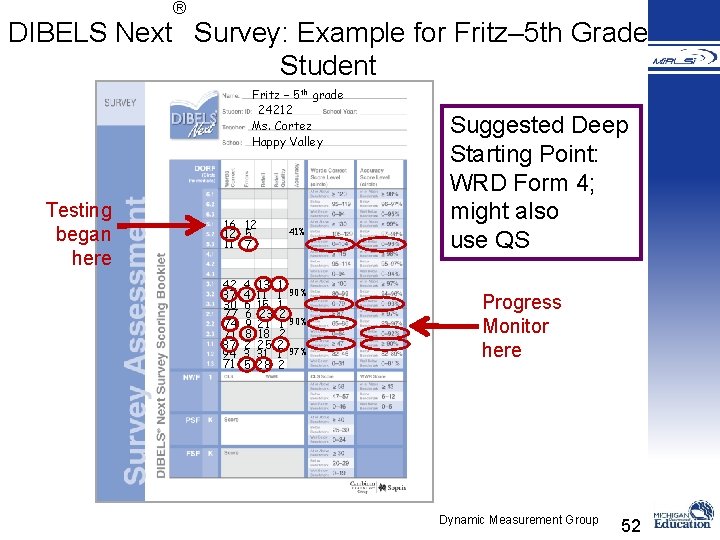

DIBELS Next Survey: Example for Fritz, 5th Grade Student
[Figure: completed DIBELS Next Survey scoring summary for Fritz, 5th grade (Ms. Cortez, Happy Valley), annotated with “Testing began here” and “Progress Monitor here”.]
Suggested Deep Starting Point: WRD Form 4; might also use the Quick Screen (QS)

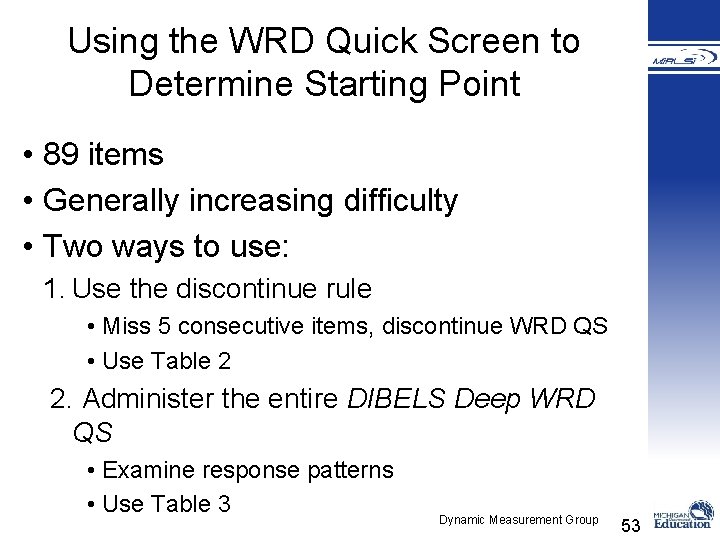

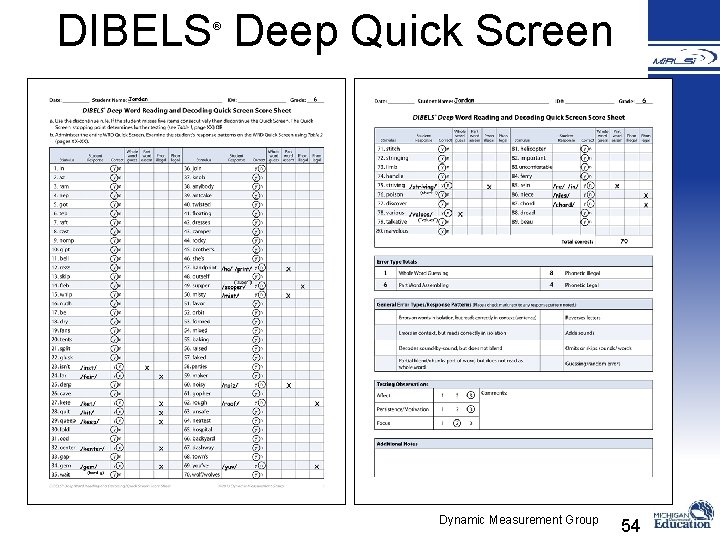

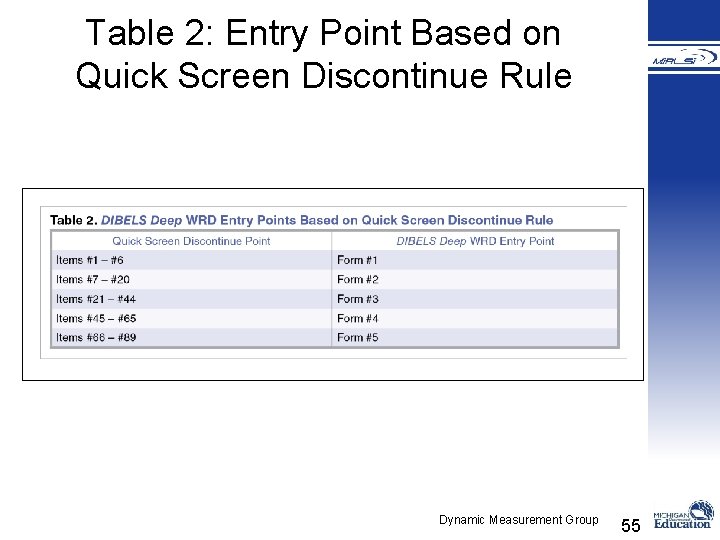

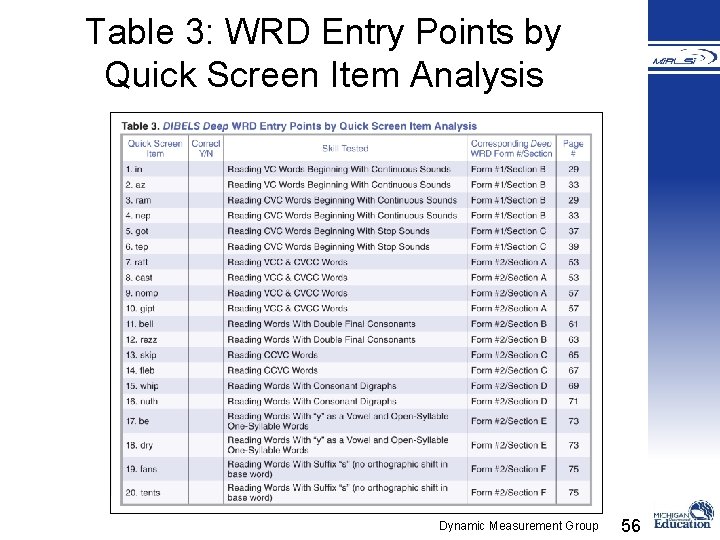

Using the WRD Quick Screen to Determine Starting Point
• 89 items
• Generally increasing difficulty
• Two ways to use:
1. Use the discontinue rule
– Miss 5 consecutive items, discontinue WRD QS
– Use Table 2
2. Administer the entire DIBELS Deep WRD QS
– Examine response patterns
– Use Table 3

DIBELS Deep Quick Screen

Table 2: Entry Point Based on Quick Screen Discontinue Rule

Table 3: WRD Entry Points by Quick Screen Item Analysis

Partner Processing
With your partner, review the three ways to determine the starting point for DIBELS Deep.
1. Partner 1 teach your partner how to use DIBELS Next data to determine the starting point.
2. Partner 2 teach how to use DIBELS Next Survey.
3. Partner 1 teach how to use the DIBELS Deep Quick Screen.

Appropriate Use of DIBELS Deep
• Designed for making instructional decisions
– What specific skills need to be taught?
– What instructional strategies might be used?
• Not designed for making high-stakes decisions (retention, grades, special education eligibility, teacher evaluation)

Data Interpretation
• Skills Mastered
• Skills Emerging
• Skills Not Learned
• Instructional Implications

General Interpretation: Skill Level Definitions
Scores 0–1 out of 5; or 0–3 out of 10
• The student likely has not yet learned the skill.
• Circle “not learned” as the Skill Level.
• Instructional implication: The student needs to be taught the skill.
Scores 2–3 out of 5; or 4–7 out of 10
• The student's skills are still emerging.
• Circle “emerging” under Skill Level.
• Instructional implication: Re-teaching or review of the skill, in addition to practice with feedback, is needed.

General Interpretation: Skill Level Definitions
Scores 4–5 out of 5; or 8–10 out of 10
• The student has mastered the skills.
• Circle “mastered” under Skill Level.
• Instructional implication: The student is ready to learn more advanced skills.
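The score ranges on the Skill Level Definitions slides map directly onto a small lookup. The sketch below assumes sections of exactly 5 or 10 items, since those are the only totals for which the slides give cutoffs; the function name and the cutoff table layout are hypothetical.

```python
# total items -> (highest "not learned" score, highest "emerging" score)
CUTOFFS = {5: (1, 3), 10: (3, 7)}


def skill_level(correct, total):
    """Classify a section score as "not learned", "emerging", or "mastered"."""
    if total not in CUTOFFS:
        raise ValueError("cutoffs are defined only for 5- and 10-item sections")
    if not 0 <= correct <= total:
        raise ValueError("correct must be between 0 and total")
    not_learned_max, emerging_max = CUTOFFS[total]
    if correct <= not_learned_max:
        return "not learned"
    if correct <= emerging_max:
        return "emerging"
    return "mastered"
```

For example, `skill_level(3, 5)` returns `"emerging"`, and `skill_level(8, 10)` returns `"mastered"`.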

DIBELS Deep PA Score Sheet Summary Page
[Figure: completed DIBELS Deep PA score sheet summary page]

General Interpretation: Skill Level Definitions
• Mostly incorrect responses/meets discontinue rule
– The student likely has not yet learned the skill
– Instructional implication: The student needs to be taught the skill
• Mixture of correct and incorrect responses
– The student’s skills are still emerging
– Instructional implication: Re-teaching or review of the skill, in addition to practice with feedback, is needed
• Correct responses for most items
– The student has mastered the skills in that section
– Instructional implication: The student is ready to learn more advanced skills

Sample DIBELS Deep WRD Score Sheet Summary Pages

Training on DIBELS Deep
• Ideally, users of DIBELS Deep will have also received training on DIBELS Next
• Training on DIBELS Deep should include:
– Research on learning to read and the basic early literacy skills
– Purposes, design, and use of DIBELS Deep
– Administration and scoring
– Making instructional decisions with DIBELS Deep

DIBELS Deep Logistics
• Copyright
• Purchasing DIBELS Deep
– http://www.soprislearning.com
– $132.95
• How many kits to order?
• How often to give?
• Parent permission?
• Storing materials?
• Reporting results?

Partner Processing
With your partner discuss the following questions:
1. How can DIBELS Deep fit into the assessment system at your school? Who will need to be trained?

DIBELS Deep Comprehension, Fluency & Oral Language (CFOL)
• Story Coherence
• Listening Comprehension
• Reading Comprehension (Retell & Summarizing)
• Syntax
• Morphological awareness
• Grammar
• Figurative Language
• Vocabulary


STATEMENT OF COMPLIANCE WITH FEDERAL LAW
The Michigan Department of Education (MDE) complies with all federal laws and regulations prohibiting discrimination, and with all requirements of the U.S. Department of Education (USED).

STATEMENT OF FUNDING
This document was produced and distributed through an Individuals with Disabilities Education Act (IDEA) Mandated Activities Project (MAP) for Michigan’s Integrated Behavior and Learning Support Initiative (MiBLSi) awarded by the Michigan Department of Education (MDE). The opinions expressed herein do not necessarily reflect the position or policy of the MDE, Michigan State Board of Education (SBE) or the U.S. Department of Education (USED), and no endorsement is inferred. This document is in the public domain and may be copied for further distribution when proper credit is given.

COMPLIANCE WITH TITLE IX
Title IX of the Education Amendments of 1972 is the landmark federal law that bans sex discrimination in schools, whether it is in curricular, extra-curricular or athletic activities. Title IX states: “No person in the U.S. shall, on the basis of sex be excluded from participation in, or denied the benefits of, or be subject to discrimination under any educational program or activity receiving federal aid.” The Michigan Department of Education (MDE) is in compliance with Title IX of the Education Amendments of 1972, as amended, 20 U.S.C. 1681 et seq. (Title IX), and its implementing regulation, at 34 C.F.R. Part 106, which prohibits discrimination based on sex. The MDE, as a recipient of federal financial assistance from the U.S. Department of Education (USED), is subject to the provisions of Title IX. The MDE does not discriminate based on gender in employment or in any educational program or activity that it operates.

State Board of Education
John C. Austin, President
Casandra E. Ulbrich, Vice President
Nancy Danhof, Secretary
Marianne Yared McGuire, Treasurer
Richard Zeile, NASBE Delegate
Kathleen N. Straus
Daniel Varner
Eileen Lappin Weiser

Ex-Officio
Rick Snyder, Governor
Michael P. Flanagan, Superintendent of Public Instruction

For inquiries and complaints regarding Title IX, contact: Ms. Norma Tims, Office of Career and Technical Education, Michigan Department of Education, Hannah Building, 608 West Allegan, P.O. Box 30008, Lansing, MI 48909.
