HPC Challenge 2007 IBM Research HPC Challenge 07

HPC Challenge 07: X10

Vijay Saraswat, vijay@saraswat.org
November 13, 2007
IBM Research

This material is based upon work supported by the Defense Advanced Research Projects Agency under its Agreement No. HR0011-07-9-0002.

© 2007 IBM Corporation

Teams

§ Application
– Tong Wen (WAT)
– Mark Stephenson (AUS)
– Vipin Sachdeva (AUS)
– Guojing Cong (WAT)

§ Runtime
– Sreedhar Kodali (ISL)
– Ganesh Bikshandi (ISL)
– Sriram Krishnamurthy (WAT)
– Sayantan Sur (WAT)

§ Compiler
– Igor Peshansky (WAT)
– Krishna Venkata (IRL)
– Pradeep Varma (IRL)
– Nathaniel Nystrom (WAT)

§ BG Port
– Jose Castanos (WAT)

§ ARMCI Port
– Vinod Tipparaju (PNNL)

Special thanks to Calin Cascaval, John Gunnels and Doug Lea.

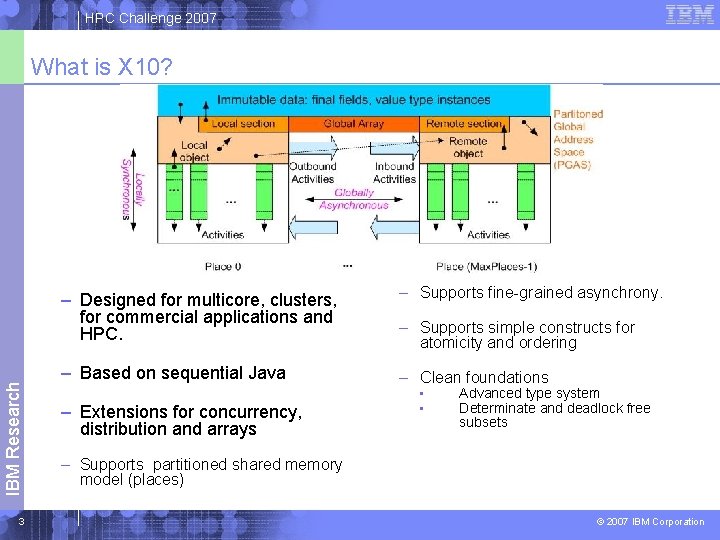

What is X10?

– Designed for multicore, clusters, for commercial applications and HPC.
– Supports fine-grained asynchrony.
– Based on sequential Java
– Clean foundations
– Extensions for concurrency, distribution and arrays
– Supports simple constructs for atomicity and ordering
• Advanced type system
• Determinate and deadlock-free subsets
– Supports partitioned shared memory model (places)

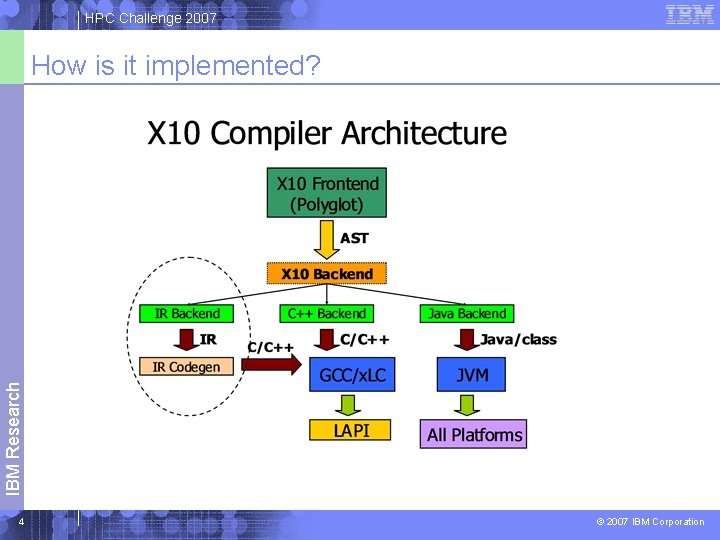

How is it implemented?

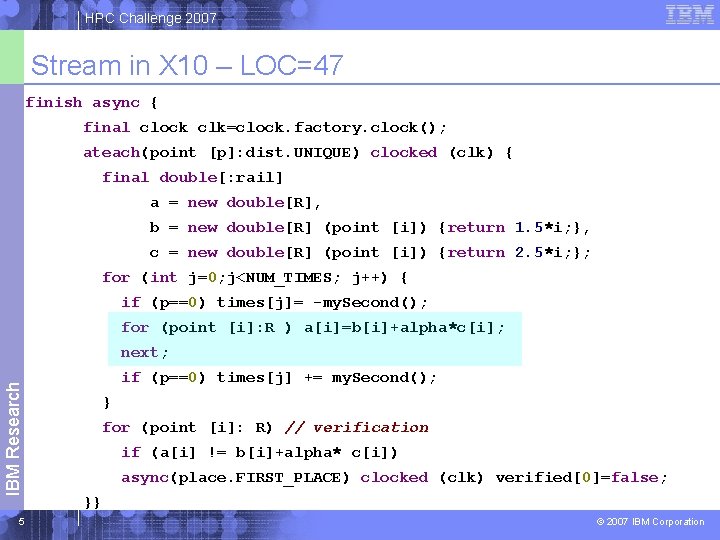

Stream in X10 – LOC=47

    finish async {
      final clock clk = clock.factory.clock();
      ateach (point [p] : dist.UNIQUE) clocked (clk) {
        final double[:rail] a = new double[R],
                            b = new double[R] (point [i]) { return 1.5*i; },
                            c = new double[R] (point [i]) { return 2.5*i; };
        for (int j=0; j<NUM_TIMES; j++) {
          if (p==0) times[j] = -mySecond();
          for (point [i] : R) a[i] = b[i] + alpha*c[i];
          next;
          if (p==0) times[j] += mySecond();
        }
        for (point [i] : R) // verification
          if (a[i] != b[i] + alpha*c[i])
            async (place.FIRST_PLACE) clocked (clk) verified[0] = false;
      }
    }
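For readers without an X10 toolchain, the triad kernel above can be approximated in plain Java. This is only a sketch under stated assumptions, not the benchmark code: threads stand in for places, a `CyclicBarrier` plays the role of the clock's `next`, `Thread.join` plays the role of `finish`, and `PLACES`, `R`, `NUM_TIMES` and `ALPHA` are illustrative values that do not come from the slides.

```java
import java.util.concurrent.CyclicBarrier;
import java.util.concurrent.atomic.AtomicBoolean;

class StreamTriadSketch {
    static final int PLACES = 4;       // assumed stand-in for the number of X10 places
    static final int R = 1 << 16;      // assumed per-place vector length
    static final int NUM_TIMES = 5;    // assumed repetition count
    static final double ALPHA = 3.0;   // assumed scalar

    /** Runs the triad on PLACES threads; returns true if every element verifies. */
    static boolean run(double[] times) {
        final CyclicBarrier clk = new CyclicBarrier(PLACES);   // plays the role of the clock
        final AtomicBoolean verified = new AtomicBoolean(true);
        Thread[] workers = new Thread[PLACES];
        for (int t = 0; t < PLACES; t++) {
            final int p = t;
            workers[t] = new Thread(() -> {
                double[] a = new double[R], b = new double[R], c = new double[R];
                for (int i = 0; i < R; i++) { b[i] = 1.5 * i; c[i] = 2.5 * i; }
                try {
                    for (int j = 0; j < NUM_TIMES; j++) {
                        if (p == 0) times[j] = -System.nanoTime() * 1e-9;
                        for (int i = 0; i < R; i++) a[i] = b[i] + ALPHA * c[i];
                        clk.await();   // like `next`: wait until all workers finish this pass
                        if (p == 0) times[j] += System.nanoTime() * 1e-9;
                    }
                } catch (Exception e) {   // interrupted or broken barrier
                    verified.set(false);
                    return;
                }
                for (int i = 0; i < R; i++)   // verification, as on the slide
                    if (a[i] != b[i] + ALPHA * c[i]) verified.set(false);
            });
            workers[t].start();
        }
        try {
            for (Thread w : workers) w.join();   // like `finish`
        } catch (InterruptedException e) {
            return false;
        }
        return verified.get();
    }

    public static void main(String[] args) {
        double[] times = new double[NUM_TIMES];
        boolean ok = run(times);
        double best = Double.MAX_VALUE;
        for (double t : times) best = Math.min(best, t);
        // The triad touches 3 doubles (24 bytes) per element per place.
        double gbPerSec = 24.0 * R * PLACES / best / 1e9;
        System.out.println("verified=" + ok + " best=" + best + " s, ~" + gbPerSec + " GB/s");
    }
}
```

As in the X10 version, only worker 0 records timings, and the barrier ensures a pass is timed only once all workers have completed it.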

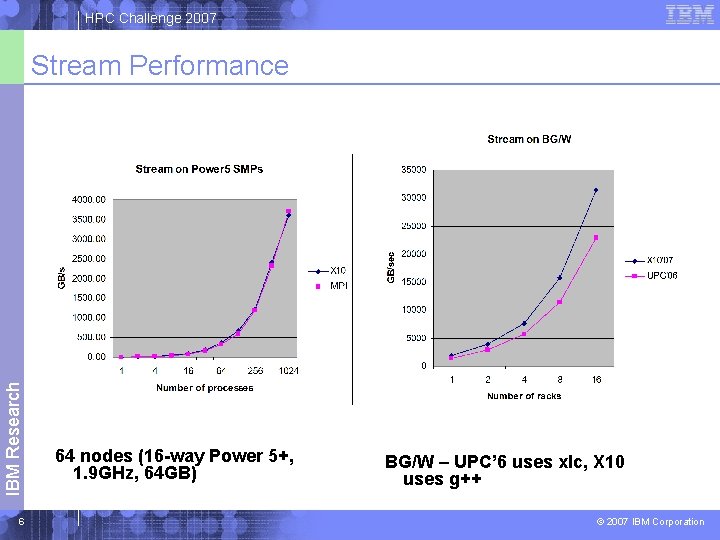

Stream Performance

64 nodes (16-way Power5+, 1.9 GHz, 64 GB)

BG/W – UPC’6 uses xlc, X10 uses g++

![FT Code (LOC=137)](http://slidetodoc.com/presentation_image/5583039eb88dcdf2c8d34198f47c9dd4/image-7.jpg)

FT Code (LOC=137)

Key routine: global transpose

    finish ateach (point [p] : UNIQUE) {
      final int numLocalRows = SQRTN/NUM_PLACES;
      int rowStartA = p*numLocalRows;
      // local transpose
      region block = [0:numLocalRows-1, 0:numLocalRows-1];
      for (int k=0; k<NUM_PLACES; k++) { // for each block
        int colStartA = k*numLocalRows;
        … transpose locally …
        for (int i=0; i<numLocalRows; i++) {
          final int srcIdx = 2*((rowStartA + i)*SQRTN + colStartA),
                    destIdx = 2*(SQRTN*(colStartA + i) + rowStartA);
          async (UNIQUE[k]) Runtime.arrayCopy(Y, srcIdx, Z, destIdx, 2*numLocalRows);
        }
      }
    }

Actual FFT done through call to (sequential) FFTW.
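The index arithmetic in the transpose can be checked with a small sequential Java sketch. It assumes a square `n`-by-`n` complex matrix stored row-major with interleaved real and imaginary parts (hence the factor of 2), runs the per-place loop sequentially, and replaces `Runtime.arrayCopy` with `System.arraycopy`. The helper `transposeBlockInPlace` is hypothetical: the slide elides the local transpose step, so this is one plausible way to fill it, not the original code.

```java
class GlobalTransposeSketch {
    /** Writes the transpose of y into z. Note: y is left block-transposed afterwards. */
    static void transpose(double[] y, double[] z, int n, int numPlaces) {
        final int numLocalRows = n / numPlaces;       // assumes numPlaces divides n
        for (int p = 0; p < numPlaces; p++) {         // sequential stand-in for ateach
            int rowStartA = p * numLocalRows;
            for (int k = 0; k < numPlaces; k++) {     // for each block in this row band
                int colStartA = k * numLocalRows;
                transposeBlockInPlace(y, n, rowStartA, colStartA, numLocalRows);
                for (int i = 0; i < numLocalRows; i++) {
                    // Row i of block (p, k) becomes row i of block (k, p) in z.
                    int srcIdx = 2 * ((rowStartA + i) * n + colStartA);
                    int destIdx = 2 * (n * (colStartA + i) + rowStartA);
                    System.arraycopy(y, srcIdx, z, destIdx, 2 * numLocalRows);
                }
            }
        }
    }

    /** Hypothetical helper: in-place transpose of one m-by-m block starting at (r0, c0). */
    static void transposeBlockInPlace(double[] y, int n, int r0, int c0, int m) {
        for (int i = 0; i < m; i++) {
            for (int j = i + 1; j < m; j++) {
                int a = 2 * ((r0 + i) * n + c0 + j);
                int b = 2 * ((r0 + j) * n + c0 + i);
                double re = y[a], im = y[a + 1];
                y[a] = y[b];
                y[a + 1] = y[b + 1];
                y[b] = re;
                y[b + 1] = im;
            }
        }
    }

    public static void main(String[] args) {
        int n = 4;
        double[] y = new double[2 * n * n], z = new double[2 * n * n];
        for (int r = 0; r < n; r++)
            for (int c = 0; c < n; c++) y[2 * (r * n + c)] = 10 * r + c;  // real part encodes (r, c)
        transpose(y, z, n, 2);
        for (int r = 0; r < n; r++) {
            StringBuilder sb = new StringBuilder();
            for (int c = 0; c < n; c++) sb.append((int) z[2 * (r * n + c)]).append(' ');
            System.out.println(sb.toString().trim());
        }
    }
}
```

Transposing each block locally and then copying whole rows keeps every remote transfer contiguous, which is why the slide can express the communication as one array copy per block row.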

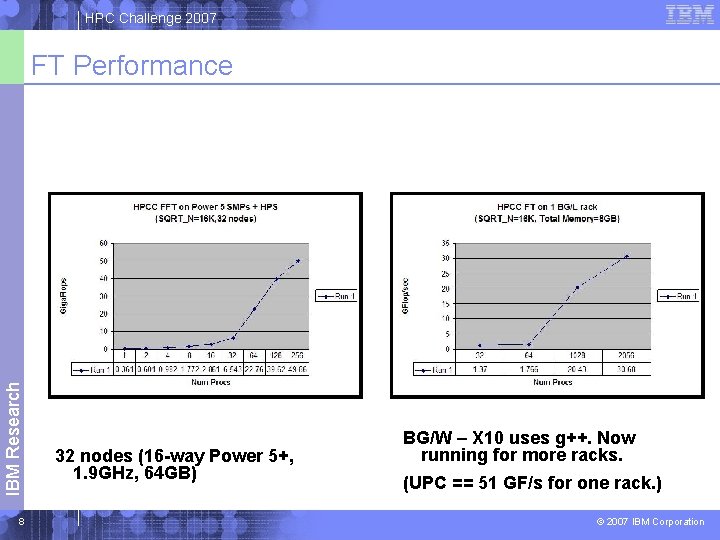

FT Performance

32 nodes (16-way Power5+, 1.9 GHz, 64 GB)

BG/W – X10 uses g++. Now running for more racks. (UPC == 51 GF/s for one rack.)

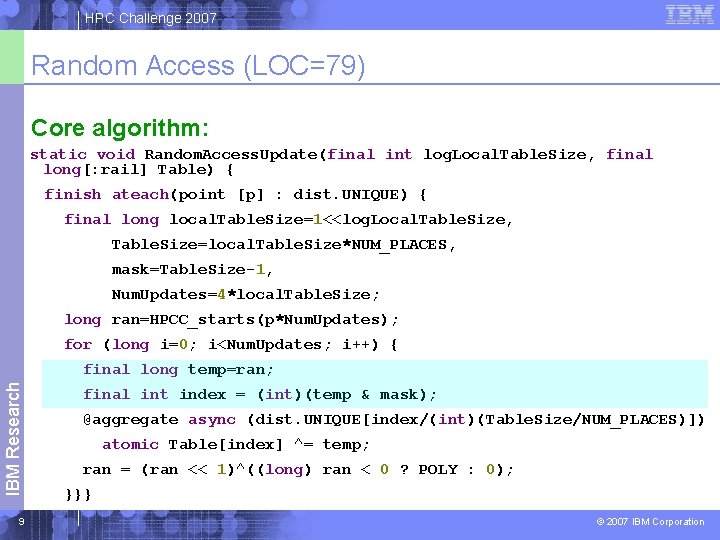

Random Access (LOC=79)

Core algorithm:

    static void RandomAccessUpdate(final int logLocalTableSize, final long[:rail] Table) {
      finish ateach (point [p] : dist.UNIQUE) {
        final long localTableSize = 1<<logLocalTableSize,
                   TableSize = localTableSize*NUM_PLACES,
                   mask = TableSize-1,
                   NumUpdates = 4*localTableSize;
        long ran = HPCC_starts(p*NumUpdates);
        for (long i=0; i<NumUpdates; i++) {
          final long temp = ran;
          final int index = (int)(temp & mask);
          @aggregate async (dist.UNIQUE[index/(int)(TableSize/NUM_PLACES)])
            atomic Table[index] ^= temp;
          ran = (ran << 1)^((long) ran < 0 ? POLY : 0);
        }
      }
    }
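The update loop can be sketched sequentially in Java to show the index-to-owner mapping and the XOR update. Assumptions are labeled in the code: places are simulated by a loop, `POLY` is set to 7 as in the HPCC reference implementation, and `startSeed` is a hypothetical, simplified stand-in for `HPCC_starts` (which computes a specific point in the random stream and is not reproduced here).

```java
class RandomAccessSketch {
    static final long POLY = 0x0000000000000007L;  // value used by the HPCC reference code

    /** Advances the shift-register sequence by one step, as on the slide. */
    static long next(long ran) {
        return (ran << 1) ^ (ran < 0 ? POLY : 0);
    }

    /** Hypothetical stand-in for HPCC_starts: any nonzero per-place seed works for this sketch. */
    static long startSeed(int p) {
        return 0x9E3779B97F4A7C15L * (p + 1);
    }

    /**
     * Applies 4 * localTableSize XOR updates per place to a table whose length is a
     * power of two and a multiple of numPlaces. Returns how many updates each place
     * received, i.e. how many asyncs would have been routed to it.
     */
    static long[] update(long[] table, int numPlaces) {
        final long tableSize = table.length;
        final long mask = tableSize - 1;
        final long localTableSize = tableSize / numPlaces;
        final long numUpdates = 4 * localTableSize;
        long[] received = new long[numPlaces];
        for (int p = 0; p < numPlaces; p++) {          // sequential stand-in for ateach
            long ran = startSeed(p);
            for (long i = 0; i < numUpdates; i++) {
                final long temp = ran;
                final int index = (int) (temp & mask);
                final int owner = (int) (index / localTableSize);  // place holding table[index]
                received[owner]++;
                table[index] ^= temp;                  // atomic in the X10 version
                ran = next(ran);
            }
        }
        return received;
    }

    public static void main(String[] args) {
        long[] table = new long[1 << 10];
        for (int i = 0; i < table.length; i++) table[i] = i;
        long[] received = update(table, 4);
        for (int p = 0; p < received.length; p++)
            System.out.println("place " + p + " received " + received[p] + " updates");
    }
}
```

Because XOR is its own inverse, running the same update sequence a second time restores the initial table, which gives a cheap correctness check for a sketch like this one.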

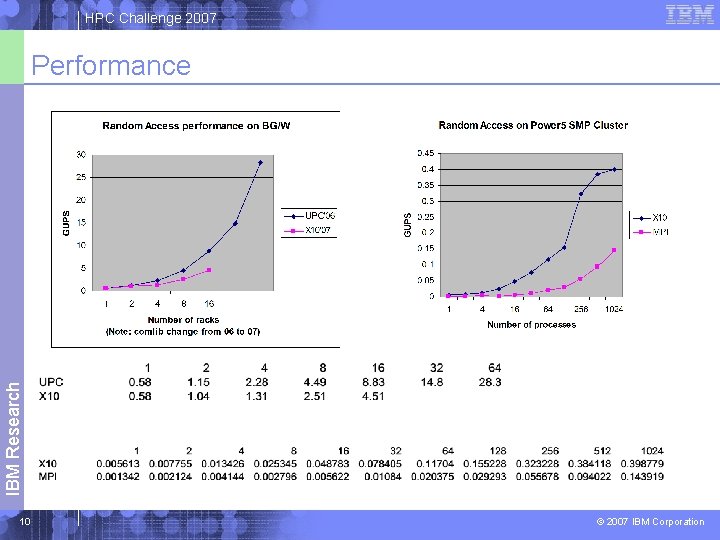

Performance

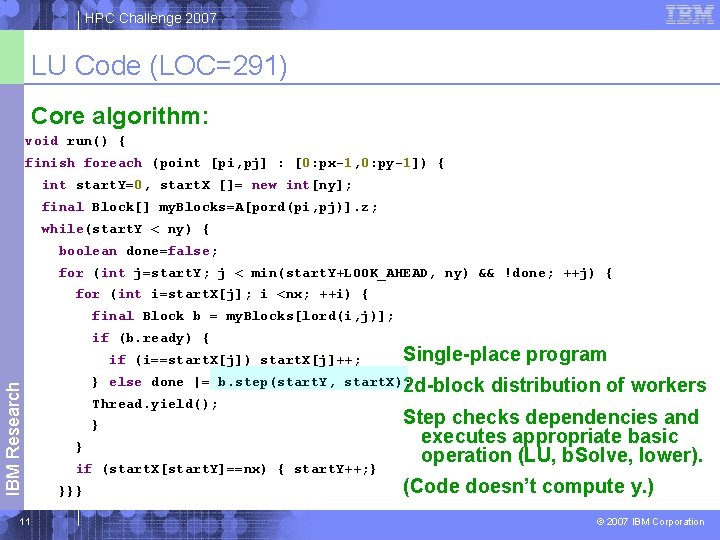

LU Code (LOC=291)

Core algorithm:

    void run() {
      finish foreach (point [pi, pj] : [0:px-1, 0:py-1]) {
        int startY = 0, startX[] = new int[ny];
        final Block[] myBlocks = A[pord(pi, pj)].z;
        while (startY < ny) {
          boolean done = false;
          for (int j=startY; j < min(startY+LOOK_AHEAD, ny) && !done; ++j) {
            for (int i=startX[j]; i < nx; ++i) {
              final Block b = myBlocks[lord(i, j)];
              if (b.ready) {
                if (i==startX[j]) startX[j]++;
              } else done |= b.step(startY, startX);
              Thread.yield();
            }
          }
          if (startX[startY]==nx) { startY++; }
        }
      }
    }

– Single-place program
– 2d-block distribution of workers
– Step checks dependencies and executes appropriate basic operation (LU, bSolve, lower).

(Code doesn’t compute y.)

![Example of steps](http://slidetodoc.com/presentation_image/5583039eb88dcdf2c8d34198f47c9dd4/image-12.jpg)

Example of steps

    boolean step(final int startY, final int[] startX) {
      visitCount++;
      if (count==maxCount) {
        return I<J ? stepIltJ() : (I==J ? stepIeqJ() : stepIgtJ());
      } else {
        Block IBuddy = getBlock(I, count);
        if (!IBuddy.ready) return false;
        Block JBuddy = getBlock(count, J);
        if (!JBuddy.ready) return false;
        mulsub(IBuddy, JBuddy);
        count++;
        return true;
      }
    }

– mulsub: call BLAS for DGEMM.
– stepIltJ: wait; backsolve
– stepIeqJ: wait; control panel LU factorization
– stepIgtJ: wait; compute lower, participate in LU factorization
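The dependency-driven stepping scheme above can be illustrated with a deliberately reduced Java sketch. The assumptions are substantial and worth stating: each "block" is a single scalar, so `mulsub` becomes one multiply-subtract instead of a DGEMM call; there is no pivoting, so the input should be diagonally dominant; and one thread polls all blocks instead of a 2d-block distribution of workers with look-ahead. The class and its methods are illustrative names, not the original `Block` API.

```java
class BlockStepSketch {
    final int n;
    final double[][] a;       // factored in place: U on and above the diagonal, unit-lower L below
    final int[][] count;      // number of mulsub updates already applied to (I, J)
    final boolean[][] ready;  // true once (I, J) holds its final value

    BlockStepSketch(double[][] m) {
        n = m.length;
        a = new double[n][];
        for (int i = 0; i < n; i++) a[i] = m[i].clone();
        count = new int[n][n];
        ready = new boolean[n][n];
    }

    /** One attempt to advance block (I, J); returns true if it made progress. */
    boolean step(int I, int J) {
        int maxCount = Math.min(I, J);
        if (count[I][J] == maxCount) {
            if (I > J) {                       // lower block: wait for the diagonal pivot
                if (!ready[J][J]) return false;
                a[I][J] /= a[J][J];
            }
            // I <= J: with a unit-diagonal scalar L, the backsolve is a no-op
            ready[I][J] = true;
            return true;
        }
        int k = count[I][J];
        if (!ready[I][k] || !ready[k][J]) return false;  // a buddy is not ready: retry later
        a[I][J] -= a[I][k] * a[k][J];                     // scalar stand-in for mulsub
        count[I][J]++;
        return true;
    }

    /** Polls all blocks until every one is ready. */
    void run() {
        int remaining = n * n;
        while (remaining > 0) {
            boolean progress = false;
            for (int I = 0; I < n; I++)
                for (int J = 0; J < n; J++)
                    if (!ready[I][J] && step(I, J)) {
                        progress = true;
                        if (ready[I][J]) remaining--;
                    }
            if (!progress) throw new IllegalStateException("no block can advance");
        }
    }

    /** Multiplies L (unit lower) by U to allow a check against the input matrix. */
    double[][] reconstruct() {
        double[][] r = new double[n][n];
        for (int i = 0; i < n; i++)
            for (int j = 0; j < n; j++)
                for (int k = 0; k <= Math.min(i, j); k++)
                    r[i][j] += (k == i ? 1.0 : a[i][k]) * a[k][j];
        return r;
    }

    public static void main(String[] args) {
        BlockStepSketch s = new BlockStepSketch(new double[][] {{10, 2, 3}, {4, 12, 5}, {6, 7, 20}});
        s.run();
        System.out.println(java.util.Arrays.deepToString(s.reconstruct()));
    }
}
```

The point of the sketch is the control structure: a block never blocks waiting for its dependencies; it reports "no progress" and is revisited later, which is what lets workers in the slide's version keep busy with other blocks.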

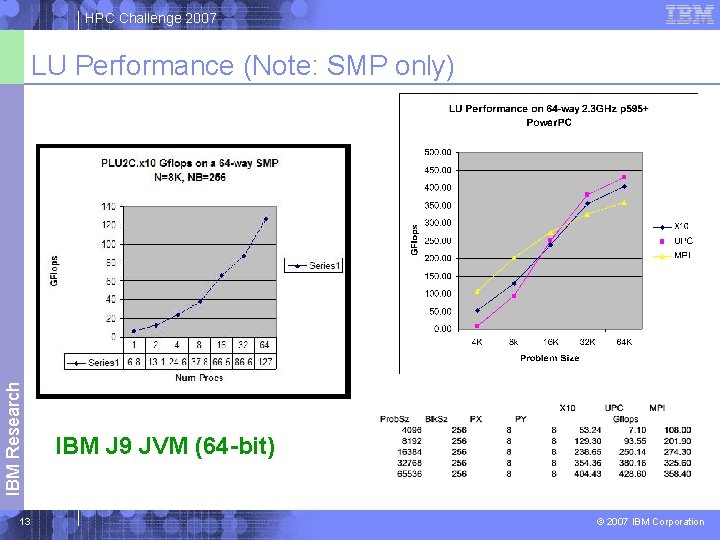

LU Performance (Note: SMP only)

IBM J9 JVM (64-bit)

Backup slides

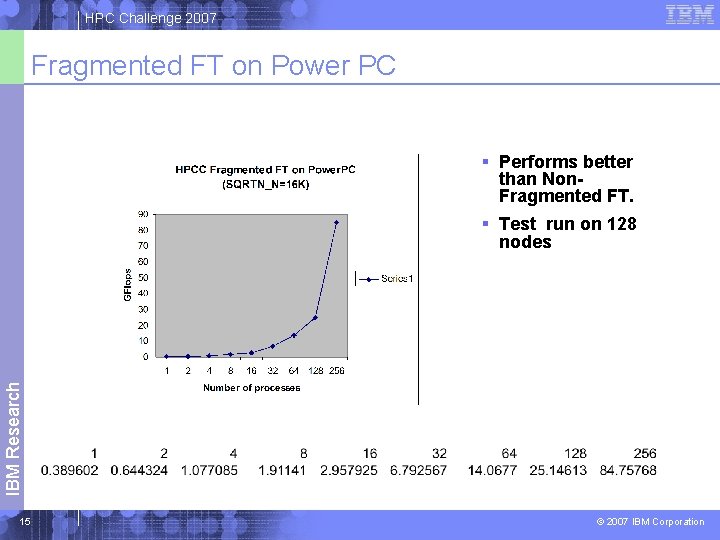

Fragmented FT on PowerPC

§ Performs better than non-fragmented FT.
§ Test run on 128 nodes

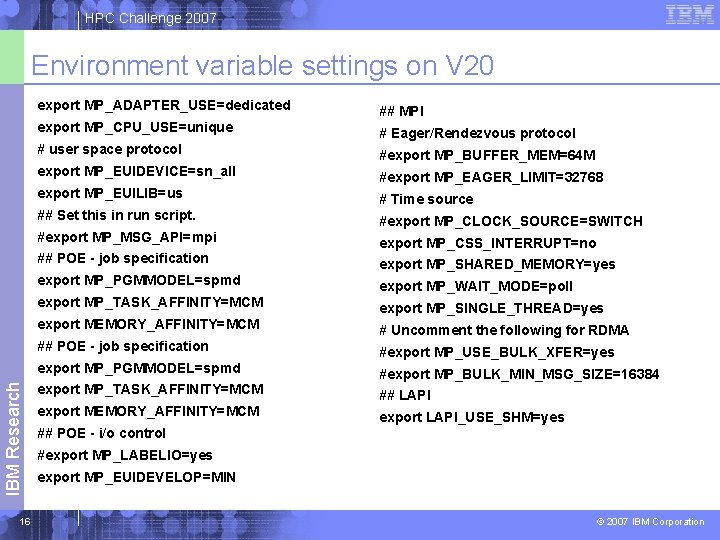

Environment variable settings on V20

    export MP_ADAPTER_USE=dedicated
    export MP_CPU_USE=unique
    # user space protocol
    export MP_EUIDEVICE=sn_all
    export MP_EUILIB=us
    ## Set this in run script.
    #export MP_MSG_API=mpi
    ## POE - job specification
    export MP_PGMMODEL=spmd
    export MP_TASK_AFFINITY=MCM
    export MEMORY_AFFINITY=MCM
    ## POE - i/o control
    #export MP_LABELIO=yes
    export MP_EUIDEVELOP=MIN

    ## MPI
    # Eager/Rendezvous protocol
    #export MP_BUFFER_MEM=64M
    #export MP_EAGER_LIMIT=32768
    # Time source
    #export MP_CLOCK_SOURCE=SWITCH
    export MP_CSS_INTERRUPT=no
    export MP_SHARED_MEMORY=yes
    export MP_WAIT_MODE=poll
    export MP_SINGLE_THREAD=yes
    # Uncomment the following for RDMA
    #export MP_USE_BULK_XFER=yes
    #export MP_BULK_MIN_MSG_SIZE=16384
    ## LAPI
    export LAPI_USE_SHM=yes

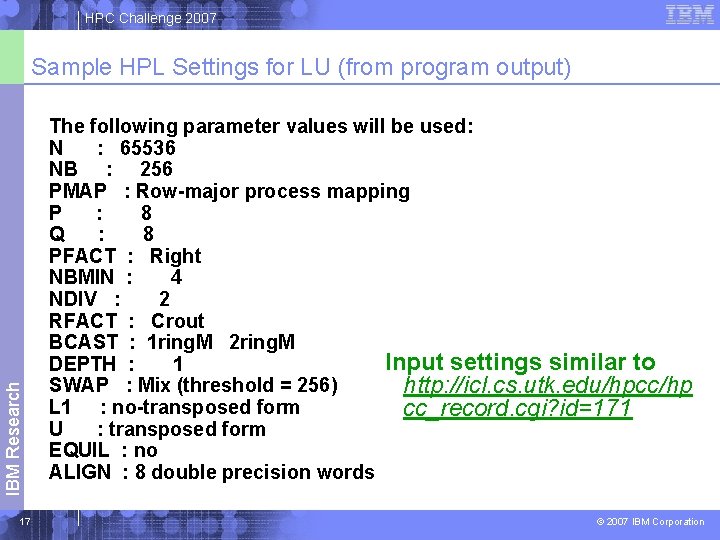

Sample HPL Settings for LU (from program output)

The following parameter values will be used:

    N      : 65536
    NB     : 256
    PMAP   : Row-major process mapping
    P      : 8
    Q      : 8
    PFACT  : Right
    NBMIN  : 4
    NDIV   : 2
    RFACT  : Crout
    BCAST  : 1ringM 2ringM
    DEPTH  : 1
    SWAP   : Mix (threshold = 256)
    L1     : no-transposed form
    U      : transposed form
    EQUIL  : no
    ALIGN  : 8 double precision words

Input settings similar to http://icl.cs.utk.edu/hpcc/hpcc_record.cgi?id=171
