HPC Benchmarking and Performance Evaluation With Realistic Applications

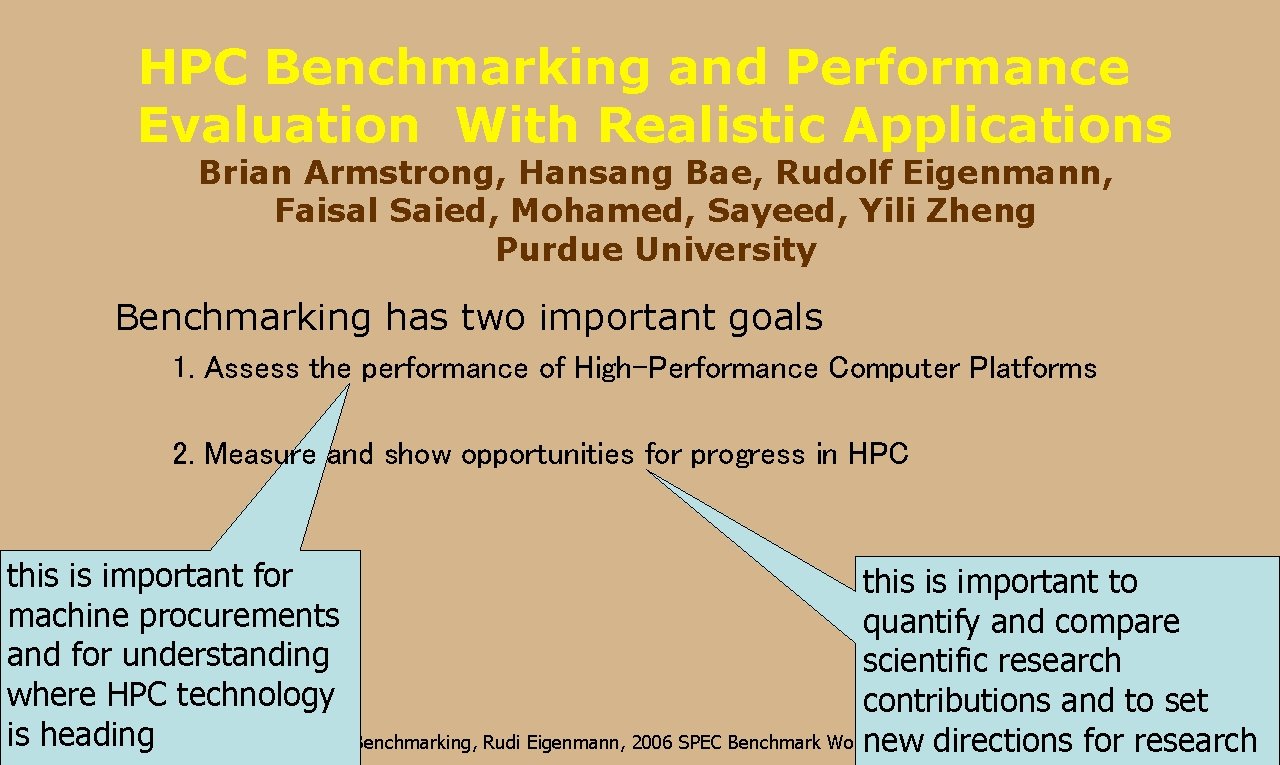

Brian Armstrong, Hansang Bae, Rudolf Eigenmann, Faisal Saied, Mohamed Sayeed, Yili Zheng

Purdue University

HPC Benchmarking, Rudi Eigenmann, 2006 SPEC Benchmark Workshop

Benchmarking has two important goals:

1. Assess the performance of high-performance computer platforms. This is important for machine procurements and for understanding where HPC technology is heading.
2. Measure and show opportunities for progress in HPC. This is important to quantify and compare scientific research contributions and to set new directions for research.

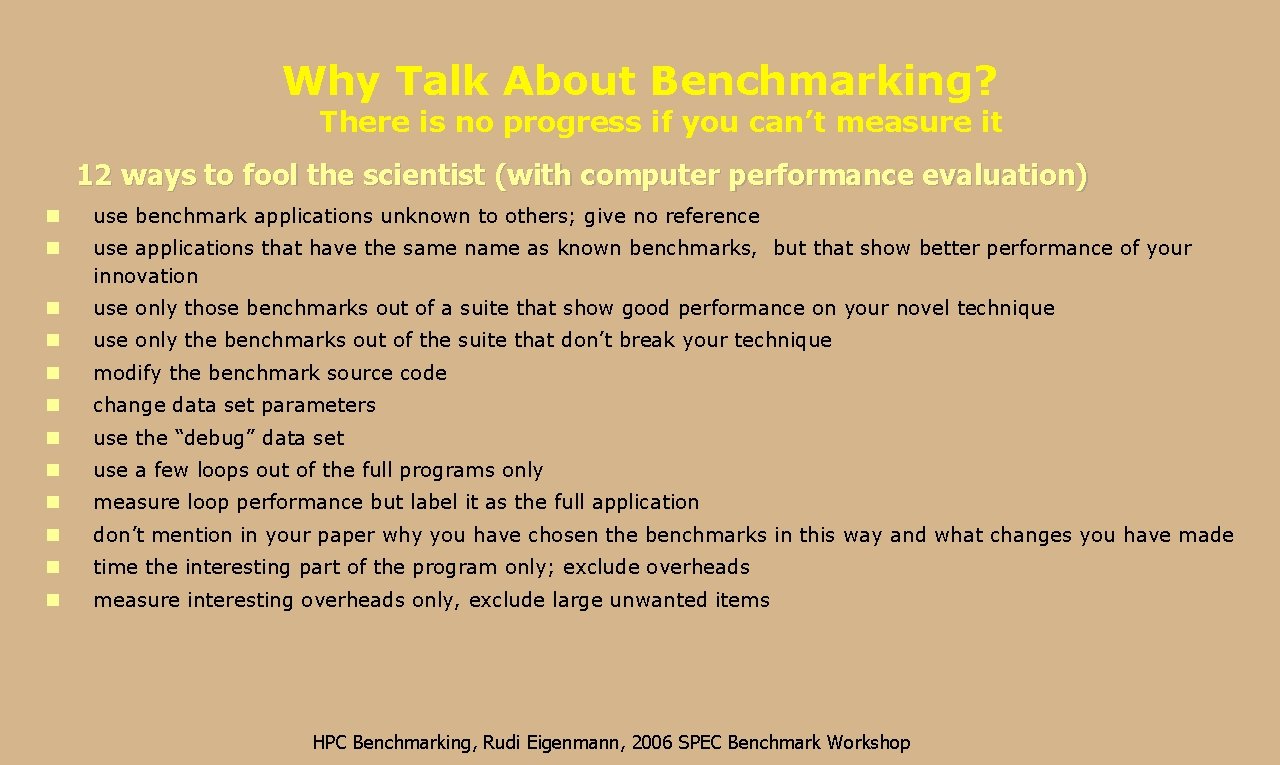

Why Talk About Benchmarking?

There is no progress if you can’t measure it.

12 ways to fool the scientist (with computer performance evaluation):

- Use benchmark applications unknown to others; give no reference.
- Use applications that have the same name as known benchmarks, but that show better performance of your innovation.
- Use only those benchmarks out of a suite that show good performance on your novel technique.
- Use only the benchmarks out of the suite that don’t break your technique.
- Modify the benchmark source code.
- Change data set parameters.
- Use the “debug” data set.
- Use only a few loops out of the full programs.
- Measure loop performance but label it as the full application.
- Don’t mention in your paper why you have chosen the benchmarks in this way and what changes you have made.
- Time the interesting part of the program only; exclude overheads.
- Measure interesting overheads only; exclude large unwanted items.
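The last two pitfalls concern which part of a run gets timed. As a minimal sketch of the opposite practice, the helper below times every phase of a run and always reports the total alongside the kernel time, so overheads cannot silently drop out of the result. The function name `timed_run` and the three-phase split (setup, kernel, teardown) are assumptions made for illustration; they do not come from the talk or from any SPEC tool.

```python
import time


def timed_run(setup, kernel, teardown):
    """Time each phase of a run and return all timings in seconds.

    The phase split is a hypothetical convention for this sketch.
    """
    t0 = time.perf_counter()
    data = setup()            # e.g. reading input, allocating memory
    t1 = time.perf_counter()
    result = kernel(data)     # the "interesting" computation
    t2 = time.perf_counter()
    teardown(result)          # e.g. writing output
    t3 = time.perf_counter()
    return {
        "setup_s": t1 - t0,
        "kernel_s": t2 - t1,
        "teardown_s": t3 - t2,
        "total_s": t3 - t0,   # the full-application figure
    }


if __name__ == "__main__":
    report = timed_run(lambda: list(range(100_000)), sum, lambda r: None)
    for name, seconds in report.items():
        print(f"{name}: {seconds:.6f}")
```

Publishing the whole dictionary rather than only `kernel_s` makes it visible when setup or output dominates the run.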

Benchmarks Need to be Representative and Open

- Representative Benchmarks:
  - Represent real problems
- Open Benchmarks:
  - No proprietary strings attached
  - Source code and performance data can be freely distributed

With these goals in mind, SPEC’s High-Performance Group was formed in 1994.

Why is Benchmarking with Real Applications Hard?

- Simple Benchmarks are Overly Easy to Run
- Realistic Benchmarks Cannot be Abstracted from Real Applications
- Today's Realistic Applications May Not be Tomorrow's Applications
- Benchmarking is not Eligible for Research Funding
- Maintaining Benchmarking Efforts is Costly
- Proprietary Full-Application Benchmarks Cannot Serve as Yardsticks

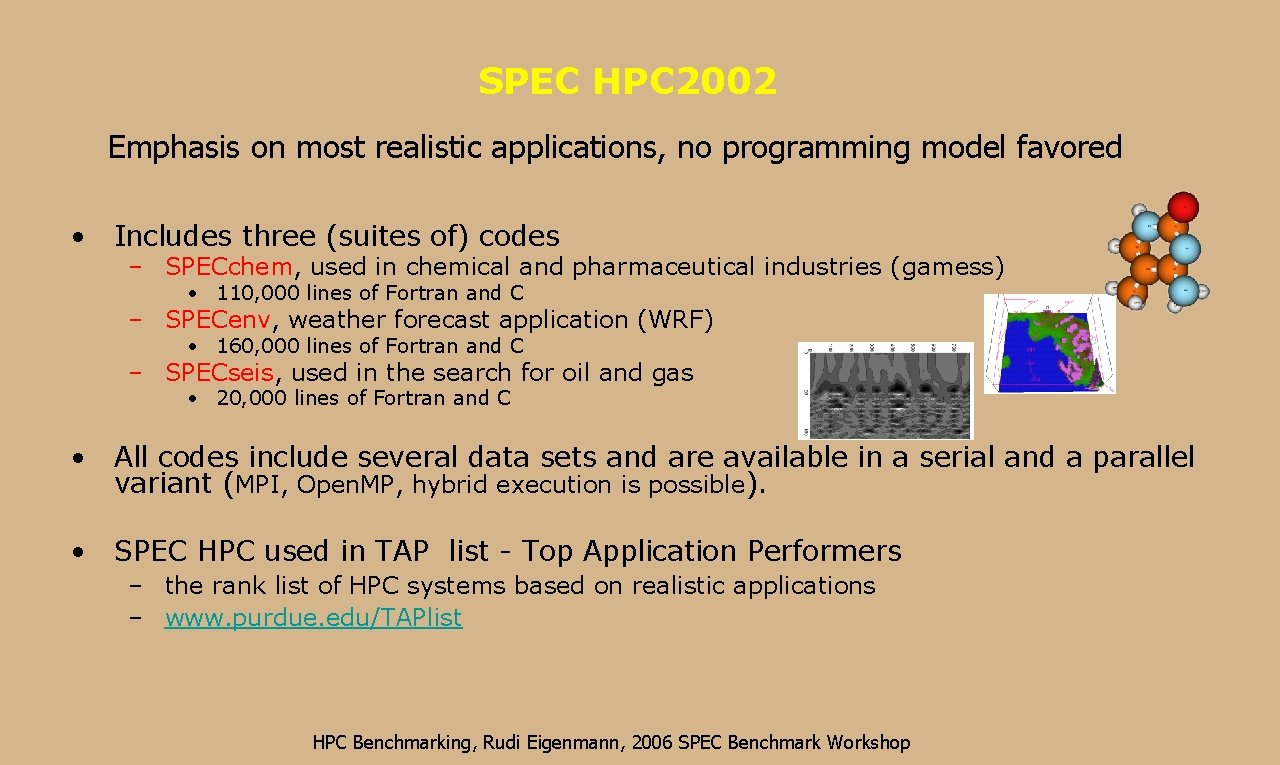

SPEC HPC 2002

Emphasis on the most realistic applications; no programming model is favored.

- Includes three (suites of) codes:
  - SPECchem, used in chemical and pharmaceutical industries (GAMESS)
    - 110,000 lines of Fortran and C
  - SPECenv, weather forecast application (WRF)
    - 160,000 lines of Fortran and C
  - SPECseis, used in the search for oil and gas
    - 20,000 lines of Fortran and C
- All codes include several data sets and are available in a serial and a parallel variant (MPI, OpenMP; hybrid execution is possible).
- SPEC HPC is used in the TAP list (Top Application Performers), the rank list of HPC systems based on realistic applications: www.purdue.edu/TAPlist

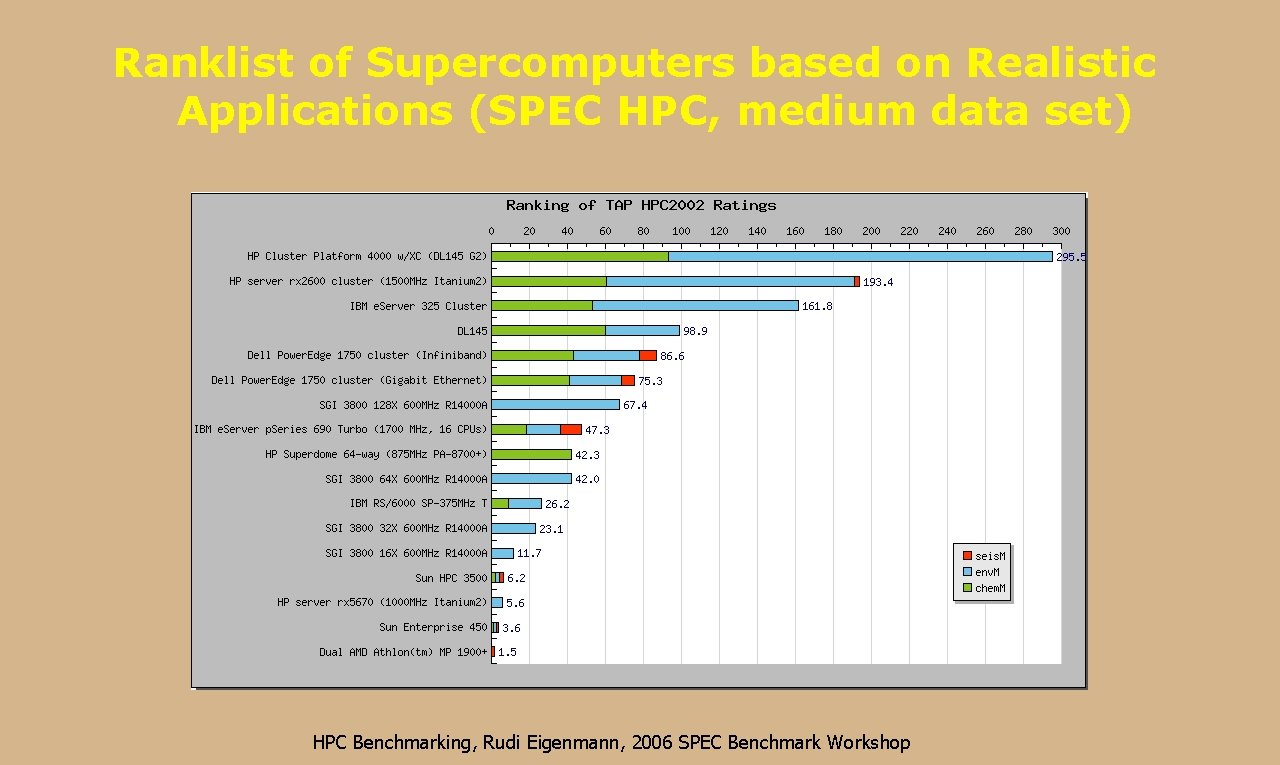

Rank List of Supercomputers Based on Realistic Applications (SPEC HPC, medium data set)

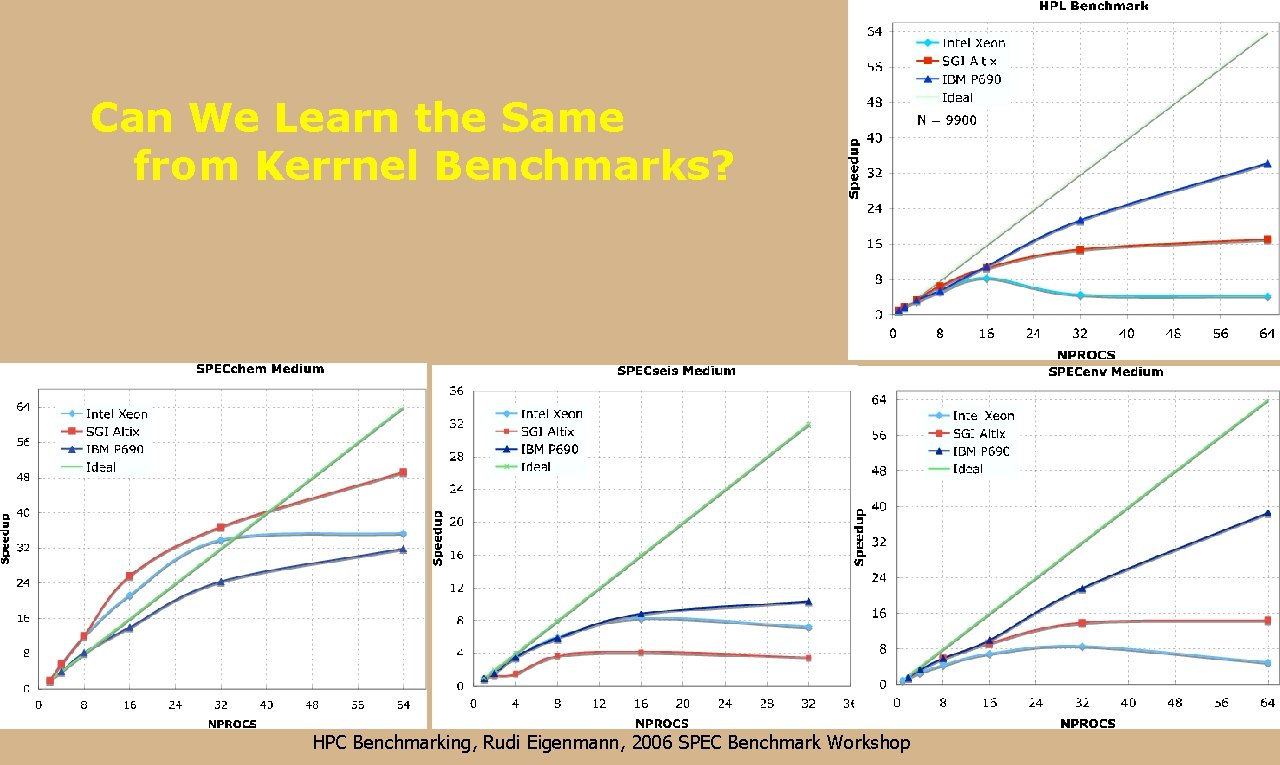

Can We Learn the Same from Kernel Benchmarks?

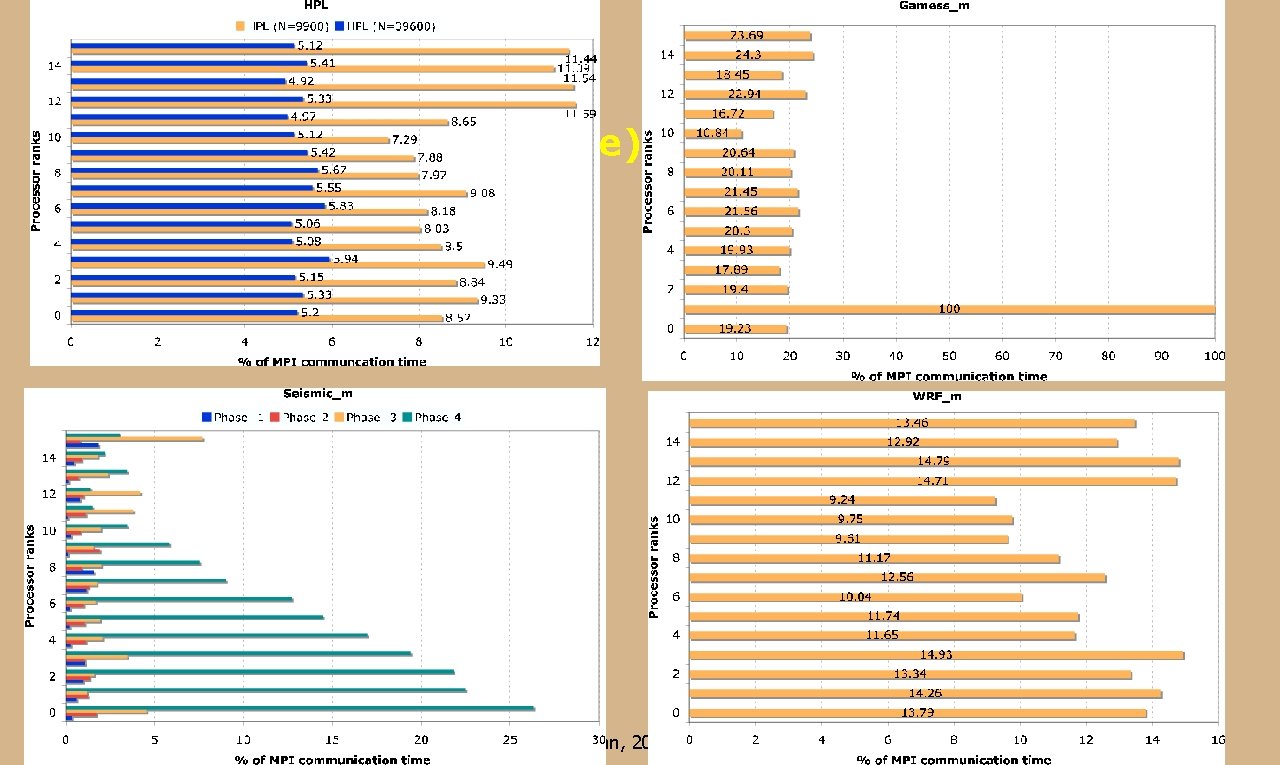

MPI Communication (percent of overall runtime)
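For readers who want to reproduce this kind of metric on their own runs, here is a minimal sketch of how a communication percentage could be derived from per-rank timings. It is not the method used for the measurements in the talk: the function name `mpi_comm_percent` and the choice to aggregate by summing times over all ranks are assumptions, and the per-rank timings would have to come from a profiler or manual instrumentation.

```python
def mpi_comm_percent(comm_seconds, total_seconds):
    """Percentage of overall runtime spent in MPI communication.

    comm_seconds[i]  : time rank i spent inside MPI calls
    total_seconds[i] : wall-clock runtime of rank i
    Aggregation by summing over ranks is an assumption of this sketch.
    """
    if len(comm_seconds) != len(total_seconds):
        raise ValueError("need one communication time per rank")
    total = sum(total_seconds)
    if total <= 0:
        raise ValueError("total runtime must be positive")
    return 100.0 * sum(comm_seconds) / total


if __name__ == "__main__":
    # Hypothetical timings for two ranks, not data from the talk.
    print(mpi_comm_percent([1.0, 3.0], [10.0, 10.0]))
```

Other aggregations (for example, the maximum over ranks) are equally defensible; whichever is used should be stated with the result.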

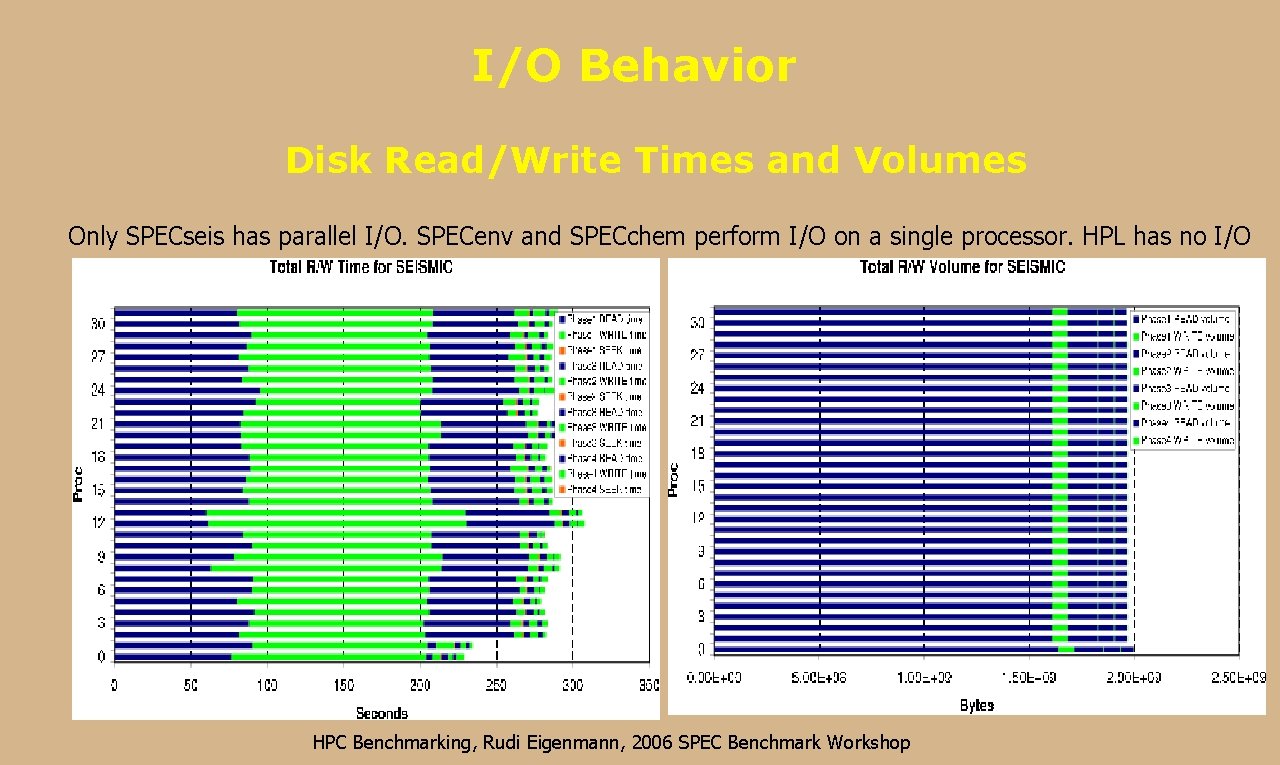

I/O Behavior: Disk Read/Write Times and Volumes

Only SPECseis has parallel I/O. SPECenv and SPECchem perform I/O on a single processor. HPL has no I/O.

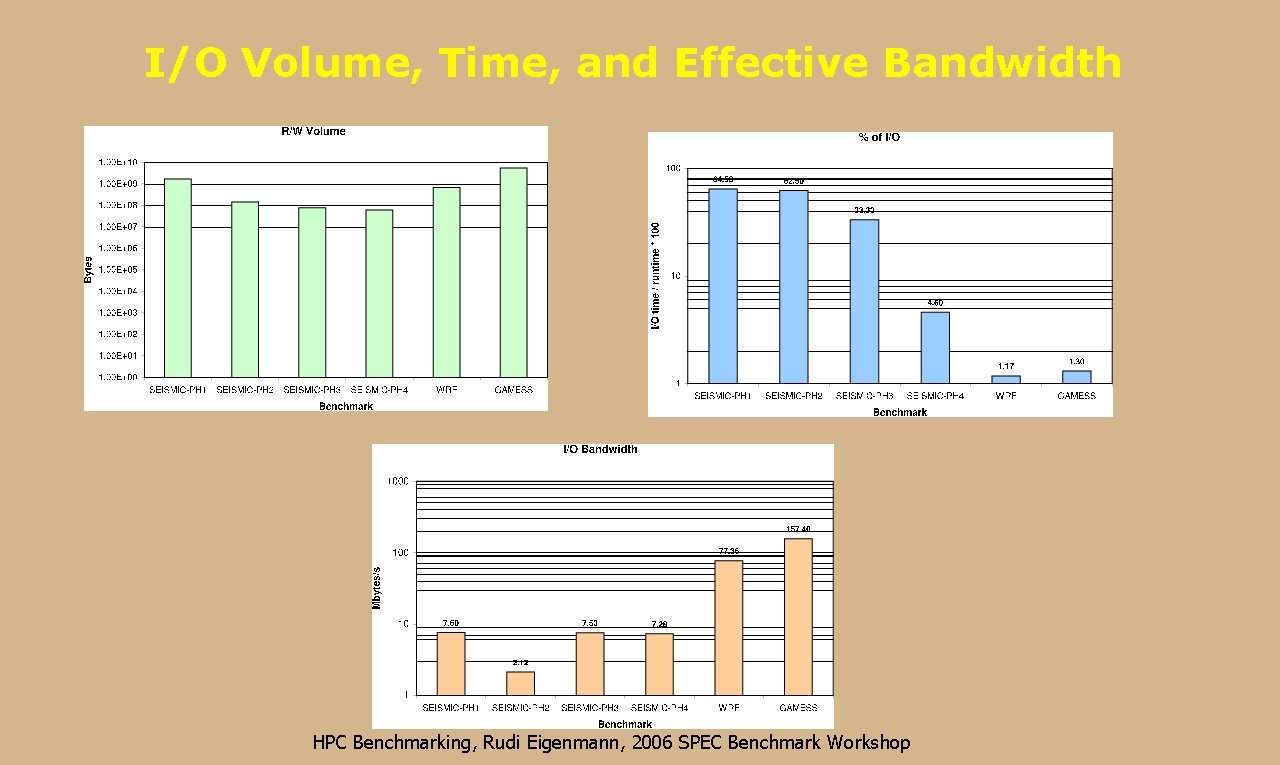

I/O Volume, Time, and Effective Bandwidth
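The three quantities named here are related: effective bandwidth is conventionally the volume of data moved divided by the time spent moving it. The sketch below assumes that definition, since the slide does not spell one out. The function name `effective_bandwidth` and the unit choice (1 MB = 10^6 bytes) are also assumptions.

```python
def effective_bandwidth(bytes_moved, seconds):
    """Effective I/O bandwidth in MB/s, taking 1 MB = 10**6 bytes.

    Assumes bandwidth = volume / time; this definition is an
    assumption of the sketch, not quoted from the talk.
    """
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return bytes_moved / seconds / 1e6


if __name__ == "__main__":
    # Hypothetical example: 200 MB written in 4 seconds.
    print(effective_bandwidth(200_000_000, 4.0))
```

Because the measured time includes seek, metadata, and synchronization costs, a figure computed this way is typically below the peak bandwidth of the storage hardware.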

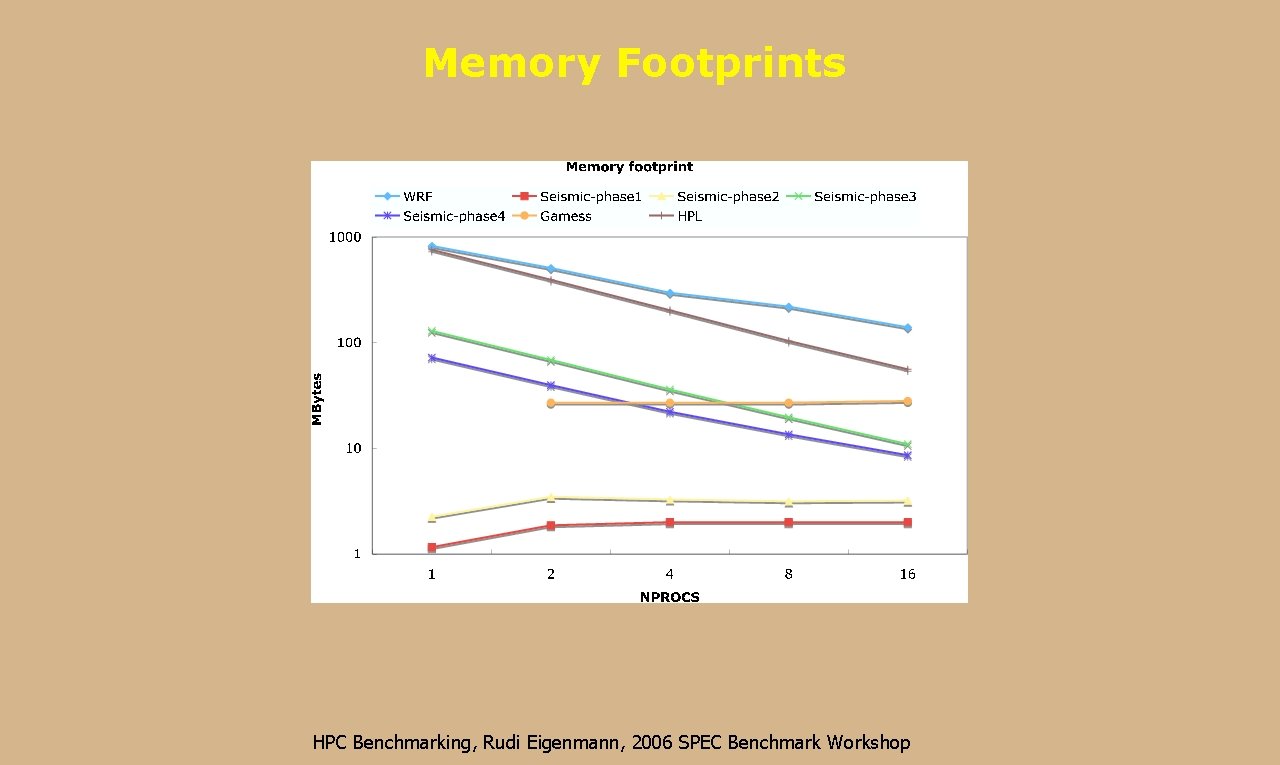

Memory Footprints

Conclusions

- There is a dire need for basing performance evaluation and benchmarking results on realistic applications.
- The SPEC HPC suite meets the main criteria for real-application benchmarking: relevance and openness.
- Kernel benchmarks are the best choices for measuring individual system components. However, there is a large range of questions that can only be answered satisfactorily using real-application benchmarks.
- Benchmarking with real applications is hard and there are many challenges, but there is no replacement.
