How Microservices and Serverless Computing Enable the Next Gen of Machine Intelligence

Jon Peck, Full-Spectrum Developer & Advocate
jpeck@algorithmia.com, @peckjon, Algorithmia.com

Algorithmia: making state-of-the-art algorithms discoverable and accessible to everyone

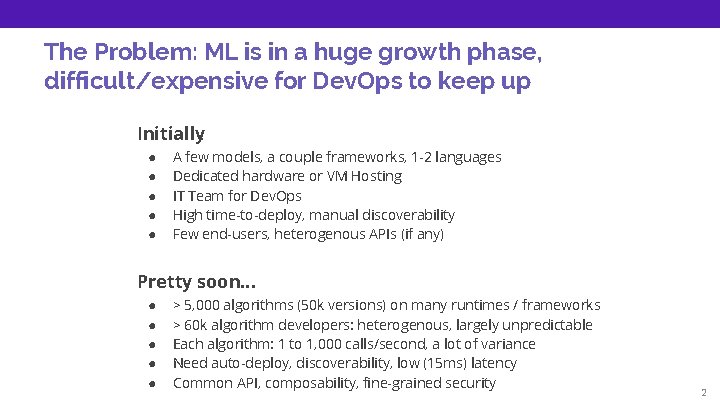

The Problem: ML is in a huge growth phase, and it is difficult and expensive for DevOps to keep up

Initially:
● A few models, a couple of frameworks, 1-2 languages
● Dedicated hardware or VM hosting
● IT team for DevOps
● High time-to-deploy, manual discoverability
● Few end users, heterogeneous APIs (if any)

Pretty soon...
● > 5,000 algorithms (50k versions) on many runtimes / frameworks
● > 60k algorithm developers: heterogeneous, largely unpredictable
● Each algorithm: 1 to 1,000 calls/second, a lot of variance
● Need auto-deploy, discoverability, low (15 ms) latency
● Common API, composability, fine-grained security

The Need: an “Operating System for AI”

AI/ML scalable infrastructure on demand + marketplace
● Function-as-a-service for Machine & Deep Learning
● Discoverable, live inventory of AI via APIs
● Anyone can contribute & use
● Composable, Monetizable
● Every developer on earth can make their app intelligent

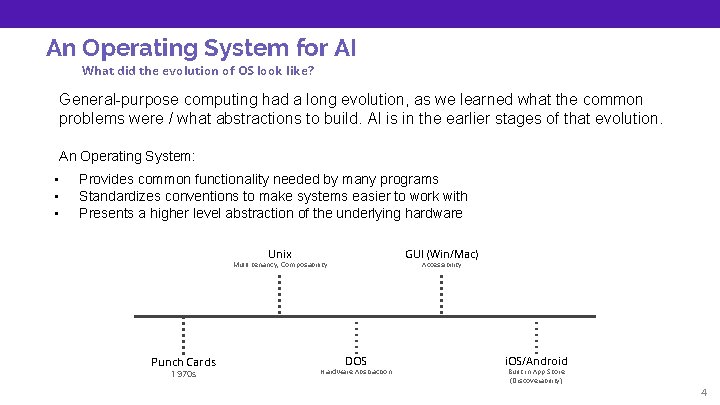

An Operating System for AI

What did the evolution of OS look like? General-purpose computing had a long evolution, as we learned what the common problems were and what abstractions to build. AI is in the earlier stages of that evolution.

An Operating System:
• Provides common functionality needed by many programs
• Standardizes conventions to make systems easier to work with
• Presents a higher-level abstraction of the underlying hardware

Timeline of OS evolution:
• Punch Cards, 1970s
• Unix: Multi-tenancy, Composability
• DOS: Hardware Abstraction
• GUI (Win/Mac): Accessibility
• iOS/Android: Built-in App Store (Discoverability)

Use Case: Jian Yang made an app to recognize food, “SeeFood” (© HBO, All Rights Reserved)

Use Case: He deployed his trained model to a GPU-enabled server

Use Case: The app is a hit! (Figure: SeeFood listed under Productivity)

Use Case: … and now his server is overloaded. (Figure: N callers hitting a single GPU-enabled server)

Characteristics of AI
• Two distinct phases: training and inference
• Lots of processing power
• Heterogeneous hardware (CPU, GPU, FPGA, TPU, etc.)
• Limited by compute rather than bandwidth
• “TensorFlow is open source, scaling it is not.”

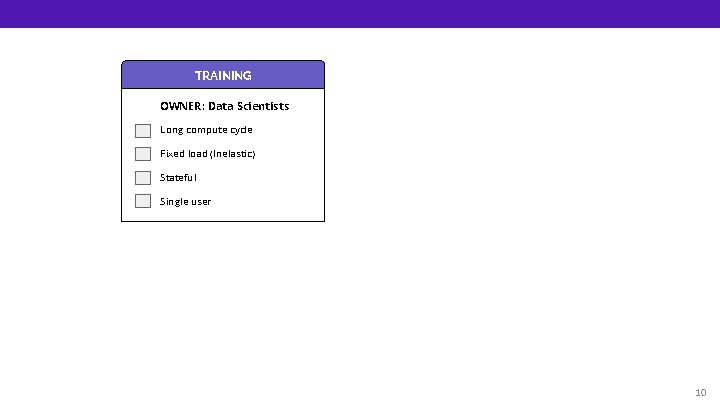


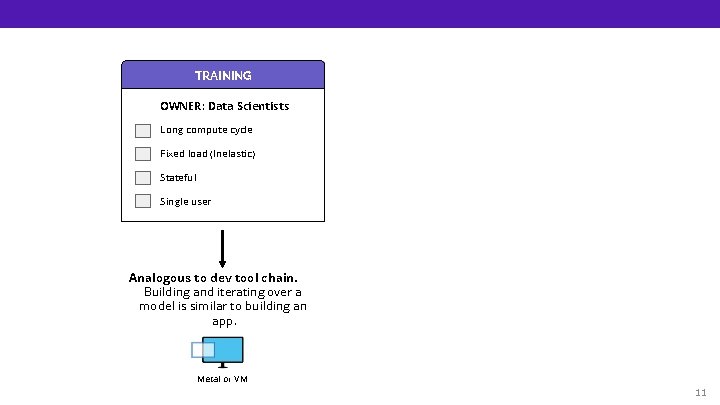


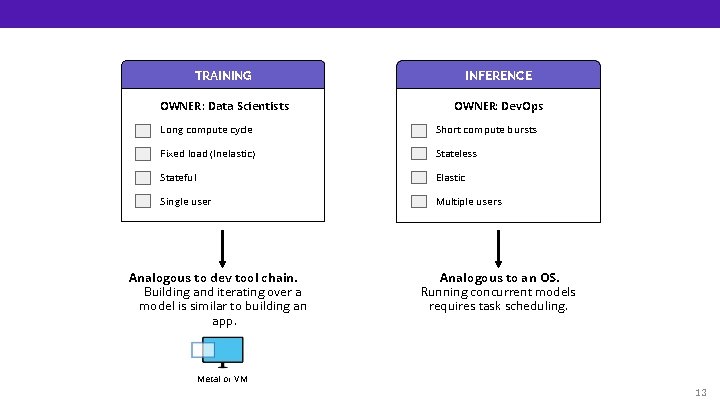

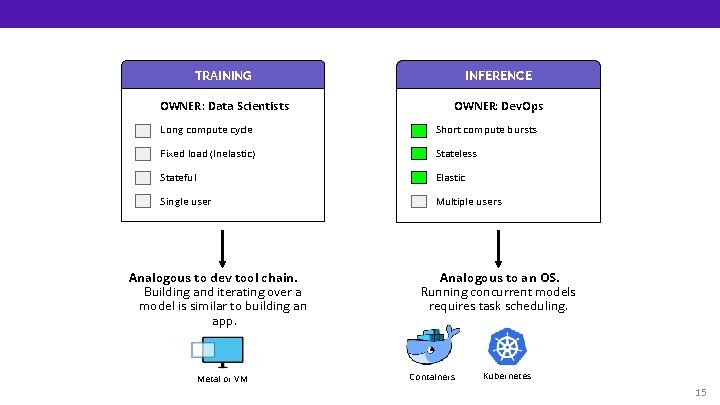

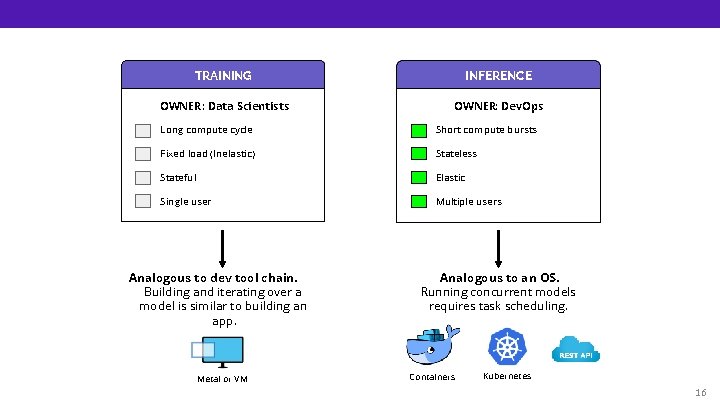



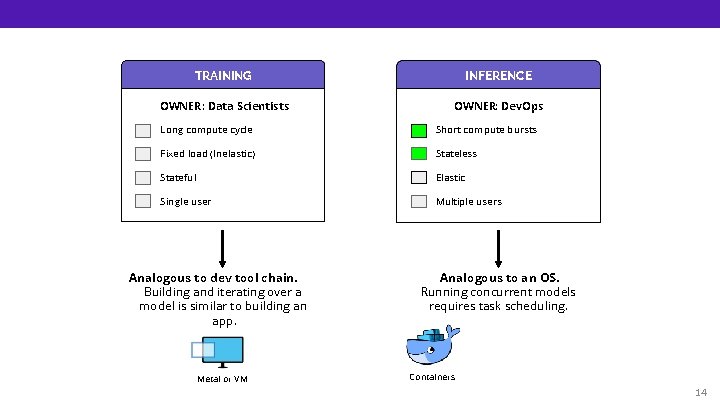



| | TRAINING | INFERENCE |
|---|---|---|
| Owner | Data Scientists | DevOps |
| Compute | Long compute cycle | Short compute bursts |
| Load | Fixed load (inelastic) | Elastic |
| State | Stateful | Stateless |
| Users | Single user | Multiple users |
| Analogy | Analogous to dev tool chain. Building and iterating over a model is similar to building an app. | Analogous to an OS. Running concurrent models requires task scheduling. |
| Runs on | Metal or VM | Containers, Kubernetes |

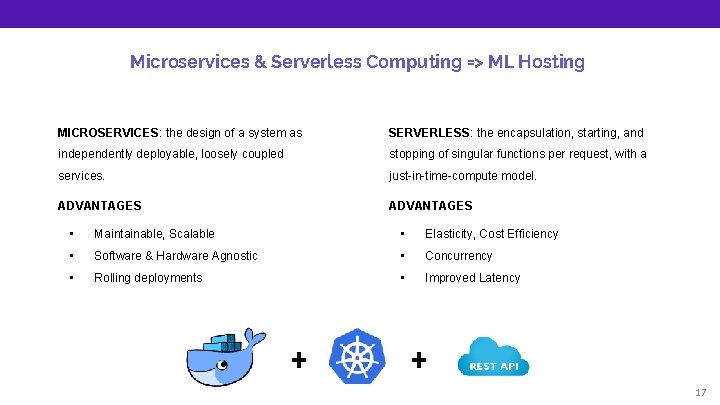

Microservices & Serverless Computing => ML Hosting

MICROSERVICES: the design of a system as independently deployable, loosely coupled services.

ADVANTAGES
• Maintainable, Scalable
• Software & Hardware Agnostic
• Rolling deployments

SERVERLESS: the encapsulation, starting, and stopping of singular functions per request, with a just-in-time-compute model.

ADVANTAGES
• Elasticity, Cost Efficiency
• Concurrency
• Improved Latency

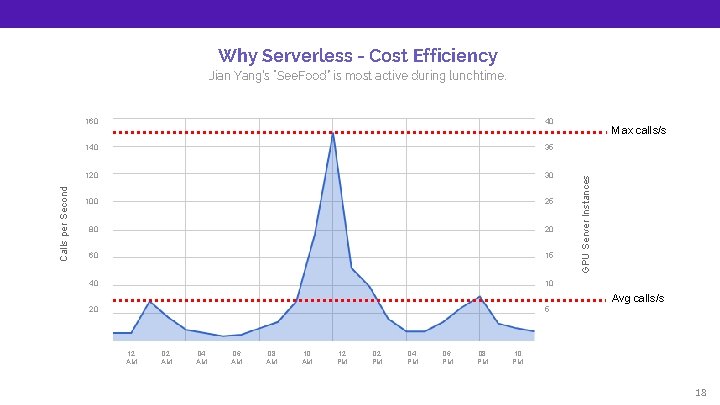

Why Serverless - Cost Efficiency

Jian Yang’s “SeeFood” is most active during lunchtime.

[Chart: calls per second (max and average, 0 to 160) and GPU server instances (0 to 40) by time of day, 12 AM to 10 PM]

Traditional Architecture - Design for Maximum

40 machines, 24 hours a day. $648 * 40 = $25,920 per month

[Chart: calls per second (max and average) and GPU server instances by time of day, 12 AM to 10 PM]

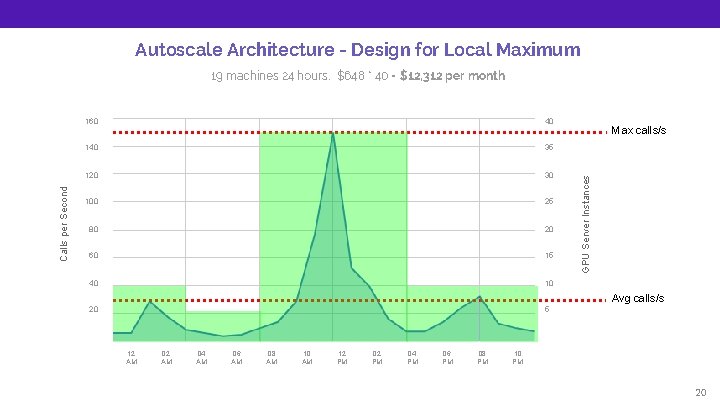

Autoscale Architecture - Design for Local Maximum

19 machines, 24 hours a day. $648 * 19 = $12,312 per month

[Chart: calls per second (max and average) and GPU server instances by time of day, 12 AM to 10 PM]

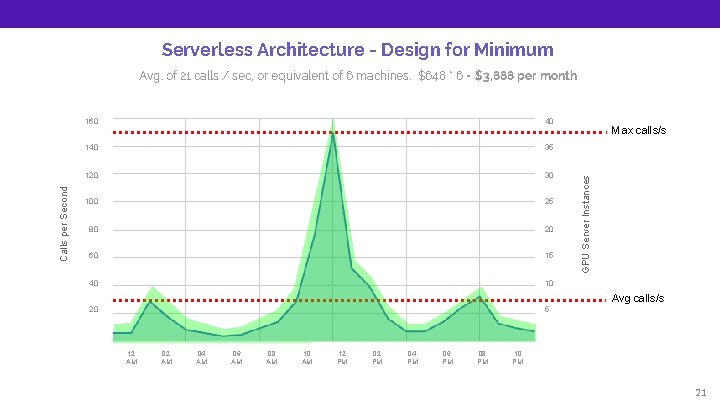

Serverless Architecture - Design for Minimum

Average of 21 calls/sec, or the equivalent of 6 machines. $648 * 6 = $3,888 per month

[Chart: calls per second (max and average) and GPU server instances by time of day, 12 AM to 10 PM]
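The arithmetic behind the three cost slides can be checked with a short sketch. The $648 monthly price per GPU machine and the machine counts (40, 19) come from the slides. The per-machine throughput of 4 calls/s is an assumption read off the chart axes (160 calls/s at peak spread over 40 machines); under that assumption an average of 21 calls/s rounds up to the 6 machines quoted.

```python
import math

MONTHLY_COST_PER_GPU_MACHINE = 648  # USD per machine per month, from the slides

# Assumption (not stated on the slides): one machine serves about 4 calls/s,
# derived from the chart axes (160 calls/s peak across 40 machines).
CALLS_PER_MACHINE = 160 / 40


def machines_for_load(calls_per_second: float) -> int:
    """Smallest whole number of machines that covers the given load."""
    return math.ceil(calls_per_second / CALLS_PER_MACHINE)


def monthly_cost(machines: int) -> int:
    """Monthly bill for a fleet that is kept running all month."""
    return machines * MONTHLY_COST_PER_GPU_MACHINE


machines = {
    "traditional": 40,                    # sized for the daily maximum
    "autoscale": 19,                      # sized for local maxima
    "serverless": machines_for_load(21),  # pay only for the average load
}
costs = {name: monthly_cost(count) for name, count in machines.items()}

for name, cost in costs.items():
    print(f"{name}: {machines[name]} machines, ${cost:,} per month")
```

Under these numbers the serverless design costs 15% of the fixed fleet sized for peak load.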

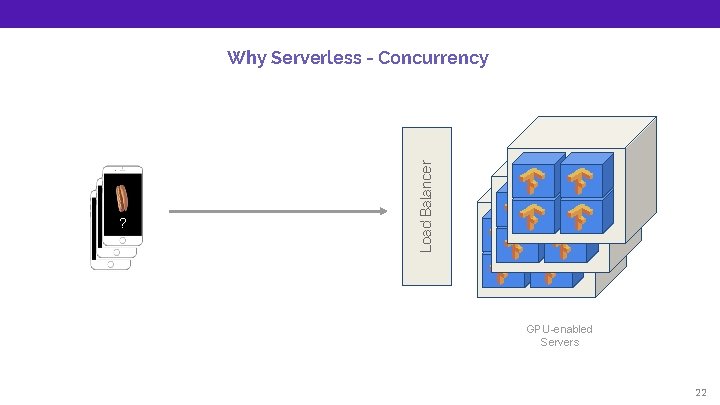

Why Serverless - Concurrency

[Diagram: concurrent callers routed through a load balancer to GPU-enabled servers]

Why Serverless - Improved Latency

Portability = Low Latency

Almost there! We also need: GPU Memory Management, Job Scheduling, Cloud Abstraction, Discoverability, Authentication, Logging, etc.

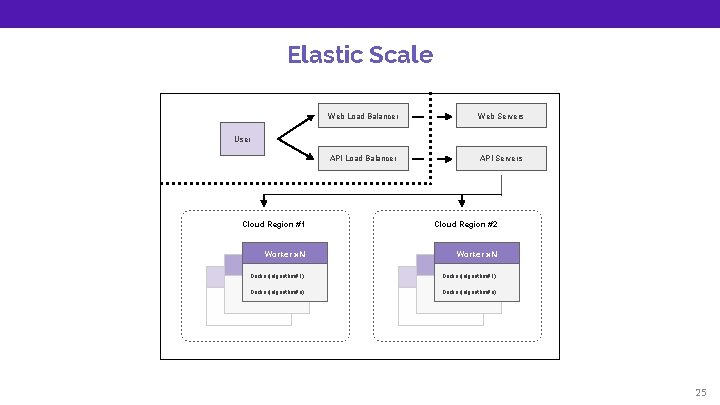

Elastic Scale

[Diagram components: User; Web Load Balancer and Web Servers; API Load Balancer and API Servers; Cloud Region #1 and Cloud Region #2; N workers, each running Docker(algorithm#1) .. Docker(algorithm#n)]

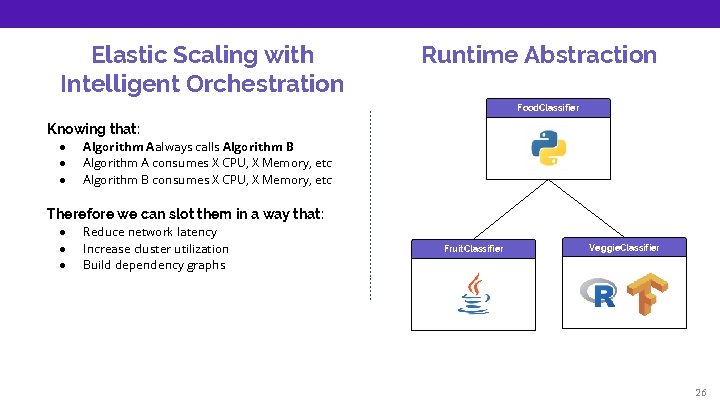

Elastic Scaling with Intelligent Orchestration

Knowing that:
● Algorithm A always calls Algorithm B
● Algorithm A consumes X CPU, X memory, etc.
● Algorithm B consumes X CPU, X memory, etc.

Therefore we can slot them in a way that:
● Reduces network latency
● Increases cluster utilization
● Builds dependency graphs

[Diagram: Runtime Abstraction, showing FoodClassifier, FruitClassifier, and VeggieClassifier]
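One way to picture this slotting is a greedy placement that prefers a worker already hosting a caller or callee, and falls back to any worker with room. This is only a sketch, not Algorithmia's scheduler: the `Algo` and `Worker` types, the resource numbers, and the assumption that FoodClassifier calls the other two classifiers are all hypothetical. It assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class Algo:
    name: str
    cpu: float                                      # cores needed (made-up numbers)
    mem_gb: float                                   # memory needed (made-up numbers)
    calls: list[str] = field(default_factory=list)  # algorithms this one always calls


@dataclass
class Worker:
    cpu_free: float
    mem_free: float
    algos: list[str] = field(default_factory=list)

    def fits(self, algo: Algo) -> bool:
        return algo.cpu <= self.cpu_free and algo.mem_gb <= self.mem_free

    def place(self, algo: Algo) -> None:
        self.cpu_free -= algo.cpu
        self.mem_free -= algo.mem_gb
        self.algos.append(algo.name)


def schedule(algos: list[Algo], workers: list[Worker]) -> dict[str, int]:
    """Map each algorithm name to a worker index, co-locating call partners when possible."""
    placement: dict[str, int] = {}
    for algo in algos:
        # Callers and callees of this algorithm, taken from the dependency graph.
        related = set(algo.calls) | {a.name for a in algos if algo.name in a.calls}
        preferred = sorted({placement[n] for n in related if n in placement})
        for index in preferred + list(range(len(workers))):
            if workers[index].fits(algo):
                workers[index].place(algo)
                placement[algo.name] = index
                break
        else:
            raise RuntimeError(f"no worker has room for {algo.name}")
    return placement


# Hypothetical example: FoodClassifier calls the two specialised classifiers.
algos = [
    Algo("FoodClassifier", cpu=1, mem_gb=2, calls=["FruitClassifier", "VeggieClassifier"]),
    Algo("FruitClassifier", cpu=2, mem_gb=4),
    Algo("VeggieClassifier", cpu=2, mem_gb=4),
]
workers = [Worker(cpu_free=4, mem_free=8), Worker(cpu_free=4, mem_free=8)]
placement = schedule(algos, workers)
print(placement)
```

FruitClassifier lands next to its caller, which saves a network hop; VeggieClassifier spills to the second worker only because the first is full.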

Composability is critical for AI workflows because of data processing pipelines and ensembles.

Unix pipeline analogy: cat file.csv | grep foo | wc -l

[Diagram: a Fruit or Veggie Classifier feeding a Fruit Classifier and a Veggie Classifier]
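The same idea as the Unix pipe can be sketched in code: small models with a common call signature are chained, so a router's output selects the next model. Everything here is a toy stand-in; the classifier functions, the `FRUITS` lookup, and `compose` are hypothetical names, not part of any real API.

```python
from typing import Callable, Dict

Classifier = Callable[[str], str]

FRUITS = {"apple", "banana"}  # toy lookup standing in for a trained model


def fruit_or_veggie(item: str) -> str:
    """Router model: decides which specialised classifier should run next."""
    return "fruit" if item in FRUITS else "veggie"


def fruit_classifier(item: str) -> str:
    return f"fruit:{item}"    # placeholder for a real fruit model


def veggie_classifier(item: str) -> str:
    return f"veggie:{item}"   # placeholder for a real vegetable model


def compose(router: Classifier, branches: Dict[str, Classifier]) -> Classifier:
    """Build one callable that routes each input to the matching branch."""
    def pipeline(item: str) -> str:
        return branches[router(item)](item)
    return pipeline


food_classifier = compose(
    fruit_or_veggie,
    {"fruit": fruit_classifier, "veggie": veggie_classifier},
)

print(food_classifier("apple"))
print(food_classifier("carrot"))
```

Because the composed pipeline has the same signature as its parts, it can itself be used as a branch in a larger pipeline.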

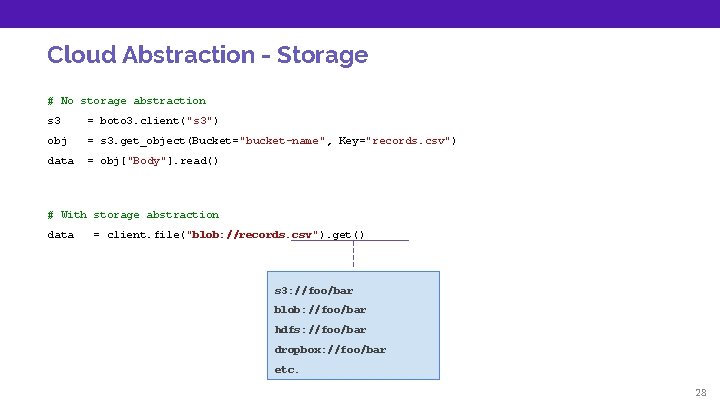

Cloud Abstraction - Storage

    # No storage abstraction: the caller is tied to the S3 API
    import boto3
    s3 = boto3.client("s3")
    obj = s3.get_object(Bucket="bucket-name", Key="records.csv")
    data = obj["Body"].read()

    # With storage abstraction: the URI scheme selects the backend
    # (client is the platform's data client, as shown on the slide)
    data = client.file("blob://records.csv").get()

URI schemes: s3://foo/bar, blob://foo/bar, hdfs://foo/bar, dropbox://foo/bar, etc.
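A storage abstraction of this kind can be sketched as a registry that dispatches on the URI scheme. The `DataClient` and `InMemoryBackend` classes below are hypothetical illustrations, not the client shown on the slide; a real backend would wrap boto3, an HDFS client, and so on behind the same `read` method.

```python
from urllib.parse import urlparse


class InMemoryBackend:
    """Hypothetical stand-in for a real storage backend (S3, blob store, HDFS)."""

    def __init__(self, files: dict):
        self._files = dict(files)

    def read(self, container: str, key: str) -> bytes:
        return self._files[(container, key)]


class DataClient:
    """Hypothetical client that picks a backend from the URI scheme."""

    def __init__(self):
        self._backends = {}

    def register(self, scheme: str, backend) -> None:
        self._backends[scheme] = backend

    def get(self, uri: str) -> bytes:
        parts = urlparse(uri)  # e.g. scheme="s3", netloc="foo", path="/bar"
        if parts.scheme not in self._backends:
            raise ValueError(f"no backend registered for scheme {parts.scheme!r}")
        return self._backends[parts.scheme].read(parts.netloc, parts.path.lstrip("/"))


client = DataClient()
client.register("s3", InMemoryBackend({("foo", "bar"): b"from s3"}))
client.register("hdfs", InMemoryBackend({("foo", "bar"): b"from hdfs"}))

# The calling code is identical regardless of where the data lives.
print(client.get("s3://foo/bar"))
print(client.get("hdfs://foo/bar"))
```

Moving data between providers then changes only the URI, not the algorithm code that reads it.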

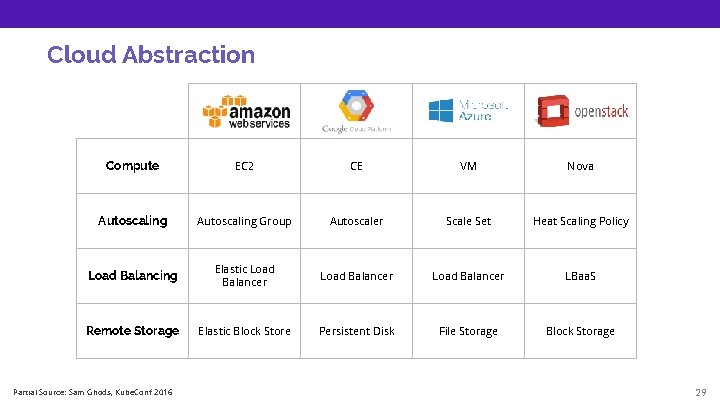

Cloud Abstraction

Each row lists the equivalent service on different cloud platforms:
● Compute: EC2, CE, VM, Nova
● Autoscaling: Autoscaling Group, Autoscaler, Scale Set, Heat Scaling Policy
● Load Balancing: Elastic Load Balancer, LBaaS
● Remote Storage: Elastic Block Store, Persistent Disk, File Storage, Block Storage

Partial. Source: Sam Ghods, KubeConf 2016

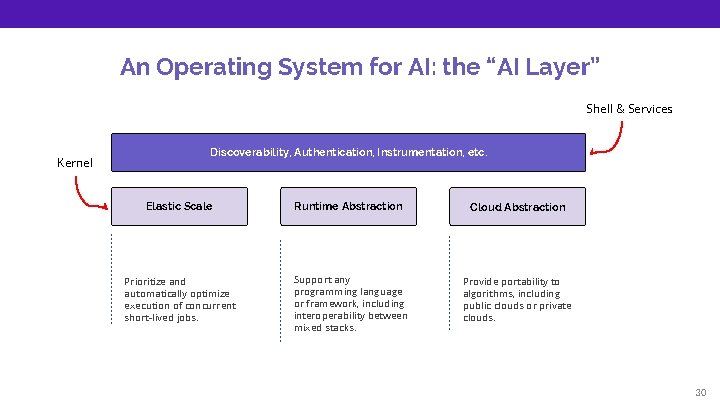

An Operating System for AI: the “AI Layer”

Shell & Services: Discoverability, Authentication, Instrumentation, etc.

Kernel:
● Elastic Scale: Prioritize and automatically optimize execution of concurrent short-lived jobs.
● Runtime Abstraction: Support any programming language or framework, including interoperability between mixed stacks.
● Cloud Abstraction: Provide portability to algorithms, including public clouds or private clouds.

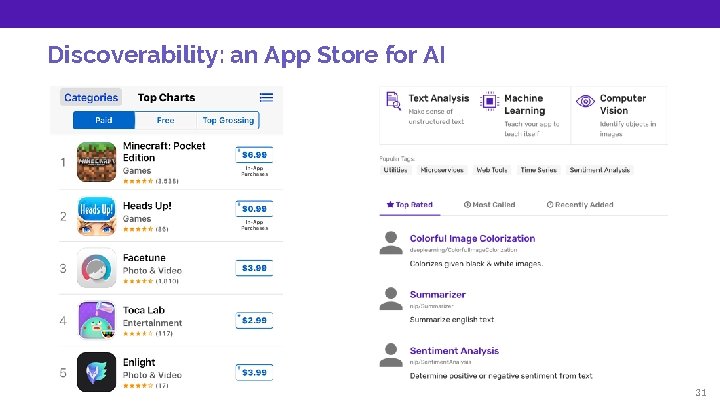

Discoverability: an App Store for AI

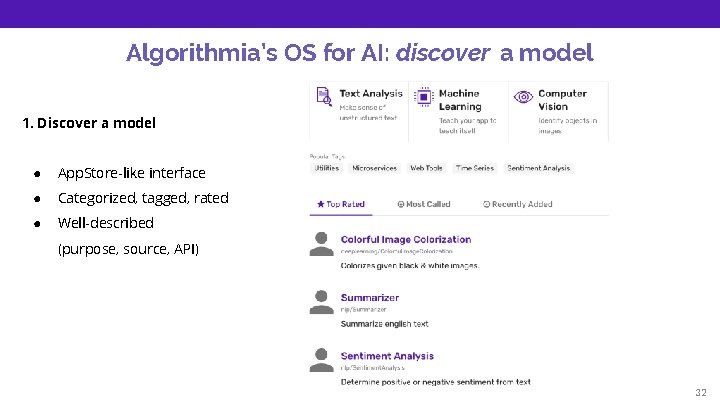

Algorithmia’s OS for AI: discover a model

1. Discover a model
● App Store-like interface
● Categorized, tagged, rated
● Well-described (purpose, source, API)

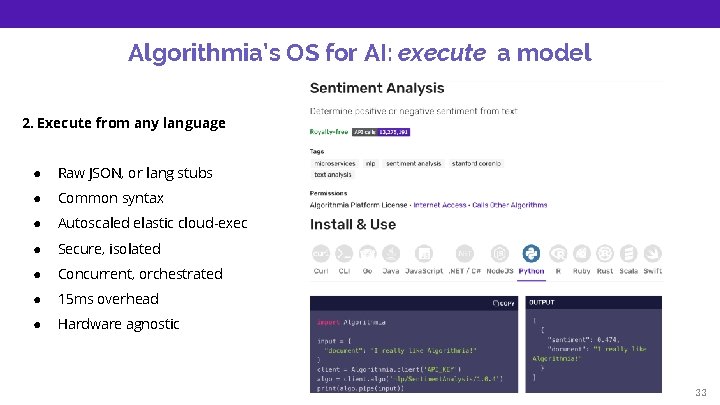

Algorithmia’s OS for AI: execute a model

2. Execute from any language
● Raw JSON, or language stubs
● Common syntax
● Autoscaled elastic cloud-exec
● Secure, isolated
● Concurrent, orchestrated
● 15 ms overhead
● Hardware agnostic
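"Raw JSON" execution means any language with an HTTP client can call a model. The sketch below only builds such a request with the Python standard library and does not send it. The base URL, the algorithm path, the `Authorization` header format, and the payload fields are placeholders chosen for illustration, not Algorithmia's documented API.

```python
import json
from urllib.request import Request


def build_model_call(base_url: str, algo_path: str, payload: dict, api_key: str) -> Request:
    """Build (but do not send) a JSON POST request that would invoke a hosted model."""
    body = json.dumps(payload).encode("utf-8")
    return Request(
        url=f"{base_url.rstrip('/')}/{algo_path}",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # hypothetical auth scheme
        },
        method="POST",
    )


# Hypothetical endpoint, algorithm name, and input.
request = build_model_call(
    base_url="https://api.example.com/v1/algo",
    algo_path="demo/FoodClassifier/1.0.0",
    payload={"image_url": "https://example.com/lunch.jpg"},
    api_key="YOUR_API_KEY",
)

print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Passing the request to `urllib.request.urlopen` would perform the call; a language stub is a thin wrapper around this same step.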

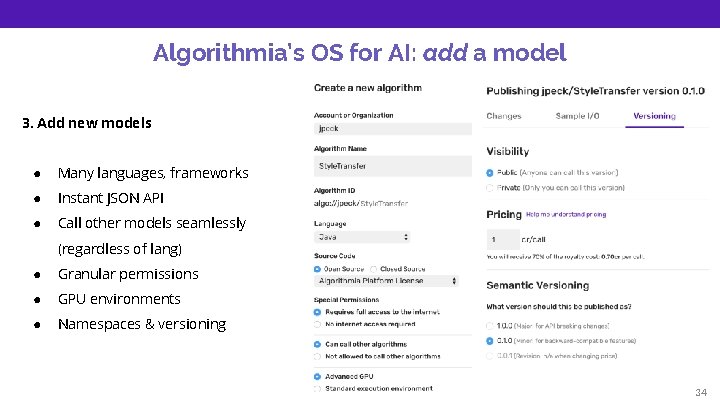

Algorithmia’s OS for AI: add a model

3. Add new models
● Many languages, frameworks
● Instant JSON API
● Call other models seamlessly (regardless of language)
● Granular permissions
● GPU environments
● Namespaces & versioning

Thank you!

Jon Peck, Developer Advocate
jpeck@algorithmia.com, @peckjon, Algorithmia.com

FREE STUFF: $50 free at Algorithmia.com, signup code WOSC18

WE ARE HIRING: algorithmia.com/jobs
● Seattle or Remote
● Bright, collaborative env
● Unlimited PTO
● Dog-friendly
