Hilbert Space Embeddings of Conditional Distributions with Applications to Dynamical Systems

Hilbert Space Embeddings of Conditional Distributions, with Applications to Dynamical Systems • Le Song, Carnegie Mellon University • Joint work with Jonathan Huang, Alex Smola, and Kenji Fukumizu

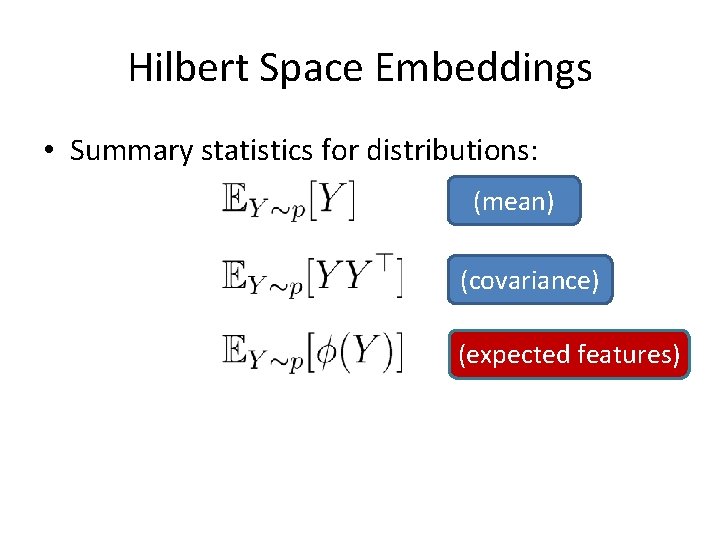

Hilbert Space Embeddings • Summary statistics for distributions: mean, covariance, and expected features

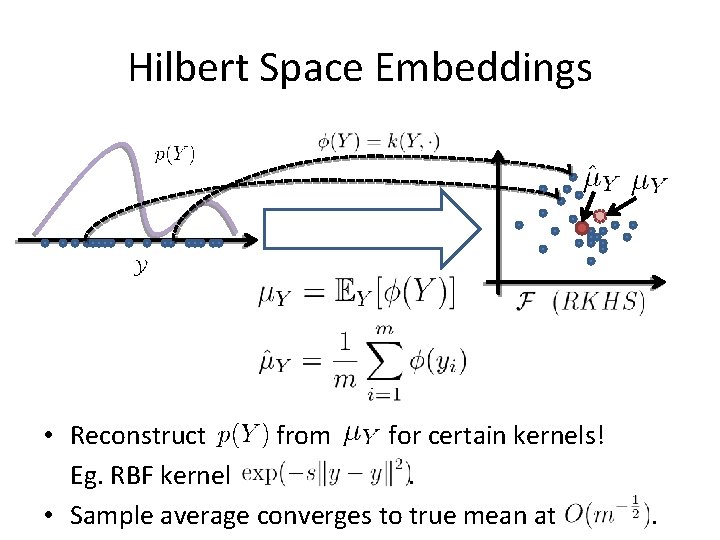

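Hilbert Space Embeddings • The distribution p can be reconstructed from its embedding µ[p] for certain kernels, e.g. the RBF kernel • The sample average converges to the true mean embedding at rate O_p(m^(-1/2)) for sample size m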
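As a minimal sketch of the sample-average embedding, the snippet below compares the empirical mean embeddings of two samples through their squared RKHS distance, which needs only kernel evaluations. The helper names (`rbf_kernel`, `embedding_distance_sq`), the bandwidth `sigma`, and the toy Gaussian data are assumptions for illustration, not taken from the slides.

```python
import numpy as np

def rbf_kernel(A, B, sigma=1.0):
    """Gram matrix k(a, b) = exp(-||a - b||^2 / (2 sigma^2)) for the rows of A and B."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))

def embedding_distance_sq(X, Z, sigma=1.0):
    """Squared RKHS distance between the empirical mean embeddings of X and Z."""
    return (rbf_kernel(X, X, sigma).mean()
            + rbf_kernel(Z, Z, sigma).mean()
            - 2.0 * rbf_kernel(X, Z, sigma).mean())

rng = np.random.default_rng(0)
same_a = rng.normal(0.0, 1.0, size=(500, 1))   # sample from N(0, 1)
same_b = rng.normal(0.0, 1.0, size=(500, 1))   # second sample from N(0, 1)
shifted = rng.normal(2.0, 1.0, size=(500, 1))  # sample from N(2, 1)

d_same = embedding_distance_sq(same_a, same_b)   # embeddings of the same distribution
d_diff = embedding_distance_sq(same_a, shifted)  # embeddings of different distributions
```

Two samples from the same distribution should give a distance near zero, while the shifted sample gives a clearly larger one; this is the sense in which the embedding identifies the distribution for kernels such as the RBF kernel.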

![Hilbert Space Embeddings: Structured Prediction; Embed Conditional Distributions p(y|x) → µ[p(y|x)]; Dynamical Systems](http://slidetodoc.com/presentation_image_h2/cd74ea82edf671a9200b1ba9930a857e/image-4.jpg)

Hilbert Space Embeddings • Structured prediction • Embed conditional distributions: p(y|x) → µ[p(y|x)] • Dynamical systems

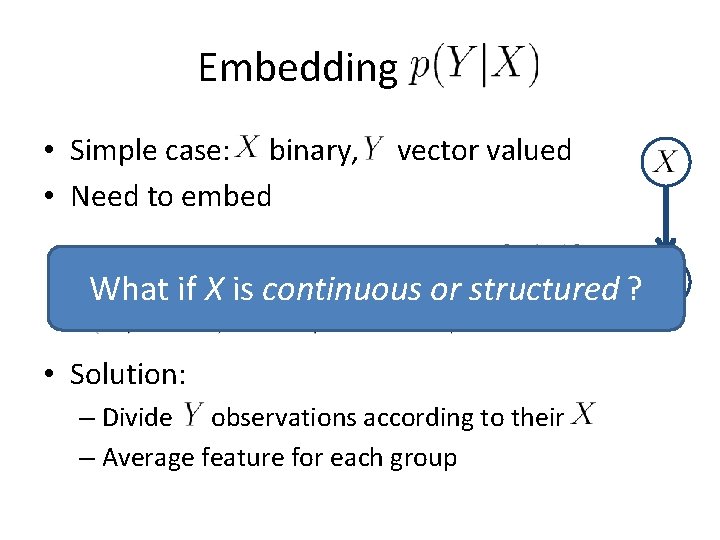

Embedding P(Y|X) • Simple case: X binary, Y vector valued • Need to embed p(Y|X=0) and p(Y|X=1) • Solution: – Divide observations according to their value of X – Average the features of Y within each group • What if X is continuous or structured?
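The divide-and-average recipe for a discrete X can be sketched as follows. The function name `group_mean_features`, the toy data, and the feature map phi(y) = (y, y^2) are hypothetical choices for illustration only.

```python
import numpy as np

def group_mean_features(x, features):
    """Average the feature vectors of Y separately for each observed value of X."""
    return {value.item(): features[x == value].mean(axis=0) for value in np.unique(x)}

x = np.array([0, 1, 0, 1, 1])            # binary conditioning variable
y = np.array([1.0, 3.0, 2.0, 5.0, 4.0])  # observed outputs
phi_y = np.stack([y, y**2], axis=1)      # hypothetical feature map phi(y) = (y, y^2)

# One averaged feature vector per group, i.e. one embedding per value of X.
embeddings = group_mean_features(x, phi_y)
```

Each dictionary entry is the empirical embedding of p(Y|X=x) for one observed value of x. The approach has nothing to say about values of x that never appear in the sample, which is the limitation the following slides address.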

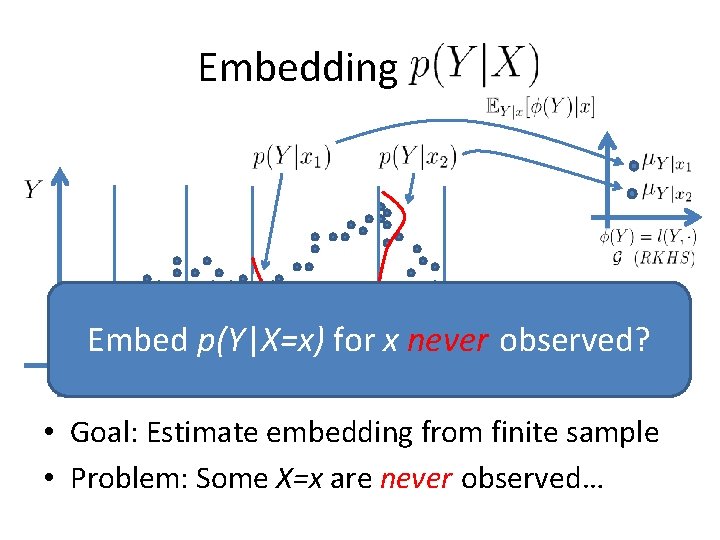

Embedding P(Y|X) • Goal: estimate the embedding from a finite sample • Problem: some X=x are never observed • How do we embed p(Y|X=x) for an x that was never observed?

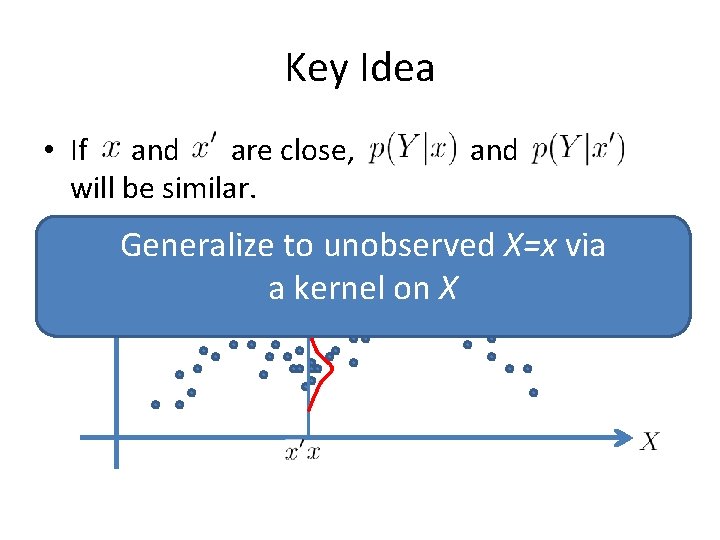

Key Idea • If x and x' are close, p(Y|X=x) and p(Y|X=x') will be similar • Generalize to unobserved X=x via a kernel on X

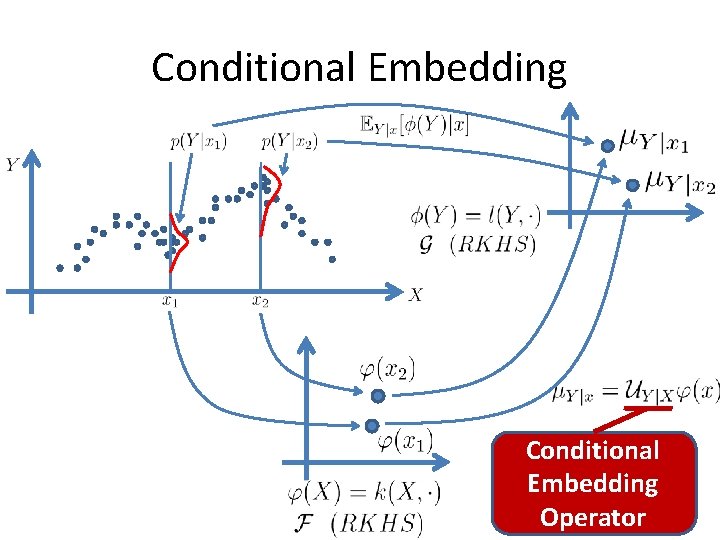

Conditional Embedding Operator

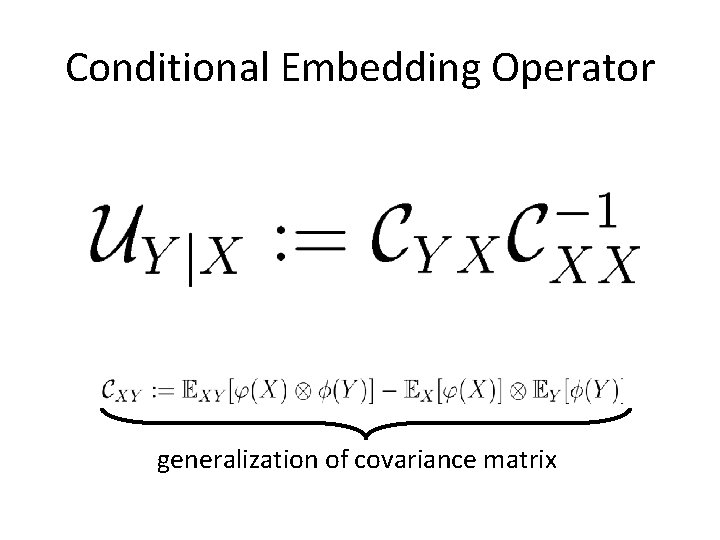

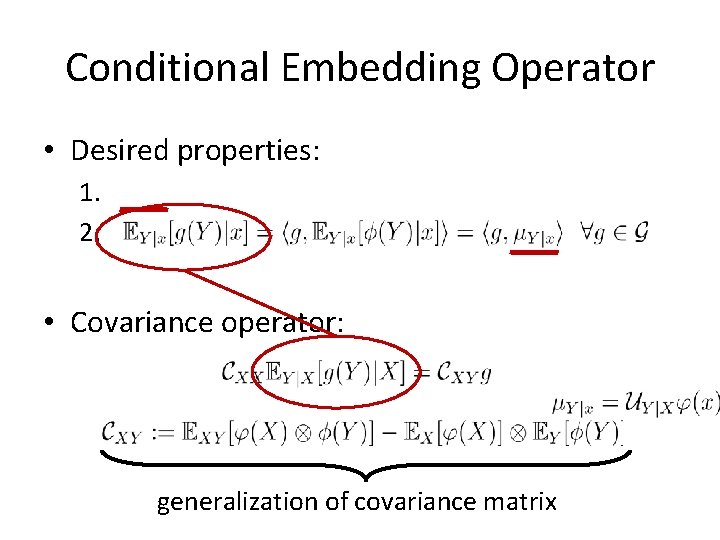

Conditional Embedding Operator • Covariance operator: generalization of the covariance matrix
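The formulas on these slides are not preserved in the transcript. For orientation, the operator is commonly written in the conditional-embedding literature as below; the symbols ($\phi$ for the feature map on Y, $\varphi$ for the feature map on X, $\mathcal{C}$ for covariance operators, $\mathcal{U}_{Y|X}$ for the conditional embedding operator) are assumed notation, and the last equality holds only under regularity conditions such as the existence of the inverse.

```latex
\mathcal{C}_{YX} = \mathbb{E}_{XY}\!\left[\phi(Y) \otimes \varphi(X)\right],
\qquad
\mathcal{U}_{Y|X} = \mathcal{C}_{YX}\,\mathcal{C}_{XX}^{-1},
\qquad
\mu[p(y \mid x)] = \mathbb{E}\!\left[\phi(Y) \mid X = x\right] = \mathcal{U}_{Y|X}\,\varphi(x)
```

When the feature maps are the identity on finite-dimensional vectors, $\mathcal{C}_{YX}$ reduces to an (uncentered) cross-covariance matrix, which is the sense in which the covariance operator generalizes the covariance matrix.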

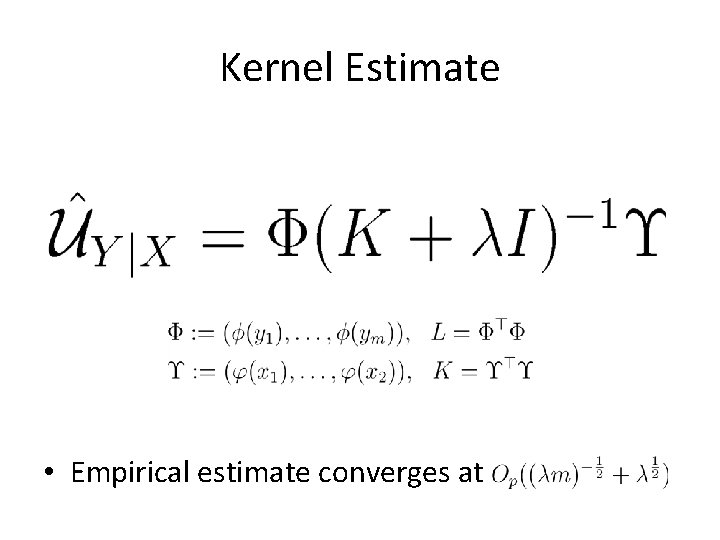

Kernel Estimate • The empirical estimate converges to the true conditional embedding as the sample size grows
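A minimal sketch of a kernel estimate of this kind, assuming the usual regularized form in which the embedding of p(Y|X=x) is a weighted sum of training features with weights (K + λ m I)^(-1) k_x. The function names, the regularizer `lam`, the bandwidth `sigma`, and the sine-curve toy data are assumptions, not values from the talk.

```python
import numpy as np

def rbf_kernel(A, B, sigma=1.0):
    """Gram matrix k(a, b) = exp(-||a - b||^2 / (2 sigma^2)) for the rows of A and B."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))

def conditional_weights(X_train, x_query, lam=1e-3, sigma=1.0):
    """Weights beta(x) such that mu[p(y|x)] is approximated by sum_i beta_i(x) * phi(y_i)."""
    m = X_train.shape[0]
    K = rbf_kernel(X_train, X_train, sigma)    # (m, m) Gram matrix on X
    k_x = rbf_kernel(X_train, x_query, sigma)  # (m, q) kernel between training and query points
    return np.linalg.solve(K + lam * m * np.eye(m), k_x)

rng = np.random.default_rng(0)
X_train = rng.uniform(-3.0, 3.0, size=(300, 1))
Y_train = np.sin(X_train[:, 0]) + 0.1 * rng.normal(size=300)

x_query = np.array([[0.5], [-1.0]])  # query points, typically not in the training set
beta = conditional_weights(X_train, x_query, lam=1e-3, sigma=0.5)

# Applying the weights to the training outputs estimates E[Y | X = x] at each query point.
cond_mean = Y_train @ beta
```

The same weights can be applied to any function of the training outputs, so one linear solve yields estimates of many conditional expectations at a query point that need not appear in the sample.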

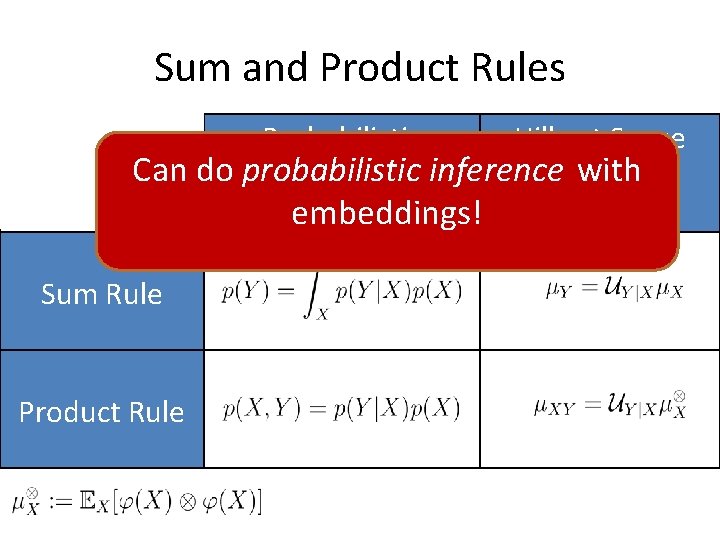

Sum and Product Rules • Sum rule and product rule: each probabilistic relation has a corresponding Hilbert space relation • Can do probabilistic inference with embeddings!
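As a hedged illustration of the sum rule in embedding form, the sketch below pushes a prior on X through an estimated conditional embedding to obtain weights that represent the marginal of Y. It assumes the regularized kernel estimate (K + λ m I)^(-1) and a prior represented by weighted sample points; `sum_rule_weights`, the toy linear relationship, and all parameter values are hypothetical.

```python
import numpy as np

def rbf_kernel(A, B, sigma=1.0):
    """Gram matrix k(a, b) = exp(-||a - b||^2 / (2 sigma^2)) for the rows of A and B."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))

def sum_rule_weights(X_train, X_prior, alpha, lam=1e-3, sigma=1.0):
    """Weights w with mu_Y ~ sum_i w[i] * phi(y_i), given a prior embedding sum_j alpha[j] * k(x_j, .)."""
    m = X_train.shape[0]
    K = rbf_kernel(X_train, X_train, sigma)
    K_prior = rbf_kernel(X_train, X_prior, sigma)  # inner products with the prior's support points
    return np.linalg.solve(K + lam * m * np.eye(m), K_prior @ alpha)

rng = np.random.default_rng(1)
X_train = rng.uniform(-3.0, 3.0, size=(400, 1))
Y_train = 2.0 * X_train[:, 0] + 0.1 * rng.normal(size=400)  # toy relation Y = 2X + noise

X_prior = rng.normal(1.0, 0.5, size=(200, 1))  # sample from a new prior on X
alpha = np.full(200, 1.0 / 200)                # uniform weights on the prior sample

w = sum_rule_weights(X_train, X_prior, alpha, lam=1e-3, sigma=0.5)
mean_y = w @ Y_train  # estimate of E[Y] under the new prior, close to 2 in this toy setup
```

The marginalization over X is carried out entirely with Gram matrices and a linear solve, with no density estimation or explicit integration.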

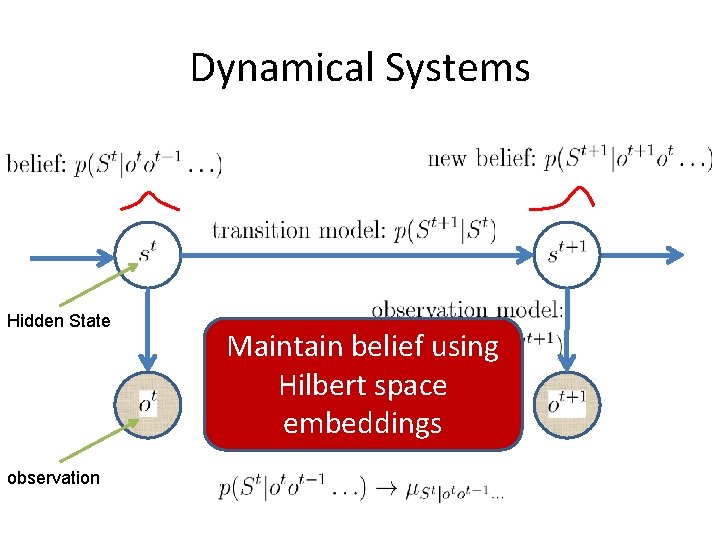

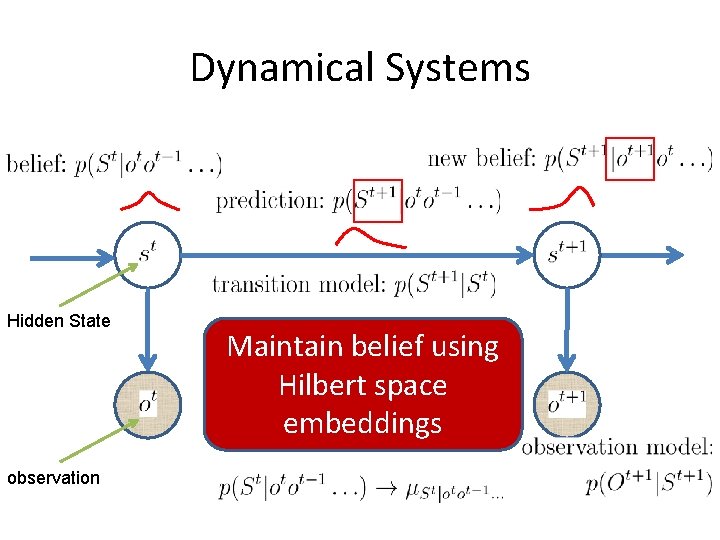

Dynamical Systems • Hidden state and observation • Maintain belief using Hilbert space embeddings

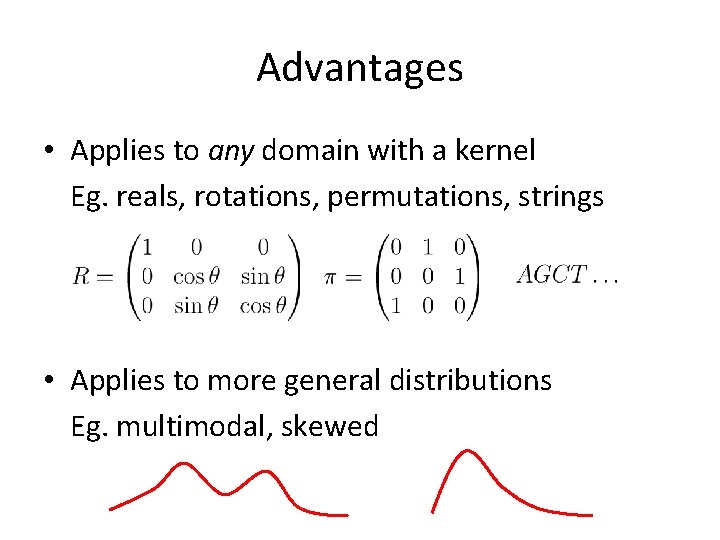

Advantages • Applies to any domain with a kernel, e.g. reals, rotations, permutations, strings • Applies to more general distributions, e.g. multimodal, skewed

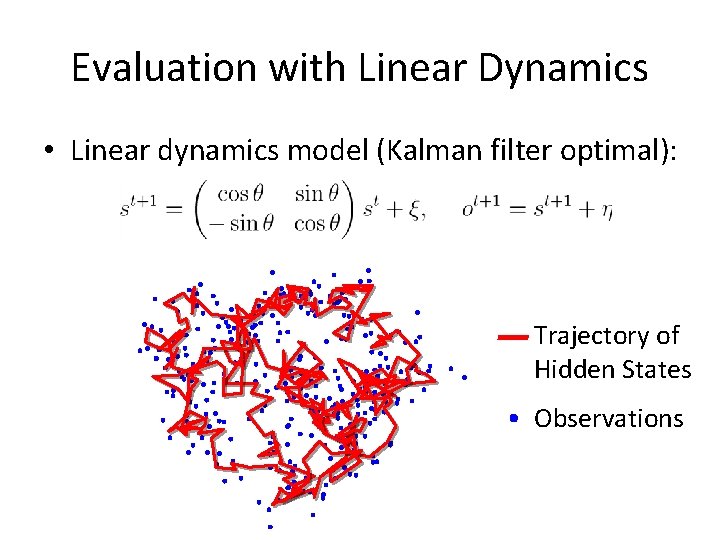

Evaluation with Linear Dynamics • Linear dynamics model (Kalman filter optimal) • Figure: trajectory of hidden states and observations
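For readers unfamiliar with the baseline, here is a minimal scalar Kalman filter on a simulated linear system. The model s[t+1] = a*s[t] + x, o[t] = s[t] + h, the coefficient `a`, the noise variances `q` and `r`, and the sequence length are all assumptions chosen for illustration; the slides do not specify the actual system used in the experiments.

```python
import numpy as np

def kalman_filter_1d(obs, a, q, r, mean0=0.0, var0=1.0):
    """Scalar Kalman filter for s[t+1] = a*s[t] + x, o[t] = s[t] + h, with Var(x)=q, Var(h)=r."""
    mean, var = mean0, var0
    estimates = np.empty(len(obs))
    for t, o in enumerate(obs):
        if t > 0:
            # Predict: propagate the belief through the linear dynamics.
            mean, var = a * mean, a * a * var + q
        # Update: correct the belief with the new observation.
        gain = var / (var + r)
        mean = mean + gain * (o - mean)
        var = (1.0 - gain) * var
        estimates[t] = mean
    return estimates

rng = np.random.default_rng(0)
a, q, r, T = 0.9, 1e-2, 1e-1, 2000  # assumed dynamics coefficient and noise variances

states = np.empty(T)
states[0] = rng.normal(0.0, 1.0)
for t in range(1, T):
    states[t] = a * states[t - 1] + rng.normal(0.0, np.sqrt(q))
obs = states + rng.normal(0.0, np.sqrt(r), size=T)

est = kalman_filter_1d(obs, a, q, r)
rms_filter = np.sqrt(np.mean((est - states) ** 2))  # error of the filtered estimate
rms_raw = np.sqrt(np.mean((obs - states) ** 2))     # error of using observations directly
```

With linear dynamics and Gaussian noise the Kalman filter is the optimal estimator, so it is a demanding baseline in that setting; the skewed and bimodal noise experiments probe what happens when the Gaussian assumption fails.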

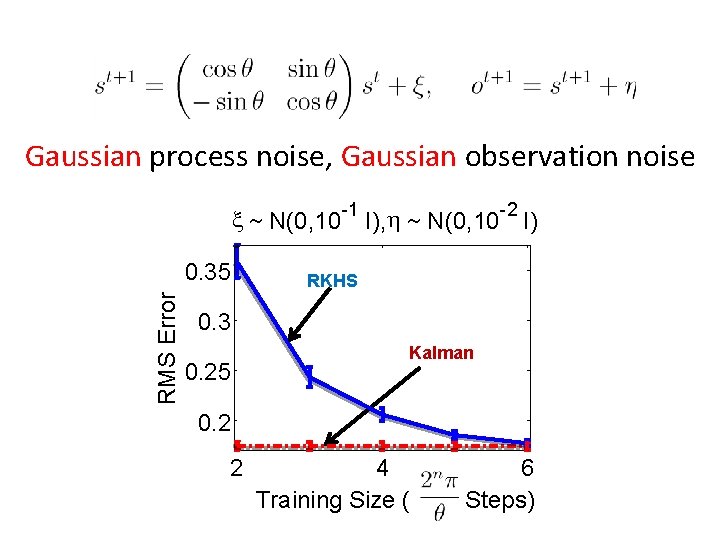

Gaussian process noise, Gaussian observation noise • Process noise x ~ N(0, 10^-1 I), observation noise h ~ N(0, 10^-2 I) • Plot: RMS error vs. training size (2^n steps), RKHS and Kalman

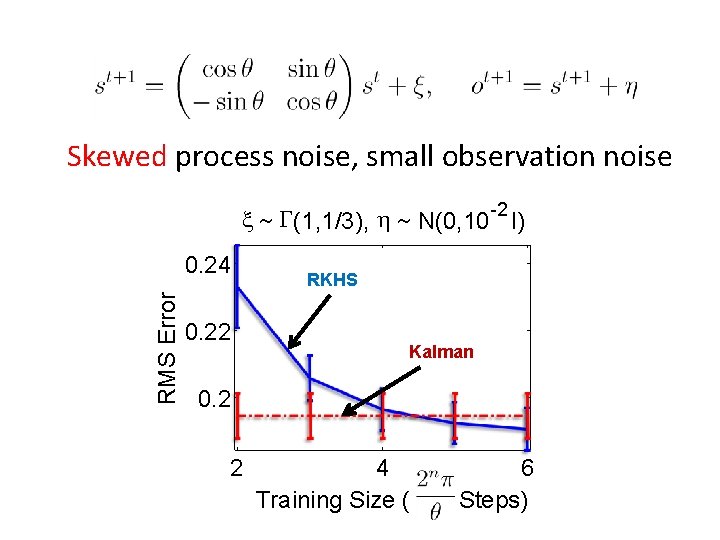

Skewed process noise, small observation noise • Process noise x ~ G(1, 1/3), observation noise h ~ N(0, 10^-2 I) • Plot: RMS error vs. training size (2^n steps), RKHS and Kalman

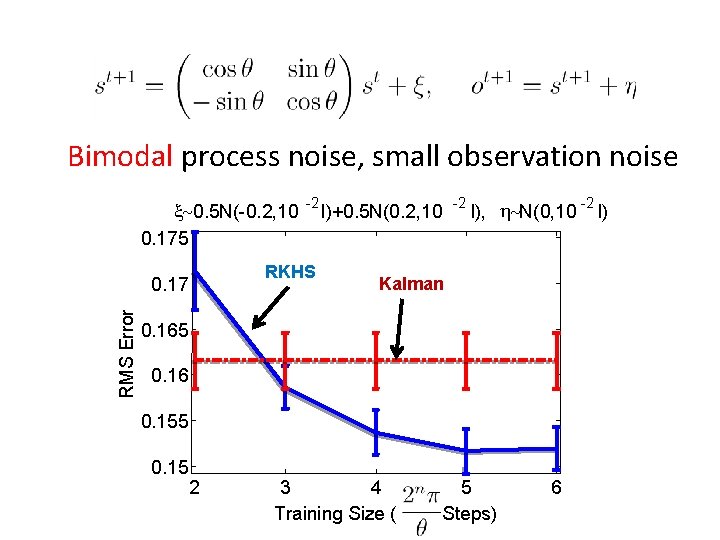

Bimodal process noise, small observation noise • Process noise x ~ 0.5 N(-0.2, 10^-2 I) + 0.5 N(0.2, 10^-2 I), observation noise h ~ N(0, 10^-2 I) • Plot: RMS error vs. training size (2^n steps), RKHS and Kalman

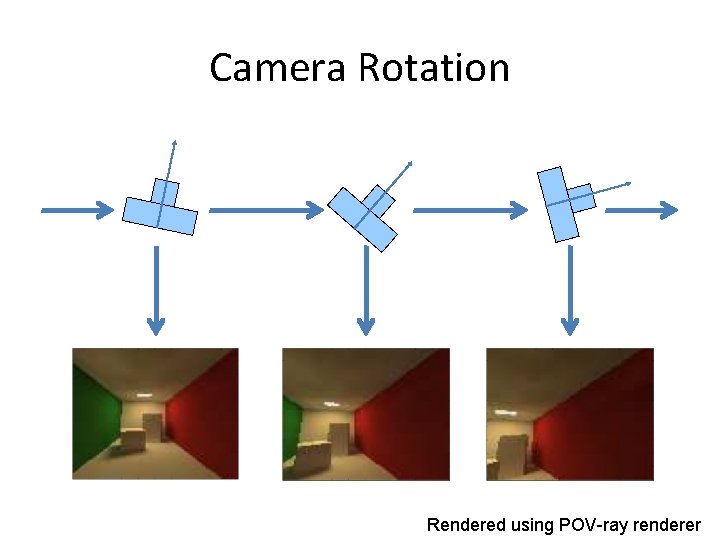

Camera Rotation • Rendered using the POV-Ray renderer

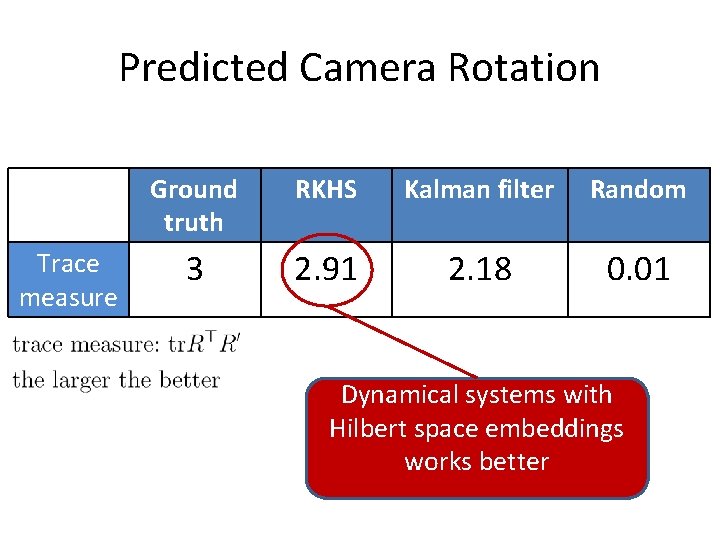

Predicted Camera Rotation

| Method | Trace measure |
| --- | --- |
| Ground truth | 3 |
| RKHS | 2.91 |
| Kalman filter | 2.18 |
| Random | 0.01 |

Dynamical systems with Hilbert space embeddings work better.
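The slides do not define the trace measure. A plausible reading, assumed here, is the trace of the product of the transposed true rotation and the predicted rotation, which equals 3 for a perfect prediction of a 3×3 rotation matrix and so matches the ground-truth score reported above. The helper names below are hypothetical.

```python
import numpy as np

def rotation_z(theta):
    """3x3 rotation matrix for a rotation by theta radians about the z axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0],
                     [s,  c, 0.0],
                     [0.0, 0.0, 1.0]])

def trace_measure(R_true, R_pred):
    """Assumed trace measure tr(R_true^T R_pred); equals 3 when the rotations coincide."""
    return float(np.trace(R_true.T @ R_pred))

R_true = rotation_z(0.3)
perfect = trace_measure(R_true, R_true)                         # identical rotations
off_by_90 = trace_measure(R_true, rotation_z(0.3 + np.pi / 2))  # 90 degree error about z
```

Under this definition the score for a rotation error of angle θ is 1 + 2 cos θ, so it falls from 3 as the predicted rotation drifts away from the true one.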

Video from Predicted Rotation • Original video • Video rendered with the predicted camera rotation

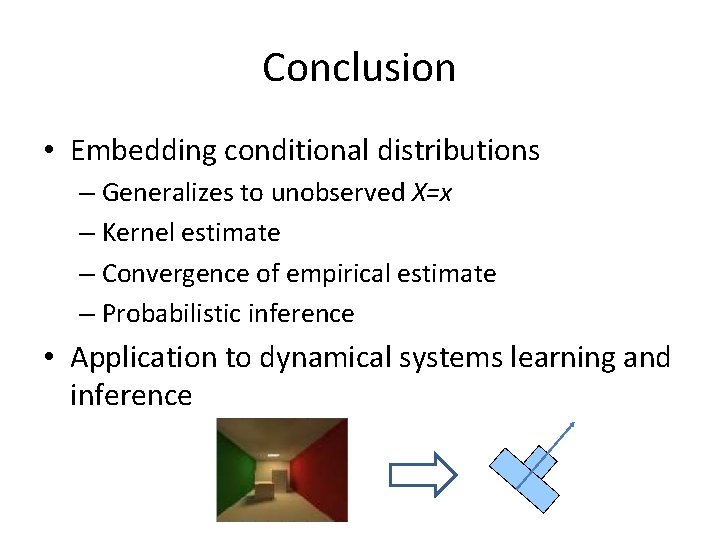

Conclusion • Embedding conditional distributions – Generalizes to unobserved X=x – Kernel estimate – Convergence of empirical estimate – Probabilistic inference • Application to dynamical systems learning and inference

The End • Thanks

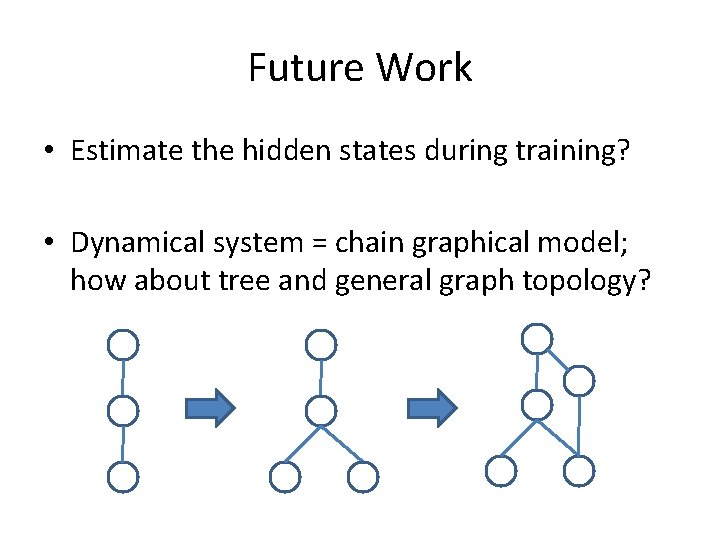

Future Work • Estimate the hidden states during training? • A dynamical system is a chain graphical model; what about trees and general graph topologies?

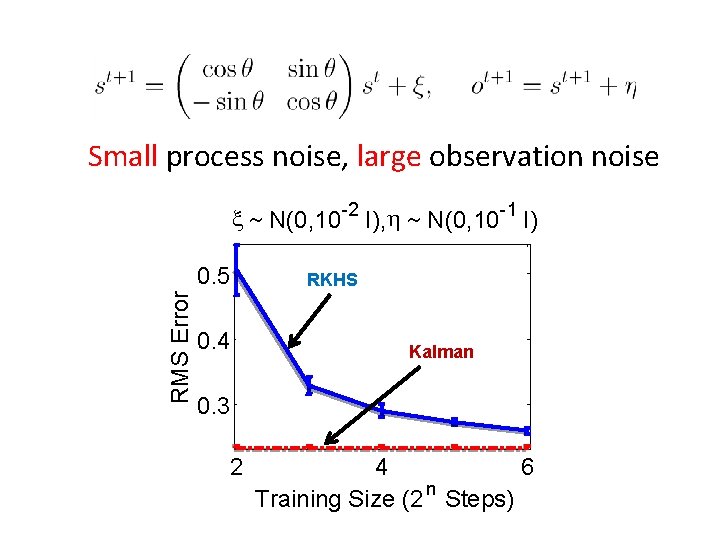

Small process noise, large observation noise • Process noise x ~ N(0, 10^-2 I), observation noise h ~ N(0, 10^-1 I) • Plot: RMS error vs. training size (2^n steps), RKHS and Kalman

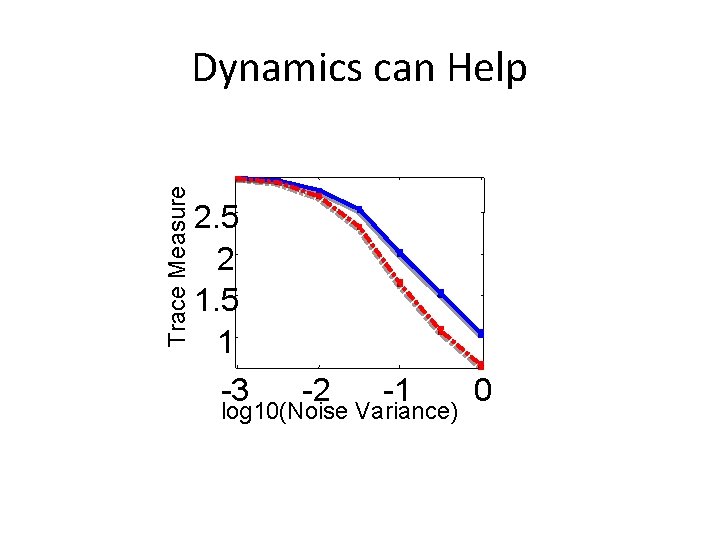

Dynamics Can Help • Plot: trace measure vs. log10(noise variance)


Conditional Embedding Operator • Two desired properties • Covariance operator: generalization of the covariance matrix
