High-Throughput and Language-Agnostic Entity Disambiguation and Linking on User Generated Data

Preeti Bhargava, Nemanja Spasojevic, Guoning Hu
Applied Data Science, Lithium Technologies
Email: team-relevance@klout.com

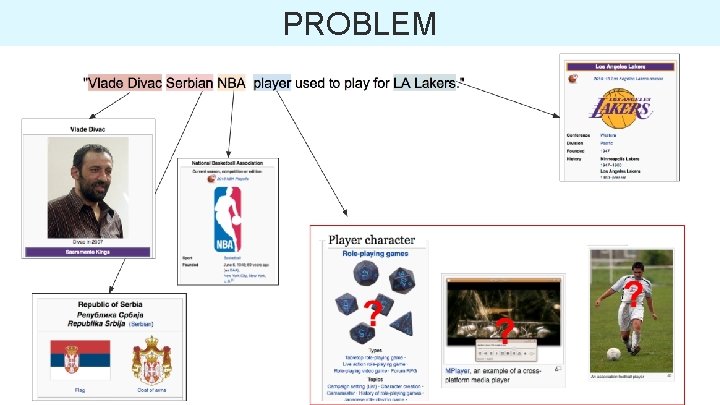

PROBLEM

APPLICATIONS
• Tweets & other user-generated text
• User profiles (interests & expertise)
• URL recommendations
• Content personalization

CHALLENGES
• Ambiguity
• Multilingual content
• High-throughput, lightweight approach
  – 0.5B documents daily (~1-2 ms per tweet)
  – commodity hardware (REST API, MR)
• Shallow NLP approach (no POS tagging)
• Dense annotations (for efficient information retrieval)
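A back-of-envelope calculation shows what the throughput target implies for hardware. This sketch assumes traffic is spread evenly over the day and that each core processes one document at a time; both are simplifying assumptions, not figures from the slides.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def cores_needed(docs_per_day: float, ms_per_doc: float) -> float:
    """Single-threaded cores needed to keep up with a daily document volume.

    Assumes load is spread evenly over 24 hours (real traffic is burstier).
    """
    docs_per_second = docs_per_day / SECONDS_PER_DAY
    docs_per_core_per_second = 1000.0 / ms_per_doc
    return docs_per_second / docs_per_core_per_second


if __name__ == "__main__":
    for ms in (1.0, 2.0):
        print(f"{ms} ms/doc -> {cores_needed(0.5e9, ms):.1f} cores")
```

At 0.5B documents per day this prints about 5.8 cores at 1 ms per document and 11.6 cores at 2 ms, which is why a per-tweet budget of a few milliseconds fits on commodity hardware.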

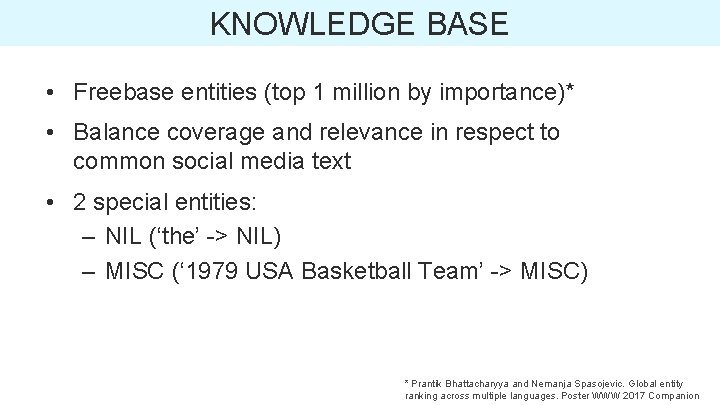

KNOWLEDGE BASE
• Freebase entities (top 1 million by importance)*
• Balances coverage and relevance with respect to common social media text
• 2 special entities:
  – NIL (‘the’ -> NIL)
  – MISC (‘1979 USA Basketball Team’ -> MISC)
* Prantik Bhattacharyya and Nemanja Spasojevic. Global entity ranking across multiple languages. Poster, WWW 2017 Companion.
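The knowledge-base construction can be sketched as "keep the top N entities by importance, then add the two special entities". The importance scores here are hypothetical inputs, and reading MISC as "an entity not covered by the knowledge base" is our interpretation of the ‘1979 USA Basketball Team’ example.

```python
from typing import Dict, Set

# Special entities, named as in the prior-probability examples in these slides.
NIL = "_nil_"    # the mention does not refer to an entity, e.g. 'the'
MISC = "_misc_"  # our reading: an entity not covered by the KB, e.g. '1979 USA Basketball Team'


def build_knowledge_base(importance: Dict[str, float], top_n: int) -> Set[str]:
    """Keep the top_n entity ids by (hypothetical) importance score, plus NIL and MISC."""
    ranked = sorted(importance, key=lambda e: importance[e], reverse=True)
    return set(ranked[:top_n]) | {NIL, MISC}
```

With `top_n=1_000_000` this would correspond to the "top 1 million by importance" cut described on the slide.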

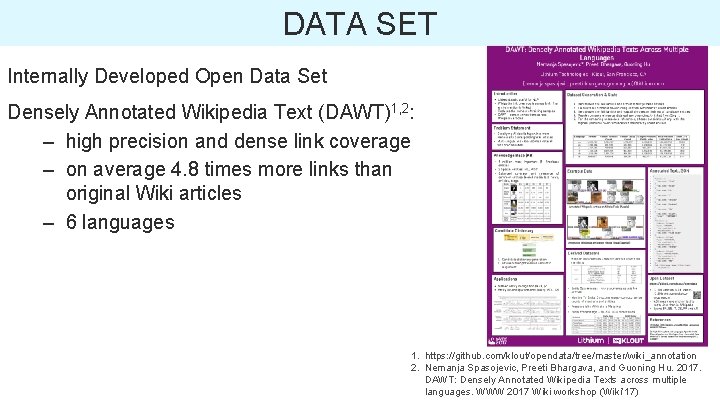

DATA SET
Internally developed open data set: Densely Annotated Wikipedia Texts (DAWT) [1, 2]
– high precision and dense link coverage
– on average 4.8 times more links than the original Wikipedia articles
– 6 languages
1. https://github.com/klout/opendata/tree/master/wiki_annotation
2. Nemanja Spasojevic, Preeti Bhargava, and Guoning Hu. 2017. DAWT: Densely Annotated Wikipedia Texts across multiple languages. WWW 2017 Wiki Workshop (Wiki’17).

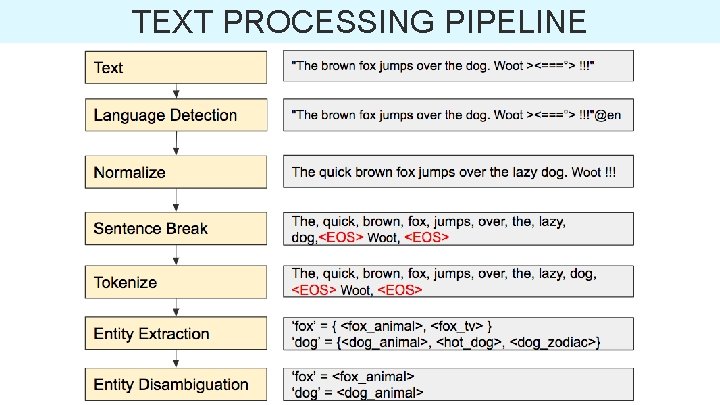

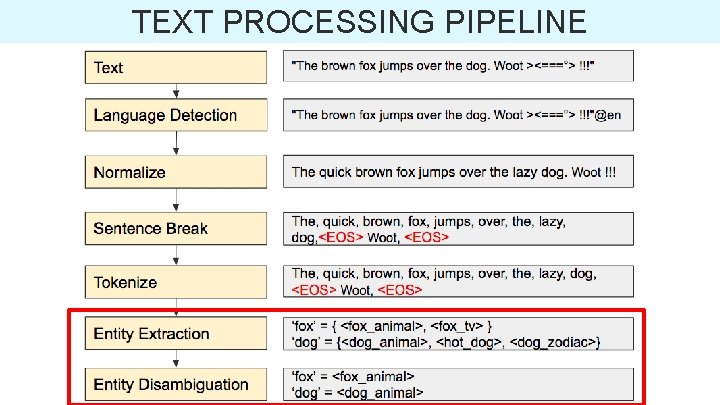

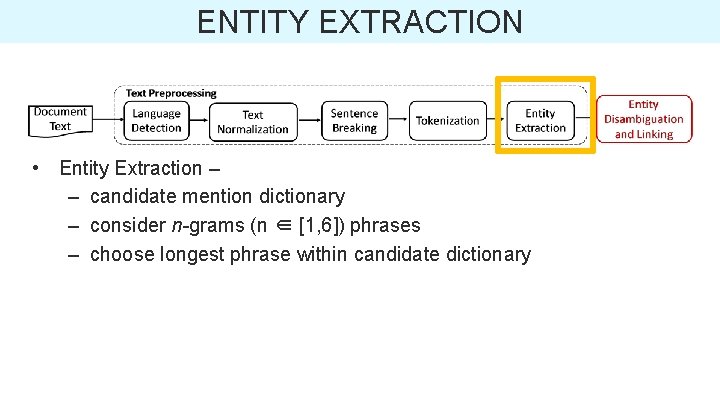

TEXT PROCESSING PIPELINE

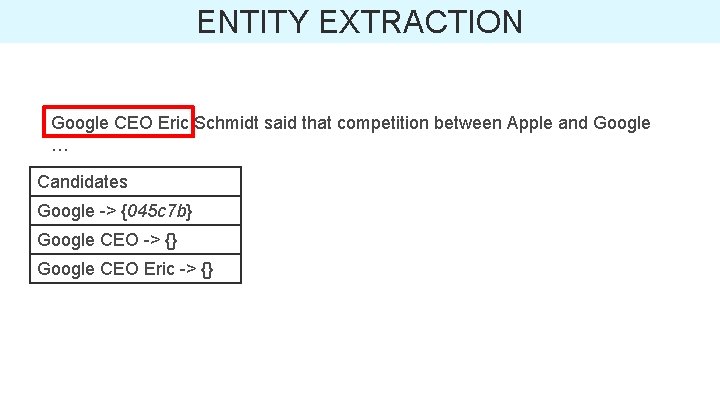

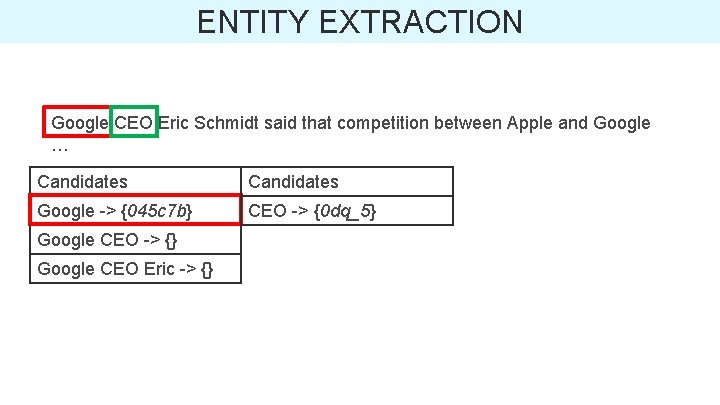

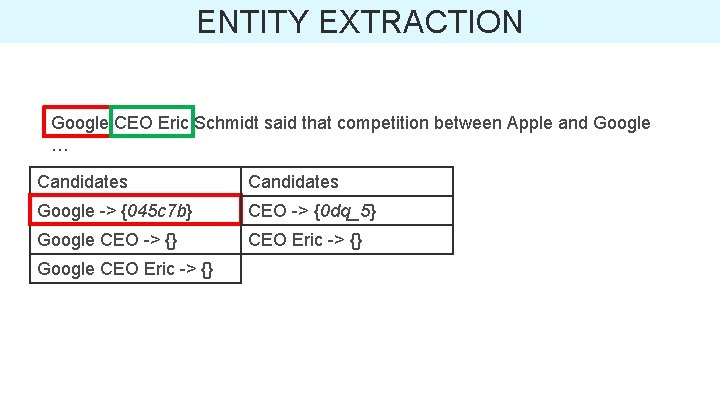

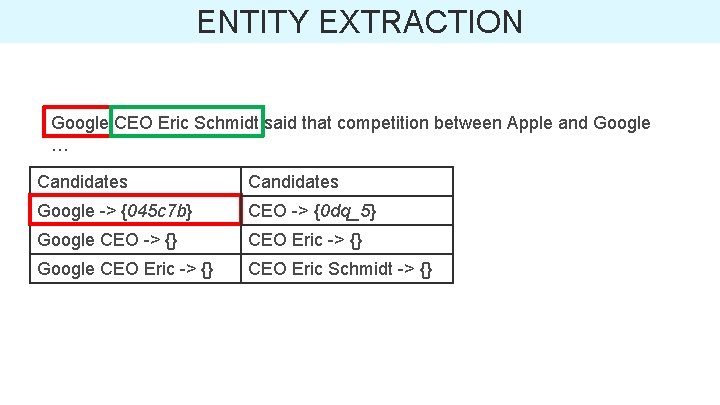

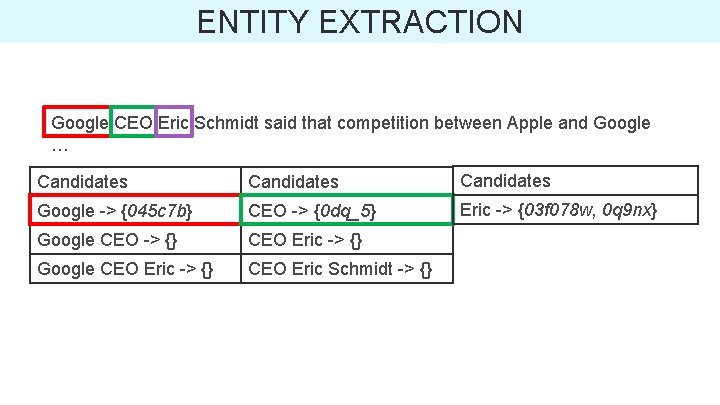

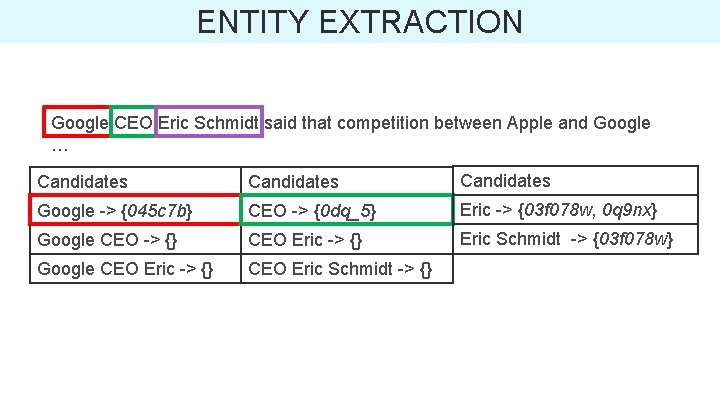

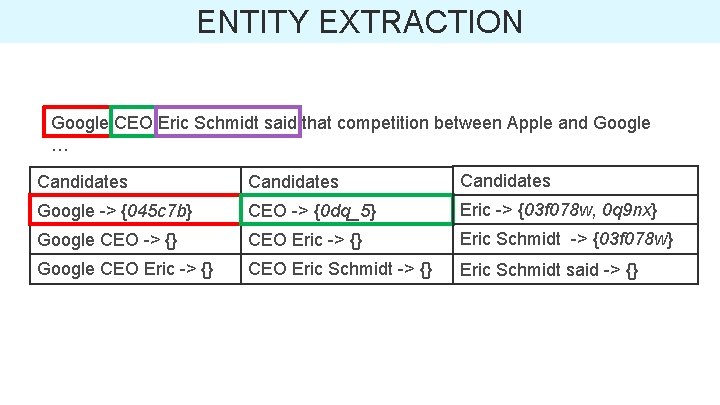

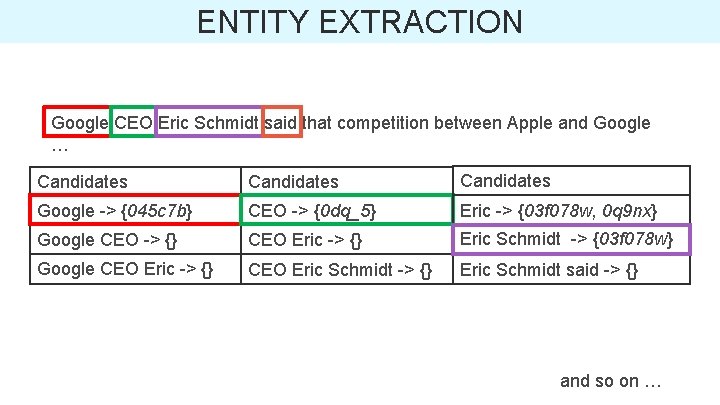

ENTITY EXTRACTION
• Use a candidate mention dictionary
• Consider all n-gram phrases with n ∈ [1, 6]
• Choose the longest phrase found in the candidate dictionary
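The candidate-generation step can be sketched as a dictionary lookup of every n-gram up to length 6. Whitespace tokenization and the `Dict[str, List[str]]` dictionary shape are assumptions made for illustration (consistent with the shallow, POS-free approach); the entity ids in the usage example are the ones shown on the extraction slides.

```python
from typing import Dict, List, Tuple

MAX_N = 6  # the slides consider n-grams with n in [1, 6]

Candidate = Tuple[int, int, str, List[str]]  # (start, end, phrase, entity ids)


def candidate_ngrams(
    tokens: List[str], dictionary: Dict[str, List[str]], max_n: int = MAX_N
) -> List[Candidate]:
    """Look up every n-gram (1 <= n <= max_n) in the mention dictionary.

    Phrases that are absent, or present with an empty candidate set, are skipped.
    """
    found: List[Candidate] = []
    for start in range(len(tokens)):
        for n in range(1, max_n + 1):
            end = start + n
            if end > len(tokens):
                break
            phrase = " ".join(tokens[start:end])
            entities = dictionary.get(phrase)
            if entities:
                found.append((start, end, phrase, entities))
    return found
```

For "Google CEO Eric Schmidt said", this returns the phrases Google, CEO, Eric and Eric Schmidt, leaving overlap resolution to a later step.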

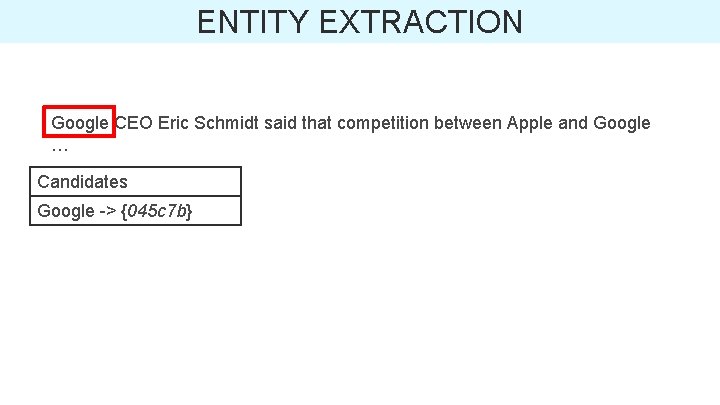

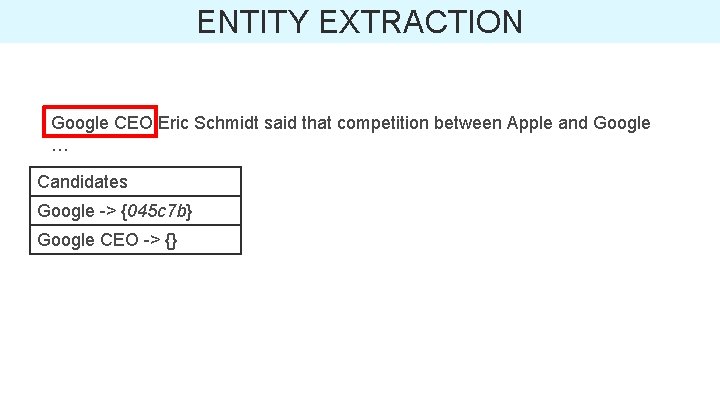

ENTITY EXTRACTION
Text: Google CEO Eric Schmidt said that competition between Apple and Google …
Candidates:
• Google -> {045c7b}
• CEO -> {0dq_5}
• Eric -> {03f078w, 0q9nx}
• Google CEO -> {}
• CEO Eric -> {}
• Eric Schmidt -> {03f078w}
• Google CEO Eric -> {}
• CEO Eric Schmidt -> {}
• Eric Schmidt said -> {}
• and so on …
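"Choose the longest phrase" can be read as a greedy left-to-right longest match over the candidates listed for this example. This is one plausible reading, not necessarily the authors' exact procedure; whitespace tokenization and the dictionary contents (taken from the candidate list) are assumptions.

```python
from typing import Dict, List, Tuple


def longest_match(
    tokens: List[str], dictionary: Dict[str, List[str]], max_n: int = 6
) -> List[Tuple[str, List[str]]]:
    """Greedy left-to-right extraction that prefers the longest dictionary phrase.

    At each position, try the longest n-gram first; on a hit, emit it and skip
    past its tokens so overlapping shorter phrases (e.g. 'Eric') are dropped.
    """
    mentions: List[Tuple[str, List[str]]] = []
    i = 0
    while i < len(tokens):
        for n in range(min(max_n, len(tokens) - i), 0, -1):
            phrase = " ".join(tokens[i : i + n])
            if dictionary.get(phrase):  # empty candidate sets count as misses
                mentions.append((phrase, dictionary[phrase]))
                i += n
                break
        else:
            i += 1  # no dictionary phrase starts at this token
    return mentions
```

On the example sentence this yields Google, CEO, Eric Schmidt and Google: "Eric Schmidt" wins over "Eric" because it is longer. A greedy scan is fast and fits the throughput budget, though it can miss a longer phrase that starts inside an earlier match.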

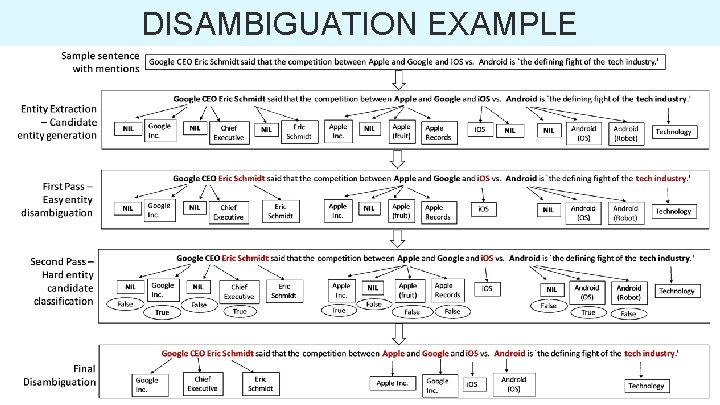

ENTITY DISAMBIGUATION
Two-pass algorithm:
1. Disambiguate and link a set of easy mentions
2. Leverage these easy entities and several features to disambiguate and link the remaining hard mentions
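The two-pass control flow can be sketched independently of the individual features. `resolve_easy` and `resolve_hard` are hypothetical callables standing in for the first-pass rules and the second-pass classifiers.

```python
from typing import Callable, List, Optional

EasyResolver = Callable[[str], Optional[str]]
HardResolver = Callable[[str, List[str]], Optional[str]]


def two_pass_disambiguate(
    mentions: List[str], resolve_easy: EasyResolver, resolve_hard: HardResolver
) -> List[Optional[str]]:
    """Return one entity id (or None) per mention, in input order."""
    # Pass 1: link mentions that can be decided without context.
    links: List[Optional[str]] = [resolve_easy(m) for m in mentions]
    easy_entities = [e for e in links if e is not None]
    # Pass 2: resolve the remaining (hard) mentions, using only the easy
    # entities from pass 1 as context.
    for i, mention in enumerate(mentions):
        if links[i] is None:
            links[i] = resolve_hard(mention, easy_entities)
    return links
```

Second-pass decisions are not fed back into the context here, so the result does not depend on the order in which hard mentions are processed.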

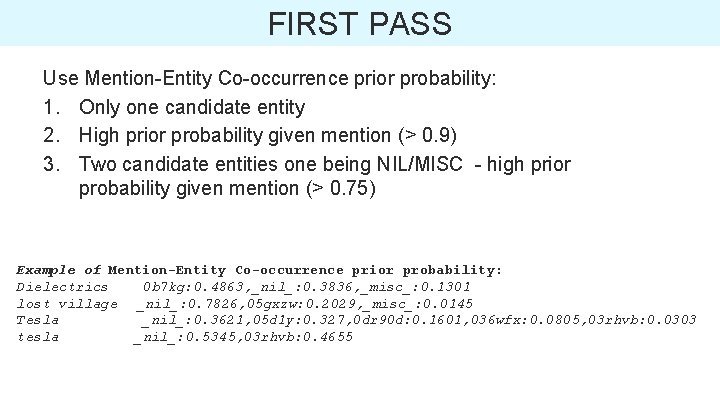

FIRST PASS
Use the Mention-Entity Co-occurrence prior probability. A mention is easy if:
1. It has only one candidate entity
2. A candidate has a high prior probability given the mention (> 0.9)
3. It has two candidate entities, one being NIL/MISC, with a high prior probability given the mention (> 0.75)
Example of Mention-Entity Co-occurrence prior probabilities:
• Dielectrics: 0b7kg: 0.4863, _nil_: 0.3836, _misc_: 0.1301
• lost village: _nil_: 0.7826, 05gxzw: 0.2029, _misc_: 0.0145
• Tesla: _nil_: 0.3621, 05d1y: 0.327, 0dr90d: 0.1601, 036wfx: 0.0805, 03rhvb: 0.0303
• tesla: _nil_: 0.5345, 03rhvb: 0.4655
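The three first-pass rules can be sketched as a check on a mention's prior distribution. Rule 3 is ambiguous on the slide; this sketch reads it as "exactly two candidates, one of them NIL or MISC, and the top candidate's prior exceeds 0.75". That reading is an assumption.

```python
from typing import Dict, Optional

NIL, MISC = "_nil_", "_misc_"


def first_pass(priors: Dict[str, float]) -> Optional[str]:
    """Link an 'easy' mention from its candidate priors, else return None.

    priors maps candidate entity id -> P(entity | mention).
    """
    if not priors:
        return None
    best = max(priors, key=lambda e: priors[e])
    if len(priors) == 1:  # rule 1: only one candidate entity
        return best
    if priors[best] > 0.9:  # rule 2: high prior given the mention
        return best
    # Rule 3 (our reading): exactly two candidates, one of them NIL/MISC,
    # and the top candidate's prior exceeds 0.75.
    if len(priors) == 2 and (NIL in priors or MISC in priors) and priors[best] > 0.75:
        return best
    return None
```

Under these rules none of the four example mentions (Dielectrics, lost village, Tesla, tesla) is easy, so all of them would be left to the second pass.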

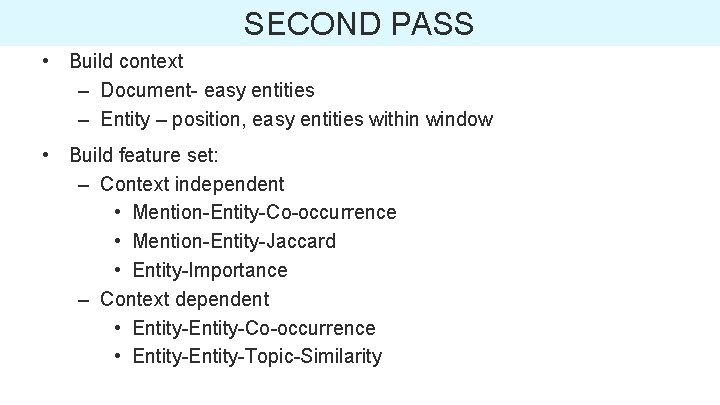

SECOND PASS
• Build context
  – Document: easy entities
  – Entity: position, easy entities within a window
• Build feature set
  – Context independent
    • Mention-Entity-Co-occurrence
    • Mention-Entity-Jaccard
    • Entity-Importance
  – Context dependent
    • Entity-Co-occurrence
    • Entity-Topic-Similarity
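The per-candidate feature vector and the entity-level context window can be sketched as follows. The field names, token-position representation, and window size are hypothetical; the slides name the features but not their encoding.

```python
from dataclasses import astuple, dataclass
from typing import List, Tuple


@dataclass
class CandidateFeatures:
    # Context-independent features
    mention_entity_cooccurrence: float
    mention_entity_jaccard: float
    entity_importance: float
    # Context-dependent features
    entity_cooccurrence: float
    entity_topic_similarity: float

    def vector(self) -> Tuple[float, ...]:
        """Flatten into the feature vector fed to the classifiers."""
        return astuple(self)


def easy_entities_in_window(
    mention_pos: int, easy: List[Tuple[int, str]], window: int
) -> List[str]:
    """Easy entities whose token position lies within `window` tokens of the mention."""
    return [entity for pos, entity in easy if abs(pos - mention_pos) <= window]
```

The document-level context would simply be the full list of easy entities, while the windowed list feeds the context-dependent features.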

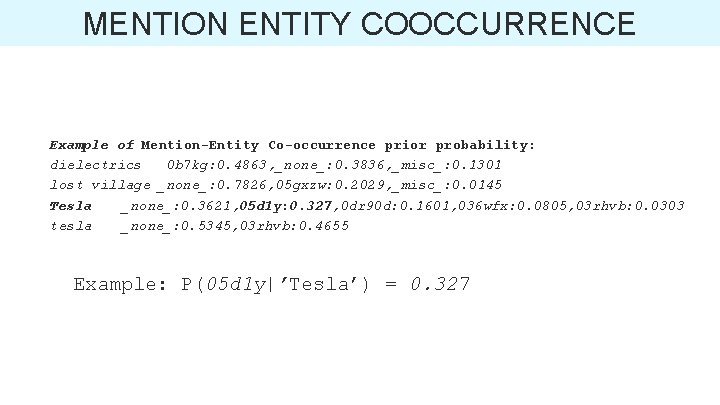

MENTION-ENTITY CO-OCCURRENCE
Example of Mention-Entity Co-occurrence prior probabilities:
• dielectrics: 0b7kg: 0.4863, _nil_: 0.3836, _misc_: 0.1301
• lost village: _nil_: 0.7826, 05gxzw: 0.2029, _misc_: 0.0145
• Tesla: _nil_: 0.3621, 05d1y: 0.327, 0dr90d: 0.1601, 036wfx: 0.0805, 03rhvb: 0.0303
• tesla: _nil_: 0.5345, 03rhvb: 0.4655
Example: P(05d1y | ‘Tesla’) = 0.327
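The slides do not say how these priors are estimated. One common approach, assumed here, is to count how often each surface form is linked to each entity in an annotated corpus such as DAWT and normalize per mention. The input pairs below are toy data.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def mention_entity_priors(
    links: Iterable[Tuple[str, str]],
) -> Dict[str, Dict[str, float]]:
    """Estimate P(entity | mention) from (mention, entity) pairs in an annotated corpus.

    Mentions are kept case-sensitive, matching the separate 'Tesla' and 'tesla' rows.
    """
    counts: Dict[str, Counter] = {}
    for mention, entity in links:
        counts.setdefault(mention, Counter())[entity] += 1
    priors: Dict[str, Dict[str, float]] = {}
    for mention, ctr in counts.items():
        total = sum(ctr.values())
        priors[mention] = {entity: n / total for entity, n in ctr.items()}
    return priors
```

Keeping case distinct lets ‘Tesla’ and ‘tesla’ carry different distributions, as in the example table.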

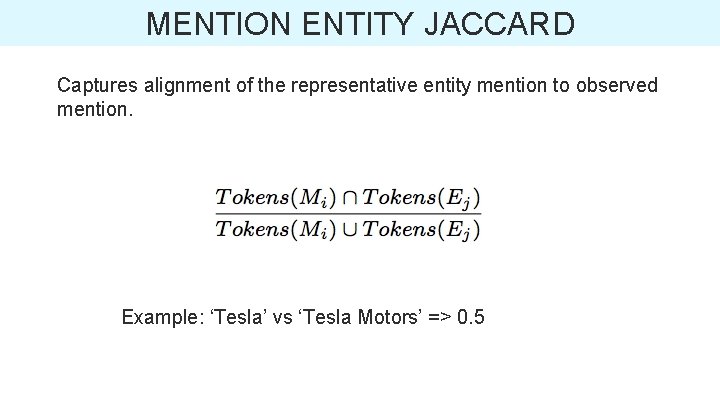

MENTION-ENTITY JACCARD
Captures how well the observed mention aligns with the entity's representative mention (Jaccard similarity of their tokens).
Example: ‘Tesla’ vs ‘Tesla Motors’ => 0.5
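A token-level Jaccard similarity reproduces the slide's example: {tesla} and {tesla, motors} share 1 of 2 distinct tokens. Lowercasing and whitespace tokenization are assumptions of this sketch.

```python
def mention_entity_jaccard(observed: str, representative: str) -> float:
    """Token-level Jaccard similarity between two mention strings (case-insensitive)."""
    a = set(observed.lower().split())
    b = set(representative.lower().split())
    union = a | b
    return len(a & b) / len(union) if union else 0.0
```

`mention_entity_jaccard("Tesla", "Tesla Motors")` returns 0.5, matching the example.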

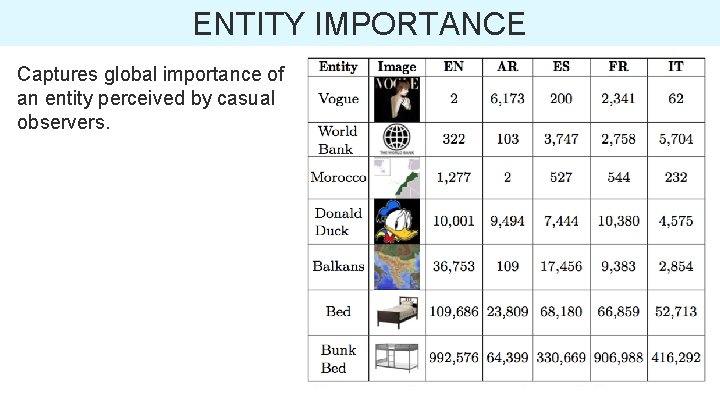

ENTITY IMPORTANCE
Captures the global importance of an entity as perceived by casual observers.

ENTITY CO-OCCURRENCE
Average co-occurrence of a candidate entity with the disambiguated easy entities in the context window.
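The averaging step can be sketched given a precomputed entity-entity co-occurrence table. The table format and its symmetric lookup are assumptions; the slides do not specify how co-occurrence scores are stored.

```python
from typing import Dict, List, Tuple


def entity_cooccurrence(
    candidate: str,
    window_entities: List[str],
    cooc: Dict[Tuple[str, str], float],
) -> float:
    """Average co-occurrence score between a candidate and the easy entities in its window."""
    if not window_entities:
        return 0.0
    total = 0.0
    for other in window_entities:
        # Treat the table as symmetric: look the pair up in either order.
        total += cooc.get((candidate, other), cooc.get((other, candidate), 0.0))
    return total / len(window_entities)
```

With a hypothetical score of 0.8 for (03f078w, 045c7b) and two easy entities in the window, the candidate 03f078w averages 0.4.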

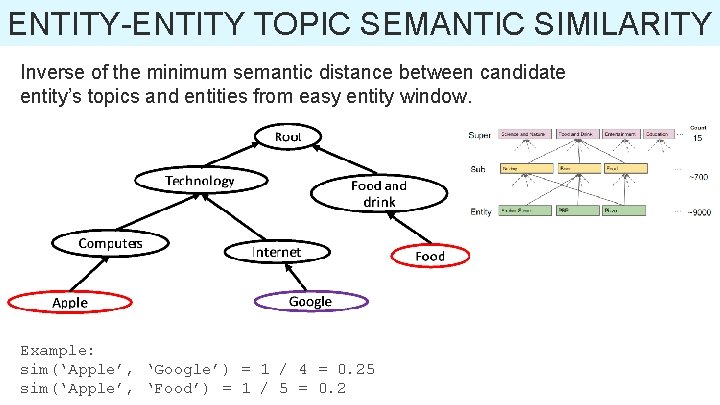

ENTITY-ENTITY TOPIC SEMANTIC SIMILARITY
Inverse of the minimum semantic distance between the candidate entity's topics and the entities in the easy-entity window.
Example:
sim(‘Apple’, ‘Google’) = 1 / 4 = 0.25
sim(‘Apple’, ‘Food’) = 1 / 5 = 0.2
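If semantic distance is taken to be hop count in a topic graph (an assumption; the slides do not define the distance), the feature is a multi-source breadth-first search from the candidate's topics to the nearest easy entity. The toy graph below is hypothetical and was chosen so that the distance is 4, mirroring the 1 / 4 arithmetic in the example.

```python
from collections import deque
from typing import Dict, List, Tuple


def undirected_graph(edges: List[Tuple[str, str]]) -> Dict[str, List[str]]:
    graph: Dict[str, List[str]] = {}
    for a, b in edges:
        graph.setdefault(a, []).append(b)
        graph.setdefault(b, []).append(a)
    return graph


def topic_similarity(
    candidate_topics: List[str], easy_entities: List[str], graph: Dict[str, List[str]]
) -> float:
    """1 / (minimum hop distance from any candidate topic to any easy entity).

    Multi-source BFS; returns 0.0 if nothing is reachable and 1.0 on an exact match.
    """
    targets = set(easy_entities)
    queue = deque((topic, 0) for topic in candidate_topics)
    seen = set(candidate_topics)
    while queue:
        node, dist = queue.popleft()
        if node in targets:
            return 1.0 if dist == 0 else 1.0 / dist
        for neighbour in graph.get(node, []):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, dist + 1))
    return 0.0


# Hypothetical toy topic graph: Consumer Electronics is 4 hops from Google.
TOY = undirected_graph([
    ("Consumer Electronics", "Technology"),
    ("Technology", "Internet"),
    ("Internet", "Web Search"),
    ("Web Search", "Google"),
])
```

`topic_similarity(["Consumer Electronics"], ["Google"], TOY)` returns 0.25. Because BFS visits nodes in order of distance, the first easy entity reached gives the minimum distance.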

DISAMBIGUATION
• Use an ensemble of two classifiers:
  – A Decision Tree classifier labels each candidate's feature vector as ‘True’ or ‘False’
  – Final scores are generated using weights learned by a Logistic Regression classifier
• Final disambiguation:
  – Only one candidate entity labeled ‘True’: link it
  – Multiple candidate entities labeled ‘True’: the highest-scoring one wins
  – All candidate entities labeled ‘False’: use the highest-scoring one only if its score margin over the next one is large
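The final decision rules can be sketched on top of a per-candidate (decision-tree label, logistic-regression score) pair. The margin value is hypothetical, since the slides only say "large score margin". Scoring a lone all-‘False’ candidate against a runner-up score of 0.0 is also an assumption.

```python
import math
from typing import List, Optional, Sequence, Tuple

MARGIN = 0.2  # hypothetical threshold; the slides only say "large score margin"


def lr_score(features: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """Logistic-regression probability for one candidate's feature vector."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)  # numerically stable form for negative z
    return ez / (1.0 + ez)


def final_decision(
    candidates: List[Tuple[str, bool, float]], margin: float = MARGIN
) -> Optional[str]:
    """candidates: (entity id, decision-tree label, logistic-regression score)."""
    if not candidates:
        return None
    accepted = [c for c in candidates if c[1]]
    if accepted:
        # One 'True' candidate wins outright; several 'True' -> highest score wins.
        return max(accepted, key=lambda c: c[2])[0]
    # All 'False': keep the top candidate only if it clearly beats the runner-up.
    ranked = sorted(candidates, key=lambda c: c[2], reverse=True)
    runner_up = ranked[1][2] if len(ranked) > 1 else 0.0
    return ranked[0][0] if ranked[0][2] - runner_up >= margin else None
```

A ‘True’ label from the tree always outranks a ‘False’ one regardless of score, so the logistic-regression score mainly breaks ties and gates the all-‘False’ case.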

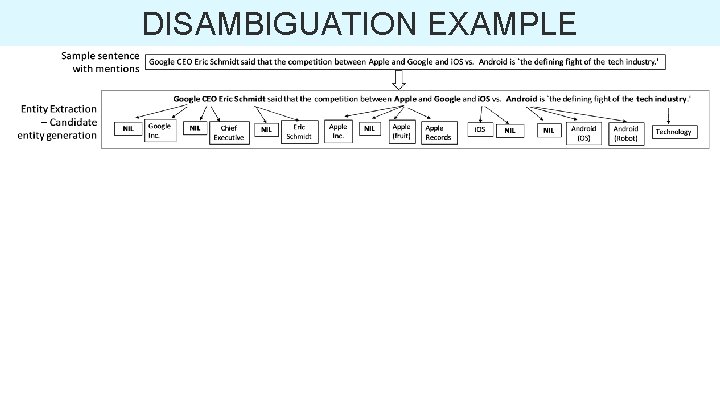

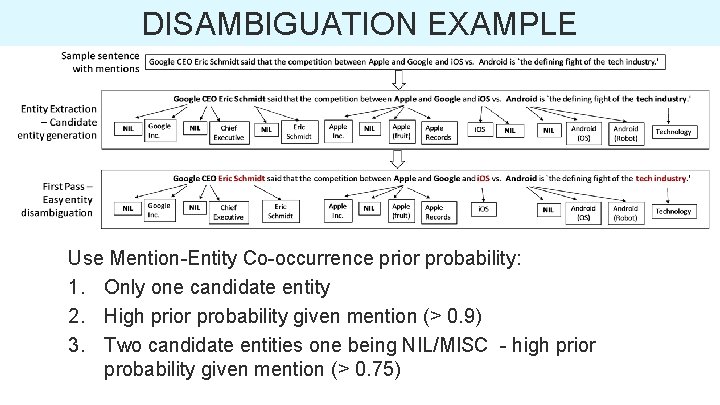

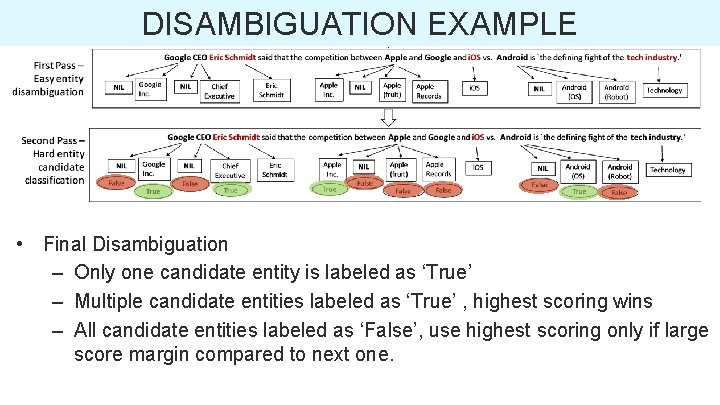

DISAMBIGUATION EXAMPLE

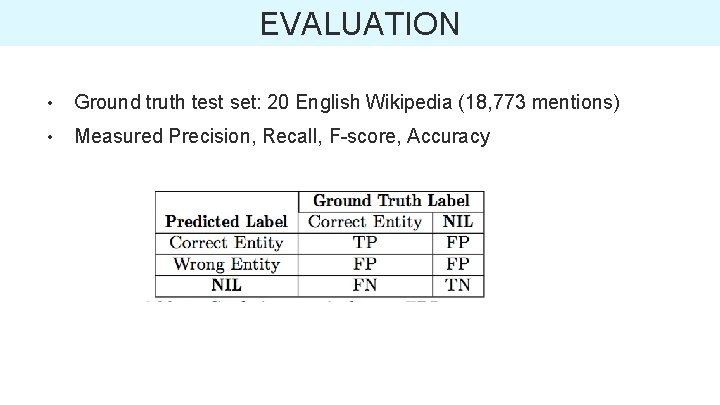

EVALUATION
• Ground-truth test set: 20 English Wikipedia articles (18,773 mentions)
• Measured precision, recall, F-score, and accuracy
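The slides do not define the metrics precisely, so here is a common mention-level formulation (an assumption): a linked mention is correct if it matches the gold entity, precision is computed over linked mentions, and recall over all gold mentions. Accuracy is omitted because its exact definition is not given.

```python
from typing import Dict, Hashable, Optional, Tuple


def precision_recall_f1(
    gold: Dict[Hashable, str], predicted: Dict[Hashable, Optional[str]]
) -> Tuple[float, float, float]:
    """Mention-level precision, recall and F1 against gold entity links."""
    linked = {m: e for m, e in predicted.items() if e is not None}
    correct = sum(1 for m, e in linked.items() if gold.get(m) == e)
    precision = correct / len(linked) if linked else 0.0
    recall = correct / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1
```

With 4 gold mentions, 3 linked predictions, and 2 of them correct, this gives P = 2/3, R = 1/2 and F1 = 4/7.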

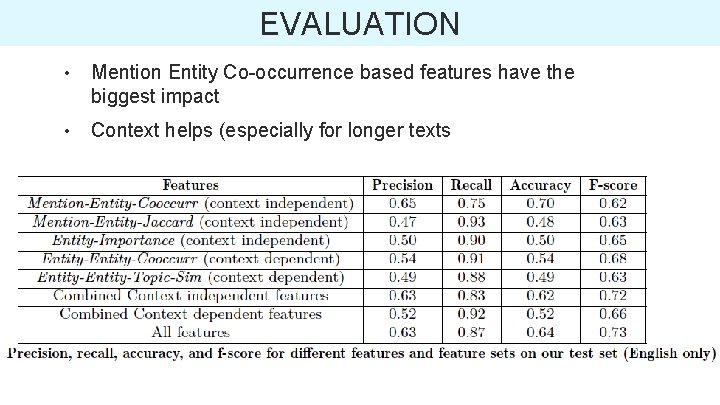

EVALUATION
• Mention-Entity Co-occurrence based features have the biggest impact
• Context helps (especially for longer texts)

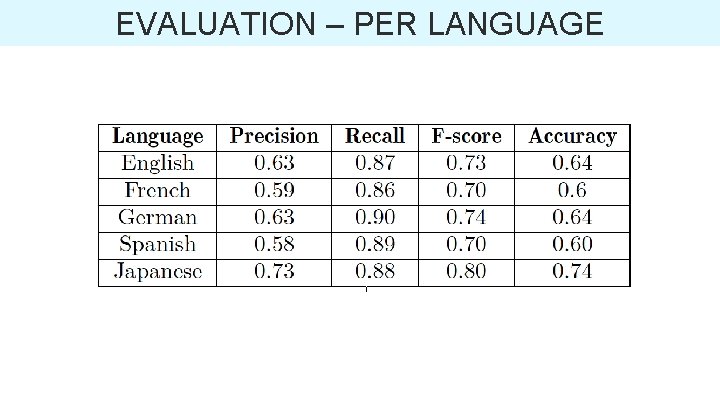

EVALUATION – PER LANGUAGE

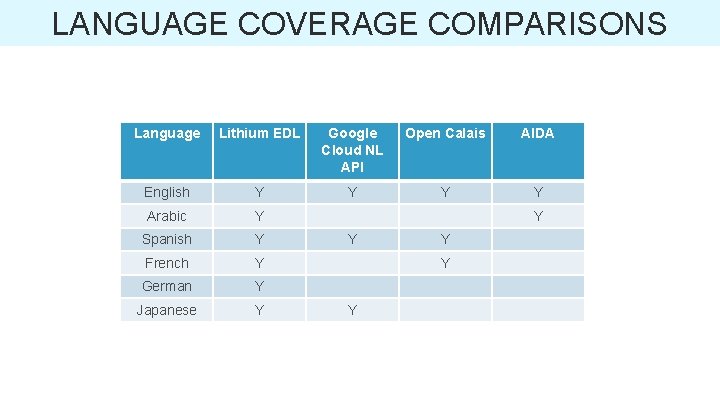

LANGUAGE COVERAGE COMPARISONS Language Lithium EDL Google Cloud NL API Open Calais AIDA English Y Y Arabic Y Spanish Y French Y German Y Japanese Y Y Y

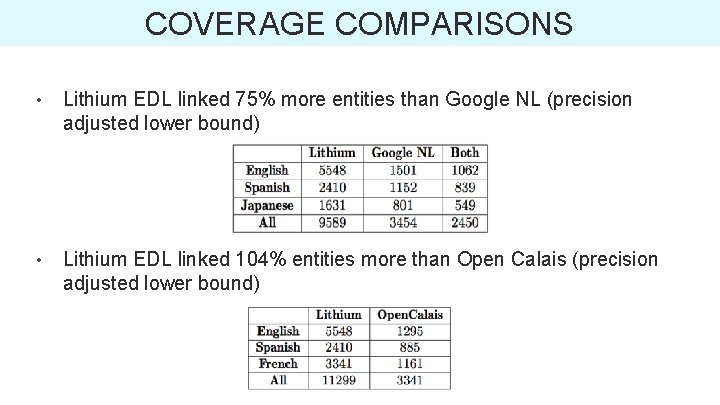

COVERAGE COMPARISONS
• Lithium EDL linked 75% more entities than Google NL (precision-adjusted lower bound)
• Lithium EDL linked 104% more entities than Open Calais (precision-adjusted lower bound)

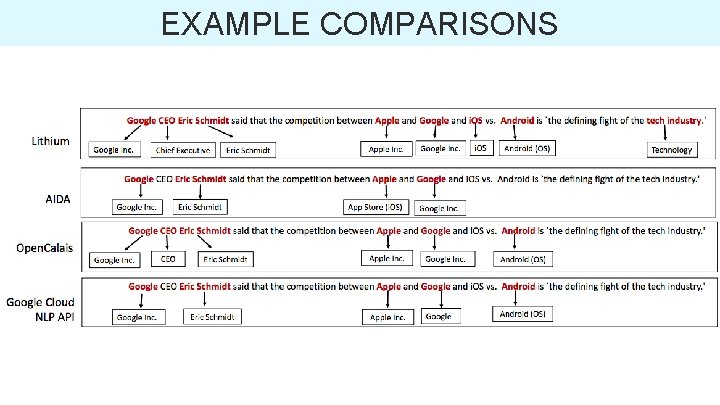

EXAMPLE COMPARISONS

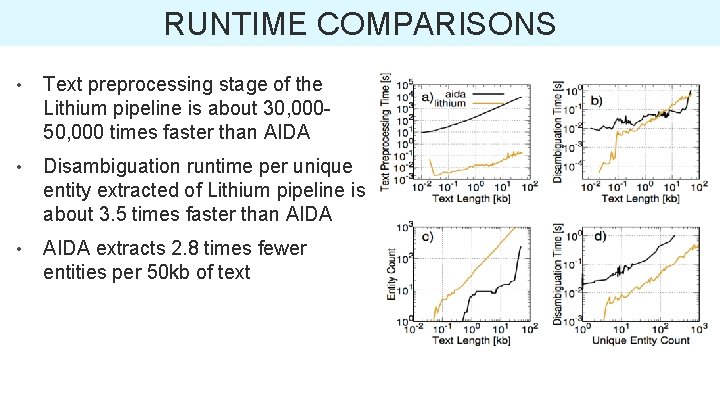

RUNTIME COMPARISONS
• The text preprocessing stage of the Lithium pipeline is about 30,000 to 50,000 times faster than AIDA
• Per unique extracted entity, the Lithium pipeline's disambiguation runtime is about 3.5 times faster than AIDA's
• AIDA extracts 2.8 times fewer entities per 50 kB of text

CONCLUSION
• Presented an EDL algorithm that uses several context-dependent and context-independent features
• The Lithium EDL system recognizes several types of entities (professional titles, sports, activities, etc.) in addition to named entities (people, places, organizations, etc.)
  – 75% more entities than state-of-the-art systems
• The EDL algorithm is language-agnostic and currently supports 6 languages
  – applicable to real-world data
• High throughput and lightweight
  – 3.5 times faster than state-of-the-art systems such as AIDA

Questions? E-mail: team-relevance@klout.com