High Performance Parallel Programming Dirk van der Knijff

- Slides: 38

Dirk van der Knijff
Advanced Research Computing, Information Division

Lecture 2: Message Passing Interface (MPI) part 1

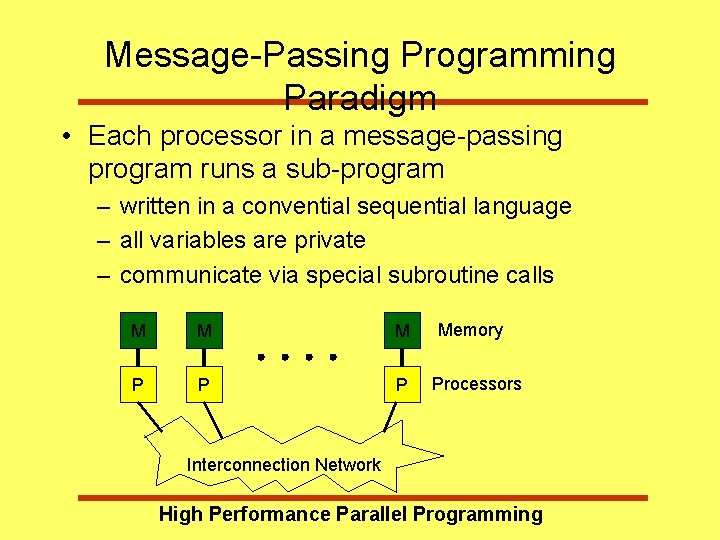

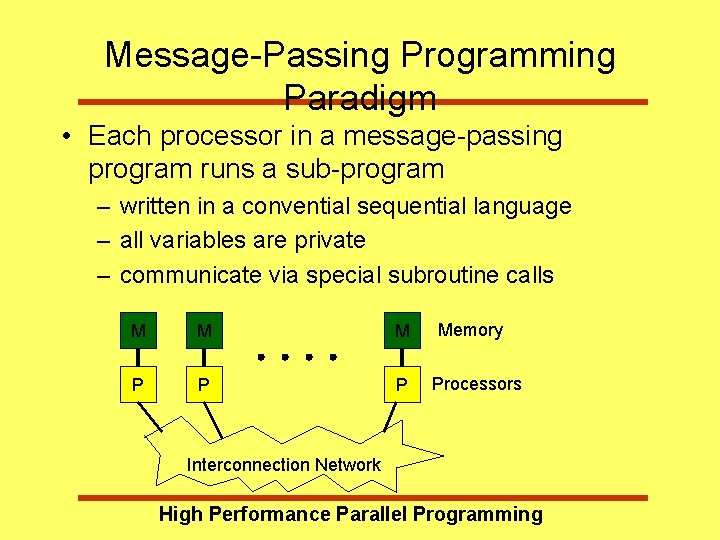

Message-Passing Programming Paradigm
• Each processor in a message-passing program runs a sub-program:
  – written in a conventional sequential language
  – all variables are private
  – communicates via special subroutine calls
[Figure: processors (P), each with its own memory (M), connected by an interconnection network]

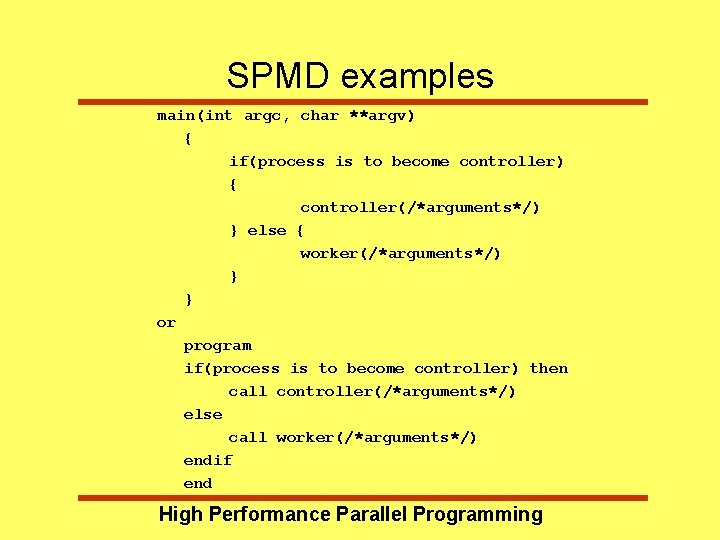

Single Program Multiple Data
• Introduced in data parallel programming (HPF)
• Same program runs everywhere
• Restriction on the general message-passing model
• Some vendors only support SPMD parallel programs
• Usual way of writing MPI programs
• General message-passing model can be emulated

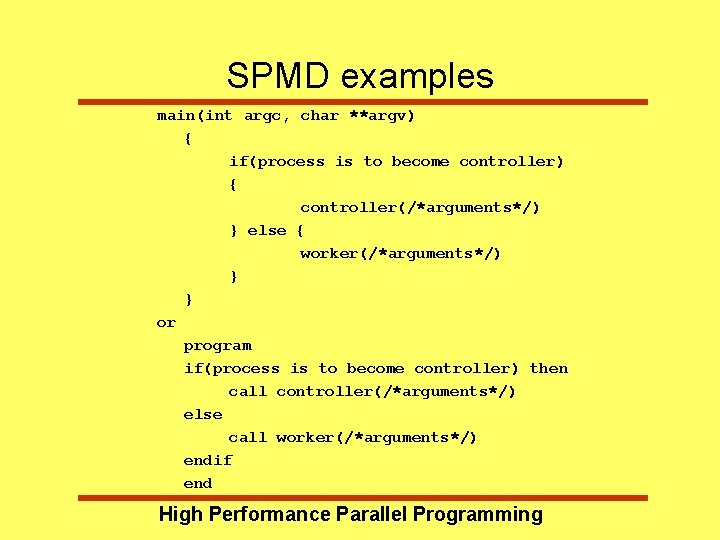

SPMD examples

C:

    main(int argc, char **argv)
    {
        if (process is to become controller) {
            controller(/* arguments */);
        } else {
            worker(/* arguments */);
        }
    }

or Fortran:

    program
      if (process is to become controller) then
        call controller(/* arguments */)
      else
        call worker(/* arguments */)
      endif
    end

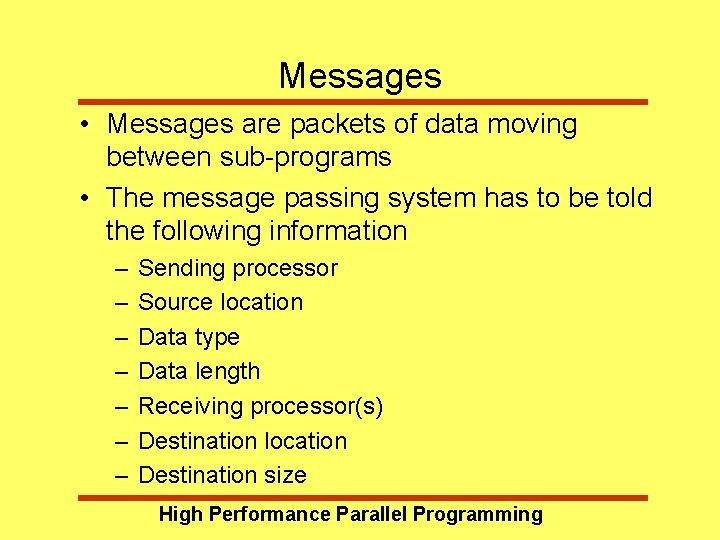

Messages
• Messages are packets of data moving between sub-programs
• The message passing system has to be told the following information:
  – Sending processor
  – Source location
  – Data type
  – Data length
  – Receiving processor(s)
  – Destination location
  – Destination size

Messages
• Access:
  – Each sub-program needs to be connected to a message passing system
• Addressing:
  – Messages need to have addresses to be sent to
• Reception:
  – It is important that the receiving process is capable of dealing with the messages it is sent
• A message passing system is similar to:
  – Post-office, phone line, fax, e-mail, etc.

Point-to-Point Communication
• Simplest form of message passing
• One process sends a message to another
• Several variations on how sending a message can interact with execution of the sub-program

Point-to-Point variations
• Synchronous sends
  – provide information about the completion of the message
  – e.g. fax machines
• Asynchronous sends
  – only know when the message has left
  – e.g. post cards
• Blocking operations
  – only return from the call when the operation has completed
• Non-blocking operations
  – return straight away; can test/wait later for completion

Collective Communications
• Collective communication routines are higher level routines involving several processes at a time
• Can be built out of point-to-point communications
• Barriers
  – synchronise processes
• Broadcast
  – one-to-many communication
• Reduction operations
  – combine data from several processes to produce a single result

Message Passing Systems
• Initially each manufacturer developed their own
• Wide range of features, often incompatible
• Several groups developed systems for workstations
• PVM (Parallel Virtual Machine)
  – de facto standard before MPI
  – Open Source (NOT public domain!)
  – User interface to the system (daemons)
  – Support for dynamic environments

MPI Forum
• Sixty people from forty different organisations
• Both users and vendors, from the US and Europe
• Two-year process of proposals, meetings and review
• Produced a document defining a standard Message Passing Interface (MPI)
  – to provide source-code portability
  – to allow efficient implementation
  – to provide a high level of functionality
  – to support heterogeneous parallel architectures

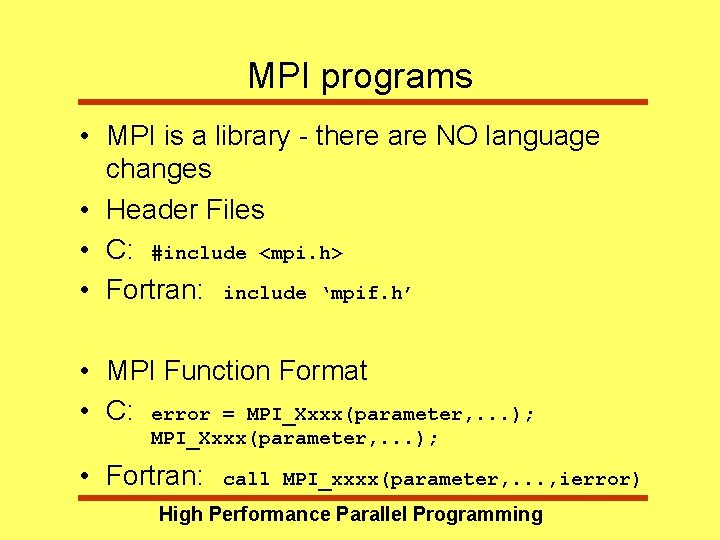

MPI programs
• MPI is a library: there are NO language changes
• Header files
  – C: #include <mpi.h>
  – Fortran: include 'mpif.h'
• MPI function format
  – C: error = MPI_Xxxx(parameter, ...);
  – Fortran: call MPI_xxxx(parameter, ..., ierror)

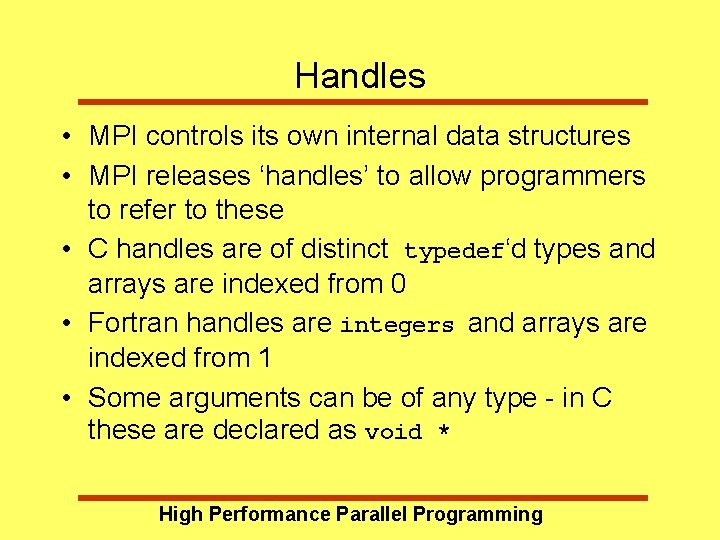

Handles
• MPI controls its own internal data structures
• MPI releases 'handles' to allow programmers to refer to these
• C handles are of distinct typedef'd types, and arrays are indexed from 0
• Fortran handles are integers, and arrays are indexed from 1
• Some arguments can be of any type; in C these are declared as void *

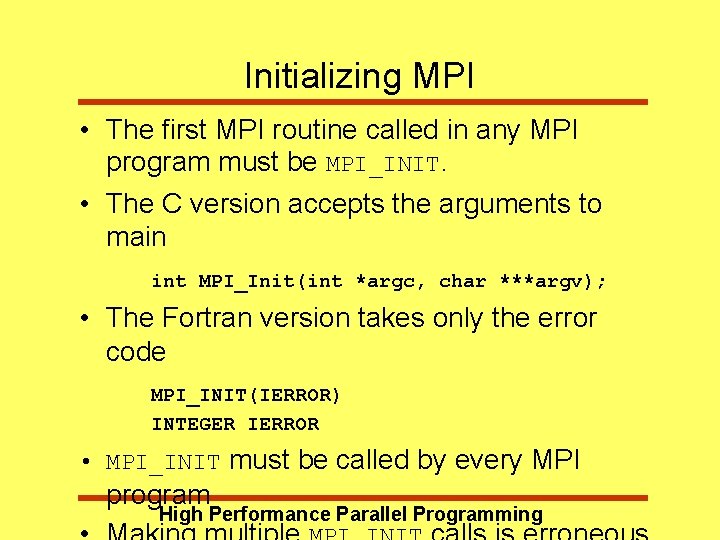

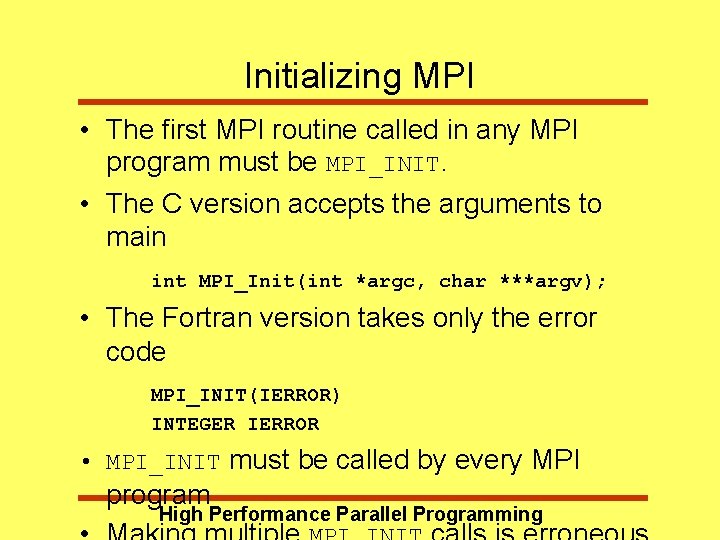

Initializing MPI
• The first MPI routine called in any MPI program must be MPI_INIT
• The C version accepts the arguments to main: int MPI_Init(int *argc, char ***argv);
• The Fortran version takes only the error code: MPI_INIT(IERROR) with INTEGER IERROR
• MPI_INIT must be called by every MPI program

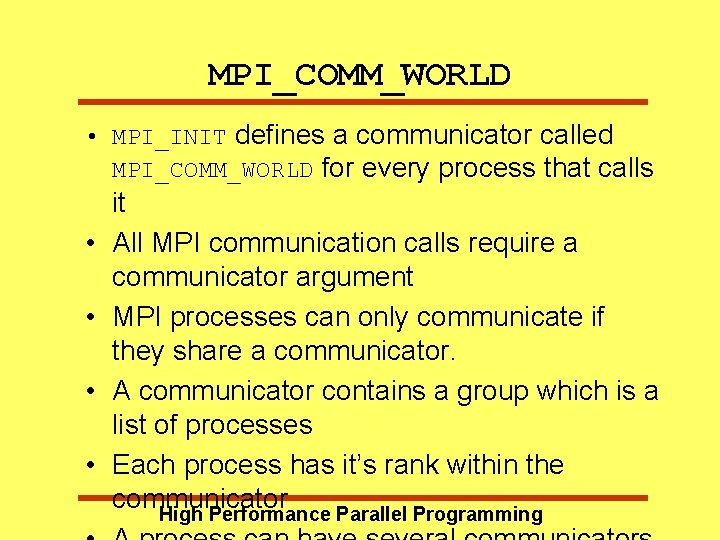

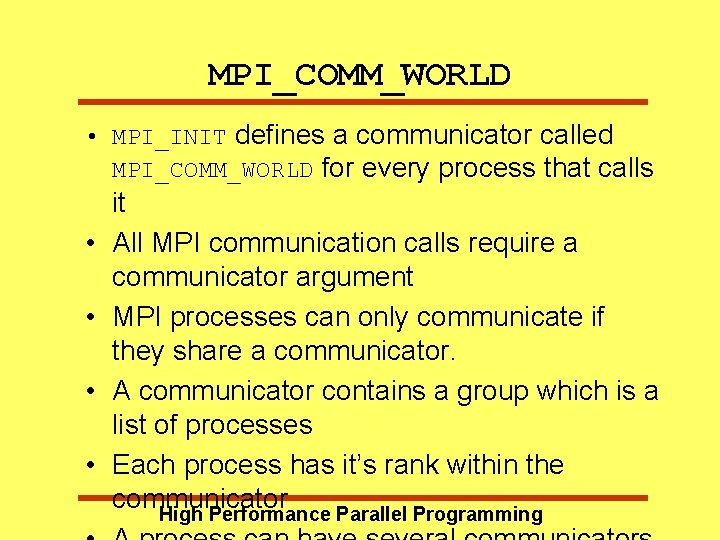

MPI_COMM_WORLD
• MPI_INIT defines a communicator called MPI_COMM_WORLD for every process that calls it
• All MPI communication calls require a communicator argument
• MPI processes can only communicate if they share a communicator
• A communicator contains a group, which is a list of processes
• Each process has its rank within the communicator

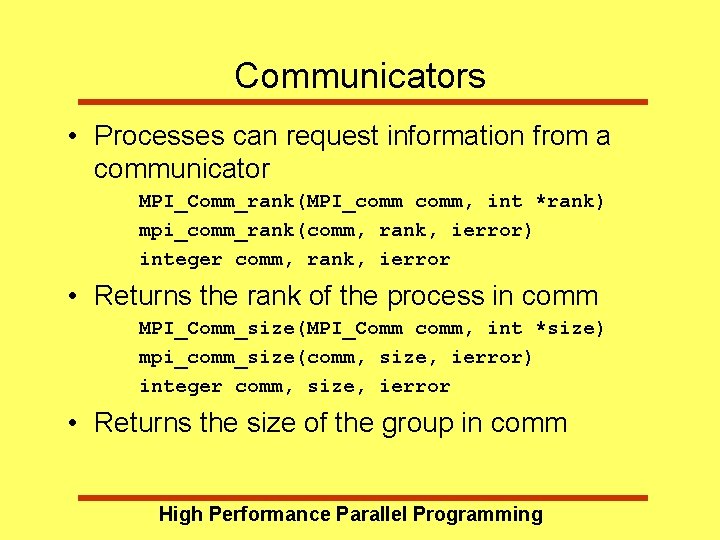

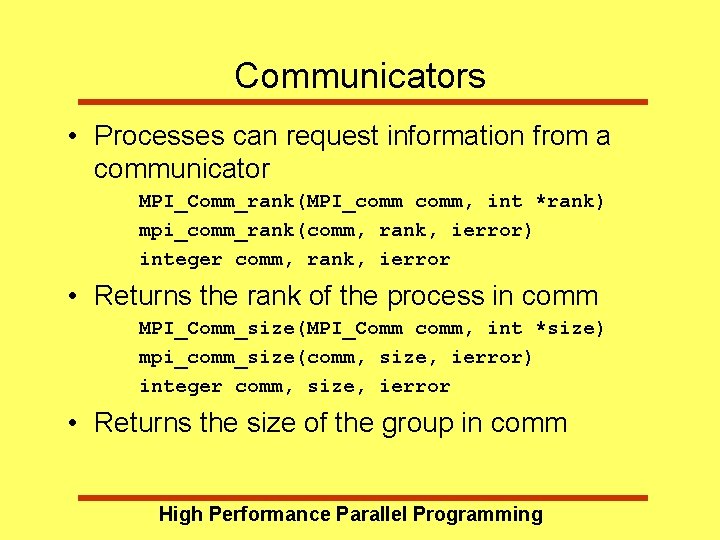

Communicators
• Processes can request information from a communicator
• Rank of the calling process in comm:
  – C: int MPI_Comm_rank(MPI_Comm comm, int *rank)
  – Fortran: mpi_comm_rank(comm, rank, ierror) with integer comm, rank, ierror
• Size of the group in comm:
  – C: int MPI_Comm_size(MPI_Comm comm, int *size)
  – Fortran: mpi_comm_size(comm, size, ierror) with integer comm, size, ierror

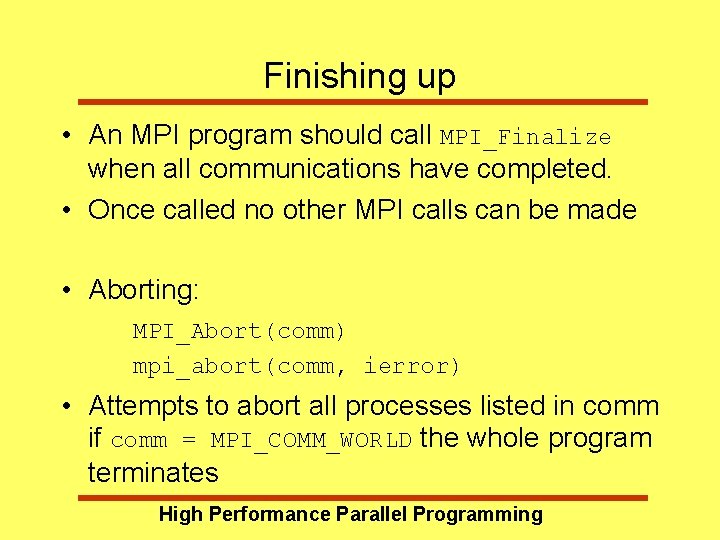

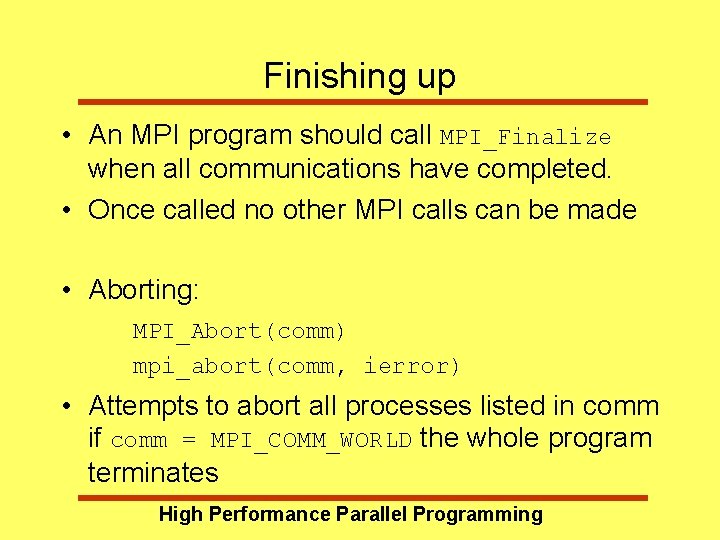

Finishing up
• An MPI program should call MPI_Finalize when all communications have completed
• Once it has been called, no other MPI calls can be made
• Aborting:
  – C: int MPI_Abort(MPI_Comm comm, int errorcode)
  – Fortran: mpi_abort(comm, errorcode, ierror)
• Attempts to abort all processes listed in comm; if comm = MPI_COMM_WORLD, the whole program terminates

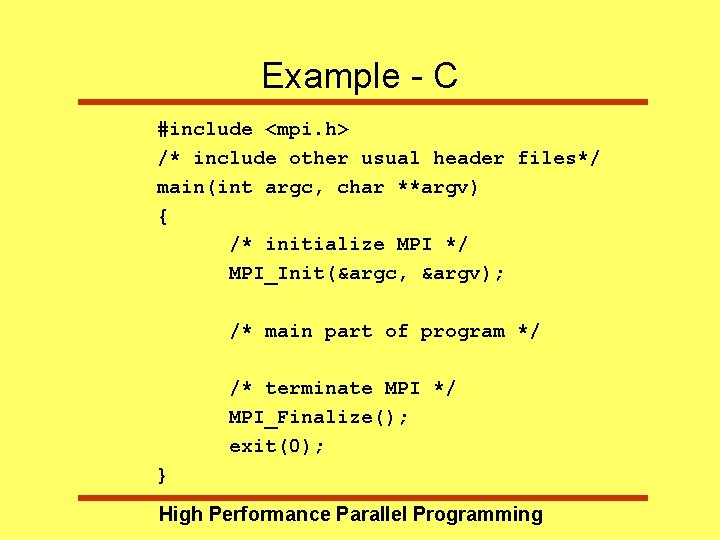

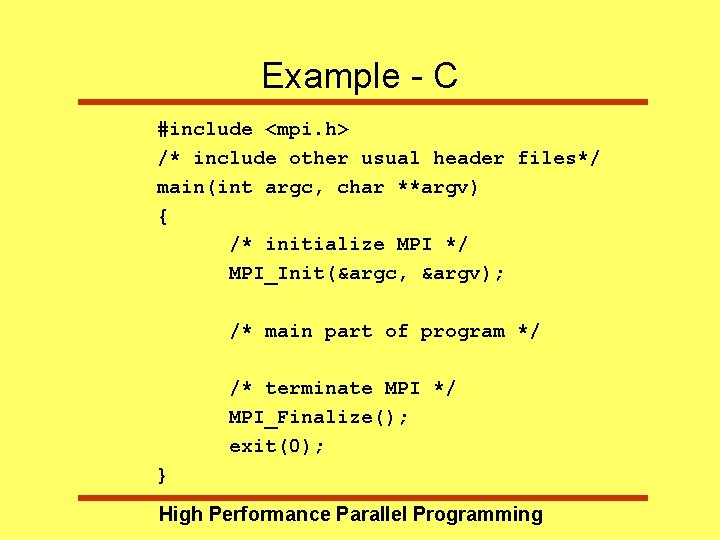

Example - C

    #include <mpi.h>
    /* include other usual header files */

    int main(int argc, char **argv)
    {
        /* initialize MPI */
        MPI_Init(&argc, &argv);

        /* main part of program */

        /* terminate MPI */
        MPI_Finalize();
        return 0;
    }

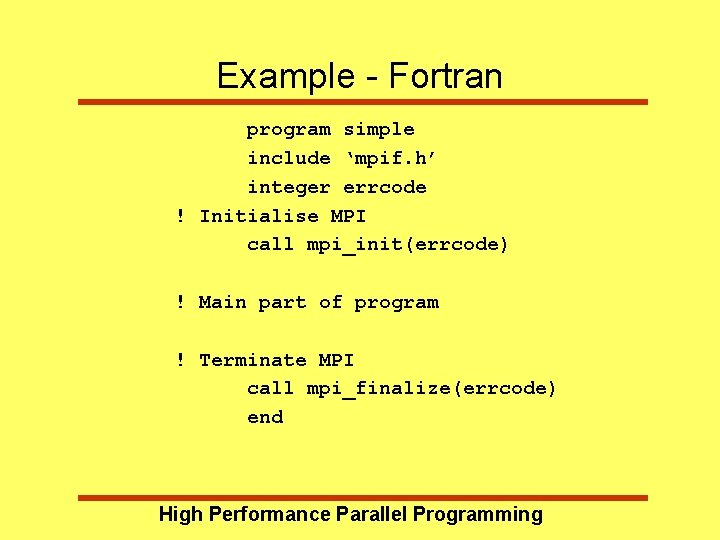

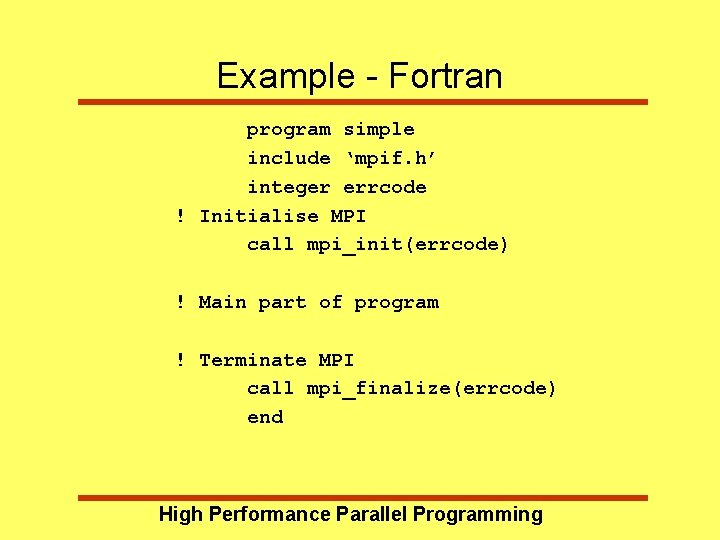

Example - Fortran

    program simple
    include 'mpif.h'
    integer errcode
    ! Initialise MPI
    call mpi_init(errcode)
    ! Main part of program
    ! Terminate MPI
    call mpi_finalize(errcode)
    end

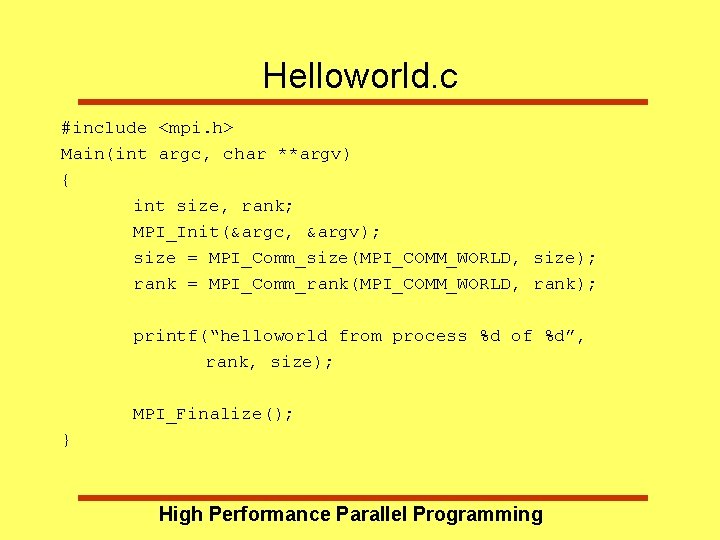

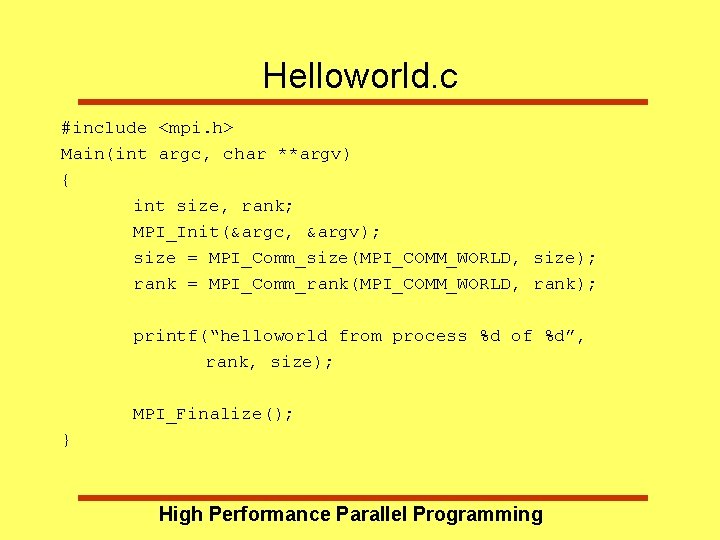

Helloworld.c

    #include <stdio.h>
    #include <mpi.h>

    int main(int argc, char **argv)
    {
        int size, rank;

        MPI_Init(&argc, &argv);
        /* Results come back through the pointer arguments;
           the return value is an error code. */
        MPI_Comm_size(MPI_COMM_WORLD, &size);
        MPI_Comm_rank(MPI_COMM_WORLD, &rank);
        printf("helloworld from process %d of %d\n", rank, size);
        MPI_Finalize();
        return 0;
    }

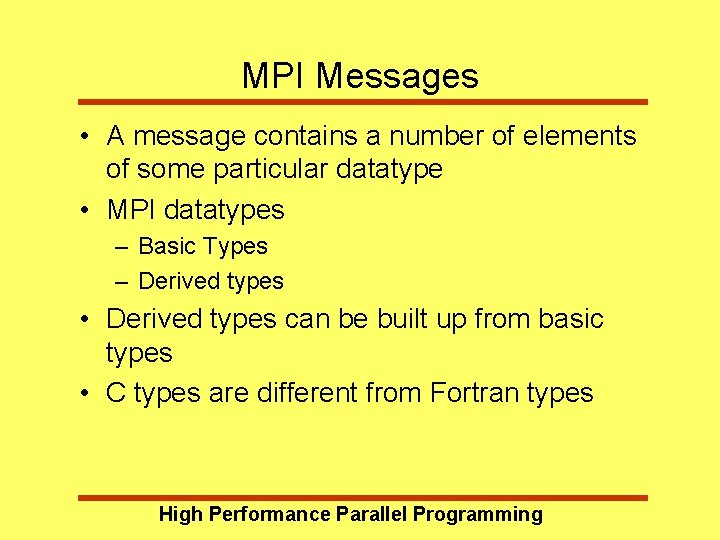

MPI Messages
• A message contains a number of elements of some particular datatype
• MPI datatypes
  – Basic types
  – Derived types
• Derived types can be built up from basic types
• C types are different from Fortran types

MPI Basic Datatypes - C

MPI Basic Datatypes - Fortran

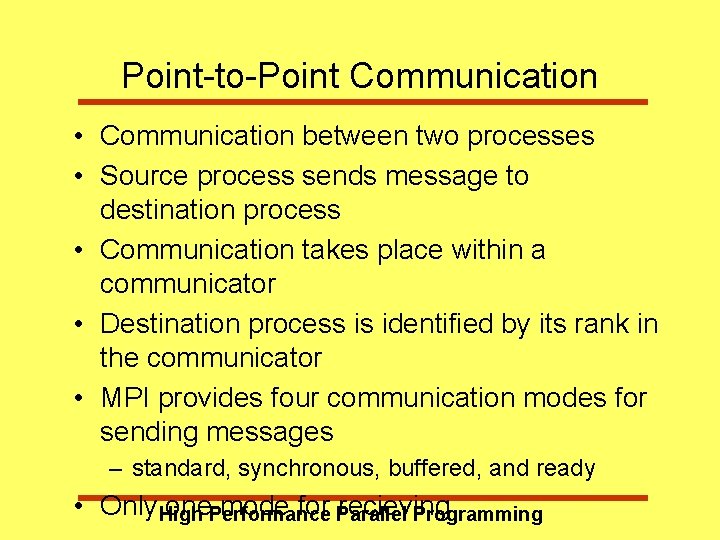

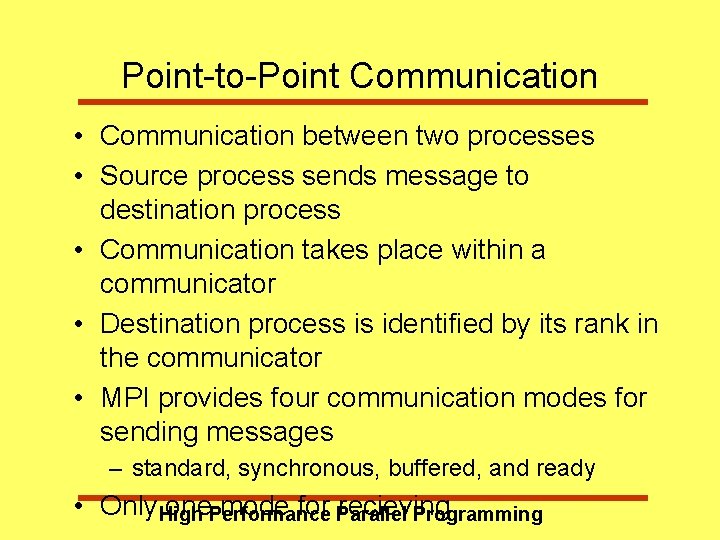

Point-to-Point Communication
• Communication between two processes
• Source process sends a message to the destination process
• Communication takes place within a communicator
• Destination process is identified by its rank in the communicator
• MPI provides four communication modes for sending messages
  – standard, synchronous, buffered, and ready
• Only one mode for receiving

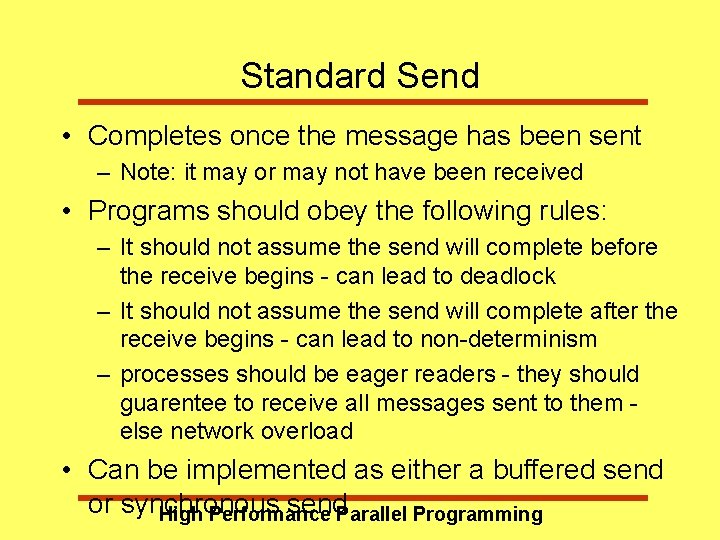

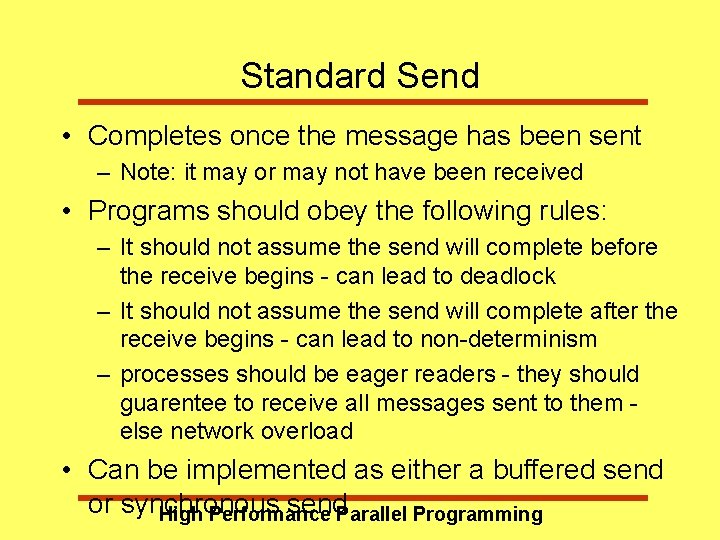

Standard Send
• Completes once the message has been sent
  – Note: it may or may not have been received
• Programs should obey the following rules:
  – They should not assume the send will complete before the receive begins; this can lead to deadlock
  – They should not assume the send will complete after the receive begins; this can lead to non-determinism
  – Processes should be eager readers: they should guarantee to receive all messages sent to them, otherwise the network can be overloaded
• Can be implemented as either a buffered send or a synchronous send

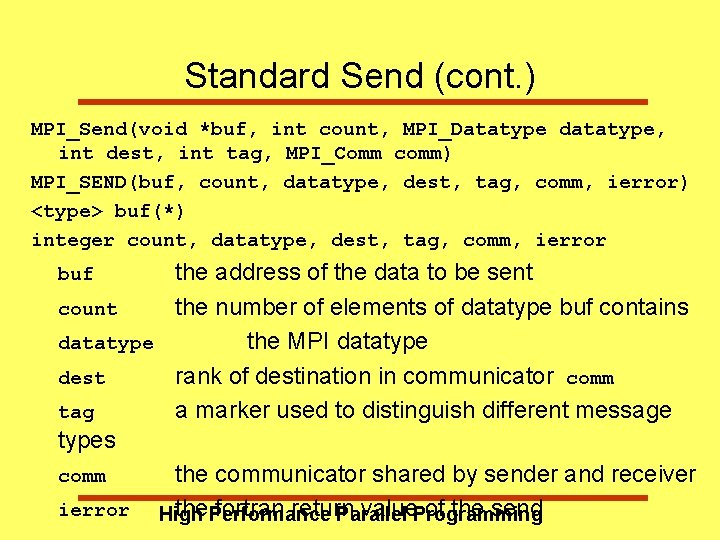

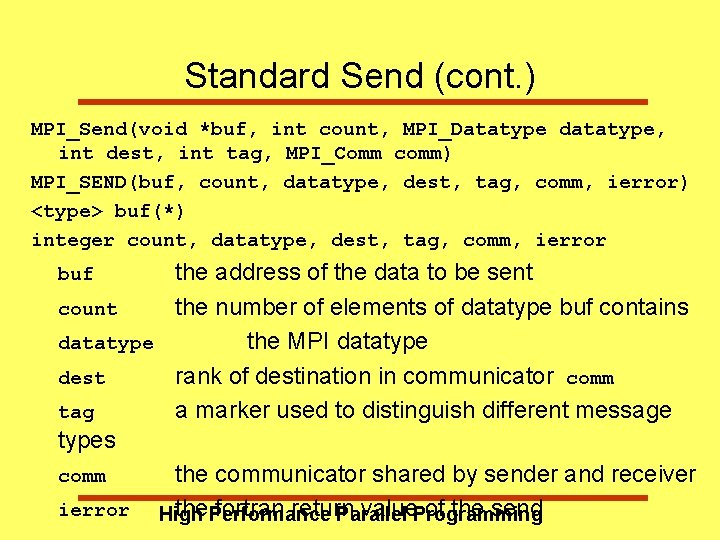

Standard Send (cont.)
• C: int MPI_Send(void *buf, int count, MPI_Datatype datatype, int dest, int tag, MPI_Comm comm)
• Fortran: MPI_SEND(buf, count, datatype, dest, tag, comm, ierror) with <type> buf(*) and integer count, datatype, dest, tag, comm, ierror
  – buf: the address of the data to be sent
  – count: the number of elements of datatype that buf contains
  – datatype: the MPI datatype
  – dest: rank of the destination in communicator comm
  – tag: a marker used to distinguish different message types
  – comm: the communicator shared by sender and receiver
  – ierror: the Fortran return value of the send

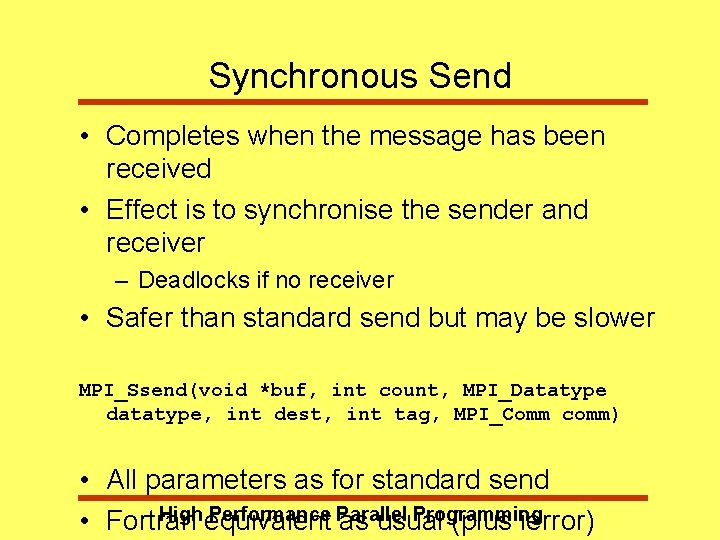

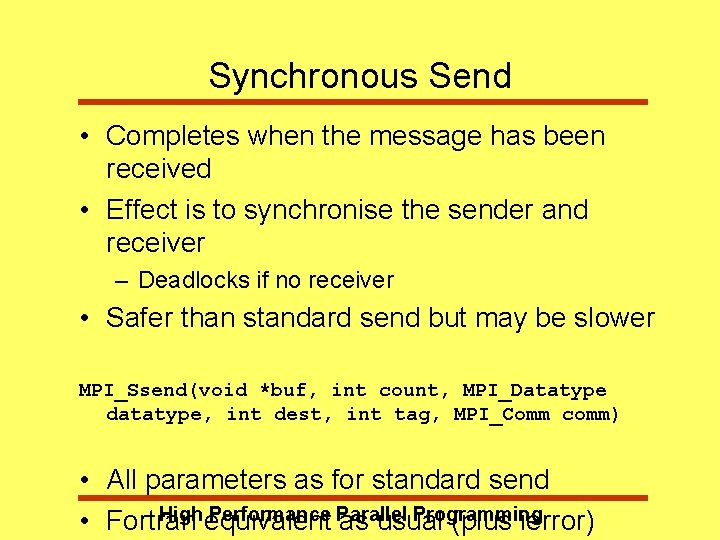

Synchronous Send
• Completes when the message has been received
• Effect is to synchronise the sender and receiver
  – Deadlocks if there is no matching receive
• Safer than standard send but may be slower
• C: int MPI_Ssend(void *buf, int count, MPI_Datatype datatype, int dest, int tag, MPI_Comm comm)
• All parameters as for standard send
• Fortran equivalent as usual (plus ierror)

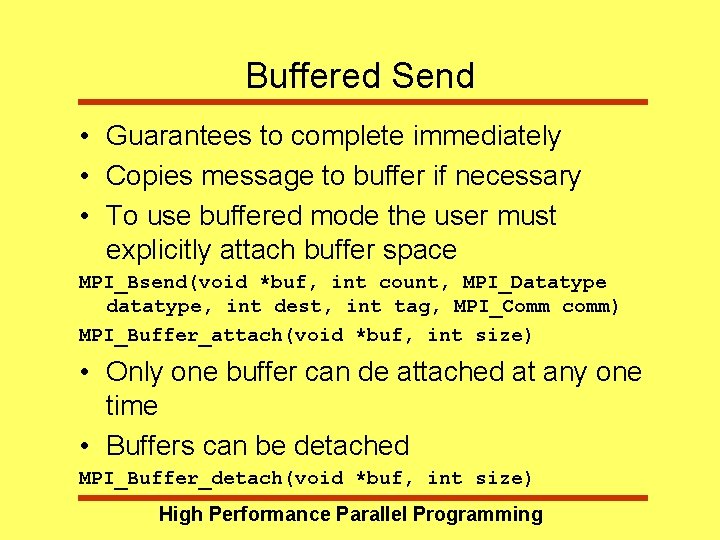

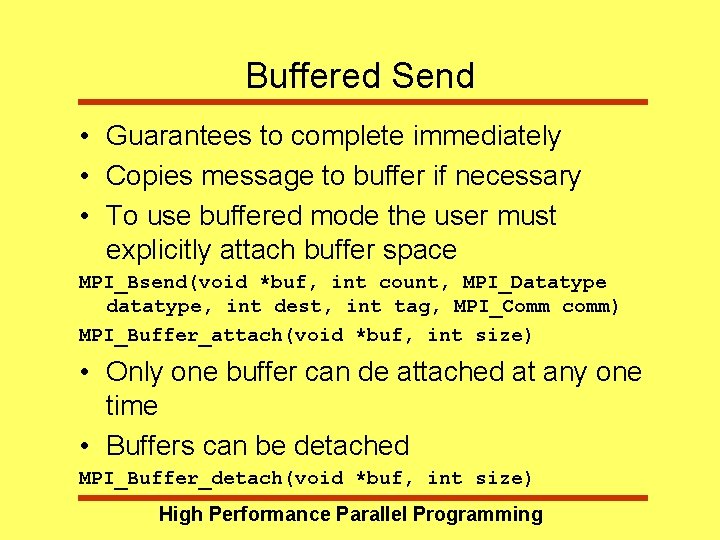

Buffered Send
• Guarantees to complete immediately
• Copies the message to a buffer if necessary
• To use buffered mode the user must explicitly attach buffer space:
  – int MPI_Bsend(void *buf, int count, MPI_Datatype datatype, int dest, int tag, MPI_Comm comm)
  – int MPI_Buffer_attach(void *buf, int size)
• Only one buffer can be attached at any one time
• Buffers can be detached; buf receives the address of the detached buffer and size its size:
  – int MPI_Buffer_detach(void *buf, int *size)

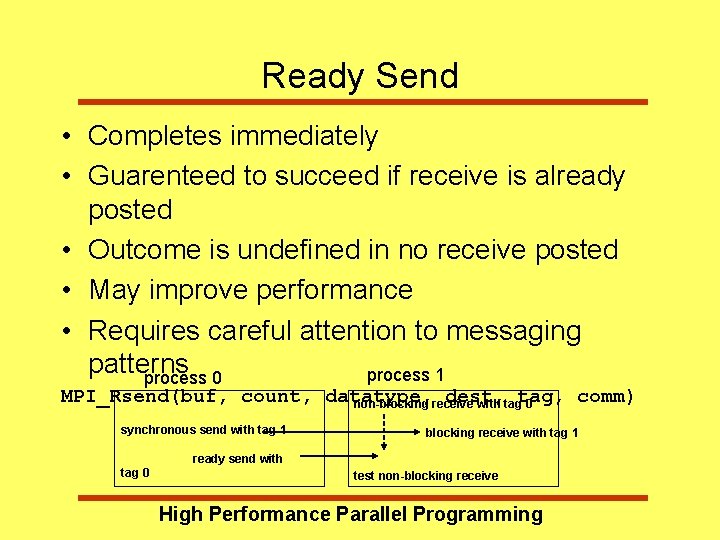

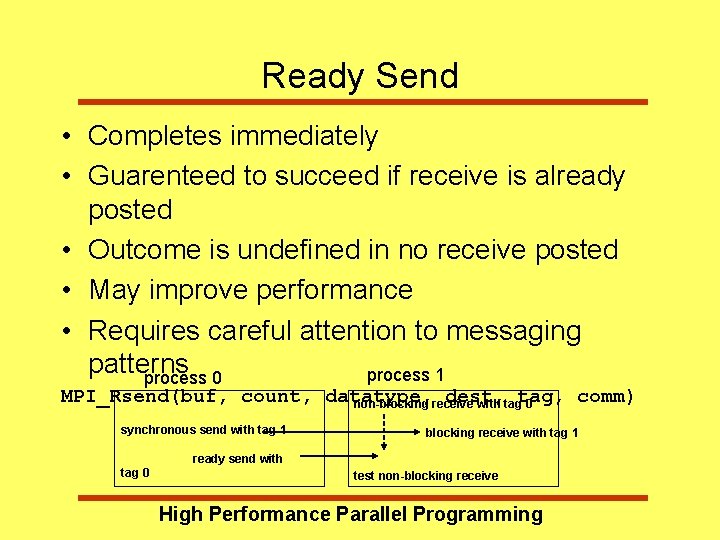

Ready Send
• Completes immediately
• Guaranteed to succeed if the matching receive is already posted
• Outcome is undefined if no receive is posted
• May improve performance
• Requires careful attention to messaging patterns
• MPI_Rsend(buf, count, datatype, dest, tag, comm)
[Figure: process 0 posts a non-blocking receive with tag 0, then a synchronous send with tag 1; process 1 makes a blocking receive with tag 1, then a ready send with tag 0; process 0 then tests the non-blocking receive]

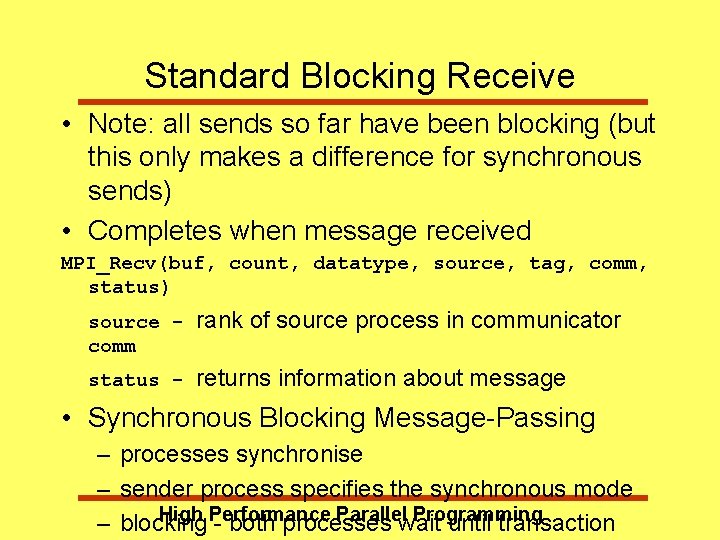

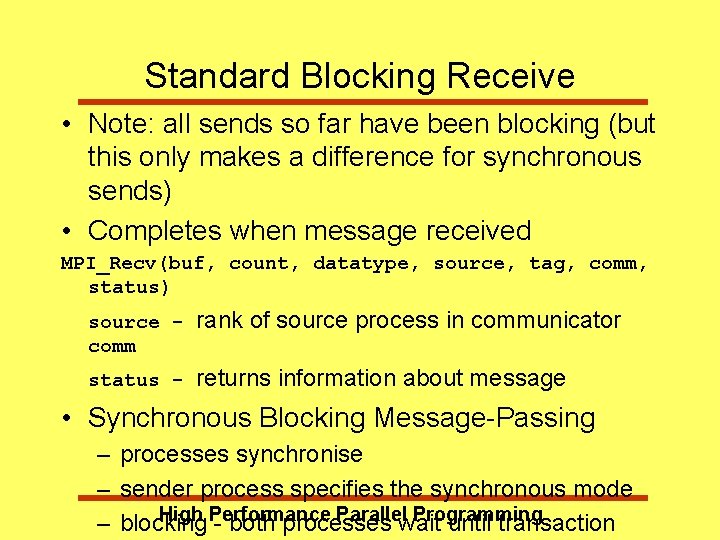

Standard Blocking Receive
• Note: all sends so far have been blocking (but this only makes a difference for synchronous sends)
• Completes when a message has been received
• MPI_Recv(buf, count, datatype, source, tag, comm, status)
  – C: int MPI_Recv(void *buf, int count, MPI_Datatype datatype, int source, int tag, MPI_Comm comm, MPI_Status *status)
  – source: rank of the source process in communicator comm
  – status: returns information about the message
• Synchronous blocking message-passing
  – processes synchronise
  – sender process specifies the synchronous mode
  – blocking: both processes wait until the transaction has completed

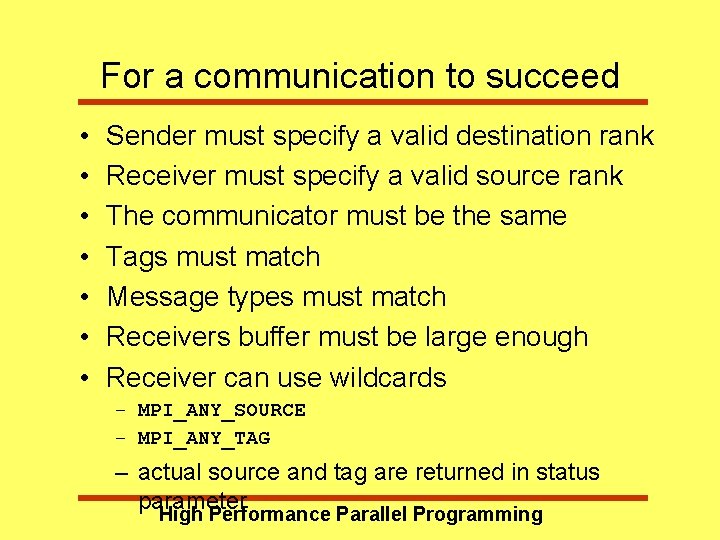

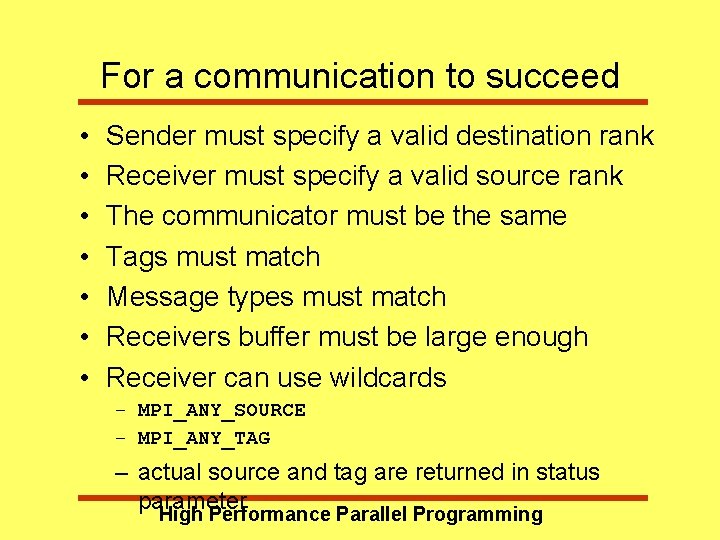

For a communication to succeed
• Sender must specify a valid destination rank
• Receiver must specify a valid source rank
• The communicator must be the same
• Tags must match
• Message types must match
• Receiver's buffer must be large enough
• Receiver can use wildcards
  – MPI_ANY_SOURCE
  – MPI_ANY_TAG
  – actual source and tag are returned in the status parameter

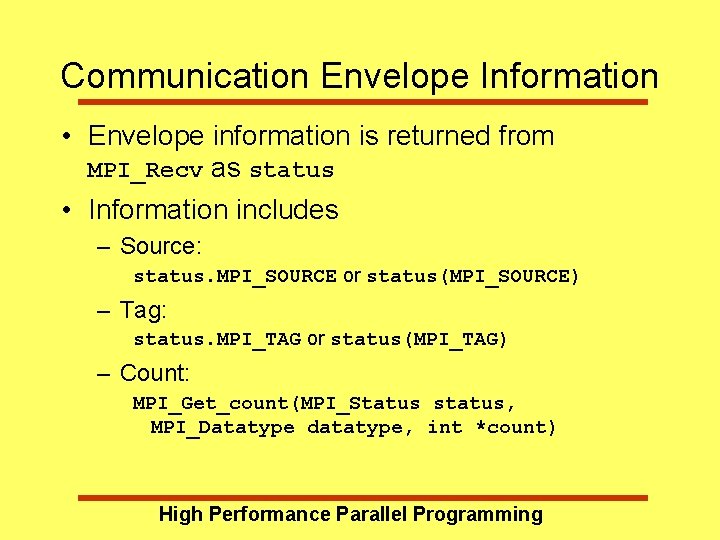

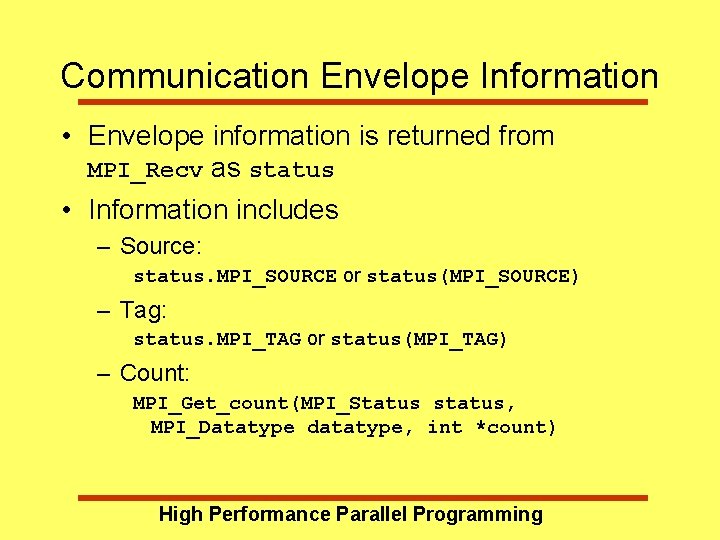

Communication Envelope Information
• Envelope information is returned from MPI_Recv as status
• Information includes
  – Source: status.MPI_SOURCE (C) or status(MPI_SOURCE) (Fortran)
  – Tag: status.MPI_TAG (C) or status(MPI_TAG) (Fortran)
  – Count: MPI_Get_count(MPI_Status *status, MPI_Datatype datatype, int *count)

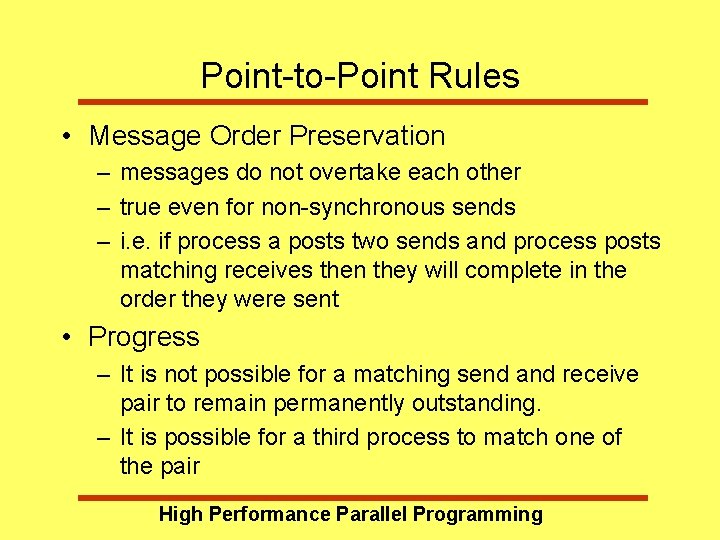

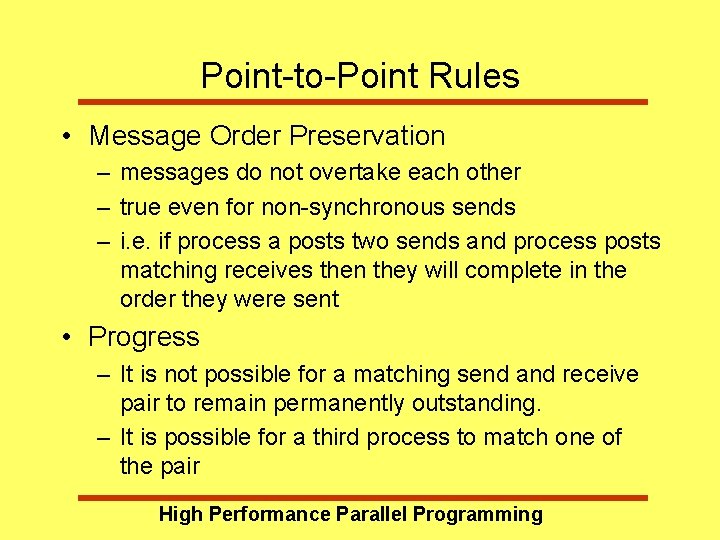

Point-to-Point Rules
• Message order preservation
  – messages do not overtake each other
  – this is true even for non-synchronous sends
  – i.e. if process A posts two sends and process B posts matching receives, then they will complete in the order they were sent
• Progress
  – It is not possible for a matching send and receive pair to remain permanently outstanding
  – It is possible for a third process to match one of the pair (e.g. a receive posted with MPI_ANY_SOURCE may instead match a send from a third process)

Exercise: Ping pong
• Write a program in which two processes repeatedly pass a message back and forth
• Insert timing calls to measure the time taken for one message
• Investigate how the time taken varies with the size of the message

Timers
• C: double MPI_Wtime(void);
• Fortran: double precision mpi_wtime()
  – Time is measured in seconds
  – Time to perform a task is measured by consulting the time before and after

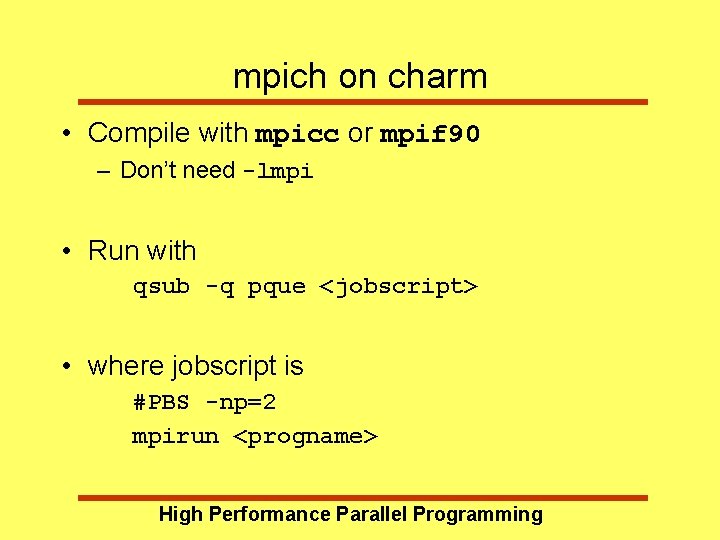

mpich on charm
• Compile with mpicc or mpif90
  – Don't need -lmpi
• Run with qsub -q pque <jobscript>
• where <jobscript> is:

    #PBS -np=2
    mpirun <progname>

Next week - MPI part 2