High Performance Parallel Programming Dirk van der Knijff

- Slides: 57

High Performance Parallel Programming Dirk van der Knijff Advanced Research Computing Information Division

High Performance Parallel Programming
Lecture 1: Introduction to Parallel Programming

Introduction
• Parallel programming covers all situations where more than one functional element is involved.
• Most simple hardware parallelism is already exploited:
  – Bit-parallel adders and multipliers
  – Multiple arithmetic units
  – Overlapped compute and I/O
  – Pipelined instruction execution
• We are concerned with higher levels.

Why parallel?
• Because it's faster!!!
  – Even without good scalability
  – but not always…
• Because it's cheaper!
  – Leverages commodity components
  – Allows arbitrary size computers
• Because it's natural!
  – The real world is parallel
  – Computer languages usually force a serial view

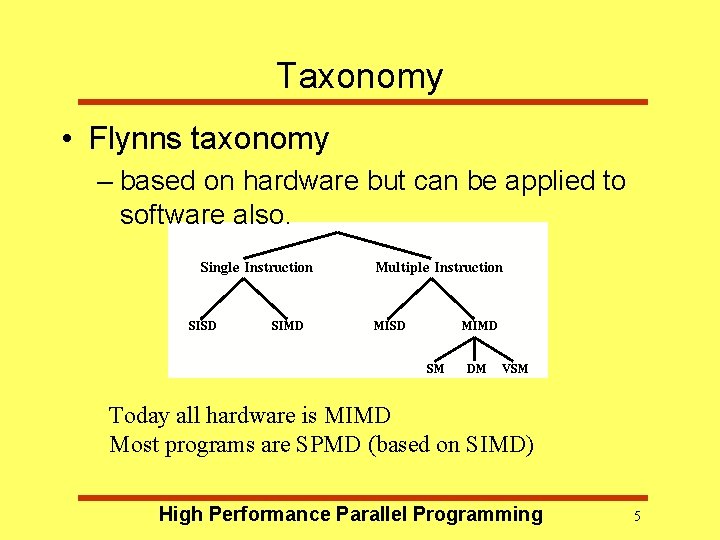

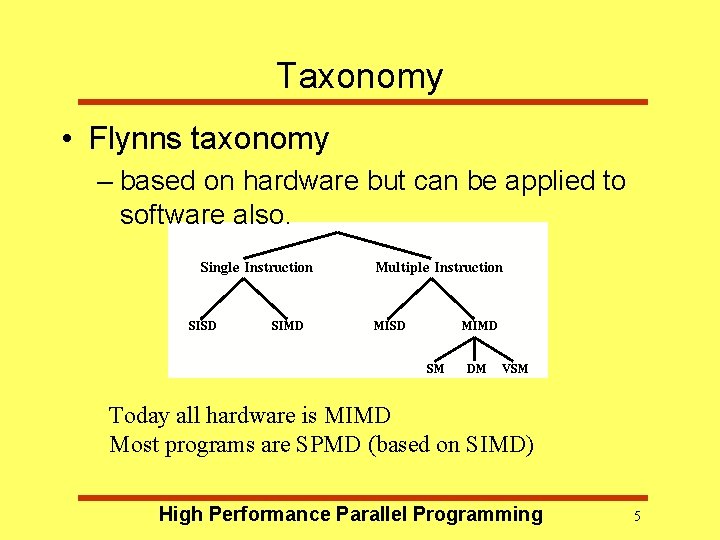

Taxonomy
• Flynn's taxonomy – based on hardware but can also be applied to software.
• Today all hardware is MIMD.
• Most programs are SPMD (based on SIMD).

Parallel Architectures
Parallel machines:
• SIMD - Single Instruction Multiple Data
• MIMD - Multiple Instruction Multiple Data
• SMP - Shared Memory Processing
• ccNUMA - cache-coherent Non-Uniform Memory Access
• DM - Distributed Memory
• MPP - Massively Parallel Processors
• NOWS - Network Of Workstations

Programming Models
Machine architectures and programming models have a natural affinity, both in their descriptions and in their effects on each other. Algorithms have been developed to fit machines, and machines have been built to fit algorithms.
Purpose-built machines: supercomputers (ASCI), FPGAs, QCD machines.

Parallel Programming Models
– Control
  • How is parallelism created?
  • What orderings exist between operations?
  • How do different threads of control synchronize?
– Data
  • What data is private vs. shared?
  • How is logically shared data accessed or communicated?
– Operations
  • What are the atomic operations?
– Cost
  • How do we account for the cost of each of the above?

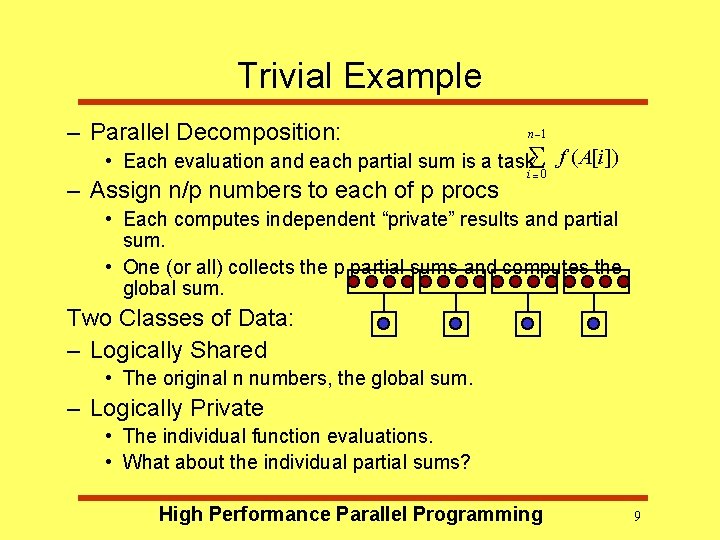

Trivial Example: $\sum_{i=0}^{n-1} f(A[i])$
– Parallel Decomposition:
  • Each evaluation and each partial sum is a task.
– Assign n/p numbers to each of p procs
  • Each computes independent "private" results and a partial sum.
  • One (or all) collects the p partial sums and computes the global sum.
Two Classes of Data:
– Logically Shared
  • The original n numbers, the global sum.
– Logically Private
  • The individual function evaluations.
  • What about the individual partial sums?
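The decomposition above can be sketched serially in C. This is only an illustration under assumptions: `f` is a hypothetical stand-in for the slide's function, and the "processors" are simulated by a loop over `rank`; the names `partial_sum` and `global_sum` are not from the slides.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for the function f on the slide. */
static double f(double x) { return x * x; }

/* Partial sum computed by "processor" rank (0 <= rank < p) over its block
   of roughly n/p elements; the bounds also work when p does not divide n. */
static double partial_sum(const double *A, size_t n, size_t p, size_t rank)
{
    assert(p > 0 && rank < p);
    size_t lo = rank * n / p;        /* first index owned by this rank */
    size_t hi = (rank + 1) * n / p;  /* one past the last owned index  */
    double local = 0.0;              /* logically private              */
    for (size_t i = lo; i < hi; i++)
        local += f(A[i]);
    return local;
}

/* One collector adds up the p partial sums (the logically shared result). */
static double global_sum(const double *A, size_t n, size_t p)
{
    double s = 0.0;
    for (size_t rank = 0; rank < p; rank++)
        s += partial_sum(A, n, p, rank);
    return s;
}
```

Calling `global_sum(A, n, p)` with different values of `p` should give the same total for exactly representable inputs; only the split of the work changes.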

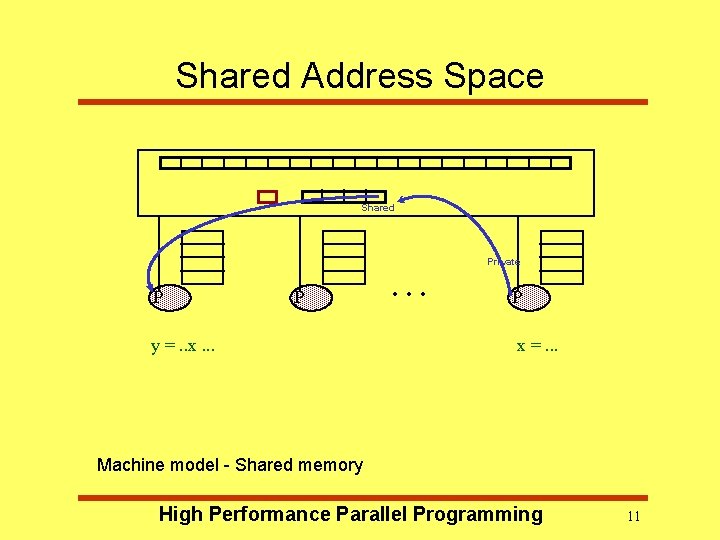

Model 1: Shared Address Space
• Program consists of a collection of threads of control.
• Each has a set of private variables, e.g. local variables on the stack.
• Collectively they share a set of shared variables, e.g. static variables, shared common blocks, global heap.
• Threads communicate implicitly by writing and reading shared variables.
• Threads coordinate explicitly by synchronization operations on shared variables: writing and reading flags, locks or semaphores.
• Like concurrent programming on a uniprocessor.

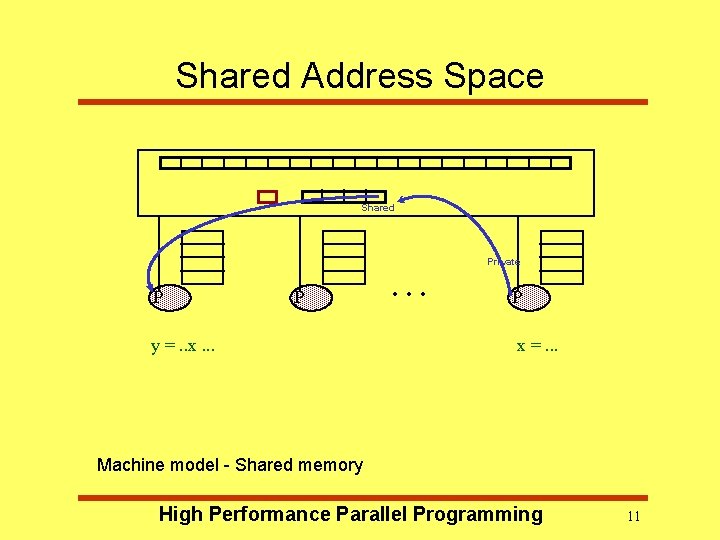

Shared Address Space
[Figure: several processors P, each with a private area, attached to a shared area; one processor executes x = ... and another y = ..x...]
Machine model - Shared memory

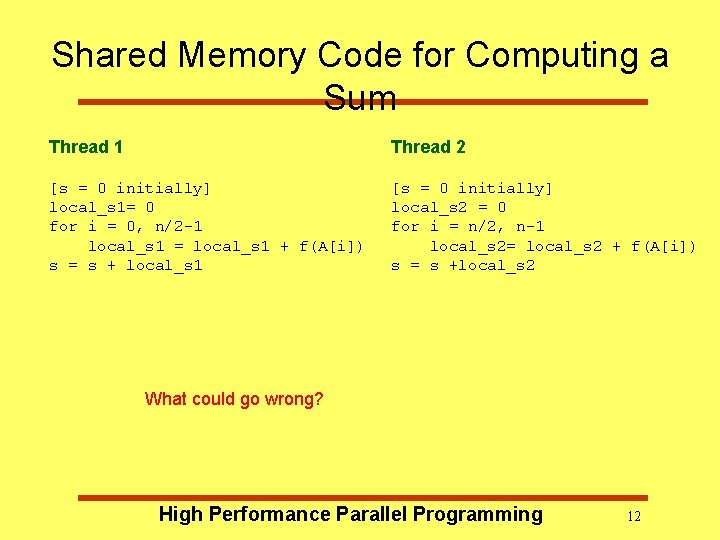

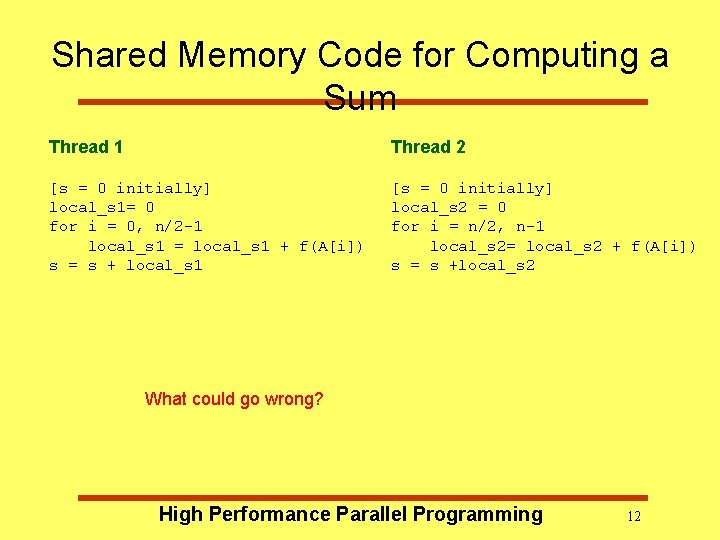

Shared Memory Code for Computing a Sum
[s = 0 initially]

Thread 1:
    local_s1 = 0
    for i = 0, n/2-1
        local_s1 = local_s1 + f(A[i])
    s = s + local_s1

Thread 2:
    local_s2 = 0
    for i = n/2, n-1
        local_s2 = local_s2 + f(A[i])
    s = s + local_s2

What could go wrong?

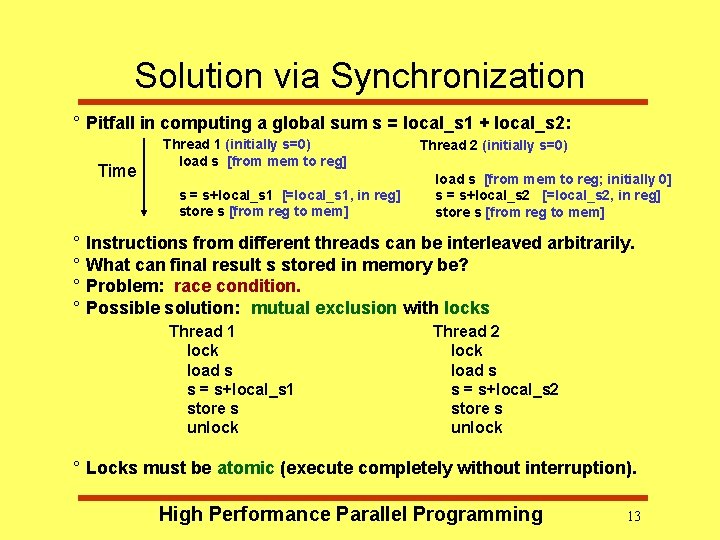

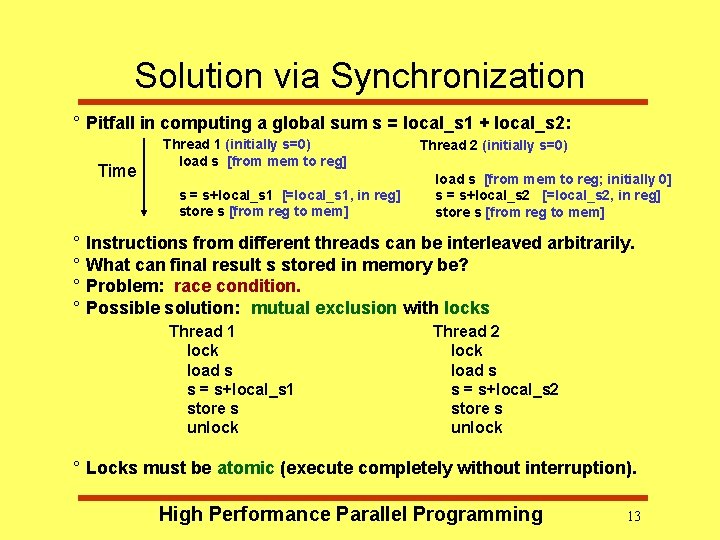

Solution via Synchronization
• Pitfall in computing a global sum s = local_s1 + local_s2:

    Thread 1 (initially s = 0)
        load s              [from mem to reg]
        s = s + local_s1    [= local_s1, in reg]
        store s             [from reg to mem]

    Thread 2 (initially s = 0)
        load s              [from mem to reg; initially 0]
        s = s + local_s2    [= local_s2, in reg]
        store s             [from reg to mem]

• Instructions from different threads can be interleaved arbitrarily.
• What can the final result s stored in memory be?
• Problem: race condition.
• Possible solution: mutual exclusion with locks

    Thread 1                Thread 2
    lock                    lock
    load s                  load s
    s = s + local_s1        s = s + local_s2
    store s                 store s
    unlock                  unlock

• Locks must be atomic (execute completely without interruption).
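As a rough sketch of the locked update, here is one way to write it in C with OpenMP's `critical` construct (introduced later in these slides) standing in for the generic lock/unlock pair. The function name `sum_with_critical` and the choice of `f` are assumptions made for illustration.

```c
#include <assert.h>

/* Hypothetical stand-in for the function f on the slide. */
static double f(double x) { return x * x; }

/* Each thread accumulates a private partial sum, then adds it to the
   shared total inside a critical section, so one thread's load/add/store
   of s cannot interleave with another thread's update. */
static double sum_with_critical(const double *A, int n)
{
    assert(n >= 0);
    double s = 0.0;                    /* shared */
    #pragma omp parallel
    {
        double local_s = 0.0;          /* private: declared inside the region */
        #pragma omp for
        for (int i = 0; i < n; i++)
            local_s += f(A[i]);
        #pragma omp critical
        s += local_s;                  /* one thread at a time */
    }
    return s;
}
```

If the compiler is not run with OpenMP enabled, the pragmas are ignored and the function simply runs serially, which makes it easy to compare results.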

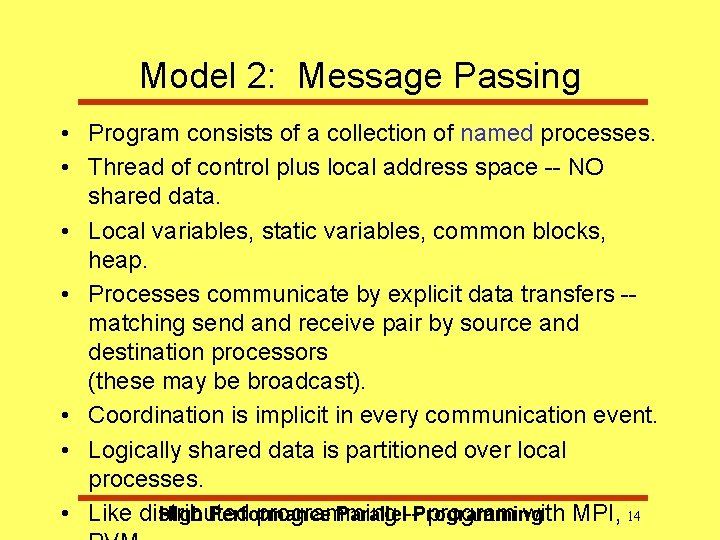

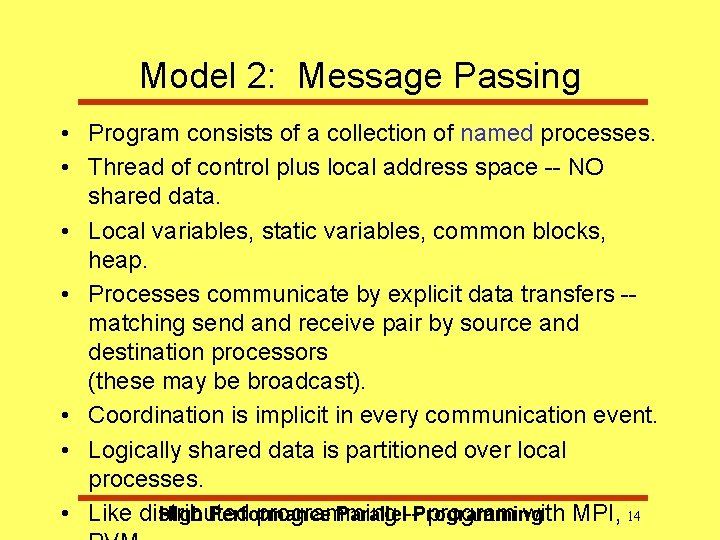

Model 2: Message Passing
• Program consists of a collection of named processes.
• Thread of control plus local address space -- NO shared data.
• Local variables, static variables, common blocks, heap.
• Processes communicate by explicit data transfers: a matching send and receive pair, identified by source and destination processors (these may be broadcasts).
• Coordination is implicit in every communication event.
• Logically shared data is partitioned over local processes.
• Like distributed programming; programmed with, e.g., MPI.

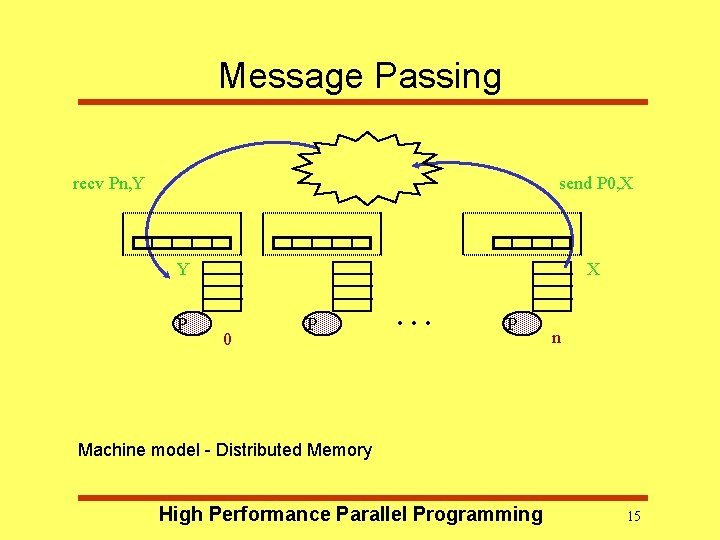

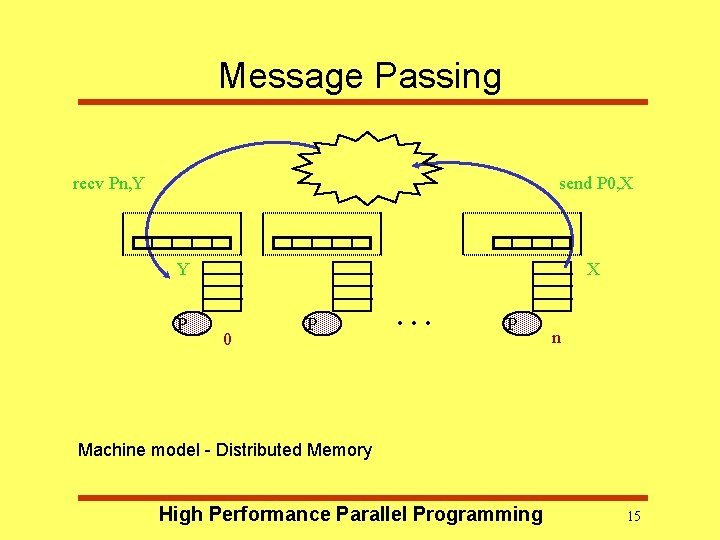

Message Passing
[Figure: processors P0 ... Pn, each with its own memory; P0 holds X and executes "recv Pn, Y", Pn holds Y and executes "send P0, X".]
Machine model - Distributed Memory

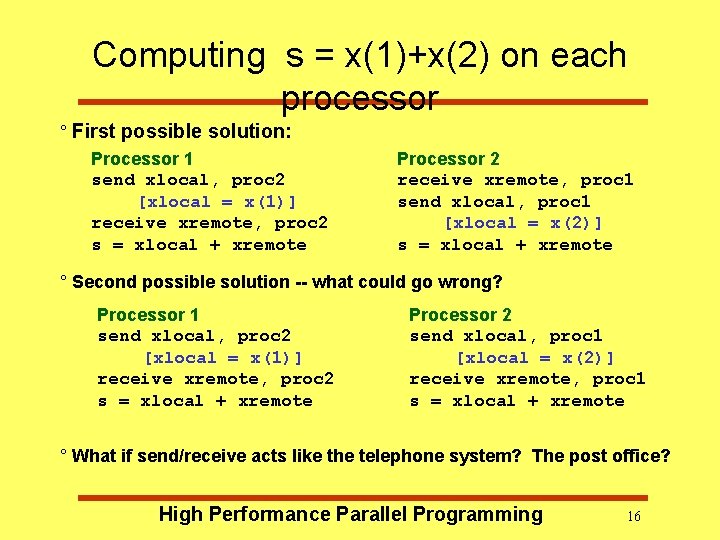

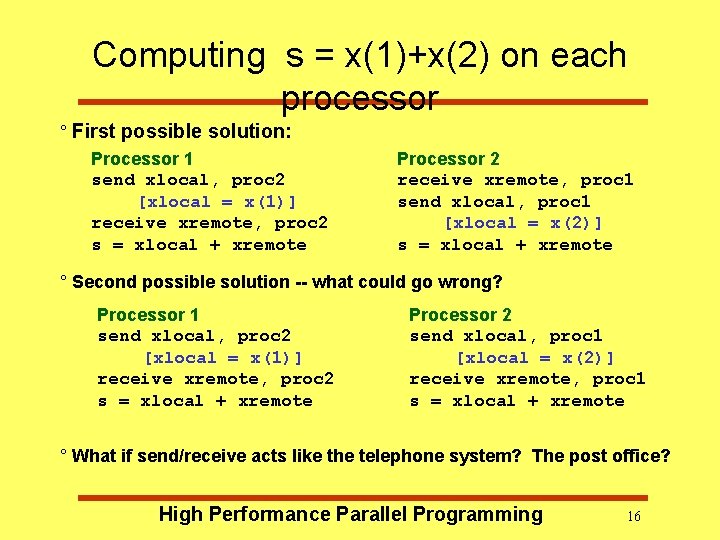

Computing s = x(1)+x(2) on each processor
• First possible solution:

    Processor 1 [xlocal = x(1)]       Processor 2 [xlocal = x(2)]
    send xlocal, proc 2               receive xremote, proc 1
    receive xremote, proc 2           send xlocal, proc 1
    s = xlocal + xremote              s = xlocal + xremote

• Second possible solution -- what could go wrong?

    Processor 1 [xlocal = x(1)]       Processor 2 [xlocal = x(2)]
    send xlocal, proc 2               send xlocal, proc 1
    receive xremote, proc 2           receive xremote, proc 1
    s = xlocal + xremote              s = xlocal + xremote

• What if send/receive acts like the telephone system? The post office?

Model 3: Data Parallel
• Single sequential thread of control consisting of parallel operations.
• Parallel operations applied to all (or a defined subset) of a data structure.
• Communication is implicit in parallel operators and "shifted" data structures.
• Elegant and easy to understand and reason about.
• Like marching in a regiment.
• Used by Matlab.
• Drawback: not all problems fit this model.

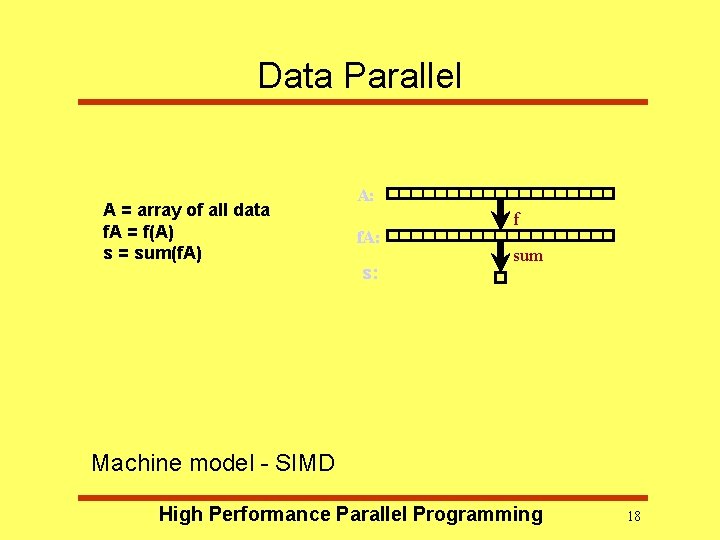

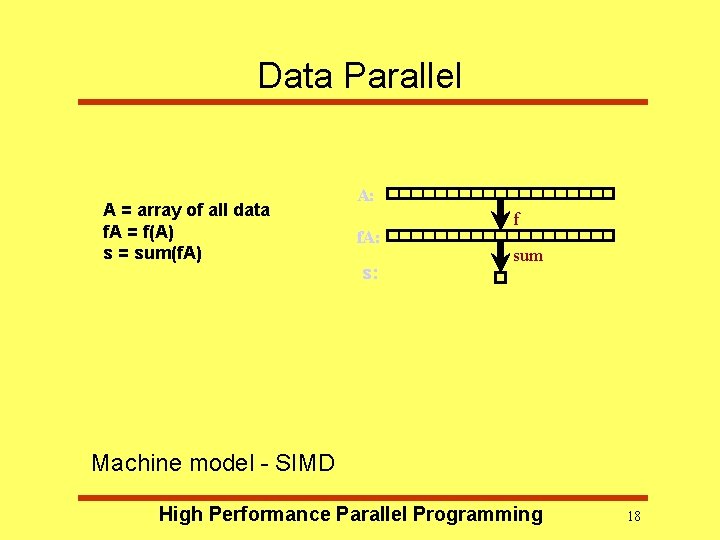

Data Parallel
    A = array of all data
    fA = f(A)
    s = sum(fA)
[Figure: f is applied to every element of A to give fA; sum combines the elements of fA into s.]
Machine model - SIMD

SIMD Architecture
– A large number of (usually) small processors.
– A single "control processor" issues each instruction.
– Each processor executes the same instruction.
– Some processors may be turned off on some instructions.
– Machines are not popular (CM-2), but the programming model is.
– Implemented by mapping n-fold parallelism to p processors.
– Programming
  • Mostly done in the compilers (HPF = High Performance Fortran).
  • Usually modified to Single Program Multiple Data, or SPMD.

Data Parallel Programming
• Data Parallel programming involves the processing and manipulation of large arrays.
• Processors should (simultaneously) perform similar operations on different array elements.
• Each processor has a local memory => large arrays must be distributed across many different processors.
• Arrays can be distributed in different ways depending on how they are used.
• We want to choose a distribution which maximises the ratio of local work to communication.

Data Parallel Programming (HPF)
• Most successful Data-parallel language
• Extension to Fortran 90/95
  – Fastest language
  – Has powerful array constructs
  – Has suitable data structures implementation
• HPF is based on compiler directives
• Easiest way to write parallel programs
  – Compiler does most of the work…

OpenMP - Shared Memory Systems
• Key feature is a single address space across the whole memory system.
  – every processor can read and write all memory locations
• Caches are kept coherent
  – all processors have the same view of memory.
• Two main types:
  – true shared memory
  – distributed shared memory

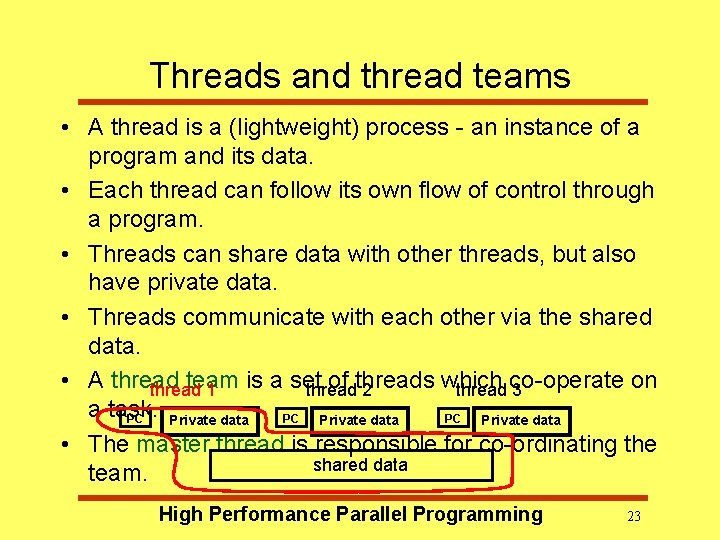

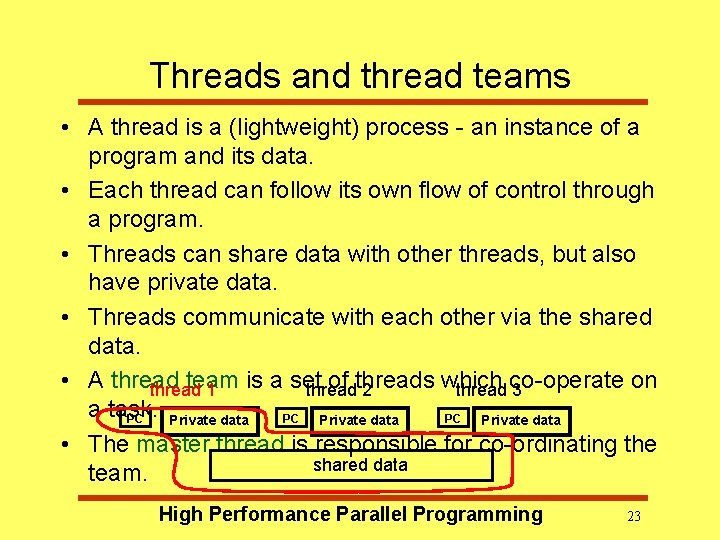

Threads and thread teams
• A thread is a (lightweight) process - an instance of a program and its data.
• Each thread can follow its own flow of control through a program.
• Threads can share data with other threads, but also have private data.
• Threads communicate with each other via the shared data.
• A thread team is a set of threads which co-operate on a task.
• The master thread is responsible for co-ordinating the team.
[Figure: thread 1, thread 2 and thread 3, each with its own program counter (PC) and private data, all accessing the shared data.]

Directives and sentinels
• A directive is a special line of source code with meaning only to a compiler that understands it.
• Note the difference between directives (must be obeyed) and hints (may be obeyed).
• A directive is distinguished by a sentinel at the start of the line.
• OpenMP sentinels are:
  – Fortran: !$OMP (or C$OMP or *$OMP)
  – C/C++: #pragma omp

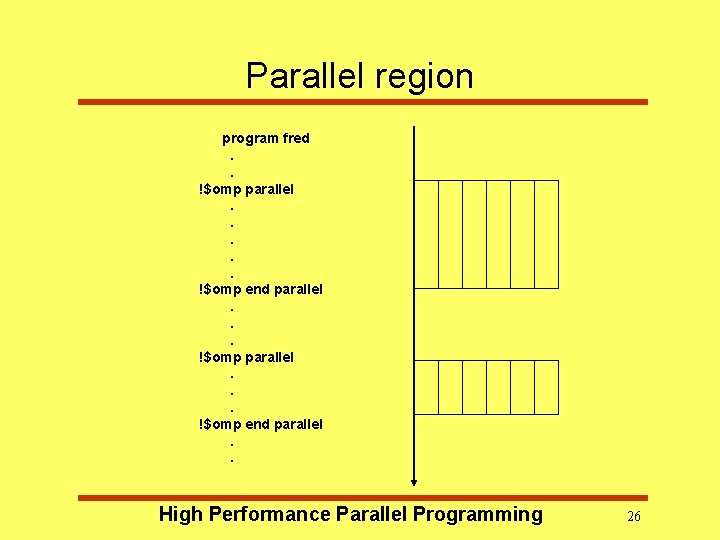

Parallel region
• The parallel region is the basic parallel construct in OpenMP.
• A parallel region defines a section of a program.
• Program begins execution on a single thread (the master thread).
• When the first parallel region is encountered, the master thread creates a team of threads (fork/join model).
• Every thread executes the statements which are inside the parallel region.
• At the end of the parallel region, the master thread waits for the other threads to finish, and continues executing the next statements.

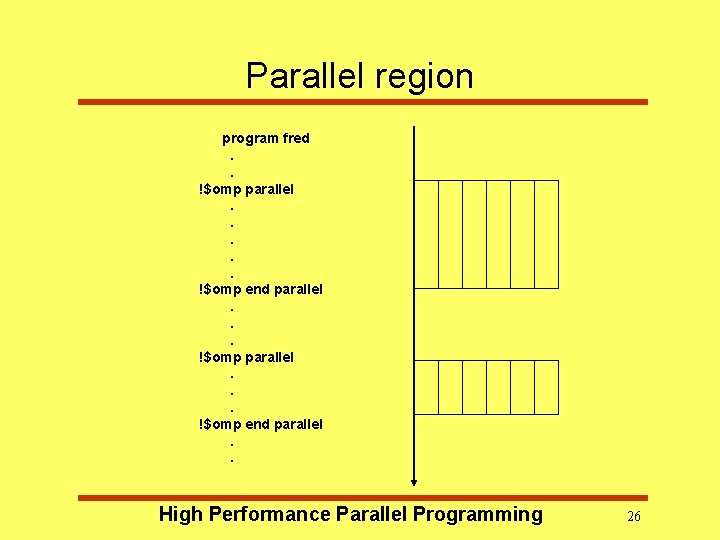

Parallel region

    program fred
      ...
    !$omp parallel
      ...
    !$omp end parallel
      ...

Shared and private data
• Inside a parallel region, variables can either be shared or private.
• All threads see the same copy of shared variables.
• All threads can read or write shared variables.
• Each thread has its own copy of private variables: these are invisible to other threads.
• A private variable can only be read or written by its own thread.

Parallel loops
• Loops are the main source of parallelism in many applications.
• If the iterations of a loop are independent (can be done in any order) then we can share out the iterations between different threads.
• e.g. if we have two threads and the loop

    do i = 1, 100
      a(i) = a(i) + b(i)
    end do

  we could do iterations 1-50 on one thread and iterations 51-100 on the other.
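A minimal C analogue of the loop above, assuming the combined `parallel for` directive is used to share out the iterations. The function name `add_arrays` is illustrative only, and how the iterations are split between threads is left to the implementation.

```c
#include <assert.h>

/* Adds b into a element by element. The iterations are independent,
   so they can be shared out between the threads of a team. */
static void add_arrays(double *a, const double *b, int n)
{
    assert(n >= 0);
    #pragma omp parallel for
    for (int i = 0; i < n; i++)
        a[i] = a[i] + b[i];
}
```

The loop index `i` is private to each thread, while `a` and `b` are shared; no two iterations touch the same element, so no synchronisation is needed.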

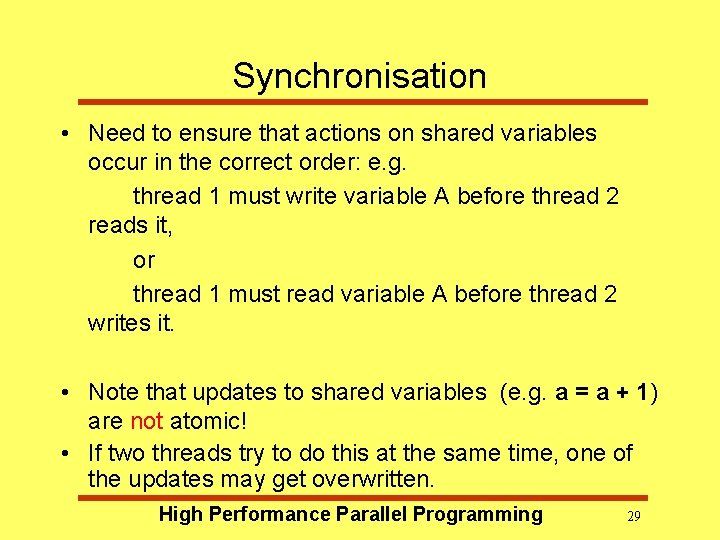

Synchronisation
• Need to ensure that actions on shared variables occur in the correct order: e.g. thread 1 must write variable A before thread 2 reads it, or thread 1 must read variable A before thread 2 writes it.
• Note that updates to shared variables (e.g. a = a + 1) are not atomic!
• If two threads try to do this at the same time, one of the updates may get overwritten.

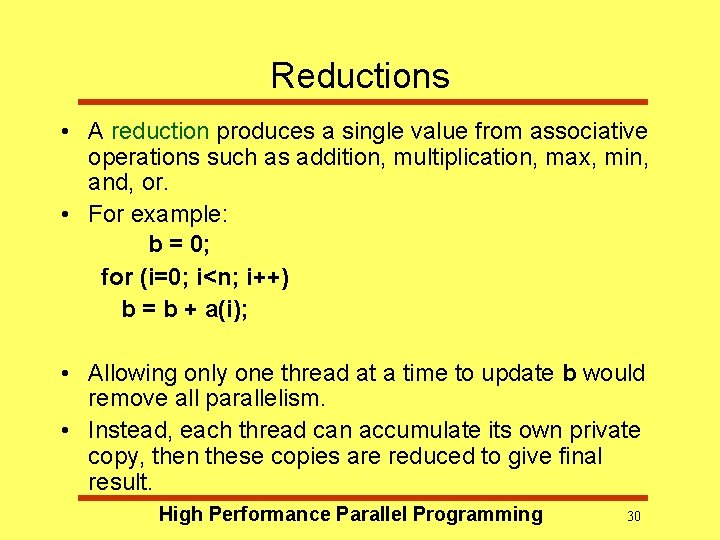

Reductions
• A reduction produces a single value from associative operations such as addition, multiplication, max, min, and, or.
• For example:

    b = 0;
    for (i=0; i<n; i++)
        b = b + a[i];

• Allowing only one thread at a time to update b would remove all parallelism.
• Instead, each thread can accumulate its own private copy, then these copies are reduced to give the final result.

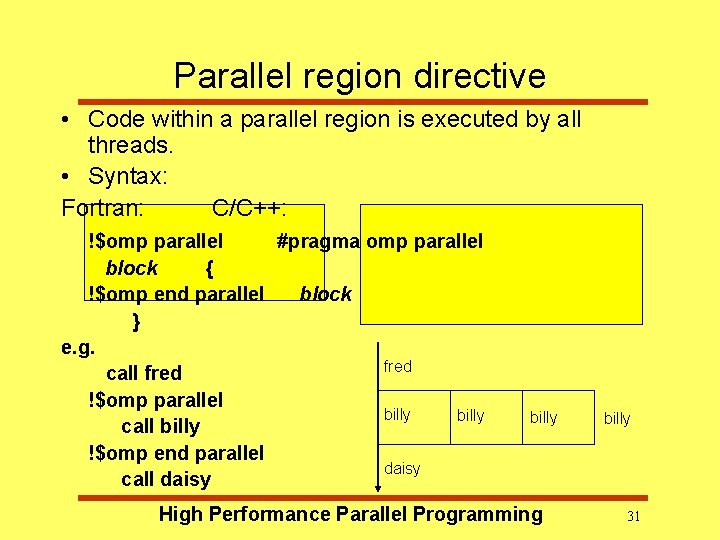

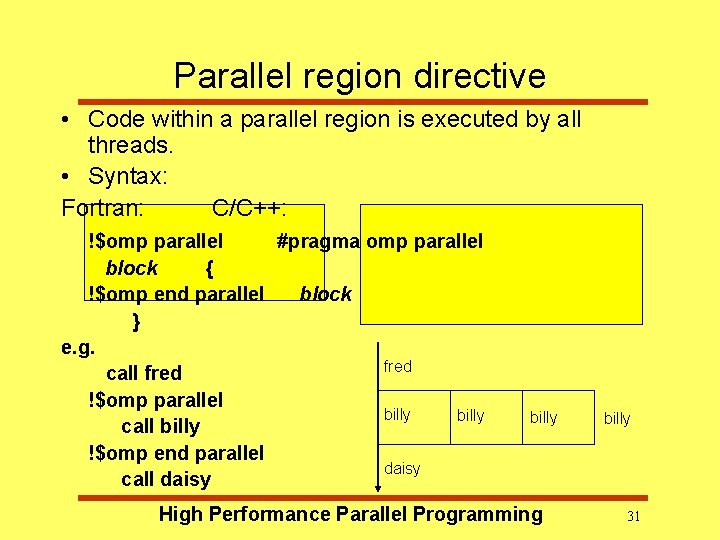

Parallel region directive
• Code within a parallel region is executed by all threads.
• Syntax:

    Fortran:                C/C++:
    !$omp parallel          #pragma omp parallel
      block                 {
    !$omp end parallel        block
                            }

e.g.

    call fred
    !$omp parallel
    call billy
    !$omp end parallel
    call daisy

[Figure: fred runs on a single thread, billy is run by every thread in the team, then daisy runs on a single thread.]

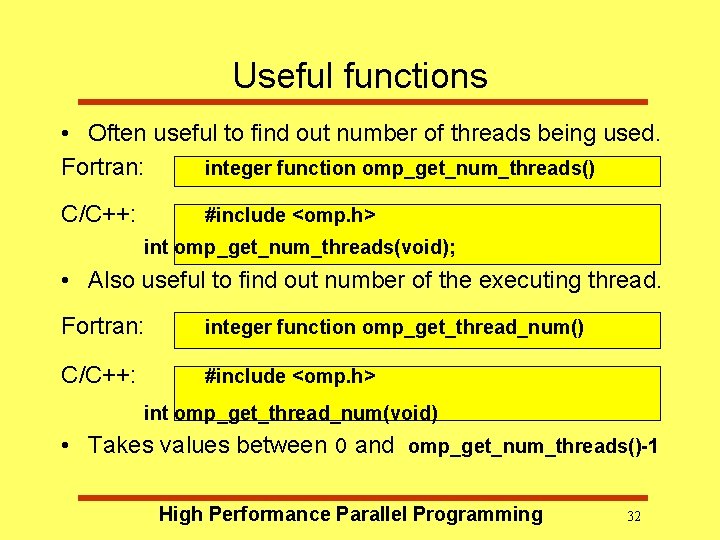

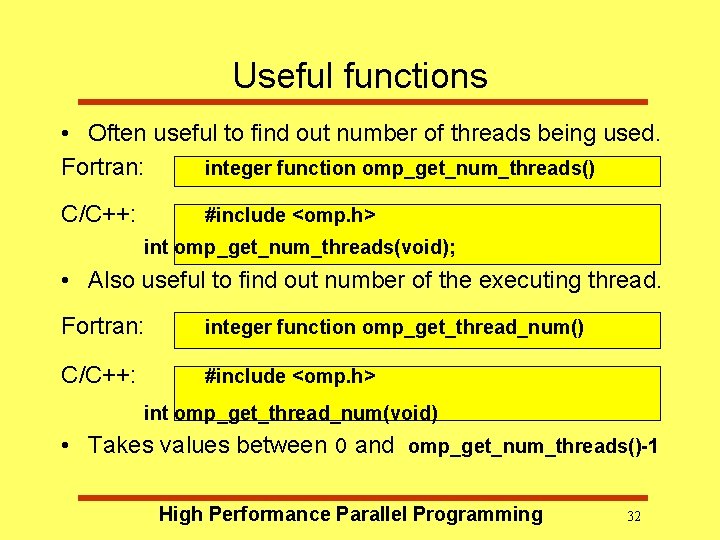

Useful functions
• Often useful to find out the number of threads being used.

    Fortran: integer function omp_get_num_threads()
    C/C++:   #include <omp.h>
             int omp_get_num_threads(void);

• Also useful to find out the number of the executing thread.

    Fortran: integer function omp_get_thread_num()
    C/C++:   #include <omp.h>
             int omp_get_thread_num(void);

• Takes values between 0 and omp_get_num_threads()-1
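The two functions can be tried out with a sketch like the following. It makes some assumptions that are not on the slide: the `num_threads` clause is used to cap the team size so that the array bound is safe, the helper name `record_thread_ids` is made up, and the `_OPENMP` guard provides a serial fallback when OpenMP is not enabled.

```c
#include <assert.h>
#ifdef _OPENMP
#include <omp.h>
#endif

/* Each thread stores its own thread number in ids[]; the return value is
   the team size. ids must have room for max_threads entries. */
static int record_thread_ids(int *ids, int max_threads)
{
    assert(max_threads >= 1);
    int nthreads = 1;
#ifdef _OPENMP
    #pragma omp parallel num_threads(max_threads)
    {
        int me = omp_get_thread_num();        /* 0 .. team size - 1 */
        #pragma omp single
        nthreads = omp_get_num_threads();     /* written by one thread only */
        ids[me] = me;
    }
#else
    ids[0] = 0;                               /* serial fallback: one thread */
#endif
    return nthreads;
}
```

The implementation may provide fewer threads than requested, so callers should rely on the returned team size rather than on `max_threads`.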

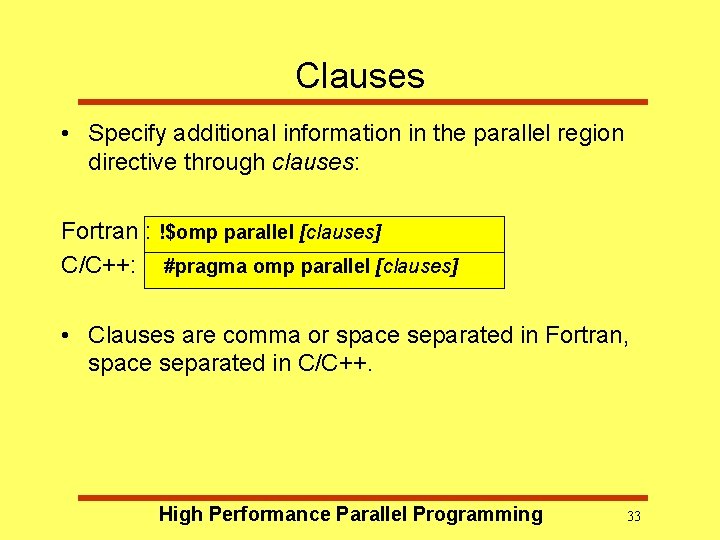

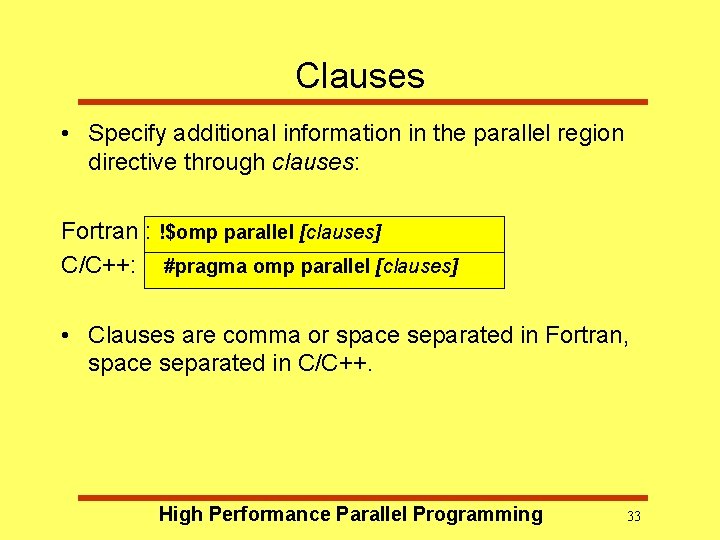

Clauses
• Specify additional information in the parallel region directive through clauses:

    Fortran: !$omp parallel [clauses]
    C/C++:   #pragma omp parallel [clauses]

• Clauses are comma or space separated in Fortran, space separated in C/C++.

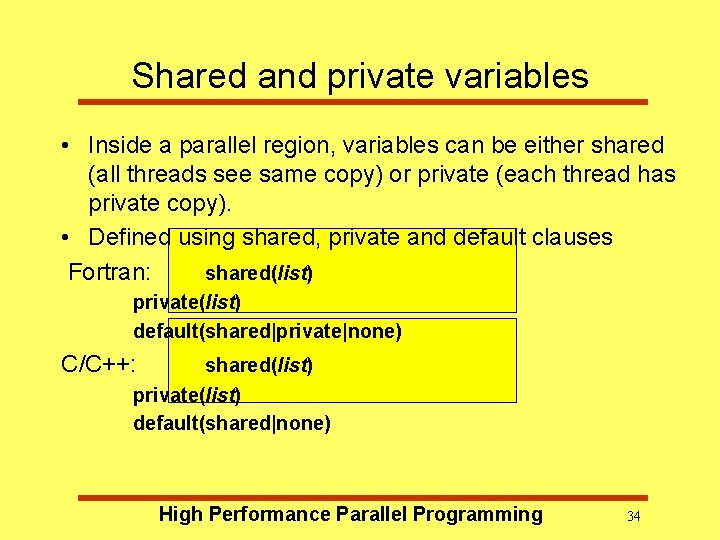

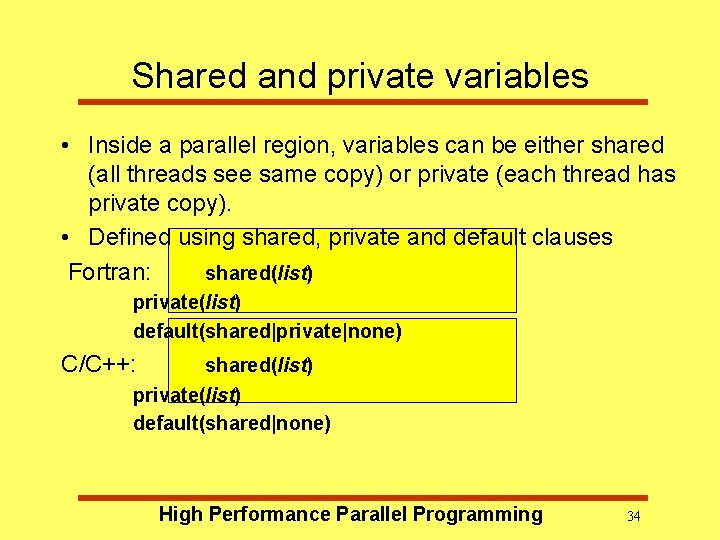

Shared and private variables
• Inside a parallel region, variables can be either shared (all threads see same copy) or private (each thread has private copy).
• Defined using shared, private and default clauses

    Fortran: shared(list)
             private(list)
             default(shared|private|none)
    C/C++:   shared(list)
             private(list)
             default(shared|none)

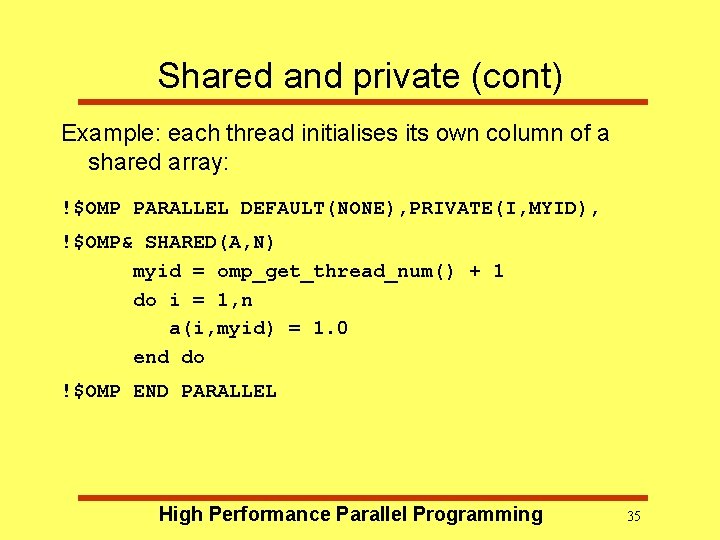

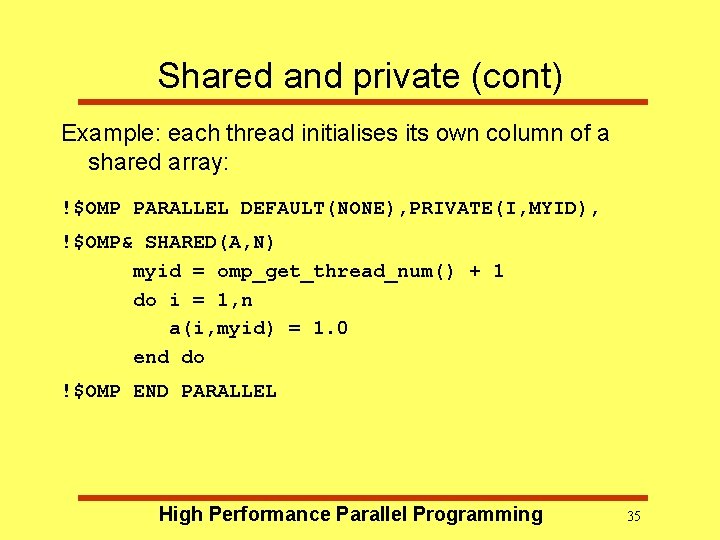

Shared and private (cont)
Example: each thread initialises its own column of a shared array:

    !$OMP PARALLEL DEFAULT(NONE), PRIVATE(I, MYID),
    !$OMP& SHARED(A, N)
      myid = omp_get_thread_num() + 1
      do i = 1, n
        a(i, myid) = 1.0
      end do
    !$OMP END PARALLEL

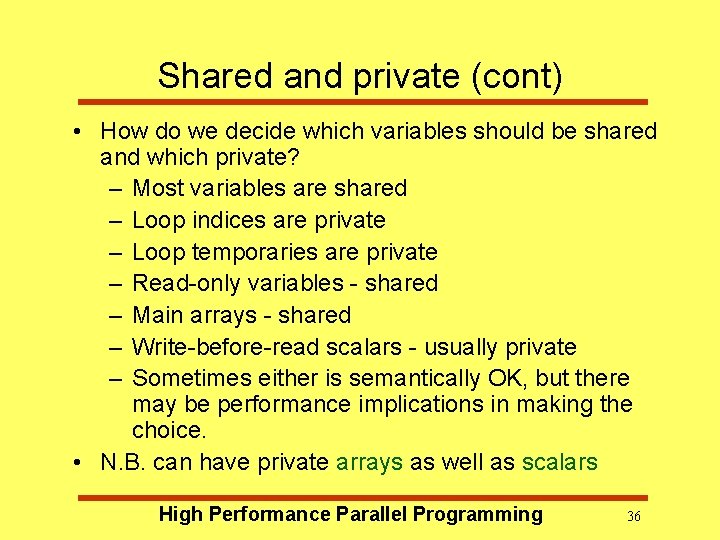

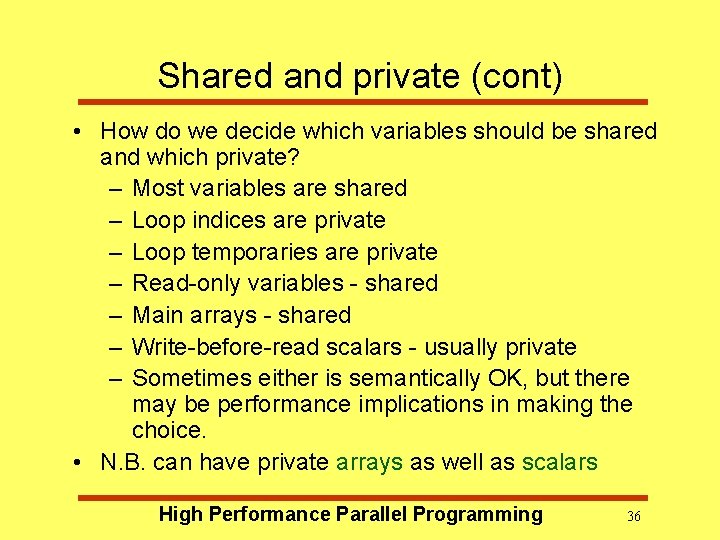

Shared and private (cont)
• How do we decide which variables should be shared and which private?
  – Most variables are shared
  – Loop indices are private
  – Loop temporaries are private
  – Read-only variables - shared
  – Main arrays - shared
  – Write-before-read scalars - usually private
  – Sometimes either is semantically OK, but there may be performance implications in making the choice.
• N.B. can have private arrays as well as scalars

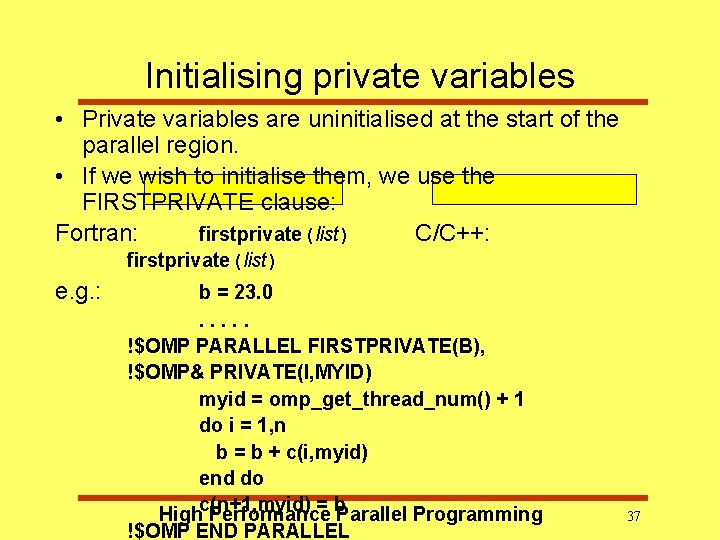

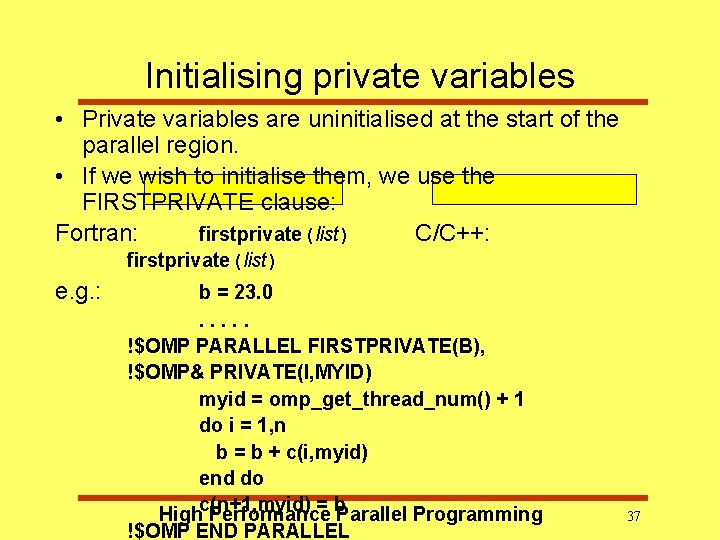

Initialising private variables
• Private variables are uninitialised at the start of the parallel region.
• If we wish to initialise them, we use the FIRSTPRIVATE clause:

    Fortran: firstprivate(list)
    C/C++:   firstprivate(list)

e.g.:

    b = 23.0
    ...
    !$OMP PARALLEL FIRSTPRIVATE(B),
    !$OMP& PRIVATE(I, MYID)
      myid = omp_get_thread_num() + 1
      do i = 1, n
        b = b + c(i, myid)
      end do
      c(n+1, myid) = b
    !$OMP END PARALLEL

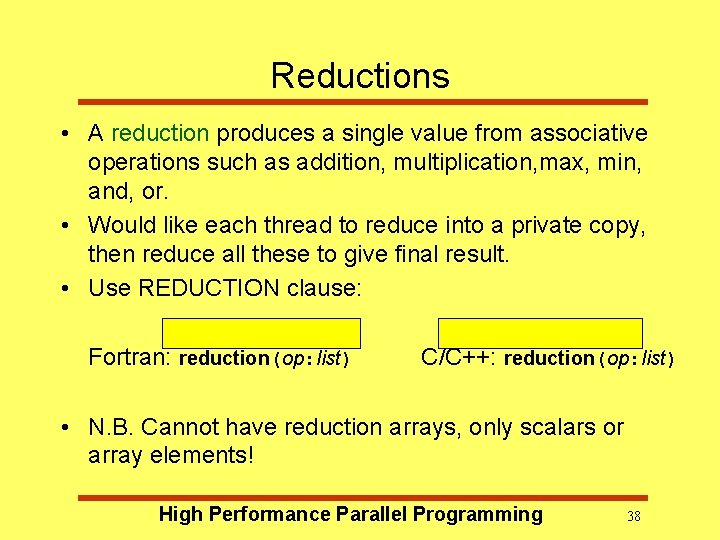

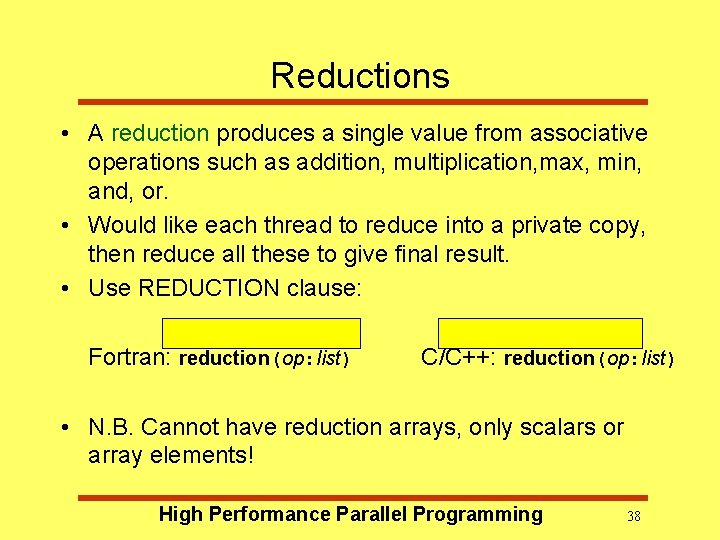

Reductions
• A reduction produces a single value from associative operations such as addition, multiplication, max, min, and, or.
• Would like each thread to reduce into a private copy, then reduce all these to give the final result.
• Use the REDUCTION clause:

    Fortran: reduction(op: list)
    C/C++:   reduction(op: list)

• N.B. Cannot have reduction arrays, only scalars or array elements! (Later versions of the OpenMP specification relax this restriction.)

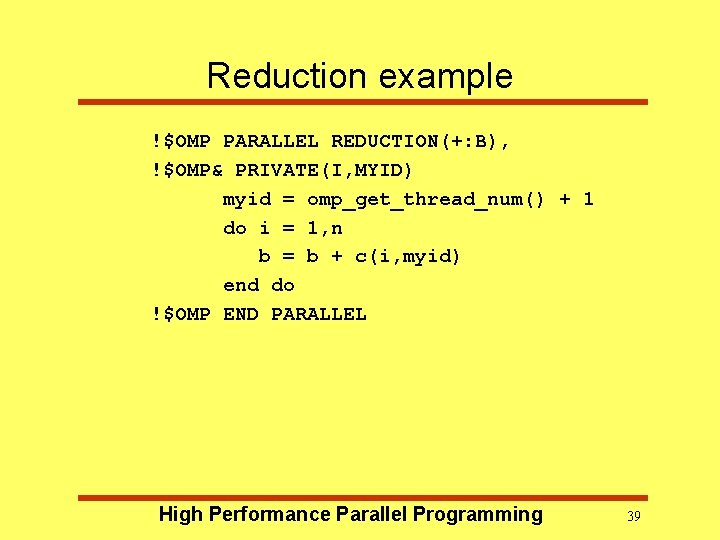

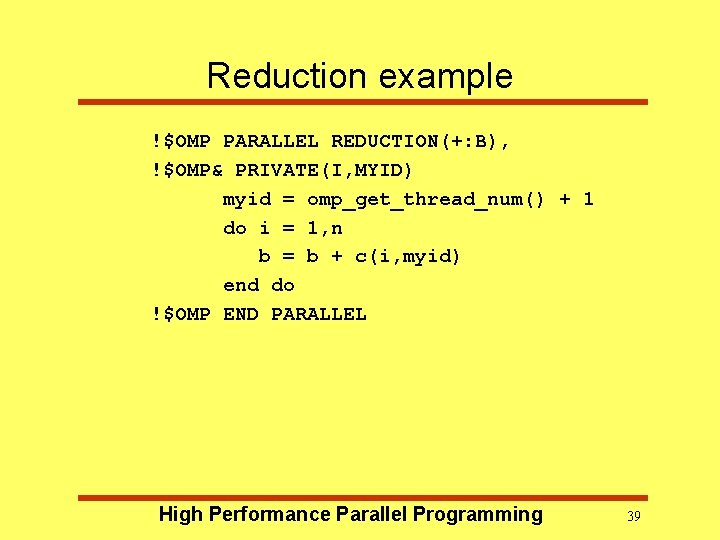

Reduction example

    !$OMP PARALLEL REDUCTION(+: B),
    !$OMP& PRIVATE(I, MYID)
      myid = omp_get_thread_num() + 1
      do i = 1, n
        b = b + c(i, myid)
      end do
    !$OMP END PARALLEL
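For comparison, a C sketch of the same idea: summing an array with the `reduction` clause on a combined `parallel for`. The function name `sum_reduction` is an assumption, and unlike the Fortran example it reduces over a single one-dimensional array.

```c
#include <assert.h>

/* Each thread accumulates into its own private copy of b (initialised
   to 0 for the + operator); the copies are combined with the original
   value of b at the end of the loop. */
static double sum_reduction(const double *a, int n)
{
    assert(n >= 0);
    double b = 0.0;
    #pragma omp parallel for reduction(+:b)
    for (int i = 0; i < n; i++)
        b = b + a[i];
    return b;
}
```

Because the order in which the private copies are combined is not fixed, floating-point results may differ in the last digits from run to run for inputs that are not exactly representable.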

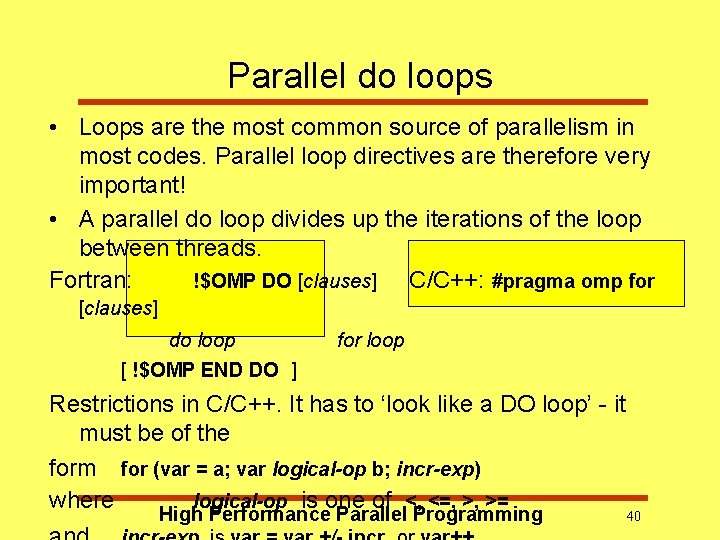

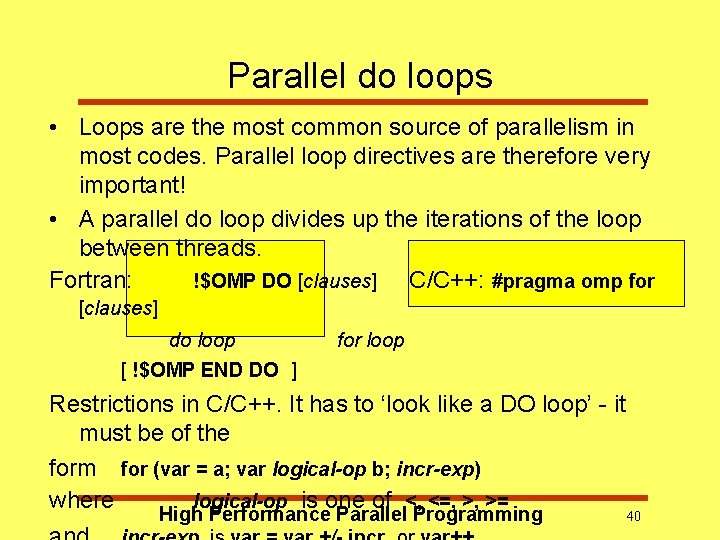

Parallel do loops
• Loops are the most common source of parallelism in most codes. Parallel loop directives are therefore very important!
• A parallel do loop divides up the iterations of the loop between threads.

    Fortran:                    C/C++:
    !$OMP DO [clauses]          #pragma omp for [clauses]
      do loop                     for loop
    [ !$OMP END DO ]

• Restrictions in C/C++: the loop has to 'look like a DO loop'. It must be of the form

    for (var = a; var logical-op b; incr-exp)

  where logical-op is one of <, <=, >, >=

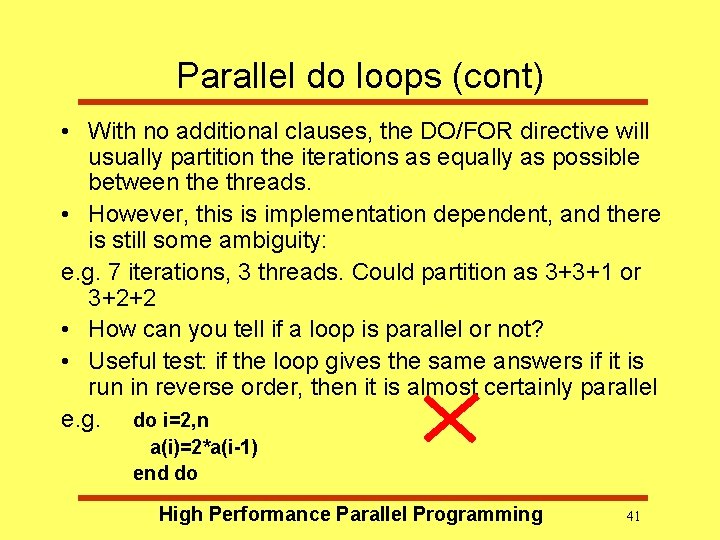

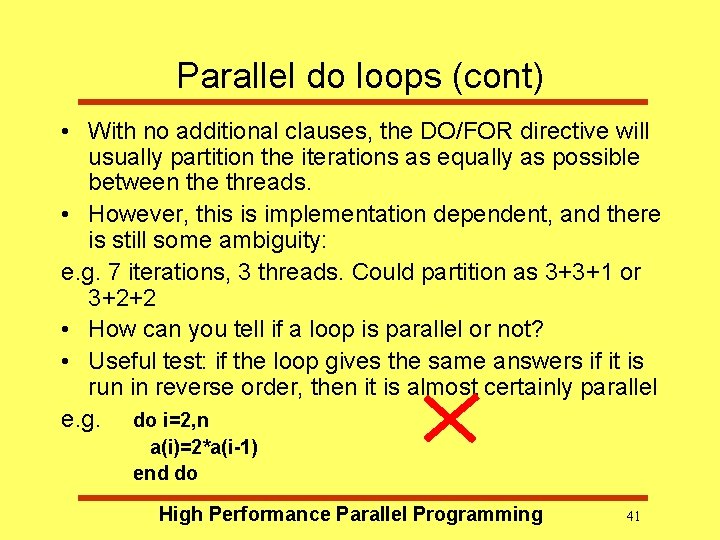

Parallel do loops (cont)
• With no additional clauses, the DO/FOR directive will usually partition the iterations as equally as possible between the threads.
• However, this is implementation dependent, and there is still some ambiguity: e.g. with 7 iterations and 3 threads, the loop could be partitioned as 3+3+1 or 3+2+2.
• How can you tell if a loop is parallel or not?
• Useful test: if the loop gives the same answers when it is run in reverse order, then it is almost certainly parallel.
• e.g. the following loop fails the test, because each iteration reads the value written by the previous one:

    do i=2, n
      a(i)=2*a(i-1)
    end do
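The reverse-order test can be tried mechanically. The sketch below runs the loop a(i) = 2*a(i-1) forwards and backwards on copies of the same input (shifted to C's 0-based indexing) and compares the results. The helper names and the fixed `MAX_N` buffer size are assumptions made for illustration.

```c
#include <assert.h>

#define MAX_N 64  /* illustrative upper bound on the array length */

/* The slide's loop a(i) = 2*a(i-1), in increasing index order. */
static void run_forward(double *a, int n)
{
    for (int i = 1; i < n; i++)
        a[i] = 2.0 * a[i - 1];
}

/* The same loop body, in decreasing index order. */
static void run_reverse(double *a, int n)
{
    for (int i = n - 1; i >= 1; i--)
        a[i] = 2.0 * a[i - 1];
}

/* Returns 1 if both orders give identical results for this input. */
static int same_in_reverse(const double *a0, int n)
{
    assert(n >= 0 && n <= MAX_N);
    double fwd[MAX_N], rev[MAX_N];
    for (int i = 0; i < n; i++)
        fwd[i] = rev[i] = a0[i];
    run_forward(fwd, n);
    run_reverse(rev, n);
    for (int i = 0; i < n; i++)
        if (fwd[i] != rev[i])
            return 0;
    return 1;
}
```

Starting from all ones, the forward run produces 1, 2, 4, 8 while the reverse run produces 1, 2, 2, 2, so the loop is flagged as order dependent. An all-zero input passes by coincidence, which is one reason the slide only says "almost certainly".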

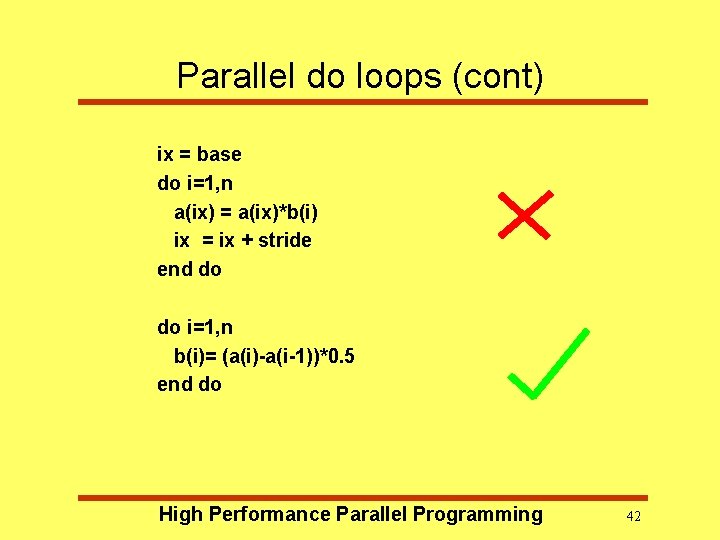

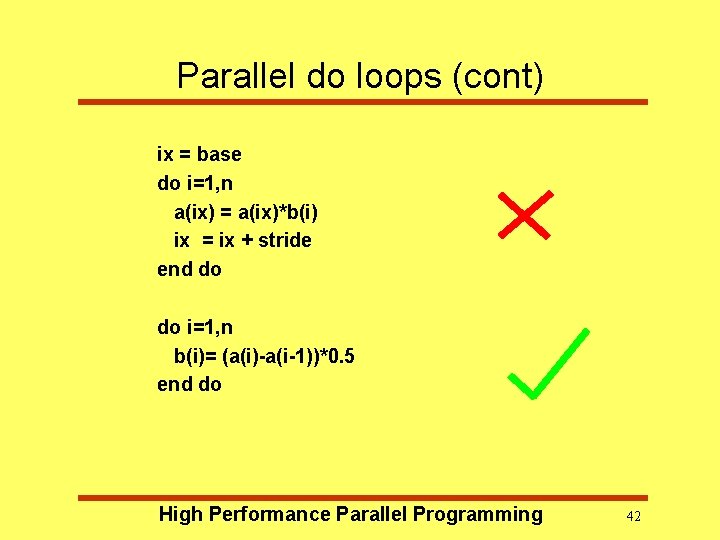

Parallel do loops (cont)

    ix = base
    do i=1, n
      a(ix) = a(ix)*b(i)
      ix = ix + stride
    end do

    do i=1, n
      b(i) = (a(i)-a(i-1))*0.5
    end do

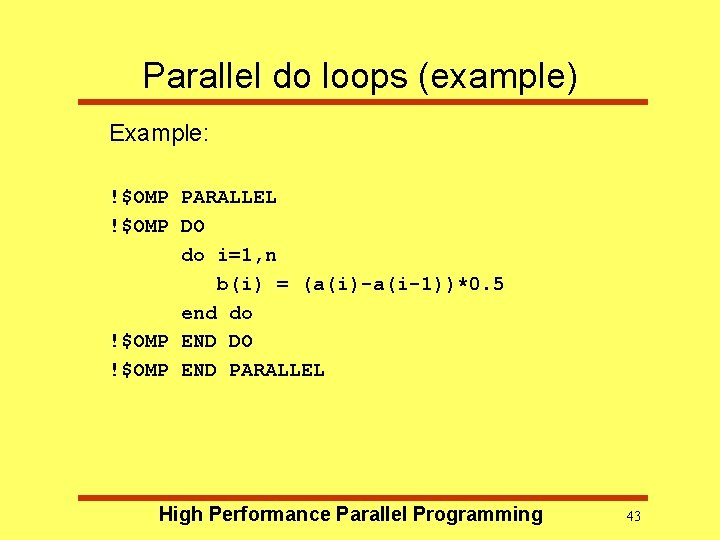

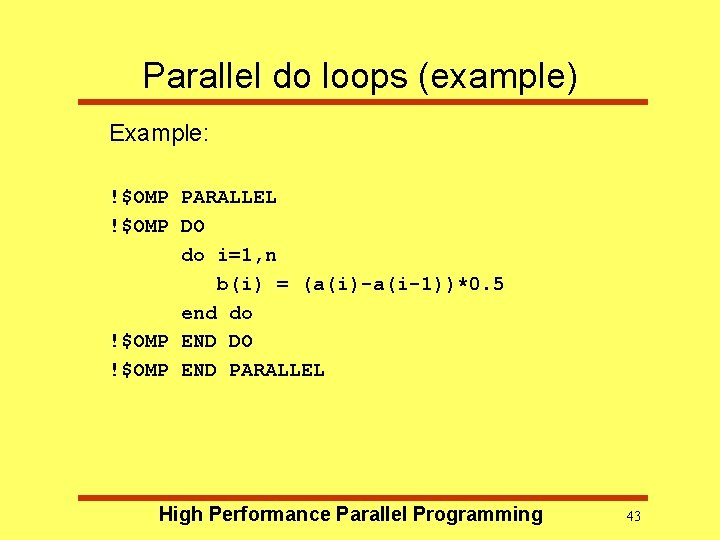

Parallel do loops (example)
Example:

    !$OMP PARALLEL
    !$OMP DO
      do i=1, n
        b(i) = (a(i)-a(i-1))*0.5
      end do
    !$OMP END DO
    !$OMP END PARALLEL

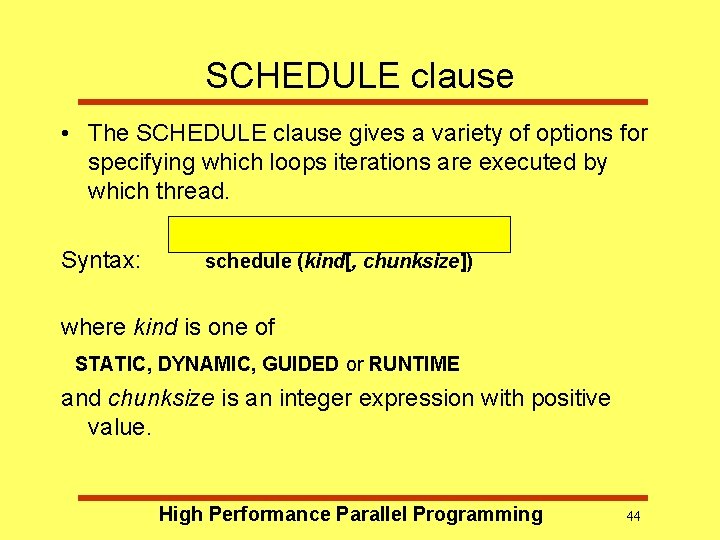

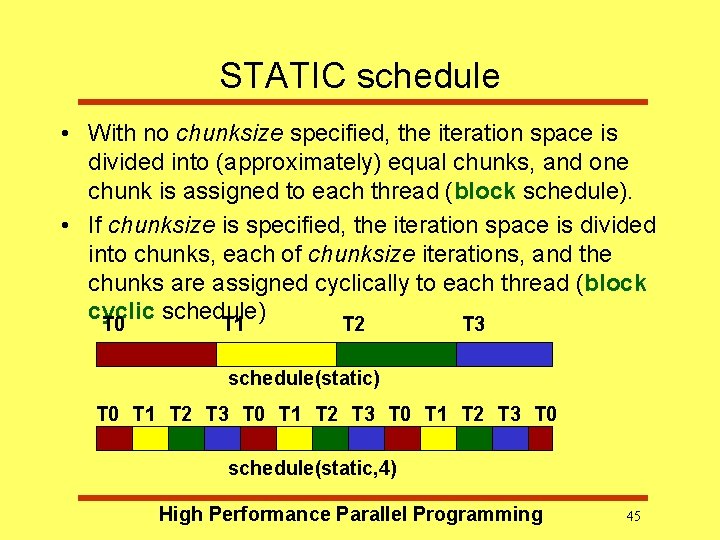

SCHEDULE clause
• The SCHEDULE clause gives a variety of options for specifying which loop iterations are executed by which thread.
• Syntax:

    schedule (kind[, chunksize])

  where kind is one of STATIC, DYNAMIC, GUIDED or RUNTIME, and chunksize is an integer expression with positive value.

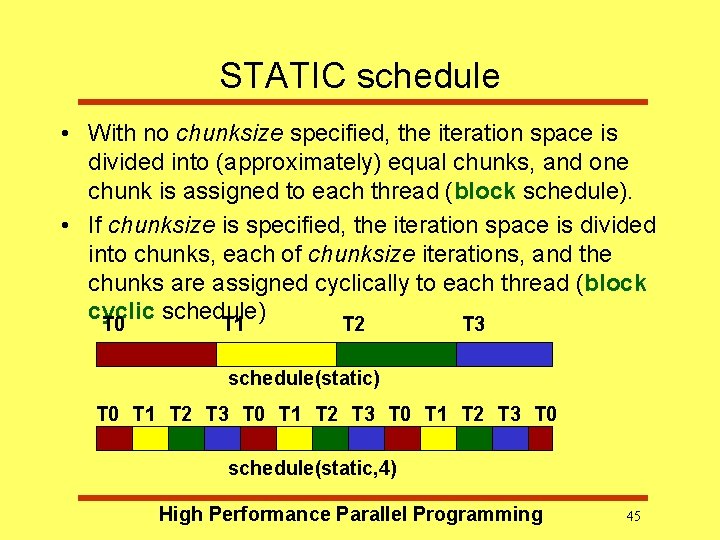

STATIC schedule
• With no chunksize specified, the iteration space is divided into (approximately) equal chunks, and one chunk is assigned to each thread (block schedule).
• If chunksize is specified, the iteration space is divided into chunks, each of chunksize iterations, and the chunks are assigned cyclically to each thread (block cyclic schedule).
[Figure: with schedule(static) the iteration space is split into one block each for threads T0, T1, T2, T3; with schedule(static, 4) chunks of 4 iterations are assigned in the order T0, T1, T2, T3, T0, ...]
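The block cyclic assignment can be written as a one-line formula. The sketch below assumes 0-based iteration numbers and thread numbers, and the function name `static_owner` is made up for illustration.

```c
#include <assert.h>

/* Thread that executes iteration i (0-based) under
   schedule(static, chunk) with nthreads threads: iteration i lies in
   chunk number i / chunk, and chunks are dealt out round-robin. */
static int static_owner(int i, int chunk, int nthreads)
{
    assert(i >= 0 && chunk > 0 && nthreads > 0);
    return (i / chunk) % nthreads;
}
```

With `chunk = 4` and four threads, iterations 0-3 go to thread 0, 4-7 to thread 1, and iteration 16 wraps around to thread 0 again, matching the T0 T1 T2 T3 T0 pattern in the figure.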

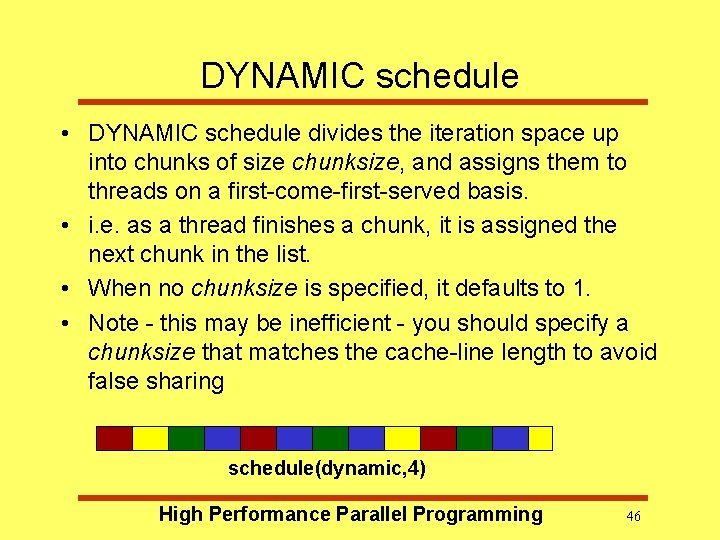

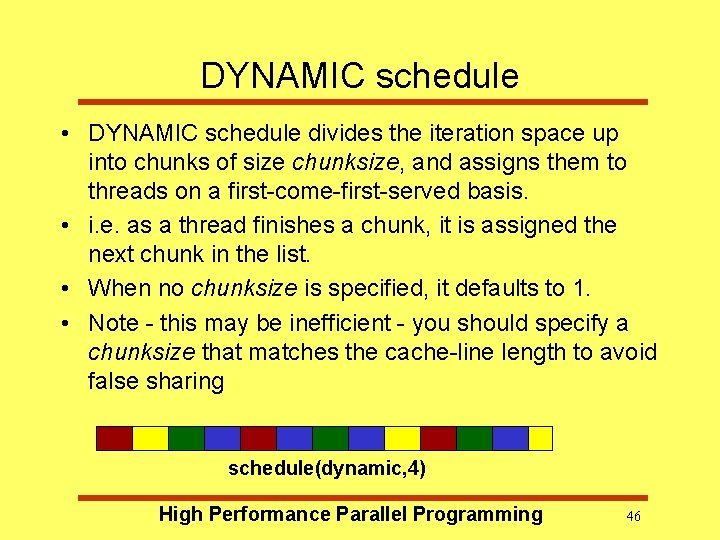

DYNAMIC schedule
• DYNAMIC schedule divides the iteration space up into chunks of size chunksize, and assigns them to threads on a first-come-first-served basis.
• i.e. as a thread finishes a chunk, it is assigned the next chunk in the list.
• When no chunksize is specified, it defaults to 1.
• Note - this may be inefficient - you should specify a chunksize that matches the cache-line length to avoid false sharing.
[Figure: schedule(dynamic, 4)]

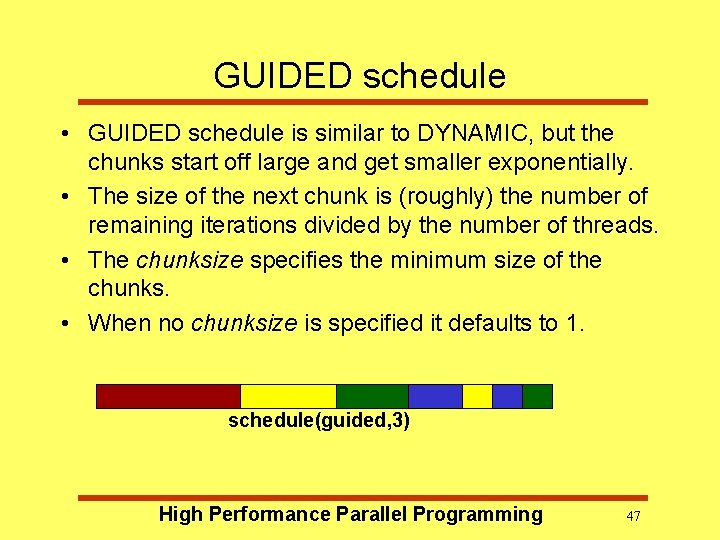

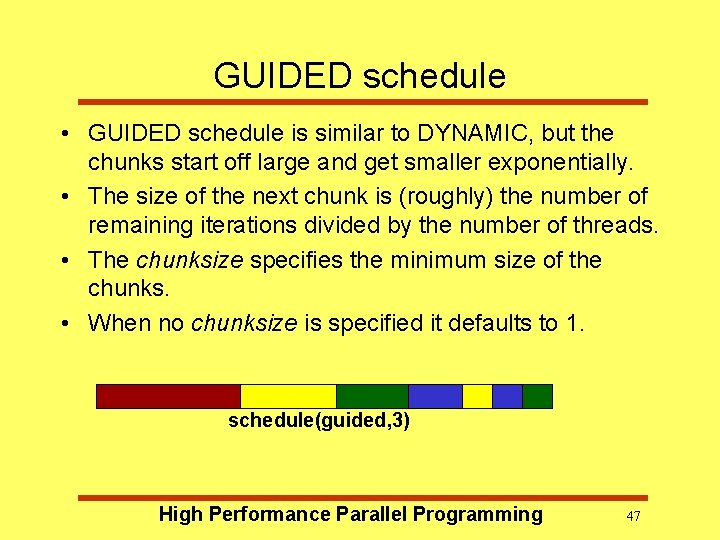

GUIDED schedule
• GUIDED schedule is similar to DYNAMIC, but the chunks start off large and get smaller exponentially.
• The size of the next chunk is (roughly) the number of remaining iterations divided by the number of threads.
• The chunksize specifies the minimum size of the chunks.
• When no chunksize is specified it defaults to 1.
[Figure: schedule(guided, 3)]
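To get a feel for how the chunk sizes shrink, the rule above can be simulated. This is only a sketch under assumptions: it rounds remaining/threads upwards and lets the final chunk be smaller than the minimum, whereas real implementations may compute the sizes differently. The function name `guided_chunks` is hypothetical.

```c
#include <assert.h>

/* Fills sizes[] with the successive chunk sizes for n iterations and
   returns how many chunks were produced (at most max_chunks). */
static int guided_chunks(int n, int nthreads, int min_chunk,
                         int *sizes, int max_chunks)
{
    assert(n >= 0 && nthreads > 0 && min_chunk > 0);
    int remaining = n;
    int count = 0;
    while (remaining > 0 && count < max_chunks) {
        int c = (remaining + nthreads - 1) / nthreads; /* ceil(remaining/nthreads) */
        if (c < min_chunk) c = min_chunk;              /* respect the minimum size */
        if (c > remaining) c = remaining;              /* last chunk may be short  */
        sizes[count++] = c;
        remaining -= c;
    }
    return count;
}
```

For 20 iterations, 4 threads and a minimum chunk of 3, this model gives chunks of 5, 4, 3, 3, 3 and 2 iterations.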

RUNTIME schedule
• The RUNTIME schedule defers the choice of schedule to run time, when it is determined by the value of the environment variable OMP_SCHEDULE.
• e.g. export OMP_SCHEDULE="guided,4"
• It is illegal to specify a chunksize with the RUNTIME schedule.

Synchronization
Recall:
• Need to synchronise actions on shared variables.
• Need to respect dependencies (true and anti).
• Need to protect updates to shared variables (not atomic by default).

Critical sections
• A critical section is a block of code which can be executed by only one thread at a time.
• Can be used to protect updates to shared variables.
• The CRITICAL directive allows critical sections to be named.
• If one thread is in a critical section with a given name, no other thread may be in a critical section with the same name, though they can be in critical sections with other names.

    Fortran: !$OMP CRITICAL [( name )]
               block
             !$OMP END CRITICAL [( name )]
    C/C++:   #pragma omp critical [( name )]
               structured block

BARRIER directive
• No thread can proceed past a barrier until all the other threads have arrived.
• Note that there is an implicit barrier at the end of DO/FOR, SECTIONS and SINGLE directives.

    Fortran: !$omp barrier
    C/C++:   #pragma omp barrier

• Either all threads or none must encounter the barrier, otherwise the program deadlocks (DEADLOCK!!).
e.g.

    !$OMP PARALLEL PRIVATE(I, MYID)
      myid = omp_get_thread_num()
      a(myid) = a(myid)*3.5
    !$OMP BARRIER
      b(myid) = a(neighb(myid)) + c
    !$OMP END PARALLEL

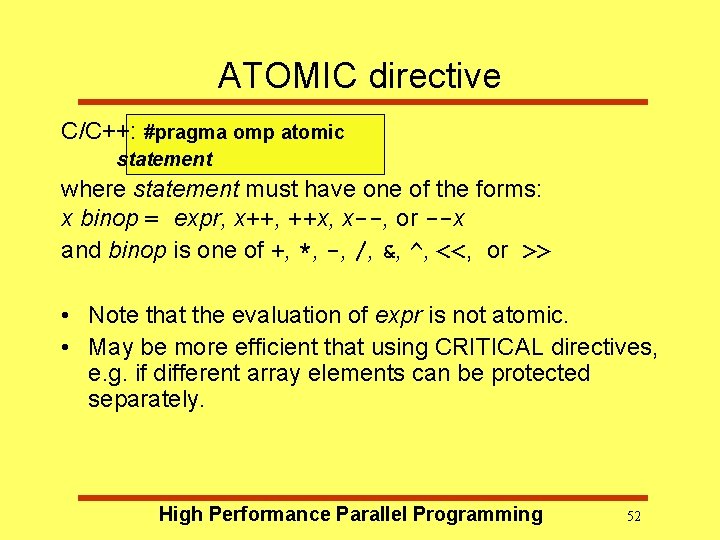

ATOMIC directive

    C/C++: #pragma omp atomic
             statement

where statement must have one of the forms: x binop= expr, x++, ++x, x--, or --x
and binop is one of +, *, -, /, &, ^, |, <<, or >>
• Note that the evaluation of expr is not atomic.
• May be more efficient than using CRITICAL directives, e.g. if different array elements can be protected separately.
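A small histogram is a typical case where different array elements can be protected separately. The following is a sketch only; the function name `histogram` and the assumption of non-negative keys are illustrative.

```c
#include <assert.h>

/* Counts how many keys fall into each bin. Two threads may hit the same
   bin at the same time, so each increment is made atomic; updates to
   different bins do not have to wait for each other. */
static void histogram(const int *keys, int n, int *bins, int nbins)
{
    assert(n >= 0 && nbins > 0);
    #pragma omp parallel for
    for (int i = 0; i < n; i++) {
        int k = keys[i] % nbins;   /* assumes keys[i] >= 0 */
        #pragma omp atomic
        bins[k]++;
    }
}
```

Replacing the atomic update with a single critical section would also be correct, but it would serialise every increment regardless of which bin it touches.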

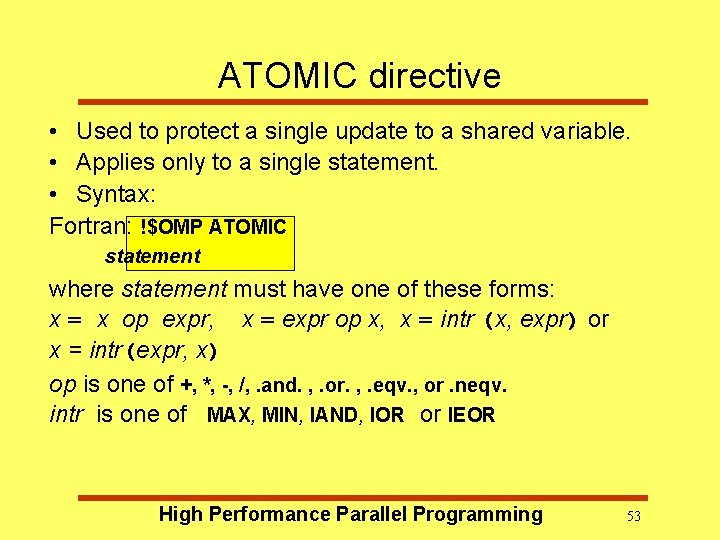

ATOMIC directive
• Used to protect a single update to a shared variable.
• Applies only to a single statement.
• Syntax:

    Fortran: !$OMP ATOMIC
               statement

where statement must have one of these forms: x = x op expr, x = expr op x, x = intr(x, expr) or x = intr(expr, x)
op is one of +, *, -, /, .and., .or., .eqv., or .neqv.
intr is one of MAX, MIN, IAND, IOR or IEOR

Lock routines
• Occasionally we may require more flexibility than is provided by CRITICAL and ATOMIC directives.
• A lock is a special variable that may be set by a thread. No other thread may set the lock until the thread which set the lock has unset it.
• Setting a lock can either be blocking or nonblocking.
• A lock must be initialised before it is used, and may be destroyed when it is no longer required.
• Lock variables should not be used for any other purpose.
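The slide does not name the routines; in the OpenMP C API they are `omp_init_lock`, `omp_set_lock` (blocking), `omp_test_lock` (nonblocking), `omp_unset_lock` and `omp_destroy_lock`. A minimal sketch of the blocking form follows, with a hypothetical function name `count_with_lock` and a serial fallback when OpenMP is not enabled.

```c
#include <assert.h>
#ifdef _OPENMP
#include <omp.h>
#endif

/* Increments a shared counter n times, protecting each update with a lock. */
static int count_with_lock(int n)
{
    assert(n >= 0);
    int counter = 0;
#ifdef _OPENMP
    omp_lock_t lock;
    omp_init_lock(&lock);            /* must be initialised before use */
    #pragma omp parallel for
    for (int i = 0; i < n; i++) {
        omp_set_lock(&lock);         /* blocks until the lock is free */
        counter++;
        omp_unset_lock(&lock);
    }
    omp_destroy_lock(&lock);         /* no longer required */
#else
    for (int i = 0; i < n; i++)      /* serial fallback without OpenMP */
        counter++;
#endif
    return counter;
}
```

For a simple counter like this an ATOMIC or CRITICAL directive would be the more usual choice; locks tend to earn their place when the protected region cannot be expressed as a single block.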

Choosing synchronisation
• As a rough guide, use ATOMIC directives if possible, as these allow most optimisation.
• If this is not possible, use CRITICAL directives. Make sure you use different names wherever possible.
• As a last resort you may need to use the lock routines, but this should be quite a rare occurrence.

Further reading
• OpenMP Specification: http://www.openmp.org/
• My self-paced course (under development): http://www.hpc.unimelb.edu.au/vpic/omp/contents.html

Tomorrow - MPI programming.