
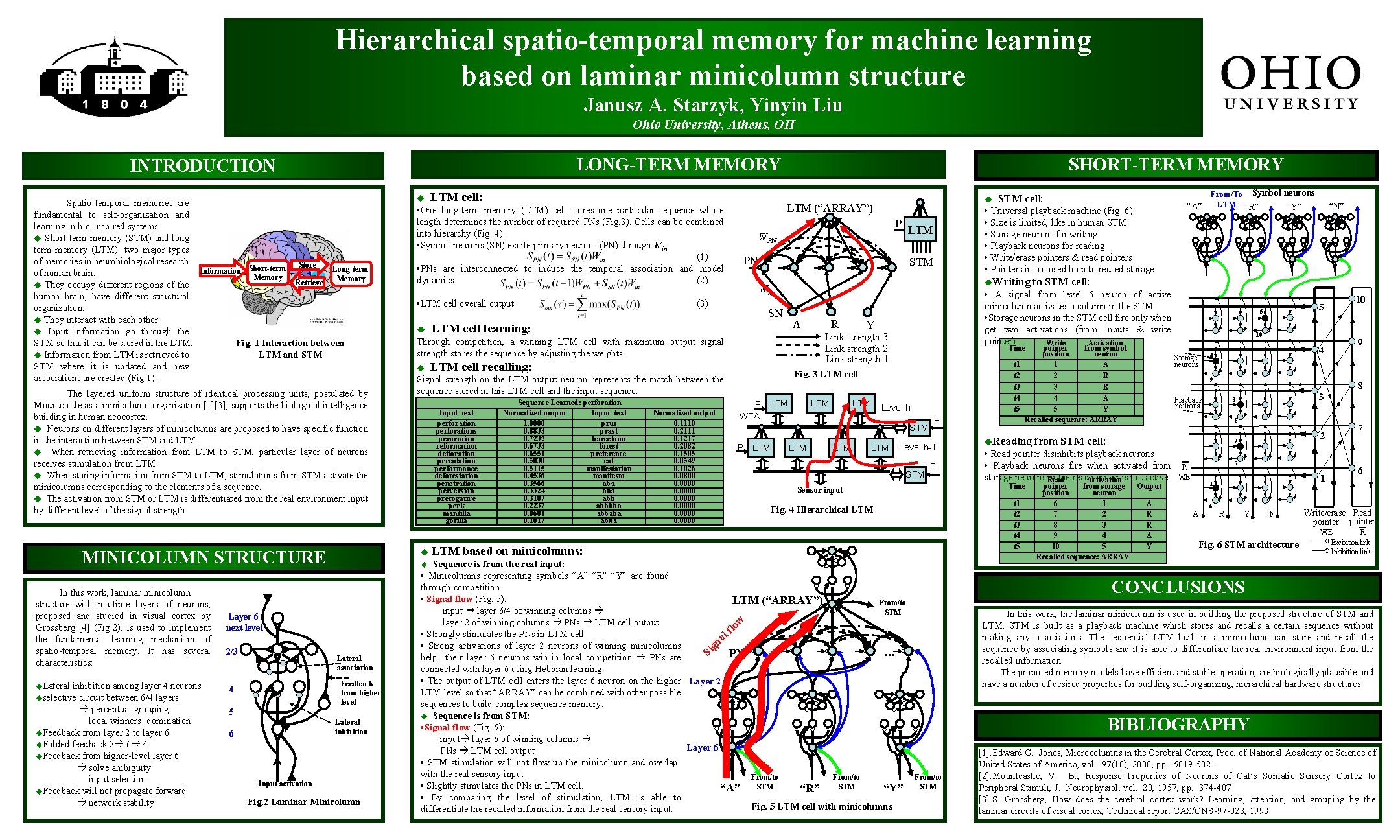

Hierarchical spatio-temporal memory for machine learning based on laminar minicolumn structure

Janusz A. Starzyk, Yinyin Liu
Ohio University, Athens, OH

INTRODUCTION

Spatio-temporal memories are fundamental to self-organization and learning in bio-inspired systems.

- Short-term memory (STM) and long-term memory (LTM) are the two major types of memory in neurobiological research on the human brain.
- They occupy different regions of the human brain and have different structural organization.
- They interact with each other.
- Input information goes through the STM so that it can be stored in the LTM.
- Information from LTM is retrieved to STM, where it is updated and new associations are created (Fig. 1).

Fig. 1 Interaction between LTM and STM (information enters the short-term memory, which stores to and retrieves from the long-term memory)

MINICOLUMN STRUCTURE

- The layered, uniform structure of identical processing units, postulated by Mountcastle as a minicolumn organization [1][3], supports the building of biological intelligence in the human neocortex.
- Neurons on different layers of the minicolumns are proposed to have specific functions in the interaction between STM and LTM.
- When retrieving information from LTM to STM, a particular layer of neurons receives stimulation from LTM.
- When storing information from STM to LTM, stimulation from STM activates the minicolumns corresponding to the elements of a sequence.
- Activation from STM or LTM is differentiated from real environment input by a different level of signal strength.

In this work, the laminar minicolumn structure with multiple layers of neurons, proposed and studied in the visual cortex by Grossberg [4] (Fig. 2), is used to implement the fundamental learning mechanism of spatio-temporal memory. It has several characteristics:

- Lateral inhibition among layer 4 neurons and a selective circuit between layers 6 and 4: perceptual grouping, local winners' domination.
- Feedback from layer 2 to layer 6.
- Folded feedback 2 → 6 → 4.
- Feedback from higher-level layer 6: solves ambiguity, input selection.
- Feedback will not propagate forward: network stability.

Fig. 2 Laminar minicolumn (labels: layers 2/3, 4, 5 and 6; lateral inhibition; lateral association; feedback from higher level; layer 6 of the next level)

LONG-TERM MEMORY

LTM cell:

- One long-term memory (LTM) cell stores one particular sequence, whose length determines the number of required primary neurons (PNs) (Fig. 3). Cells can be combined into a hierarchy (Fig. 4).
- Symbol neurons (SN) excite primary neurons (PN) through W_in. (1)
- PNs are interconnected to induce the temporal association and model dynamics. (2)
- LTM cell overall output. (3)

LTM cell learning:

- The signal strength on the LTM output neuron represents the match between the sequence stored in this LTM cell and the input sequence.
- Through competition, the winning LTM cell with the maximum output signal strength stores the sequence by adjusting its weights.

LTM cell recalling (sequence learned: "perforation"). The table reports the normalized LTM cell output for each input text.

- Input texts: perforations, peroration, reformation, defloration, percolation, performance, deforestation, penetration, perversion, prerogative, perk, mantilla, gorilla, prus, prast, barcelona, forest, preference, cat, manifestation, manifesto, aba, bba, abb, abbbba, abbaba, abba
- Normalized outputs: 0.1118, 0.2111, 0.1217, 0.2082, 0.1505, 0.0549, 0.1026, 0.0800, 0.0000, 1.0000, 0.8833, 0.7232, 0.6733, 0.6551, 0.5030, 0.5115, 0.4536, 0.3566, 0.3324, 0.3107, 0.2237, 0.0601, 0.1817

Fig. 3 LTM cell (labels: symbol neurons SN, W_in, primary neurons PN, W_PN, WTA, from/to LTM; input "ARRAY" presented at times t1 to t5; link strengths 1 to 3)

Fig. 4 Hierarchical LTM based on minicolumns (labels: LTM level h, STM, LTM level h-1, input activation)

Sequence is from the real input:

- Minicolumns representing the symbols "A", "R", "Y" are found through competition.
- Signal flow (Fig. 5): input → layer 6/4 of winning columns → layer 2 of winning columns → PNs → LTM cell output.
- The real input strongly stimulates the PNs in the LTM cell.
- Strong activations of layer 2 neurons of the winning minicolumns help their layer 6 neurons win in local competition.
- PNs are connected with layer 6 using Hebbian learning.
- The output of the LTM cell enters the layer 6 neuron on the higher LTM level, so that "ARRAY" can be combined with other possible sequences to build complex sequence memory.

Sequence is from STM:

- Signal flow (Fig. 5): input → layer 6 of winning columns → PNs → LTM cell output.
- STM stimulation will not flow up the minicolumn and overlap with the real sensory input.
- STM stimulation only slightly stimulates the PNs in the LTM cell.
- By comparing the level of stimulation, LTM is able to differentiate the recalled information from the real sensory input.

Fig. 5 LTM cell with minicolumns (labels: LTM ("ARRAY"), PNs, layer 2, layer 6, from/to input, from/to STM; minicolumns "A", "R", "Y")

SHORT-TERM MEMORY

STM cell:

- Universal playback machine (Fig. 6)
- Size is limited, as in human STM
- Storage neurons for writing
- Playback neurons for reading
- Write/erase pointers and read pointers
- Pointers form a closed loop to reuse storage

Writing "N" to STM cell:

- A signal from the layer 6 neuron of an active minicolumn activates a column in the STM.
- Storage neurons in the STM cell fire only when they get two activations (from the inputs and the write pointer).

Reading from STM cell:

- The read pointer disinhibits the playback neurons.
- Playback neurons fire when activated from the storage neurons and the read pointer is not active.

Example (Fig. 6): pointer positions 1 to 5 take the symbols A, R, R, A, Y from the symbol neurons; pointer positions 6 to 10 take their input from storage neurons 1 to 5 and output A, R, R, A, Y. Recalled sequence: ARRAY.

Fig. 6 STM architecture (labels: storage neurons, playback neurons, write/erase pointer W/E, read pointer R, write activation, read activation, excitation link, inhibition link)

CONCLUSIONS

In this work, the laminar minicolumn is used to build the proposed structure of STM and LTM. STM is built as a playback machine that stores and recalls a given sequence without making any associations. The sequential LTM built in a minicolumn can store and recall a sequence by associating symbols, and it is able to differentiate real environment input from recalled information. The proposed memory models operate efficiently and stably, are biologically plausible, and have a number of desirable properties for building self-organizing, hierarchical hardware structures.

BIBLIOGRAPHY

[1] E. G. Jones, Microcolumns in the Cerebral Cortex, Proc. of the National Academy of Sciences of the United States of America, vol. 97(10), 2000, pp. 5019-5021.
[2] V. B. Mountcastle, Response Properties of Neurons of Cat's Somatic Sensory Cortex to Peripheral Stimuli, J. Neurophysiol., vol. 20, 1957, pp. 374-407.
[3] S. Grossberg, How does the cerebral cortex work? Learning, attention, and grouping by the laminar circuits of visual cortex, Technical report CAS/CNS-97-023, 1998.
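As an illustration of the LTM cell competition described under LONG-TERM MEMORY, the Python sketch below scores how well an input sequence matches the sequence stored in each LTM cell and picks the cell with the strongest output. It does not reproduce the poster's equations (1) to (3): the score used here, the longest common subsequence length divided by the longer sequence length, is a stand-in chosen only because it is order-sensitive and normalized to [0, 1]. The names `match_strength`, `LTMCell`, and `winner` are hypothetical.

```python
def match_strength(stored, observed):
    """Order-sensitive match in [0, 1].

    Assumption: longest-common-subsequence length divided by the longer
    sequence length. This is a stand-in, not the poster's output equation.
    """
    # prev[j] holds the LCS length of the processed part of `stored`
    # against observed[:j].
    prev = [0] * (len(observed) + 1)
    for s in stored:
        cur = [0]
        for j, o in enumerate(observed, start=1):
            if s == o:
                cur.append(prev[j - 1] + 1)
            else:
                cur.append(max(prev[j], cur[j - 1]))
        prev = cur
    longest = max(len(stored), len(observed))
    return prev[-1] / longest if longest else 0.0


class LTMCell:
    """One cell stores one sequence (hypothetical, simplified model)."""

    def __init__(self, sequence):
        self.sequence = sequence

    def output(self, observed):
        # Output signal strength represents the match with the input.
        return match_strength(self.sequence, observed)


def winner(cells, observed):
    """Winner-take-all: the cell with the maximum output signal strength."""
    return max(cells, key=lambda cell: cell.output(observed))


cells = [LTMCell("ARRAY"), LTMCell("perforation")]
best = winner(cells, "ARAY")
print(best.sequence, round(best.output("ARAY"), 4))
```

In this sketch an exact match yields an output of 1, and partially matching inputs yield smaller values, which mirrors the role of the normalized output in the recall table without claiming to reproduce its numbers.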

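The STM cell described under SHORT-TERM MEMORY (a size-limited playback machine with storage neurons, playback neurons, and pointers in a closed loop) can be sketched as a small ring buffer. This is a minimal illustration under stated assumptions, not the authors' circuit: the class `STMCell`, its methods, and the `input_active` flag are hypothetical, and the neuron-level excitation and inhibition links are reduced to simple conditions.

```python
class STMCell:
    """Size-limited playback buffer (hypothetical simplification)."""

    def __init__(self, size):
        self.storage = [None] * size  # one slot per storage neuron
        self.write_ptr = 0            # write/erase pointer position

    def write(self, symbol, input_active=True):
        # A storage neuron fires only with two activations:
        # the input and the write pointer selecting its slot.
        if not input_active:
            return
        # Writing overwrites (erases) whatever the slot held before.
        self.storage[self.write_ptr] = symbol
        # Closed loop: the pointer wraps around so storage is reused.
        self.write_ptr = (self.write_ptr + 1) % len(self.storage)

    def playback(self, count, start=0):
        # The read pointer steps through the slots; a playback neuron
        # fires only if its storage neuron holds a symbol.
        out = []
        ptr = start
        for _ in range(count):
            symbol = self.storage[ptr]
            if symbol is not None:
                out.append(symbol)
            ptr = (ptr + 1) % len(self.storage)
        return "".join(out)


stm = STMCell(size=5)
for symbol in "ARRAY":
    stm.write(symbol)
recalled = stm.playback(5)

# Writing one more symbol reuses the oldest slot because of the closed loop.
stm.write("N")
after_wrap = stm.playback(5, start=1)
```

The buffer stores and replays symbols without forming any association between them, which is the property the poster attributes to STM; association is left to the LTM cells.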