Hidden Variables the EM Algorithm and Mixtures of

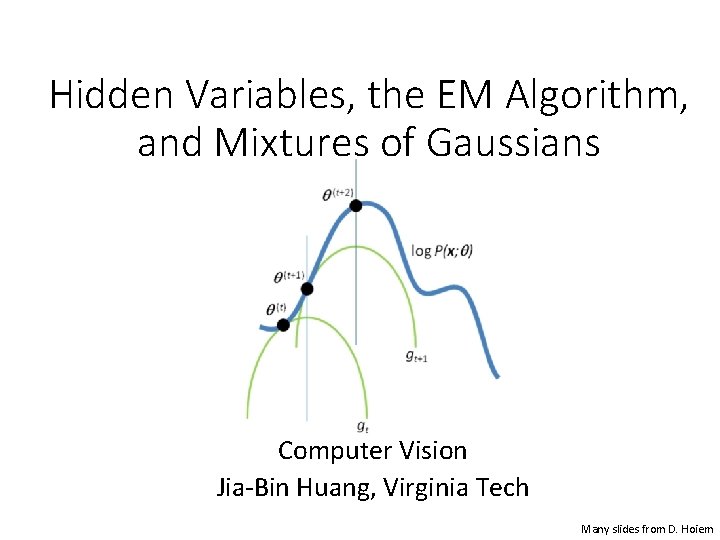

Hidden Variables, the EM Algorithm, and Mixtures of Gaussians Computer Vision Jia-Bin Huang, Virginia Tech Many slides from D. Hoiem

Administrative stuff • Final project • Proposal due Oct 30 (Monday) • Tips for final project • Set up several milestones • Think about how you are going to evaluate • Demo is highly encouraged • HW 4 out tomorrow

Sample final projects • State quarter classification • Stereo Vision - correspondence matching • Collaborative monocular SLAM for Multiple Robots in an unstructured environment • Fight Detection using Convolutional Neural Networks • Actor Rating using Facial Emotion Recognition • Fiducial Markers on Bat Tracking Based on Non-rigid Registration • Im2Latex: Converting Handwritten Mathematical Expressions to LaTeX • Pedestrian Detection and Tracking • Inference with Deep Neural Networks • Rubik's Cube • Plant Leaf Disease Detection and Classification • MBZIRC Challenge-2017 • Multi-modal Learning Scheme for Athlete Recognition System in Long Video • Computer Vision In Quantitative Phase Imaging • Aircraft pose estimation for level flight • Automatic segmentation of brain tumor from MRI images • Visual Dialog • Pixel.Dream

Superpixel algorithms • Goal: divide the image into a large number of regions such that each region lies within object boundaries • Examples • Watershed • Felzenszwalb and Huttenlocher graph-based • Turbopixels • SLIC

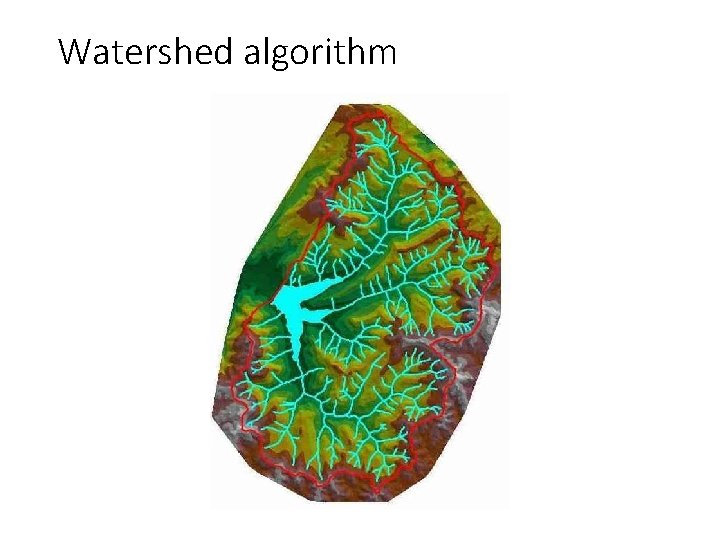

Watershed algorithm

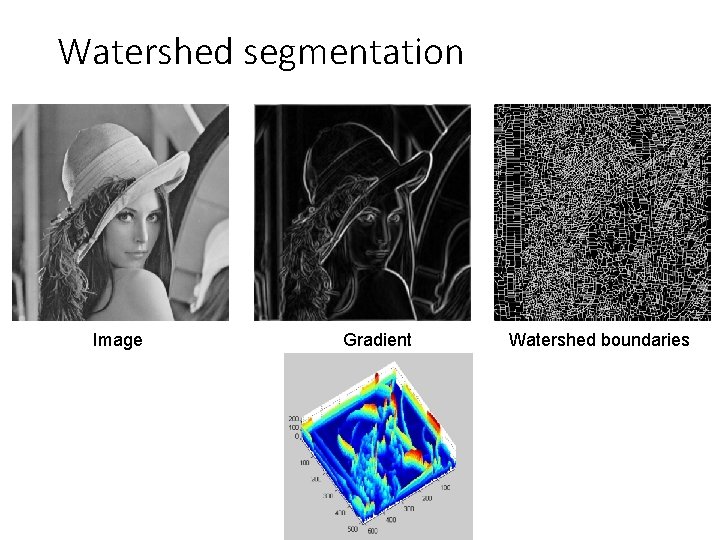

Watershed segmentation (figure panels: image, gradient, watershed boundaries)

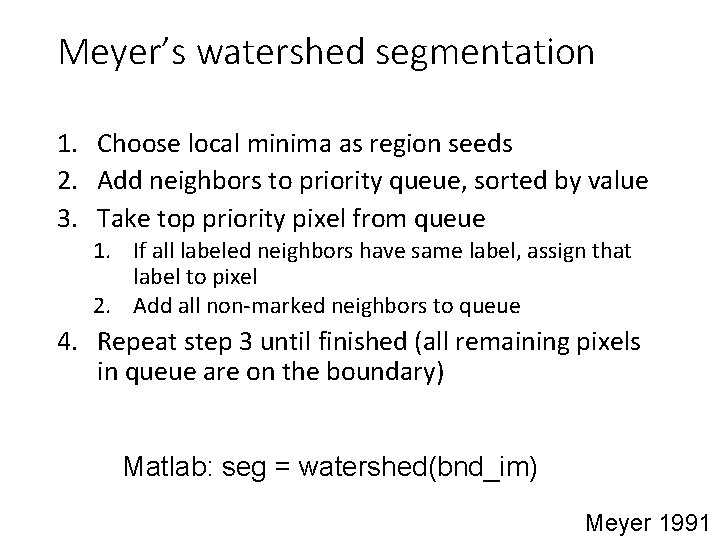

Meyer’s watershed segmentation (Meyer 1991)
1. Choose local minima as region seeds
2. Add their neighbors to a priority queue, sorted by value
3. Take the top-priority pixel from the queue
   1. If all labeled neighbors have the same label, assign that label to the pixel
   2. Add all non-marked neighbors to the queue
4. Repeat step 3 until finished (all remaining pixels in the queue are on the boundary)

Matlab: seg = watershed(bnd_im)
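For readers without MATLAB, here is a minimal pure-Python sketch of the flooding steps above. It is not MATLAB's watershed and simplifies on purpose. It assumes a 4-connected grid, takes only strict local minima as seeds (flat minima are not merged), marks boundary pixels with label 0, and leaves any pixel the flood never reaches at -1. The names meyer_watershed and push_unmarked_neighbors are hypothetical.

```python
import heapq


def meyer_watershed(img):
    """Meyer-style flooding on a 2D grid (list of rows of numbers).

    Returns labels: 1, 2, ... for regions grown from seeds, 0 for boundary
    pixels whose labeled neighbors disagree, -1 for pixels never reached.
    """
    h, w = len(img), len(img[0])
    labels = [[-1] * w for _ in range(h)]
    queued = [[False] * w for _ in range(h)]
    heap = []

    def neighbors(r, c):
        # 4-connected neighborhood, clipped at the image border.
        for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            rr, cc = r + dr, c + dc
            if 0 <= rr < h and 0 <= cc < w:
                yield rr, cc

    def push_unmarked_neighbors(r, c):
        # Queue unlabeled, not-yet-queued neighbors, keyed by pixel value.
        for rr, cc in neighbors(r, c):
            if labels[rr][cc] == -1 and not queued[rr][cc]:
                queued[rr][cc] = True
                heapq.heappush(heap, (img[rr][cc], rr, cc))

    # Step 1: strict local minima become seeds, each with its own label.
    next_label = 1
    for r in range(h):
        for c in range(w):
            if all(img[r][c] < img[rr][cc] for rr, cc in neighbors(r, c)):
                labels[r][c] = next_label
                next_label += 1

    # Step 2: queue the neighbors of every seed.
    for r in range(h):
        for c in range(w):
            if labels[r][c] > 0:
                push_unmarked_neighbors(r, c)

    # Steps 3-4: always expand the lowest queued pixel.
    while heap:
        _, r, c = heapq.heappop(heap)
        nbr = {labels[rr][cc] for rr, cc in neighbors(r, c) if labels[rr][cc] > 0}
        if len(nbr) == 1:
            labels[r][c] = nbr.pop()
            push_unmarked_neighbors(r, c)
        else:
            labels[r][c] = 0  # touches two different basins: boundary
    return labels


img = [
    [3, 2, 3, 4, 3, 2, 3],
    [2, 1, 2, 5, 2, 1, 2],
    [3, 2, 3, 4, 3, 2, 3],
]
for row in meyer_watershed(img):
    print(row)  # each row prints as [1, 1, 1, 0, 2, 2, 2]
```

Each basin grows only through pixels whose labeled neighbors agree. The ridge column (values 4 and 5) is reached from both sides, so it becomes boundary.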

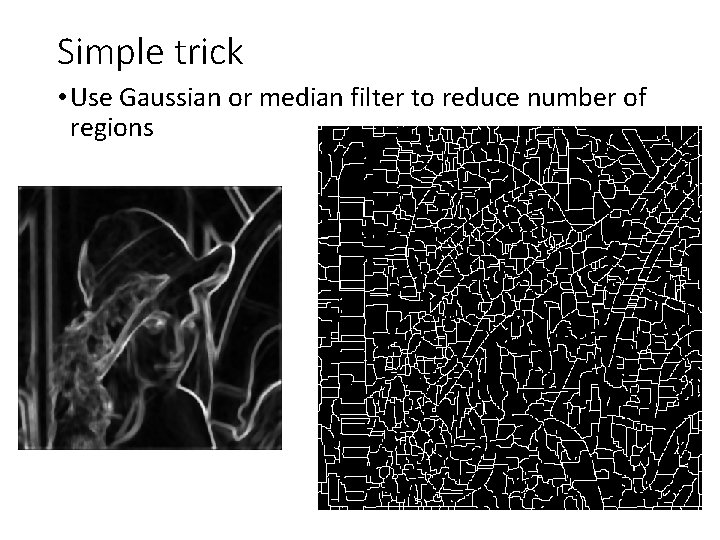

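Simple trick • Smooth with a Gaussian or median filter before running watershed: this removes shallow local minima (each of which would seed its own region) and so reduces the number of regions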
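A 1D sketch of why smoothing helps, using a hypothetical boundary-strength profile and hypothetical helper names. Every strict local minimum would seed a watershed region. A 3-tap median filter removes the shallow noise dips and keeps one minimum per real basin.

```python
def median_filter_1d(signal, radius=1):
    """Median over the window [i - radius, i + radius], truncated at the ends."""
    out = []
    for i in range(len(signal)):
        window = sorted(signal[max(0, i - radius): i + radius + 1])
        out.append(window[len(window) // 2])
    return out


def count_local_minima(signal):
    """Count interior samples strictly lower than both neighbors (potential seeds)."""
    return sum(1 for i in range(1, len(signal) - 1)
               if signal[i] < signal[i - 1] and signal[i] < signal[i + 1])


# Two real basins, each with a small noise dip next to it.
profile = [5, 4, 3, 2, 3, 2, 4, 6, 8, 6, 4, 3, 4, 3, 4, 5]
smoothed = median_filter_1d(profile)
print(count_local_minima(profile), "->", count_local_minima(smoothed))  # prints 4 -> 2
```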

Watershed usage • Use as a starting point for hierarchical segmentation – Ultrametric contour map (Arbelaez 2006) • Works with any soft boundaries – Pb (w/o non-max suppression) – Canny (w/o non-max suppression) – Etc.

Watershed pros and cons • Pros – Fast (< 1 s for a 512 x 512 image) – Preserves boundaries • Cons – Only as good as the soft boundaries (which may be slow to compute) – Not easy to get a variety of regions for multiple segmentations • Usage – Good algorithm for superpixels, hierarchical segmentation

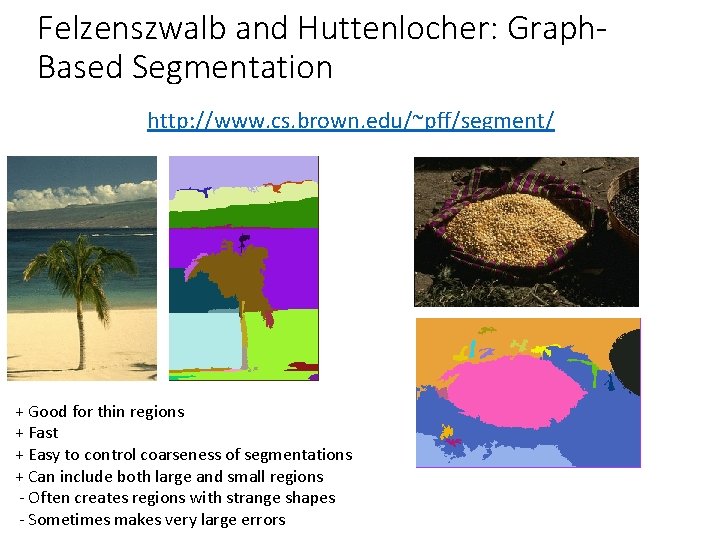

Felzenszwalb and Huttenlocher: Graph-Based Segmentation http://www.cs.brown.edu/~pff/segment/ + Good for thin regions + Fast + Easy to control coarseness of segmentations + Can include both large and small regions - Often creates regions with strange shapes - Sometimes makes very large errors
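A compact sketch of the graph-based merging rule, written from the published criterion rather than the linked code. Edges are processed in increasing weight. Two components merge only if the edge is no heavier than either component's internal difference plus a slack of k/|C|, and that slack is what makes coarseness easy to control. To keep it short, this version assumes a grayscale image, 4-connectivity, no Gaussian pre-smoothing, and no minimum-region-size post-processing. The name fh_segment is hypothetical.

```python
def fh_segment(img, k=1.0):
    """Graph-based segmentation in the style of Felzenszwalb and Huttenlocher.

    img: 2D grid of grayscale values; k: coarseness (larger k -> larger regions).
    Returns a grid of region ids 0, 1, 2, ...
    """
    h, w = len(img), len(img[0])
    parent = list(range(h * w))
    size = [1] * (h * w)
    internal = [0.0] * (h * w)  # Int(C): largest MST edge inside each component

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    # 4-connected grid graph; edge weight = absolute intensity difference.
    edges = []
    for r in range(h):
        for c in range(w):
            i = r * w + c
            if c + 1 < w:
                edges.append((abs(img[r][c] - img[r][c + 1]), i, i + 1))
            if r + 1 < h:
                edges.append((abs(img[r][c] - img[r + 1][c]), i, i + w))
    edges.sort()

    for weight, a, b in edges:
        ra, rb = find(a), find(b)
        if ra == rb:
            continue
        # Merge only if the edge is no heavier than either component's
        # internal difference plus the size-dependent slack k / |C|.
        if weight <= min(internal[ra] + k / size[ra], internal[rb] + k / size[rb]):
            if size[ra] < size[rb]:
                ra, rb = rb, ra
            parent[rb] = ra
            size[ra] += size[rb]
            internal[ra] = weight  # edges arrive sorted, so this is the new max

    ids = {}
    return [[ids.setdefault(find(r * w + c), len(ids)) for c in range(w)]
            for r in range(h)]


img = [[10, 10, 10, 50, 50] for _ in range(3)]
print(fh_segment(img, k=1.0))     # two regions split at the intensity step
print(fh_segment(img, k=1000.0))  # a large k merges everything into one region
```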

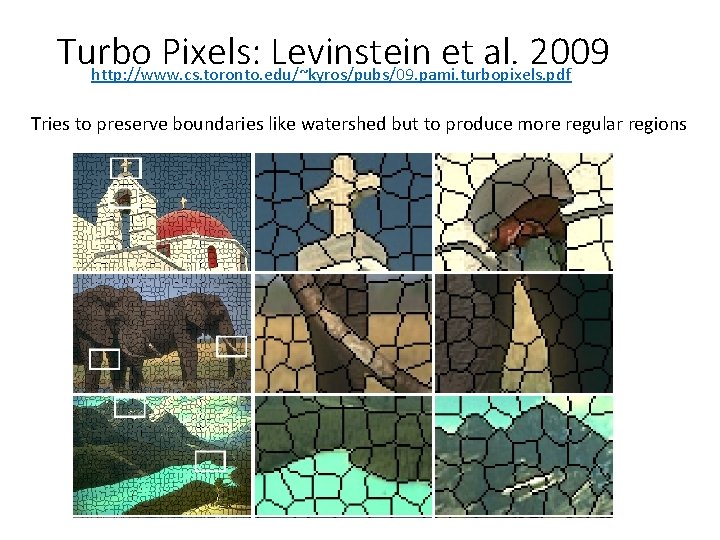

TurboPixels: Levinshtein et al. 2009 http://www.cs.toronto.edu/~kyros/pubs/09.pami.turbopixels.pdf Tries to preserve boundaries like watershed while producing more regular regions

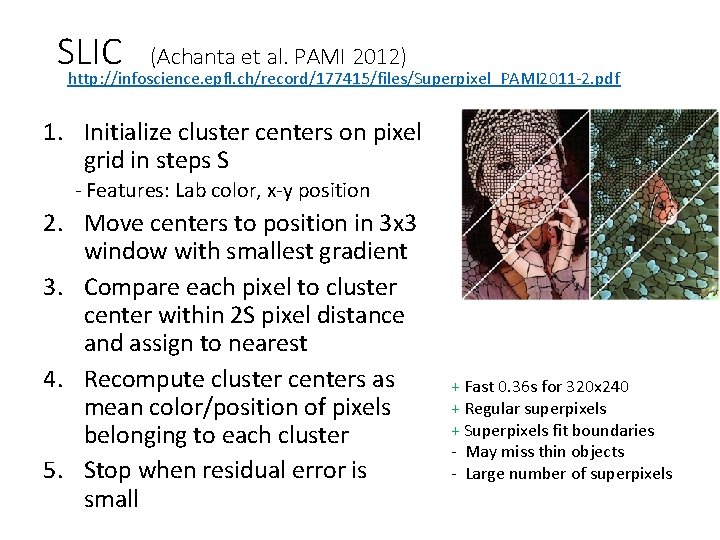

SLIC (Achanta et al. PAMI 2012) http://infoscience.epfl.ch/record/177415/files/Superpixel_PAMI2011-2.pdf
1. Initialize cluster centers on a pixel grid with step S (features: Lab color, x-y position)
2. Move each center to the position in its 3 x 3 window with the smallest gradient
3. Compare each pixel to the cluster centers within a 2S pixel distance and assign it to the nearest
4. Recompute cluster centers as the mean color/position of the pixels belonging to each cluster
5. Stop when the residual error is small

Pros: fast (0.36 s for 320 x 240), regular superpixels, superpixels fit boundaries. Cons: may miss thin objects, large number of superpixels.
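A simplified sketch of the five SLIC steps above. It is not the authors' implementation. It assumes a grayscale image, so a single intensity replaces Lab color, and combines intensity and position as sqrt(d_color^2 + (d_space / S)^2 * m^2) with a hand-picked compactness m. The connectivity-enforcement post-processing from the paper is left out. The name slic_gray and its parameters are hypothetical.

```python
import math


def slic_gray(img, S, m=10.0, iters=10, tol=1e-6):
    """Simplified SLIC on a grayscale grid; each center is [intensity, row, col].

    S: grid step; m: compactness (weight of position relative to intensity).
    Returns a grid of superpixel indices.
    """
    h, w = len(img), len(img[0])

    def grad(r, c):
        # Squared central-difference gradient magnitude, clamped at borders.
        gx = img[r][min(c + 1, w - 1)] - img[r][max(c - 1, 0)]
        gy = img[min(r + 1, h - 1)][c] - img[max(r - 1, 0)][c]
        return gx * gx + gy * gy

    # Step 1: seed centers on a regular grid with spacing S.
    centers = []
    for r in range(S // 2, h, S):
        for c in range(S // 2, w, S):
            # Step 2: move to the lowest-gradient pixel in the 3x3 window
            # (ties keep the position closest to the grid point).
            window = [(rr, cc) for rr in range(max(r - 1, 0), min(r + 2, h))
                      for cc in range(max(c - 1, 0), min(c + 2, w))]
            rr, cc = min(window, key=lambda p: (grad(*p), abs(p[0] - r) + abs(p[1] - c)))
            centers.append([float(img[rr][cc]), float(rr), float(cc)])

    labels = [[-1] * w for _ in range(h)]
    for _ in range(iters):
        # Step 3: each center scores only pixels within S rows/cols of itself
        # (a window of about 2S x 2S); every pixel keeps its nearest center.
        dist = [[math.inf] * w for _ in range(h)]
        for k, (ci, cy, cx) in enumerate(centers):
            for r in range(max(int(cy) - S, 0), min(int(cy) + S + 1, h)):
                for c in range(max(int(cx) - S, 0), min(int(cx) + S + 1, w)):
                    d_color = img[r][c] - ci
                    d_space = math.hypot(r - cy, c - cx)
                    d = math.sqrt(d_color ** 2 + (d_space / S) ** 2 * m ** 2)
                    if d < dist[r][c]:
                        dist[r][c] = d
                        labels[r][c] = k
        # Step 4: move each center to the mean intensity/position of its pixels.
        sums = [[0.0, 0.0, 0.0, 0] for _ in centers]
        for r in range(h):
            for c in range(w):
                k = labels[r][c]
                if k >= 0:
                    sums[k][0] += img[r][c]
                    sums[k][1] += r
                    sums[k][2] += c
                    sums[k][3] += 1
        residual = 0.0
        for k, (si, sr, sc, n) in enumerate(sums):
            if n:
                new = [si / n, sr / n, sc / n]
                residual += sum((a - b) ** 2 for a, b in zip(new, centers[k]))
                centers[k] = new
        # Step 5: stop once the centers have (almost) stopped moving.
        if residual < tol:
            break
    return labels


# Hypothetical 8 x 8 image: left half 0, right half 100.
img = [[0] * 4 + [100] * 4 for _ in range(8)]
for row in slic_gray(img, S=4):
    print(row)
```

On this toy image the four superpixels each stay on one side of the edge. The intensity term (100) outweighs the largest spatial term inside a search window (about m * sqrt(2)).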

Choices in segmentation algorithms • Oversegmentation • Watershed + Structured Random Forest • Felzenszwalb and Huttenlocher 2004 http://www.cs.brown.edu/~pff/segment/ • SLIC • Turbopixels • Mean-shift • Larger regions (object-level) • Hierarchical segmentation (e.g., from Pb) • Normalized cuts • Mean-shift • Seed + graph cuts (discussed later)

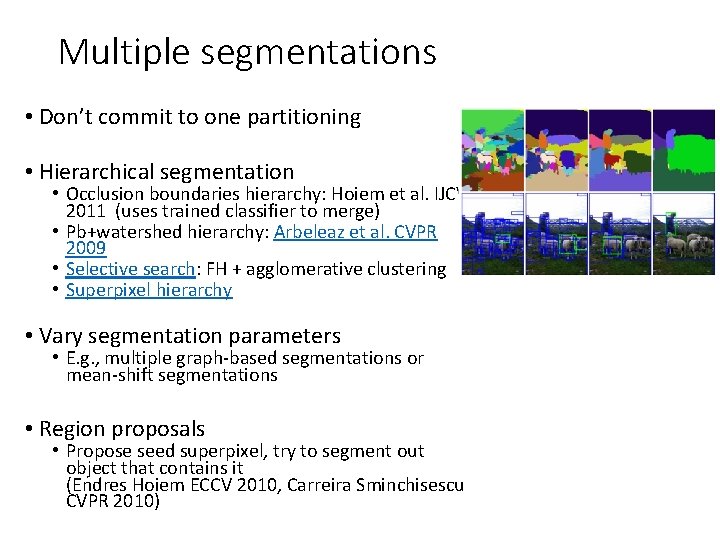

Multiple segmentations • Don’t commit to one partitioning • Hierarchical segmentation • Occlusion boundaries hierarchy: Hoiem et al. IJCV 2011 (uses trained classifier to merge) • Pb+watershed hierarchy: Arbelaez et al. CVPR 2009 • Selective search: FH + agglomerative clustering • Superpixel hierarchy • Vary segmentation parameters • E.g., multiple graph-based segmentations or mean-shift segmentations • Region proposals • Propose seed superpixel, try to segment out object that contains it (Endres Hoiem ECCV 2010, Carreira Sminchisescu CVPR 2010)

Review: Image Segmentation • Gestalt cues and principles of organization • Uses of segmentation • Efficiency • Provide feature supports • Propose object regions • Want the segmented object • Segmentation and grouping • Gestalt cues • By clustering (k-means, mean-shift) • By boundaries (watershed) • By graph (merging, graph cuts) • By labeling (MRF) <- Next lecture

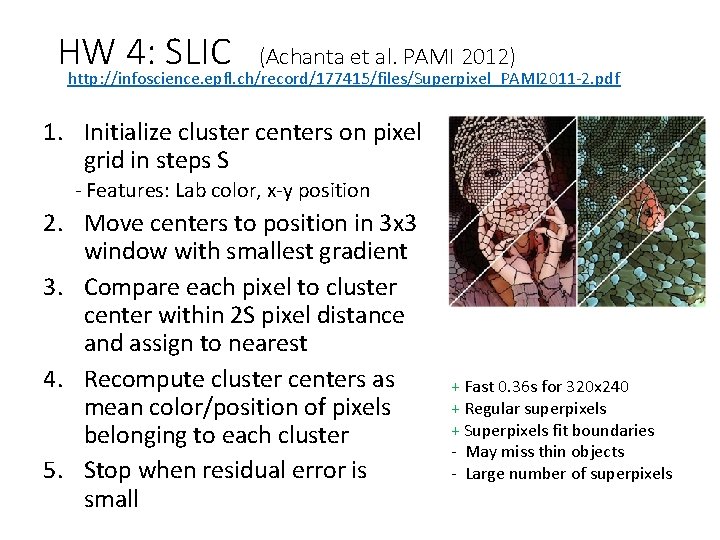

HW 4: SLIC (Achanta et al. PAMI 2012), using the algorithm on the SLIC slide above.

Today’s Class • Examples of Missing Data Problems • Detecting outliers • Latent topic models • Segmentation (HW 4, problem 2) • Background • Maximum Likelihood Estimation • Probabilistic Inference • Dealing with “Hidden” Variables • EM algorithm, Mixture of Gaussians • Hard EM

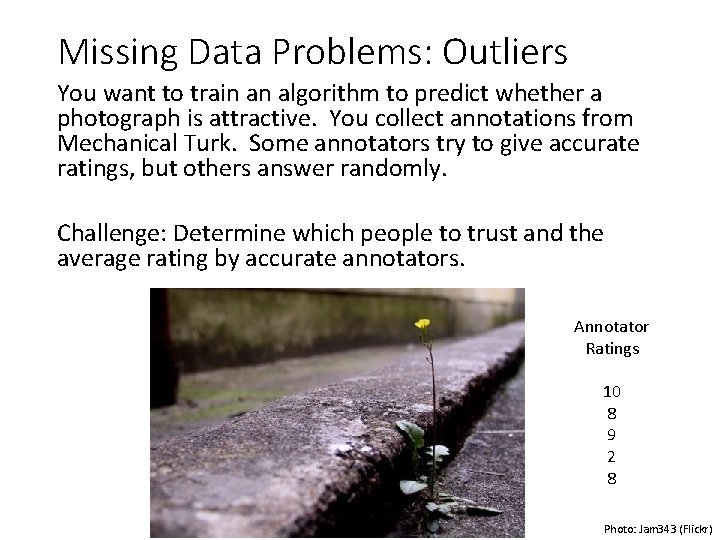

Missing Data Problems: Outliers. You want to train an algorithm to predict whether a photograph is attractive. You collect annotations from Mechanical Turk. Some annotators try to give accurate ratings, but others answer randomly. Challenge: Determine which people to trust and the average rating by accurate annotators. Annotator ratings: 10, 8, 9, 2, 8. Photo: Jam343 (Flickr)

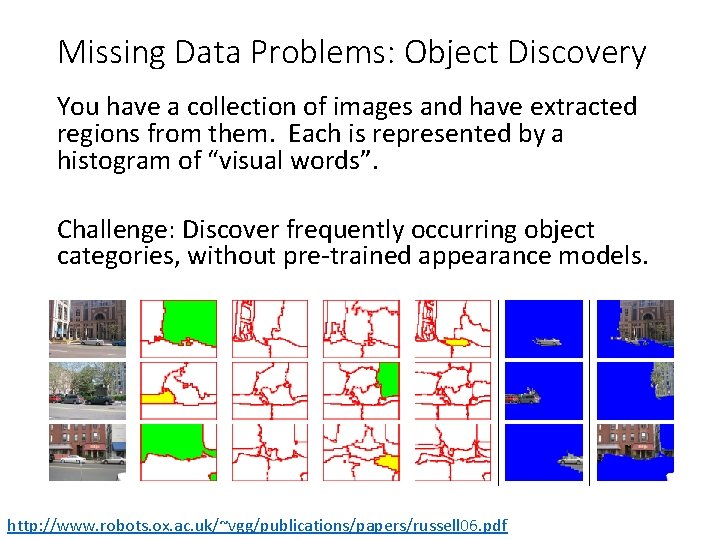

Missing Data Problems: Object Discovery. You have a collection of images and have extracted regions from them. Each is represented by a histogram of “visual words”. Challenge: Discover frequently occurring object categories, without pre-trained appearance models. http://www.robots.ox.ac.uk/~vgg/publications/papers/russell06.pdf

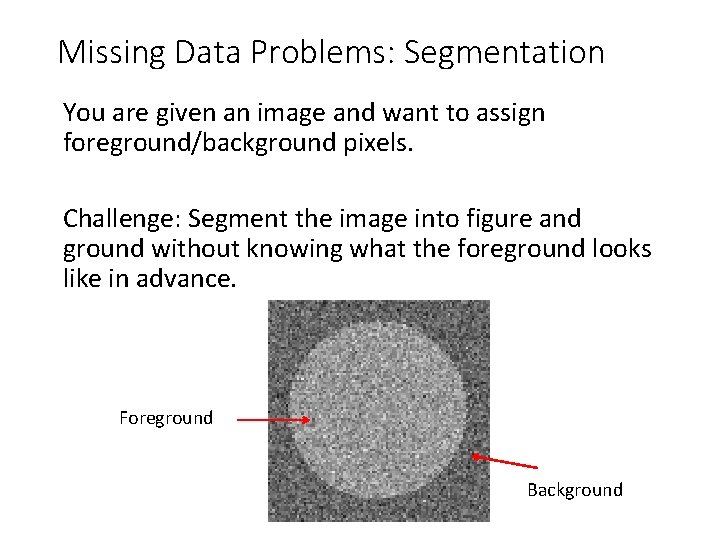

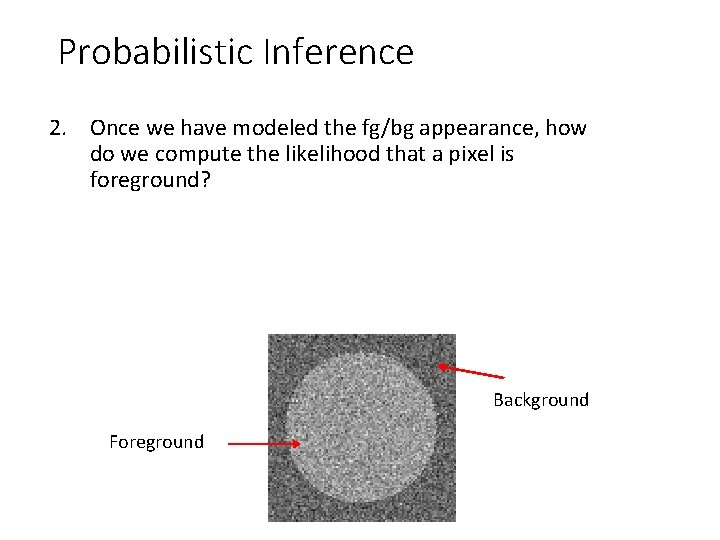

Missing Data Problems: Segmentation. You are given an image and want to label each pixel as foreground or background. Challenge: Segment the image into figure and ground without knowing what the foreground looks like in advance. (Figure: foreground and background.)

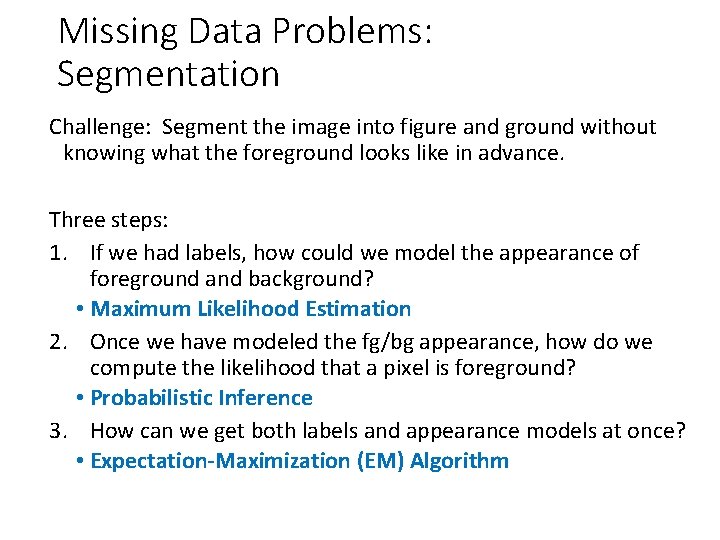

Missing Data Problems: Segmentation Challenge: Segment the image into figure and ground without knowing what the foreground looks like in advance. Three steps: 1. If we had labels, how could we model the appearance of foreground and background? • Maximum Likelihood Estimation 2. Once we have modeled the fg/bg appearance, how do we compute the likelihood that a pixel is foreground? • Probabilistic Inference 3. How can we get both labels and appearance models at once? • Expectation-Maximization (EM) Algorithm

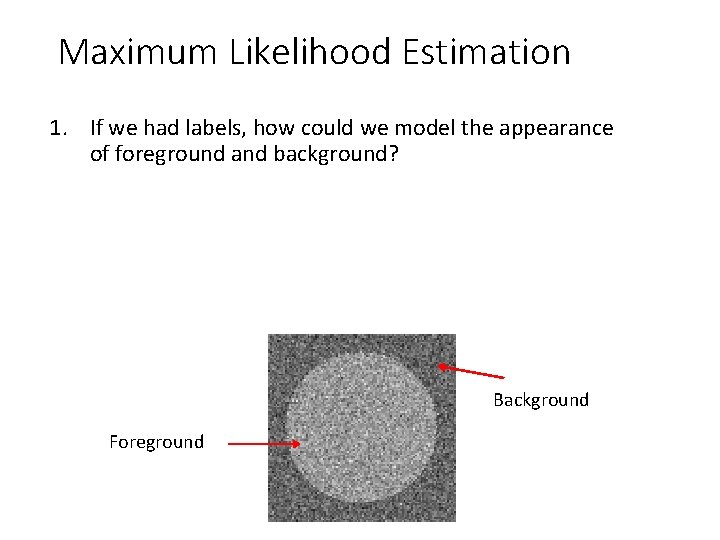

Maximum Likelihood Estimation 1. If we had labels, how could we model the appearance of foreground and background? (Figure: background and foreground.)

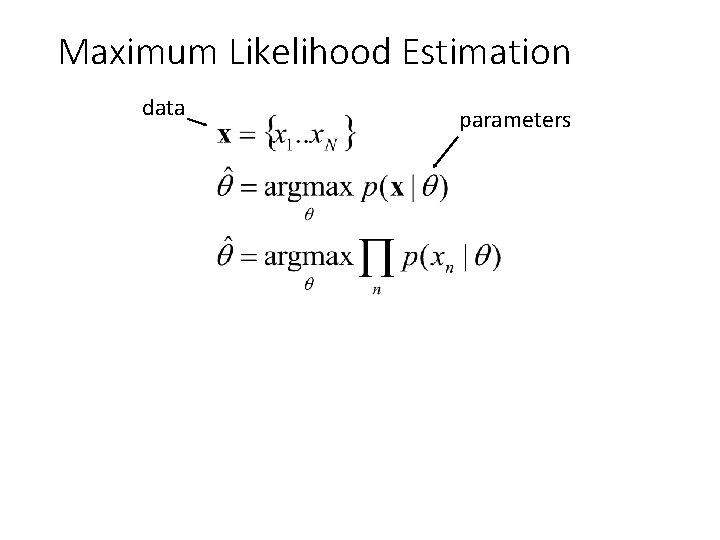

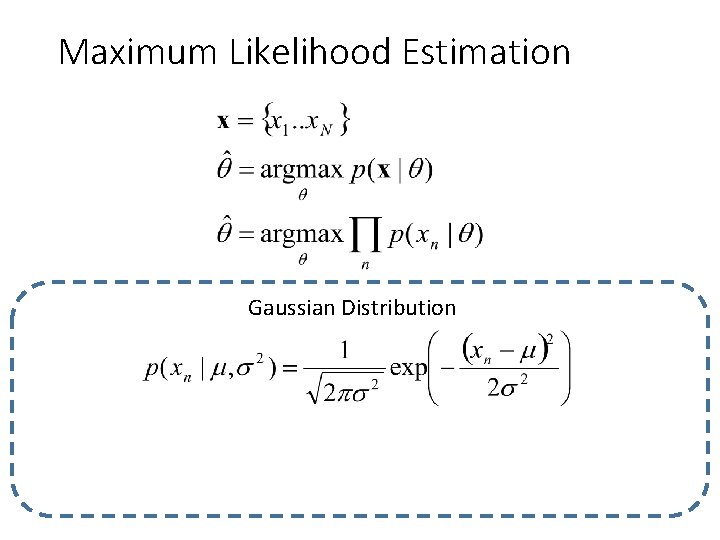

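Maximum Likelihood Estimation: $\hat{\theta} = \arg\max_{\theta} p(\mathbf{x} \mid \theta)$, where $\mathbf{x}$ is the data and $\theta$ the parameters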

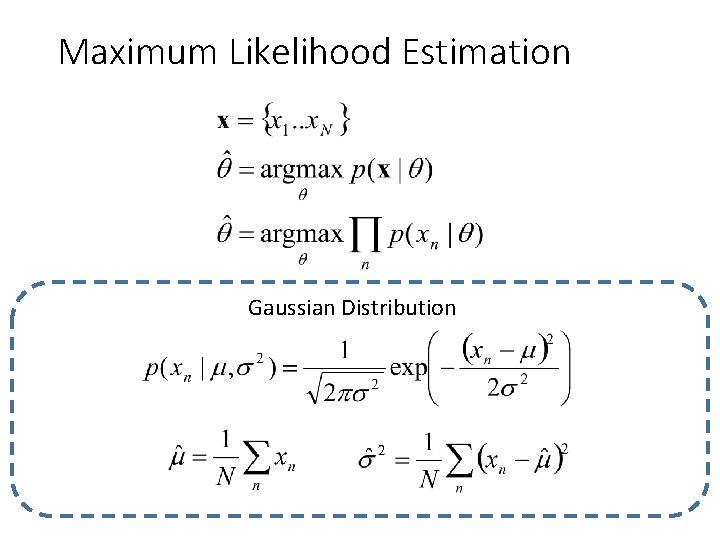

Maximum Likelihood Estimation Gaussian Distribution

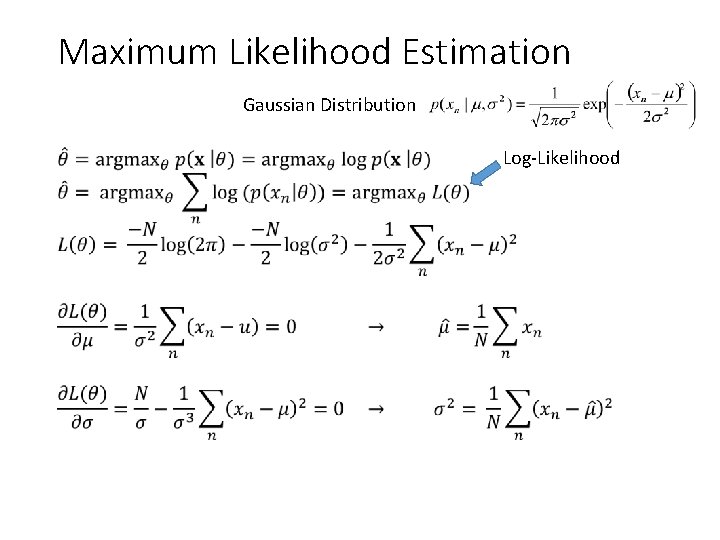

Maximum Likelihood Estimation Gaussian Distribution • Log-Likelihood

Maximum Likelihood Estimation Gaussian Distribution
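For a 1D Gaussian, a standard form of the log-likelihood and its maximizers is given below. It assumes i.i.d. samples $x_1, \dots, x_N$, and the notation is a sketch that may differ from the slides. The result matches what the MATLAB example computes (the mean, and the square root of the mean squared deviation):

$$\hat{\theta} = \arg\max_{\theta} \sum_{n=1}^{N} \log p(x_n \mid \theta), \qquad p(x_n \mid \mu, \sigma) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\!\left(-\frac{(x_n - \mu)^2}{2\sigma^2}\right)$$

$$\log p(\mathbf{x} \mid \mu, \sigma) = -\frac{N}{2}\log(2\pi) - N \log \sigma - \sum_{n=1}^{N} \frac{(x_n - \mu)^2}{2\sigma^2}$$

Setting the derivatives with respect to $\mu$ and $\sigma$ to zero gives

$$\hat{\mu} = \frac{1}{N}\sum_{n=1}^{N} x_n, \qquad \hat{\sigma}^2 = \frac{1}{N}\sum_{n=1}^{N} \left(x_n - \hat{\mu}\right)^2$$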

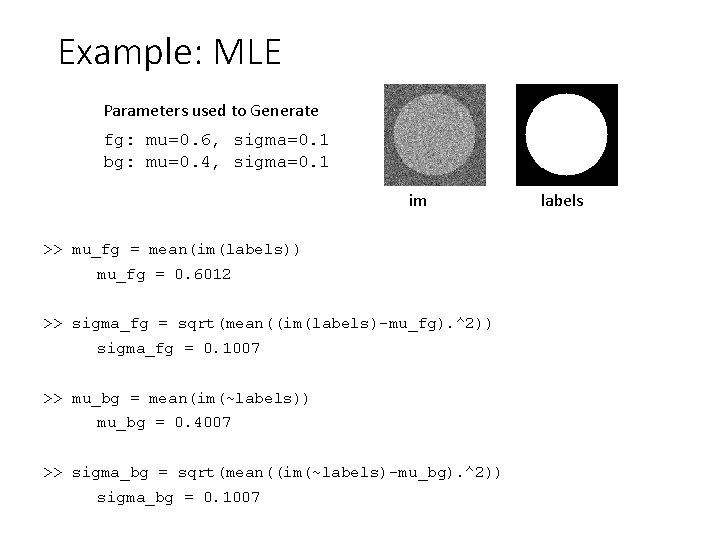

Example: MLE. Parameters used to generate the image: fg: mu=0.6, sigma=0.1; bg: mu=0.4, sigma=0.1 (panels: im, labels) >> mu_fg = mean(im(labels)) mu_fg = 0.6012 >> sigma_fg = sqrt(mean((im(labels)-mu_fg).^2)) sigma_fg = 0.1007 >> mu_bg = mean(im(~labels)) mu_bg = 0.4007 >> sigma_bg = sqrt(mean((im(~labels)-mu_bg).^2)) sigma_bg = 0.1007
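The same estimates in pure Python, as a sketch. The "image" here is a hypothetical flat list of 20,000 synthetic pixels drawn with the slide's generating parameters, with a random boolean label per pixel. gaussian_mle is a hypothetical helper that uses the biased 1/N standard deviation, matching the MATLAB lines.

```python
import math
import random


def gaussian_mle(samples):
    """Closed-form ML estimates for a 1D Gaussian: mean and (1/N) std."""
    n = len(samples)
    mu = sum(samples) / n
    sigma = math.sqrt(sum((x - mu) ** 2 for x in samples) / n)
    return mu, sigma


random.seed(0)
# Synthetic pixels: foreground ~ N(0.6, 0.1^2), background ~ N(0.4, 0.1^2).
labels = [random.random() < 0.5 for _ in range(20000)]
im = [random.gauss(0.6, 0.1) if fg else random.gauss(0.4, 0.1) for fg in labels]

mu_fg, sigma_fg = gaussian_mle([x for x, fg in zip(im, labels) if fg])
mu_bg, sigma_bg = gaussian_mle([x for x, fg in zip(im, labels) if not fg])
print(f"fg: mu={mu_fg:.4f} sigma={sigma_fg:.4f}")
print(f"bg: mu={mu_bg:.4f} sigma={sigma_bg:.4f}")
```

With about 10,000 pixels per class, the estimates land within a few thousandths of the generating values, as in the MATLAB run.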

Probabilistic Inference 2. Once we have modeled the fg/bg appearance, how do we compute the likelihood that a pixel is foreground? (Figure: background and foreground.)

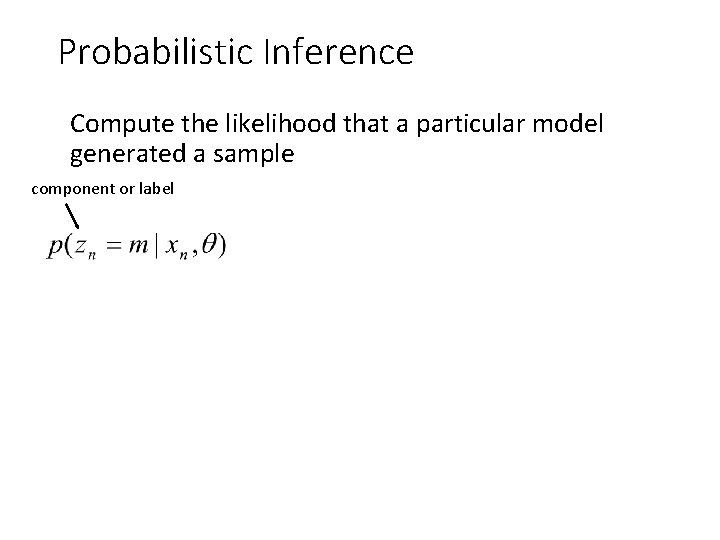

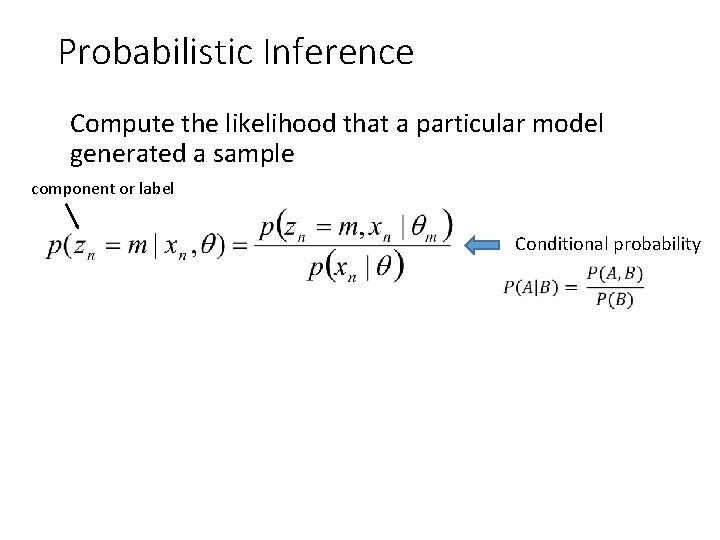

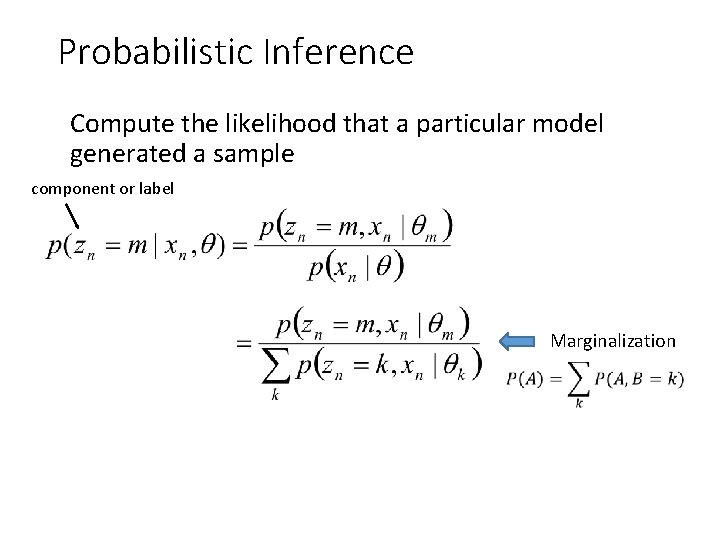

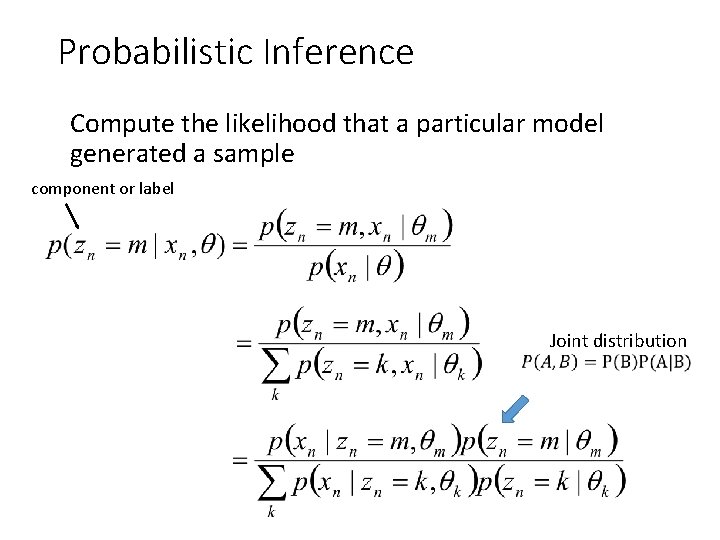

Probabilistic Inference: Compute the likelihood that a particular model (component or label $z_n$) generated a sample $x_n$, using conditional probability, marginalization, and the joint distribution.
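Written out for a sample $x_n$ and label $z_n$, Bayes' rule ties together the three pieces named above: the joint distribution in the numerator, marginalization over labels in the denominator, and the resulting conditional probability. This is a standard form, and the notation is an assumption:

$$p(z_n = m \mid x_n, \theta) = \frac{p(x_n, z_n = m \mid \theta)}{\sum_{k} p(x_n, z_n = k \mid \theta)} = \frac{p(x_n \mid z_n = m, \theta_m)\, p(z_n = m)}{\sum_{k} p(x_n \mid z_n = k, \theta_k)\, p(z_n = k)}$$

For the fg/bg case, $k$ ranges over the two labels {fg, bg}.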

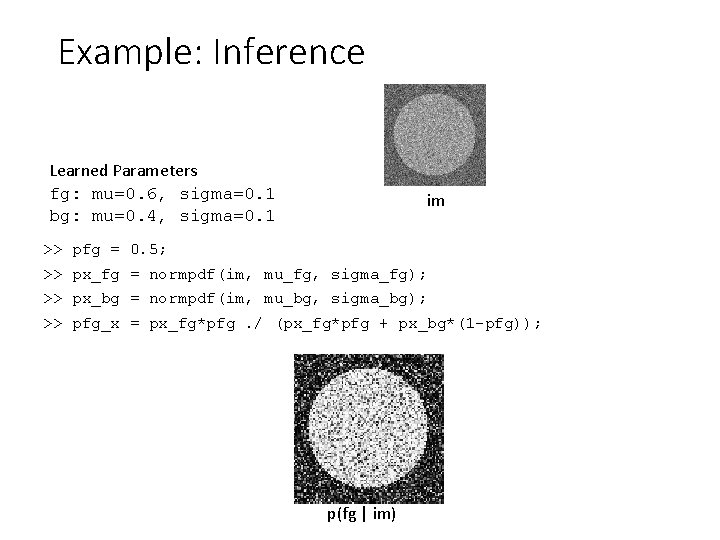

Example: Inference. Learned parameters: fg: mu=0.6, sigma=0.1; bg: mu=0.4, sigma=0.1 (panels: im, p(fg | im)) >> pfg = 0.5; >> px_fg = normpdf(im, mu_fg, sigma_fg); >> px_bg = normpdf(im, mu_bg, sigma_bg); >> pfg_x = px_fg*pfg./(px_fg*pfg + px_bg*(1-pfg));
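A pure-Python version of the inference lines for a single pixel value, as a sketch. The helper normpdf re-implements the Gaussian density with the same argument order as MATLAB's normpdf. posterior_fg is a hypothetical name, and its defaults are the learned parameters and the prior of 0.5 from the slide.

```python
import math


def normpdf(x, mu, sigma):
    """Gaussian density N(x; mu, sigma^2), argument order as in MATLAB's normpdf."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (math.sqrt(2 * math.pi) * sigma)


def posterior_fg(x, mu_fg=0.6, sigma_fg=0.1, mu_bg=0.4, sigma_bg=0.1, pfg=0.5):
    """p(fg | x): joint p(x, fg) divided by the marginal p(x) over {fg, bg}."""
    px_fg = normpdf(x, mu_fg, sigma_fg)
    px_bg = normpdf(x, mu_bg, sigma_bg)
    return px_fg * pfg / (px_fg * pfg + px_bg * (1 - pfg))


for x in (0.3, 0.5, 0.7):
    print(x, round(posterior_fg(x), 3))
```

With equal priors and equal sigmas, the posterior is 0.5 exactly halfway between the two means and saturates quickly away from it. At 0.7 the likelihood ratio is exp(4).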

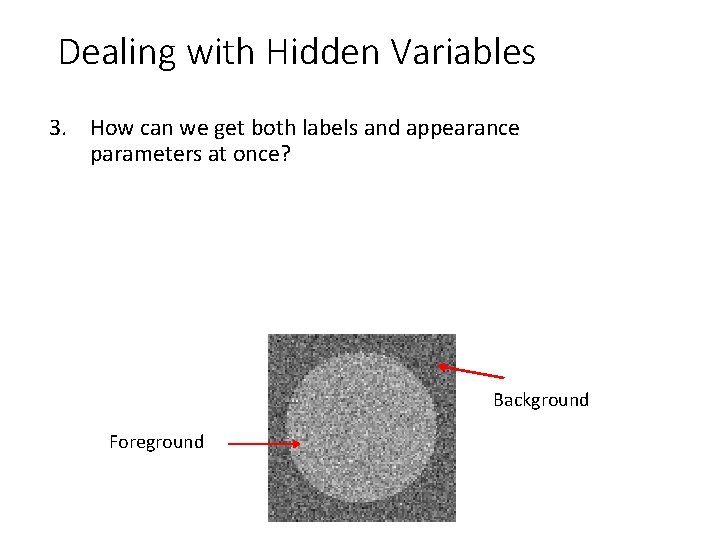

Dealing with Hidden Variables 3. How can we get both labels and appearance parameters at once? (Figure: background and foreground.)

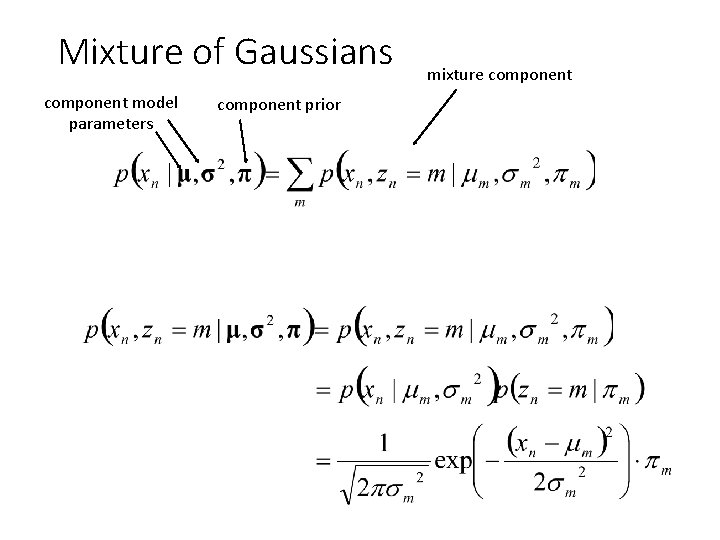

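Mixture of Gaussians

$$p(x_n \mid \mu, \sigma, \pi) = \sum_{m} p(x_n, z_n = m \mid \mu_m, \sigma_m, \pi_m) = \sum_{m} \pi_m \, \mathcal{N}(x_n; \mu_m, \sigma_m^2)$$

Here $z_n = m$ is the mixture component of sample $x_n$, $\pi_m = p(z_n = m)$ is the component prior, and $\mu_m, \sigma_m$ are the component model parameters.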

Mixture of Gaussians • With enough components, a mixture of Gaussians can approximate any continuous probability density function arbitrarily closely • Widely used as a general-purpose pdf estimator
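A small sketch of evaluating a 1D mixture density, with hypothetical component parameters. Because the component priors sum to 1, the mixture is a convex combination of densities and is itself a valid density. The Riemann sum below checks this numerically.

```python
import math


def gmm_pdf(x, weights, means, sigmas):
    """Mixture density p(x) = sum_m pi_m * N(x; mu_m, sigma_m^2) in 1D."""
    return sum(w * math.exp(-0.5 * ((x - mu) / s) ** 2) / (math.sqrt(2 * math.pi) * s)
               for w, mu, s in zip(weights, means, sigmas))


# Hypothetical 3-component mixture; the component priors sum to 1.
weights, means, sigmas = [0.5, 0.3, 0.2], [-2.0, 0.0, 3.0], [0.5, 1.0, 0.8]

# Riemann sum over [-10, 10]; the tails outside this range are negligible here.
n = 20000
dx = 20.0 / n
total = sum(gmm_pdf(-10.0 + i * dx, weights, means, sigmas) for i in range(n)) * dx
print(round(total, 4))
```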

Segmentation with Mixture of Gaussians. Pixels come from one of several Gaussian components • We don’t know which pixels come from which components • We don’t know the parameters for the components. Problem: estimate the parameters of the Gaussian mixture model. What would you do?

Simple solution 1. Initialize parameters 2. Compute the probability of each hidden variable given the current parameters 3. Compute new parameters for each model, weighted by likelihood of hidden variables 4. Repeat steps 2-3 until convergence

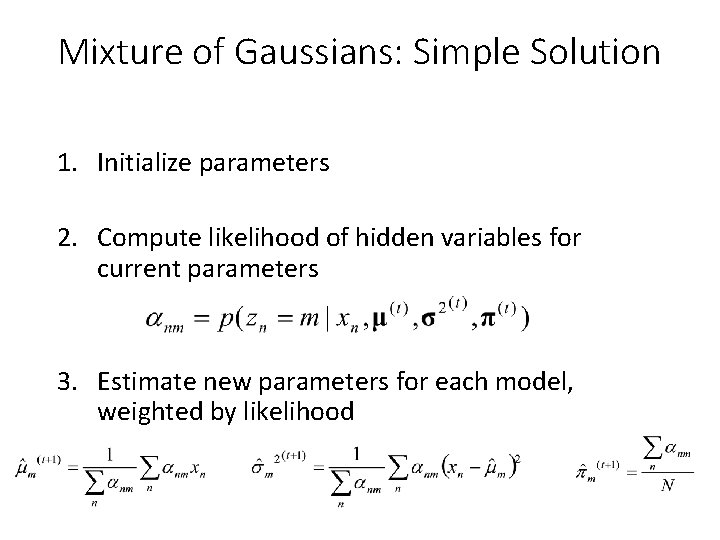

Mixture of Gaussians: Simple Solution 1. Initialize parameters 2. Compute likelihood of hidden variables for current parameters 3. Estimate new parameters for each model, weighted by likelihood

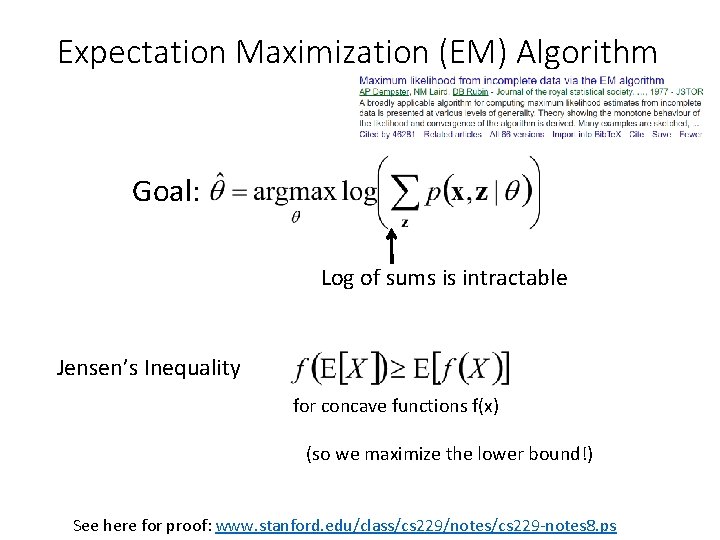

Expectation Maximization (EM) Algorithm Goal: maximize the data log-likelihood. The log of a sum over the hidden variables is hard to maximize directly, so apply Jensen’s inequality for concave functions f(x) (so we maximize the lower bound!). See here for proof: www.stanford.edu/class/cs229/notes/cs229-notes8.ps
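One standard way to write the bound, following the usual textbook derivation. Here $q_n$ is any distribution over $z_n$, and the notation is assumed:

$$\log p(\mathbf{x} \mid \theta) = \sum_{n} \log \sum_{z_n} q_n(z_n)\, \frac{p(x_n, z_n \mid \theta)}{q_n(z_n)} \;\ge\; \sum_{n} \sum_{z_n} q_n(z_n) \log \frac{p(x_n, z_n \mid \theta)}{q_n(z_n)}$$

The inequality is Jensen's, $f(\mathbb{E}[y]) \ge \mathbb{E}[f(y)]$ for the concave $f = \log$. It holds with equality when $q_n(z_n) = p(z_n \mid x_n, \theta)$, which is why the E-step uses the posterior under the current parameters.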

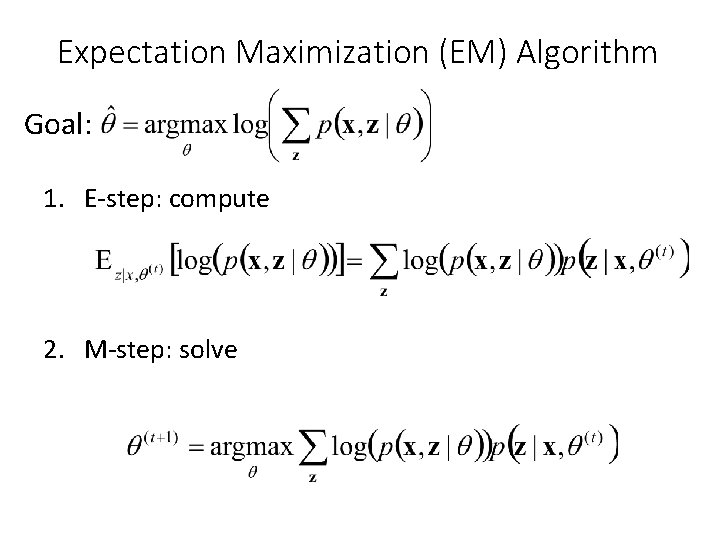

Expectation Maximization (EM) Algorithm Goal: 1. E-step: compute 2. M-step: solve

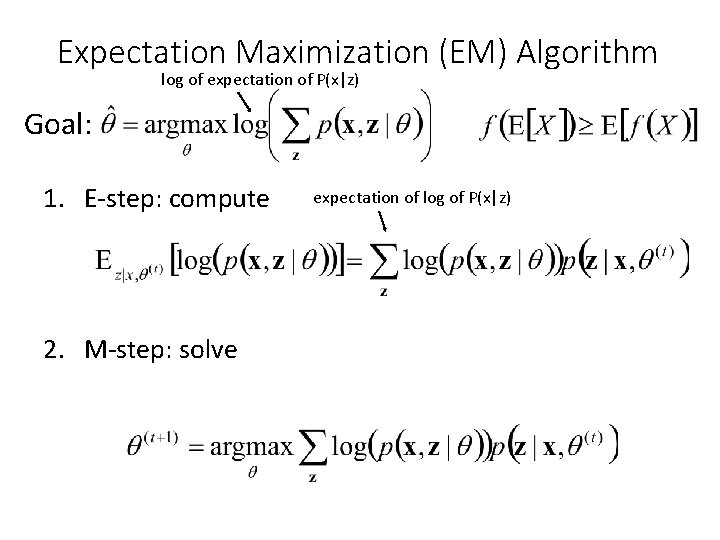

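Expectation Maximization (EM) Algorithm Goal: maximize the log of the expectation of P(x|z) over the hidden variables z. 1. E-step: compute the expectation of the log of P(x|z) under the current posterior over z 2. M-step: solve for the parameters that maximize this expectation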
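In a standard notation, written with the complete-data likelihood $p(x_n, z_n \mid \theta)$ (the notation is assumed, not taken from the slide):

$$\text{Goal: } \hat{\theta} = \arg\max_{\theta} \sum_{n} \log \sum_{z_n} p(x_n, z_n \mid \theta)$$

$$\text{E-step: } Q(\theta \mid \theta^{(t)}) = \sum_{n} \mathbb{E}_{z_n \sim p(z_n \mid x_n, \theta^{(t)})}\big[\log p(x_n, z_n \mid \theta)\big]$$

$$\text{M-step: } \theta^{(t+1)} = \arg\max_{\theta} Q(\theta \mid \theta^{(t)})$$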

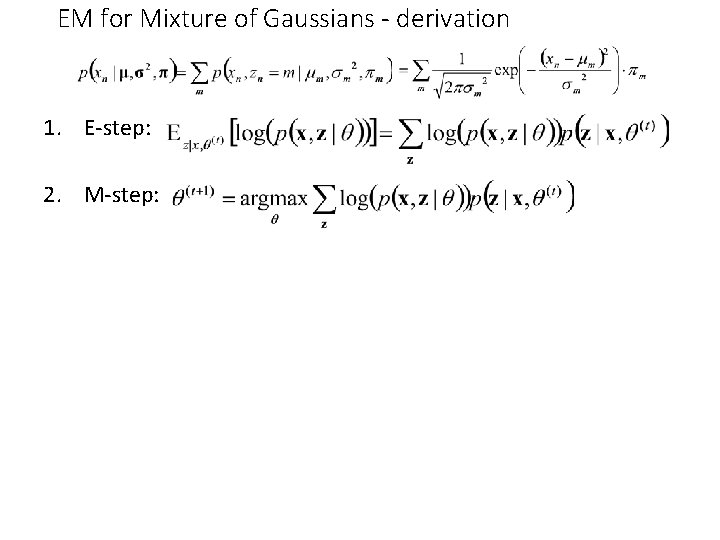

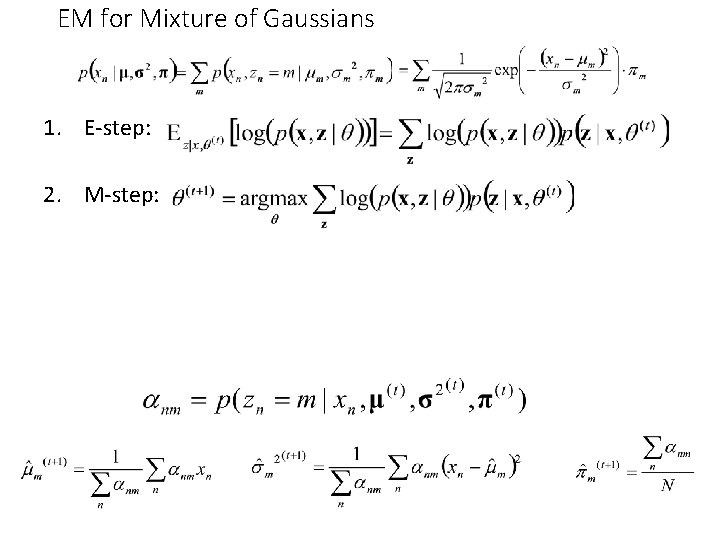

EM for Mixture of Gaussians - derivation 1. E-step: 2. M-step:

EM for Mixture of Gaussians 1. E-step: 2. M-step:
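For a 1D mixture with priors $\pi_m$, means $\mu_m$, and standard deviations $\sigma_m$, the standard updates are shown below. The notation is assumed, and $\alpha_{nm}$ denotes the responsibility of component $m$ for sample $x_n$.

E-step:

$$\alpha_{nm} = p(z_n = m \mid x_n, \theta^{(t)}) = \frac{\pi_m^{(t)}\, \mathcal{N}\big(x_n; \mu_m^{(t)}, (\sigma_m^{(t)})^2\big)}{\sum_{k} \pi_k^{(t)}\, \mathcal{N}\big(x_n; \mu_k^{(t)}, (\sigma_k^{(t)})^2\big)}$$

M-step:

$$\mu_m^{(t+1)} = \frac{\sum_n \alpha_{nm}\, x_n}{\sum_n \alpha_{nm}}, \qquad \big(\sigma_m^{(t+1)}\big)^2 = \frac{\sum_n \alpha_{nm} \big(x_n - \mu_m^{(t+1)}\big)^2}{\sum_n \alpha_{nm}}, \qquad \pi_m^{(t+1)} = \frac{1}{N}\sum_n \alpha_{nm}$$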

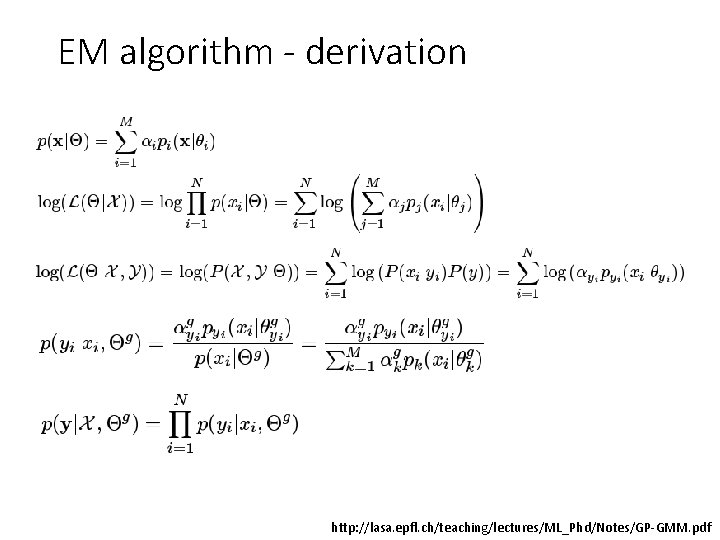

EM algorithm - derivation http://lasa.epfl.ch/teaching/lectures/ML_Phd/Notes/GP-GMM.pdf

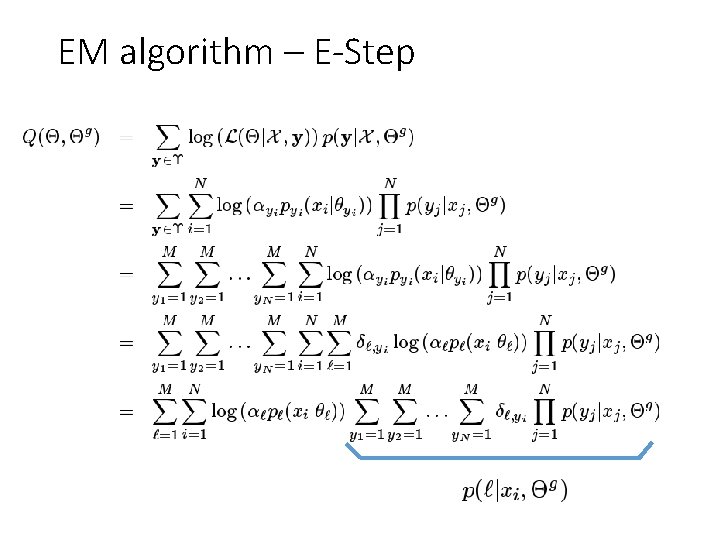

EM algorithm – E-Step

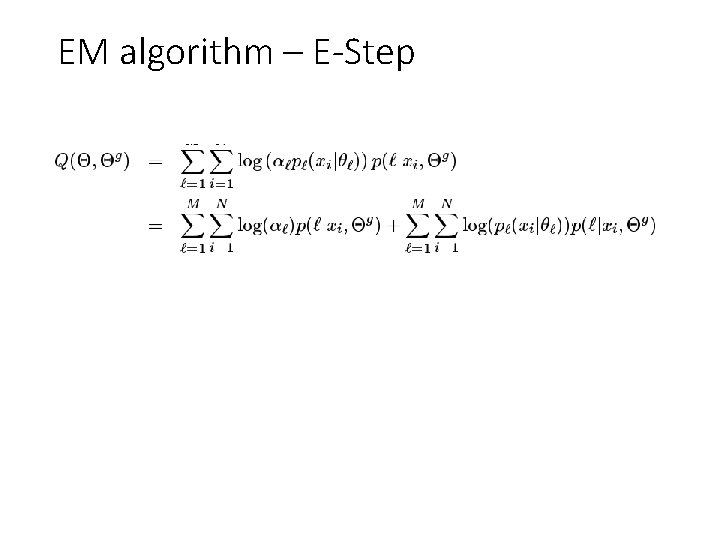

EM algorithm – E-Step

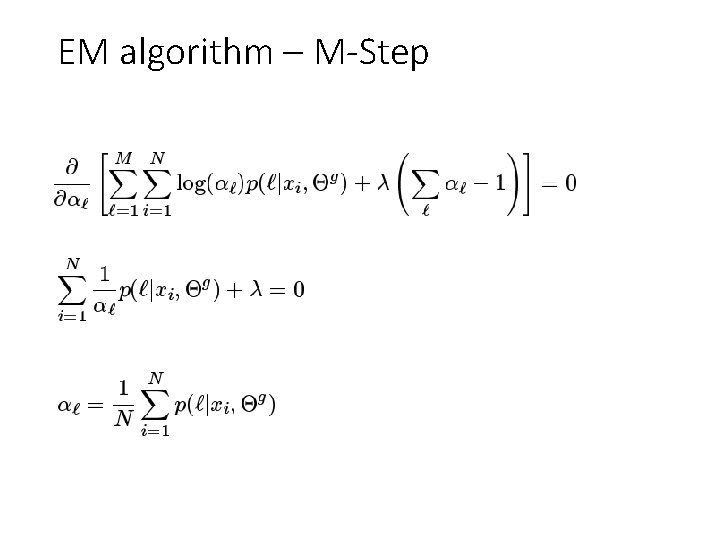

EM algorithm – M-Step

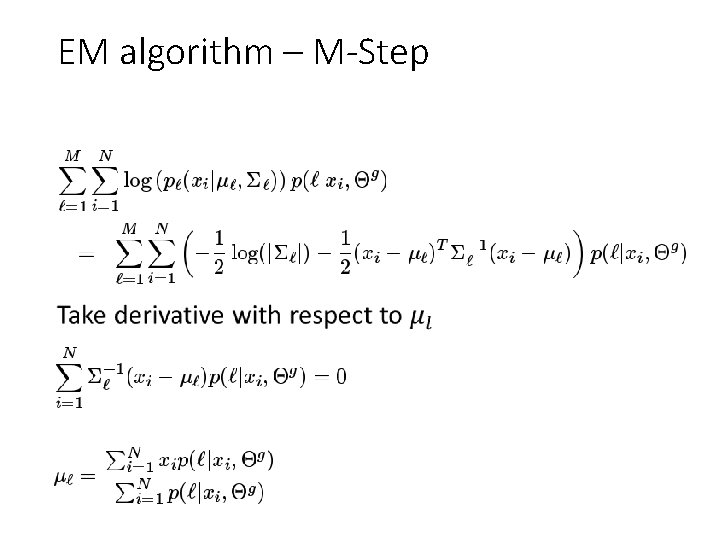

EM algorithm – M-Step

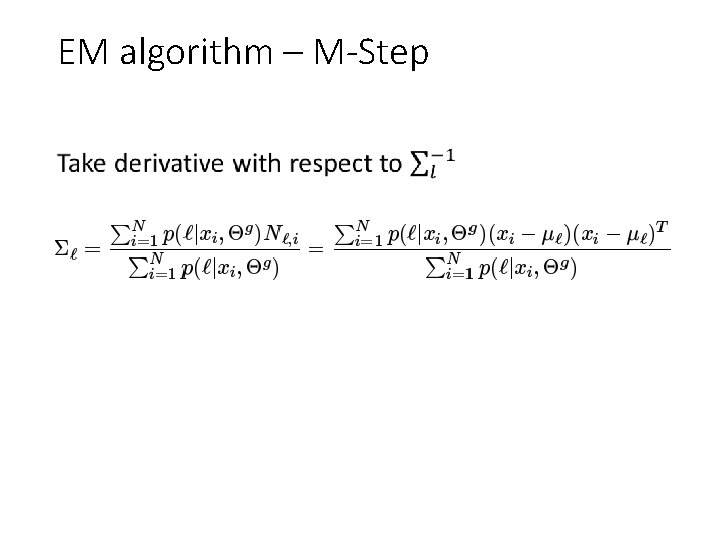

EM algorithm – M-Step

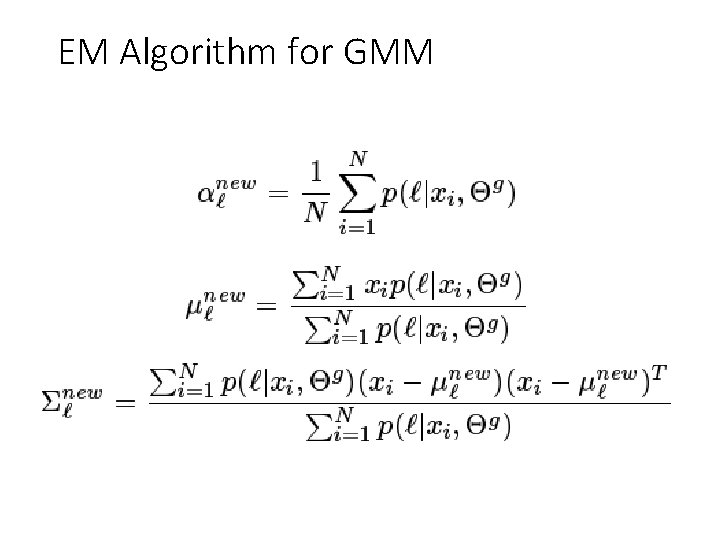

EM Algorithm for GMM
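A pure-Python sketch of EM for a 1D Gaussian mixture, implementing the standard E-step (responsibilities) and M-step (weighted mean, variance, and prior). It records the data log-likelihood at each iteration so the monotone behavior can be checked. The initial parameters, the variance floor, and the synthetic data are hypothetical choices for illustration. The floor is not part of plain EM and only matters if a component collapses onto a single point.

```python
import math
import random


def normpdf(x, mu, sigma):
    """1D Gaussian density N(x; mu, sigma^2)."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (math.sqrt(2 * math.pi) * sigma)


def em_gmm_1d(xs, means, sigmas, weights, iters=100, tol=1e-8):
    """EM for a 1D Gaussian mixture.

    Returns (means, sigmas, weights, history); history[t] is the data
    log-likelihood under the parameters at the start of iteration t.
    """
    means, sigmas, weights = list(means), list(sigmas), list(weights)
    K, N = len(means), len(xs)
    history = []
    for _ in range(iters):
        # E-step: alpha[n][m] = p(z_n = m | x_n, current parameters).
        alpha, loglik = [], 0.0
        for x in xs:
            joint = [w * normpdf(x, mu, s) for w, mu, s in zip(weights, means, sigmas)]
            total = sum(joint)  # p(x_n), marginalizing over components
            loglik += math.log(total)
            alpha.append([j / total for j in joint])
        history.append(loglik)
        if len(history) > 1 and history[-1] - history[-2] < tol:
            break
        # M-step: responsibility-weighted ML estimates for each component.
        for m in range(K):
            n_m = sum(a[m] for a in alpha)
            means[m] = sum(a[m] * x for a, x in zip(alpha, xs)) / n_m
            var = sum(a[m] * (x - means[m]) ** 2 for a, x in zip(alpha, xs)) / n_m
            sigmas[m] = math.sqrt(max(var, 1e-6))  # floor guards against collapse
            weights[m] = n_m / N
    return means, sigmas, weights, history


# Hypothetical data: two well-separated clusters of 1000 samples each.
random.seed(0)
xs = ([random.gauss(0.25, 0.08) for _ in range(1000)]
      + [random.gauss(0.75, 0.08) for _ in range(1000)])
means_hat, sigmas_hat, weights_hat, history = em_gmm_1d(
    xs, [0.3, 0.7], [0.2, 0.2], [0.5, 0.5])
print("means", [round(v, 3) for v in means_hat])
print("sigmas", [round(v, 3) for v in sigmas_hat])
print("weights", [round(v, 3) for v in weights_hat])
```

The log-likelihood history should never decrease, apart from floating-point noise. Because EM only finds a local maximum, a poor initialization can still end in a worse solution.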

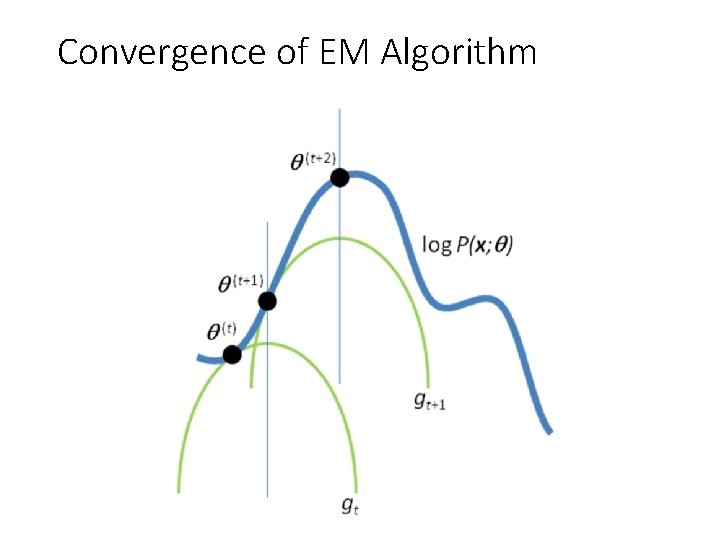

EM Algorithm • Maximizes a lower bound on the data likelihood at each iteration • Each iteration never decreases the data likelihood • Converges to a local maximum (more precisely, a stationary point; not necessarily the global maximum) • Common tricks in the derivation • Find terms that sum or integrate to 1 • Lagrange multiplier to deal with constraints

Convergence of EM Algorithm

EM Demos • Mixture of Gaussian demo • Simple segmentation demo

“Hard EM” • Same as EM except compute z* as the most likely values for the hidden variables • K-means is an example • Advantages • Simpler: can be applied when EM cannot be derived • Sometimes works better if you want to make hard predictions at the end • But • Generally, the estimated pdf parameters are not as accurate as with EM
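K-means viewed as hard EM, in a minimal 1D sketch. It corresponds to hard EM for a Gaussian mixture with equal priors and a shared, fixed variance. The E-step picks the single most likely component for each point (the nearest center) instead of computing soft responsibilities, and the M-step takes the mean of each cluster. The data and the name kmeans_1d are hypothetical.

```python
def kmeans_1d(xs, centers, iters=100):
    """Hard EM: assign each point to its nearest center (most likely component),
    then re-estimate each center as the mean of its assigned points."""
    centers = list(centers)
    for _ in range(iters):
        # Hard E-step: z*_n = argmin_m |x_n - c_m|.
        assign = [min(range(len(centers)), key=lambda m: abs(x - centers[m])) for x in xs]
        # M-step: cluster means (an empty cluster keeps its old center).
        new = []
        for m, c in enumerate(centers):
            members = [x for x, a in zip(xs, assign) if a == m]
            new.append(sum(members) / len(members) if members else c)
        if new == centers:  # assignments no longer change the centers
            break
        centers = new
    return centers, assign


xs = [0.1, 0.2, 0.15, 0.8, 0.9, 0.85]
centers, assign = kmeans_1d(xs, [0.0, 1.0])
print(centers, assign)
```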

Missing Data Problems: Outliers (revisited). Challenge: Determine which people to trust and the average rating by accurate annotators. Annotator ratings: 10, 8, 9, 2, 8.
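One way to cast the outlier problem as EM, as a hypothetical model rather than the lecture's solution. Ratings from accurate annotators are assumed to follow N(mu, sigma^2). Random answers are assumed uniform with density 1/10, which presumes a rating scale about 10 units wide. The initialization (plain average, sigma = 2, prior 0.8) and the sigma floor are also assumptions. The E-step gives each annotator's probability of being trustworthy, and the M-step re-estimates the average rating weighted by that probability.

```python
import math


def em_outliers(ratings, mu=None, sigma=2.0, prior=0.8, u=0.1, iters=50):
    """EM for 'accurate' ratings ~ N(mu, sigma^2) mixed with 'random' ratings
    of constant density u. Returns mu, sigma, prior and gamma, where
    gamma[i] = p(rating i came from an accurate annotator)."""
    if mu is None:
        mu = sum(ratings) / len(ratings)  # start from the plain average
    gamma = []
    for _ in range(iters):
        # E-step: posterior that each rating came from the Gaussian component.
        gamma = []
        for r in ratings:
            g = prior * math.exp(-0.5 * ((r - mu) / sigma) ** 2) / (math.sqrt(2 * math.pi) * sigma)
            gamma.append(g / (g + (1 - prior) * u))
        # M-step: gamma-weighted mean and std, and the fraction of accurate raters.
        total = sum(gamma)
        mu = sum(g * r for g, r in zip(gamma, ratings)) / total
        var = sum(g * (r - mu) ** 2 for g, r in zip(gamma, ratings)) / total
        sigma = max(math.sqrt(var), 0.1)  # floor keeps sigma away from 0
        prior = total / len(ratings)
    return mu, sigma, prior, gamma


mu, sigma, prior, gamma = em_outliers([10, 8, 9, 2, 8])
print(round(mu, 2), [round(g, 2) for g in gamma])
```

Under these assumptions, the rating of 2 gets a responsibility near zero. The estimated average then ends up close to the mean of the other four ratings (8.75) instead of the plain average of 7.4.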

Next class • MRFs and Graph-cut Segmentation • Think about your final projects (if not done already)
