
Hidden Markov Models (HMM)
Rabiner’s Paper

Markoviana Reading Group
Computer Eng. & Science Dept., Arizona State University

Stationary and Non-stationary

- Stationary process: its statistical properties do not vary with time.
- Non-stationary process: the signal properties vary over time.

Markoviana Reading Group, Fatih Gelgi – Feb, 2005

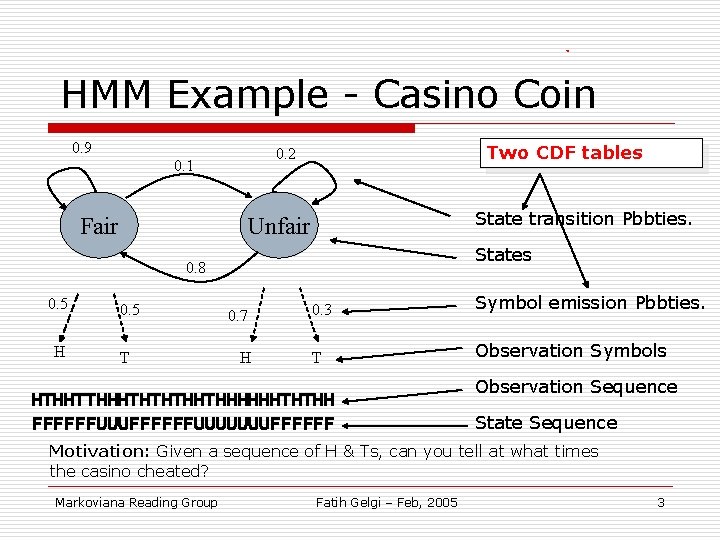

HMM Example - Casino Coin

Two conditional probability tables define the model. The states are Fair and Unfair; the observation symbols are H and T.

State transition probabilities:

| From \ To | Fair | Unfair |
|---|---|---|
| Fair | 0.9 | 0.1 |
| Unfair | 0.2 | 0.8 |

Symbol emission probabilities:

| State | H | T |
|---|---|---|
| Fair | 0.5 | 0.5 |
| Unfair | 0.7 | 0.3 |

Observation sequence: HTHHTTHHHTHTHTHHTHHHHHHTHTHH

State sequence: FFFFFFUUUUUUUFFFFFF

Motivation: given a sequence of Hs and Ts, can you tell at what times the casino cheated?
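
The casino model can be written down and sampled in a few lines. This is a minimal sketch, not part of the original slides: the initial distribution `PI` is an assumption (the slide does not give one), and the names `draw` and `sample` are hypothetical helpers.

```python
import random

# Transition and emission probabilities as given on the slide.
A = {"F": {"F": 0.9, "U": 0.1}, "U": {"F": 0.2, "U": 0.8}}
B = {"F": {"H": 0.5, "T": 0.5}, "U": {"H": 0.7, "T": 0.3}}
# Assumption: the slide gives no initial distribution, so use a uniform one.
PI = {"F": 0.5, "U": 0.5}


def draw(dist, rng):
    """Draw one outcome from a {outcome: probability} dictionary."""
    outcomes = list(dist)
    weights = [dist[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


def sample(length, seed=0):
    """Generate (observations, hidden states) of the given length."""
    rng = random.Random(seed)
    symbols, states = [], []
    state = draw(PI, rng)
    for _ in range(length):
        states.append(state)
        symbols.append(draw(B[state], rng))  # emit from the current state
        state = draw(A[state], rng)          # then move to the next state
    return "".join(symbols), "".join(states)


observations, hidden = sample(28)
print(observations)
print(hidden)
```

Only `observations` would be visible to a player; recovering `hidden` from it is the decoding problem the slide uses as motivation.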

Properties of an HMM

- First-order Markov process: $q_t$ depends only on $q_{t-1}$.
- Time is discrete.

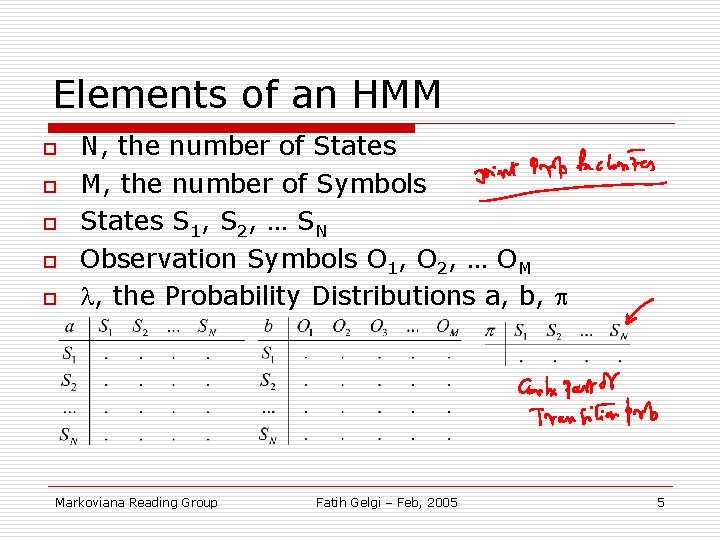

Elements of an HMM

- $N$, the number of states
- $M$, the number of symbols
- States $S_1, S_2, \ldots, S_N$
- Observation symbols $O_1, O_2, \ldots, O_M$
- $\lambda$, the probability distributions $A$, $B$, $\pi$

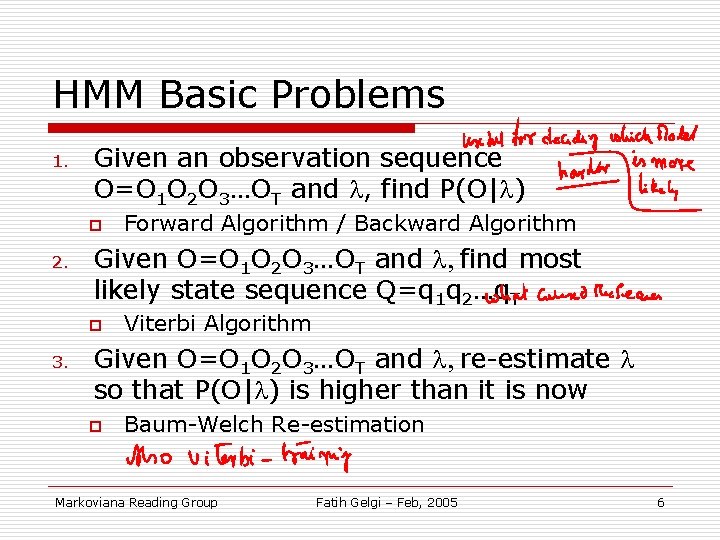
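
For reference, the three distributions are conventionally defined as below. This follows the standard formulation in Rabiner's tutorial rather than text on the slide; note that Rabiner writes the symbols as $v_k$, whereas the slide's $O_k$ notation is kept here.

```latex
\begin{align*}
A &= \{a_{ij}\}, & a_{ij} &= P(q_{t+1} = S_j \mid q_t = S_i), & \sum_{j=1}^{N} a_{ij} &= 1 \\
B &= \{b_j(k)\}, & b_j(k) &= P(O_k \text{ at time } t \mid q_t = S_j), & \sum_{k=1}^{M} b_j(k) &= 1 \\
\pi &= \{\pi_i\}, & \pi_i &= P(q_1 = S_i), & \sum_{i=1}^{N} \pi_i &= 1 \\
\lambda &= (A, B, \pi)
\end{align*}
```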

HMM Basic Problems

1. Given an observation sequence $O = O_1 O_2 O_3 \ldots O_T$ and $\lambda$, find $P(O \mid \lambda)$. Solved by the Forward Algorithm / Backward Algorithm.
2. Given $O = O_1 O_2 O_3 \ldots O_T$ and $\lambda$, find the most likely state sequence $Q = q_1 q_2 \ldots q_T$. Solved by the Viterbi Algorithm.
3. Given $O = O_1 O_2 O_3 \ldots O_T$ and $\lambda$, re-estimate $\lambda$ so that $P(O \mid \lambda)$ is higher than it is now. Solved by Baum-Welch Re-estimation.

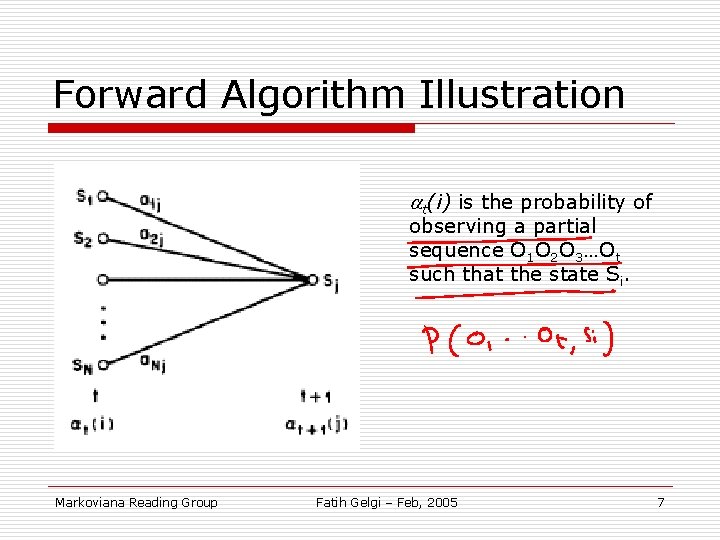

Forward Algorithm Illustration

$\alpha_t(i)$ is the probability of observing the partial sequence $O_1 O_2 O_3 \ldots O_t$ and being in state $S_i$ at time $t$.

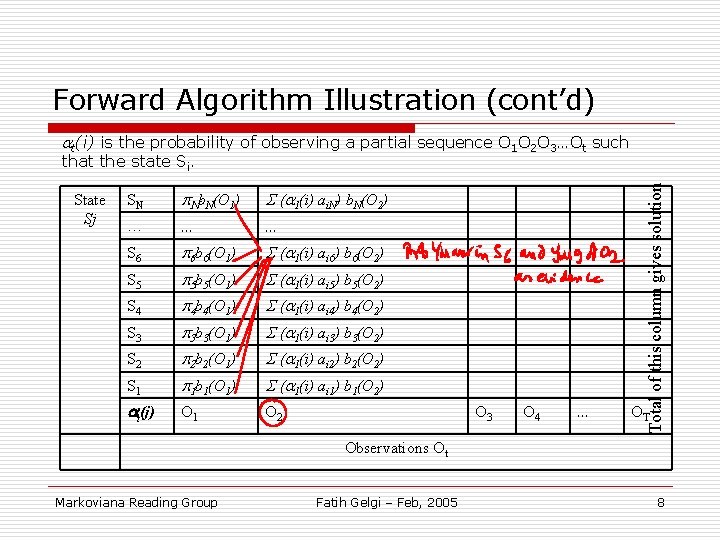

Forward Algorithm Illustration (cont’d)

$\alpha_t(i)$ is the probability of observing the partial sequence $O_1 O_2 O_3 \ldots O_t$ and being in state $S_i$ at time $t$.

Trellis of $\alpha_t(j)$, with states $S_j$ as rows and observations $O_t$ as columns:

| State $S_j$ | $O_1$ | $O_2$ | $O_3$, $O_4$, …, $O_T$ |
|---|---|---|---|
| $S_N$ | $\pi_N\, b_N(O_1)$ | $\left[\sum_i \alpha_1(i)\, a_{iN}\right] b_N(O_2)$ | … |
| … | … | … | … |
| $S_2$ | $\pi_2\, b_2(O_1)$ | $\left[\sum_i \alpha_1(i)\, a_{i2}\right] b_2(O_2)$ | … |
| $S_1$ | $\pi_1\, b_1(O_1)$ | $\left[\sum_i \alpha_1(i)\, a_{i1}\right] b_1(O_2)$ | … |

The total of the last column ($O_T$) gives the solution.

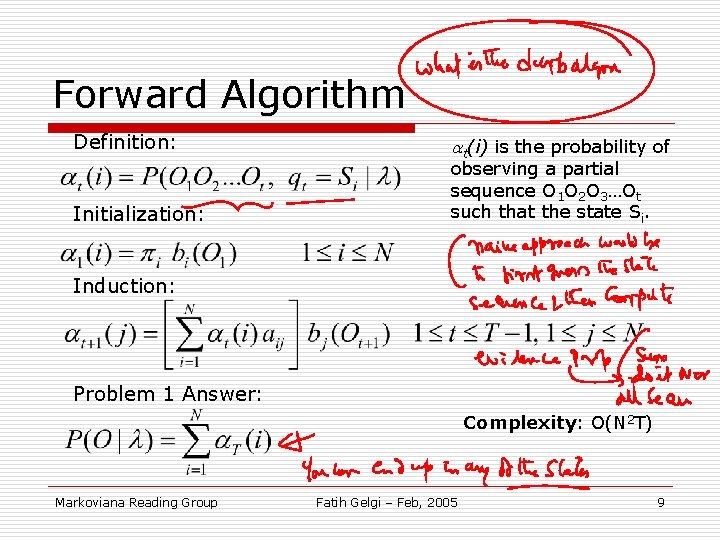

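Forward Algorithm

Definition: $\alpha_t(i) = P(O_1 O_2 \ldots O_t,\ q_t = S_i \mid \lambda)$, the probability of observing the partial sequence $O_1 O_2 O_3 \ldots O_t$ and being in state $S_i$ at time $t$.

Initialization: $\alpha_1(i) = \pi_i\, b_i(O_1)$, for $1 \le i \le N$.

Induction: $\alpha_{t+1}(j) = \left[\sum_{i=1}^{N} \alpha_t(i)\, a_{ij}\right] b_j(O_{t+1})$, for $1 \le t \le T-1$ and $1 \le j \le N$.

Problem 1 answer: $P(O \mid \lambda) = \sum_{i=1}^{N} \alpha_T(i)$.

Complexity: $O(N^2 T)$.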

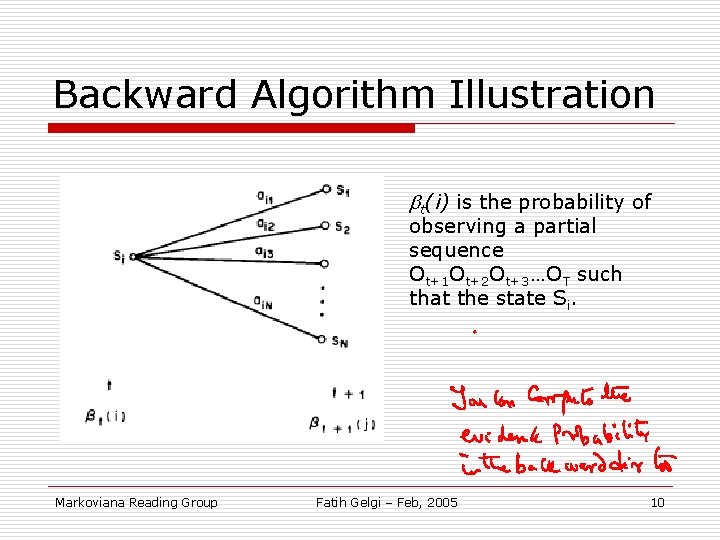
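
The forward recursion can be sketched directly in Python. This is an illustrative implementation, not code from the slides: it reuses the casino coin probabilities, encodes H as 0 and T as 1, and assumes a uniform initial distribution `pi`, which the slides do not specify.

```python
def forward(obs, A, B, pi):
    """Return the alpha table and P(O | lambda) for a discrete HMM.

    obs is a list of symbol indices; A[i][j], B[j][k] and pi[i] are
    plain nested lists of probabilities.
    """
    N = len(pi)
    # Initialization: alpha_1(i) = pi_i * b_i(O_1)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(N)]]
    # Induction: alpha_{t+1}(j) = [sum_i alpha_t(i) a_ij] * b_j(O_{t+1})
    for t in range(1, len(obs)):
        prev = alpha[-1]
        alpha.append([
            sum(prev[i] * A[i][j] for i in range(N)) * B[j][obs[t]]
            for j in range(N)
        ])
    # Termination: P(O | lambda) = sum_i alpha_T(i)
    return alpha, sum(alpha[-1])


# Casino coin model: state 0 = Fair, state 1 = Unfair; symbol 0 = H, 1 = T.
A = [[0.9, 0.1], [0.2, 0.8]]
B = [[0.5, 0.5], [0.7, 0.3]]
pi = [0.5, 0.5]  # assumption: uniform start

obs = [0, 1, 0, 0]  # H T H H
alpha, likelihood = forward(obs, A, B, pi)
print(likelihood)
```

The two nested loops over states inside the loop over time are where the $O(N^2 T)$ cost comes from.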

Backward Algorithm Illustration

$\beta_t(i)$ is the probability of observing the partial sequence $O_{t+1} O_{t+2} O_{t+3} \ldots O_T$, given that the state at time $t$ is $S_i$.

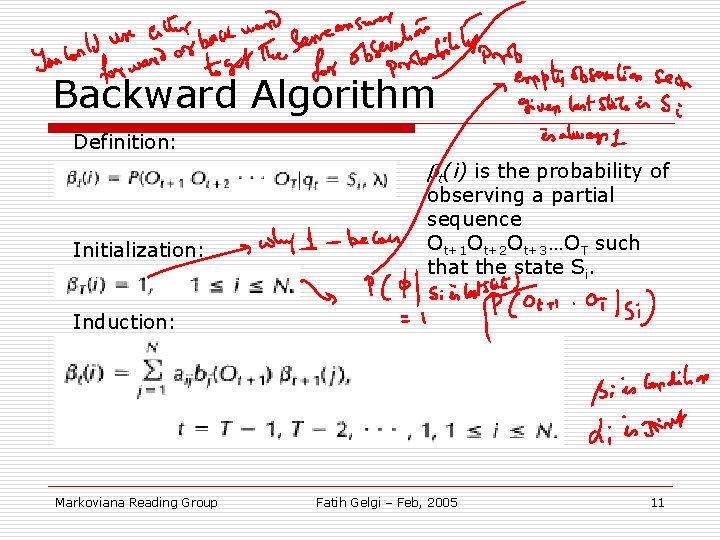

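Backward Algorithm

Definition: $\beta_t(i) = P(O_{t+1} O_{t+2} \ldots O_T \mid q_t = S_i, \lambda)$, the probability of observing the partial sequence $O_{t+1} O_{t+2} O_{t+3} \ldots O_T$, given that the state at time $t$ is $S_i$.

Initialization: $\beta_T(i) = 1$, for $1 \le i \le N$.

Induction: $\beta_t(i) = \sum_{j=1}^{N} a_{ij}\, b_j(O_{t+1})\, \beta_{t+1}(j)$, for $t = T-1, T-2, \ldots, 1$ and $1 \le i \le N$.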

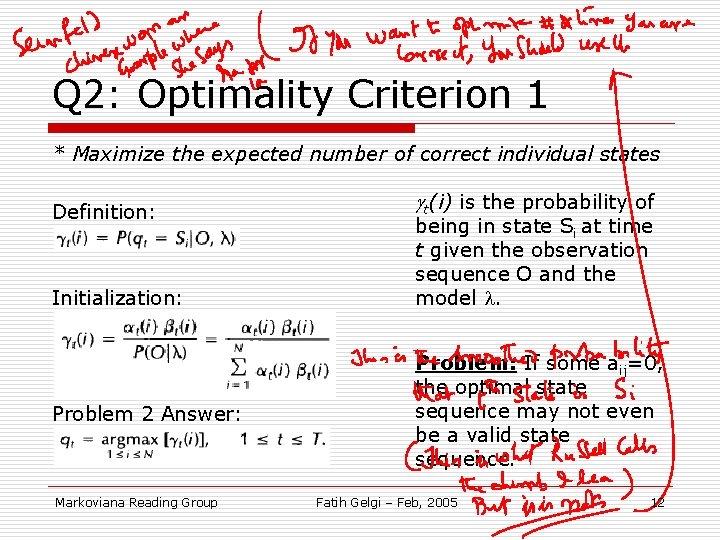
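
A matching sketch of the backward recursion is shown below. As before, this is illustrative rather than taken from the slides: the model is the casino coin example with H = 0 and T = 1, and the uniform `pi` is an assumption. Combining $\beta_1$ with the initial step gives the same $P(O \mid \lambda)$ that the forward pass produces.

```python
def backward(obs, A, B):
    """Return the beta table for a discrete HMM (obs holds symbol indices)."""
    N = len(A)
    T = len(obs)
    # Initialization: beta_T(i) = 1
    beta = [[1.0] * N for _ in range(T)]
    # Induction, moving from t = T-1 down to t = 1 (0-based: T-2 .. 0)
    for t in range(T - 2, -1, -1):
        for i in range(N):
            beta[t][i] = sum(
                A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] for j in range(N)
            )
    return beta


def likelihood_from_beta(obs, A, B, pi):
    """P(O | lambda) = sum_i pi_i * b_i(O_1) * beta_1(i)."""
    beta = backward(obs, A, B)
    return sum(pi[i] * B[i][obs[0]] * beta[0][i] for i in range(len(pi)))


# Casino coin model: state 0 = Fair, state 1 = Unfair; symbol 0 = H, 1 = T.
A = [[0.9, 0.1], [0.2, 0.8]]
B = [[0.5, 0.5], [0.7, 0.3]]
pi = [0.5, 0.5]  # assumption: uniform start

obs = [0, 1, 0, 0]  # H T H H
beta = backward(obs, A, B)
print(likelihood_from_beta(obs, A, B, pi))
```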

Q2: Optimality Criterion 1

Maximize the expected number of correct individual states.

Definition: $\gamma_t(i) = P(q_t = S_i \mid O, \lambda)$ is the probability of being in state $S_i$ at time $t$, given the observation sequence $O$ and the model $\lambda$.

Problem 2 answer: $q_t^* = \arg\max_{1 \le i \le N} \gamma_t(i)$, for $1 \le t \le T$.

Problem: if some $a_{ij} = 0$, the optimal state sequence may not even be a valid state sequence.

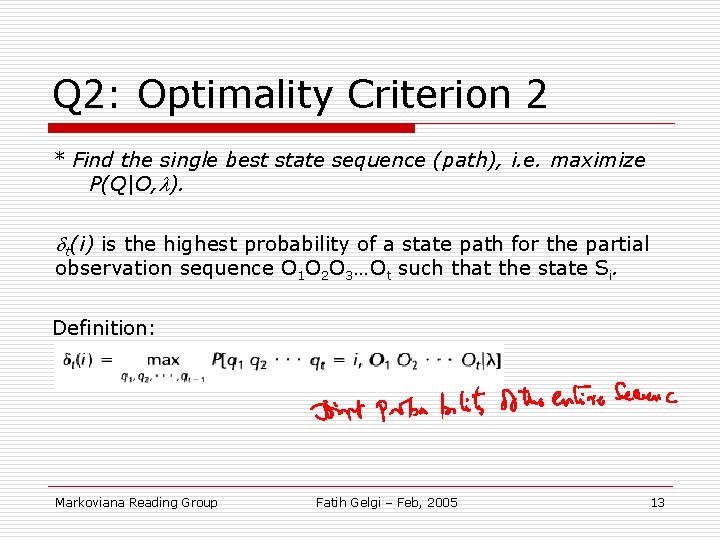
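
This criterion amounts to picking the most probable state independently at every time step. The sketch below computes $\gamma_t(i)$ from forward and backward tables using the standard identity $\gamma_t(i) = \alpha_t(i)\beta_t(i) / P(O \mid \lambda)$ from Rabiner's tutorial. The function name `posterior_decode`, the H = 0 / T = 1 encoding, and the uniform `pi` are assumptions made for the example.

```python
def forward(obs, A, B, pi):
    """Alpha table: alpha[t][i] = P(O_1..O_t, q_t = S_i | lambda)."""
    N = len(pi)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(N)]]
    for t in range(1, len(obs)):
        prev = alpha[-1]
        alpha.append([
            sum(prev[i] * A[i][j] for i in range(N)) * B[j][obs[t]]
            for j in range(N)
        ])
    return alpha


def backward(obs, A, B):
    """Beta table: beta[t][i] = P(O_{t+1}..O_T | q_t = S_i, lambda)."""
    N, T = len(A), len(obs)
    beta = [[1.0] * N for _ in range(T)]
    for t in range(T - 2, -1, -1):
        for i in range(N):
            beta[t][i] = sum(
                A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] for j in range(N)
            )
    return beta


def posterior_decode(obs, A, B, pi):
    """Return (gamma, path), where path[t] maximizes gamma[t][i] over i."""
    alpha = forward(obs, A, B, pi)
    beta = backward(obs, A, B)
    likelihood = sum(alpha[-1])
    gamma = [
        [a * b / likelihood for a, b in zip(alpha[t], beta[t])]
        for t in range(len(obs))
    ]
    path = [max(range(len(pi)), key=lambda i: g[i]) for g in gamma]
    return gamma, path


# Casino coin model: state 0 = Fair, state 1 = Unfair; symbol 0 = H, 1 = T.
A = [[0.9, 0.1], [0.2, 0.8]]
B = [[0.5, 0.5], [0.7, 0.3]]
pi = [0.5, 0.5]  # assumption: uniform start

obs = [0 if ch == "H" else 1 for ch in "HTHHTTHHHTHTHTHHTHHHHHHTHTHH"]
gamma, path = posterior_decode(obs, A, B, pi)
print("".join("FU"[s] for s in path))
```

Because each `path[t]` is chosen on its own, nothing forces consecutive states to be connected by a non-zero transition, which is the problem the slide points out.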

Q2: Optimality Criterion 2

Find the single best state sequence (path), i.e. maximize $P(Q \mid O, \lambda)$.

Definition: $\delta_t(i) = \max_{q_1, q_2, \ldots, q_{t-1}} P(q_1 q_2 \ldots q_{t-1},\ q_t = S_i,\ O_1 O_2 \ldots O_t \mid \lambda)$ is the highest probability of a state path for the partial observation sequence $O_1 O_2 O_3 \ldots O_t$ that ends in state $S_i$.

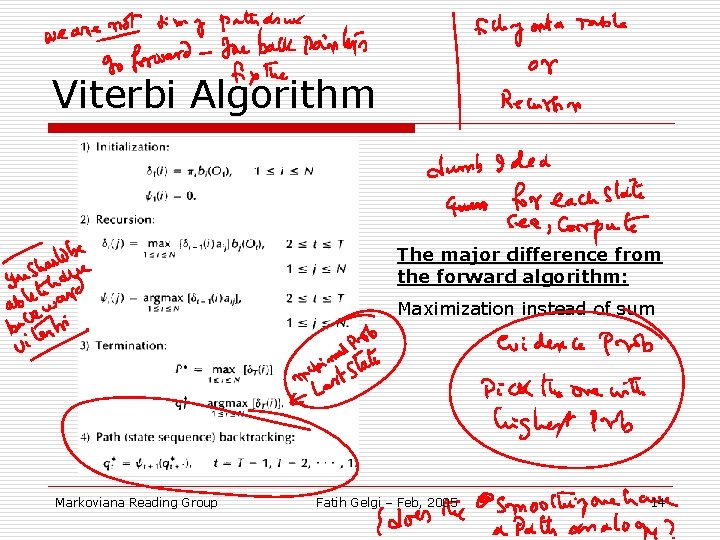

Viterbi Algorithm

The major difference from the forward algorithm: maximization instead of summation.

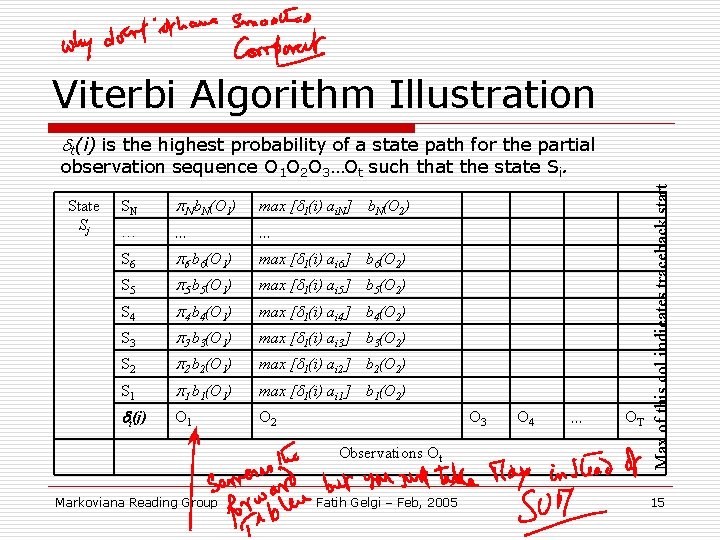

Viterbi Algorithm Illustration

$\delta_t(i)$ is the highest probability of a state path for the partial observation sequence $O_1 O_2 O_3 \ldots O_t$ that ends in state $S_i$.

Trellis of $\delta_t(j)$, with states $S_j$ as rows and observations $O_t$ as columns:

| State $S_j$ | $O_1$ | $O_2$ | $O_3$, $O_4$, …, $O_T$ |
|---|---|---|---|
| $S_N$ | $\pi_N\, b_N(O_1)$ | $\max_i \left[\delta_1(i)\, a_{iN}\right] b_N(O_2)$ | … |
| … | … | … | … |
| $S_2$ | $\pi_2\, b_2(O_1)$ | $\max_i \left[\delta_1(i)\, a_{i2}\right] b_2(O_2)$ | … |
| $S_1$ | $\pi_1\, b_1(O_1)$ | $\max_i \left[\delta_1(i)\, a_{i1}\right] b_1(O_2)$ | … |

The maximum of the last column ($O_T$) indicates where the traceback starts.

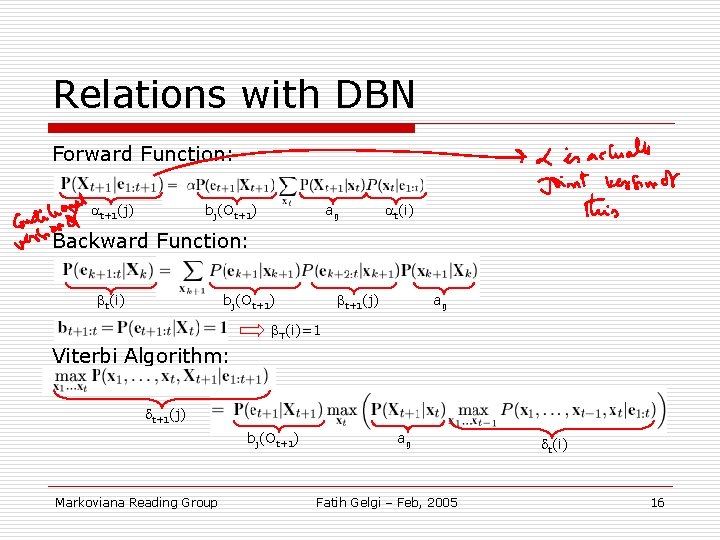
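
The trellis above translates into a short Viterbi sketch: the same loop structure as the forward algorithm, with `max` in place of `sum` and a back-pointer table for the traceback. The code is illustrative only; the H = 0 / T = 1 encoding and the uniform `pi` are assumptions not stated on the slides.

```python
def viterbi(obs, A, B, pi):
    """Return (best state path, probability of that path and obs)."""
    N = len(pi)
    # Initialization: delta_1(i) = pi_i * b_i(O_1)
    delta = [pi[i] * B[i][obs[0]] for i in range(N)]
    backptr = []  # backptr[t-1][j] = best predecessor of state j at time t
    for t in range(1, len(obs)):
        psi, new_delta = [], []
        for j in range(N):
            best_i = max(range(N), key=lambda i: delta[i] * A[i][j])
            psi.append(best_i)
            new_delta.append(delta[best_i] * A[best_i][j] * B[j][obs[t]])
        backptr.append(psi)
        delta = new_delta
    # Traceback starts from the best entry of the last column.
    last = max(range(N), key=lambda i: delta[i])
    path = [last]
    for psi in reversed(backptr):
        path.append(psi[path[-1]])
    path.reverse()
    return path, delta[last]


# Casino coin model: state 0 = Fair, state 1 = Unfair; symbol 0 = H, 1 = T.
A = [[0.9, 0.1], [0.2, 0.8]]
B = [[0.5, 0.5], [0.7, 0.3]]
pi = [0.5, 0.5]  # assumption: uniform start

obs = [0 if ch == "H" else 1 for ch in "HTHHTTHHHTHTHTHHTHHHHHHTHTHH"]
path, prob = viterbi(obs, A, B, pi)
print("".join("FU"[s] for s in path), prob)
```

For long sequences the product of probabilities underflows, so a practical version would work with log probabilities instead.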

Relations with DBN

- Forward function: $\alpha_{t+1}(j) = b_j(O_{t+1}) \sum_i a_{ij}\, \alpha_t(i)$
- Backward function: $\beta_t(i) = \sum_j \beta_{t+1}(j)\, b_j(O_{t+1})\, a_{ij}$, with $\beta_T(i) = 1$
- Viterbi algorithm: $\delta_{t+1}(j) = b_j(O_{t+1}) \max_i a_{ij}\, \delta_t(i)$

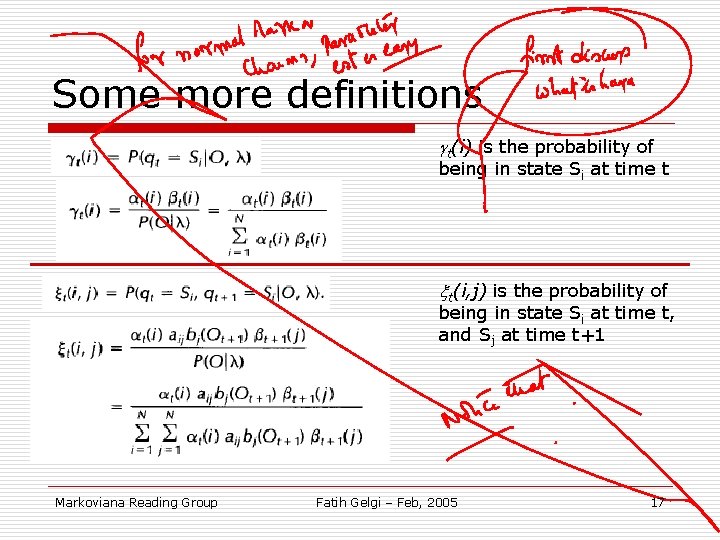

Some more definitions

- $\gamma_t(i)$ is the probability of being in state $S_i$ at time $t$.
- $\xi_t(i, j)$ is the probability of being in state $S_i$ at time $t$, and in state $S_j$ at time $t+1$.

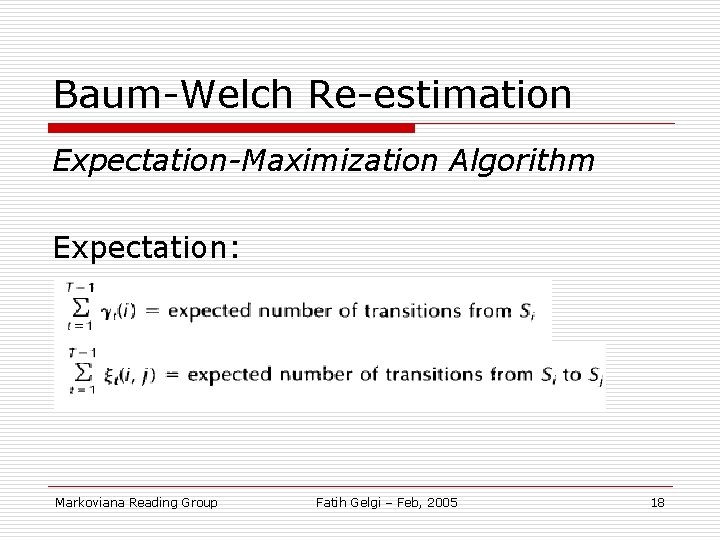
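
Both quantities are conditioned on the observation sequence and the model, and both can be expressed through the forward and backward variables. The formulas below are the standard ones from Rabiner's tutorial, given here as a reference summary rather than as a transcription of the slide.

```latex
\begin{align*}
\gamma_t(i) &= P(q_t = S_i \mid O, \lambda)
             = \frac{\alpha_t(i)\,\beta_t(i)}{P(O \mid \lambda)} \\
\xi_t(i,j) &= P(q_t = S_i,\ q_{t+1} = S_j \mid O, \lambda)
            = \frac{\alpha_t(i)\, a_{ij}\, b_j(O_{t+1})\, \beta_{t+1}(j)}{P(O \mid \lambda)} \\
\gamma_t(i) &= \sum_{j=1}^{N} \xi_t(i,j), \qquad 1 \le t \le T-1
\end{align*}
```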

Baum-Welch Re-estimation

Expectation-Maximization Algorithm

Expectation:

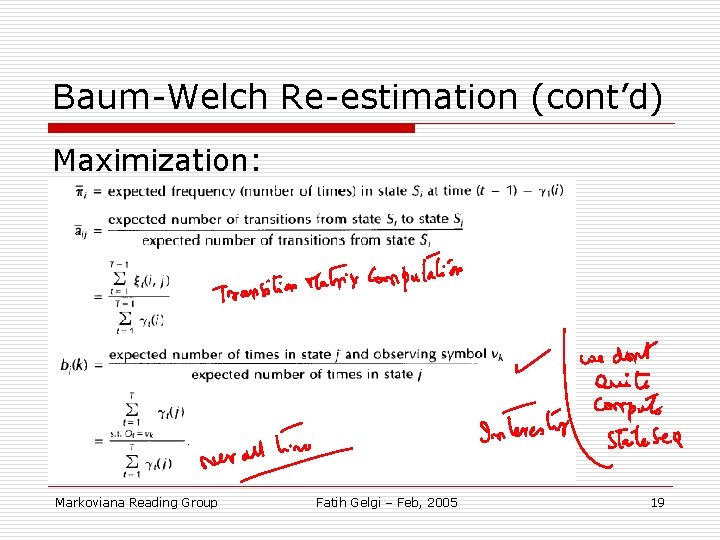

Baum-Welch Re-estimation (cont’d)

Maximization:

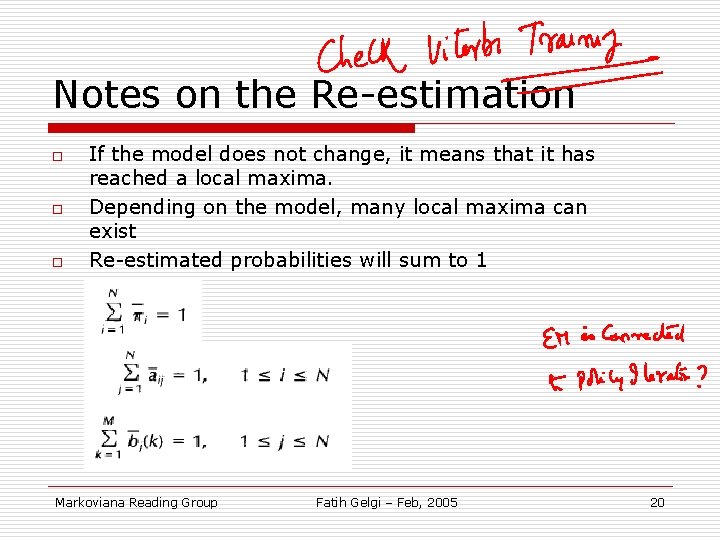
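
One full expectation and maximization pass can be sketched as follows. This uses the standard update rules from Rabiner's tutorial (new $\pi_i = \gamma_1(i)$; new $a_{ij}$ is the expected number of transitions from $S_i$ to $S_j$ divided by the expected number of transitions out of $S_i$; new $b_j(k)$ is the expected time in $S_j$ observing symbol $k$ divided by the expected time in $S_j$). It is an unscaled, single-sequence illustration, so it only suits short sequences. The function name `baum_welch_step`, the H = 0 / T = 1 encoding, and the starting `pi` are assumptions.

```python
def forward(obs, A, B, pi):
    N = len(pi)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(N)]]
    for t in range(1, len(obs)):
        prev = alpha[-1]
        alpha.append([sum(prev[i] * A[i][j] for i in range(N)) * B[j][obs[t]]
                      for j in range(N)])
    return alpha


def backward(obs, A, B):
    N, T = len(A), len(obs)
    beta = [[1.0] * N for _ in range(T)]
    for t in range(T - 2, -1, -1):
        for i in range(N):
            beta[t][i] = sum(A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j]
                             for j in range(N))
    return beta


def baum_welch_step(obs, A, B, pi):
    """One EM iteration; returns (new_A, new_B, new_pi, P(O | old model))."""
    N, M, T = len(pi), len(B[0]), len(obs)
    alpha, beta = forward(obs, A, B, pi), backward(obs, A, B)
    like = sum(alpha[-1])
    # Expectation: state and transition posteriors.
    gamma = [[alpha[t][i] * beta[t][i] / like for i in range(N)]
             for t in range(T)]
    xi = [[[alpha[t][i] * A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] / like
            for j in range(N)] for i in range(N)] for t in range(T - 1)]
    # Maximization: ratios of expected counts.
    new_pi = gamma[0][:]
    new_A = [[sum(xi[t][i][j] for t in range(T - 1)) /
              sum(gamma[t][i] for t in range(T - 1))
              for j in range(N)] for i in range(N)]
    new_B = [[sum(gamma[t][j] for t in range(T) if obs[t] == k) /
              sum(gamma[t][j] for t in range(T))
              for k in range(M)] for j in range(N)]
    return new_A, new_B, new_pi, like


# Start from the casino coin model (0 = Fair/H, 1 = Unfair/T).
A = [[0.9, 0.1], [0.2, 0.8]]
B = [[0.5, 0.5], [0.7, 0.3]]
pi = [0.5, 0.5]  # assumption: uniform start
obs = [0 if ch == "H" else 1 for ch in "HTHHTTHHHTHTHTHHTHHHHHHTHTHH"]

history = []
for _ in range(5):
    A, B, pi, like = baum_welch_step(obs, A, B, pi)
    history.append(like)
print(history)
```

Each entry of `history` is the likelihood of the model before that update, so the list should never decrease from one iteration to the next.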

Notes on the Re-estimation

- If the model does not change, it has reached a local maximum.
- Depending on the model, many local maxima can exist.
- Re-estimated probabilities will sum to 1.

Implementation issues

- Scaling
- Multiple observation sequences
- Initial parameter estimation
- Missing data
- Choice of model size and type

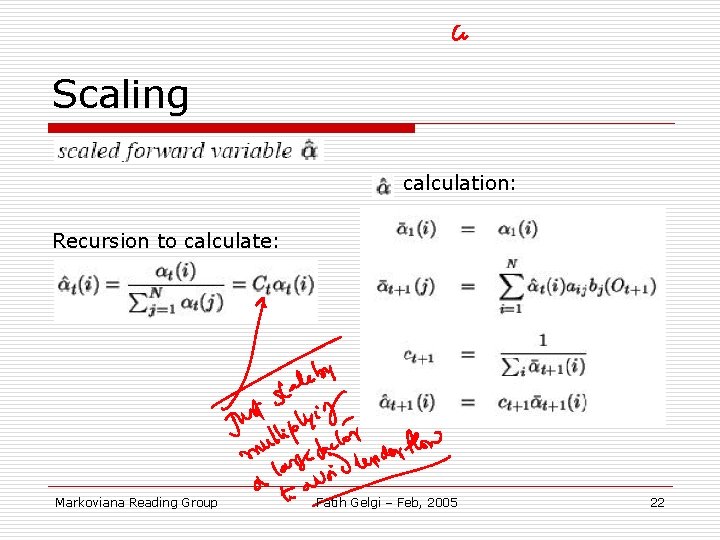

Scaling

calculation:

Recursion to calculate:

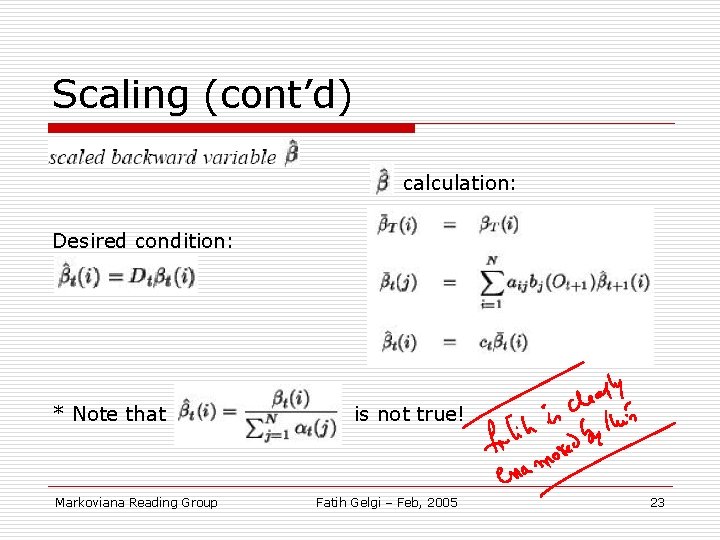
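
As a reference for the scaling procedure, the usual formulation from Rabiner's tutorial is summarized below. The symbols $\tilde{\alpha}$ (unnormalized), $\hat{\alpha}$ (scaled) and $c_t$ (scaling coefficient) follow common usage and are assumptions about notation, not a transcription of the slide.

```latex
\begin{align*}
\tilde{\alpha}_1(i) &= \pi_i\, b_i(O_1), &
\tilde{\alpha}_{t}(j) &= \Big[\sum_{i=1}^{N} \hat{\alpha}_{t-1}(i)\, a_{ij}\Big] b_j(O_{t}) \\
c_t &= \frac{1}{\sum_{i=1}^{N} \tilde{\alpha}_t(i)}, &
\hat{\alpha}_t(i) &= c_t\, \tilde{\alpha}_t(i) \\
\sum_{i=1}^{N} \hat{\alpha}_t(i) &= 1, &
\hat{\alpha}_t(i) &= \Big(\prod_{s=1}^{t} c_s\Big)\, \alpha_t(i) \\
\log P(O \mid \lambda) &= -\sum_{t=1}^{T} \log c_t
\end{align*}
```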

Scaling (cont’d)

calculation:

Desired condition:

* Note that […] is not true!

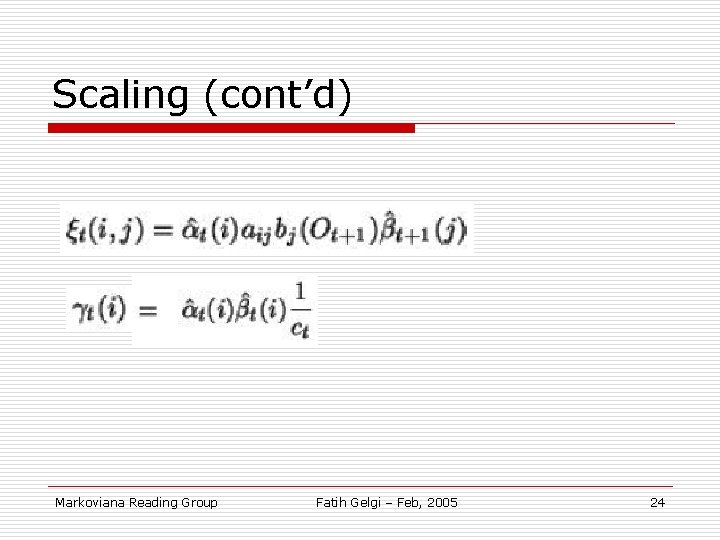

Scaling (cont’d)

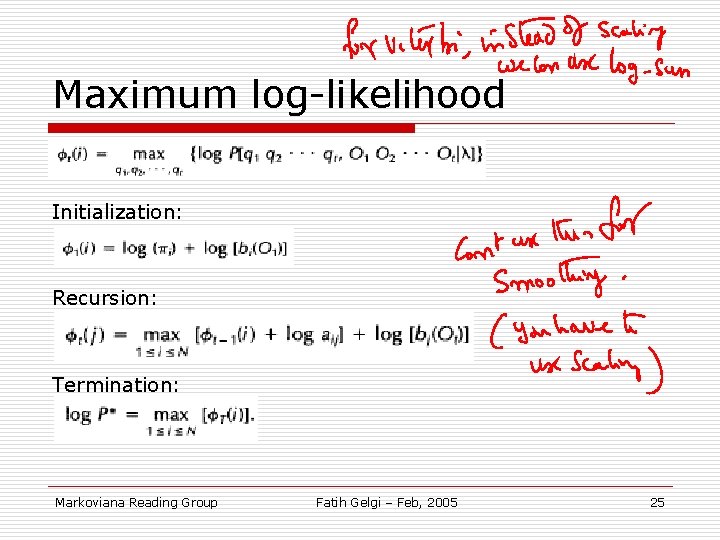
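
The effect of scaling is easiest to see in code: normalizing the forward variables at every step keeps them in a safe numeric range, and the log-likelihood is recovered from the normalizers. The sketch below is illustrative; the function name `forward_scaled`, the H = 0 / T = 1 encoding, and the uniform `pi` are assumptions.

```python
import math


def forward_scaled(obs, A, B, pi):
    """Return log P(O | lambda) using per-step normalization."""
    N = len(pi)
    log_likelihood = 0.0
    prev = None
    for t, o in enumerate(obs):
        if t == 0:
            raw = [pi[i] * B[i][o] for i in range(N)]
        else:
            raw = [sum(prev[i] * A[i][j] for i in range(N)) * B[j][o]
                   for j in range(N)]
        norm = sum(raw)                   # this is 1 / c_t
        prev = [x / norm for x in raw]    # scaled alphas sum to 1
        log_likelihood += math.log(norm)  # log P(O) = -sum_t log c_t
    return log_likelihood


# Casino coin model: state 0 = Fair, state 1 = Unfair; symbol 0 = H, 1 = T.
A = [[0.9, 0.1], [0.2, 0.8]]
B = [[0.5, 0.5], [0.7, 0.3]]
pi = [0.5, 0.5]  # assumption: uniform start

long_obs = [0, 1] * 5000  # 10,000 observations
print(forward_scaled(long_obs, A, B, pi))
```

An unscaled forward pass over `long_obs` would underflow to zero in double precision, whereas the scaled version returns a finite negative log-likelihood.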

Maximum log-likelihood

Initialization:

Recursion:

Termination:

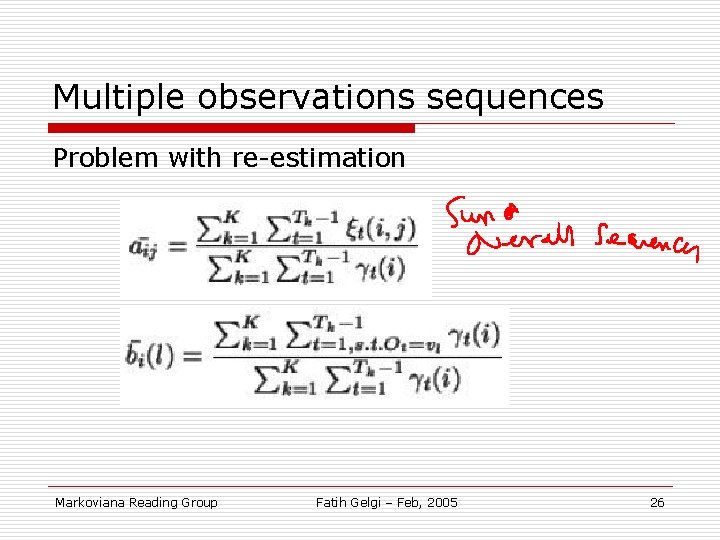
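
Assuming this slide refers to the log-domain form of the Viterbi algorithm described in Rabiner's tutorial, the three steps would read as follows. The symbol $\phi_t(i)$ for the log of $\delta_t(i)$ is a notational assumption.

```latex
\begin{align*}
\text{Initialization:}\quad & \phi_1(i) = \log \pi_i + \log b_i(O_1) \\
\text{Recursion:}\quad & \phi_t(j) = \max_{1 \le i \le N}\big[\phi_{t-1}(i) + \log a_{ij}\big] + \log b_j(O_t) \\
\text{Termination:}\quad & \log P^* = \max_{1 \le i \le N} \phi_T(i)
\end{align*}
```

Working with sums of logarithms avoids the underflow that products of many probabilities cause, so no separate scaling step is needed.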

Multiple observation sequences

Problem with re-estimation

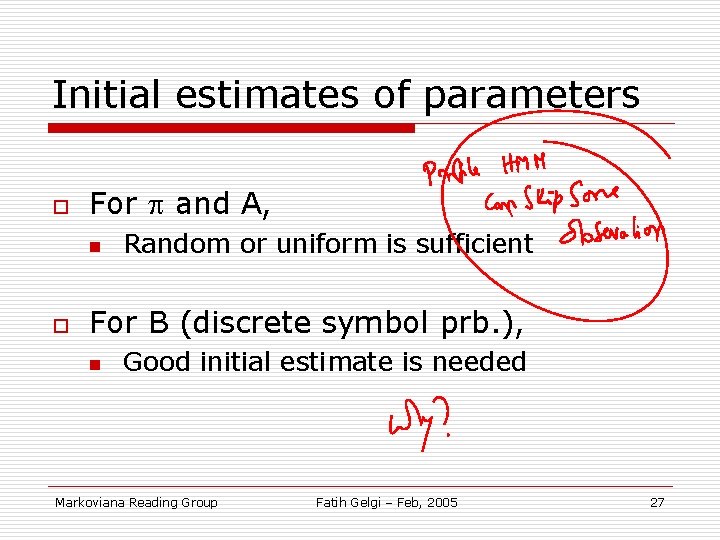
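
A common way to handle $K$ observation sequences, assuming they are independent of each other, is to pool the expected counts from all sequences before taking ratios. The formulas below restate Rabiner's multi-sequence re-estimation in terms of $\gamma$ and $\xi$; the superscript $(k)$ indexing the sequences and the symbol index $m$ are notational assumptions.

```latex
\begin{align*}
P(\mathbf{O} \mid \lambda) &= \prod_{k=1}^{K} P(O^{(k)} \mid \lambda) \\
\bar{a}_{ij} &= \frac{\sum_{k=1}^{K} \sum_{t=1}^{T_k - 1} \xi_t^{(k)}(i,j)}
                     {\sum_{k=1}^{K} \sum_{t=1}^{T_k - 1} \gamma_t^{(k)}(i)} \\
\bar{b}_j(m) &= \frac{\sum_{k=1}^{K} \sum_{t:\, O_t^{(k)} = m} \gamma_t^{(k)}(j)}
                     {\sum_{k=1}^{K} \sum_{t=1}^{T_k} \gamma_t^{(k)}(j)}
\end{align*}
```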

Initial estimates of parameters

- For $\pi$ and $A$: random or uniform initial estimates are sufficient.
- For $B$ (discrete symbol probabilities): a good initial estimate is needed.

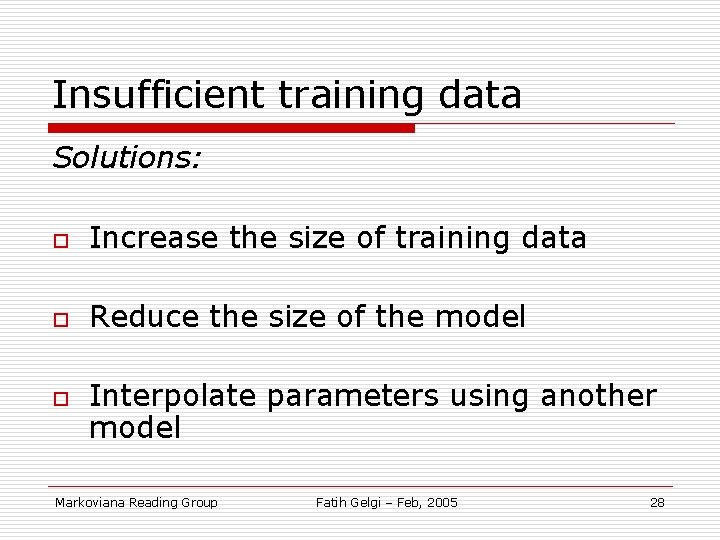

Insufficient training data

Solutions:

- Increase the size of the training data.
- Reduce the size of the model.
- Interpolate parameters using another model.

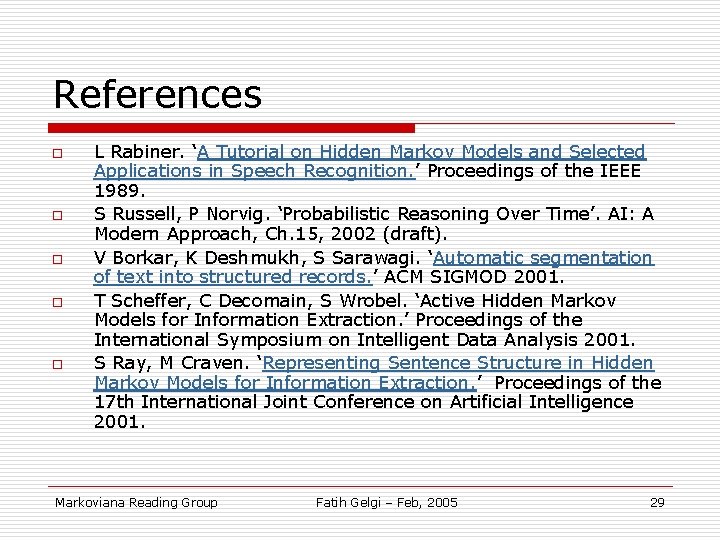

References

- L. Rabiner. ‘A Tutorial on Hidden Markov Models and Selected Applications in Speech Recognition.’ Proceedings of the IEEE, 1989.
- S. Russell, P. Norvig. ‘Probabilistic Reasoning Over Time.’ AI: A Modern Approach, Ch. 15, 2002 (draft).
- V. Borkar, K. Deshmukh, S. Sarawagi. ‘Automatic segmentation of text into structured records.’ ACM SIGMOD, 2001.
- T. Scheffer, C. Decomain, S. Wrobel. ‘Active Hidden Markov Models for Information Extraction.’ Proceedings of the International Symposium on Intelligent Data Analysis, 2001.
- S. Ray, M. Craven. ‘Representing Sentence Structure in Hidden Markov Models for Information Extraction.’ Proceedings of the 17th International Joint Conference on Artificial Intelligence, 2001.
