
HENP Grids and Networks: Global Virtual Organizations. Harvey B. Newman, Caltech. Internet2 Virtual Member Meeting, March 19, 2002. http://l3www.cern.ch/~newman/HENPGrids.Nets_I2Virt031902.ppt

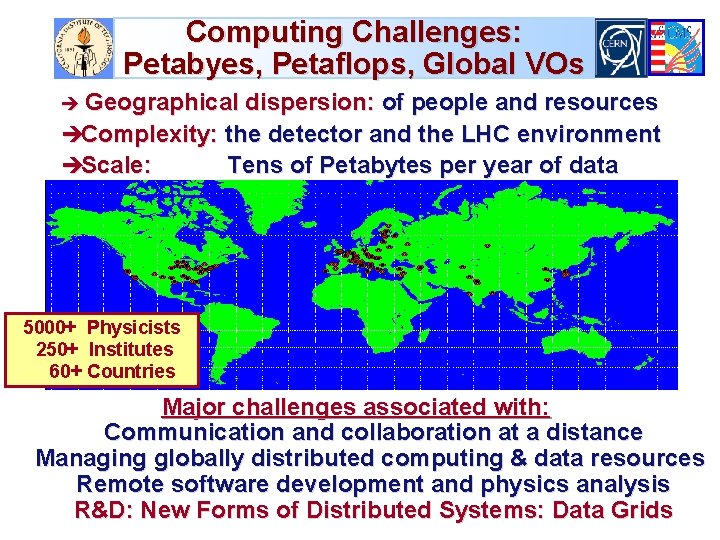

Computing Challenges: Petabytes, Petaflops, Global VOs

- Geographical dispersion: of people and resources
- Complexity: the detector and the LHC environment
- Scale: Tens of Petabytes per year of data

5000+ Physicists, 250+ Institutes, 60+ Countries.

Major challenges associated with:

- Communication and collaboration at a distance
- Managing globally distributed computing & data resources
- Remote software development and physics analysis

R&D: New Forms of Distributed Systems: Data Grids

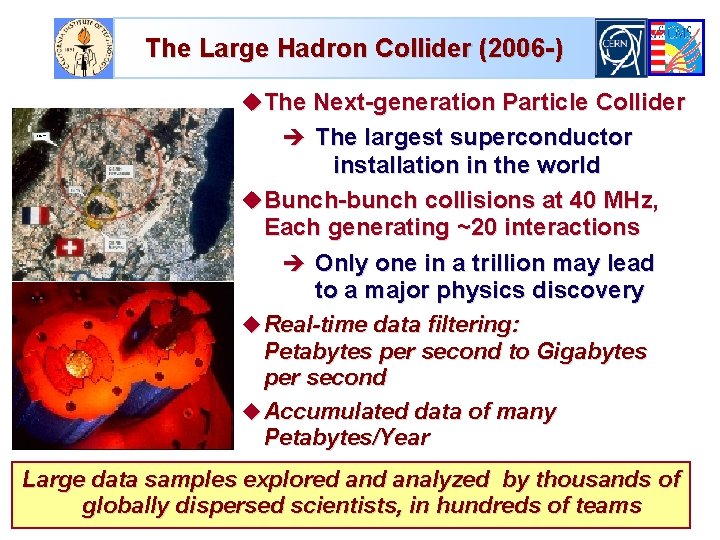

The Large Hadron Collider (2006-)

- The next-generation particle collider
  - The largest superconductor installation in the world
- Bunch-bunch collisions at 40 MHz, each generating ~20 interactions
  - Only one in a trillion may lead to a major physics discovery
- Real-time data filtering: Petabytes per second to Gigabytes per second
- Accumulated data of many Petabytes/Year

Large data samples are explored and analyzed by thousands of globally dispersed scientists, in hundreds of teams.
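
The rates on this slide can be checked with a little arithmetic. The sketch below is an illustrative back-of-envelope calculation, not part of the original talk: it multiplies the 40 MHz crossing rate by ~20 interactions per crossing, and it assumes round values of 1 PB/s into the filter and 1 GB/s out of it, since the slide gives only orders of magnitude.

```python
# Back-of-envelope LHC rates from the figures on this slide (illustrative only).
BUNCH_CROSSING_RATE_HZ = 40e6    # bunch-bunch collisions at 40 MHz
INTERACTIONS_PER_CROSSING = 20   # ~20 interactions per crossing


def interaction_rate_hz(crossing_hz: float, per_crossing: float) -> float:
    """Total interactions per second."""
    return crossing_hz * per_crossing


def filter_reduction(input_bytes_per_s: float, output_bytes_per_s: float) -> float:
    """Factor by which real-time filtering shrinks the data rate."""
    return input_bytes_per_s / output_bytes_per_s


rate = interaction_rate_hz(BUNCH_CROSSING_RATE_HZ, INTERACTIONS_PER_CROSSING)
print(f"{rate:.1e} interactions/s")  # 8.0e+08, i.e. of order 10^9
# Assumed round numbers: ~1 PB/s into the filter, ~1 GB/s out of it.
print(f"data-rate reduction ~{filter_reduction(1e15, 1e9):.0e}")  # 1e+06
```

The ~8 × 10^8 interactions per second is consistent with the 10^9 events/sec quoted on the Higgs-decay slide.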

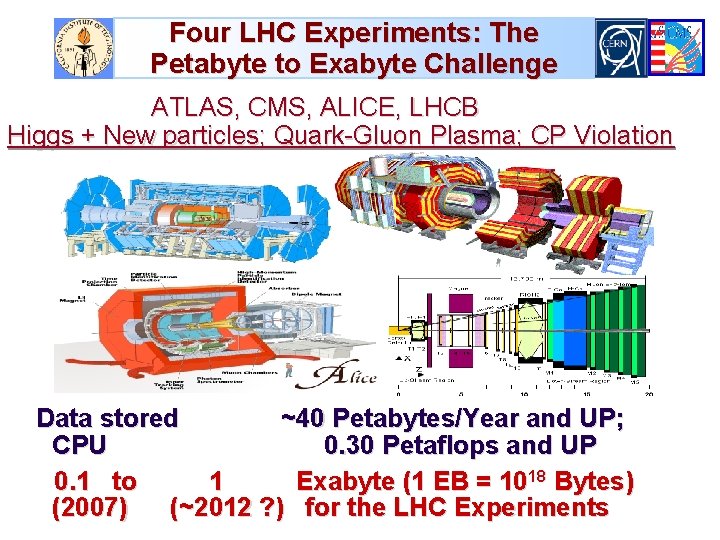

Four LHC Experiments: The Petabyte to Exabyte Challenge

- ATLAS, CMS, ALICE, LHCb: Higgs + New particles; Quark-Gluon Plasma; CP Violation
- Data stored: ~40 Petabytes/Year and UP; CPU: 0.30 Petaflops and UP
- 0.1 Exabyte (2007) to 1 Exabyte (~2012?) for the LHC Experiments (1 EB = 10^18 Bytes)
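
To relate the storage figure to network needs, the sketch below converts ~40 PB/year into an average transfer rate. It is an illustration only, and it assumes the data flow evenly over a full calendar year, which real accelerator running does not; peak rates would be higher.

```python
# Average network rate implied by a yearly data volume (illustrative).
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year


def average_rate_gbps(bytes_per_year: float) -> float:
    """Sustained rate in Gbit/s if the volume were spread evenly over a year."""
    return bytes_per_year * 8 / SECONDS_PER_YEAR / 1e9


print(f"40 PB/year -> {average_rate_gbps(40e15):.1f} Gbps")  # ~10.1 Gbps
```

Even this idealized average lands at the 10 Gbps scale, the link speed the later slides target for ~2003-2005.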

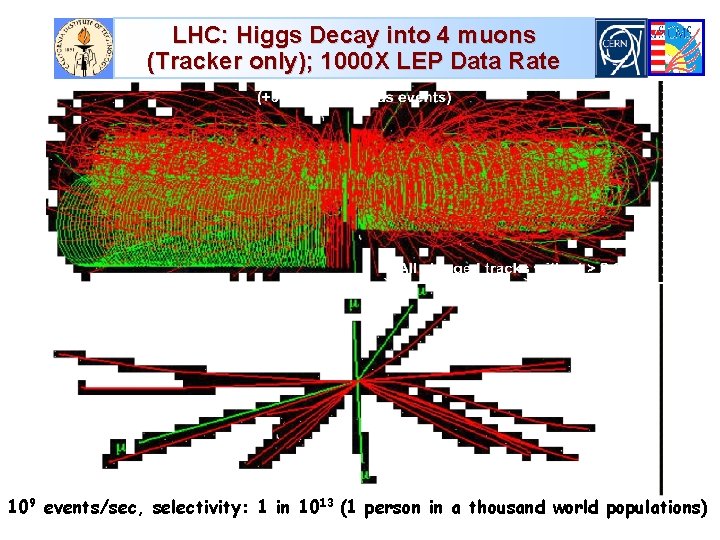

LHC: Higgs Decay into 4 muons (Tracker only); 1000 X LEP Data Rate. 10^9 events/sec, selectivity: 1 in 10^13 (1 person in a thousand world populations)
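
Taken together, the event rate and selectivity give the mean time between selected events. The sketch below is a simple illustration using only the two numbers on this slide.

```python
# Time between selected events at the quoted rate and selectivity (illustrative).
EVENT_RATE_HZ = 1e9   # 10^9 events/sec
SELECTIVITY = 1e-13   # 1 in 10^13


def seconds_between_selected(rate_hz: float, selectivity: float) -> float:
    """Mean interval between events passing a selection of the given fraction."""
    return 1.0 / (rate_hz * selectivity)


interval = seconds_between_selected(EVENT_RATE_HZ, SELECTIVITY)
print(f"one selected event every {interval:.0f} s (~{interval / 3600:.1f} h)")
```

That is roughly one candidate every 10^4 seconds, or a few hours of running. The population analogy works at the same order of magnitude: a thousand times a world population of about 6 × 10^9 is about 6 × 10^12.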

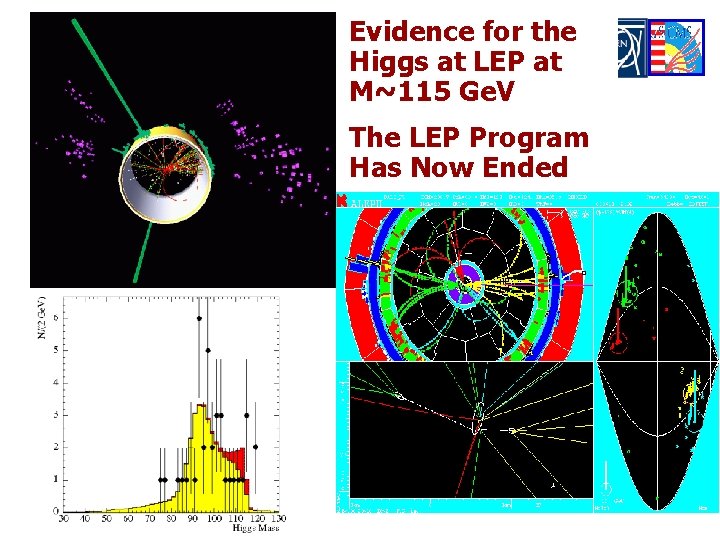

Evidence for the Higgs at LEP at M ~ 115 GeV. The LEP Program Has Now Ended.

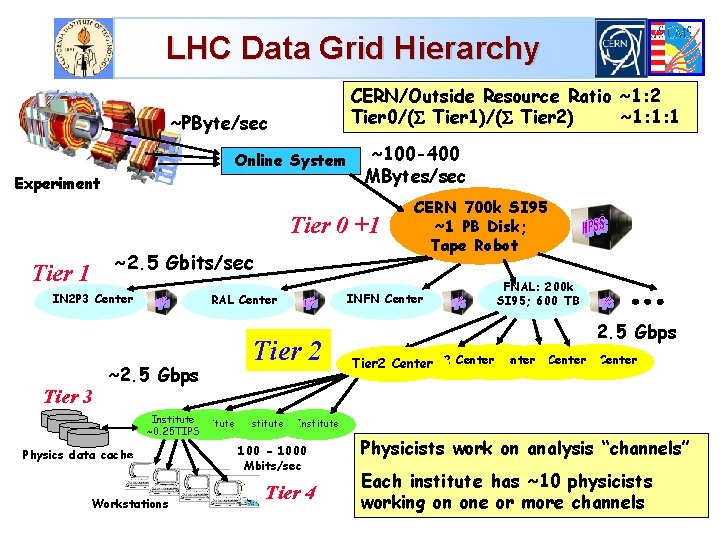

LHC Data Grid Hierarchy

- CERN/Outside Resource Ratio ~1:2; Tier 0/(Σ Tier 1)/(Σ Tier 2) ~1:1:1
- Experiment → Online System: ~PByte/sec
- Online System → Tier 0+1 (CERN: 700k SI95; ~1 PB Disk; Tape Robot): ~100-400 MBytes/sec
- Tier 0+1 → Tier 1 (FNAL: 200k SI95, 600 TB; IN2P3 Center; INFN Center; RAL Center): ~2.5 Gbits/sec
- Tier 1 → Tier 2 Centers: ~2.5 Gbps
- Tier 2 → Tier 3 Institutes (~0.25 TIPS; physics data cache): ~2.5 Gbps
- Tier 3 → Tier 4 Workstations: 100-1000 Mbits/sec

Physicists work on analysis “channels”. Each institute has ~10 physicists working on one or more channels.
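
The two resource ratios on this slide can be checked against each other. The sketch below is a rough illustration: it assumes the CERN figure of 700k SI95 stands for the Tier 0 share (the slide actually lists it for Tier 0+1), and `tier_capacities` is a hypothetical helper written for this example.

```python
# Split of computing capacity implied by the ~1:1:1 tier ratio (illustrative).
def tier_capacities(tier0_si95: float, ratio=(1, 1, 1)) -> dict:
    """Capacities of Tier 0, all Tier 1 centers, and all Tier 2 centers combined."""
    t0, t1, t2 = ratio
    unit = tier0_si95 / t0
    return {"tier0": tier0_si95, "sum_tier1": unit * t1, "sum_tier2": unit * t2}


caps = tier_capacities(700_000)  # assumption: CERN's 700k SI95 taken as the Tier 0 share
outside = caps["sum_tier1"] + caps["sum_tier2"]
print(caps)
print(f"CERN : outside = 1 : {outside / caps['tier0']:.0f}")
```

A 1:1:1 split puts twice CERN's capacity outside CERN, which matches the quoted ~1:2 CERN/outside ratio; FNAL's 200k SI95 would be one contribution to the Tier 1 sum.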

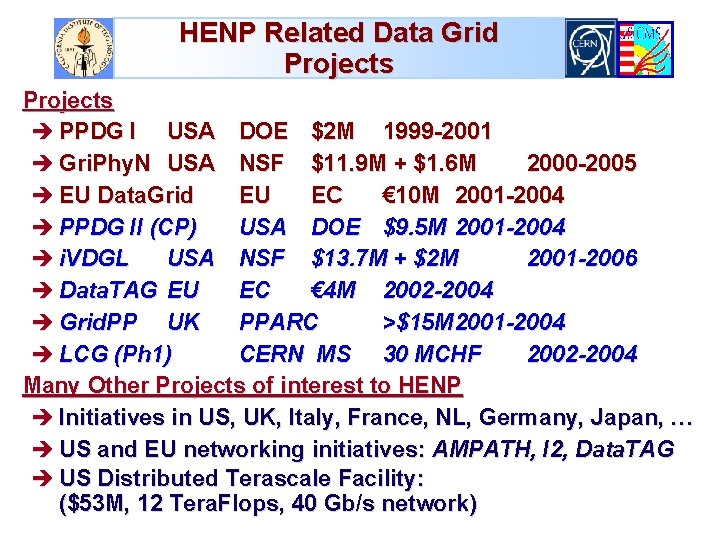

HENP Related Data Grid Projects

| Project | Region | Agency | Funding | Period |
|---|---|---|---|---|
| PPDG I | USA | DOE | $2M | 1999-2001 |
| GriPhyN | USA | NSF | $11.9M + $1.6M | 2000-2005 |
| EU DataGrid | EU | EC | €10M | 2001-2004 |
| PPDG II (CP) | USA | DOE | $9.5M | 2001-2004 |
| iVDGL | USA | NSF | $13.7M + $2M | 2001-2006 |
| DataTAG | EU | EC | €4M | 2002-2004 |
| GridPP | UK | PPARC | >$15M | 2001-2004 |
| LCG (Ph 1) | CERN | MS | 30 MCHF | 2002-2004 |

Many Other Projects of interest to HENP:

- Initiatives in US, UK, Italy, France, NL, Germany, Japan, …
- US and EU networking initiatives: AMPATH, I2, DataTAG
- US Distributed Terascale Facility: ($53M, 12 TeraFlops, 40 Gb/s network)

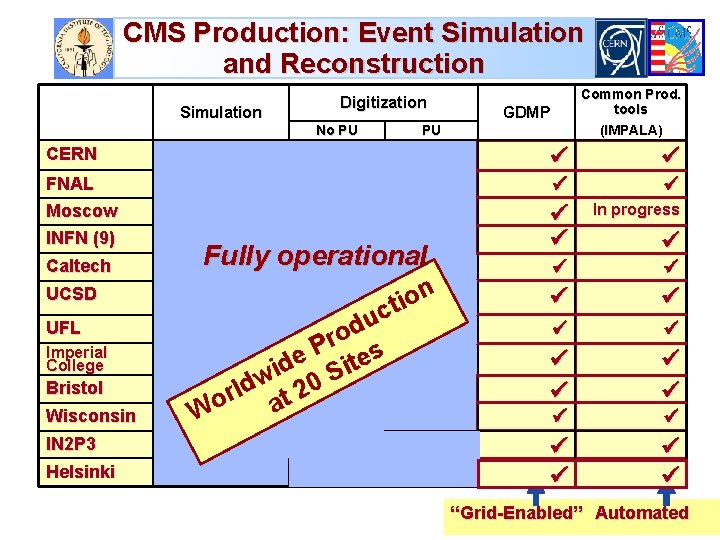

CMS Production: Event Simulation and Reconstruction

Figure: simulation and digitization (no PU and with PU) using common production tools (IMPALA) and GDMP; “Grid-Enabled”, automated production at 20 sites worldwide: CERN, FNAL, Moscow, INFN (9), Caltech, UCSD, UFL, Imperial College, Bristol, Wisconsin, IN2P3, Helsinki, each marked as fully operational or in progress.

Next Generation Networks for Experiments: Goals and Needs

- Providing rapid access to event samples and subsets from massive data stores
  - From ~400 Terabytes in 2001, ~Petabytes by 2002, ~100 Petabytes by 2007, to ~1 Exabyte by ~2012
- Providing analyzed results with rapid turnaround, by coordinating and managing the LIMITED computing, data handling and NETWORK resources effectively
- Enabling rapid access to the data and the collaboration
  - Across an ensemble of networks of varying capability
- Advanced integrated applications, such as Data Grids, rely on seamless operation of our LANs and WANs
  - With reliable, quantifiable (monitored), high performance
  - For “Grid-enabled” event processing and data analysis, and collaboration

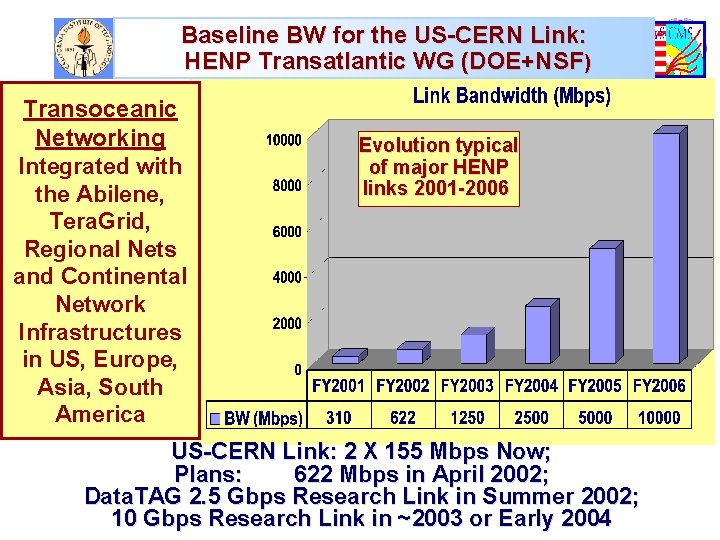

Baseline BW for the US-CERN Link: HENP Transatlantic WG (DOE+NSF)

Transoceanic networking integrated with the Abilene, TeraGrid, regional nets and continental network infrastructures in the US, Europe, Asia and South America. Evolution typical of major HENP links, 2001-2006:

- US-CERN link: 2 X 155 Mbps now
- Plans: 622 Mbps in April 2002
- DataTAG 2.5 Gbps research link in Summer 2002
- 10 Gbps research link in ~2003 or early 2004

![Transatlantic Net WG (HN, L. Price) bandwidth requirements](http://slidetodoc.com/presentation_image/ab1dc4c0b83a296d331545f660a5a11e/image-12.jpg)

Transatlantic Net WG (HN, L. Price) Bandwidth Requirements [*]. [*] Installed BW; maximum link occupancy of 50% assumed. See http://gate.hep.anl.gov/lprice/TAN

![Links required to US labs and transatlantic](http://slidetodoc.com/presentation_image/ab1dc4c0b83a296d331545f660a5a11e/image-13.jpg)

Links Required to US Labs and Transatlantic [*]. [*] Maximum link occupancy of 50% assumed.
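
The 50% maximum-occupancy rule on these two slides means the installed capacity must be twice the required throughput. The sketch below illustrates that rule; `installed_bandwidth` and `smallest_link` are hypothetical helpers, the list of nominal link sizes is taken from speeds mentioned in these slides, and 600 Mbps (the BaBar throughput figure quoted in the talk) is used as the example input.

```python
# Installed bandwidth needed under a maximum-occupancy rule (illustrative).
NOMINAL_LINKS_MBPS = [155, 622, 2_500, 10_000]  # link sizes mentioned in these slides


def installed_bandwidth(required_mbps: float, max_occupancy: float = 0.5) -> float:
    """Capacity to install so that average use stays at or below max_occupancy."""
    if not 0 < max_occupancy <= 1:
        raise ValueError("max_occupancy must be in (0, 1]")
    return required_mbps / max_occupancy


def smallest_link(required_mbps: float, max_occupancy: float = 0.5):
    """Smallest nominal link that satisfies the occupancy rule, or None."""
    need = installed_bandwidth(required_mbps, max_occupancy)
    return next((link for link in NOMINAL_LINKS_MBPS if link >= need), None)


print(installed_bandwidth(600))  # 1200.0
print(smallest_link(600))        # 2500
```

Sustaining 600 Mbps at no more than 50% occupancy therefore calls for 1.2 Gbps of capacity, which in practice means moving up to a 2.5 Gbps link.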

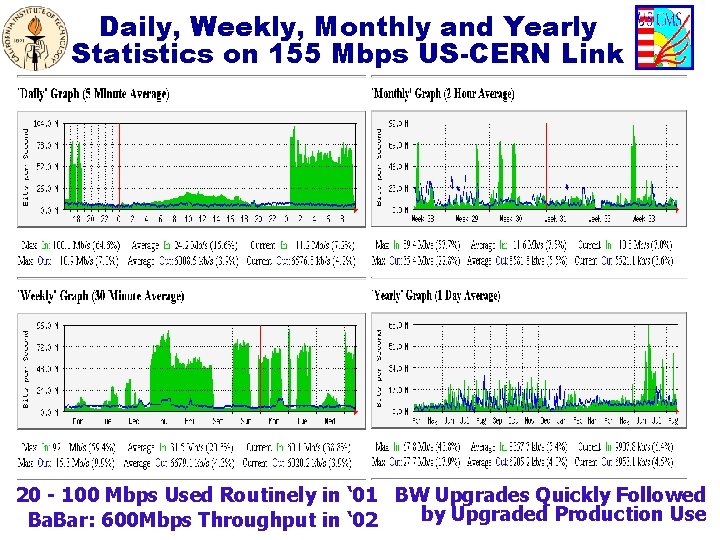

Daily, Weekly, Monthly and Yearly Statistics on the 155 Mbps US-CERN Link

- 20-100 Mbps used routinely in ’01
- BW upgrades quickly followed by upgraded production use
- BaBar: 600 Mbps throughput in ’02

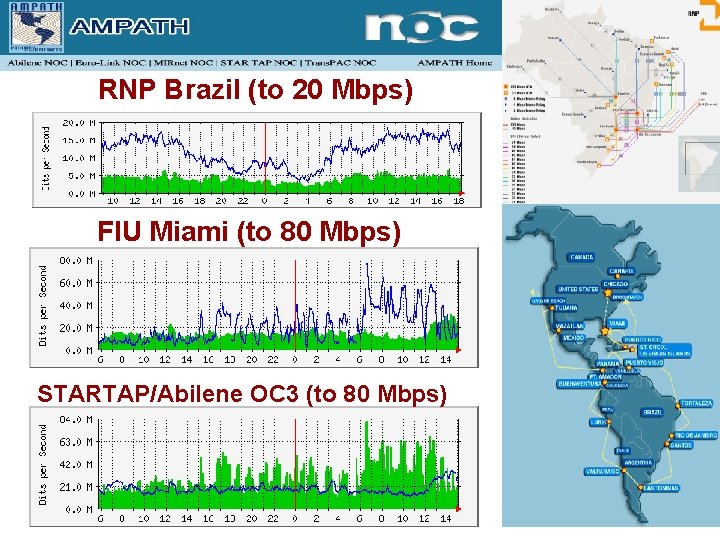

RNP Brazil (to 20 Mbps); FIU Miami (to 80 Mbps); STARTAP/Abilene OC3 (to 80 Mbps)

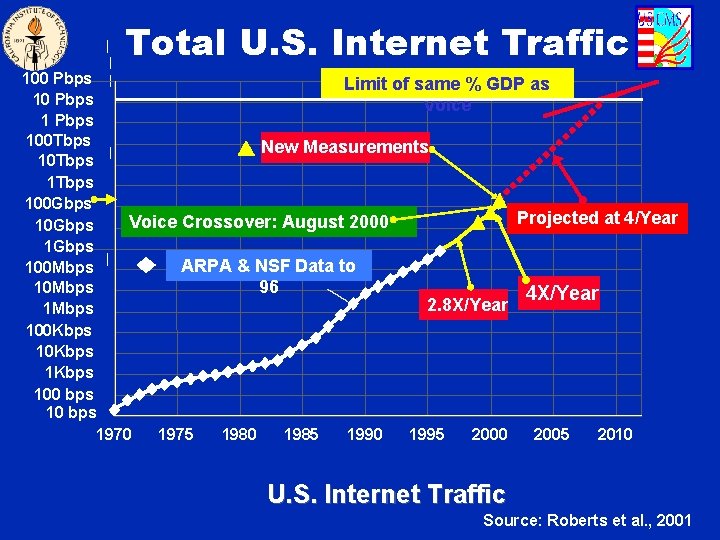

Total U.S. Internet Traffic (Source: Roberts et al., 2001)

Figure: log-scale plot of traffic, 1970-2010 (100 bps to 100 Pbps). Series: ARPA & NSF data to ’96; new measurements; projected at 4X/year. Annotations: 4X/year and 2.8X/year growth; voice crossover in August 2000; limit of same % GDP as voice.
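
The gap between the two growth rates on this chart compounds quickly. The sketch below is an illustration only: it computes how many years of steady growth at each labeled rate are needed for a 1000X increase, using the standard compound-growth relation years = log(factor) / log(rate).

```python
import math


def years_to_grow(factor: float, annual_multiplier: float) -> float:
    """Years of compound growth needed to multiply traffic by `factor`."""
    if annual_multiplier <= 1:
        raise ValueError("annual_multiplier must exceed 1")
    return math.log(factor) / math.log(annual_multiplier)


for rate in (4.0, 2.8):  # growth rates labeled on the chart
    print(f"{rate}x/year: 1000x in {years_to_grow(1000, rate):.1f} years")
```

At 4X/year a 1000X increase takes about 5 years; at 2.8X/year it takes closer to 7.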

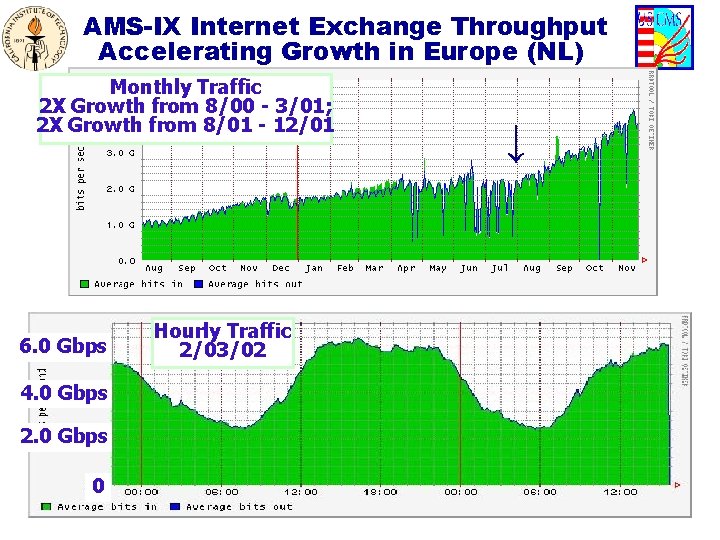

AMS-IX Internet Exchange Throughput: Accelerating Growth in Europe (NL)

- Monthly traffic: 2X growth from 8/00 to 3/01; 2X growth from 8/01 to 12/01
- Hourly traffic on 2/03/02 (plot scale 0 to 6.0 Gbps)
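
"Accelerating" can be made concrete by annualizing each doubling. The sketch below assumes steady compound growth within each interval (7 months for 8/00-3/01, 4 months for 8/01-12/01); `annual_factor` is a hypothetical helper written for this illustration.

```python
def annual_factor(growth: float, months: float) -> float:
    """Annualized multiplier for `growth` observed over `months` months."""
    return growth ** (12 / months)


print(f"8/00-3/01 (7 months): {annual_factor(2, 7):.1f}x/year")   # ~3.3x
print(f"8/01-12/01 (4 months): {annual_factor(2, 4):.1f}x/year")  # 8.0x
```

The same 2X step taking 4 months instead of 7 corresponds to an annual rate rising from roughly 3.3X to 8X.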

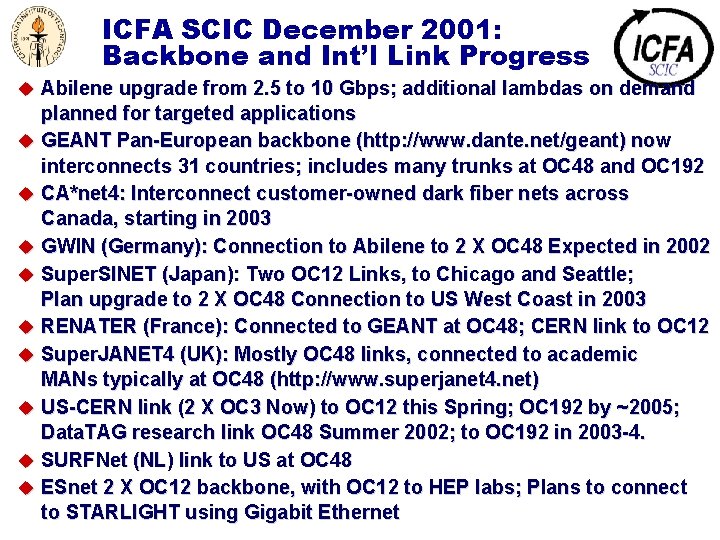

ICFA SCIC December 2001: Backbone and Int’l Link Progress

- Abilene upgrade from 2.5 to 10 Gbps; additional lambdas on demand planned for targeted applications
- GEANT pan-European backbone (http://www.dante.net/geant) now interconnects 31 countries; includes many trunks at OC48 and OC192
- CA*net 4: Interconnect customer-owned dark fiber nets across Canada, starting in 2003
- GWIN (Germany): Connection to Abilene to 2 X OC48 expected in 2002
- SuperSINET (Japan): Two OC12 links, to Chicago and Seattle; plan upgrade to 2 X OC48 connection to US West Coast in 2003
- RENATER (France): Connected to GEANT at OC48; CERN link to OC12
- SuperJANET4 (UK): Mostly OC48 links, connected to academic MANs typically at OC48 (http://www.superjanet4.net)
- US-CERN link (2 X OC3 now) to OC12 this Spring; OC192 by ~2005; DataTAG research link OC48 Summer 2002; to OC192 in 2003-4
- SURFnet (NL) link to US at OC48
- ESnet 2 X OC12 backbone, with OC12 to HEP labs; plans to connect to STARLIGHT using Gigabit Ethernet
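
These slides mix SONET "OC-n" names, SDH "STM-m" labels and rounded speeds (155 Mbps, 622 Mbps, 2.5 Gbps, 10 Gbps). The sketch below converts between them using the standard OC-1 line rate of 51.84 Mbps and the STM-m = OC-3m correspondence. These are line rates; the usable payload is somewhat lower.

```python
OC1_MBPS = 51.84  # SONET OC-1 line rate in Mbit/s


def oc_rate_mbps(n: int) -> float:
    """Line rate of an OC-n SONET circuit in Mbit/s."""
    return n * OC1_MBPS


def stm_to_oc(m: int) -> int:
    """SDH STM-m has the same line rate as SONET OC-(3m)."""
    return 3 * m


for n in (3, 12, 48, 192):
    print(f"OC-{n}: {oc_rate_mbps(n):8.2f} Mbps")
print(f"STM-16 = OC-{stm_to_oc(16)}")  # STM-16 = OC-48
```

So OC3 is the "155 Mbps" link, OC12 the "622 Mbps" link, OC48 the "2.5 Gbps" link and OC192 the "10 Gbps" link.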

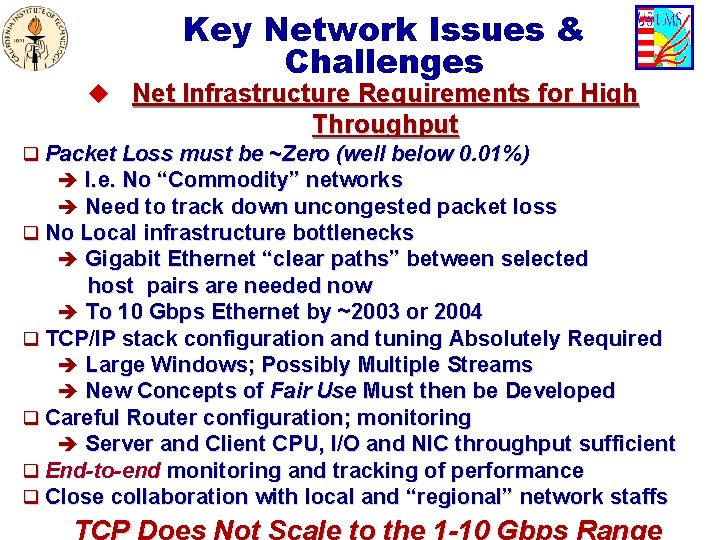

Key Network Issues & Challenges

- Net infrastructure requirements for high throughput
  - Packet loss must be ~zero (well below 0.01%)
    - i.e. no “commodity” networks
    - Need to track down uncongested packet loss
  - No local infrastructure bottlenecks
    - Gigabit Ethernet “clear paths” between selected host pairs are needed now
    - To 10 Gbps Ethernet by ~2003 or 2004
  - TCP/IP stack configuration and tuning absolutely required
    - Large windows; possibly multiple streams
    - New concepts of fair use must then be developed
  - Careful router configuration; monitoring
    - Server and client CPU, I/O and NIC throughput sufficient
  - End-to-end monitoring and tracking of performance
  - Close collaboration with local and “regional” network staffs

TCP does not scale to the 1-10 Gbps range.
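
Why "large windows" are required follows from the bandwidth-delay product: a TCP sender can have at most one window of data in flight per round trip. The sketch below assumes a 120 ms transatlantic round-trip time (a hypothetical round number, not from the slide) and uses 65,535 bytes, the largest window TCP allows without the RFC 1323 window-scale option.

```python
# Bandwidth-delay product and window-limited TCP throughput (illustrative).
RTT_S = 0.120            # assumed transatlantic round-trip time, seconds
CLASSIC_WINDOW = 65_535  # largest TCP window without window scaling, bytes


def bdp_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bytes that must be in flight to keep a path of this bandwidth full."""
    return bandwidth_bps * rtt_s / 8


def window_limited_bps(window_bytes: float, rtt_s: float) -> float:
    """Throughput ceiling when the TCP window, not the link, is the limit."""
    return window_bytes * 8 / rtt_s


need = bdp_bytes(1e9, RTT_S)
print(f"1 Gbps over {RTT_S * 1e3:.0f} ms needs a {need / 1e6:.0f} MB window")
print(f"a 64 KB window caps one stream at "
      f"{window_limited_bps(CLASSIC_WINDOW, RTT_S) / 1e6:.1f} Mbps")
print(f"with 8 parallel streams, each needs ~{need / 8 / 1e6:.1f} MB")
```

An untuned stream on such a path tops out near 4 Mbps, far below a gigabit link; splitting the transfer over several streams divides the per-stream window, which is why multiple streams raise the fair-use question on the slide. In Python, larger socket buffers can be requested with `sock.setsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF, n)` (and `SO_RCVBUF`), though the operating system may cap or adjust the value.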

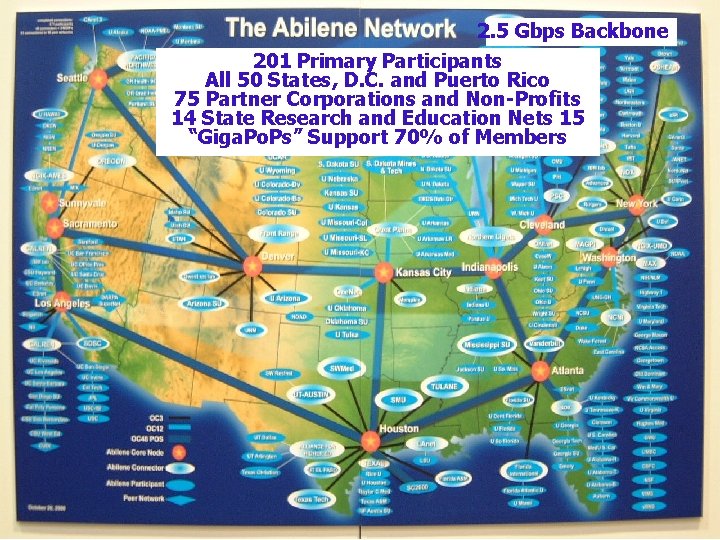

2.5 Gbps Backbone

- 201 Primary Participants: All 50 States, D.C. and Puerto Rico
- 75 Partner Corporations and Non-Profits
- 14 State Research and Education Nets
- 15 “GigaPoPs” Support 70% of Members

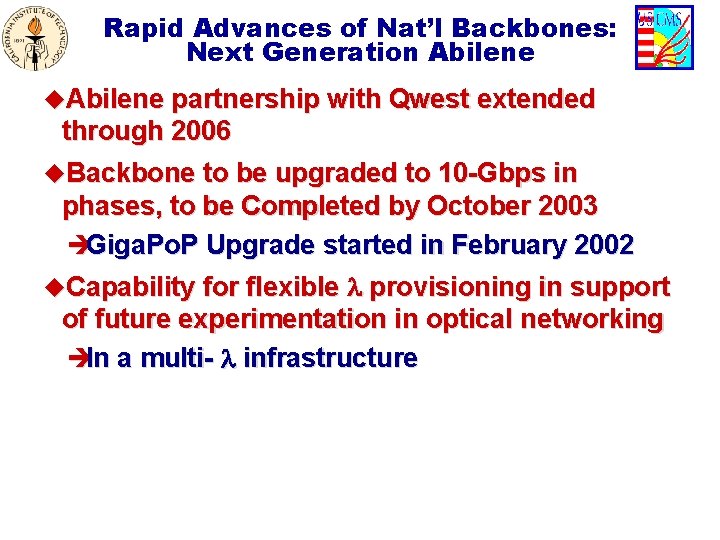

Rapid Advances of Nat’l Backbones: Next Generation Abilene

- Abilene partnership with Qwest extended through 2006
- Backbone to be upgraded to 10 Gbps in phases, to be completed by October 2003
  - GigaPoP upgrade started in February 2002
- Capability for flexible provisioning in support of future experimentation in optical networking
  - In a multi- infrastructure

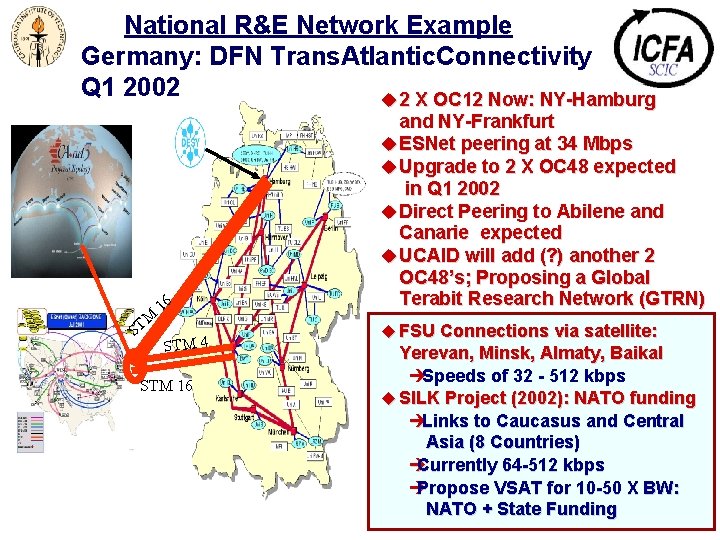

National R&E Network Example, Germany: DFN TransAtlantic Connectivity, Q1 2002

- 2 X OC12 now: NY-Hamburg and NY-Frankfurt (diagram links labeled STM 4 and STM 16)
- ESnet peering at 34 Mbps
- Upgrade to 2 X OC48 expected in Q1 2002
- Direct peering to Abilene and CANARIE expected
- UCAID will add (?) another 2 OC48s; proposing a Global Terabit Research Network (GTRN)
- FSU connections via satellite: Yerevan, Minsk, Almaty, Baikal
  - Speeds of 32-512 kbps
- SILK Project (2002): NATO funding
  - Links to Caucasus and Central Asia (8 countries)
  - Currently 64-512 kbps
  - Propose VSAT for 10-50 X BW: NATO + state funding

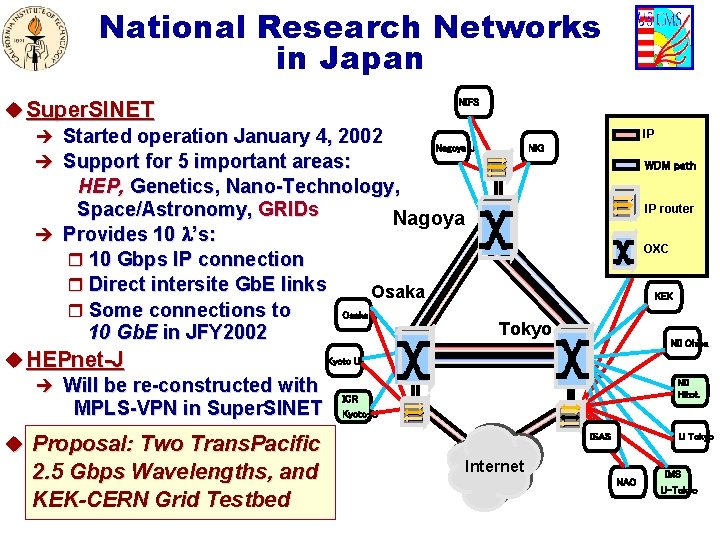

National Research Networks in Japan

- SuperSINET
  - Started operation January 4, 2002
  - Support for 5 important areas: HEP, Genetics, Nano-Technology, Space/Astronomy, GRIDs
  - Provides 10 λ's:
    - 10 Gbps IP connection
    - Direct intersite GbE links
    - Some connections to 10 GbE in JFY 2002
- HEPnet-J
  - Will be re-constructed with MPLS-VPN in SuperSINET
- Proposal: Two TransPacific 2.5 Gbps wavelengths, and KEK-CERN Grid Testbed

Figure: SuperSINET map (legend: IP, WDM path, IP router, OXC) linking NIFS, Nagoya U, Osaka U, Kyoto U, ICR Kyoto-U, NIG, Tohoku U, KEK, NII Chiba, NII Hitot., ISAS, U Tokyo, NAO, IMS U-Tokyo and the Internet.

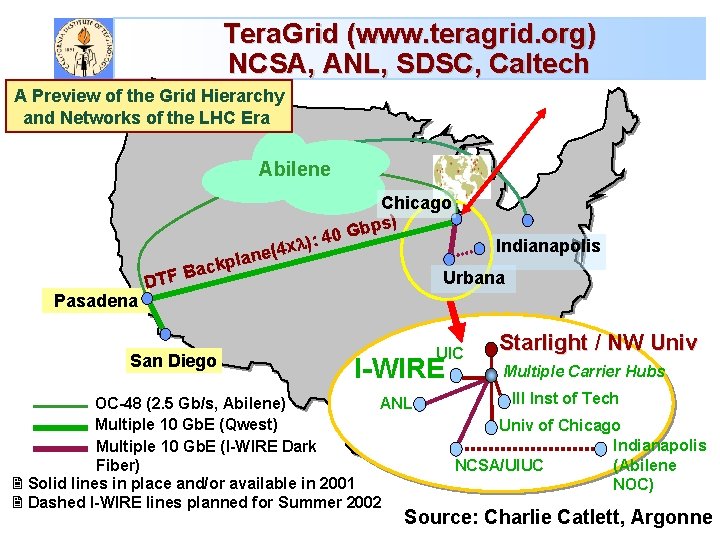

TeraGrid (www.teragrid.org): NCSA, ANL, SDSC, Caltech. A Preview of the Grid Hierarchy and Networks of the LHC Era

Figure: network map (Source: Charlie Catlett, Argonne). The DTF backplane (40 Gbps) links Pasadena, San Diego and Chicago; Abilene connects via Indianapolis (Abilene NOC). Sites include Starlight / NW Univ, UIC, Ill Inst of Tech, Univ of Chicago and ANL in the Chicago area, NCSA/UIUC at Urbana, and multiple carrier hubs. Links: OC-48 (2.5 Gb/s, Abilene); multiple 10 GbE (Qwest); multiple 10 GbE (I-WIRE dark fiber). Solid lines in place and/or available in 2001; dashed I-WIRE lines planned for Summer 2002.

![Throughput quality improvements](http://slidetodoc.com/presentation_image/ab1dc4c0b83a296d331545f660a5a11e/image-25.jpg)

Throughput quality improvements: $BW_{TCP} < MSS/(RTT \cdot \sqrt{loss})$ [*]. 80% improvement/year: a factor of 10 in 4 years. Eastern Europe keeping up; China: recent improvement. [*] See “Macroscopic Behavior of the TCP Congestion Avoidance Algorithm,” Mathis, Semke, Mahdavi, Ott, Computer Communication Review 27(3), 7/1997.
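
The throughput bound on this slide can be evaluated directly, and inverted to give the loss rate a target speed requires. The sketch below uses the slide's form of the bound (constant set to 1; the cited paper carries a constant of order 1). The 1460-byte MSS (typical for a 1500-byte Ethernet MTU) and the 120 ms round-trip time are assumptions chosen for illustration.

```python
import math

# Bound on steady-state TCP throughput in the slide's form:
# BW < MSS / (RTT * sqrt(loss)). c scales the bound; c=1.0 reproduces the slide.


def mathis_bound_bps(mss_bytes: float, rtt_s: float, loss: float, c: float = 1.0) -> float:
    """Upper bound on one TCP stream's throughput, in bit/s."""
    return c * mss_bytes * 8 / (rtt_s * math.sqrt(loss))


def max_loss_for(target_bps: float, mss_bytes: float, rtt_s: float, c: float = 1.0) -> float:
    """Largest packet-loss rate at which the bound still permits target_bps."""
    return (c * mss_bytes * 8 / (rtt_s * target_bps)) ** 2


MSS_BYTES, RTT_S = 1460, 0.120  # assumed Ethernet-sized MSS and transatlantic RTT

for loss in (1e-4, 1e-6, 1e-8):
    print(f"loss {loss:.0e}: <= {mathis_bound_bps(MSS_BYTES, RTT_S, loss) / 1e6:.1f} Mbps")
print(f"1 Gbps needs loss <= {max_loss_for(1e9, MSS_BYTES, RTT_S):.1e}")
print(f"80%/year for 4 years: x{1.8 ** 4:.1f}")  # ~10.5, the 'factor of 10 in 4 years'
```

Under these assumptions, 0.01% loss limits a single stream to under 10 Mbps, and a gigabit stream needs loss near 10^-8, which is why the "Key Network Issues" slide asks for loss well below 0.01%. The last line confirms that 80% per year compounds to about a factor of 10 in 4 years.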

![Internet2 HENP WG](http://slidetodoc.com/presentation_image/ab1dc4c0b83a296d331545f660a5a11e/image-26.jpg)

Internet2 HENP WG [*]

- Mission: To help ensure that the required
  - National and international network infrastructures (end-to-end),
  - Standardized tools and facilities for high performance and end-to-end monitoring and tracking, and
  - Collaborative systems

  are developed and deployed in a timely manner, and used effectively to meet the needs of the US LHC and other major HENP Programs, as well as the at-large scientific community.
  - To carry out these developments in a way that is broadly applicable across many fields
- Formed an Internet2 WG as a suitable framework: Oct. 26, 2001
- [*] Co-Chairs: S. McKee (Michigan), H. Newman (Caltech); Sec’y J. Williams (Indiana)
- Website: http://www.internet2.edu/henp; also see the Internet2 End-to-end Initiative: http://www.internet2.edu/e2e

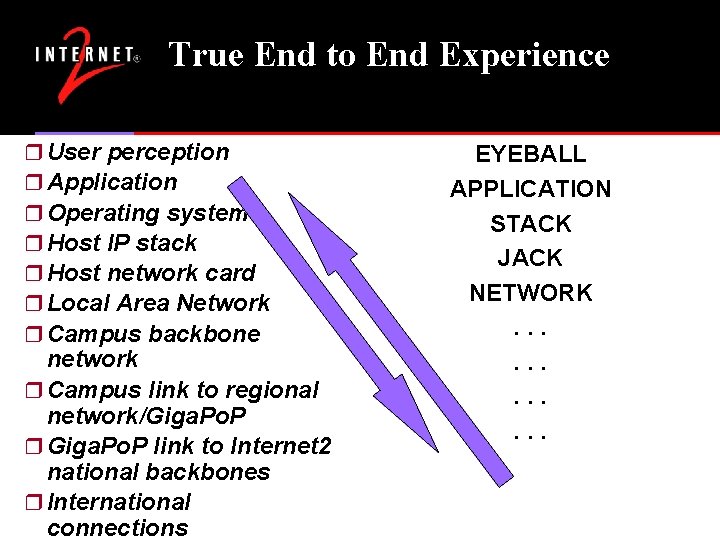

True End to End Experience

- User perception
- Application
- Operating system
- Host IP stack
- Host network card
- Local Area Network
- Campus backbone network
- Campus link to regional network/GigaPoP
- GigaPoP link to Internet2 national backbones
- International connections

EYEBALL, APPLICATION, STACK, JACK, NETWORK, ...
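
The point of listing every layer is that the slowest one sets what the user sees. The sketch below models the chain as a mapping from component to capacity and reports the bottleneck. All capacities are hypothetical placeholders chosen for illustration, not measurements.

```python
# The slowest layer in the end-to-end chain sets the user's throughput.
# Capacities (Mbit/s) are hypothetical placeholders, not measurements.
PATH_MBPS = {
    "application": 400.0,
    "operating system / host IP stack": 300.0,
    "host network card": 1000.0,
    "local area network": 100.0,
    "campus backbone": 1000.0,
    "campus link to GigaPoP": 622.0,
    "GigaPoP link to Internet2 backbone": 2500.0,
    "international connection": 622.0,
}


def bottleneck(path: dict) -> tuple:
    """Return (component, capacity) of the slowest element on the path."""
    name = min(path, key=path.get)
    return name, path[name]


print(bottleneck(PATH_MBPS))  # ('local area network', 100.0)
```

Upgrading the backbone in this example changes nothing for the user until the 100 Mbps LAN segment is fixed, which mirrors the slide's emphasis on the full path from eyeball to network.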

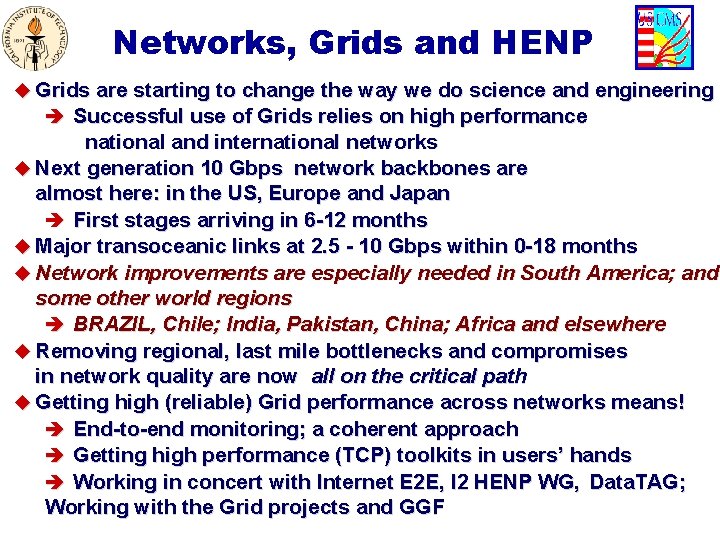

Networks, Grids and HENP

- Grids are starting to change the way we do science and engineering
  - Successful use of Grids relies on high performance national and international networks
- Next generation 10 Gbps network backbones are almost here: in the US, Europe and Japan
  - First stages arriving in 6-12 months
- Major transoceanic links at 2.5-10 Gbps within 0-18 months
- Network improvements are especially needed in South America and some other world regions
  - Brazil, Chile; India, Pakistan, China; Africa and elsewhere
- Removing regional and last-mile bottlenecks and compromises in network quality is now on the critical path
- Getting high (reliable) Grid performance across networks means:
  - End-to-end monitoring; a coherent approach
  - Getting high performance (TCP) toolkits in users’ hands
  - Working in concert with Internet2 E2E, I2 HENP WG, DataTAG; working with the Grid projects and GGF
