HENP Grids and Networks: Global Virtual Organizations

HENP Grids and Networks: Global Virtual Organizations. Harvey B. Newman, Professor of Physics; LHCNet PI, US CMS Collaboration Board Chair. WAN In Lab Site Visit, Caltech: Meeting the Advanced Network Needs of Science. March 5, 2003

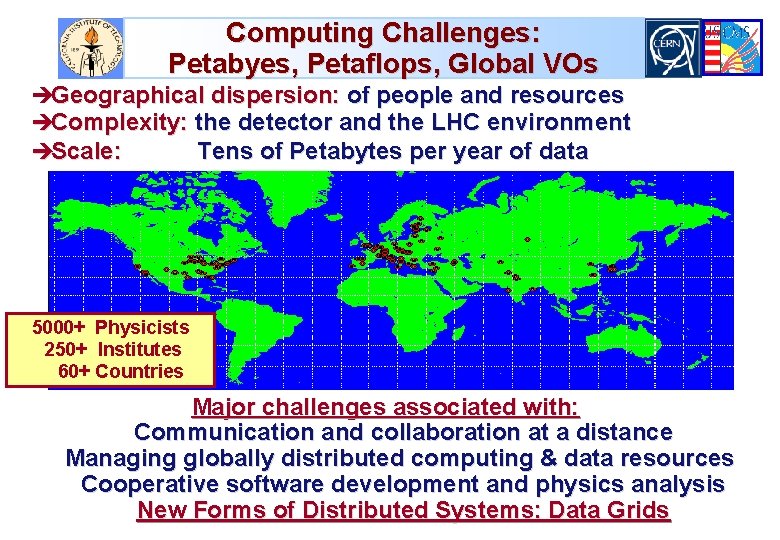

Computing Challenges: Petabytes, Petaflops, Global VOs

- Geographical dispersion: of people and resources
- Complexity: the detector and the LHC environment
- Scale: Tens of Petabytes per year of data

5000+ Physicists, 250+ Institutes, 60+ Countries

Major challenges associated with:

- Communication and collaboration at a distance
- Managing globally distributed computing & data resources
- Cooperative software development and physics analysis

New Forms of Distributed Systems: Data Grids

Next Generation Networks for Experiments: Goals and Needs

Large data samples explored and analyzed by thousands of globally dispersed scientists, in hundreds of teams

- Providing rapid access to event samples, subsets and analyzed physics results from massive data stores
  - From Petabytes by 2002, ~100 Petabytes by 2007, to ~1 Exabyte by ~2012
- Providing analyzed results with rapid turnaround, by coordinating and managing the large but LIMITED computing, data handling and NETWORK resources effectively
- Enabling rapid access to the data and the collaboration
  - Across an ensemble of networks of varying capability
- Advanced integrated applications, such as Data Grids, rely on seamless operation of our LANs and WANs
  - With reliable, monitored, quantifiable high performance

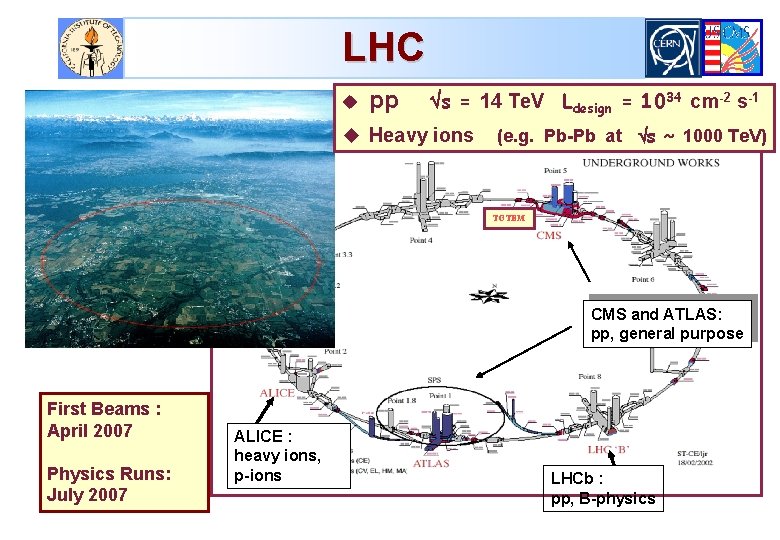

LHC

- pp: $\sqrt{s} = 14$ TeV, $L_{\text{design}} = 10^{34}\ \text{cm}^{-2}\,\text{s}^{-1}$
- Heavy ions (e.g. Pb-Pb at $\sqrt{s} \sim 1000$ TeV)

27 km ring; 1232 dipoles, B = 8.3 T (NbTi at 1.9 K)

First Beams: April 2007; Physics Runs: July 2007

- ALICE: heavy ions, p-ions
- CMS and ATLAS: pp, general purpose
- LHCb: pp, B-physics
- TOTEM

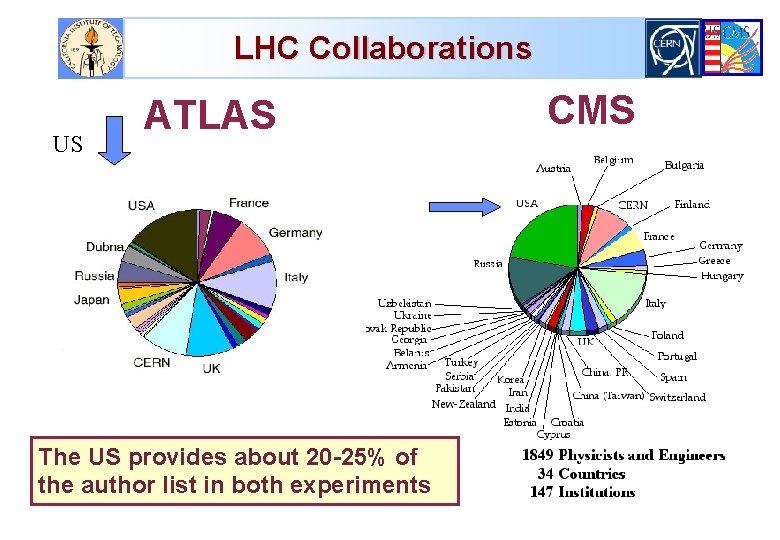

LHC Collaborations (ATLAS, CMS): the US provides about 20-25% of the author list in both experiments.

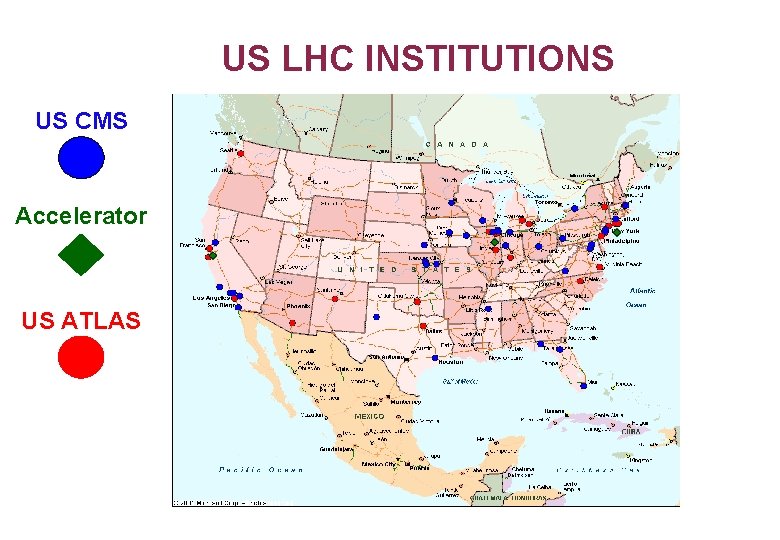

US LHC Institutions: US CMS, Accelerator, US ATLAS

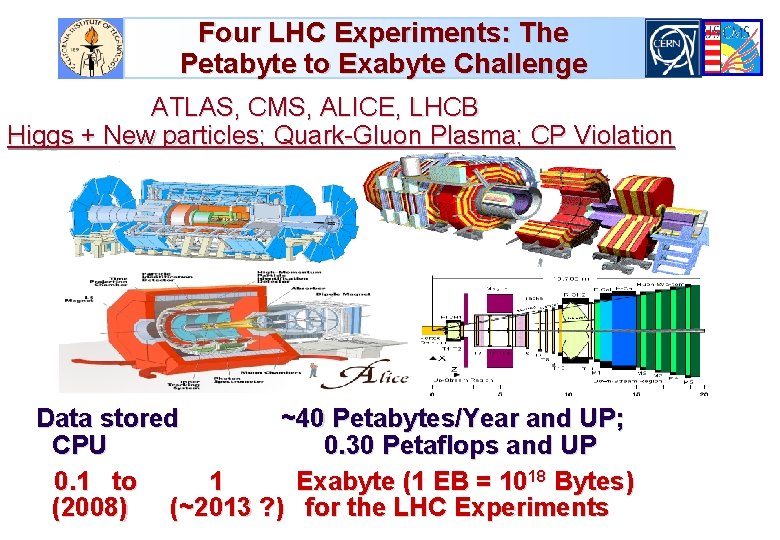

Four LHC Experiments: The Petabyte to Exabyte Challenge

ATLAS, CMS, ALICE, LHCb: Higgs + New particles; Quark-Gluon Plasma; CP Violation

- Data stored: ~40 Petabytes/Year and UP
- CPU: 0.30 Petaflops and UP
- 0.1 Exabyte (2008) to 1 Exabyte (~2013?) for the LHC Experiments (1 EB = $10^{18}$ Bytes)

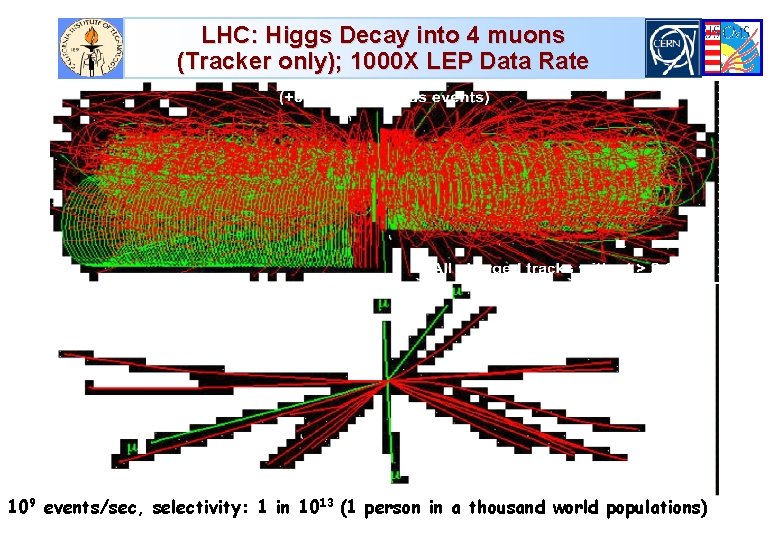

LHC: Higgs Decay into 4 muons (Tracker only); 1000 X LEP Data Rate. $10^9$ events/sec, selectivity: 1 in $10^{13}$ (1 person in a thousand world populations)
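The parenthetical comparison can be checked with a few lines of arithmetic. This is a minimal sketch, assuming a world population of about 6.3 billion (a 2003-era figure that is not stated on the slide); the rate and selectivity are the slide's own numbers.

```python
# Sanity check of the selectivity comparison on the slide.
# WORLD_POPULATION is an assumed 2003-era figure; it is not given in the source.
WORLD_POPULATION = 6.3e9
EVENT_RATE_HZ = 1e9   # 10^9 events/sec, from the slide
SELECTIVITY = 1e-13   # 1 in 10^13, from the slide


def people_in_world_populations(n_worlds: float) -> float:
    """Number of people in n_worlds copies of the (assumed) world population."""
    return n_worlds * WORLD_POPULATION


def seconds_between_selected_events() -> float:
    """Mean time between selected events at the quoted rate and selectivity."""
    return 1.0 / (EVENT_RATE_HZ * SELECTIVITY)


if __name__ == "__main__":
    print(f"1000 world populations: {people_in_world_populations(1000):.1e} people")
    print(f"One selected event every {seconds_between_selected_events():.0f} s")
```

Under these assumptions a thousand world populations is several times $10^{12}$ people, the same order of magnitude as $10^{13}$, and the quoted selectivity corresponds to roughly one selected event every few hours.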

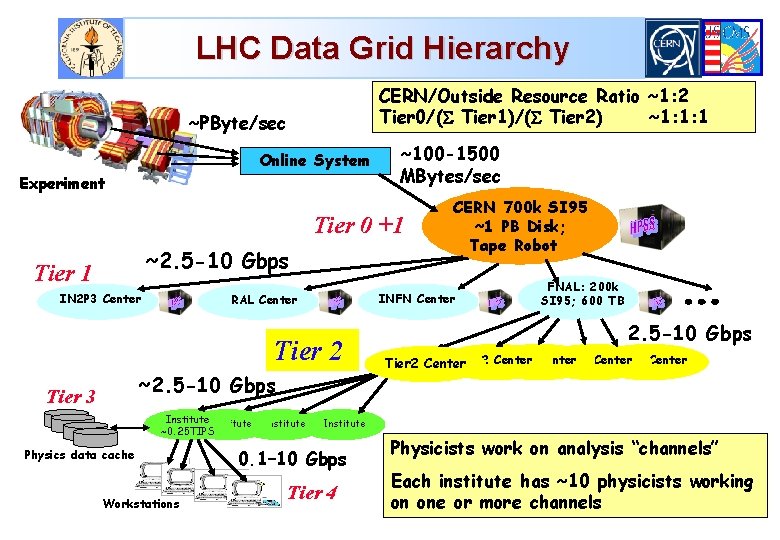

LHC Data Grid Hierarchy

CERN/Outside Resource Ratio ~1:2; Tier 0/(Tier 1)/(Tier 2) ~1:1:1

- Online System (Experiment): ~PByte/sec from the detector; ~100-1500 MBytes/sec to Tier 0+1
- Tier 0+1: CERN, 700k SI95; ~1 PB Disk; Tape Robot
- Tier 1 (~2.5-10 Gbps from Tier 0+1): FNAL (200k SI95; 600 TB), IN2P3 Center, INFN Center, RAL Center
- Tier 2 (~2.5-10 Gbps): Tier 2 Centers
- Tier 3 (2.5-10 Gbps): Institutes, ~0.25 TIPS each; Physics data cache
- Tier 4 (0.1-10 Gbps): Workstations

Physicists work on analysis "channels". Each institute has ~10 physicists working on one or more channels.

![Transatlantic Net WG (HN, L. Price) Bandwidth Requirements](http://slidetodoc.com/presentation_image_h/f93b1434284fca73a89fa0eae49a6c93/image-10.jpg)

Transatlantic Net WG (HN, L. Price) Bandwidth Requirements [*]

[*] Installed BW. Maximum Link Occupancy 50% Assumed. See http://gate.hep.anl.gov/lprice/TAN
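The footnote's 50% occupancy assumption links installed bandwidth to usable throughput. A minimal sketch of that relation follows; the function names are illustrative and not part of the working group's material, and the 622 Mbps figure used in the example is simply the OC12 rate quoted elsewhere in this deck.

```python
def usable_throughput_mbps(installed_mbps: float, max_occupancy: float = 0.5) -> float:
    """Throughput available when a link may be filled only up to max_occupancy."""
    if not 0.0 < max_occupancy <= 1.0:
        raise ValueError("max_occupancy must be in (0, 1]")
    return installed_mbps * max_occupancy


def installed_for_throughput_mbps(required_mbps: float, max_occupancy: float = 0.5) -> float:
    """Installed bandwidth needed to carry required_mbps at the occupancy limit."""
    if not 0.0 < max_occupancy <= 1.0:
        raise ValueError("max_occupancy must be in (0, 1]")
    return required_mbps / max_occupancy


if __name__ == "__main__":
    # Example: an OC12 (622 Mbps) link under the 50% occupancy assumption.
    print(usable_throughput_mbps(622.0))
    print(installed_for_throughput_mbps(311.0))
```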

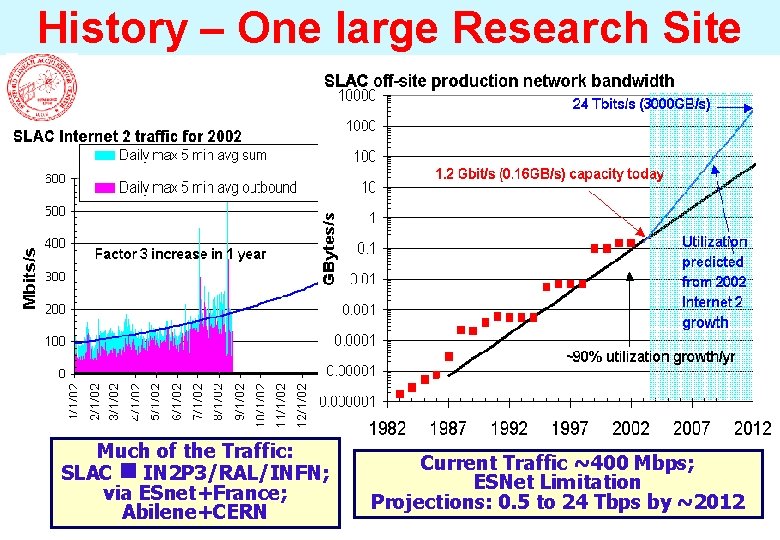

History: One large Research Site

- Much of the Traffic: SLAC-IN2P3/RAL/INFN; via ESnet+France; Abilene+CERN
- Current Traffic ~400 Mbps; ESnet Limitation
- Projections: 0.5 to 24 Tbps by ~2012

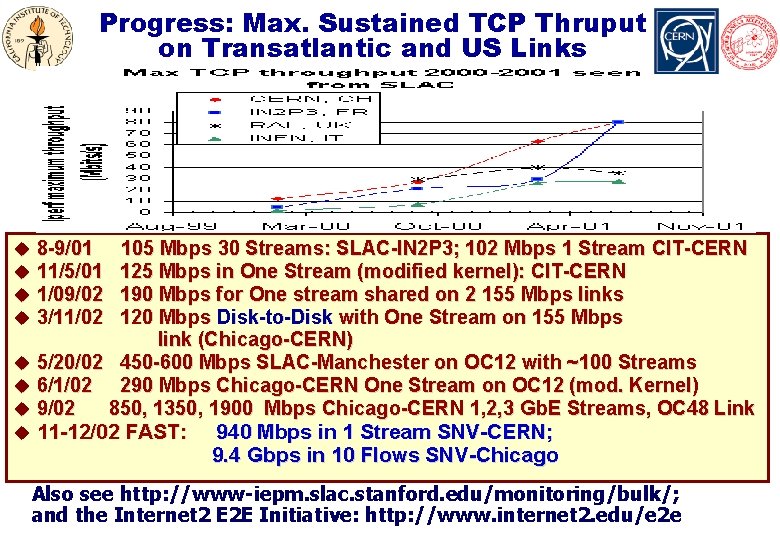

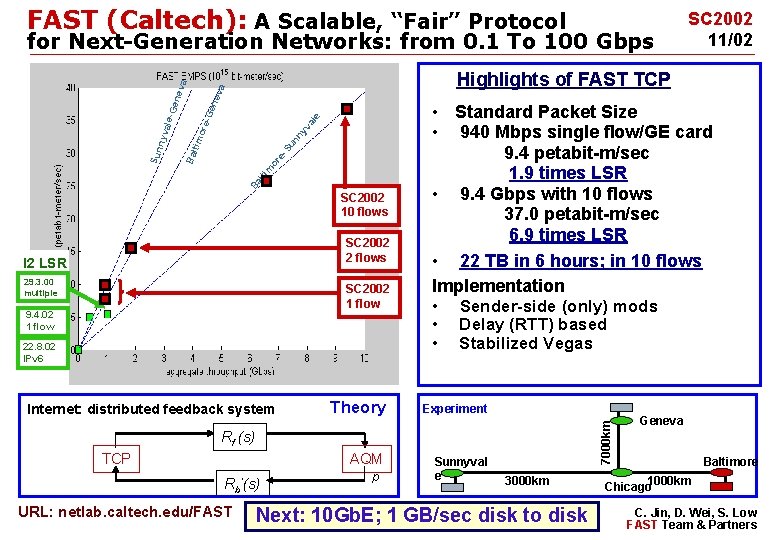

Progress: Max. Sustained TCP Thruput on Transatlantic and US Links [*]

- 8-9/01: 105 Mbps 30 Streams: SLAC-IN2P3; 102 Mbps 1 Stream CIT-CERN
- 11/5/01: 125 Mbps in One Stream (modified kernel): CIT-CERN
- 1/09/02: 190 Mbps for One stream shared on 2 155 Mbps links
- 3/11/02: 120 Mbps Disk-to-Disk with One Stream on 155 Mbps link (Chicago-CERN)
- 5/20/02: 450-600 Mbps SLAC-Manchester on OC12 with ~100 Streams
- 6/1/02: 290 Mbps Chicago-CERN One Stream on OC12 (mod. Kernel)
- 9/02: 850, 1350, 1900 Mbps Chicago-CERN 1, 2, 3 GbE Streams, OC48 Link
- 11-12/02: FAST: 940 Mbps in 1 Stream SNV-CERN; 9.4 Gbps in 10 Flows SNV-Chicago

[*] Also see http://www-iepm.slac.stanford.edu/monitoring/bulk/; and the Internet2 E2E Initiative: http://www.internet2.edu/e2e

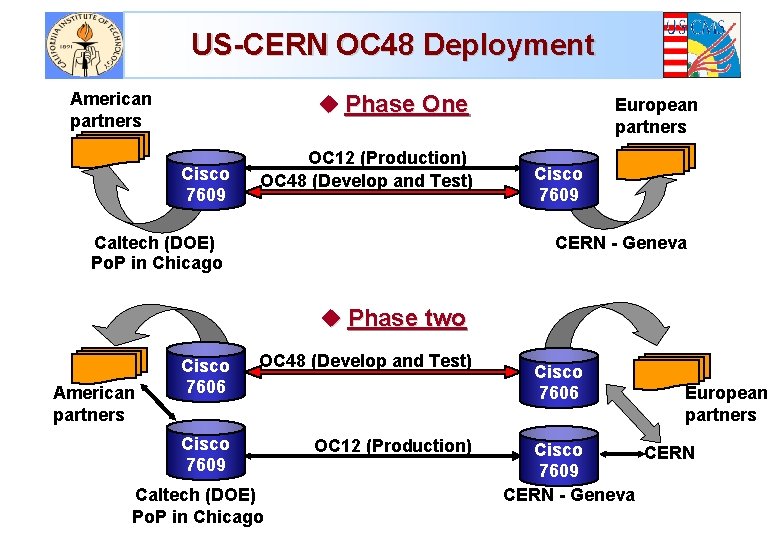

US-CERN OC48 Deployment

- Phase One
  - American partners: Caltech (DOE) PoP in Chicago, Cisco 7609
  - Links: OC12 (Production); OC48 (Develop and Test)
  - European partners: CERN - Geneva, Cisco 7609
- Phase Two
  - American partners: Caltech (DOE) PoP in Chicago, Cisco 7606 and Cisco 7609
  - Links: Cisco 7606: OC48 (Develop and Test); Cisco 7609: OC12 (Production)
  - European partners: CERN - Geneva, Cisco 7606 and Cisco 7609

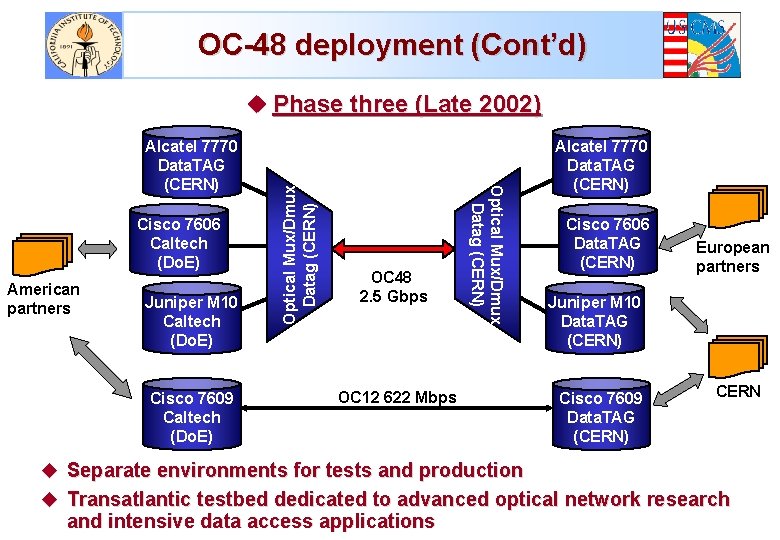

OC-48 Deployment (Cont'd)

- Phase Three (Late 2002)
  - American partners: Cisco 7606 Caltech (DoE); Juniper M10 Caltech (DoE); Cisco 7609 Caltech (DoE); Alcatel 7770 DataTAG (CERN); Optical Mux/Dmux Datag (CERN)
  - Links: OC48 2.5 Gbps; OC12 622 Mbps
  - European partners (CERN): Optical Mux/Dmux Datag (CERN); Alcatel 7770 DataTAG (CERN); Cisco 7606 DataTAG (CERN); Juniper M10 DataTAG (CERN); Cisco 7609 DataTAG (CERN)
- Separate environments for tests and production
- Transatlantic testbed dedicated to advanced optical network research and intensive data access applications

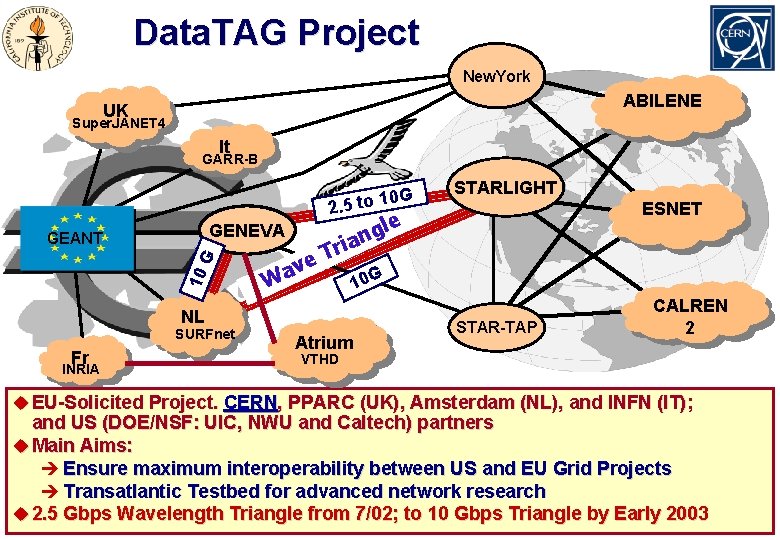

DataTAG Project

[Map labels: New York; ABILENE; ESNET; CALREN2; STARLIGHT; STAR-TAP; GENEVA; GEANT; UK SuperJANET4; It GARR-B; NL SURFnet; Fr INRIA; Atrium; VTHD; 2.5 to 10G Wave Triangle]

- EU-Solicited Project. CERN, PPARC (UK), Amsterdam (NL), and INFN (IT); and US (DOE/NSF: UIC, NWU and Caltech) partners
- Main Aims:
  - Ensure maximum interoperability between US and EU Grid Projects
  - Transatlantic Testbed for advanced network research
- 2.5 Gbps Wavelength Triangle from 7/02; to 10 Gbps Triangle by Early 2003

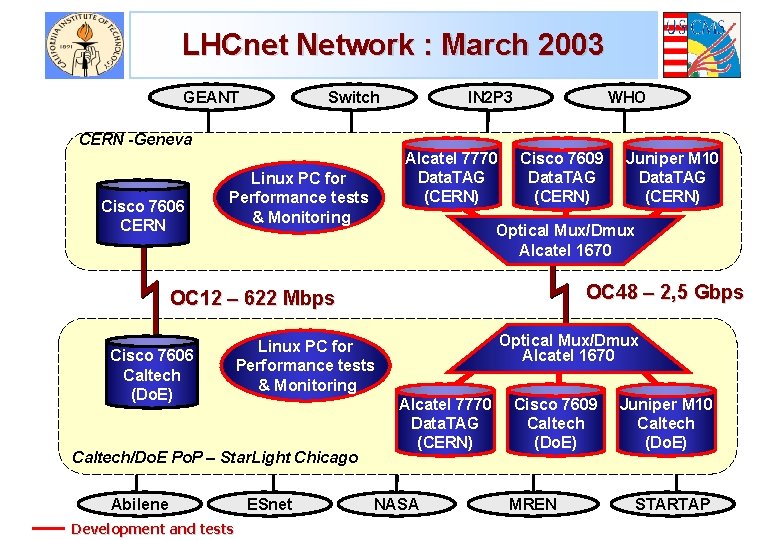

LHCnet Network: March 2003

[Network diagram labels, in source order: GEANT; Switch; IN2P3; WHO; CERN - Geneva; Cisco 7606 CERN; Alcatel 7770 DataTAG (CERN); Linux PC for Performance tests & Monitoring; Cisco 7609 DataTAG (CERN); Optical Mux/Dmux Alcatel 1670; OC48: 2.5 Gbps; OC12: 622 Mbps; Cisco 7606 Caltech (DoE); Abilene; Development and tests; Optical Mux/Dmux Alcatel 1670; Linux PC for Performance tests & Monitoring; Caltech/DoE PoP, StarLight Chicago; ESnet; Juniper M10 DataTAG (CERN); Alcatel 7770 DataTAG (CERN); NASA; Cisco 7609 Caltech (DoE); MREN; Juniper M10 Caltech (DoE); STARTAP]

FAST (Caltech): A Scalable, "Fair" Protocol for Next-Generation Networks: from 0.1 To 100 Gbps

SC2002, 11/02. URL: netlab.caltech.edu/FAST. C. Jin, D. Wei, S. Low; FAST Team & Partners

Highlights of FAST TCP

- Standard Packet Size
- 940 Mbps single flow/GE card: 9.4 petabit-m/sec, 1.9 times LSR
- 9.4 Gbps with 10 flows: 37.0 petabit-m/sec, 6.9 times LSR
- 22 TB in 6 hours; in 10 flows

Implementation

- Sender-side (only) mods
- Delay (RTT) based
- Stabilized Vegas

Next: 10 GbE; 1 GB/sec disk to disk

[Chart labels: SC2002 10 flows; SC2002 2 flows; SC2002 1 flow; I2 LSR 29.3.00 multiple; 9.4.02 1 flow; 22.8.02 IPv6. Paths: Sunnyvale-Geneva; Baltimore-Geneva; Baltimore-Sunnyvale. Map labels: Sunnyvale; Chicago; Baltimore; Geneva; 7000 km; 3000 km; 1000 km. Block diagram: Internet as a distributed feedback system (Theory, Experiment; TCP, AQM; Rf(s), Rb'(s))]
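The petabit-m/sec figures are bandwidth-distance products, so the path length behind each record and the average rate of the 22 TB transfer can be recovered from the slide's own numbers. The sketch below does only that arithmetic; the function names are illustrative, and no path lengths are assumed beyond what the quoted figures imply.

```python
def implied_distance_km(petabit_m_per_s: float, rate_bps: float) -> float:
    """Path length implied by a bandwidth-distance product and a data rate."""
    metres = petabit_m_per_s * 1e15 / rate_bps
    return metres / 1e3


def average_rate_gbps(terabytes: float, hours: float) -> float:
    """Average rate needed to move `terabytes` (10^12 bytes each) in `hours`."""
    bits = terabytes * 1e12 * 8
    return bits / (hours * 3600) / 1e9


if __name__ == "__main__":
    # Single flow: 940 Mbps and 9.4 petabit-m/sec.
    print(f"{implied_distance_km(9.4, 940e6):.0f} km")
    # Ten flows: 9.4 Gbps and 37.0 petabit-m/sec.
    print(f"{implied_distance_km(37.0, 9.4e9):.0f} km")
    # 22 TB in 6 hours.
    print(f"{average_rate_gbps(22, 6):.2f} Gbps")
```

The single-flow record implies a path of about 10,000 km and the ten-flow record a path of a little under 4,000 km, while 22 TB in 6 hours corresponds to an average of roughly 8 Gbps.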

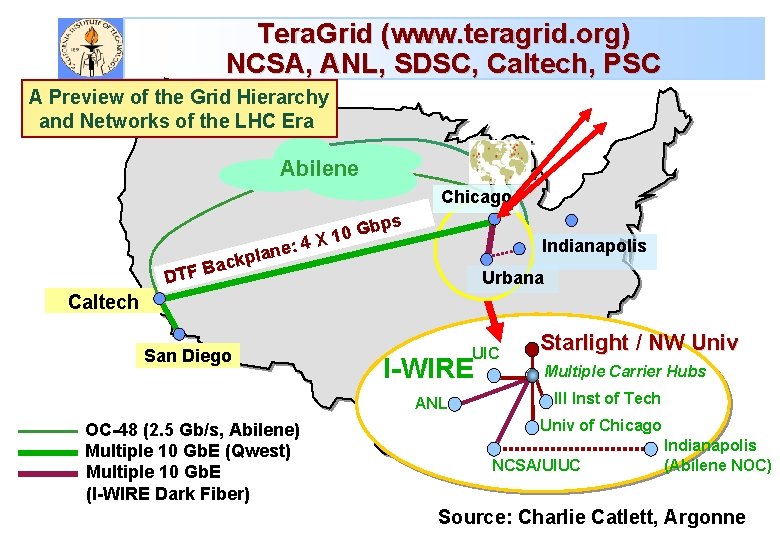

TeraGrid (www.teragrid.org): NCSA, ANL, SDSC, Caltech, PSC

A Preview of the Grid Hierarchy and Networks of the LHC Era

[Network map labels: Abilene; Chicago; Indianapolis (Abilene NOC); Urbana; NCSA/UIUC; Caltech; San Diego; UIC; ANL; Starlight / NW Univ; Ill Inst of Tech; Univ of Chicago; Multiple Carrier Hubs; I-WIRE. DTF Backplane: 4 X 10 Gbps. Links: OC-48 (2.5 Gb/s, Abilene); Multiple 10 GbE (Qwest); Multiple 10 GbE (I-WIRE Dark Fiber)]

Source: Charlie Catlett, Argonne

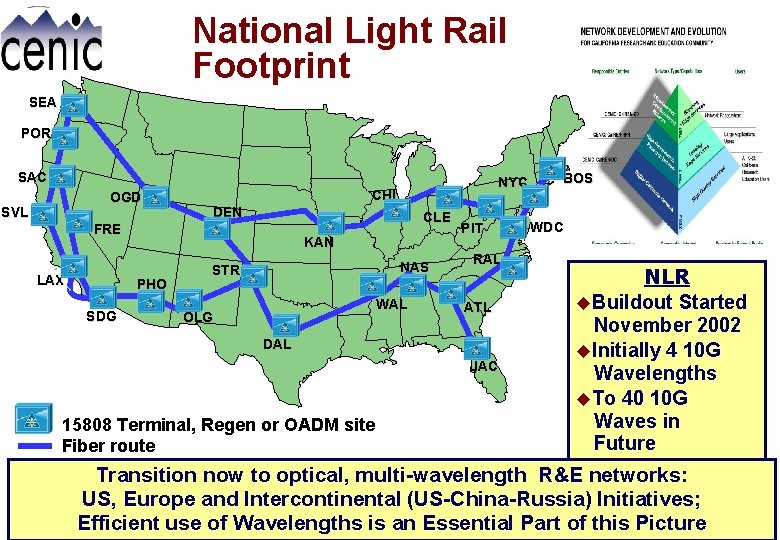

National Light Rail Footprint

[Map sites: SEA, POR, SAC, OGD, SVL, CHI, DEN, CLE, FRE, LAX, KAN, PHO, SDG, NYC, NAS, STR, WAL, OLG, PIT, RAL, ATL, DAL, JAC, BOS, WDC. Legend: 15808 Terminal, Regen or OADM site; Fiber route]

NLR

- Buildout Started November 2002
- Initially 4 10G Wavelengths
- To 40 10G Waves in Future

Transition now to optical, multi-wavelength R&E networks: US, Europe and Intercontinental (US-China-Russia) Initiatives; Efficient use of Wavelengths is an Essential Part of this Picture

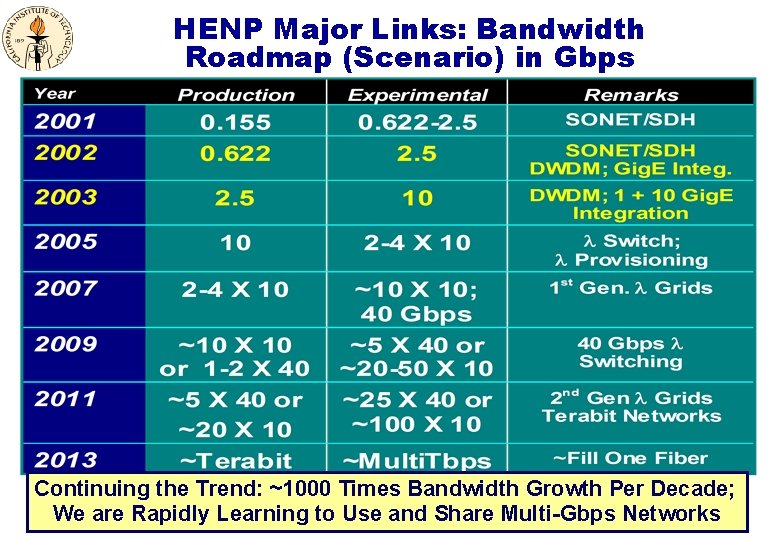

HENP Major Links: Bandwidth Roadmap (Scenario) in Gbps Continuing the Trend: ~1000 Times Bandwidth Growth Per Decade; We are Rapidly Learning to Use and Share Multi-Gbps Networks
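The "~1000 times per decade" trend can be restated as an annual growth factor and a doubling time. A minimal sketch of that conversion, with illustrative function names:

```python
import math


def annual_growth_factor(decade_factor: float = 1000.0, years: float = 10.0) -> float:
    """Constant yearly multiplier that compounds to decade_factor over `years`."""
    return decade_factor ** (1.0 / years)


def doubling_time_years(decade_factor: float = 1000.0, years: float = 10.0) -> float:
    """Time for bandwidth to double at the same compound growth rate."""
    return years * math.log(2.0) / math.log(decade_factor)


if __name__ == "__main__":
    print(f"annual factor: {annual_growth_factor():.3f}")
    print(f"doubling time: {doubling_time_years():.3f} years")
```

A factor of 1000 per decade is therefore very close to a doubling of bandwidth every year.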

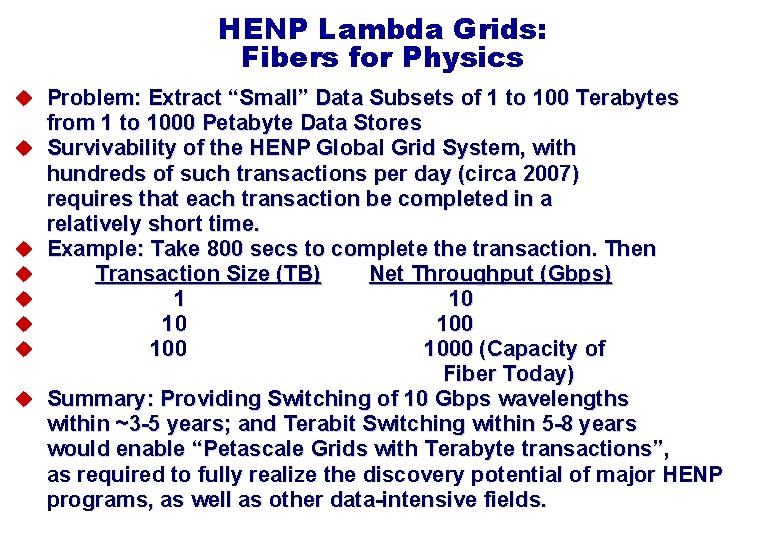

HENP Lambda Grids: Fibers for Physics

- Problem: Extract "Small" Data Subsets of 1 to 100 Terabytes from 1 to 1000 Petabyte Data Stores
- Survivability of the HENP Global Grid System, with hundreds of such transactions per day (circa 2007), requires that each transaction be completed in a relatively short time.
- Example: Take 800 secs to complete the transaction. Then

| Transaction Size (TB) | Net Throughput (Gbps) |
| --- | --- |
| 1 | 10 |
| 10 | 100 |
| 100 | 1000 (Capacity of Fiber Today) |

- Summary: Providing Switching of 10 Gbps wavelengths within ~3-5 years; and Terabit Switching within 5-8 years would enable "Petascale Grids with Terabyte transactions", as required to fully realize the discovery potential of major HENP programs, as well as other data-intensive fields.
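The throughput figures in the example follow directly from the 800-second transaction time. A minimal sketch of the calculation, taking 1 TB as $10^{12}$ bytes (the function name is illustrative):

```python
def required_throughput_gbps(size_tb: float, seconds: float = 800.0) -> float:
    """Net throughput needed to move size_tb terabytes in `seconds`."""
    bits = size_tb * 1e12 * 8
    return bits / seconds / 1e9


if __name__ == "__main__":
    for size_tb in (1, 10, 100):
        print(f"{size_tb:>3} TB in 800 s: {required_throughput_gbps(size_tb):.0f} Gbps")
```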

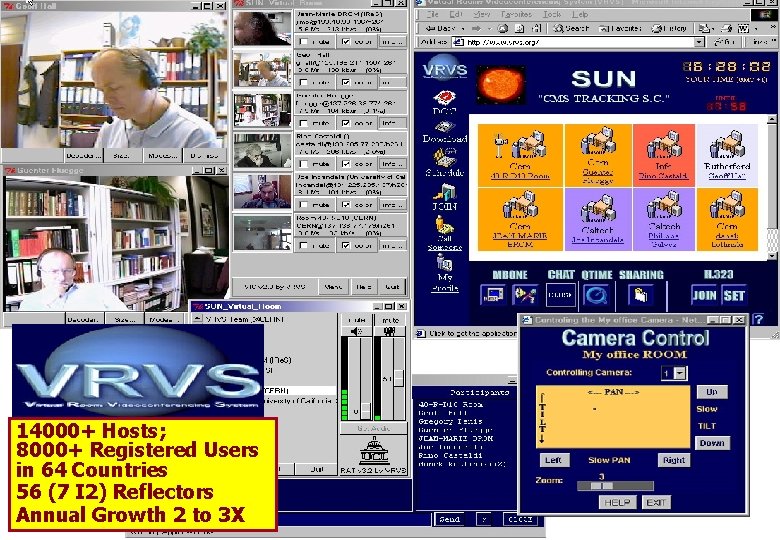

Emerging Data Grid User Communities

- NSF Network for Earthquake Engineering Simulation (NEES)
  - Integrated instrumentation, collaboration, simulation
- Grid Physics Network (GriPhyN)
  - ATLAS, CMS, LIGO, SDSS
- Access Grid; VRVS: supporting group-based collaboration

And

- Genomics, Proteomics, ...
- The Earth System Grid and EOSDIS
- Federating Brain Data
- Computed MicroTomography …
  - Virtual Observatories

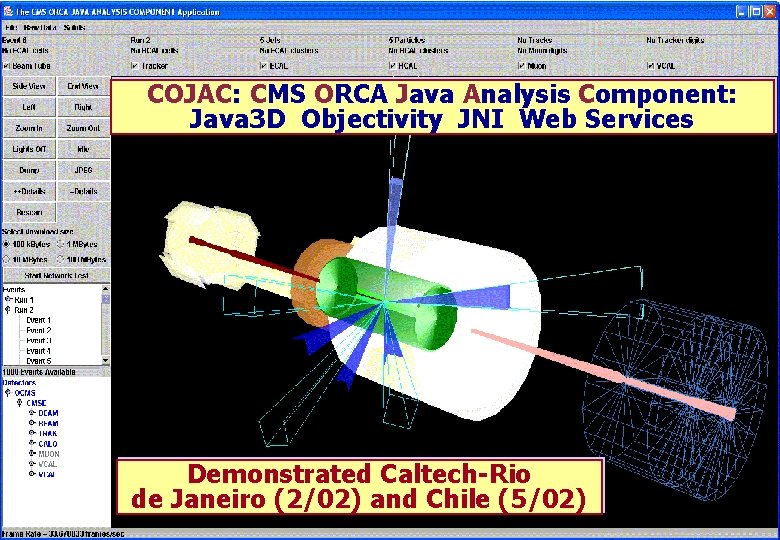

COJAC: CMS ORCA Java Analysis Component. Java3D, Objectivity, JNI, Web Services. Demonstrated Caltech-Rio de Janeiro (2/02) and Chile (5/02)

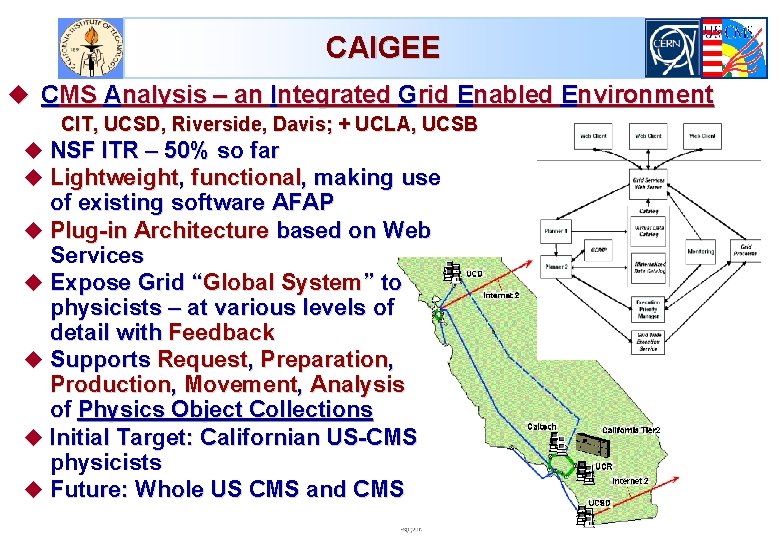

CAIGEE

- CMS Analysis: an Integrated Grid Enabled Environment
- CIT, UCSD, Riverside, Davis; + UCLA, UCSB
- NSF ITR: 50% so far
- Lightweight, functional, making use of existing software AFAP
- Plug-in Architecture based on Web Services
- Expose Grid "Global System" to physicists, at various levels of detail with Feedback
- Supports Request, Preparation, Production, Movement, Analysis of Physics Object Collections
- Initial Target: Californian US-CMS physicists
- Future: Whole US CMS and CMS

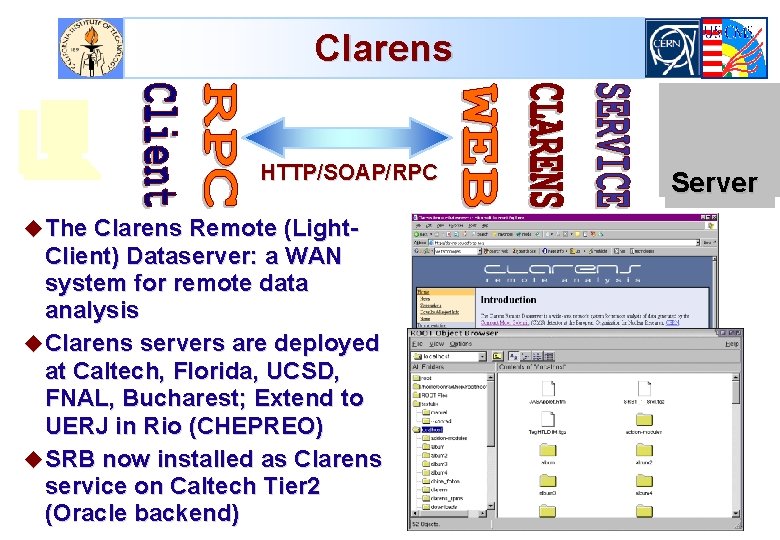

Clarens

- The Clarens Remote (Light-Client) Dataserver: a WAN system for remote data analysis
- Clarens servers are deployed at Caltech, Florida, UCSD, FNAL, Bucharest; Extend to UERJ in Rio (CHEPREO)
- SRB now installed as Clarens service on Caltech Tier 2 (Oracle backend)

[Diagram labels: HTTP/SOAP/RPC; Server]

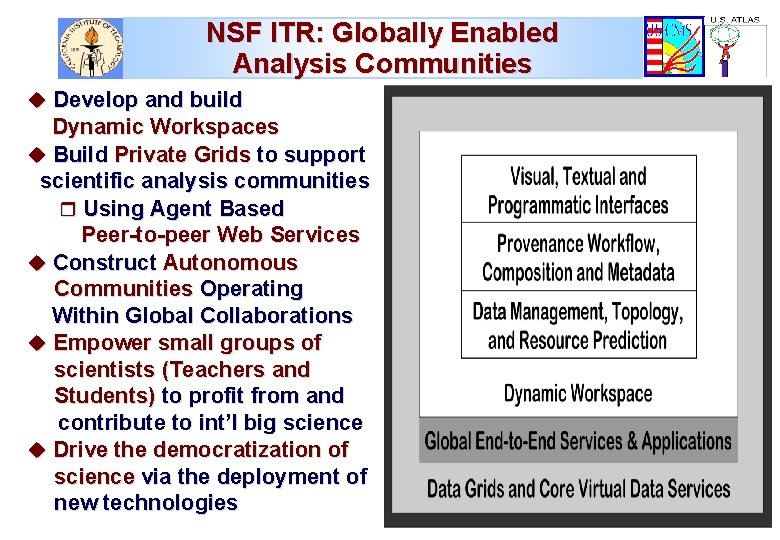

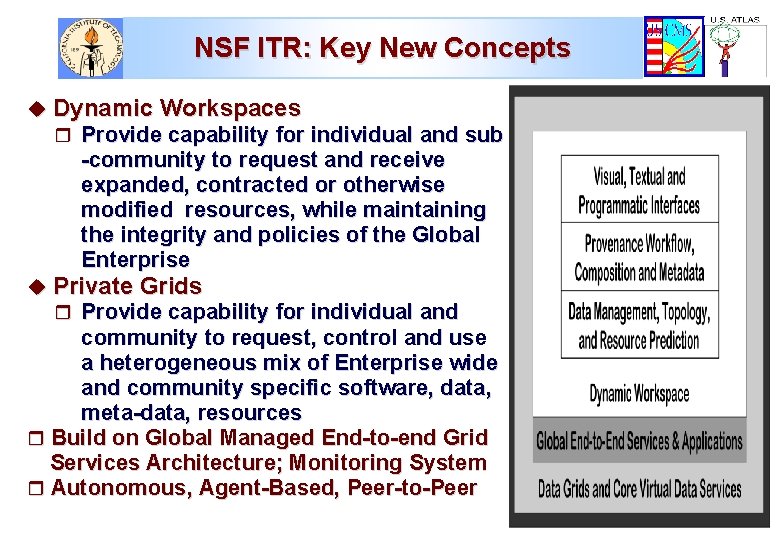

NSF ITR: Globally Enabled Analysis Communities

- Develop and build Dynamic Workspaces
- Build Private Grids to support scientific analysis communities
  - Using Agent Based Peer-to-peer Web Services
- Construct Autonomous Communities Operating Within Global Collaborations
- Empower small groups of scientists (Teachers and Students) to profit from and contribute to int'l big science
- Drive the democratization of science via the deployment of new technologies

NSF ITR: Key New Concepts

- Dynamic Workspaces
  - Provide capability for individual and sub-community to request and receive expanded, contracted or otherwise modified resources, while maintaining the integrity and policies of the Global Enterprise
- Private Grids
  - Provide capability for individual and community to request, control and use a heterogeneous mix of Enterprise wide and community specific software, data, meta-data, resources
- Build on Global Managed End-to-end Grid Services Architecture; Monitoring System
  - Autonomous, Agent-Based, Peer-to-Peer

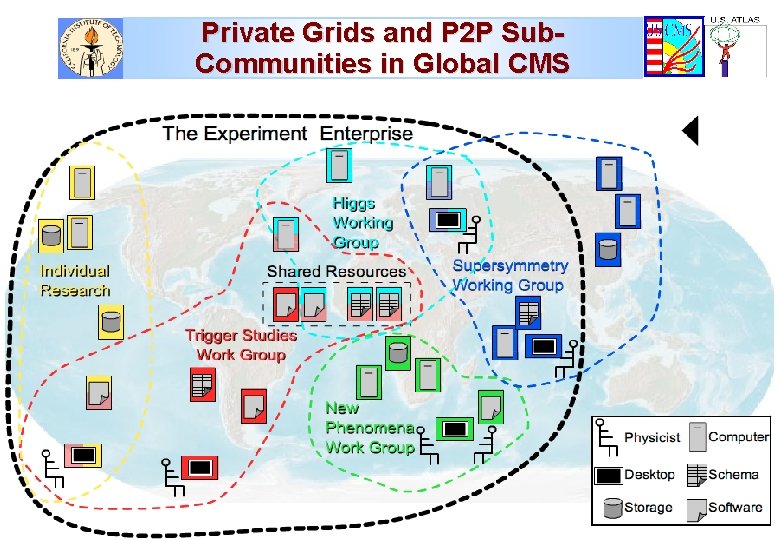

Private Grids and P2P SubCommunities in Global CMS

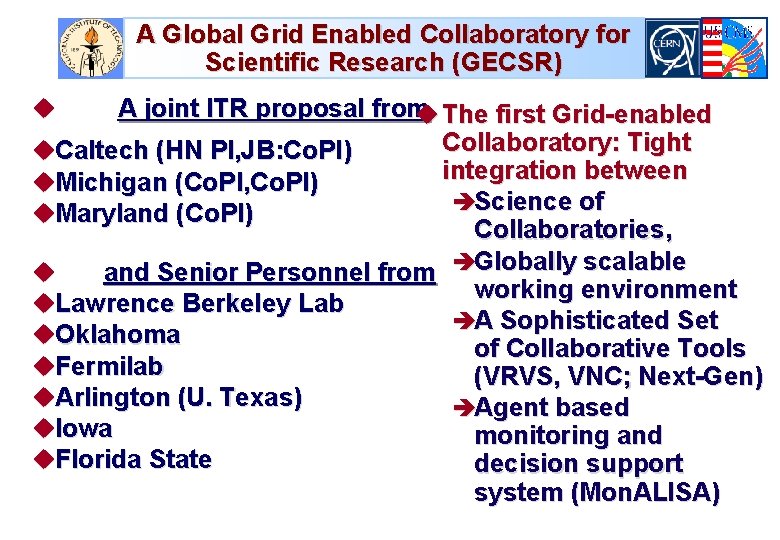

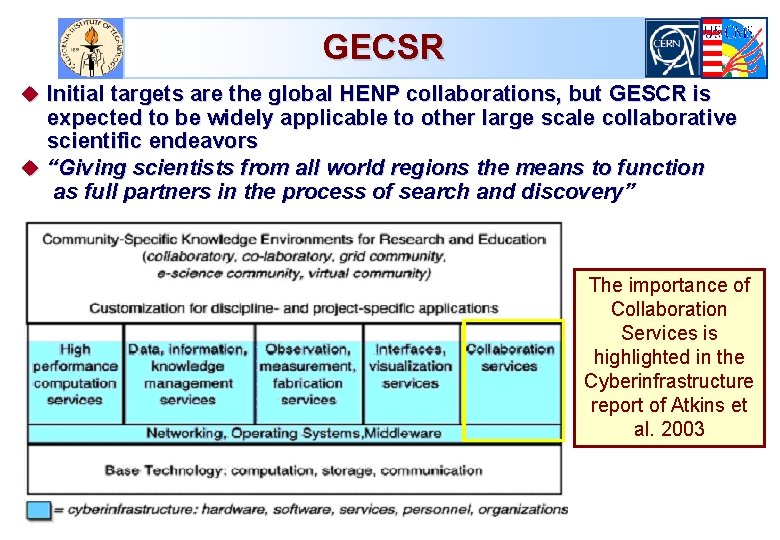

A Global Grid Enabled Collaboratory for Scientific Research (GECSR)

A joint ITR proposal from

- Caltech (HN PI, JB: CoPI)
- Michigan (CoPI, CoPI)
- Maryland (CoPI)

and Senior Personnel from

- Lawrence Berkeley Lab
- Oklahoma
- Fermilab
- Arlington (U. Texas)
- Iowa
- Florida State

The first Grid-enabled Collaboratory: Tight integration between

- Science of Collaboratories
- Globally scalable working environment
- A Sophisticated Set of Collaborative Tools (VRVS, VNC; Next-Gen)
- Agent based monitoring and decision support system (MonALISA)

GECSR

- Initial targets are the global HENP collaborations, but GECSR is expected to be widely applicable to other large scale collaborative scientific endeavors
- "Giving scientists from all world regions the means to function as full partners in the process of search and discovery"

The importance of Collaboration Services is highlighted in the Cyberinfrastructure report of Atkins et al. 2003

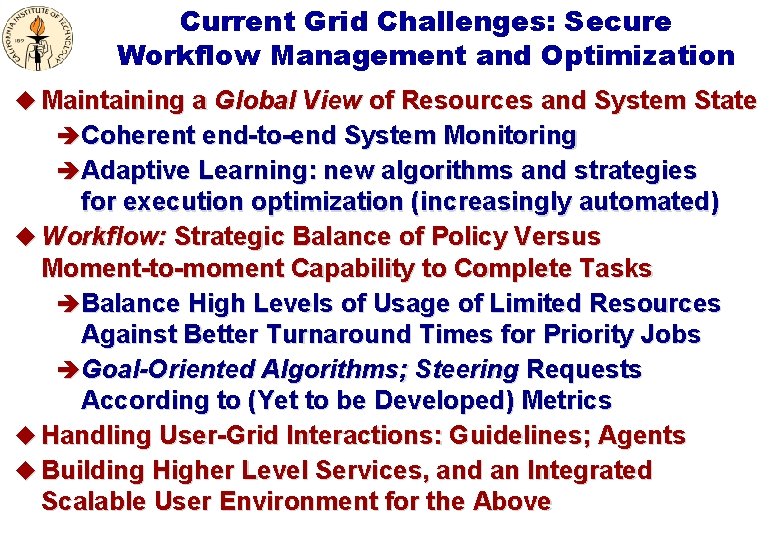

Current Grid Challenges: Secure Workflow Management and Optimization

- Maintaining a Global View of Resources and System State
  - Coherent end-to-end System Monitoring
  - Adaptive Learning: new algorithms and strategies for execution optimization (increasingly automated)
- Workflow: Strategic Balance of Policy Versus Moment-to-moment Capability to Complete Tasks
  - Balance High Levels of Usage of Limited Resources Against Better Turnaround Times for Priority Jobs
  - Goal-Oriented Algorithms; Steering Requests According to (Yet to be Developed) Metrics
- Handling User-Grid Interactions: Guidelines; Agents
- Building Higher Level Services, and an Integrated Scalable User Environment for the Above

14000+ Hosts; 8000+ Registered Users in 64 Countries; 56 (7 I2) Reflectors; Annual Growth 2 to 3X

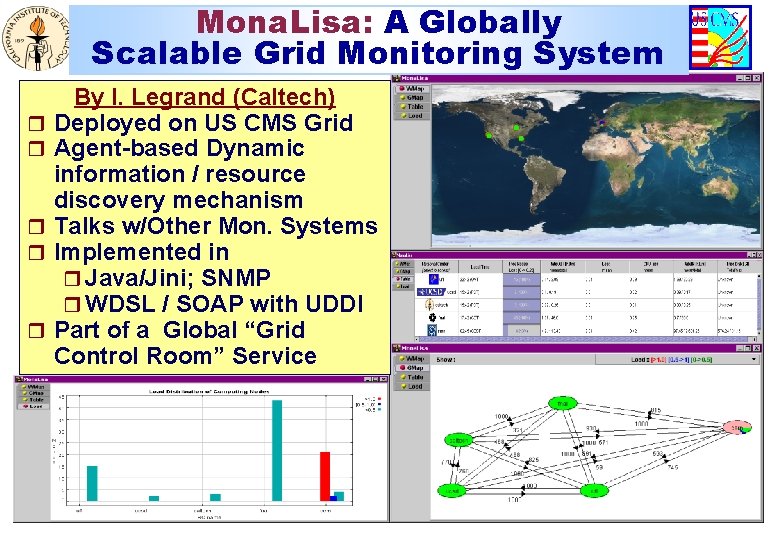

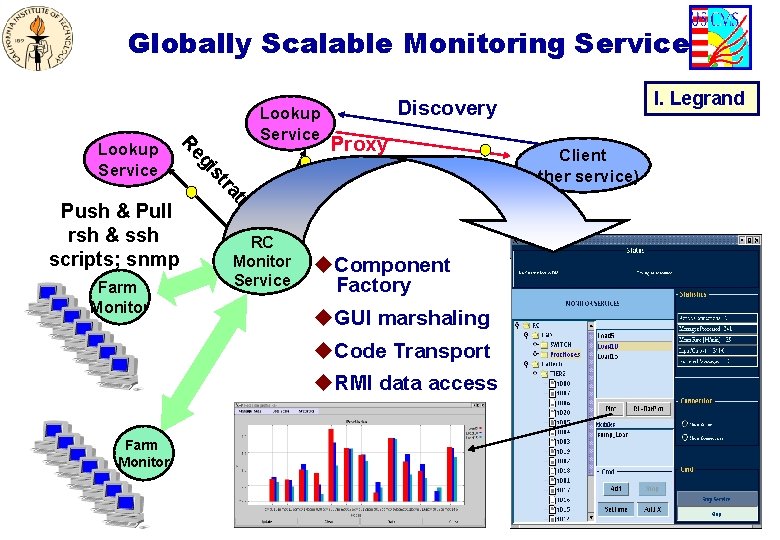

MonALISA: A Globally Scalable Grid Monitoring System

By I. Legrand (Caltech)

- Deployed on US CMS Grid
- Agent-based dynamic information / resource discovery mechanism
- Talks w/ other monitoring systems
- Implemented in
  - Java/Jini; SNMP
  - WSDL / SOAP with UDDI
- Part of a Global "Grid Control Room" Service

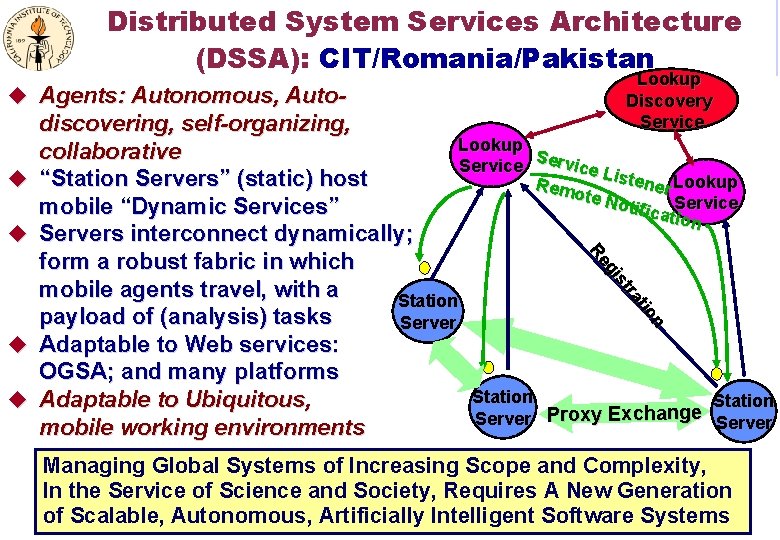

Distributed System Services Architecture (DSSA): CIT/Romania/Pakistan

- Agents: Autonomous, Auto-discovering, self-organizing, collaborative
- "Station Servers" (static) host mobile "Dynamic Services"
- Servers interconnect dynamically; form a robust fabric in which mobile agents travel, with a payload of (analysis) tasks
- Adaptable to Web services: OGSA; and many platforms
- Adaptable to Ubiquitous, mobile working environments

[Diagram labels: Station Server; Lookup Service; Lookup Discovery Service; Registration; Service Listener; Remote Notification; Proxy Exchange]

Managing Global Systems of Increasing Scope and Complexity, In the Service of Science and Society, Requires A New Generation of Scalable, Autonomous, Artificially Intelligent Software Systems

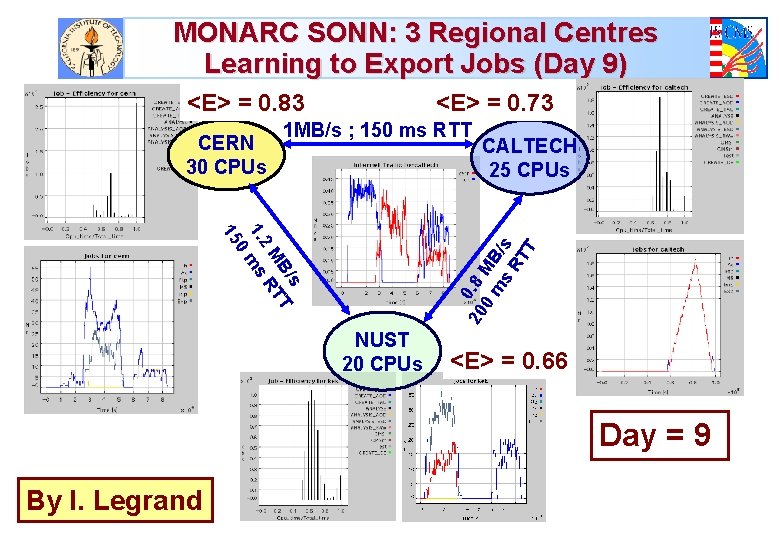

MONARC SONN: 3 Regional Centres Learning to Export Jobs (Day 9)

[Diagram labels: CERN, 30 CPUs; CALTECH, 25 CPUs; NUST, 20 CPUs. Efficiencies <E> = 0.83, 0.73, 0.66. Links: 1 MB/s, 150 ms RTT; 1.2 MB/s, 150 ms RTT; 0.8 MB/s, 200 ms RTT. Day = 9]

By I. Legrand

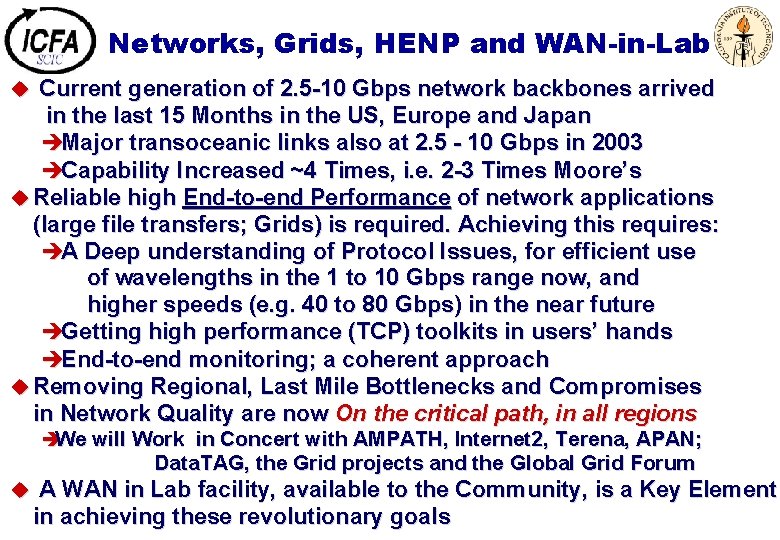

Networks, Grids, HENP and WAN-in-Lab

- Current generation of 2.5-10 Gbps network backbones arrived in the last 15 Months in the US, Europe and Japan
  - Major transoceanic links also at 2.5-10 Gbps in 2003
  - Capability Increased ~4 Times, i.e. 2-3 Times Moore's Law
- Reliable high End-to-end Performance of network applications (large file transfers; Grids) is required. Achieving this requires:
  - A Deep understanding of Protocol Issues, for efficient use of wavelengths in the 1 to 10 Gbps range now, and higher speeds (e.g. 40 to 80 Gbps) in the near future
  - Getting high performance (TCP) toolkits in users' hands
  - End-to-end monitoring; a coherent approach
- Removing Regional, Last Mile Bottlenecks and Compromises in Network Quality is now on the critical path, in all regions
  - We will Work in Concert with AMPATH, Internet2, Terena, APAN; DataTAG, the Grid projects and the Global Grid Forum
- A WAN in Lab facility, available to the Community, is a Key Element in achieving these revolutionary goals

Some Extra Slides Follow

Global Networks for HENP

- National and International Networks, with sufficient (rapidly increasing) capacity and capability, are essential for
  - The daily conduct of collaborative work in both experiment and theory
  - Detector development & construction on a global scale; Data analysis involving physicists from all world regions
  - The formation of worldwide collaborations
  - The conception, design and implementation of next generation facilities as "global networks"
- "Collaborations on this scale would never have been attempted, if they could not rely on excellent networks"

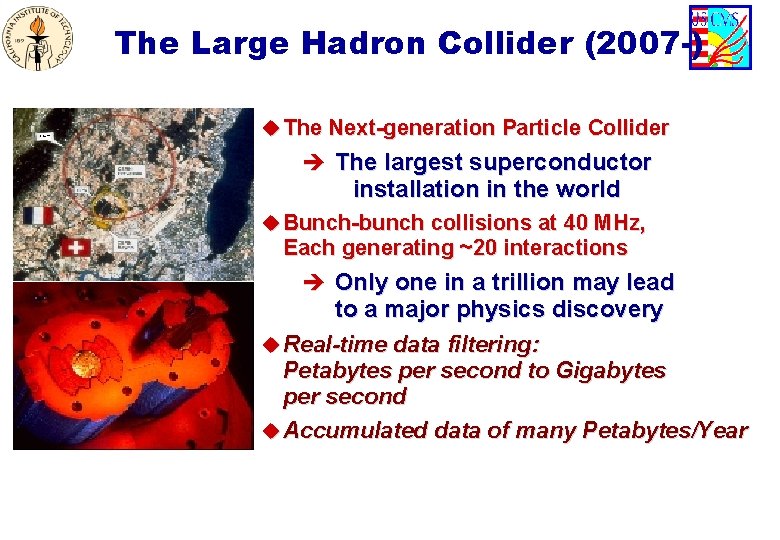

The Large Hadron Collider (2007-)

- The Next-generation Particle Collider
  - The largest superconductor installation in the world
- Bunch-bunch collisions at 40 MHz, Each generating ~20 interactions
  - Only one in a trillion may lead to a major physics discovery
- Real-time data filtering: Petabytes per second to Gigabytes per second
- Accumulated data of many Petabytes/Year
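The collision rate and the filtering requirement on this slide combine into two simple figures: interactions per second and the data-reduction factor. A minimal sketch using only the slide's numbers, taking one petabyte per second and one gigabyte per second as representative endpoints (names are illustrative):

```python
BUNCH_CROSSING_RATE_HZ = 40e6      # 40 MHz bunch-bunch collisions
INTERACTIONS_PER_CROSSING = 20     # ~20 interactions per crossing


def interaction_rate_hz() -> float:
    """Interactions per second implied by the crossing rate and pile-up."""
    return BUNCH_CROSSING_RATE_HZ * INTERACTIONS_PER_CROSSING


def filter_reduction_factor(input_bytes_per_s: float = 1e15,
                            output_bytes_per_s: float = 1e9) -> float:
    """Reduction the real-time filter must deliver (PB/s in, GB/s out)."""
    return input_bytes_per_s / output_bytes_per_s


if __name__ == "__main__":
    print(f"{interaction_rate_hz():.1e} interactions/sec")
    print(f"reduction factor: {filter_reduction_factor():.0e}")
```

The result, just under $10^9$ interactions per second, reduced by about a factor of a million in real time, is consistent with the event rate quoted earlier in the deck.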

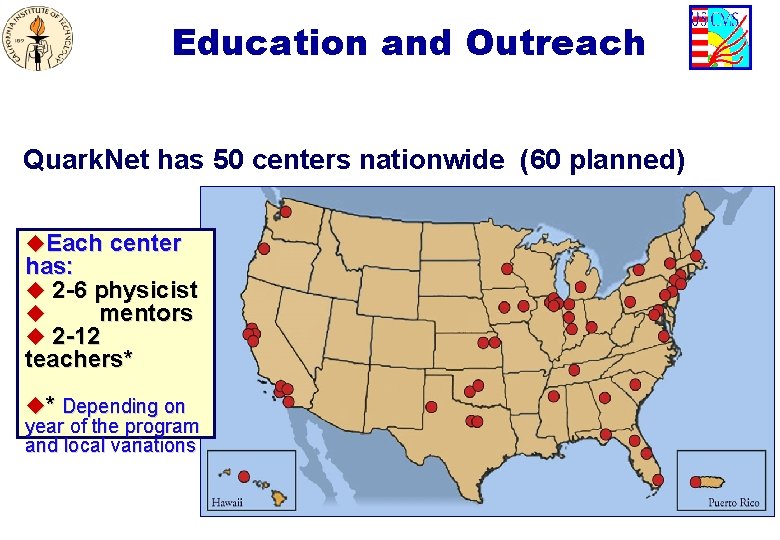

Education and Outreach

QuarkNet has 50 centers nationwide (60 planned)

- Each center has:
  - 2-6 physicist mentors
  - 2-12 teachers [*]

[*] Depending on year of the program and local variations

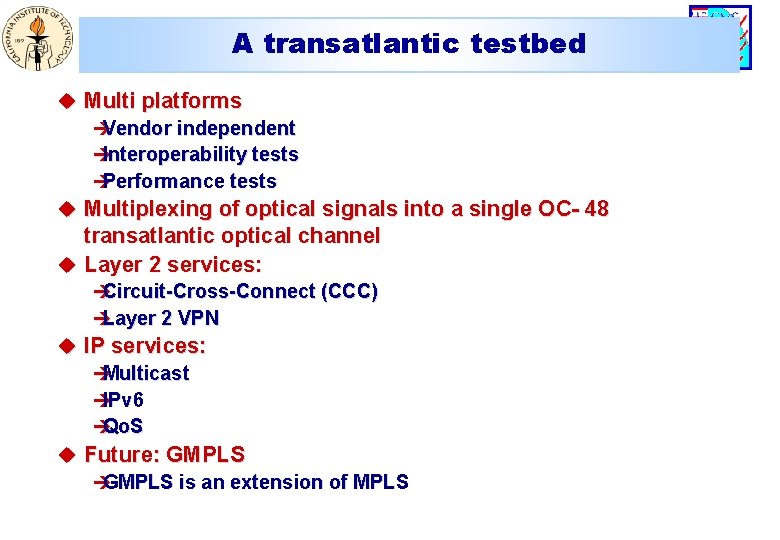

A transatlantic testbed

- Multi platforms
  - Vendor independent
  - Interoperability tests
  - Performance tests
- Multiplexing of optical signals into a single OC-48 transatlantic optical channel
- Layer 2 services:
  - Circuit-Cross-Connect (CCC)
  - Layer 2 VPN
- IP services:
  - Multicast
  - IPv6
  - QoS
- Future: GMPLS
  - GMPLS is an extension of MPLS

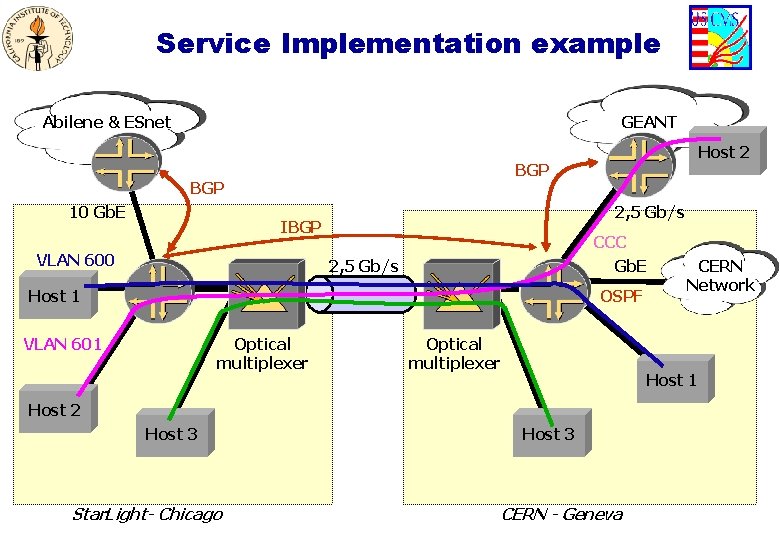

Service Implementation example

[Diagram labels: Abilene & ESnet; GEANT; BGP; IBGP; OSPF; CCC; 10 GbE; GbE; 2,5 Gb/s links; VLAN 600; VLAN 601; Optical multiplexer; Host 1, Host 2, Host 3 at StarLight - Chicago; Host 1, Host 2, Host 3 at CERN - Geneva; CERN Network]

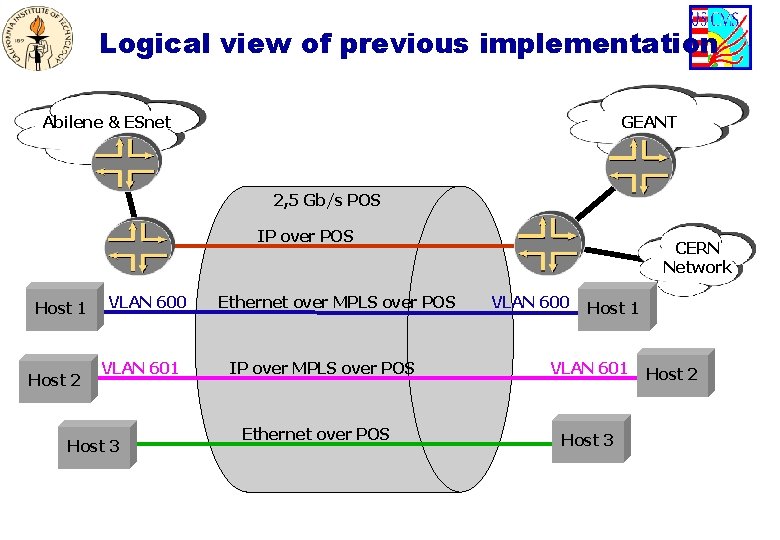

Logical view of previous implementation

[Diagram labels: Abilene & ESnet; GEANT; CERN Network; 2,5 Gb/s POS; IP over POS; Ethernet over MPLS over POS; IP over MPLS over POS; Ethernet over POS; VLAN 600; VLAN 601; Host 1, Host 2, Host 3 on each side]

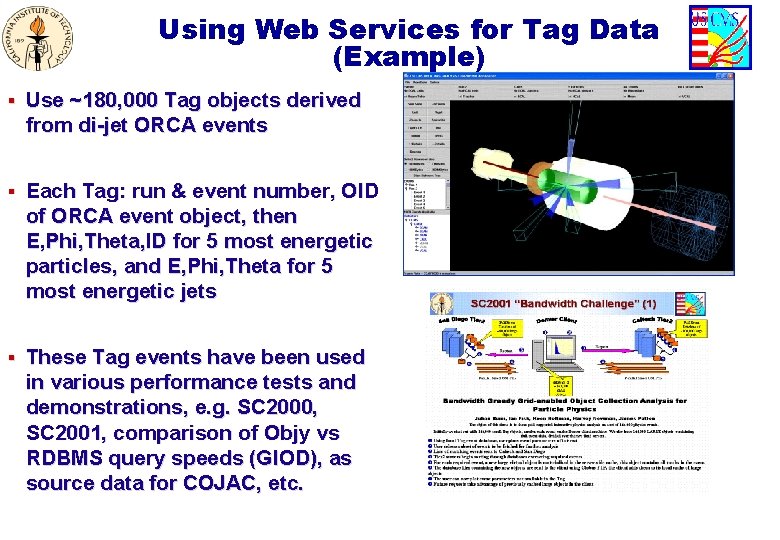

Using Web Services for Tag Data (Example)

- Use ~180,000 Tag objects derived from di-jet ORCA events
- Each Tag: run & event number, OID of ORCA event object, then E, Phi, Theta, ID for 5 most energetic particles, and E, Phi, Theta for 5 most energetic jets
- These Tag events have been used in various performance tests and demonstrations, e.g. SC2000, SC2001, comparison of Objy vs RDBMS query speeds (GIOD), as source data for COJAC, etc.
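The Tag layout described above maps naturally onto a small record type. The following is a sketch only: the class and field names are hypothetical and do not come from ORCA or the original Tag schema; only the list of stored quantities is taken from the slide.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Particle:
    energy: float
    phi: float
    theta: float
    particle_id: int   # "ID" on the slide; the encoding is not specified there


@dataclass(frozen=True)
class Jet:
    energy: float
    phi: float
    theta: float


@dataclass
class Tag:
    run: int
    event: int
    orca_oid: str              # OID of the ORCA event object (format assumed)
    particles: List[Particle]  # up to 5 most energetic particles
    jets: List[Jet]            # up to 5 most energetic jets


def make_tag(run: int, event: int, orca_oid: str,
             particles: List[Particle], jets: List[Jet], keep: int = 5) -> Tag:
    """Build a Tag that keeps only the `keep` most energetic particles and jets."""
    def top(items):
        return sorted(items, key=lambda x: x.energy, reverse=True)[:keep]
    return Tag(run, event, orca_oid, top(particles), top(jets))
```

A summary record of this kind is small enough to query quickly, which is what makes Tags suitable for the performance tests the slide mentions.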

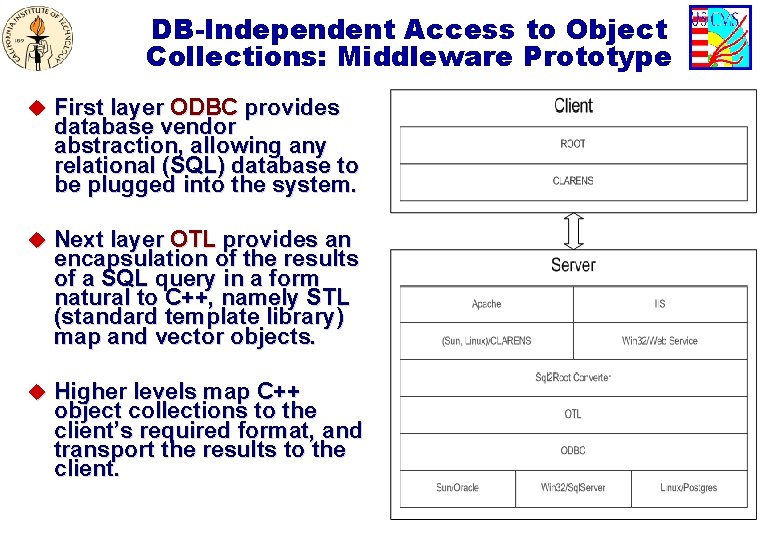

DB-Independent Access to Object Collections: Middleware Prototype

- First layer: ODBC provides database vendor abstraction, allowing any relational (SQL) database to be plugged into the system.
- Next layer: OTL provides an encapsulation of the results of a SQL query in a form natural to C++, namely STL (standard template library) map and vector objects.
- Higher levels map C++ object collections to the client's required format, and transport the results to the client.
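The three layers can be illustrated with a rough Python analogue. This is not the prototype's code, which was C++ over ODBC and OTL: here the standard-library `sqlite3` module stands in for the vendor-neutral database layer, a list of dicts stands in for the STL vector and map objects, and JSON is an assumed client format. The table and column names are hypothetical.

```python
import json
import sqlite3
from typing import Any, Dict, List, Sequence


def query_rows(conn: sqlite3.Connection, sql: str,
               params: Sequence[Any] = ()) -> List[Dict[str, Any]]:
    """Layers 1 and 2: run a SQL query and return rows as a list of dicts."""
    cur = conn.execute(sql, params)
    names = [column[0] for column in cur.description]
    return [dict(zip(names, row)) for row in cur.fetchall()]


def to_client_format(rows: List[Dict[str, Any]]) -> str:
    """Layer 3: map the collection to the client's format (JSON assumed here)."""
    return json.dumps(rows, sort_keys=True)


def demo_connection() -> sqlite3.Connection:
    """In-memory database with a tiny, made-up Tag table for illustration."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE tag (run INTEGER, event INTEGER, jet_e REAL)")
    conn.executemany("INSERT INTO tag VALUES (?, ?, ?)",
                     [(1, 10, 55.0), (1, 11, 120.5)])
    return conn


if __name__ == "__main__":
    rows = query_rows(demo_connection(),
                      "SELECT run, event, jet_e FROM tag WHERE jet_e > ?", (100.0,))
    print(to_client_format(rows))
```

Keeping the query layer separate from the formatting layer is the point of the design: swapping the database or the client format touches only one function.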

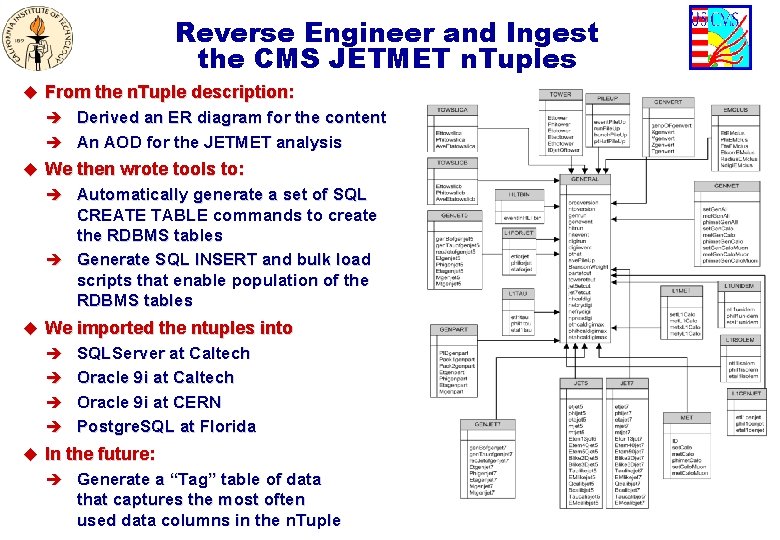

Reverse Engineer and Ingest the CMS JETMET nTuples

- From the nTuple description:
  - Derived an ER diagram for the content
  - An AOD for the JETMET analysis
- We then wrote tools to:
  - Automatically generate a set of SQL CREATE TABLE commands to create the RDBMS tables
  - Generate SQL INSERT and bulk load scripts that enable population of the RDBMS tables
- We imported the nTuples into
  - SQLServer at Caltech
  - Oracle 9i at CERN
  - PostgreSQL at Florida
- In the future:
  - Generate a "Tag" table of data that captures the most often used data columns in the nTuple
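The generation step described above can be sketched in a few lines. This is an illustration of the idea rather than the tools that were actually written: the column description, the type mapping and the table name are all hypothetical, and `sqlite3` is used only so the generated statements can be exercised locally.

```python
import sqlite3
from typing import Dict, Iterable, Sequence

# Assumed mapping from nTuple column kinds to SQL column types.
SQL_TYPES = {"int": "INTEGER", "float": "REAL", "str": "TEXT"}

# Hypothetical nTuple description: column name -> kind.
JETMET_COLUMNS: Dict[str, str] = {
    "run": "int", "event": "int", "jet_et": "float", "missing_et": "float",
}


def create_table_sql(table: str, columns: Dict[str, str]) -> str:
    """Generate a CREATE TABLE statement from the column description."""
    cols = ", ".join(f"{name} {SQL_TYPES[kind]}" for name, kind in columns.items())
    return f"CREATE TABLE {table} ({cols})"


def insert_sql(table: str, columns: Dict[str, str]) -> str:
    """Generate a parameterised INSERT statement for bulk loading."""
    names = ", ".join(columns)
    marks = ", ".join("?" for _ in columns)
    return f"INSERT INTO {table} ({names}) VALUES ({marks})"


def load(conn: sqlite3.Connection, table: str, columns: Dict[str, str],
         rows: Iterable[Sequence]) -> int:
    """Create the table, bulk-insert the rows, and return the row count."""
    conn.execute(create_table_sql(table, columns))
    conn.executemany(insert_sql(table, columns), rows)
    return conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]
```

Because the statements are generated from one description, the same description can drive table creation on each target database, subject to adjusting the type mapping per vendor.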

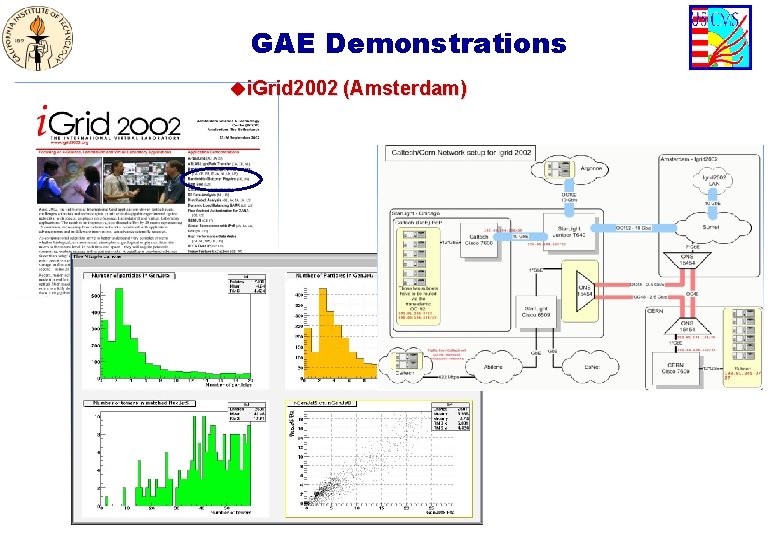

GAE Demonstrations

- iGrid 2002 (Amsterdam)

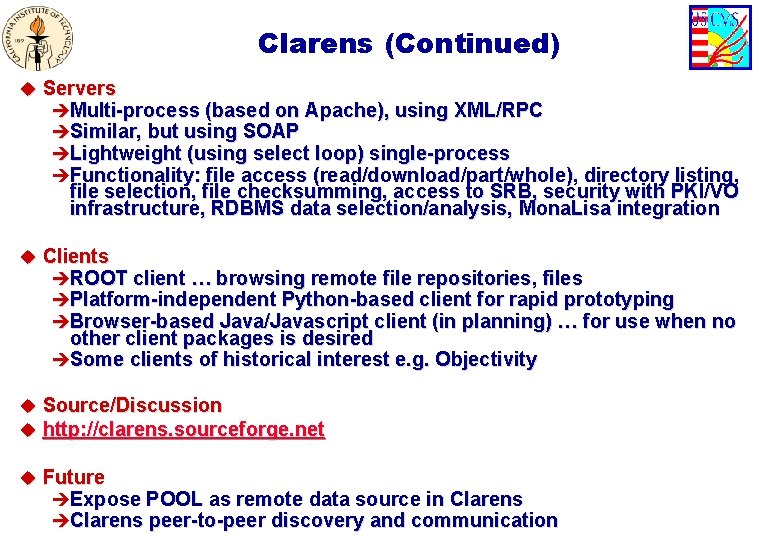

Clarens (Continued)

- Servers
  - Multi-process (based on Apache), using XML/RPC
  - Similar, but using SOAP
  - Lightweight (using select loop) single-process
  - Functionality: file access (read/download/part/whole), directory listing, file selection, file checksumming, access to SRB, security with PKI/VO infrastructure, RDBMS data selection/analysis, MonALISA integration
- Clients
  - ROOT client … browsing remote file repositories, files
  - Platform-independent Python-based client for rapid prototyping
  - Browser-based Java/Javascript client (in planning) … for use when no other client package is desired
  - Some clients of historical interest e.g. Objectivity
- Source/Discussion
  - http://clarens.sourceforge.net
- Future
  - Expose POOL as remote data source in Clarens
  - Clarens peer-to-peer discovery and communication
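Since the servers speak XML/RPC, a request from a light client is just a small XML document. The sketch below shows how such a call is encoded and decoded with Python's standard `xmlrpc.client` module. The method name `file.ls` and its arguments are hypothetical placeholders chosen for illustration; they are not taken from the Clarens API, and no network connection is made.

```python
import xmlrpc.client
from typing import Any, Tuple


def encode_call(method: str, *args: Any) -> str:
    """Serialise a method call into an XML-RPC request body."""
    return xmlrpc.client.dumps(args, methodname=method)


def decode_call(payload: str) -> Tuple[str, tuple]:
    """Parse an XML-RPC request body back into (method name, parameters)."""
    params, method = xmlrpc.client.loads(payload)
    return method, params


if __name__ == "__main__":
    # "file.ls" is a made-up method name standing in for a directory listing call.
    request = encode_call("file.ls", "/data", "*.root")
    print(request)
    print(decode_call(request))
```

Against a real server, the same call would normally be made through `xmlrpc.client.ServerProxy` with the server's URL and its documented method names.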

Globally Scalable Monitoring Service (I. Legrand)

[Diagram labels: Farm Monitor; RC Monitor Service; Lookup Service; Discovery; Registration; Proxy; Client (other service); Push & Pull; rsh & ssh scripts; snmp]

- Component Factory
- GUI marshaling
- Code Transport
- RMI data access

NSF/ITR: A Global Grid-Enabled Collaboratory for Scientific Research (GECSR) and Grid Analysis Environment (GAE). CHEP 2001, Beijing. Harvey B. Newman, California Institute of Technology. September 6, 2001

GECSR Features

- Persistent Collaboration: desktop, small and large conference rooms, halls, Virtual Control Rooms
- Hierarchical, Persistent, ad-hoc peer groups (using Virtual Organization management tools)
- "Language of Access": an ontology and terminology for users to control the GECSR.
  - Example: cost of interrupting an expert, virtual open, and partly-open doors.
- Support for Human-System (Agent)-Human as well as Human-Human interactions
- Evaluation, Evolution and Optimisation of the GECSR
- Agent-based decision support for users
- The GECSR will be delivered in "packages" over the course of

GECSR: First Year Package

- NEESGrid: unifying interface and tool launch system
  - CHEF Framework: portlets for file transfer using GridFTP, teamlets, announcements, chat, shared calendar, role-based access, threaded discussions, document repository
  - www.chefproject.org
- GIS-GIB: a geographic-information-systems-based Grid information broker
- VO Management tools (developed for PPDG)
- Videoconferencing and shared desktop: VRVS and VNC (www.vrvs.org and www.vnc.org)
- MonALISA: real time system monitoring and user control
- The above tools already exist: the effort is in integrating and identifying the missing functionality.

GECSR: Second Year Package

- Enhancements, including:
  - Detachable windows
  - Web Services Definition (WSDL) for CHEF
  - Federated Collaborative Servers
  - Search capabilities
  - Learning Management Components
  - Grid Computational Portal Toolkit
  - Knowledge Book, HEPBook
  - Software Agents for intelligent searching etc.
  - Flexible Authentication

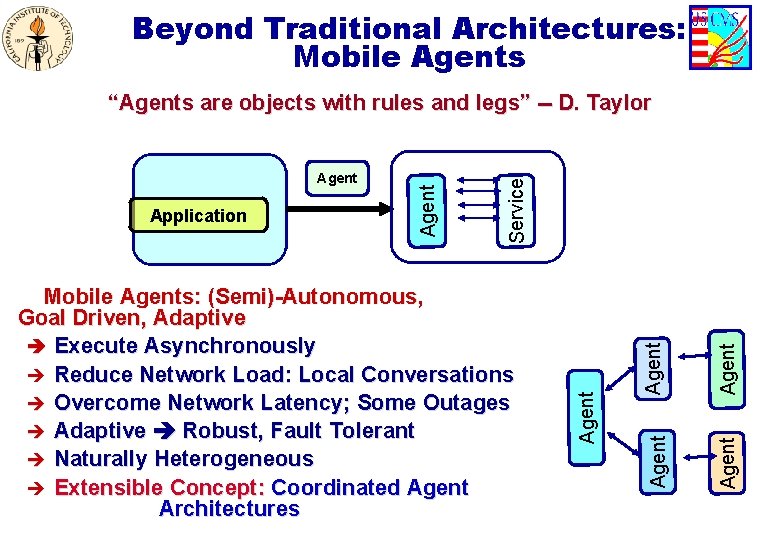

Beyond Traditional Architectures: Mobile Agents

Mobile Agents: (Semi)-Autonomous, Goal Driven, Adaptive

- Execute Asynchronously
- Reduce Network Load: Local Conversations
- Overcome Network Latency; Some Outages
- Adaptive: Robust, Fault Tolerant
- Naturally Heterogeneous
- Extensible Concept: Coordinated Agent Architectures

"Agents are objects with rules and legs" -- D. Taylor

[Diagram labels: Agent; Application; Service]
