Hash Tables
CENG 213 Data Structures
Yusuf Sahillioğlu

Hash Tables
• We’ll discuss the hash table ADT, which supports only a subset of the operations allowed by binary search trees.
• The implementation of hash tables is called hashing.
• Hashing is a technique for performing insertions, deletions and finds in constant average time, i.e., O(1).
  – Worst-case time is O(n), where n is the number of keys.
  – Perfect hashing: O(1) worst-case finds, O(n) worst-case space, polynomial build time. It is static, e.g., good for an English dictionary, which is rarely updated. https://youtu.be/z0lJ2k0sl1g?t=3351
• Hash tables are inefficient for operations that require ordering information among the elements, such as findMin, findMax and printing the entire table in sorted order.

General Idea
• The ideal hash table structure is merely an array of some fixed size, containing the items.
• A stored item needs to have a data member, called the key, that is used to compute the index value for the item.
  – The key could be an integer, a string, etc.
  – e.g., a name or ID that is part of a large employee structure.
• The size of the array is TableSize.
• The items stored in the hash table are indexed by values from 0 to TableSize – 1.
• Each key is mapped into some number in the range 0 to TableSize – 1; this number is called the hash value.
• The mapping is called a hash function.

General Idea
• Direct Access Table and Hash Table are two different ways of implementing the Dictionary ADT. The Hash Table is more practical.
• Spelling correction is done with a Dictionary:
  – Search for the word in the Dictionary.
  – If it is not found, perturb some letters and search for the new words until there is a hit.
• Since Dictionaries are so fast, we can afford many trial perturbations of letters.
• CTRL+F: use Dictionaries to find a pattern (substring) in text.
• Authentication: username vs. password matching; your username is searched quickly in the Dictionary to get the corresponding password.
• And many other applications.

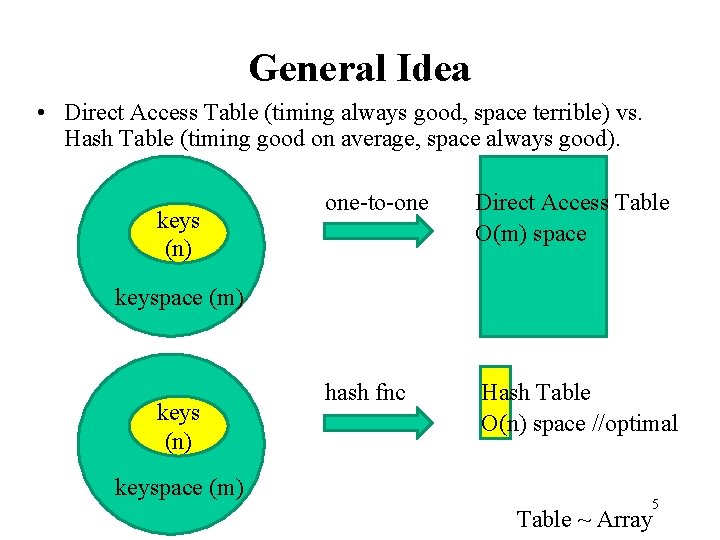

General Idea
• Direct Access Table (timing always good, space terrible) vs. Hash Table (timing good on average, space always good).
[Figure: the n keys, drawn from a keyspace of size m, are mapped one-to-one into a Direct Access Table that uses O(m) space, or through a hash function into a Hash Table that uses O(n) space, which is optimal. Table ~ Array.]

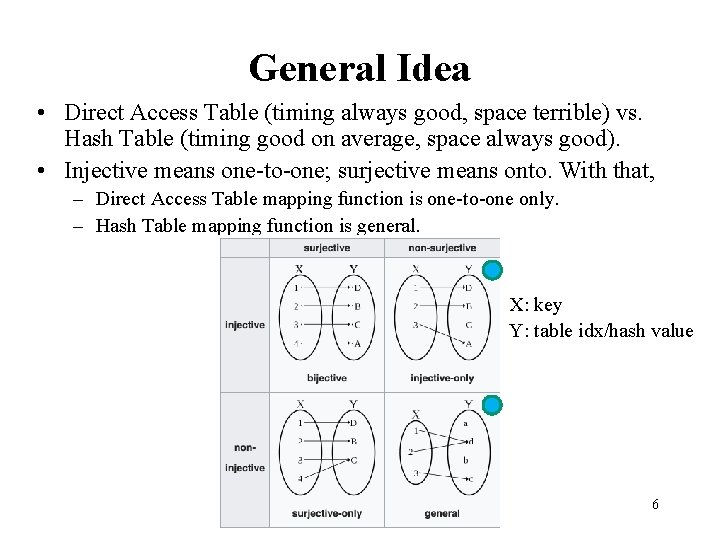

General Idea
• Direct Access Table (timing always good, space terrible) vs. Hash Table (timing good on average, space always good).
• Injective means one-to-one; surjective means onto. With that,
  – the Direct Access Table mapping function is one-to-one only.
  – the Hash Table mapping function is general.
[Figure: a mapping from X (keys) to Y (table indices / hash values).]

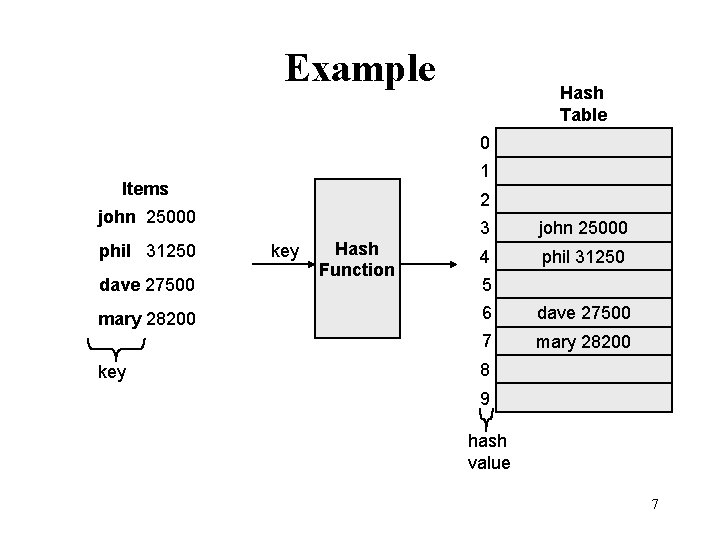

Example Hash Table
Items (the name is the key): john 25000, phil 31250, dave 27500, mary 28200
The hash function maps each key to a hash value, which is the table index:

  Index   Item
  0
  1
  2
  3       john 25000
  4       phil 31250
  5
  6       dave 27500
  7       mary 28200
  8
  9

Hash Function
• The hash function:
  – must be simple to compute.
  – must distribute the keys evenly among the cells.
• If we knew in advance which keys will occur, we could write perfect hash functions, but we don’t.

Hash Function Problems
• Keys may not be numeric.
• The number of possible keys is much larger than the space available in the table.
• Different keys may map into the same location.
  – The hash function is not one-to-one => collision.
  – If there are too many collisions, the performance of the hash table suffers dramatically.

Hash Functions
• If the input keys are integers, then simply Key mod TableSize is a general strategy.
  – Unless the keys happen to have some undesirable property (e.g., all keys end in 0 and we use mod 10). A prime table size helps in this case.
• If the keys are strings, the hash function needs more care.
  – First convert the string into a numeric value.

TableSize
• It is important to have a prime TableSize.
• Key mod TableSize maps everything to table indices in [0, TableSize-1].
• Every key that shares a common factor with TableSize is hashed to a slot that is a multiple of this factor. So, use a number that has very few factors, i.e., a prime number.
  – But why?
• If keys are uniformly distributed, then keys without common factors also appear, which gives good table utilization.

TableSize
• It is important to have a prime TableSize.
• Key mod TableSize maps everything to table indices in [0, TableSize-1].
• Every key that shares a common factor with TableSize is hashed to a slot that is a multiple of this factor.
• Keys (all divisible by 15): 15, 30, 45, 60, 75, ...
  – key % 15: slot 0
  – key % 30: slots 15, 0, ...
  – key % 45: slots 15, 30, 0, ...

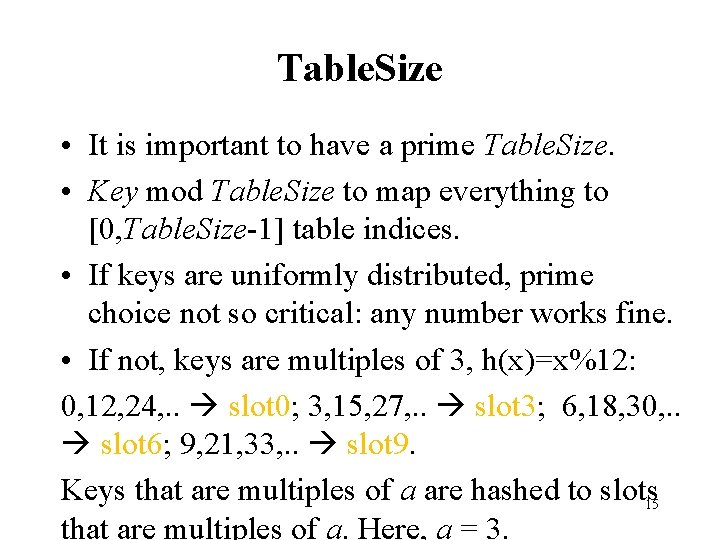

TableSize
• It is important to have a prime TableSize.
• Key mod TableSize maps everything to table indices in [0, TableSize-1].
• If keys are uniformly distributed, the prime choice is not so critical: any number works fine.
• If not, e.g., keys are multiples of 3 and h(x) = x % 12:
  – 0, 12, 24, ...: slot 0
  – 3, 15, 27, ...: slot 3
  – 6, 18, 30, ...: slot 6
  – 9, 21, 33, ...: slot 9
• Keys that are multiples of a are hashed to slots that are multiples of a. Here, a = 3.

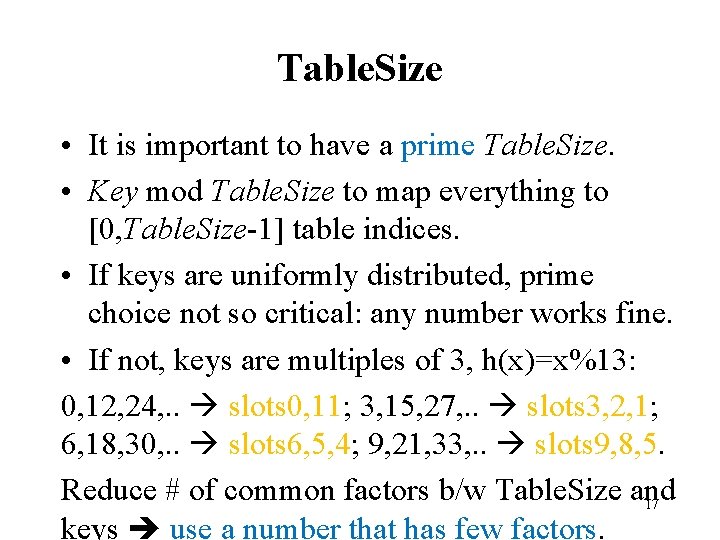

TableSize
• It is important to have a prime TableSize.
• Key mod TableSize maps everything to table indices in [0, TableSize-1].
• If keys are uniformly distributed, the prime choice is not so critical: any number works fine.
• If not, e.g., keys are multiples of 3 and h(x) = x % 13:
  – 0, 12, 24, ...: slots 0, 12, 11, ...
  – 3, 15, 27, ...: slots 3, 2, 1, ...
  – 6, 18, 30, ...: slots 6, 5, 4, ...
  – 9, 21, 33, ...: slots 9, 8, 7, ...
• To reduce the number of common factors between TableSize and the keys, use a number that has few factors.
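The effect of the table size on patterned keys can be checked with a few lines of C++. This is only a sketch: the helper names `multiplesOf` and `slotsUsed` are made up for illustration, and keys are assumed to be non-negative.

```cpp
#include <set>
#include <vector>

// Builds the first `count` non-negative multiples of `step` (hypothetical helper).
std::vector<int> multiplesOf(int step, int count) {
    std::vector<int> keys;
    for (int i = 0; i < count; ++i)
        keys.push_back(i * step);
    return keys;
}

// Returns the distinct slots hit by h(x) = x % tableSize (hypothetical helper).
std::set<int> slotsUsed(const std::vector<int>& keys, int tableSize) {
    std::set<int> slots;
    for (int key : keys)
        slots.insert(key % tableSize);
    return slots;
}
```

For 100 multiples of 3, `slotsUsed(keys, 12)` contains only the slots 0, 3, 6 and 9, while `slotsUsed(keys, 13)` contains all 13 slots.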

TableSize
• It is important to have TableSize = Θ(# keys).
• Omega: TableSize must be at least proportional to the number of keys to keep the load factor constant (the load factor is defined under Analysis of Separate Chaining).
• Big-O: TableSize must be at most proportional to the number of keys to keep the space linear.

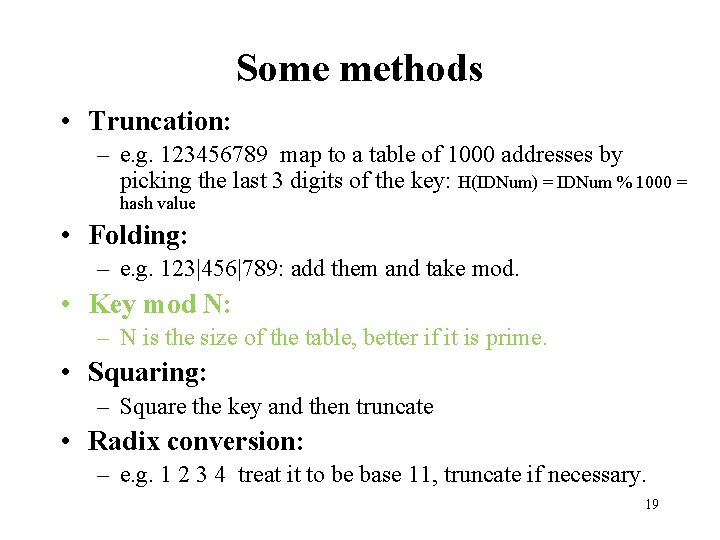

Some methods
• Truncation:
  – e.g., map 123456789 to a table of 1000 addresses by picking the last 3 digits of the key: H(IDNum) = IDNum % 1000 = hash value.
• Folding:
  – e.g., 123|456|789: add the parts and take the mod.
• Key mod N:
  – N is the size of the table; better if it is prime.
• Squaring:
  – Square the key and then truncate.
• Radix conversion:
  – e.g., treat 1234 as a base-11 number; truncate if necessary.
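Three of these methods can be sketched as follows. The function names are illustrative, keys are assumed non-negative, and the 3-digit group width in `foldHash` is an assumption taken from the 123|456|789 example.

```cpp
// Truncation: keep the last three decimal digits of the key.
int truncateHash(long long key) {
    return static_cast<int>(key % 1000);
}

// Folding: split the key into 3-digit groups, add them, then take the mod.
int foldHash(long long key, int tableSize) {
    long long sum = 0;
    while (key > 0) {
        sum += key % 1000;  // lowest 3-digit group
        key /= 1000;
    }
    return static_cast<int>(sum % tableSize);
}

// Radix conversion: read the decimal digits of key as digits in `base`.
int radixConvert(int key, int base) {
    int value = 0, place = 1;
    while (key > 0) {
        value += (key % 10) * place;
        place *= base;
        key /= 10;
    }
    return value;
}
```

With these definitions, `truncateHash(123456789)` is 789, `foldHash(123456789, 1000)` is (123 + 456 + 789) % 1000 = 368, and `radixConvert(1234, 11)` is 1·1331 + 2·121 + 3·11 + 4 = 1610.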

Hash Function 1
• Add up the ASCII values ([0, 127]) of all chars of the key.

    int hash(const string &key, int tableSize)
    {
        int hashVal = 0;
        for (int i = 0; i < key.length(); i++)
            hashVal += key[i];
        return hashVal % tableSize;
    }

• Simple to implement and fast.
• However, if the table size is large, the function does not distribute the keys well.
• e.g., with table size = 10000 and key length <= 8, the hash function can assume values only between 0 and 8*127 = 1016.

Hash Function 2
• Examine only the first 3 characters of the key (the key must have at least 3 characters).

    int hash(const string &key, int tableSize)
    {
        return (key[0] + 27*key[1] + 729*key[2]) % tableSize;
    }

• In theory, 26 * 26 * 26 = 17576 different three-letter combinations can be generated. However, English is not random; only 2851 different combinations actually occur.
• Thus this function, although easily computable, is also not appropriate if the hash table is reasonably large.

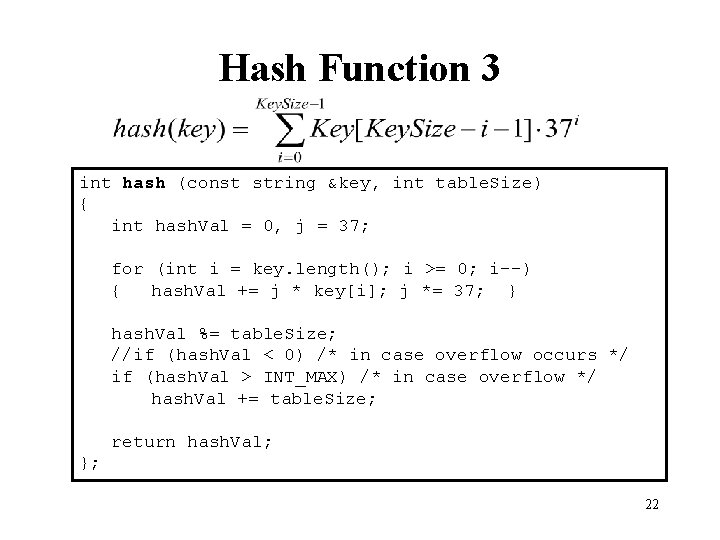

Hash Function 3

    int hash(const string &key, int tableSize)
    {
        int hashVal = 0, j = 1;                       // j holds the current power of 37
        for (int i = key.length() - 1; i >= 0; i--)   // the last character gets weight 1
        {
            hashVal += j * key[i];
            j *= 37;
        }
        hashVal %= tableSize;
        if (hashVal < 0)    /* in case overflow occurred and the value wrapped negative */
            hashVal += tableSize;
        return hashVal;
    }

Hash function for strings

  i:        0    1    2
  key:      a    l    i
  key[i]:   97   108  105

KeySize = 3
hash(“ali”) = (105 * 1 + 108 * 37 + 97 * 37^2) % 10,007 = 6803
[Figure: the hash function maps “ali” to index 6803 of a table with indices 0, 1, 2, ..., 10,006 (TableSize = 10,007).]
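The same polynomial can be evaluated left to right with Horner's rule. The sketch below is one possible variant, not the slide's code: the name `polyHash` is made up, and it uses unsigned arithmetic so that wraparound on long keys is well defined and no negative-value check is needed.

```cpp
#include <string>

// Polynomial string hash with base 37, evaluated with Horner's rule.
// Unsigned arithmetic wraps modulo 2^32 (or wider) instead of overflowing.
int polyHash(const std::string& key, int tableSize) {
    unsigned int hashVal = 0;
    for (char ch : key)
        hashVal = 37 * hashVal + static_cast<unsigned char>(ch);
    return static_cast<int>(hashVal % tableSize);
}
```

Since the ASCII codes of 'a', 'l' and 'i' are 97, 108 and 105, `polyHash("ali", 10007)` evaluates (97·37² + 108·37 + 105) % 10007 = 136894 % 10007 = 6803.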

Collision Resolution
• If, when an element is inserted, it hashes to the same value as an already inserted element, then we have a collision and need to resolve it.
• There are several methods for dealing with this:
  – Separate chaining
  – Open addressing
    • Linear probing
    • Quadratic probing
    • Double hashing

Separate Chaining
• The idea is to keep a list of all elements that hash to the same value.
  – The array elements are pointers to the first nodes of the lists.
  – A new item is inserted at the front of the list.
• Advantages:
  – Better space utilization for large items.
  – Simple collision handling: searching a linked list.
  – Overflow: we can store more items than the hash table size.
  – Deletion is quick and easy: deletion from the linked list.

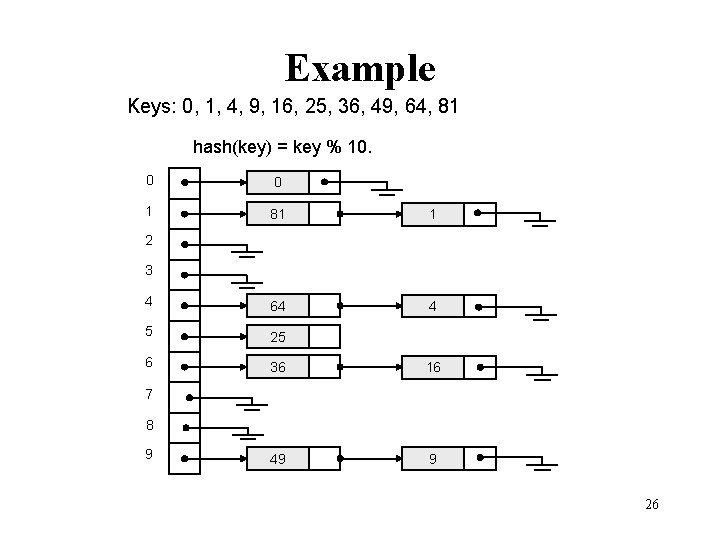

Example
Keys: 0, 1, 4, 9, 16, 25, 36, 49, 64, 81
hash(key) = key % 10

  Slot   Chain (newest item first)
  0      0
  1      81 -> 1
  2
  3
  4      64 -> 4
  5      25
  6      36 -> 16
  7
  8
  9      49 -> 9

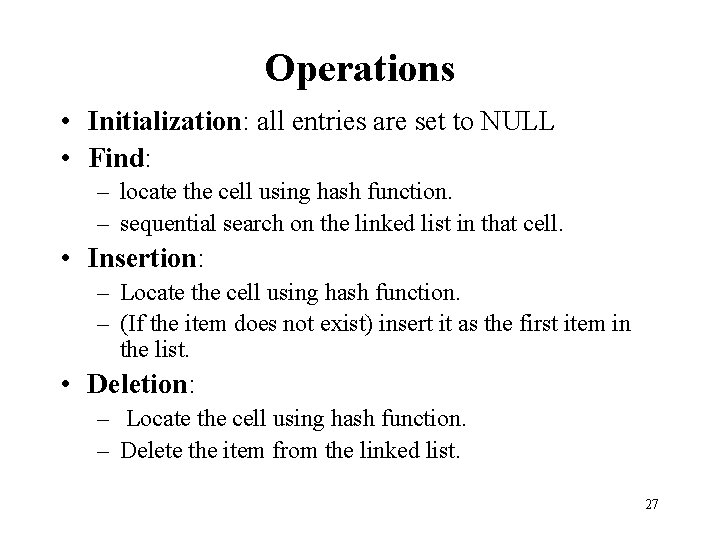

Operations
• Initialization: all entries are set to NULL.
• Find:
  – Locate the cell using the hash function.
  – Do a sequential search on the linked list in that cell.
• Insertion:
  – Locate the cell using the hash function.
  – (If the item does not exist) insert it as the first item in the list.
• Deletion:
  – Locate the cell using the hash function.
  – Delete the item from the linked list.

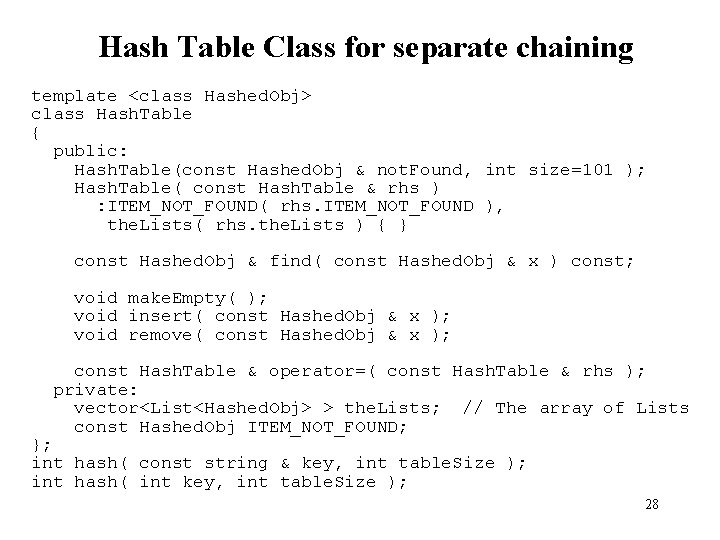

Hash Table Class for separate chaining

    template <class HashedObj>
    class HashTable
    {
      public:
        HashTable( const HashedObj & notFound, int size = 101 );
        HashTable( const HashTable & rhs )
          : ITEM_NOT_FOUND( rhs.ITEM_NOT_FOUND ), theLists( rhs.theLists ) { }

        const HashedObj & find( const HashedObj & x ) const;

        void makeEmpty( );
        void insert( const HashedObj & x );
        void remove( const HashedObj & x );

        const HashTable & operator=( const HashTable & rhs );

      private:
        vector<List<HashedObj> > theLists;   // The array of Lists
        const HashedObj ITEM_NOT_FOUND;
    };

    int hash( const string & key, int tableSize );
    int hash( int key, int tableSize );

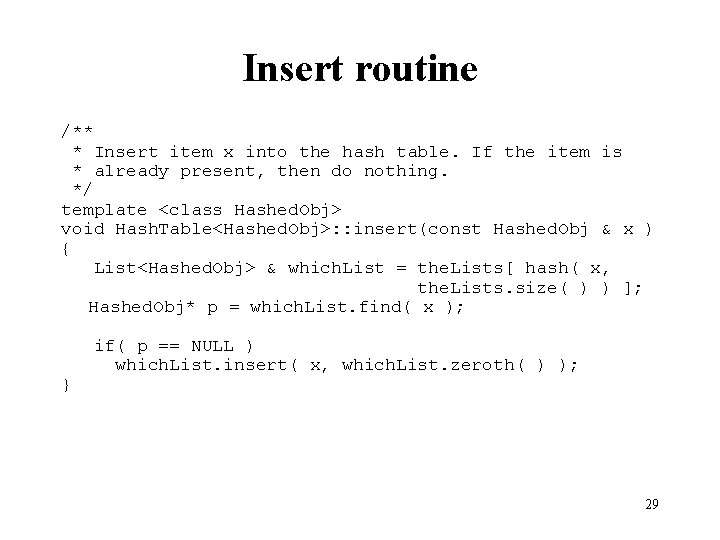

Insert routine

    /**
     * Insert item x into the hash table. If the item is
     * already present, then do nothing.
     */
    template <class HashedObj>
    void HashTable<HashedObj>::insert( const HashedObj & x )
    {
        List<HashedObj> & whichList = theLists[ hash( x, theLists.size( ) ) ];
        HashedObj* p = whichList.find( x );
        if( p == NULL )
            whichList.insert( x, whichList.zeroth( ) );
    }

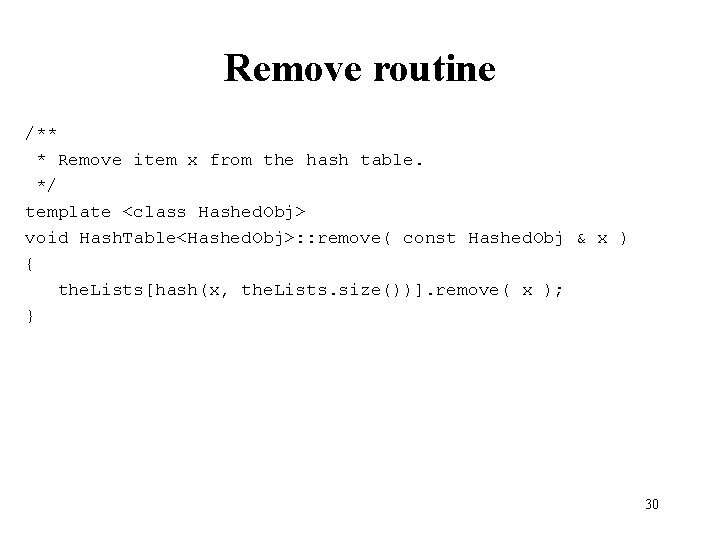

Remove routine

    /**
     * Remove item x from the hash table.
     */
    template <class HashedObj>
    void HashTable<HashedObj>::remove( const HashedObj & x )
    {
        theLists[ hash( x, theLists.size( ) ) ].remove( x );
    }

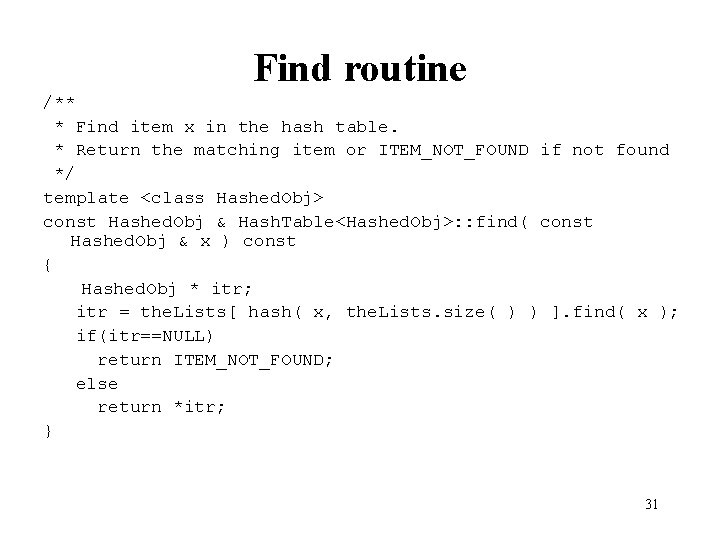

Find routine

    /**
     * Find item x in the hash table.
     * Return the matching item or ITEM_NOT_FOUND if not found.
     */
    template <class HashedObj>
    const HashedObj & HashTable<HashedObj>::find( const HashedObj & x ) const
    {
        HashedObj * itr;
        itr = theLists[ hash( x, theLists.size( ) ) ].find( x );
        if( itr == NULL )
            return ITEM_NOT_FOUND;
        else
            return *itr;
    }
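The routines above rely on a course-specific List class. For experimenting outside the course code, the same idea can be sketched with the standard library. The class below is an illustrative stand-in, not the class from the slides: `ChainedIntSet`, `contains` and `chainLength` are hypothetical names, and only non-negative int keys are assumed.

```cpp
#include <algorithm>
#include <list>
#include <vector>

// Separate chaining for non-negative int keys, using std::list for each chain.
class ChainedIntSet {
public:
    explicit ChainedIntSet(int size = 101) : lists(size) {}

    // Sequential search of the chain that x hashes to.
    bool contains(int x) const {
        const std::list<int>& chain = lists[slot(x)];
        return std::find(chain.begin(), chain.end(), x) != chain.end();
    }

    // Insert at the front of the chain unless x is already present.
    void insert(int x) {
        if (!contains(x))
            lists[slot(x)].push_front(x);
    }

    // Remove x from its chain (no effect if x is absent).
    void remove(int x) { lists[slot(x)].remove(x); }

    // Number of items stored in the given slot.
    int chainLength(int index) const {
        return static_cast<int>(lists[index].size());
    }

private:
    std::vector<std::list<int>> lists;

    int slot(int x) const { return x % static_cast<int>(lists.size()); }
};
```

Inserting the squares 0, 1, 4, ..., 81 into a `ChainedIntSet` of size 10 leaves two items in slot 6 (36 and 16) and none in slot 2.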

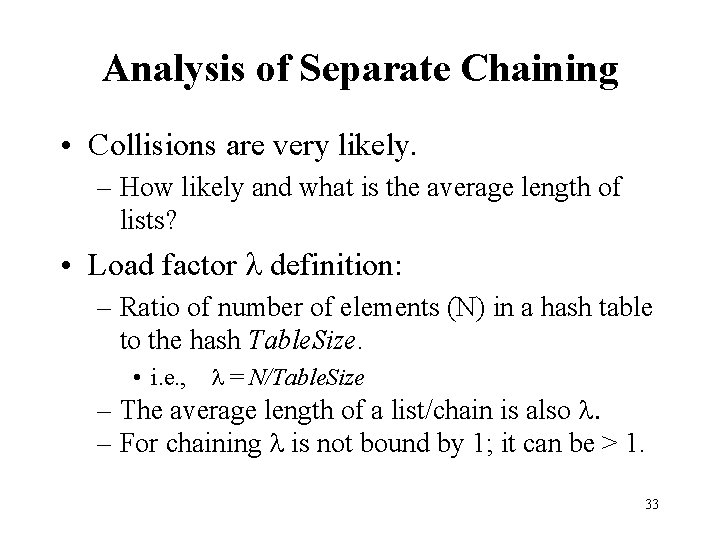

Analysis of Separate Chaining
• Collisions are very likely.
  – How likely, and what is the average length of the lists?
• Load factor λ definition:
  – Ratio of the number of elements (N) in a hash table to the hash TableSize.
    • i.e., λ = N/TableSize
  – The average length of a list/chain is also λ.
    • Assuming a uniform distribution, a key goes to any one of the TableSize slots with probability 1/TableSize.
    • Doing N independent trials gives an expected chain length of N/TableSize.

Analysis of Separate Chaining
• Collisions are very likely.
  – How likely, and what is the average length of the lists?
• Load factor λ definition:
  – Ratio of the number of elements (N) in a hash table to the hash TableSize.
    • i.e., λ = N/TableSize
  – The average length of a list/chain is also λ.
  – For chaining, λ is not bounded by 1; it can be > 1.

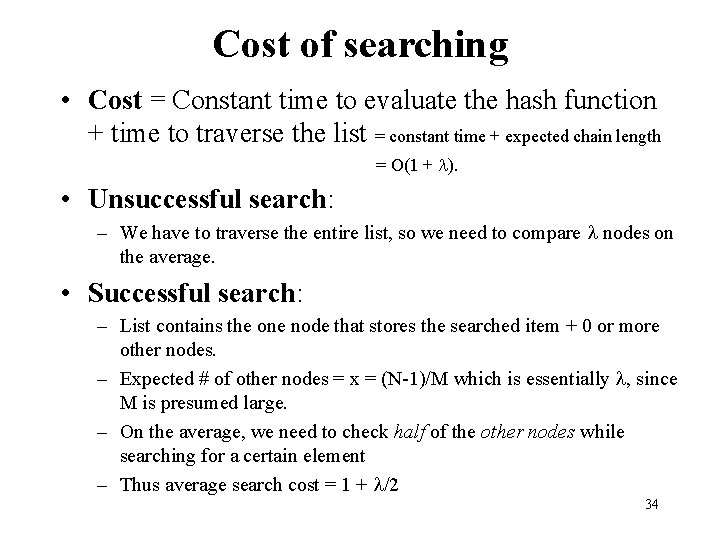

Cost of searching
• Cost = constant time to evaluate the hash function + time to traverse the list = constant time + expected chain length = O(1 + λ).
• Unsuccessful search:
  – We have to traverse the entire list, so we compare λ nodes on average.
• Successful search:
  – The list contains the one node that stores the searched item plus 0 or more other nodes.
  – Expected number of other nodes = (N-1)/M, which is essentially λ, since M (the TableSize) is presumed large.
  – On average, we check half of the other nodes while searching for a given element.
  – Thus the average search cost = 1 + λ/2.

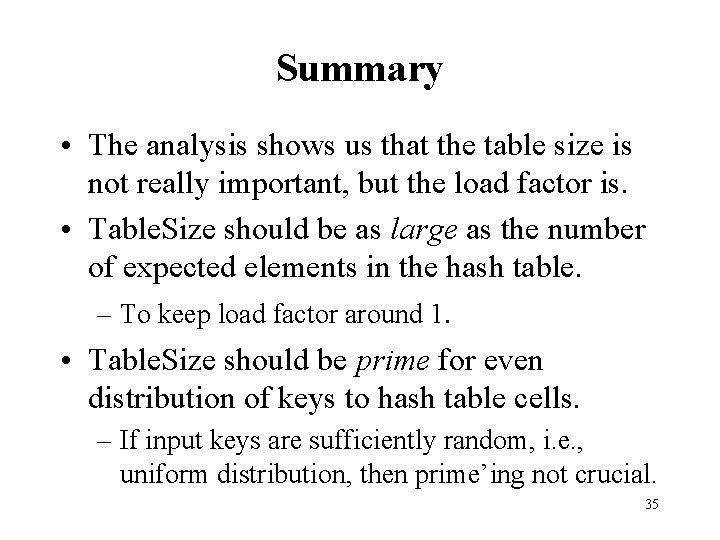

Summary
• The analysis shows us that the table size is not really important, but the load factor is.
• TableSize should be as large as the number of expected elements in the hash table.
  – To keep the load factor around 1.
• TableSize should be prime for an even distribution of keys to hash table cells.
  – If the input keys are sufficiently random, i.e., uniformly distributed, then a prime size is not crucial.

Hashing: Open Addressing

Collision Resolution with Open Addressing
• Separate chaining has the disadvantage of using linked lists.
  – It requires the implementation of a second data structure.
• In an open addressing hashing system, all the data go inside the table.
  – Thus, a bigger table is needed.
  – Generally the load factor should be below 0.5.
• If a collision occurs, alternative cells are tried until an empty cell is found.

Open Addressing
• More formally:
  – Cells $h_0(x), h_1(x), h_2(x), \ldots$ are tried in succession, where $h_i(x) = (\mathrm{hash}(x) + f(i)) \bmod \mathrm{TableSize}$, with $f(0) = 0$.
  – The function f is the collision resolution strategy.
• There are three common collision resolution strategies:
  – Linear probing
  – Quadratic probing
  – Double hashing

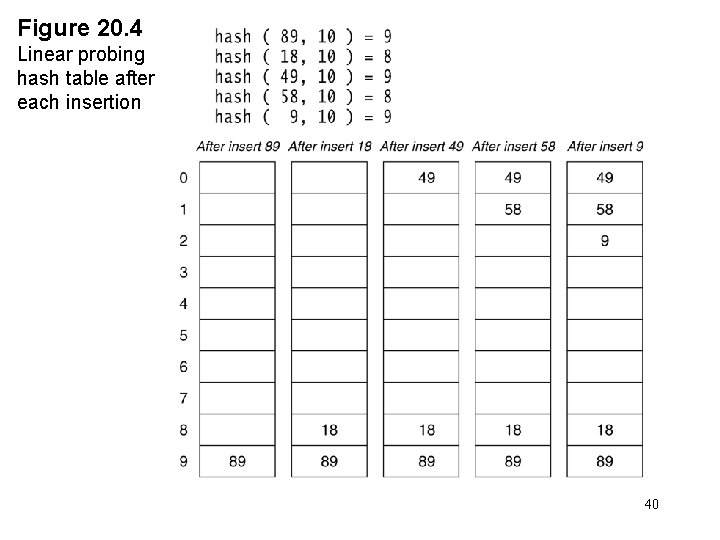

Linear Probing
• In linear probing, collisions are resolved by sequentially scanning the array (with wraparound) until an empty cell is found.
  – i.e., f is a linear function of i, typically f(i) = i.
• Example:
  – Insert items with keys 89, 18, 49, 58, 9 into an empty hash table.
  – Table size is 10.
  – Hash function is hash(x) = x mod 10.
    • f(i) = i

Figure 20.4: Linear probing hash table after each insertion.

Find and Delete
• The find algorithm follows the same probe sequence as the insert algorithm.
  – A find for 58 would involve 4 probes.
  – A find for 19 would involve 5 probes.
• We must use lazy deletion (i.e., marking items as deleted).
  – Standard deletion (i.e., physically removing the item) cannot be performed.
• When an item is deleted, the location must be marked in a special way, so that searches know that the spot used to have something in it.
  – e.g., remove 89 from the hash table, then search for 58.
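The insert, find and lazy-delete rules can be sketched together as follows. This is a minimal illustration assuming non-negative int keys and hash(x) = x mod TableSize; the class name `LinearProbingSet` and the three-state cell marker are choices made for this sketch, not code from the slides.

```cpp
#include <vector>

// Open addressing with linear probing (f(i) = i) and lazy deletion.
class LinearProbingSet {
public:
    explicit LinearProbingSet(int tableSize)
        : state(tableSize, EMPTY), keys(tableSize, 0) {}

    // Returns the index holding x, or -1 if x is absent.
    // The scan stops at the first EMPTY cell; DELETED cells are stepped over.
    int find(int x) const {
        int n = size();
        for (int i = 0; i < n; ++i) {
            int pos = (x % n + i) % n;
            if (state[pos] == EMPTY) return -1;
            if (state[pos] == ACTIVE && keys[pos] == x) return pos;
        }
        return -1;
    }

    // Returns false if x is already present or no free cell exists.
    bool insert(int x) {
        if (find(x) != -1) return false;
        int n = size();
        for (int i = 0; i < n; ++i) {
            int pos = (x % n + i) % n;
            if (state[pos] != ACTIVE) {   // EMPTY or DELETED cells are reusable
                state[pos] = ACTIVE;
                keys[pos] = x;
                return true;
            }
        }
        return false;
    }

    // Lazy deletion: only mark the cell, so later probes still pass through it.
    void remove(int x) {
        int pos = find(x);
        if (pos != -1) state[pos] = DELETED;
    }

private:
    enum CellState { EMPTY, ACTIVE, DELETED };
    std::vector<CellState> state;
    std::vector<int> keys;

    int size() const { return static_cast<int>(state.size()); }
};
```

After inserting 89, 18, 49, 58, 9 into a table of size 10, the keys sit in cells 9, 8, 0, 1 and 2 respectively. If 89 is then removed, `find(58)` still reaches cell 1, because the probe steps over the DELETED marker in cell 9 instead of stopping there.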

Clustering Problem
• As long as the table is big enough, a free cell can always be found, but the time to do so can get quite large.
• Worse, even if the table is relatively empty, blocks of occupied cells start forming.
• This effect is known as primary clustering.
• Any key that hashes into the cluster will require several attempts to resolve the collision, and then it will add to the cluster.

Analysis of insertion
• The average number of cells examined in an insertion using linear probing is roughly $\left(1 + 1/(1-\lambda)^2\right)/2$.
• The proof is beyond the scope of this class.
• Note that λ <= 1 in open addressing; recall that λ = N / TableSize.
• For a half-full table we obtain 2.5 as the average number of cells examined during an insertion.
• Primary clustering is a problem at high load factors. For half-empty tables the effect is not disastrous.

Analysis of Find
• An unsuccessful search costs the same as an insertion.
• The cost of a successful search for X is equal to the cost of inserting X at the time X was inserted.
• For λ = 0.5 the average cost of insertion is 2.5. The average cost of finding the newly inserted item will be 2.5 no matter how many insertions follow.
• Thus the average cost of a successful search is an average of the insertion costs over all smaller load factors.

Average cost of find
• The average number of cells examined in an unsuccessful search using linear probing is roughly $\left(1 + 1/(1-\lambda)^2\right)/2$.
• The average number of cells examined in a successful search is approximately $\left(1 + 1/(1-\lambda)\right)/2$.
  – Derived from:

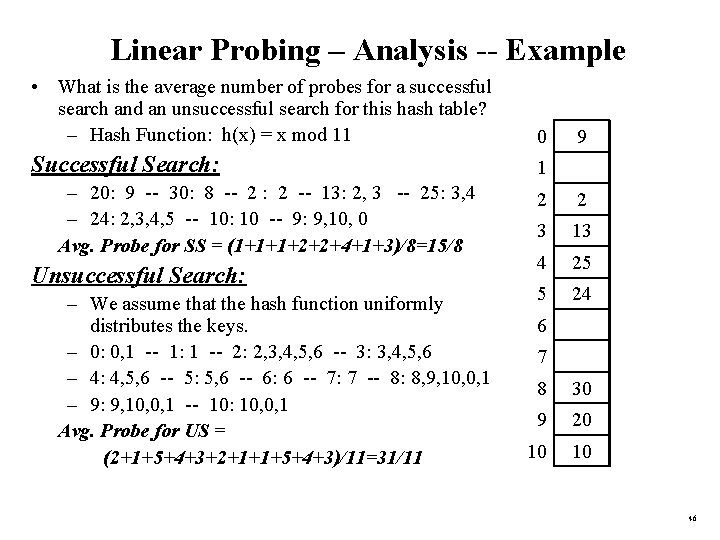
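To get a feel for the two linear-probing estimates, they can be evaluated at a few load factors. The values below follow directly from the formulas $\left(1 + 1/(1-\lambda)^2\right)/2$ (unsuccessful search or insertion) and $\left(1 + 1/(1-\lambda)\right)/2$ (successful search); the particular load factors are chosen only for illustration.

```latex
\begin{align*}
\lambda = 0.5:  &\quad \tfrac{1}{2}\left(1 + \tfrac{1}{(1-0.5)^2}\right) = 2.5,
                &\quad \tfrac{1}{2}\left(1 + \tfrac{1}{1-0.5}\right) &= 1.5 \\
\lambda = 0.75: &\quad \tfrac{1}{2}\left(1 + \tfrac{1}{(1-0.75)^2}\right) = 8.5,
                &\quad \tfrac{1}{2}\left(1 + \tfrac{1}{1-0.75}\right) &= 2.5 \\
\lambda = 0.9:  &\quad \tfrac{1}{2}\left(1 + \tfrac{1}{(1-0.9)^2}\right) = 50.5,
                &\quad \tfrac{1}{2}\left(1 + \tfrac{1}{1-0.9}\right) &= 5.5
\end{align*}
```

The unsuccessful-search cost grows much faster than the successful-search cost as the table fills up.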

Linear Probing – Analysis – Example
• What is the average number of probes for a successful search and an unsuccessful search for this hash table?
  – Hash function: h(x) = x mod 11

  Slot:   0    1    2    3    4    5    6    7    8    9    10
  Key:    9    -    2    13   25   24   -    -    30   20   10

• Successful search (cells probed for each stored key):
  – 20: 9
  – 30: 8
  – 2: 2
  – 13: 2, 3
  – 25: 3, 4
  – 24: 2, 3, 4, 5
  – 10: 10
  – 9: 9, 10, 0
  – Avg. probes for SS = (1+1+1+2+2+4+1+3)/8 = 15/8
• Unsuccessful search (cells probed for each starting slot):
  – We assume that the hash function distributes the keys uniformly.
  – 0: 0, 1
  – 1: 1
  – 2: 2, 3, 4, 5, 6
  – 3: 3, 4, 5, 6
  – 4: 4, 5, 6
  – 5: 5, 6
  – 6: 6
  – 7: 7
  – 8: 8, 9, 10, 0, 1
  – 9: 9, 10, 0, 1
  – 10: 10, 0, 1
  – Avg. probes for US = (2+1+5+4+3+2+1+1+5+4+3)/11 = 31/11

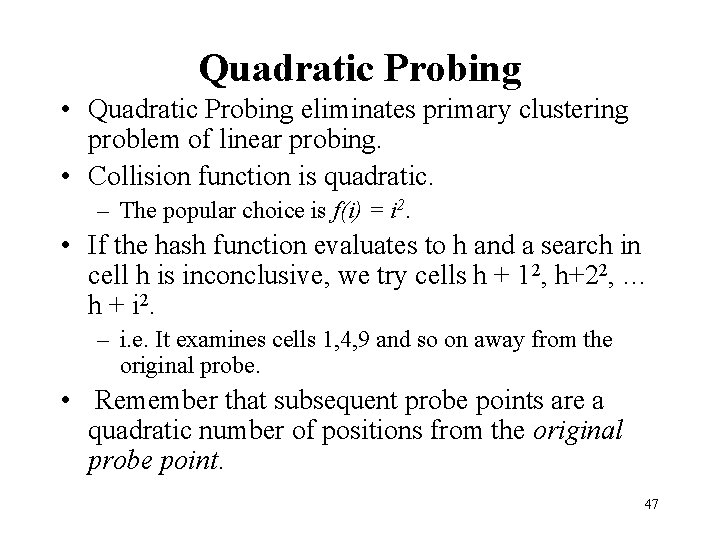

Quadratic Probing
• Quadratic probing eliminates the primary clustering problem of linear probing.
• The collision function is quadratic.
  – The popular choice is $f(i) = i^2$.
• If the hash function evaluates to h and a search in cell h is inconclusive, we try cells $h + 1^2, h + 2^2, \ldots, h + i^2$.
  – i.e., it examines cells 1, 4, 9 and so on away from the original probe.
• Remember that subsequent probe points are a quadratic number of positions from the original probe point.

Figure 20.6: A quadratic probing hash table after each insertion (note that the table size was poorly chosen because it is not a prime number).

Quadratic Probing
• Problem:
  – We may not be sure that we will probe all locations in the table (i.e., there is no guarantee of finding an empty cell if the table is more than half full).
  – If the hash table size is not prime, this problem is much more severe.
• However, there is a theorem stating that:
  – If the table size is prime and the load factor is not larger than 0.5 (i.e., the table is at least half empty), all quadratic probes will be to different locations and an item can always be inserted.

Some considerations
• How efficient is calculating the quadratic probes?
  – Linear probing is easily implemented. Quadratic probing appears to require * and % operations.
  – However, this is overcome by the following trick:
    • $H_i = H_{i-1} + 2i - 1 \pmod{M}$
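The recurrence works because $i^2 - (i-1)^2 = 2i - 1$, so each probe is the previous one plus an odd number. A small sketch (the function name `quadraticProbes` is illustrative, and the home cell is assumed to be in [0, tableSize-1]):

```cpp
#include <vector>

// First `count` probe positions of quadratic probing, h_i = (home + i^2) mod M,
// computed incrementally with H_i = H_{i-1} + 2i - 1 (mod M).
// Only additions and subtractions are used inside the loop.
std::vector<int> quadraticProbes(int home, int tableSize, int count) {
    std::vector<int> probes;
    int pos = home;
    for (int i = 0; i < count; ++i) {
        if (i > 0) {
            pos += 2 * i - 1;            // add the next odd number
            while (pos >= tableSize)     // wrap around without using %
                pos -= tableSize;
        }
        probes.push_back(pos);
    }
    return probes;
}
```

For home cell 8 in a table of size 10, the first five probes are 8, 9, 2, 7, 4, which matches (8 + i²) mod 10 for i = 0..4.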

Some Considerations
• What happens if the load factor gets too high?
  – Dynamically expand the table as soon as the load factor reaches 0.5; this is called rehashing.
  – Always double to a prime number.
  – When expanding the hash table, reinsert the items into the new table using the new hash function.
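"Double to a prime number" is usually read as "pick the first prime at or above twice the old size". A sketch under that assumption, with hypothetical helper names and trial division as the (simple, not fastest) primality test:

```cpp
// Trial-division primality test; adequate for table sizes.
bool isPrime(int n) {
    if (n < 2) return false;
    for (int d = 2; static_cast<long long>(d) * d <= n; ++d)
        if (n % d == 0) return false;
    return true;
}

// Smallest prime that is at least twice the old table size.
int nextTableSize(int oldSize) {
    int candidate = 2 * oldSize;
    while (!isPrime(candidate))
        ++candidate;
    return candidate;
}
```

For example, a table of size 11 would grow to 23 and a table of size 101 to 211. After allocating the new table, every stored item has to be reinserted, because x mod TableSize changes with the size.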

Analysis of Quadratic Probing
• Quadratic probing has not yet been mathematically analyzed.
• Although quadratic probing eliminates primary clustering, elements that hash to the same location will probe the same alternative cells. This is known as secondary clustering.
  – e.g., insert 68 (probes at offsets 1, 4, 9 from the home cell, which is occupied by 18), then 78 (probes at offsets 1, 4, 9, 16 from the same home cell).

Analysis of Quadratic Probing
• Quadratic probing has not yet been mathematically analyzed.
• Although quadratic probing eliminates primary clustering, elements that hash to the same location will probe the same alternative cells. This is known as secondary clustering.
• Techniques that eliminate secondary clustering are available.
  – The most popular one is double hashing.

Double Hashing
• A second hash function is used to drive the collision resolution.
  – $f(i) = i \cdot \mathrm{hash}_2(x)$
• We apply a second hash function to x and probe at distances $\mathrm{hash}_2(x), 2\,\mathrm{hash}_2(x), \ldots$ and so on.
• The function $\mathrm{hash}_2(x)$ must never evaluate to zero.
  – e.g., let $\mathrm{hash}_2(x) = x \bmod 9$ and try to insert 99 in the previous example: $\mathrm{hash}_2(99) = 0$, so the probe never moves.
• A function such as $\mathrm{hash}_2(x) = R - (x \bmod R)$, with R a prime smaller than TableSize, will work well.
  – e.g., try R = 7 for the previous example: $\mathrm{hash}_2(x) = 7 - (x \bmod 7)$.
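The probe sequence for double hashing can be sketched as follows, assuming non-negative keys, hash(x) = x mod TableSize, and the second hash R – (x mod R). The function names are illustrative.

```cpp
#include <vector>

// Second hash function: always in the range 1..r, so it is never zero.
int hash2(int x, int r) {
    return r - (x % r);
}

// First `count` probe positions: h_i(x) = (x mod M + i * hash2(x)) mod M.
std::vector<int> doubleHashProbes(int x, int tableSize, int r, int count) {
    std::vector<int> probes;
    int step = hash2(x, r);
    for (int i = 0; i < count; ++i)
        probes.push_back((x % tableSize + i * step) % tableSize);
    return probes;
}
```

With TableSize = 10 and R = 7, key 49 has step 7 – (49 mod 7) = 7 and probes cells 9, 6, 3, whereas key 99 has step 7 – (99 mod 7) = 6. Two keys with the same home cell therefore follow different probe sequences, which is what removes secondary clustering. Note that with a non-prime table size a step may share a factor with the size (key 58 has step 5 and only alternates between cells 8 and 3), which is one more reason to prefer a prime TableSize.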

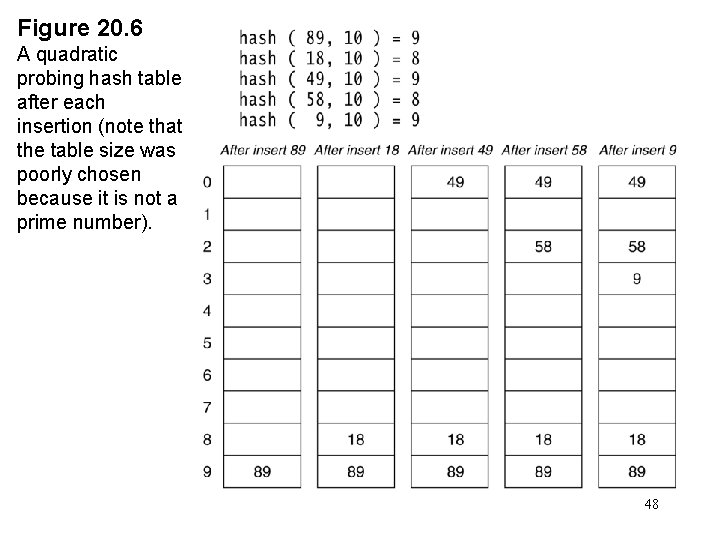

[Figure: the relative efficiency of four collision-resolution methods, plotted against the load factor λ.]

Hashing Applications
• Compilers use hash tables to implement the symbol table (a data structure that keeps track of declared variables).
• Game programs use hash tables to keep track of positions they have encountered (transposition table).
• Spell checkers and correctors (discussed).
• Ctrl+F: search for a substring in text.

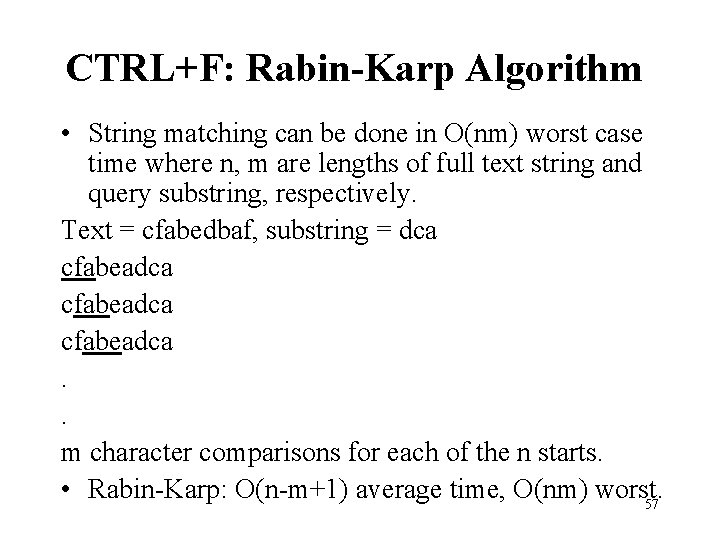

CTRL+F: Rabin-Karp Algorithm
• String matching can be done in O(nm) worst-case time, where n and m are the lengths of the full text string and the query substring, respectively.
  – Text = cfabeadca, substring = dca: m character comparisons for each of the n starts.
• Rabin-Karp: O(n-m+1) average time, O(nm) worst-case time.

CTRL+F: Rabin-Karp Algorithm
• Idea: use a hash value to avoid comparing the pattern (substring) against every window of the string (text) character by character; only when the hash values match do we check the characters.

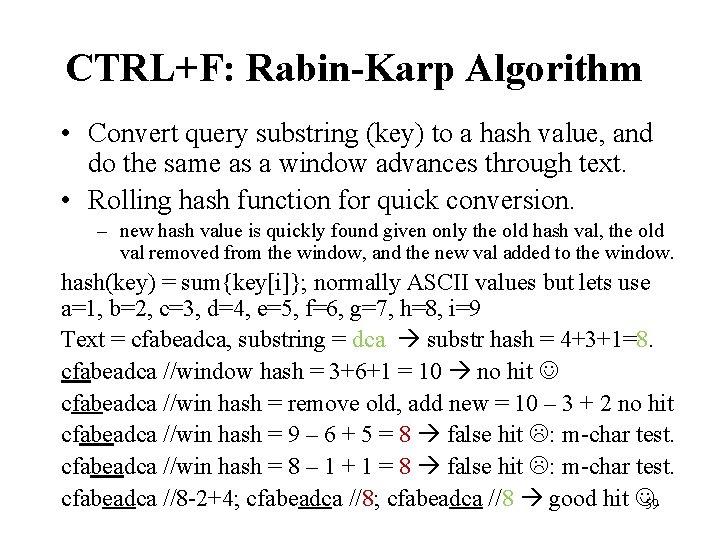

CTRL+F: Rabin-Karp Algorithm
• Convert the query substring (key) to a hash value, and do the same for a window as it advances through the text.
• Use a rolling hash function for quick conversion.
  – The new hash value is found quickly given only the old hash value, the value removed from the window, and the value added to the window.
• hash(key) = sum{key[i]}; normally we would use ASCII values, but let's use a=1, b=2, c=3, d=4, e=5, f=6, g=7, h=8, i=9.
• Text = cfabeadca, substring = dca, substring hash = 4+3+1 = 8.
  – [cfa]beadca: window hash = 3+6+1 = 10, no hit.
  – c[fab]eadca: window hash = remove old, add new = 10 – 3 + 2 = 9, no hit.
  – cf[abe]adca: window hash = 9 – 6 + 5 = 8, false hit: m-char test.
  – cfa[bea]dca: window hash = 8 – 1 + 1 = 8, false hit: m-char test.
  – cfab[ead]ca: window hash = 8 – 2 + 4 = 10, no hit.
  – cfabe[adc]a: window hash = 10 – 5 + 3 = 8, false hit: m-char test.
  – cfabea[dca]: window hash = 8 – 1 + 1 = 8, good hit.

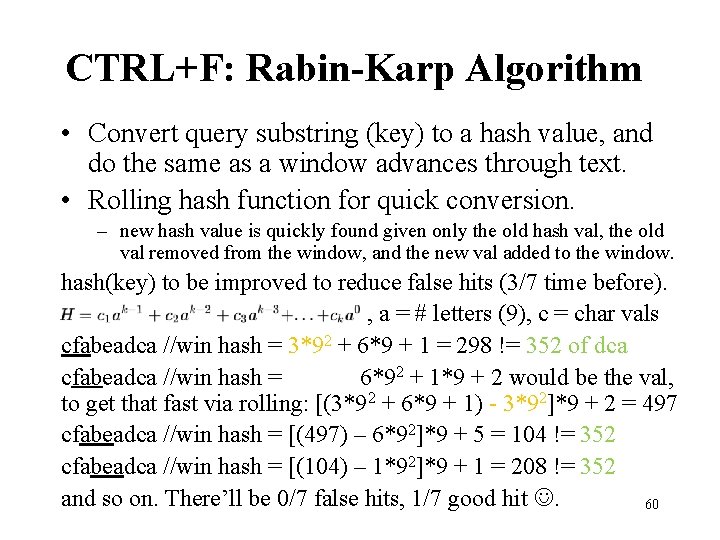

CTRL+F: Rabin-Karp Algorithm
• Convert the query substring (key) to a hash value, and do the same for a window as it advances through the text.
• Use a rolling hash function for quick conversion.
  – The new hash value is found quickly given only the old hash value, the value removed from the window, and the value added to the window.
• hash(key) is to be improved to reduce false hits (3 of the 7 windows before): $\mathrm{hash}(key) = \sum_{i=1}^{m} c_i\, a^{m-i}$, where a = number of letters (9) and $c_i$ = character values.
• Substring dca: hash = $4 \cdot 9^2 + 3 \cdot 9 + 1 = 352$.
  – [cfa]beadca: window hash = $3 \cdot 9^2 + 6 \cdot 9 + 1 = 298 \neq 352$.
  – c[fab]eadca: window hash would be $6 \cdot 9^2 + 1 \cdot 9 + 2$; to get that fast via rolling: $[(3 \cdot 9^2 + 6 \cdot 9 + 1) - 3 \cdot 9^2] \cdot 9 + 2 = 497 \neq 352$.
  – cf[abe]adca: window hash = $[497 - 6 \cdot 9^2] \cdot 9 + 5 = 104 \neq 352$.
  – cfa[bea]dca: window hash = $[104 - 1 \cdot 9^2] \cdot 9 + 1 = 208 \neq 352$.
  – and so on. There will be 0/7 false hits and 1/7 good hit.
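Putting the pieces together, a rolling-hash search might look like the sketch below. The base 256 and the modulus 1,000,000,007 are assumptions made here to keep every intermediate value inside 64 bits; they are not the constants from the slides, and the function name `rabinKarp` is illustrative.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Returns the start index of every occurrence of pattern in text.
// Window hashes are compared first; characters are compared only on a hash hit.
std::vector<int> rabinKarp(const std::string& text, const std::string& pattern) {
    std::vector<int> hits;
    const std::size_t n = text.size(), m = pattern.size();
    if (m == 0 || m > n) return hits;

    const std::uint64_t base = 256, mod = 1000000007ULL;  // assumed constants
    std::uint64_t high = 1;                               // base^(m-1) mod mod
    for (std::size_t i = 1; i < m; ++i)
        high = high * base % mod;

    std::uint64_t patHash = 0, winHash = 0;
    for (std::size_t i = 0; i < m; ++i) {
        patHash = (patHash * base + static_cast<unsigned char>(pattern[i])) % mod;
        winHash = (winHash * base + static_cast<unsigned char>(text[i])) % mod;
    }

    for (std::size_t start = 0; ; ++start) {
        // m-character test only when the hash values agree
        if (winHash == patHash && text.compare(start, m, pattern) == 0)
            hits.push_back(static_cast<int>(start));
        if (start + m >= n) break;
        // Roll: drop text[start], shift by one position, append text[start + m]
        std::uint64_t out = static_cast<unsigned char>(text[start]) * high % mod;
        winHash = (winHash + mod - out) % mod;
        winHash = (winHash * base + static_cast<unsigned char>(text[start + m])) % mod;
    }
    return hits;
}
```

For the running example, `rabinKarp("cfabeadca", "dca")` reports a single occurrence starting at index 6. Reducing modulo `mod` after every step keeps the products small, at the price of possible false hits, which the character comparison filters out.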

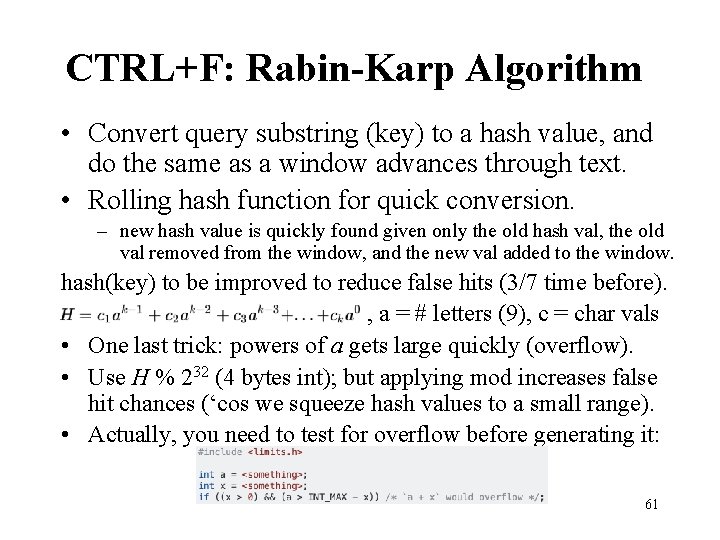

CTRL+F: Rabin-Karp Algorithm
• Convert the query substring (key) to a hash value, and do the same for a window as it advances through the text.
• Use a rolling hash function for quick conversion.
  – The new hash value is found quickly given only the old hash value, the value removed from the window, and the value added to the window.
• hash(key) is to be improved to reduce false hits (3 of the 7 windows before): $\mathrm{hash}(key) = \sum_{i=1}^{m} c_i\, a^{m-i}$, where a = number of letters (9) and $c_i$ = character values.
• One last trick: powers of a get large quickly (overflow).
• Use $H \bmod 2^{32}$ (4-byte int); but applying the mod increases the chance of false hits (because we squeeze the hash values into a small range).
• Actually, you need to test for overflow before generating it:

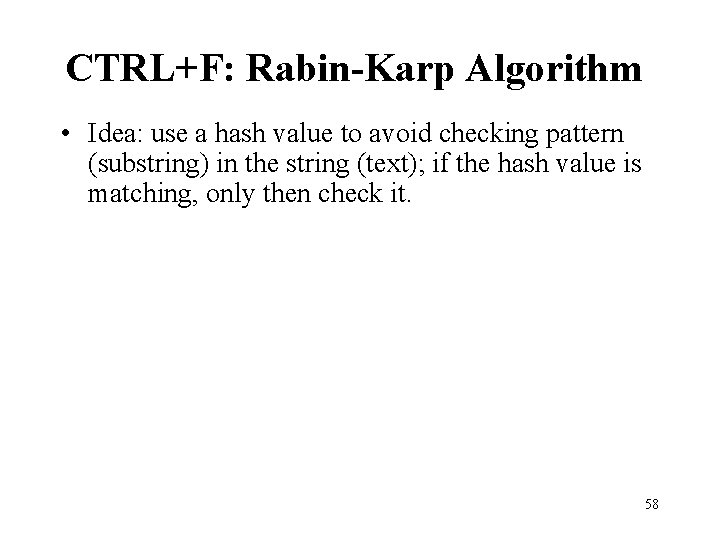

# Pairs w/ Given Sum
Input: [3 4 106 2 5], sum = 7
Output: 2 (the pairs 3-4 and 2-5)
• Naïve solution #1: $O(n^2)$; for the i-th element, look for its partner.
• Naïve solution #2: O(n lg n); sort, then look for each partner via binary search.
• Cool solution: O(n) average time; build a hash table, then hash for each partner.
  – O(n) worst-case time if a direct access table is used. The space complexity will then no longer be O(n); it will be linear in the range of the values, e.g., it would need 106 – 2 entries.
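The hash-table solution can be sketched with `std::unordered_set`. The function name `countPairs` is illustrative, and the sketch assumes the input values are distinct, as in the example.

```cpp
#include <unordered_set>
#include <vector>

// Counts the pairs of elements whose sum equals target.
// Each element looks up its partner (target - v) among the elements seen so far,
// so every pair is counted exactly once. Assumes distinct input values.
int countPairs(const std::vector<int>& values, int target) {
    std::unordered_set<int> seen;
    int pairs = 0;
    for (int v : values) {
        if (seen.count(target - v))   // O(1) average-time partner lookup
            ++pairs;
        seen.insert(v);
    }
    return pairs;
}
```

For the input [3 4 106 2 5] and sum 7 this returns 2: the element 4 finds 3, and the element 5 finds 2.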

Sort Locations for an Array
Input: [3 4 106 2 5]
Output: [1 2 4 0 3]
• Naïve solution #1: $O(n^2)$; findMin, replace it with i, i++, repeat.
• Cool solution: O(n lg n); copy to tmp[], sort tmp[], store a mapping from the numbers to their locations in a hash table, then replace the elements of A[] with their locations using the hash table.
  – Hash table, average: O(n lg n + n)
  – Hash table, worst case: $O(n \lg n + n^2)$
  – Direct access table, worst case: O(n lg n + n)
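A sketch of the hash-table solution, using `std::sort` and `std::unordered_map`. The function name `sortLocations` is illustrative, and the input values are assumed to be distinct.

```cpp
#include <algorithm>
#include <unordered_map>
#include <vector>

// Replaces every element by its index in the sorted order of the array.
// Assumes distinct values.
std::vector<int> sortLocations(const std::vector<int>& a) {
    std::vector<int> tmp(a);
    std::sort(tmp.begin(), tmp.end());               // O(n lg n)

    std::unordered_map<int, int> location;           // value -> sorted index
    for (int i = 0; i < static_cast<int>(tmp.size()); ++i)
        location[tmp[i]] = i;

    std::vector<int> result;
    result.reserve(a.size());
    for (int v : a)
        result.push_back(location[v]);               // O(1) average per lookup
    return result;
}
```

For [3 4 106 2 5] the sorted copy is [2 3 4 5 106], so the result is [1 2 4 0 3].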

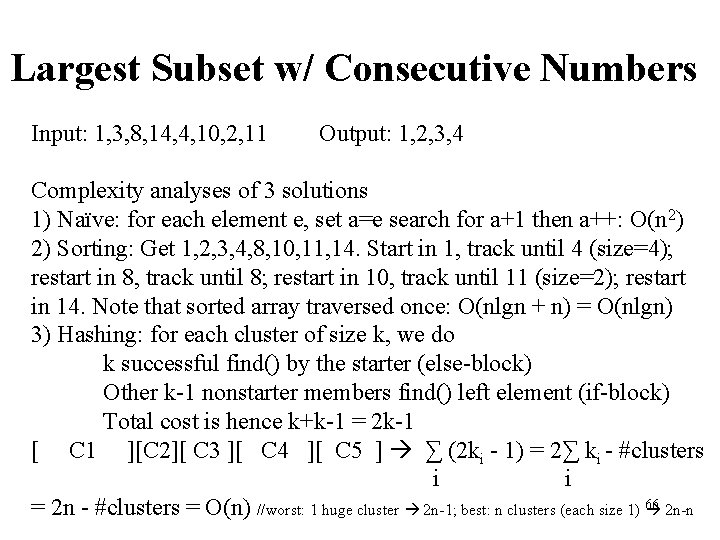

Largest Subset w/ Consecutive Numbers
Input: 1, 3, 8, 14, 4, 10, 2, 11
Output: 1, 2, 3, 4
• Insert all numbers into a hash table (O(n)).
• Traverse the array again with this strategy:

    For next number x
        If x-1 is in the hash table (find: O(1))
            A sequence does not start at x (because x is part of another, larger sequence)
        Else
            A sequence is starting at x
            Append x to the sequence, x++
            Repeat until x+1 is not in the hash table (find: O(1))
            If seq.size is larger than champion, update champion
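The strategy above can be sketched in C++ as follows. The function name `longestConsecutive` is illustrative; the O(n) argument assumes the input values are distinct.

```cpp
#include <unordered_set>
#include <vector>

// Returns the longest run of consecutive integers contained in values.
std::vector<int> longestConsecutive(const std::vector<int>& values) {
    std::unordered_set<int> table(values.begin(), values.end());
    int bestStart = 0, bestLen = 0;
    for (int x : values) {
        if (table.count(x - 1))
            continue;                      // x belongs to a run that starts earlier
        int len = 1;                       // a run starts at x
        while (table.count(x + len))       // extend while the next number exists
            ++len;
        if (len > bestLen) {               // update the champion
            bestLen = len;
            bestStart = x;
        }
    }
    std::vector<int> run;
    for (int i = 0; i < bestLen; ++i)
        run.push_back(bestStart + i);
    return run;
}
```

For the input 1, 3, 8, 14, 4, 10, 2, 11 the runs start at 1, 8, 14 and 10, and the champion is 1, 2, 3, 4.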

Largest Subset w/ Consecutive Numbers
Input: 1, 3, 8, 14, 4, 10, 2, 11
Output: 1, 2, 3, 4
Complexity analyses of 3 solutions:
1) Naïve: for each element e, set a = e, search for a+1, then a++: $O(n^2)$.
2) Sorting: get 1, 2, 3, 4, 8, 10, 11, 14. Start at 1, track until 4 (size = 4); restart at 8, track until 8; restart at 10, track until 11 (size = 2); restart at 14. Note that the sorted array is traversed once: O(n lg n + n) = O(n lg n).
3) Hashing: for each cluster of size k,
  – the starter does k successful find() calls (else-block);
  – the other k-1 non-starter members each do a find() for their left element (if-block);
  – the total cost is hence k + k - 1 = 2k - 1.
  With clusters $[C_1][C_2][C_3][C_4][C_5]$ of sizes $k_i$:
  $\sum_i (2k_i - 1) = 2\sum_i k_i - \#\text{clusters} = 2n - \#\text{clusters} = O(n)$
  – Worst case: 1 huge cluster, 2n - 1.
  – Best case: n clusters (each of size 1), 2n - n = n.

Summary
• Hash tables can be used to implement the insert and find operations in constant average time.
  – The cost depends on the load factor, not on the number of items in the table.
• It is important to have a prime TableSize and a correct choice of load factor and hash function.
• For separate chaining, the load factor should be close to 1.
• For open addressing, the load factor should not exceed 0.5 unless this is completely unavoidable.
  – Rehashing can be implemented to grow (or shrink) the table.

- Slides: 64