
- Slides: 37

Hardware Transactional Memory (Herlihy, Moss, 1993)

Some slides are taken from a presentation by Royi Maimon & Merav Havuv, prepared for a seminar given by Prof. Yehuda Afek.

Outline

- Hardware Transactional Memory (HTM)
  - Transactions
  - Caches and coherence protocols
  - General Implementation
  - Simulation

What is a transaction?

- A transaction is a sequence of memory loads and stores executed by a single process that either commits or aborts
- If a transaction commits, all the loads and stores appear to have executed atomically
- If a transaction aborts, none of its stores take effect
- Transaction operations aren't visible until they commit (if they do)

Transactional Memory

- A new multiprocessor architecture
- The goal: implementing non-blocking synchronization that is
  - efficient
  - easy to use compared with conventional techniques based on mutual exclusion
- Implemented by straightforward extensions to multiprocessor cache-coherence protocols and/or by software mechanisms


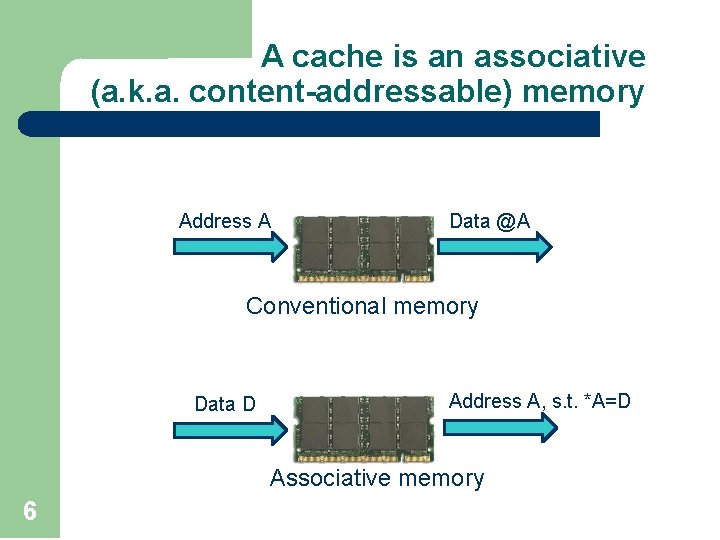

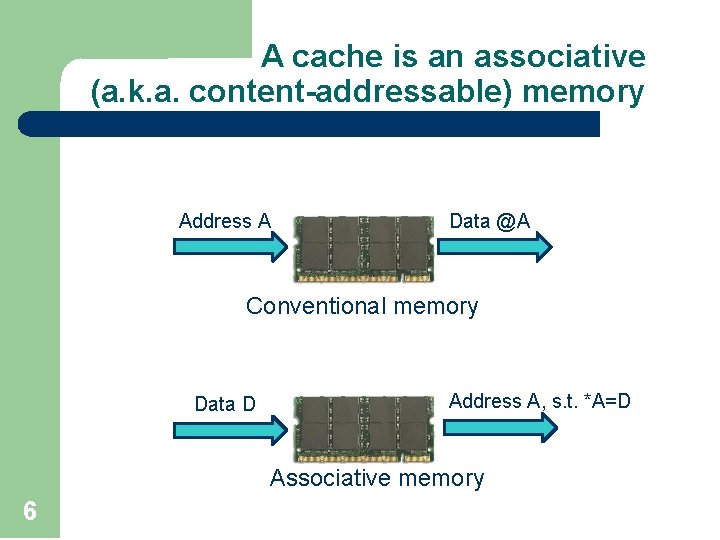

A cache is an associative (a.k.a. content-addressable) memory

- Conventional memory: given an address A, returns the data stored at A
- Associative memory: given data D, returns an address A such that *A = D

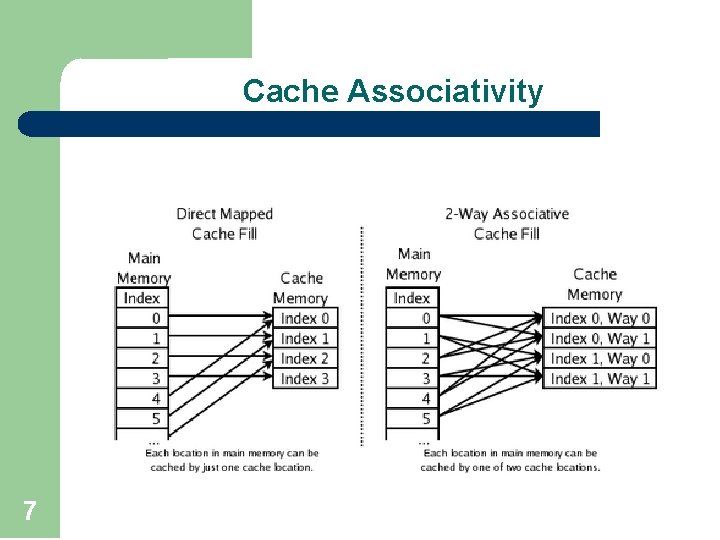

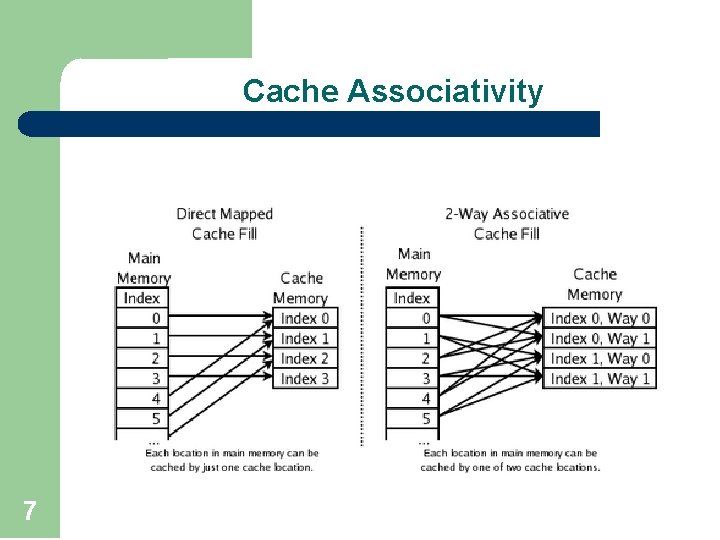

Cache Associativity

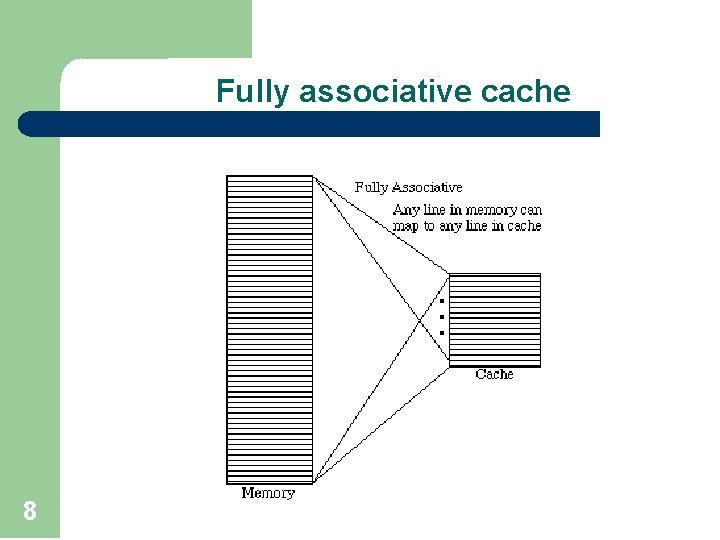

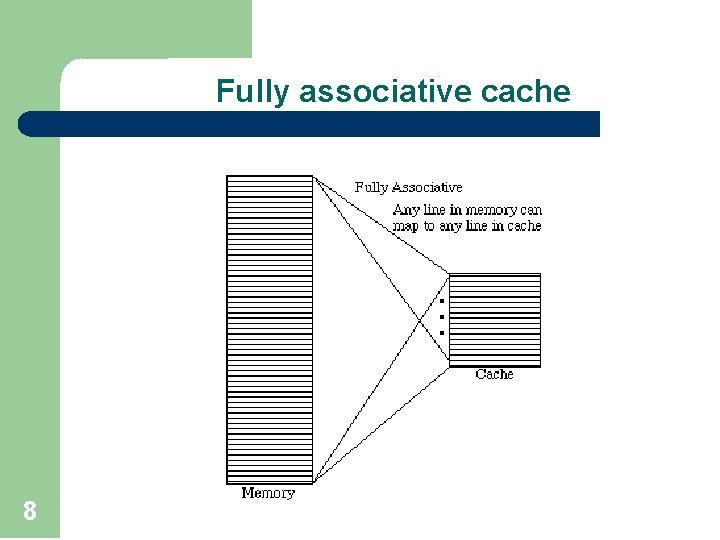

Fully associative cache

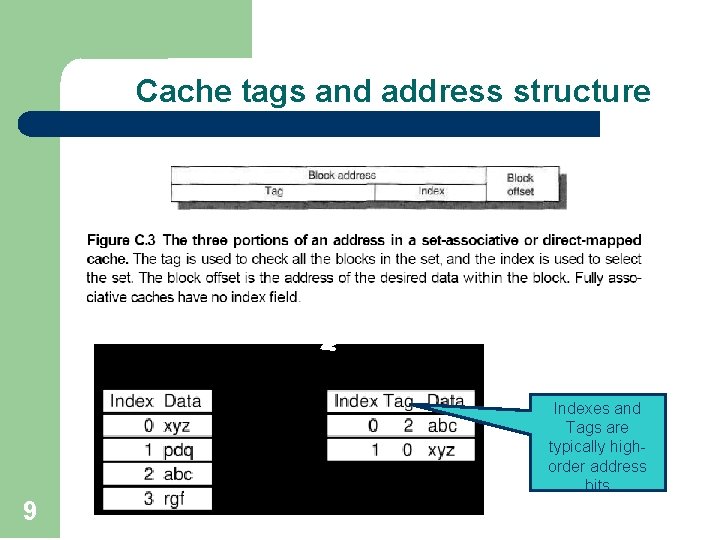

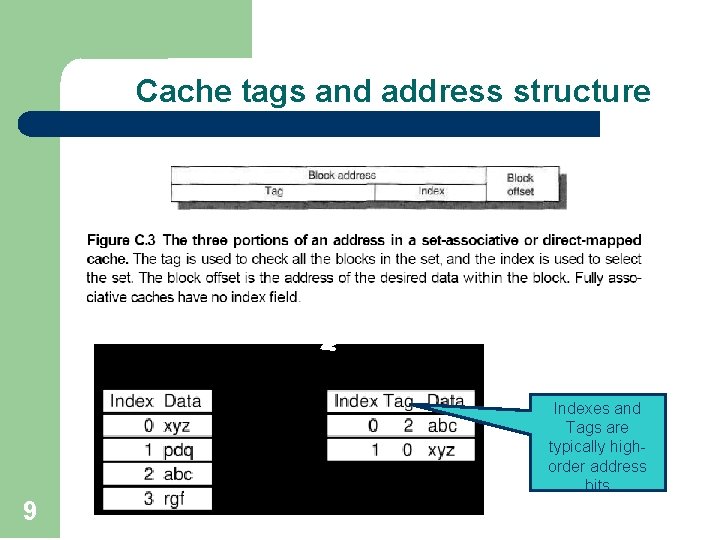

Cache tags and address structure

Figure labels: Main Memory, Cache.

Indexes and tags are typically high-order address bits.
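To make the address structure concrete, here is a minimal sketch that splits a 32-bit address into tag, set index, and block offset. The field widths (6 offset bits, 7 index bits) and the names `cache_addr`, `split_address`, and `join_address` are assumptions chosen for illustration; they do not come from the slides, and real caches use other geometries.

```c
#include <assert.h>
#include <stdint.h>

/* Assumed geometry: 64-byte lines (6 offset bits), 128 sets (7 index bits). */
enum { OFFSET_BITS = 6, INDEX_BITS = 7 };

typedef struct {
    uint32_t tag;    /* highest-order bits, compared against the stored tag */
    uint32_t index;  /* selects the cache set */
    uint32_t offset; /* byte position inside the cache line */
} cache_addr;

/* Split an address into its three fields. */
cache_addr split_address(uint32_t addr) {
    cache_addr a;
    a.offset = addr & ((1u << OFFSET_BITS) - 1);
    a.index  = (addr >> OFFSET_BITS) & ((1u << INDEX_BITS) - 1);
    a.tag    = addr >> (OFFSET_BITS + INDEX_BITS);
    return a;
}

/* Inverse of split_address: rebuild the original address. */
uint32_t join_address(cache_addr a) {
    return (a.tag << (OFFSET_BITS + INDEX_BITS)) | (a.index << OFFSET_BITS) | a.offset;
}
```

In a fully associative cache there is no index field at all: every line is a candidate, so the whole address above the offset serves as the tag.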

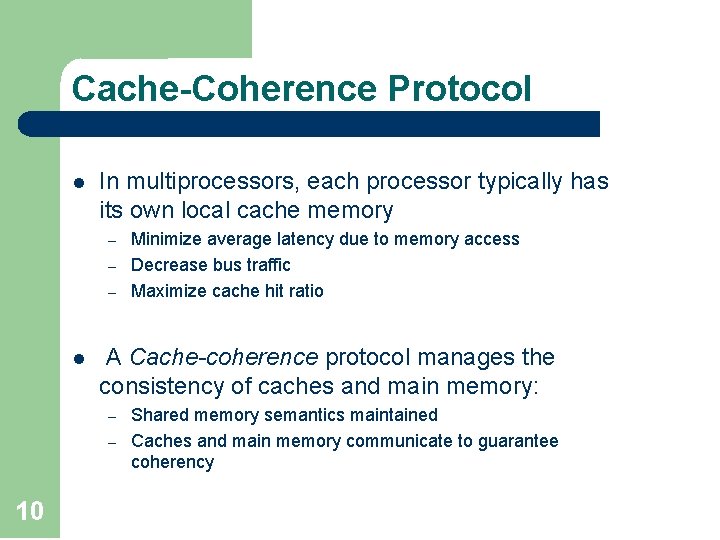

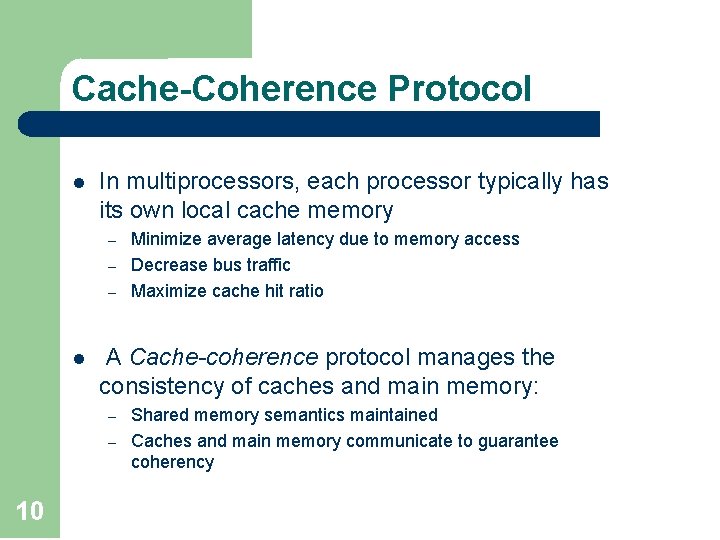

Cache-Coherence Protocol

- In multiprocessors, each processor typically has its own local cache memory
  - Minimize average latency due to memory access
  - Decrease bus traffic
  - Maximize cache hit ratio
- A cache-coherence protocol manages the consistency of caches and main memory:
  - Shared memory semantics maintained
  - Caches and main memory communicate to guarantee coherency

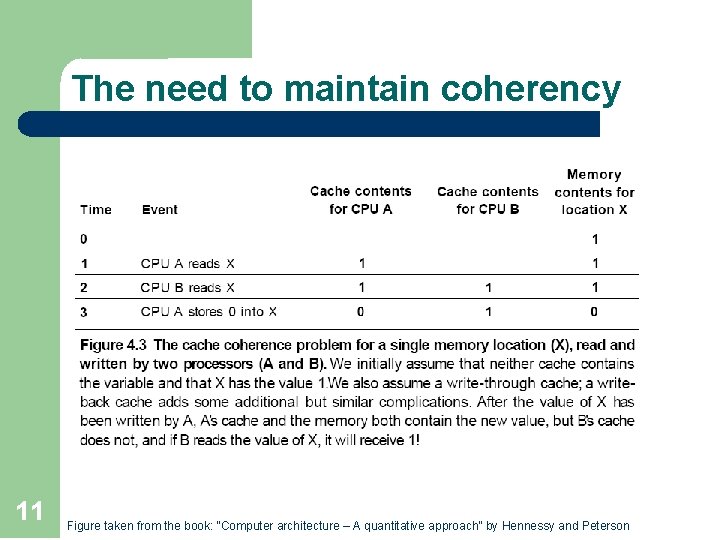

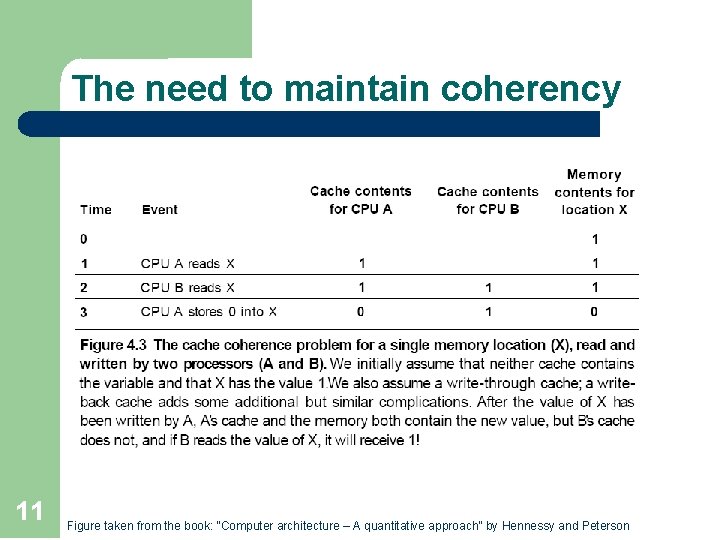

The need to maintain coherency

Figure taken from the book "Computer Architecture: A Quantitative Approach" by Hennessy and Patterson.

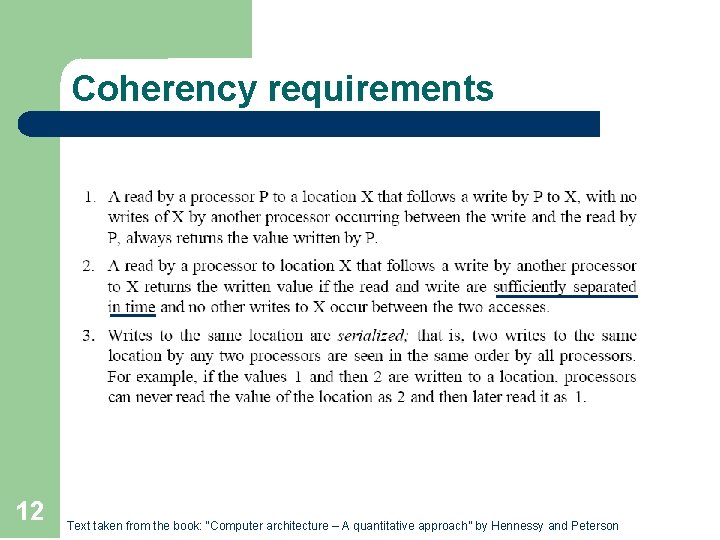

Coherency requirements

Text taken from the book "Computer Architecture: A Quantitative Approach" by Hennessy and Patterson.

Snoopy Cache

- All caches monitor (snoop) the activity on a global bus/interconnect to determine whether they have a copy of the block of data that is requested on the bus.

Coherence protocol types

- Write-through: the information is written to both the cache block and the block in the lower-level memory
- Write-back: the information is written only to the cache block. The modified cache block is written to main memory only when it is replaced
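The difference between the two policies can be sketched with a toy one-block cache that counts writes reaching lower-level memory. Everything here (`toy_cache`, `toy_write`, `toy_evict`, the single-block model) is a hypothetical simplification for illustration, not part of the original slides.

```c
#include <assert.h>
#include <stdbool.h>

typedef enum { WRITE_THROUGH, WRITE_BACK } write_policy;

/* Toy model: one cache block backed by one memory word. */
typedef struct {
    write_policy policy;
    unsigned cached;     /* value held in the cache block */
    unsigned memory;     /* value held in lower-level memory */
    bool dirty;          /* write-back only: block differs from memory */
    unsigned mem_writes; /* number of writes that reached memory */
} toy_cache;

/* Processor store to the cached block. */
void toy_write(toy_cache *c, unsigned value) {
    c->cached = value;
    if (c->policy == WRITE_THROUGH) {
        c->memory = value;   /* every store also goes to memory */
        c->mem_writes++;
    } else {
        c->dirty = true;     /* defer the memory update until eviction */
    }
}

/* The block is replaced: write-back flushes a dirty block once. */
void toy_evict(toy_cache *c) {
    if (c->policy == WRITE_BACK && c->dirty) {
        c->memory = c->cached;
        c->mem_writes++;
        c->dirty = false;
    }
}
```

With three stores to the same block, the write-through model performs three memory writes, while the write-back model performs a single one at eviction time.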

3-state Coherence protocol

- Invalid: cache line/block does not contain legal information
- Shared: cache line/block contains information that may be shared by other caches
- Modified/exclusive: cache line/block was modified while in cache and is exclusively owned by the current cache
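The three states can be read as a small state machine per cache line. The sketch below follows the usual textbook write-back invalidate scheme; the event names and the functions `next_state` and `needs_write_back` are assumptions made for illustration, and bus events are taken to refer to the same block as the line.

```c
#include <assert.h>
#include <stdbool.h>

typedef enum { LINE_INVALID, LINE_SHARED, LINE_MODIFIED } line_state;

/* Events seen by one cache line: local processor requests and
   snooped bus requests from other processors for the same block. */
typedef enum { CPU_READ, CPU_WRITE, BUS_READ_MISS, BUS_WRITE_MISS } line_event;

/* Next state of the line after the event. */
line_state next_state(line_state s, line_event ev) {
    switch (ev) {
    case CPU_READ:       /* a read miss fetches a shared copy; hits keep the state */
        return s == LINE_INVALID ? LINE_SHARED : s;
    case CPU_WRITE:      /* writing requires exclusive ownership */
        return LINE_MODIFIED;
    case BUS_READ_MISS:  /* another cache reads: an owner downgrades to shared */
        return s == LINE_MODIFIED ? LINE_SHARED : s;
    case BUS_WRITE_MISS: /* another cache writes: the local copy is invalidated */
        return LINE_INVALID;
    }
    return s;
}

/* A modified line must supply its data before giving up ownership. */
bool needs_write_back(line_state s, line_event ev) {
    return s == LINE_MODIFIED && (ev == BUS_READ_MISS || ev == BUS_WRITE_MISS);
}
```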

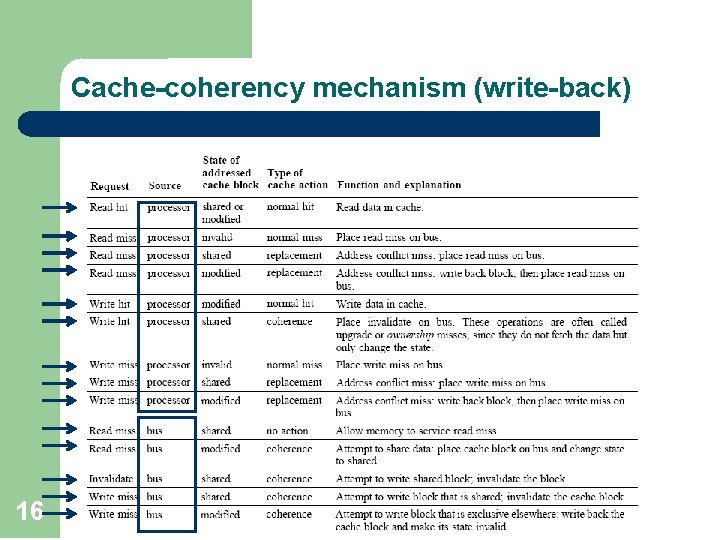

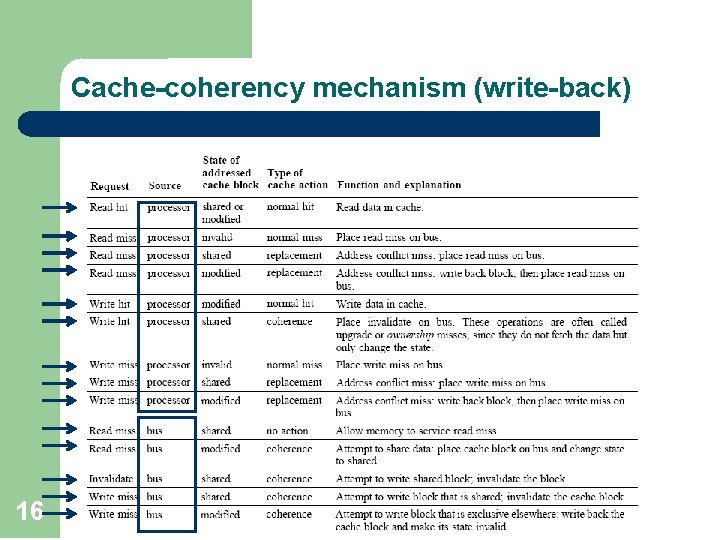

Cache-coherency mechanism (write-back)

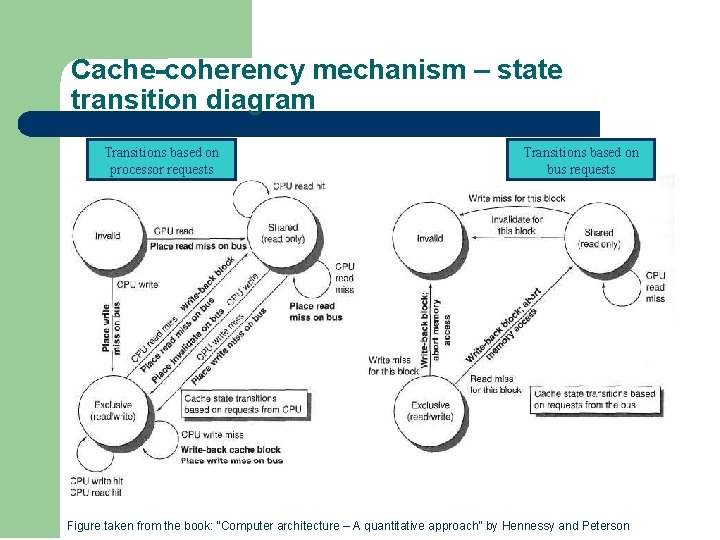

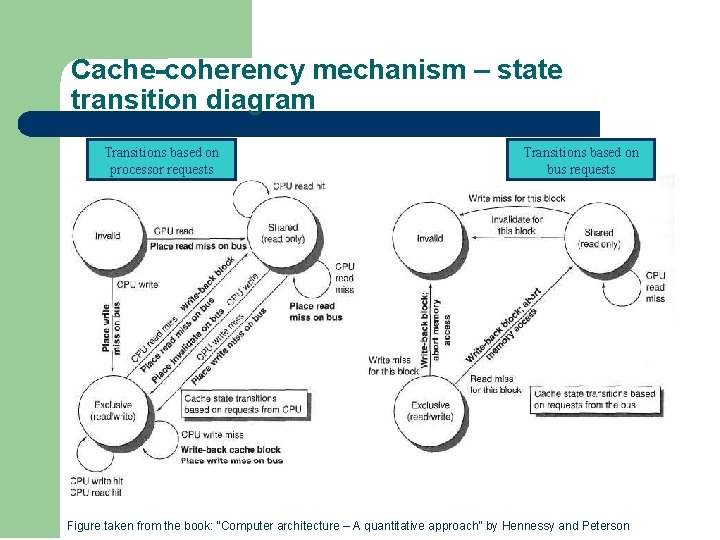

Cache-coherency mechanism: state transition diagram

- Transitions based on processor requests
- Transitions based on bus requests

Figure taken from the book "Computer Architecture: A Quantitative Approach" by Hennessy and Patterson.

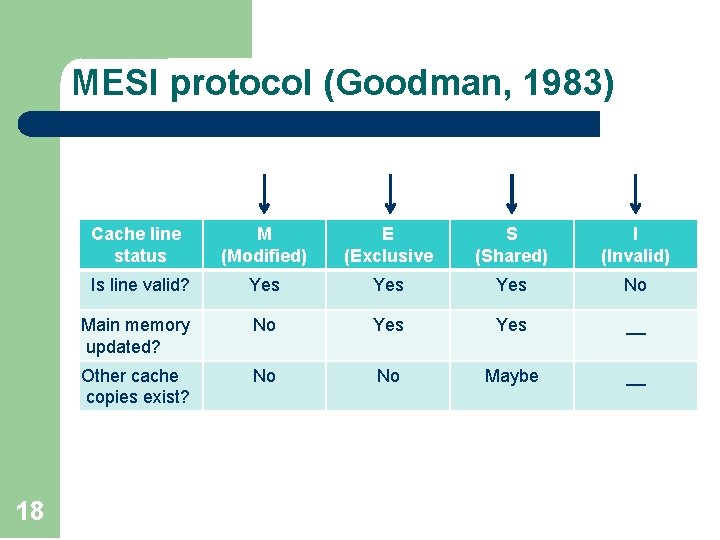

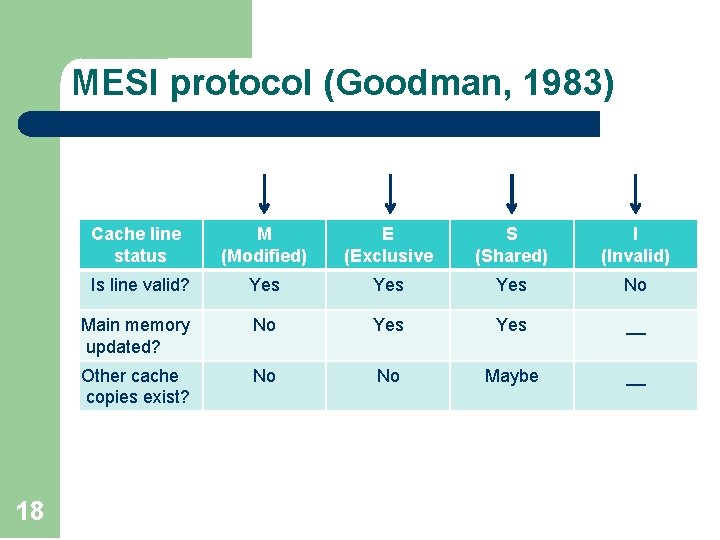

MESI protocol (Goodman, 1983)

| Cache line status | M (Modified) | E (Exclusive) | S (Shared) | I (Invalid) |
|---|---|---|---|---|
| Is line valid? | Yes | Yes | Yes | No |
| Main memory updated? | No | Yes | Yes | __ |
| Other cache copies exist? | No | No | Maybe | __ |


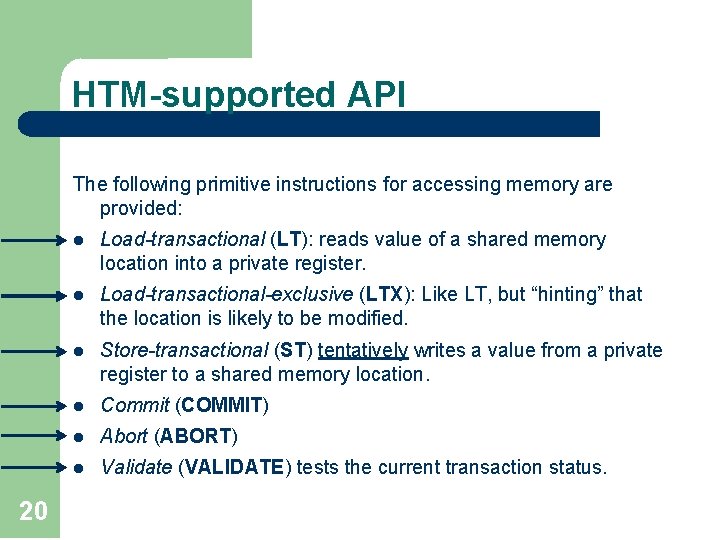

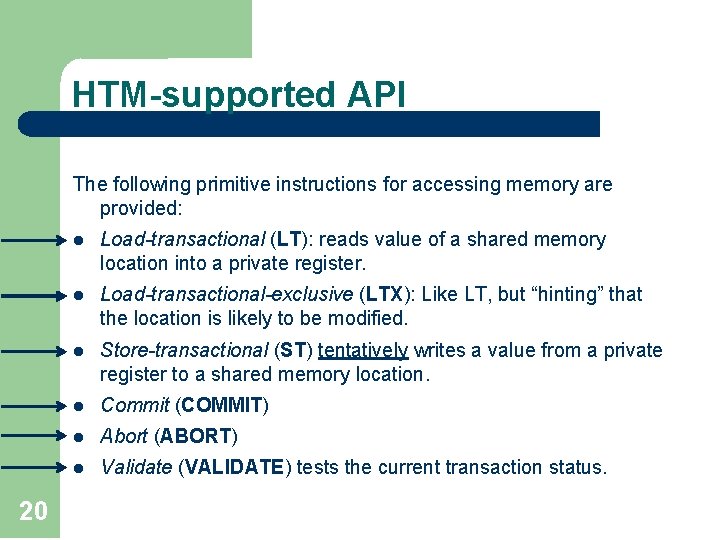

HTM-supported API

The following primitive instructions for accessing memory are provided:

- Load-transactional (LT): reads the value of a shared memory location into a private register.
- Load-transactional-exclusive (LTX): like LT, but "hinting" that the location is likely to be modified.
- Store-transactional (ST): tentatively writes a value from a private register to a shared memory location.
- Commit (COMMIT)
- Abort (ABORT)
- Validate (VALIDATE): tests the current transaction status.

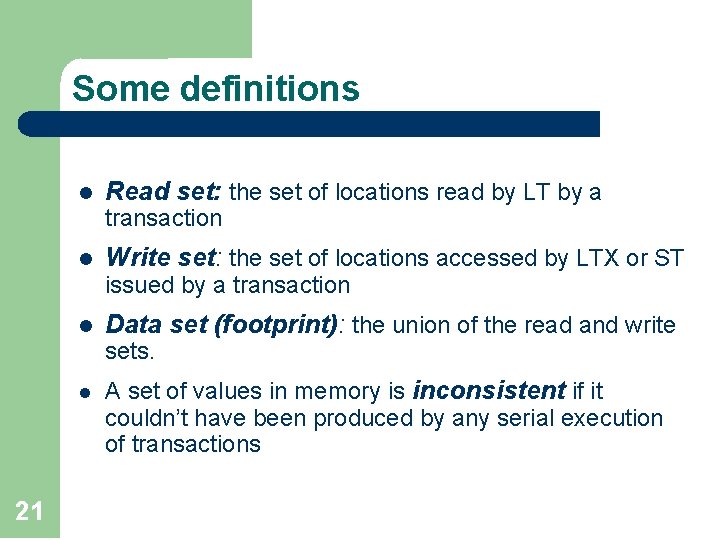

Some definitions

- Read set: the set of locations a transaction reads with LT
- Write set: the set of locations a transaction accesses with LTX or ST
- Data set (footprint): the union of the read and write sets.
- A set of values in memory is inconsistent if it couldn't have been produced by any serial execution of transactions

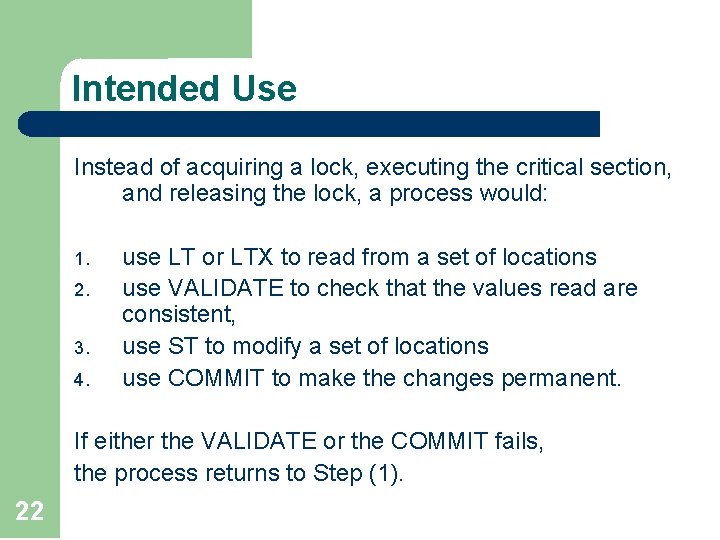

Intended Use

Instead of acquiring a lock, executing the critical section, and releasing the lock, a process would:

1. use LT or LTX to read from a set of locations,
2. use VALIDATE to check that the values read are consistent,
3. use ST to modify a set of locations,
4. use COMMIT to make the changes permanent.

If either the VALIDATE or the COMMIT fails, the process returns to Step (1).

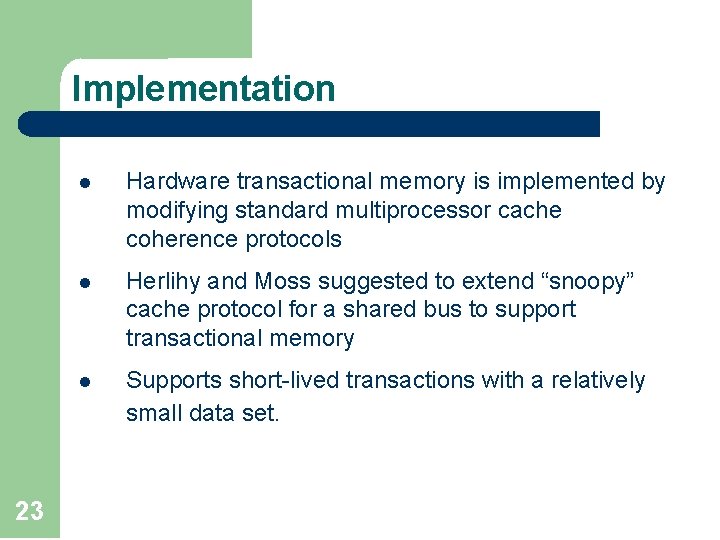

Implementation

- Hardware transactional memory is implemented by modifying standard multiprocessor cache-coherence protocols
- Herlihy and Moss suggested extending the "snoopy" cache protocol for a shared bus to support transactional memory
- Supports short-lived transactions with a relatively small data set.

The basic idea

- Any protocol capable of detecting register access conflicts can also detect transaction conflicts at no extra cost
- Once a transaction conflict is detected, it can be resolved in a variety of ways

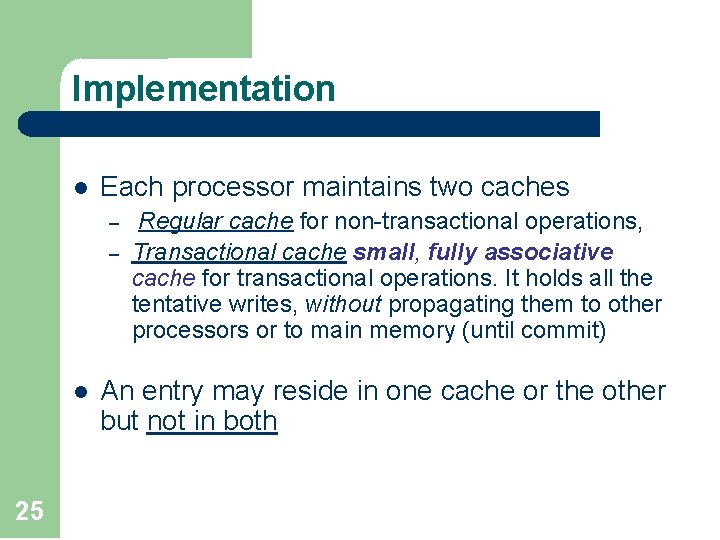

Implementation

- Each processor maintains two caches
  - Regular cache: for non-transactional operations
  - Transactional cache: a small, fully associative cache for transactional operations. It holds all the tentative writes, without propagating them to other processors or to main memory (until commit)
- An entry may reside in one cache or the other, but not in both

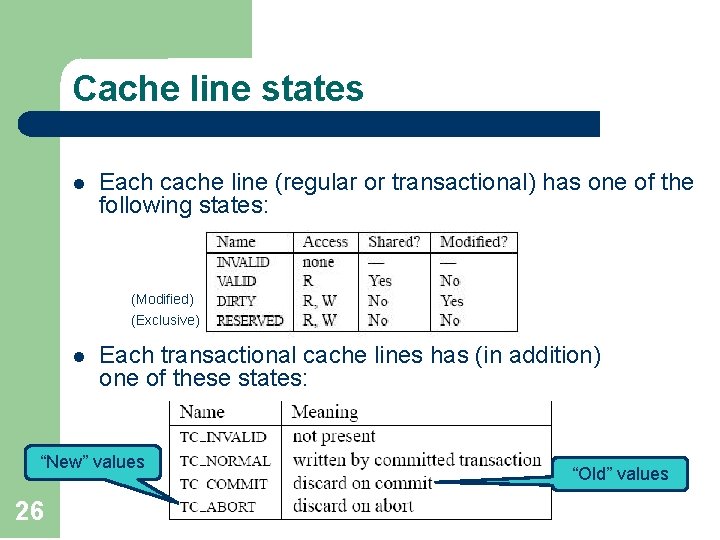

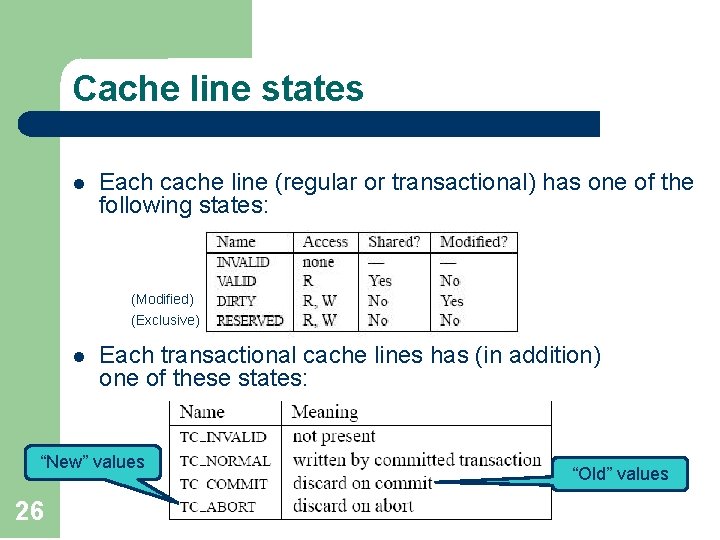

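Cache line states

- Each cache line (regular or transactional) has one of the standard coherence states; the labels that survive from the slide's table are (Modified) and (Exclusive).
- Each transactional cache line has, in addition, one of the transactional tags used on the following slides (TC_INVALID, TC_NORMAL, TC_COMMIT, TC_ABORT). TC_ABORT entries hold the "new" (tentative) values and TC_COMMIT entries hold the "old" values.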

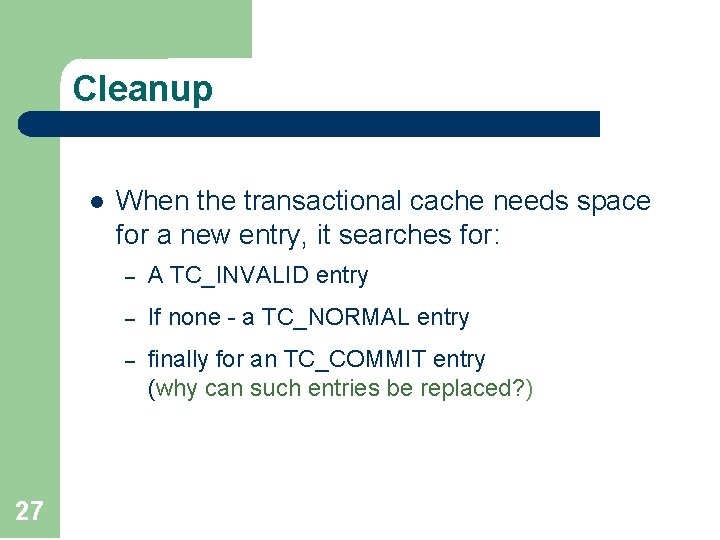

Cleanup

- When the transactional cache needs space for a new entry, it searches for:
  - a TC_INVALID entry
  - if none, a TC_NORMAL entry
  - finally, a TC_COMMIT entry (why can such entries be replaced?)
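The search order above can be sketched as a simple priority scan over the entry tags. The function name `tc_find_victim` and the array-of-tags representation are assumptions made for illustration; the slides do not say what happens when only TC_ABORT entries remain, so the sketch just reports that no victim was found.

```c
#include <assert.h>
#include <stddef.h>

typedef enum { TC_INVALID, TC_NORMAL, TC_COMMIT, TC_ABORT } tc_tag;

/* Return the index of the entry to replace, trying TC_INVALID first,
   then TC_NORMAL, then TC_COMMIT. Returns -1 if no such entry exists
   (only TC_ABORT entries are left, or the cache has no entries). */
int tc_find_victim(const tc_tag *tags, size_t n) {
    static const tc_tag preference[] = { TC_INVALID, TC_NORMAL, TC_COMMIT };
    for (size_t p = 0; p < sizeof preference / sizeof preference[0]; p++) {
        for (size_t i = 0; i < n; i++) {
            if (tags[i] == preference[p]) {
                return (int)i;
            }
        }
    }
    return -1;
}
```

Hardware would perform this lookup associatively rather than with nested loops; the loops only express the priority order.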

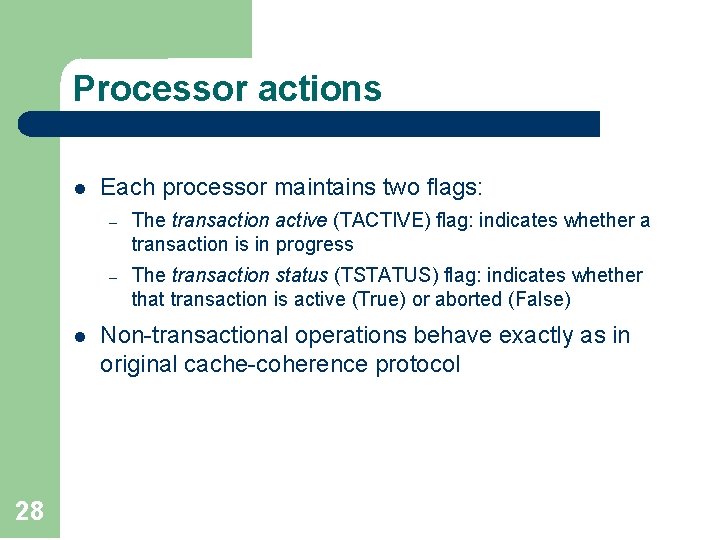

Processor actions

- Each processor maintains two flags:
  - The transaction active (TACTIVE) flag: indicates whether a transaction is in progress
  - The transaction status (TSTATUS) flag: indicates whether that transaction is active (True) or aborted (False)
- Non-transactional operations behave exactly as in the original cache-coherence protocol

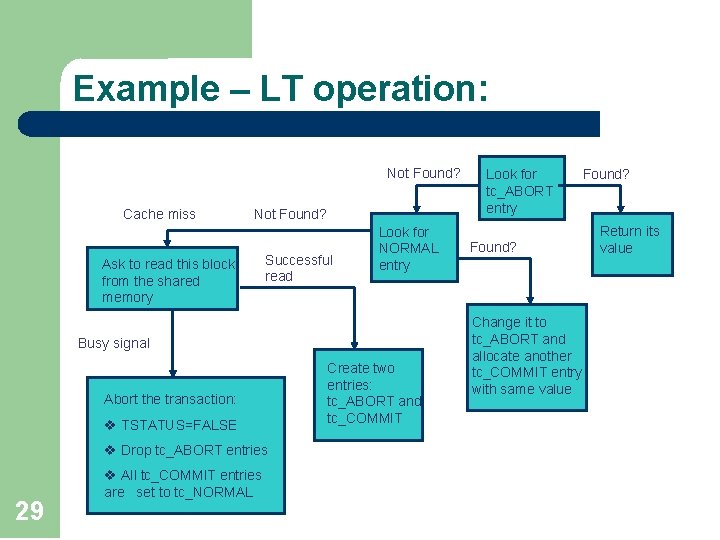

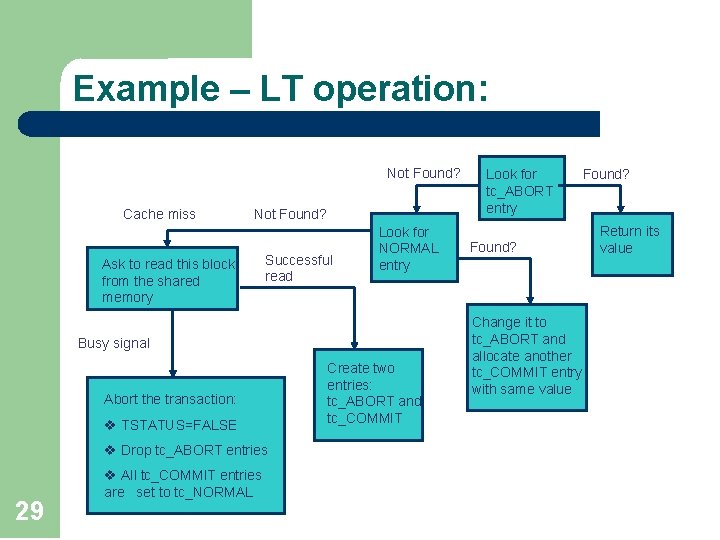

Example: LT operation

1. Look for a tc_ABORT entry. Found? Return its value.
2. Not found? Look for a NORMAL entry. Found? Change it to tc_ABORT and allocate another tc_COMMIT entry with the same value.
3. Not found? Cache miss: ask to read this block from the shared memory.
   - Successful read: create two entries, tc_ABORT and tc_COMMIT.
   - Busy signal: abort the transaction:
     - TSTATUS=FALSE
     - Drop tc_ABORT entries
     - All tc_COMMIT entries are set to tc_NORMAL
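The LT flow can be approximated in software with a toy transactional cache. This is only a sketch under several assumptions: the cache size, the names `tcache`, `tc_lt`, `tc_find`, `tc_alloc`, `tc_abort_all`, the `bus_read_fn` callback standing in for the shared bus, and the choice to abort when no free entry exists are all hypothetical, not the hardware design described in the slides.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define TC_ENTRIES 8 /* assumed transactional cache size */

typedef enum { TC_INVALID, TC_NORMAL, TC_COMMIT, TC_ABORT } tc_tag;
typedef struct { tc_tag tag; unsigned addr; unsigned value; } tc_entry;
typedef struct { tc_entry e[TC_ENTRIES]; bool tstatus; } tcache;

/* Hypothetical bus read: returns false when the owner answers BUSY. */
typedef bool (*bus_read_fn)(unsigned addr, unsigned *value);

/* Stand-in buses for experiments: one always succeeds, one is always busy. */
bool bus_ok(unsigned addr, unsigned *value) { *value = addr * 2; return true; }
bool bus_busy(unsigned addr, unsigned *value) { (void)addr; (void)value; return false; }

/* Find the entry for addr carrying the given tag, or NULL. */
tc_entry *tc_find(tcache *c, unsigned addr, tc_tag tag) {
    for (size_t i = 0; i < TC_ENTRIES; i++)
        if (c->e[i].tag == tag && c->e[i].addr == addr) return &c->e[i];
    return NULL;
}

/* Fill a free (TC_INVALID) slot; NULL if the cache is full. */
tc_entry *tc_alloc(tcache *c, unsigned addr, unsigned value, tc_tag tag) {
    for (size_t i = 0; i < TC_ENTRIES; i++)
        if (c->e[i].tag == TC_INVALID) {
            c->e[i] = (tc_entry){ tag, addr, value };
            return &c->e[i];
        }
    return NULL;
}

/* Abort: clear TSTATUS, drop tentative values, keep the old ones. */
void tc_abort_all(tcache *c) {
    c->tstatus = false;
    for (size_t i = 0; i < TC_ENTRIES; i++) {
        if (c->e[i].tag == TC_ABORT) c->e[i].tag = TC_INVALID;
        else if (c->e[i].tag == TC_COMMIT) c->e[i].tag = TC_NORMAL;
    }
}

/* LT: returns true and stores the value in *out, or false if the
   transaction was aborted along the way. */
bool tc_lt(tcache *c, unsigned addr, bus_read_fn bus_read, unsigned *out) {
    tc_entry *hit = tc_find(c, addr, TC_ABORT);
    if (hit) { *out = hit->value; return true; }

    hit = tc_find(c, addr, TC_NORMAL);
    if (hit) {
        /* Keep an "old" copy, then retag the hit as the tentative copy. */
        if (!tc_alloc(c, addr, hit->value, TC_COMMIT)) { tc_abort_all(c); return false; }
        hit->tag = TC_ABORT;
        *out = hit->value;
        return true;
    }

    unsigned v;
    if (!bus_read(addr, &v)) { tc_abort_all(c); return false; } /* BUSY */
    if (!tc_alloc(c, addr, v, TC_COMMIT) || !tc_alloc(c, addr, v, TC_ABORT)) {
        tc_abort_all(c); /* assumption: no free entry aborts the transaction */
        return false;
    }
    *out = v;
    return true;
}
```

A caller would zero-initialize a `tcache` (all entries TC_INVALID), set `tstatus` to true at transaction start, and pass `bus_ok` or `bus_busy` to observe the hit, miss, and abort paths.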

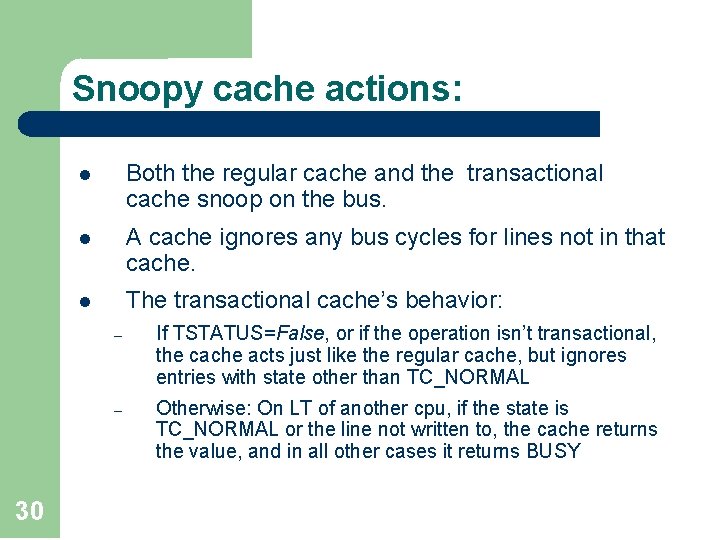

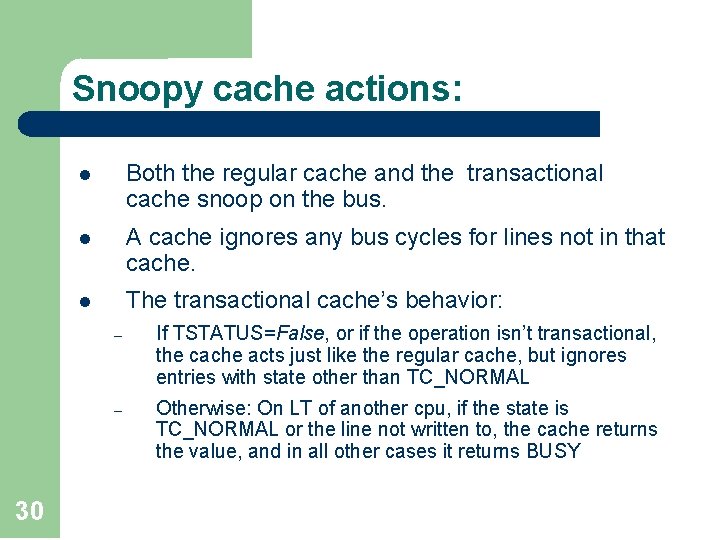

Snoopy cache actions

- Both the regular cache and the transactional cache snoop on the bus.
- A cache ignores any bus cycles for lines not in that cache.
- The transactional cache's behavior:
  - If TSTATUS=False, or if the operation isn't transactional, the cache acts just like the regular cache, but ignores entries with a state other than TC_NORMAL
  - Otherwise: on an LT by another CPU, if the state is TC_NORMAL or the line has not been written to, the cache returns the value; in all other cases it returns BUSY

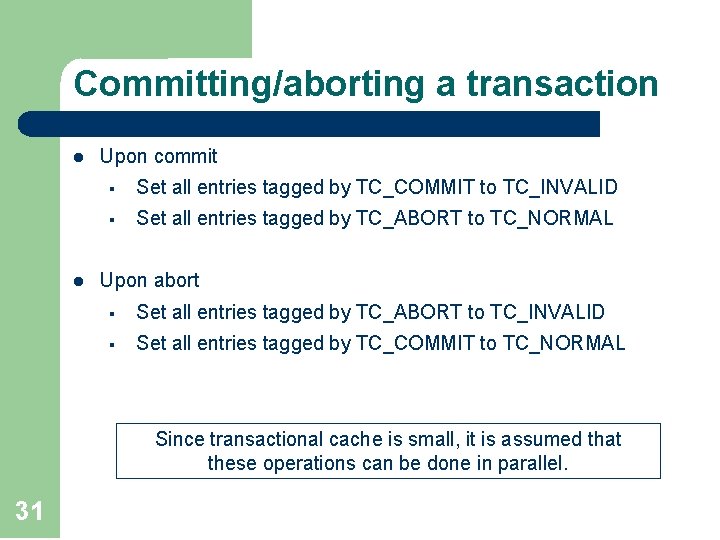

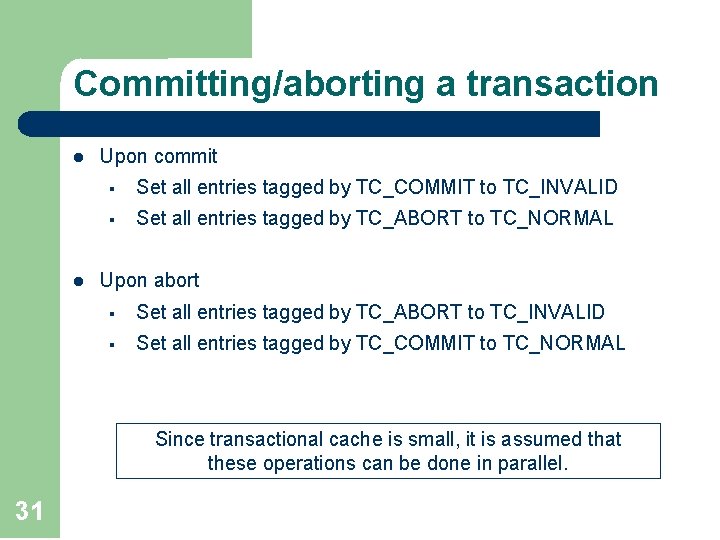

Committing/aborting a transaction

- Upon commit
  - Set all entries tagged by TC_COMMIT to TC_INVALID
  - Set all entries tagged by TC_ABORT to TC_NORMAL
- Upon abort
  - Set all entries tagged by TC_ABORT to TC_INVALID
  - Set all entries tagged by TC_COMMIT to TC_NORMAL

Since the transactional cache is small, it is assumed that these operations can be done in parallel.
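The two tag updates are mirror images of each other, which a short sketch makes visible. The functions `tc_commit` and `tc_abort` and the array-of-tags representation are assumptions for illustration; hardware is assumed to update all entries in parallel, whereas this sketch uses a sequential loop.

```c
#include <assert.h>
#include <stddef.h>

typedef enum { TC_INVALID, TC_NORMAL, TC_COMMIT, TC_ABORT } tc_tag;

/* Commit: discard the old values, keep the tentative ones. */
void tc_commit(tc_tag *tags, size_t n) {
    for (size_t i = 0; i < n; i++) {
        if (tags[i] == TC_COMMIT) tags[i] = TC_INVALID;
        else if (tags[i] == TC_ABORT) tags[i] = TC_NORMAL;
    }
}

/* Abort: discard the tentative values, keep the old ones. */
void tc_abort(tc_tag *tags, size_t n) {
    for (size_t i = 0; i < n; i++) {
        if (tags[i] == TC_ABORT) tags[i] = TC_INVALID;
        else if (tags[i] == TC_COMMIT) tags[i] = TC_NORMAL;
    }
}
```

Because each location touched by a transaction has one entry of each kind, either outcome leaves exactly one TC_NORMAL copy per location.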


Simulation

- We'll see example code for the producer/consumer algorithm using the transactional memory architecture.
- The simulation runs on both cache-coherence protocols: snoopy and directory cache.
- The simulation uses 32 processors.
- The simulation finishes when 2^16 operations have completed.

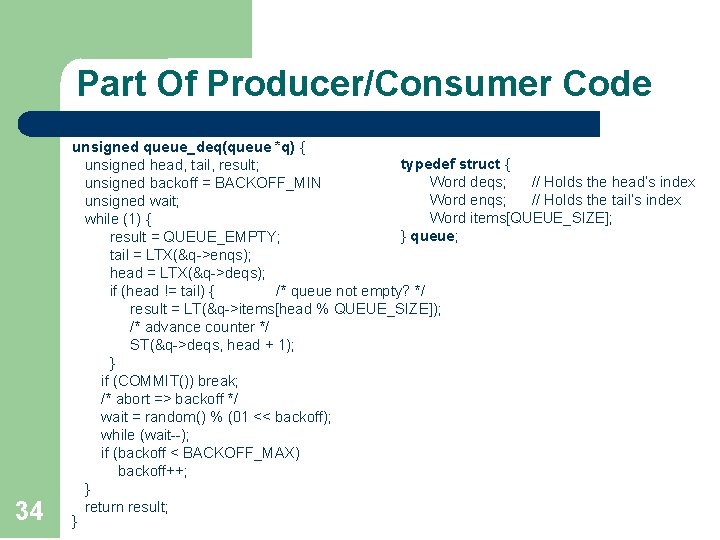

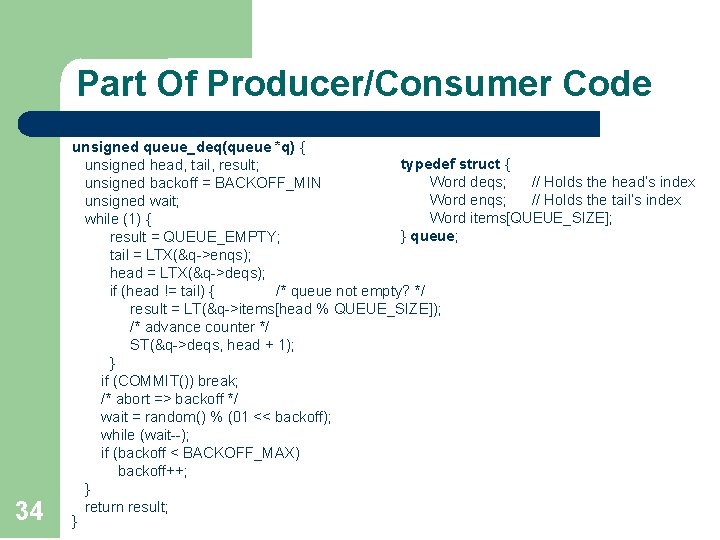

Part Of Producer/Consumer Code

    typedef struct {
        Word deqs;              // Holds the head's index
        Word enqs;              // Holds the tail's index
        Word items[QUEUE_SIZE];
    } queue;

    unsigned queue_deq(queue *q) {
        unsigned head, tail, result;
        unsigned backoff = BACKOFF_MIN;
        unsigned wait;
        while (1) {
            result = QUEUE_EMPTY;
            tail = LTX(&q->enqs);
            head = LTX(&q->deqs);
            if (head != tail) { /* queue not empty? */
                result = LT(&q->items[head % QUEUE_SIZE]);
                /* advance counter */
                ST(&q->deqs, head + 1);
            }
            if (COMMIT()) break;
            /* abort => backoff */
            wait = random() % (01 << backoff);
            while (wait--);
            if (backoff < BACKOFF_MAX) backoff++;
        }
        return result;
    }

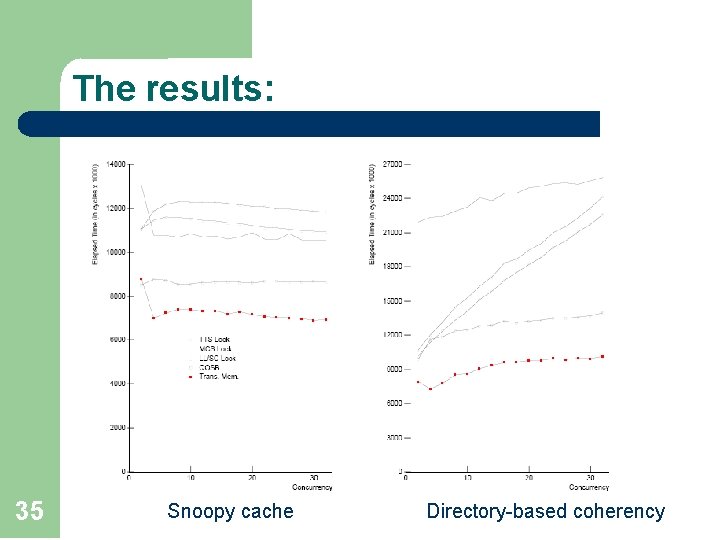

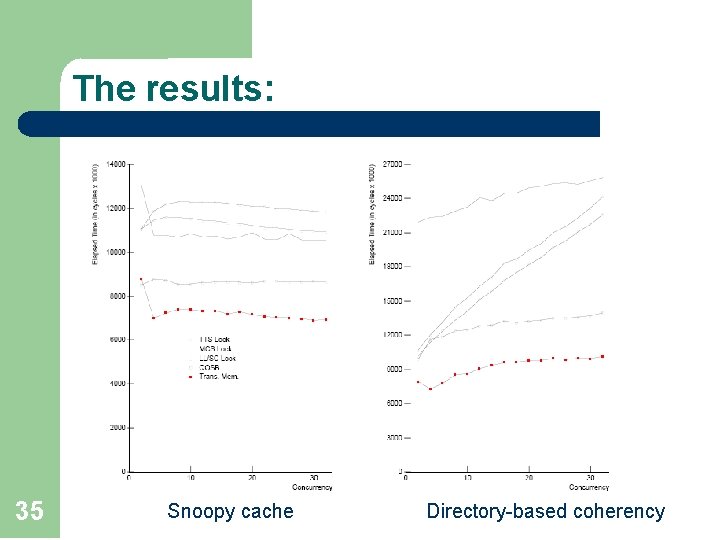

The results

Figure panels: Snoopy cache; Directory-based coherency.

Key Limitations

- Transaction size is limited by cache size
- Transaction length is effectively limited by the scheduling quantum
- Process migration is problematic

MSA: A few sample research directions

- Theoretic
  - Are there counters/stacks/queues with sub-linear write-contention?
  - What is the space complexity of obstruction-free read/write consensus?
  - What is the step-complexity of a 1-time read/write counter?
  - ...
- (More) practical
  - The design of efficient lock-free/blocking concurrent objects
  - Defining more realistic metrics for blocking synchronization, and designing algorithms that are efficient w.r.t. these metrics
  - Improve the usability of transactional memory
  - ...