Hadoop & Map Reduce
Presentation by Yoni Nesher, NonSQL database Techforum

Hadoop & Map Reduce: Forum agenda
• Big data problem domain
• Hadoop ecosystem
• Hadoop Distributed File System (HDFS)
• Diving into MapReduce
• MapReduce case studies
• MapReduce vs. parallel DBMS systems: comparison and analysis

Hadoop & Map Reduce: Main topics
• HDFS (Hadoop Distributed File System): manages storage across a network of machines, is designed for storing very large files, and is optimized for streaming data access patterns
• MapReduce: a distributed data processing model and execution environment that runs on large clusters of commodity machines

Introduction
• It has been said that “More data usually beats better algorithms”
• For some problems, however sophisticated your algorithms are, they can often be beaten simply by having more data (and a less sophisticated algorithm)
• So the good news is that Big Data is here!
• The bad news is that we are struggling to store and analyze it.

Introduction
• A possible (and only) solution: read and write data in parallel
• This approach introduces new problems in the data I/O domain:
  • Hardware failure:
    • As soon as you start using many pieces of hardware, the chance that one will fail is fairly high.
    • A common way of avoiding data loss is through replication
  • Combining data:
    • Most analysis tasks need to be able to combine the data in some way; data read from one disk may need to be combined with data from any of the other disks
• The MapReduce programming model abstracts the problem away from disk reads and writes (coming up...)

Introduction
• What is Hadoop?
  • Hadoop provides a reliable shared storage and analysis system. The storage is provided by HDFS and the analysis by MapReduce.
  • There are other parts to Hadoop, but these capabilities are its kernel
• History
  • Hadoop was created by Doug Cutting, the creator of Apache Lucene, the widely used text search library.
  • Hadoop has its origins in Apache Nutch, an open source web search engine, itself a part of the Lucene project.
  • In January 2008, Hadoop was made its own top-level project at Apache
• Using Hadoop: Yahoo!, Last.fm, Facebook, the New York Times (more examples later on...)

The Hadoop ecosystem:
• Common
  • A set of components and interfaces for distributed file systems and general I/O (serialization, Java RPC, persistent data structures).
• Avro
  • A serialization system for efficient, cross-language RPC, and persistent data storage.
• MapReduce
  • A distributed data processing model and execution environment that runs on large clusters of commodity machines.
• HDFS
  • A distributed file system that runs on large clusters of commodity machines.
• Pig
  • A data flow language and execution environment for exploring very large datasets. Pig runs on HDFS and MapReduce clusters.
• Hive
  • A distributed data warehouse. Hive manages data stored in HDFS and provides a query language based on SQL (which the runtime engine translates to MapReduce jobs) for querying the data.
• HBase
  • A distributed, column-oriented database. HBase uses HDFS for its underlying storage, and supports both batch-style computations using MapReduce and point queries (random reads).
• ZooKeeper
  • A distributed, highly available coordination service. ZooKeeper provides primitives such as distributed locks that can be used for building distributed applications.
• Sqoop
  • A tool for efficiently moving data between relational databases and HDFS.

Hadoop HDFS: What is HDFS?
• A distributed filesystem: manages the storage across a network of machines
• Designed for storing very large files
  • Files that are hundreds of megabytes, gigabytes, or terabytes in size.
  • There are Hadoop clusters running today that store petabytes of data
• Streaming data access patterns
  • Optimized for write-once, read-many-times access
  • Not optimized for low-latency seek operations, lots of small files, multiple writers, or arbitrary file modifications

HDFS concepts
• Blocks
  • A block is the minimum amount of data that a filesystem can read or write.
  • Disk filesystem blocks are typically a few kilobytes in size
  • HDFS blocks are 64 MB by default
  • Files in HDFS are broken into block-sized chunks, which are stored as independent units.
  • Unlike in a filesystem for a single disk, a file in HDFS that is smaller than a single block does not occupy a full block’s worth of underlying storage.
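The block arithmetic above can be sketched in a few lines. This is only an illustration, not HDFS code: the function name `split_into_blocks` is hypothetical, and the 64 MB figure is the default quoted on the slide.

```python
BLOCK_SIZE = 64 * 1024 * 1024  # 64 MB, the default block size quoted on the slide


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return the sizes (in bytes) of the blocks that a file would occupy.

    Hypothetical helper for illustration only; real HDFS tracks blocks
    in the namenode, not with a function like this.
    """
    if file_size < 0:
        raise ValueError("file_size must be non-negative")
    full_blocks, remainder = divmod(file_size, block_size)
    sizes = [block_size] * full_blocks
    if remainder:
        # The last block only uses as much storage as the data it holds.
        sizes.append(remainder)
    return sizes


if __name__ == "__main__":
    mb = 1024 * 1024
    print([size // mb for size in split_into_blocks(150 * mb)])
```

A 150 MB file therefore becomes two full 64 MB blocks plus one 22 MB block, and a 1-byte file occupies a single 1-byte block rather than a full 64 MB.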

HDFS concepts
• Blocks (cont.)
  • A block is just a chunk of data to be stored; file metadata such as hierarchies and permissions does not need to be stored with the blocks
  • Each block is replicated to a small number of physically separate machines (typically three).
  • If a block becomes unavailable, a copy can be read from another location in a way that is transparent to the client

HDFS concepts
• Namenodes and datanodes
  • Master-worker pattern: a namenode (the master) and a number of datanodes (workers)
• NameNode:
  • Manages the filesystem namespace and maintains the filesystem tree and the metadata for all the files and directories in the tree.
  • This information is stored persistently on the local disk
  • Knows the datanodes on which all the blocks for a given file are located
  • It does not store block locations persistently, since this information is reconstructed from the datanodes when the system starts.

HDFS concepts
• Namenodes and datanodes (cont.)
• DataNodes:
  • Datanodes are the workhorses of the filesystem.
  • They store and retrieve blocks when they are told to (by clients or the namenode)
  • They report back to the namenode periodically with lists of the blocks that they are storing
• Without the namenode, the filesystem cannot be used:
  • If the machine running the namenode were lost, all the files on the filesystem would be lost
  • There would be no way of knowing how to reconstruct the files from the blocks on the datanodes.

HDFS concepts
• Namenodes and datanodes (cont.)
  • For this reason, it is important to make the namenode resilient to failure
  • Possible solution: back up the files that make up the persistent state of the filesystem metadata
  • Hadoop can be configured so that the namenode writes its persistent state to multiple filesystems. These writes are synchronous and atomic.
  • The usual configuration choice is to write to local disk as well as a remote NFS mount.

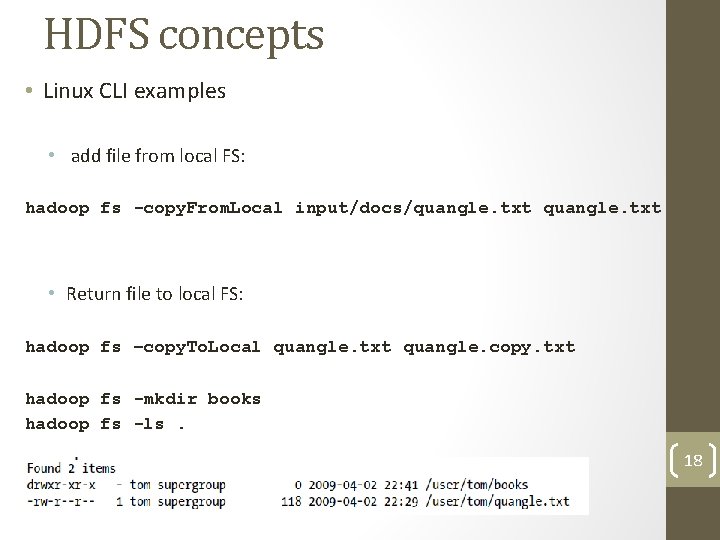

HDFS concepts
• Linux CLI examples
  • Add a file from the local FS: hadoop fs -copyFromLocal input/docs/quangle.txt
  • Return a file to the local FS: hadoop fs -copyToLocal quangle.txt quangle.copy.txt
  • Create a directory: hadoop fs -mkdir books
  • List the current directory: hadoop fs -ls .

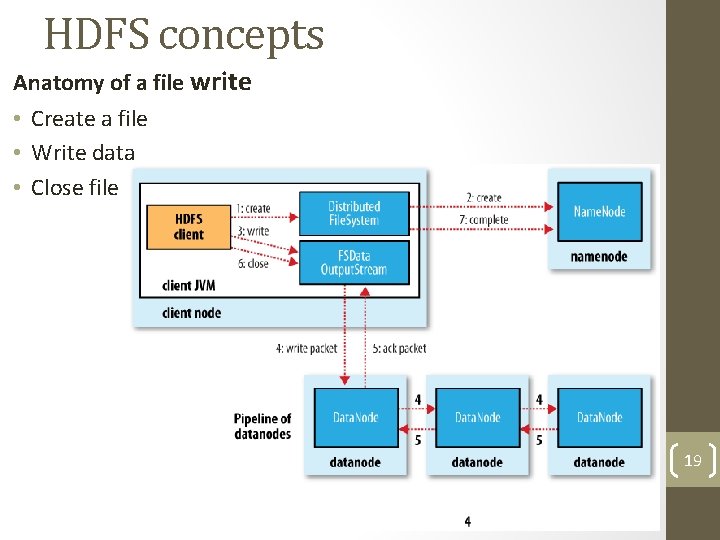

HDFS concepts: Anatomy of a file write
• Create a file
• Write data
• Close the file

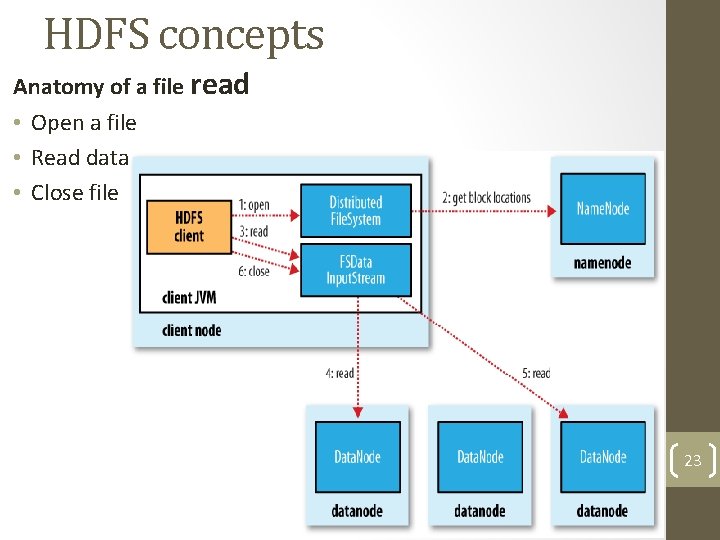

HDFS concepts: Anatomy of a file read
• Open a file
• Read data
• Close the file

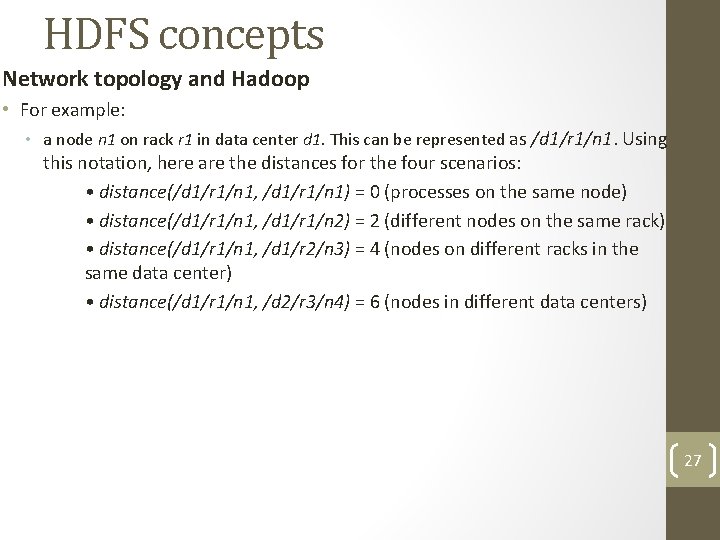

HDFS concepts: Network topology and Hadoop
• For example, a node n1 on rack r1 in data center d1 can be represented as /d1/r1/n1. Using this notation, here are the distances for the four scenarios:
  • distance(/d1/r1/n1, /d1/r1/n1) = 0 (processes on the same node)
  • distance(/d1/r1/n1, /d1/r1/n2) = 2 (different nodes on the same rack)
  • distance(/d1/r1/n1, /d1/r2/n3) = 4 (nodes on different racks in the same data center)
  • distance(/d1/r1/n1, /d2/r3/n4) = 6 (nodes in different data centers)
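The four distances above follow from treating the network as a tree and counting the hops from each node up to their closest common ancestor. A minimal sketch of that rule, assuming locations are written as the `/datacenter/rack/node` paths used on the slide (the function is illustrative, not Hadoop's own implementation):

```python
def distance(a, b):
    """Tree distance between two locations such as '/d1/r1/n1'.

    The distance is the number of hops from each location up to the
    closest common ancestor, added together.
    """
    path_a = a.strip("/").split("/")
    path_b = b.strip("/").split("/")
    common = 0
    for part_a, part_b in zip(path_a, path_b):
        if part_a != part_b:
            break
        common += 1
    return (len(path_a) - common) + (len(path_b) - common)


if __name__ == "__main__":
    for other in ("/d1/r1/n1", "/d1/r1/n2", "/d1/r2/n3", "/d2/r3/n4"):
        print(other, distance("/d1/r1/n1", other))
```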

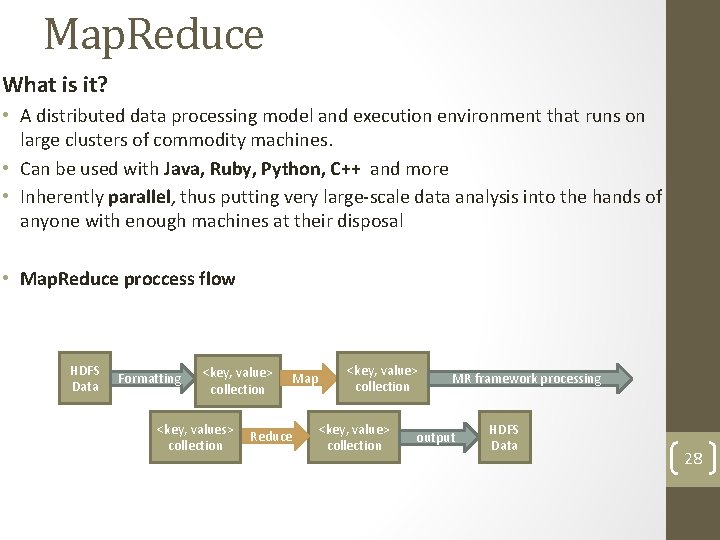

MapReduce: What is it?
• A distributed data processing model and execution environment that runs on large clusters of commodity machines.
• Can be used with Java, Ruby, Python, C++ and more
• Inherently parallel, thus putting very large-scale data analysis into the hands of anyone with enough machines at their disposal
• MapReduce process flow: HDFS data → Formatting → <key, value> collection → Map → <key, value> collection → MR framework processing → <key, values> collection → Reduce → <key, value> collection → Output → HDFS data

MapReduce: Problem example: Weather Dataset
Create a program that mines weather data
• Weather sensors collect data every hour at many locations across the globe and gather a large volume of log data. Source: NCDC
• The data is stored using a line-oriented ASCII format, in which each line is a record
• Mission: calculate the maximum temperature for each year around the world
• Problem: millions of temperature measurement records

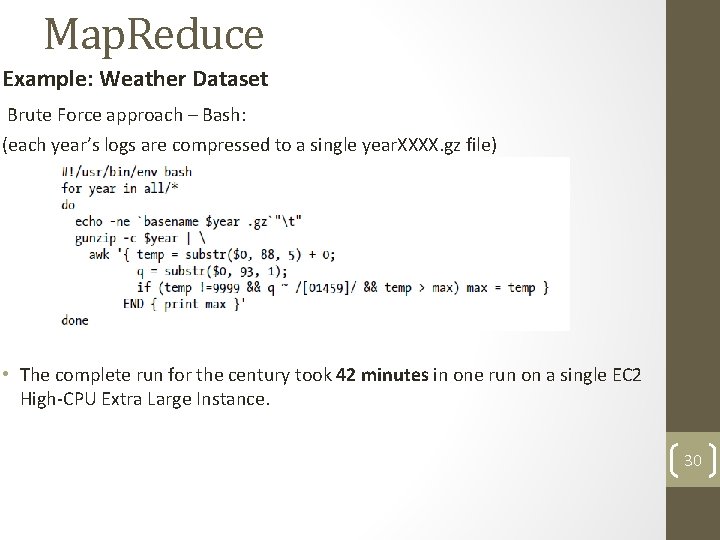

MapReduce: Example: Weather Dataset
Brute-force approach in Bash (each year’s logs are compressed into a single year.XXXX.gz file):
• The complete run for the century took 42 minutes in one run on a single EC2 High-CPU Extra Large Instance.

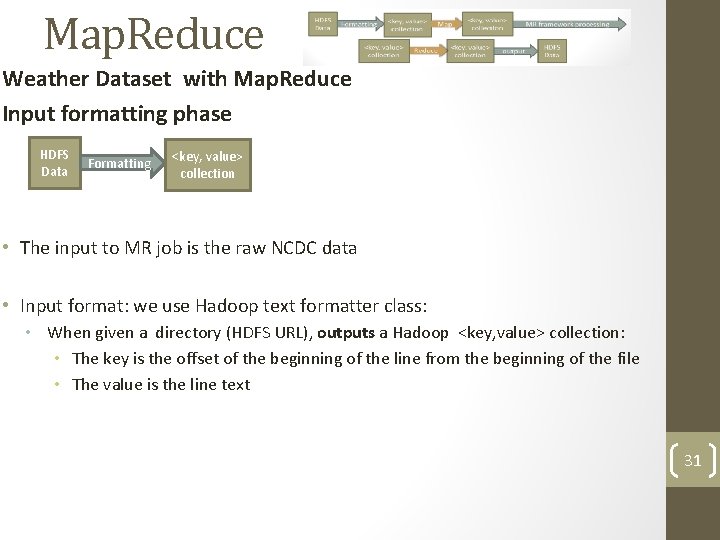

MapReduce: Weather Dataset with MapReduce
Input formatting phase: HDFS data → Formatting → <key, value> collection
• The input to the MR job is the raw NCDC data
• Input format: we use the Hadoop text formatter class
  • When given a directory (HDFS URL), it outputs a Hadoop <key, value> collection:
    • The key is the offset of the beginning of the line from the beginning of the file
    • The value is the line text

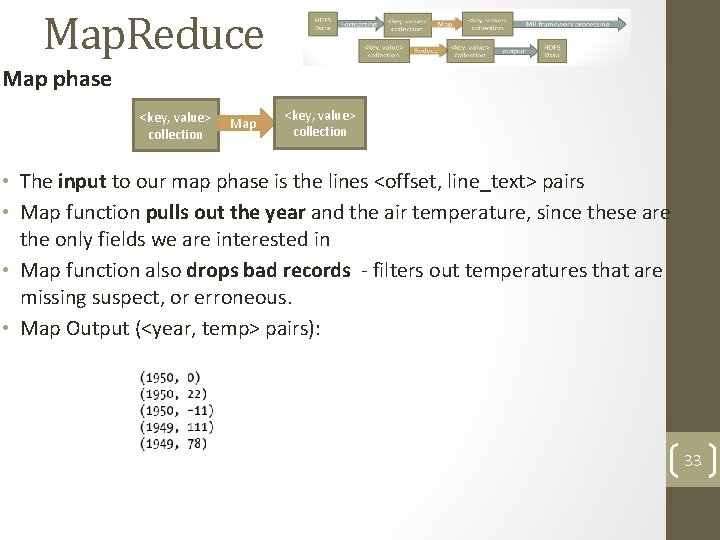

MapReduce
Map phase: <key, value> collection → Map → <key, value> collection
• The input to our map phase is the <offset, line_text> pairs
• The map function pulls out the year and the air temperature, since these are the only fields we are interested in
• The map function also drops bad records: it filters out temperatures that are missing, suspect, or erroneous.
• Map output: <year, temp> pairs
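A minimal sketch of such a map function is shown below. The real NCDC records use a fixed-width layout that is not reproduced here; the comma-separated `year,temp,quality` record, the `9999` missing-value sentinel, and the `ok` quality flag are all assumptions made purely for illustration.

```python
MISSING = 9999  # assumed sentinel for a missing reading (illustrative)


def map_record(offset, line):
    """Map one <offset, line_text> pair to zero or one <year, temp> pair.

    Assumes a simplified, hypothetical record layout 'year,temp,quality'
    rather than the real fixed-width NCDC format. The offset key is
    ignored, as the slide describes.
    """
    fields = [field.strip() for field in line.split(",")]
    if len(fields) != 3:
        return  # malformed record: emit nothing
    year, temp_text, quality = fields
    try:
        temp = int(temp_text)
    except ValueError:
        return  # erroneous reading: emit nothing
    if temp != MISSING and quality == "ok":
        yield year, temp


if __name__ == "__main__":
    lines = ["1950,22,ok", "1950,9999,ok", "1949,111,suspect", "1949,78,ok"]
    for offset, line in enumerate(lines):
        print(list(map_record(offset, line)))
```

Writing the function as a generator mirrors the MapReduce contract: a map call may emit any number of output pairs, including none for a bad record.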

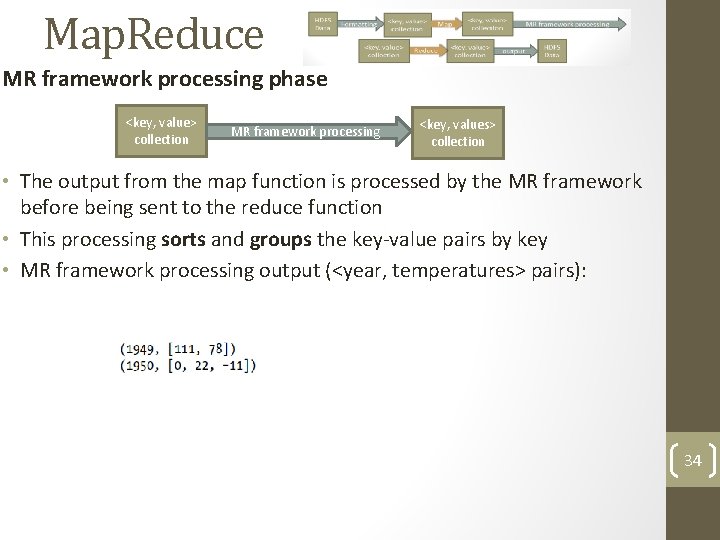

MapReduce
MR framework processing phase: <key, value> collection → MR framework processing → <key, values> collection
• The output from the map function is processed by the MR framework before being sent to the reduce function
• This processing sorts and groups the key-value pairs by key
• MR framework processing output: <year, temperatures> pairs
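The sort-and-group step can be imitated in memory as follows. This is a single-process sketch of what the framework does across the cluster; the function name `shuffle` and the sample readings are illustrative.

```python
from collections import defaultdict


def shuffle(pairs):
    """Group <key, value> pairs by key and return them sorted by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return [(key, groups[key]) for key in sorted(groups)]


if __name__ == "__main__":
    map_output = [("1950", 0), ("1950", 22), ("1950", -11), ("1949", 111), ("1949", 78)]
    print(shuffle(map_output))
```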

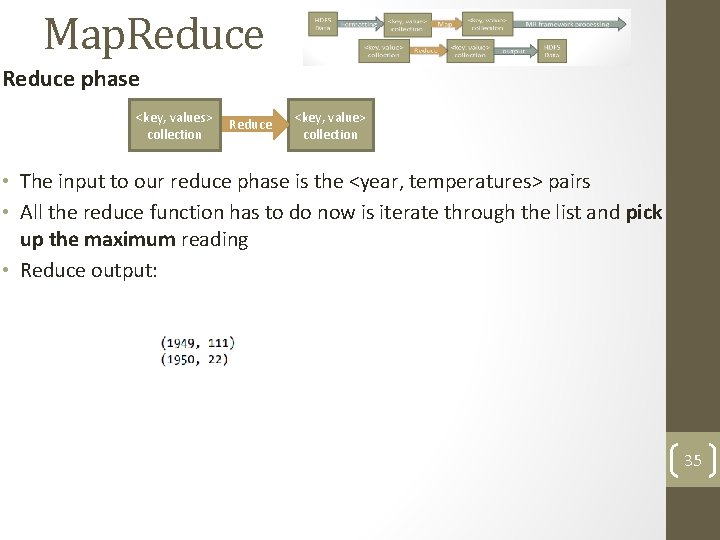

MapReduce
Reduce phase: <key, values> collection → Reduce → <key, value> collection
• The input to our reduce phase is the <year, temperatures> pairs
• All the reduce function has to do now is iterate through the list and pick out the maximum reading
• Reduce output: <year, max temperature> pairs
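As a sketch, the reduce function for this job is little more than a loop over the grouped readings. The name `reduce_max` is hypothetical, and the input is assumed to be non-empty, since the framework only calls reduce for keys that have at least one value.

```python
def reduce_max(year, temps):
    """Reduce one <year, temperatures> pair to a <year, max temperature> pair."""
    maximum = None
    for temp in temps:  # iterate once, as a reducer would over its value stream
        if maximum is None or temp > maximum:
            maximum = temp
    return year, maximum


if __name__ == "__main__":
    print(reduce_max("1949", [111, 78]))
    print(reduce_max("1950", [0, 22, -11]))
```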

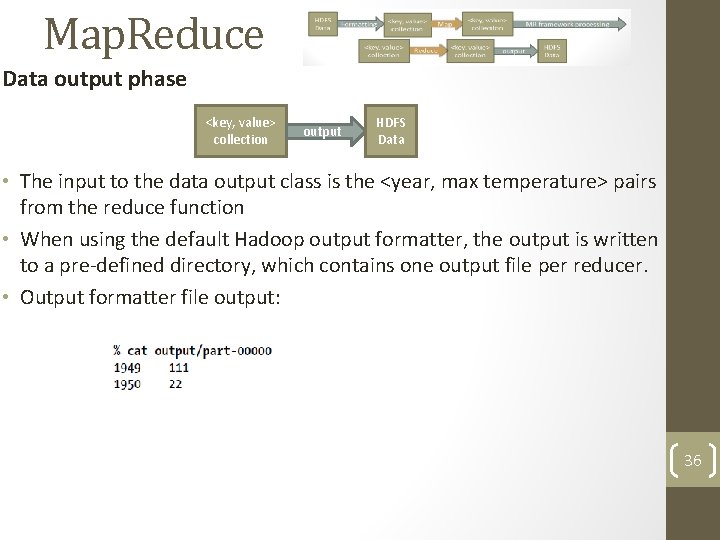

MapReduce
Data output phase: <key, value> collection → Output → HDFS data
• The input to the data output class is the <year, max temperature> pairs from the reduce function
• When using the default Hadoop output formatter, the output is written to a pre-defined directory, which contains one output file per reducer.
• Output formatter file output:

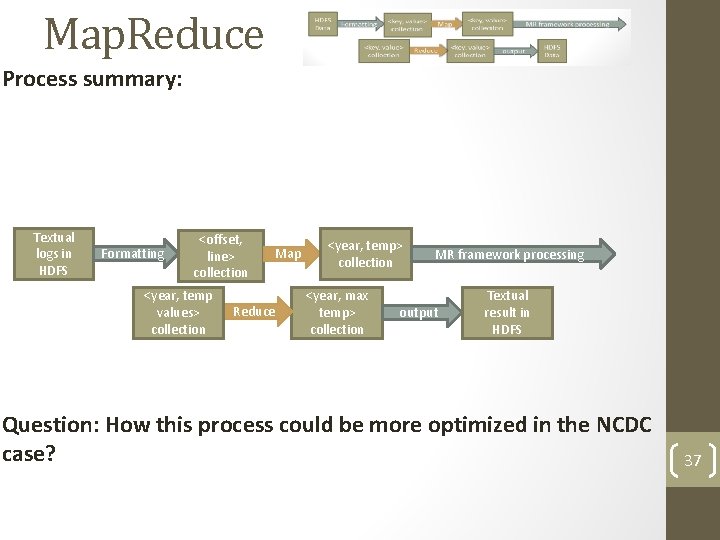

MapReduce: Process summary
Textual logs in HDFS → Formatting → <offset, line> collection → Map → <year, temp> collection → MR framework processing → <year, temp values> collection → Reduce → <year, max temp> collection → Output → Textual result in HDFS
Question: How could this process be optimized further in the NCDC case?
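One commonly used optimization for this kind of job is a combiner: a function that runs on each map task's output and shrinks it before it crosses the network. It works here because taking a maximum gives the same result whether it is applied once or in stages. The sketch below is an in-memory illustration with a hypothetical `combine` function and made-up readings, not Hadoop's combiner API.

```python
def combine(pairs):
    """Collapse one map task's <year, temp> pairs to a single max per year."""
    best = {}
    for year, temp in pairs:
        if year not in best or temp > best[year]:
            best[year] = temp
    return sorted(best.items())


if __name__ == "__main__":
    # Output of two separate map tasks for the same year (illustrative values).
    first_map = [("1950", 0), ("1950", 20), ("1950", 10)]
    second_map = [("1950", 25), ("1950", 15)]
    # Only two pairs, instead of five, would be sent on to the reducer.
    sent_to_reducer = combine(first_map) + combine(second_map)
    print(sent_to_reducer)
    print(max(temp for _, temp in sent_to_reducer))
```

The final answer is unchanged, but less intermediate data has to be transferred between the map and reduce tasks.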

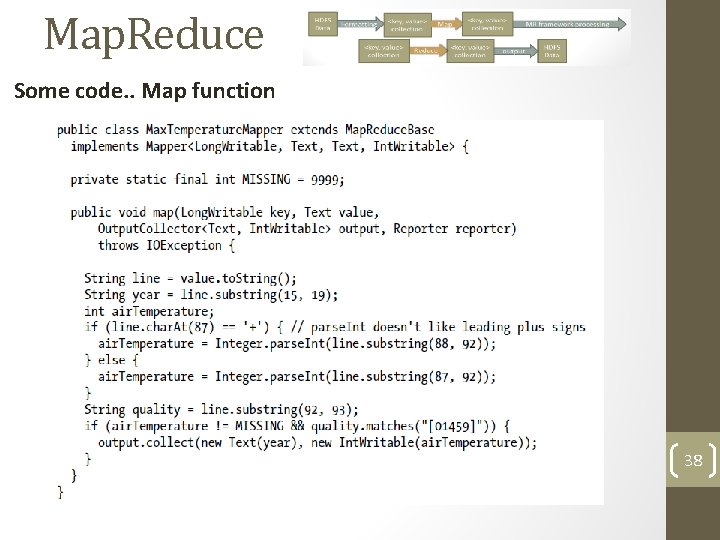

MapReduce: Some code... Map function

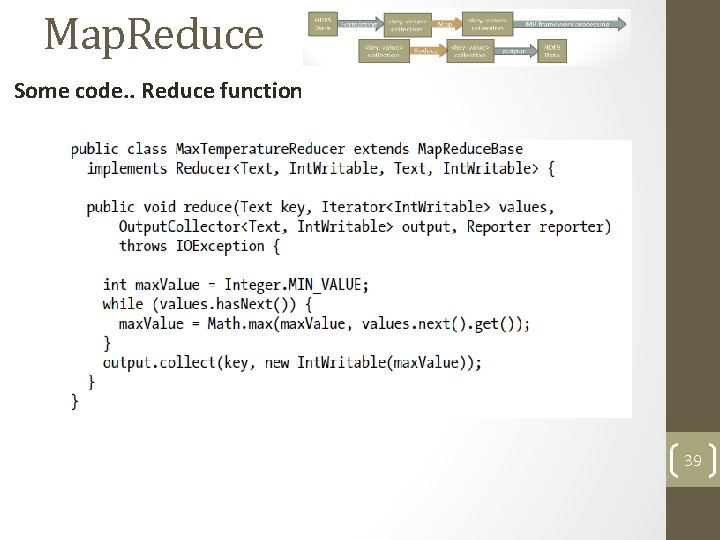

MapReduce: Some code... Reduce function

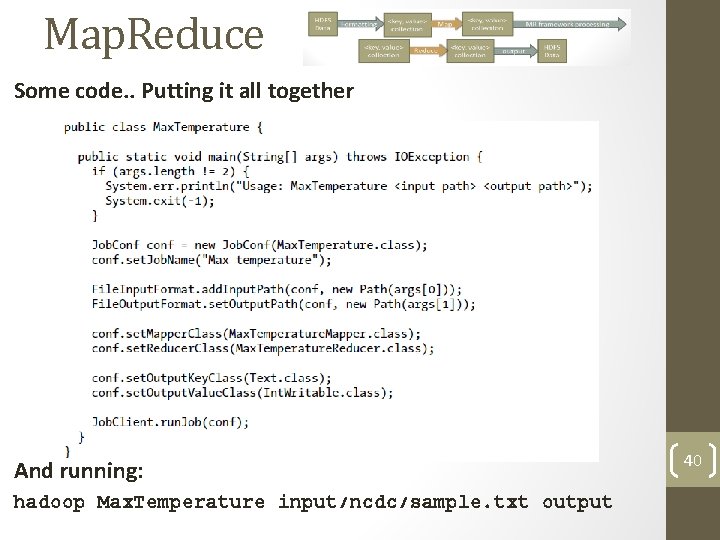

MapReduce: Some code... Putting it all together
And running: hadoop MaxTemperature input/ncdc/sample.txt output

MapReduce: Going deep...
Definitions:
• MR job: a unit of work that the client wants to be performed. It consists of:
  • The input data
  • The MapReduce program
  • Configuration information
• Hadoop runs the job by dividing it into tasks, of which there are two types: map tasks and reduce tasks.

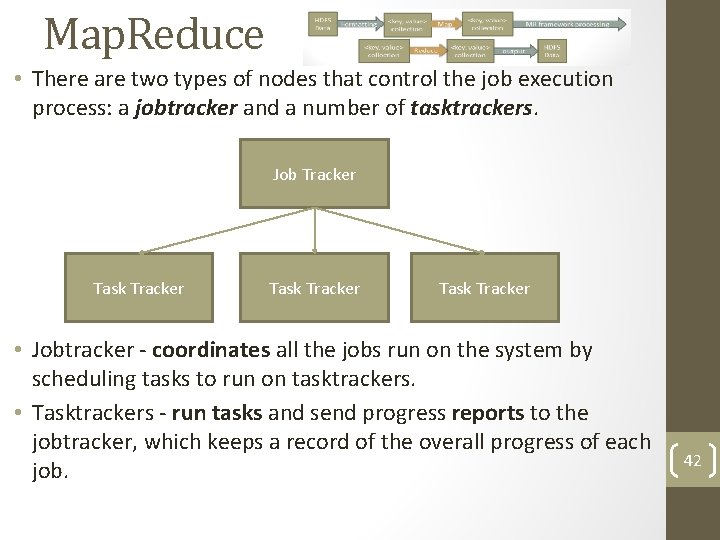

MapReduce
• There are two types of nodes that control the job execution process: a jobtracker and a number of tasktrackers.
• Jobtracker: coordinates all the jobs run on the system by scheduling tasks to run on tasktrackers.
• Tasktrackers: run tasks and send progress reports to the jobtracker, which keeps a record of the overall progress of each job.

MapReduce: Scaling out!
• Hadoop divides the input to a MapReduce job into fixed-size pieces called input splits, or just splits.
• A split normally corresponds to one or more file blocks
• Hadoop creates one map task for each split, which runs the user-defined map function for each record in the split.
• Hadoop does its best to run the map task on a node where the input data resides in HDFS. This is called the data locality optimization.

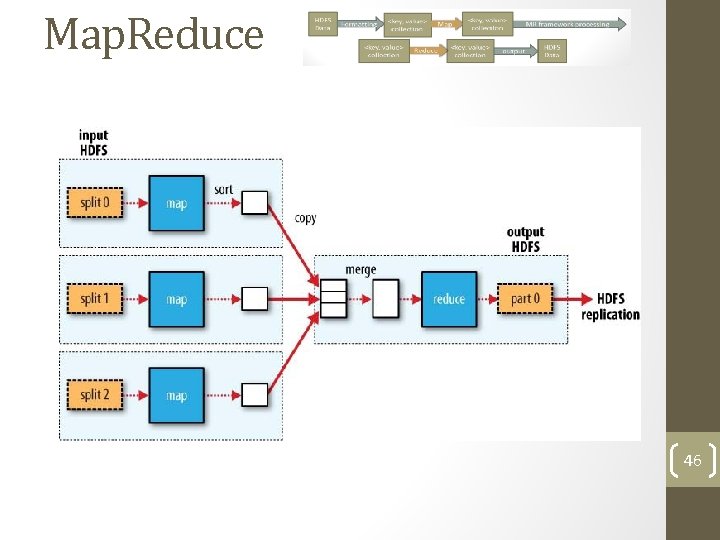


MapReduce
• When there are multiple reducers, the map tasks partition their output, each creating one partition for each reduce task.
• The framework ensures that the records for any given key are all in a single partition.
• The partitioning can be controlled by a user-defined partitioning function, but normally the default partitioner, which buckets keys using a hash function, works very well
• What would be a good partition function in our case?
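A hash-based partitioner of the kind described above can be sketched as follows. Hadoop's default partitioner hashes the key object itself; here CRC32 from Python's standard library stands in as a deterministic hash, which is an assumption made for the sake of a reproducible example.

```python
import zlib


def partition(key, num_reducers):
    """Pick the reduce task (0 .. num_reducers - 1) responsible for a key.

    CRC32 is used as a stand-in hash so the result is stable across runs;
    it is not the hash function Hadoop itself uses.
    """
    if num_reducers < 1:
        raise ValueError("num_reducers must be at least 1")
    return zlib.crc32(str(key).encode("utf-8")) % num_reducers


def partition_output(pairs, num_reducers):
    """Split one map task's output into one list per reduce task."""
    partitions = [[] for _ in range(num_reducers)]
    for key, value in pairs:
        partitions[partition(key, num_reducers)].append((key, value))
    return partitions


if __name__ == "__main__":
    map_output = [("1950", 0), ("1949", 111), ("1950", 22), ("1949", 78)]
    for index, part in enumerate(partition_output(map_output, 2)):
        print(index, part)
```

Because the partition depends only on the key, every record for a given year lands in the same partition no matter which map task produced it.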

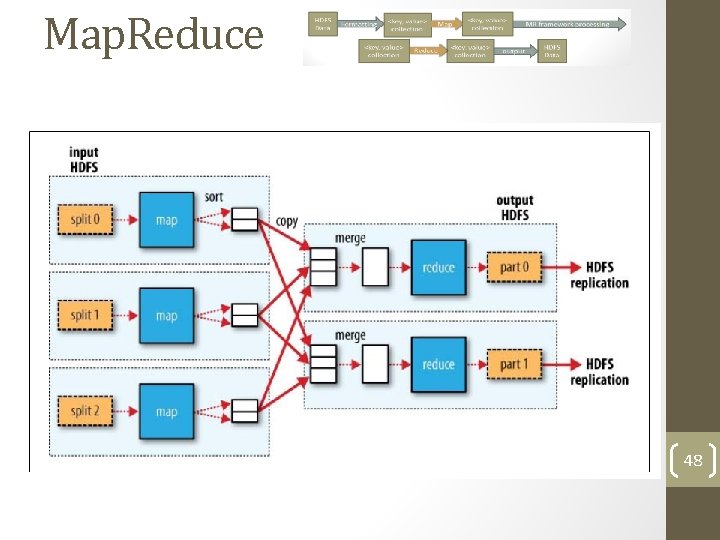


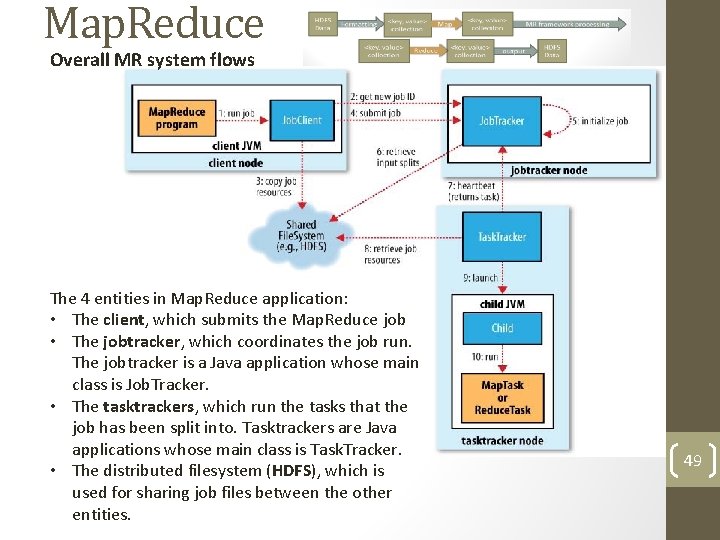

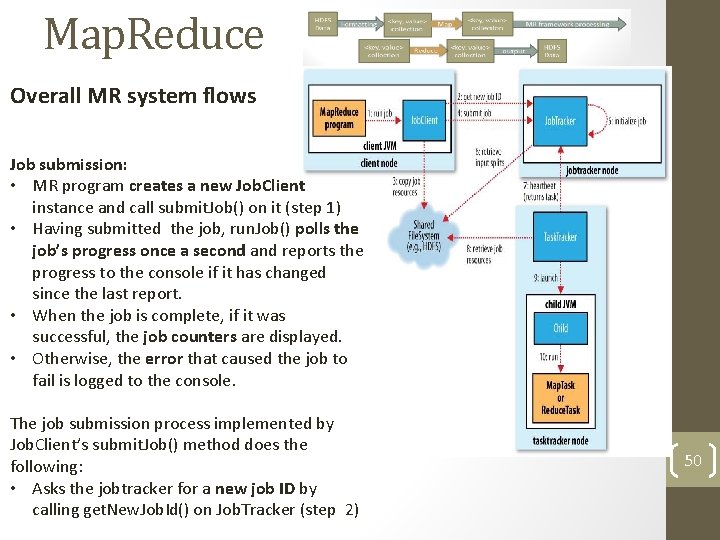

MapReduce: Overall MR system flows
The four entities in a MapReduce application:
• The client, which submits the MapReduce job
• The jobtracker, which coordinates the job run. The jobtracker is a Java application whose main class is JobTracker.
• The tasktrackers, which run the tasks that the job has been split into. Tasktrackers are Java applications whose main class is TaskTracker.
• The distributed filesystem (HDFS), which is used for sharing job files between the other entities.

MapReduce: Overall MR system flows
Job submission:
• The MR program calls runJob(), which creates a new JobClient instance and calls submitJob() on it (step 1)
• Having submitted the job, runJob() polls the job’s progress once a second and reports the progress to the console if it has changed since the last report.
• When the job is complete, if it was successful, the job counters are displayed.
• Otherwise, the error that caused the job to fail is logged to the console.
The job submission process implemented by JobClient’s submitJob() method does the following:
• Asks the jobtracker for a new job ID by calling getNewJobId() on JobTracker (step 2)

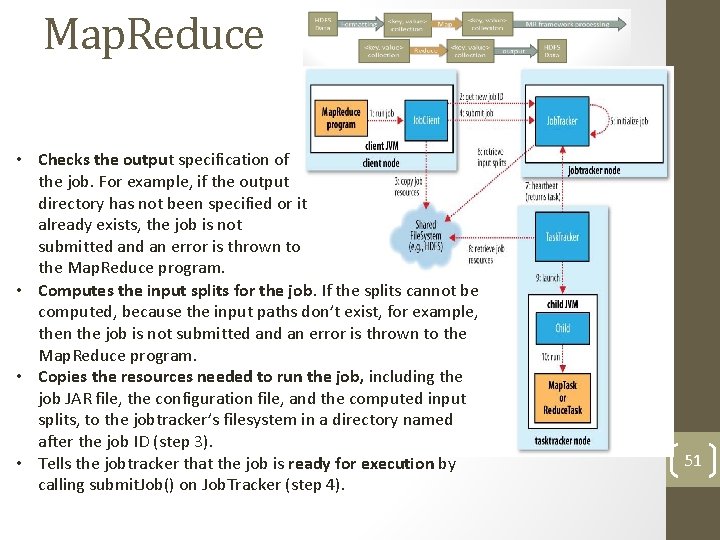

MapReduce
• Checks the output specification of the job. For example, if the output directory has not been specified or it already exists, the job is not submitted and an error is thrown to the MapReduce program.
• Computes the input splits for the job. If the splits cannot be computed, because the input paths don’t exist, for example, then the job is not submitted and an error is thrown to the MapReduce program.
• Copies the resources needed to run the job, including the job JAR file, the configuration file, and the computed input splits, to the jobtracker’s filesystem in a directory named after the job ID (step 3).
• Tells the jobtracker that the job is ready for execution by calling submitJob() on JobTracker (step 4).

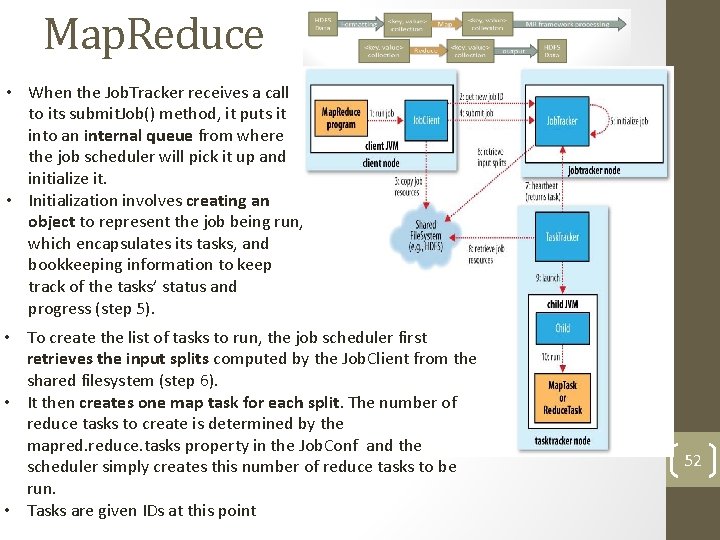

MapReduce
• When the JobTracker receives a call to its submitJob() method, it puts the job into an internal queue, from where the job scheduler will pick it up and initialize it.
• Initialization involves creating an object to represent the job being run, which encapsulates its tasks, and bookkeeping information to keep track of the tasks’ status and progress (step 5).
• To create the list of tasks to run, the job scheduler first retrieves the input splits computed by the JobClient from the shared filesystem (step 6).
• It then creates one map task for each split. The number of reduce tasks to create is determined by the mapred.reduce.tasks property in the JobConf, and the scheduler simply creates this number of reduce tasks to be run.
• Tasks are given IDs at this point

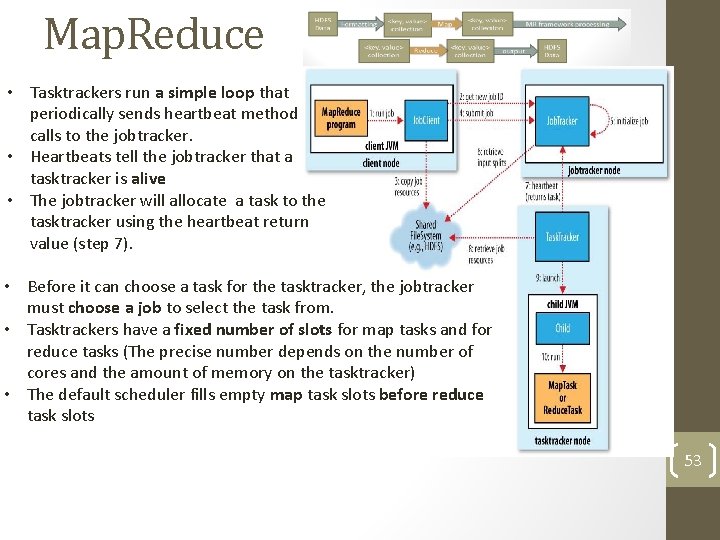

MapReduce
• Tasktrackers run a simple loop that periodically sends heartbeat method calls to the jobtracker.
• Heartbeats tell the jobtracker that a tasktracker is alive
• The jobtracker will allocate a task to the tasktracker using the heartbeat return value (step 7).
• Before it can choose a task for the tasktracker, the jobtracker must choose a job to select the task from.
• Tasktrackers have a fixed number of slots for map tasks and for reduce tasks (the precise number depends on the number of cores and the amount of memory on the tasktracker)
• The default scheduler fills empty map task slots before reduce task slots
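The "map slots before reduce slots" rule can be sketched as the decision the jobtracker makes when answering one heartbeat. This is a simplified model with hypothetical names (`assign_task`, plain task-ID strings, one task per heartbeat); job selection and locality are left out.

```python
from collections import deque


def assign_task(free_map_slots, free_reduce_slots, pending_maps, pending_reduces):
    """Return the task to hand back in a heartbeat reply, or None.

    Simplified model: empty map slots are filled before reduce slots,
    and at most one task is assigned per heartbeat.
    """
    if free_map_slots > 0 and pending_maps:
        return pending_maps.popleft()
    if free_reduce_slots > 0 and pending_reduces:
        return pending_reduces.popleft()
    return None


if __name__ == "__main__":
    maps = deque(["map_0", "map_1"])
    reduces = deque(["reduce_0"])
    # A tasktracker reporting one free slot of each kind, three heartbeats in a row.
    for _ in range(3):
        print(assign_task(1, 1, maps, reduces))
```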

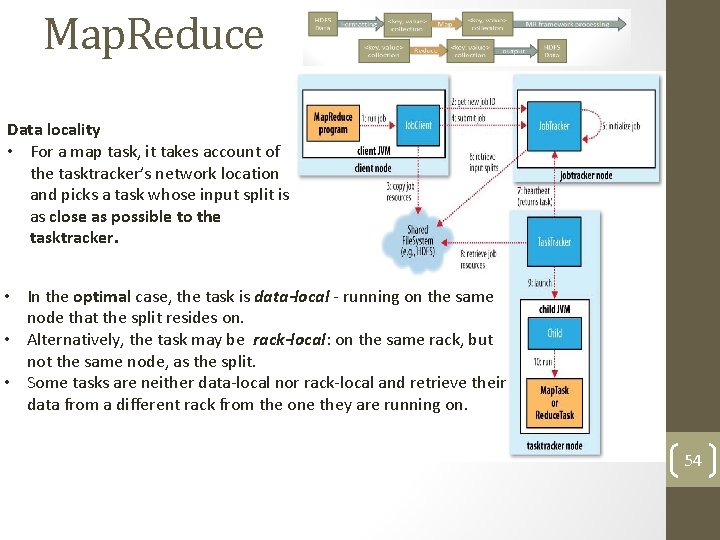

MapReduce: Data locality
• For a map task, the jobtracker takes account of the tasktracker’s network location and picks a task whose input split is as close as possible to the tasktracker.
• In the optimal case, the task is data-local: it runs on the same node that the split resides on.
• Alternatively, the task may be rack-local: on the same rack, but not the same node, as the split.
• Some tasks are neither data-local nor rack-local and retrieve their data from a different rack from the one they are running on.
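The three locality levels can be sketched as a ranking over candidate map tasks. The `/rack/node` location strings, the function names, and the dictionary of pending tasks are all hypothetical; the real scheduler works with Hadoop's own topology classes.

```python
DATA_LOCAL, RACK_LOCAL, OFF_RACK = 0, 1, 2


def locality(tracker, replica):
    """Classify how close a split replica is to a tasktracker.

    Locations are hypothetical '/rack/node' strings.
    """
    if tracker == replica:
        return DATA_LOCAL
    if tracker.rsplit("/", 1)[0] == replica.rsplit("/", 1)[0]:
        return RACK_LOCAL
    return OFF_RACK


def pick_map_task(tracker, pending):
    """Pick the pending task whose split is closest to the tasktracker.

    `pending` maps a task ID to the non-empty list of locations holding
    a replica of that task's input split.
    """
    if not pending:
        return None
    return min(
        pending,
        key=lambda task: min(locality(tracker, replica) for replica in pending[task]),
    )


if __name__ == "__main__":
    pending = {
        "task_1": ["/r2/n5"],
        "task_2": ["/r1/n2", "/r2/n6"],
        "task_3": ["/r1/n1"],
    }
    print(pick_map_task("/r1/n1", pending))  # a data-local choice exists
    print(pick_map_task("/r1/n3", pending))  # only a rack-local choice exists
```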

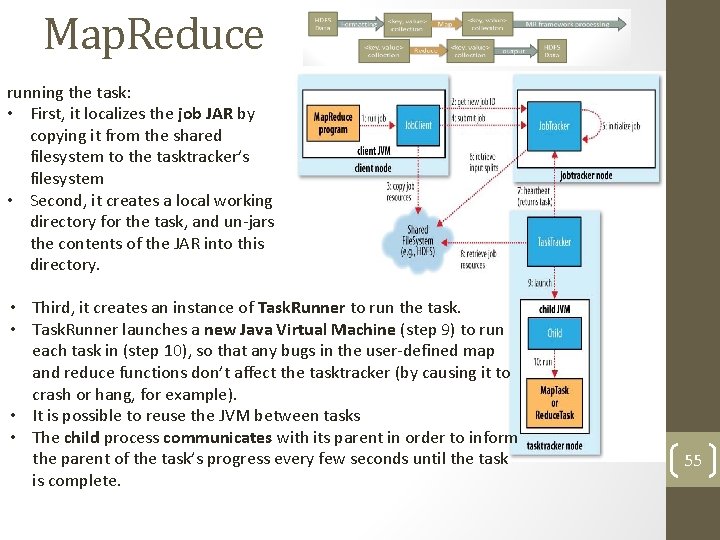

MapReduce: Running the task
• First, the tasktracker localizes the job JAR by copying it from the shared filesystem to the tasktracker’s filesystem
• Second, it creates a local working directory for the task, and un-jars the contents of the JAR into this directory.
• Third, it creates an instance of TaskRunner to run the task.
• TaskRunner launches a new Java Virtual Machine (step 9) to run each task in (step 10), so that any bugs in the user-defined map and reduce functions don’t affect the tasktracker (by causing it to crash or hang, for example).
• It is possible to reuse the JVM between tasks
• The child process communicates with its parent every few seconds to inform it of the task’s progress, until the task is complete.

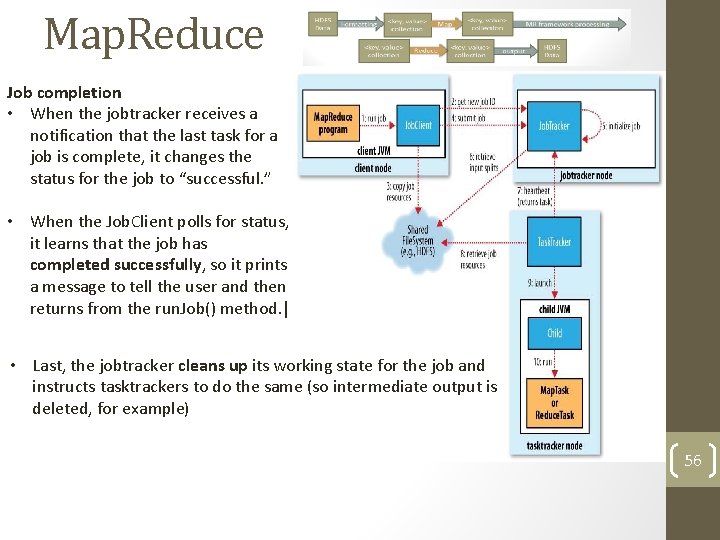

MapReduce: Job completion
• When the jobtracker receives a notification that the last task for a job is complete, it changes the status for the job to “successful.”
• When the JobClient polls for status, it learns that the job has completed successfully, so it prints a message to tell the user and then returns from the runJob() method.
• Last, the jobtracker cleans up its working state for the job and instructs tasktrackers to do the same (so intermediate output is deleted, for example)

MapReduce: Back to the Weather Dataset
• The same program will run, without alteration, on a full cluster.
• This is the point of MapReduce: it scales to the size of your data and the size of your hardware.
• On a 10-node EC2 cluster running High-CPU Extra Large Instances, the program took six minutes to run

MapReduce: Hadoop implementations around
• eBay
  • 532-node cluster (8 * 532 cores, 5.3 PB).
  • Heavy usage of Java MapReduce, Pig, Hive, HBase
  • Used for search optimization and research.
• Facebook
  • Uses Hadoop to store copies of internal log and dimension data sources and uses it as a source for reporting/analytics and machine learning.
  • Current major clusters:
    • 1100-machine cluster with 8800 cores and about 12 PB raw storage.
    • 300-machine cluster with 2400 cores and about 3 PB raw storage.
    • Each (commodity) node has 8 cores and 12 TB of storage.

MapReduce
• LinkedIn
  • Multiple grids divided up based upon purpose.
    • 120 Nehalem-based Sun x4275, with 2x4 cores, 24 GB RAM, 8x1 TB SATA
    • 580 Westmere-based HP SL 170x, with 2x4 cores, 24 GB RAM, 6x2 TB SATA
    • 1200 Westmere-based SuperMicro X8DTT-H, with 2x6 cores, 24 GB RAM, 6x2 TB SATA
  • Software:
    • CentOS 5.5 -> RHEL 6.1
    • Apache Hadoop 0.20.2+patches -> Apache Hadoop 0.20.204+patches
    • Pig 0.9 heavily customized
    • Hive, Avro, Kafka, and other bits and pieces...
  • Used for discovering People You May Know and other fun facts.
• Yahoo!
  • More than 100,000 CPUs in >40,000 computers running Hadoop
  • Biggest cluster: 4500 nodes (2*4 CPU boxes with 4*1 TB disk and 16 GB RAM)
  • Used to support research for Ad Systems and Web Search
  • Also used to do scaling tests to support development of Hadoop on larger clusters

MapReduce and Parallel DBMS systems
• In the mid-1980s the Teradata and Gamma projects pioneered a new architectural paradigm for parallel database systems based on a cluster of commodity computer nodes
• Those were called “shared-nothing nodes” (separate CPU, memory, and disks), connected only through a high-speed interconnect
• Every parallel database system built since then essentially uses the techniques first pioneered by these two projects:
  • Horizontal partitioning of relational tables: distribute the rows of a relational table across the nodes of the cluster so they can be processed in parallel.
  • Partitioned execution of SQL queries: selection, aggregation, join, projection, and update queries are distributed among the nodes, and the results are sent back to a “master” node to be merged.
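The two techniques can be sketched together for a simple SUM aggregate: rows are spread over the nodes, each node aggregates its own partition, and a master merges the partial results. Everything here runs in one process; the round-robin placement and the function names are assumptions chosen for illustration, not how any particular DBMS places rows.

```python
def horizontal_partition(rows, num_nodes):
    """Distribute table rows across nodes (round-robin, for illustration)."""
    nodes = [[] for _ in range(num_nodes)]
    for index, row in enumerate(rows):
        nodes[index % num_nodes].append(row)
    return nodes


def local_sum(rows, column):
    """The part of the query each node runs on its own partition."""
    return sum(row[column] for row in rows)


def parallel_sum(rows, column, num_nodes):
    """Partitioned execution of SUM(column), merged on a 'master'."""
    partitions = horizontal_partition(rows, num_nodes)
    # In a real system these partial sums would be computed in parallel,
    # one per node; here they are computed one after another.
    partials = [local_sum(partition, column) for partition in partitions]
    return sum(partials)  # merge step on the master node


if __name__ == "__main__":
    table = [{"id": i, "amount": i * 10} for i in range(10)]
    print(parallel_sum(table, "amount", 3))
```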

MapReduce and Parallel DBMS systems
• Many commercial implementations are available, including Teradata, Netezza, DataAllegro (Microsoft), ParAccel, Greenplum, Aster, Vertica, and DB2.
• All run on shared-nothing clusters of nodes, with tables horizontally partitioned over them.
MapReduce
• An attractive quality of the MR programming model is simplicity; an MR program consists of only two functions, Map and Reduce, written by a user to process key/value data pairs.
• The input data set is stored in a collection of partitions in a distributed file system deployed on each node in the cluster.
• The program is then injected into a distributed-processing framework and executed

MapReduce and Parallel DBMS systems: MR vs. parallel DBMS comparison
• Filtering and transformation of individual data items (tuples in tables) can be executed by a modern parallel DBMS using SQL.
• For Map operations not easily expressed in SQL, many DBMSs support user-defined functions (UDFs); UDF extensibility provides the equivalent functionality of a Map operation.
• SQL aggregates augmented with UDFs and user-defined aggregates provide DBMS users the same MR-style reduce functionality.
• Lastly, the reshuffle that occurs between the Map and Reduce tasks in MR is equivalent to a GROUP BY operation in SQL.
• Given this, parallel DBMSs provide the same computing model as MR, with the added benefit of using a declarative language (SQL).
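To make the GROUP BY equivalence concrete, the maximum-temperature job from earlier in the talk can be written as a single SQL aggregate. The sketch below uses an in-memory SQLite database from Python's standard library purely as a convenient SQL engine; the table name, column names, and readings are illustrative, and SQLite is of course not a parallel DBMS.

```python
import sqlite3


def max_temp_by_year(readings):
    """Compute <year, max temperature> pairs with one GROUP BY query."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE readings (year TEXT, temp INTEGER)")
        conn.executemany("INSERT INTO readings VALUES (?, ?)", readings)
        cursor = conn.execute(
            "SELECT year, MAX(temp) FROM readings GROUP BY year ORDER BY year"
        )
        return cursor.fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    sample = [("1950", 0), ("1950", 22), ("1950", -11), ("1949", 111), ("1949", 78)]
    print(max_temp_by_year(sample))
```

Here GROUP BY plays the role of the shuffle, and MAX plays the role of the reduce function.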

MapReduce and Parallel DBMS systems: MR vs. parallel DBMS comparison
• As for scalability: several production databases in the multi-petabyte range are run by very large customers, operating on clusters on the order of 100 nodes.
• The people who manage these systems do not report the need for additional parallelism.
• Thus, parallel DBMSs offer great scalability over the range of nodes that customers desire.
So why use MapReduce? Why is it used so widely?

MapReduce and Parallel DBMS systems
Several application classes are mentioned as possible use cases in which the MR model is a better choice than a DBMS:
• ETL and “read once” data sets.
  • The use of MR is characterized by the following template of five operations:
    • Read logs of information from several different sources
    • Parse and clean the log data
    • Perform complex transformations
    • Decide what attribute data to store
    • Load the information into persistent storage
  • These steps are analogous to the extract, transform, and load phases in ETL systems
  • The MR system is essentially “cooking” raw data into useful information that is consumed by another storage system. Hence, an MR system can be considered a general-purpose parallel ETL system.

MapReduce and Parallel DBMS systems
• Complex analytics.
  • In many data mining and data-clustering applications, the program must make multiple passes over the data.
  • Such applications cannot be structured as single SQL aggregate queries
  • MR is a good candidate for such applications
• Semi-structured data.
  • MR systems do not require users to define a schema for their data.
  • MR-style systems easily store and process what is known as “semi-structured” data.
  • Such data often looks like key-value pairs, where the number of attributes present in any given record varies.
  • This style of data is typical of Web traffic logs derived from different sources

MapReduce and Parallel DBMS systems
• Quick-and-dirty analysis.
  • One disappointing aspect of many current parallel DBMSs is that they are difficult to install and configure properly, and require heavy tuning
  • An open-source MR implementation provides the best “out-of-the-box” experience: an MR system can be up and running significantly faster than the DBMSs.
  • Once a DBMS is up and running properly, programmers must still write a schema for their data, then load the data set into the system.
  • This process takes considerably longer in a DBMS than in an MR system
• Limited-budget operations.
  • Another strength of MR systems is that most are open source projects available for free.
  • DBMSs, and in particular parallel DBMSs, are expensive!

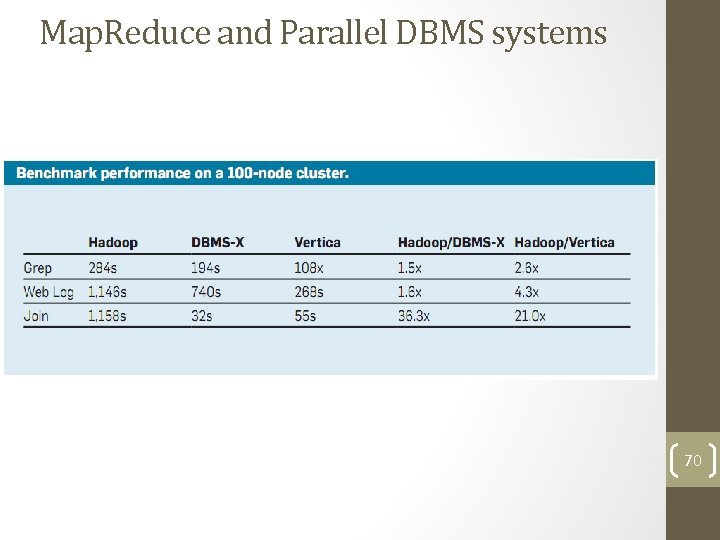

MapReduce and Parallel DBMS systems: Benchmark
• The benchmark compares two parallel DBMSs to the Hadoop MR framework on a variety of tasks.
• Two database systems are used:
  • Vertica, a commercial column-store relational database
  • DBMS-X, a row-based database from a large commercial vendor
• All experiments run on a 100-node shared-nothing cluster at the University of Wisconsin-Madison
• Benchmark tasks:
  • Grep task
    • Representative of a large subset of the real programs written by users of MapReduce
    • For the task, each system must scan through a data set of 100-byte records looking for a three-character pattern.
    • Uses a 1 TB data set spread over the 100 nodes (10 GB/node). The data set consists of 10 billion records, each 100 bytes.

MapReduce and Parallel DBMS systems: Benchmark
• Web log task
  • Conventional SQL aggregation with a GROUP BY clause on a table of user visits in a Web server log.
  • Such data is fairly typical of Web logs, and the query is commonly used in traffic analytics.
  • Uses a 2 TB data set consisting of 155 million records spread over the 100 nodes (20 GB/node).
  • Each system must calculate the total ad revenue generated for each visited IP address from the logs.
  • Like the previous task, the records must all be read, and thus there is no indexing opportunity for the DBMSs

MapReduce and Parallel DBMS systems: Benchmark
• Join task
  • A complex join operation over two tables requiring an additional aggregation and filtering operation.
  • The user-visit data set from the previous task is joined with an additional 100 GB table of PageRank values for 18 million URLs (1 GB/node).
  • The join task consists of two subtasks
    • In the first part of the task, each system must find the IP address that generated the most revenue within a particular date range in the user visits.
    • Once these intermediate records are generated, the system must then calculate the average PageRank of all pages visited during this interval.


MapReduce and Parallel DBMS systems: Results analysis
• The performance differences between Hadoop and the DBMSs can be explained by a variety of factors
• These differences result from implementation choices made by the two classes of system, not from any fundamental difference in the two models:
  • The MR processing model is independent of the underlying storage system, so data could theoretically be massaged, indexed, compressed, and carefully laid out (into a schema) on storage during a load phase, just like a DBMS.
  • However, the goal of the study was to compare the real-life differences in performance of representative realizations of the two models.

MapReduce and Parallel DBMS systems: Results analysis
• Repetitive record parsing
  • One contributing factor for Hadoop’s slower performance is that the default configuration of Hadoop stores data in the accompanying distributed file system (HDFS), in the same textual format in which the data was generated.
  • Consequently, this default storage method places the burden of parsing the fields of each record on user code.
  • This parsing requires each Map and Reduce task to repeatedly parse and convert string fields into the appropriate type.
  • In DBMS systems, data resides in the schema in the converted form (conversions take place once, when loading the logs into the DB)

MapReduce and Parallel DBMS systems: Results analysis
• Compression
  • Enabling data compression in the DBMSs delivered a significant performance gain.
  • The benchmark results show that using compression in Vertica and DBMS-X on these workloads improves performance by a factor of two to four.
  • On the other hand, Hadoop often executed slower when it used compression on its input files. At most, compression improved performance by 15%
  • Why?
    • Commercial DBMSs use carefully tuned compression algorithms to ensure that the cost of decompressing tuples does not offset the performance gains from the reduced I/O cost of reading compressed data
    • UNIX gzip (used by Hadoop) does not make these optimizations

MapReduce and Parallel DBMS systems: Results analysis
• Pipelining
  • All parallel DBMSs operate by creating a query plan that is distributed to the appropriate nodes at execution time.
    • Data is “pushed” (streamed) by the producer node to the consumer node
    • The intermediate data is never written to disk
  • In MR systems, the producer writes the intermediate results to local data structures, and the consumer subsequently “pulls” the data.
    • These data structures are often quite large, so the system must write them out to disk, introducing a potential bottleneck.
    • Writing data structures to disk gives Hadoop a convenient way to checkpoint the output of intermediate map jobs, improving fault tolerance
    • However, it adds significant performance overhead!
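The contrast between the two styles can be sketched on a single machine. In the first function, records flow straight from producer to consumer; in the second, the producer's output is first written to a temporary file and then read back by the consumer. The record shape, the function names, and the use of JSON lines in a temporary file are assumptions for illustration, not how either class of system actually stores intermediate data.

```python
import json
import tempfile


def producer(count):
    """Produce intermediate records one at a time."""
    for i in range(count):
        yield {"id": i, "value": i * i}


def pipelined_total(count):
    """DBMS style: records stream from producer to consumer, never touching disk."""
    return sum(record["value"] for record in producer(count))


def materialized_total(count):
    """MR style: intermediate results are written to disk, then pulled by the consumer."""
    with tempfile.TemporaryFile(mode="w+") as spill:
        for record in producer(count):
            spill.write(json.dumps(record) + "\n")
        # The file on disk acts as a checkpoint: the consumer could be
        # restarted from here without re-running the producer.
        spill.seek(0)
        return sum(json.loads(line)["value"] for line in spill)


if __name__ == "__main__":
    print(pipelined_total(100), materialized_total(100))
```

Both functions return the same total; the second pays for an extra write and read of every intermediate record, which is the overhead the slide refers to.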

MapReduce and Parallel DBMS systems: Results analysis
• Column-oriented storage
  • In a column store-based database (such as Vertica), the system reads only the attributes necessary for solving the user query.
  • This limited need for reading data represents a considerable performance advantage over traditional, row-stored databases, where the system reads all attributes off the disk.
  • DBMS-X and Hadoop/HDFS are both essentially row stores, while Vertica is a column store, giving Vertica a significant advantage over the other two systems in the benchmark
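A toy model makes the difference visible by counting how many attribute values each layout has to read to answer the same query. The in-memory lists and dictionaries below stand in for on-disk layouts, and the table contents are made up; this is a sketch of the idea, not of how Vertica or any other system is implemented.

```python
def row_store_sum(rows, column):
    """Sum one column in a row layout; every attribute of every row is read."""
    total = 0
    values_read = 0
    for row in rows:
        values_read += len(row)  # the whole row comes off disk
        total += row[column]
    return total, values_read


def column_store_sum(columns, column):
    """Sum one column in a column layout; only that attribute is read."""
    values = columns[column]
    return sum(values), len(values)


if __name__ == "__main__":
    rows = [
        {"url": "a.example", "page_rank": 3, "ad_revenue": 10},
        {"url": "b.example", "page_rank": 7, "ad_revenue": 5},
    ]
    columns = {name: [row[name] for row in rows] for name in rows[0]}
    print(row_store_sum(rows, "ad_revenue"))
    print(column_store_sum(columns, "ad_revenue"))
```

Both layouts give the same sum, but the row layout reads three values per record while the column layout reads one.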

MapReduce and Parallel DBMS systems: Conclusions
• Most of the architectural differences between the two systems are the result of the different focuses of the two classes of system.
• Parallel DBMSs excel at efficient querying of large data sets
• MR-style systems excel at complex analytics and ETL tasks.
• MR systems are also more scalable, faster to learn and install, and much cheaper, both in software COGS and in the ability to utilize legacy machines, deployment environments, etc.
• The two technologies are complementary, and MR-style systems are expected to perform ETL and live directly upstream from DBMSs.

MapReduce: Questions?
