
Advanced Hadoop Course: HBase Database Applications (v0.20)

王耀聰 (Jazz@nchc.org.tw), 陳威宇 (waue@nchc.org.tw)

RDBMS / Databases

- Relational Database Management System (RDBMS)
  - Oracle, IBM DB2, SQL Server, MySQL, ...

Simplifying the RDBMS

- RDBMS -> key-value database
  - Strip away the features that are not needed until only a key-value architecture remains
    - GET(key)
    - SET(key, value)
    - DELETE(key)
- Similar to Excel
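The three operations listed above can be sketched in a few lines of plain Java. This is only an illustration of the key-value idea, not HBase code: the class name `KeyValueStoreSketch` and the choice of `String` keys and values are assumptions made for the example.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical in-memory key-value store (illustrative only, not an HBase API).
class KeyValueStoreSketch {
    private final Map<String, String> data = new HashMap<>();

    // GET(key): returns the stored value, or null if the key is absent.
    String get(String key) {
        return data.get(key);
    }

    // SET(key, value): inserts or overwrites the value for the key.
    void set(String key, String value) {
        data.put(key, value);
    }

    // DELETE(key): removes the key if it is present.
    void delete(String key) {
        data.remove(key);
    }

    public static void main(String[] args) {
        KeyValueStoreSketch store = new KeyValueStoreSketch();
        store.set("user:1", "Alice");
        System.out.println(store.get("user:1")); // prints "Alice"
        store.delete("user:1");
        System.out.println(store.get("user:1")); // prints "null"
    }
}
```

Everything a relational database adds on top of this (joins, schemas, transactions) is what the simplification gives up.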

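Distributed Key-Value Systems

- Key-value database -> distributed key-value database
- Strengthens the scalability of the key-value architecture, so that adding machines adds capacity and bandwidth
- Well suited to managing large volumes of data spread across many hosts
- Commonly referred to as NoSQL databases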

Common NoSQL Databases

- Open source
  - HBase (Yahoo!)
  - Cassandra (Facebook)
  - MongoDB
  - CouchDB (IBM)
  - SimpleDB (Amazon)
- Commercial
  - BigTable (Google)

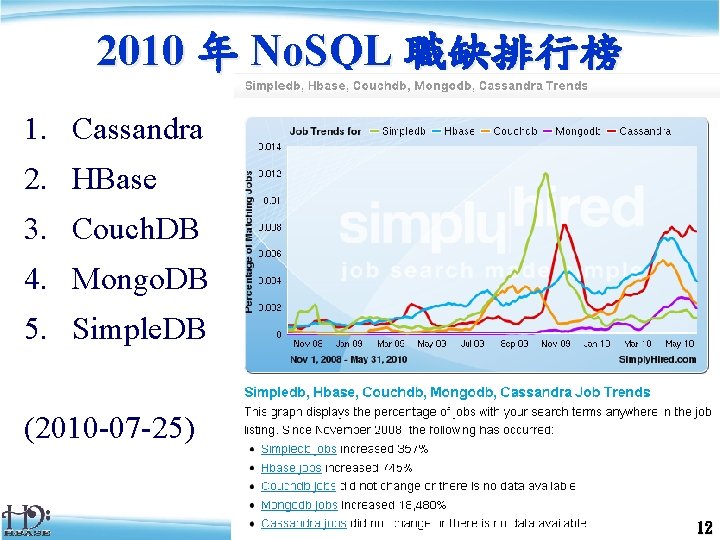

2010 NoSQL Job Openings Ranking (as of 2010-07-25)

1. Cassandra
2. HBase
3. CouchDB
4. MongoDB
5. SimpleDB

HBase Database Applications

Part 1: Introduction to HBase

How HBase came about, its why, what, and how, and its architecture.

HBase, the Hadoop database, is an open-source, distributed, versioned, column-oriented store modeled after Google's Bigtable. Use it when you need random, realtime read/write access to your Big Data.

Adapted from http://hbase.apache.org

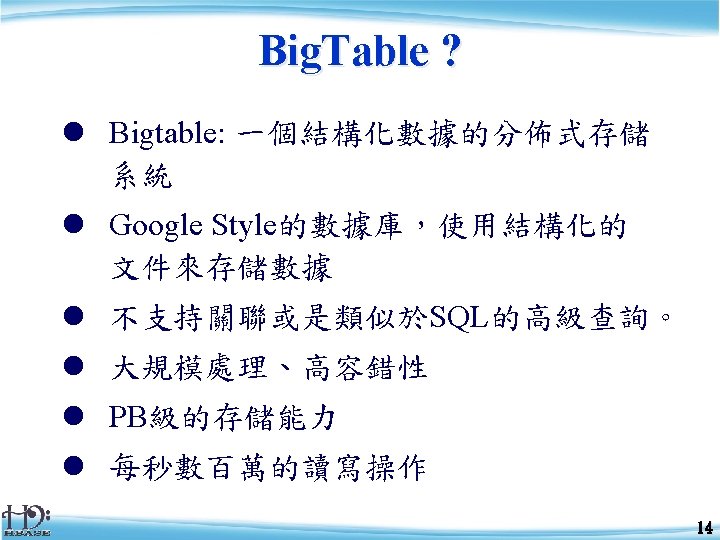

Development History

- Started by Chad Walters and Jim
- 2006.11: Google releases paper on BigTable
- 2007.2: Initial HBase prototype created as Hadoop contrib
- 2007.10: First usable HBase
- 2008.1: Hadoop becomes an Apache top-level project and HBase becomes a subproject
- 2010.3: HBase graduates from Hadoop sub-project to Apache Top Level Project
- 2010.7: HBase 0.20.6 released

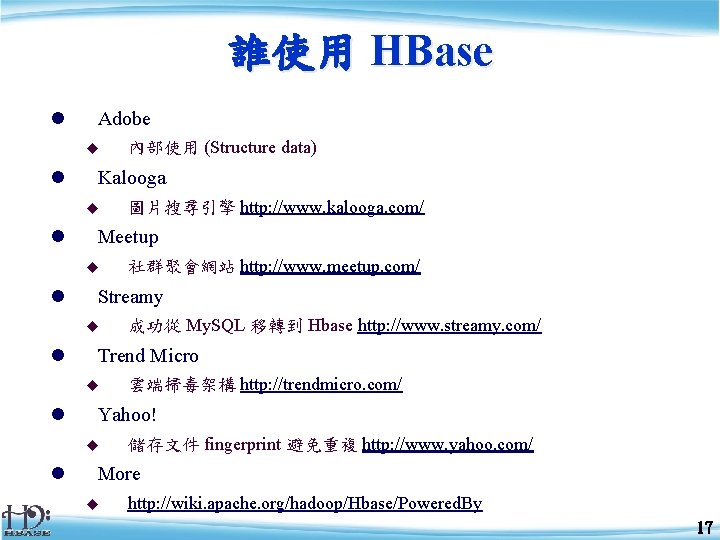

Who Uses HBase

- Adobe: internal use (structured data)
- Kalooga: image search engine, http://www.kalooga.com/
- Meetup: community meetup site, http://www.meetup.com/
- Streamy: successfully migrated from MySQL to HBase, http://www.streamy.com/
- Trend Micro: cloud-based antivirus architecture, http://trendmicro.com/
- Yahoo!: stores document fingerprints to avoid duplicates, http://www.yahoo.com/
- More: http://wiki.apache.org/hadoop/Hbase/PoweredBy

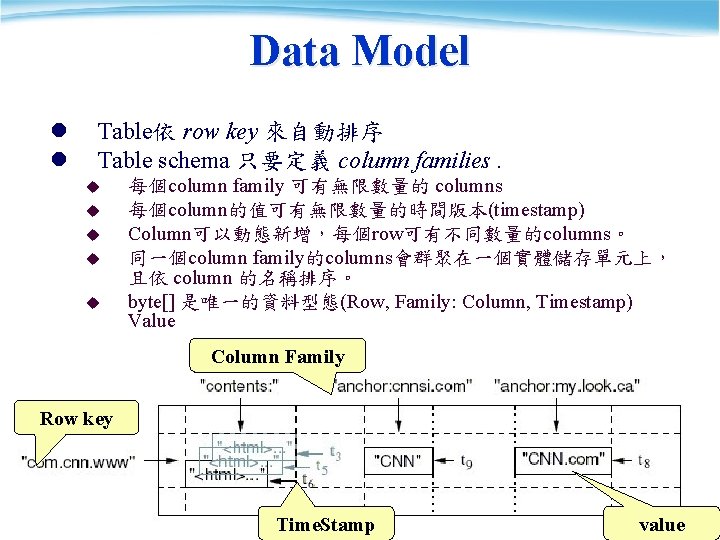

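Data Model

- A table is automatically sorted by row key
- A table schema only needs to define its column families
  - Each column family can hold an unlimited number of columns
  - Each column value can hold an unlimited number of timestamped versions
  - Columns can be added dynamically, and each row can have a different number of columns
  - Columns in the same column family are clustered in one physical storage unit and sorted by column name
- byte[] is the only data type
- (Row, Family:Column, Timestamp) -> Value

Figure labels: row key, column family, timestamp, value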
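The mapping (Row, Family:Column, Timestamp) -> Value can be pictured as nested sorted maps. The sketch below is a plain-Java model of that idea, not the HBase implementation: the class name `DataModelSketch` is hypothetical, and `String` stands in for `byte[]` purely for readability.

```java
import java.util.Collections;
import java.util.NavigableMap;
import java.util.TreeMap;

// Hypothetical model of the HBase data model using nested sorted maps.
// Assumption: String replaces byte[] so that the example is easy to read.
class DataModelSketch {
    // row key -> "family:qualifier" -> timestamp (newest first) -> value
    private final NavigableMap<String, NavigableMap<String, NavigableMap<Long, String>>> rows =
            new TreeMap<String, NavigableMap<String, NavigableMap<Long, String>>>();

    // Stores one version of a cell; columns are created on demand.
    void put(String row, String column, long timestamp, String value) {
        rows.computeIfAbsent(row, r -> new TreeMap<String, NavigableMap<Long, String>>())
            .computeIfAbsent(column, c -> new TreeMap<Long, String>(Collections.reverseOrder()))
            .put(timestamp, value);
    }

    // Returns the newest version of a cell, or null if the cell does not exist.
    String getLatest(String row, String column) {
        NavigableMap<String, NavigableMap<Long, String>> columns = rows.get(row);
        if (columns == null || !columns.containsKey(column)) {
            return null;
        }
        return columns.get(column).firstEntry().getValue();
    }

    // Rows are kept sorted by row key, so the first key is the smallest one.
    String firstRowKey() {
        return rows.firstKey();
    }

    public static void main(String[] args) {
        DataModelSketch table = new DataModelSketch();
        table.put("row2", "data:a", 1L, "old");
        table.put("row2", "data:a", 2L, "new");
        table.put("row1", "data:b", 1L, "x");
        System.out.println(table.firstRowKey());               // prints "row1"
        System.out.println(table.getLatest("row2", "data:a")); // prints "new"
    }
}
```

Note that the two rows hold different columns, which matches the point that each row can have a different number of columns.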

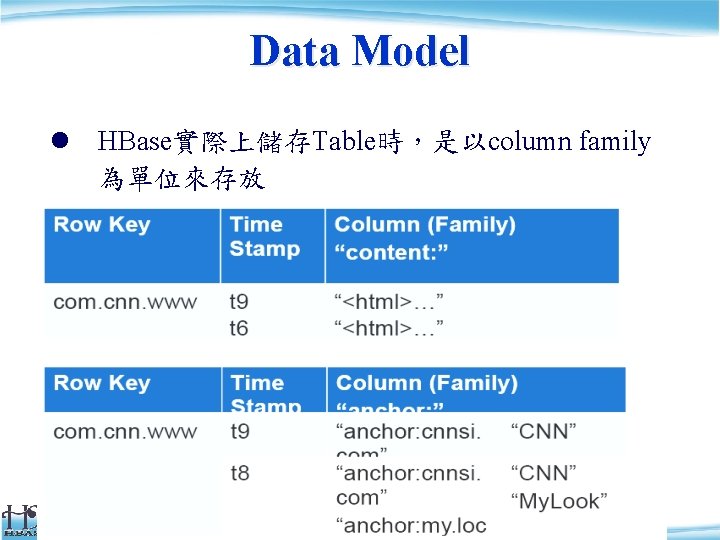

Data Model

- When HBase physically stores a table, it stores the data one column family at a time

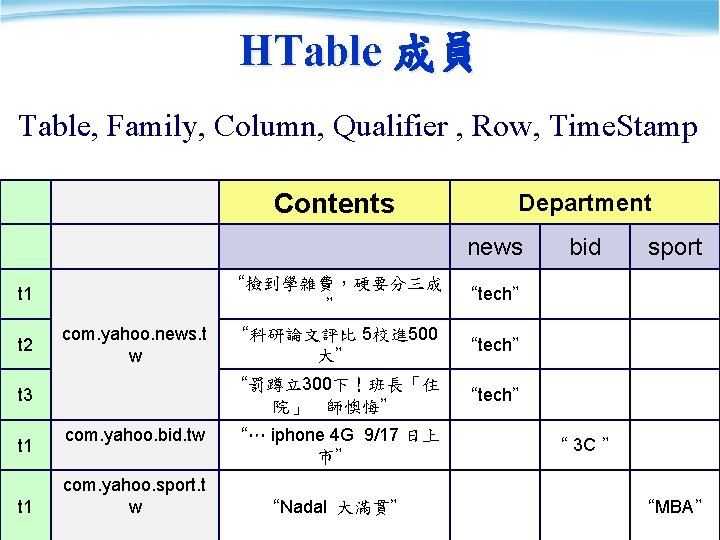

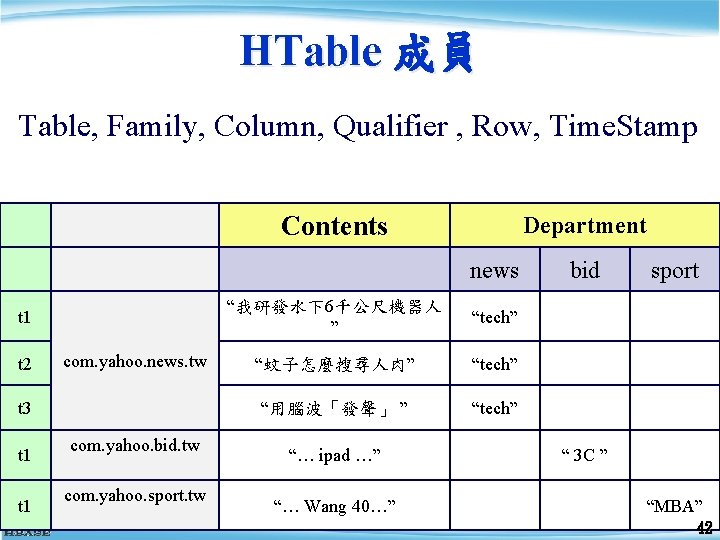

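HTable Members

Table, Family, Column, Qualifier, Row, Timestamp

Example table from the slide figure, with two column families (Contents and Department):

- Qualifiers: news, bid, sport
- Row keys: com.yahoo.news.tw, com.yahoo.bid.tw, com.yahoo.sport.tw
- Timestamps: t1, t2, t3
- Contents values (news headlines): "Found tuition money, insists on a 30% cut", "Research paper ranking: 5 schools in the top 500", "Punished with 300 squats! Class leader hospitalized, teacher regrets", "... iPhone 4G launches 9/17", "Nadal Grand Slam"
- Department values: "tech", "3C", "MBA"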

Architecture of HBase Combined with Hadoop

From: http://www.larsgeorge.com/2009/10/hbase-architecture-101-storage.html

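HBase 0.20 Features

- Solves the single point of failure problem
  - Ex: Hadoop NameNode failure
- Configuration changes and minor version upgrades can be applied with a rolling restart
- Random read/write (random access) performance comparable to MySQL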

Installation

Hadoop must already be installed.

    $ wget http://ftp.twaren.net/Unix/Web/apache/hadoop/hbase/hbase-0.20.6/hbase-0.20.6.tar.gz
    $ sudo tar -zxvf hbase-*.tar.gz -C /opt/
    $ sudo ln -sf /opt/hbase-0.20.6 /opt/hbase
    $ sudo chown -R $USER:$USER /opt/hbase

System Environment Settings

    $ vim /opt/hbase/conf/hbase-env.sh

    # Basic settings
    export JAVA_HOME=/usr/lib/jvm/java-6-sun
    export HADOOP_HOME=/opt/hadoop
    export HBASE_HOME=/opt/hbase
    export HBASE_LOG_DIR=/var/hadoop/hbase-logs
    export HBASE_PID_DIR=/var/hadoop/hbase-pids
    export HBASE_CLASSPATH=$HBASE_CLASSPATH:/opt/hadoop/conf

    # Cluster options
    export HBASE_MANAGES_ZK=true

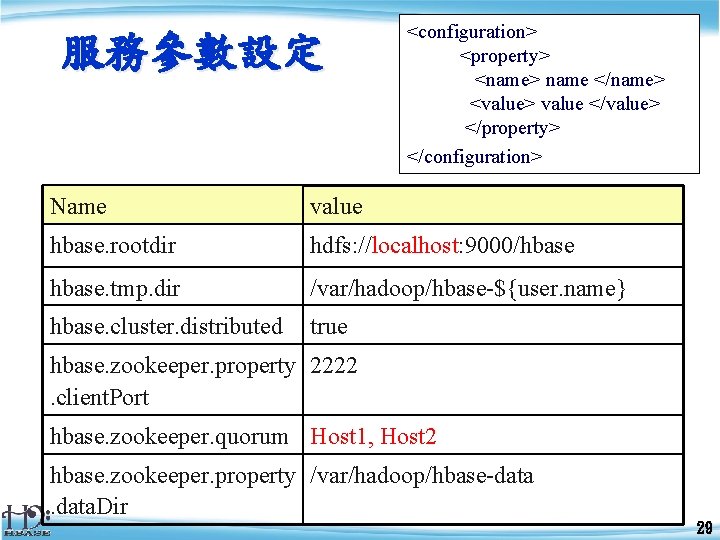

Service Parameter Settings

Each setting is written as a property element:

    <configuration>
      <property>
        <name> name </name>
        <value> value </value>
      </property>
    </configuration>

| Name | Value |
| --- | --- |
| hbase.rootdir | hdfs://localhost:9000/hbase |
| hbase.tmp.dir | /var/hadoop/hbase-${user.name} |
| hbase.cluster.distributed | true |
| hbase.zookeeper.property.clientPort | 2222 |
| hbase.zookeeper.quorum | Host1, Host2 |
| hbase.zookeeper.property.dataDir | /var/hadoop/hbase-data |

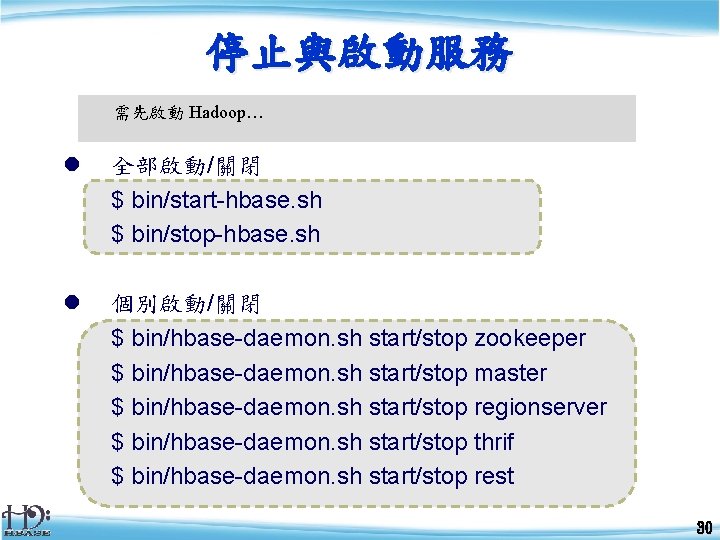

Starting and Stopping Services

Hadoop must be started first.

Start or stop everything:

    $ bin/start-hbase.sh
    $ bin/stop-hbase.sh

Start or stop individual services (use either start or stop):

    $ bin/hbase-daemon.sh start|stop zookeeper
    $ bin/hbase-daemon.sh start|stop master
    $ bin/hbase-daemon.sh start|stop regionserver
    $ bin/hbase-daemon.sh start|stop thrift
    $ bin/hbase-daemon.sh start|stop rest

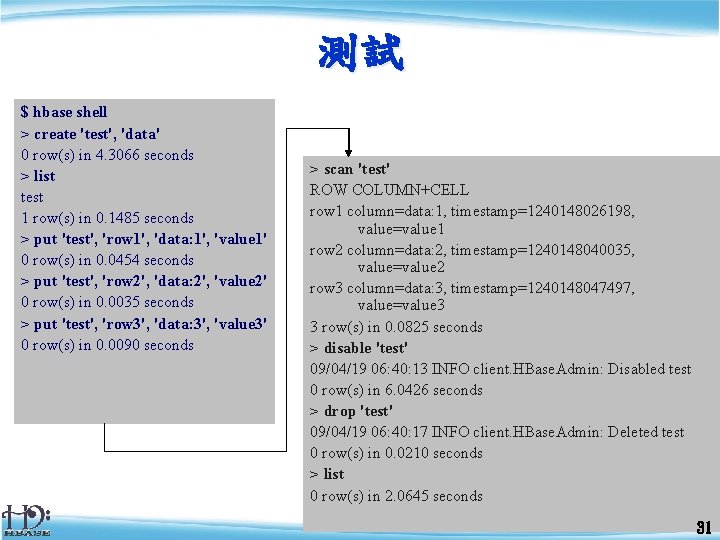

Testing

    $ hbase shell
    > create 'test', 'data'
    0 row(s) in 4.3066 seconds
    > list
    test
    1 row(s) in 0.1485 seconds
    > put 'test', 'row1', 'data:1', 'value1'
    0 row(s) in 0.0454 seconds
    > put 'test', 'row2', 'data:2', 'value2'
    0 row(s) in 0.0035 seconds
    > put 'test', 'row3', 'data:3', 'value3'
    0 row(s) in 0.0090 seconds
    > scan 'test'
    ROW     COLUMN+CELL
    row1    column=data:1, timestamp=1240148026198, value=value1
    row2    column=data:2, timestamp=1240148040035, value=value2
    row3    column=data:3, timestamp=1240148047497, value=value3
    3 row(s) in 0.0825 seconds
    > disable 'test'
    09/04/19 06:40:13 INFO client.HBaseAdmin: Disabled test
    0 row(s) in 6.0426 seconds
    > drop 'test'
    09/04/19 06:40:17 INFO client.HBaseAdmin: Deleted test
    0 row(s) in 0.0210 seconds
    > list
    0 row(s) in 2.0645 seconds


Ways to Connect to HBase

- Java client
  - get(byte[] row, byte[] column, long timestamp, int versions);
- Non-Java clients
  - Thrift server hosting an HBase client instance
    - Sample Ruby, C++, and Java (via Thrift) clients
  - REST server hosting an HBase client
- TableInputFormat / TableOutputFormat for MapReduce
  - HBase as MR source or sink
- HBase Shell
  - JRuby IRB with a "DSL" that adds get, scan, and admin commands
  - ./bin/hbase shell YOUR_SCRIPT

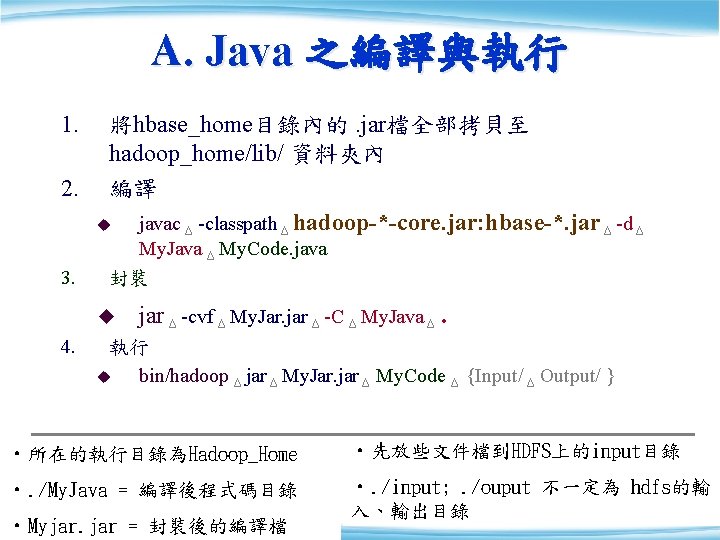

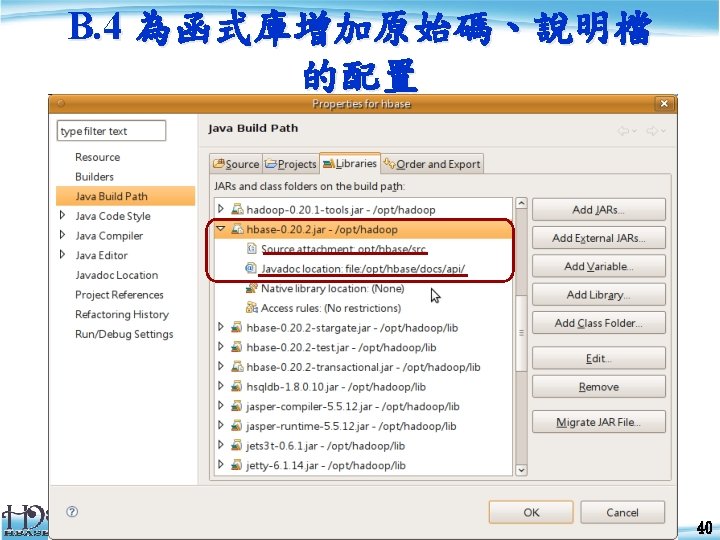

A. Compiling and Running Java

1. Copy all .jar files from the hbase_home directory into hadoop_home/lib/

2. Compile

       javac -classpath hadoop-*-core.jar:hbase-*.jar -d MyJava MyCode.java

3. Package

       jar -cvf MyJar.jar -C MyJava .

4. Run

       bin/hadoop jar MyJar.jar MyCode {Input/ Output/}

Notes:

- The working directory is Hadoop_Home
- Put some text files into the input directory on HDFS first
- ./MyJava = directory for the compiled code
- MyJar.jar = the packaged build
- ./input and ./output are not necessarily the HDFS input and output directories

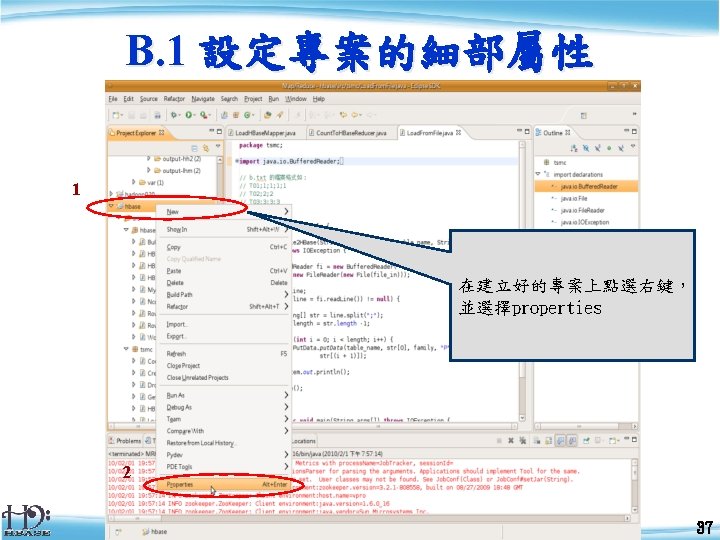

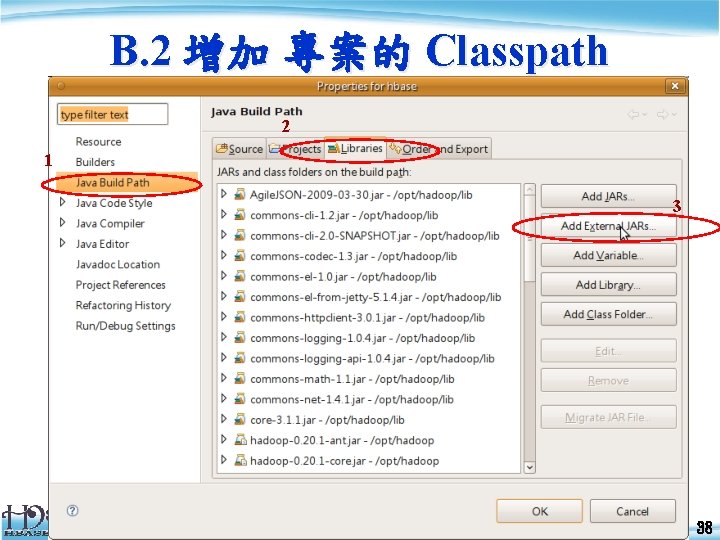

B.2 Adding to the Project's Classpath

(The slide shows a screenshot with the steps numbered 1, 2, 3.)

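HTable Members

Table, Family, Column, Qualifier, Row, Timestamp

Example table from the slide figure, with two column families (Contents and Department):

- Qualifiers: news, bid, sport
- Row keys: com.yahoo.news.tw, com.yahoo.bid.tw, com.yahoo.sport.tw
- Timestamps: t1, t2, t3
- Contents values (news headlines): "Taiwan develops a robot for 6,000 m underwater", "How mosquitoes track down human flesh", "Speaking with brainwaves", "... ipad ...", "... Wang 40 ..."
- Department values: "tech", "3C", "MBA"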

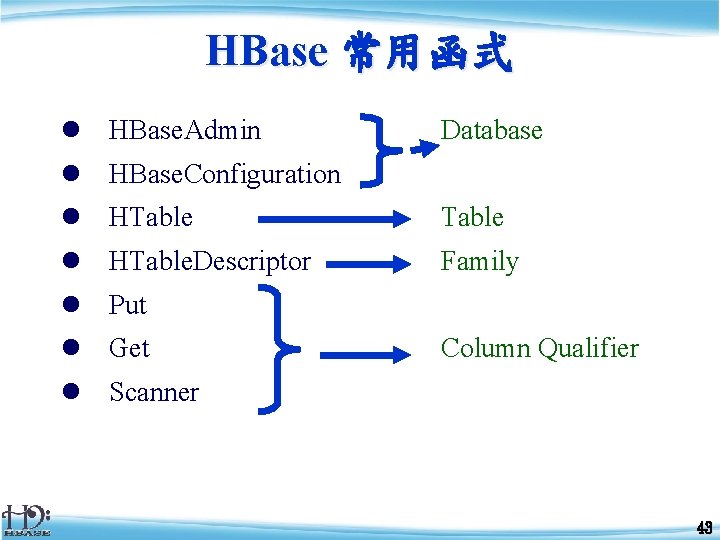

Commonly Used HBase Classes

- Database level: HBaseAdmin, HBaseConfiguration
- Family level: HTableDescriptor
- Column qualifier level: Put, Get, Scanner

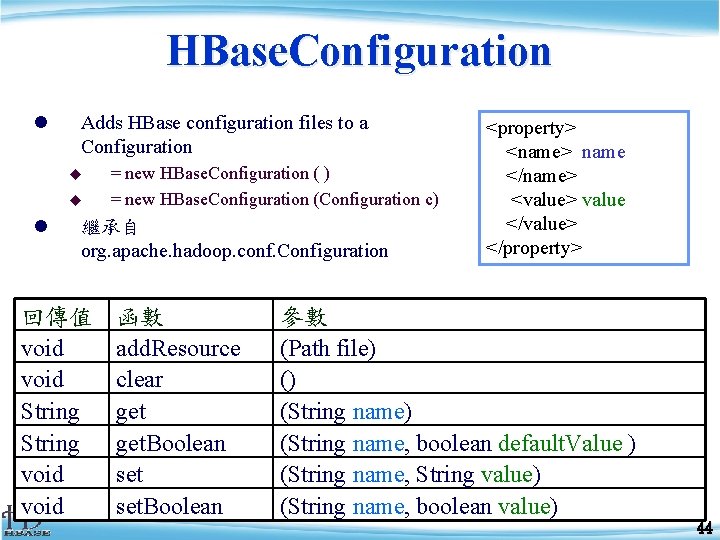

HBaseConfiguration

- Adds HBase configuration files to a Configuration
  - = new HBaseConfiguration()
  - = new HBaseConfiguration(Configuration c)
- Extends org.apache.hadoop.conf.Configuration
- Each setting corresponds to a property element:

      <property>
        <name> name </name>
        <value> value </value>
      </property>

| Return type | Method | Parameters |
| --- | --- | --- |
| void | addResource | (Path file) |
| void | clear | () |
| String | get | (String name) |
| boolean | getBoolean | (String name, boolean defaultValue) |
| void | set | (String name, String value) |
| void | setBoolean | (String name, boolean value) |

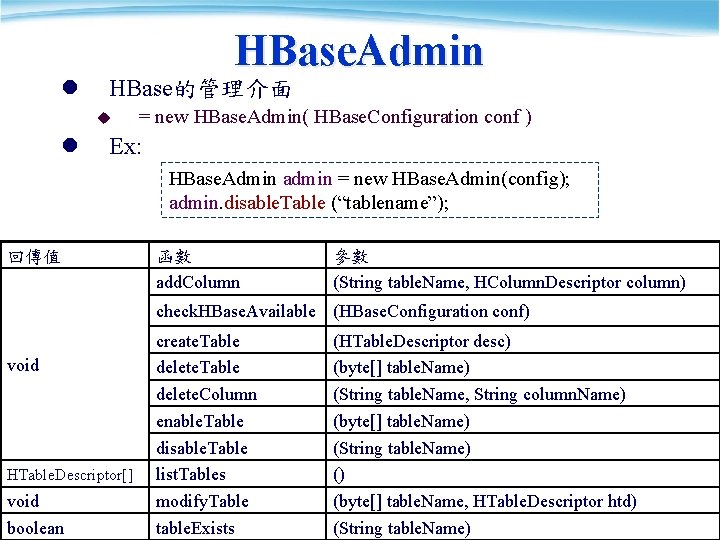

HBaseAdmin

- The administrative interface to HBase
  - = new HBaseAdmin(HBaseConfiguration conf)
- Ex:

      HBaseAdmin admin = new HBaseAdmin(config);
      admin.disableTable("tablename");

| Return type | Method | Parameters |
| --- | --- | --- |
| void | addColumn | (String tableName, HColumnDescriptor column) |
| void | checkHBaseAvailable | (HBaseConfiguration conf) |
| void | createTable | (HTableDescriptor desc) |
| void | deleteTable | (byte[] tableName) |
| void | deleteColumn | (String tableName, String columnName) |
| void | enableTable | (byte[] tableName) |
| void | disableTable | (String tableName) |
| HTableDescriptor[] | listTables | () |
| void | modifyTable | (byte[] tableName, HTableDescriptor htd) |
| boolean | tableExists | (String tableName) |

HTableDescriptor

- HTableDescriptor contains the name of an HTable and its column families.
  - = new HTableDescriptor()
  - = new HTableDescriptor(String name)
- Constant values
  - org.apache.hadoop.hbase.HTableDescriptor.TABLE_DESCRIPTOR_VERSION
- Ex:

      HTableDescriptor htd = new HTableDescriptor(tablename);
      htd.addFamily(new HColumnDescriptor("Family"));

| Return type | Method | Parameters |
| --- | --- | --- |
| void | addFamily | (HColumnDescriptor family) |
| HColumnDescriptor | removeFamily | (byte[] column) |
| byte[] | getName | () = table name |
| byte[] | getValue | (byte[] key) = value for the key |
| void | setValue | (String key, String value) |

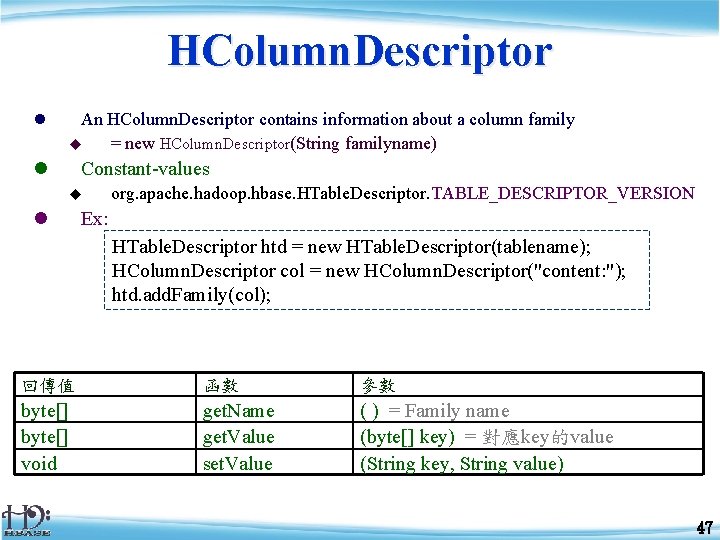

HColumnDescriptor

- An HColumnDescriptor contains information about a column family
  - = new HColumnDescriptor(String familyname)
- Constant values
  - org.apache.hadoop.hbase.HTableDescriptor.TABLE_DESCRIPTOR_VERSION
- Ex:

      HTableDescriptor htd = new HTableDescriptor(tablename);
      HColumnDescriptor col = new HColumnDescriptor("content:");
      htd.addFamily(col);

| Return type | Method | Parameters |
| --- | --- | --- |
| byte[] | getName | () = family name |
| byte[] | getValue | (byte[] key) = value for the key |
| void | setValue | (String key, String value) |

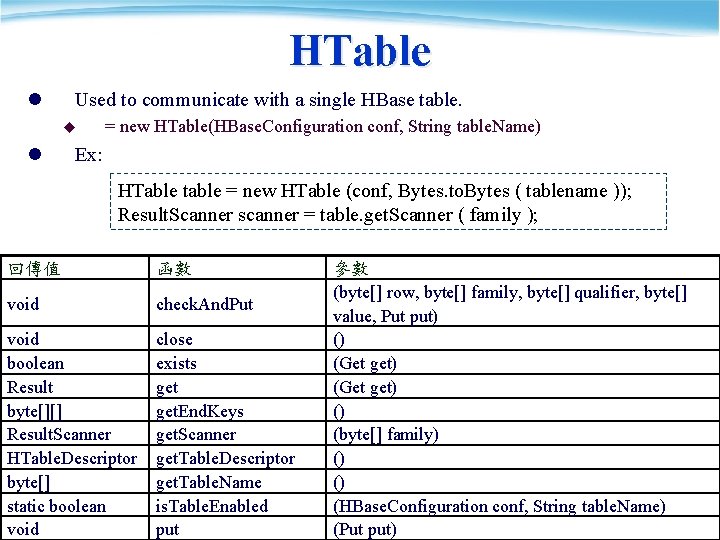

HTable

- Used to communicate with a single HBase table.
  - = new HTable(HBaseConfiguration conf, String tableName)
- Ex:

      HTable table = new HTable(conf, Bytes.toBytes(tablename));
      ResultScanner scanner = table.getScanner(family);

| Return type | Method | Parameters |
| --- | --- | --- |
| boolean | checkAndPut | (byte[] row, byte[] family, byte[] qualifier, byte[] value, Put put) |
| void | close | () |
| boolean | exists | (Get get) |
| Result | get | (Get get) |
| byte[][] | getEndKeys | () |
| ResultScanner | getScanner | (byte[] family) |
| HTableDescriptor | getTableDescriptor | () |
| byte[] | getTableName | () |
| static boolean | isTableEnabled | (HBaseConfiguration conf, String tableName) |
| void | put | (Put put) |


Put

- Used to perform Put operations for a single row.
  - = new Put(byte[] row)
  - = new Put(byte[] row, RowLock rowLock)
- Ex:

      HTable table = new HTable(conf, Bytes.toBytes(tablename));
      Put p = new Put(brow);
      p.add(family, qualifier, value);
      table.put(p);

| Return type | Method | Parameters |
| --- | --- | --- |
| Put | add | (byte[] family, byte[] qualifier, byte[] value) |
| Put | add | (byte[] column, long ts, byte[] value) |
| byte[] | getRow | () |
| RowLock | getRowLock | () |
| long | getTimeStamp | () |
| boolean | isEmpty | () |
| Put | setTimeStamp | (long timestamp) |

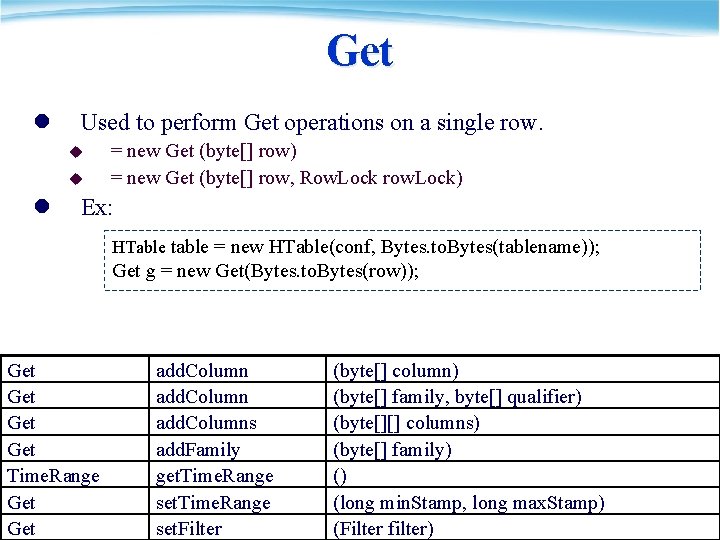

Get

- Used to perform Get operations on a single row.
  - = new Get(byte[] row)
  - = new Get(byte[] row, RowLock rowLock)
- Ex:

      HTable table = new HTable(conf, Bytes.toBytes(tablename));
      Get g = new Get(Bytes.toBytes(row));

| Return type | Method | Parameters |
| --- | --- | --- |
| Get | addColumn | (byte[] column) |
| Get | addColumn | (byte[] family, byte[] qualifier) |
| Get | addColumns | (byte[][] columns) |
| Get | addFamily | (byte[] family) |
| TimeRange | getTimeRange | () |
| Get | setTimeRange | (long minStamp, long maxStamp) |
| Get | setFilter | (Filter filter) |

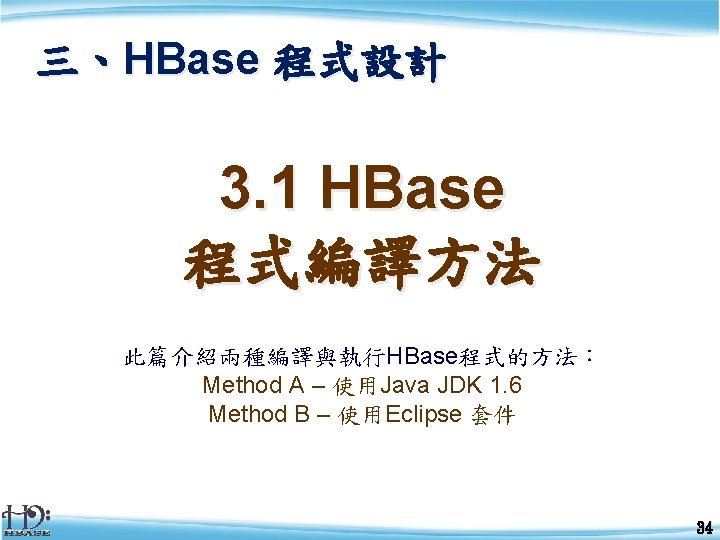

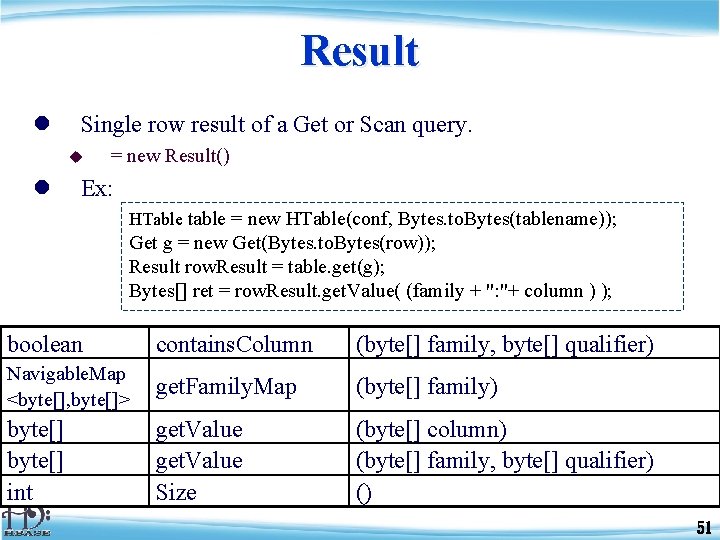

Result

- Single row result of a Get or Scan query.
  - = new Result()
- Ex:

      HTable table = new HTable(conf, Bytes.toBytes(tablename));
      Get g = new Get(Bytes.toBytes(row));
      Result rowResult = table.get(g);
      byte[] ret = rowResult.getValue(Bytes.toBytes(family + ":" + column));

| Return type | Method | Parameters |
| --- | --- | --- |
| boolean | containsColumn | (byte[] family, byte[] qualifier) |
| NavigableMap<byte[], byte[]> | getFamilyMap | (byte[] family) |
| byte[] | getValue | (byte[] column) |
| byte[] | getValue | (byte[] family, byte[] qualifier) |
| int | size | () |

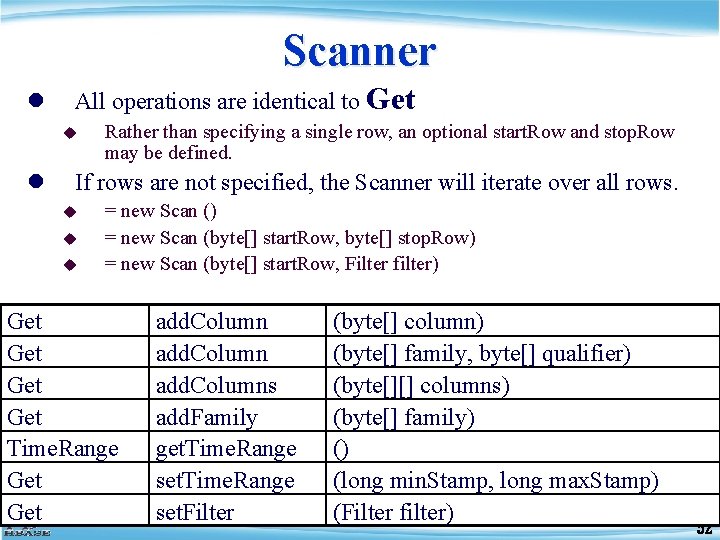

Scanner

- All operations are identical to Get
  - Rather than specifying a single row, an optional startRow and stopRow may be defined. If rows are not specified, the Scanner will iterate over all rows.
  - = new Scan()
  - = new Scan(byte[] startRow, byte[] stopRow)
  - = new Scan(byte[] startRow, Filter filter)

| Return type | Method | Parameters |
| --- | --- | --- |
| Scan | addColumn | (byte[] column) |
| Scan | addColumn | (byte[] family, byte[] qualifier) |
| Scan | addColumns | (byte[][] columns) |
| Scan | addFamily | (byte[] family) |
| TimeRange | getTimeRange | () |
| Scan | setTimeRange | (long minStamp, long maxStamp) |
| Scan | setFilter | (Filter filter) |
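Because rows are kept sorted by row key, a scan with an optional start and stop row amounts to a range view over a sorted map. The plain-Java sketch below models that behaviour; it is not the HBase `Scan` class. The class name `ScanRangeSketch` is hypothetical, and the inclusive-start, exclusive-stop convention is stated here as an assumption about how the range is interpreted.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.NavigableMap;
import java.util.TreeMap;

// Hypothetical model of a range scan over row keys held in sorted order.
class ScanRangeSketch {
    // Returns the row keys in [startRow, stopRow); a null bound means "unbounded".
    static List<String> scan(NavigableMap<String, String> table, String startRow, String stopRow) {
        NavigableMap<String, String> view = table;
        if (startRow != null && stopRow != null) {
            view = table.subMap(startRow, true, stopRow, false);
        } else if (startRow != null) {
            view = table.tailMap(startRow, true);
        } else if (stopRow != null) {
            view = table.headMap(stopRow, false);
        }
        return new ArrayList<>(view.keySet());
    }

    public static void main(String[] args) {
        NavigableMap<String, String> table = new TreeMap<>();
        for (int i = 1; i <= 5; i++) {
            table.put("row" + i, "value" + i);
        }
        System.out.println(scan(table, "row2", "row4")); // prints "[row2, row3]"
        System.out.println(scan(table, null, null).size()); // prints "5"
    }
}
```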

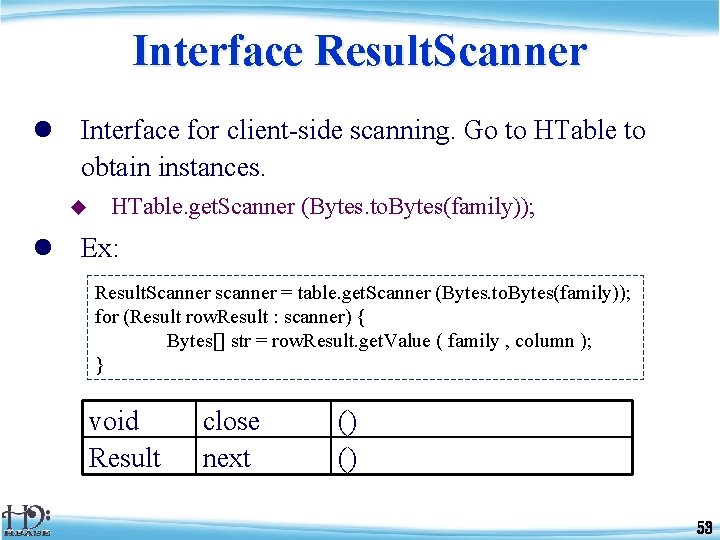

Interface ResultScanner

- Interface for client-side scanning. Go to HTable to obtain instances.
  - HTable.getScanner(Bytes.toBytes(family));
- Ex:

      ResultScanner scanner = table.getScanner(Bytes.toBytes(family));
      for (Result rowResult : scanner) {
          byte[] str = rowResult.getValue(family, column);
      }

| Return type | Method | Parameters |
| --- | --- | --- |
| void | close | () |
| Result | next | () |

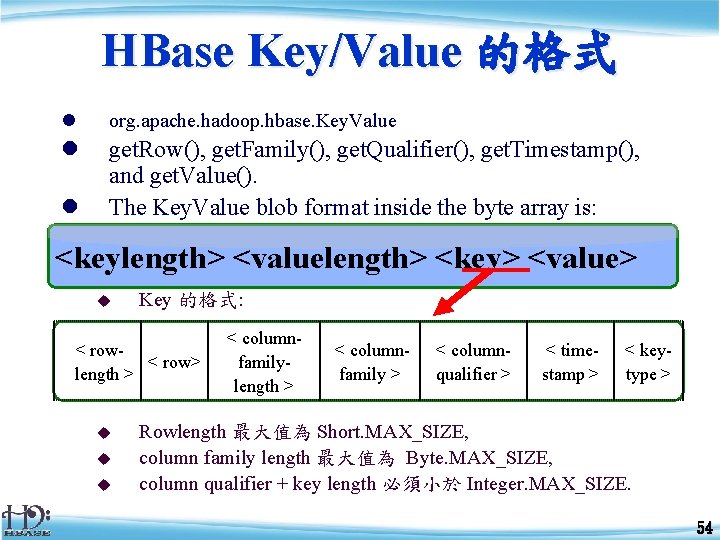

HBase KeyValue Format

- org.apache.hadoop.hbase.KeyValue
- Accessors: getRow(), getFamily(), getQualifier(), getTimestamp(), and getValue()
- The KeyValue blob format inside the byte array is:

      <keylength> <valuelength> <key> <value>

- Key format:

      <rowlength> <row> <columnfamilylength> <columnfamily> <columnqualifier> <timestamp> <keytype>

- Row length is at most Short.MAX_VALUE, column family length is at most Byte.MAX_VALUE, and column qualifier + key length must be less than Integer.MAX_VALUE.
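The layout above can be made concrete by serializing one cell with `java.nio.ByteBuffer`. This is a sketch and not the HBase `KeyValue` class: the class name `KeyValueLayoutSketch` is hypothetical, and the field widths (4-byte key and value lengths, 2-byte row length, 1-byte family length, 8-byte timestamp, 1-byte key type) are assumptions inferred from the stated size limits. The key type value `4` in the usage example is likewise only a placeholder.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

// Hypothetical encoder that follows the <keylength> <valuelength> <key> <value> layout.
class KeyValueLayoutSketch {
    static byte[] encode(byte[] row, byte[] family, byte[] qualifier,
                         long timestamp, byte keyType, byte[] value) {
        if (row.length > Short.MAX_VALUE) {
            throw new IllegalArgumentException("row too long");
        }
        if (family.length > Byte.MAX_VALUE) {
            throw new IllegalArgumentException("column family too long");
        }
        // Key = rowlength(2) + row + familylength(1) + family + qualifier + timestamp(8) + keytype(1)
        int keyLength = 2 + row.length + 1 + family.length + qualifier.length + 8 + 1;
        ByteBuffer buf = ByteBuffer.allocate(4 + 4 + keyLength + value.length);
        buf.putInt(keyLength);            // <keylength>
        buf.putInt(value.length);         // <valuelength>
        buf.putShort((short) row.length); // <rowlength>
        buf.put(row);                     // <row>
        buf.put((byte) family.length);    // <columnfamilylength>
        buf.put(family);                  // <columnfamily>
        buf.put(qualifier);               // <columnqualifier>
        buf.putLong(timestamp);           // <timestamp>
        buf.put(keyType);                 // <keytype>
        buf.put(value);                   // <value>
        return buf.array();
    }

    public static void main(String[] args) {
        byte[] blob = encode(
                "row1".getBytes(StandardCharsets.UTF_8),
                "data".getBytes(StandardCharsets.UTF_8),
                "1".getBytes(StandardCharsets.UTF_8),
                1240148026198L,
                (byte) 4, // placeholder key type, assumed for illustration
                "value1".getBytes(StandardCharsets.UTF_8));
        System.out.println(ByteBuffer.wrap(blob).getInt()); // key length: prints "21"
        System.out.println(blob.length);                    // total size: prints "35"
    }
}
```

The qualifier needs no length field of its own because its size follows from the key length minus the other fixed and length-prefixed parts.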

Example 1: Creating a Table (shell command)

create <table name>, {<family>, ...}

    $ hbase shell
    > create 'tablename', 'family1', 'family2', 'family3'
    0 row(s) in 4.0810 seconds
    > list
    tablename
    1 row(s) in 0.0190 seconds

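Example 1: Creating a Table (code)

    import java.io.IOException;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.HColumnDescriptor;
    import org.apache.hadoop.hbase.HTableDescriptor;
    import org.apache.hadoop.hbase.client.HBaseAdmin;

    public static void createHBaseTable(String tablename, String familyname)
            throws IOException {
        HBaseConfiguration config = new HBaseConfiguration();
        HBaseAdmin admin = new HBaseAdmin(config);
        HTableDescriptor htd = new HTableDescriptor(tablename);
        HColumnDescriptor col = new HColumnDescriptor(familyname);
        htd.addFamily(col);
        // Do nothing if the table already exists.
        if (admin.tableExists(tablename)) {
            return;
        }
        admin.createTable(htd);
    }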


Example 2: Putting Data into a Column (shell command)

put 'table name', 'row', 'column', 'value', ['timestamp']

    > put 'tablename', 'row1', 'family1:qua1', 'value'
    0 row(s) in 0.0030 seconds

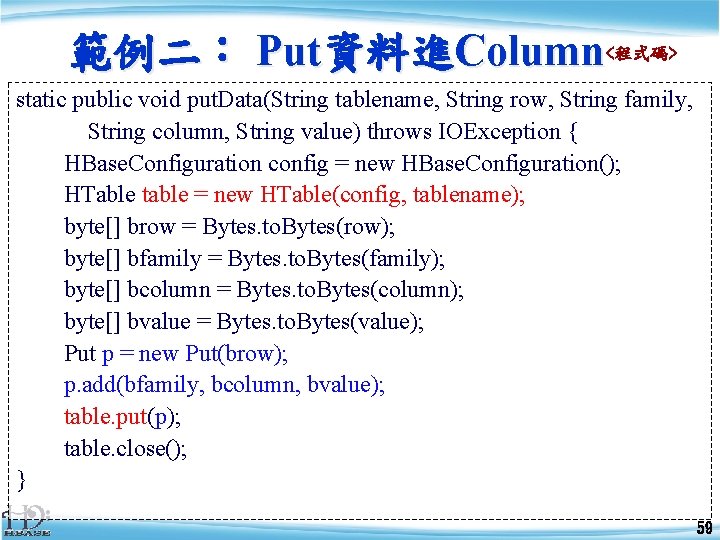

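Example 2: Putting Data into a Column (code)

    import java.io.IOException;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.client.HTable;
    import org.apache.hadoop.hbase.client.Put;
    import org.apache.hadoop.hbase.util.Bytes;

    static public void putData(String tablename, String row, String family,
            String column, String value) throws IOException {
        HBaseConfiguration config = new HBaseConfiguration();
        HTable table = new HTable(config, tablename);
        // HBase stores everything as byte[], so convert each String first.
        byte[] brow = Bytes.toBytes(row);
        byte[] bfamily = Bytes.toBytes(family);
        byte[] bcolumn = Bytes.toBytes(column);
        byte[] bvalue = Bytes.toBytes(value);
        Put p = new Put(brow);
        p.add(bfamily, bcolumn, bvalue);
        table.put(p);
        table.close();
    }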

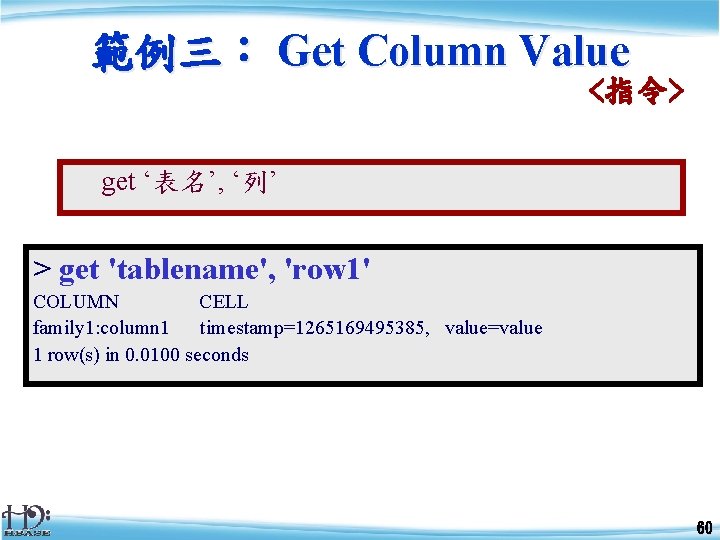

Example 3: Getting a Column Value (shell command)

get 'table name', 'row'

    > get 'tablename', 'row1'
    COLUMN             CELL
    family1:column1    timestamp=1265169495385, value=value
    1 row(s) in 0.0100 seconds

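Example 3: Getting a Column Value (code)

    import java.io.IOException;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.client.Get;
    import org.apache.hadoop.hbase.client.HTable;
    import org.apache.hadoop.hbase.client.Result;
    import org.apache.hadoop.hbase.util.Bytes;

    String getColumn(String tablename, String row, String family, String column)
            throws IOException {
        HBaseConfiguration conf = new HBaseConfiguration();
        HTable table = new HTable(conf, Bytes.toBytes(tablename));
        Get g = new Get(Bytes.toBytes(row));
        Result rowResult = table.get(g);
        // The column is addressed as "family:qualifier".
        return Bytes.toString(rowResult.getValue(Bytes.toBytes(family + ":" + column)));
    }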

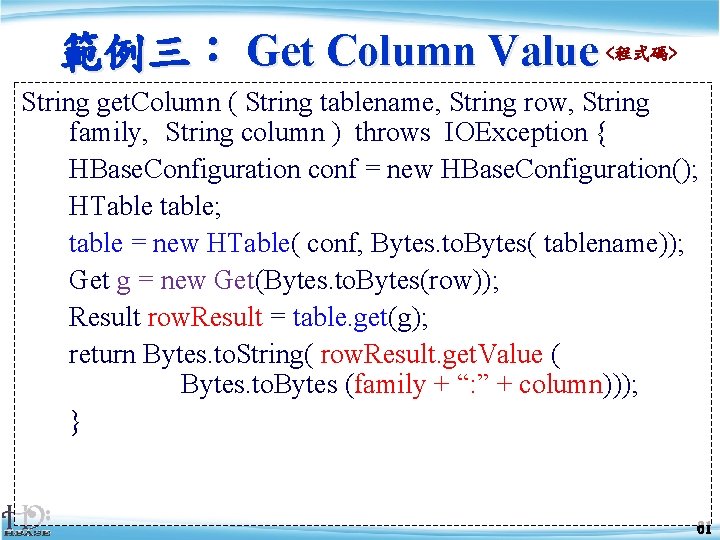

Example 4: Scanning All Columns (shell command)

scan 'table name'

    > scan 'tablename'
    ROW     COLUMN+CELL
    row1    column=family1:column1, timestamp=1265169415385, value=value1
    row2    column=family1:column1, timestamp=1263534411333, value=value2
    row3    column=family1:column1, timestamp=1263645465388, value=value3
    row4    column=family1:column1, timestamp=1264654615301, value=value4
    row5    column=family1:column1, timestamp=1265146569567, value=value5
    5 row(s) in 0.0100 seconds

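Example 4: Scanning All Columns (code)

    import java.io.IOException;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.client.HTable;
    import org.apache.hadoop.hbase.client.Result;
    import org.apache.hadoop.hbase.client.ResultScanner;
    import org.apache.hadoop.hbase.util.Bytes;

    static void ScanColumn(String tablename, String family, String column)
            throws IOException {
        HBaseConfiguration conf = new HBaseConfiguration();
        HTable table = new HTable(conf, Bytes.toBytes(tablename));
        ResultScanner scanner = table.getScanner(Bytes.toBytes(family));
        int i = 1;
        // Print the requested column of every row returned by the scanner.
        for (Result rowResult : scanner) {
            byte[] by = rowResult.getValue(Bytes.toBytes(family), Bytes.toBytes(column));
            String str = Bytes.toString(by);
            System.out.println("row " + i + " is \"" + str + "\"");
            i++;
        }
    }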

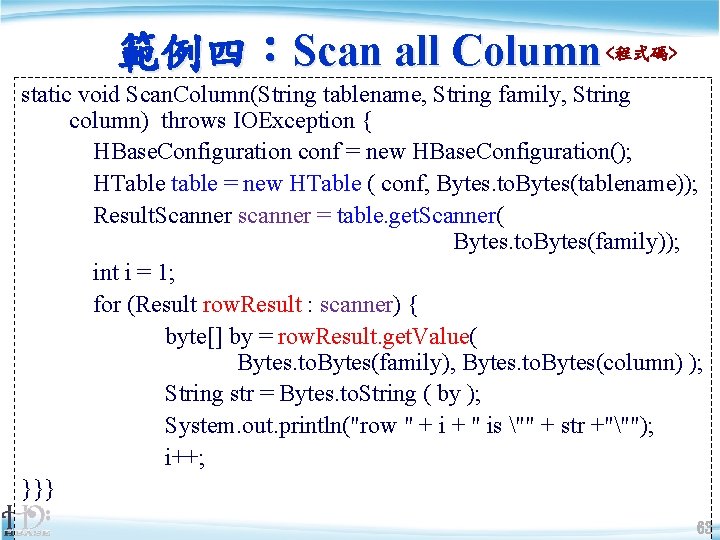

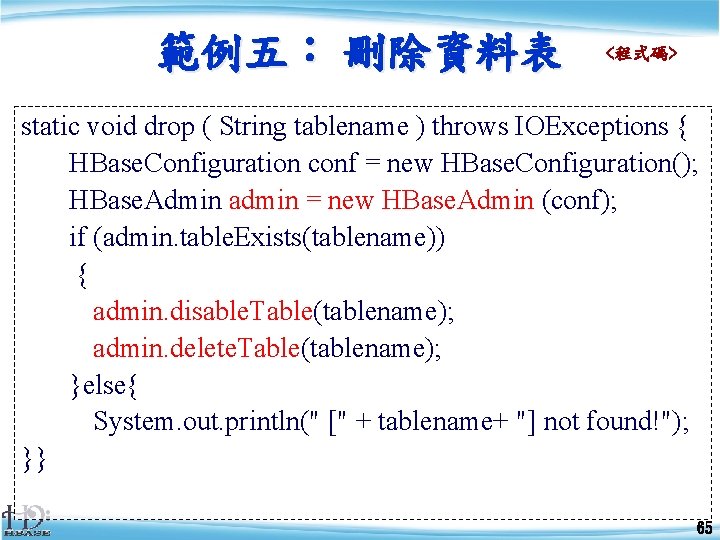

Example 5: Dropping a Table (shell command)

disable 'table name'
drop 'table name'

    > disable 'tablename'
    0 row(s) in 6.0890 seconds
    > drop 'tablename'
    0 row(s) in 0.0090 seconds
    0 row(s) in 0.0710 seconds

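Example 5: Dropping a Table (code)

    import java.io.IOException;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.client.HBaseAdmin;

    static void drop(String tablename) throws IOException {
        HBaseConfiguration conf = new HBaseConfiguration();
        HBaseAdmin admin = new HBaseAdmin(conf);
        if (admin.tableExists(tablename)) {
            // A table must be disabled before it can be deleted.
            admin.disableTable(tablename);
            admin.deleteTable(tablename);
        } else {
            System.out.println(" [" + tablename + "] not found!");
        }
    }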

Part 3: HBase Programming

3.2.3 Combining MapReduce with HBase

MapReduce example code for HBase

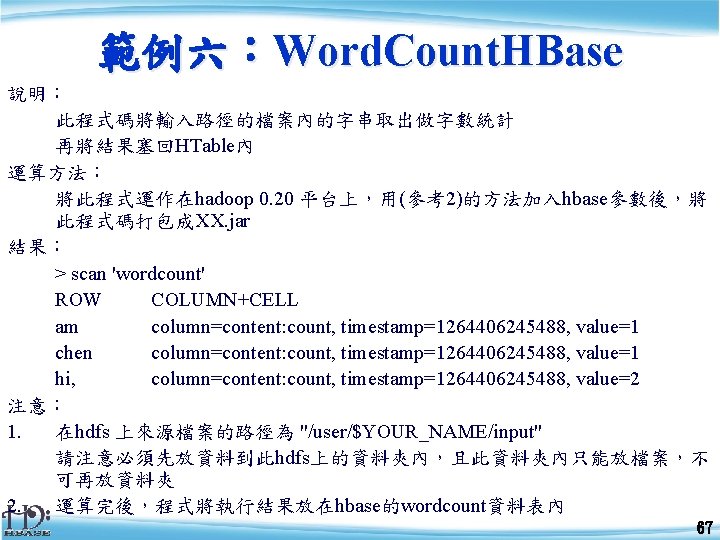

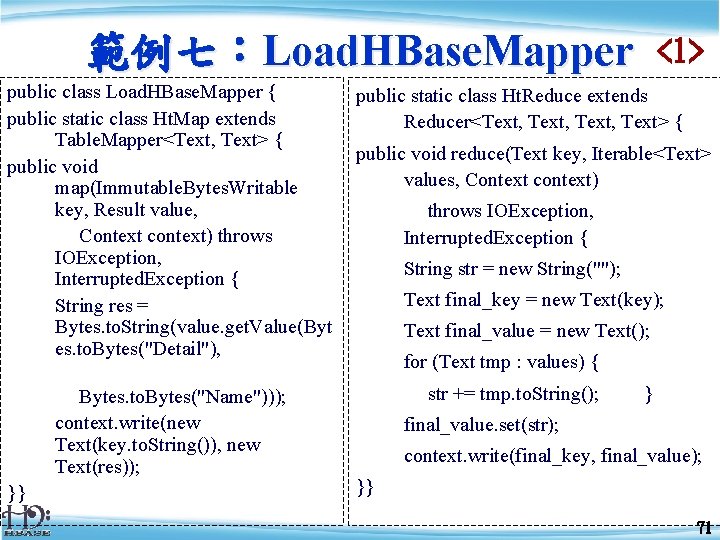

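Example 6: WordCountHBase

Description: this program reads the strings in the files under the input path, counts the words, and writes the results back into an HTable.

How to run: run the program on the Hadoop 0.20 platform. After adding the HBase parameters using the method in (Reference 2), package the code as XX.jar.

Result:

    > scan 'wordcount'
    ROW     COLUMN+CELL
    am      column=content:count, timestamp=1264406245488, value=1
    chen    column=content:count, timestamp=1264406245488, value=1
    hi,     column=content:count, timestamp=1264406245488, value=2

Notes:

1. The source path on HDFS is "/user/$YOUR_NAME/input". Data must be placed in this HDFS directory first, and the directory may contain only files, not subdirectories.
2. When the job finishes, the results are stored in the wordcount table in HBase.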

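Example 6: WordCountHBase (map and reduce code)

    import java.io.IOException;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.HColumnDescriptor;
    import org.apache.hadoop.hbase.HTableDescriptor;
    import org.apache.hadoop.hbase.client.HBaseAdmin;
    import org.apache.hadoop.hbase.client.Put;
    import org.apache.hadoop.hbase.mapreduce.TableOutputFormat;
    import org.apache.hadoop.hbase.mapreduce.TableReducer;
    import org.apache.hadoop.hbase.util.Bytes;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

    public class WordCountHBase {

        // Emits (word, 1) for every space-separated token of each input line.
        public static class Map extends Mapper<LongWritable, Text, Text, IntWritable> {
            private IntWritable i = new IntWritable(1);

            public void map(LongWritable key, Text value, Context context)
                    throws IOException, InterruptedException {
                String s[] = value.toString().trim().split(" ");
                for (String m : s) {
                    context.write(new Text(m), i);
                }
            }
        }

        // Sums the counts of each word and writes them to content:count.
        public static class Reduce extends TableReducer<Text, IntWritable, NullWritable> {
            public void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable i : values) {
                    sum += i.get();
                }
                Put put = new Put(Bytes.toBytes(key.toString()));
                put.add(Bytes.toBytes("content"), Bytes.toBytes("count"),
                        Bytes.toBytes(String.valueOf(sum)));
                context.write(NullWritable.get(), put);
            }
        }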

Example 6: WordCountHBase (table creation and main)

        // Recreates the output table so that every run starts from an empty table.
        public static void createHBaseTable(String tablename) throws IOException {
            HTableDescriptor htd = new HTableDescriptor(tablename);
            HColumnDescriptor col = new HColumnDescriptor("content:");
            htd.addFamily(col);
            HBaseConfiguration config = new HBaseConfiguration();
            HBaseAdmin admin = new HBaseAdmin(config);
            if (admin.tableExists(tablename)) {
                admin.disableTable(tablename);
                admin.deleteTable(tablename);
            }
            System.out.println("create new table: " + tablename);
            admin.createTable(htd);
        }

        public static void main(String args[]) throws Exception {
            String tablename = "wordcount";
            Configuration conf = new Configuration();
            // Tell TableOutputFormat which table receives the reducer output.
            conf.set(TableOutputFormat.OUTPUT_TABLE, tablename);
            createHBaseTable(tablename);
            String input = args[0];
            Job job = new Job(conf, "WordCount " + input);
            job.setJarByClass(WordCountHBase.class);
            job.setNumReduceTasks(3);
            job.setMapperClass(Map.class);
            job.setReducerClass(Reduce.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(IntWritable.class);
            job.setInputFormatClass(TextInputFormat.class);
            job.setOutputFormatClass(TableOutputFormat.class);
            FileInputFormat.addInputPath(job, new Path(input));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }
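The counting logic of Example 6 can be tried without a cluster. The sketch below reproduces only the map step (split each line on a single space) and the reduce step (sum the ones per word) in plain Java; it does not use Hadoop or HBase. The class name `WordCountLogicSketch` and the sample input lines are assumptions made for illustration.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-alone version of the word count logic (no Hadoop, no HBase).
class WordCountLogicSketch {
    static Map<String, Integer> count(String[] lines) {
        // TreeMap keeps words sorted, similar to rows sorted by row key.
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            // "Map" step: one token per space-separated word.
            for (String word : line.trim().split(" ")) {
                // "Reduce" step: add 1 to the running total for this word.
                counts.merge(word, 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Sample input invented for this sketch.
        String[] lines = { "hi, I am chen", "hi, there" };
        System.out.println(count(lines));
    }
}
```

Because the split is on a space only, punctuation stays attached to the word, which is why the result shown for Example 6 has a row key of `hi,` rather than `hi`.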

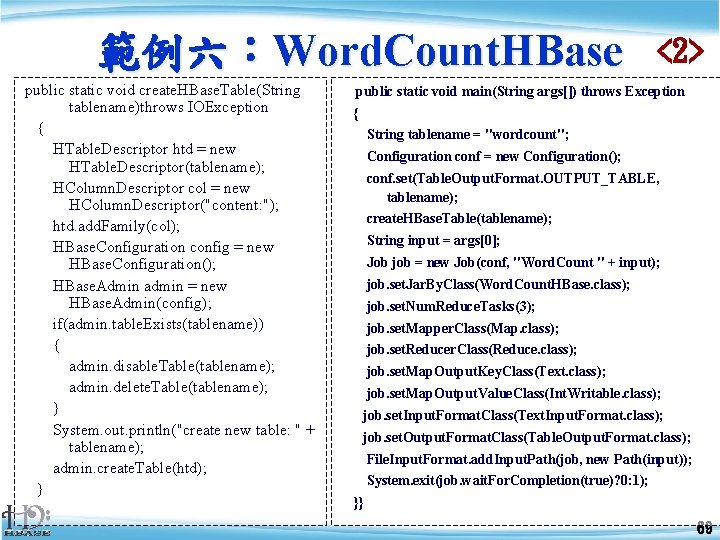

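Example 7: LoadHBaseMapper (map and reduce code)

    import java.io.IOException;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.hbase.client.Result;
    import org.apache.hadoop.hbase.client.Scan;
    import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
    import org.apache.hadoop.hbase.mapreduce.TableInputFormat;
    import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;
    import org.apache.hadoop.hbase.mapreduce.TableMapper;
    import org.apache.hadoop.hbase.util.Bytes;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

    public class LoadHBaseMapper {

        // Reads Detail:Name from every row and emits (row key, name).
        public static class HtMap extends TableMapper<Text, Text> {
            public void map(ImmutableBytesWritable key, Result value, Context context)
                    throws IOException, InterruptedException {
                String res = Bytes.toString(
                        value.getValue(Bytes.toBytes("Detail"), Bytes.toBytes("Name")));
                context.write(new Text(key.toString()), new Text(res));
            }
        }

        // Concatenates all values that share a key and writes them out as text.
        public static class HtReduce extends Reducer<Text, Text, Text, Text> {
            public void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                String str = new String("");
                Text final_key = new Text(key);
                Text final_value = new Text();
                for (Text tmp : values) {
                    str += tmp.toString();
                }
                final_value.set(str);
                context.write(final_key, final_value);
            }
        }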


Example 7: LoadHBaseMapper (main)

        public static void main(String args[]) throws Exception {
            String output = args[0]; // HDFS directory that receives the text output
            String tablename = "tcrc";
            // Scan the whole table; the mapper picks Detail:Name out of each row.
            Scan myScan = new Scan();
            Configuration conf = new Configuration();
            Job job = new Job(conf, tablename + " hbase data to hdfs");
            job.setJarByClass(LoadHBaseMapper.class);
            TableMapReduceUtil.initTableMapperJob(tablename, myScan,
                    HtMap.class, Text.class, Text.class, job);
            job.setMapperClass(HtMap.class);
            job.setReducerClass(HtReduce.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(Text.class);
            job.setInputFormatClass(TableInputFormat.class);
            job.setOutputFormatClass(TextOutputFormat.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(Text.class);
            FileOutputFormat.setOutputPath(job, new Path(output));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

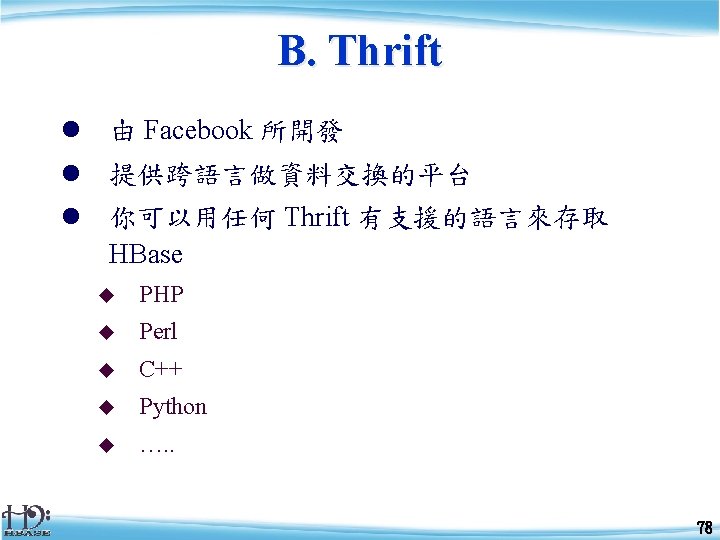

Part 3: HBase Programming

3.2.4 Advanced Supplement

Items in HBase contrib, such as:

A. Transactional
B. Thrift

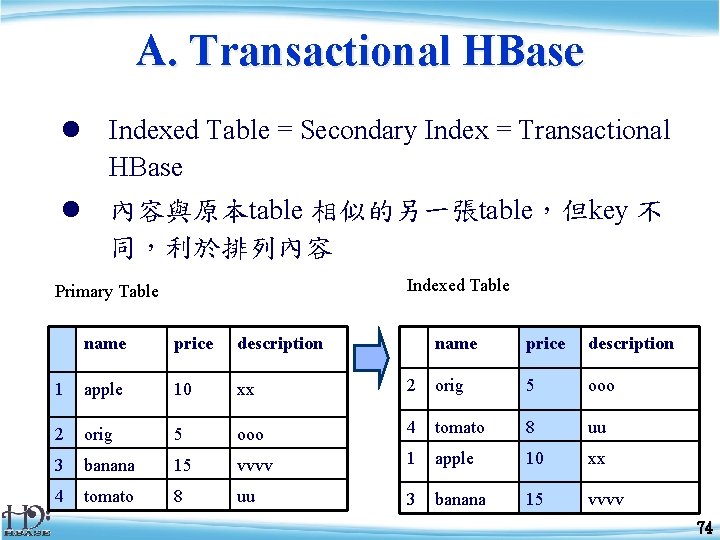

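A. Transactional HBase

- Indexed Table = Secondary Index = Transactional HBase
- A second table whose content is similar to the original table but whose key is different, which makes it easier to order the content

Primary table:

| Key | name | price | description |
| --- | --- | --- | --- |
| 1 | apple | 10 | xx |
| 2 | orig | 5 | ooo |
| 3 | banana | 15 | vvvv |
| 4 | tomato | 8 | uu |

Indexed table: the same rows, keyed by an indexed column instead of the original row key.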
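The idea of a secondary index can be sketched as a second sorted map that points from the indexed column back to the primary key. The plain-Java example below illustrates only this concept and is not the HBase tableindexed contrib. The class name `SecondaryIndexSketch` is hypothetical, the index is built on the name column of the sample rows, and it assumes names are unique.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical secondary index: indexed column value -> primary row key.
class SecondaryIndexSketch {
    // Returns the primary keys in the order of the indexed column (name).
    // Assumption: every name is unique, so no entry overwrites another.
    static List<Integer> idsOrderedByName(Map<Integer, String> nameById) {
        TreeMap<String, Integer> index = new TreeMap<>();
        for (Map.Entry<Integer, String> row : nameById.entrySet()) {
            index.put(row.getValue(), row.getKey());
        }
        return new ArrayList<>(index.values());
    }

    public static void main(String[] args) {
        Map<Integer, String> primary = new TreeMap<>();
        primary.put(1, "apple");
        primary.put(2, "orig");
        primary.put(3, "banana");
        primary.put(4, "tomato");
        System.out.println(idsOrderedByName(primary)); // prints "[1, 3, 2, 4]"
    }
}
```

Reading the index in order visits the rows by name (apple, banana, orig, tomato) without re-sorting the primary table.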

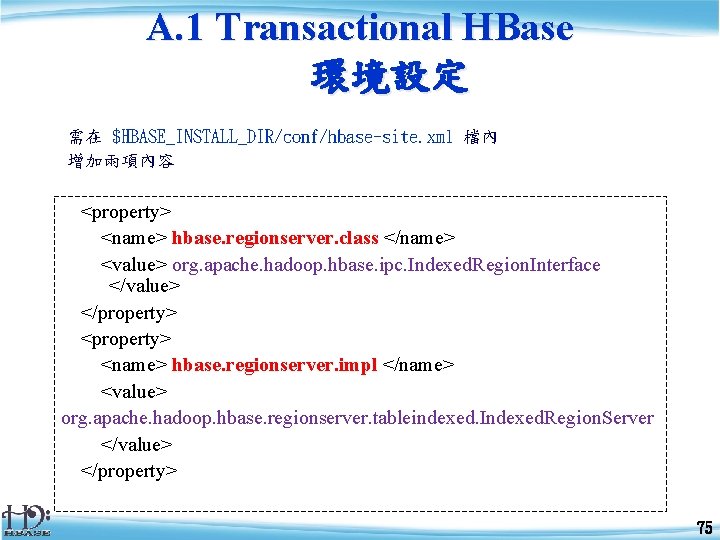

A.1 Transactional HBase Environment Settings

Add the following two properties to $HBASE_INSTALL_DIR/conf/hbase-site.xml:

    <property>
      <name> hbase.regionserver.class </name>
      <value> org.apache.hadoop.hbase.ipc.IndexedRegionInterface </value>
    </property>
    <property>
      <name> hbase.regionserver.impl </name>
      <value> org.apache.hadoop.hbase.regionserver.tableindexed.IndexedRegionServer </value>
    </property>

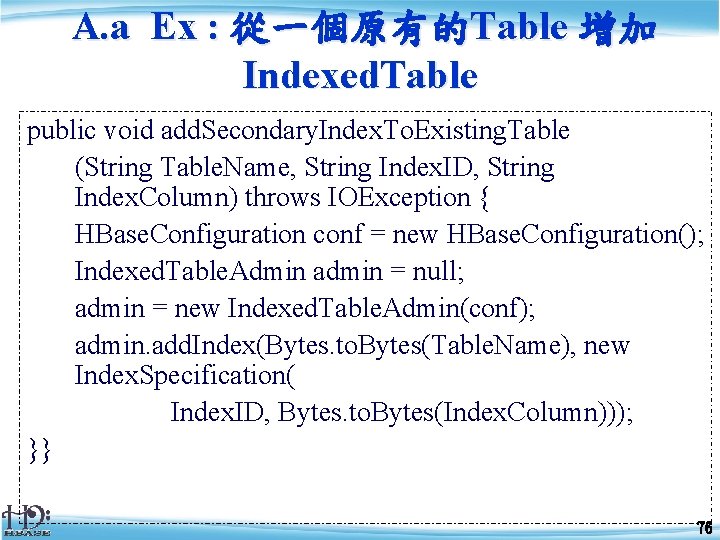

A.a Ex: Adding an IndexedTable to an Existing Table

    public void addSecondaryIndexToExistingTable(String TableName,
            String IndexID, String IndexColumn) throws IOException {
        HBaseConfiguration conf = new HBaseConfiguration();
        IndexedTableAdmin admin = null;
        admin = new IndexedTableAdmin(conf);
        // Register a new index on the given column of the existing table.
        admin.addIndex(Bytes.toBytes(TableName),
                new IndexSpecification(IndexID, Bytes.toBytes(IndexColumn)));
    }

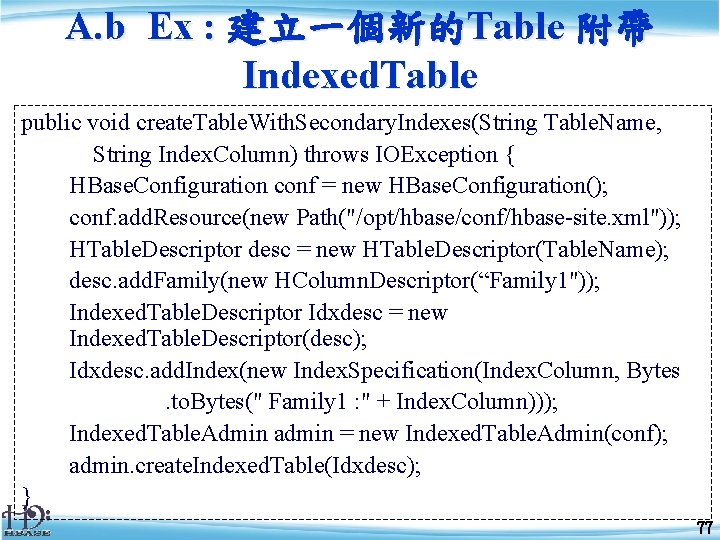

A.b Ex: Creating a New Table Together with an IndexedTable

    public void createTableWithSecondaryIndexes(String TableName,
            String IndexColumn) throws IOException {
        HBaseConfiguration conf = new HBaseConfiguration();
        conf.addResource(new Path("/opt/hbase/conf/hbase-site.xml"));
        HTableDescriptor desc = new HTableDescriptor(TableName);
        desc.addFamily(new HColumnDescriptor("Family1"));
        // Wrap the table descriptor and attach an index on Family1:<IndexColumn>.
        IndexedTableDescriptor Idxdesc = new IndexedTableDescriptor(desc);
        Idxdesc.addIndex(new IndexSpecification(IndexColumn,
                Bytes.toBytes("Family1:" + IndexColumn)));
        IndexedTableAdmin admin = new IndexedTableAdmin(conf);
        admin.createIndexedTable(Idxdesc);
    }

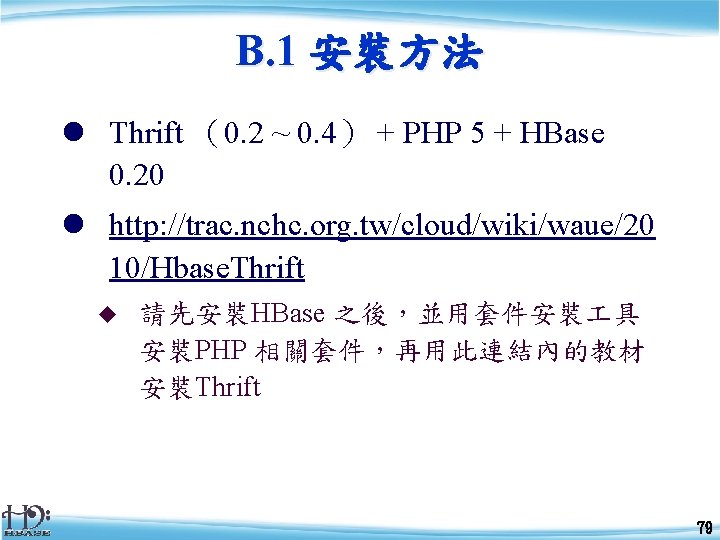

B.1 Installation

- Thrift (0.2 ~ 0.4) + PHP 5 + HBase 0.20
- http://trac.nchc.org.tw/cloud/wiki/waue/2010/HbaseThrift
  - Install HBase first, then install the PHP-related packages with the package manager, then follow the tutorial at this link to install Thrift

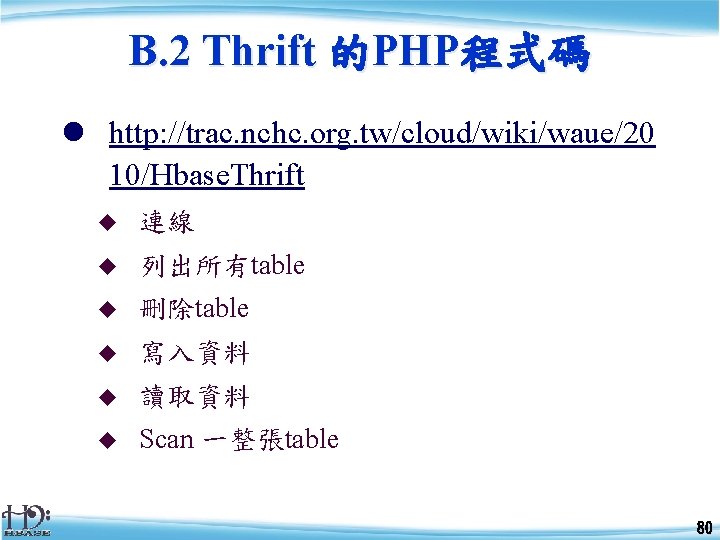

B.2 PHP Code for Thrift

- http://trac.nchc.org.tw/cloud/wiki/waue/2010/HbaseThrift
  - Connecting
  - Listing all tables
  - Deleting a table
  - Writing data
  - Reading data
  - Scanning a whole table

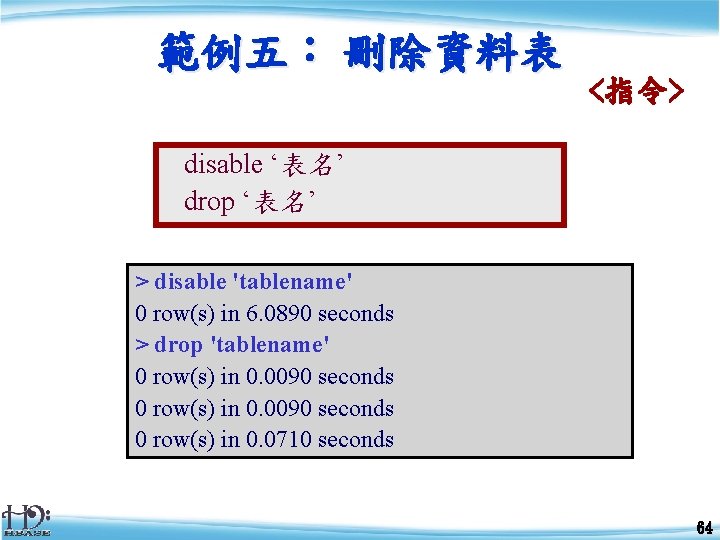

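3.3.1 Creating the Store Data

Assume four stores have moved into the restaurant area of the Central Taiwan Science Park:

- GunLong in zone 1, with 4 items priced <20, 40, 30, 50>
- ESing in zone 2, with 1 item priced <50>
- SunDon in zone 3, with 2 items priced <40, 30>
- StarBucks in zone 4, with 3 items priced <50, 50, 20>

| Row | Detail:Name | Detail:Locate | Products:P1 | Products:P2 | Products:P3 | Products:P4 | Turnover |
| --- | --- | --- | --- | --- | --- | --- | --- |
| T01 | GunLong | 01 | 20 | 40 | 30 | 50 | |
| T02 | ESing | 02 | 50 | | | | |
| T03 | SunDon | 03 | 40 | 30 | | | |
| T04 | StarBucks | 04 | 50 | 50 | 20 | | |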


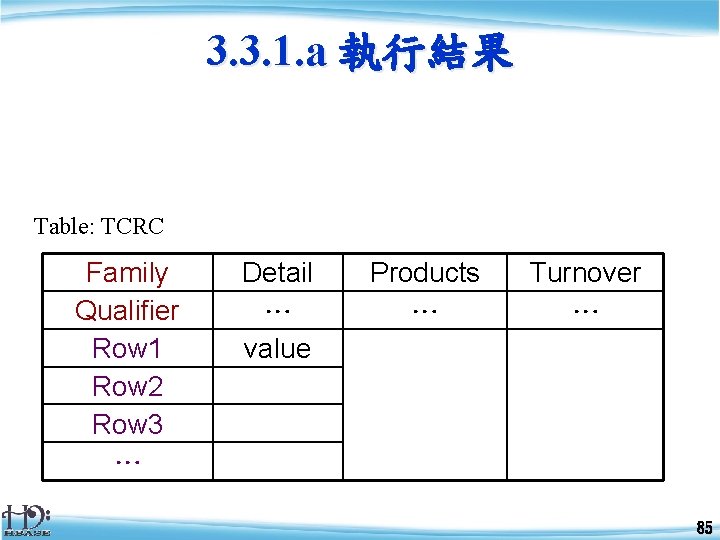

3.3.1.a Creating the Initial HTable (code)

    public void createHBaseTable(String tablename, String[] family)
            throws IOException {
        HTableDescriptor htd = new HTableDescriptor(tablename);
        // Add one column family per entry in the array.
        for (String fa : family) {
            htd.addFamily(new HColumnDescriptor(fa));
        }
        HBaseConfiguration config = new HBaseConfiguration();
        HBaseAdmin admin = new HBaseAdmin(config);
        if (admin.tableExists(tablename)) {
            System.out.println("Table: " + tablename + " Existed.");
        } else {
            System.out.println("create new table: " + tablename);
            admin.createTable(htd);
        }
    }

3.3.1.a Result

Table: TCRC

| Family | Qualifier | Row 1 | Row 2 | Row 3 | ... |
| --- | --- | --- | --- | --- | --- |
| Detail | ... | value | | | |
| Products | ... | | | | |
| Turnover | ... | | | | |

3.3.1.b Loading Data into the HTable from a File (code)

    import java.io.BufferedReader;
    import java.io.File;
    import java.io.FileReader;
    import java.io.IOException;

    void loadFile2HBase(String file_in, String table_name) throws IOException {
        BufferedReader fi = new BufferedReader(new FileReader(new File(file_in)));
        String line;
        while ((line = fi.readLine()) != null) {
            // Each line: rowkey;name;locate;price1;price2;...
            String[] str = line.split(";");
            int length = str.length;
            PutData.putData(table_name, str[0].trim(), "Detail", "Name", str[1].trim());
            PutData.putData(table_name, str[0].trim(), "Detail", "Locate", str[2].trim());
            // Remaining fields are product prices, stored as Products:P1, P2, ...
            for (int i = 3; i < length; i++) {
                PutData.putData(table_name, str[0], "Products", "P" + (i - 2), str[i]);
            }
            System.out.println();
        }
        fi.close();
    }

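3.3.1.b Result

| Row | Detail:Name | Detail:Locate | Products:P1 | Products:P2 | Products:P3 | Products:P4 | Turnover |
| --- | --- | --- | --- | --- | --- | --- | --- |
| T01 | GunLong | 01 | 20 | 40 | 30 | 50 | |
| T02 | ESing | 02 | 50 | | | | |
| T03 | SunDon | 03 | 40 | 30 | | | |
| T04 | StarBucks | 04 | 50 | 50 | 20 | | |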

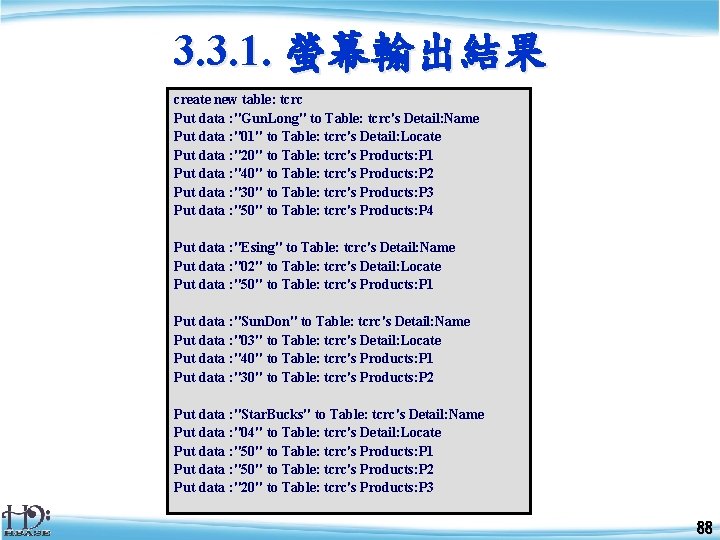

3.3.1 Screen Output

    create new table: tcrc
    Put data : "GunLong" to Table: tcrc's Detail:Name
    Put data : "01" to Table: tcrc's Detail:Locate
    Put data : "20" to Table: tcrc's Products:P1
    Put data : "40" to Table: tcrc's Products:P2
    Put data : "30" to Table: tcrc's Products:P3
    Put data : "50" to Table: tcrc's Products:P4
    Put data : "Esing" to Table: tcrc's Detail:Name
    Put data : "02" to Table: tcrc's Detail:Locate
    Put data : "50" to Table: tcrc's Products:P1
    Put data : "SunDon" to Table: tcrc's Detail:Name
    Put data : "03" to Table: tcrc's Detail:Locate
    Put data : "40" to Table: tcrc's Products:P1
    Put data : "30" to Table: tcrc's Products:P2
    Put data : "StarBucks" to Table: tcrc's Detail:Name
    Put data : "04" to Table: tcrc's Detail:Locate
    Put data : "50" to Table: tcrc's Products:P1
    Put data : "50" to Table: tcrc's Products:P2
    Put data : "20" to Table: tcrc's Products:P3

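3.3.2 Computing with Hadoop MapReduce and Loading the Result into the HTable (map and reduce code)

    import java.io.IOException;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.hbase.client.Put;
    import org.apache.hadoop.hbase.mapreduce.TableOutputFormat;
    import org.apache.hadoop.hbase.mapreduce.TableReducer;
    import org.apache.hadoop.hbase.util.Bytes;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

    public class tcrc2Count {

        // Map: turn each sales record into the key "store@product" with count 1.
        public static class HtMap extends Mapper<LongWritable, Text, Text, IntWritable> {
            private IntWritable one = new IntWritable(1);

            public void map(LongWritable key, Text value, Context context)
                    throws IOException, InterruptedException {
                String s[] = value.toString().trim().split(":");
                // xxx:T01:P4:oooo => T01@P4
                String str = s[1] + "@" + s[2];
                context.write(new Text(str), one);
            }
        }

        // Reduce: sum the counts and store them in Turnover:<product> of the store row.
        public static class HtReduce extends TableReducer<Text, IntWritable, LongWritable> {
            public void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable i : values)
                    sum += i.get();
                String[] str = (key.toString()).split("@");
                byte[] row = (str[0]).getBytes();
                byte[] family = Bytes.toBytes("Turnover");
                byte[] qualifier = (str[1]).getBytes();
                byte[] summary = Bytes.toBytes(String.valueOf(sum));
                Put put = new Put(row);
                put.add(family, qualifier, summary);
                context.write(new LongWritable(), put);
            }
        }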


3.3.2 Computing with Hadoop MapReduce and Loading the Result into the HTable (main code)

        public static void main(String args[]) throws Exception {
            String input = "income";
            String tablename = "tcrc";
            Configuration conf = new Configuration();
            // Tell TableOutputFormat which table receives the reducer output.
            conf.set(TableOutputFormat.OUTPUT_TABLE, tablename);
            Job job = new Job(conf, "Count to tcrc");
            job.setJarByClass(tcrc2Count.class);
            job.setMapperClass(HtMap.class);
            job.setReducerClass(HtReduce.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(IntWritable.class);
            job.setInputFormatClass(TextInputFormat.class);
            job.setOutputFormatClass(TableOutputFormat.class);
            FileInputFormat.addInputPath(job, new Path(input));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

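3.3.2 Result

| Row | Detail:Name | Detail:Locate | Products P1 | P2 | P3 | P4 | Turnover P1 | P2 | P3 | P4 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| T01 | GunLong | 01 | 20 | 40 | 30 | 50 | 1 | 1 | 1 | 1 |
| T02 | ESing | 02 | 50 | | | | 2 | | | |
| T03 | SunDon | 03 | 40 | 30 | | | 3 | | | |
| T04 | StarBucks | 04 | 50 | 50 | 20 | | 2 | 1 | 1 | |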

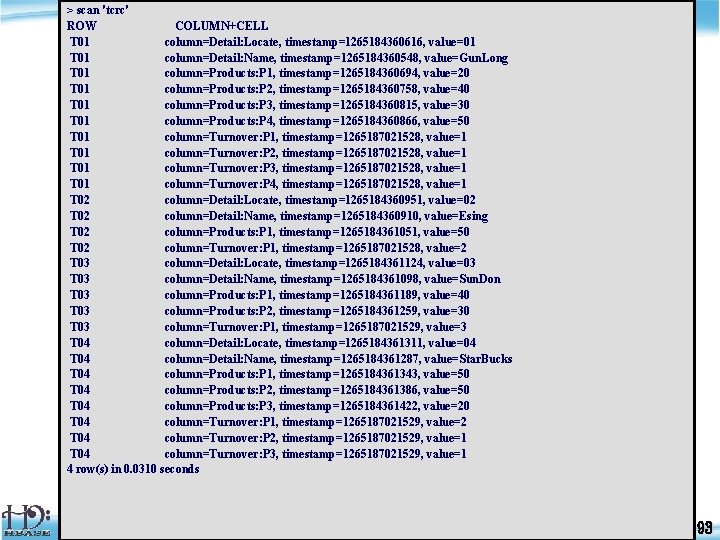

    > scan 'tcrc'
    ROW    COLUMN+CELL
    T01    column=Detail:Locate, timestamp=1265184360616, value=01
    T01    column=Detail:Name, timestamp=1265184360548, value=GunLong
    T01    column=Products:P1, timestamp=1265184360694, value=20
    T01    column=Products:P2, timestamp=1265184360758, value=40
    T01    column=Products:P3, timestamp=1265184360815, value=30
    T01    column=Products:P4, timestamp=1265184360866, value=50
    T01    column=Turnover:P1, timestamp=1265187021528, value=1
    T01    column=Turnover:P2, timestamp=1265187021528, value=1
    T01    column=Turnover:P3, timestamp=1265187021528, value=1
    T01    column=Turnover:P4, timestamp=1265187021528, value=1
    T02    column=Detail:Locate, timestamp=1265184360951, value=02
    T02    column=Detail:Name, timestamp=1265184360910, value=Esing
    T02    column=Products:P1, timestamp=1265184361051, value=50
    T02    column=Turnover:P1, timestamp=1265187021528, value=2
    T03    column=Detail:Locate, timestamp=1265184361124, value=03
    T03    column=Detail:Name, timestamp=1265184361098, value=SunDon
    T03    column=Products:P1, timestamp=1265184361189, value=40
    T03    column=Products:P2, timestamp=1265184361259, value=30
    T03    column=Turnover:P1, timestamp=1265187021529, value=3
    T04    column=Detail:Locate, timestamp=1265184361311, value=04
    T04    column=Detail:Name, timestamp=1265184361287, value=StarBucks
    T04    column=Products:P1, timestamp=1265184361343, value=50
    T04    column=Products:P2, timestamp=1265184361386, value=50
    T04    column=Products:P3, timestamp=1265184361422, value=20
    T04    column=Turnover:P1, timestamp=1265187021529, value=2
    T04    column=Turnover:P2, timestamp=1265187021529, value=1
    T04    column=Turnover:P3, timestamp=1265187021529, value=1
    4 row(s) in 0.0310 seconds

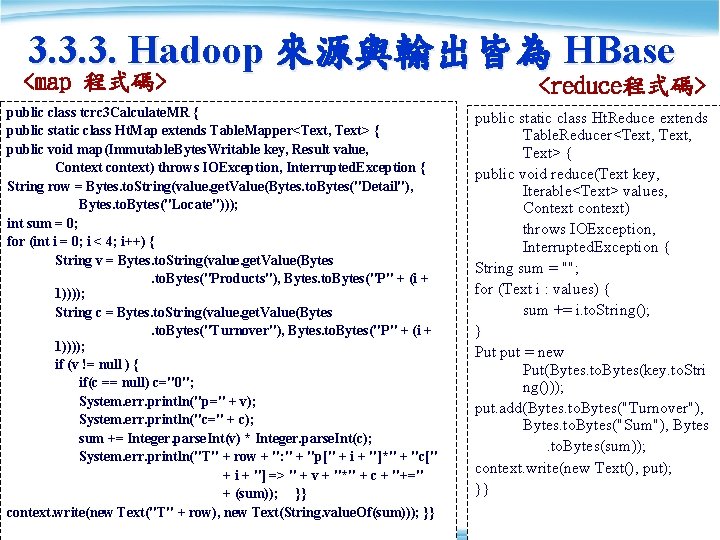

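3.3.3 Hadoop with HBase as Both Source and Output (map and reduce code)

    import java.io.IOException;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.hbase.client.Put;
    import org.apache.hadoop.hbase.client.Result;
    import org.apache.hadoop.hbase.client.Scan;
    import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
    import org.apache.hadoop.hbase.mapreduce.TableInputFormat;
    import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;
    import org.apache.hadoop.hbase.mapreduce.TableMapper;
    import org.apache.hadoop.hbase.mapreduce.TableOutputFormat;
    import org.apache.hadoop.hbase.mapreduce.TableReducer;
    import org.apache.hadoop.hbase.util.Bytes;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;

    public class tcrc3CalculateMR {

        // Map: for each store row, compute the sum of price * count over P1..P4.
        public static class HtMap extends TableMapper<Text, Text> {
            public void map(ImmutableBytesWritable key, Result value, Context context)
                    throws IOException, InterruptedException {
                String row = Bytes.toString(
                        value.getValue(Bytes.toBytes("Detail"), Bytes.toBytes("Locate")));
                int sum = 0;
                for (int i = 0; i < 4; i++) {
                    String v = Bytes.toString(value.getValue(
                            Bytes.toBytes("Products"), Bytes.toBytes("P" + (i + 1))));
                    String c = Bytes.toString(value.getValue(
                            Bytes.toBytes("Turnover"), Bytes.toBytes("P" + (i + 1))));
                    if (v != null) {
                        // A product with no recorded sales counts as zero.
                        if (c == null)
                            c = "0";
                        System.err.println("p=" + v);
                        System.err.println("c=" + c);
                        sum += Integer.parseInt(v) * Integer.parseInt(c);
                        System.err.println("T" + row + ":" + "p[" + i + "]*" + "c[" + i
                                + "] => " + v + "*" + c + "+=" + (sum));
                    }
                }
                context.write(new Text("T" + row), new Text(String.valueOf(sum)));
            }
        }

        // Reduce: write the computed total into Turnover:sum of the store row.
        public static class HtReduce extends TableReducer<Text, Text, Text> {
            public void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                String sum = "";
                for (Text i : values) {
                    sum += i.toString();
                }
                Put put = new Put(Bytes.toBytes(key.toString()));
                put.add(Bytes.toBytes("Turnover"), Bytes.toBytes("sum"), Bytes.toBytes(sum));
                context.write(new Text(), put);
            }
        }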

3.3.3. Hadoop: Both Source and Output Are HBase <Main code>

      public static void main(String args[]) throws Exception {
        String tablename = "tcrc";

        // read only the columns the mapper needs
        Scan myScan = new Scan();
        myScan.addColumn("Detail:Locate".getBytes());
        myScan.addColumn("Products:P1".getBytes());
        myScan.addColumn("Products:P2".getBytes());
        myScan.addColumn("Products:P3".getBytes());
        myScan.addColumn("Products:P4".getBytes());
        myScan.addColumn("Turnover:P1".getBytes());
        myScan.addColumn("Turnover:P2".getBytes());
        myScan.addColumn("Turnover:P3".getBytes());
        myScan.addColumn("Turnover:P4".getBytes());

        Configuration conf = new Configuration();
        Job job = new Job(conf, "Calculating ");
        job.setJarByClass(tcrc3CalculateMR.class);
        job.setMapperClass(HtMap.class);
        job.setReducerClass(HtReduce.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(Text.class);
        job.setInputFormatClass(TableInputFormat.class);
        job.setOutputFormatClass(TableOutputFormat.class);

        // the same table is both the input and the output of the job
        TableMapReduceUtil.initTableMapperJob(tablename, myScan, HtMap.class,
            Text.class, Text.class, job);
        TableMapReduceUtil.initTableReducerJob(tablename, HtReduce.class, job);

        System.exit(job.waitForCompletion(true) ? 0 : 1);
      }
    }

    > scan 'tcrc'
    ROW   COLUMN+CELL
    T01   column=Detail:Locate, timestamp=1265184360616, value=01
    T01   column=Detail:Name, timestamp=1265184360548, value=GunLong
    T01   column=Products:P1, timestamp=1265184360694, value=20
    T01   column=Products:P2, timestamp=1265184360758, value=40
    T01   column=Products:P3, timestamp=1265184360815, value=30
    T01   column=Products:P4, timestamp=1265184360866, value=50
    T01   column=Turnover:P1, timestamp=1265187021528, value=1
    T01   column=Turnover:P2, timestamp=1265187021528, value=1
    T01   column=Turnover:P3, timestamp=1265187021528, value=1
    T01   column=Turnover:P4, timestamp=1265187021528, value=1
    T01   column=Turnover:sum, timestamp=1265190421993, value=140
    T02   column=Detail:Locate, timestamp=1265184360951, value=02
    T02   column=Detail:Name, timestamp=1265184360910, value=Esing
    T02   column=Products:P1, timestamp=1265184361051, value=50
    T02   column=Turnover:P1, timestamp=1265187021528, value=2
    T02   column=Turnover:sum, timestamp=1265190421993, value=100
    T03   column=Detail:Locate, timestamp=1265184361124, value=03
    T03   column=Detail:Name, timestamp=1265184361098, value=SunDon
    T03   column=Products:P1, timestamp=1265184361189, value=40
    T03   column=Products:P2, timestamp=1265184361259, value=30
    T03   column=Turnover:P1, timestamp=1265187021529, value=3
    T03   column=Turnover:sum, timestamp=1265190421993, value=120
    T04   column=Detail:Locate, timestamp=1265184361311, value=04
    T04   column=Detail:Name, timestamp=1265184361287, value=StarBucks
    T04   column=Products:P1, timestamp=1265184361343, value=50
    T04   column=Products:P2, timestamp=1265184361386, value=50
    T04   column=Products:P3, timestamp=1265184361422, value=20
    T04   column=Turnover:P1, timestamp=1265187021529, value=2
    T04   column=Turnover:P2, timestamp=1265187021529, value=1
    T04   column=Turnover:P3, timestamp=1265187021529, value=1
    T04   column=Turnover:sum, timestamp=1265190421993, value=170
    4 row(s) in 0.0460 seconds
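The sums in the scan output above can be reproduced by hand: for each store, multiply each Products:Pn value by the matching Turnover:Pn value and add the results, counting a missing Turnover cell as 0. The following is a minimal sketch of that arithmetic in plain Java, without HBase. The class name `TurnoverSumSketch` and the helper `weightedSum` are made up for illustration and are not part of the course code; the input values are copied from the scan output.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class TurnoverSumSketch {

    /**
     * Hypothetical helper mirroring the map step: adds up products[i] * turnover[i],
     * skipping products that are absent and treating an absent turnover as "0".
     */
    public static int weightedSum(String[] products, String[] turnover) {
        int sum = 0;
        for (int i = 0; i < products.length; i++) {
            String v = products[i];
            if (v == null) {
                continue; // the store has no Products:P(i+1) cell
            }
            String c = (turnover[i] == null) ? "0" : turnover[i];
            sum += Integer.parseInt(v) * Integer.parseInt(c);
        }
        return sum;
    }

    public static void main(String[] args) {
        // For each store: { Products:P1..P4 }, { Turnover:P1..P4 }; null = no cell
        Map<String, String[][]> rows = new LinkedHashMap<>();
        rows.put("T01", new String[][] {{"20", "40", "30", "50"}, {"1", "1", "1", "1"}});
        rows.put("T02", new String[][] {{"50", null, null, null}, {"2", null, null, null}});
        rows.put("T03", new String[][] {{"40", "30", null, null}, {"3", null, null, null}});
        rows.put("T04", new String[][] {{"50", "50", "20", null}, {"2", "1", "1", null}});

        for (Map.Entry<String, String[][]> e : rows.entrySet()) {
            int sum = weightedSum(e.getValue()[0], e.getValue()[1]);
            System.out.println(e.getKey() + " Turnover:Sum = " + sum);
        }
    }
}
```

For T03, Products:P2 has no matching Turnover:P2 cell, so only 40 * 3 contributes, giving the 120 seen in the scan.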

3.3.3. Execution result

    Row   Detail:Name   Detail:Locate   Products (P1-P4)   Turnover (P1-P4)   Sum
    T01   GunLong       01              20 40 30 50        1 1                140
    T02   ESing         02              50                 2                  100
    T03   SunDon        03              40 30              3 3                210
    T04   StarBucks     04              50 50 20           4                  480

3.3.4. Review: Transactional HBase

- Indexed Table = Secondary Index = Transactional HBase
- A second table whose content resembles the original table but whose key is different, which makes it easy to order the content.

Indexed Table / Primary Table

       name     price   description
    1  apple    10      xx
    2  orig     5       ooo
    4  tomato   8       uu
    3  banana   15      vvvv
    1  apple    10      xx
    4  tomato   8       uu
    3  banana   15      vvvv

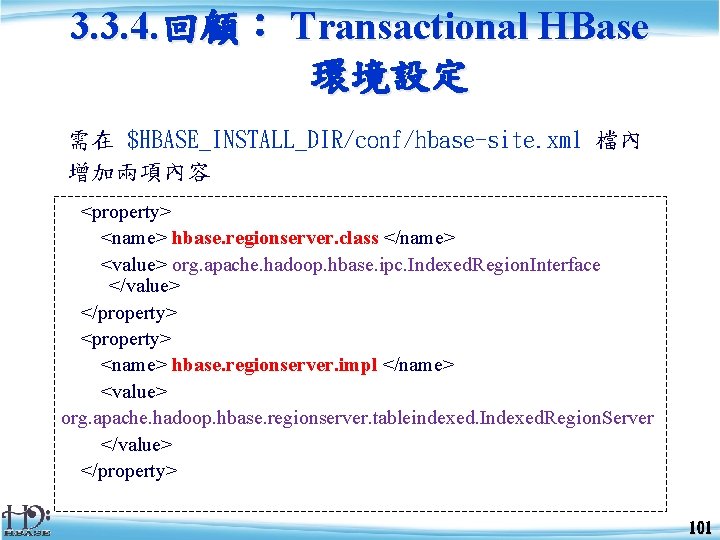

3.3.4. Review: Transactional HBase environment setup

Add the following two properties to $HBASE_INSTALL_DIR/conf/hbase-site.xml:

    <property>
      <name>hbase.regionserver.class</name>
      <value>org.apache.hadoop.hbase.ipc.IndexedRegionInterface</value>
    </property>
    <property>
      <name>hbase.regionserver.impl</name>
      <value>org.apache.hadoop.hbase.regionserver.tableindexed.IndexedRegionServer</value>
    </property>

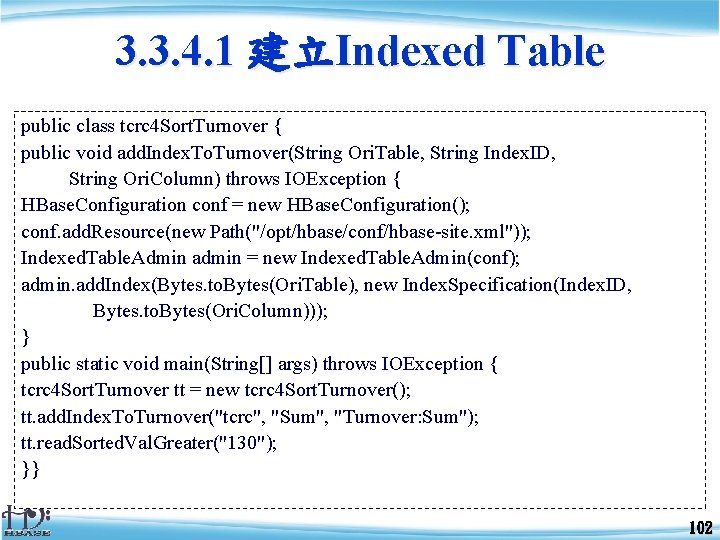

3.3.4.1 Creating the Indexed Table

    public class tcrc4SortTurnover {

      public void addIndexToTurnover(String OriTable, String IndexID, String OriColumn)
          throws IOException {
        HBaseConfiguration conf = new HBaseConfiguration();
        conf.addResource(new Path("/opt/hbase/conf/hbase-site.xml"));
        IndexedTableAdmin admin = new IndexedTableAdmin(conf);
        admin.addIndex(Bytes.toBytes(OriTable),
            new IndexSpecification(IndexID, Bytes.toBytes(OriColumn)));
      }

      public static void main(String[] args) throws IOException {
        tcrc4SortTurnover tt = new tcrc4SortTurnover();
        tt.addIndexToTurnover("tcrc", "Sum", "Turnover:Sum");
        tt.readSortedValGreater("130");
      }
    }

3.3.4.1 Indexed Table output

    > scan 'tcrc-Sum'
    ROW      COLUMN+CELL
    100T02   column=Turnover:Sum, timestamp=1265190782127, value=100
    100T02   column=__INDEX__:ROW, timestamp=1265190782127, value=T02
    120T03   column=Turnover:Sum, timestamp=1265190782128, value=120
    120T03   column=__INDEX__:ROW, timestamp=1265190782128, value=T03
    140T01   column=Turnover:Sum, timestamp=1265190782126, value=140
    140T01   column=__INDEX__:ROW, timestamp=1265190782126, value=T01
    170T04   column=Turnover:Sum, timestamp=1265190782129, value=170
    170T04   column=__INDEX__:ROW, timestamp=1265190782129, value=T04
    4 row(s) in 0.0140 seconds
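In the output above, each index row key looks like the indexed value (Turnover:Sum) followed by the original row key, and the rows come back ordered by that combined key. The sketch below imitates this with a `TreeMap` in plain Java to show why the index table is ordered by turnover. It illustrates the idea only and is not the IndexedTable implementation; the class `IndexKeySketch` and the helper `buildIndex` are hypothetical names.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.TreeMap;

public class IndexKeySketch {

    /**
     * Hypothetical helper: builds "index key -> original row key", where the
     * index key is the indexed value followed by the original row key.
     * TreeMap keeps its keys in ascending lexicographic order.
     */
    public static TreeMap<String, String> buildIndex(Map<String, String> sumByRow) {
        TreeMap<String, String> index = new TreeMap<>();
        for (Map.Entry<String, String> e : sumByRow.entrySet()) {
            index.put(e.getValue() + e.getKey(), e.getKey());
        }
        return index;
    }

    public static void main(String[] args) {
        // Turnover:Sum per row of the primary table, in primary-key order
        Map<String, String> sumByRow = new LinkedHashMap<>();
        sumByRow.put("T01", "140");
        sumByRow.put("T02", "100");
        sumByRow.put("T03", "120");
        sumByRow.put("T04", "170");

        // Iteration order is by index key, so rows appear sorted by sum
        for (Map.Entry<String, String> e : buildIndex(sumByRow).entrySet()) {
            System.out.println(e.getKey() + " -> " + e.getValue());
        }
    }
}
```

Because the ordering is lexicographic on strings, it matches numeric order here only because all four sums have the same number of digits.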

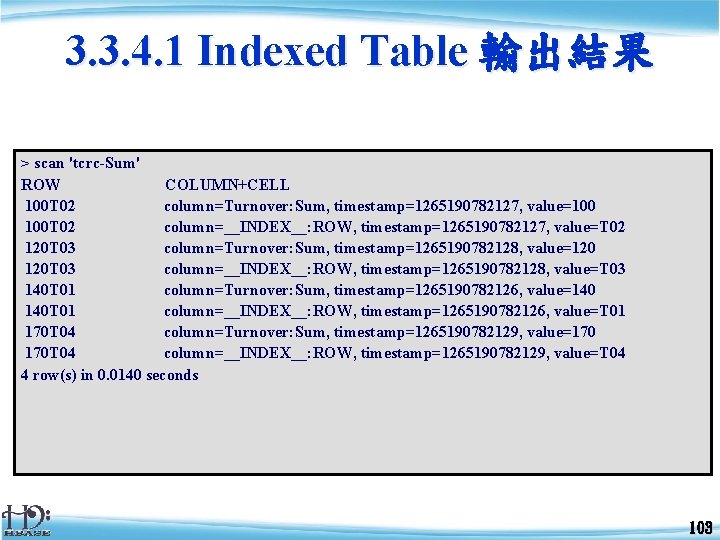

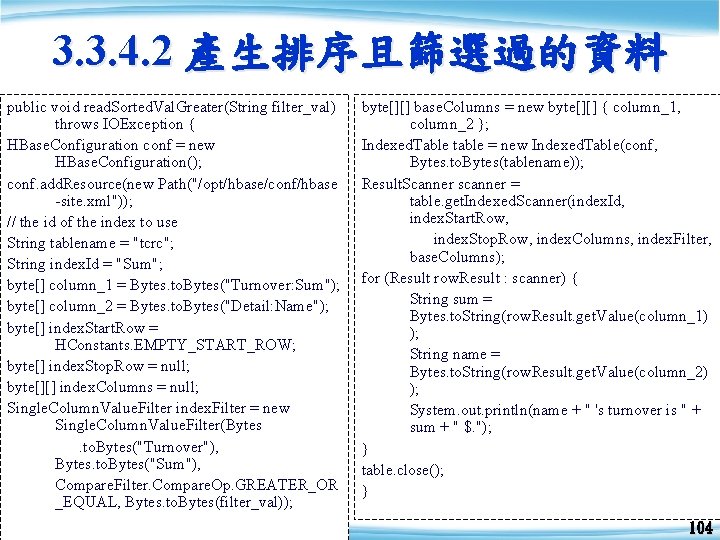

3.3.4.2 Producing sorted and filtered data

    public void readSortedValGreater(String filter_val) throws IOException {
      HBaseConfiguration conf = new HBaseConfiguration();
      conf.addResource(new Path("/opt/hbase/conf/hbase-site.xml"));

      String tablename = "tcrc";
      // the id of the index to use
      String indexId = "Sum";
      byte[] column_1 = Bytes.toBytes("Turnover:Sum");
      byte[] column_2 = Bytes.toBytes("Detail:Name");
      byte[] indexStartRow = HConstants.EMPTY_START_ROW;
      byte[] indexStopRow = null;
      byte[][] indexColumns = null;

      SingleColumnValueFilter indexFilter = new SingleColumnValueFilter(
          Bytes.toBytes("Turnover"), Bytes.toBytes("Sum"),
          CompareFilter.CompareOp.GREATER_OR_EQUAL, Bytes.toBytes(filter_val));
      byte[][] baseColumns = new byte[][] { column_1, column_2 };

      IndexedTable table = new IndexedTable(conf, Bytes.toBytes(tablename));
      ResultScanner scanner = table.getIndexedScanner(indexId, indexStartRow,
          indexStopRow, indexColumns, indexFilter, baseColumns);
      for (Result rowResult : scanner) {
        String sum = Bytes.toString(rowResult.getValue(column_1));
        String name = Bytes.toString(rowResult.getValue(column_2));
        System.out.println(name + " 's turnover is " + sum + " $. ");
      }
      table.close();
    }

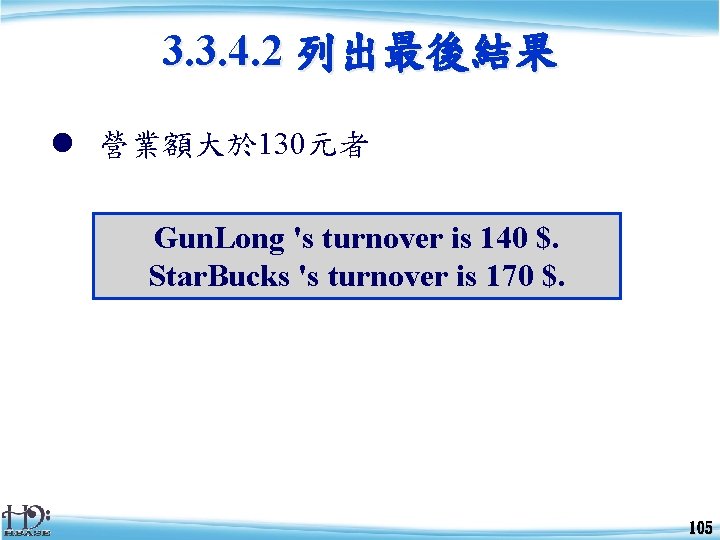

3.3.4.2 Final result

- Stores whose turnover is 130 or more:

    GunLong 's turnover is 140 $.
    StarBucks 's turnover is 170 $.
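The two lines of output follow from scanning the sums in index order and keeping those that compare greater than or equal to "130". The sketch below reproduces that in plain Java. It assumes the comparison is made on the stored string bytes (lexicographically) rather than on numeric values, which is how the string "130" is passed to the filter in the code. The class `IndexedFilterSketch` and the helper `namesWithSumAtLeast` are hypothetical names, not HBase API.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class IndexedFilterSketch {

    /**
     * Hypothetical helper: visits sums in ascending lexicographic order (as an
     * index scan would) and keeps those that compare >= threshold as strings.
     */
    public static List<String> namesWithSumAtLeast(Map<String, String> nameBySum,
                                                   String threshold) {
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, String> e : new TreeMap<>(nameBySum).entrySet()) {
            if (e.getKey().compareTo(threshold) >= 0) {
                out.add(e.getValue() + " 's turnover is " + e.getKey() + " $.");
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Turnover:Sum -> Detail:Name, taken from the scan output
        Map<String, String> nameBySum = new HashMap<>();
        nameBySum.put("140", "GunLong");
        nameBySum.put("100", "Esing");
        nameBySum.put("120", "SunDon");
        nameBySum.put("170", "StarBucks");

        for (String line : namesWithSumAtLeast(nameBySum, "130")) {
            System.out.println(line);
        }

        // Caveat of string comparison: "90" sorts after "130"
        System.out.println("90".compareTo("130") >= 0);
    }
}
```

Under this assumption a sum such as "90" would also pass the filter, so zero-padding the stored values to a fixed width may be worth considering when sums can have different numbers of digits.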

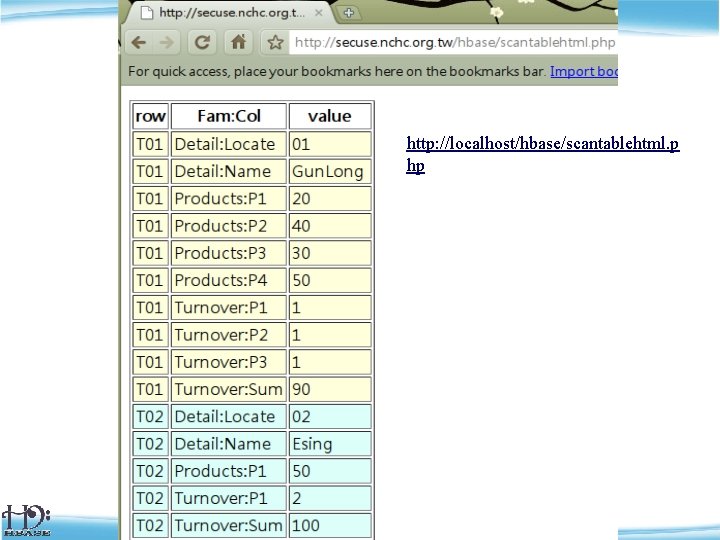

Screenshot: http://localhost/hbase/scantablehtml.php

- Slides: 109