Gridifying the LHC Data, Peter Kunszt, CERN IT/DB

Gridifying the LHC Data
Peter Kunszt, CERN IT/DB
EU DataGrid Data Management
Peter.Kunszt@cern.ch
P. Kunszt, Openlab, 17.3.2003

Outline
• The Grid as a means of transparent data access
• Current mode of operations at CERN
• Elements of Grid data access
• Current capabilities of the EU DataGrid/LCG-1 Grid infrastructure
• Outlook


The Grid vision
• Flexible, secure, coordinated resource sharing among dynamic collections of individuals, institutions, and resources
  – From “The Anatomy of the Grid: Enabling Scalable Virtual Organizations”
• Enable communities (“virtual organizations”) to share geographically distributed resources as they pursue common goals, assuming the absence of:
  – central location,
  – central control,
  – omniscience,
  – existing trust relationships.

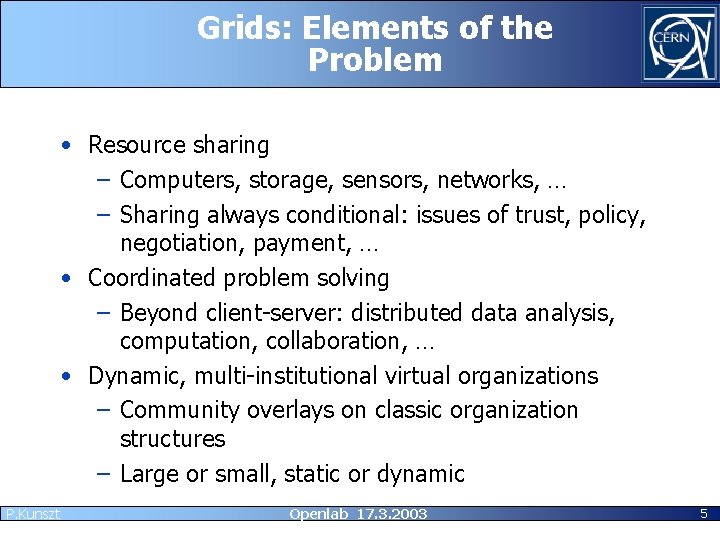

Grids: Elements of the Problem
• Resource sharing
  – Computers, storage, sensors, networks, …
  – Sharing always conditional: issues of trust, policy, negotiation, payment, …
• Coordinated problem solving
  – Beyond client-server: distributed data analysis, computation, collaboration, …
• Dynamic, multi-institutional virtual organizations
  – Community overlays on classic organization structures
  – Large or small, static or dynamic

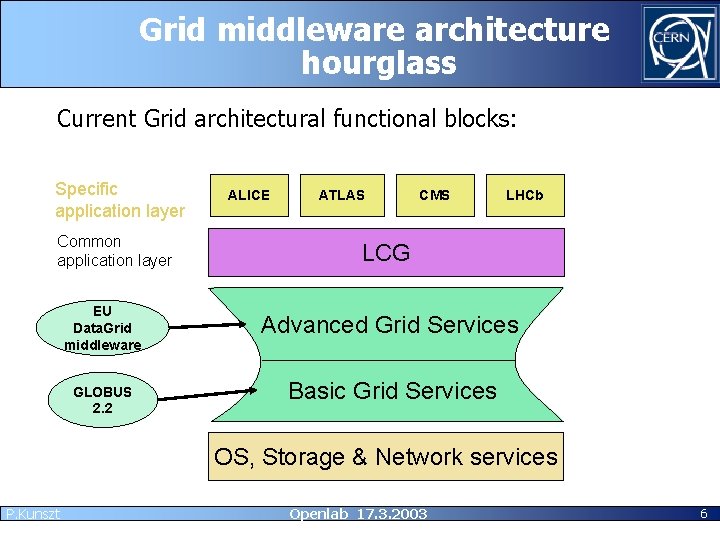

Grid middleware architecture hourglass
Current Grid architectural functional blocks:
• Specific application layer: ALICE, ATLAS, CMS, LHCb
• Common application layer: LCG
• EU DataGrid middleware: Advanced Grid Services
• Globus 2.2: Basic Grid Services
• OS, Storage & Network services

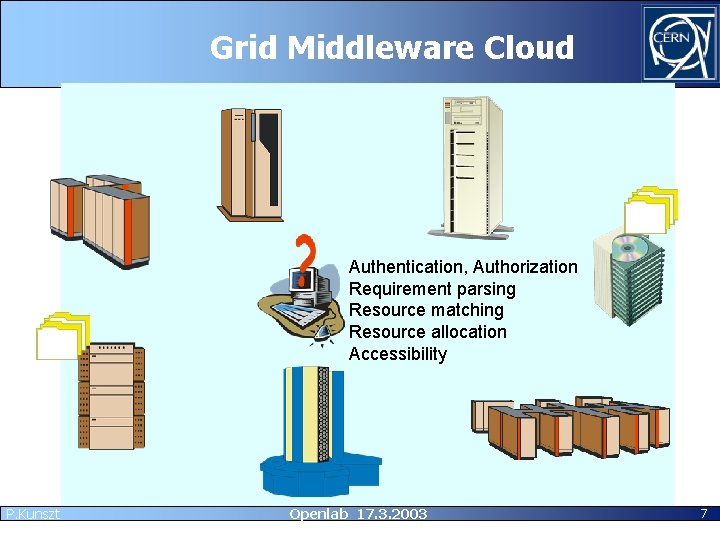

Grid Middleware Cloud
• Authentication, Authorization
• Requirement parsing
• Resource matching
• Resource allocation
• Accessibility

Vision of Grid Data Management
• Distributed Shared Data Storage
• Ubiquitous Data Access
• Transparent Data Transfer and Migration
• Consistency and Robustness
• Optimisation

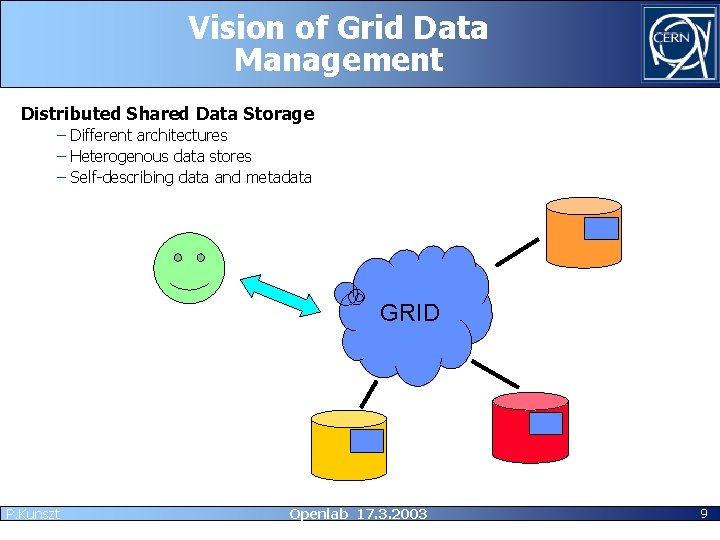

Vision of Grid Data Management
• Distributed Shared Data Storage
  – Different architectures
  – Heterogeneous data stores
  – Self-describing data and metadata

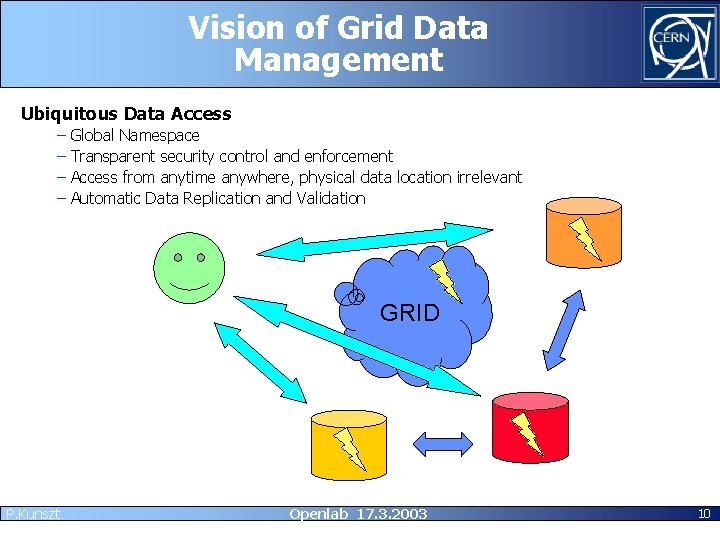

Vision of Grid Data Management
• Ubiquitous Data Access
  – Global Namespace
  – Transparent security control and enforcement
  – Access at any time from anywhere; physical data location is irrelevant
  – Automatic Data Replication and Validation

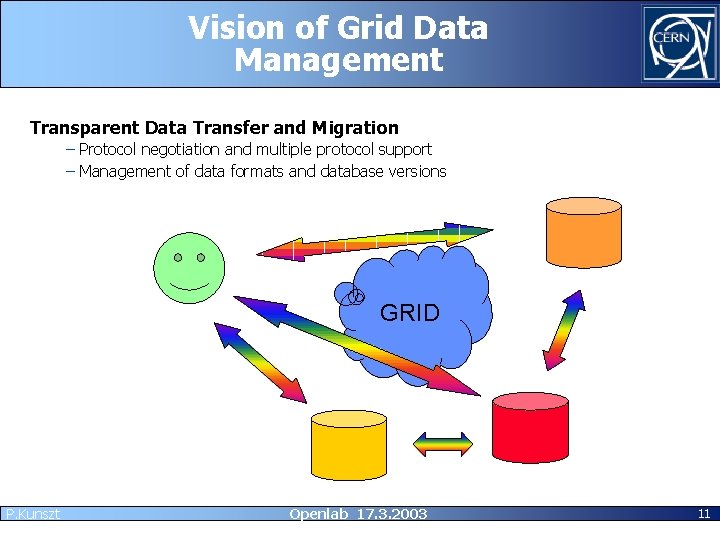

Vision of Grid Data Management
• Transparent Data Transfer and Migration
  – Protocol negotiation and multiple protocol support
  – Management of data formats and database versions

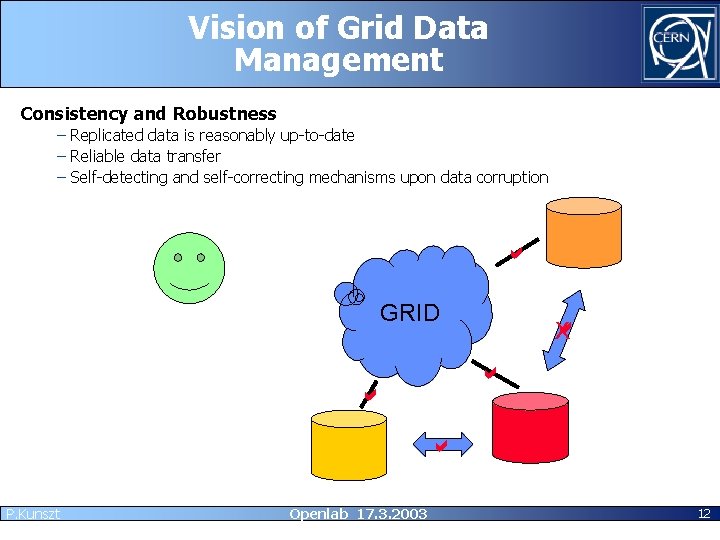

Vision of Grid Data Management
• Consistency and Robustness
  – Replicated data is reasonably up-to-date
  – Reliable data transfer
  – Self-detecting and self-correcting mechanisms upon data corruption

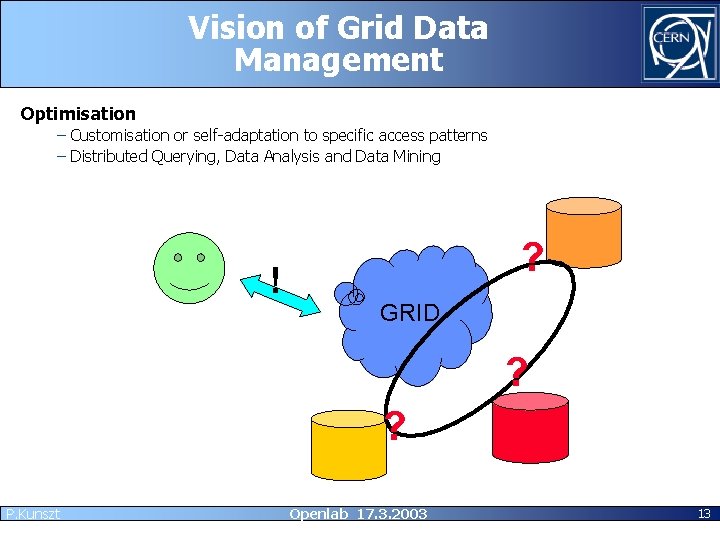

Vision of Grid Data Management
• Optimisation
  – Customisation or self-adaptation to specific access patterns
  – Distributed Querying, Data Analysis and Data Mining

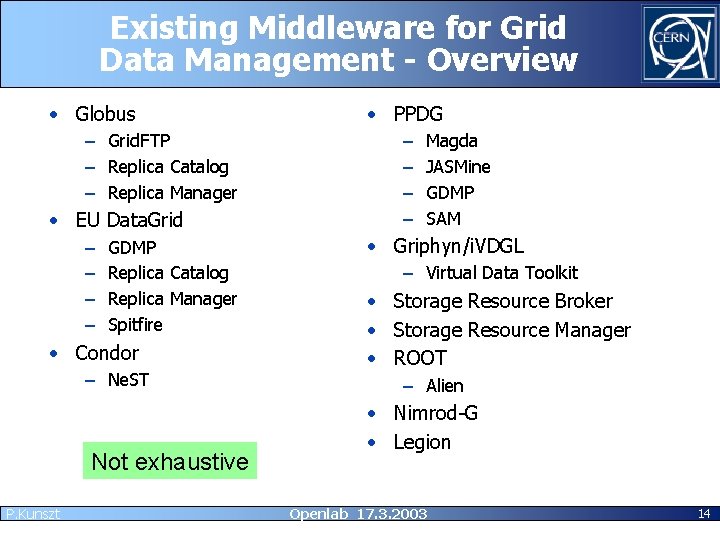

Existing Middleware for Grid Data Management - Overview (not exhaustive)
• Globus
  – GridFTP
  – Replica Catalog
  – Replica Manager
• EU DataGrid
  – GDMP
  – Replica Catalog
  – Replica Manager
  – Spitfire
• Condor
  – NeST
• PPDG
  – Magda
  – JASMine
  – GDMP
  – SAM
• GriPhyN/iVDGL
  – Virtual Data Toolkit
• Storage Resource Broker
• Storage Resource Manager
• ROOT
  – AliEn
• Nimrod-G
• Legion

What you would like to see
• reliable
• available
• powerful
• calm
• cool
• easy to use
• … and nice to look at

Outline
• The Grid as a means of transparent data access
• Current mode of operations at CERN
  – Non-Grid operations
  – Grid operations
• Elements of Grid data access
• Current capabilities of the EU DataGrid/LCG-1 Grid infrastructure
• Outlook

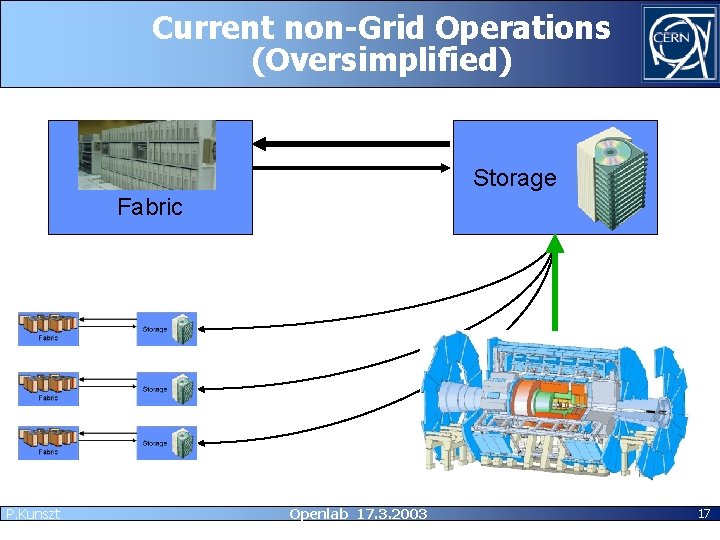

Current non-Grid Operations (Oversimplified)
[Diagram labels: Storage, Fabric]

Current non-Grid Operations
• Planning of
  – Resources (computing and storage)
  – Projects and job schedules
  – Data placement
• Monitoring of
  – Running jobs
  – Available resources
  – Alarms

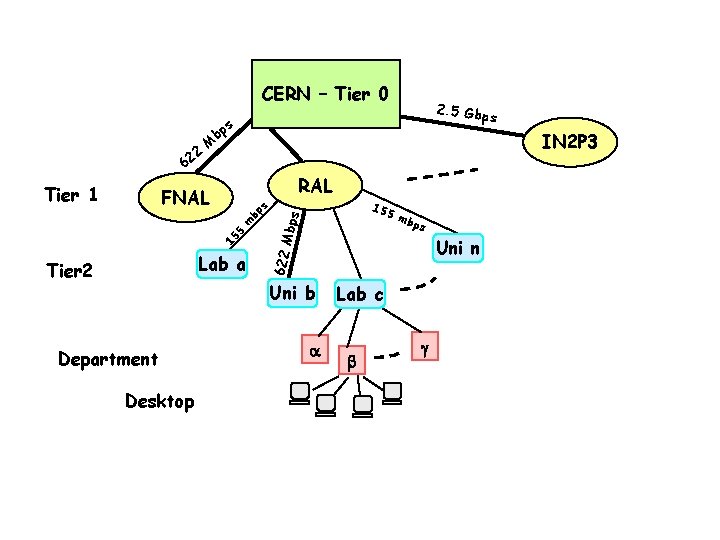

[Figure: tiered computing hierarchy. Recoverable labels: CERN – Tier 0, IN2P3, RAL, FNAL, Tier 1, Tier 2, Lab a, Lab c, Uni b, Uni n, Department, Desktop. Link speeds shown: 155 Mbps, 622 Mbps, 2.5 Gbps.]

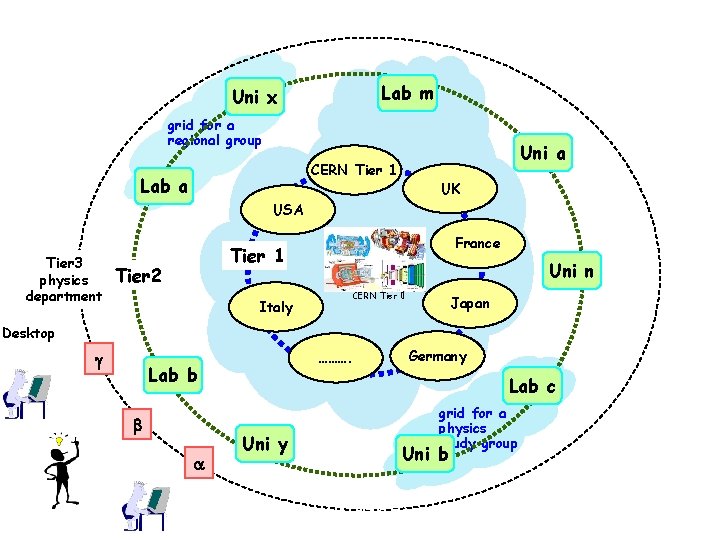

[Figure: the tier hierarchy with Grid overlays. Recoverable labels: CERN Tier 0, CERN Tier 1; Tier 1 in UK, USA, France, Italy, Japan, Germany, …; Tier 2; Tier 3 physics department; Lab a, Lab b, Lab c, Lab m; Uni a, Uni b, Uni n, Uni x, Uni y; Desktop. Overlays: "grid for a regional group", "grid for a physics study group".]

Grid Testbed Today
• Currently largest Grid Testbed: EU DataGrid
  – Not a full-fledged Grid fulfilling the Grid vision
  – Pragmatic: what can be done today
  – Research aspect: trying out novel approaches
• Operation of each Grid Site:
  – Huge effort at each computing center for installation and operations support
  – Local user support is necessary but not sufficient
• Operation of the Grid as a logical entity
  – Complex management: coordination effort among Grid centers concerning Grid middleware updates, but also policies and trust relationships
  – Grid middleware support through many channels
  – Complex interdependencies of Grid middleware
(See talk in the afternoon)


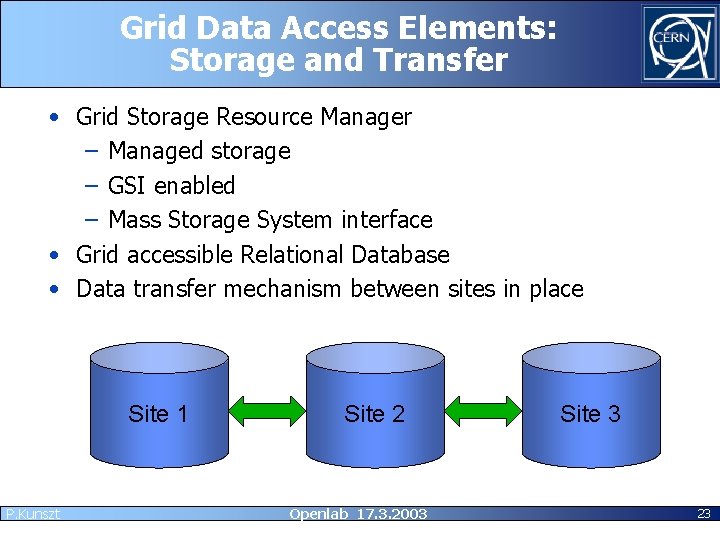

Grid Data Access Elements: Storage and Transfer
• Grid Storage Resource Manager
  – Managed storage
  – GSI enabled
  – Mass Storage System interface
• Grid accessible Relational Database
• Data transfer mechanism between sites in place
[Diagram labels: Site 1, Site 2, Site 3]

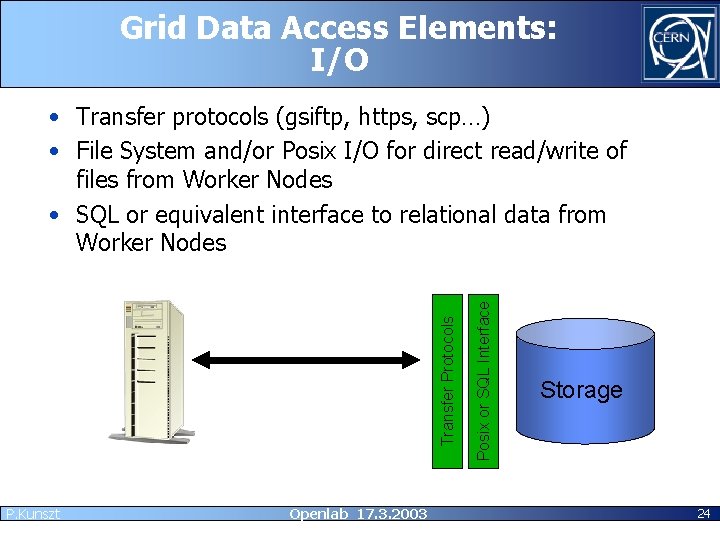

Grid Data Access Elements: I/O
• Transfer protocols (gsiftp, https, scp…)
• File System and/or Posix I/O for direct read/write of files from Worker Nodes
• SQL or equivalent interface to relational data from Worker Nodes
[Diagram labels: Posix or SQL Interface, Transfer Protocols, Storage]

Grid Data Access Elements: Catalogs
• Grid Data Location Service
  – Find location of all identical copies (replicas)
• Metadata Catalogs
  – File/Object specific metadata
  – Logical names catalog
  – Collections
• Grid Database Object Catalog

Higher Level Data Management Services
• Customizable pre- and post-processing services
  – Transparent encryption and decryption of data for transfer and storage
  – External catalog updates
• Optimization Services
  – Location of the best replica based on access ‘cost’
  – Active preemptive replication based on usage patterns
  – Automated replication service based on subscriptions
• Data Consistency Services
  – Data Versioning
  – Consistency between replicas
  – Reliable data transfer service
  – Consistency between catalog data and the actual stored data
• Virtual Data Services
  – On-the-fly data generation
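
One of the consistency services listed on this slide is checking catalog data against what is actually stored. The following is a minimal sketch of such a check, assuming the catalog records a file size and an MD5 checksum for each replica (the Replica Location Service slide lists both as replication metadata). The names `md5_of_file` and `check_replica` are hypothetical and not part of any EDG/LCG API.

```python
import hashlib
from pathlib import Path


def md5_of_file(path, chunk_size=1 << 20):
    """Compute the MD5 hex digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def check_replica(path, catalog_size, catalog_md5):
    """Compare a stored file with its (assumed) catalog record.

    Returns a list of inconsistencies; an empty list means the stored
    file matches the catalog entry.
    """
    stored = Path(path)
    if not stored.is_file():
        return ["file missing"]
    problems = []
    if stored.stat().st_size != catalog_size:
        problems.append("size mismatch")
    if md5_of_file(stored) != catalog_md5.lower():
        problems.append("checksum mismatch")
    return problems
```

A self-correcting service could use a non-empty result to trigger re-replication from another copy; that step is outside this sketch.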


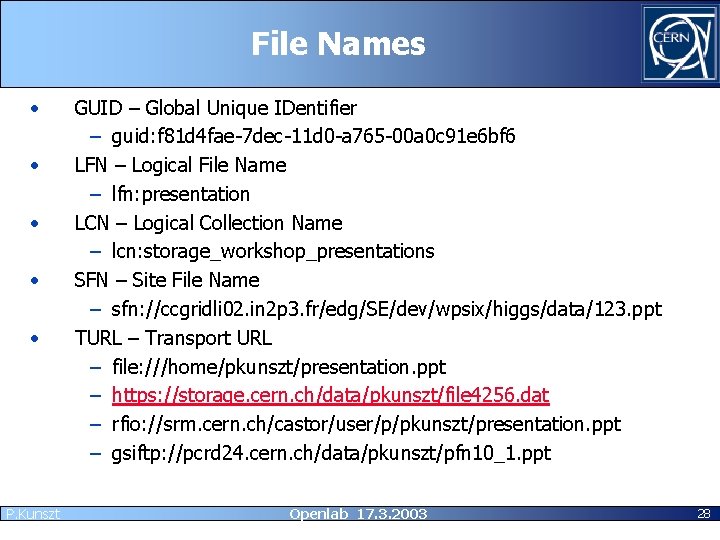

File Names
• GUID – Global Unique IDentifier
  – guid:f81d4fae-7dec-11d0-a765-00a0c91e6bf6
• LFN – Logical File Name
  – lfn:presentation
• LCN – Logical Collection Name
  – lcn:storage_workshop_presentations
• SFN – Site File Name
  – sfn://ccgridli02.in2p3.fr/edg/SE/dev/wpsix/higgs/data/123.ppt
• TURL – Transport URL
  – file:///home/pkunszt/presentation.ppt
  – https://storage.cern.ch/data/pkunszt/file4256.dat
  – rfio://srm.cern.ch/castor/user/p/pkunszt/presentation.ppt
  – gsiftp://pcrd24.cern.ch/data/pkunszt/pfn10_1.ppt
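
The five name types on this slide can be told apart by their scheme prefix. The sketch below illustrates that idea, assuming the prefix alone decides the type and that the four transport schemes shown in the TURL examples are the only ones of interest. `classify_name`, `NAME_KINDS` and `TURL_SCHEMES` are hypothetical names introduced for illustration, not an EDG/LCG API.

```python
# Hypothetical helper: classify a Grid file name by its scheme prefix.
# The scheme-to-kind table follows the slide; the code is illustrative only.

NAME_KINDS = {
    "guid": "GUID (Global Unique IDentifier)",
    "lfn": "LFN (Logical File Name)",
    "lcn": "LCN (Logical Collection Name)",
    "sfn": "SFN (Site File Name)",
}

# Transport schemes that appear in the TURL examples on the slide.
TURL_SCHEMES = {"file", "https", "rfio", "gsiftp"}


def classify_name(name: str) -> str:
    """Return the kind of Grid name, based only on the text before ':'."""
    scheme, sep, _rest = name.partition(":")
    if not sep:
        raise ValueError(f"no scheme in {name!r}")
    scheme = scheme.lower()
    if scheme in NAME_KINDS:
        return NAME_KINDS[scheme]
    if scheme in TURL_SCHEMES:
        return "TURL (Transport URL)"
    raise ValueError(f"unknown scheme {scheme!r}")
```

For example, `classify_name("lfn:presentation")` reports a Logical File Name, while any of the four `file:`, `https:`, `rfio:` or `gsiftp:` examples is reported as a TURL.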

Data Services in EDG/LCG-1: Storage and I/O
• Grid Storage Element for files:
  – Understands SFNs and maps them into TURLs
  – GSI enabled interface: Storage Resource Manager, http://sdm.lbl.gov/srm/documents/joint.docs/SRM.joint.func.design.part1.doc
  – Support of different MSS backends
  – Support for GridFTP and RFIO (CASTOR)
  – Will be deployed this week in the EDG testbed for the first time.
• GridFTP Server for files:
  – Only TURL (gsiftp://)
  – Also GSI enabled
  – Only FTP-like functionality, no management capabilities
• Current remote I/O
  – NFS
  – RFIO
  – GridFTP
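
The SFN-to-TURL mapping done by the Storage Element can be pictured with a toy function. This is only a sketch under a strong assumption: the TURL is formed by replacing the `sfn` scheme with the requested transport protocol and keeping host and path unchanged. A real Storage Element may return a different host or path, for example after staging a file from mass storage. `sfn_to_turl` and `SUPPORTED_PROTOCOLS` are hypothetical names.

```python
# Toy SFN -> TURL mapping. Assumption: only the scheme changes; a real
# Storage Element is free to hand back a different host or path.

SUPPORTED_PROTOCOLS = ("gsiftp", "rfio")  # GridFTP and RFIO, as on the slide


def sfn_to_turl(sfn: str, protocol: str) -> str:
    """Map a Site File Name to a Transport URL for the given protocol."""
    prefix = "sfn://"
    if not sfn.startswith(prefix):
        raise ValueError(f"not an SFN: {sfn!r}")
    if protocol not in SUPPORTED_PROTOCOLS:
        raise ValueError(f"unsupported protocol: {protocol!r}")
    host, slash, path = sfn[len(prefix):].partition("/")
    if not host or not slash:
        raise ValueError(f"SFN needs a host and a path: {sfn!r}")
    return f"{protocol}://{host}/{path}"
```

A client would then hand the returned TURL to the matching transfer or I/O library.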

Data Services in EDG/LCG-1: Database access
• Spitfire
  – Thin client for GSI-enabled database access
  – Customizable, API exposed through a Web Service (WSDL)
  – Not suitable for large result sets
  – Not used by HEP applications yet

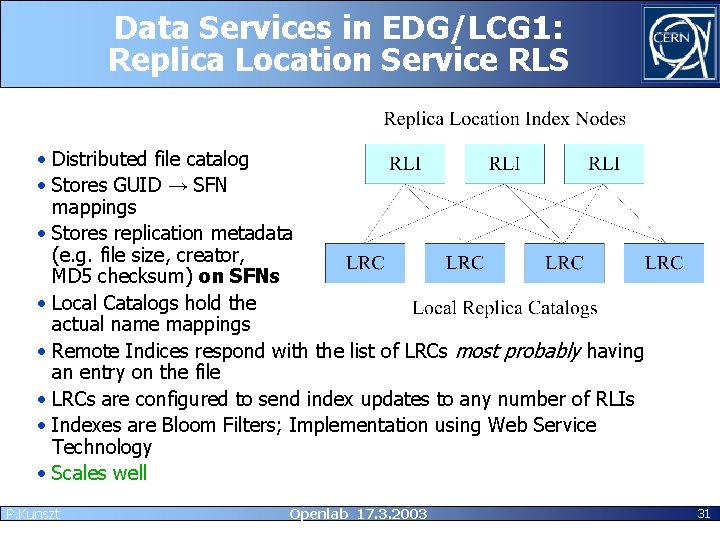

Data Services in EDG/LCG-1: Replica Location Service (RLS)
• Distributed file catalog
• Stores GUID → SFN mappings
• Stores replication metadata (e.g. file size, creator, MD5 checksum) on SFNs
• Local Catalogs (LRCs) hold the actual name mappings
• Remote Indices (RLIs) respond with the list of LRCs most probably having an entry on the file
• LRCs are configured to send index updates to any number of RLIs
• Indexes are Bloom Filters
• Implementation using Web Service Technology
• Scales well
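
The interplay of LRCs, RLIs and Bloom filters can be sketched in a few lines. This is an illustration of the idea only, not the EDG implementation: the filter size, the number of hash functions, the use of SHA-256, and all class and function names are assumptions. It shows why an index answers "most probably": a Bloom filter never misses a registered GUID but can report false positives, so the LRC itself gives the final answer.

```python
import hashlib


class BloomFilter:
    """Fixed-size Bloom filter: no false negatives, occasional false positives."""

    def __init__(self, num_bits=1024, num_hashes=3):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = 0  # a Python int used as a bit array

    def _positions(self, key):
        # Derive num_hashes bit positions by salting the key with an index.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, key):
        for pos in self._positions(key):
            self.bits |= 1 << pos

    def __contains__(self, key):
        return all((self.bits >> pos) & 1 for pos in self._positions(key))


class LocalReplicaCatalog:
    """Holds the actual GUID -> SFN mappings for one site."""

    def __init__(self, name):
        self.name = name
        self.mappings = {}  # GUID -> set of SFNs

    def register(self, guid, sfn):
        self.mappings.setdefault(guid, set()).add(sfn)

    def build_index(self):
        """Summarise all known GUIDs in a Bloom filter to send to an RLI."""
        bloom = BloomFilter()
        for guid in self.mappings:
            bloom.add(guid)
        return bloom


class ReplicaLocationIndex:
    """Keeps one Bloom filter per LRC and names the LRCs worth asking."""

    def __init__(self):
        self.filters = {}  # LRC name -> BloomFilter

    def update(self, lrc):
        self.filters[lrc.name] = lrc.build_index()

    def candidates(self, guid):
        return sorted(n for n, bloom in self.filters.items() if guid in bloom)


def locate(guid, rli, lrcs):
    """Ask the index for candidate LRCs, then confirm with each LRC."""
    replicas = set()
    for name in rli.candidates(guid):
        replicas |= lrcs[name].mappings.get(guid, set())
    return replicas
```

Because the index only stores compact filters rather than full mappings, index updates stay small even when an LRC holds many entries, which is one plausible reading of the "scales well" claim.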

Data Services in EDG/LCG-1: Replica Metadata Catalog
• Single logical service for replication metadata
  – Deployment possible as a high-availability service (Web service technology)
  – Possibility of synchronized data on many sites to avoid a single site entry point (using underlying database technology)
• Holds Logical File Name (LFN) → GUID mappings (“aliases”)
• Contains LCN → set of GUIDs mappings (“collections”)
• Holds replication metadata on LFNs, LCNs and GUIDs
• Might hold a small amount of replica-specific application metadata
  – O(10) items
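
The two mappings held by the Replica Metadata Catalog can be modelled as two dictionaries. The sketch below is a toy in-memory model, assuming each LFN aliases exactly one GUID while a GUID may have several LFNs; the class and method names are hypothetical and do not reflect the actual service interface.

```python
class ReplicaMetadataCatalog:
    """Toy in-memory model of the alias and collection mappings."""

    def __init__(self):
        self.aliases = {}      # LFN -> GUID ("aliases")
        self.collections = {}  # LCN -> set of GUIDs ("collections")

    def add_alias(self, lfn, guid):
        # Assumption: an LFN may not be re-pointed to a different GUID.
        existing = self.aliases.get(lfn)
        if existing is not None and existing != guid:
            raise ValueError(f"{lfn} already aliases {existing}")
        self.aliases[lfn] = guid

    def add_to_collection(self, lcn, guid):
        self.collections.setdefault(lcn, set()).add(guid)

    def resolve(self, name):
        """Return the set of GUIDs behind an LFN or an LCN."""
        if name.startswith("lfn:"):
            return {self.aliases[name]}
        if name.startswith("lcn:"):
            return set(self.collections[name])
        raise ValueError(f"expected an lfn: or lcn: name, got {name!r}")
```

The GUIDs returned by `resolve` are what a client would then pass to the Replica Location Service to find the physical copies.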

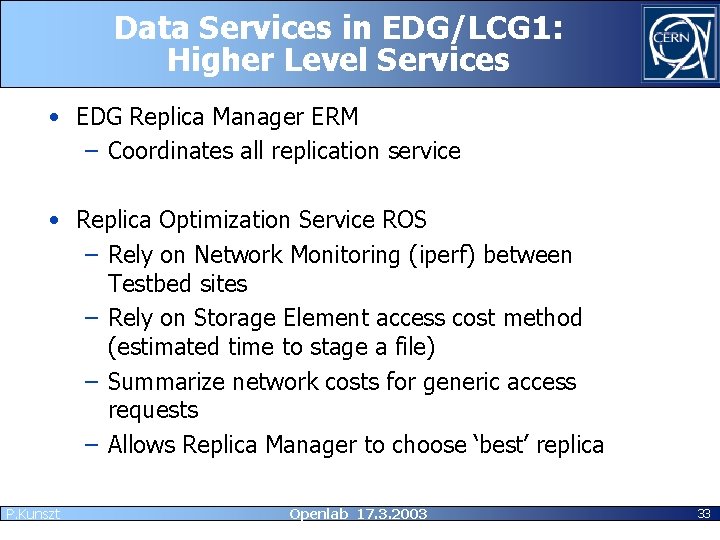

Data Services in EDG/LCG-1: Higher Level Services
• EDG Replica Manager (ERM)
  – Coordinates all replication services
• Replica Optimization Service (ROS)
  – Relies on network monitoring (iperf) between Testbed sites
  – Relies on the Storage Element access cost method (estimated time to stage a file)
  – Summarizes network costs for generic access requests
  – Allows the Replica Manager to choose the ‘best’ replica
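
The replica choice described on this slide can be sketched as a cost minimisation. The cost model here is an assumption made for illustration: access cost is taken as the Storage Element's estimated staging time plus the transfer time implied by a measured bandwidth. The slide does not say how the Replica Optimization Service actually combines the two inputs, and `ReplicaInfo`, `access_cost` and `best_replica` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReplicaInfo:
    sfn: str
    bandwidth_mbps: float  # measured throughput to the requesting site
    stage_seconds: float   # Storage Element estimate of time to stage the file


def access_cost(replica, file_size_mb):
    """Estimated seconds to obtain the file: staging plus network transfer.

    file_size_mb is in megabytes, bandwidth in megabits per second.
    """
    if replica.bandwidth_mbps <= 0:
        raise ValueError("bandwidth must be positive")
    transfer_seconds = file_size_mb * 8 / replica.bandwidth_mbps
    return replica.stage_seconds + transfer_seconds


def best_replica(replicas, file_size_mb):
    """Pick the replica with the lowest estimated access cost."""
    return min(replicas, key=lambda r: access_cost(r, file_size_mb))
```

Under this model a disk-resident copy behind a slow link wins for small files, while a tape-resident copy behind a fast link can win for large ones, because the fixed staging delay is amortised over the transfer.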

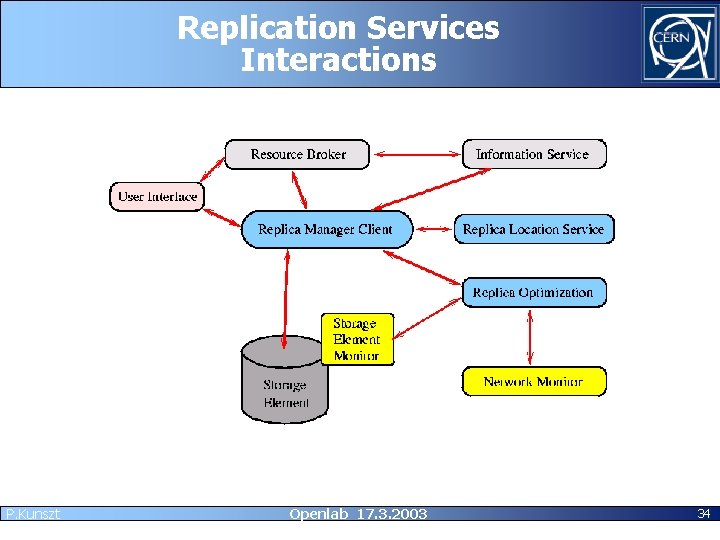

Replication Services Interactions

Outlook
• We took only the first step on the long road to fulfilling the Grid vision
• Promising initial results but a lot of work still needs to be done
• Industry has only recently joined the Grid community through GGF; industrial-strength middleware solutions are not available yet
• By the end of this year we’ll have a first experience with a Grid infrastructure that was built for production from the start (LCG-1).

Open Grid Services Architecture
• OGSA is a framework for a Grid architecture based on the Web Service paradigm.
  – Every service is a Grid Service. The main difference from Web Services is that Grid Services may be stateful.
  – These Grid Services interoperate through well-understood interfaces.
• The reference implementation, Globus Toolkit 3, is still in beta; the first release is expected this summer.
• Depending on the evolution until the end of the year, we will see whether OGSA becomes stable enough to be considered for integration into LCG next year.

Thank you for your attention
