
GRID

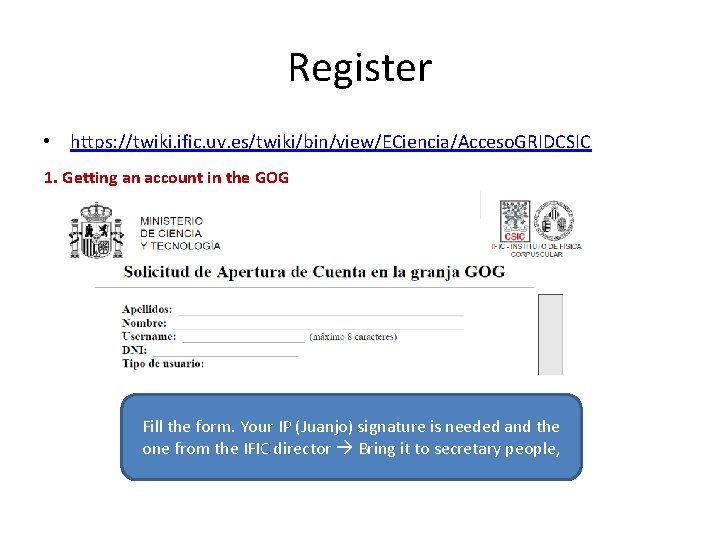

Register

• https://twiki.ific.uv.es/twiki/bin/view/ECiencia/AccesoGRIDCSIC

1. Getting an account in the GOG

Fill in the form. It needs the signature of your IP (Juanjo) and that of the IFIC director. Then bring it to the secretary's office.

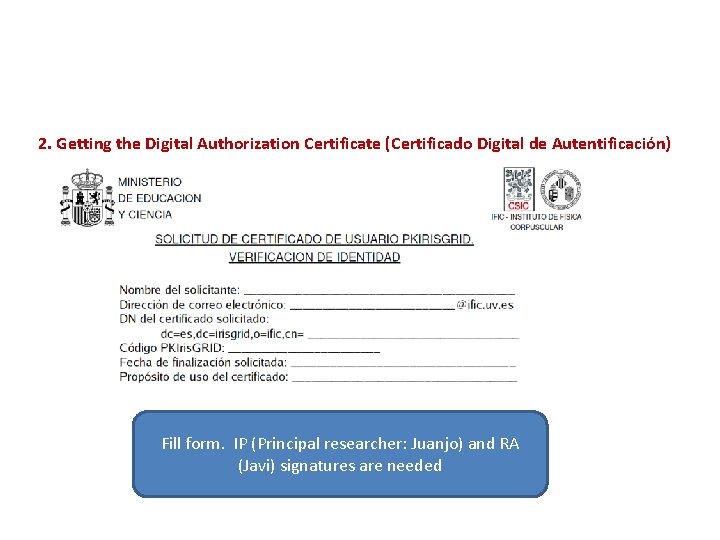

2. Getting the Digital Authentication Certificate (Certificado Digital de Autentificación)

Fill in the form. The signatures of the IP (principal researcher: Juanjo) and of the RA (Javi) are needed.

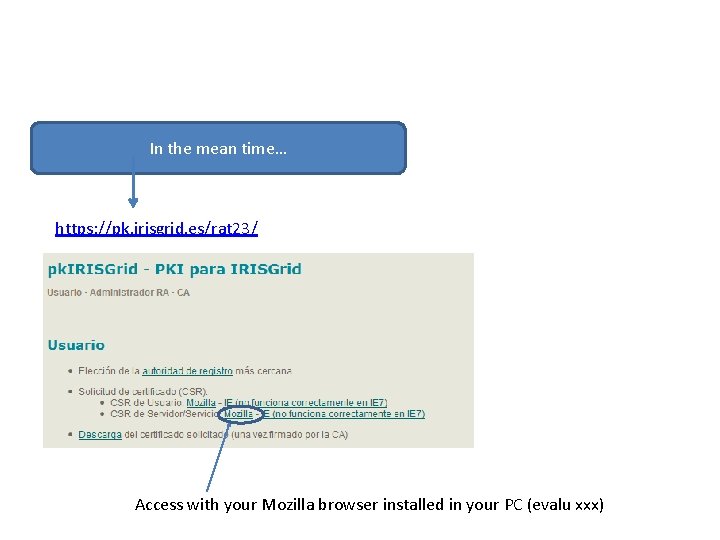

In the meantime…

https://pk.irisgrid.es/rat23/

Access it with the Mozilla browser installed on your PC (evalu xxx).

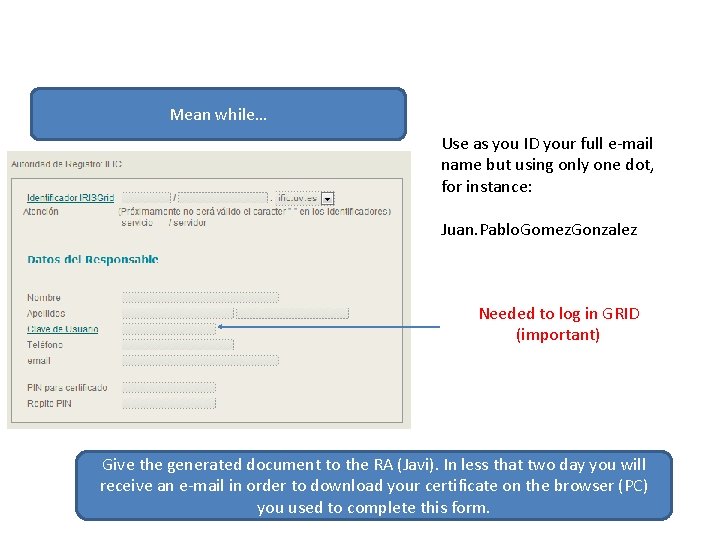

Meanwhile…

Use your full e-mail name as your ID, but with only one dot, for instance: JuanPablo.GomezGonzalez. This ID is needed to log in to GRID (important).

Give the generated document to the RA (Javi). In less than two days you will receive an e-mail that lets you download your certificate in the browser (on the PC) you used to complete this form.

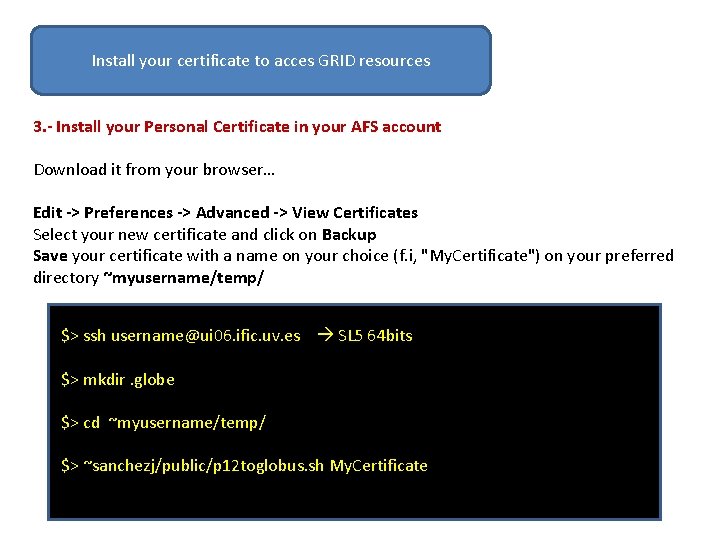

Install your certificate to access GRID resources

3. - Install your Personal Certificate in your AFS account

Download it from your browser: Edit -> Preferences -> Advanced -> View Certificates. Select your new certificate and click on Backup. Save your certificate under a name of your choice (e.g. "MyCertificate") in your preferred directory, ~myusername/temp/.

Then log in to the User Interface machine (SL5, 64 bits) and convert the certificate:

$> ssh username@ui06.ific.uv.es
$> mkdir .globus
$> cd ~myusername/temp/
$> ~sanchezj/public/p12toglobus.sh MyCertificate

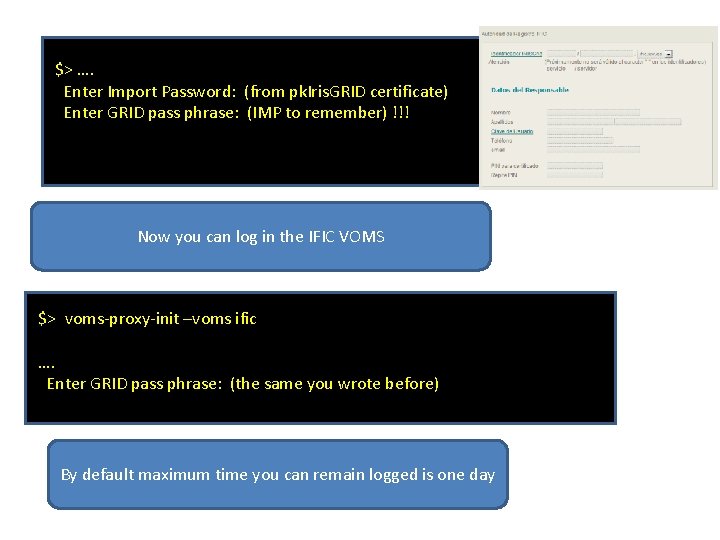

$> …
Enter Import Password: (from the pkIrisGRID certificate)
Enter GRID pass phrase: (IMPORTANT to remember!!!)

Now you can log in to the IFIC VOMS:

$> voms-proxy-init --voms ific
…
Enter GRID pass phrase: (the same one you wrote before)

By default, the maximum time you can remain logged in is one day.
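
Since the proxy only lasts for a limited time, it can help to check how much lifetime is left before submitting jobs. The following is a minimal sketch, not part of the original slides: the helper name `proxy_needs_renewal` and the one-hour threshold are hypothetical, and the usage comment assumes that `voms-proxy-info --timeleft` prints the remaining lifetime in seconds.

```shell
# Decide whether the proxy should be renewed (hypothetical helper).
# $1: seconds of lifetime left (empty counts as 0)
# $2: minimum acceptable seconds (default 3600, an arbitrary choice)
# Returns 0 (true) when renewal is needed, 1 otherwise.
proxy_needs_renewal() {
    left=${1:-0}
    min=${2:-3600}
    [ "$left" -lt "$min" ]
}

# Usage on the UI (assumes the VOMS client tools are installed):
#   left=$(voms-proxy-info --timeleft 2>/dev/null)
#   if proxy_needs_renewal "$left"; then voms-proxy-init --voms ific; fi
```

Keeping the decision in a small function separates it from the grid commands, so the threshold can be changed in one place.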

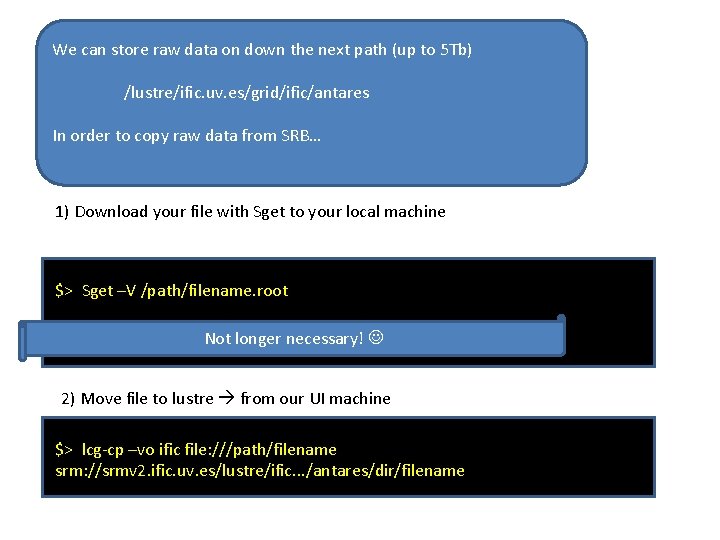

We can store raw data under the following path (up to 5 TB):

/lustre/ific.uv.es/grid/ific/antares

In order to copy raw data from SRB…

1) Download your file with Sget to your local machine (no longer necessary!)
$> Sget -V /path/filename.root
$> scp filename.root username@ui06.ific.uv.es:/path/

2) Move the file to lustre from our UI machine
$> lcg-cp --vo ific file:///path/filename srm://srmv2.ific.uv.es/lustre/ific.../antares/dir/filename

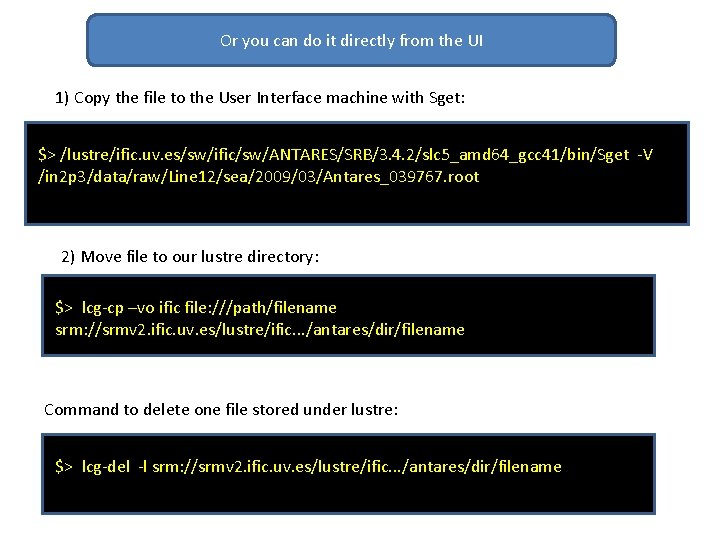

Or you can do it directly from the UI:

1) Copy the file to the User Interface machine with Sget:
$> /lustre/ific.uv.es/sw/ific/sw/ANTARES/SRB/3.4.2/slc5_amd64_gcc41/bin/Sget -V /in2p3/data/raw/Line12/sea/2009/03/Antares_039767.root

2) Move the file to our lustre directory:
$> lcg-cp --vo ific file:///path/filename srm://srmv2.ific.uv.es/lustre/ific.../antares/dir/filename

Command to delete one file stored under lustre:
$> lcg-del -l srm://srmv2.ific.uv.es/lustre/ific.../antares/dir/filename
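
The three slashes in `file:///path/filename` are simply the `file://` scheme followed by an absolute path. As a rough sketch (the function name `lcg_cp_command` is hypothetical, and the SRM destination must be supplied in full by the user, since the slides elide part of it), a small helper can build the copy command and print it for inspection before anything is run:

```shell
# Print the lcg-cp command that copies a local file to an SRM destination.
# $1: absolute local path
# $2: full SRM URL of the destination (user-supplied, not guessed here)
lcg_cp_command() {
    case $1 in
        /*) ;;  # absolute path: "file://" + "/path" gives "file:///path"
        *)  echo "local path must be absolute: $1" >&2; return 1 ;;
    esac
    printf 'lcg-cp --vo ific file://%s %s\n' "$1" "$2"
}

# Dry run, keeping the elided destination exactly as written on the slide:
#   lcg_cp_command /path/filename 'srm://srmv2.ific.uv.es/lustre/ific.../antares/dir/filename'
```

Printing the command instead of executing it is a deliberate choice here: it lets you check the source and destination before any data is moved.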

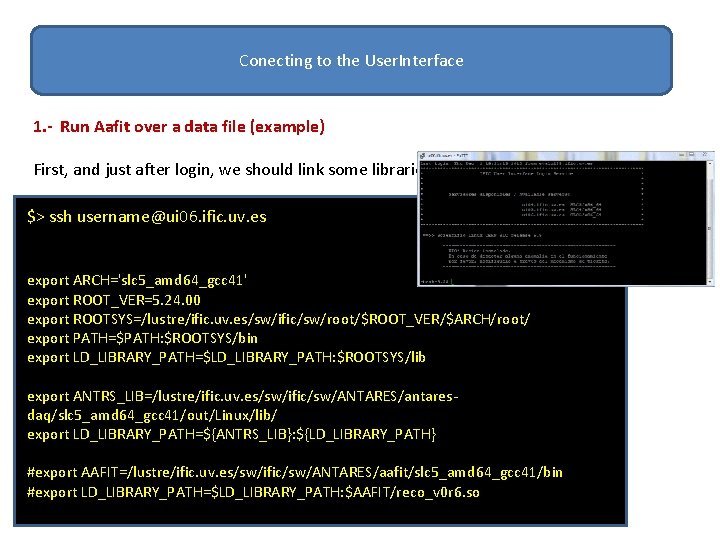

Connecting to the User Interface

1. - Run Aafit over a data file (example)

First, just after logging in, we should set the paths to some libraries:

$> ssh username@ui06.ific.uv.es

export ARCH='slc5_amd64_gcc41'
export ROOT_VER=5.24.00
export ROOTSYS=/lustre/ific.uv.es/sw/ific/sw/root/$ROOT_VER/$ARCH/root/
export PATH=$PATH:$ROOTSYS/bin
export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$ROOTSYS/lib
export ANTRS_LIB=/lustre/ific.uv.es/sw/ific/sw/ANTARES/antaresdaq/slc5_amd64_gcc41/out/Linux/lib/
export LD_LIBRARY_PATH=${ANTRS_LIB}:${LD_LIBRARY_PATH}
#export AAFIT=/lustre/ific.uv.es/sw/ific/sw/ANTARES/aafit/slc5_amd64_gcc41/bin
#export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$AAFIT/reco_v0r6.so
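
If these exports are run more than once in the same session, the same directories are appended again each time, and an initially empty LD_LIBRARY_PATH ends up with a leading colon. A possible way around both, sketched here with a hypothetical helper `path_append` that is not part of the original setup:

```shell
# Append directory $2 to the colon-separated list $1 unless it is
# already present. Prints the resulting list.
path_append() {
    case ":$1:" in
        *":$2:"*) printf '%s\n' "$1" ;;     # already in the list
        "::")     printf '%s\n' "$2" ;;     # list was empty
        *)        printf '%s\n' "$1:$2" ;;  # append at the end
    esac
}

# Usage with the variables defined above:
#   PATH=$(path_append "$PATH" "$ROOTSYS/bin"); export PATH
#   LD_LIBRARY_PATH=$(path_append "$LD_LIBRARY_PATH" "$ROOTSYS/lib"); export LD_LIBRARY_PATH
```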

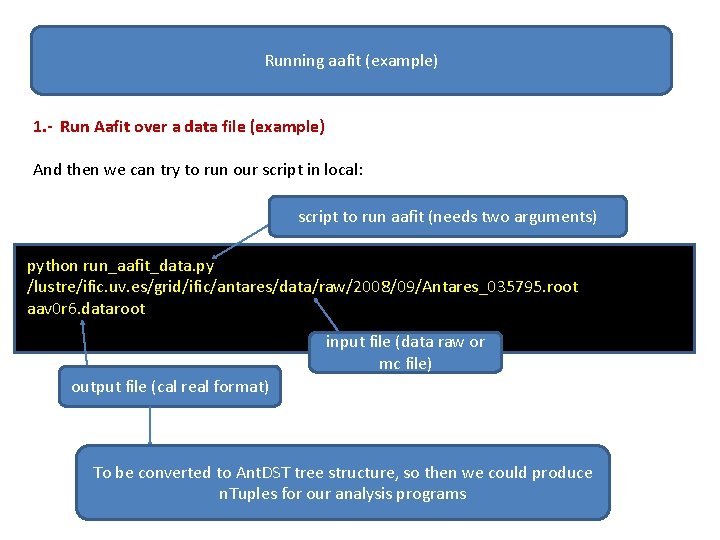

Running aafit (example)

1. - Run Aafit over a data file (example)

And then we can try to run our script locally. The script that runs aafit needs two arguments: the input file (raw data or mc file) and the output file (cal real format).

$> python run_aafit_data.py /lustre/ific.uv.es/grid/ific/antares/data/raw/2008/09/Antares_035795.root aav0r6.dataroot

The output is to be converted to the AntDST tree structure, so that we can then produce nTuples for our analysis programs.

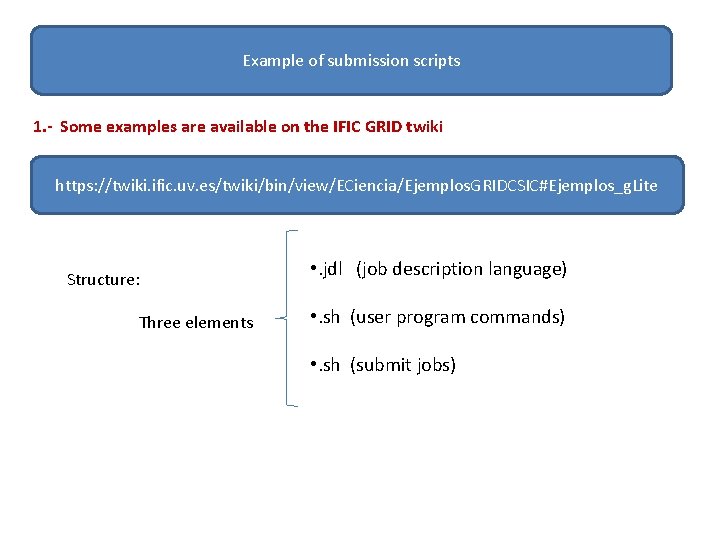

Example of submission scripts

1. - Some examples are available on the IFIC GRID twiki:

https://twiki.ific.uv.es/twiki/bin/view/ECiencia/EjemplosGRIDCSIC#Ejemplos_gLite

Structure: three elements
• .jdl (job description language)
• .sh (user program commands)
• .sh (submit jobs)
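
To give an idea of how the three elements fit together, here is a minimal sketch that is not taken from the twiki examples. It assumes the common JDL attributes `Executable`, `StdOutput`, `StdError`, `InputSandbox` and `OutputSandbox`. The function `write_jdl` and the file names `job.out`, `job.err`, `myjob.jdl` and `run_job.sh` are hypothetical. The submit line in the comment assumes that `glite-wms-job-submit` accepts `-a` (automatic delegation) and `-o` (file in which to store the job identifier).

```shell
# Write a minimal .jdl file (hypothetical helper).
# $1: path of the .jdl file to create
# $2: name of the .sh script with the user program commands
write_jdl() {
    cat > "$1" <<EOF
Executable    = "$2";
StdOutput     = "job.out";
StdError      = "job.err";
InputSandbox  = {"$2"};
OutputSandbox = {"job.out", "job.err"};
EOF
}

# What a submit script could then do on the UI:
#   write_jdl myjob.jdl run_job.sh
#   glite-wms-job-submit -a -o jobId1 myjob.jdl
```

The file written with `-o` would then play the role of the `jobId1` argument passed with `-i` to the job management commands.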

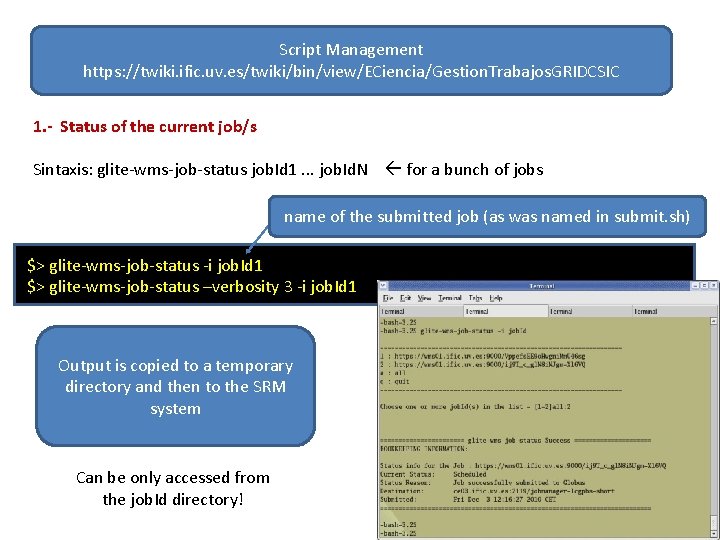

Script Management

https://twiki.ific.uv.es/twiki/bin/view/ECiencia/GestionTrabajosGRIDCSIC

1. - Status of the current job/s

Syntax: glite-wms-job-status jobId1 ... jobIdN (for a bunch of jobs)

$> glite-wms-job-status -i jobId1
$> glite-wms-job-status --verbosity 3 -i jobId1

Here jobId1 is the name of the submitted job (as it was named in submit.sh).

Output is copied to a temporary directory and then to the SRM system. It can only be accessed from the jobId directory!

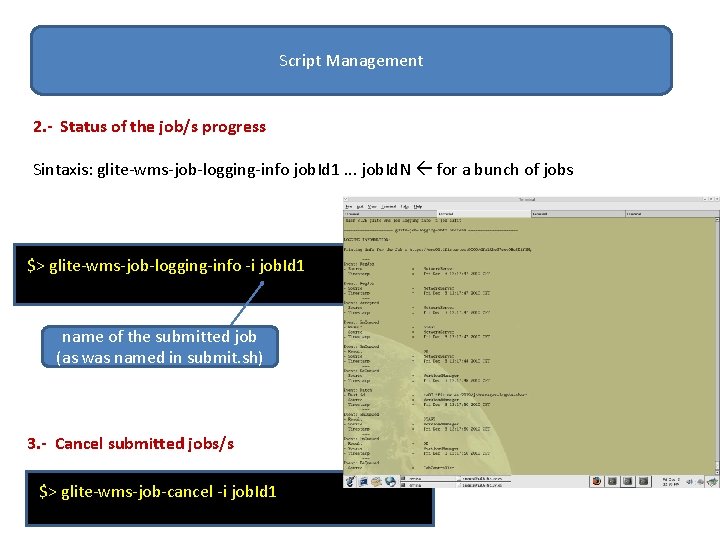

Script Management

2. - Status of the job/s progress

Syntax: glite-wms-job-logging-info jobId1 ... jobIdN (for a bunch of jobs)

$> glite-wms-job-logging-info -i jobId1

Here jobId1 is the name of the submitted job (as it was named in submit.sh).

3. - Cancel submitted job/s

$> glite-wms-job-cancel -i jobId1

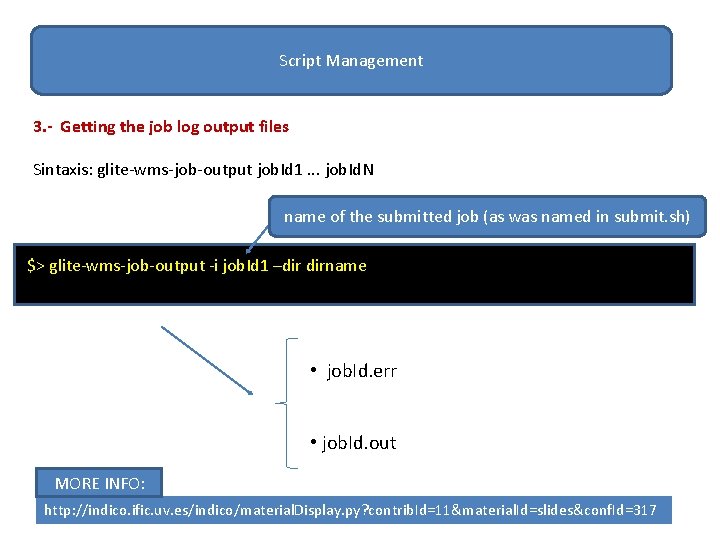

Script Management

4. - Getting the job log output files

Syntax: glite-wms-job-output jobId1 ... jobIdN

$> glite-wms-job-output -i jobId1 --dir dirname

Here jobId1 is the name of the submitted job (as it was named in submit.sh). The output files are:
• jobId.err
• jobId.out

MORE INFO: http://indico.ific.uv.es/indico/materialDisplay.py?contribId=11&materialId=slides&confId=317
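
When many jobs are running, it is handy to reduce the status report to the state itself before fetching any output. The sketch below is an illustration only: it assumes the report contains a line starting with `Current Status:`, and that `--noint` suppresses interactive prompts. The function name `current_status` is hypothetical.

```shell
# Read a glite-wms-job-status report on stdin and print only the text
# that follows "Current Status:" (one line per job in the report).
current_status() {
    sed -n 's/^Current Status:[[:space:]]*//p'
}

# Usage on the UI:
#   glite-wms-job-status --noint -i jobId1 | current_status
```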

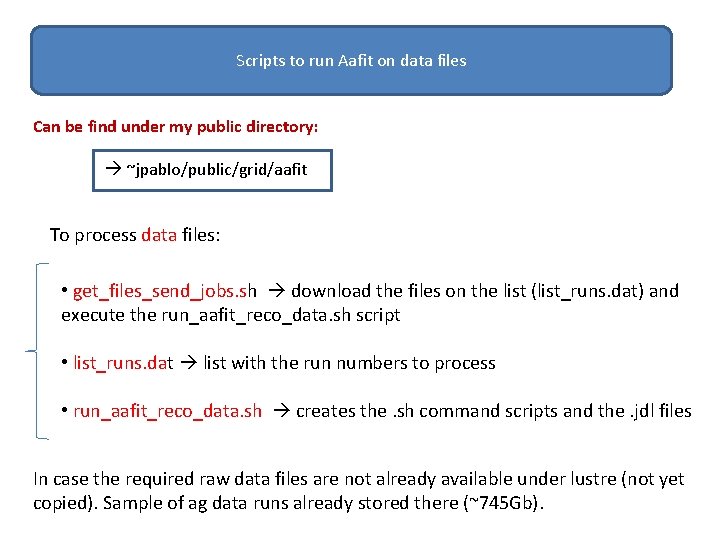

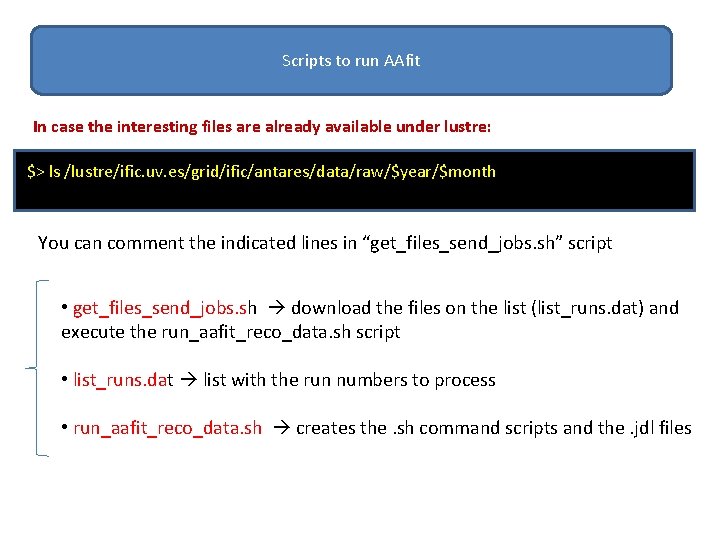

Scripts to run Aafit on data files

They can be found under my public directory: ~jpablo/public/grid/aafit

To process data files in case the required raw data files are not already available under lustre (not yet copied):

• get_files_send_jobs.sh: downloads the files on the list (list_runs.dat) and executes the run_aafit_reco_data.sh script
• list_runs.dat: list with the run numbers to process
• run_aafit_reco_data.sh: creates the .sh command scripts and the .jdl files

A sample of ag data runs is already stored there (~745 GB).

Scripts to run AAfit

In case the files of interest are already available under lustre:

$> ls /lustre/ific.uv.es/grid/ific/antares/data/raw/$year/$month

you can comment out the indicated lines in the “get_files_send_jobs.sh” script.

• get_files_send_jobs.sh: downloads the files on the list (list_runs.dat) and executes the run_aafit_reco_data.sh script
• list_runs.dat: list with the run numbers to process
• run_aafit_reco_data.sh: creates the .sh command scripts and the .jdl files
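
To find out which of the two cases applies to each run, one could compare the run list against the lustre directory. This is only a sketch under two assumptions that the slides do not confirm: list_runs.dat holds one run number per line, and raw files are named Antares_NNNNNN.root with the run number zero-padded to six digits (as in Antares_035795.root). The helpers `run_filename` and `missing_runs` are hypothetical and not part of the scripts in the public directory.

```shell
# Build the raw-data file name for a run number, zero-padded to six digits.
run_filename() {
    n=$1
    # Strip leading zeros so printf does not read the number as octal.
    while [ "${n#0}" != "$n" ]; do n=${n#0}; done
    printf 'Antares_%06d.root\n' "${n:-0}"
}

# Print the run numbers (read from stdin, one per line) whose raw file
# is not present in directory $1.
missing_runs() {
    while read -r run; do
        [ -n "$run" ] || continue
        [ -e "$1/$(run_filename "$run")" ] || printf '%s\n' "$run"
    done
}

# Usage on the UI:
#   missing_runs /lustre/ific.uv.es/grid/ific/antares/data/raw/$year/$month < list_runs.dat
```

If the command prints nothing, every listed run is already under lustre and the download lines can stay commented out.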

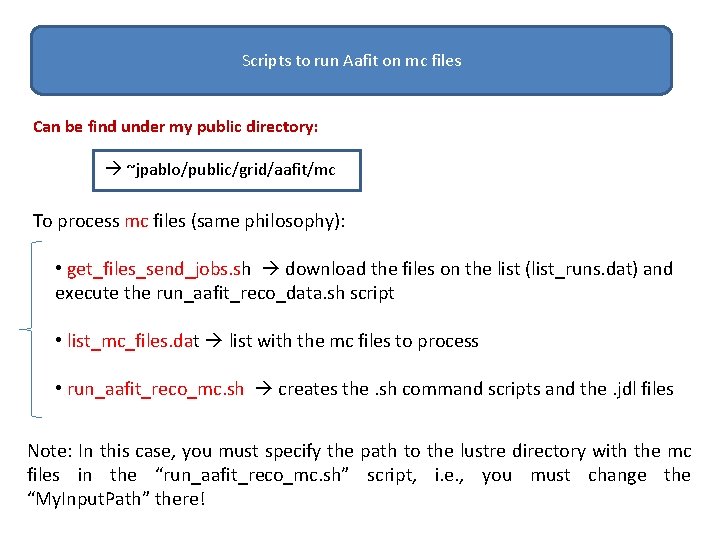

Scripts to run Aafit on mc files

They can be found under my public directory: ~jpablo/public/grid/aafit/mc

To process mc files (same philosophy):

• get_files_send_jobs.sh: downloads the files on the list (list_runs.dat) and executes the run_aafit_reco_data.sh script
• list_mc_files.dat: list with the mc files to process
• run_aafit_reco_mc.sh: creates the .sh command scripts and the .jdl files

Note: in this case you must specify the path to the lustre directory with the mc files in the “run_aafit_reco_mc.sh” script, i.e., you must change “MyInputPath” there!

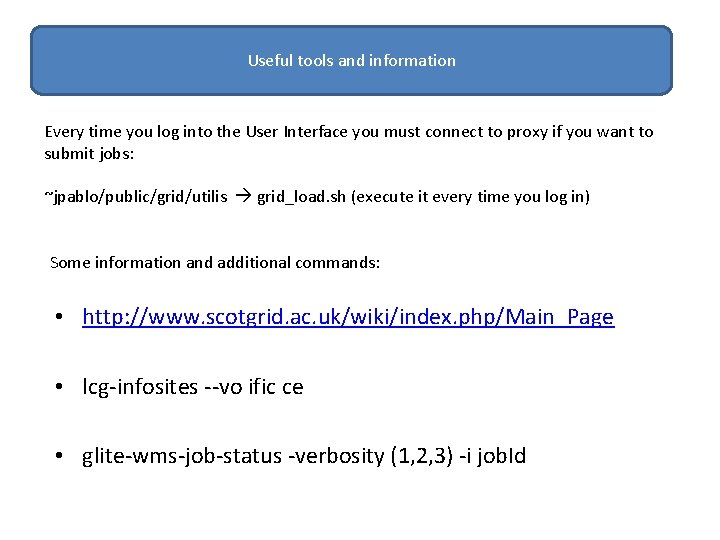

Useful information

Useful tools and information

Every time you log in to the User Interface you must connect to the proxy if you want to submit jobs:

~jpablo/public/grid/utilis
grid_load.sh (execute it every time you log in)

Some information and additional commands:
• http://www.scotgrid.ac.uk/wiki/index.php/Main_Page
• lcg-infosites --vo ific ce
• glite-wms-job-status --verbosity (1, 2, 3) -i jobId
