
Green Datacenter Initiatives at SDSC
Matt Campbell
SDSC Data Center Services Manager
mattc@sdsc.edu
SAN DIEGO SUPERCOMPUTER CENTER at the UNIVERSITY OF CALIFORNIA, SAN DIEGO

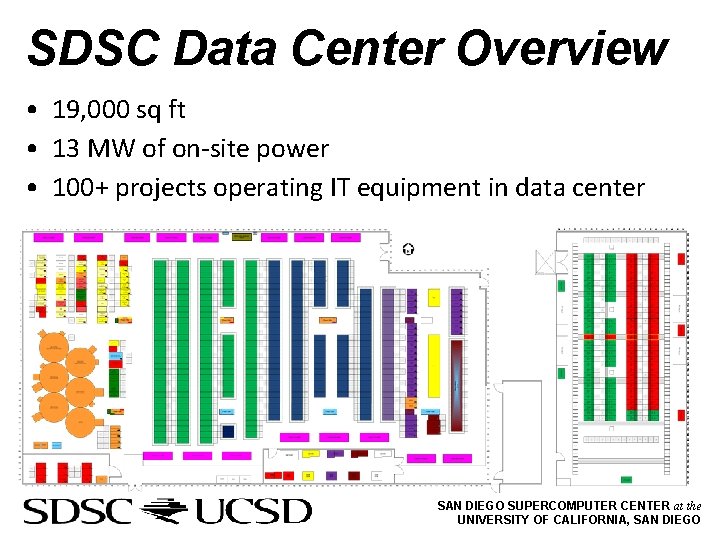

SDSC Data Center Overview
• 19,000 sq ft
• 13 MW of on-site power
• 100+ projects operating IT equipment in data center

Key Points
• How do we illustrate energy loss and quantify savings?
    • Establishing a common unit of measurement for data centers
• What areas can be improved?
    • Mechanical
    • Electrical
    • IT equipment

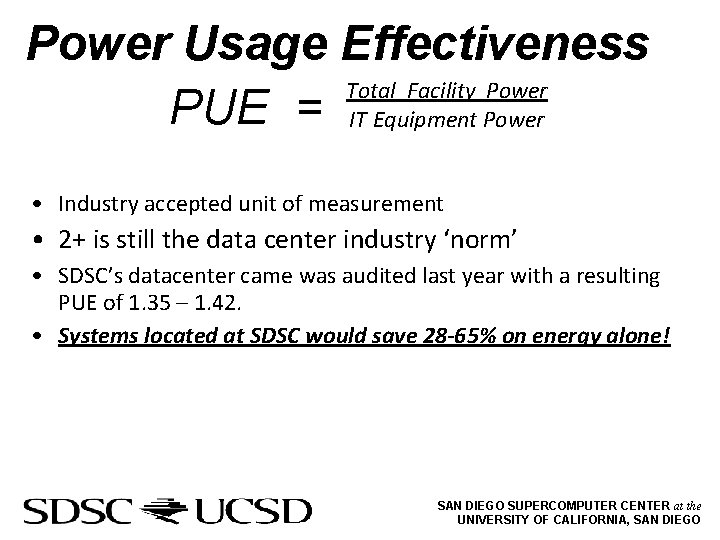

Power Usage Effectiveness

$$\mathrm{PUE} = \frac{\text{Total Facility Power}}{\text{IT Equipment Power}}$$

• Industry-accepted unit of measurement
• 2+ is still the data center industry ‘norm’
• SDSC’s datacenter was audited last year, with a resulting PUE of 1.35–1.42
• Systems located at SDSC would save 28-65% on energy alone!
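As a rough illustration of the PUE definition, the sketch below computes PUE from two meter readings and estimates the facility energy saved when the same IT load runs at a lower PUE. The function names and the 1400 kW / 1000 kW readings are hypothetical. The 2.0 baseline is the industry ‘norm’ from the slide; the slide does not say which baselines produce its 28-65% range, so that choice is an assumption.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def facility_energy_savings(baseline_pue: float, target_pue: float) -> float:
    """Fraction of facility energy saved when the same IT load moves
    from a site running at baseline_pue to one running at target_pue."""
    if baseline_pue <= 0:
        raise ValueError("baseline PUE must be positive")
    return 1.0 - target_pue / baseline_pue


# Hypothetical meter readings, for illustration only.
print(f"PUE: {pue(1400.0, 1000.0):.2f}")

# Assumed baseline of 2.0 (the quoted 'norm'); 1.35 and 1.42 are the audit bounds.
for target in (1.35, 1.42):
    saved = facility_energy_savings(2.0, target)
    print(f"baseline 2.0 -> {target}: {saved:.1%} saved")
```

Against a baseline of exactly 2.0 this gives savings of roughly 29% to 32.5%; larger savings require a baseline PUE above 2.0.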

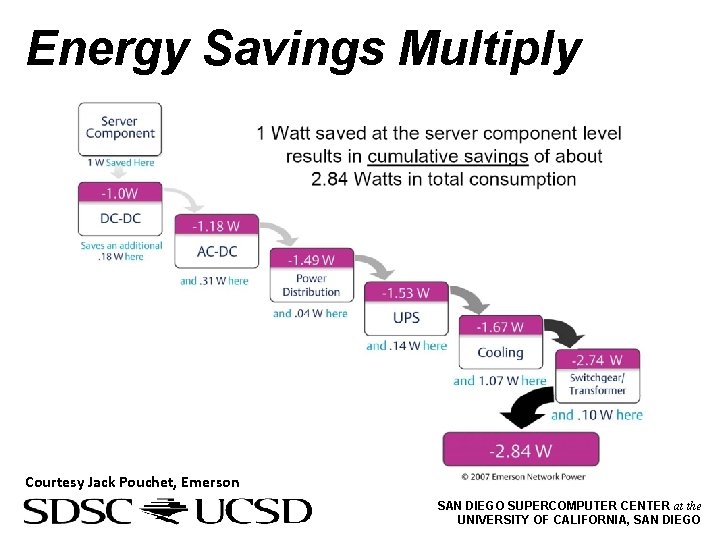

Energy Savings Multiply
Courtesy Jack Pouchet, Emerson

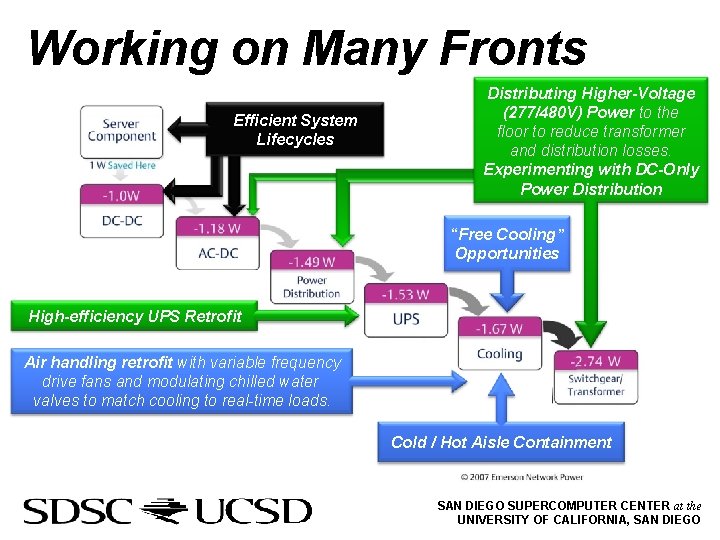

Working on Many Fronts
• Efficient system lifecycles
• Distributing higher-voltage (277/480 V) power to the floor to reduce transformer and distribution losses
• Experimenting with DC-only power distribution
• “Free cooling” opportunities
• High-efficiency UPS retrofit
• Air handling retrofit with variable frequency drive fans and modulating chilled water valves to match cooling to real-time loads
• Cold / hot aisle containment

Data Center Improvements to Lower PUE
• Mechanical Systems
    • Aisle containment
    • CRAH VFD retrofits
    • Blanking panels, floor brushes
• Electrical Systems
    • High efficiency UPS
• System Lifecycles
    • Virtualization
    • Consolidation of ‘ghost’ servers

Cold/Hot Aisle Containment
• Expect 50-80% better cooling airflow efficiency than traditional hot/cold aisle
• Separates cold/hot air, eliminating the need for over-cooling, over-blowing
• Allows for high temperature differentials, maximizing efficiency of cooling equipment

Cold Aisle Containment
• Knuerr Coolflex
    • First deployment in the United States
• Requires a standardized datacenter
• Enclosed cold aisles (70-78 °F)
• Servers exhaust to room as a whole, which runs hot (90-100 °F)

Hot Aisle Containment
• Essentially a “heat chimney” on top of hot aisles with doors at each end
• Flexible, can be phased incrementally
• Cold room as a whole (70-78 °F)
• Exhaust to enclosed hot aisle (100+ °F), ducting directly to cooling systems
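One way to see why a higher temperature differential helps is the standard sensible-heat relation for air, under which the airflow needed to remove a given heat load falls in proportion to the supply/return temperature difference. The sketch below assumes standard air at sea level (the 1.08 factor); the 10 kW rack and the two differentials are hypothetical values, not SDSC measurements.

```python
BTU_PER_HR_PER_WATT = 3.412   # unit conversion: 1 W is about 3.412 BTU/hr
SENSIBLE_HEAT_FACTOR = 1.08   # BTU/hr per CFM per deg F, assumes standard air at sea level


def required_airflow_cfm(heat_load_watts: float, delta_t_f: float) -> float:
    """Airflow (CFM) needed to carry away heat_load_watts at a given
    supply-to-return temperature difference in degrees Fahrenheit."""
    if delta_t_f <= 0:
        raise ValueError("temperature differential must be positive")
    heat_btu_per_hr = heat_load_watts * BTU_PER_HR_PER_WATT
    return heat_btu_per_hr / (SENSIBLE_HEAT_FACTOR * delta_t_f)


# Hypothetical 10 kW rack at two assumed differentials.
for delta_t in (15.0, 30.0):
    cfm = required_airflow_cfm(10_000.0, delta_t)
    print(f"delta T {delta_t:.0f} F: {cfm:,.0f} CFM")
```

Doubling the differential halves the airflow required for the same load, which is the effect containment is meant to enable by keeping hot and cold air from mixing.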

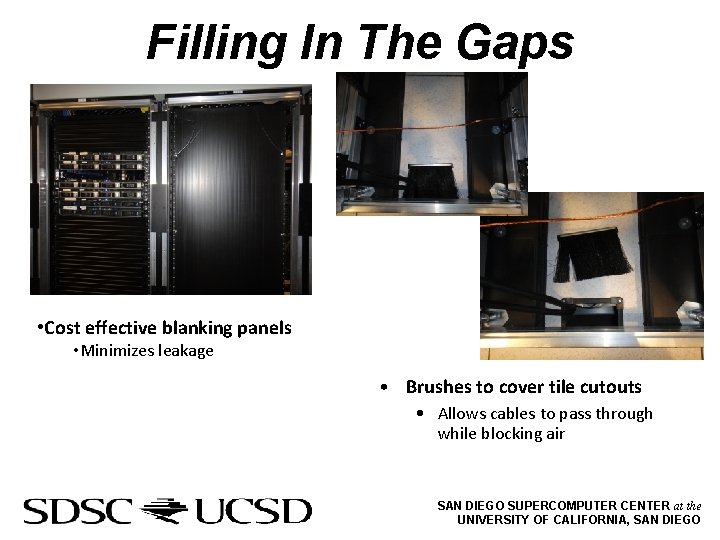

Filling In The Gaps
• Cost-effective blanking panels
    • Minimizes leakage
• Brushes to cover tile cutouts
    • Allows cables to pass through while blocking air

CRAH VFD Retrofits
• Added variable frequency drives to computer room air handlers
• Allows CRAH units to modulate based on control signal
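The benefit of letting fans modulate can be sketched with the fan affinity laws, under which ideal fan power scales with the cube of fan speed. This is an idealized model and not a measurement of SDSC's CRAH units; real savings depend on motor, drive, and system characteristics. The function name is hypothetical.

```python
def fan_power_fraction(speed_fraction: float) -> float:
    """Ideal fan power as a fraction of full-speed power, using the
    affinity-law approximation that power scales with speed cubed."""
    if not 0.0 <= speed_fraction <= 1.0:
        raise ValueError("speed fraction must be between 0 and 1")
    return speed_fraction ** 3


# Illustrative speeds only; a VFD would set these from its control signal.
for speed in (1.0, 0.8, 0.5):
    print(f"{speed:.0%} speed -> {fan_power_fraction(speed):.1%} of full power")
```

Under this approximation, even a modest speed reduction yields a disproportionate drop in fan power, which is why matching fan speed to real-time load is attractive.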

Electrical Systems
• High efficiency UPS units that can save ~500,000 kWh/year
• Higher voltage to IT equipment
    • Providing 277/480 V power on the floor to more efficiently power HPC systems without transformer and distribution losses
• Exploration of DC power for IT equipment
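To show how a UPS efficiency gain turns into annual energy savings, the sketch below compares conversion losses at two efficiencies for a constant load. The load and both efficiency values are hypothetical placeholders chosen for illustration; the slides give only the resulting savings estimate, not the inputs behind it.

```python
HOURS_PER_YEAR = 8760


def annual_ups_loss_kwh(it_load_kw: float, efficiency: float) -> float:
    """Energy lost in the UPS per year for a constant output load."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    input_kw = it_load_kw / efficiency      # power drawn from the utility side
    return (input_kw - it_load_kw) * HOURS_PER_YEAR


def annual_savings_kwh(it_load_kw: float, old_eff: float, new_eff: float) -> float:
    """Reduction in yearly UPS losses after moving from old_eff to new_eff."""
    return annual_ups_loss_kwh(it_load_kw, old_eff) - annual_ups_loss_kwh(it_load_kw, new_eff)


# Hypothetical inputs: 500 kW load, 92% efficient UPS replaced by a 96% one.
saved = annual_savings_kwh(500.0, 0.92, 0.96)
print(f"Estimated savings: {saved:,.0f} kWh/year")
```

Because this counts only UPS losses, it understates the total effect: heat that is no longer dissipated by the UPS also no longer needs to be removed by the cooling plant.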

Hardware Lifecycle Efficiency
• Hardware retirement
    • Refreshing equipment more often leverages technology improvements
• Virtualization
    • Maximize equipment utilization
• Consolidation of ‘ghost’ servers
    • Underutilized IT equipment
    • ‘Forgotten’ equipment

QUESTIONS?
