
Greedy Algorithms • Many optimization problems can be solved more quickly using a greedy approach – The basic principle is that locally optimal decisions may be used to build an optimal solution – But the greedy approach does not lead to an optimal overall solution for every problem – The key is knowing which problems will work with this approach and which will not • We will study – The activity selection problem – Elements of a greedy strategy – The problem of generating Huffman codes

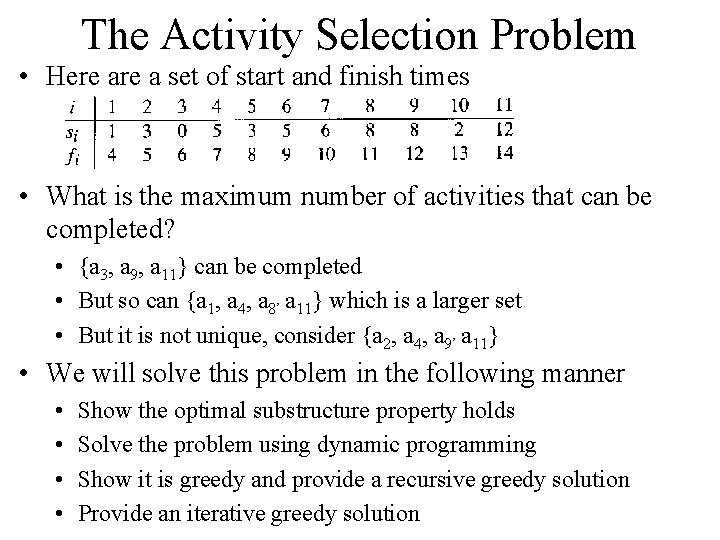

The Activity Selection Problem • Here is a set of activities with start and finish times • What is the maximum number of activities that can be completed? • {a_3, a_9, a_11} can be completed • But so can {a_1, a_4, a_8, a_11}, which is a larger set • The largest set is not unique; consider {a_2, a_4, a_9, a_11} • We will solve this problem in the following manner – Show the optimal substructure property holds – Solve the problem using dynamic programming – Show it is greedy and provide a recursive greedy solution – Provide an iterative greedy solution

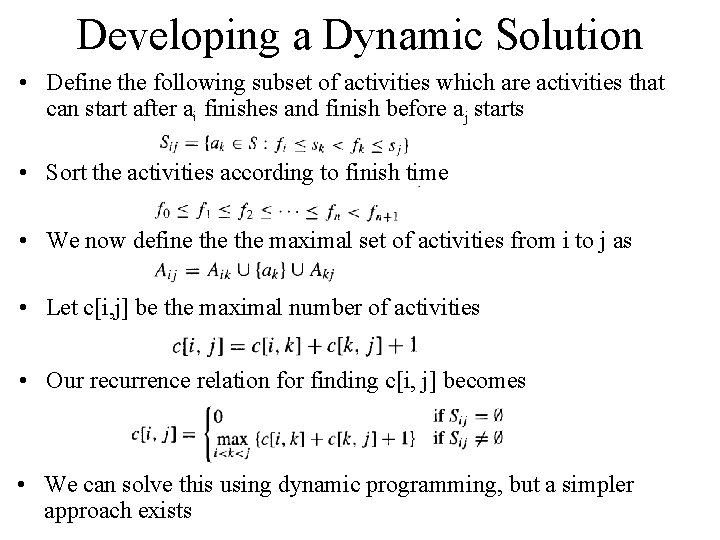

Developing a Dynamic Programming Solution • Define the following subset of activities, which are the activities that can start after a_i finishes and finish before a_j starts • Sort the activities according to finish time • We now define the maximal set of activities from i to j as • Let c[i, j] be the number of activities in that maximal set • Our recurrence relation for finding c[i, j] becomes • We can solve this using dynamic programming, but a simpler approach exists

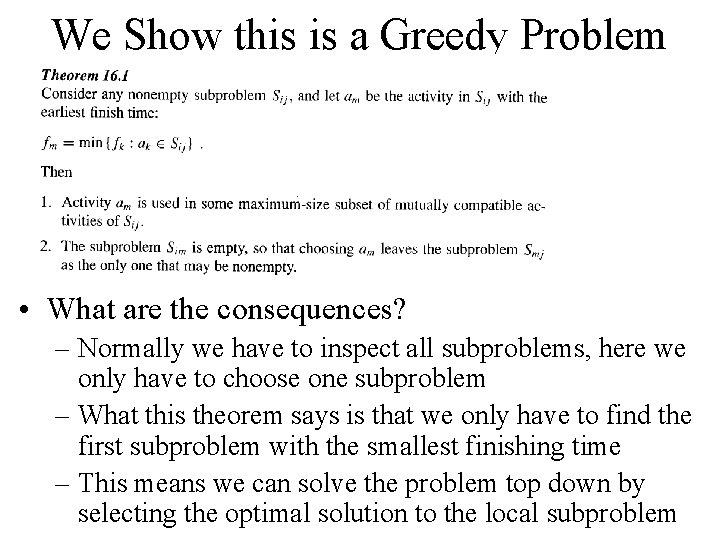
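
The formulas for this slide appear to live in the figure rather than in the text. A reconstruction consistent with the bullets above, following the usual textbook formulation, might read as follows. The symbols $S_{ij}$, $A_{ij}$, $s_k$ (start time) and $f_k$ (finish time) are assumed notation, and $a_k$ is the activity chosen to split the subproblem.

```latex
\begin{align*}
S_{ij} &= \{\, a_k \in S : f_i \le s_k < f_k \le s_j \,\} \\
A_{ij} &= A_{ik} \cup \{a_k\} \cup A_{kj} \\
c[i,j] &=
\begin{cases}
0 & \text{if } S_{ij} = \emptyset \\
\max\limits_{a_k \in S_{ij}} \bigl\{\, c[i,k] + c[k,j] + 1 \,\bigr\} & \text{if } S_{ij} \neq \emptyset
\end{cases}
\end{align*}
```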

We Show this is a Greedy Problem • What are the consequences? – Normally we have to inspect all subproblems; here we only have to choose one subproblem – What this theorem says is that we only have to pick the activity with the earliest finish time in the current subproblem – This means we can solve the problem top down by making the locally optimal choice and then solving the one subproblem that remains

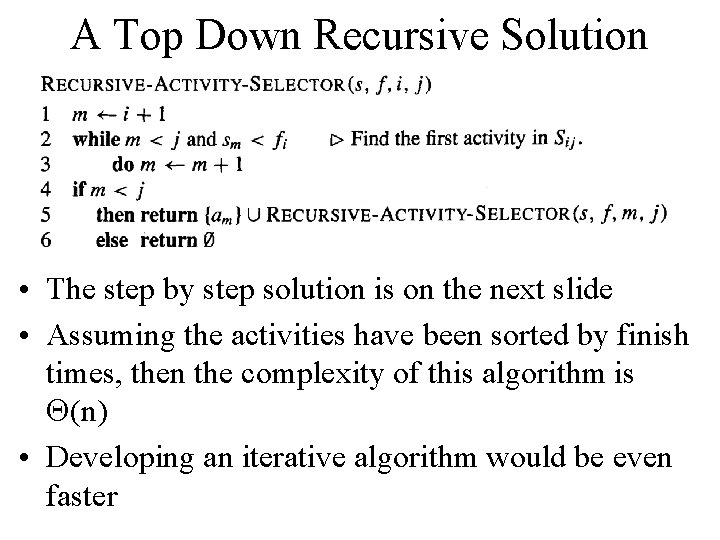

A Top Down Recursive Solution • The step by step solution is on the next slide • Assuming the activities have been sorted by finish times, then the complexity of this algorithm is Θ(n) • Developing an iterative algorithm would be even faster

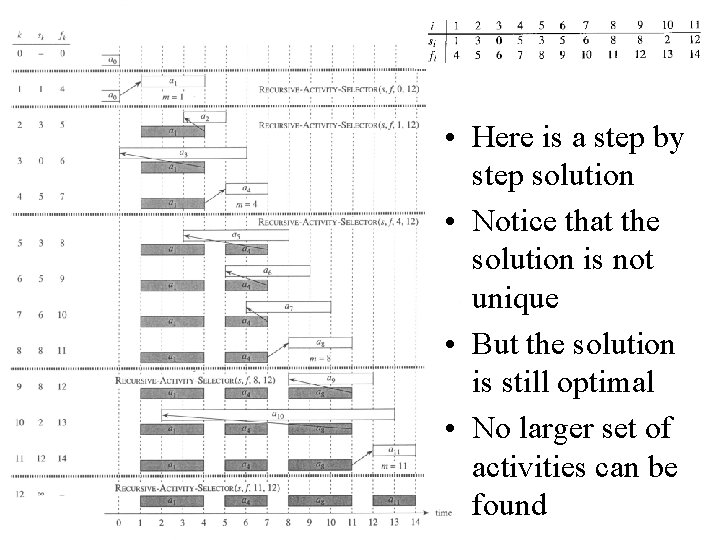
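
As a rough illustration of the top-down idea, here is a minimal Python sketch of a recursive selector. The function name, the sentinel entry at index 0, and the sample start/finish times are assumptions made for this example (the slide's own pseudocode and data are in the figures and may differ). The lists are assumed to be already sorted by finish time.

```python
def recursive_activity_selector(s, f, k, n):
    """Return indices of a maximum-size set of compatible activities
    among those that start after activity k finishes.

    s and f are 1-indexed lists sorted by finish time; index 0 is a
    sentinel activity with f[0] = 0 (an assumption of this sketch).
    """
    m = k + 1
    # Skip activities that start before activity k has finished.
    while m <= n and s[m] < f[k]:
        m += 1
    if m <= n:
        # Greedy choice: a_m is the first compatible activity to finish.
        return [m] + recursive_activity_selector(s, f, m, n)
    return []


# Assumed sample data (index 0 is the sentinel).
s = [0, 1, 3, 0, 5, 3, 5, 6, 8, 8, 2, 12]
f = [0, 4, 5, 6, 7, 9, 9, 10, 11, 12, 14, 16]
selected = recursive_activity_selector(s, f, 0, len(s) - 1)
print(selected)
```

Each activity is examined once across all recursive calls, which is where the linear bound comes from once sorting is done.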

• Here is a step by step solution • Notice that the solution is not unique • But the solution is still optimal • No larger set of activities can be found

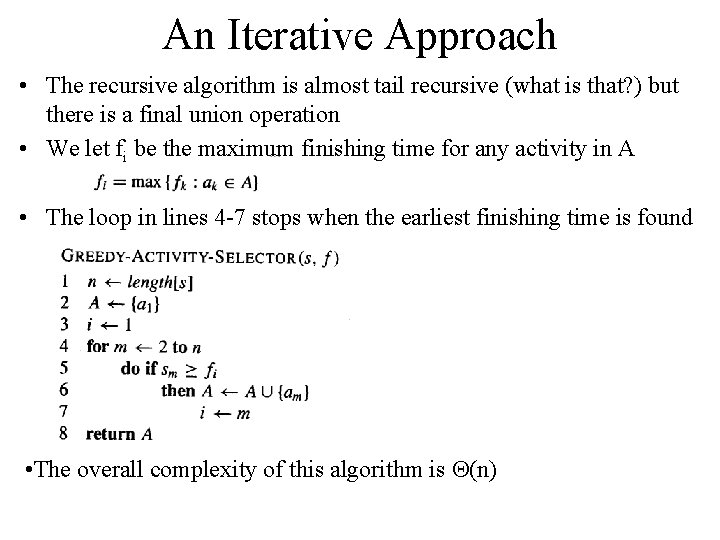

An Iterative Approach • The recursive algorithm is almost tail recursive (what is that?) but there is a final union operation • We let f_i be the maximum finishing time for any activity in A • The loop in lines 4-7 stops when the earliest finishing time is found • The overall complexity of this algorithm is Θ(n)

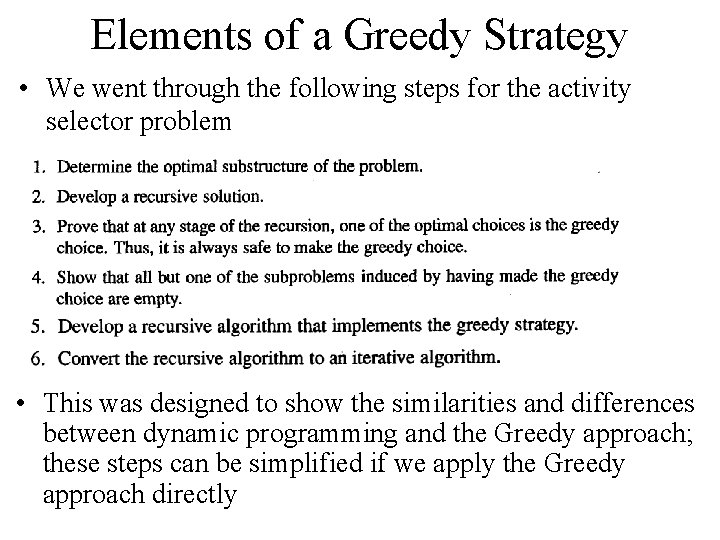
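
The iterative version can be sketched in a few lines of Python. This is an illustrative sketch, not the slide's pseudocode: the function name and the (start, finish) tuple representation are assumptions, and the sample data is assumed as well. The sort is included so the example is self-contained; it costs O(n log n), while the scan itself is linear.

```python
def select_activities(activities):
    """Greedy activity selection over a list of (start, finish) pairs."""
    # Sort by finish time so the earliest-finishing activity comes first.
    ordered = sorted(activities, key=lambda a: a[1])
    chosen = []
    last_finish = float("-inf")
    for start, finish in ordered:
        # Keep an activity only if it starts after the last chosen one ends.
        if start >= last_finish:
            chosen.append((start, finish))
            last_finish = finish
    return chosen


# Assumed sample data: (start, finish) pairs.
sample = [(1, 4), (3, 5), (0, 6), (5, 7), (3, 9), (5, 9),
          (6, 10), (8, 11), (8, 12), (2, 14), (12, 16)]
chosen = select_activities(sample)
print(chosen)
```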

Elements of a Greedy Strategy • We went through the following steps for the activity selection problem • This was designed to show the similarities and differences between dynamic programming and the greedy approach; these steps can be simplified if we apply the greedy approach directly

Applying Greedy Directly • Steps in designing a greedy algorithm • You must show the greedy choice property holds: a globally optimal solution can be reached by making locally optimal choices • You must also show the optimal substructure property holds (the same as in dynamic programming)

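Greedy vs. Dynamic Programming • A problem with optimal substructure that a greedy algorithm solves can generally also be solved by dynamic programming, but not vice versa • Two related example problems: the 0/1 knapsack problem and the fractional knapsack problem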

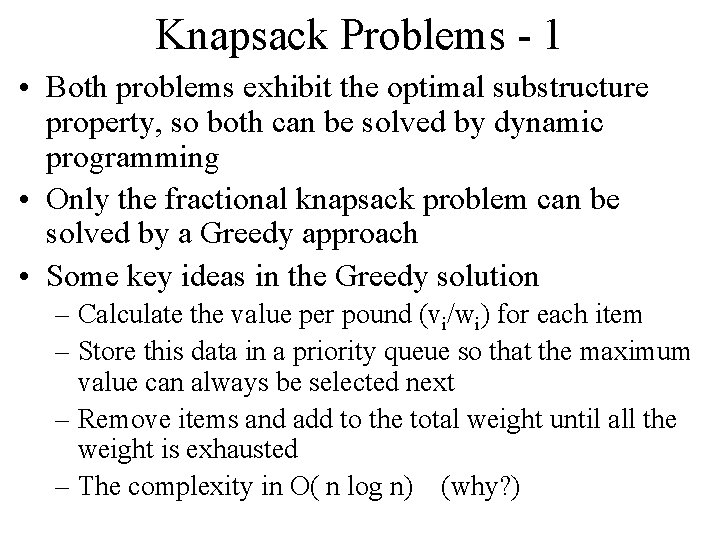

Knapsack Problems - 1 • Both problems exhibit the optimal substructure property, so both can be solved by dynamic programming • Only the fractional knapsack problem can be solved by a greedy approach • Some key ideas in the greedy solution – Calculate the value per pound (v_i/w_i) for each item – Store this data in a priority queue so that the item with the maximum value per pound can always be selected next – Remove items and add them to the knapsack until its weight capacity is exhausted, taking only a fraction of the last item if necessary – The complexity is O(n log n) (why?)

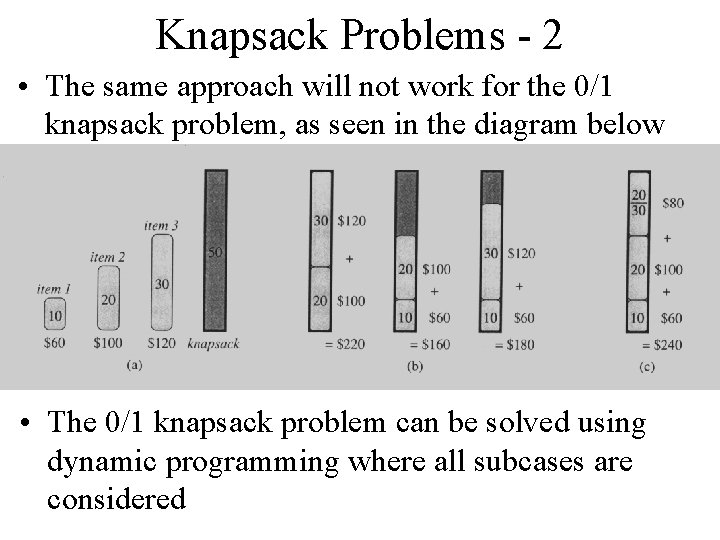
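
The greedy steps listed on this slide can be sketched with Python's `heapq` module standing in for the priority queue. The function name, the (value, weight) tuple layout, and the sample items are assumptions for illustration only. Because `heapq` is a min-heap, the sketch stores negated ratios.

```python
import heapq


def fractional_knapsack(items, capacity):
    """items: list of (value, weight) pairs with weight > 0.
    Returns the maximum total value that fits in `capacity`."""
    # Negate value-per-pound so the best ratio is popped first.
    heap = [(-value / weight, value, weight) for value, weight in items]
    heapq.heapify(heap)

    total = 0.0
    remaining = capacity
    while heap and remaining > 0:
        _, value, weight = heapq.heappop(heap)
        take = min(weight, remaining)      # whole item, or the fraction that fits
        total += value * take / weight
        remaining -= take
    return total


# Assumed sample items: (value, weight).
best = fractional_knapsack([(60, 10), (100, 20), (120, 30)], 50)
print(best)
```

In this sketch, building the heap is linear and each of the at most n removals costs O(log n), which is one way to arrive at the O(n log n) bound.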

Knapsack Problems - 2 • The same approach will not work for the 0/1 knapsack problem, as seen in the diagram below • The 0/1 knapsack problem can be solved using dynamic programming where all subcases are considered

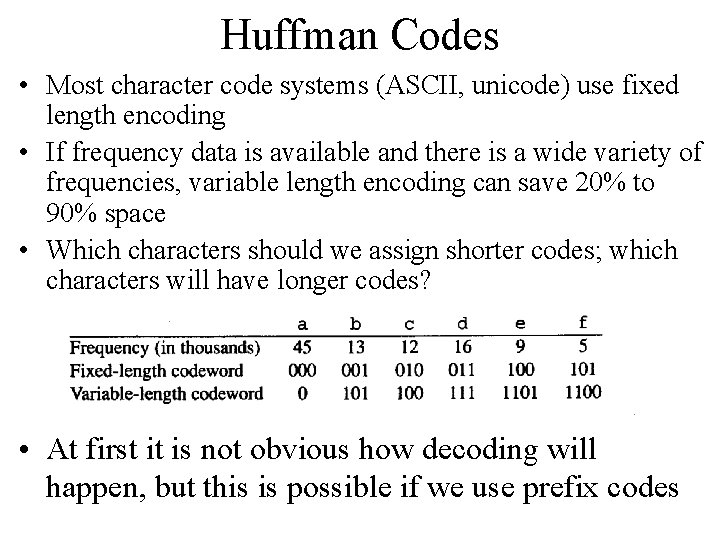
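
To make the contrast concrete, the following Python sketch compares a value-per-pound greedy pass against a standard dynamic programming table for the 0/1 case. Both function names and the sample items are assumptions for this example, and weights are assumed to be non-negative integers.

```python
def knapsack_01(items, capacity):
    """Dynamic programming for 0/1 knapsack.
    items: list of (value, weight) pairs with integer weights."""
    best = [0] * (capacity + 1)   # best[c] = max value using capacity c
    for value, weight in items:
        # Go downward so each item is counted at most once.
        for c in range(capacity, weight - 1, -1):
            best[c] = max(best[c], best[c - weight] + value)
    return best[capacity]


def greedy_by_ratio_01(items, capacity):
    """Greedy by value per pound, taking whole items only."""
    total = 0
    by_ratio = sorted(items, key=lambda it: it[0] / it[1], reverse=True)
    for value, weight in by_ratio:
        if weight <= capacity:
            total += value
            capacity -= weight
    return total


# Assumed sample items: (value, weight).
items = [(60, 10), (100, 20), (120, 30)]
dp_value = knapsack_01(items, 50)
greedy_value = greedy_by_ratio_01(items, 50)
print(dp_value, greedy_value)
```

On this sample the greedy pass fills the knapsack with the two best-ratio items and then cannot fit the third, while the table finds the better pairing.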

Huffman Codes • Most character code systems (ASCII, Unicode) use fixed-length encoding • If frequency data is available and there is a wide variety of frequencies, variable-length encoding can save 20% to 90% of the space • To which characters should we assign shorter codes, and which characters will get longer codes? • At first it is not obvious how decoding will happen, but this is possible if we use prefix codes

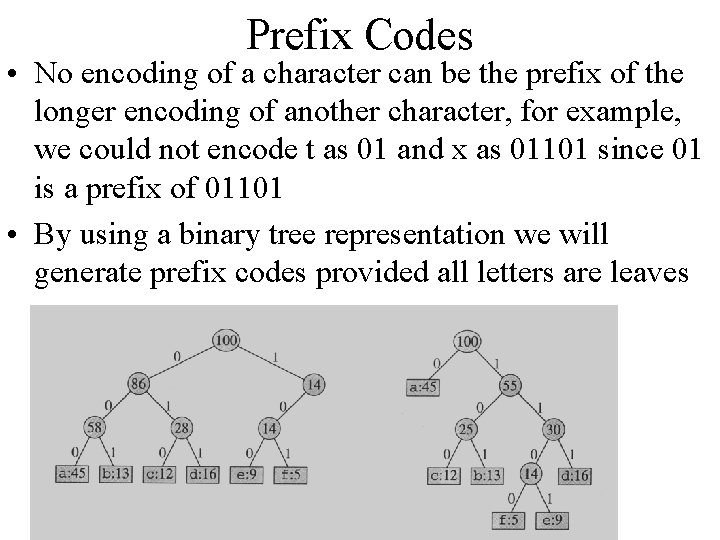

Prefix Codes • No encoding of a character can be the prefix of the longer encoding of another character. For example, we could not encode t as 01 and x as 01101, since 01 is a prefix of 01101 • By using a binary tree representation we will generate prefix codes, provided all letters are leaves

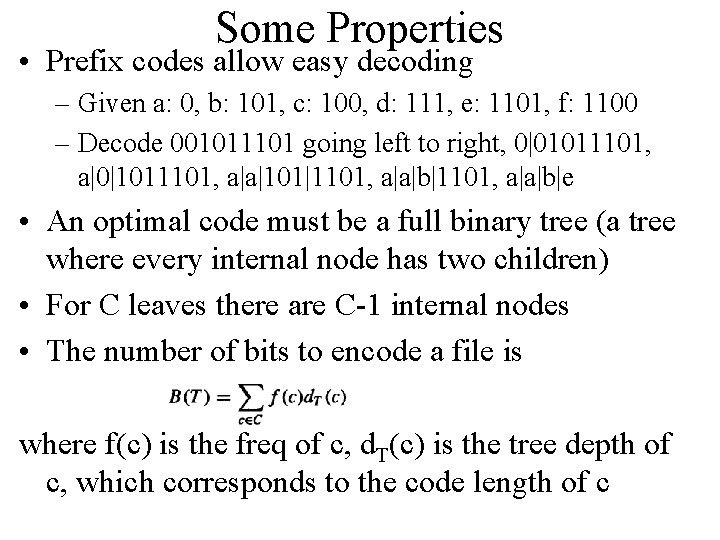
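
The prefix condition is easy to check mechanically. A small Python sketch, with an assumed function name and a dictionary from symbol to bit string as the assumed representation:

```python
def is_prefix_free(codes):
    """codes: dict mapping symbol -> bit string.
    Returns True if no codeword is a prefix of another."""
    words = sorted(codes.values())
    # In sorted order, a codeword that is a prefix of any other codeword
    # is immediately followed by a word that starts with it.
    return all(not later.startswith(earlier)
               for earlier, later in zip(words, words[1:]))


bad = is_prefix_free({"t": "01", "x": "01101"})
good = is_prefix_free({"a": "0", "b": "10", "c": "11"})
print(bad, good)
```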

Some Properties • Prefix codes allow easy decoding – Given a: 0, b: 101, c: 100, d: 111, e: 1101, f: 1100 – Decode 001011101 going left to right: 0|01011101, a|0|1011101, a|a|101|1101, a|a|b|e • An optimal code is always represented by a full binary tree (a tree where every internal node has two children) • For |C| leaves there are |C| - 1 internal nodes • The number of bits to encode a file is $B(T) = \sum_{c \in C} f(c)\, d_T(c)$, where f(c) is the frequency of c and d_T(c) is the depth of c in the tree T, which corresponds to the code length of c

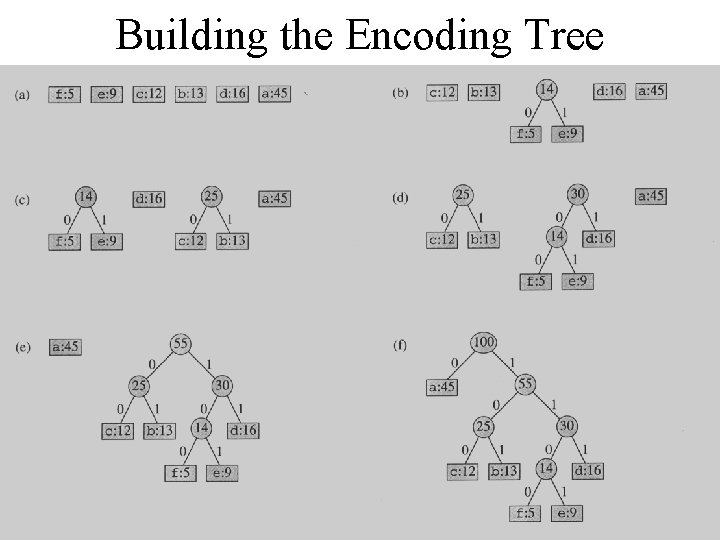
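
The left-to-right decoding walk and the bit-count formula can both be sketched briefly in Python. The code table is the one given on the slide; the function names and the frequency values are assumptions for illustration.

```python
def decode(bits, codes):
    """Decode a bit string using a prefix code (dict symbol -> bit string)."""
    reverse = {code: sym for sym, code in codes.items()}
    out = []
    current = ""
    for bit in bits:
        current += bit
        # With a prefix code, the first match is the only possible match.
        if current in reverse:
            out.append(reverse[current])
            current = ""
    if current:
        raise ValueError("trailing bits do not form a complete codeword")
    return "".join(out)


def encoded_length(freq, codes):
    """Sum of frequency times code length over all characters."""
    return sum(freq[c] * len(codes[c]) for c in freq)


codes = {"a": "0", "b": "101", "c": "100", "d": "111", "e": "1101", "f": "1100"}
message = decode("001011101", codes)

# Assumed sample frequencies, not taken from the slide text.
freq = {"a": 45, "b": 13, "c": 12, "d": 16, "e": 9, "f": 5}
total_bits = encoded_length(freq, codes)
print(message, total_bits)
```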

Building the Encoding Tree

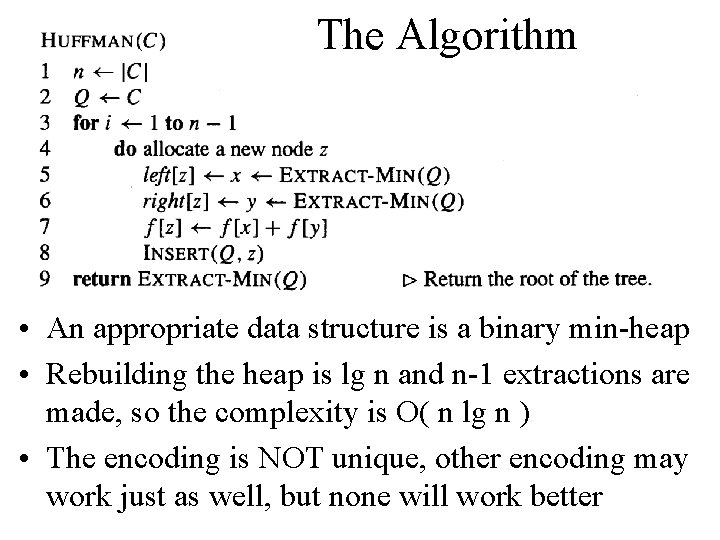

The Algorithm • An appropriate data structure is a binary min-heap • Each heap operation takes O(lg n) time and n - 1 merge steps are performed (each extracting the two smallest nodes and inserting their combined node), so the complexity is O(n lg n) • The encoding is NOT unique; other encodings may work just as well, but none will work better

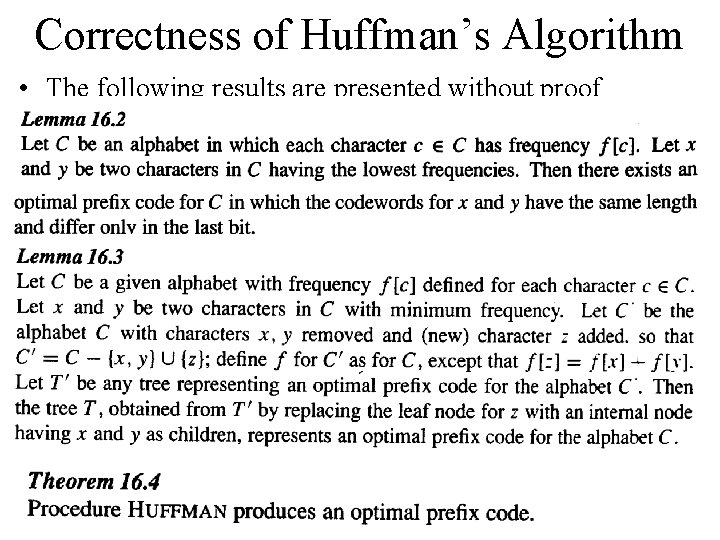
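
A minimal Python sketch of the heap-based construction follows, using `heapq` as the binary min-heap. It is an illustration under stated assumptions rather than the slide's pseudocode: the function name, the tuple-based tree representation, the tie-breaking counter, and the sample frequencies are all assumed, and symbols are assumed to be strings so they cannot be confused with internal nodes.

```python
import heapq
from itertools import count


def huffman_codes(freq):
    """freq: dict mapping symbol (a string) -> frequency.
    Returns a dict mapping symbol -> bit string."""
    if not freq:
        return {}
    tiebreak = count()  # keeps heap entries comparable when frequencies tie
    heap = [(f, next(tiebreak), sym) for sym, f in freq.items()]
    heapq.heapify(heap)
    if len(heap) == 1:
        return {heap[0][2]: "0"}

    # Repeatedly merge the two least frequent nodes (n - 1 merges in total).
    while len(heap) > 1:
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (f1 + f2, next(tiebreak), (left, right)))

    codes = {}

    def walk(node, prefix):
        if isinstance(node, tuple):      # internal node: (left, right)
            walk(node[0], prefix + "0")
            walk(node[1], prefix + "1")
        else:                            # leaf: a symbol
            codes[node] = prefix

    walk(heap[0][2], "")
    return codes


# Assumed sample frequencies.
freq = {"a": 45, "b": 13, "c": 12, "d": 16, "e": 9, "f": 5}
codes = huffman_codes(freq)
cost = sum(freq[c] * len(codes[c]) for c in freq)
print(codes, cost)
```

The exact bit strings depend on how ties and left/right placement are handled, which is why only the total cost, not the individual codes, should be expected to match another optimal encoding.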

Correctness of Huffman’s Algorithm • The following results are presented without proof
