Graphical models Tom Griffiths UC Berkeley Challenges of

Graphical models Tom Griffiths UC Berkeley

Challenges of probabilistic models • Specifying well-defined probabilistic models with many variables is hard (for modelers) • Representing probability distributions over those variables is hard (for computers/learners) • Computing quantities using those distributions is hard (for computers/learners)

Representing structured distributions Four random variables: X 1 X 2 X 3 X 4 coin toss produces heads pencil levitates friend has psychic powers friend has two-headed coin Domain {0, 1}

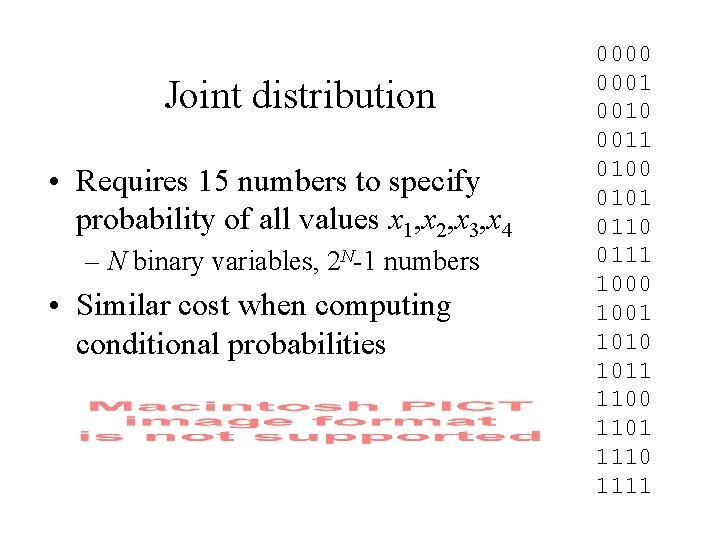

Joint distribution • Requires 15 numbers to specify probability of all values x 1, x 2, x 3, x 4 – N binary variables, 2 N-1 numbers • Similar cost when computing conditional probabilities 0000 0001 0010 0011 0100 0101 0110 0111 1000 1001 1010 1011 1100 1101 1110 1111

How can we use fewer numbers? Four random variables: X 1 X 2 X 3 X 4 coin toss produces heads Domain {0, 1}

Statistical independence • Two random variables X 1 and X 2 are independent if P(x 1|x 2) = P(x 1) – e. g. coinflips: P(x 1=H|x 2=H) = P(x 1=H) = 0. 5 • Independence makes it easier to represent and work with probability distributions • We can exploit the product rule: If x 1, x 2, x 3, and x 4 are all independent…

Expressing independence • Statistical independence is the key to efficient probabilistic representation and computation • This has led to the development of languages for indicating dependencies among variables • Some of the most popular languages are based on “graphical models”

Graphical models • Introduction to graphical models – definitions – efficient representation and inference – explaining away • Graphical models and cognitive science – uses of graphical models

Graphical models • Introduction to graphical models – definitions – efficient representation and inference – explaining away • Graphical models and cognitive science – uses of graphical models

Graphical models • Express the probabilistic dependency structure among a set of variables (Pearl, 1988) • Consist of – a set of nodes, corresponding to variables – a set of edges, indicating dependency – a set of functions defined on the graph that specify a probability distribution

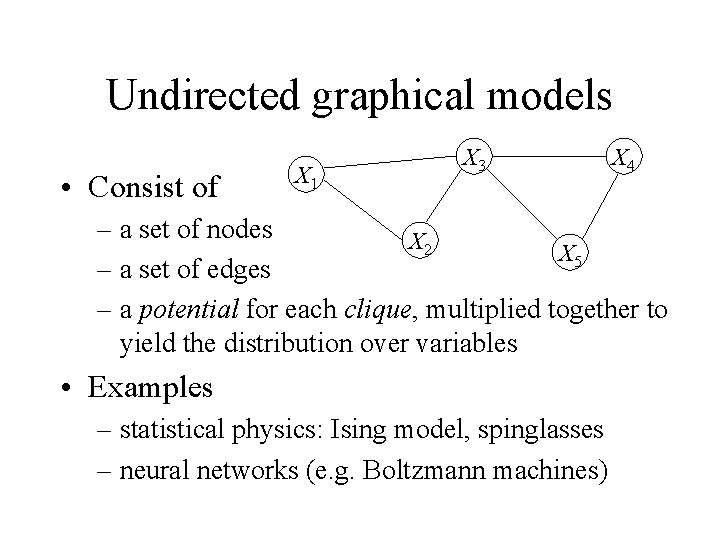

Undirected graphical models • Consist of X 1 X 3 X 4 – a set of nodes X 2 X 5 – a set of edges – a potential for each clique, multiplied together to yield the distribution over variables • Examples – statistical physics: Ising model, spinglasses – neural networks (e. g. Boltzmann machines)

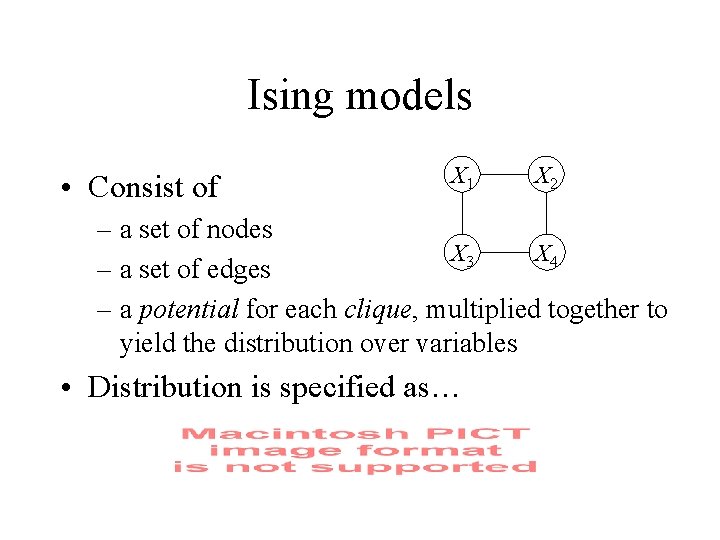

Ising models • Consist of X 1 X 2 – a set of nodes X 3 X 4 – a set of edges – a potential for each clique, multiplied together to yield the distribution over variables • Distribution is specified as…

Ising models

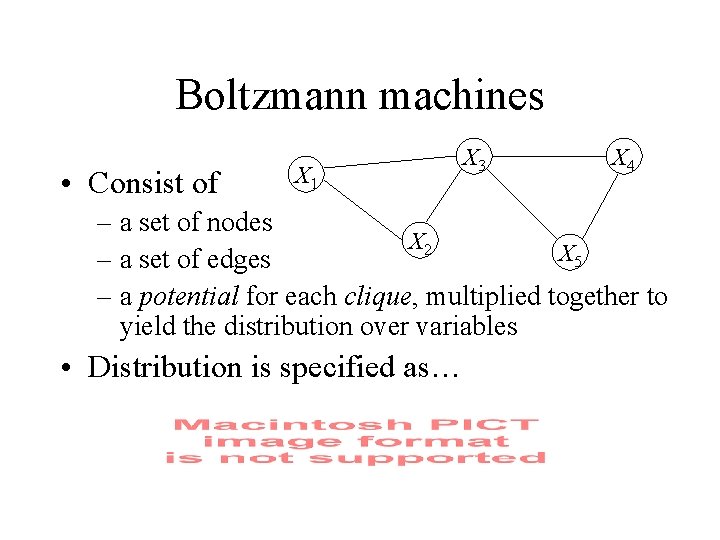

Boltzmann machines • Consist of X 1 X 3 X 4 – a set of nodes X 2 X 5 – a set of edges – a potential for each clique, multiplied together to yield the distribution over variables • Distribution is specified as…

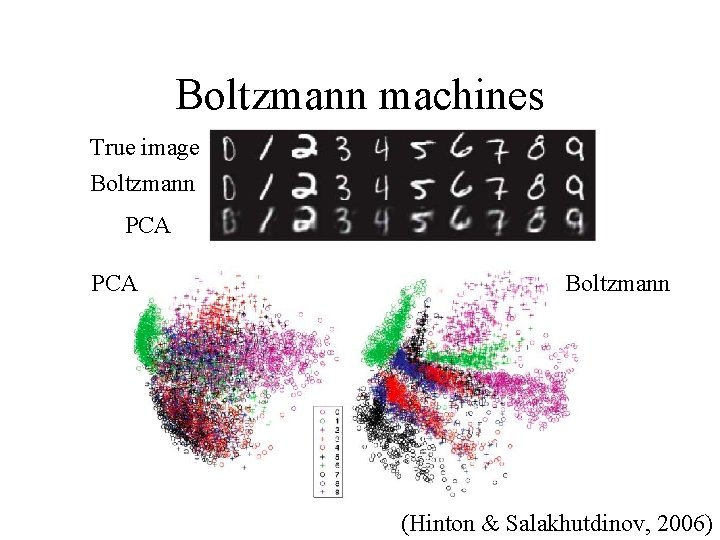

Boltzmann machines True image Boltzmann PCA Boltzmann (Hinton & Salakhutdinov, 2006)

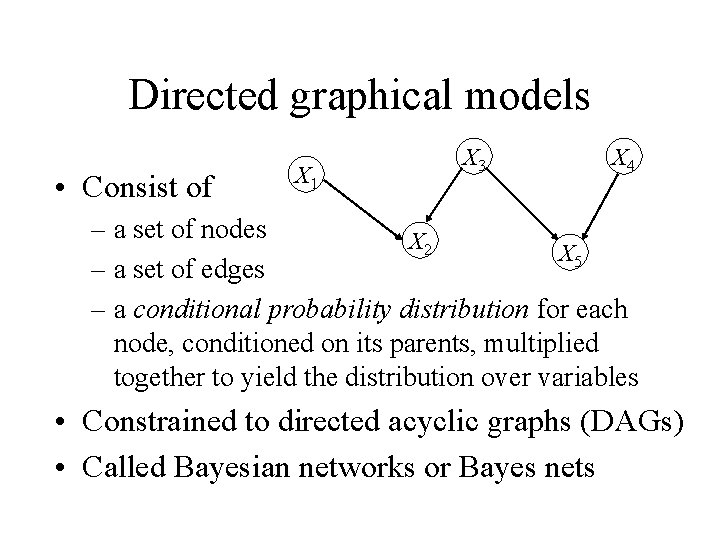

Directed graphical models • Consist of X 1 X 3 X 4 – a set of nodes X 2 X 5 – a set of edges – a conditional probability distribution for each node, conditioned on its parents, multiplied together to yield the distribution over variables • Constrained to directed acyclic graphs (DAGs) • Called Bayesian networks or Bayes nets

Bayesian networks and Bayes • Two different problems – Bayesian statistics is a method of inference – Bayesian networks are a form of representation • There is no necessary connection – many users of Bayesian networks rely upon frequentist statistical methods – many Bayesian inferences cannot be easily represented using Bayesian networks

Graphical models • Introduction to graphical models – definitions – efficient representation and inference – explaining away • Graphical models and cognitive science – uses of graphical models

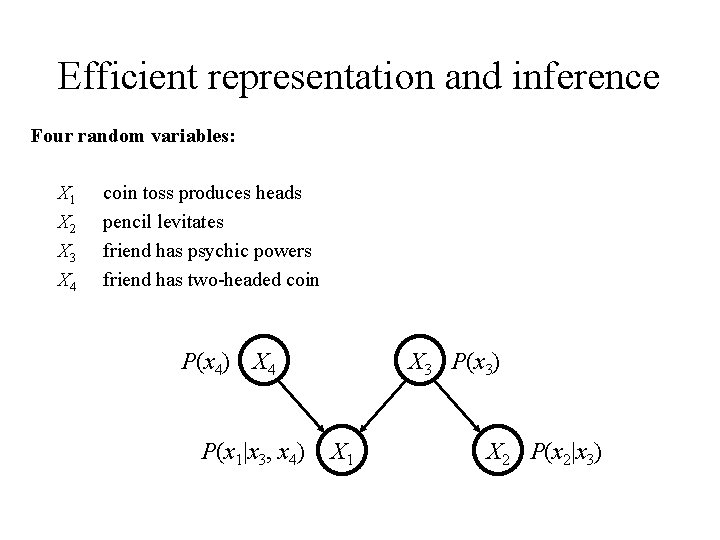

Efficient representation and inference Four random variables: X 1 X 2 X 3 X 4 coin toss produces heads pencil levitates friend has psychic powers friend has two-headed coin P(x 4) X 4 P(x 1|x 3, x 4) X 3 P(x 3) X 1 X 2 P(x 2|x 3)

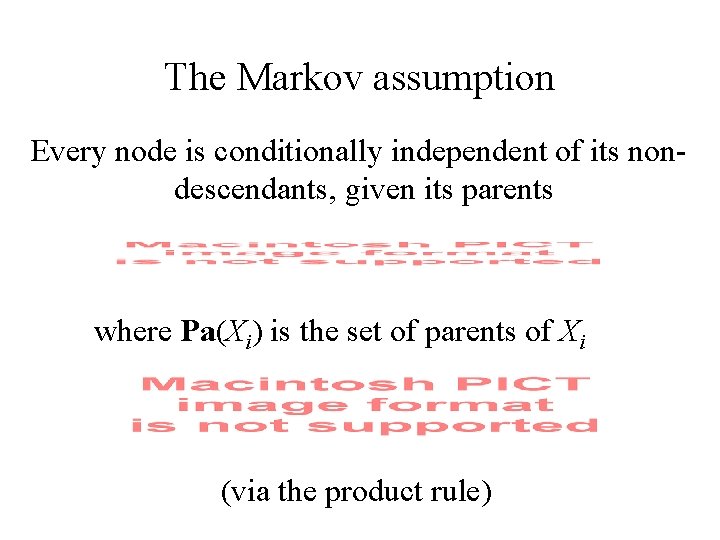

The Markov assumption Every node is conditionally independent of its nondescendants, given its parents where Pa(Xi) is the set of parents of Xi (via the product rule)

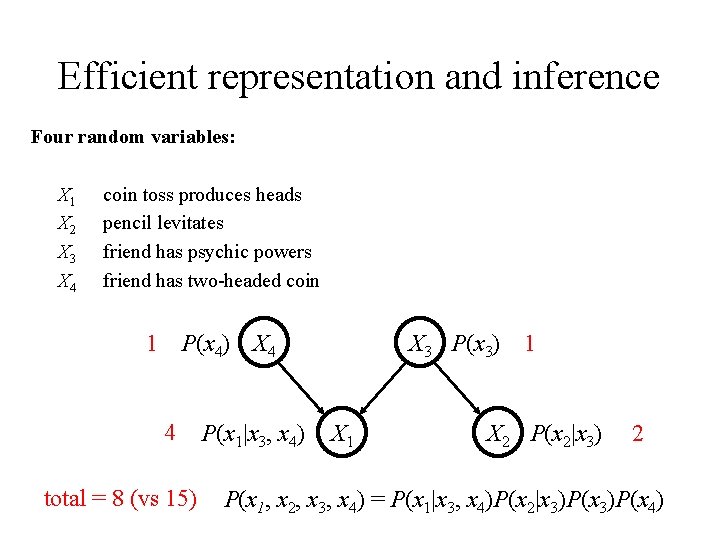

Efficient representation and inference Four random variables: X 1 X 2 X 3 X 4 coin toss produces heads pencil levitates friend has psychic powers friend has two-headed coin 1 P(x 4) X 4 4 total = 8 (vs 15) P(x 1|x 3, x 4) X 3 P(x 3) 1 X 2 P(x 2|x 3) 2 P(x 1, x 2, x 3, x 4) = P(x 1|x 3, x 4)P(x 2|x 3)P(x 4)

Reading a Bayesian network • The structure of a Bayes net can be read as the generative process behind a distribution • Gives the joint probability distribution over variables obtained by sampling each variable conditioned on its parents

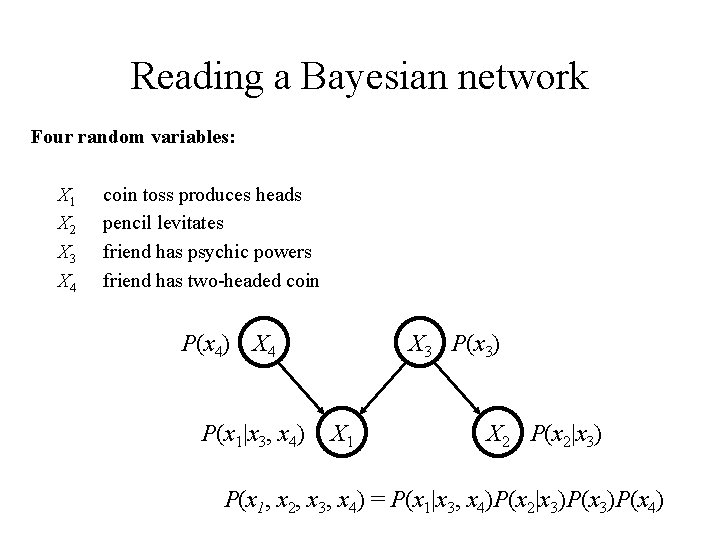

Reading a Bayesian network Four random variables: X 1 X 2 X 3 X 4 coin toss produces heads pencil levitates friend has psychic powers friend has two-headed coin P(x 4) X 4 P(x 1|x 3, x 4) X 3 P(x 3) X 1 X 2 P(x 2|x 3) P(x 1, x 2, x 3, x 4) = P(x 1|x 3, x 4)P(x 2|x 3)P(x 4)

Reading a Bayesian network • The structure of a Bayes net can be read as the generative process behind a distribution • Gives the joint probability distribution over variables obtained by sampling each variable conditioned on its parents • Simple rules for determining whether two variables are dependent or independent

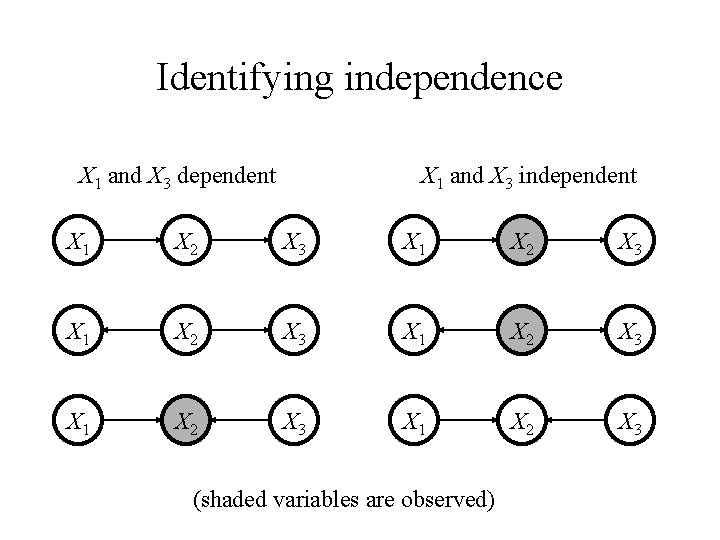

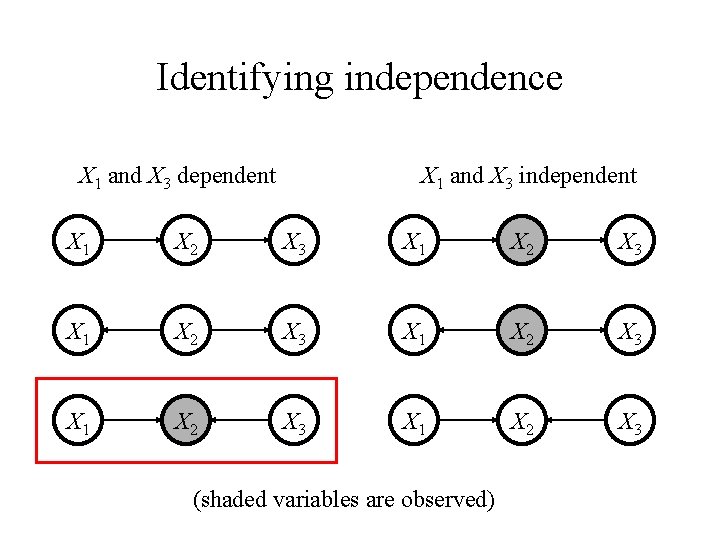

Identifying independence X 1 and X 3 dependent X 1 and X 3 independent X 1 X 2 X 3 X 1 X 2 X 3 (shaded variables are observed)

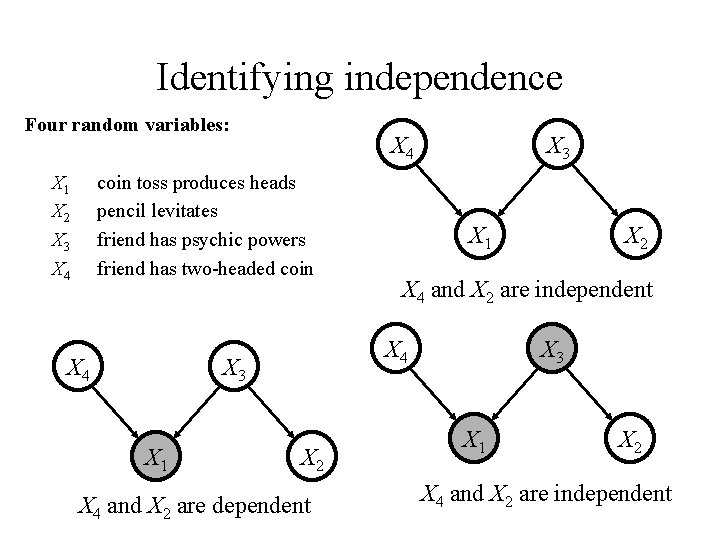

Identifying independence Four random variables: X 1 X 2 X 3 X 4 coin toss produces heads pencil levitates friend has psychic powers friend has two-headed coin X 4 X 1 X 2 X 4 and X 2 are dependent X 2 X 4 and X 2 are independent X 4 X 3 X 1 X 2 X 4 and X 2 are independent

Reading a Bayesian network • The structure of a Bayes net can be read as the generative process behind a distribution • Gives the joint probability distribution over variables obtained by sampling each variable conditioned on its parents • Simple rules for determining whether two variables are dependent or independent • Independence makes inference more efficient

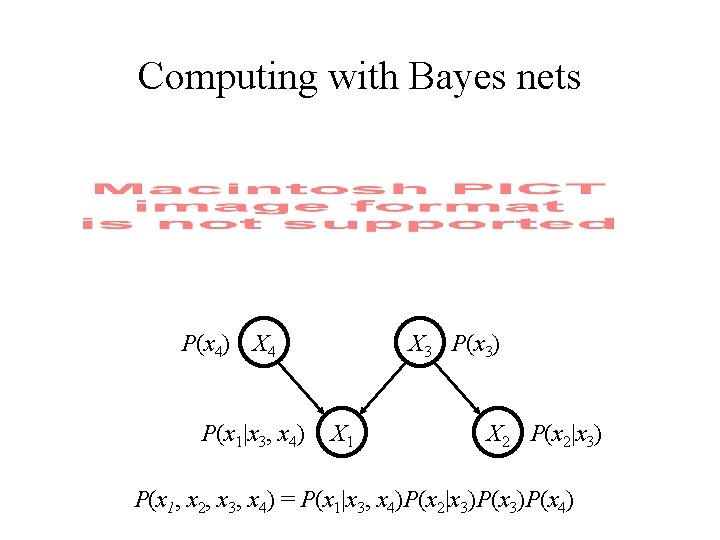

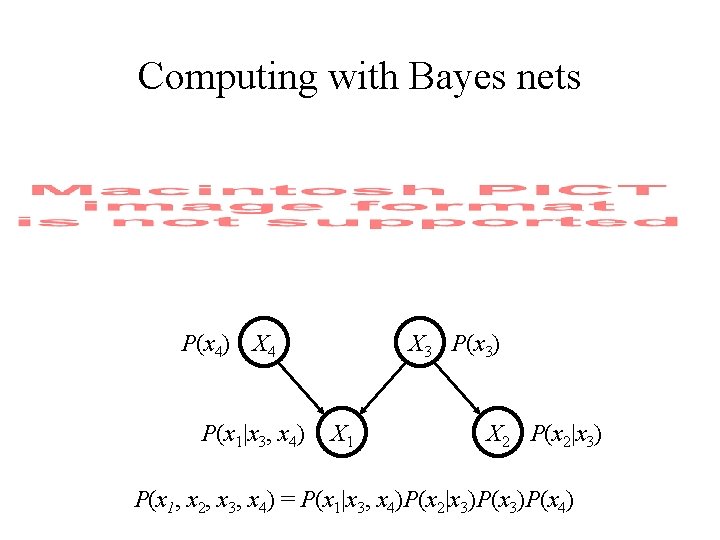

Computing with Bayes nets P(x 4) X 4 P(x 1|x 3, x 4) X 3 P(x 3) X 1 X 2 P(x 2|x 3) P(x 1, x 2, x 3, x 4) = P(x 1|x 3, x 4)P(x 2|x 3)P(x 4)

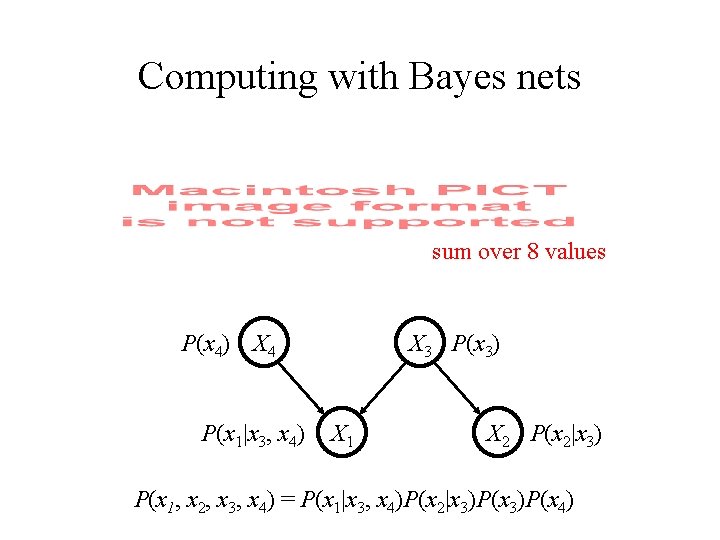

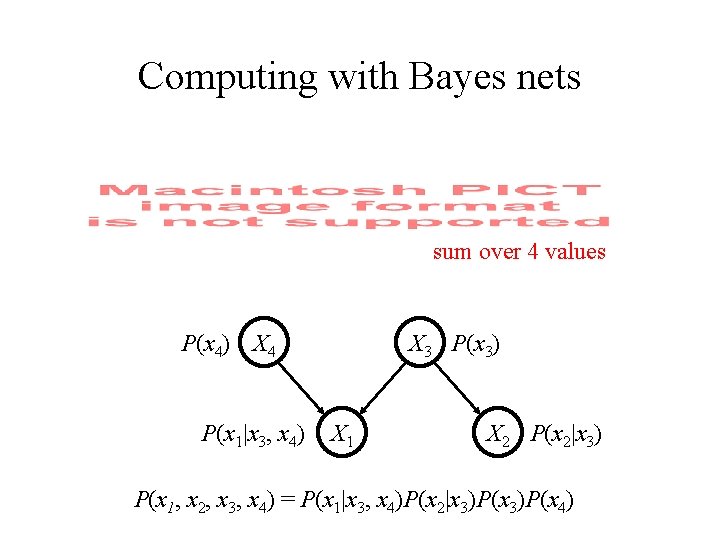

Computing with Bayes nets sum over 8 values P(x 4) X 4 P(x 1|x 3, x 4) X 3 P(x 3) X 1 X 2 P(x 2|x 3) P(x 1, x 2, x 3, x 4) = P(x 1|x 3, x 4)P(x 2|x 3)P(x 4)

Computing with Bayes nets P(x 4) X 4 P(x 1|x 3, x 4) X 3 P(x 3) X 1 X 2 P(x 2|x 3) P(x 1, x 2, x 3, x 4) = P(x 1|x 3, x 4)P(x 2|x 3)P(x 4)

Computing with Bayes nets sum over 4 values P(x 4) X 4 P(x 1|x 3, x 4) X 3 P(x 3) X 1 X 2 P(x 2|x 3) P(x 1, x 2, x 3, x 4) = P(x 1|x 3, x 4)P(x 2|x 3)P(x 4)

Computing with Bayes nets • Inference algorithms for Bayesian networks exploit dependency structure • Message-passing algorithms – “belief propagation” passes simple messages between nodes, exact for tree-structured networks • More general inference algorithms – exact: “junction-tree” – approximate: Monte Carlo schemes

Logic and probability • Bayesian networks are equivalent to a probabilistic propositional logic • Associate variables with atomic propositions… – Bayes net specifies a distribution over possible worlds, probability of a proposition is a sum over worlds • More efficient than simply enumerating worlds • Developing similarly efficient schemes for working with other probabilistic logics is a major topic of current research

Graphical models • Introduction to graphical models – definitions – efficient representation and inference – explaining away • Graphical models and cognitive science – uses of graphical models

Identifying independence X 1 and X 3 dependent X 1 and X 3 independent X 1 X 2 X 3 X 1 X 2 X 3 (shaded variables are observed)

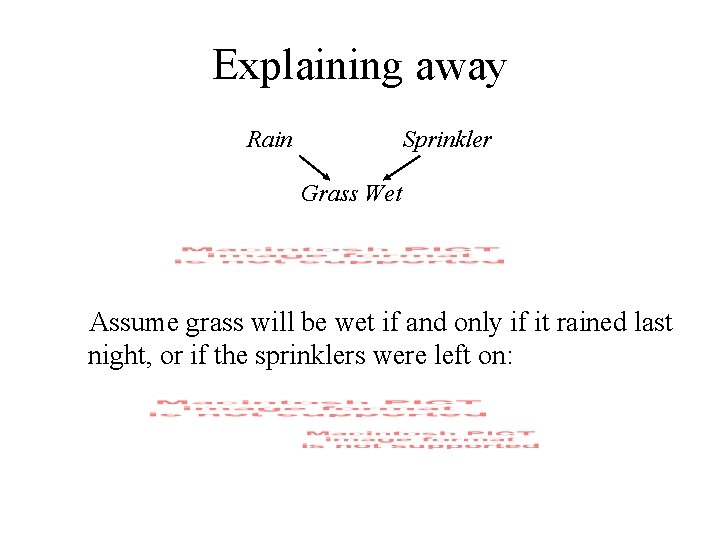

Explaining away Rain Sprinkler Grass Wet Assume grass will be wet if and only if it rained last night, or if the sprinklers were left on:

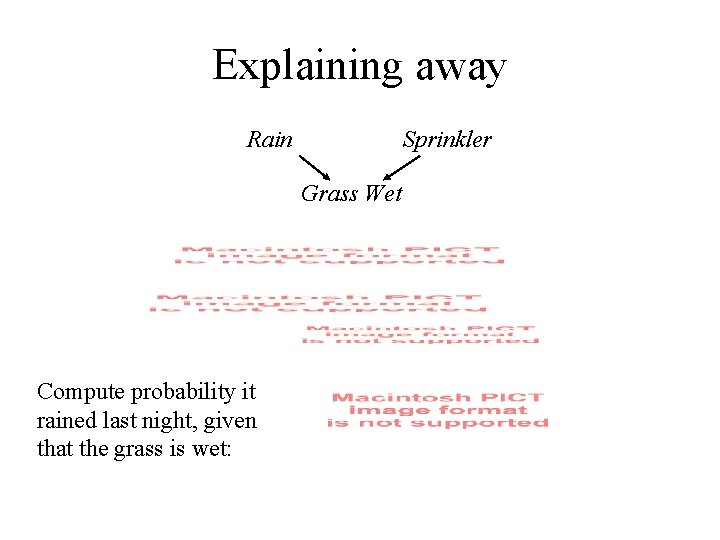

Explaining away Rain Sprinkler Grass Wet Compute probability it rained last night, given that the grass is wet:

Explaining away Rain Sprinkler Grass Wet Compute probability it rained last night, given that the grass is wet:

Explaining away Rain Sprinkler Grass Wet Compute probability it rained last night, given that the grass is wet:

Explaining away Rain Sprinkler Grass Wet Compute probability it rained last night, given that the grass is wet:

Explaining away Rain Sprinkler Grass Wet Compute probability it rained last night, given that the grass is wet: Between 1 and P(s)

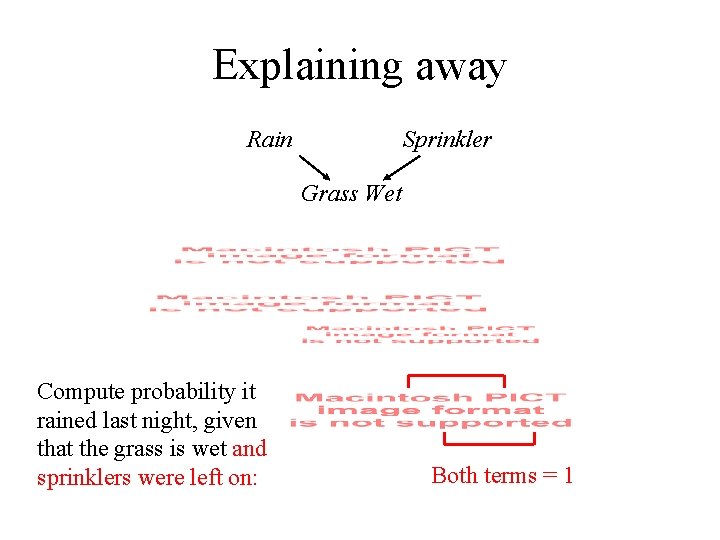

Explaining away Rain Sprinkler Grass Wet Compute probability it rained last night, given that the grass is wet and sprinklers were left on: Both terms = 1

Explaining away Rain Sprinkler Grass Wet Compute probability it rained last night, given that the grass is wet and sprinklers were left on:

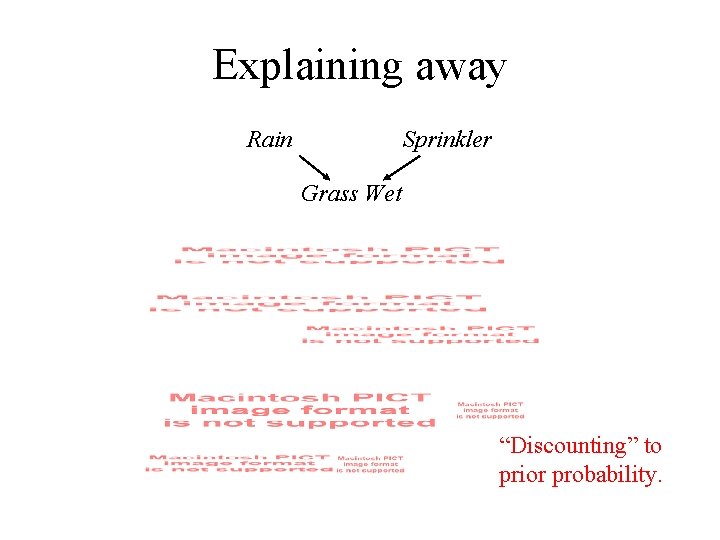

Explaining away Rain Sprinkler Grass Wet “Discounting” to prior probability.

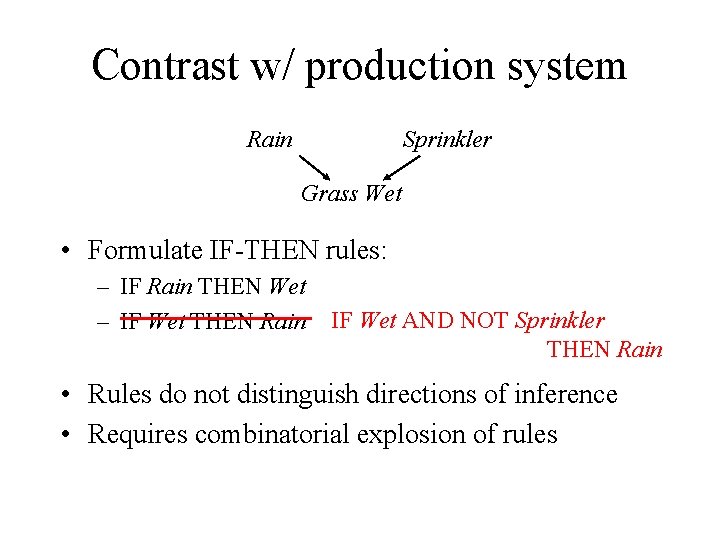

Contrast w/ production system Rain Sprinkler Grass Wet • Formulate IF-THEN rules: – IF Rain THEN Wet – IF Wet THEN Rain IF Wet AND NOT Sprinkler THEN Rain • Rules do not distinguish directions of inference • Requires combinatorial explosion of rules

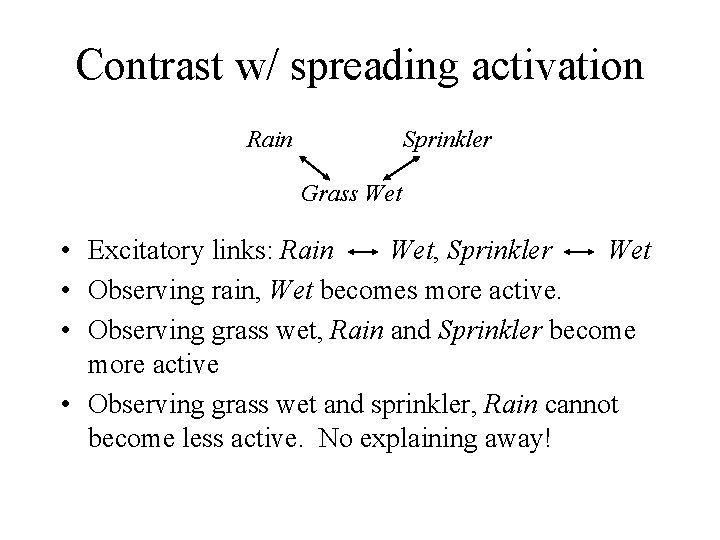

Contrast w/ spreading activation Rain Sprinkler Grass Wet • Excitatory links: Rain Wet, Sprinkler Wet • Observing rain, Wet becomes more active. • Observing grass wet, Rain and Sprinkler become more active • Observing grass wet and sprinkler, Rain cannot become less active. No explaining away!

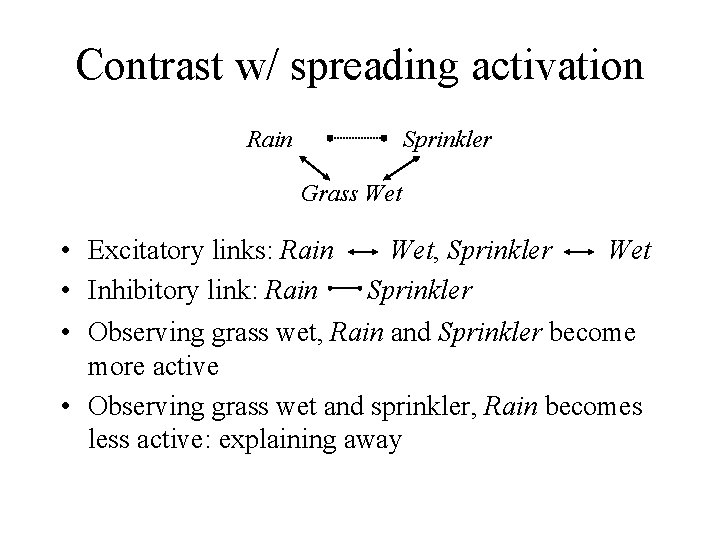

Contrast w/ spreading activation Rain Sprinkler Grass Wet • Excitatory links: Rain Wet, Sprinkler Wet • Inhibitory link: Rain Sprinkler • Observing grass wet, Rain and Sprinkler become more active • Observing grass wet and sprinkler, Rain becomes less active: explaining away

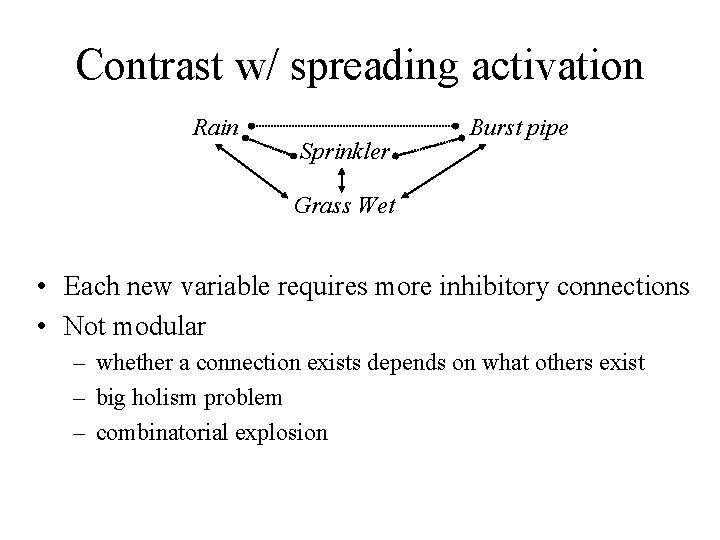

Contrast w/ spreading activation Rain Sprinkler Burst pipe Grass Wet • Each new variable requires more inhibitory connections • Not modular – whether a connection exists depends on what others exist – big holism problem – combinatorial explosion

Contrast w/ spreading activation (Mc. Clelland & Rumelhart, 1981)

Graphical models • Capture dependency structure in distributions • Provide an efficient means of representing and reasoning with probabilities • Support kinds of inference that are problematic for other cognitive models: explaining away – hard to capture in a production system – more natural than with spreading activation

Graphical models • Introduction to graphical models – definitions – efficient representation and inference – explaining away • Graphical models and cognitive science – uses of graphical models

Uses of graphical models • Understanding existing cognitive models – e. g. , neural network models

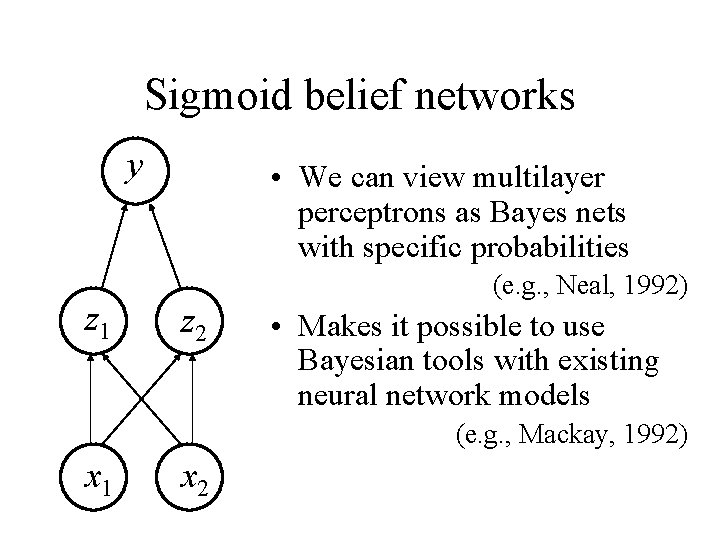

Sigmoid belief networks y z 1 • We can view multilayer perceptrons as Bayes nets with specific probabilities z 2 (e. g. , Neal, 1992) • Makes it possible to use Bayesian tools with existing neural network models (e. g. , Mackay, 1992) x 1 x 2

Uses of graphical models • Understanding existing cognitive models – e. g. , neural network models • Representation and reasoning – a way to address holism in induction (c. f. Fodor)

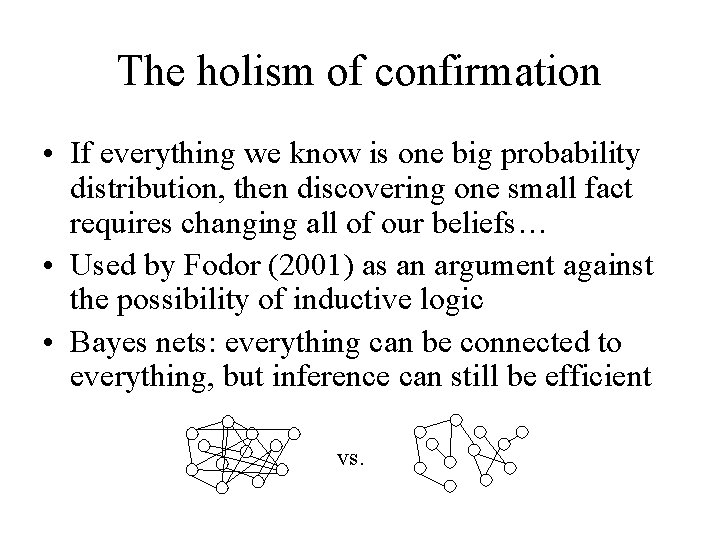

The holism of confirmation • If everything we know is one big probability distribution, then discovering one small fact requires changing all of our beliefs… • Used by Fodor (2001) as an argument against the possibility of inductive logic • Bayes nets: everything can be connected to everything, but inference can still be efficient vs.

Uses of graphical models • Understanding existing cognitive models – e. g. , neural network models • Representation and reasoning – a way to address holism in induction (c. f. Fodor) • Defining generative models – mixture models, language models, …

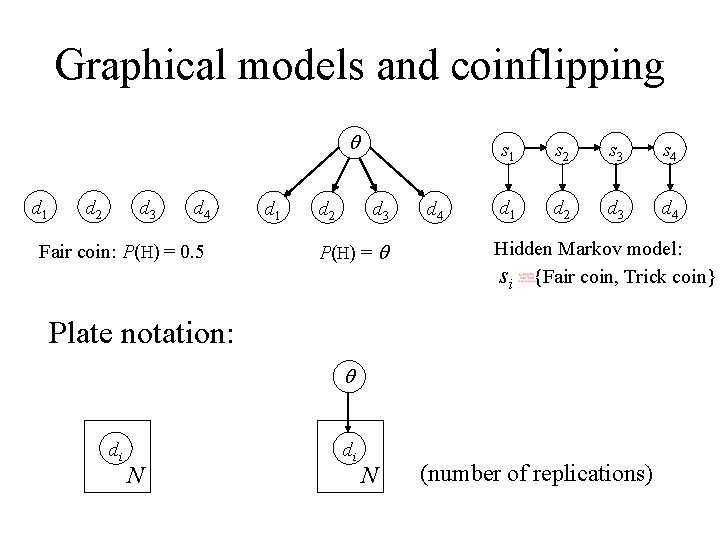

Graphical models and coinflipping q d 1 d 2 d 3 d 4 Fair coin: P(H) = 0. 5 d 1 d 2 d 3 P(H) = q d 4 s 1 s 2 s 3 s 4 d 1 d 2 d 3 d 4 Hidden Markov model: si {Fair coin, Trick coin} Plate notation: q di N (number of replications)

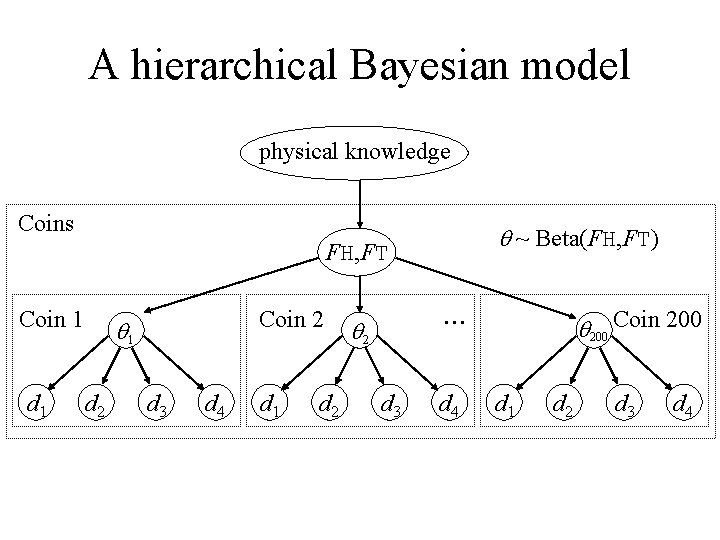

A hierarchical Bayesian model physical knowledge Coins q ~ Beta(FH, FT) FH, FT Coin 1 d 1 Coin 2 q 1 d 2 d 3 d 4 d 1 d 2 . . . q 2 d 3 d 4 q 200 Coin 200 d 1 d 2 d 3 d 4

Uses of graphical models • Understanding existing cognitive models – e. g. , neural network models • Representation and reasoning – a way to address holism in induction (c. f. Fodor) • Defining generative models – mixture models, language models, … • Modeling human causal reasoning – more on Friday!

- Slides: 60