Graph-Based Parallel Computing

William Cohen

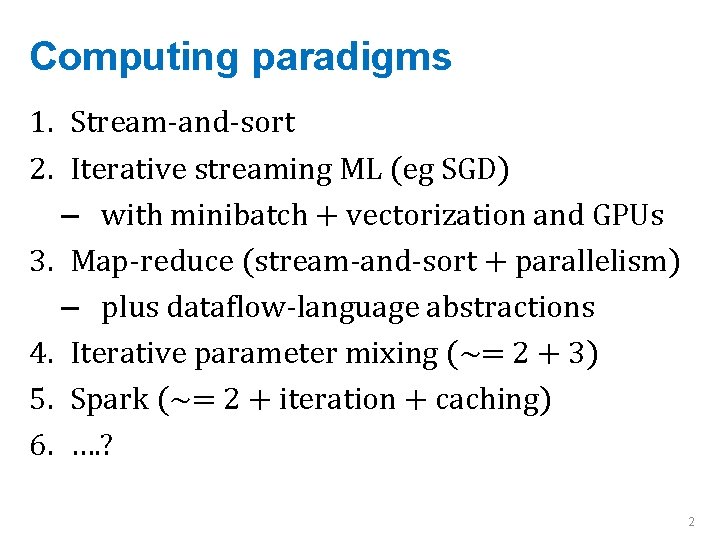

Computing paradigms

1. Stream-and-sort
2. Iterative streaming ML (e.g., SGD)
   - with minibatch + vectorization and GPUs
3. Map-reduce (stream-and-sort + parallelism)
   - plus dataflow-language abstractions
4. Iterative parameter mixing (~= 2 + 3)
5. Spark (~= 2 + iteration + caching)
6. …?

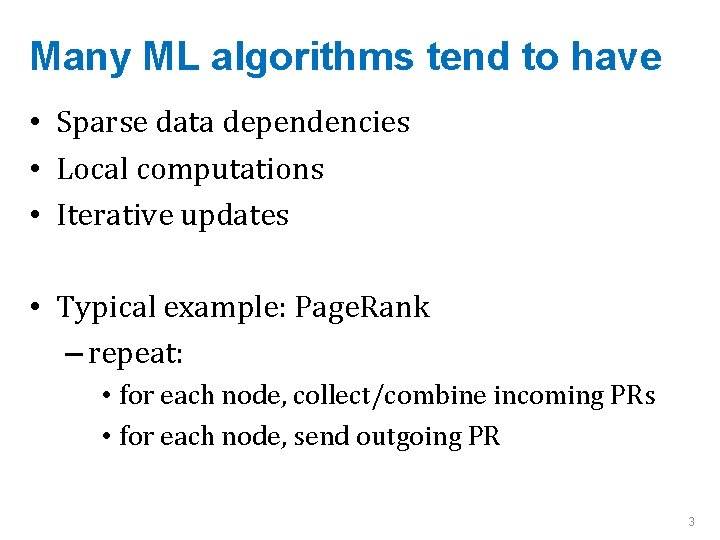

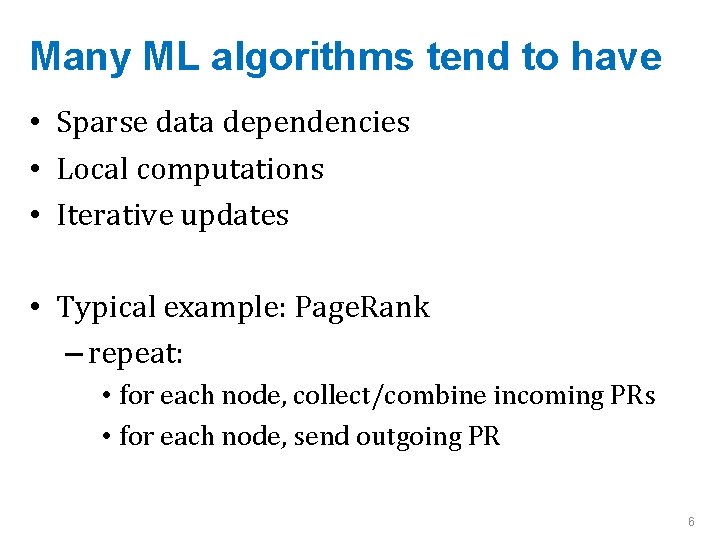

Many ML algorithms tend to have

- Sparse data dependencies
- Local computations
- Iterative updates
- Typical example: PageRank
  - repeat:
    - for each node, collect/combine incoming PRs
    - for each node, send outgoing PR

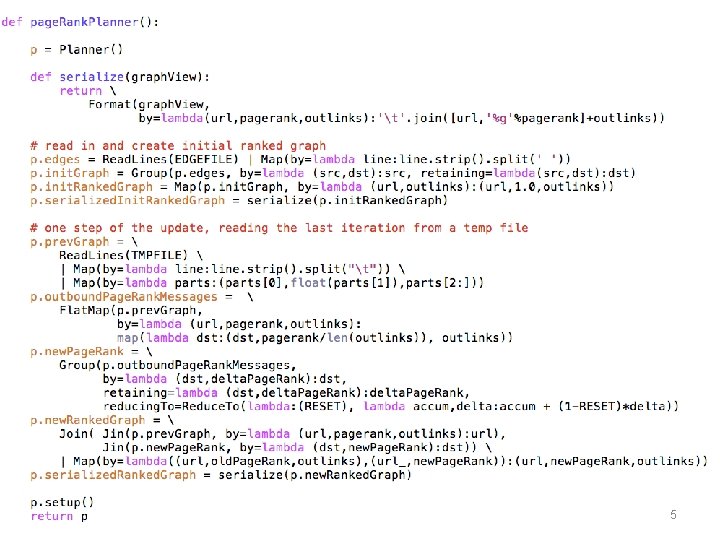


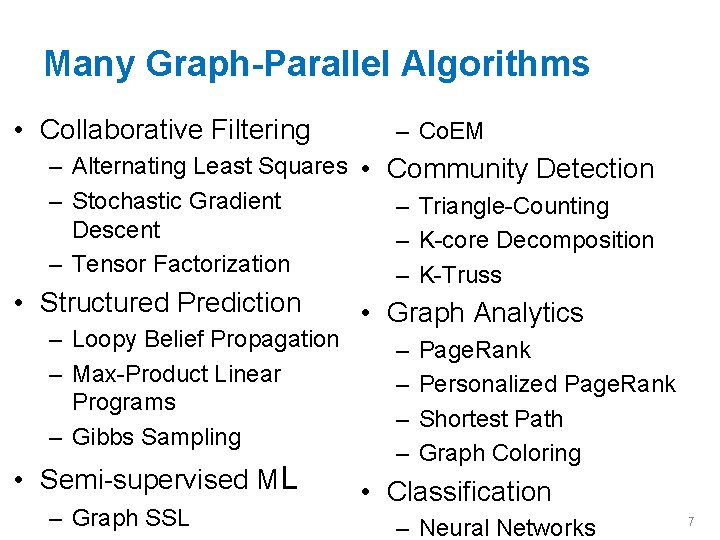

Many Graph-Parallel Algorithms

- Collaborative Filtering
  - CoEM
  - Alternating Least Squares
  - Stochastic Gradient Descent
  - Tensor Factorization
- Structured Prediction
  - Loopy Belief Propagation
  - Max-Product Linear Programs
  - Gibbs Sampling
- Semi-supervised ML
  - Graph SSL
- Community Detection
  - Triangle-Counting
  - K-core Decomposition
  - K-Truss
- Graph Analytics
  - PageRank
  - Personalized PageRank
  - Shortest Path
  - Graph Coloring
- Classification
  - Neural Networks

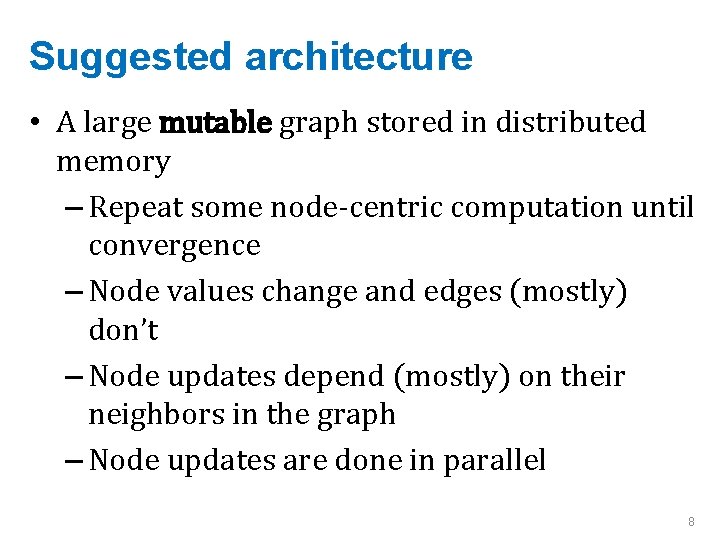

Suggested architecture

- A large mutable graph stored in distributed memory
  - Repeat some node-centric computation until convergence
  - Node values change and edges (mostly) don't
  - Node updates depend (mostly) on their neighbors in the graph
  - Node updates are done in parallel

Sample system: Pregel

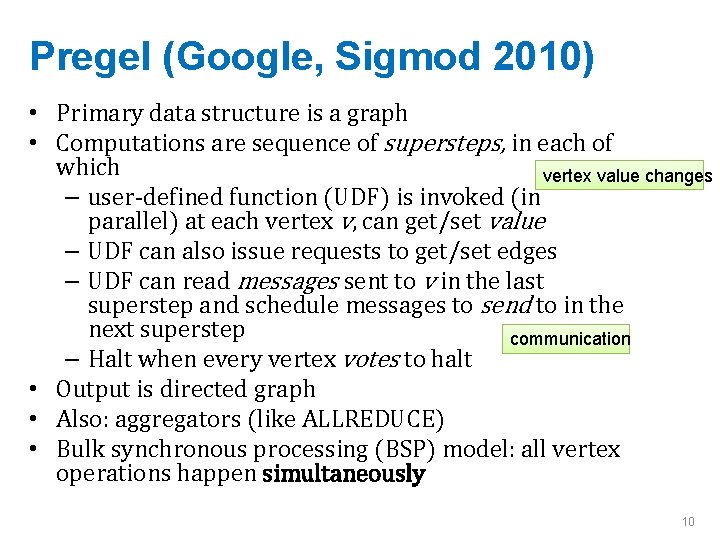

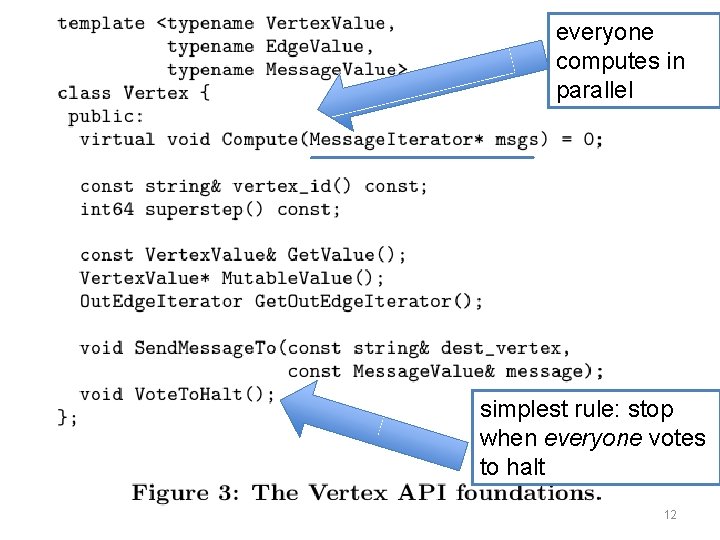

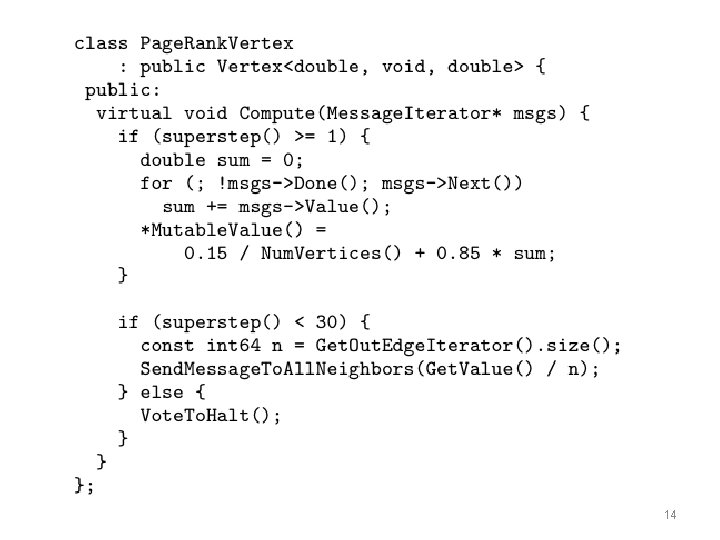

Pregel (Google, SIGMOD 2010)

- Primary data structure is a graph
- Computations are a sequence of supersteps; in each superstep:
  - a user-defined function (UDF) is invoked (in parallel) at each vertex v and can get/set the vertex value (vertex value changes)
  - the UDF can also issue requests to get/set edges
  - the UDF can read messages sent to v in the last superstep and schedule messages to be sent in the next superstep (communication)
  - halt when every vertex votes to halt
- Output is a directed graph
- Also: aggregators (like ALLREDUCE)
- Bulk synchronous processing (BSP) model: all vertex operations happen simultaneously

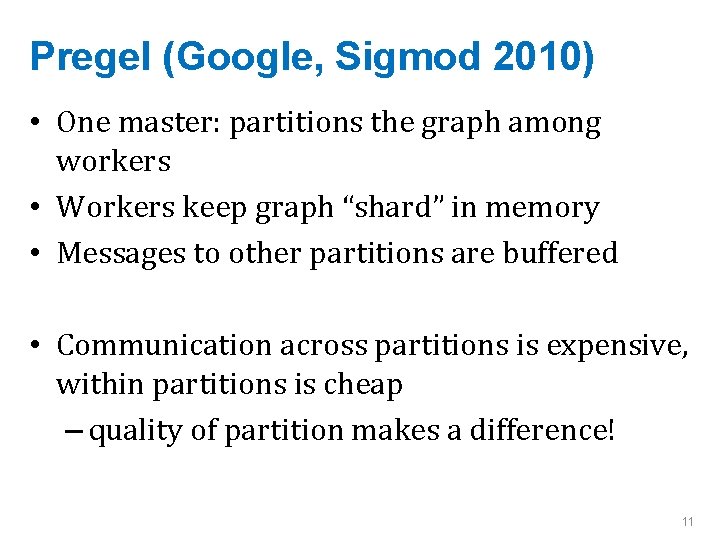

Pregel (Google, SIGMOD 2010)

- One master: partitions the graph among workers
- Workers keep graph "shard" in memory
- Messages to other partitions are buffered
- Communication across partitions is expensive, within partitions is cheap
  - quality of partition makes a difference!

Everyone computes in parallel. Simplest rule: stop when everyone votes to halt.
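The superstep loop and the vote-to-halt rule can be sketched in a few lines of Python. This is a toy, single-process illustration and not Pregel's actual API: the names `run_supersteps` and `max_value_compute` are hypothetical, and the example task (propagating the largest vertex value to every vertex) is chosen only because it is small.

```python
from collections import defaultdict


def max_value_compute(value, messages, superstep):
    """Vertex UDF: return (new_value, outgoing_message).

    An outgoing message of None means the vertex votes to halt.
    """
    new_value = max([value] + messages)
    if superstep == 0 or new_value > value:
        return new_value, new_value  # value is new to the neighbors: send it
    return value, None  # nothing changed: vote to halt


def run_supersteps(out_edges, values, compute, max_supersteps=100):
    """Run synchronous supersteps until every vertex has voted to halt."""
    values = dict(values)
    inbox = defaultdict(list)  # messages readable in the current superstep
    for superstep in range(max_supersteps):
        outbox = defaultdict(list)  # messages delivered in the next superstep
        any_active = False
        for v in values:
            # A halted vertex wakes up only when it has incoming messages.
            if superstep > 0 and not inbox[v]:
                continue
            values[v], message = compute(values[v], inbox[v], superstep)
            if message is not None:
                any_active = True
                for w in out_edges.get(v, []):
                    outbox[w].append(message)
        inbox = outbox
        if not any_active:
            return values, superstep + 1
    return values, max_supersteps


graph = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
final_values, supersteps = run_supersteps(
    graph, {"a": 3, "b": 6, "c": 2}, max_value_compute
)
```

Messages written to `outbox` become visible only in the following superstep, which is the bulk-synchronous behavior the slides describe.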

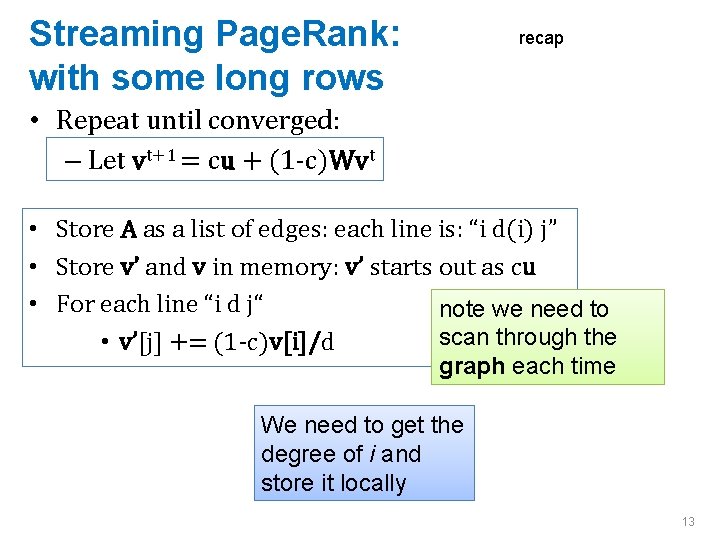

Streaming PageRank, with some long rows (recap)

- Repeat until converged:
  - Let $v^{t+1} = c\,u + (1-c)\,W v^{t}$
- Store A as a list of edges: each line is "i d(i) j"
- Store v' and v in memory: v' starts out as $c\,u$
- For each line "i d j":
  - v'[j] += (1 - c) v[i] / d

Note that we need to scan through the graph each time, and that we need to get the degree of i and store it locally.
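The recap above maps directly onto a short loop. The following Python is a minimal sketch under stated assumptions: the function name `streaming_pagerank`, the uniform choice of u, and the tolerance-based stopping test are illustrative choices that are not in the slides, and the edge list is held in memory rather than streamed from a file.

```python
def streaming_pagerank(edge_lines, nodes, c=0.15, tol=1e-10, max_iters=1000):
    """Iterate v' = c*u + (1 - c)*W*v by scanning an edge list.

    edge_lines is a list of (i, d_i, j) triples, where d_i is the out-degree
    of i, mirroring the "i d(i) j" line format.
    """
    u = 1.0 / len(nodes)  # uniform teleport vector (an assumption)
    v = {n: u for n in nodes}
    for _ in range(max_iters):
        v_next = {n: c * u for n in nodes}  # v' starts out as c*u
        for i, d, j in edge_lines:  # one sequential scan over the graph
            v_next[j] += (1 - c) * v[i] / d
        delta = max(abs(v_next[n] - v[n]) for n in nodes)
        v = v_next
        if delta < tol:
            break
    return v


edges = [("a", 2, "b"), ("a", 2, "c"), ("b", 1, "c"), ("c", 1, "a")]
ranks = streaming_pagerank(edges, ["a", "b", "c"])
```

Only the two vectors v and v' need random access; the edges are read strictly in order, which is why the degree d(i) is stored on each line.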


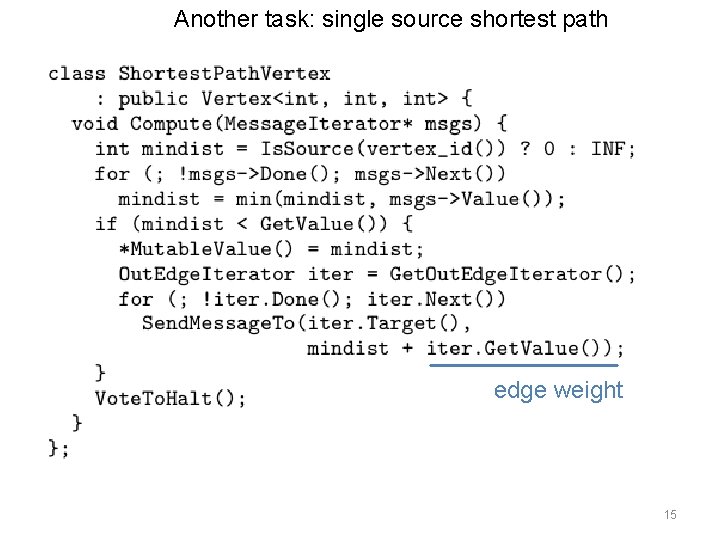

Another task: single-source shortest path (figure annotation: edge weight)

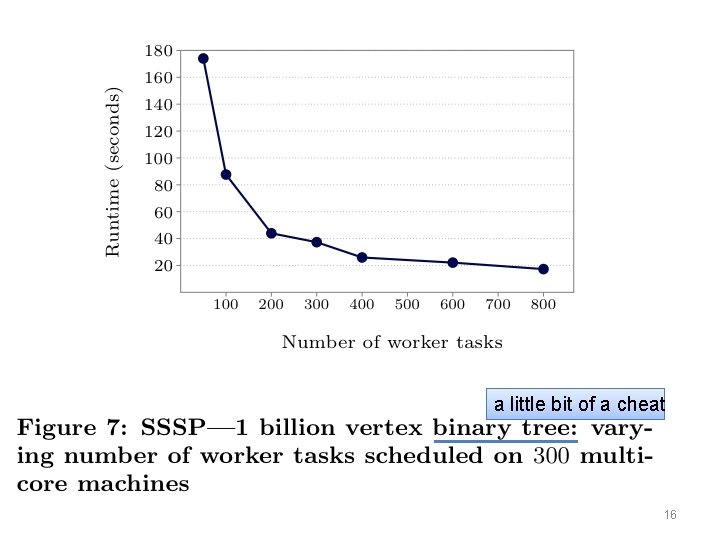

A little bit of a cheat

Sample system: Signal-Collect

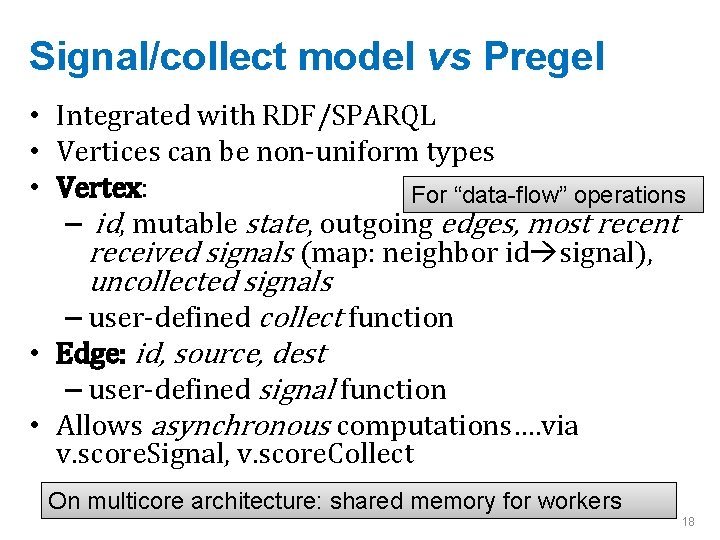

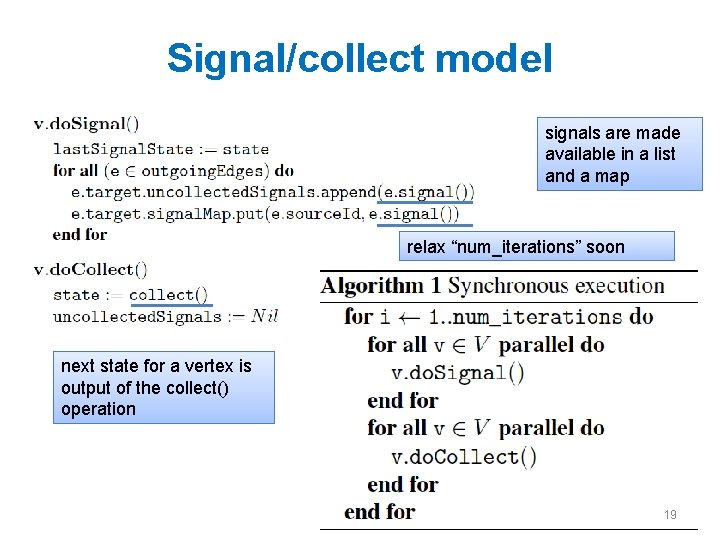

Signal/collect model vs Pregel

- Integrated with RDF/SPARQL
- Vertices can be non-uniform types
- Vertex:
  - id, mutable state, outgoing edges, most recent received signals (map: neighbor id → signal), uncollected signals
  - user-defined collect function
- Edge: id, source, dest
  - user-defined signal function
- Allows asynchronous computations… via v.scoreSignal, v.scoreCollect

The vertex and edge functions are for "data-flow" operations. On multicore architecture: shared memory for workers.

Signal/collect model

- signals are made available in a list and a map
- next state for a vertex is the output of the collect() operation
- relax "num_iterations" soon

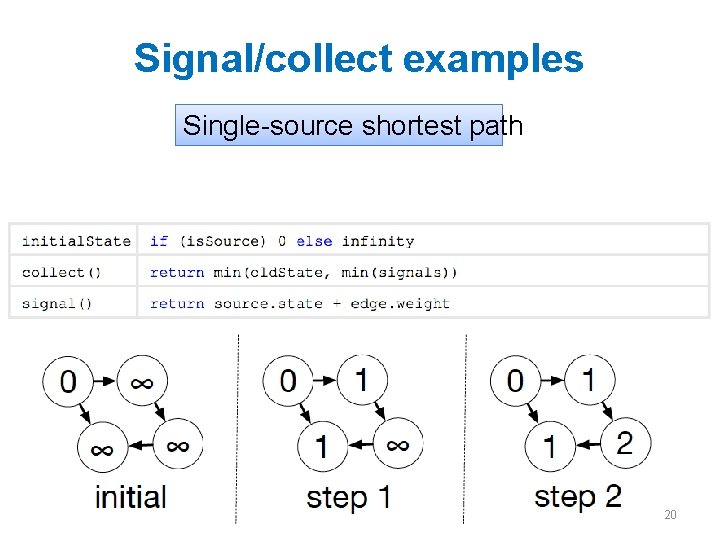

Signal/collect examples: single-source shortest path
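As a rough illustration of the signal/collect split for single-source shortest path, the sketch below keeps the two user-defined functions separate from the driver loop. It is written in Python for brevity and does not use the Signal/Collect framework's real API; `signal`, `collect`, and `sssp` are hypothetical names, the driver is synchronous, and edge weights are assumed to be non-negative.

```python
import math


def signal(source_state, edge_weight):
    """Edge function: the distance offered to the target vertex."""
    return source_state + edge_weight


def collect(old_state, signals):
    """Vertex function: the next state is the best distance seen so far."""
    return min([old_state] + signals)


def sssp(edges, source):
    """edges maps a vertex to a list of (target, weight) pairs."""
    vertices = set(edges) | {t for outs in edges.values() for t, _ in outs}
    state = {v: math.inf for v in vertices}
    state[source] = 0.0
    to_signal = {source}  # vertices whose state changed and must signal
    while to_signal:
        uncollected = {}
        for v in to_signal:
            for target, weight in edges.get(v, []):
                uncollected.setdefault(target, []).append(signal(state[v], weight))
        to_signal = set()
        for v, signals in uncollected.items():
            new_state = collect(state[v], signals)
            if new_state < state[v]:
                state[v] = new_state
                to_signal.add(v)
    return state


edges = {"s": [("a", 1), ("b", 4)], "a": [("b", 2)], "b": [("c", 1)]}
distances = sssp(edges, "s")
```

Only vertices whose state improved signal again, so the loop stops once no distance can be lowered.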

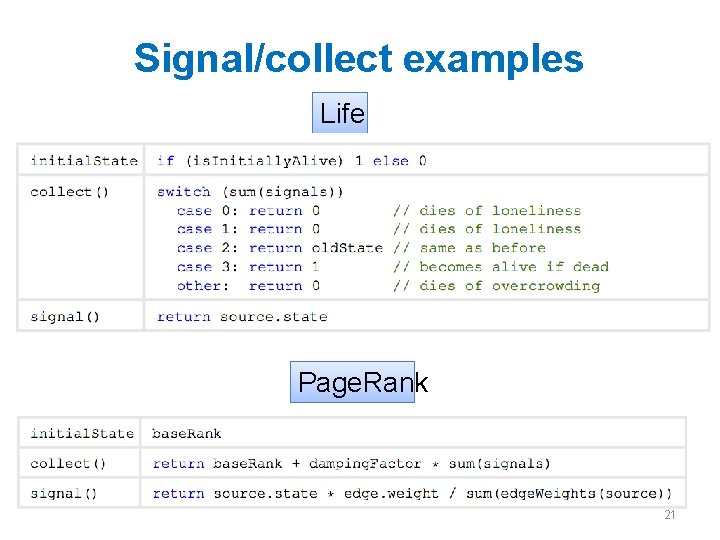

Signal/collect examples: Life, PageRank

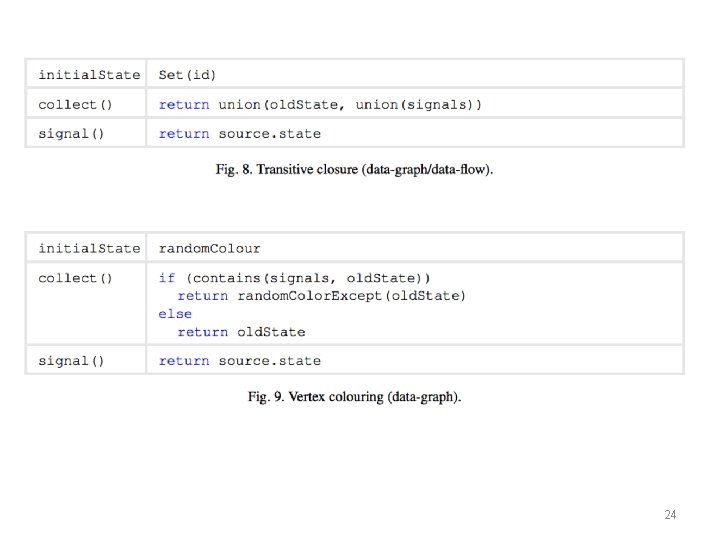


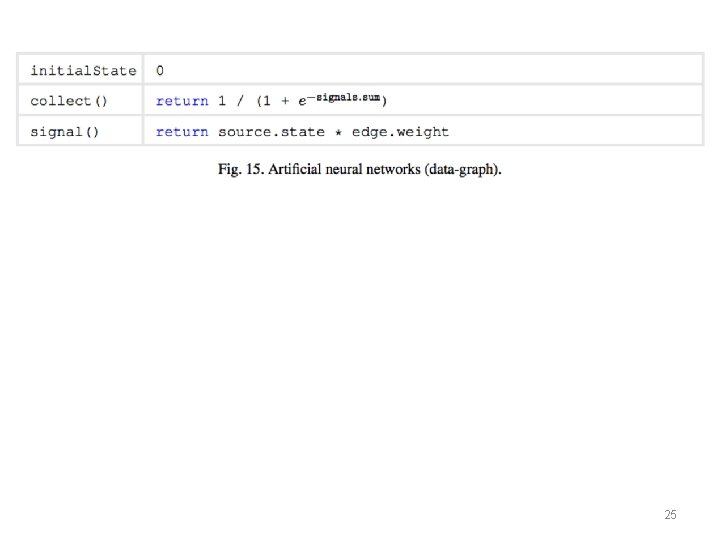


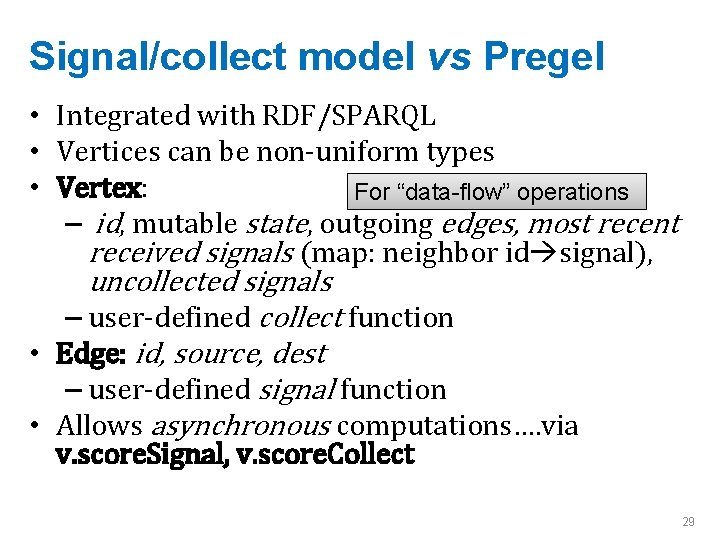


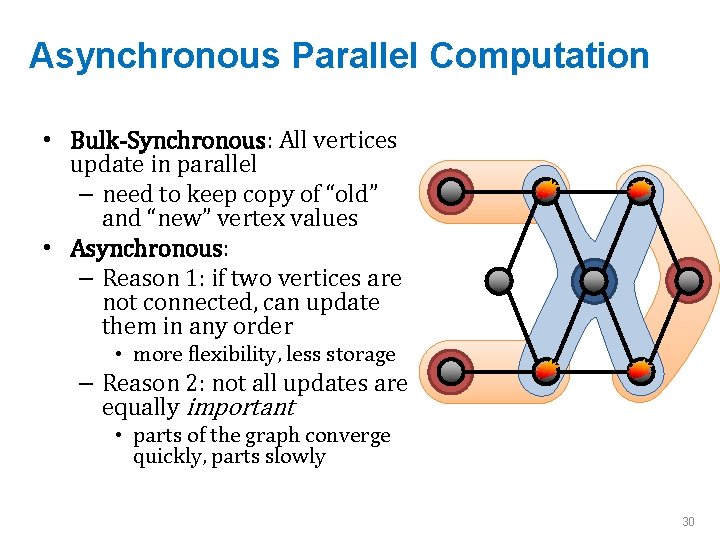

Asynchronous Parallel Computation

- Bulk-Synchronous: all vertices update in parallel
  - need to keep copy of "old" and "new" vertex values
- Asynchronous:
  - Reason 1: if two vertices are not connected, can update them in any order
    - more flexibility, less storage
  - Reason 2: not all updates are equally important
    - parts of the graph converge quickly, parts slowly
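The storage and ordering difference can be seen on a tiny example. The sketch below is an illustration only, with a hypothetical function `min_label_sweeps` and a minimum-label propagation task that is not taken from the slides: the synchronous mode reads from a frozen copy of the old values, while the asynchronous mode reads and writes one dictionary in place.

```python
def min_label_sweeps(neighbors, synchronous):
    """Propagate the smallest vertex id; return (labels, sweeps used)."""
    labels = {v: v for v in neighbors}
    sweeps = 0
    changed = True
    while changed:
        changed = False
        sweeps += 1
        # Bulk-synchronous: read from a frozen copy of the "old" values.
        # Asynchronous: read and write the same dictionary in place.
        read = dict(labels) if synchronous else labels
        for v in sorted(neighbors):
            best = min([read[v]] + [read[w] for w in neighbors[v]])
            if best < labels[v]:
                labels[v] = best
                changed = True
    return labels, sweeps


chain = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
sync_labels, sync_sweeps = min_label_sweeps(chain, synchronous=True)
async_labels, async_sweeps = min_label_sweeps(chain, synchronous=False)
```

On this five-vertex chain both modes reach the same labels, but the in-place version needs fewer sweeps because each update is visible to the next vertex immediately, and it never allocates the second copy.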

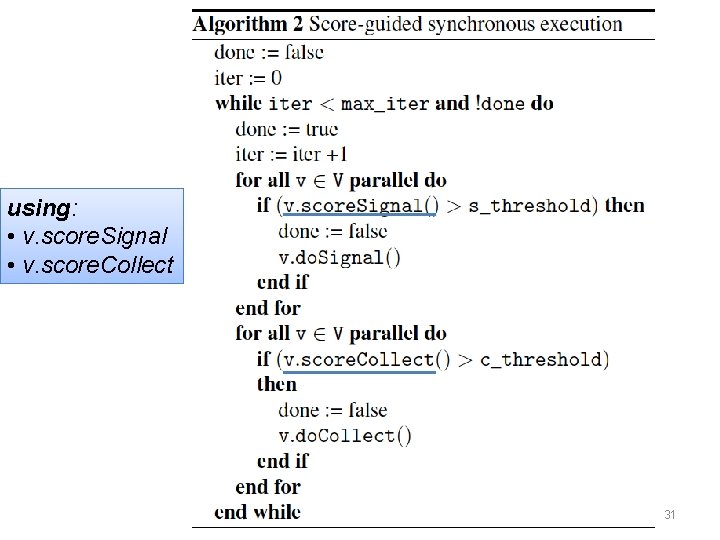

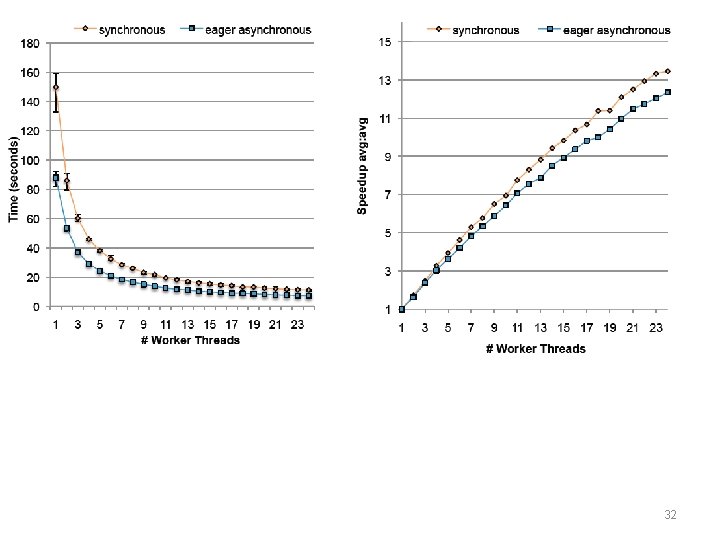

using:

- v.scoreSignal
- v.scoreCollect


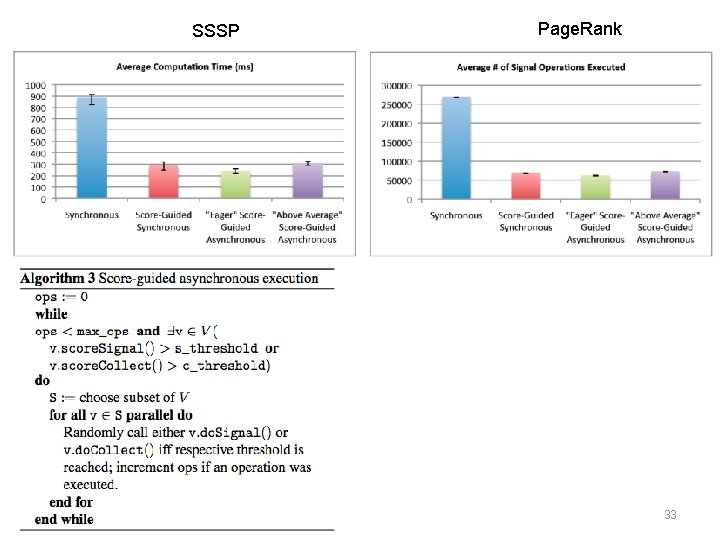

SSSP, PageRank

Sample system: GraphLab

GraphLab

- Data in graph, UDF vertex function
- Differences:
  - some control over scheduling
    - vertex function can insert new tasks in a queue
  - messages must follow graph edges: can access adjacent vertices only
  - "shared data table" for global data
  - library algorithms for matrix factorization, CoEM, SVM, Gibbs, …
  - GraphLab is now Dato

GraphLab's descendants

- PowerGraph
- GraphChi
- GraphX

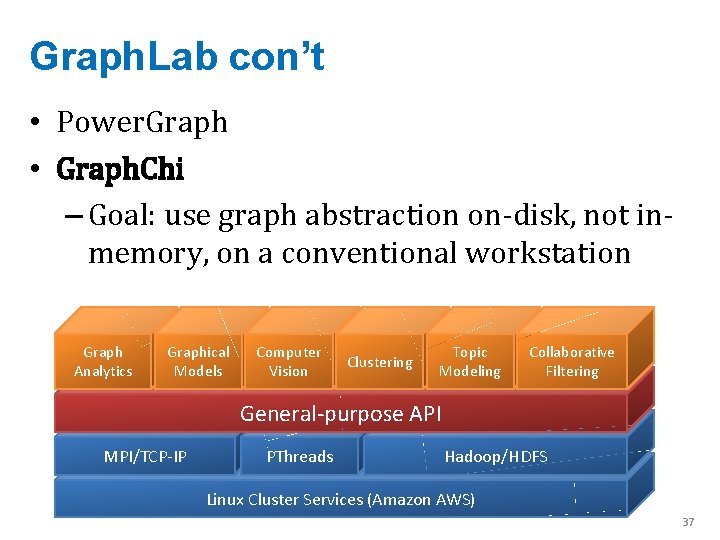

GraphLab con't

- PowerGraph
- GraphChi
  - Goal: use graph abstraction on-disk, not in-memory, on a conventional workstation

Figure: the GraphLab software stack. Toolkits (Graph Analytics, Graphical Models, Computer Vision, Clustering, Topic Modeling, Collaborative Filtering) sit on a general-purpose API, which runs over MPI/TCP-IP, PThreads, and Hadoop/HDFS on Linux cluster services (Amazon AWS).

GraphLab con't

- GraphChi
  - Key insight:
    - in general we can't easily stream the graph because neighbors will be scattered
    - but maybe we can limit the degree to which they're scattered … enough to make streaming possible?
  - "almost-streaming": keep P cursors in a file instead of one

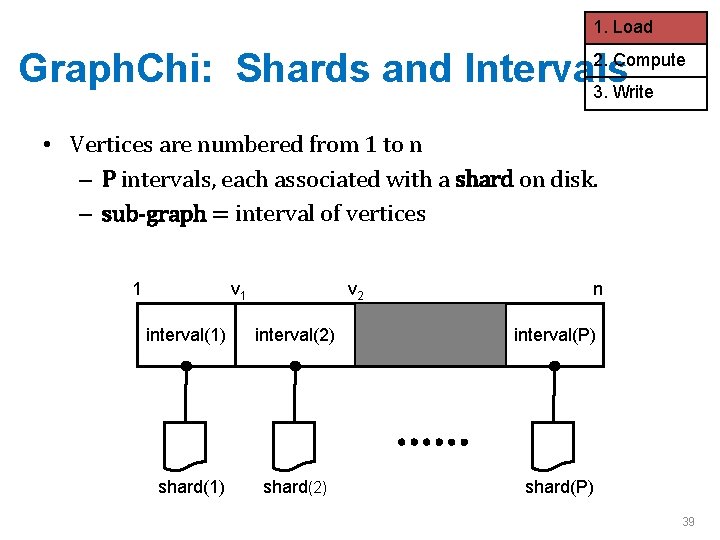

GraphChi: Shards and Intervals

- Vertices are numbered from 1 to n
  - P intervals, each associated with a shard on disk
  - sub-graph = interval of vertices

Figure: the vertex range 1 … n is split into interval(1), interval(2), …, interval(P), paired with shard(1), shard(2), …, shard(P).

Each interval is processed in three phases: 1. Load, 2. Compute, 3. Write.

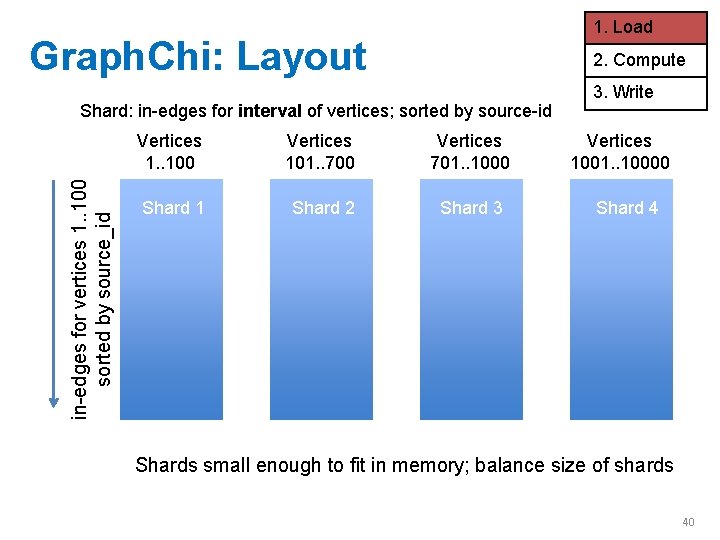

GraphChi: Layout

Shard: in-edges for an interval of vertices, sorted by source id.

| Shard | Interval |
|---|---|
| Shard 1 | Vertices 1..100 |
| Shard 2 | Vertices 101..700 |
| Shard 3 | Vertices 701..1000 |
| Shard 4 | Vertices 1001..10000 |

For example, Shard 1 holds the in-edges for vertices 1..100, sorted by source_id. Shards are small enough to fit in memory; balance the size of the shards.
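A minimal sketch of this layout, assuming the intervals are already chosen: each edge goes to the shard of the interval that contains its destination, and each shard is then sorted by source id. The function name `build_shards` and the in-memory lists are hypothetical stand-ins for GraphChi's on-disk files, and the sketch does not try to balance shard sizes.

```python
def build_shards(edges, intervals):
    """Group edges by destination interval, then sort each shard by source.

    edges is a list of (src, dst) pairs; intervals is a list of inclusive
    (lo, hi) vertex-id ranges that together cover every destination.
    """
    shards = [[] for _ in intervals]
    for src, dst in edges:
        for p, (lo, hi) in enumerate(intervals):
            if lo <= dst <= hi:
                shards[p].append((src, dst))  # shard p holds in-edges of interval p
                break
    for shard in shards:
        shard.sort()  # sorted by source id (ties broken by destination)
    return shards


intervals = [(1, 2), (3, 4)]
edges = [(1, 2), (3, 1), (2, 4), (4, 3), (1, 4), (3, 2)]
shards = build_shards(edges, intervals)
```

Sorting by source is what later makes the out-edges of any interval a contiguous run inside every other shard.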

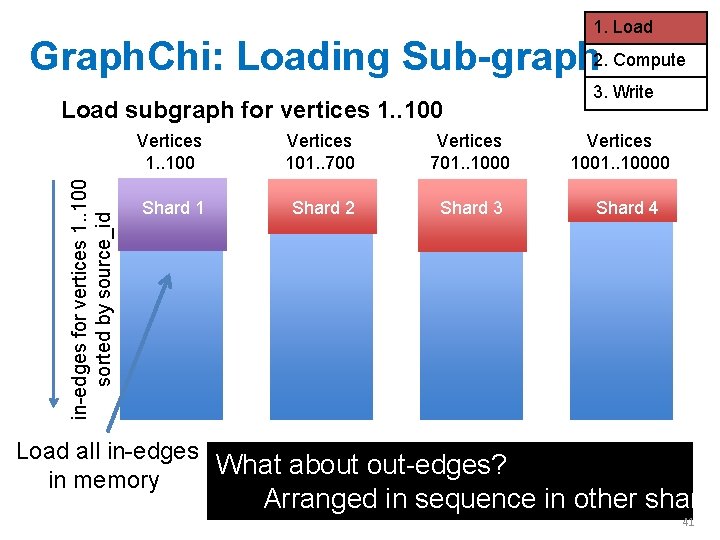

GraphChi: Loading Sub-graph

Load the subgraph for vertices 1..100:

- Load all in-edges in memory (Shard 1: in-edges for vertices 1..100, sorted by source_id)
- What about out-edges? They are arranged in sequence in the other shards (Shard 2: vertices 101..700; Shard 3: vertices 701..1000; Shard 4: vertices 1001..10000)

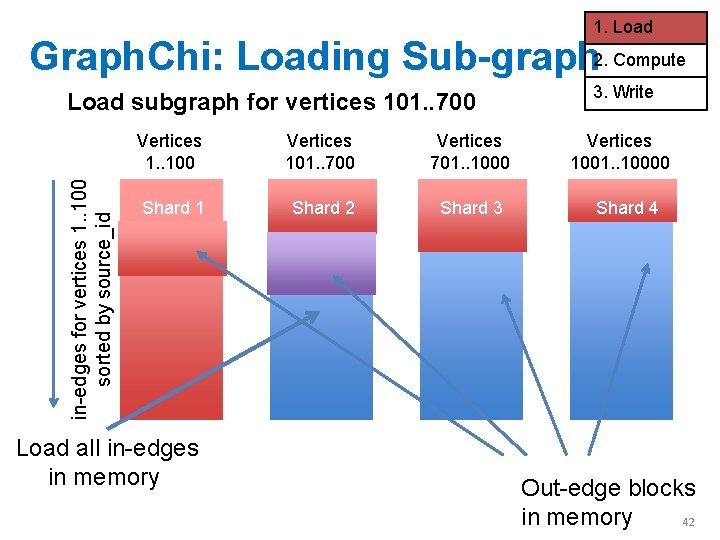

GraphChi: Loading Sub-graph

Load the subgraph for vertices 101..700:

- Load all in-edges in memory (Shard 2: in-edges for vertices 101..700)
- Out-edge blocks in memory (read from the other shards: Shard 1, Shard 3, Shard 4)

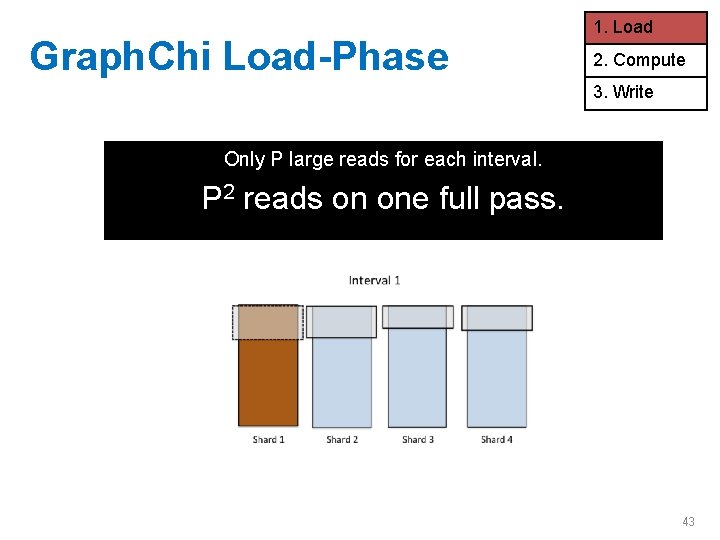

GraphChi Load-Phase

Only P large reads for each interval; $P^2$ reads on one full pass.
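The read count can be checked with a small sketch. Assuming each shard is a list of (src, dst) pairs sorted by source, the out-edges of an interval form one contiguous slice of every other shard, found here with `bisect`. The helper names `source_block` and `load_interval` are hypothetical, and a list slice stands in for one sequential disk read.

```python
import bisect


def source_block(shard, lo, hi):
    """Contiguous slice of a source-sorted shard whose sources lie in [lo, hi]."""
    start = bisect.bisect_left(shard, (lo,))
    end = bisect.bisect_left(shard, (hi + 1,))
    return shard[start:end]


def load_interval(shards, intervals, p):
    """Return (in_edges, out_edges, reads) for interval p.

    Out-edges that stay inside interval p are already part of shard p,
    so only the other shards contribute to out_edges here.
    """
    lo, hi = intervals[p]
    in_edges = list(shards[p])  # read 1: the interval's own shard, in full
    out_edges = []
    reads = 1
    for q, shard in enumerate(shards):
        if q != p:
            out_edges.extend(source_block(shard, lo, hi))  # one block per shard
            reads += 1
    return in_edges, out_edges, reads


intervals = [(1, 2), (3, 4)]
shards = [[(1, 2), (3, 1), (3, 2)], [(1, 4), (2, 4), (4, 3)]]
total_reads = 0
loaded = []
for p in range(len(intervals)):
    in_edges, out_edges, reads = load_interval(shards, intervals, p)
    loaded.append((in_edges, out_edges))
    total_reads += reads
```

With P = 2 the full pass performs 2 × 2 = 4 reads, matching the P reads per interval stated above.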

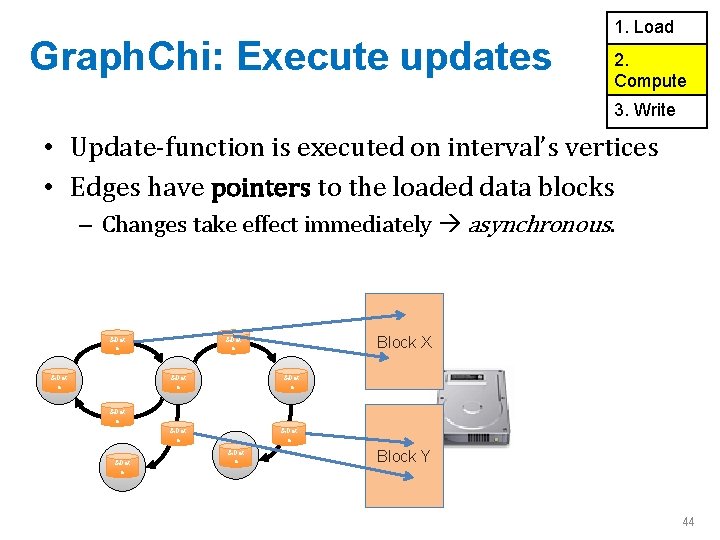

GraphChi: Execute updates

- Update-function is executed on interval's vertices
- Edges have pointers to the loaded data blocks
  - Changes take effect immediately → asynchronous

Figure: edges hold `&Data` pointers into the loaded Block X and Block Y.

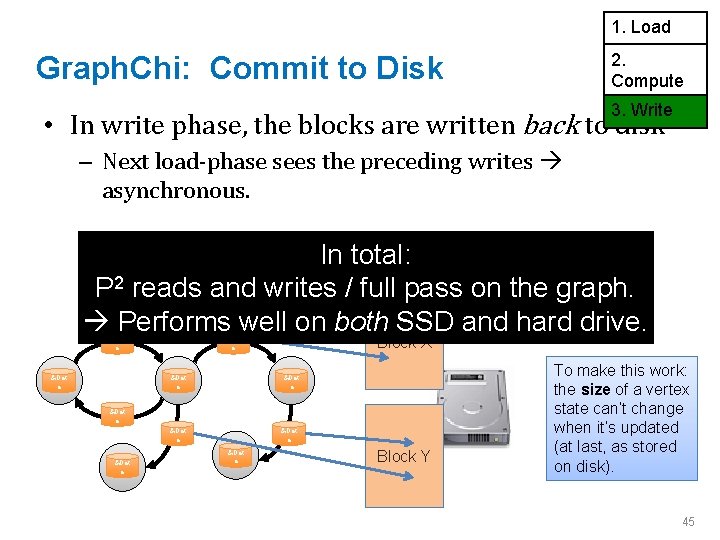

GraphChi: Commit to Disk

- In write phase, the blocks are written back to disk
  - Next load-phase sees the preceding writes → asynchronous

In total: $P^2$ reads and writes per full pass on the graph. Performs well on both SSD and hard drive.

To make this work: the size of a vertex state can't change when it's updated (at least, as stored on disk).
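The fixed-size requirement is what allows a value to be overwritten in place. The sketch below illustrates the idea with Python's `struct` module; the record format (one 8-byte float per vertex), the helper names, and the use of `io.BytesIO` as a stand-in for a file on disk are all assumptions for illustration, not GraphChi's actual storage format.

```python
import io
import struct

VERTEX_RECORD = struct.Struct("<d")  # assumed layout: one 8-byte float per vertex


def write_vertex(f, vertex_id, value):
    """Overwrite the record of vertex_id at its fixed offset."""
    f.seek(vertex_id * VERTEX_RECORD.size)
    f.write(VERTEX_RECORD.pack(value))


def read_vertex(f, vertex_id):
    """Read the record of vertex_id from its fixed offset."""
    f.seek(vertex_id * VERTEX_RECORD.size)
    return VERTEX_RECORD.unpack(f.read(VERTEX_RECORD.size))[0]


store = io.BytesIO()  # stands in for a file on disk
for vid, value in enumerate([0.25, 0.5, 0.75]):
    write_vertex(store, vid, value)
size_before = len(store.getvalue())
write_vertex(store, 1, 0.125)  # in-place update of the middle vertex
size_after = len(store.getvalue())
```

Because every record has the same size, the offset of a vertex is just its id times the record size, and an update never shifts the records that follow it.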

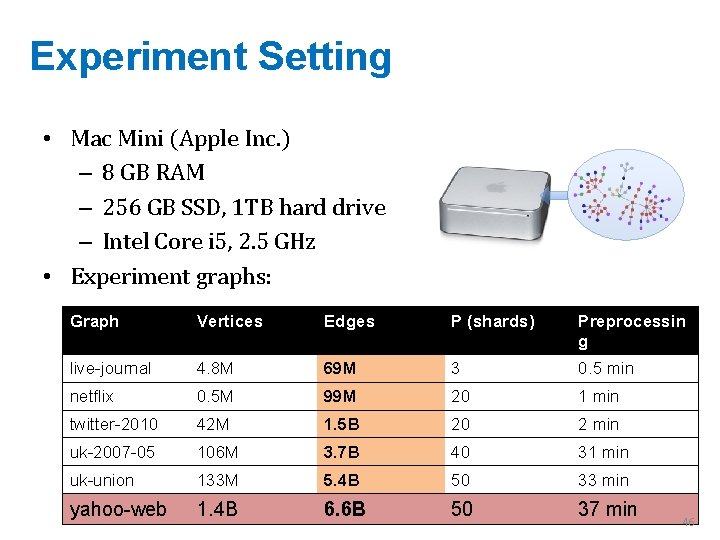

Experiment Setting

- Mac Mini (Apple Inc.)
  - 8 GB RAM
  - 256 GB SSD, 1 TB hard drive
  - Intel Core i5, 2.5 GHz
- Experiment graphs:

| Graph | Vertices | Edges | P (shards) | Preprocessing |
|---|---|---|---|---|
| live-journal | 4.8 M | 69 M | 3 | 0.5 min |
| netflix | 0.5 M | 99 M | 20 | 1 min |
| twitter-2010 | 42 M | 1.5 B | 20 | 2 min |
| uk-2007-05 | 106 M | 3.7 B | 40 | 31 min |
| uk-union | 133 M | 5.4 B | 50 | 33 min |
| yahoo-web | 1.4 B | 6.6 B | 50 | 37 min |

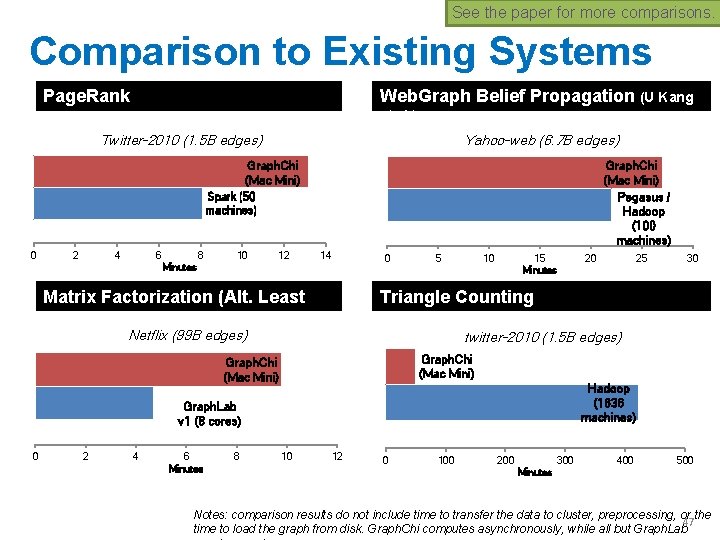

Comparison to Existing Systems

Figure: four bar charts of runtime in minutes.

- PageRank on Twitter-2010 (1.5 B edges)
- WebGraph Belief Propagation (U Kang et al.) on Yahoo-web (6.7 B edges)
- Matrix Factorization (Alt. Least Sqr.) on Netflix (99 M edges)
- Triangle Counting on twitter-2010 (1.5 B edges)

Systems compared against GraphChi (Mac Mini): Spark (50 machines), Pegasus / Hadoop (100 machines), GraphLab v1 (8 cores), Hadoop (1636 machines).

Notes: comparison results do not include time to transfer the data to cluster, preprocessing, or the time to load the graph from disk. GraphChi computes asynchronously, while all but GraphLab …

See the paper for more comparisons.

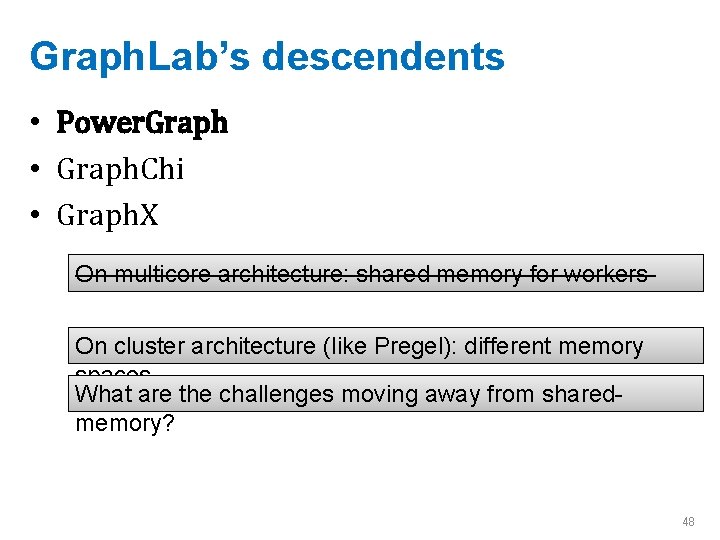

GraphLab's descendants

- PowerGraph
- GraphChi
- GraphX

On multicore architecture: shared memory for workers. On cluster architecture (like Pregel): different memory spaces. What are the challenges moving away from shared memory?

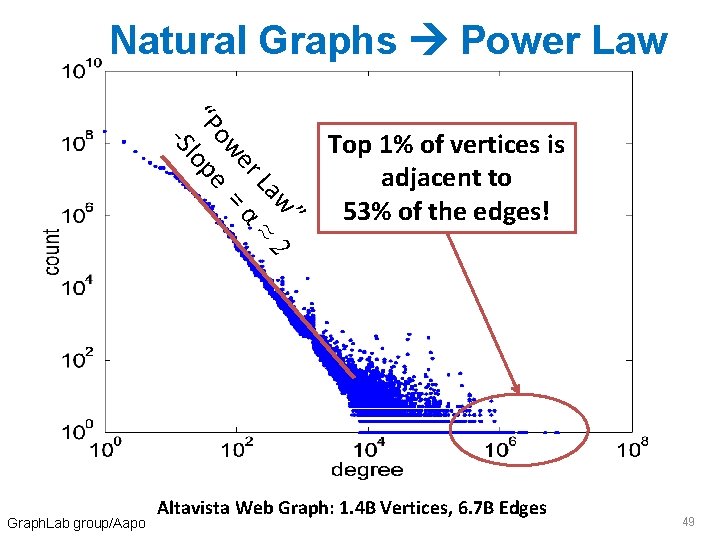

Natural Graphs → Power Law (GraphLab group/Aapo)

Figure: degree plot of the AltaVista Web Graph (1.4 B vertices, 6.7 B edges), annotated "Power Law" with -slope = α ≈ 2.

Top 1% of vertices is adjacent to 53% of the edges!

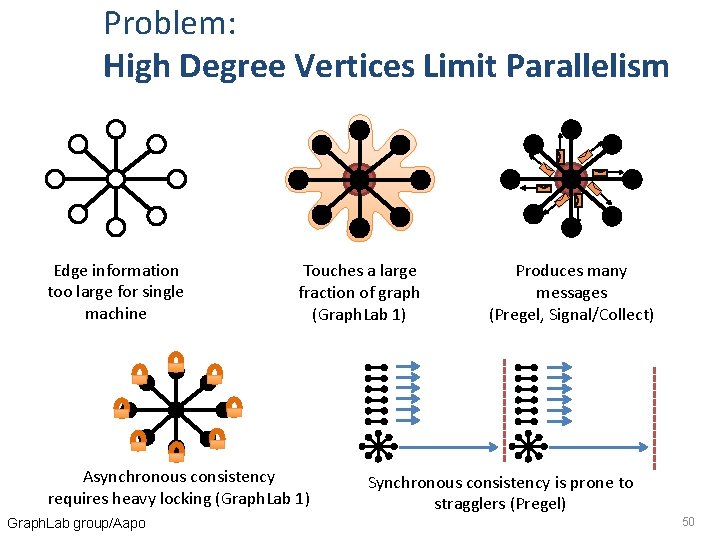

Problem: High Degree Vertices Limit Parallelism (GraphLab group/Aapo)

- Edge information too large for single machine
- Touches a large fraction of graph (GraphLab 1)
- Asynchronous consistency requires heavy locking (GraphLab 1)
- Produces many messages (Pregel, Signal/Collect)
- Synchronous consistency is prone to stragglers (Pregel)

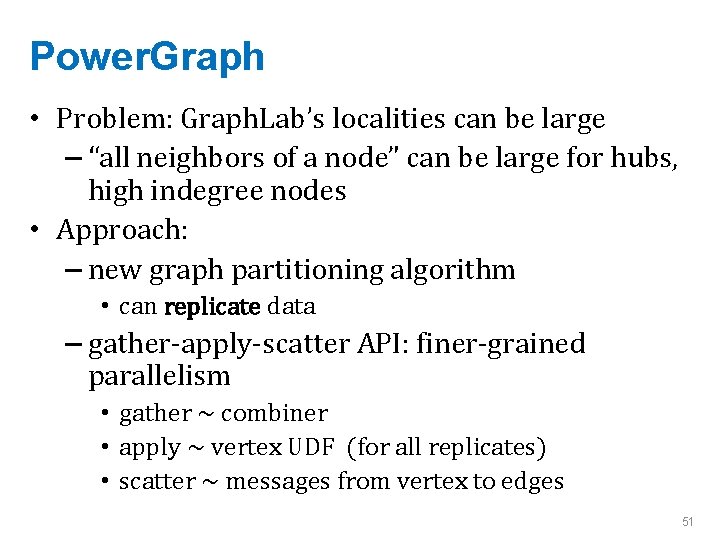

PowerGraph

- Problem: GraphLab's localities can be large
  - "all neighbors of a node" can be large for hubs, high in-degree nodes
- Approach:
  - new graph partitioning algorithm
    - can replicate data
  - gather-apply-scatter API: finer-grained parallelism
    - gather ~ combiner
    - apply ~ vertex UDF (for all replicas)
    - scatter ~ messages from vertex to edges

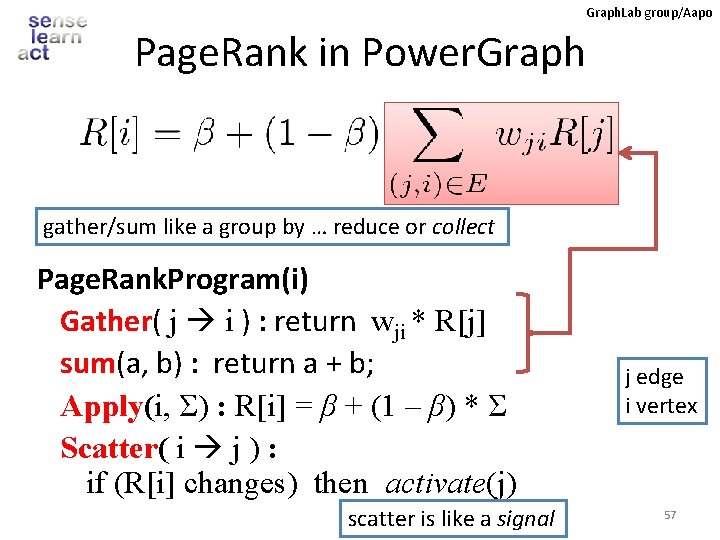

PageRank in PowerGraph (GraphLab group/Aapo)

PageRankProgram(i):

- Gather(j → i): return w_ji * R[j]
- sum(a, b): return a + b
- Apply(i, Σ): R[i] = β + (1 - β) * Σ
- Scatter(i → j): if (R[i] changes) then activate(j)

Here i is a vertex and j → i (or i → j) is an edge. Gather/sum is like a group by … reduce, or a collect; scatter is like a signal.
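The vertex program above can be exercised with a small single-machine driver. This Python sketch is an assumption-laden illustration, not PowerGraph's API: the function names, the active-set driver, the tolerance `tol`, and the choice w_ji = 1/outdegree(j) are all hypothetical, and every edge target is assumed to appear as a key of `out_edges`.

```python
def gather(rank, weight, j, i):
    """Contribution of in-neighbor j to vertex i: w_ji * R[j]."""
    return weight[(j, i)] * rank[j]


def combine(a, b):
    """The commutative, associative sum that merges gather results."""
    return a + b


def apply(total, beta):
    """New rank of a vertex from its gathered total."""
    return beta + (1 - beta) * total


def pagerank_gas(out_edges, beta=0.15, tol=1e-12):
    vertices = list(out_edges)
    in_edges = {v: [] for v in vertices}
    weight = {}
    for j, targets in out_edges.items():
        for i in targets:
            in_edges[i].append(j)
            weight[(j, i)] = 1.0 / len(targets)  # assumed edge weight
    rank = {v: 1.0 for v in vertices}
    active = set(vertices)
    while active:
        i = active.pop()
        total = 0.0
        for j in in_edges[i]:
            total = combine(total, gather(rank, weight, j, i))
        new_rank = apply(total, beta)
        changed = abs(new_rank - rank[i]) > tol
        rank[i] = new_rank
        if changed:  # scatter: activate the out-neighbors of i
            active.update(out_edges[i])
    return rank


ranks = pagerank_gas({"a": ["b", "c"], "b": ["c"], "c": ["a"]})
```

Because `combine` is commutative and associative, the gather loop could be split into partial sums on different machines and merged afterwards, which is the property the gather-apply-scatter decomposition relies on.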

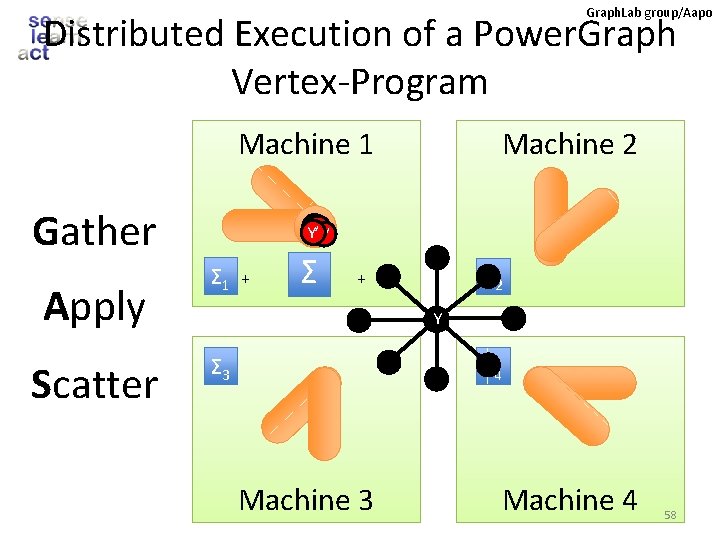

Distributed Execution of a PowerGraph Vertex-Program (GraphLab group/Aapo)

Figure: vertex Y is replicated across Machines 1 to 4. Gather: the partial sums Σ1 + Σ2 + Σ3 + Σ4 are combined. Apply: Y is updated to Y'. Scatter: Y' is propagated to the replicas.

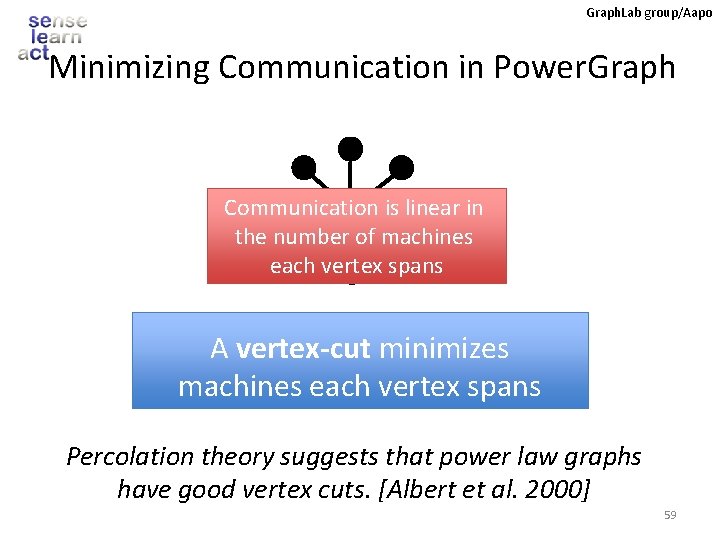

Minimizing Communication in PowerGraph (GraphLab group/Aapo)

- Communication is linear in the number of machines each vertex spans
- A vertex-cut minimizes the number of machines each vertex spans
- Percolation theory suggests that power law graphs have good vertex cuts. [Albert et al. 2000]
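The quantity being minimized, the number of machines each vertex spans, is easy to compute for a given edge placement. The sketch below uses random edge placement on a star graph as an example; the function names, the star graph, and the fixed seed are illustrative assumptions and not part of PowerGraph.

```python
import random


def machines_spanned(edge_to_machine):
    """Map each vertex to the number of machines that hold one of its edges."""
    spans = {}
    for (u, v), machine in edge_to_machine.items():
        spans.setdefault(u, set()).add(machine)
        spans.setdefault(v, set()).add(machine)
    return {vertex: len(machines) for vertex, machines in spans.items()}


def random_vertex_cut(edges, num_machines, seed=0):
    """Vertex-cut by random placement: every edge lands on one machine."""
    rng = random.Random(seed)
    return {edge: rng.randrange(num_machines) for edge in edges}


# A star graph: one hub with 1000 leaves, placed on 4 machines.
edges = [("hub", f"leaf{k}") for k in range(1000)]
spans = machines_spanned(random_vertex_cut(edges, num_machines=4))
average_span = sum(spans.values()) / len(spans)
```

The hub is replicated on every machine, but that costs only four replicas no matter how many edges it has, while each leaf lives on exactly one machine. This is the sense in which a vertex-cut copes with high-degree vertices.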

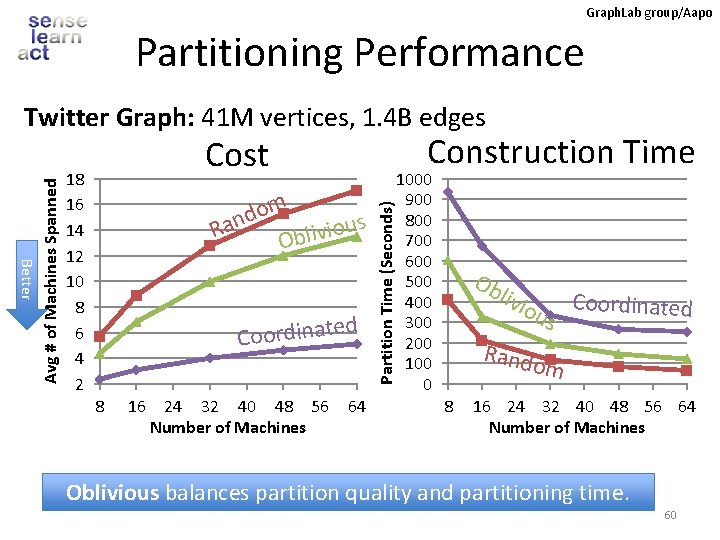

Partitioning Performance (GraphLab group/Aapo)

Twitter Graph: 41 M vertices, 1.4 B edges.

Figure: two panels plotted against the number of machines (8 to 64) for the Random, Oblivious, and Coordinated partitioners. Cost: average number of machines spanned. Construction Time: partition time in seconds.

Oblivious balances partition quality and partitioning time.

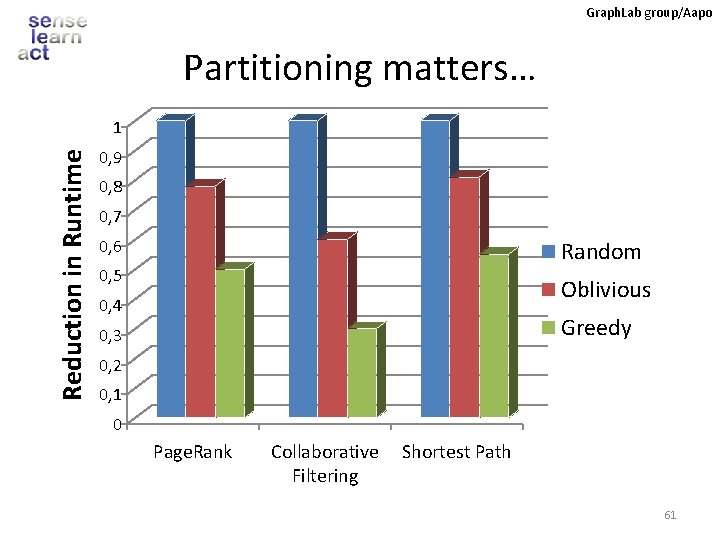

Partitioning matters… (GraphLab group/Aapo)

Figure: reduction in runtime (scale 0 to 1) for PageRank, Collaborative Filtering, and Shortest Path under the Random, Oblivious, and Greedy partitioners.

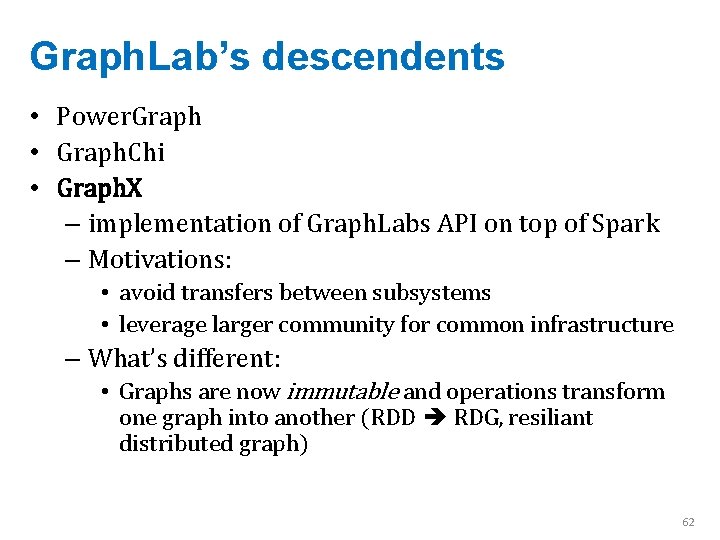

GraphLab's descendants

- PowerGraph
- GraphChi
- GraphX
  - implementation of GraphLab's API on top of Spark
  - Motivations:
    - avoid transfers between subsystems
    - leverage larger community for common infrastructure
  - What's different:
    - Graphs are now immutable and operations transform one graph into another (RDD → RDG, resilient distributed graph)

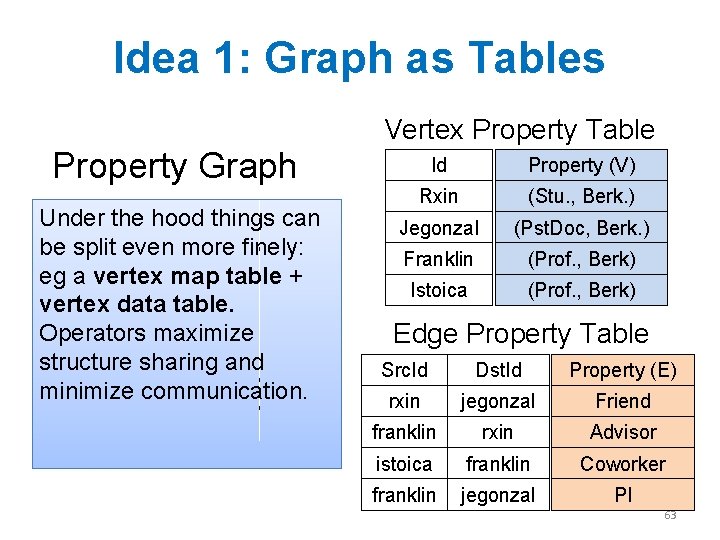

Idea 1: Graph as Tables

Figure: the property graph, with vertices R, J, F, and I.

Vertex Property Table

| Id | Property (V) |
|---|---|
| rxin | (Stu., Berk.) |
| jegonzal | (Pst.Doc, Berk.) |
| franklin | (Prof., Berk.) |
| istoica | (Prof., Berk.) |

Edge Property Table

| SrcId | DstId | Property (E) |
|---|---|---|
| rxin | jegonzal | Friend |
| franklin | rxin | Advisor |
| istoica | franklin | Coworker |
| franklin | jegonzal | PI |

Under the hood things can be split even more finely: e.g., a vertex map table + vertex data table. Operators maximize structure sharing and minimize communication.

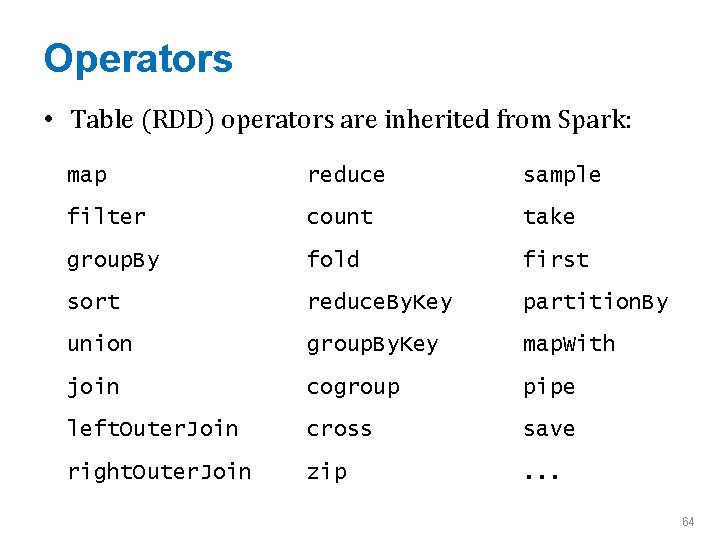

Operators

- Table (RDD) operators are inherited from Spark: map, reduce, sample, filter, count, take, groupBy, fold, first, sort, reduceByKey, partitionBy, union, groupByKey, mapWith, join, cogroup, pipe, leftOuterJoin, cross, save, rightOuterJoin, zip, ...

![Graph Operators](http://slidetodoc.com/presentation_image_h2/e4768daa606215c79ca071ba2e026b43/image-54.jpg)

Graph Operators

Idea 2: mrTriplets, a low-level routine similar to scatter-gather-apply. Evolved to aggregateNeighbors, aggregateMessages.

    class Graph[V, E] {
      def Graph(vertices: Table[(Id, V)], edges: Table[(Id, E)])

      // Table Views
      def vertices: Table[(Id, V)]
      def edges: Table[(Id, E)]
      def triplets: Table[((Id, V), (Id, V), E)]

      // Transformations
      def reverse: Graph[V, E]
      def subgraph(pV: (Id, V) => Boolean,
                   pE: Edge[V, E] => Boolean): Graph[V, E]
      def mapV(m: (Id, V) => T): Graph[T, E]
      def mapE(m: Edge[V, E] => T): Graph[V, T]

      // Joins
      def joinV(tbl: Table[(Id, T)]): Graph[(V, T), E]
      def joinE(tbl: Table[(Id, T)]): Graph[V, (E, T)]

      // Computation
      def mrTriplets(mapF: (Edge[V, E]) => List[(Id, T)],
                     reduceF: (T, T) => T): Graph[T, E]
    }
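To make the map-then-reduce shape of mrTriplets concrete, here is a rough Python analogue over plain dictionaries and lists. It is a sketch of the idea only and not GraphX code: `mr_triplets` is a hypothetical helper, it returns a dictionary of per-vertex results instead of a new graph, and the data is the small property graph from the "Graph as Tables" slide.

```python
import operator


def mr_triplets(vertices, edges, map_f, reduce_f):
    """Map over (source, destination, edge) triplets, then reduce per vertex id.

    vertices maps id -> property; edges is a list of (src, dst, property).
    map_f returns a list of (target_id, value) messages for one triplet.
    """
    result = {}
    for src, dst, prop in edges:
        triplet = ((src, vertices[src]), (dst, vertices[dst]), prop)
        for target, value in map_f(triplet):
            if target in result:
                result[target] = reduce_f(result[target], value)
            else:
                result[target] = value
    return result


vertices = {
    "rxin": "Stu.",
    "jegonzal": "Pst.Doc",
    "franklin": "Prof.",
    "istoica": "Prof.",
}
edges = [
    ("rxin", "jegonzal", "Friend"),
    ("franklin", "rxin", "Advisor"),
    ("istoica", "franklin", "Coworker"),
    ("franklin", "jegonzal", "PI"),
]
# In-degree: every triplet sends the value 1 to its destination vertex.
in_degree = mr_triplets(vertices, edges, lambda t: [(t[1][0], 1)], operator.add)
```

The map function plays the role of scatter (one message per edge) and the reduce function plays the role of gather (combining the messages that arrive at a vertex).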

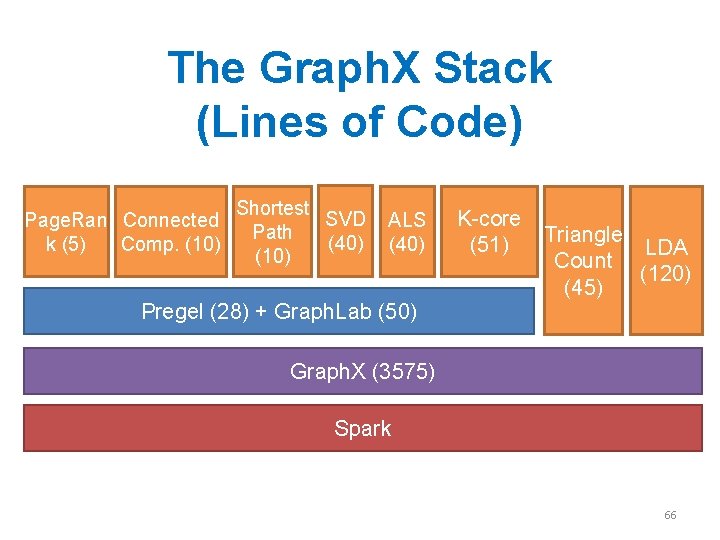

The GraphX Stack (Lines of Code)

- Algorithms: PageRank (5), Connected Comp. (10), Shortest Path (10), SVD (40), ALS (40), K-core (51), Triangle Count (45), LDA (120)
- Pregel (28) + GraphLab (50)
- GraphX (3575)
- Spark

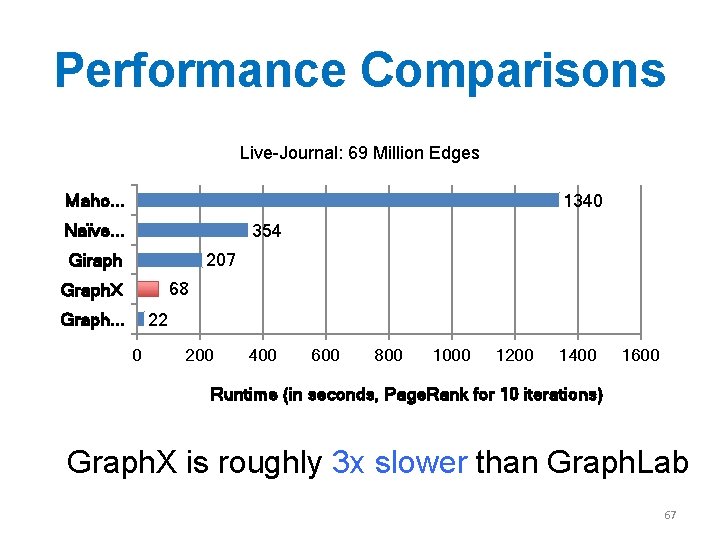

Performance Comparisons

Live-Journal: 69 million edges. Runtime in seconds, PageRank for 10 iterations:

| System | Runtime (s) |
|---|---|
| Maho… | 1340 |
| Naïve… | 354 |
| Giraph | 207 |
| GraphX | 68 |
| Graph… | 22 |

GraphX is roughly 3x slower than GraphLab.

Wrap-up

Summary

- Large immutable data structures on (distributed) disk, processed by sweeping through them and creating new data structures:
  - stream-and-sort, Hadoop, PIG, Hive, …
- Large immutable data structures in distributed memory:
  - Spark: distributed tables
- Large mutable data structures in distributed memory:
  - parameter server: structure is a hashtable
  - Pregel, GraphLab, GraphChi, GraphX: structure is a graph

Summary

- APIs for the various systems vary in detail but have a similar flavor
  - Typical algorithms iteratively update vertex state
  - Changes in state are communicated with messages which need to be aggregated from neighbors
- Biggest wins are
  - on problems where the graph is fixed in each iteration, but vertex data changes
  - on graphs small enough to fit in (distributed) memory

Some things to take away

- Platforms for iterative operations on graphs
  - GraphX: if you want to integrate with Spark
  - GraphChi: if you don't have a cluster
  - GraphLab/Dato: if you don't need free software and performance is crucial
  - Pregel: if you work at Google
  - Giraph, Signal/collect, … ??
- Important differences
  - Intended architecture: shared-memory and threads, distributed cluster memory, graph on disk
  - How graphs are partitioned for clusters
  - If processing is synchronous or asynchronous
