GPU Programming using BU’s Shared Computing Cluster
Research Computing Services, Boston University

Access to the SCC (see the whiteboard)

Access to the SCC GPU nodes

    # copy tutorial materials:
    % cp -r /project/scv/examples/gpu/tutorials .
    # or
    % cp -r /scratch/tutorials .
    % cd tutorials
    # request a node with GPUs:
    % qsh -l gpus=1

Tutorial Materials

    # tutorial materials online:
    scv.bu.edu/examples
    # on the cluster:
    /project/scv/examples

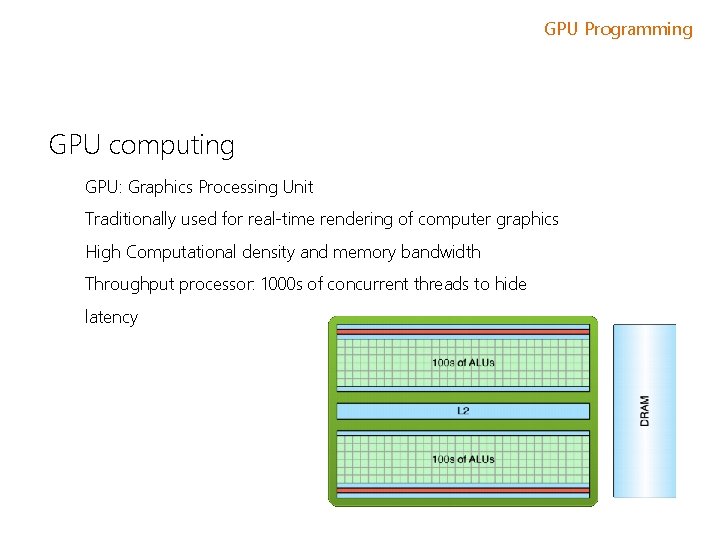

GPU computing

GPU: Graphics Processing Unit
• Traditionally used for real-time rendering of computer graphics
• High computational density and memory bandwidth
• Throughput processor: 1000s of concurrent threads to hide latency

• GPU: graphics processing unit
• Originally designed as a graphics processor
• NVIDIA GeForce 256 (1999): first GPU
  o single-chip processor for mathematically intensive tasks
  o transforms of vertices and polygons
  o lighting, polygon clipping, texture mapping, polygon rendering
• NVIDIA GeForce 3, ATI Radeon 9700: early 2000s
  o Now programmable!

Modern GPUs are present in
• Embedded systems
• Personal computers
• Game consoles
• Mobile phones
• Workstations

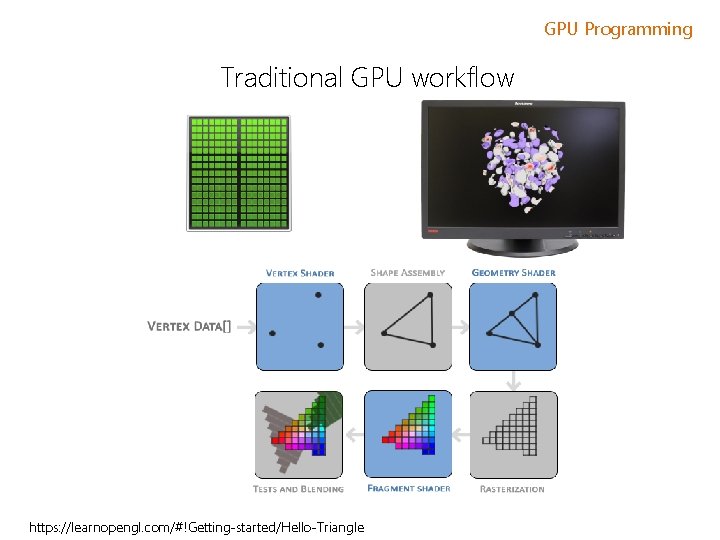

Traditional GPU workflow

https://learnopengl.com/#!Getting-started/Hello-Triangle

GPGPU

• 1999-2000: computer scientists from various fields started using GPUs to accelerate a range of scientific applications.
• GPU programming required the use of graphics APIs such as OpenGL and Cg.
• 2001: LU factorization implemented using GPUs.
• 2002: James Fung (University of Toronto) developed OpenVIDIA.
• NVIDIA invested heavily in the GPGPU movement and offered a number of options and libraries to give C, C++ and Fortran programmers a seamless experience.

GPGPU timeline

• In November 2006 NVIDIA launched CUDA, an API that lets developers write algorithms for execution on GeForce GPUs using the C programming language.
• The Khronos Group defined OpenCL in 2008; it is supported on AMD, NVIDIA and ARM platforms.
• In 2012 NVIDIA presented and demonstrated OpenACC, a set of directives that greatly simplify parallel programming of heterogeneous systems.

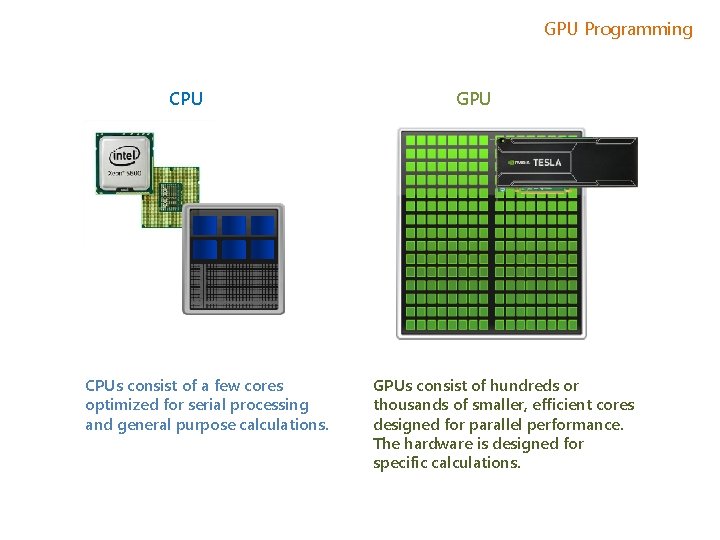

CPUs consist of a few cores optimized for serial processing and general-purpose calculations.

GPUs consist of hundreds or thousands of smaller, efficient cores designed for parallel performance. The hardware is designed for specific calculations.

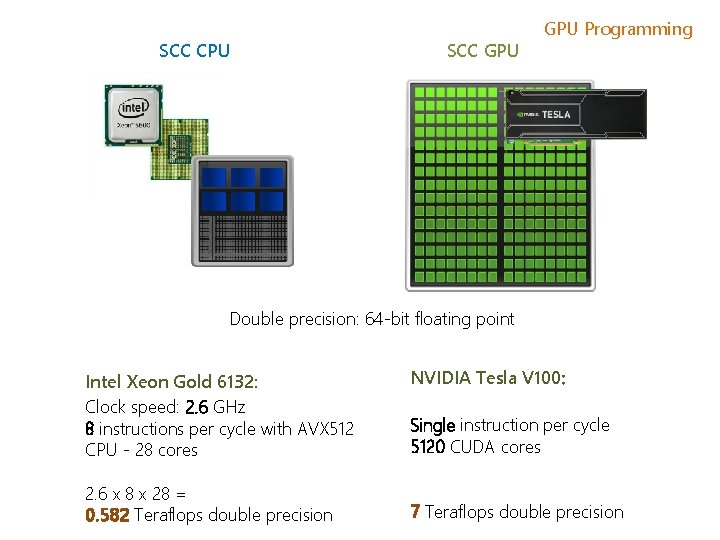

SCC CPU vs. SCC GPU: double precision (64-bit floating point)

SCC CPU: Intel Xeon Gold 6132
• Clock speed: 2.6 GHz
• 8 instructions per cycle with AVX-512
• 28 cores
• 2.6 x 8 x 28 = 582 Gigaflops = 0.582 Teraflops double precision

SCC GPU: NVIDIA Tesla V100
• Single instruction per cycle
• 5120 CUDA cores
• 7 Teraflops double precision
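The CPU figure above is just clock rate times operations per cycle times core count. A minimal C sketch of that arithmetic follows; the function names `peak_gflops` and `gflops_to_tflops` are made up for this illustration and are not part of the tutorial materials.

```c
#include <assert.h>

/* Theoretical peak in GFLOP/s:
 * clock rate (GHz) x floating-point operations per cycle per core x cores.
 * Hypothetical helper, named for this sketch only. */
double peak_gflops(double clock_ghz, int flops_per_cycle, int cores)
{
    assert(clock_ghz > 0.0 && flops_per_cycle > 0 && cores > 0);
    return clock_ghz * flops_per_cycle * cores;
}

/* Convert GFLOP/s to TFLOP/s. */
double gflops_to_tflops(double gflops)
{
    return gflops / 1000.0;
}
```

With the slide's numbers, `peak_gflops(2.6, 8, 28)` comes to about 582.4, i.e. roughly 0.58 Teraflops, so the 7 Teraflops quoted for the V100 is about 12 times higher. Both values are theoretical peaks; real codes reach only a fraction of either.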

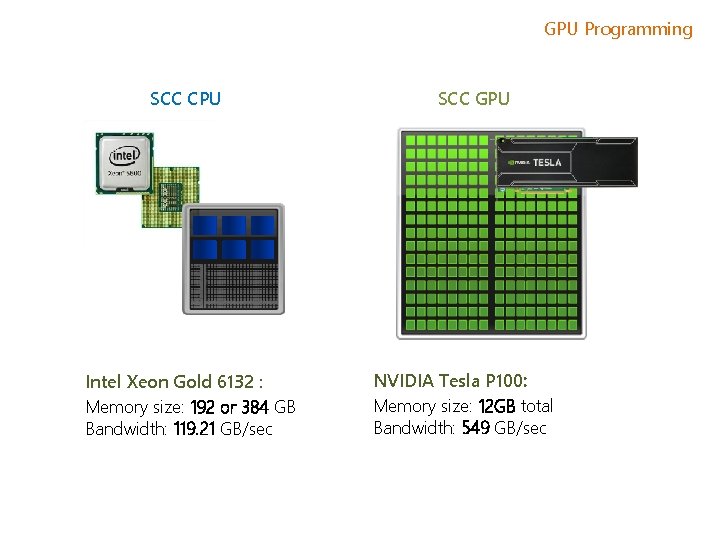

SCC CPU: Intel Xeon Gold 6132
• Memory size: 192 or 384 GB
• Bandwidth: 119.21 GB/sec

SCC GPU: NVIDIA Tesla P100
• Memory size: 12 GB total
• Bandwidth: 549 GB/sec

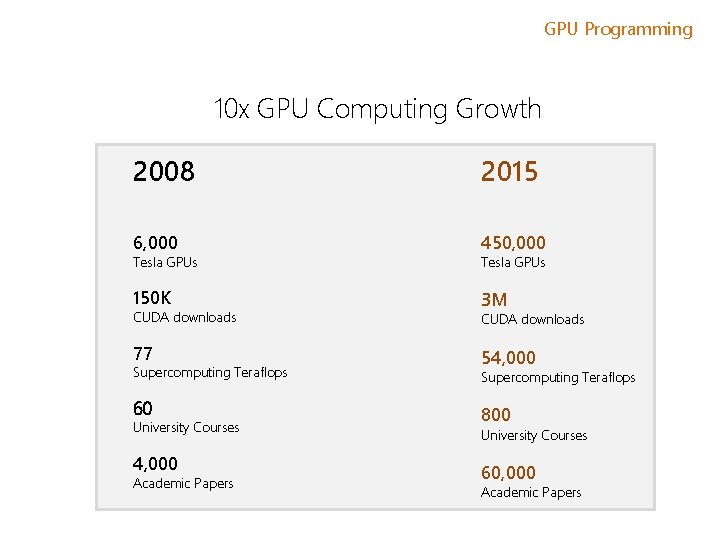

10x GPU Computing Growth

| | 2008 | 2015 |
|---|---|---|
| Tesla GPUs | 6,000 | 450,000 |
| CUDA downloads | 150K | 3M |
| Supercomputing Teraflops | 77 | 54,000 |
| University Courses | 60 | 800 |
| Academic Papers | 4,000 | 60,000 |

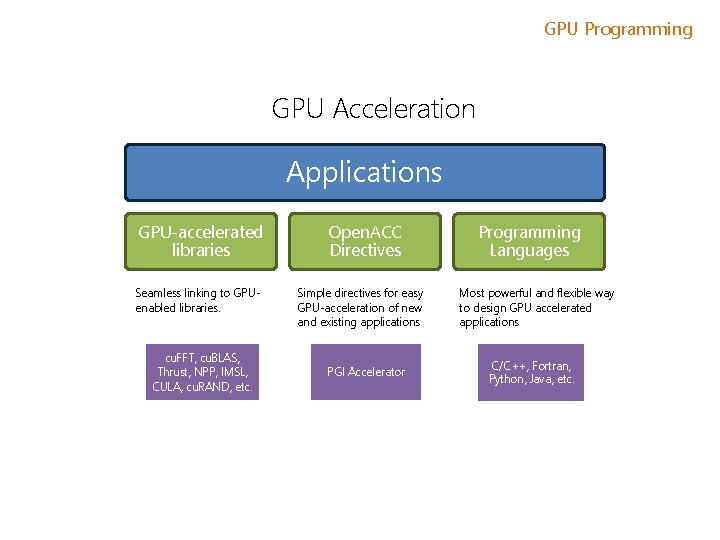

GPU Acceleration

Applications:
• GPU-accelerated libraries: seamless linking to GPU-enabled libraries (cuFFT, cuBLAS, Thrust, NPP, IMSL, CULA, cuRAND, etc.)
• OpenACC directives: simple directives for easy GPU acceleration of new and existing applications (PGI Accelerator)
• Programming languages: the most powerful and flexible way to design GPU-accelerated applications (C/C++, Fortran, Python, Java, etc.)

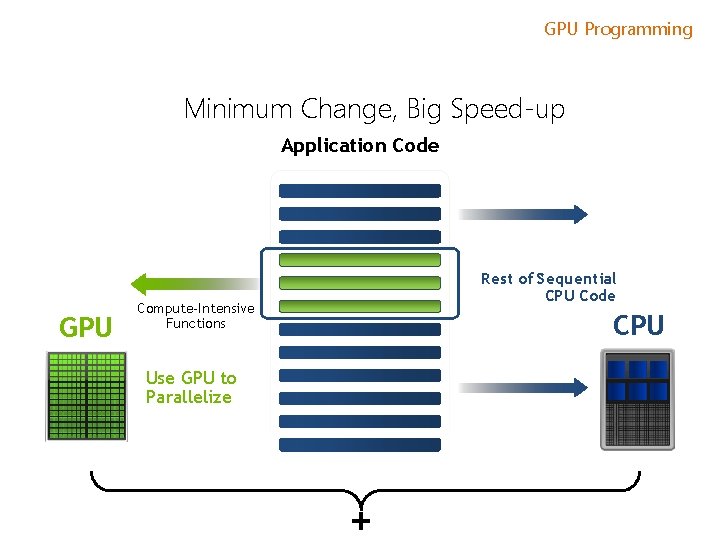

Minimum Change, Big Speed-up

[Figure: the application code is split in two. The compute-intensive functions are parallelized on the GPU; the rest of the sequential code stays on the CPU.]

Will Execution on a GPU Accelerate My Application?

• Computationally intensive: the time spent on computation significantly exceeds the time spent on transferring data to and from GPU memory.
• Massively parallel: the computations can be broken down into hundreds or thousands of independent units of work.
• Well suited to GPU architectures: some algorithms or implementations will not perform well on the GPU.

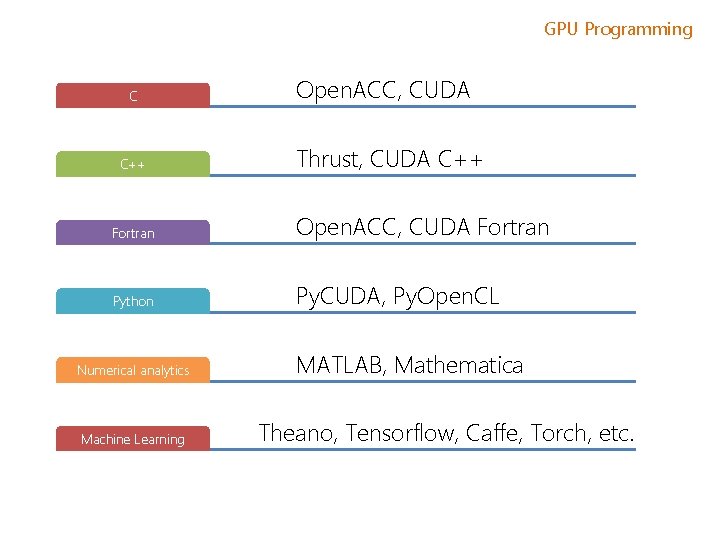

Languages and tools

• C: OpenACC, CUDA
• C++: Thrust, CUDA C++
• Fortran: OpenACC, CUDA Fortran
• Python: PyCUDA, PyOpenCL
• Numerical analytics: MATLAB, Mathematica
• Machine learning: Theano, TensorFlow, Caffe, Torch, etc.

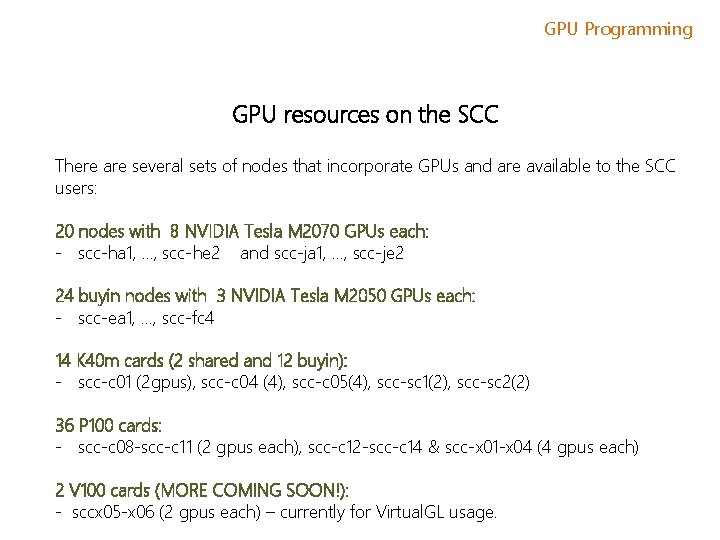

GPU resources on the SCC

There are several sets of nodes that incorporate GPUs and are available to SCC users:
• 20 nodes with 8 NVIDIA Tesla M2070 GPUs each: scc-ha1, …, scc-he2 and scc-ja1, …, scc-je2
• 24 buy-in nodes with 3 NVIDIA Tesla M2050 GPUs each: scc-ea1, …, scc-fc4
• 14 K40m cards (2 shared and 12 buy-in): scc-c01 (2 GPUs), scc-c04 (4), scc-c05 (4), scc-sc1 (2), scc-sc2 (2)
• 36 P100 cards: scc-c08 to scc-c11 (2 GPUs each), scc-c12 to scc-c14 and scc-x01 to scc-x04 (4 GPUs each)
• 2 V100 cards (MORE COMING SOON!): scc-x05 to scc-x06 (2 GPUs each); currently for VirtualGL usage.

Interactive GPU job

Request an xterm with access to 1 GPU (default time limit: 12 hours). The first available GPU will be used:

    > qrsh -V -l gpus=1

Medical Campus users need to add a project name:

    > qrsh -V -P project -l gpus=1

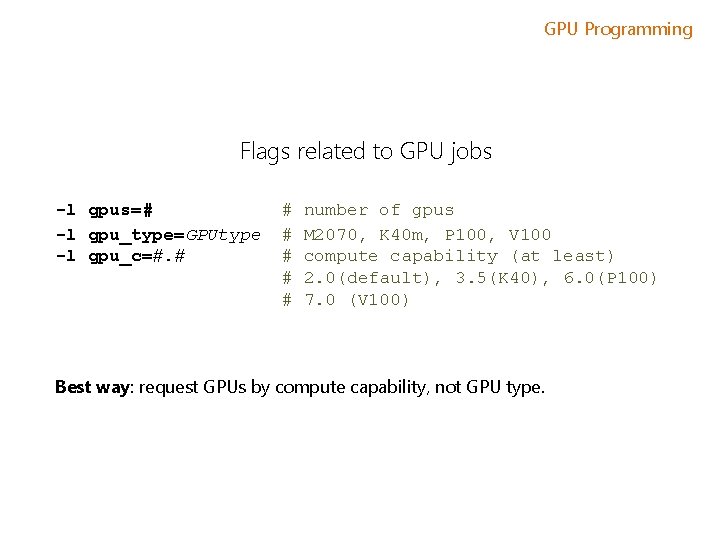

Flags related to GPU jobs

    -l gpus=#             # number of GPUs
    -l gpu_type=GPUtype   # M2070, K40m, P100, V100
    -l gpu_c=#.#          # compute capability (at least):
                          # 2.0 (default), 3.5 (K40), 6.0 (P100), 7.0 (V100)

Best way: request GPUs by compute capability, not GPU type.

NVIDIA Compute Capability

This is how NVIDIA labels the different generations of its GPU architectures. Higher version numbers indicate that more features are available to software developers.

Example: Unified Memory
• Makes memory allocations visible automatically in both the GPU and CPU.
• Spares the programmer from explicitly moving data to and from the GPU.
• Supported in compute capability 3.5 and newer (i.e. K40m, P100, V100).
• CC 6.0 added hardware support in the GPU for this.
• Provides: better performance, easier code development.
• See: https://devblogs.nvidia.com/unified-memory-cuda-beginners/

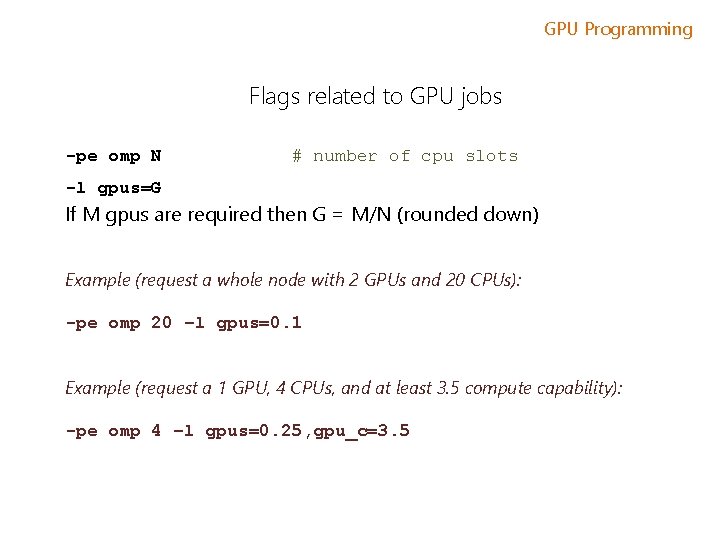

Flags related to GPU jobs

    -pe omp N    # number of CPU slots
    -l gpus=G

If M GPUs are required, then G = M/N (rounded down).

Example (request a whole node with 2 GPUs and 20 CPUs):

    -pe omp 20 -l gpus=0.1

Example (request 1 GPU, 4 CPUs, and at least 3.5 compute capability):

    -pe omp 4 -l gpus=0.25,gpu_c=3.5
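The G = M/N rule is easy to get wrong by hand, so here is a small C sketch of it. The function name `gpus_per_slot_hundredths` is hypothetical, and keeping two decimal places when rounding down is an assumption of this sketch; the slide does not say how many digits the scheduler expects.

```c
#include <assert.h>

/* Value for "-l gpus=G", expressed in hundredths: floor(100 * M / N),
 * where M is the number of GPUs wanted and N is the number of CPU slots
 * given to "-pe omp N". Integer division performs the rounding down.
 * Two decimal places is an assumption made for this illustration. */
int gpus_per_slot_hundredths(int gpus_wanted, int cpu_slots)
{
    assert(gpus_wanted >= 0 && cpu_slots > 0);
    return (100 * gpus_wanted) / cpu_slots;
}
```

For 2 GPUs and 20 slots the result is 10 (gpus=0.1), and for 1 GPU and 4 slots it is 25 (gpus=0.25), matching the two examples. For 1 GPU and 3 slots it would give 33 (gpus=0.33).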

Interactive Batch

Examine GPU hardware and driver:

    > nvidia-smi
        -h    for help
        -q    for long query of all GPUs

The output includes: PCIe Bus ID, Power State/Fans/Temps/Clockspeed

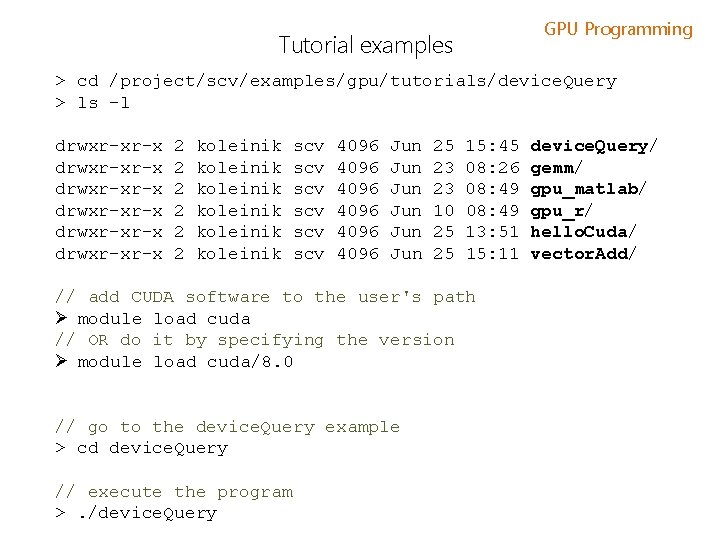

Tutorial examples

    > cd /project/scv/examples/gpu/tutorials
    > ls -l
    (directories listed: deviceQuery/ gemm/ gpu_matlab/ gpu_r/ helloCuda/ vectorAdd/)

    // add CUDA software to the user's path
    > module load cuda
    // OR do it by specifying the version
    > module load cuda/8.0
    // go to the deviceQuery example
    > cd deviceQuery
    // execute the program
    > ./deviceQuery

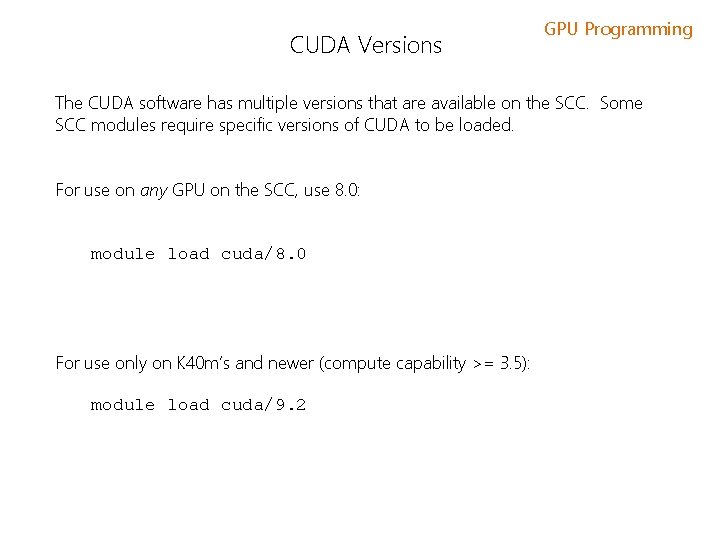

CUDA Versions

Multiple versions of the CUDA software are available on the SCC. Some SCC modules require specific versions of CUDA to be loaded.

For use on any GPU on the SCC, use 8.0:

    module load cuda/8.0

For use only on K40m's and newer (compute capability >= 3.5):

    module load cuda/9.2

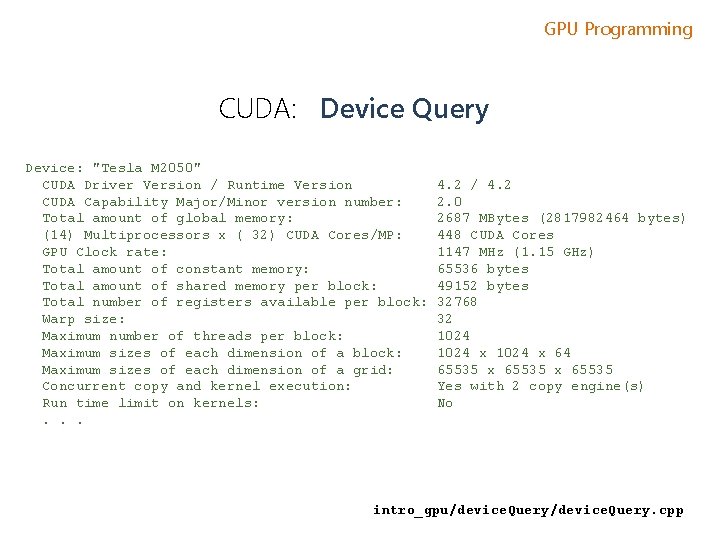

CUDA: Device Query

    Device: "Tesla M2050"
      CUDA Driver Version / Runtime Version          4.2 / 4.2
      CUDA Capability Major/Minor version number:    2.0
      Total amount of global memory:                 2687 MBytes (2817982464 bytes)
      (14) Multiprocessors x ( 32) CUDA Cores/MP:    448 CUDA Cores
      GPU Clock rate:                                1147 MHz (1.15 GHz)
      Total amount of constant memory:               65536 bytes
      Total amount of shared memory per block:       49152 bytes
      Total number of registers available per block: 32768
      Warp size:                                     32
      Maximum number of threads per block:           1024
      Maximum sizes of each dimension of a grid:     65535 x 65535
      Concurrent copy and kernel execution:          Yes with 2 copy engine(s)
      Run time limit on kernels:                     No
      . . .

File: intro_gpu/deviceQuery.cpp

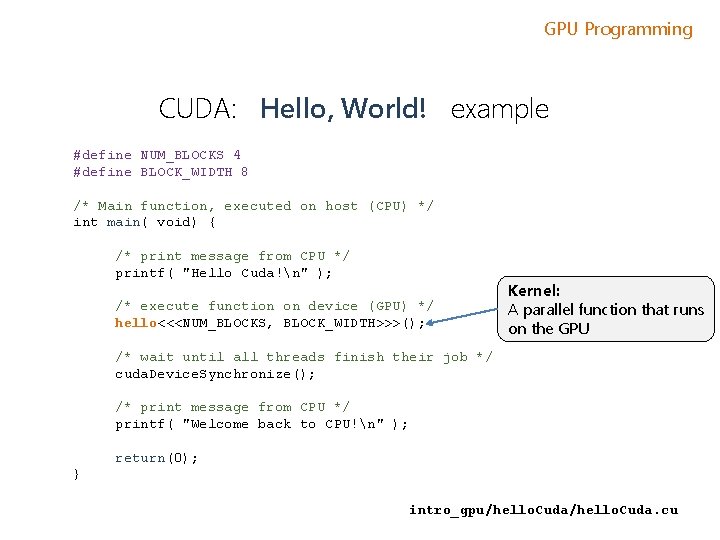

CUDA: Hello, World! example

    #include <stdio.h>

    #define NUM_BLOCKS 4
    #define BLOCK_WIDTH 8

    /* Kernel, defined in the same file */
    __global__ void hello( void );

    /* Main function, executed on host (CPU) */
    int main( void ) {

        /* print message from CPU */
        printf( "Hello Cuda!\n" );

        /* execute function on device (GPU) */
        hello<<<NUM_BLOCKS, BLOCK_WIDTH>>>();

        /* wait until all threads finish their job */
        cudaDeviceSynchronize();

        /* print message from CPU */
        printf( "Welcome back to CPU!\n" );

        return(0);
    }

Kernel: a parallel function that runs on the GPU.

File: intro_gpu/helloCuda.cu

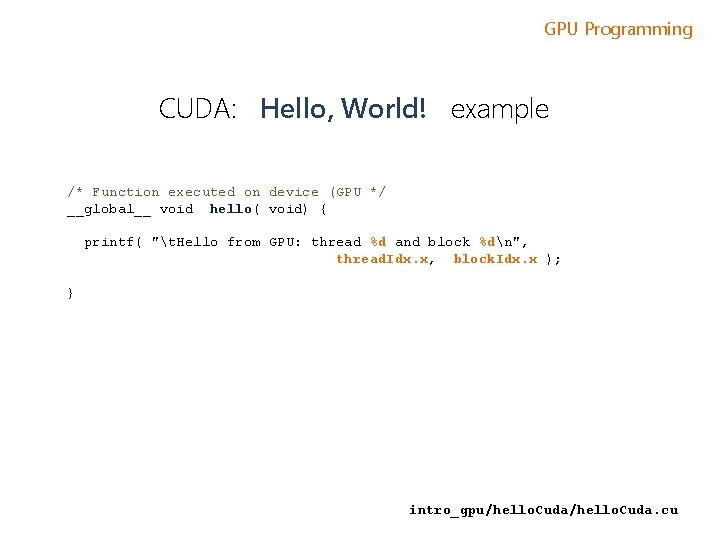

CUDA: Hello, World! example

    /* Function executed on device (GPU) */
    __global__ void hello( void ) {

        printf( "\tHello from GPU: thread %d and block %d\n",
                threadIdx.x, blockIdx.x );
    }

File: intro_gpu/helloCuda.cu

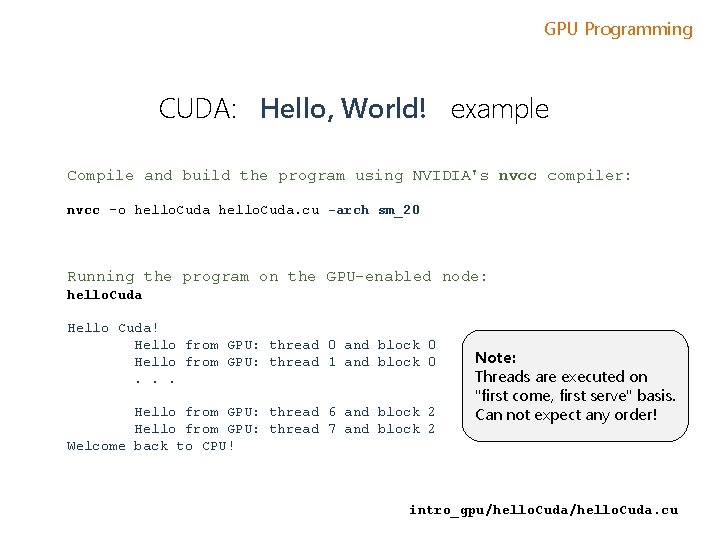

CUDA: Hello, World! example

Compile and build the program using NVIDIA's nvcc compiler:

    nvcc -o helloCuda helloCuda.cu -arch sm_20

Run the program on the GPU-enabled node:

    helloCuda
    Hello Cuda!
        Hello from GPU: thread 0 and block 0
        Hello from GPU: thread 1 and block 0
        . . .
        Hello from GPU: thread 6 and block 2
        Hello from GPU: thread 7 and block 2
    Welcome back to CPU!

Note: threads are executed on a "first come, first served" basis. No particular order can be expected!

File: intro_gpu/helloCuda.cu

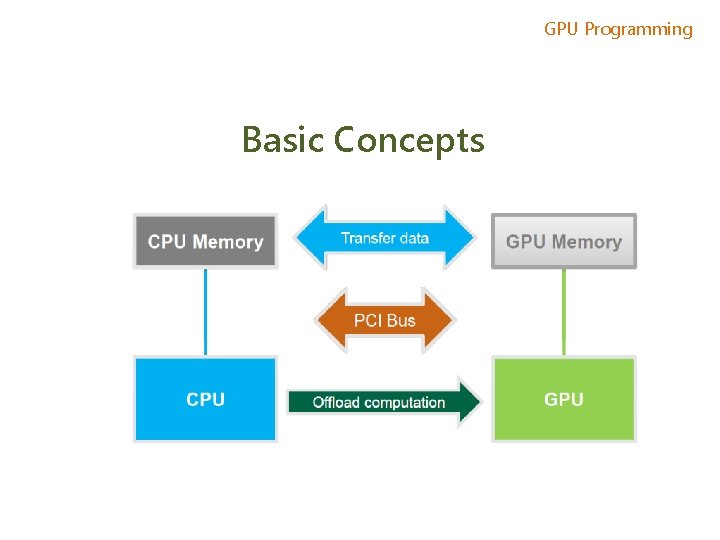

Basic Concepts

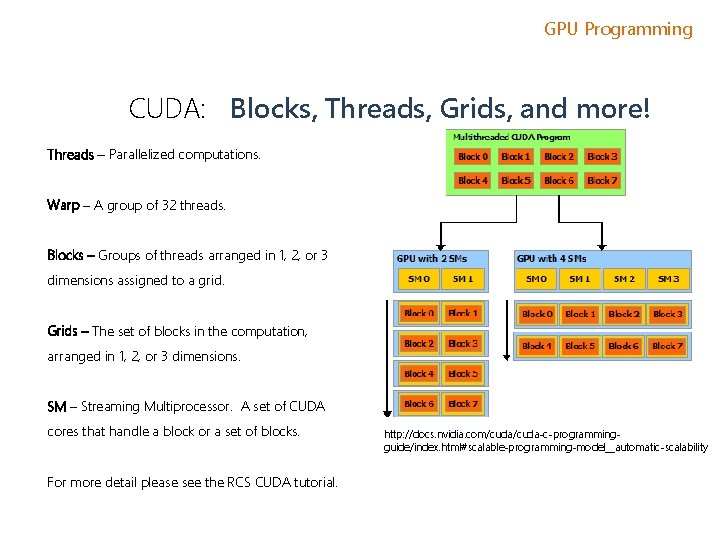

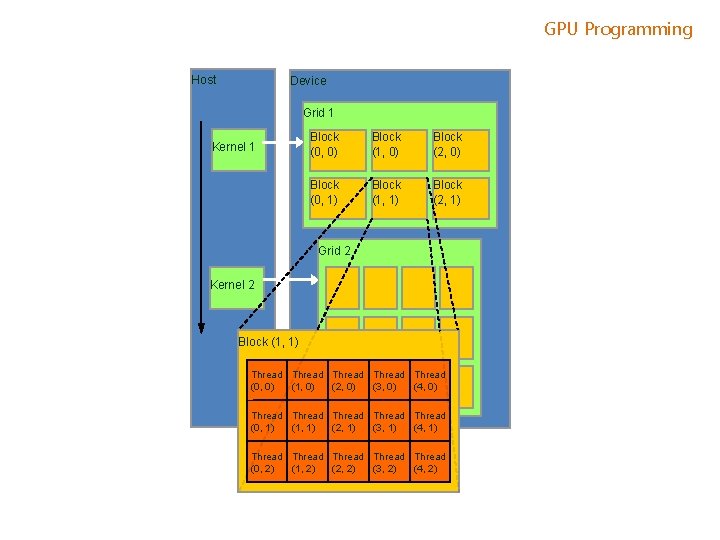

CUDA: Blocks, Threads, Grids, and more!

• Threads: parallelized computations.
• Warp: a group of 32 threads.
• Blocks: groups of threads arranged in 1, 2, or 3 dimensions, assigned to a grid.
• Grids: the set of blocks in the computation, arranged in 1, 2, or 3 dimensions.
• SM: Streaming Multiprocessor. A set of CUDA cores that handle a block or a set of blocks.

For more detail please see the RCS CUDA tutorial.

http://docs.nvidia.com/cuda-c-programming-guide/index.html#scalable-programming-model__automatic-scalability

[Figure: the host launches Kernel 1 on Grid 1 of the device, which holds Blocks (0, 0) to (2, 1), and Kernel 2 on Grid 2. Block (1, 1) is expanded to show its threads, Thread (0, 0) to Thread (4, 2).]

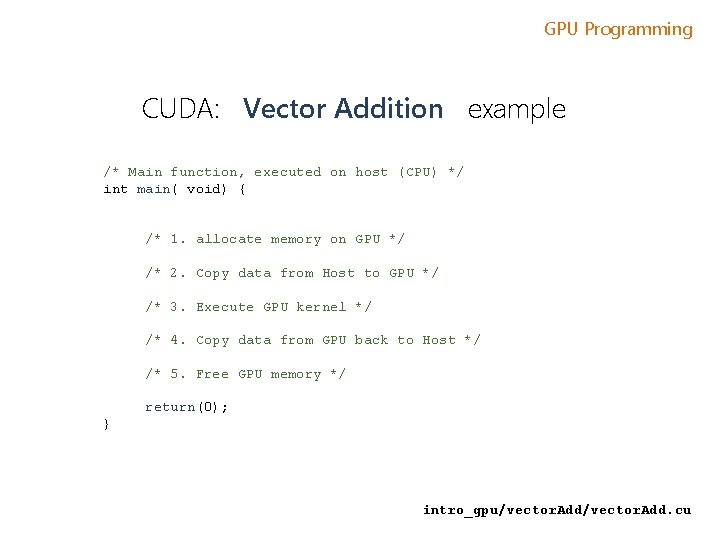

CUDA: Vector Addition example

    /* Main function, executed on host (CPU) */
    int main( void ) {

        /* 1. allocate memory on GPU */

        /* 2. Copy data from Host to GPU */

        /* 3. Execute GPU kernel */

        /* 4. Copy data from GPU back to Host */

        /* 5. Free GPU memory */

        return(0);
    }

File: intro_gpu/vectorAdd.cu

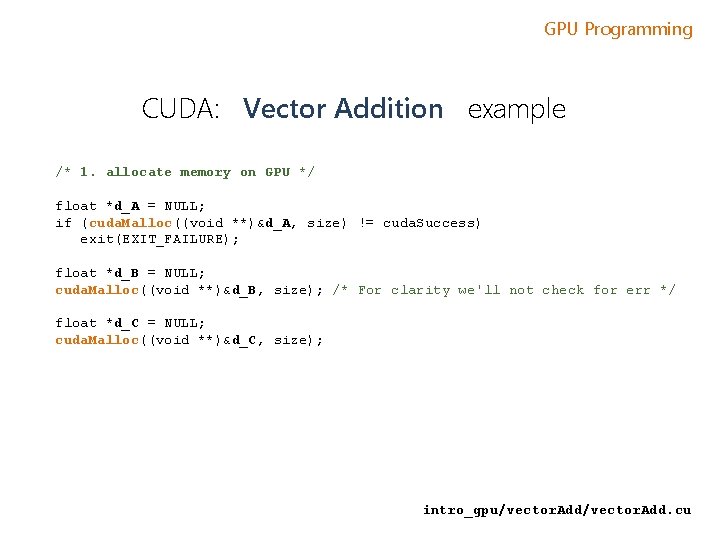

CUDA: Vector Addition example

    /* 1. allocate memory on GPU */

    float *d_A = NULL;
    if (cudaMalloc((void **)&d_A, size) != cudaSuccess)
        exit(EXIT_FAILURE);

    float *d_B = NULL;
    cudaMalloc((void **)&d_B, size); /* For clarity we'll not check for err */

    float *d_C = NULL;
    cudaMalloc((void **)&d_C, size);

File: intro_gpu/vectorAdd.cu

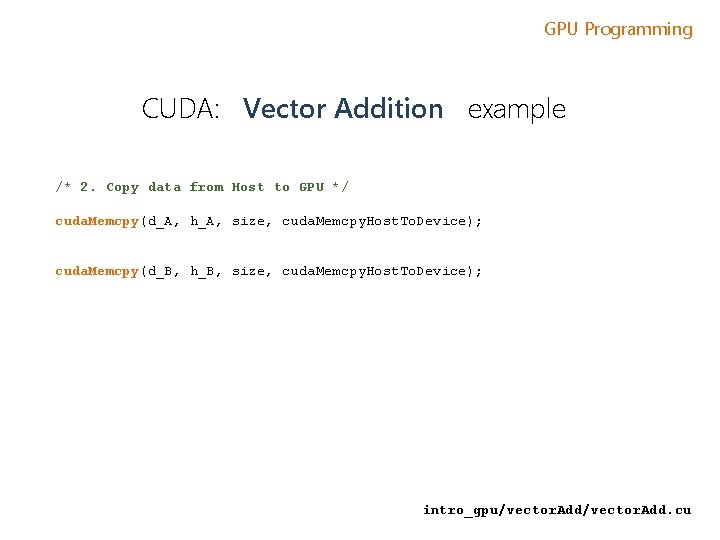

CUDA: Vector Addition example

    /* 2. Copy data from Host to GPU */

    cudaMemcpy(d_A, h_A, size, cudaMemcpyHostToDevice);
    cudaMemcpy(d_B, h_B, size, cudaMemcpyHostToDevice);

File: intro_gpu/vectorAdd.cu

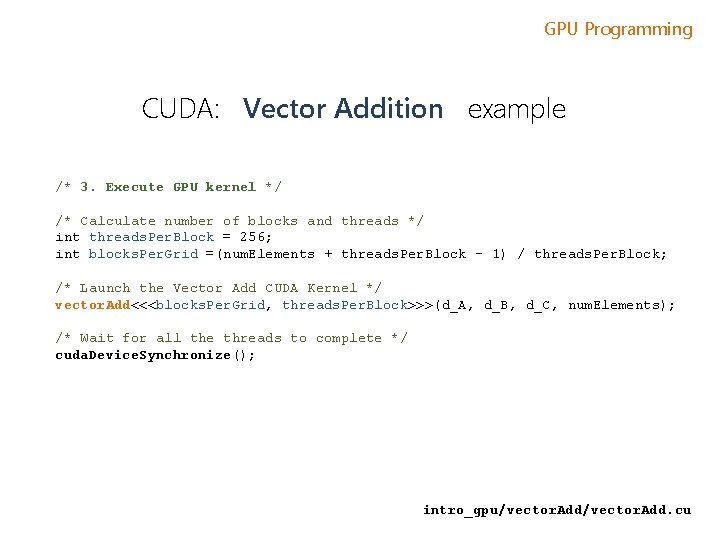

CUDA: Vector Addition example

    /* 3. Execute GPU kernel */

    /* Calculate number of blocks and threads */
    int threadsPerBlock = 256;
    int blocksPerGrid = (numElements + threadsPerBlock - 1) / threadsPerBlock;

    /* Launch the Vector Add CUDA Kernel */
    vectorAdd<<<blocksPerGrid, threadsPerBlock>>>(d_A, d_B, d_C, numElements);

    /* Wait for all the threads to complete */
    cudaDeviceSynchronize();

File: intro_gpu/vectorAdd.cu
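The block count above is an integer ceiling division: enough blocks to cover every element, even when the array size is not a multiple of the block size. A plain C sketch of that calculation, runnable without a GPU; the helper names `blocks_per_grid` and `idle_threads` are invented for this illustration.

```c
#include <assert.h>

/* Smallest number of blocks such that
 * blocks * threads_per_block >= num_elements (ceiling division). */
int blocks_per_grid(int num_elements, int threads_per_block)
{
    assert(num_elements >= 0 && threads_per_block > 0);
    return (num_elements + threads_per_block - 1) / threads_per_block;
}

/* Threads launched past the end of the array. In the kernel, the
 * "if (i < numElements)" guard keeps them from touching memory. */
int idle_threads(int num_elements, int threads_per_block)
{
    return blocks_per_grid(num_elements, threads_per_block) * threads_per_block
           - num_elements;
}
```

For the 50000-element run of this example with 256 threads per block, `blocks_per_grid` returns 196 and `idle_threads` returns 176, which is why 50176 threads are launched for 50000 elements.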

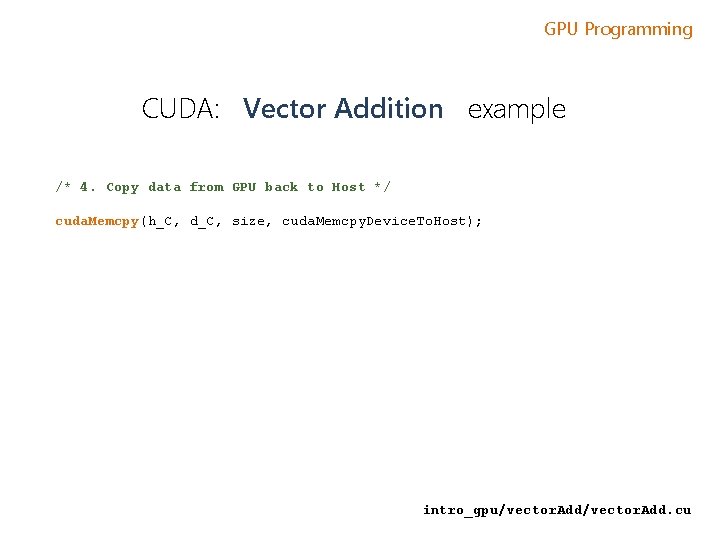

CUDA: Vector Addition example

    /* 4. Copy data from GPU back to Host */

    cudaMemcpy(h_C, d_C, size, cudaMemcpyDeviceToHost);

File: intro_gpu/vectorAdd.cu

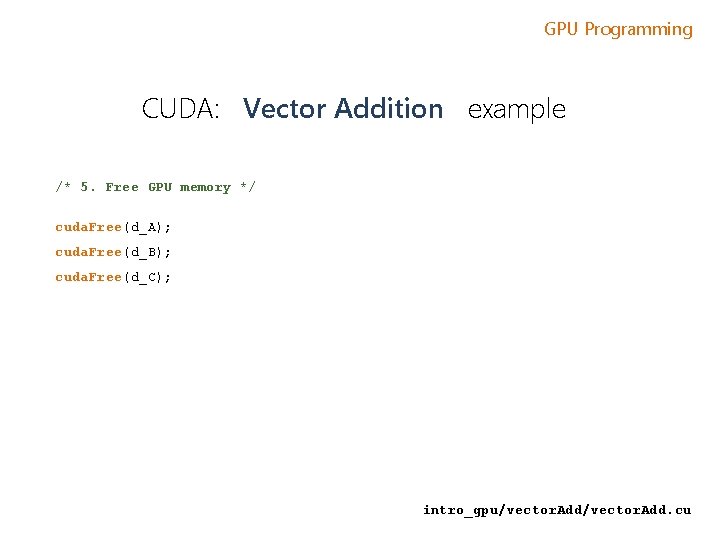

CUDA: Vector Addition example

    /* 5. Free GPU memory */

    cudaFree(d_A);
    cudaFree(d_B);
    cudaFree(d_C);

File: intro_gpu/vectorAdd.cu

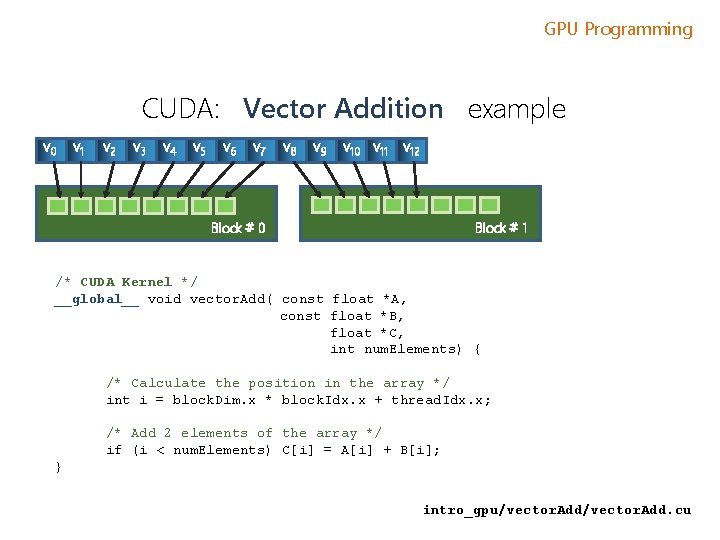

CUDA: Vector Addition example

[Figure: a vector with elements v0 to v12, split between Block #0 and Block #1.]

    /* CUDA Kernel */
    __global__ void vectorAdd( const float *A,
                               const float *B,
                               float *C,
                               int numElements) {

        /* Calculate the position in the array */
        int i = blockDim.x * blockIdx.x + threadIdx.x;

        /* Add 2 elements of the array */
        if (i < numElements) C[i] = A[i] + B[i];
    }

File: intro_gpu/vectorAdd.cu
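To see how the kernel's index formula covers the array, it can help to emulate the launch on the CPU. In the sketch below two nested loops stand in for `blockIdx.x` and `threadIdx.x`; on the GPU these iterations run in parallel and in no fixed order. The function name `vector_add_emulated` and the explicit `block_dim` parameter are assumptions of this illustration, not part of vectorAdd.cu.

```c
#include <assert.h>

/* Host-side emulation of the vectorAdd kernel launch. */
void vector_add_emulated(const float *A, const float *B, float *C,
                         int num_elements, int block_dim)
{
    assert(num_elements >= 0 && block_dim > 0);

    /* Same ceiling division the host code uses for blocksPerGrid. */
    int grid_dim = (num_elements + block_dim - 1) / block_dim;

    for (int block_idx = 0; block_idx < grid_dim; ++block_idx) {
        for (int thread_idx = 0; thread_idx < block_dim; ++thread_idx) {
            /* Same index formula as the kernel. */
            int i = block_dim * block_idx + thread_idx;

            /* Guard: the last block may extend past the end of the array. */
            if (i < num_elements)
                C[i] = A[i] + B[i];
        }
    }
}
```

With 13 elements and a block size of 8 (a size chosen only for this illustration), block 0 handles elements 0 to 7, block 1 handles elements 8 to 12, and the last three threads of block 1 do nothing.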

CUDA: Vector Addition example

    /* To build this example, execute Makefile */
    > make

    /* To run, type vectorAdd: */
    > vectorAdd
    [Vector addition of 50000 elements]
    Copy input data from the host memory to the CUDA device
    CUDA kernel launch with 196 blocks of 256 threads *
    Copy output data from the CUDA device to the host memory
    Done

* Note: 196 x 256 = 50176 total threads

File: intro_gpu/vectorAdd.cu

GPU Accelerated Libraries

• NVIDIA cuBLAS
• NVIDIA cuSPARSE
• NVIDIA cuRAND
• Sparse Linear Algebra
• NVIDIA NPP
• C++ STL Features for CUDA
• NVIDIA cuFFT

GPU Accelerated Libraries: Thrust

• A powerful library of parallel algorithms and data structures.
• Provides a flexible, high-level interface for GPU programming.
• For example, the thrust::sort algorithm delivers 5x to 100x faster sorting performance than STL and TBB.

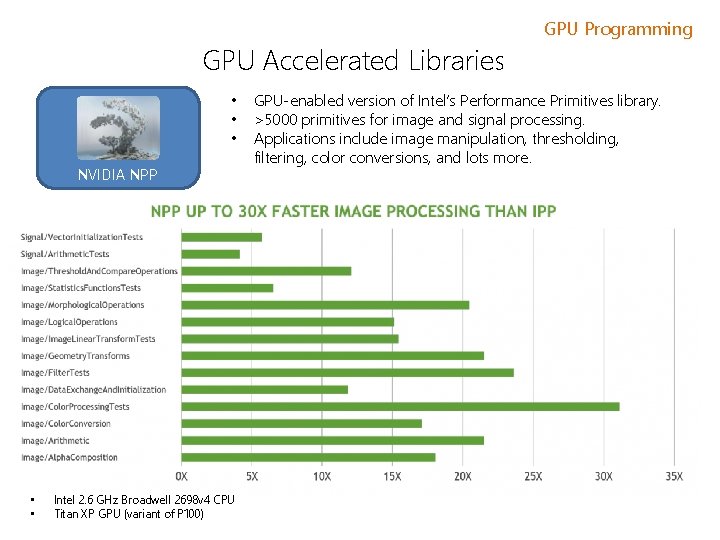

GPU Accelerated Libraries: NVIDIA NPP

• GPU-enabled version of Intel’s Performance Primitives library.
• >5000 primitives for image and signal processing.
• Applications include image manipulation, thresholding, filtering, color conversions, and lots more.

Hardware in the comparison figure:
• Intel 2.6 GHz Broadwell 2698 v4 CPU
• Titan XP GPU (variant of P100)

GPU Accelerated Libraries: cuBLAS

• A GPU-accelerated version of the complete standard BLAS library.
• 6x to 17x faster performance than the latest MKL BLAS.
• Complete support for all 152 standard BLAS routines.
• Single, double, complex, and double complex data types.
• Fortran binding.

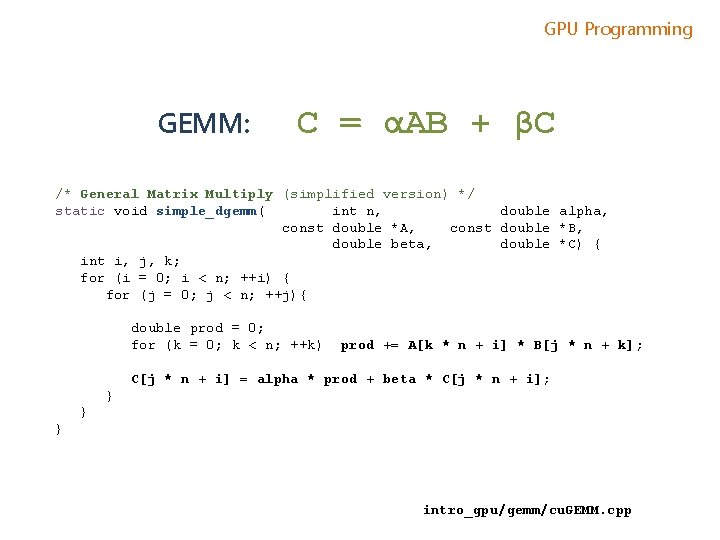

GEMM: C = αAB + βC

    /* General Matrix Multiply (simplified version) */
    static void simple_dgemm( int n, double alpha, const double *A,
                              const double *B, double beta, double *C) {

        int i, j, k;
        for (i = 0; i < n; ++i) {
            for (j = 0; j < n; ++j) {

                double prod = 0;
                for (k = 0; k < n; ++k)
                    prod += A[k * n + i] * B[j * n + k];

                C[j * n + i] = alpha * prod + beta * C[j * n + i];
            }
        }
    }

File: intro_gpu/gemm/cuGEMM.cpp
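The indexing in simple_dgemm assumes column-major storage, as BLAS does: element (row i, column j) of an n x n matrix sits at index j * n + i. A small worked case makes this concrete. The sketch below repeats the loop nest from the slide and adds a helper, `dgemm_2x2_demo`, which is hypothetical and exists only for this illustration.

```c
#include <assert.h>

/* Same loop nest as simple_dgemm on the slide (column-major storage). */
void simple_dgemm(int n, double alpha, const double *A, const double *B,
                  double beta, double *C)
{
    assert(n > 0);
    for (int i = 0; i < n; ++i) {
        for (int j = 0; j < n; ++j) {
            double prod = 0.0;
            for (int k = 0; k < n; ++k)
                prod += A[k * n + i] * B[j * n + k];
            C[j * n + i] = alpha * prod + beta * C[j * n + i];
        }
    }
}

/* Hypothetical helper: computes C = 2*A*I + 3*C for a fixed 2 x 2 case,
 * with C starting as all ones. */
void dgemm_2x2_demo(double C[4])
{
    const double A[4] = {1.0, 3.0, 2.0, 4.0}; /* [[1, 2], [3, 4]], by column */
    const double B[4] = {1.0, 0.0, 0.0, 1.0}; /* identity */
    C[0] = C[1] = C[2] = C[3] = 1.0;
    simple_dgemm(2, 2.0, A, B, 3.0, C);
}
```

Since B is the identity, the result is 2A + 3, i.e. [[5, 7], [9, 11]], stored by column as {5, 9, 7, 11}.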

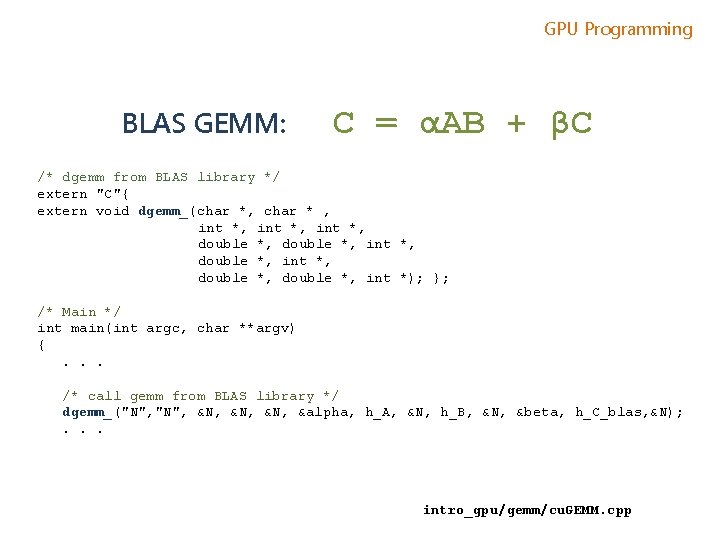

BLAS GEMM: C = αAB + βC

    /* dgemm from BLAS library (Fortran interface: arguments passed by reference) */
    extern "C" {
        extern void dgemm_(const char *, const char *, int *, int *, int *,
                           double *, double *, int *, double *, int *,
                           double *, double *, int *);
    };

    /* Main */
    int main(int argc, char **argv) {
        . . .
        /* call gemm from BLAS library */
        dgemm_("N", "N", &N, &N, &N, &alpha, h_A, &N, h_B, &N, &beta, h_C_blas, &N);
        . . .

File: intro_gpu/gemm/cuGEMM.cpp

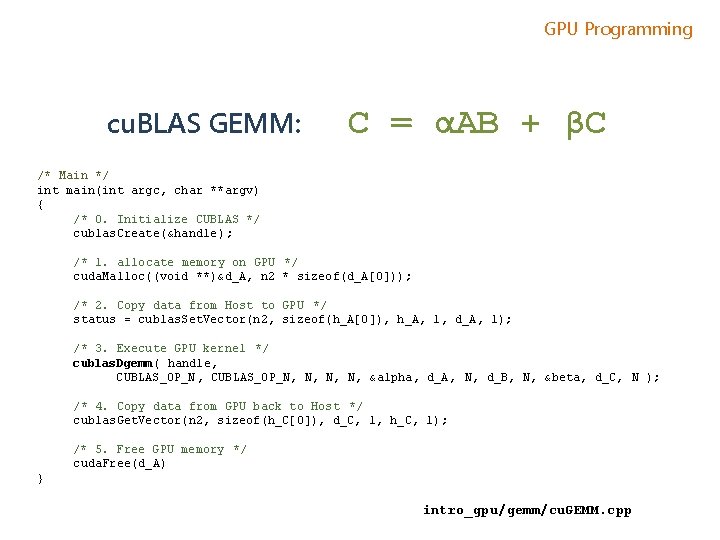

cuBLAS GEMM: C = αAB + βC

    /* Main */
    int main(int argc, char **argv) {

        /* 0. Initialize CUBLAS */
        cublasCreate(&handle);

        /* 1. allocate memory on GPU */
        cudaMalloc((void **)&d_A, n2 * sizeof(d_A[0]));

        /* 2. Copy data from Host to GPU */
        status = cublasSetVector(n2, sizeof(h_A[0]), h_A, 1, d_A, 1);

        /* 3. Execute GPU kernel */
        cublasDgemm( handle, CUBLAS_OP_N, CUBLAS_OP_N, N, N, N,
                     &alpha, d_A, N, d_B, N, &beta, d_C, N );

        /* 4. Copy data from GPU back to Host */
        cublasGetVector(n2, sizeof(h_C[0]), d_C, 1, h_C, 1);

        /* 5. Free GPU memory */
        cudaFree(d_A);
    }

File: intro_gpu/gemm/cuGEMM.cpp

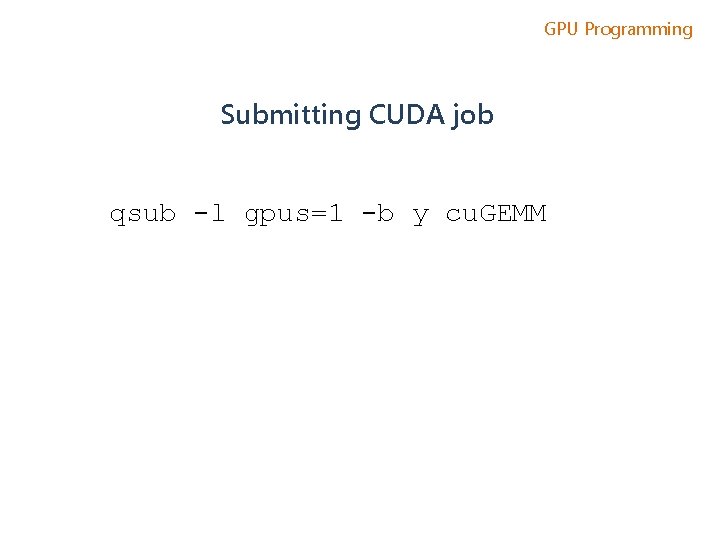

Submitting CUDA job

    qsub -l gpus=1 -b y cuGEMM

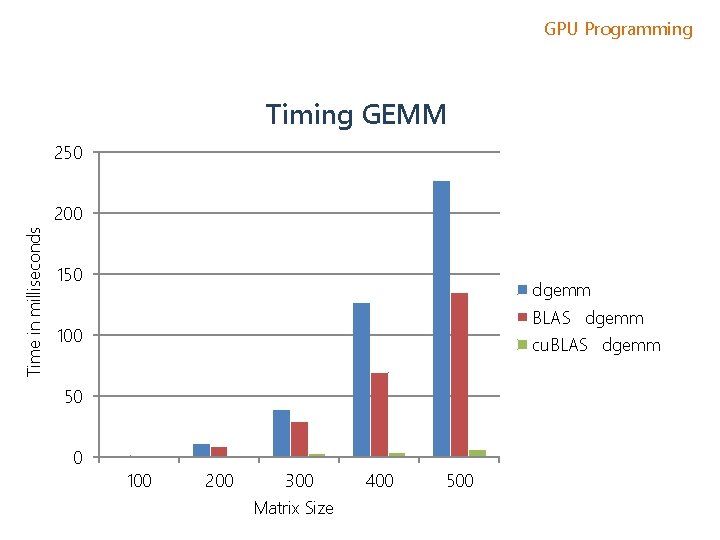

Timing GEMM

[Figure: time in milliseconds (0 to 250) versus matrix size (100 to 500) for three implementations: dgemm, BLAS dgemm, and cuBLAS dgemm.]

Development Environment

• Nsight IDE: Linux, Mac & Windows. GPU debugging and profiling. Based on the Eclipse IDE system.
• CUDA-GDB debugger (NVIDIA Visual Profiler)

CUDA Resources

• RCS CUDA Tutorial
• CUDA and CUDA libraries examples: http://docs.nvidia.com/cuda-samples/
• NVIDIA's CUDA Resources: https://developer.nvidia.com/cuda-education
• Online course on Udacity: https://www.udacity.com/course/cs344
• CUDA C/C++ & Fortran: http://developer.nvidia.com/cuda-toolkit
• PyCUDA (Python): http://mathema.tician.de/software/pycuda

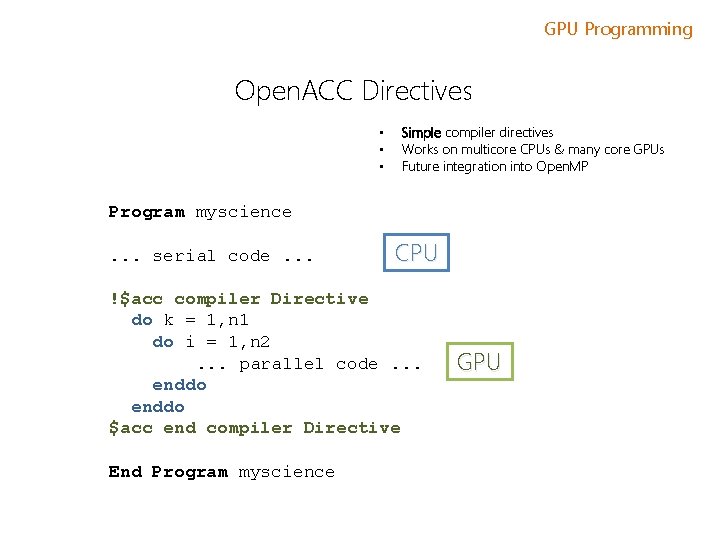

OpenACC Directives

• Simple compiler directives
• Works on multicore CPUs & many-core GPUs
• Future integration into OpenMP

    Program myscience
      ... serial code ...            ! CPU
    !$acc compiler Directive
      do k = 1, n1
        do i = 1, n2
          ... parallel code ...      ! GPU
        enddo
      enddo
    !$acc end compiler Directive
    End Program myscience

OpenACC Directives

- Fortran

    !$acc directive [clause [[,] clause] …]

  Often paired with a matching end directive surrounding a structured code block:

    !$acc end directive

- C

    #pragma acc directive [clause [[,] clause] …]

  Often followed by a structured code block.

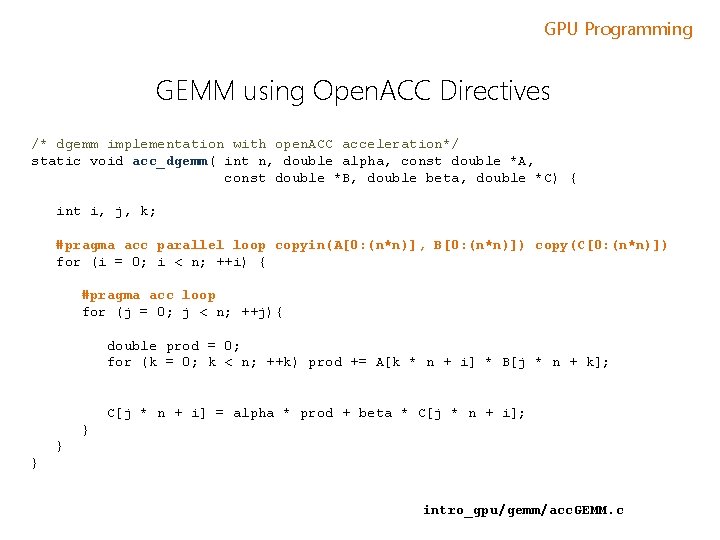

GEMM using OpenACC Directives

    /* dgemm implementation with OpenACC acceleration */
    static void acc_dgemm( int n, double alpha, const double *A,
                           const double *B, double beta, double *C) {

        int i, j, k;

        #pragma acc parallel loop copyin(A[0:(n*n)], B[0:(n*n)]) copy(C[0:(n*n)])
        for (i = 0; i < n; ++i) {

            #pragma acc loop
            for (j = 0; j < n; ++j) {

                double prod = 0;
                for (k = 0; k < n; ++k)
                    prod += A[k * n + i] * B[j * n + k];

                C[j * n + i] = alpha * prod + beta * C[j * n + i];
            }
        }
    }

File: intro_gpu/gemm/accGEMM.c

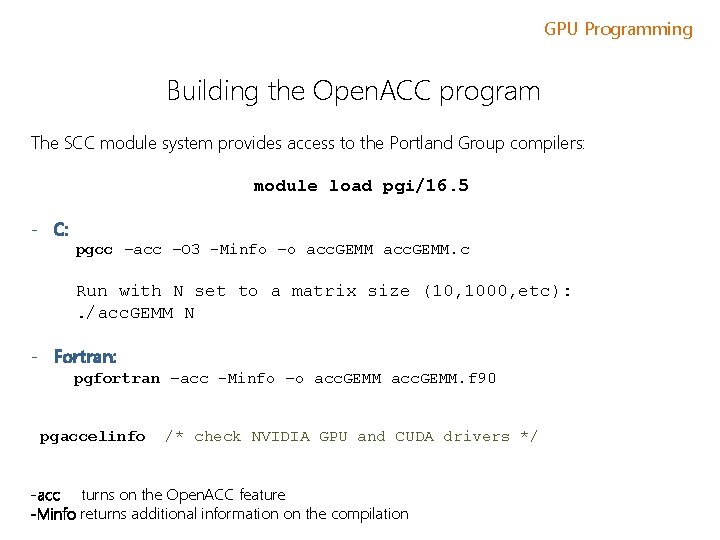

Building the OpenACC program

The SCC module system provides access to the Portland Group compilers:

    module load pgi/16.5

- C:

    pgcc -acc -O3 -Minfo -o accGEMM accGEMM.c

  Run with N set to a matrix size (10, 1000, etc.):

    ./accGEMM N

- Fortran:

    pgfortran -acc -Minfo -o accGEMM accGEMM.f90

Check the NVIDIA GPU and CUDA drivers:

    pgaccelinfo

-acc turns on the OpenACC feature.
-Minfo returns additional information on the compilation.

PGI compiler output:

    acc_dgemm:
         34, Generating present_or_copyin(B[0:n*n])
             Generating present_or_copyin(A[0:n*n])
             Generating present_or_copy(C[0:n*n])
             Accelerator kernel generated
             35, #pragma acc loop gang /* blockIdx.x */
             41, #pragma acc loop vector(256) /* threadIdx.x */
         34, Generating NVIDIA code
             Generating compute capability 1.3 binary
             Generating compute capability 2.0 binary
             Generating compute capability 3.0 binary
         38, Loop is parallelizable
         41, Loop is parallelizable

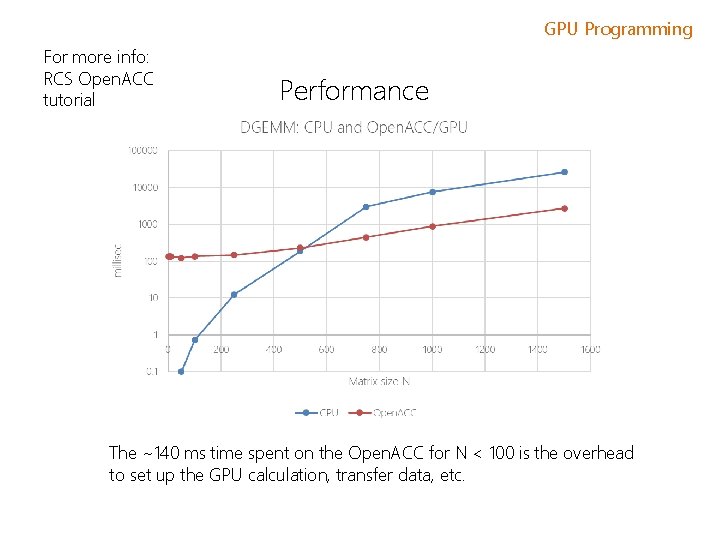

Performance

The ~140 ms spent in the OpenACC version for N < 100 is the overhead of setting up the GPU calculation, transferring data, etc.

For more info: RCS OpenACC tutorial

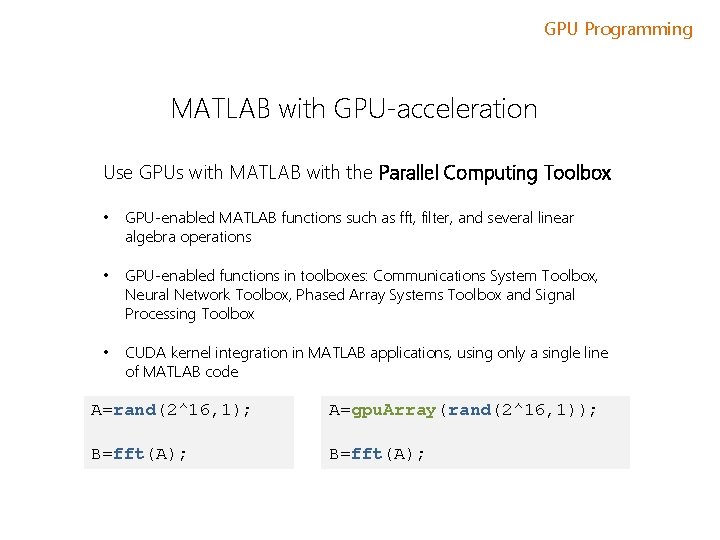

MATLAB with GPU-acceleration

Use GPUs with MATLAB through the Parallel Computing Toolbox:
• GPU-enabled MATLAB functions such as fft, filter, and several linear algebra operations
• GPU-enabled functions in toolboxes: Communications System Toolbox, Neural Network Toolbox, Phased Array Systems Toolbox and Signal Processing Toolbox
• CUDA kernel integration in MATLAB applications, using only a single line of MATLAB code

CPU version:

    A=rand(2^16,1);
    B=fft(A);

GPU version:

    A=gpuArray(rand(2^16,1));
    B=fft(A);

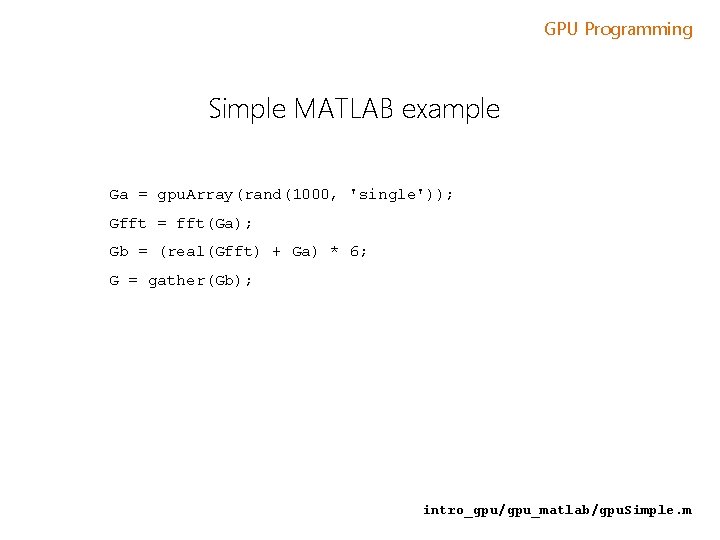

Simple MATLAB example

    Ga = gpuArray(rand(1000, 'single'));
    Gfft = fft(Ga);
    Gb = (real(Gfft) + Ga) * 6;
    G = gather(Gb);

File: intro_gpu/gpu_matlab/gpuSimple.m

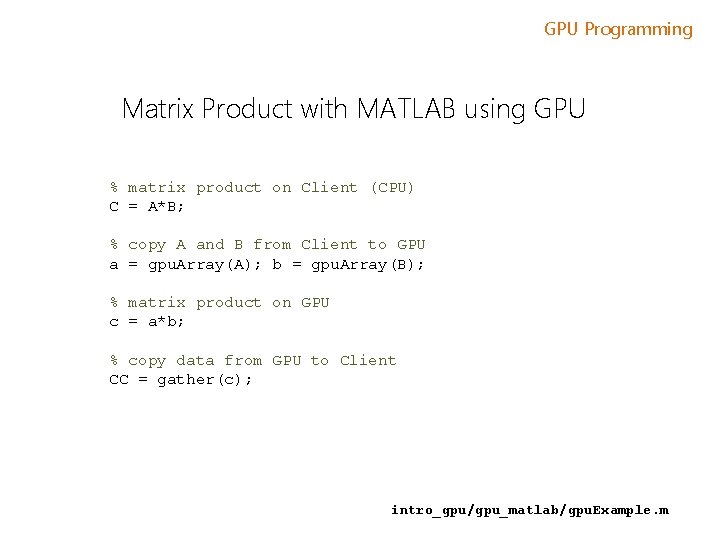

Matrix Product with MATLAB using GPU

    % matrix product on Client (CPU)
    C = A*B;

    % copy A and B from Client to GPU
    a = gpuArray(A);
    b = gpuArray(B);

    % matrix product on GPU
    c = a*b;

    % copy data from GPU to Client
    CC = gather(c);

File: intro_gpu/gpu_matlab/gpuExample.m

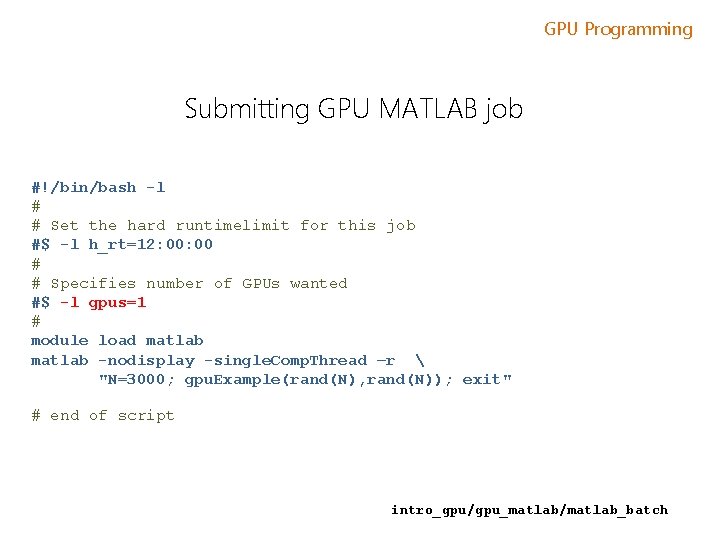

Submitting GPU MATLAB job

    #!/bin/bash -l
    #
    # Set the hard runtime limit for this job
    #$ -l h_rt=12:00
    #
    # Specifies number of GPUs wanted
    #$ -l gpus=1
    #
    module load matlab
    matlab -nodisplay -singleCompThread -r "N=3000; gpuExample(rand(N), rand(N)); exit"
    # end of script

File: intro_gpu/gpu_matlab/matlab_batch

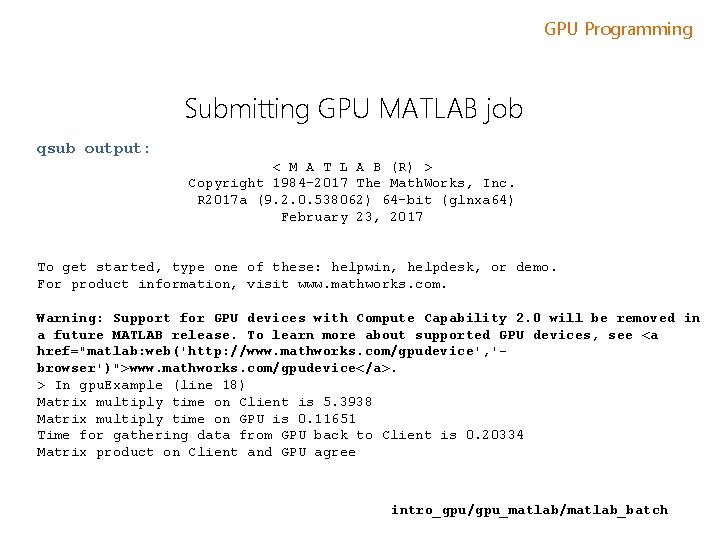

Submitting GPU MATLAB job

qsub output:

                                < M A T L A B (R) >
                      Copyright 1984-2017 The MathWorks, Inc.
                       R2017a (9.2.0.538062) 64-bit (glnxa64)
                                 February 23, 2017

    To get started, type one of these: helpwin, helpdesk, or demo.
    For product information, visit www.mathworks.com.

    Warning: Support for GPU devices with Compute Capability 2.0 will be removed in a future MATLAB release. To learn more about supported GPU devices, see <a href="matlab:web('http://www.mathworks.com/gpudevice','browser')">www.mathworks.com/gpudevice</a>.
    > In gpuExample (line 18)
    Matrix multiply time on Client is 5.3938
    Matrix multiply time on GPU is 0.11651
    Time for gathering data from GPU back to Client is 0.20334
    Matrix product on Client and GPU agree

File: intro_gpu/gpu_matlab/matlab_batch

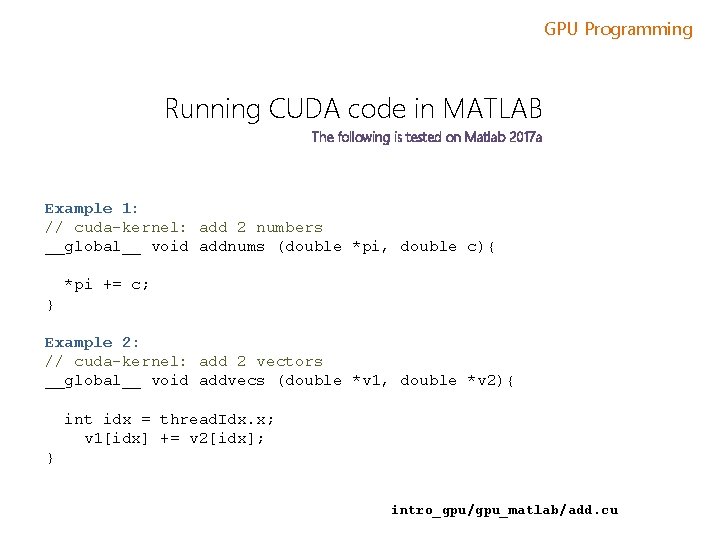

Running CUDA code in MATLAB

The following is tested on MATLAB 2017a.

Example 1:

    // cuda-kernel: add 2 numbers
    __global__ void addnums (double *pi, double c){
        *pi += c;
    }

Example 2:

    // cuda-kernel: add 2 vectors
    __global__ void addvecs (double *v1, double *v2){
        int idx = threadIdx.x;
        v1[idx] += v2[idx];
    }

File: intro_gpu/gpu_matlab/add.cu

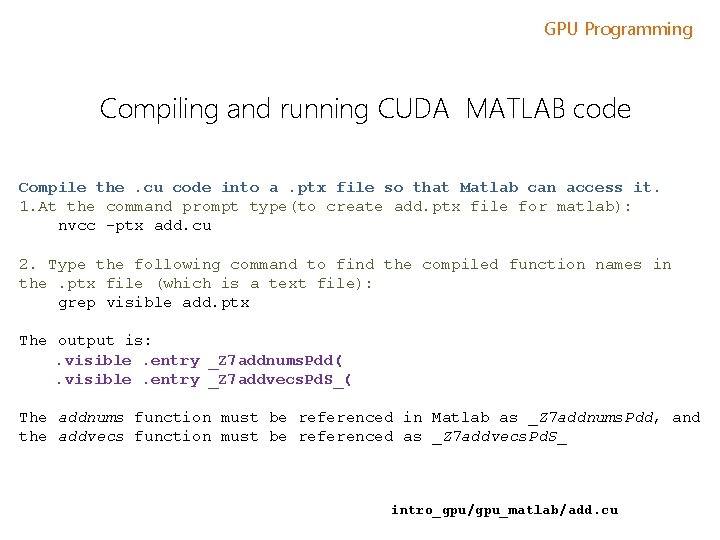

Compiling and running CUDA MATLAB code

Compile the .cu code into a .ptx file so that MATLAB can access it.

1. At the command prompt type (to create the add.ptx file for MATLAB):

    nvcc -ptx add.cu

2. Type the following command to find the compiled function names in the .ptx file (which is a text file):

    grep visible add.ptx

   The output is:

    .visible .entry _Z7addnumsPdd(
    .visible .entry _Z7addvecsPdS_(

The addnums function must be referenced in MATLAB as _Z7addnumsPdd, and the addvecs function must be referenced as _Z7addvecsPdS_.

File: intro_gpu/gpu_matlab/add.cu
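The compiled names are not arbitrary. They follow the C++ name-mangling scheme used on Linux: the prefix `_Z`, the length of the function name, the name itself, then one code per parameter type. Here `Pd` means pointer to double and `d` means double; in `PdS_`, the `S_` is a back-reference to the first parameter type. The C sketch below rebuilds the two names from their parts. It is only an illustration for free functions with simple parameters, and `mangle_simple` is a hypothetical helper that expects the caller to supply the parameter codes.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Build "_Z" + <length of name> + <name> + <parameter codes> into out.
 * param_codes is given by the caller, e.g. "Pdd" for (double *, double).
 * Returns 0 on success, -1 if the buffer is too small. */
int mangle_simple(const char *name, const char *param_codes,
                  char *out, size_t out_size)
{
    assert(name != NULL && param_codes != NULL && out != NULL);
    int n = snprintf(out, out_size, "_Z%zu%s%s",
                     strlen(name), name, param_codes);
    return (n >= 0 && (size_t)n < out_size) ? 0 : -1;
}
```

Calling `mangle_simple("addnums", "Pdd", buf, sizeof buf)` fills `buf` with `_Z7addnumsPdd`, and `mangle_simple("addvecs", "PdS_", buf, sizeof buf)` gives `_Z7addvecsPdS_`. For real code, reading the names out of the .ptx file with grep, as in step 2, remains the reliable route.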

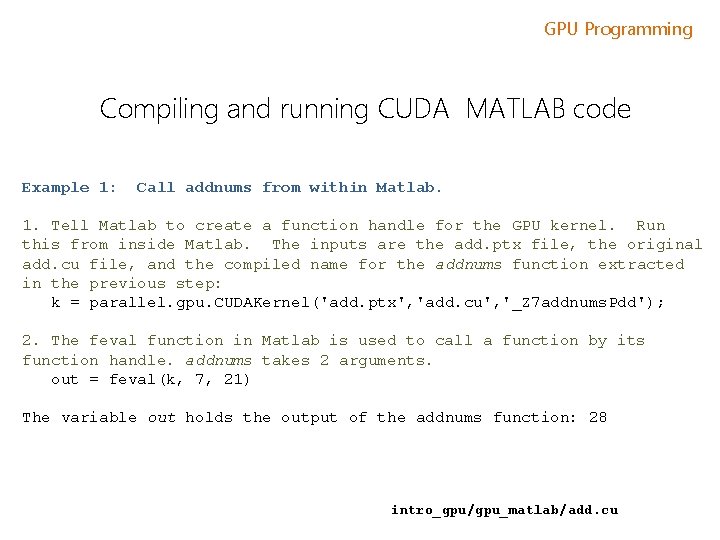

Compiling and running CUDA MATLAB code

Example 1: call addnums from within MATLAB.

1. Tell MATLAB to create a function handle for the GPU kernel. Run this from inside MATLAB. The inputs are the add.ptx file, the original add.cu file, and the compiled name for the addnums function extracted in the previous step:

    k = parallel.gpu.CUDAKernel('add.ptx', 'add.cu', '_Z7addnumsPdd');

2. The feval function in MATLAB is used to call a function by its function handle. addnums takes 2 arguments.

    out = feval(k, 7, 21)

   The variable out holds the output of the addnums function: 28

File: intro_gpu/gpu_matlab/add.cu

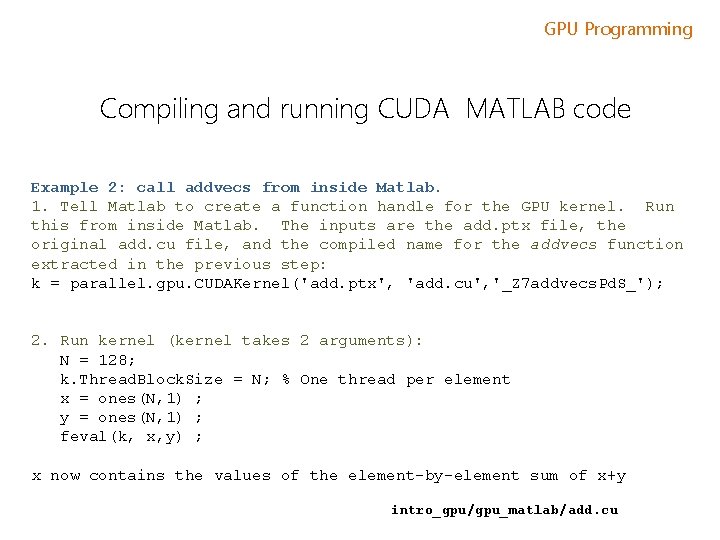

Compiling and running CUDA MATLAB code

Example 2: call addvecs from inside MATLAB.

1. Tell MATLAB to create a function handle for the GPU kernel. Run this from inside MATLAB. The inputs are the add.ptx file, the original add.cu file, and the compiled name for the addvecs function extracted in the previous step:

    k = parallel.gpu.CUDAKernel('add.ptx', 'add.cu', '_Z7addvecsPdS_');

2. Run the kernel (the kernel takes 2 arguments):

    N = 128;
    k.ThreadBlockSize = N;   % One thread per element
    x = ones(N, 1);
    y = ones(N, 1);
    x = feval(k, x, y);

   x now contains the element-by-element sum x+y.

File: intro_gpu/gpu_matlab/add.cu

MATLAB GPU Resources

• MATLAB GPU Computing Support for NVIDIA CUDA-Enabled GPUs: http://www.mathworks.com/discovery/matlab-gpu.html
• GPU-enabled functions: http://www.mathworks.com/help/distcomp/using-gpuarray.html#bsloua3-1
• GPU-enabled functions in toolboxes: http://www.mathworks.com/products/parallel-computing/builtin-parallel-support.html

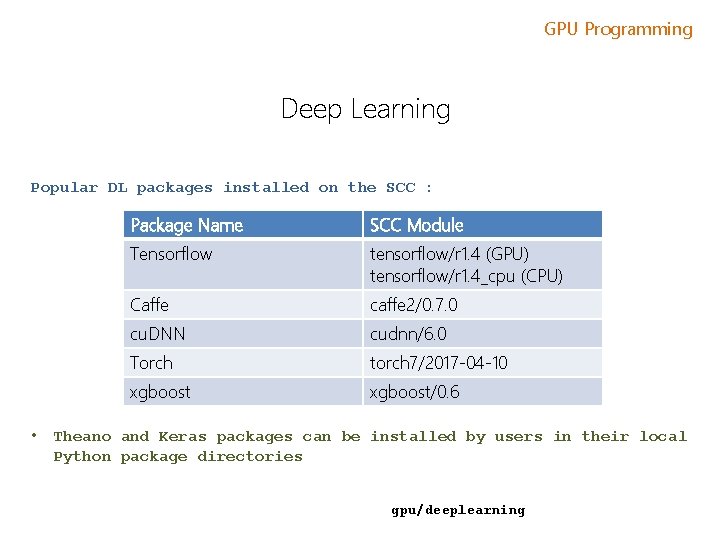

Deep Learning

Popular DL packages installed on the SCC:

| Package Name | SCC Module |
|---|---|
| Tensorflow | tensorflow/r1.4 (GPU), tensorflow/r1.4_cpu (CPU) |
| Caffe | caffe2/0.7.0 |
| cuDNN | cudnn/6.0 |
| Torch | torch7/2017-04-10 |
| xgboost | xgboost/0.6 |

Theano and Keras packages can be installed by users in their local Python package directories.

gpu/deeplearning

RCS GPU Related Tutorials

• Matlab: Parallel Computing Toolbox (includes GPU usage)
  • Sep. 14, 2:30-4:30 pm
• Introduction to CUDA
  • Sep. 26, 2:30-4:30 pm
• Introduction to OpenACC
• See online tutorial docs: http://rcs.bu.edu

This tutorial has been made possible by Research Computing Services at Boston University.

Brian Gregor, bgregor@bu.edu
